4. Results

This section presents the empirical results for the claim frequency, claim severity, and pure premium models. We begin by evaluating the performance of alternative models for claim frequency, followed by claim severity, and then assess the combined frequency–severity decomposition and direct modeling approaches.

4.1. Claim Frequency Model Performance

Table 4 summarizes the performance of the claim frequency models.

Among all models, XGBoost achieves the best performance across all evaluation metrics, including the lowest MSE and MAE, as well as the highest correlation and lift. In particular, the lift in the top decile indicates a strong ability to identify high-risk policies, highlighting the effectiveness of machine learning in risk segmentation.

Among the parametric models, the Poisson model with spline terms and the GAM provide modest improvements over the standard Poisson GLM, suggesting that incorporating nonlinear effects enhances predictive performance. In contrast, the Negative Binomial model performs similarly to the Poisson GLM, indicating that overdispersion does not substantially affect predictive accuracy in this dataset.
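One way to see why the two count models can predict similarly is to compare their mean and variance structures. The sketch below assumes the NB2 parameterization and a log link with an exposure offset; the symbols $\mu_i$, $e_i$, $x_i$, $\beta$, and $k$ are introduced here for illustration and are not taken from the original specification.

```latex
\begin{aligned}
\text{Poisson:} \quad & \operatorname{Var}(N_i) = \mu_i, \\
\text{Negative Binomial (NB2):} \quad & \operatorname{Var}(N_i) = \mu_i + \frac{\mu_i^{2}}{k}, \\
\text{both with} \quad & \log \mu_i = \log e_i + x_i^{\top}\beta .
\end{aligned}
```

Under these assumptions the two models share the same functional form for the mean and differ only in the variance, so their point predictions $\hat{\mu}_i$ tend to be close even when overdispersion is present. The dispersion parameter $k$ mainly affects standard errors and the likelihood rather than the predicted frequencies.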

Overall, these results indicate that the machine learning approach provides better predictive performance and sharper risk differentiation than the classical parametric models on this dataset.
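For reference, the error-based metrics mentioned above can be computed as in the following minimal sketch. It assumes that the reported correlation is the Pearson correlation between observed and predicted values, which the text does not state explicitly; the function names are illustrative.

```python
import math


def mse(y_true, y_pred):
    """Mean squared error between observed and predicted values."""
    return sum((a - b) ** 2 for a, b in zip(y_true, y_pred)) / len(y_true)


def mae(y_true, y_pred):
    """Mean absolute error between observed and predicted values."""
    return sum(abs(a - b) for a, b in zip(y_true, y_pred)) / len(y_true)


def pearson_corr(y_true, y_pred):
    """Pearson correlation (assumed here to be the reported correlation)."""
    n = len(y_true)
    mean_t = sum(y_true) / n
    mean_p = sum(y_pred) / n
    cov = sum((a - mean_t) * (b - mean_p) for a, b in zip(y_true, y_pred))
    var_t = sum((a - mean_t) ** 2 for a in y_true)
    var_p = sum((b - mean_p) ** 2 for b in y_pred)
    return cov / math.sqrt(var_t * var_p)


# Toy data, not taken from the study: observed claim counts and predictions.
observed = [0, 1, 0, 2]
predicted = [0.1, 0.5, 0.1, 1.0]
print(mse(observed, predicted), mae(observed, predicted),
      pearson_corr(observed, predicted))
```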

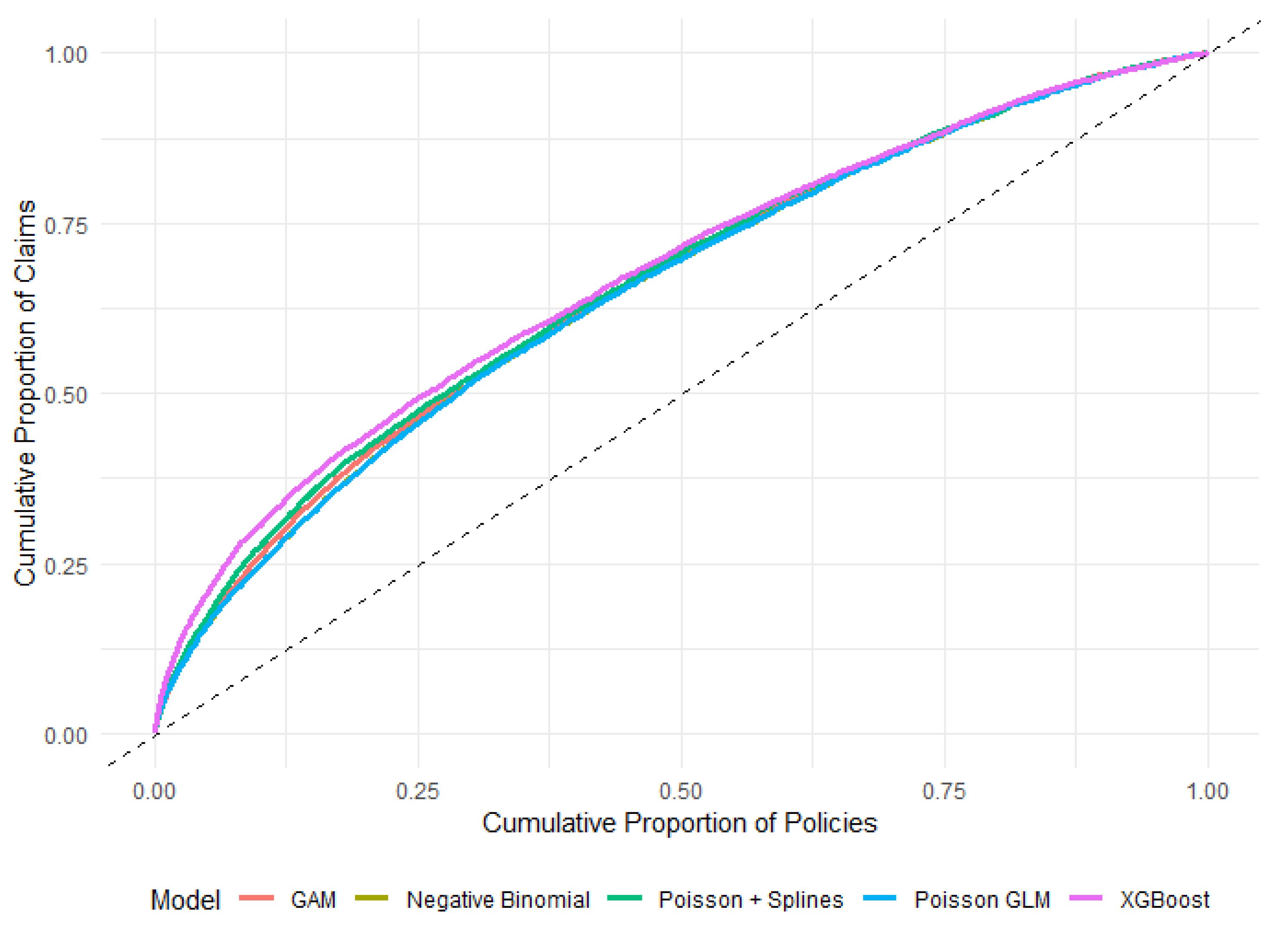

Claim Capture Curves

Figure 2 presents the cumulative claim capture curves for the competing models. The XGBoost model consistently captures a larger proportion of claims in the highest-risk segments, followed by the Poisson model with spline terms and the GAM. The standard Poisson and Negative Binomial models exhibit weaker concentration of claims.

These results are consistent with the lift statistics and confirm that models incorporating nonlinear effects improve risk segmentation, with machine learning providing additional gains.
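A cumulative claim capture curve of this kind can be sketched as follows. The sketch assumes that policies are ranked by predicted frequency from highest to lowest and that the curve reports the share of all observed claims falling in the top fraction of policies; exposure weighting is ignored and the function name is hypothetical.

```python
def claim_capture_curve(claims, scores, n_points=10):
    """Share of total claims captured in the top k/n_points of policies.

    claims: observed claim counts per policy
    scores: model predictions used to rank policies (higher = riskier)
    """
    n = len(claims)
    total = sum(claims)
    # Rank policy indices from highest to lowest predicted risk.
    order = sorted(range(n), key=lambda i: scores[i], reverse=True)
    curve = []
    for k in range(1, n_points + 1):
        cutoff = (k * n) // n_points  # number of policies in the top k/n_points
        captured = sum(claims[i] for i in order[:cutoff])
        curve.append(captured / total)
    return curve


# Toy data, not taken from the study.
claims = [0, 0, 1, 0, 2, 0, 0, 1, 0, 0]
scores = [0.10, 0.20, 0.90, 0.30, 1.00, 0.05, 0.15, 0.80, 0.25, 0.12]
curve = claim_capture_curve(claims, scores)
print(curve)
```

A model with better segmentation produces a curve that rises faster at the left, since more of the claims sit in the policies it ranks as riskiest.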

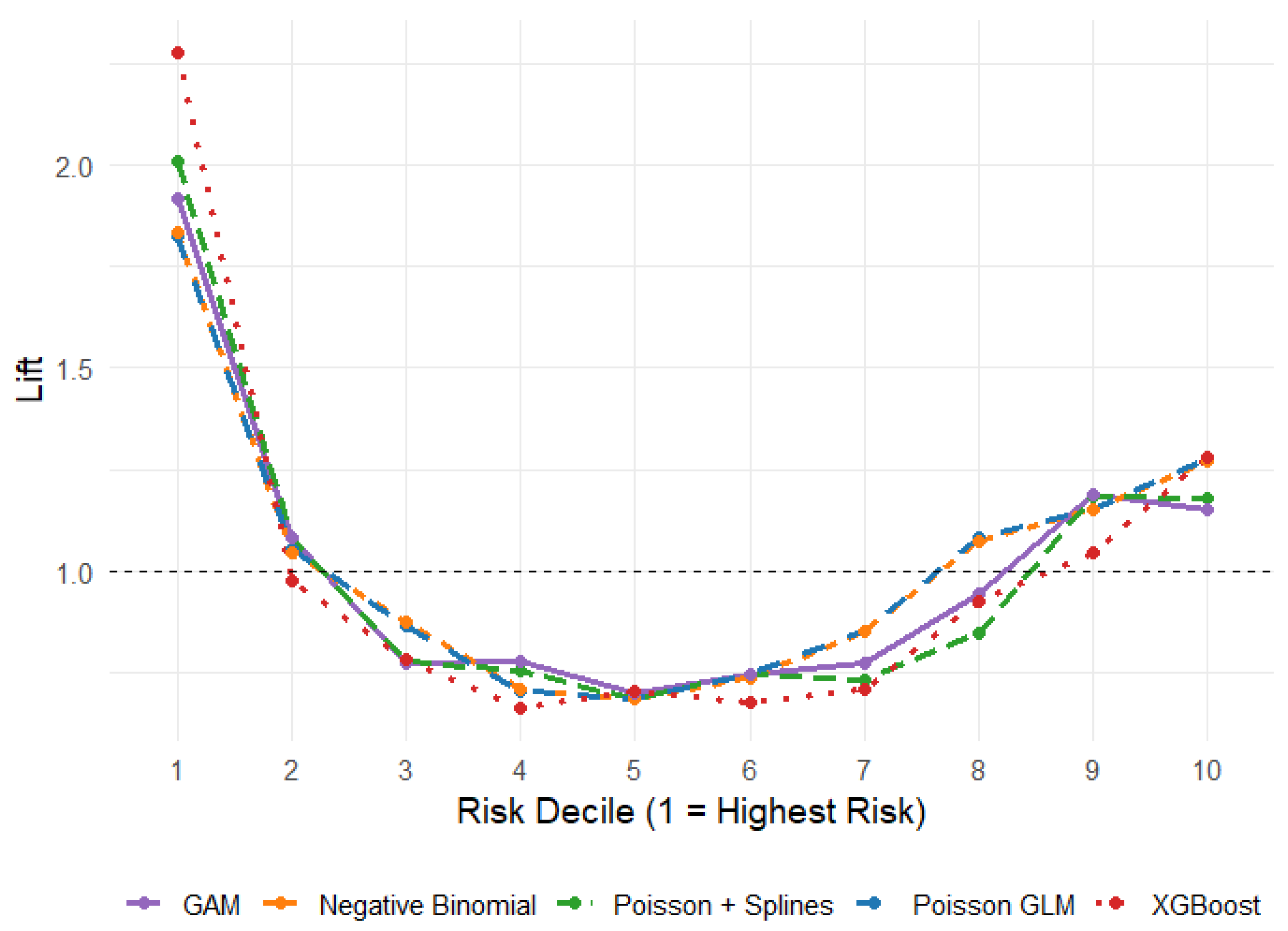

Decile Lift Analysis

Figure 3 presents the decile lift curves for the competing models. The XGBoost model achieves the highest lift in the top decile, followed by the Poisson model with spline terms and the GAM. Differences across the middle deciles are relatively small, reflecting the low overall claim frequency and limited variability in moderate-risk groups.
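The decile lift values discussed here can be reproduced with a calculation along the following lines. This is a minimal sketch that assumes lift is defined as the mean observed outcome in a decile divided by the overall mean, with deciles formed by ranking on the model prediction; it is unweighted and requires at least as many policies as bins.

```python
def decile_lift(actual, predicted, n_bins=10):
    """Lift per bin, ordered from the highest-risk bin (index 0) downward."""
    n = len(actual)
    if n < n_bins:
        raise ValueError("need at least one policy per bin")
    overall_mean = sum(actual) / n
    # Rank policy indices from highest to lowest prediction.
    order = sorted(range(n), key=lambda i: predicted[i], reverse=True)
    lifts = []
    for b in range(n_bins):
        lo = (b * n) // n_bins
        hi = ((b + 1) * n) // n_bins
        members = order[lo:hi]
        bin_mean = sum(actual[i] for i in members) / len(members)
        lifts.append(bin_mean / overall_mean)
    return lifts


# Toy data, not taken from the study.
actual = [0, 0, 1, 0, 2, 0, 0, 1, 0, 0]
predicted = [0.10, 0.20, 0.90, 0.30, 1.00, 0.05, 0.15, 0.80, 0.25, 0.12]
lifts = decile_lift(actual, predicted)
print(lifts[0])  # lift in the top decile
```

A lift above 1 in the top decile means that the policies the model ranks as riskiest have a higher observed claim rate than the portfolio average.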

Interpretation of Model Components

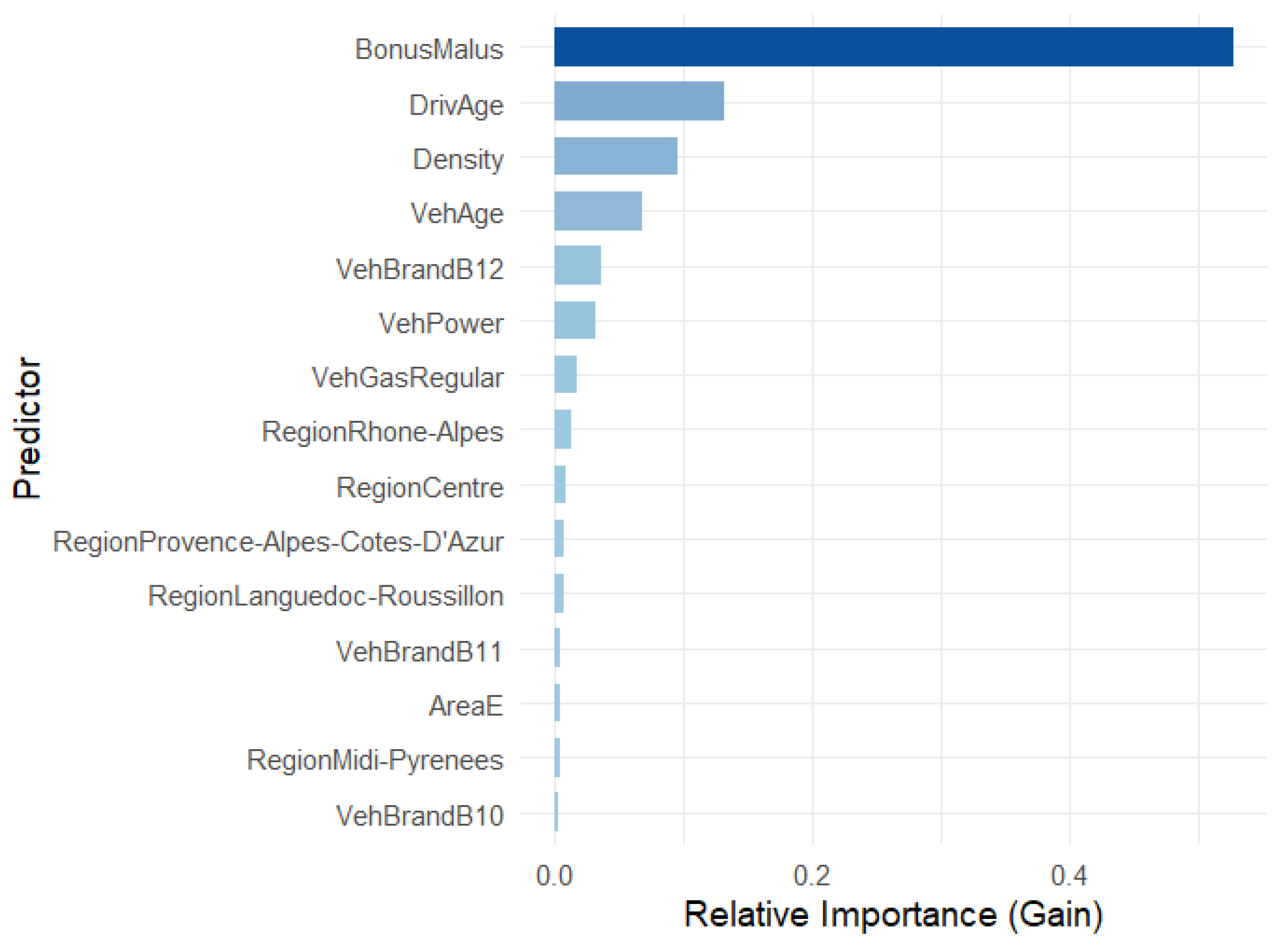

Variable importance results from the XGBoost model (Figure 4) indicate that the bonus–malus score is the dominant predictor of claim frequency, followed by driver age, population density, and selected vehicle characteristics. These findings are consistent with actuarial intuition and highlight the importance of past claims experience and demographic factors in risk assessment.

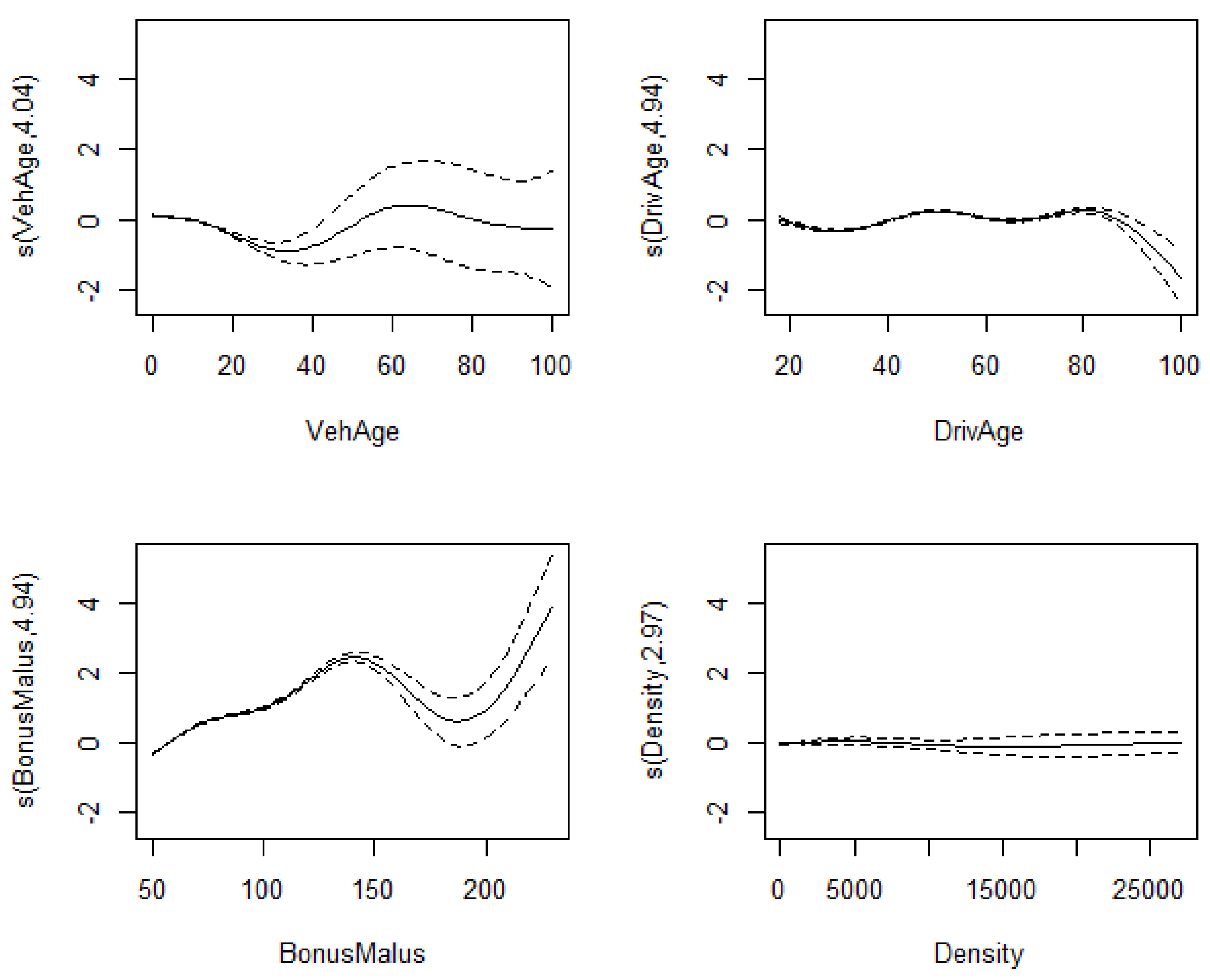

The smooth functions estimated by the GAM (Figure 5) reveal nonlinear effects for several continuous predictors. In particular, the bonus–malus variable exhibits a strong nonlinear relationship with claim frequency, while vehicle age and population density display more moderate nonlinear patterns.

Model Selection

The results indicate that XGBoost provides the strongest overall predictive performance, achieving the lowest error measures, the highest correlation, and the greatest lift in the highest-risk decile. In addition, the Poisson model with spline terms delivers competitive performance while retaining the interpretability and transparency of the generalized linear modeling framework widely used in actuarial practice.

To enable a direct comparison between a traditional actuarial approach and a modern machine learning method in the subsequent pure premium modeling, both models are retained. The Poisson spline model serves as the representative GLM-based specification, while XGBoost represents the machine learning approach. This dual-model strategy allows us to evaluate not only predictive accuracy but also the trade-offs between interpretability and performance in insurance pricing applications.

4.2. Claim Severity Model Performance

Table 5 summarizes the performance of the claim severity models.

The XGBoost model substantially outperforms the Gamma GLM across all evaluation metrics. In particular, it achieves a markedly lower MSE and MAE, along with a higher correlation with observed claim severity. These results indicate that machine learning methods are more effective in capturing the complex nonlinear relationships and interactions inherent in claim size data.

The Gamma GLM, while appropriate for modeling positive and right-skewed outcomes, assumes a log-linear relationship between covariates and severity. This assumption limits its ability to capture nonlinear effects and interactions, leading to inferior predictive performance.
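The log-linear assumption can be written out explicitly. In the sketch below, $Y_i$ denotes the severity for claim $i$ and $x_{ij}$ the covariates; these symbols are introduced for illustration and the expression assumes a specification with main effects only.

```latex
\log \mathbb{E}[Y_i \mid x_i] = \beta_0 + \sum_{j} \beta_j x_{ij}
\quad\Longleftrightarrow\quad
\mathbb{E}[Y_i \mid x_i] = e^{\beta_0} \prod_{j} e^{\beta_j x_{ij}} .
```

Under this form, a one-unit change in $x_{ij}$ multiplies expected severity by the fixed factor $e^{\beta_j}$, regardless of the level of $x_{ij}$ or the values of the other covariates. Nonlinear effects and interactions are therefore only captured if they are added to the linear predictor by hand.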

Despite the improvement provided by XGBoost, the correlation remains relatively low, reflecting the inherent variability and stochastic nature of claim severity. This suggests that, although machine learning improves predictive accuracy, a substantial portion of severity variation remains unexplained.

These findings help explain the improved prediction accuracy of the decomposition models that use XGBoost for severity (Section 4.3).

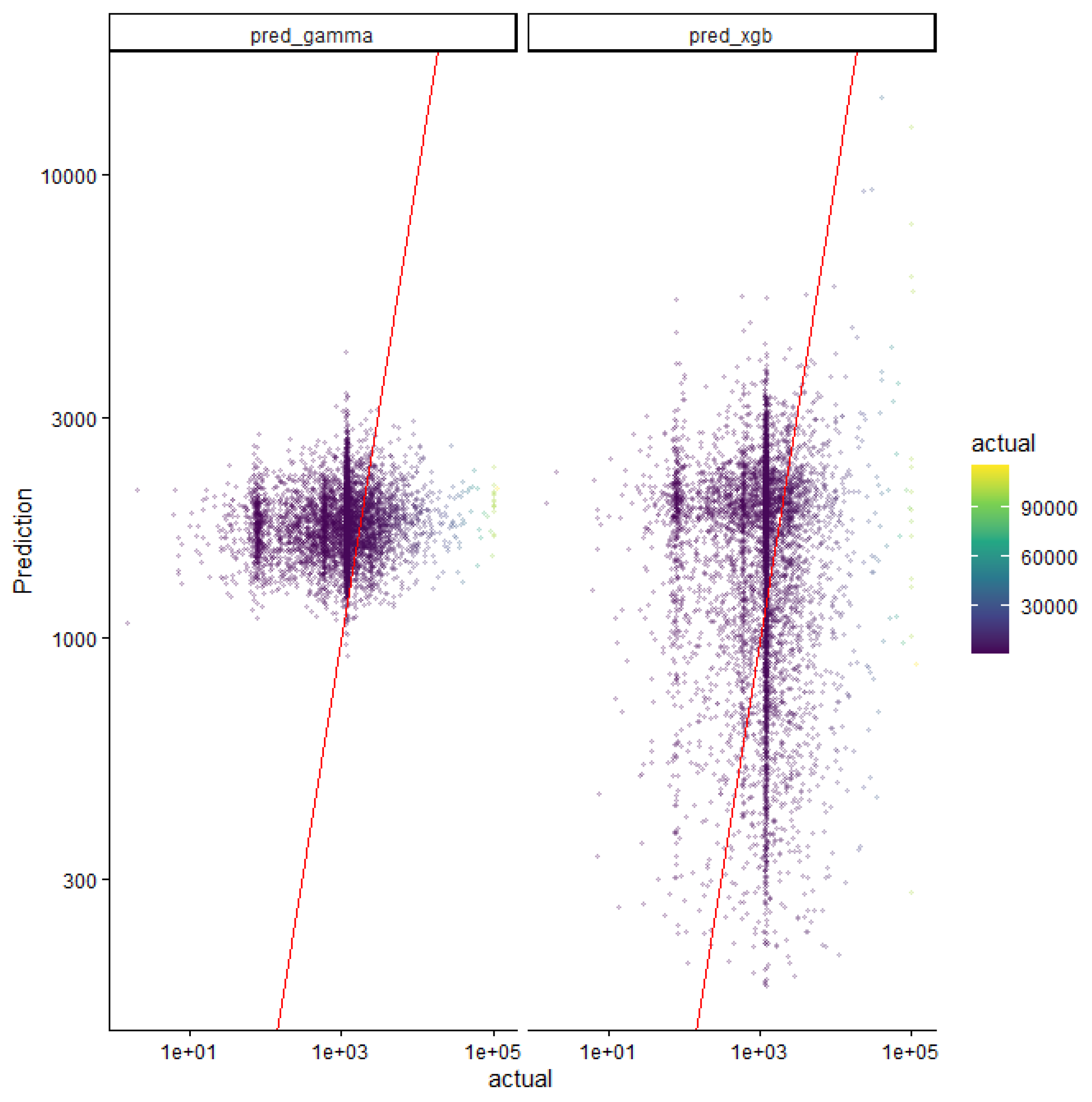

Figure 6 illustrates the relationship between actual and predicted claim severity. The Gamma GLM produces highly concentrated predictions, indicating strong shrinkage toward the mean and limited ability to capture variability in claim sizes. In contrast, the XGBoost model exhibits greater dispersion and improved alignment with observed values, highlighting its ability to capture nonlinear relationships and complex interactions.

However, both models display considerable scatter, underscoring the inherently stochastic nature of claim severity and the difficulty of accurately predicting individual claim amounts. Observations with zero or near-zero values were excluded from the log-scale visualization to avoid numerical issues associated with logarithmic transformation.

4.3. Results for Frequency–Severity Decomposition for Pure Premium

Table 6 presents the performance of the frequency–severity decomposition models using both error-based and ranking-based metrics.

Among the models considered, the XGBoost–XGBoost configuration achieves the best overall prediction accuracy, with the lowest MSE and MAE, as well as the highest correlation. This indicates that incorporating machine learning methods for both frequency and severity improves pure premium estimation.
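The decomposition itself amounts to multiplying the two component predictions. The following minimal sketch assumes that the frequency model predicts claims per unit of exposure and the severity model predicts the expected cost per claim; the function name and the exposure handling are assumptions made for illustration, not the paper's implementation.

```python
def pure_premium(freq_per_exposure, severity, exposure=None):
    """Combine component predictions: frequency x exposure x severity.

    freq_per_exposure: predicted claims per unit exposure (any frequency model)
    severity: predicted cost per claim (any severity model)
    exposure: policy exposure; defaults to 1.0 for every policy (assumption)
    """
    if exposure is None:
        exposure = [1.0] * len(freq_per_exposure)
    if not (len(freq_per_exposure) == len(severity) == len(exposure)):
        raise ValueError("all inputs must have the same length")
    return [f * e * s for f, e, s in zip(freq_per_exposure, exposure, severity)]


# Toy predictions, not taken from the study.
freq = [0.10, 0.05]
sev = [1000.0, 2000.0]
print(pure_premium(freq, sev))
print(pure_premium(freq, sev, exposure=[0.5, 1.0]))
```

Because the two components are combined only at this final step, any frequency model can be paired with any severity model, which is what makes configurations such as XGBoost–Gamma possible.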

In contrast, the ranking-based results reveal a different pattern. The XGBoost–Gamma model achieves the highest lift, indicating superior performance in identifying high-risk policies. Because replacing the Gamma severity component with XGBoost does not raise lift further, while the Spline–Gamma benchmark ranks lowest, this suggests that improvements in modeling claim frequency have a greater impact on risk segmentation than enhancements in severity modeling.

The difference between error-based and lift-based metrics highlights an important trade-off. While the XGBoost–XGBoost model minimizes prediction error, the XGBoost–Gamma model is more effective in concentrating losses within the highest-risk segment. This indicates that different model configurations may be preferred depending on the objective, such as pricing accuracy versus risk classification.
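That error-based and ranking-based metrics can disagree is easy to see on a contrived example. The sketch below uses invented toy data with two hypothetical models, A and B, and the same assumed definition of top-decile lift (mean loss in the top 10% of predictions divided by the overall mean loss); it is not derived from the study's results.

```python
def mse(actual, predicted):
    """Mean squared error."""
    return sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual)


def top_decile_lift(actual, predicted):
    """Mean loss in the top 10% of predictions over the overall mean loss."""
    n = len(actual)
    k = max(1, n // 10)
    top = sorted(range(n), key=lambda i: predicted[i], reverse=True)[:k]
    return (sum(actual[i] for i in top) / k) / (sum(actual) / n)


# Ten policies, one of which has a loss (toy data).
actual = [10.0] + [0.0] * 9

# Model A: close to the portfolio mean everywhere, but ranks the wrong policy first.
model_a = [1.0, 1.1] + [1.0] * 8
# Model B: ranks the loss-making policy first, but badly overshoots its size.
model_b = [30.0] + [0.0] * 9

results = {
    "A": (mse(actual, model_a), top_decile_lift(actual, model_a)),
    "B": (mse(actual, model_b), top_decile_lift(actual, model_b)),
}
for name, (err, lift) in results.items():
    print(name, "MSE:", err, "top-decile lift:", lift)
```

Model A has the lower MSE because its predictions stay near the mean, while Model B has the higher lift because it orders policies correctly. Lift depends only on the ordering of predictions, whereas MSE also penalizes errors in their scale.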

The classical Spline–Gamma model yields the weakest performance across all metrics, although it remains a useful benchmark due to its interpretability.

Overall, these results demonstrate that machine learning improves predictive performance within the decomposition framework, but its impact differs between accuracy and risk segmentation.

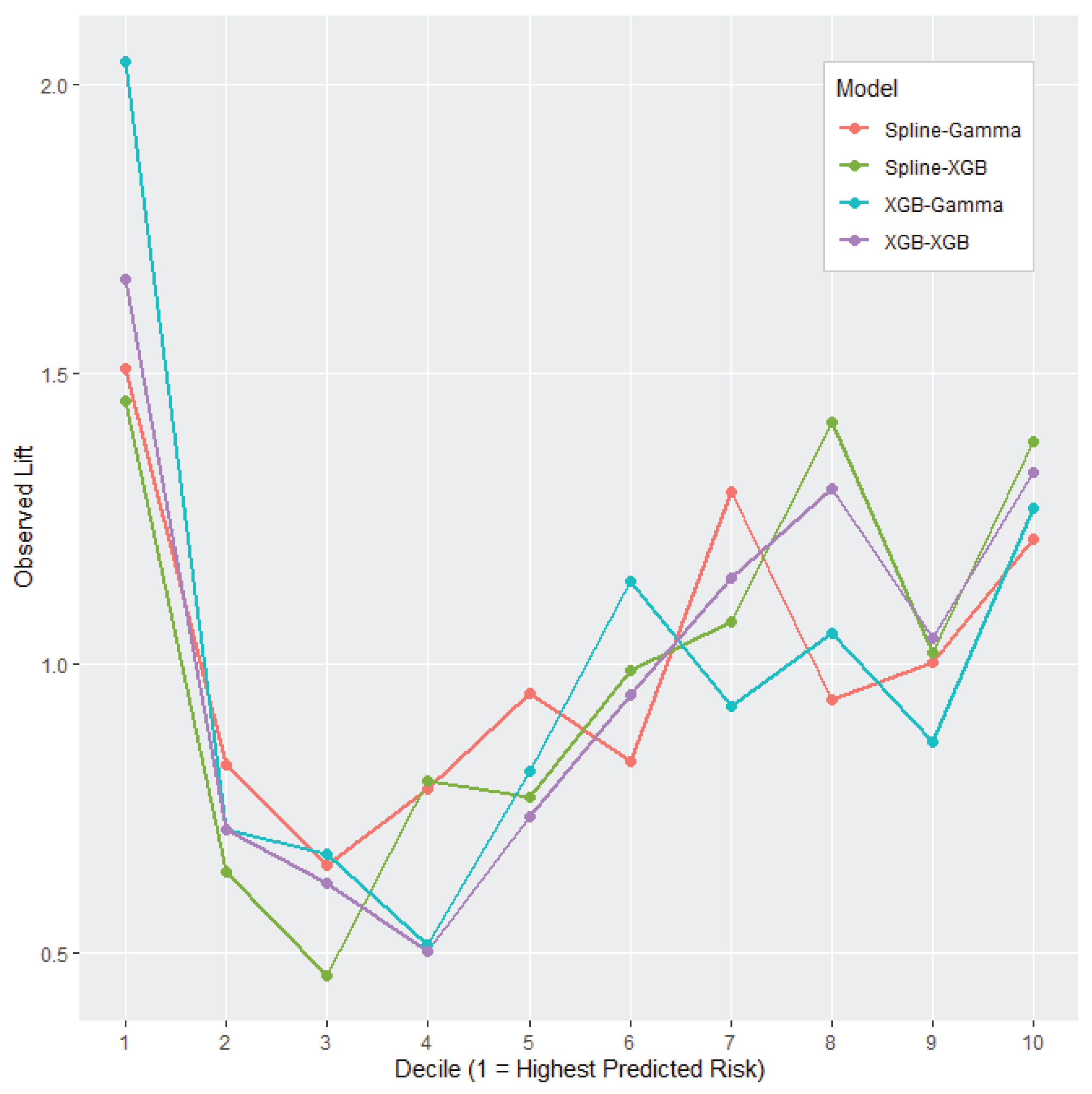

Risk Segmentation Performance

Figure 7 illustrates the decile lift curves for the decomposition models. All models exhibit a strong concentration of losses in the highest-risk decile, indicating effective identification of high-risk policies.

Among the models, the XGBoost–Gamma configuration achieves the highest lift, confirming its superior performance in risk segmentation. In contrast, although the XGBoost–XGBoost model provides the best overall prediction accuracy, its lift is lower, reinforcing the trade-off between prediction accuracy and risk ranking performance.

These findings suggest that claim frequency plays a dominant role in risk differentiation, while claim severity contributes more to overall prediction accuracy. Consequently, improvements in frequency modeling have a greater impact on identifying high-risk policies than enhancements in severity modeling.

The non-monotonic behavior observed in lower-risk deciles reflects the inherent variability of insurance losses, particularly for policies with low exposure. These results provide empirical evidence supporting the continued use of frequency–severity decomposition, particularly when combined with machine learning methods.

4.4. Comparison Between Decomposition and Direct Modeling for Total Loss

To evaluate the effectiveness of direct modeling approaches, we compare the best-performing decomposition model (XGBoost–XGBoost) with direct XGBoost and Tweedie models for total claim amount prediction.

Table 7 summarizes the performance of decomposition and direct modeling approaches.

The decomposition-based XGBoost–XGBoost model consistently outperforms both direct approaches across all evaluation metrics. It achieves the lowest MSE and MAE, as well as the highest correlation, demonstrating the effectiveness of modeling claim frequency and severity separately.

The direct XGBoost model provides competitive performance, indicating that flexible machine learning methods can capture nonlinear relationships in aggregate losses. However, it does not match the accuracy of the decomposition framework, suggesting that modeling aggregate losses directly may overlook important structural information captured by the frequency–severity approach.

The Tweedie model, despite its theoretical foundation as a compound Poisson–Gamma model, exhibits weaker performance across all metrics. This indicates that, although the data exhibit a compound structure, modeling aggregate losses within a single unified framework may be less effective than decomposing the problem into separate components.

The estimated Tweedie parameter () confirms that the data follow a compound Poisson–Gamma structure. However, the inferior predictive performance of the Tweedie model suggests that imposing a single functional relationship between covariates and aggregate loss may be overly restrictive. In contrast, the decomposition framework allows for distinct covariate effects on frequency and severity, resulting in greater modeling flexibility and improved predictive accuracy.
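For context, the link between the Tweedie power parameter and the compound Poisson–Gamma structure can be stated as follows. The notation ($N$, $Z_i$, $\lambda$, $\alpha$, $\theta$, $\phi$, $p$) is introduced here for illustration, with $\theta$ taken as the Gamma scale parameter.

```latex
\begin{aligned}
Y &= \sum_{i=1}^{N} Z_i, \qquad N \sim \text{Poisson}(\lambda), \qquad Z_i \overset{\text{iid}}{\sim} \text{Gamma}(\alpha, \theta), \\
\mu &= \mathbb{E}[Y] = \lambda \alpha \theta, \qquad
\operatorname{Var}(Y) = \lambda \alpha (\alpha + 1) \theta^{2} = \phi \mu^{p}, \\
p &= \frac{\alpha + 2}{\alpha + 1} \in (1, 2), \qquad
\phi = \frac{\lambda^{1-p} (\alpha \theta)^{2-p}}{2 - p} .
\end{aligned}
```

A power parameter strictly between 1 and 2 therefore corresponds to a Poisson number of Gamma-distributed claims with a point mass at zero. In a Tweedie GLM the covariates enter only through $\mu$, so frequency and severity cannot respond differently to the same covariate unless the dispersion is modeled as well.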

Overall, these results highlight the importance of structural decomposition in actuarial modeling. While machine learning enhances predictive performance within individual components, explicitly separating frequency and severity yields superior results. These findings provide empirical support for the continued use of the frequency–severity decomposition framework in modern insurance pricing.