1. Introduction

Accurate estimation of expected losses is a central challenge in automobile insurance pricing. Insurers must determine premiums that reflect the underlying risk of each policyholder while ensuring fairness, competitiveness, and regulatory compliance, as premium estimation directly affects portfolio stability and profitability.

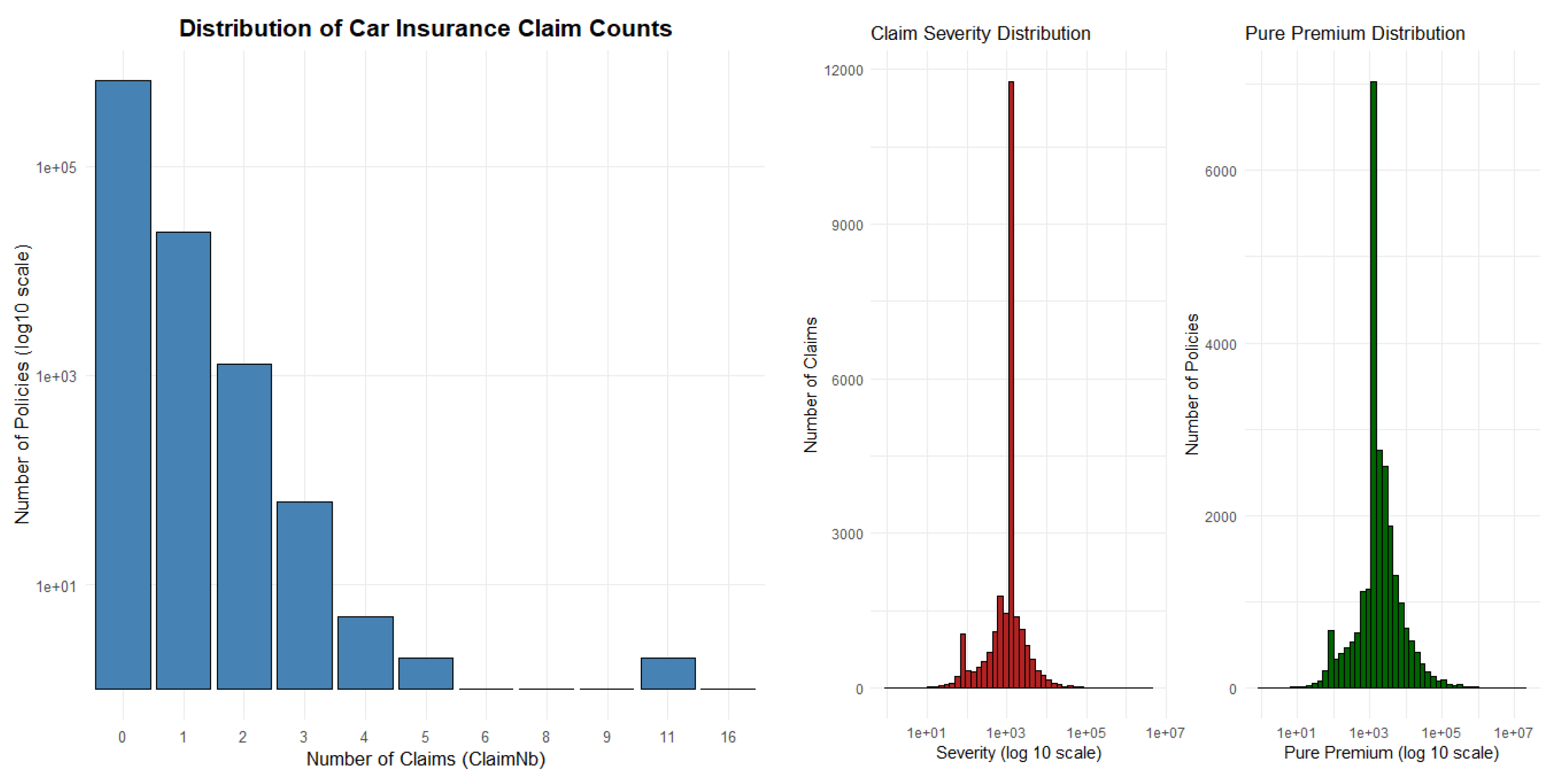

A cornerstone of actuarial practice is the frequency–severity framework, which decomposes the expected loss—or pure premium—into two latent components: the expected number of claims and the expected claim size. By modeling these components separately, actuaries can employ statistical distributions tailored to the distinct stochastic properties of each process before recombining them for final loss estimation. This framework provides both interpretability and flexibility, making it fundamental to modern insurance ratemaking.
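The identity behind this decomposition can be stated compactly. The notation below ($N$, $Y_j$, $S$, $\mathbf{x}$) is introduced here for illustration only, and the factorization rests on the standard assumption that, given the covariates, claim sizes are identically distributed and independent of the claim count.

$$
S = \sum_{j=1}^{N} Y_j, \qquad \mathbb{E}[S \mid \mathbf{x}] = \mathbb{E}[N \mid \mathbf{x}] \cdot \mathbb{E}[Y \mid \mathbf{x}]
$$

Here $N$ is the number of claims on a policy with covariates $\mathbf{x}$, $Y_j$ is the size of the $j$-th claim, and $S$ is the aggregate loss, so the pure premium $\mathbb{E}[S \mid \mathbf{x}]$ is the product of expected frequency and expected severity.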

Generalized Linear Models (GLMs) have long served as the industry standard for actuarial modeling. Existing literature highlights the capacity of GLMs to provide an interpretable yet flexible structure for non-normal, skewed, and heteroscedastic insurance data [

3,

7,

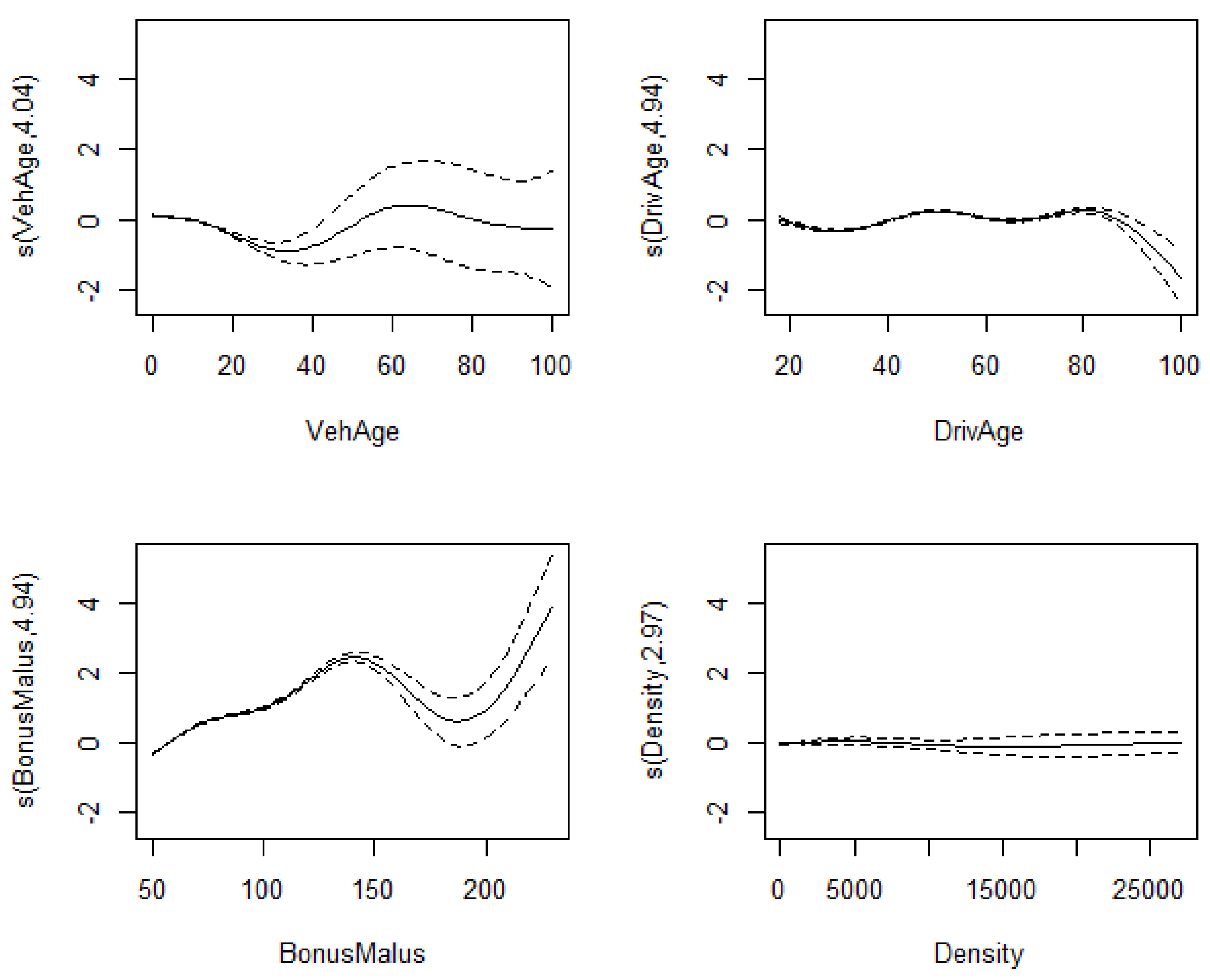

8]. While Poisson and Negative Binomial models are commonly used for claim frequency, Gamma models are widely applied to claim severity due to the strictly positive and right-skewed nature of loss amounts. Extensions such as Generalized Additive Models (GAMs) allow for nonlinear relationships while preserving model transparency [

4,

10].

Machine learning (ML) has emerged as a powerful tool in insurance analytics. Tree-based algorithms and gradient boosting methods are particularly effective in capturing complex interactions and nonlinear relationships that traditional parametric models may overlook. Empirical evidence suggests that models such as XGBoost can significantly improve performance in tasks ranging from claim frequency prediction to fraud detection [2, 6, 9].

Recent studies have explored both direct and component-based modeling strategies using machine learning. Direct modeling of total claim amounts, using methods such as Support Vector Regression (SVR), XGBoost, and neural networks, has demonstrated strong predictive accuracy for aggregate loss estimation [18]. Other studies incorporate machine learning within the classical frequency–severity framework, where gradient boosting models are compared with traditional GLMs [19]. These findings indicate that machine learning consistently improves claim frequency prediction, while results for claim severity remain less conclusive due to the high variability of loss amounts.

Despite these advances, direct modeling approaches do not explicitly account for the structural decomposition of insurance losses into frequency and severity components. Moreover, existing evidence on the relative performance of decomposition-based and direct modeling approaches remains inconclusive, particularly when evaluated under both predictive accuracy and risk segmentation criteria.

Classical actuarial models continue to play an important role due to their interpretability and regulatory transparency. Consequently, recent research has focused on hybrid approaches that integrate machine learning techniques within the frequency–severity framework [11, 12]. These approaches aim to enhance predictive performance while preserving the structural foundations of actuarial pricing.

Alternative unified modeling approaches have also been proposed, most notably through the Tweedie distribution, which provides a compound Poisson–Gamma representation of aggregate losses [15, 16]. While such models offer strong theoretical appeal, it remains unclear whether they can outperform decomposition-based methods in practical insurance applications.
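The compound Poisson–Gamma construction mentioned above can be illustrated with a short simulation. This is a minimal sketch using only the Python standard library; the function names and the parameter values (`lam`, `shape`, `scale`) are hypothetical choices for illustration, not estimates or code from this study.

```python
import math
import random


def sample_poisson(lam, rng):
    """Draw one Poisson(lam) variate with Knuth's multiplication method."""
    threshold = math.exp(-lam)
    count, product = 0, rng.random()
    while product > threshold:
        count += 1
        product *= rng.random()
    return count


def simulate_aggregate_loss(lam, shape, scale, rng):
    """Sum a Poisson number of Gamma claim sizes (zero when no claim occurs)."""
    n_claims = sample_poisson(lam, rng)
    # random.gammavariate(alpha, beta) has mean alpha * beta.
    return sum(rng.gammavariate(shape, scale) for _ in range(n_claims))


def tweedie_power(shape):
    """Tweedie variance power implied by a Gamma shape parameter; lies in (1, 2)."""
    return (shape + 2.0) / (shape + 1.0)


# Assumed illustrative parameters: 0.1 expected claims per policy,
# Gamma severity with shape 2 and scale 1500 (mean claim size 3000).
lam, shape, scale = 0.1, 2.0, 1500.0
rng = random.Random(42)
losses = [simulate_aggregate_loss(lam, shape, scale, rng) for _ in range(200_000)]

simulated_mean = sum(losses) / len(losses)
theoretical_mean = lam * shape * scale  # expected frequency times expected severity
zero_share = sum(1 for loss in losses if loss == 0.0) / len(losses)

print(f"simulated mean loss:   {simulated_mean:.1f}")
print(f"theoretical mean loss: {theoretical_mean:.1f}")
print(f"share of zero losses:  {zero_share:.3f} (exp(-lam) = {math.exp(-lam):.3f})")
print(f"implied Tweedie power: {tweedie_power(shape):.3f}")
```

The simulated losses show the two features that make this family attractive for pure premium data: a point mass at zero for policies without claims and a continuous, right-skewed distribution for the rest.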

Motivated by these considerations and the lack of consensus in the literature, this study investigates whether machine learning improves insurance pricing by replacing the classical decomposition framework or by enhancing its individual components. In particular, we evaluate model performance not only in terms of prediction accuracy but also in terms of the ability to identify high-risk policies.
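One simple way to quantify the ability to identify high-risk policies is the share of observed losses that falls on the policies a model ranks highest. The sketch below is an assumed illustration of that idea, not necessarily the segmentation criterion used later in the paper; the function name and the toy data are hypothetical.

```python
def top_fraction_capture(predicted, actual, fraction=0.1):
    """Share of total actual losses incurred by the top `fraction` of
    policies when ranked by predicted pure premium (highest first)."""
    if len(predicted) != len(actual) or not predicted:
        raise ValueError("predicted and actual must be non-empty and equal in length")
    n_top = max(1, round(fraction * len(predicted)))
    ranked = sorted(range(len(predicted)), key=lambda i: predicted[i], reverse=True)
    total_loss = sum(actual)
    if total_loss == 0:
        return 0.0
    return sum(actual[i] for i in ranked[:n_top]) / total_loss


# Hypothetical toy portfolio of ten policies.
predicted = [50, 400, 120, 90, 300, 60, 80, 70, 110, 100]
actual = [0, 2500, 0, 0, 0, 0, 500, 0, 1000, 0]

capture = top_fraction_capture(predicted, actual, fraction=0.2)
print(f"top 20% of policies by prediction carry {capture:.1%} of losses")
```

A model can score well on average prediction error and still rank policies poorly, which is why a ranking-based measure of this kind is a useful complement to accuracy metrics.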

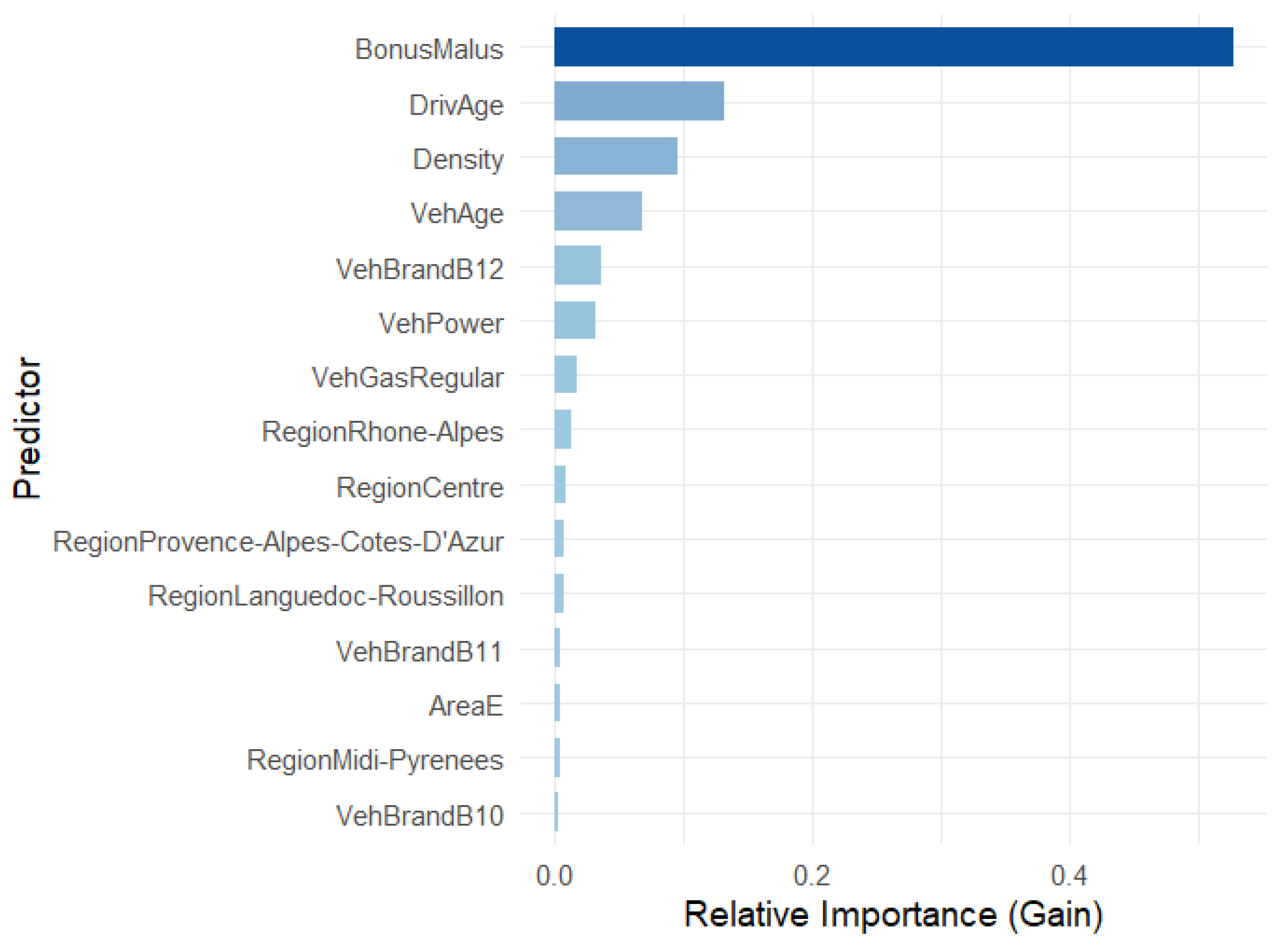

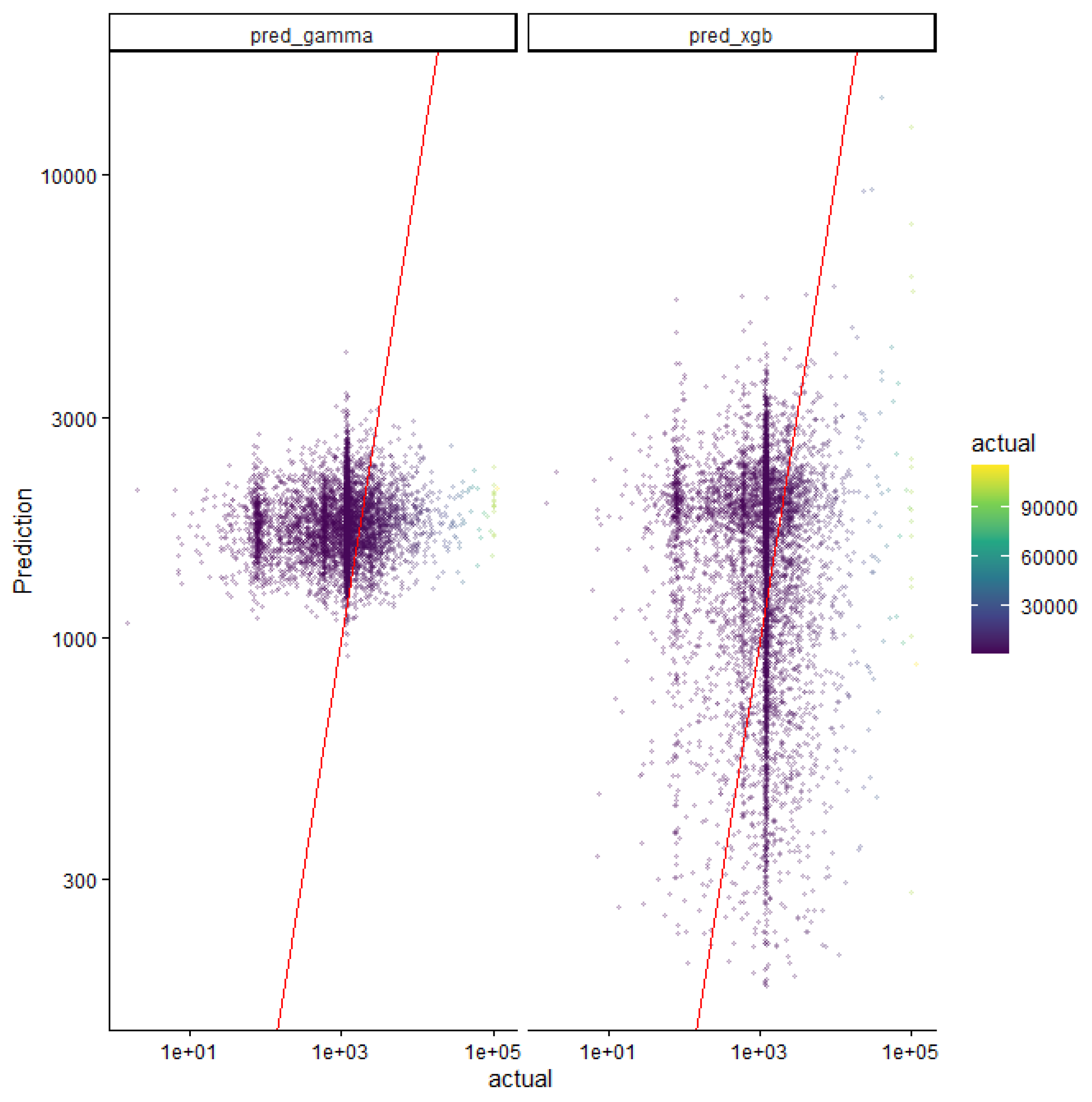

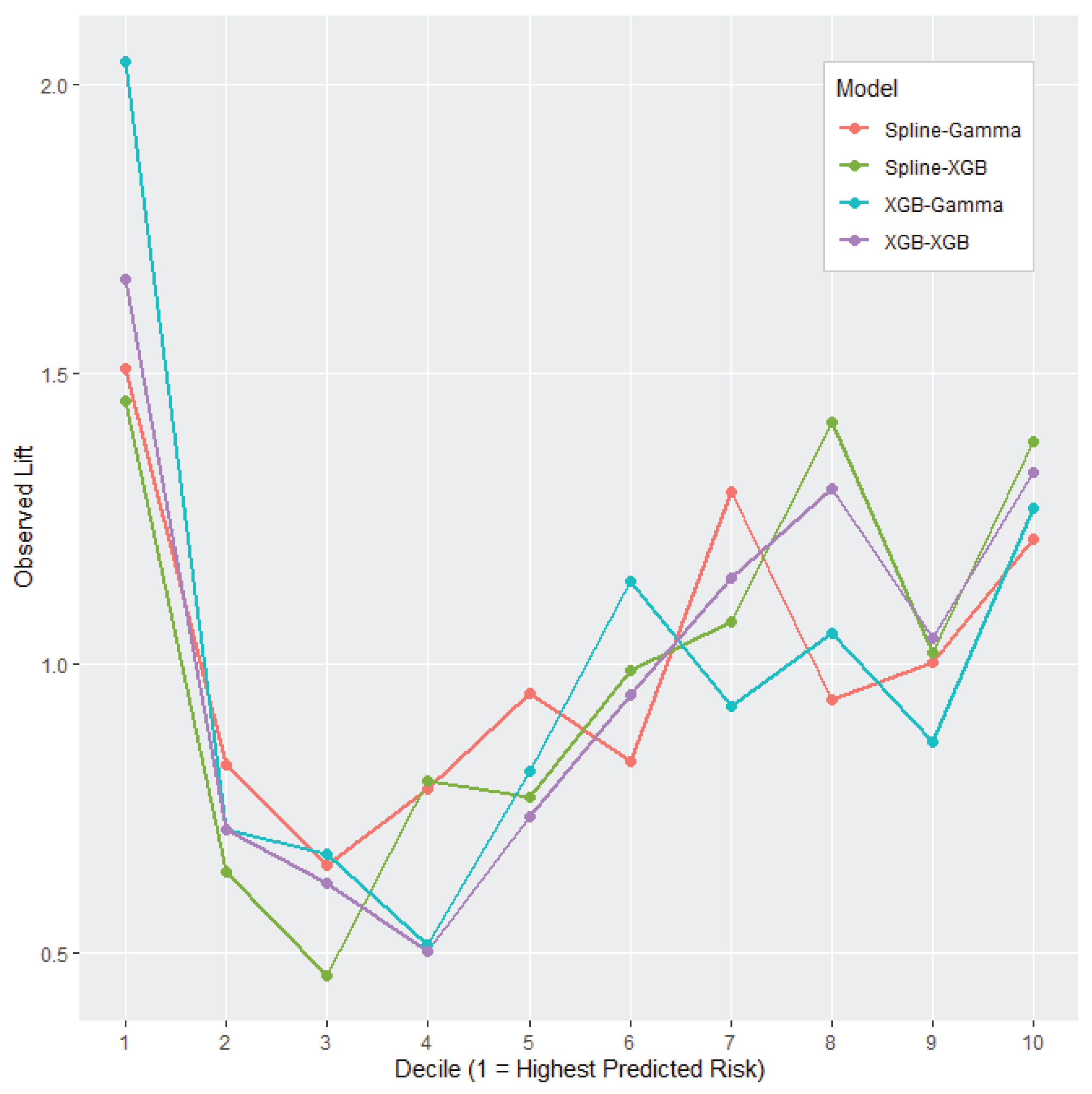

To address these questions, we develop a comprehensive modeling framework that integrates classical actuarial models with modern machine learning techniques. We evaluate several models for claim frequency, including Poisson GLMs, spline-based models, and XGBoost. For claim severity, we compare a Gamma GLM with XGBoost. These models are combined within the frequency–severity decomposition framework to estimate pure premium. In addition, we examine direct modeling approaches, including XGBoost and Tweedie models, for predicting aggregate losses.
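The recombination step can be sketched in its simplest form, with a single categorical rating factor. In that special case, a log-link Poisson model with an exposure offset and a log-link Gamma model fitted to individual claim sizes reduce to the group-level ratios computed below, so no model-fitting library is needed. The data, field layout, and function name are hypothetical and are not taken from the dataset used in this study.

```python
from collections import defaultdict

# Hypothetical toy portfolio:
# (rating_group, exposure_years, claim_count, total_claim_amount)
policies = [
    ("urban", 1.0, 1, 1200.0),
    ("urban", 0.5, 0, 0.0),
    ("urban", 1.0, 2, 3000.0),
    ("rural", 1.0, 0, 0.0),
    ("rural", 1.0, 1, 800.0),
    ("rural", 0.5, 0, 0.0),
]


def pure_premium_by_group(rows):
    """Estimate frequency, severity, and pure premium per rating group."""
    totals = defaultdict(lambda: [0.0, 0, 0.0])  # exposure, claims, amount
    for group, exposure, n_claims, amount in rows:
        totals[group][0] += exposure
        totals[group][1] += n_claims
        totals[group][2] += amount

    estimates = {}
    for group, (exposure, n_claims, amount) in totals.items():
        frequency = n_claims / exposure                   # claims per exposure year
        severity = amount / n_claims if n_claims else 0.0  # average claim size
        estimates[group] = {
            "frequency": frequency,
            "severity": severity,
            "pure_premium": frequency * severity,
        }
    return estimates


estimates = pure_premium_by_group(policies)
for group, values in sorted(estimates.items()):
    print(group, {name: round(value, 2) for name, value in values.items()})
```

In this one-factor case the product collapses to total losses per exposure year, so the decomposition and a direct estimate coincide. The two approaches diverge only once the frequency and severity components use different covariates or model forms.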

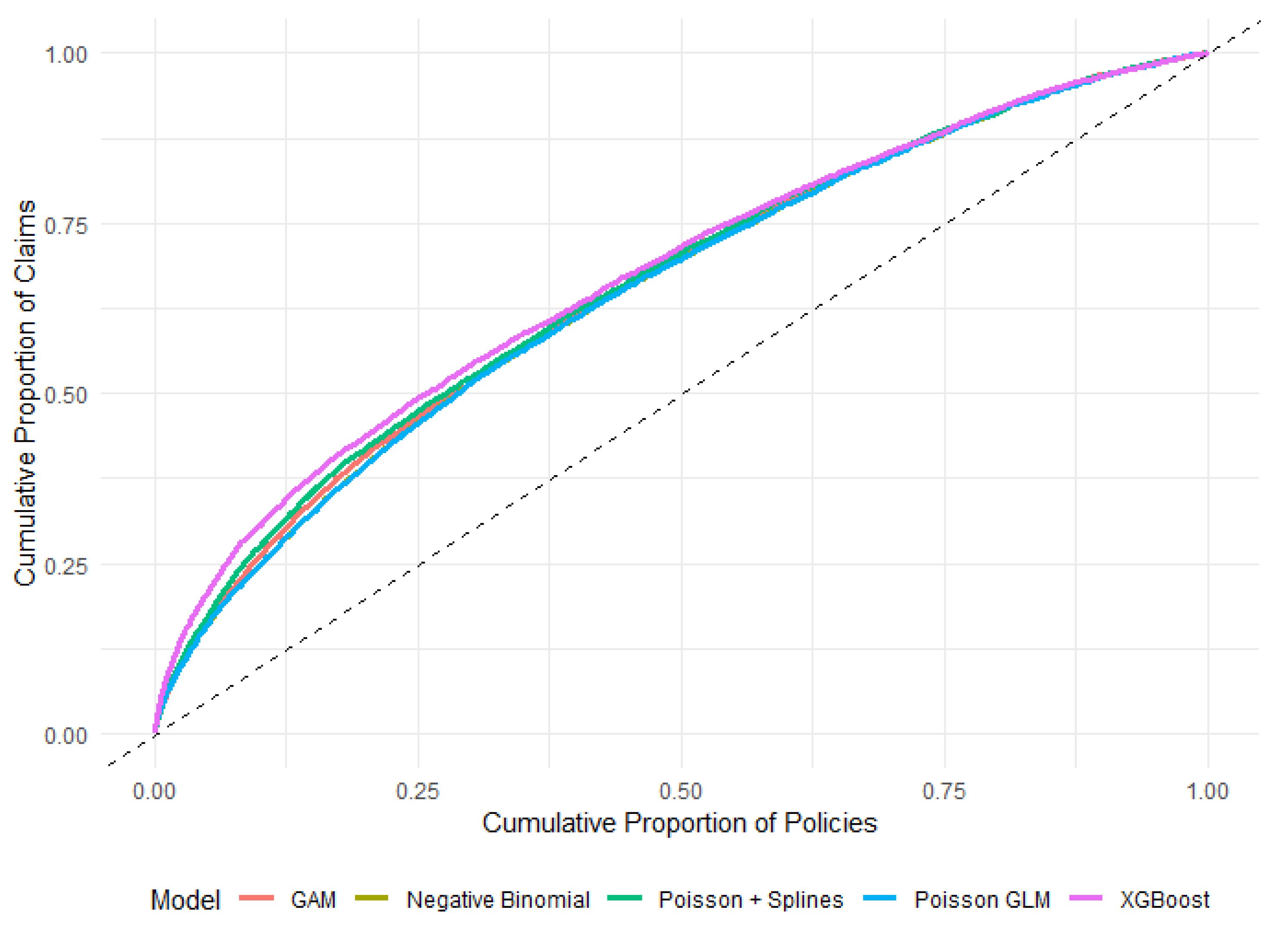

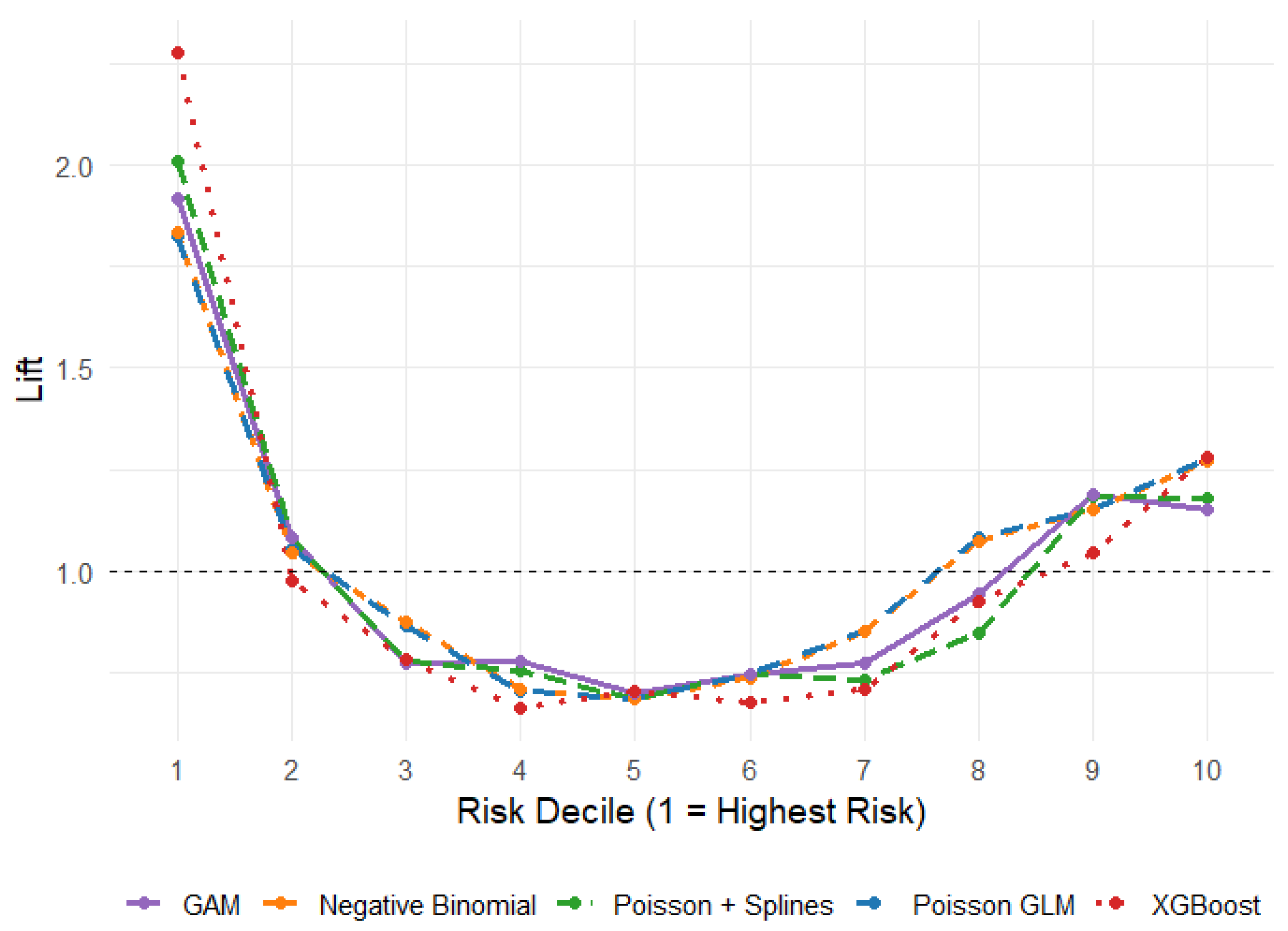

The contribution of this study is threefold. First, we demonstrate that machine learning methods significantly improve predictive performance for both claim frequency and claim severity, particularly in capturing nonlinear relationships and interactions. Second, we provide empirical evidence that the frequency–severity decomposition framework remains superior to direct modeling approaches, even when advanced machine learning methods are applied. Third, we identify a trade-off between prediction accuracy and risk segmentation: while models combining machine learning for both components achieve the best overall accuracy, models emphasizing frequency modeling provide superior identification of high-risk policies.

Overall, this study contributes to the literature on actuarial science and financial mathematics by providing a comprehensive comparison of classical and modern approaches to insurance pricing. The results highlight the continued relevance of the frequency–severity decomposition framework while demonstrating how machine learning can be effectively integrated to enhance predictive performance and risk differentiation.

The remainder of the paper is organized as follows. Section 2 describes the dataset and data preparation procedures; Section 3 presents the modeling framework and statistical methods; Section 4 reports the empirical findings; and Section 5 concludes with a discussion of the results and their implications for insurance pricing and risk management.