Submitted: 31 March 2026

Posted: 31 March 2026

Abstract

Keywords:

1. Introduction

1. To what extent can large language models correctly apply explicitly requested software architectural patterns by creating specific architectural diagrams?

2. How does requirements representation affect the ability of LLMs to apply architectural patterns?

3. How does RAG affect the quality of the LLM-generated diagrams?

4. Can LLMs calculate reliable quantitative metrics regarding the diagrams they generated?

2. Related Work

3. Experimental Design

- Requirements format: functional and non-functional requirements expressed either as textual lists or as an SRS document, each in two variants, as discussed below.

- Model type and size: locally executed models with varying numbers of parameters, as well as publicly available commercial models.

- Use of RAG: enabled or disabled.

- RAG source material: architectural reference material obtained either from academic textbooks or from curated web-based descriptions of architectural patterns.

- Embedding and retrieval configuration: embedding model, chunking strategy, and retrieval parameters used in the RAG pipeline.
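
The factors listed above can be pictured as a grid of experimental configurations. The following is a minimal sketch, assuming a full cross product of factor levels; the level names (`text_list_v1`, `local_small`, and so on) and the `ExperimentConfig` class are hypothetical placeholders and do not reproduce the study's actual models, documents, or the subset of combinations that was run. The embedding and retrieval settings are omitted for brevity.

```python
from dataclasses import dataclass
from itertools import product
from typing import Optional

# Hypothetical factor levels, chosen only for illustration.
REQUIREMENT_FORMATS = ["text_list_v1", "text_list_v2", "srs_v1", "srs_v2"]
MODELS = ["local_small", "local_large", "commercial"]
RAG_SOURCES = [None, "textbook", "web"]  # None means RAG is disabled


@dataclass(frozen=True)
class ExperimentConfig:
    """One combination of the experimental factors (illustrative only)."""

    requirements_format: str
    model: str
    rag_source: Optional[str]

    @property
    def rag_enabled(self) -> bool:
        return self.rag_source is not None


def enumerate_configs() -> list[ExperimentConfig]:
    """Return the full cross product of the factor levels."""
    return [
        ExperimentConfig(fmt, model, source)
        for fmt, model, source in product(REQUIREMENT_FORMATS, MODELS, RAG_SOURCES)
    ]


configs = enumerate_configs()
print(len(configs), "configurations,", sum(c.rag_enabled for c in configs), "with RAG")
```

Treating "RAG disabled" as a missing source keeps the RAG source factor from being combined with runs in which no retrieval takes place.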

3.1. Experimental Setup

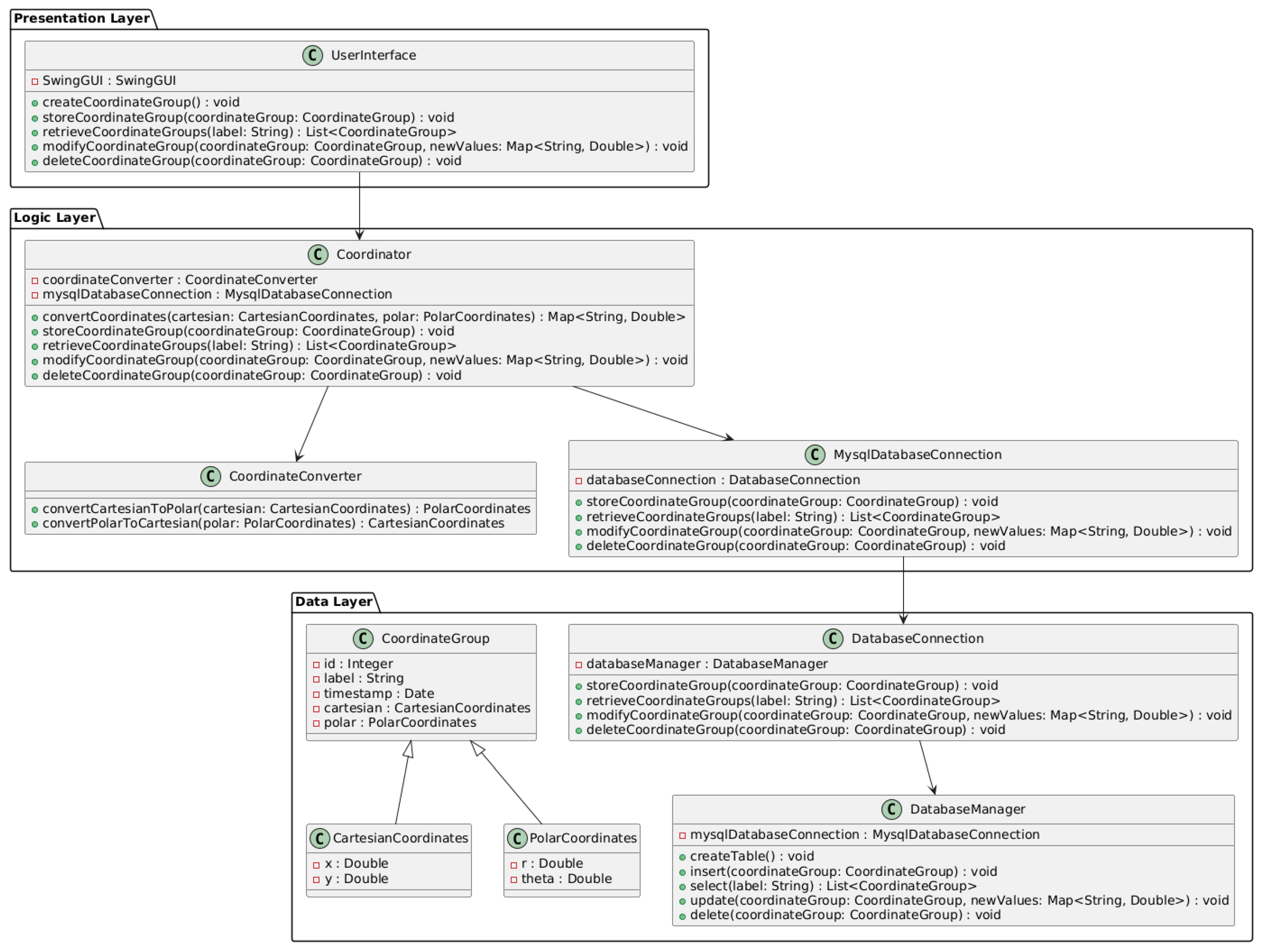

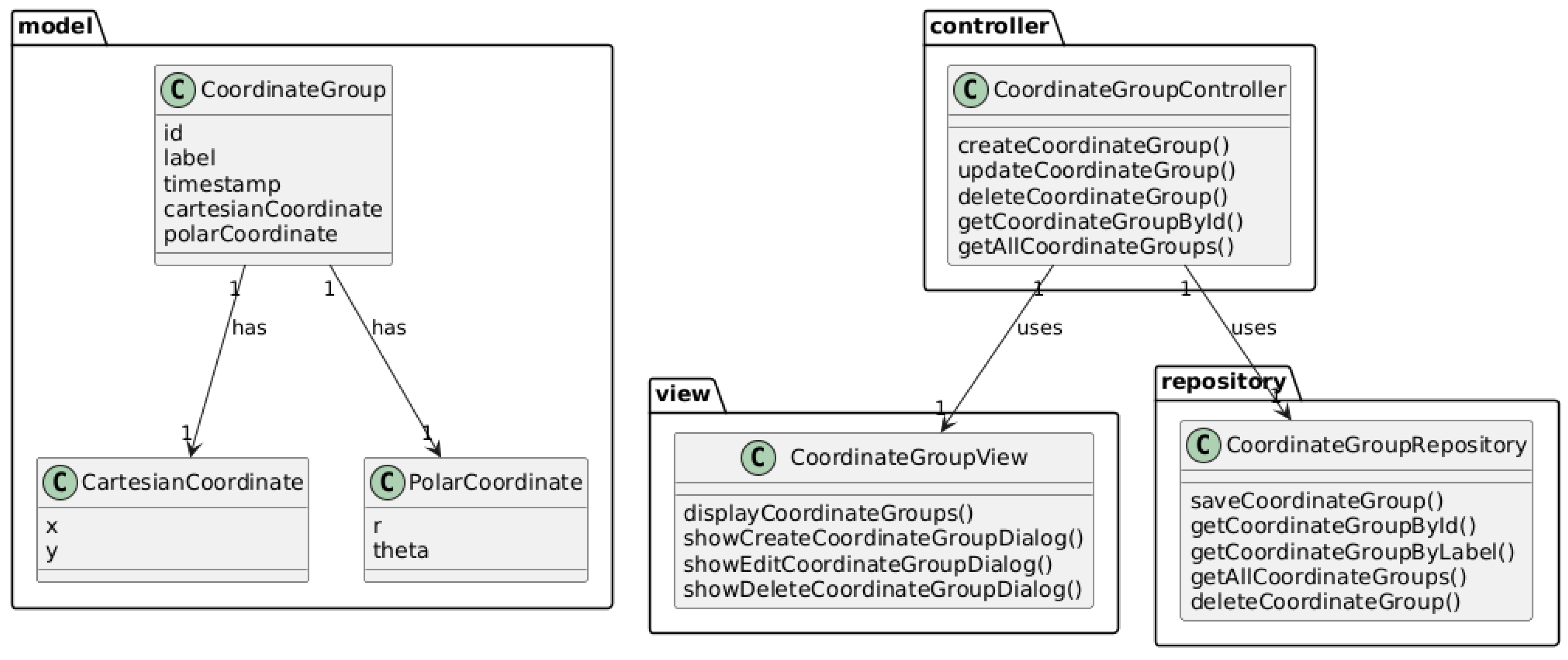

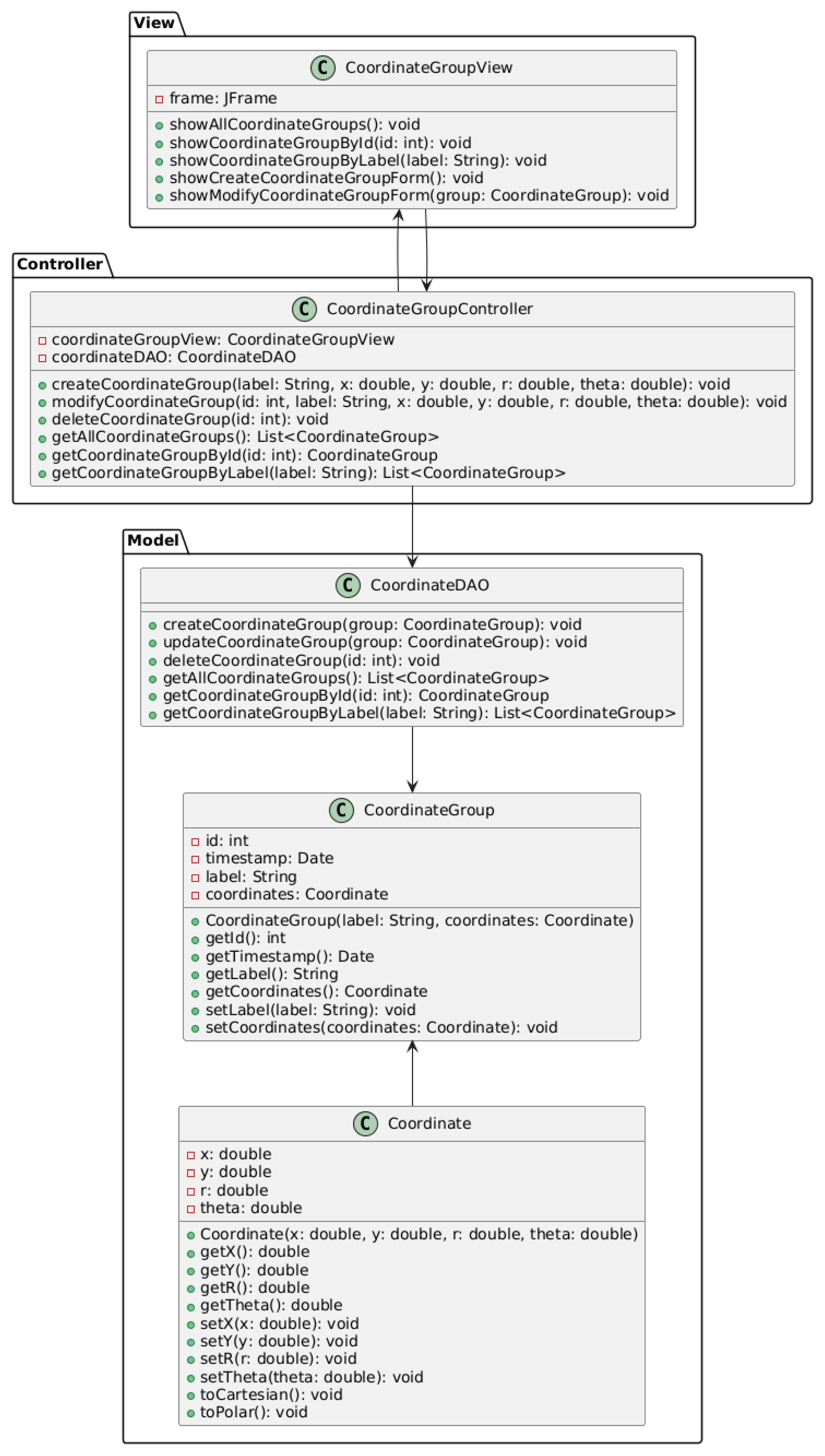

3.2. Phase 1: Dummy Coordinate Converter

3.3. Phase 2: MyCharts App, Architected as Microservices

3.4. Qualitative Evaluation Dimensions

Phase 1 Evaluation Criteria

- Adherence to architecture: whether the LLM-generated diagram respects the structural principles of the requested architectural pattern and assigns responsibilities to appropriate components.

- Correctness of class relationships: whether associations, dependencies, and directions of interaction between classes are appropriate.

- Cohesion and coupling: whether related responsibilities are grouped within appropriate components while unnecessary inter-class dependencies are avoided.

- Consistency with requirements: whether the generated architecture satisfies the specific in-context functional and non-functional requirements described in the input.

Phase 2 Evaluation Criteria

- Functional alignment and responsibility distribution: whether each microservice corresponds to a bounded context and implements a focused set of functionalities.

- Coupling and deployment independence: whether services are loosely coupled and designed for independent deployment.

- Cohesion: whether each service maintains strong internal cohesion and whether use cases involve the appropriate set of services.

- Data management: whether each service owns and manages its own data rather than relying on shared databases.

- Data consistency: whether the design includes mechanisms for maintaining data consistency across services, for example through events or transactions.

- Communication and flow control: whether service interactions are implemented using appropriate coordination mechanisms such as choreography, orchestration, messaging systems, or API gateways.

- Non-functional requirements: whether the architecture satisfies the system-level non-functional requirements described in the input specification.
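
The data management criterion above lends itself to a simple mechanical check once a generated diagram has been transcribed into a mapping from services to the datastores they access. The sketch below is an illustration under that assumption; the function `find_shared_datastores` and the service and datastore names are hypothetical, and the expert evaluation described in this section is not claimed to have been automated this way.

```python
from collections import defaultdict


def find_shared_datastores(service_datastores: dict[str, set[str]]) -> dict[str, list[str]]:
    """Return every datastore accessed by more than one service.

    An empty result means each datastore has exactly one owning service.
    """
    users: dict[str, list[str]] = defaultdict(list)
    for service, stores in service_datastores.items():
        for store in stores:
            users[store].append(service)
    return {store: sorted(svcs) for store, svcs in users.items() if len(svcs) > 1}


# Hypothetical transcription of a generated microservices diagram.
design = {
    "chart-service": {"charts_db"},
    "user-service": {"users_db"},
    "billing-service": {"billing_db", "users_db"},
}

shared = find_shared_datastores(design)
print(shared)
```

In this example `users_db` is reported because both `billing-service` and `user-service` access it, which is the kind of shared-database dependency the criterion is meant to flag.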

3.5. Quantitative Metrics for Microservices

- Statelessness index

- Data ownership coverage

- Service transaction share

- Service interface complexity

- Afferent service coupling

- Efferent service coupling
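
As an illustration of the last two metrics, the sketch below computes afferent and efferent service coupling from a call graph, using the common definitions: afferent coupling counts the distinct services that depend on a service, and efferent coupling counts the distinct services it depends on. These definitions are an assumption here, since the exact formulas used in the study are not reproduced in this section; the function `service_coupling` and the service names are hypothetical.

```python
def service_coupling(calls: dict[str, set[str]]) -> dict[str, dict[str, int]]:
    """Compute afferent and efferent coupling per service.

    `calls` maps each service to the set of services it invokes.
    Self-calls are ignored; each caller/target pair is counted once.
    """
    services = set(calls) | {t for targets in calls.values() for t in targets}
    metrics = {s: {"afferent": 0, "efferent": 0} for s in services}
    for caller, targets in calls.items():
        for target in targets:
            if target == caller:
                continue
            metrics[caller]["efferent"] += 1  # caller depends on target
            metrics[target]["afferent"] += 1  # target is depended upon
    return metrics


# Hypothetical call graph transcribed from a generated diagram.
calls = {
    "gateway": {"charts", "users"},
    "charts": {"users"},
    "users": set(),
}

metrics = service_coupling(calls)
for name in sorted(metrics):
    print(name, metrics[name])
```

Computing the values independently from a transcribed call graph gives a reference against which metric values reported by an LLM for its own diagram can be compared.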

4. Results

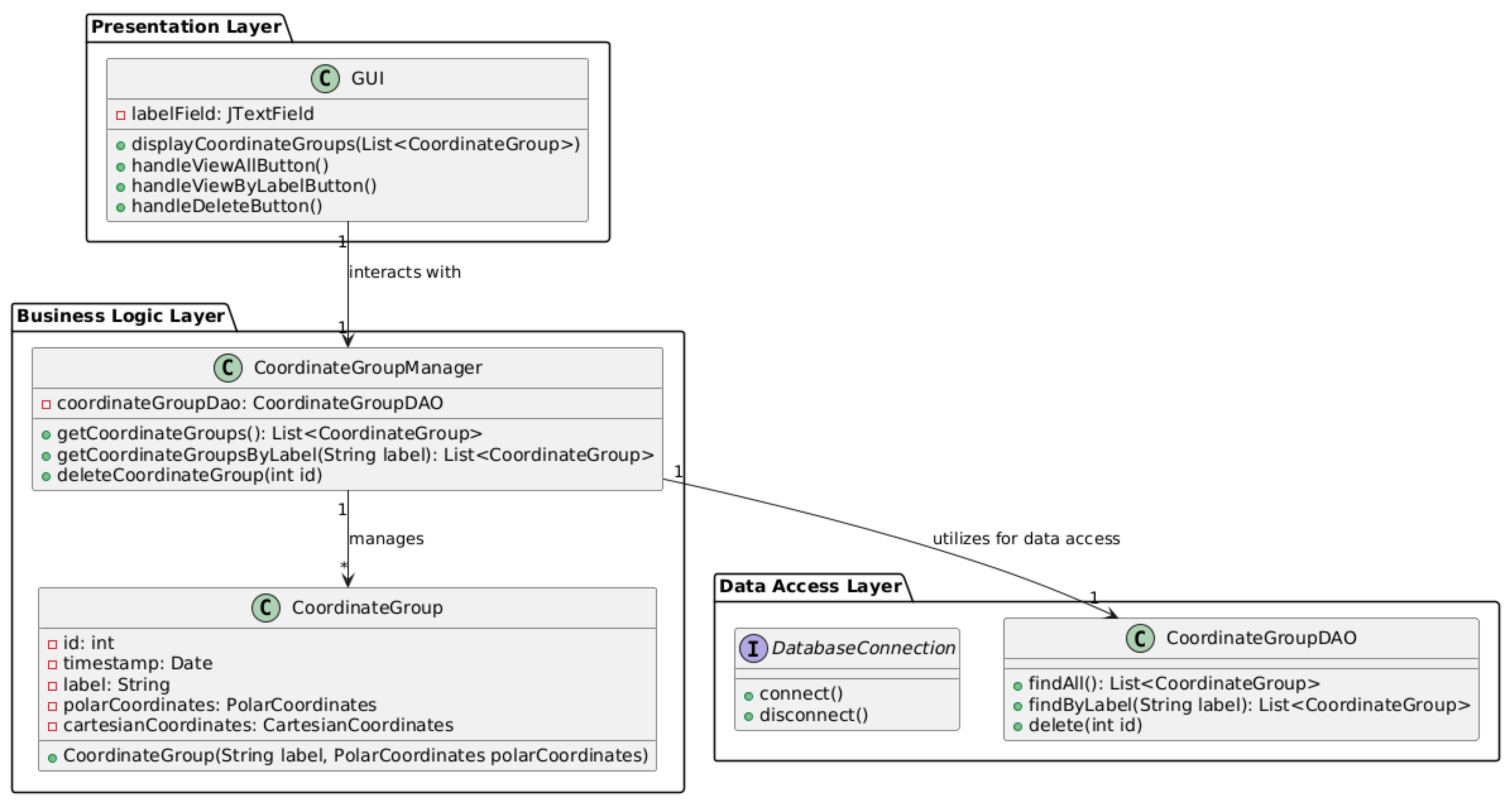

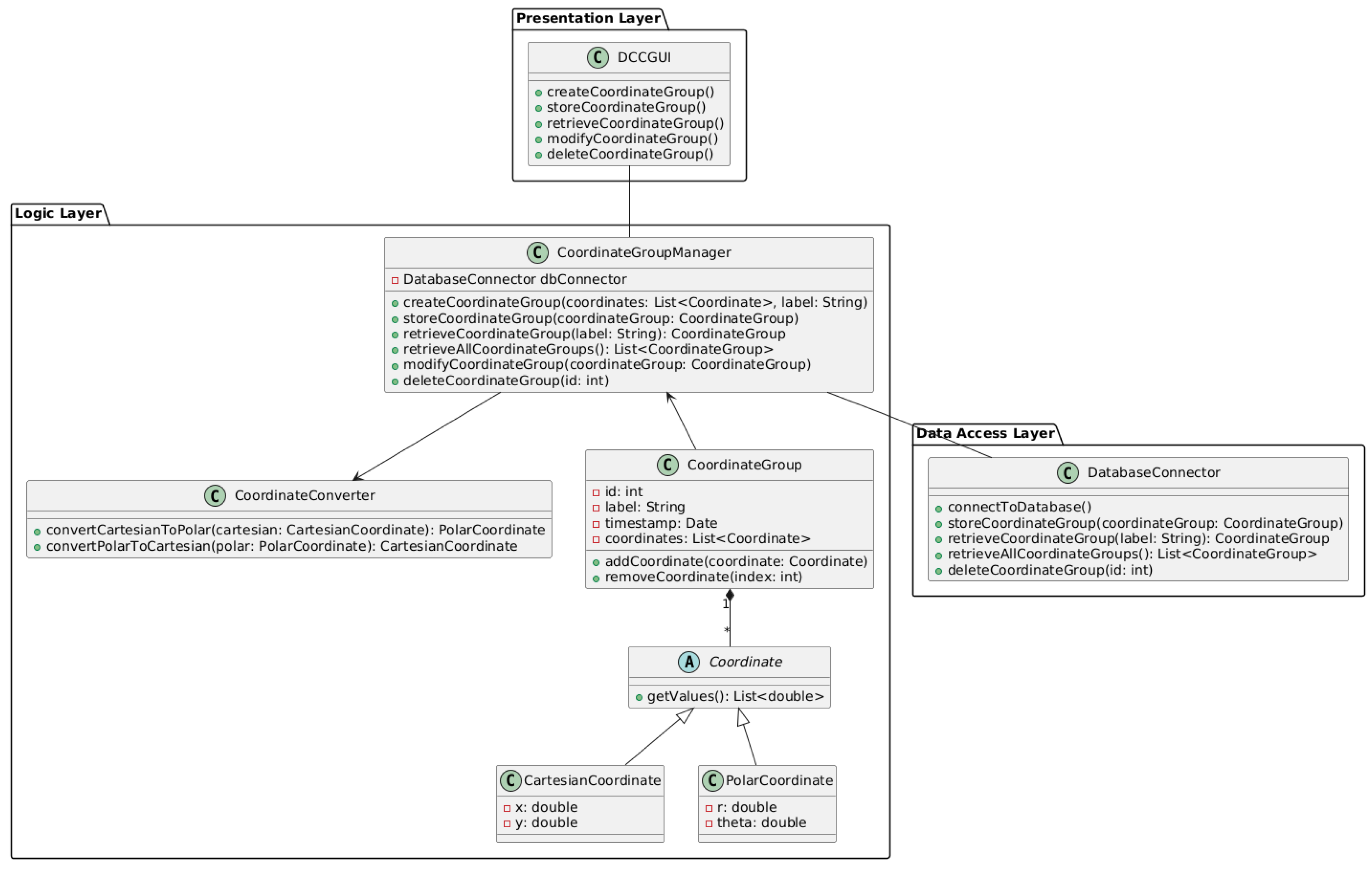

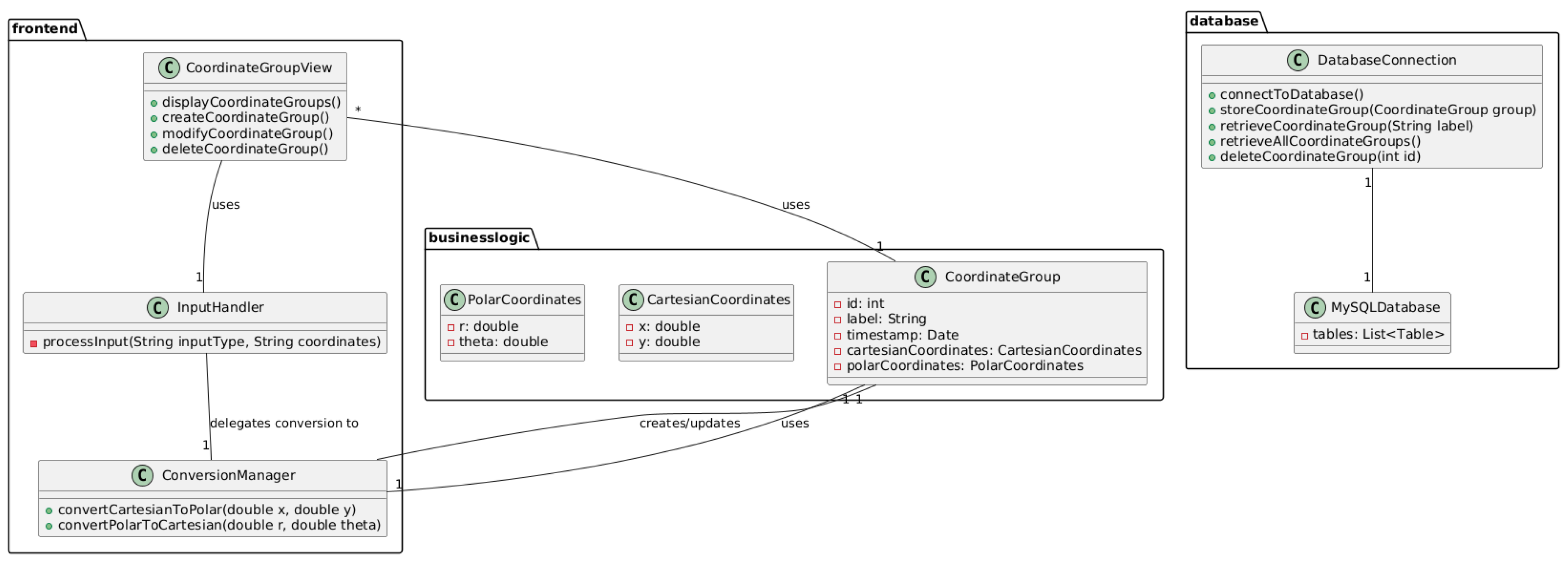

4.1. Examples of Generated UML Diagrams

4.2. Answers to Research Questions

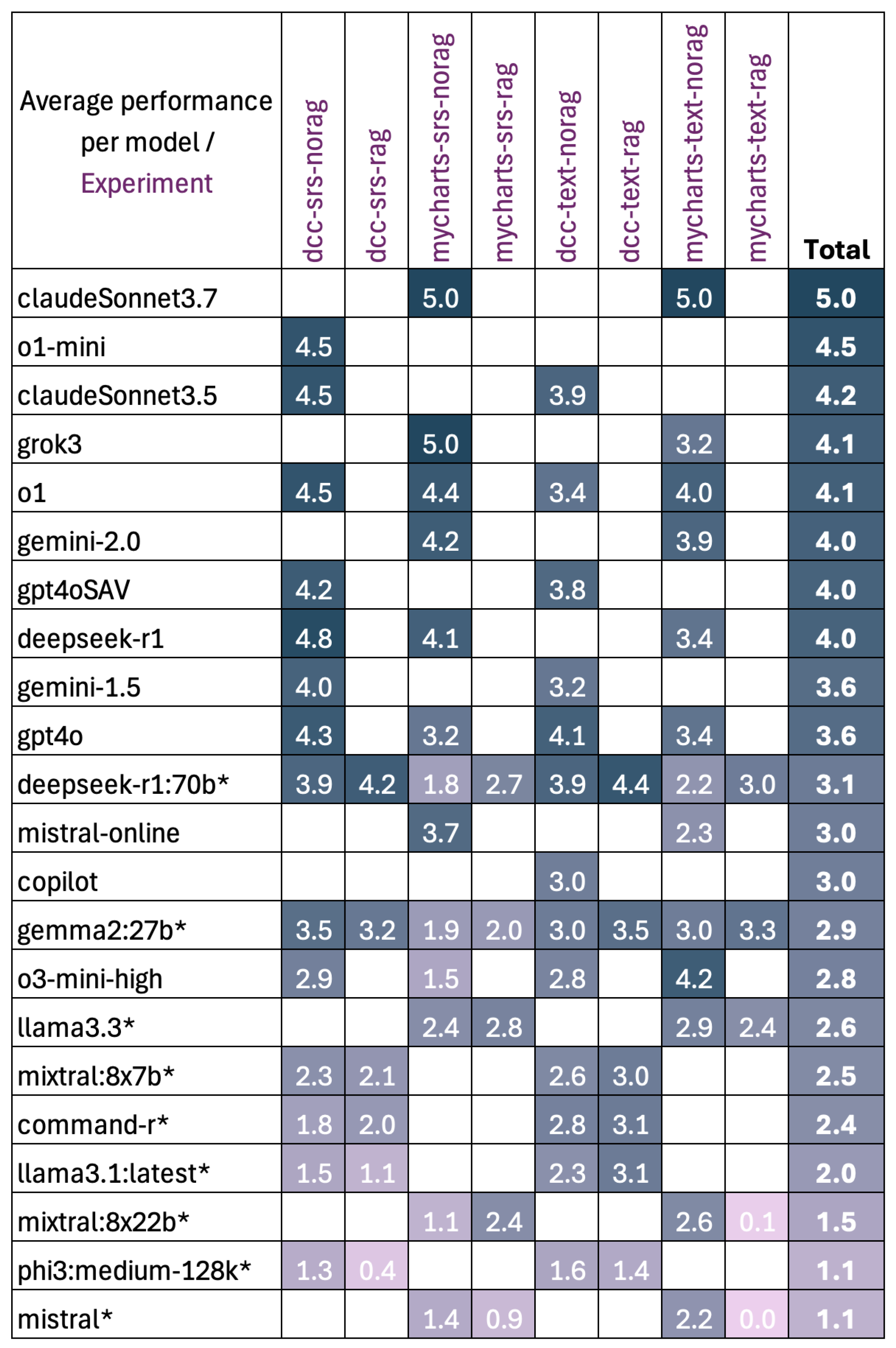

4.3. RQ1: To What Extent Can Large Language Models Correctly Apply Explicitly Requested Software Architectural Patterns by Creating Specific Architectural Diagrams?

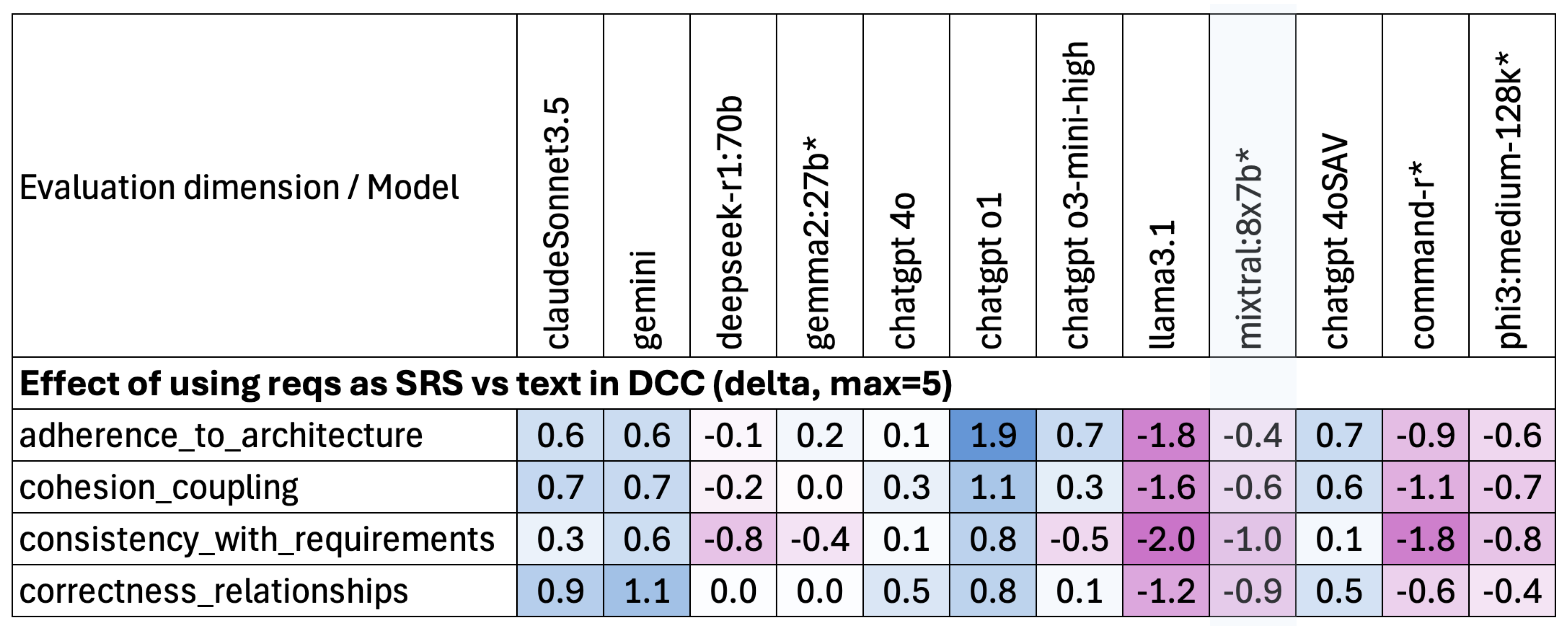

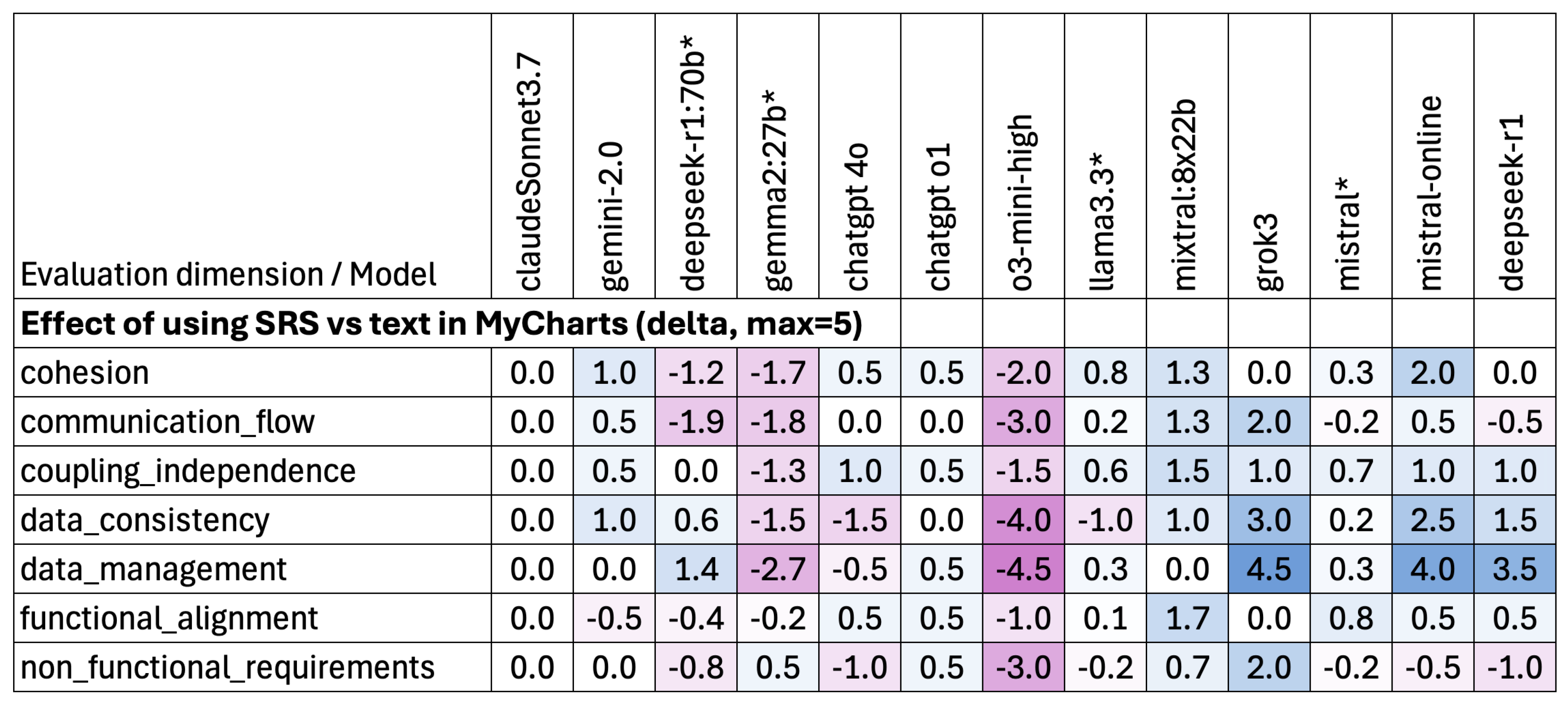

4.4. RQ2: How Does Requirements Representation Affect the Ability of LLMs to Apply Architectural Patterns?

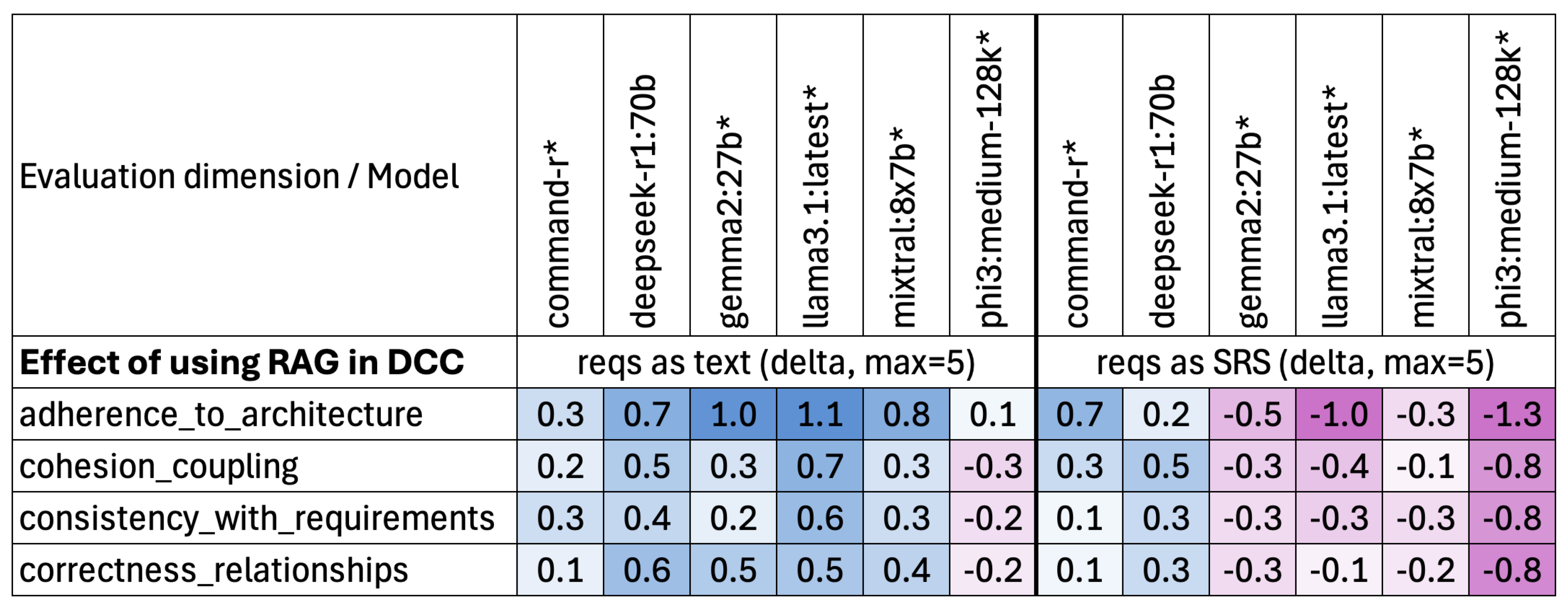

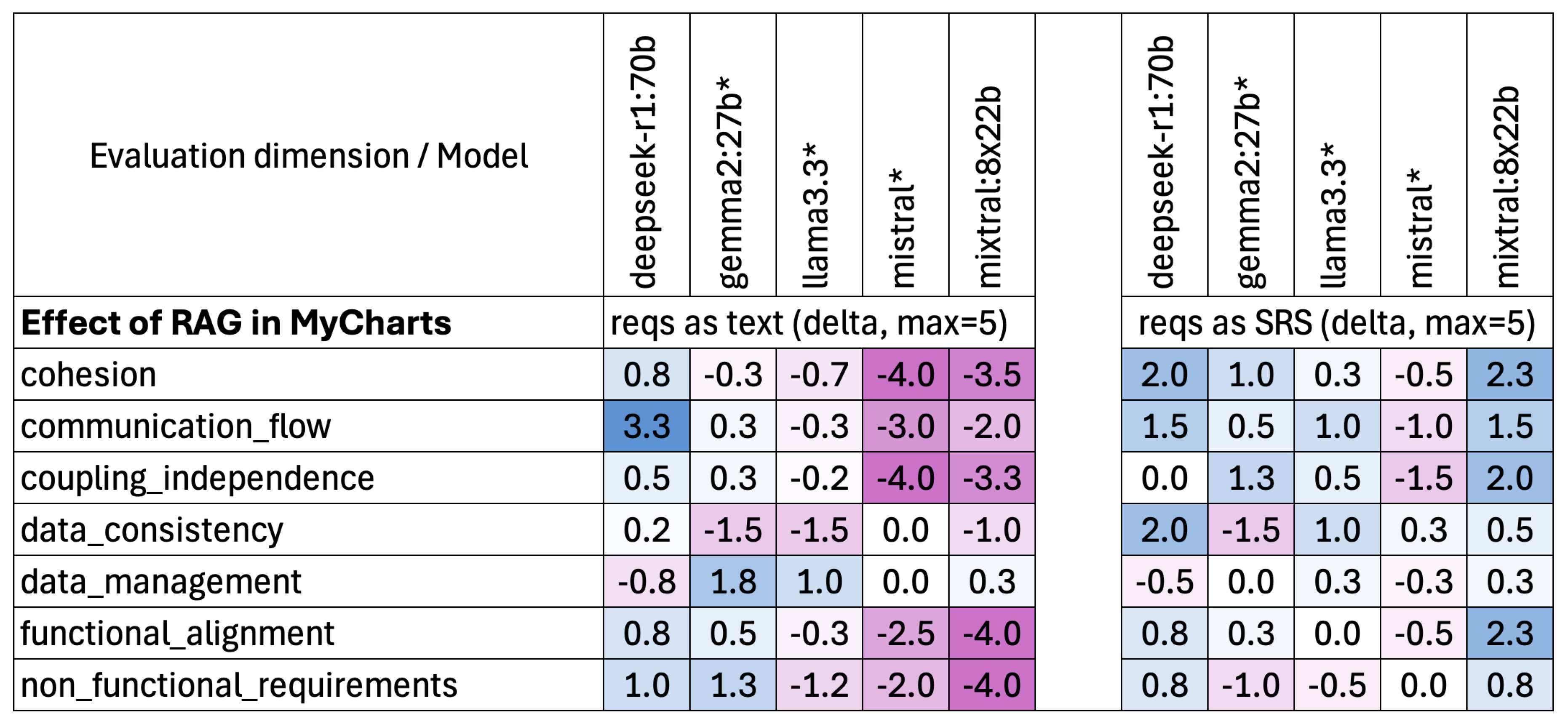

4.5. RQ3: How Does RAG Affect the Quality of the LLM-Generated Diagrams?

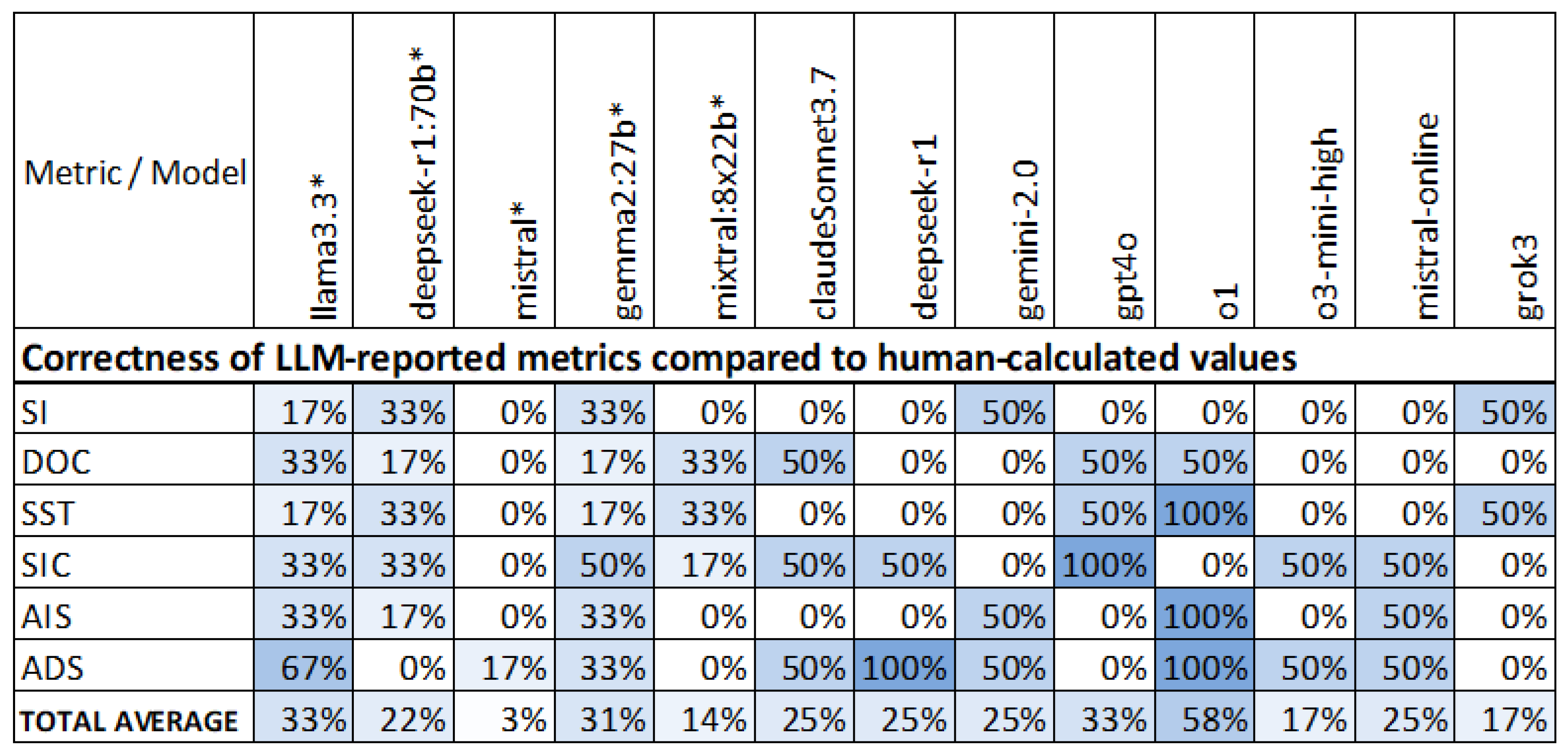

4.6. RQ4: Can LLMs Calculate Reliable Quantitative Metrics Regarding the Diagrams They Generated?

5. Threats to Validity

5.1. Internal Validity

5.1.1. Subjectivity of Expert Evaluation

5.1.2. Single-Run Executions

5.2. Construct Validity

5.2.1. Architectural Representation

5.3. External Validity

5.3.1. Model Selection

5.3.2. Problem Scope and Architectural Patterns

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).