Submitted: 29 March 2026

Posted: 30 March 2026


Abstract

Keywords:

1. Introduction

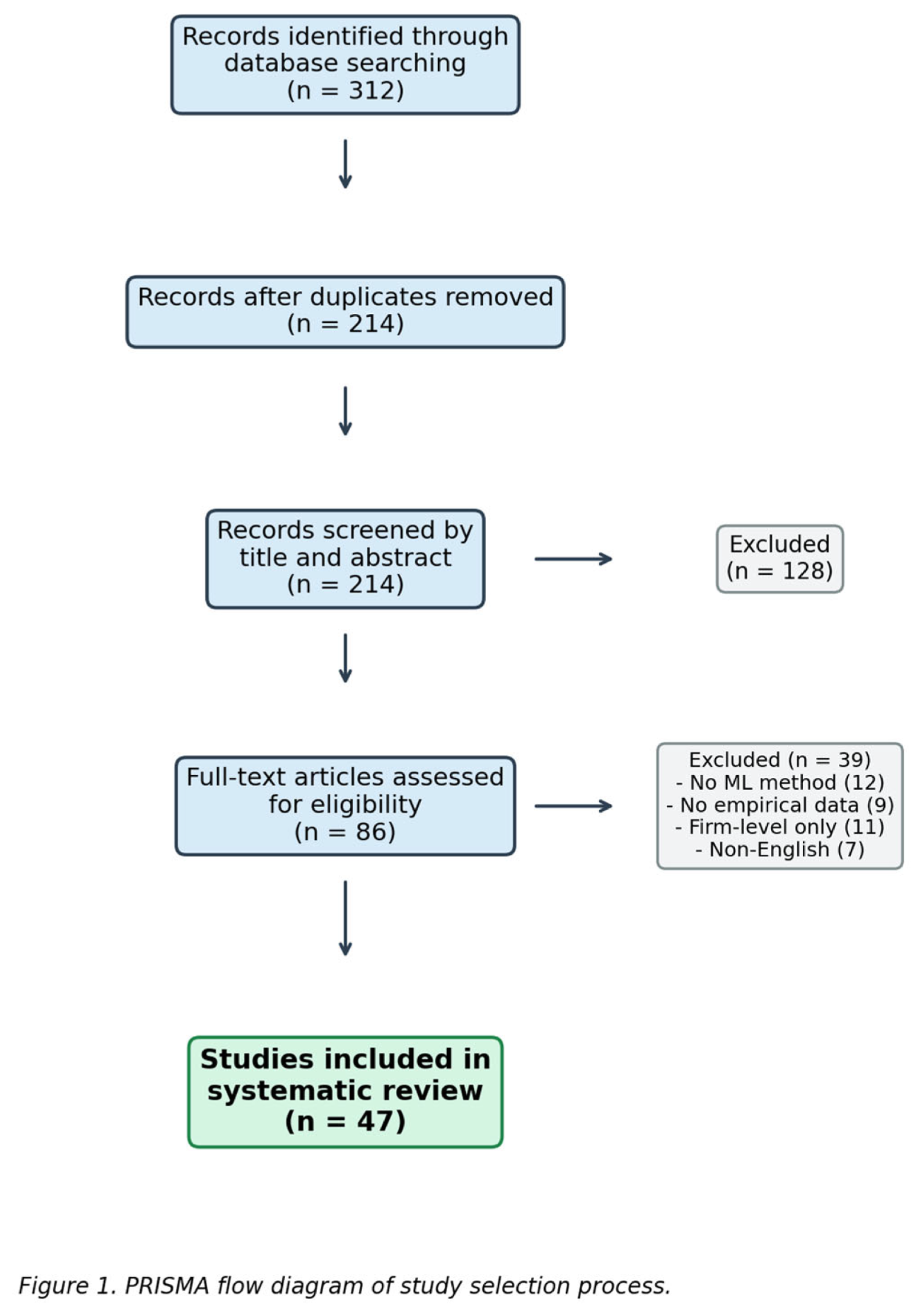

2. Review Methodology

3. The Old Guard: Traditional Approaches to Crisis Prediction

4. Tree-Based Ensemble Methods: The Workhorses

5. Neural Networks and Deep Learning: Power and Peril

6. Support Vector Machines and Kernel Methods

7. Natural Language Processing and Sentiment-Based Approaches

8. Hybrid and Emerging Approaches

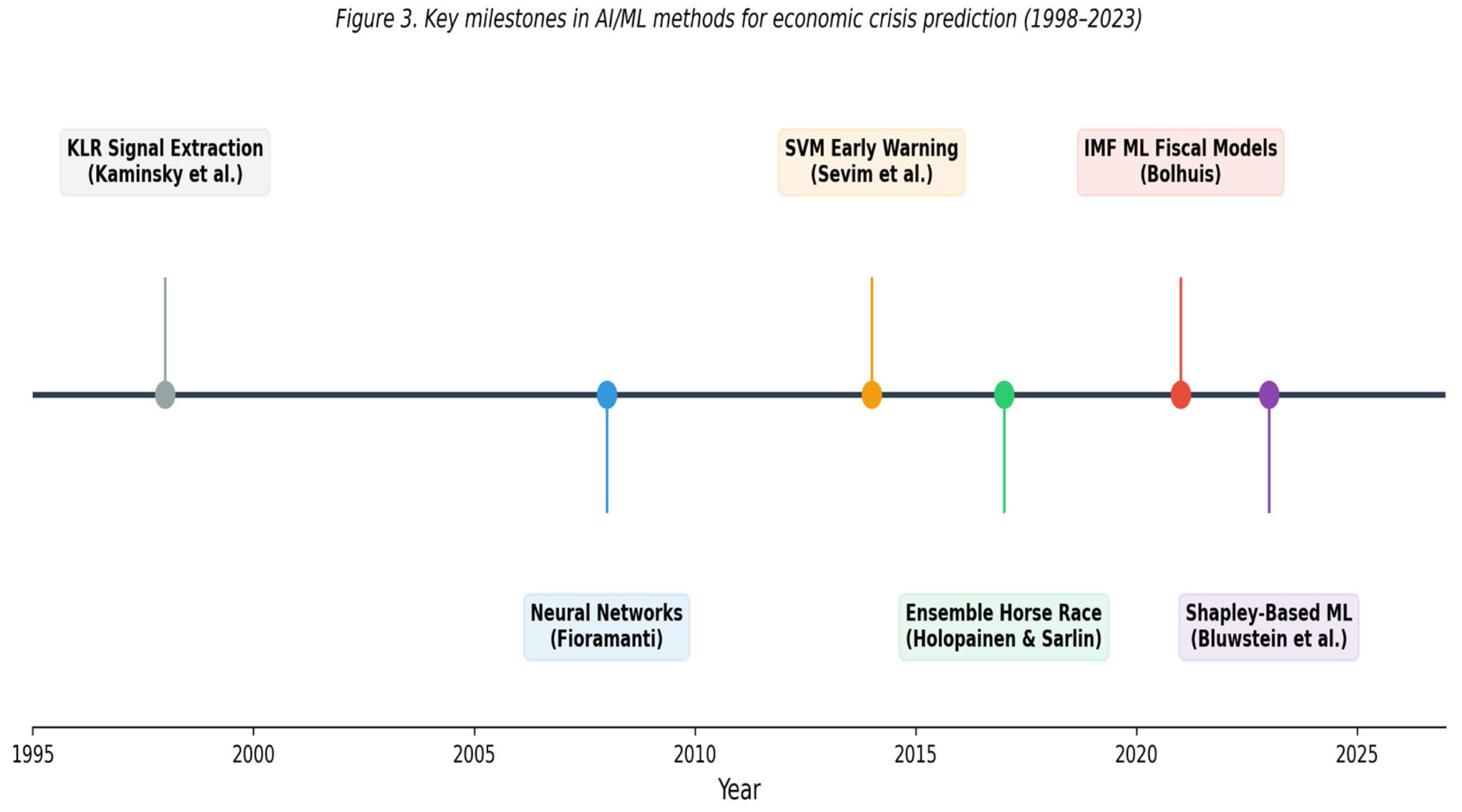

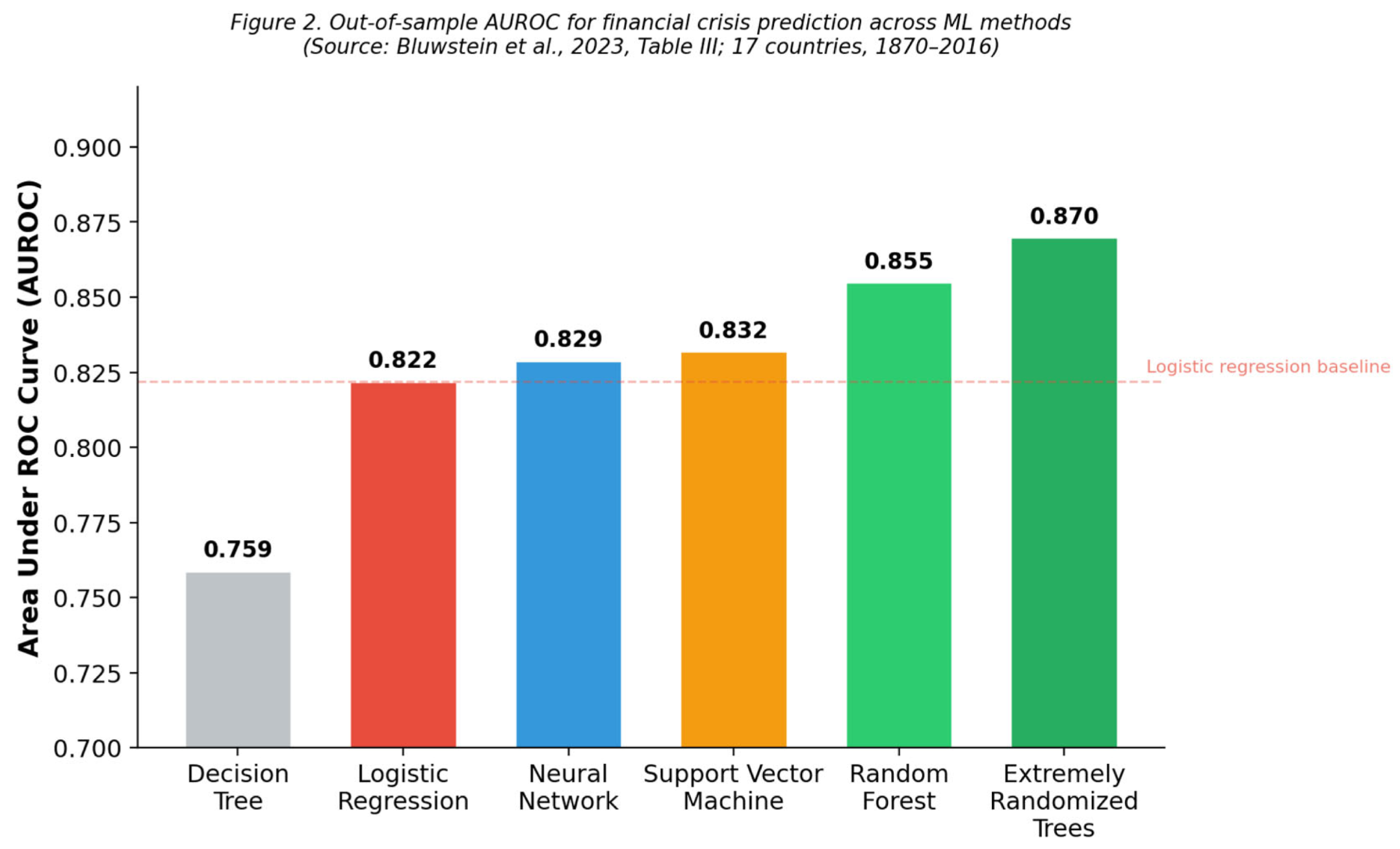

9. Comparative Synthesis

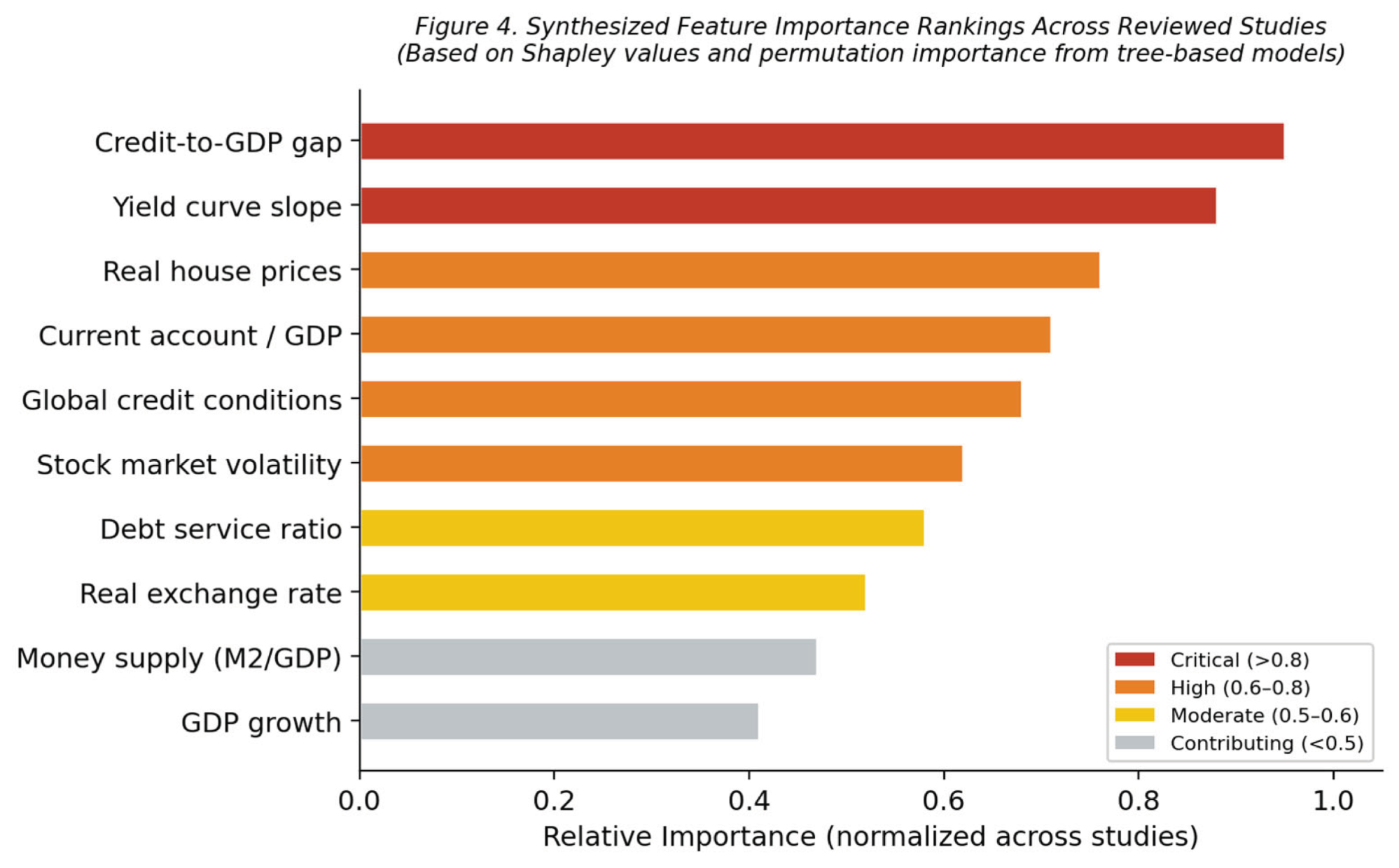

10. What Actually Predicts Crises? A Synthesis of Predictors

11. Challenges and Open Questions

11.1. The Rarity Problem

11.2. The Interpretability Imperative

11.3. Concept Drift

11.4. Evaluation Fragmentation

12. Conclusions and Future Directions

Data Availability Statement

Conflicts of Interest

References

- Barboza, F.; Kimura, H.; Altman, E. Machine learning models and bankruptcy prediction. Expert Systems with Applications 2017, 83, 405–417.

- Bluwstein, K.; Buckmann, M.; Joseph, A.; Kapadia, S.; Şimşek, Ö. Credit growth, the yield curve and financial crisis prediction: Evidence from a machine learning approach. Journal of International Economics 2023, 145, 103773.

- Bolhuis, M. Predicting fiscal crises: A machine learning approach. IMF Working Paper No. 2021/150; 2021.

- Bussière, M.; Fratzscher, M. Towards a new early warning system of financial crises. Journal of International Money and Finance 2006, 25(6), 953–973.

- Chan-Lau, J.A.; Hu, R.; Ivanyna, M.; Mitra, S.; Okwuosa, I. Surrogate data models: Interpreting large-scale machine learning crisis prediction models. IMF Working Paper No. 2023/041; 2023.

- Chatzis, S.P.; Siakoulis, V.; Petropoulos, A.; Stavroulakis, E.; Vlachogiannakis, N. Forecasting stock market crisis events using deep and statistical machine learning techniques. Expert Systems with Applications 2018, 112, 353–371.

- Davis, E.P.; Karim, D. Comparing early warning systems for banking crises. Journal of Financial Stability 2008, 4(2), 89–120.

- Demirgüç-Kunt, A.; Detragiache, E. The determinants of banking crises in developing and developed countries. IMF Staff Papers 1998, 45(1), 81–109.

- Du, K.; Zhao, Y.; Mao, R.; Xing, F.; Cambria, E. Natural language processing in finance: A survey. Information Fusion 2024, 108, 102438.

- Filippou, D.; Goda, T.; Mihaylov, E.; Verbrugge, R. Regional economic sentiment: Constructing quantitative estimates from the Beige Book. Federal Reserve Bank of Cleveland Economic Commentary 2024, 08.

- Fioramanti, M. Predicting sovereign debt crises using artificial neural networks: A comparative approach. Journal of Financial Stability 2008, 4(2), 149–164.

- Fouliard, J.; Howell, M.; Rey, H.; Stavrakeva, V. Answering the Queen: Machine learning and financial crises. NBER Working Paper No. 28302; 2021.

- Greenwood, R.; Hanson, S.G.; Shleifer, A.; Sørensen, J.A. Predictable financial crises. The Journal of Finance 2022, 77(2), 863–921.

- Gu, S.; Kelly, B.; Xiu, D. Empirical asset pricing via machine learning. The Review of Financial Studies 2020, 33(5), 2223–2273.

- Holopainen, M.; Sarlin, P. Toward robust early-warning models: A horse race, ensembles and model uncertainty. Quantitative Finance 2017, 17(12), 1933–1963.

- Joy, M.; Rusnák, M.; Šmídková, K.; Vašíček, B. Banking and currency crises: Differential diagnostics for developed countries. International Journal of Finance and Economics 2017, 22(1), 44–67.

- Kaminsky, G.L.; Lizondo, S.; Reinhart, C.M. Leading indicators of currency crises. IMF Staff Papers 1998, 45(1), 1–48.

- Kou, G.; Chao, X.; Peng, Y.; Alsaadi, F.E.; Herrera-Viedma, E. Machine learning methods for systemic risk analysis in financial sectors. Technological and Economic Development of Economy 2019, 25(5), 716–742.

- Laeven, L.; Valencia, F. Systemic banking crises database II. IMF Economic Review 2020, 68(2), 307–361.

- Liu, L.; Chen, C.; Wang, B. Predicting financial crises with machine learning methods. Journal of Forecasting 2022, 41(5), 871–910.

- Petropoulos, F.; et al. Forecasting: Theory and practice. International Journal of Forecasting 2022, 38(3), 845–1045.

- Reinhart, C.M.; Rogoff, K.S. This Time Is Different: Eight Centuries of Financial Folly; Princeton University Press, 2009.

- Sevim, C.; Oztekin, A.; Bali, O.; Gumus, S.; Guresen, E. Developing an early warning system to predict currency crises. European Journal of Operational Research 2014, 237(3), 1095–1104.

- Tölö, E. Predicting systemic financial crises with recurrent neural networks. Journal of Financial Stability 2020, 49, 100746.

| Method Family | Predictive Accuracy | Interpretability | Data Requirements | Calibration | Scalability |
|---|---|---|---|---|---|
| Tree-Based Ensembles | High (AUROC 0.85–0.87) | Moderate (Shapley values) | Moderate | Strong | High |
| Neural Networks/Deep Learning | High (with sufficient data) | Low | High | Moderate | Moderate |
| Support Vector Machines | Moderate–High (AUROC 0.83) | Low–Moderate | Low–Moderate | Weak (needs post-processing) | Low |
| NLP/Sentiment-Based | Moderate–High (improving) | Moderate–High | Moderate (text corpus needed) | Varies | Moderate |
| Hybrid/Multi-Source | Highest (emerging evidence) | Low–Moderate | High | Varies | Low–Moderate |
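The accuracy column above is reported in AUROC. As a reading aid, the following is a minimal sketch of how that statistic can be computed from its pairwise-ranking definition: the share of (crisis, non-crisis) observation pairs in which the crisis observation receives the higher predicted score, with ties counted as one half. The function name `auroc` and the toy labels and scores are hypothetical illustrations and are not taken from any of the reviewed studies.

```python
from typing import Sequence


def auroc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Pairwise-ranking AUROC: P(score of a crisis obs > score of a calm obs).

    labels: 1 marks a crisis observation, 0 a non-crisis observation.
    scores: model-assigned crisis scores (higher means more crisis-like).
    Ties between a crisis and a non-crisis score count as half a win.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one crisis and one non-crisis label")

    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical toy sample: two crisis observations among eight (rare events).
toy_labels = [0, 0, 0, 0, 1, 0, 0, 1]
toy_scores = [0.05, 0.10, 0.30, 0.20, 0.60, 0.70, 0.15, 0.40]
toy_auroc = auroc(toy_labels, toy_scores)
```

Because the statistic depends only on how scores are ordered, it says nothing about whether predicted probabilities are well calibrated, which is why the table lists calibration in a separate column. The quadratic pairwise loop is chosen for transparency; a rank-based formulation would be preferable on large panels.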

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.