Submitted: 27 March 2026

Posted: 30 March 2026

Abstract

Keywords:

1. Introduction

2. Related Work

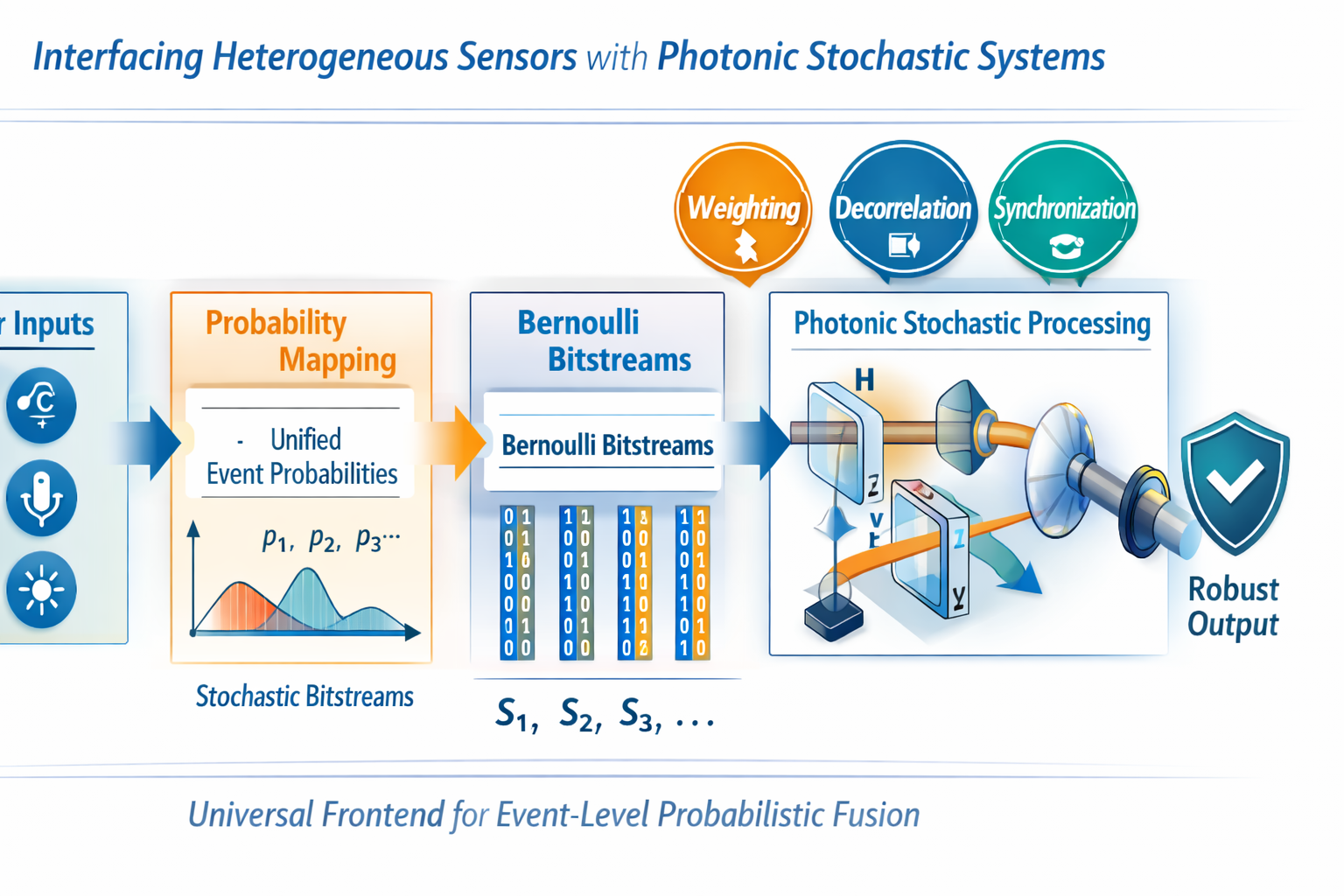

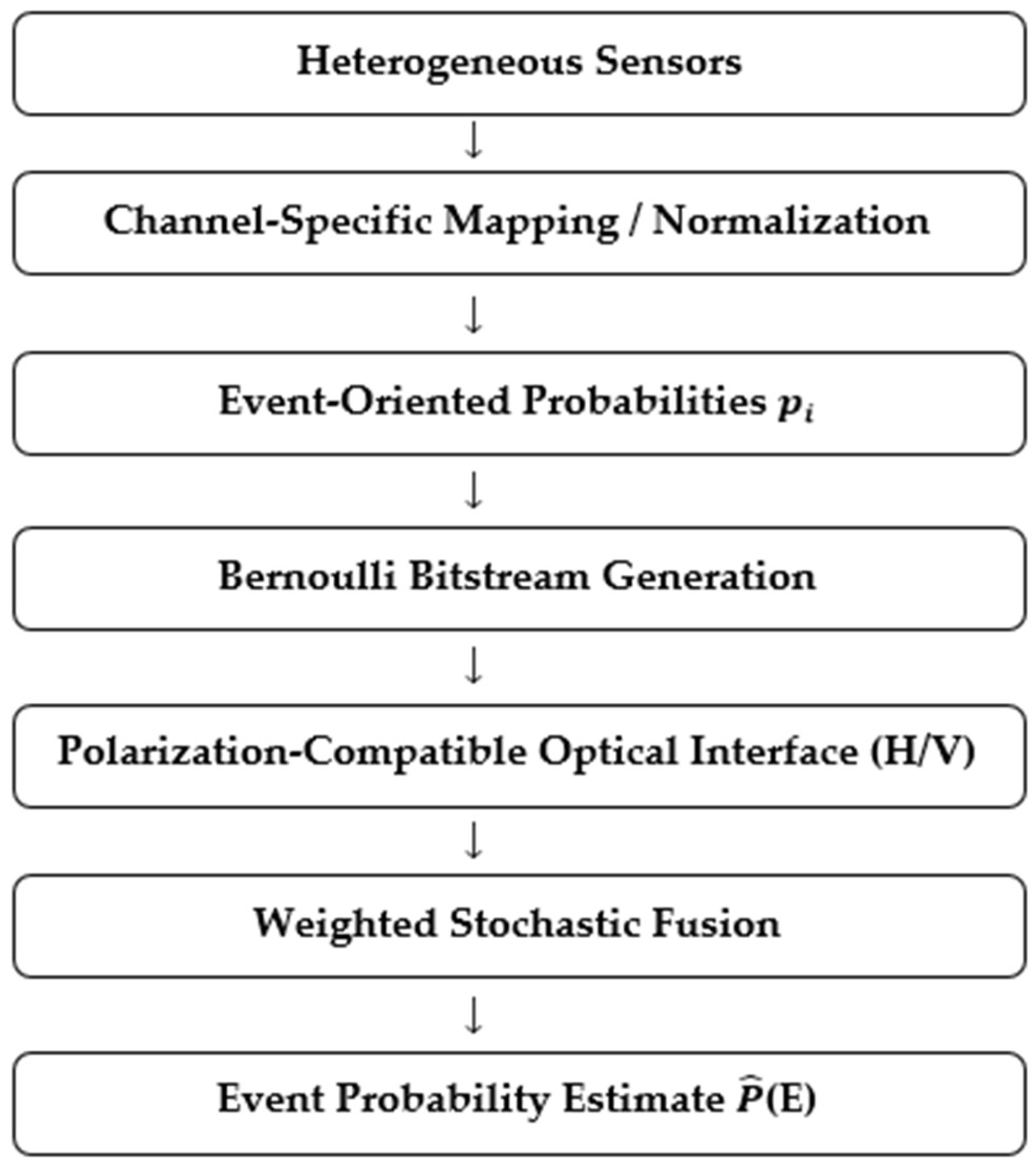

3. System Architecture

3.1. Heterogeneous Sensor Layer

3.2. Event-Oriented Mapping

3.3. Stochastic Edge Representation

3.4. Polarization-Compatible Optical Interface

3.5. Event-Level Fusion Block

3.6. Core Design Variables

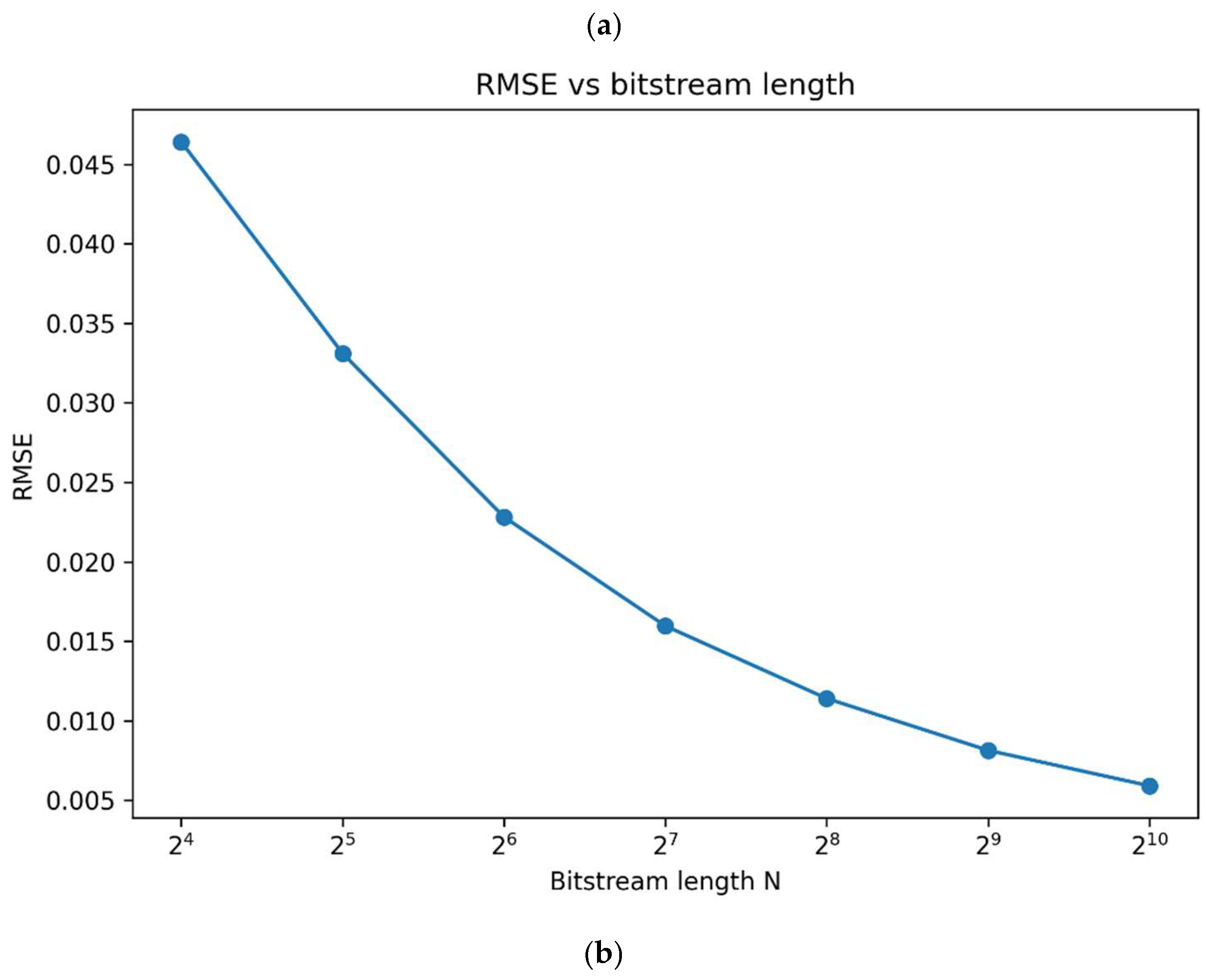

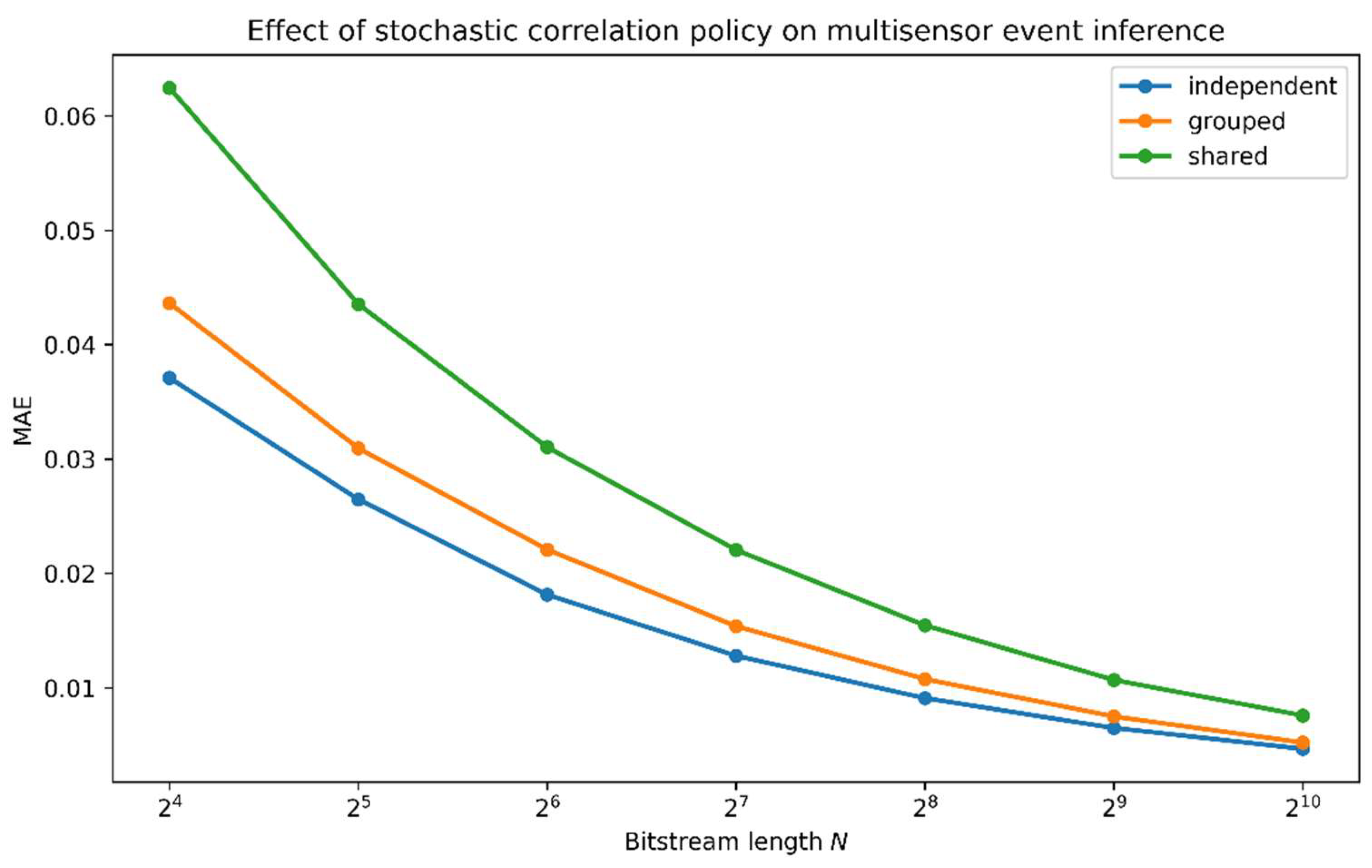

- Weight assignment. The influence of each sensor channel is controlled by its weight $w_i$, which may be set equally, manually, according to channel reliability, or by data-driven calibration.
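As a minimal sketch of three of the weight assignment options named above, the snippet below builds normalized weight vectors. The normalization to unit sum, the inverse-variance rule used for the reliability-aware case, and all function names and numeric values are illustrative assumptions, not taken from the paper; data-driven calibration is not shown.

```python
def normalize(raw):
    """Scale non-negative raw scores so that the weights sum to 1."""
    total = sum(raw)
    if total <= 0:
        raise ValueError("at least one weight must be positive")
    return [r / total for r in raw]

def equal_weights(n_channels):
    """Every channel gets the same influence."""
    return normalize([1.0] * n_channels)

def manual_weights(raw):
    """Hand-picked relative importances, rescaled to sum to 1."""
    return normalize(raw)

def reliability_weights(noise_std):
    """Assumed rule: weight each channel by its inverse noise variance."""
    return normalize([1.0 / (s * s) for s in noise_std])

noise_std = [0.05, 0.10, 0.20]  # hypothetical per-channel noise levels
w_equal = equal_weights(3)
w_manual = manual_weights([3, 2, 1])
w_reliab = reliability_weights(noise_std)

for name, w in [("equal", w_equal), ("manual", w_manual), ("reliability", w_reliab)]:
    print(name, [round(x, 3) for x in w])
```

Under the assumed inverse-variance rule, the least noisy channel receives the largest weight; any other monotone reliability score could be substituted in `reliability_weights`.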

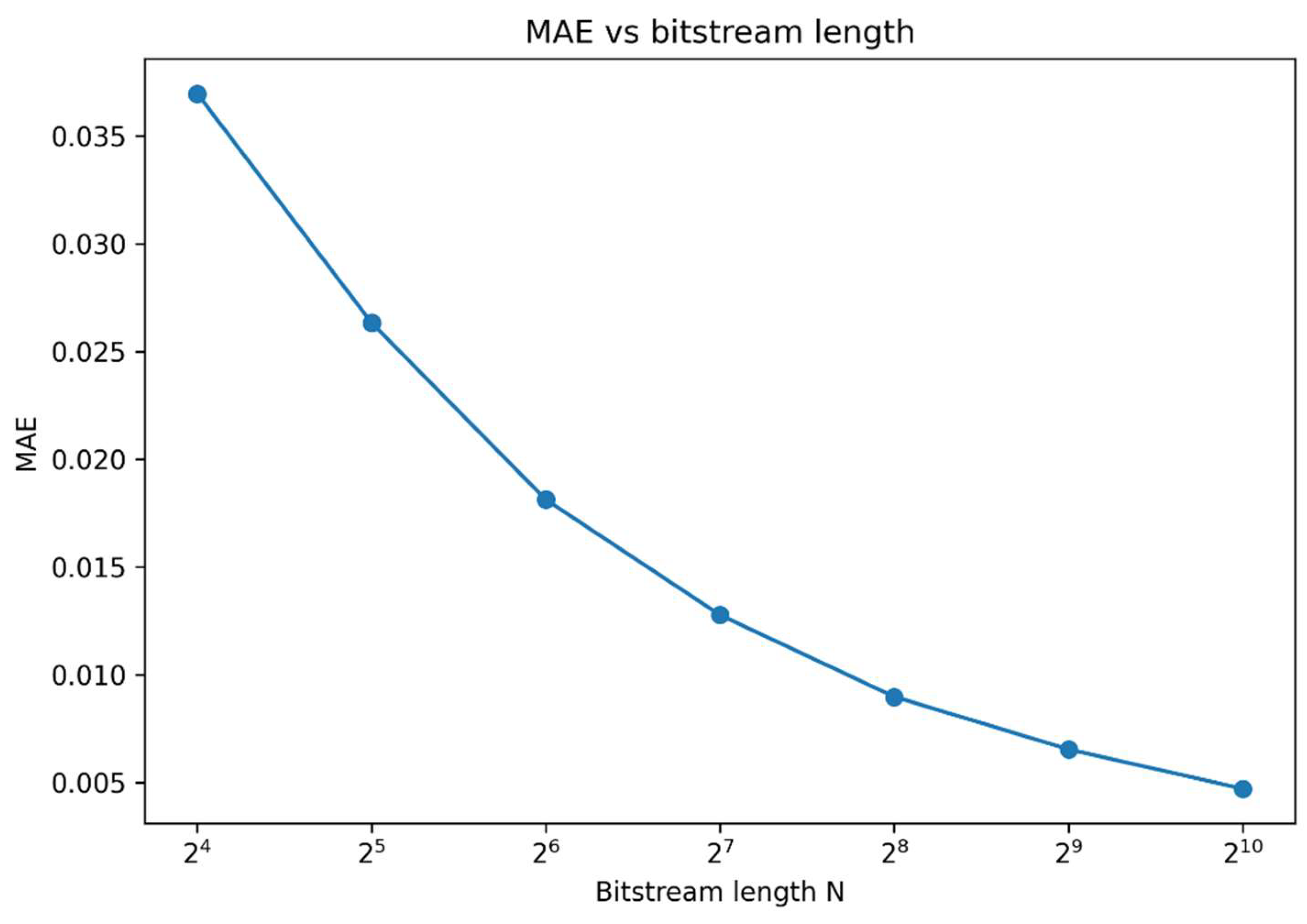

- Bitstream precision. The length of the Bernoulli stream determines the stochastic estimation accuracy and convergence to the float reference.
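The dependence of estimation accuracy on stream length can be illustrated with a short simulation. This is a sketch under stated assumptions: the channel probability `p_true`, the stream lengths, and the trial count are hypothetical values, and the estimator is simply the fraction of ones in the stream.

```python
import random

def bernoulli_stream(p, n_bits, rng):
    """Return n_bits Bernoulli(p) samples as a list of 0/1 integers."""
    return [1 if rng.random() < p else 0 for _ in range(n_bits)]

def estimate_probability(bits):
    """Recover the encoded probability as the fraction of ones."""
    return sum(bits) / len(bits)

def mean_abs_error(p, n_bits, trials, rng):
    """Average |estimate - p| over repeated independent streams."""
    total = 0.0
    for _ in range(trials):
        total += abs(estimate_probability(bernoulli_stream(p, n_bits, rng)) - p)
    return total / trials

rng = random.Random(0)   # fixed seed so the run is repeatable
p_true = 0.3             # hypothetical channel probability
errors = {n: mean_abs_error(p_true, n, trials=100, rng=rng)
          for n in (64, 1024, 16384)}

for n, err in errors.items():
    print(f"N={n:6d}  mean |error| = {err:.4f}")
```

Because the standard deviation of the estimate is sqrt(p(1-p)/N), each 16-fold increase in stream length should reduce the mean error by roughly a factor of four.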

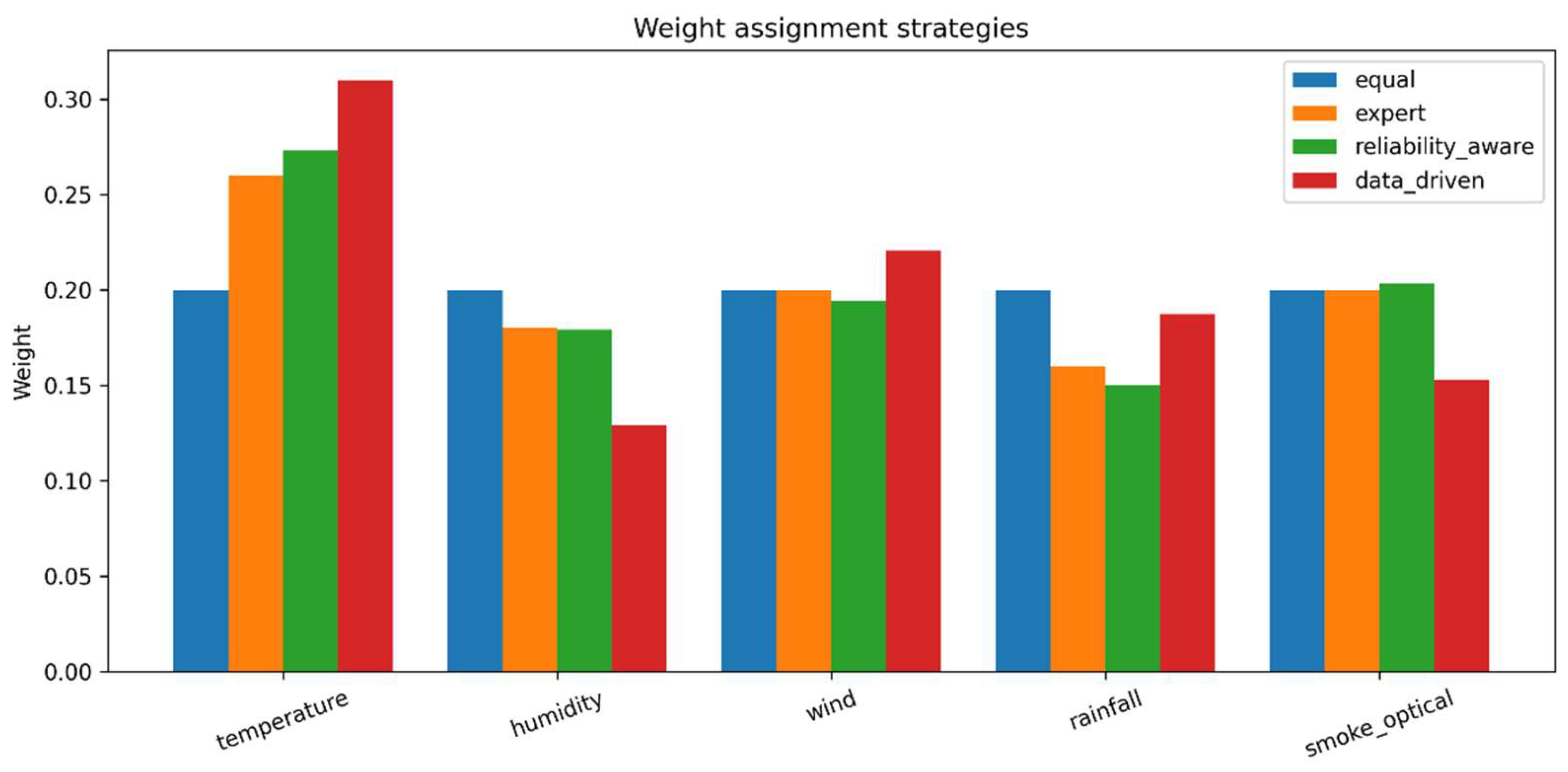

- Correlation policy. Independent, grouped, or shared-randomness stream generation changes the effective inter-channel correlation and therefore the fusion quality.
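To make the effect of the correlation policy concrete, the sketch below compares independent streams with shared-randomness streams. The AND gate is used only because it is the familiar stochastic-computing multiplier; it is an illustrative stand-in and is not claimed to be the fusion block of this paper. Function names and probability values are assumptions, and the grouped policy is not shown.

```python
import random

def independent_streams(probs, n_bits, rng):
    """Each channel compares its probability with its own random draws."""
    return [[1 if rng.random() < p else 0 for _ in range(n_bits)] for p in probs]

def shared_streams(probs, n_bits, rng):
    """All channels compare their probability with the same draw per bit."""
    draws = [rng.random() for _ in range(n_bits)]
    return [[1 if u < p else 0 for u in draws] for p in probs]

def and_mean(a, b):
    """Fraction of positions where both streams carry a one."""
    return sum(x & y for x, y in zip(a, b)) / len(a)

rng = random.Random(1)
probs = [0.6, 0.5]       # hypothetical channel probabilities
n_bits = 20000

ind_a, ind_b = independent_streams(probs, n_bits, rng)
sh_a, sh_b = shared_streams(probs, n_bits, rng)

and_independent = and_mean(ind_a, ind_b)   # approaches p1 * p2
and_shared = and_mean(sh_a, sh_b)          # approaches min(p1, p2)

print(f"independent: {and_independent:.3f}")
print(f"shared:      {and_shared:.3f}")
```

The same pair of probabilities yields a different gate output depending only on how the streams were generated, which is why the correlation policy has to be treated as a design variable rather than an implementation detail.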

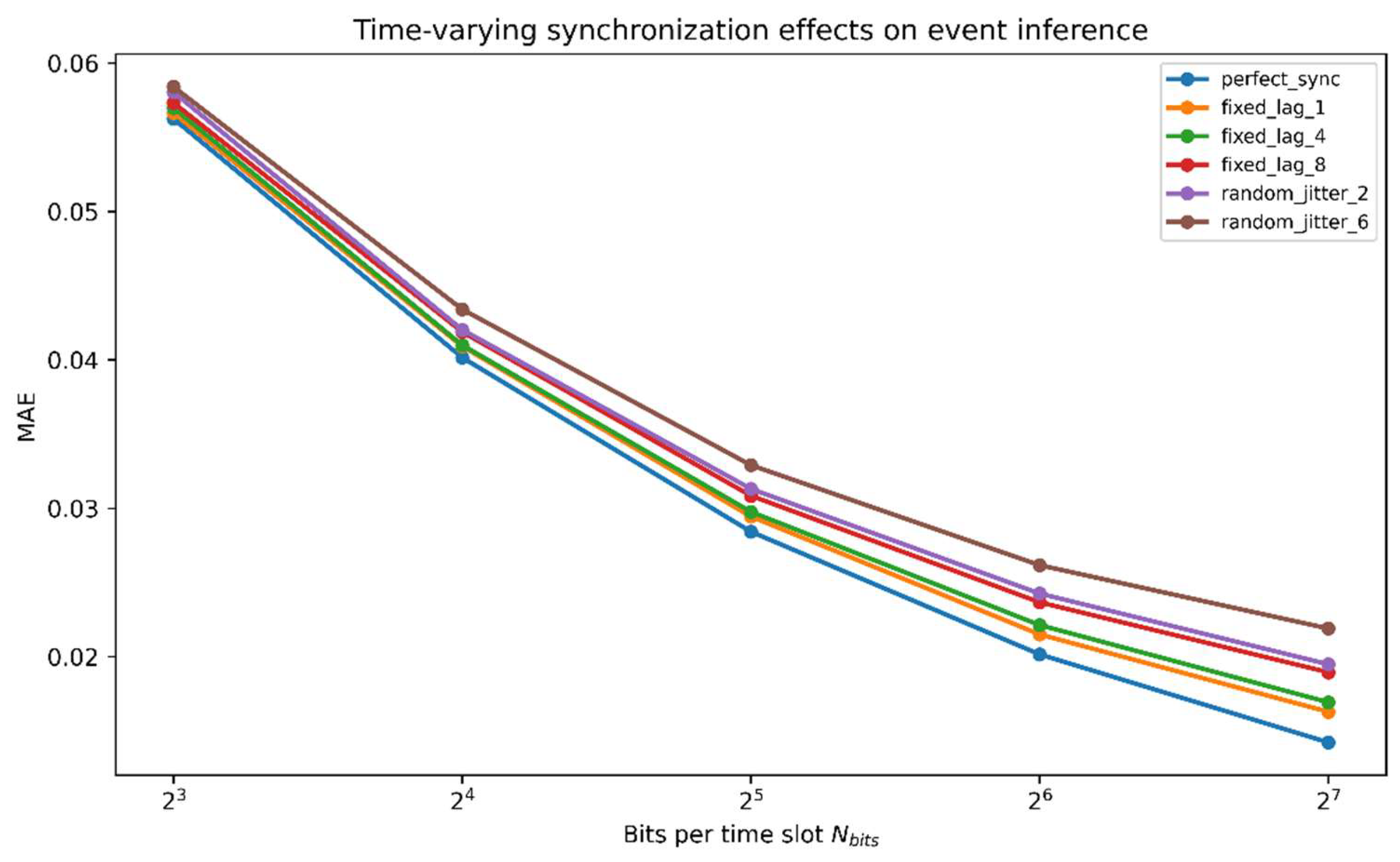

- Synchronization mode. In time-varying inference, the choice of perfect alignment, fixed lag, or random jitter affects the temporal consistency of multisensor fusion.
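The three synchronization modes can be compared on a toy two-channel example. This is a noise-free sketch under several assumptions: the Gaussian-shaped event profile, the slot-wise weighted average used as the fusion rule, the lag of 5 slots, the jitter range, and all names are hypothetical.

```python
import math
import random

def event_profile(n_slots, center, width):
    """Hypothetical smooth event: a Gaussian-shaped probability bump."""
    return [math.exp(-0.5 * ((t - center) / width) ** 2) for t in range(n_slots)]

def shift(profile, offsets):
    """Read slot t from slot t - offset, clamping at the record ends."""
    last = len(profile) - 1
    return [profile[min(max(t - d, 0), last)] for t, d in enumerate(offsets)]

def fuse(channels, weights):
    """Slot-wise weighted average of channel probabilities (assumed rule)."""
    n_slots = len(channels[0])
    return [sum(w * ch[t] for w, ch in zip(weights, channels)) for t in range(n_slots)]

def mae(a, b):
    """Mean absolute error between two equally long sequences."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)

n_slots = 100
base = event_profile(n_slots, center=50, width=8)
weights = [0.5, 0.5]
reference = fuse([base, base], weights)   # both channels perfectly aligned

rng = random.Random(2)
policies = {
    "aligned": [0] * n_slots,
    "fixed lag": [5] * n_slots,
    "random jitter": [rng.randint(-5, 5) for _ in range(n_slots)],
}
sync_error = {name: mae(fuse([base, shift(base, off)], weights), reference)
              for name, off in policies.items()}

for name, err in sync_error.items():
    print(f"{name:14s} MAE vs aligned reference = {err:.4f}")
```

Only the second channel is displaced here, so any nonzero error is attributable to misalignment alone and not to stochastic estimation noise.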

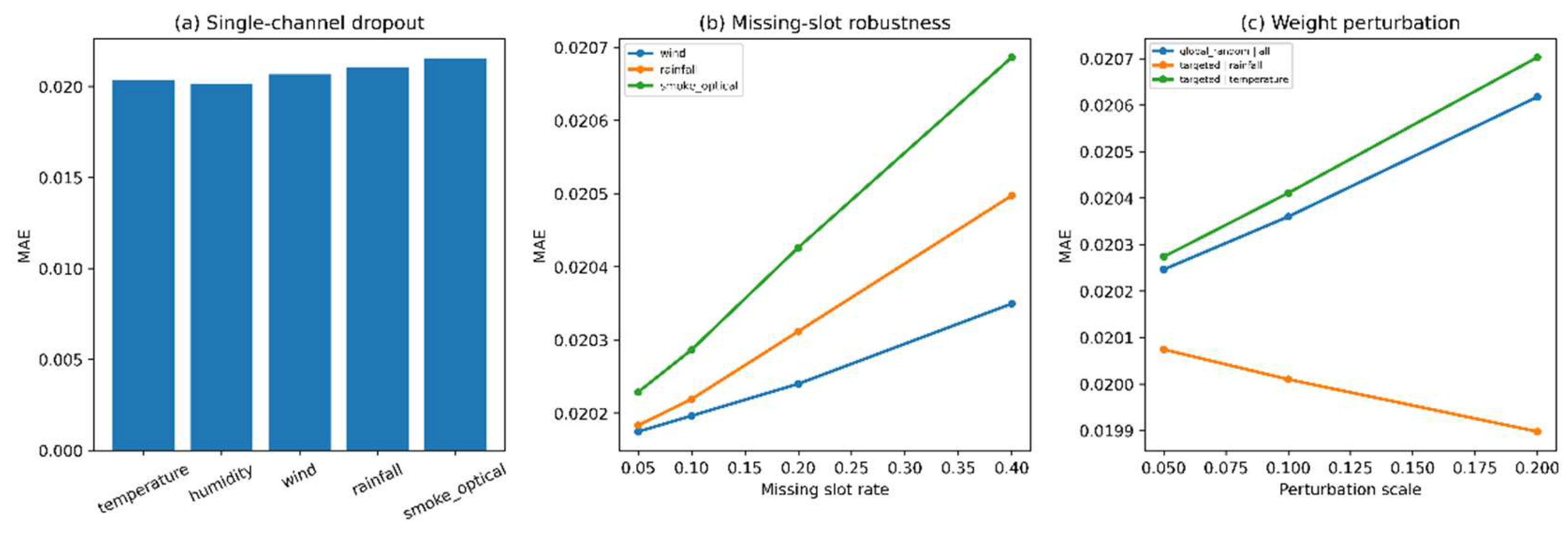

- Robustness conditions. Noise, channel dropout, missing slots, and weight perturbations influence both average inference error and temporal event tracking quality.
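Two of the robustness conditions listed above, additive noise and channel dropout, can be sketched for a five-channel snapshot. The fusion rule (a weighted sum of per-channel stream estimates), the dropout model (a dropped channel contributes nothing and the weights are not renormalized), and all numeric values are assumptions made for illustration; missing slots and weight perturbations are not covered.

```python
import random

def stream_mean(p, n_bits, rng):
    """Fraction of ones in a Bernoulli(p) stream of n_bits."""
    return sum(1 for _ in range(n_bits) if rng.random() < p) / n_bits

def clip01(x):
    """Keep a perturbed probability inside [0, 1]."""
    return min(1.0, max(0.0, x))

def fused_estimate(probs, weights, n_bits, rng, noise_std=0.0, dropped=()):
    """Weighted sum of per-channel stream estimates under optional degradation."""
    total = 0.0
    for i, (p, w) in enumerate(zip(probs, weights)):
        if i in dropped:
            continue  # assumed dropout model: the channel contributes nothing
        noisy_p = clip01(p + rng.gauss(0.0, noise_std))
        total += w * stream_mean(noisy_p, n_bits, rng)
    return total

probs = [0.8, 0.6, 0.4, 0.7, 0.5]   # hypothetical five-channel snapshot
weights = [0.2] * 5                 # equal weights
reference = sum(w * p for w, p in zip(weights, probs))  # float reference

rng = random.Random(3)
err_clean = abs(fused_estimate(probs, weights, 4096, rng) - reference)
err_noise = abs(fused_estimate(probs, weights, 4096, rng, noise_std=0.1) - reference)
err_drop = abs(fused_estimate(probs, weights, 4096, rng, dropped={0}) - reference)

print(f"clean:   {err_clean:.4f}")
print(f"noise:   {err_noise:.4f}")
print(f"dropout: {err_drop:.4f}")
```

With this dropout model the lost channel introduces a bias of roughly its weight times its probability, which a longer bitstream cannot remove; renormalizing the surviving weights would be one alternative design choice.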

3.7. Architectural Scope of the Present Study

4. Methods

4.1. Event-Oriented Sensor Mapping

4.2. Event-Oriented Sensor Mapping

4.3. Bernoulli Bitstream Representation

4.4. Time-Varying Slot-Wise Formulation

4.5. Weight Assignment Strategies

4.6. Correlation Policies for Stochastic Streams

4.7. Synchronization Policies

4.8. Robustness Scenarios

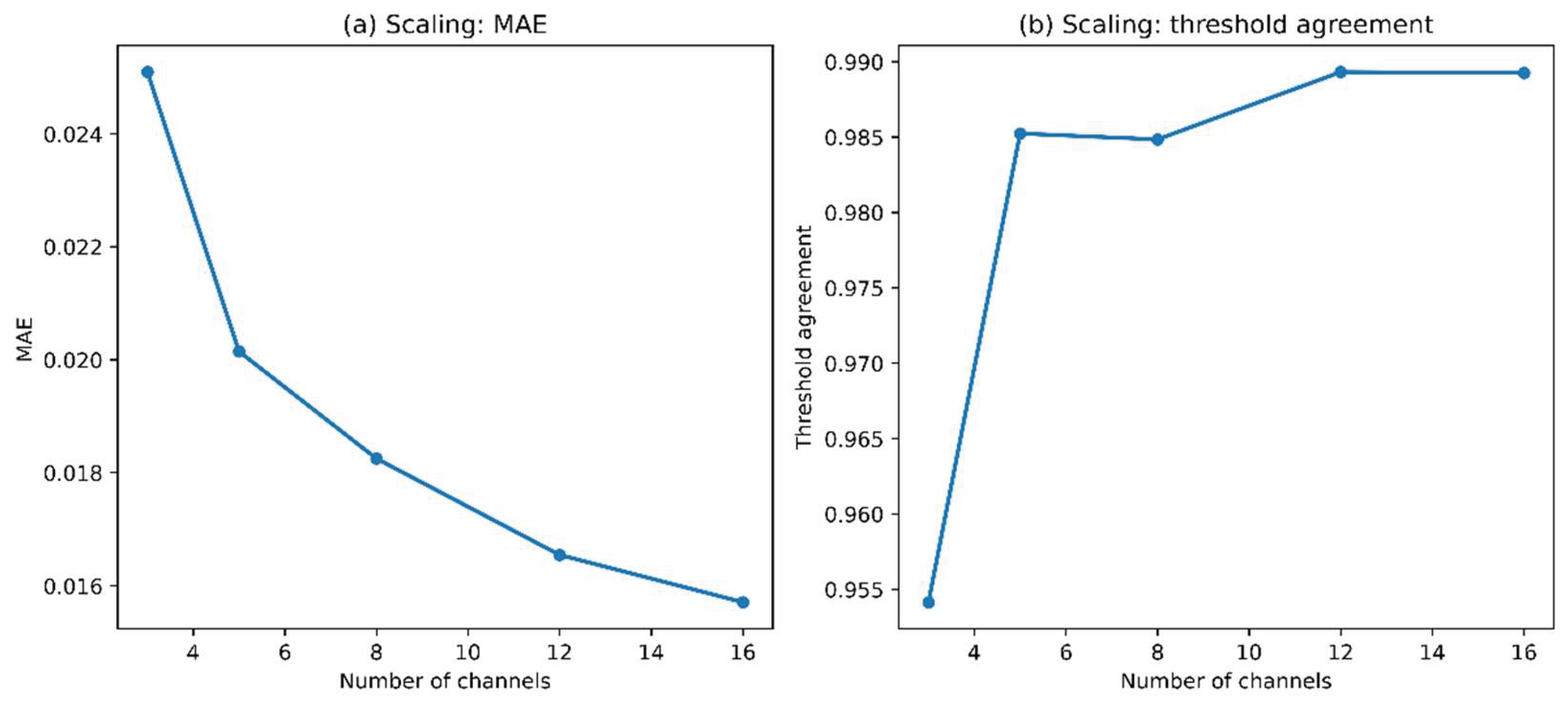

4.9. Scaling Configurations

4.10. Time-Varying Benchmark Construction

4.11. Evaluation Metrics

4.12. Reproducibility and Supplementary Implementation

5. Experimental Design

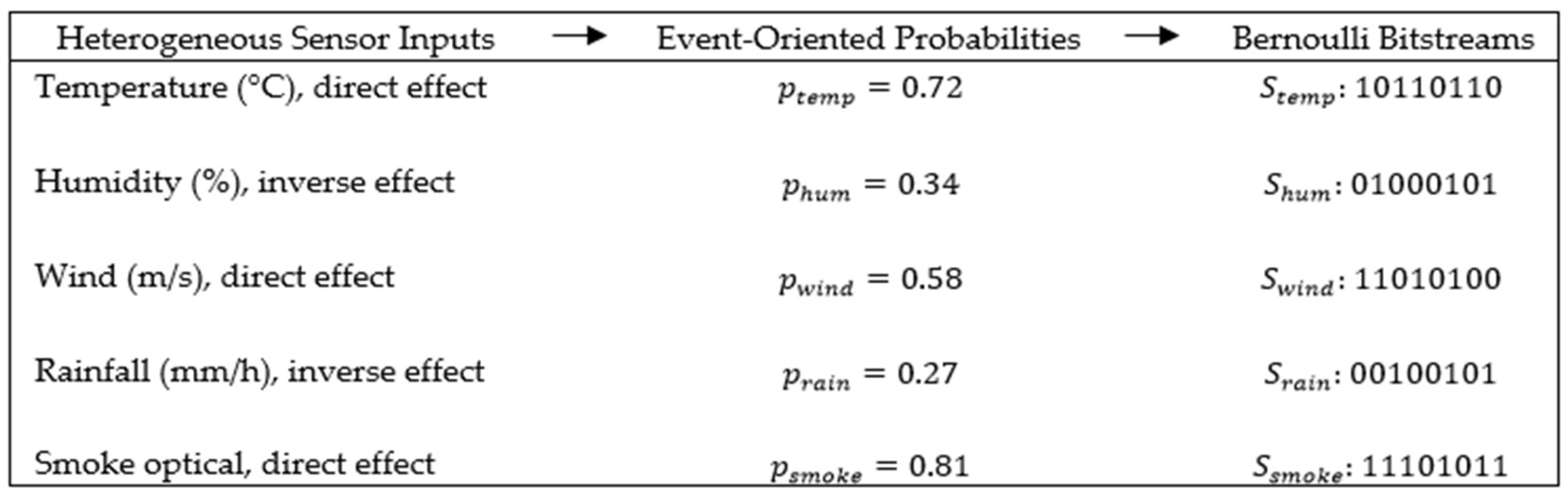

5.1. Baseline Five-Channel Heterogeneous Benchmark

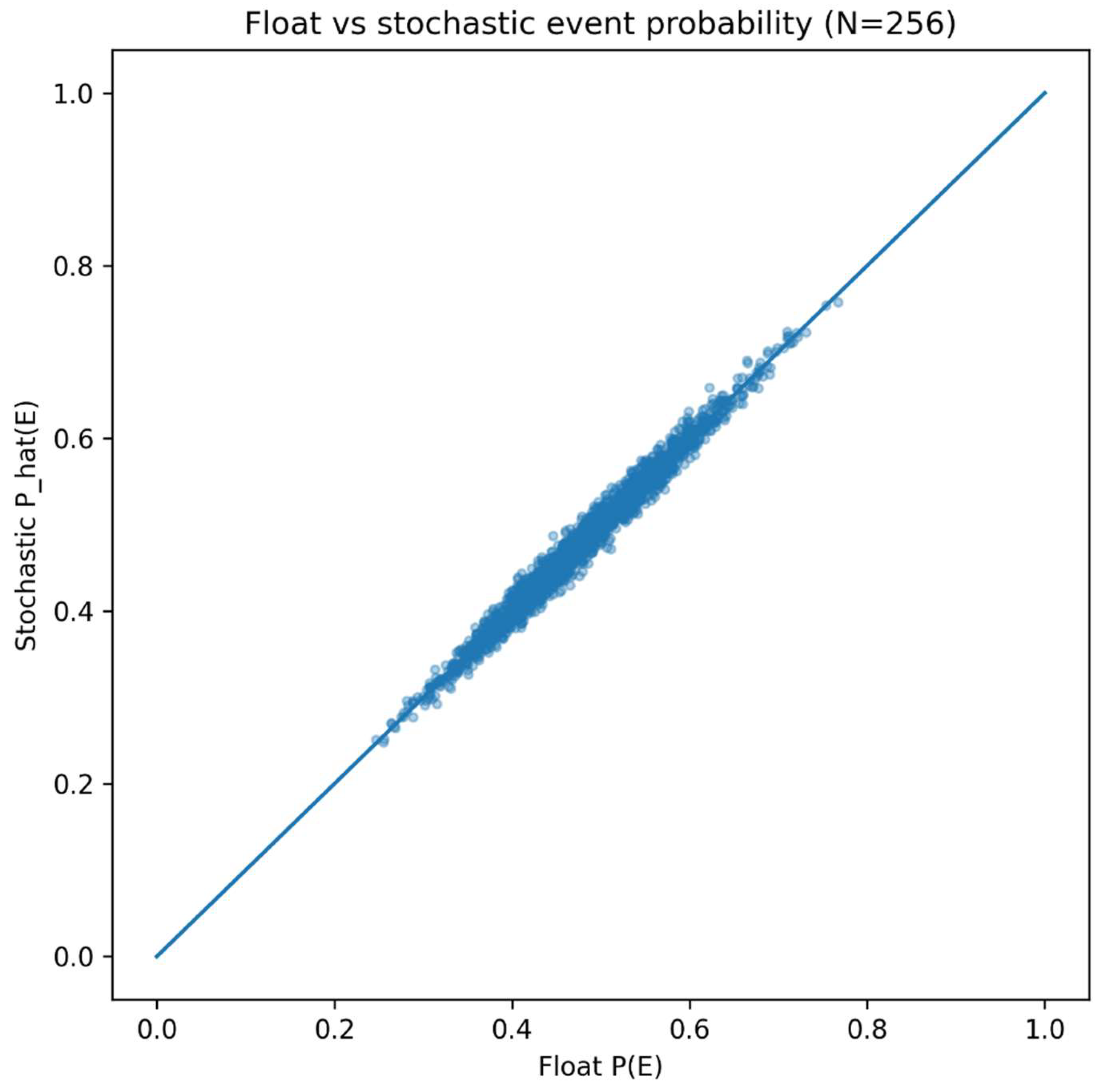

5.2. Baseline Validation Stage

5.3. Weighting-Strategy Comparison

5.4. Static Correlation Study

5.5. Time-Varying Synchronization Study

5.6. Robustness Study

5.7. Scaling Study

5.8. Evaluation Protocol

5.9. Role of the Supplementary Material in the Experimental Design

6. Results

6.1. Baseline Validation of the Universal Frontend

6.2. Weight Assignment as a Design Variable

6.3. Correlation and Synchronization Effects

6.4. Robustness under Channel Degradation and Weight Mismatch

6.5. Scaling Behavior Under Increasing Channel Count

6.6. Summary of Main Findings

7. Discussion

8. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Meaning | Abbreviation or symbol |
|---|---|
| Mean Absolute Error | MAE |
| Root Mean Square Error | RMSE |
| Stochastic Computing | SC |
| Probabilistic bit | p-bit |
| Horizontal/Vertical polarization states | H/V |
| Comma-Separated Values | CSV |
| JavaScript Object Notation | JSON |
| Digital Object Identifier | DOI |
| Float reference event probability | |
| Stochastic estimate of event probability | |
| Time-varying reference event probability | |
| Time-varying stochastic estimate of event probability | |
| Event-oriented probability contribution of sensor channel | |
| Bitstream-based estimate of channel probability | |
| Time-varying event-oriented probability contribution of channel | |
| Slot-wise estimate of channel probability | |
| Bernoulli bitstream generated from channel probability | |
| Time-varying Bernoulli bitstream | |
| Fusion weight of sensor channel | $w_i$ |
| Decision threshold | |
| Sigmoid slope parameter | |
| Bitstream length | |
| Bits per time slot | |
| Number of time slots | |

References

- Li, X.; Dunkin, F.; Dezert, J. Multi-source information fusion: Progress and future. Chin. J. Aeronaut. 2024, 37(7), 24–58.

- Shaikh, Z.A.; Van Hamme, D.; Veelaert, P.; Philips, W. Probabilistic fusion for pedestrian detection from thermal and colour images. Sensors 2022, 22(22), 8637.

- Rabb, E.; Steckenrider, J.J. Walking trajectory estimation using multi-sensor fusion and a probabilistic step model. Sensors 2023, 23(14), 6494.

- Qian, H.; Wang, M.; Zhu, M.; Wang, H. A review of multi-sensor fusion in autonomous driving. Sensors 2025, 25(19), 6033.

- Alaghi, A.; Hayes, J.P. Survey of stochastic computing. ACM Trans. Embed. Comput. Syst. 2013, 12(2s), 92:1–92:19.

- Camsari, K.Y.; Sutton, B.M.; Datta, S. p-bits for probabilistic spin logic. Appl. Phys. Rev. 2019, 6(1), 011305.

- Xu, X.; Tan, M.; Corcoran, B.; Wu, J.; Boes, A.; Nguyen, T.G.; Chu, S.T.; Little, B.E.; Hicks, D.G.; Morandotti, R.; Mitchell, A.; Moss, D.J. 11 TOPS photonic convolutional accelerator for optical neural networks. Nature 2021, 589, 44–51.

- Dong, B.; Aggarwal, S.; Zhou, W.; Ali, U.E.; Farmakidis, N.; Lee, J.S.; He, Y.; Li, X.; Kwong, D.-L.; Wright, C.D.; Pernice, W.H.P.; Bhaskaran, H. Higher-dimensional processing using a photonic tensor core with continuous-time data. Nat. Photonics 2023, 17, 1080–1088.

- Wu, J.; Lin, X.; Guo, Y.; Liu, J.; Fang, L.; Jiao, S.; Dai, Q. Analog optical computing for artificial intelligence. Engineering 2022, 10, 133–145.

- Xu, D.; Ma, Y.; Jin, G.; Cao, L. Intelligent photonics: A disruptive technology to shape the present and redefine the future. Engineering 2025, 46, 186–213.

- Baek, Y.; Bae, B.; Shin, H.; Sonnadara, C.; Cho, H.; Lin, C.-Y.; Mu, Y.; Shen, C.; Shah, S.; Wang, G.; Lee, K. Edge intelligence through in-sensor and near-sensor computing for the artificial intelligence of things. npj Unconventional Computing 2025, 2, 25.

- Zhang, S.; Jiang, X.; Wu, B.; Zhou, H.; Xu, W.; Zhou, H.; Ruan, Z.; Dong, J.; Zhang, X. Photonic edge intelligence chip for multi-modal sensing, inference and learning. Nat. Commun. 2025, 16, 10136.

- Xue, H.; Zhang, M.; Yu, P.; Zhang, H.; Wu, G.; Li, Y.; Zheng, X. A novel multi-sensor fusion algorithm based on uncertainty analysis. Sensors 2021, 21(8), 2713.

- Roheda, S.; Krim, H.; Luo, Z.-Q.; Wu, T. Event driven sensor fusion. Signal Process. 2021, 188, 108241.

- Gutiérrez, R.; Rampérez, V.; Paggi, H.; Lara, J.A.; Soriano, J. On the use of information fusion techniques to improve information quality: Taxonomy, opportunities and challenges. Inf. Fusion 2022, 78, 102–137.

- Mao, W.-L.; Wang, C.-C.; Chou, P.-H.; Liu, K.-C.; Tsao, Y. MECKD: Deep learning-based fall detection in multilayer mobile edge computing with knowledge distillation. IEEE Sens. J. 2024, 24(24), 42195–42209.

- Angelsky, O.V.; Bekshaev, A.Y.; Zenkova, C.Y.; Ivansky, D.I.; Zheng, J. Correlation optics, coherence and optical singularities: Basic concepts and practical applications. Front. Phys. 2022, 10, 924508.

- Angelsky, O.; Strynadko, M.; Zenkova, C.; Zaiats, R.; Zhang, X.; Zheng, J.; Cai, J. Supplementary materials for “Universal Sensor Frontend for Event Inference in Photonic Stochastic Systems”. figshare 2026.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.