Submitted: 26 March 2026

Posted: 30 March 2026


Abstract

Keywords:

1. Introduction

2. Methodology

2.1. Database Development

2.1.1. Database Construction and Description

2.1.2. Data Analysis

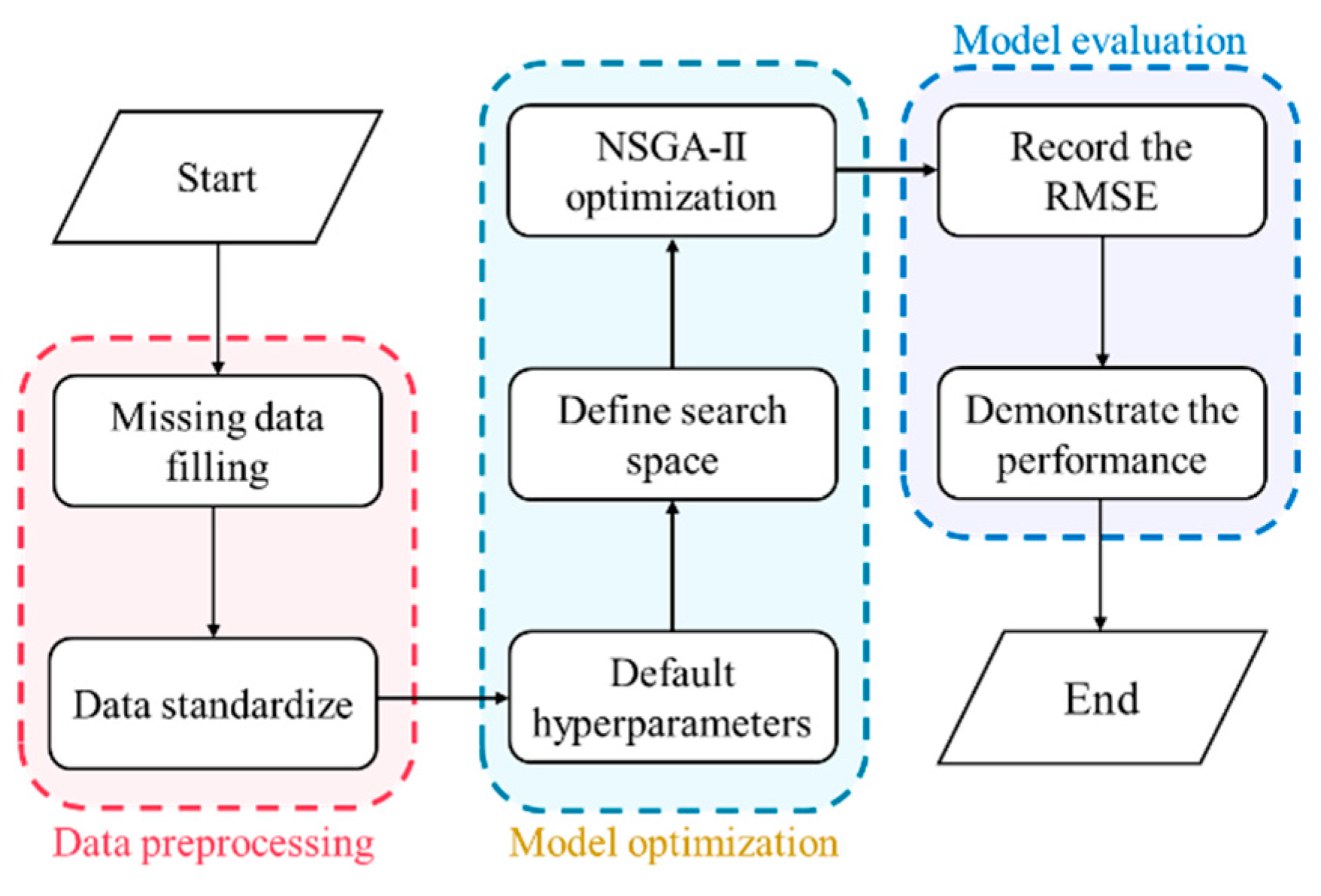

2.1.3. Data Preprocessing

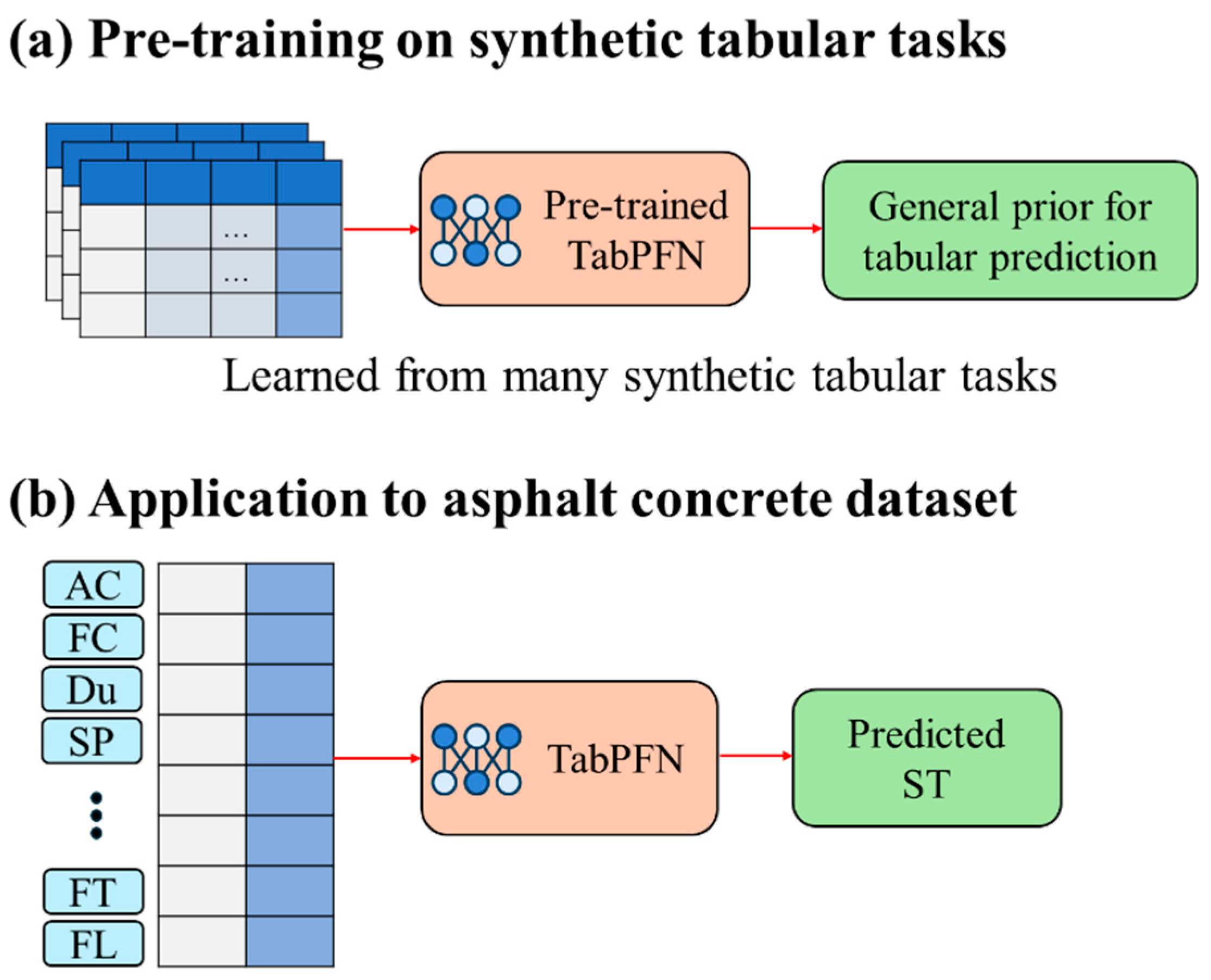

2.2. Machine Learning Models

2.3. Evaluation Metrics

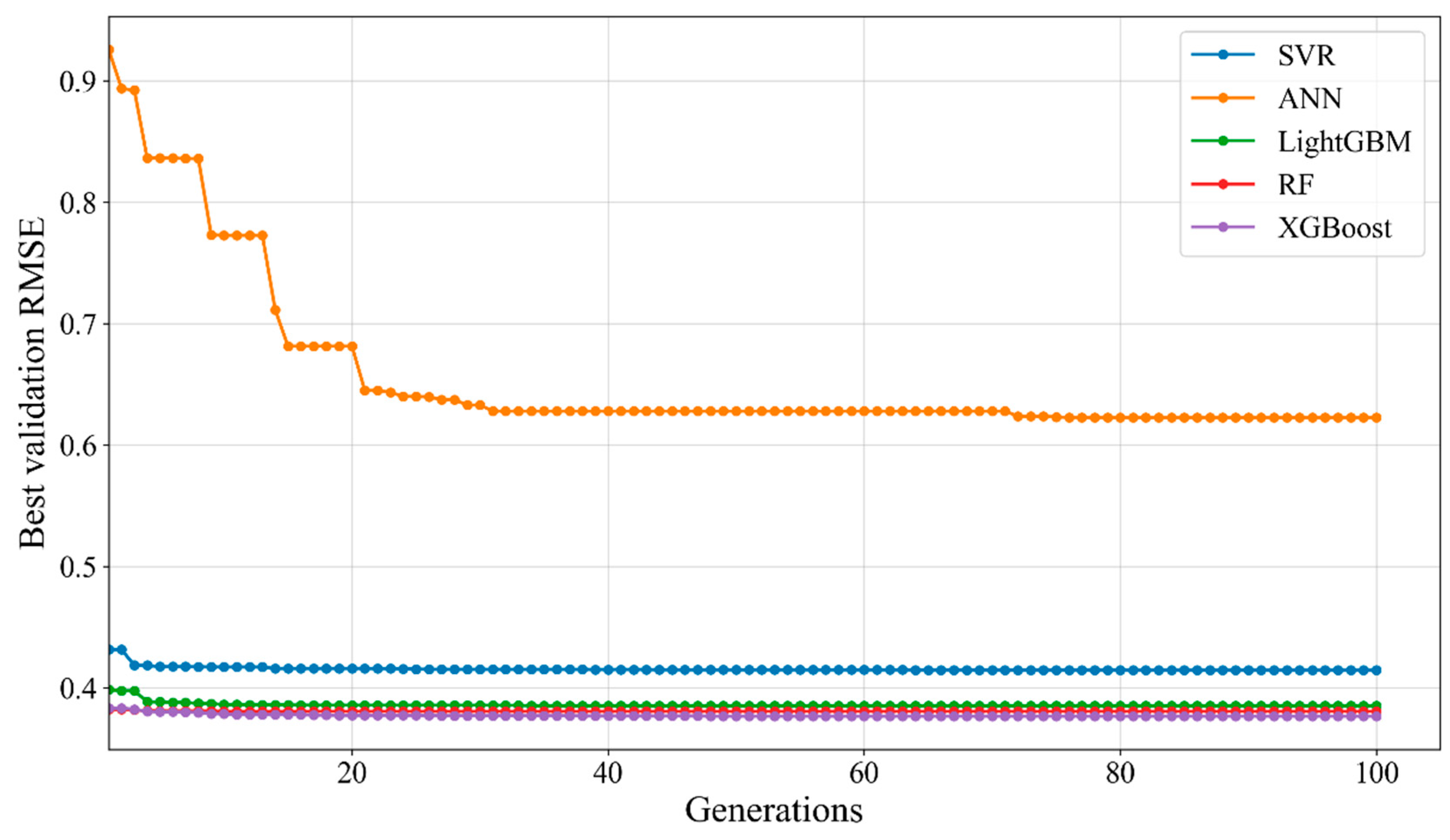

2.4. Hyperparameter Tuning Through Objective Optimization

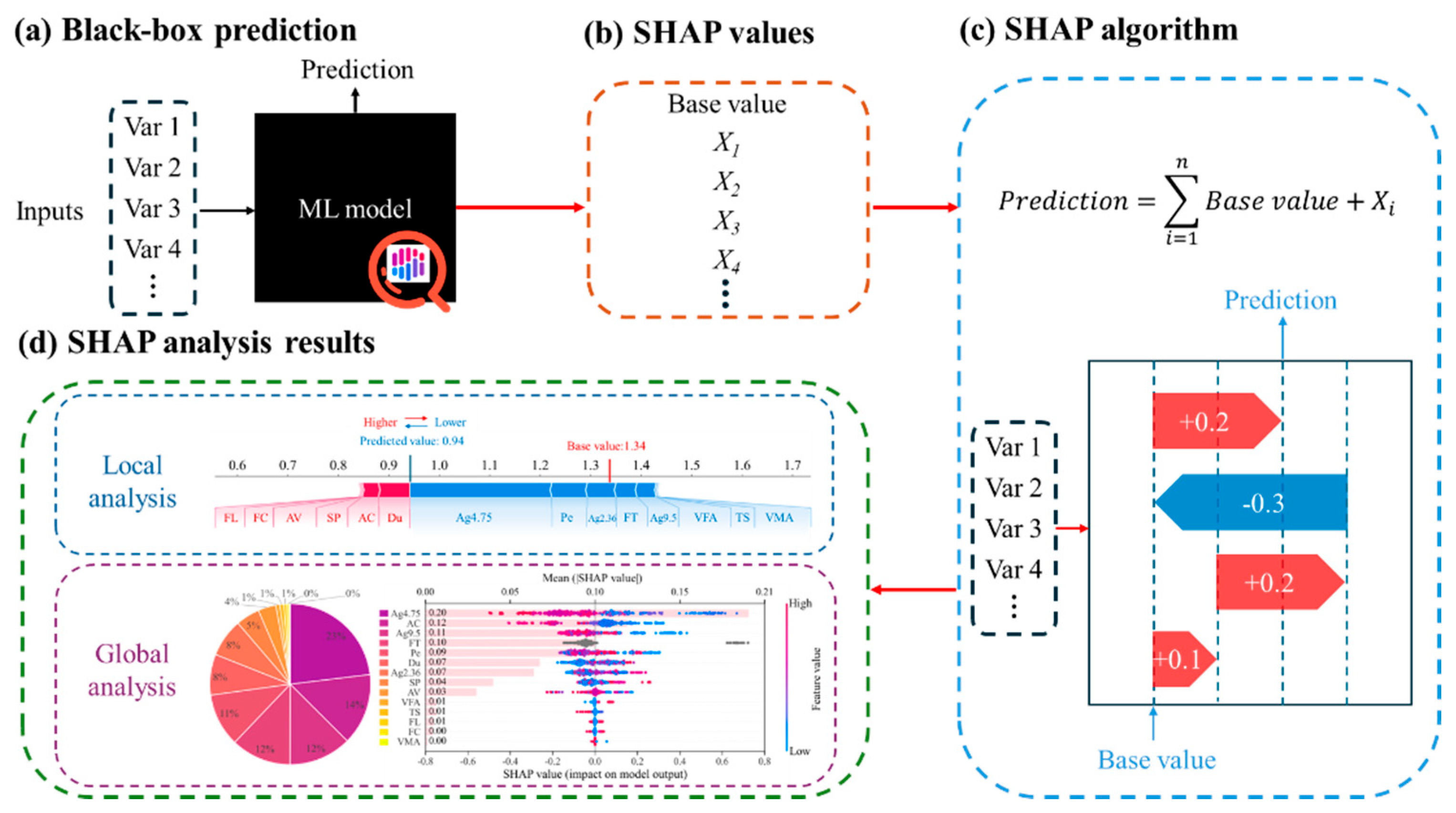

2.5. SHAP-Based Model Explanation

3. Results and Discussion

3.1. Hyperparameter Optimization Results

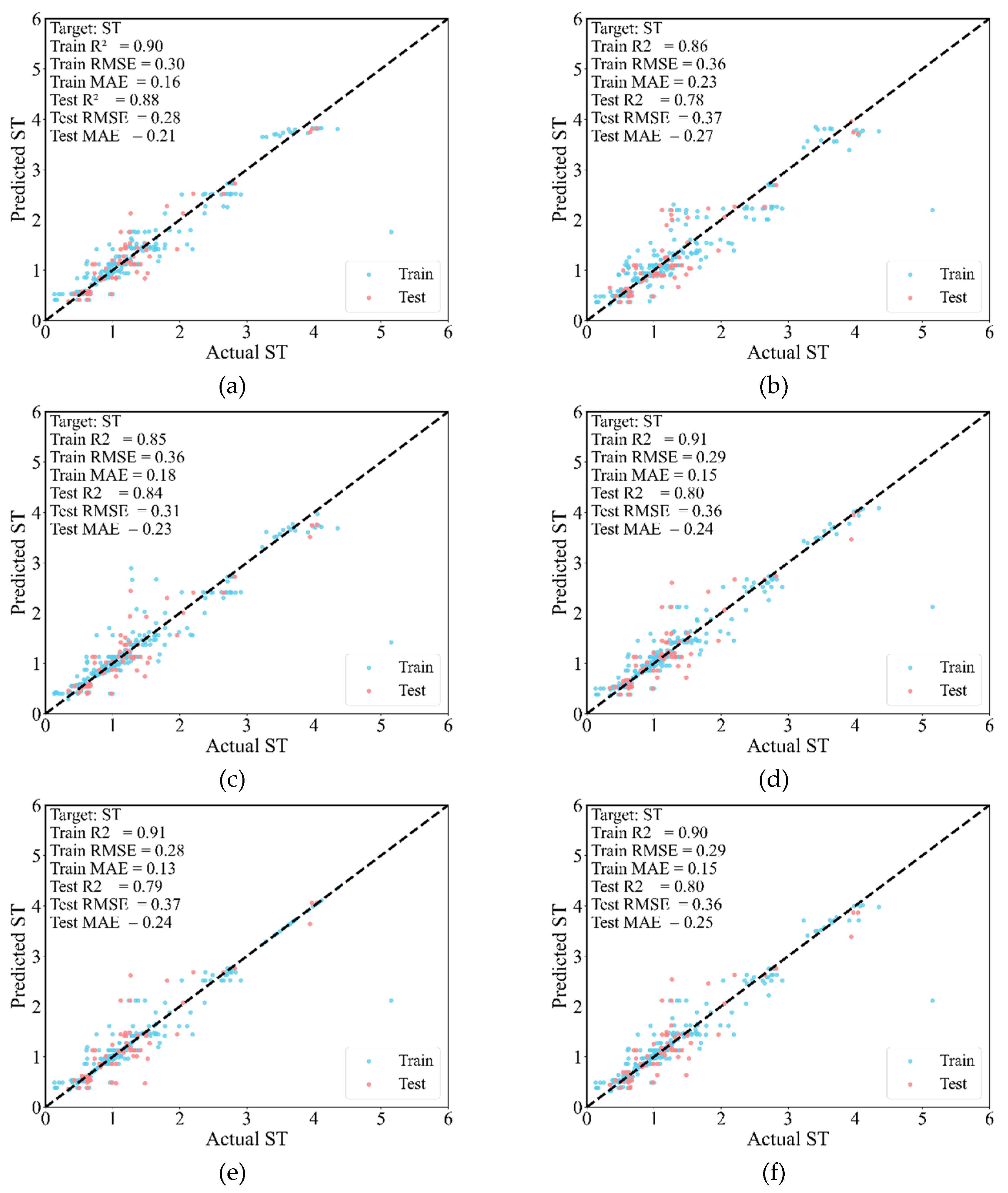

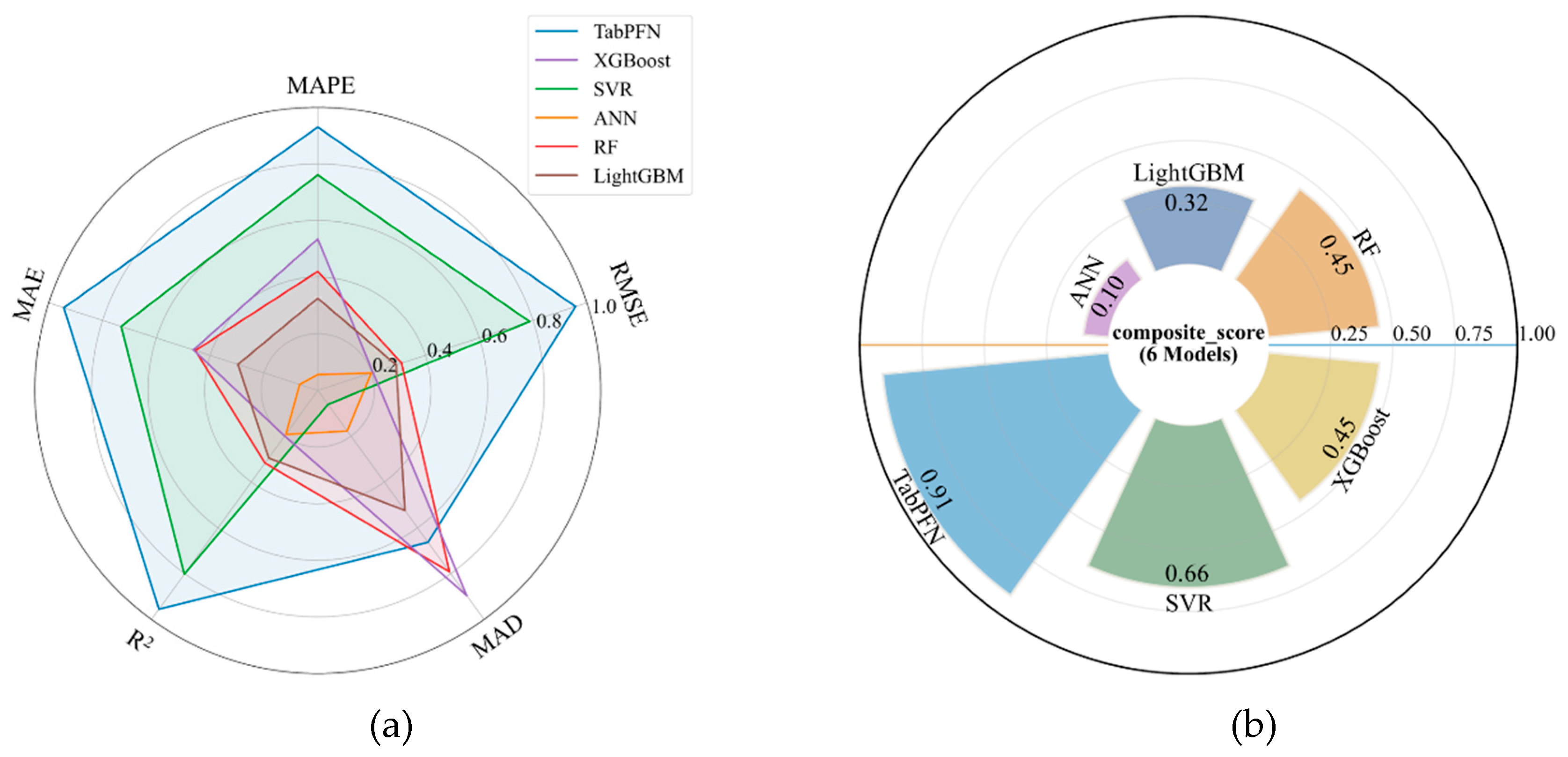

3.2. Prediction Performance

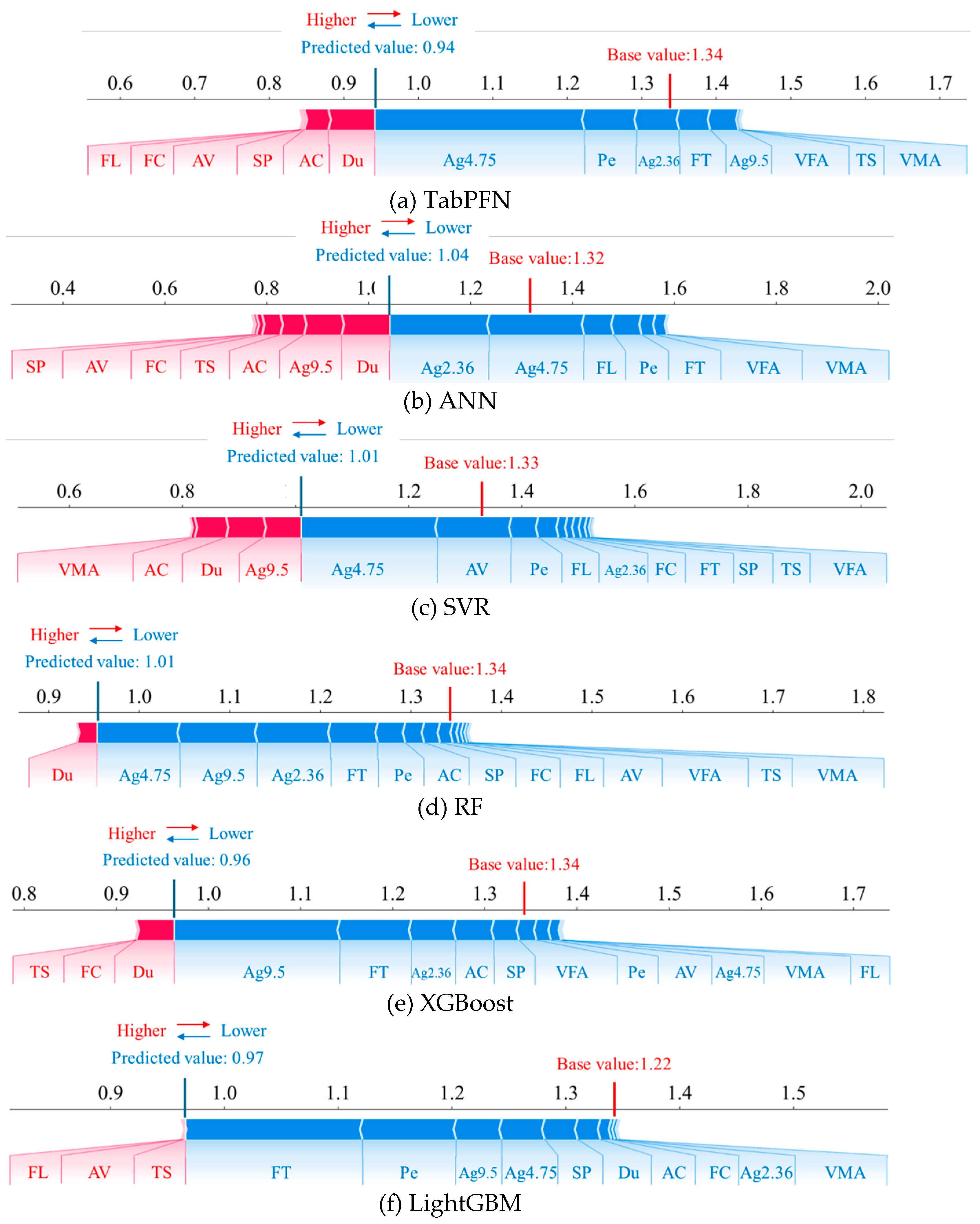

3.3. Local Interpretability Based on SHAP

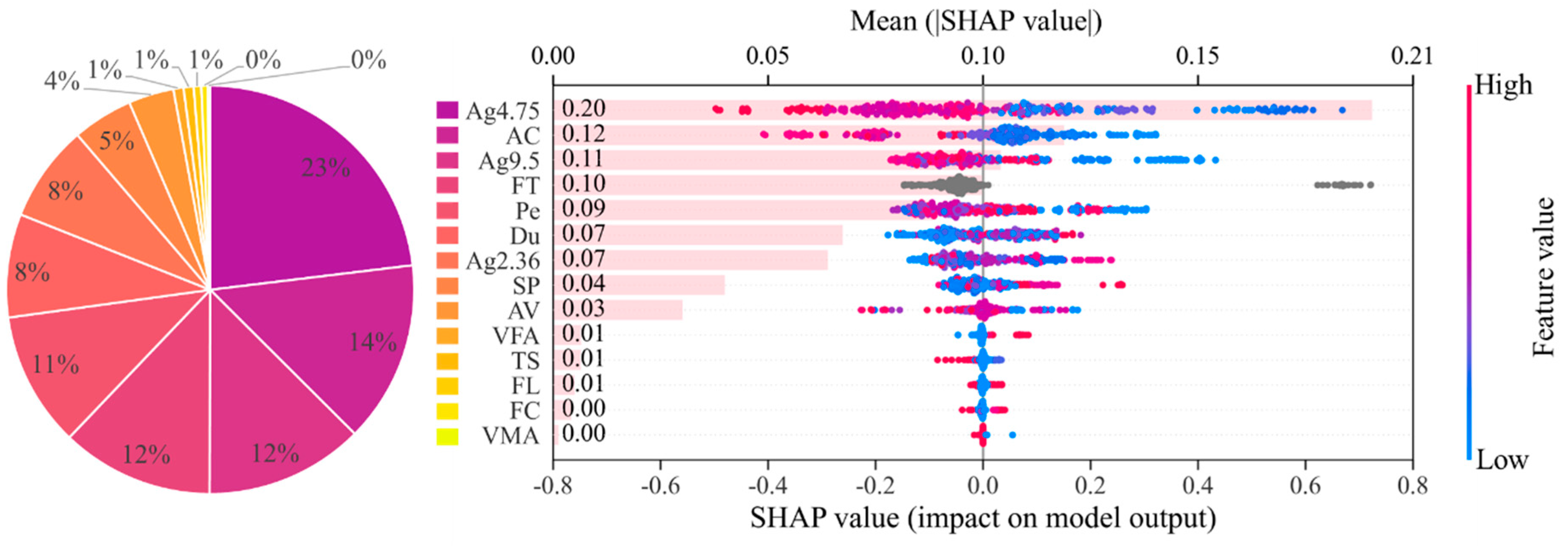

3.4. Global Interpretability Based on SHAP

3.4.1. Contribution of Individual Features

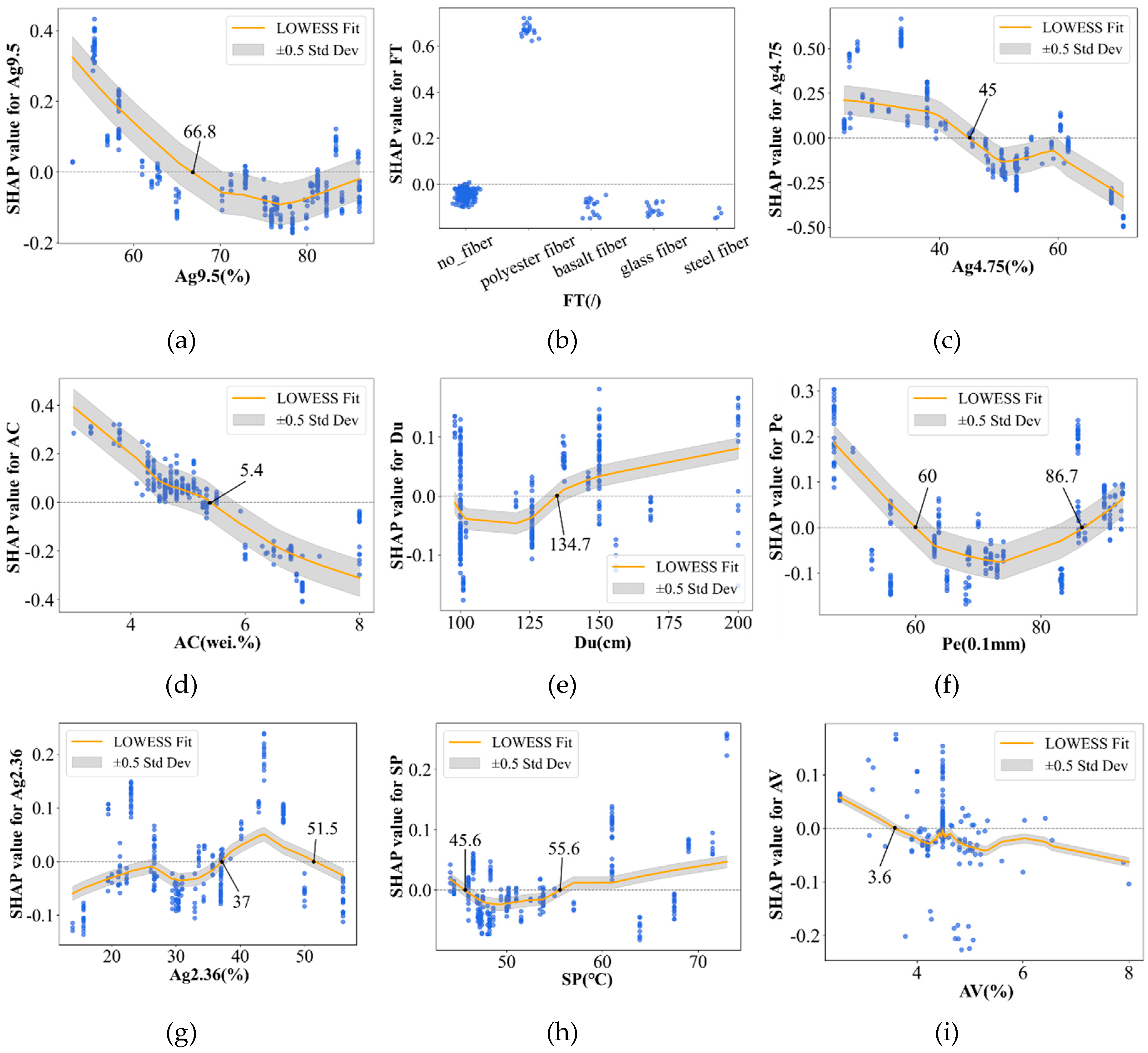

3.4.2. Feature-Wise Dependence Analysis

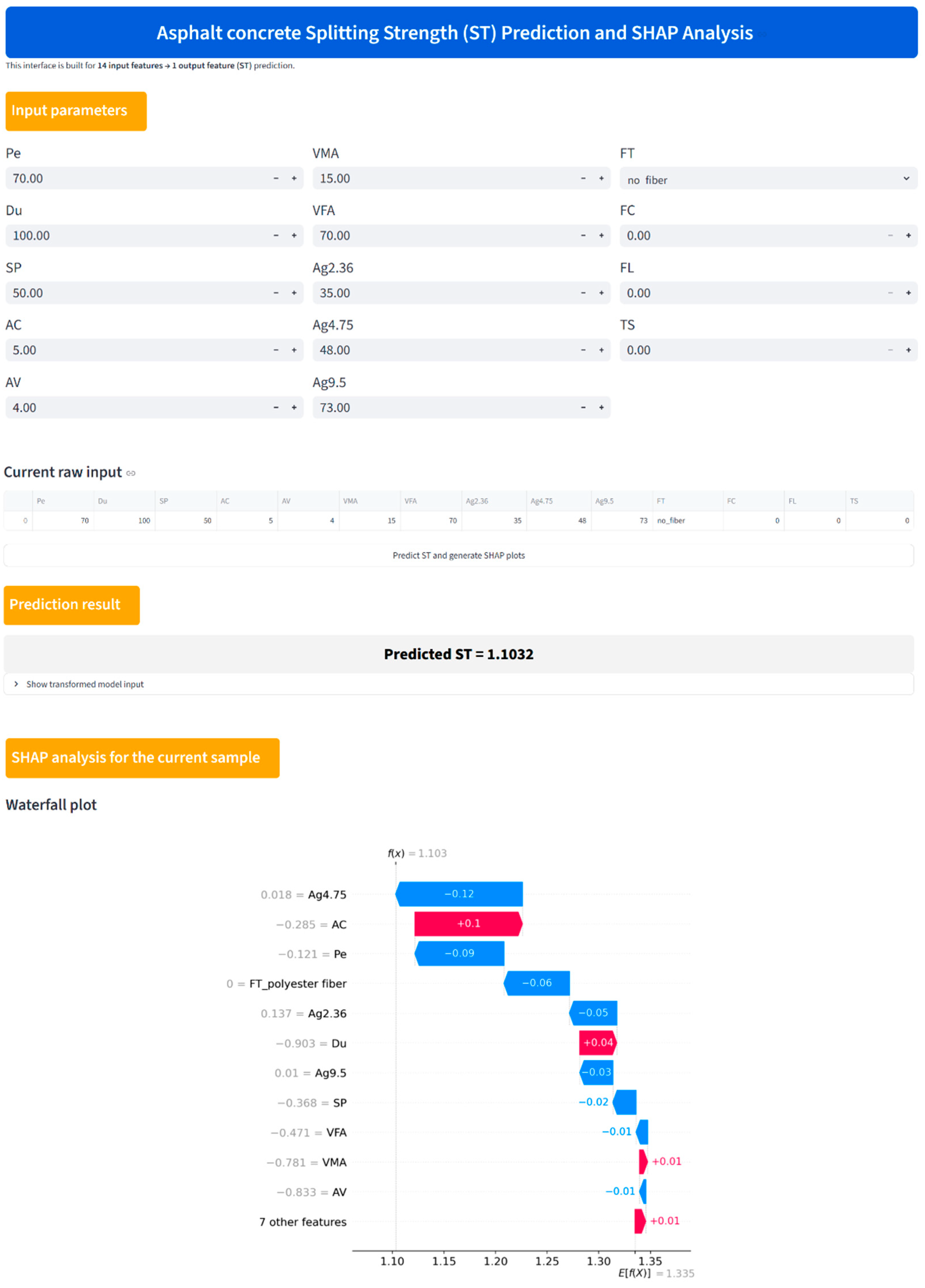

4. Graphical User Interface Platform

5. Limitations and Future Work

5.1. Overall Effectiveness

5.2. Challenges and Limitations

5.3. Opportunities for Future Research

6. Conclusion

- All six machine learning models demonstrated satisfactory capability for ST prediction, confirming that ML is effective in capturing the nonlinear relationships between mixture design variables and splitting strength. Among them, TabPFN achieved the best overall predictive performance on the testing set, with the lowest RMSE of 0.28, the highest R² of 0.88, and the highest composite score of 0.91. SVR ranked second overall, while XGBoost, RF, and LightGBM showed moderate but still acceptable predictive performance. These results indicate that TabPFN is the most suitable model for ST prediction in the present dataset.

- The SHAP analysis showed that the prediction of ST is mainly governed by a limited number of dominant variables. Based on the average feature contributions across the six models, Ag9.5, FT, Ag4.75, AC, and Du were identified as high-impact variables, while Pe, Ag2.36, SP, and AV were classified as medium-impact variables. Together, these nine variables accounted for 92.0% of the total average SHAP contribution, whereas FL, FC, TS, VFA, and VMA had relatively minor influence. In addition, the SHAP force-plot analysis for a representative sample showed that TabPFN provided the closest prediction to the actual ST value, further confirming its strong local interpretability and predictive reliability.

- The SHAP dependence analysis further revealed that the dominant variables influence ST in different ways: overall negative correlations, overall positive correlations, non-monotonic effects, and category-dependent effects. Specifically, Ag9.5, Ag4.75, AC, and AV showed overall negative correlations with ST; Du showed an overall positive correlation; and Pe, Ag2.36, and SP exhibited non-monotonic relationships. For the categorical feature FT, polyester fiber showed a comparatively stronger positive contribution to ST than the other fiber types in the present dataset. Based on the dependence analysis, the favorable ranges for improving ST were identified as Ag9.5 < 66.8%, Ag4.75 < 45.0%, AC < 5.4 wt.%, AV < 3.6%, Du > 134.7 cm, Pe < 60 or > 86.7 (0.1 mm), 37.0% < Ag2.36 < 51.5%, and SP < 45.6 °C or > 55.6 °C.

- Beyond model construction and interpretation, this study also established a GUI platform to enhance the accessibility and applicability of the developed framework. By integrating prediction and SHAP-based explanation into a user-oriented interface, the platform provides a practical tool for estimating ST and understanding the role of individual design variables. Overall, the proposed framework offers not only accurate prediction of splitting strength, but also interpretable guidance for mixture design, thereby demonstrating the potential of explainable artificial intelligence in the intelligent design and optimization of asphalt concrete.
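The favorable ranges listed in the conclusions can be encoded as a simple screening check. The sketch below is illustrative only: the dictionary layout, the function name `outside_favorable`, and the example mixture are assumptions, not part of the study or its GUI platform, and the ranges describe SHAP-derived trends in the present dataset rather than design guarantees.

```python
from typing import Callable, Dict, List

# Favorable ranges for ST as stated in the conclusions.
# Keys follow the paper's abbreviation list; units are given in the comments.
FAVORABLE_RANGES: Dict[str, Callable[[float], bool]] = {
    "Ag9.5": lambda v: v < 66.8,            # %
    "Ag4.75": lambda v: v < 45.0,           # %
    "AC": lambda v: v < 5.4,                # wt.%
    "AV": lambda v: v < 3.6,                # %
    "Du": lambda v: v > 134.7,              # cm
    "Pe": lambda v: v < 60 or v > 86.7,     # 0.1 mm
    "Ag2.36": lambda v: 37.0 < v < 51.5,    # %
    "SP": lambda v: v < 45.6 or v > 55.6,   # degrees C
}


def outside_favorable(mix: Dict[str, float]) -> List[str]:
    """Return the variables in `mix` whose values fall outside the favorable ranges."""
    return [
        name
        for name, is_favorable in FAVORABLE_RANGES.items()
        if name in mix and not is_favorable(mix[name])
    ]


# Hypothetical mixture, invented purely for illustration.
example_mix = {
    "Ag9.5": 62.0, "Ag4.75": 50.0, "AC": 5.0, "AV": 4.3,
    "Du": 150.0, "Pe": 71.0, "Ag2.36": 40.0, "SP": 57.0,
}
print(outside_favorable(example_mix))  # ['Ag4.75', 'AV', 'Pe']
```

A flagged variable only indicates that the value lies in a region where the dependence analysis associated it with a lower SHAP contribution to ST; it does not replace mixture design verification.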

Author Contributions

Funding

Ethical Approval

Data Availability Statement

Abbreviation List

| Abbreviation | Full name |
| --- | --- |
| AC | Asphalt content |
| Ag2.36 | 2.36 mm aggregate passing rate |
| Ag4.75 | 4.75 mm aggregate passing rate |
| Ag9.5 | 9.5 mm aggregate passing rate |
| AV | Air voids |
| Du | Ductility |
| FC | Fiber content |
| FL | Fiber length |
| FT | Fiber type |
| Pe | Penetration |
| SP | Softening point |
| ST | Splitting strength |
| TS | Tensile strength |
| VFA | Voids filled with asphalt |
| VMA | Voids in mineral aggregate |

Appendix A. Data Description

Appendix B. Model Configuration and Performance Evaluation

| Models | Hyperparameters |
| --- | --- |
| TabPFN | default hyperparameters |
| ANN | n = 2 |
| | hidden_layer_sizes = (128, 64) |
| | learning_rate_init = 0.01 |
| | batch_size = 151 |
| | activation = relu |
| | solver = adam |
| | validation_fraction = 0.1 |
| | early_stopping = True |
| SVR | C = 5.48 |
| | gamma = 0.14 |
| | epsilon = 0.24 |
| | kernel = rbf |
| RF | n_estimators = 370 |
| | max_depth = 13 |
| | min_samples_split = 2 |
| | min_samples_leaf = 1 |
| | max_features = log2 |
| | bootstrap = True |
| XGBoost | n_estimators = 207 |
| | learning_rate = 0.09 |
| | max_depth = 6 |
| | objective = reg:squarederror |
| | tree_method = hist |
| LightGBM | n_estimators = 477 |
| | learning_rate = 0.05 |
| | max_depth = 7 |
| | min_child_samples = 12 |
| | reg_alpha = 0.07 |
| | reg_lambda = 0.04 |
| | num_leaves = 57 |
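For readers who want to reuse the settings in the hyperparameter table, they can be written as a plain configuration dictionary. This is a minimal sketch under stated assumptions: the parameter names happen to match the scikit-learn, XGBoost, and LightGBM Python APIs, but this section does not say which libraries the authors used, so the mapping to `MLPRegressor`, `SVR`, and `RandomForestRegressor` is an assumption. The ANN entry `n = 2` is left out because its meaning is not given here. `TUNED_PARAMS` and `build_sklearn_models` are illustrative names.

```python
from typing import Any, Dict

# Values copied from the hyperparameter table (TabPFN uses its defaults).
TUNED_PARAMS: Dict[str, Dict[str, Any]] = {
    "ANN": {
        "hidden_layer_sizes": (128, 64),
        "learning_rate_init": 0.01,
        "batch_size": 151,
        "activation": "relu",
        "solver": "adam",
        "validation_fraction": 0.1,
        "early_stopping": True,
    },
    "SVR": {"C": 5.48, "gamma": 0.14, "epsilon": 0.24, "kernel": "rbf"},
    "RF": {
        "n_estimators": 370,
        "max_depth": 13,
        "min_samples_split": 2,
        "min_samples_leaf": 1,
        "max_features": "log2",
        "bootstrap": True,
    },
    "XGBoost": {
        "n_estimators": 207,
        "learning_rate": 0.09,
        "max_depth": 6,
        "objective": "reg:squarederror",
        "tree_method": "hist",
    },
    "LightGBM": {
        "n_estimators": 477,
        "learning_rate": 0.05,
        "max_depth": 7,
        "min_child_samples": 12,
        "reg_alpha": 0.07,
        "reg_lambda": 0.04,
        "num_leaves": 57,
    },
}


def build_sklearn_models() -> Dict[str, Any]:
    """Instantiate the three models that map onto scikit-learn estimators.

    Assumes scikit-learn is installed; the import is deferred so the
    configuration above can be inspected without it.
    """
    from sklearn.ensemble import RandomForestRegressor
    from sklearn.neural_network import MLPRegressor
    from sklearn.svm import SVR

    return {
        "ANN": MLPRegressor(**TUNED_PARAMS["ANN"]),
        "SVR": SVR(**TUNED_PARAMS["SVR"]),
        "RF": RandomForestRegressor(**TUNED_PARAMS["RF"]),
    }
```

The XGBoost and LightGBM entries could be passed in the same way to `xgboost.XGBRegressor` and `lightgbm.LGBMRegressor` if those packages are available.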

| Model | RMSE | MAE | MAPE | MAD | R² |
| --- | --- | --- | --- | --- | --- |
| TabPFN | 0.28 | 0.21 | 18.01 | 0.14 | 0.88 |
| ANN | 0.37 | 0.27 | 24.87 | 0.16 | 0.78 |
| SVR | 0.31 | 0.23 | 19.81 | 0.17 | 0.84 |
| RF | 0.36 | 0.24 | 21.69 | 0.14 | 0.80 |
| XGBoost | 0.37 | 0.24 | 21.11 | 0.13 | 0.79 |
| LightGBM | 0.36 | 0.25 | 22.21 | 0.14 | 0.80 |
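As a reminder of how the reported error measures are usually computed, the sketch below implements the standard textbook definitions of RMSE, MAE, MAPE (in percent), and R². It is not the authors' evaluation code; the function name and the sample values are hypothetical. MAD is omitted because its exact definition is not given in this section.

```python
import math
from typing import Dict, Sequence


def regression_metrics(y_true: Sequence[float], y_pred: Sequence[float]) -> Dict[str, float]:
    """Compute RMSE, MAE, MAPE (%) and R^2 from paired observations."""
    n = len(y_true)
    errors = [p - t for t, p in zip(y_true, y_pred)]

    ss_res = sum(e * e for e in errors)                 # residual sum of squares
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)  # total sum of squares

    return {
        "RMSE": math.sqrt(ss_res / n),
        "MAE": sum(abs(e) for e in errors) / n,
        # MAPE requires non-zero true values.
        "MAPE": 100.0 * sum(abs(e / t) for e, t in zip(errors, y_true)) / n,
        "R2": 1.0 - ss_res / ss_tot,
    }


# Hypothetical ST values in MPa, chosen only to exercise the function.
measured = [1.0, 2.0, 3.0, 4.0]
predicted = [1.1, 1.9, 3.2, 3.8]
metrics = regression_metrics(measured, predicted)
print({name: round(value, 3) for name, value in metrics.items()})
```

Lower RMSE, MAE, and MAPE and a higher R² indicate a better fit, which is the sense in which TabPFN leads the table above.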

Appendix C. SHAP Analysis Demonstration


| Fiber types | Sample size |
| --- | --- |
| Basalt fiber | 17 |
| Glass fiber | 14 |
| Polyester fiber | 20 |
| Steel fiber | 4 |
| No fiber | 241 |

| Variable | Unit | Min | Q1 | Q2 | Q3 | Max | Mean | STD |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Pe | 0.1 mm | 47 | 63 | 71.2 | 85.9 | 93 | 71.65 | 14.05 |
| Du | cm | 98 | 100 | 101 | 150 | 200 | 125.65 | 31.21 |
| SP | °C | 44.1 | 47.2 | 50 | 57 | 73 | 53 | 7.87 |
| AC | % by mass | 3 | 4.6 | 4.9 | 6.5 | 8 | 5.35 | 1.17 |
| Ag2.36 | % | 13.9 | 26.58 | 32.92 | 40.15 | 56 | 33.54 | 10.9 |
| Ag4.75 | % | 23.9 | 37.9 | 50.77 | 58.89 | 71 | 47.66 | 13.53 |
| Ag9.5 | % | 53 | 62.76 | 76.16 | 81.2 | 86 | 72.88 | 10.3 |
| AV | % | 2.54 | 4.01 | 4.34 | 4.95 | 8 | 4.41 | 0.98 |
| VMA | % | 12.1 | 14.94 | 15.36 | 16.2 | 65.6 | 17.08 | 8.65 |
| VFA | % | 17.11 | 69.03 | 72.95 | 82.59 | 83.41 | 72.91 | 11.81 |
| FC | % | 0 | 0 | 0 | 0 | 3 | 0.09 | 0.36 |
| FT | / | / | / | / | / | / | / | / |
| TS | MPa | 0 | 0 | 0 | 0 | 3250 | 237.17 | 735.13 |
| FL | mm | 0 | 0 | 0 | 0 | 12 | 1.23 | 2.94 |
| ST | MPa | 0.13 | 0.71 | 1.1 | 1.48 | 5.15 | 1.33 | 0.91 |

| No. | Model | Category | Notes |
| --- | --- | --- | --- |
| 1 | TabPFN | Foundation model | Transformer-based prediction |
| 2 | ANN | Classical | Nonlinear regression |
| 3 | SVR | Classical | Kernel-based regression |
| 4 | RF | Ensemble – Bagging | Bagging of decision trees |
| 5 | XGBoost | Ensemble – Boosting | Boosting model with regularization |
| 6 | LightGBM | Ensemble – Boosting | Efficient histogram-based gradient boosting |

| Variables | TabPFN (%) | ANN (%) | SVR (%) | RF (%) | XGBoost (%) | LightGBM (%) | Mean (%) |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Ag9.5 | 12 | 11 | 15 | 22 | 33 | 20 | 18.8 |
| FT | 12 | 7 | 7 | 12 | 14 | 25 | 12.8 |
| Ag4.75 | 23 | 15 | 13 | 11 | 1 | 7 | 11.7 |
| AC | 14 | 15 | 11 | 10 | 8 | 9 | 11.2 |
| Du | 8 | 10 | 9 | 10 | 17 | 12 | 11 |
| Pe | 11 | 7 | 9 | 8 | 7 | 11 | 8.8 |
| Ag2.36 | 8 | 6 | 7 | 8 | 3 | 4 | 6 |
| SP | 5 | 8 | 7 | 6 | 7 | 3 | 6 |
| AV | 4 | 5 | 7 | 5 | 6 | 7 | 5.7 |
| FL | 1 | 8 | 7 | 2 | 0 | 0 | 3 |
| FC | 0 | 1 | 4 | 4 | 1 | 1 | 1.8 |
| TS | 1 | 4 | 2 | 1 | 0 | 0 | 1.3 |
| VFA | 1 | 2 | 1 | 1 | 3 | 0 | 1.3 |
| VMA | 0 | 1 | 1 | 0 | 0 | 1 | 0.5 |
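The Mean (%) column and the 92.0% share quoted in the conclusions for the nine high- and medium-impact variables can be checked directly from the per-model proportions. The short sketch below recomputes both from the values in the table above; the variable names in the code are illustrative.

```python
# Per-model SHAP proportions (%) in the order
# TabPFN, ANN, SVR, RF, XGBoost, LightGBM, copied from the table.
SHAP_SHARE = {
    "Ag9.5": (12, 11, 15, 22, 33, 20),
    "FT": (12, 7, 7, 12, 14, 25),
    "Ag4.75": (23, 15, 13, 11, 1, 7),
    "AC": (14, 15, 11, 10, 8, 9),
    "Du": (8, 10, 9, 10, 17, 12),
    "Pe": (11, 7, 9, 8, 7, 11),
    "Ag2.36": (8, 6, 7, 8, 3, 4),
    "SP": (5, 8, 7, 6, 7, 3),
    "AV": (4, 5, 7, 5, 6, 7),
    "FL": (1, 8, 7, 2, 0, 0),
    "FC": (0, 1, 4, 4, 1, 1),
    "TS": (1, 4, 2, 1, 0, 0),
    "VFA": (1, 2, 1, 1, 3, 0),
    "VMA": (0, 1, 1, 0, 0, 1),
}
N_MODELS = 6

# Mean share per variable across the six models.
mean_share = {name: sum(values) / N_MODELS for name, values in SHAP_SHARE.items()}

# Rank by mean share (ties keep table order) and take the nine largest.
ranked = sorted(mean_share, key=mean_share.get, reverse=True)
top_nine = ranked[:9]

# Sum the raw proportions first so the total is not affected by rounding.
top_nine_total = sum(sum(SHAP_SHARE[name]) for name in top_nine) / N_MODELS

print(top_nine)
print(round(top_nine_total, 1))  # 92.0
```

The remaining five variables (FL, FC, TS, VFA, and VMA) therefore account for the other 8.0% of the average contribution.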

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.