Submitted: 25 March 2026

Posted: 26 March 2026


Abstract

Keywords:

1. Introduction

2. Materials and Methods

2.1. Dataset Description

2.2. Problem Formulation

2.3. Proposed Reinforcement Learning Framework

2.3.1. State Space Design

| Feature | Description | Encoding |
|---|---|---|
| Hour of day | Current hour (0-23) | Sine/cosine transformation |
| Day of week | Current day (0-6) | Sine/cosine transformation |
| Private load | Current charging load at private stations (kW) | Z-score normalization |
| Shared load | Current charging load at shared stations (kW) | Z-score normalization |
| Grid load | Total grid consumption from smart meter (kW) | Z-score normalization |
| Traffic volume | Vehicle count from nearby sensors | Z-score normalization |
| Temperature | Ambient temperature (°C) | Z-score normalization |
| Weekend indicator | Binary flag for Saturday/Sunday | Binary (0 or 1) |
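The encodings listed in the table can be illustrated with a minimal Python sketch. It is not the authors' implementation. It assumes that each cyclical feature contributes one sine and one cosine component (giving a 10-dimensional state), that day 0 is Monday so that days 5 and 6 form the weekend, and that the per-feature mean and standard deviation are computed beforehand from training data. The names `Stats`, `CONTINUOUS`, `cyclical`, `z_score`, and `encode_state` are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Stats:
    """Mean and standard deviation of one feature (hypothetical helper)."""
    mean: float
    std: float


# Continuous features from the table, in an assumed fixed order.
CONTINUOUS = ("private_load", "shared_load", "grid_load",
              "traffic_volume", "temperature")


def cyclical(value: float, period: float) -> tuple[float, float]:
    """Map a periodic value onto the unit circle as (sin, cos)."""
    angle = 2.0 * math.pi * value / period
    return math.sin(angle), math.cos(angle)


def z_score(value: float, stats: Stats) -> float:
    """Standardize a value; return 0.0 for a constant feature."""
    if stats.std == 0.0:
        return 0.0
    return (value - stats.mean) / stats.std


def encode_state(hour: int, day_of_week: int,
                 readings: dict[str, float],
                 stats: dict[str, Stats]) -> list[float]:
    """Build the state vector from raw observations.

    Assumption: day_of_week uses 0 = Monday, so 5 and 6 are the weekend.
    """
    hour_sin, hour_cos = cyclical(hour, 24)
    day_sin, day_cos = cyclical(day_of_week, 7)
    scaled = [z_score(readings[name], stats[name]) for name in CONTINUOUS]
    weekend = 1.0 if day_of_week >= 5 else 0.0
    return [hour_sin, hour_cos, day_sin, day_cos, *scaled, weekend]
```

The sine/cosine pair keeps hour 23 adjacent to hour 0 in feature space, which a raw integer encoding would not, and the z-scores put loads, traffic counts, and temperature on a comparable scale.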

2.3.2. Action Space Design

2.3.3. Reward Function Design

2.3.4. Demand Response Model

2.4. Learning Algorithm

2.5. Baseline Methods

2.6. Evaluation Metrics

2.7. Implementation Details

3. Results and Discussion

3.1. Exploratory Data Analysis

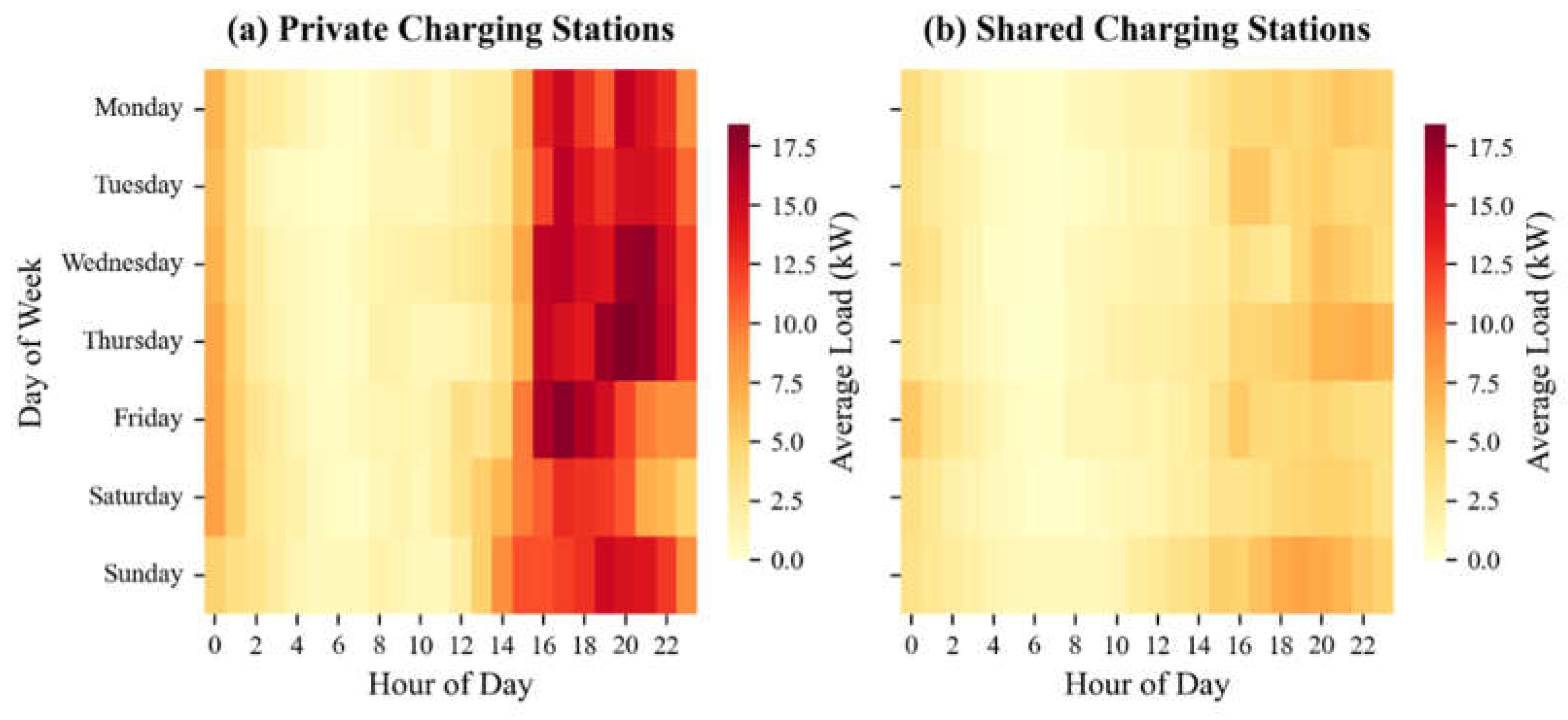

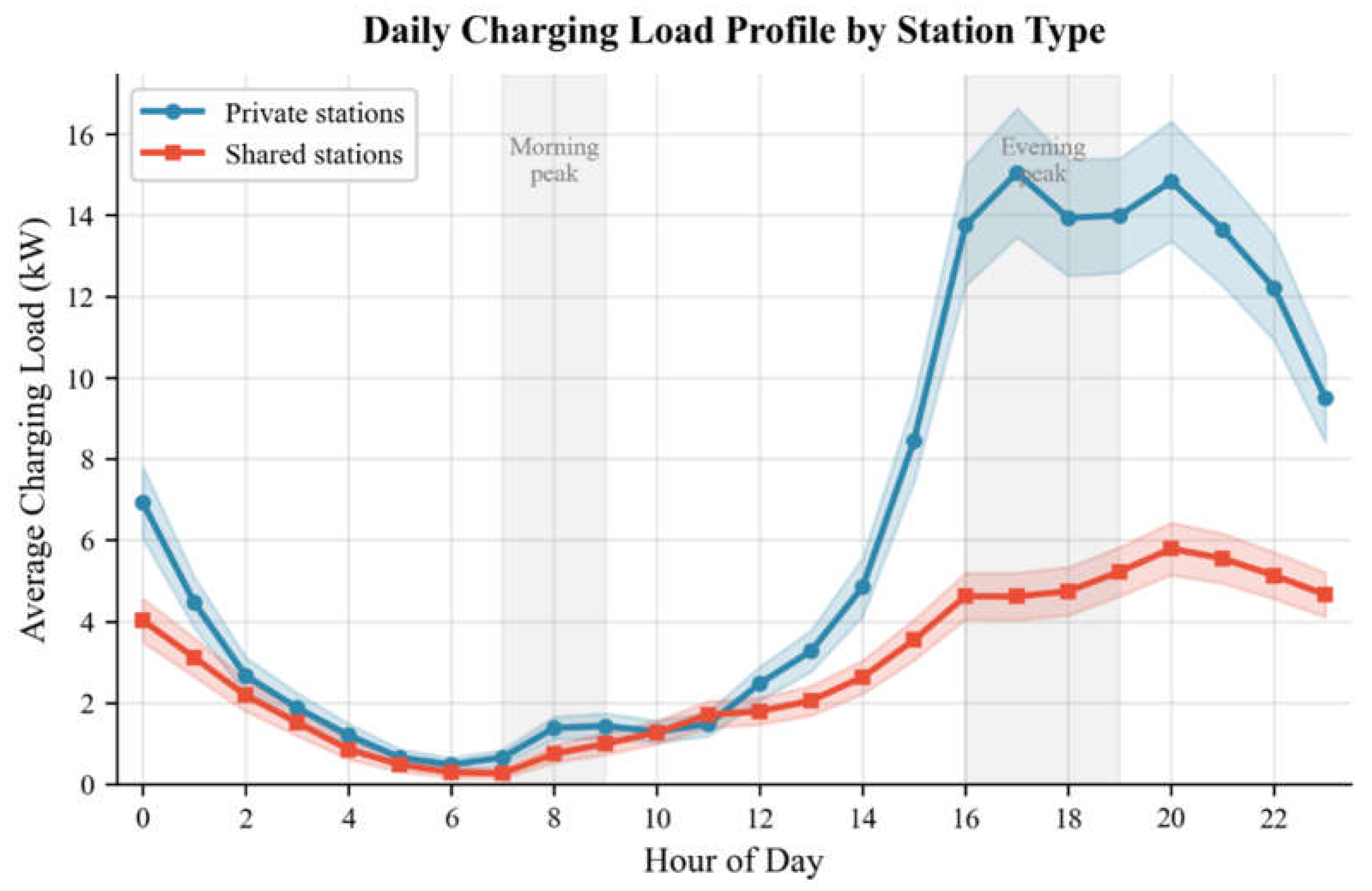

3.1.1. Temporal Charging Patterns

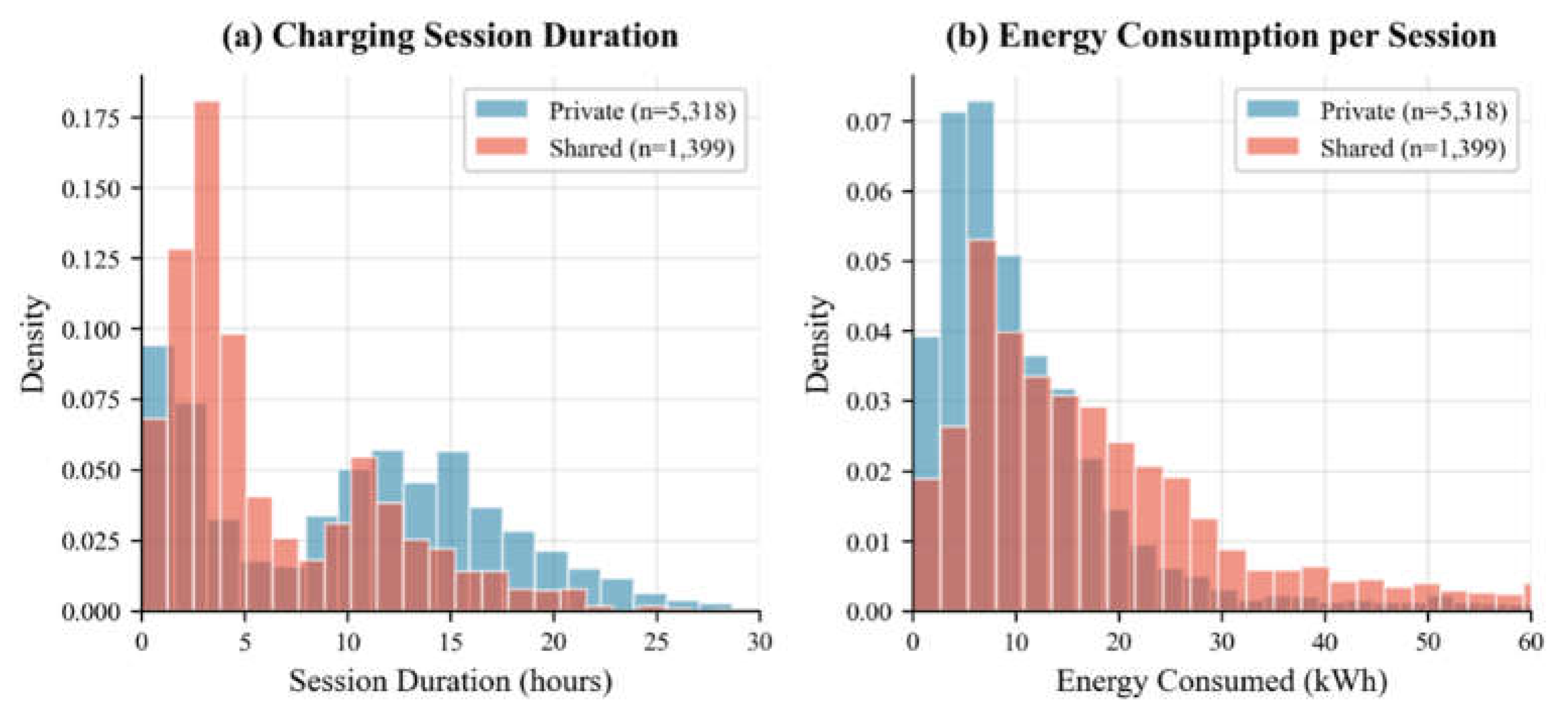

3.1.2. Session Characteristics

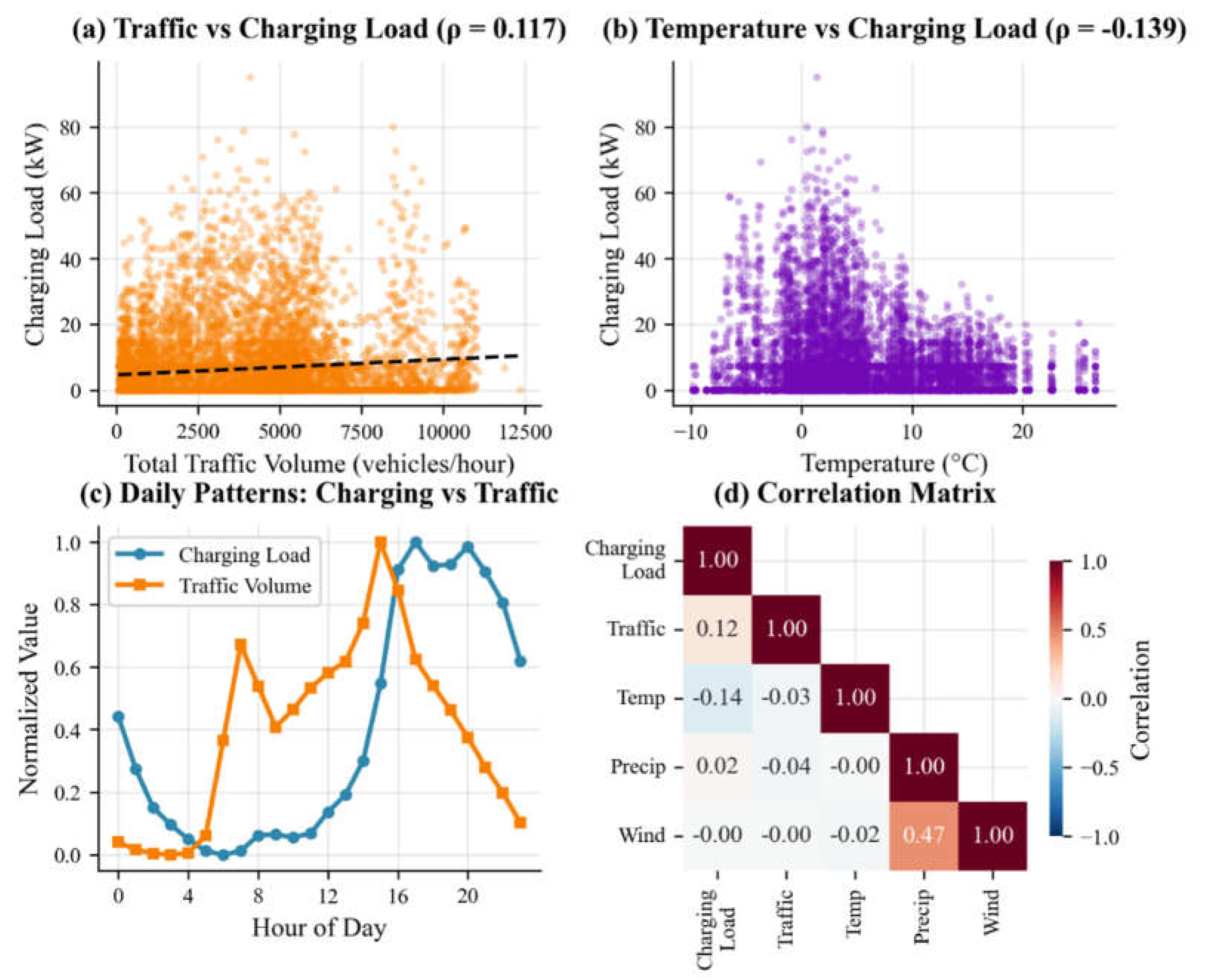

3.1.3. Correlation with External Factors

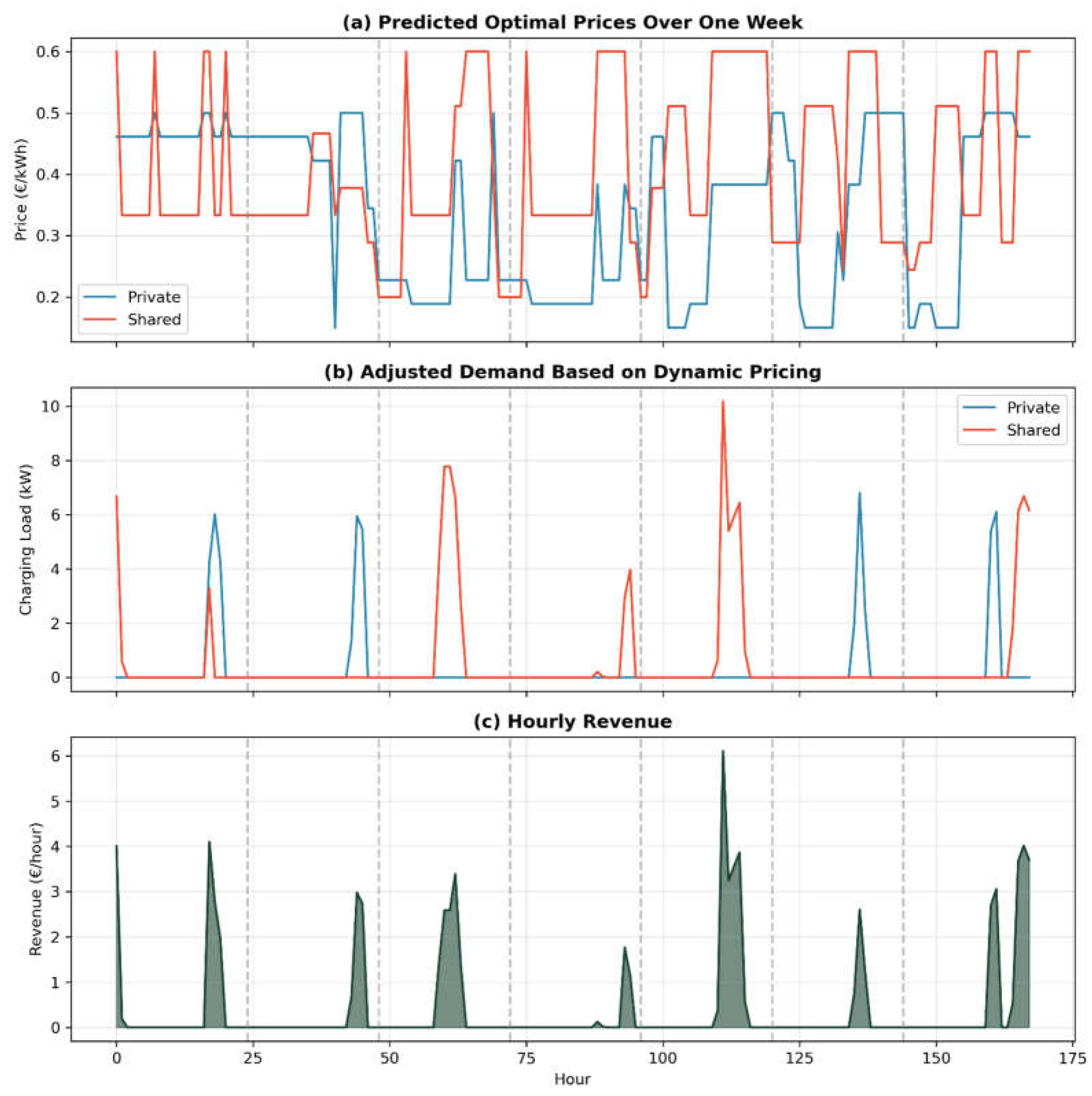

3.2. Learned Pricing Policy Behavior

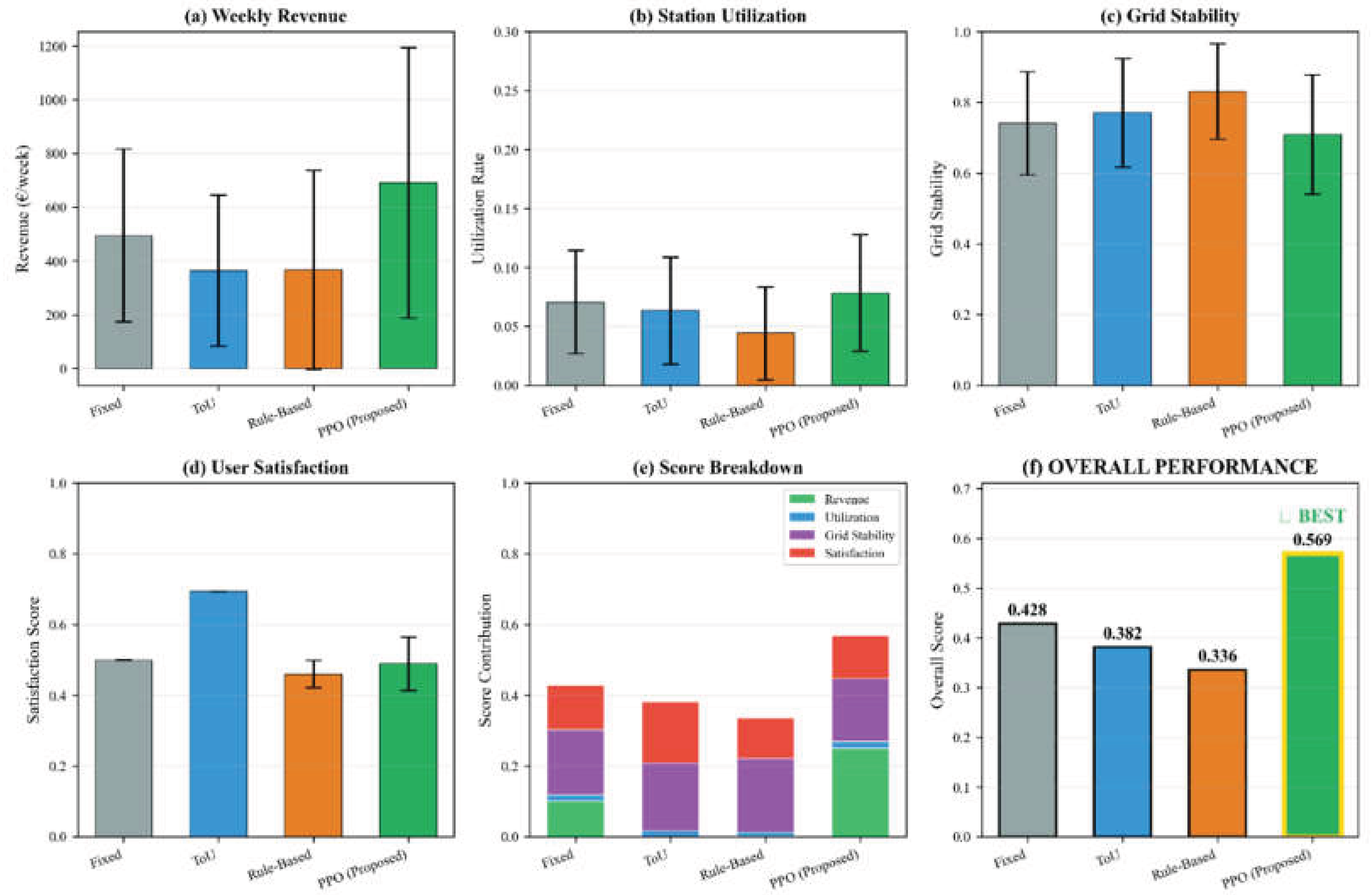

3.3. Comparison of Pricing Methods

3.4. Discussion

4. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| EV | Electric Vehicle |
| RL | Reinforcement Learning |
| ToU | Time-of-Use |
| PPO | Proximal Policy Optimization |
| MDP | Markov Decision Process |


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).