Submitted: 23 March 2026

Posted: 25 March 2026


Abstract

Keywords:

1. Introduction

2. Background and Related Work

2.1. AI Supply Chain Risks

2.2. Cryptographic Foundations of AI Assurance

2.3. Post-Quantum Cryptography Transition Requirements

2.4. Gaps in Existing Frameworks

3. Materials and Methods

3.1. Research Design and Contribution Type

3.2. Analytical Propositions

3.3. Search Strategy

3.4. Eligibility Criteria

3.5. Screening and Selection

3.6. Data Extraction and Coding

3.7. Evidence Confidence Tiers

3.8. Requirements-to-Architecture Traceability

4. Results: Threat and Dependency Analysis

4.1. AI Supply Chain Attack Surface

4.1.1. Training-Time Threats

4.1.2. Ingestion-Time Threats

4.1.3. Deployment-Time Threats

4.2. Cryptographic Dependencies in AI Pipelines

4.2.1. Model Signing and Verification

4.2.2. Dataset Integrity and Lineage

4.2.3. Secure Training and Deployment Pipelines

4.2.4. Federated Learning and Distributed Training

4.3. Lifecycle Vulnerabilities Across AI Supply Chains

4.3.1. Pre-Training

4.3.2. Fine-Tuning

4.3.3. Packaging and Distribution

4.3.4. Deployment and Continuous Learning

4.4. Requirements Derived from Threats and Dependencies

4.4.1. Provenance Requirements

4.4.2. Integrity Requirements

4.4.3. Lifecycle Requirements

4.4.4. Supply Chain Transparency Requirements

5. Results: The MBOM-PQC Schema—A Structured Framework for AI Provenance and Integrity

5.1. Design Principles

5.1.1. Completeness

5.1.2. Verifiability

5.1.3. Cryptographic Durability

5.1.4. Supply Chain Transparency

5.2. Schema Overview and Core Components

5.2.1. Component 1: Model Metadata

5.2.2. Component 2: Pre-Training Dataset Lineage

5.2.3. Component 3: Pre-Trained Model Dependencies

5.2.4. Component 4: Fine-Tuning Artifacts

5.2.5. Component 5: Training Environment and Pipeline

5.2.6. Component 6: Deployment Packaging

5.2.7. Component 7: Cryptographic Integrity Fields

5.3. PQC-Safe Extensions

5.3.1. Hybrid Signature Bundles

5.3.2. PQC-Safe Certificate Chains

5.3.3. Long-Term Integrity Anchors

5.4. Requirements-to-Schema Traceability

5.5. Summary

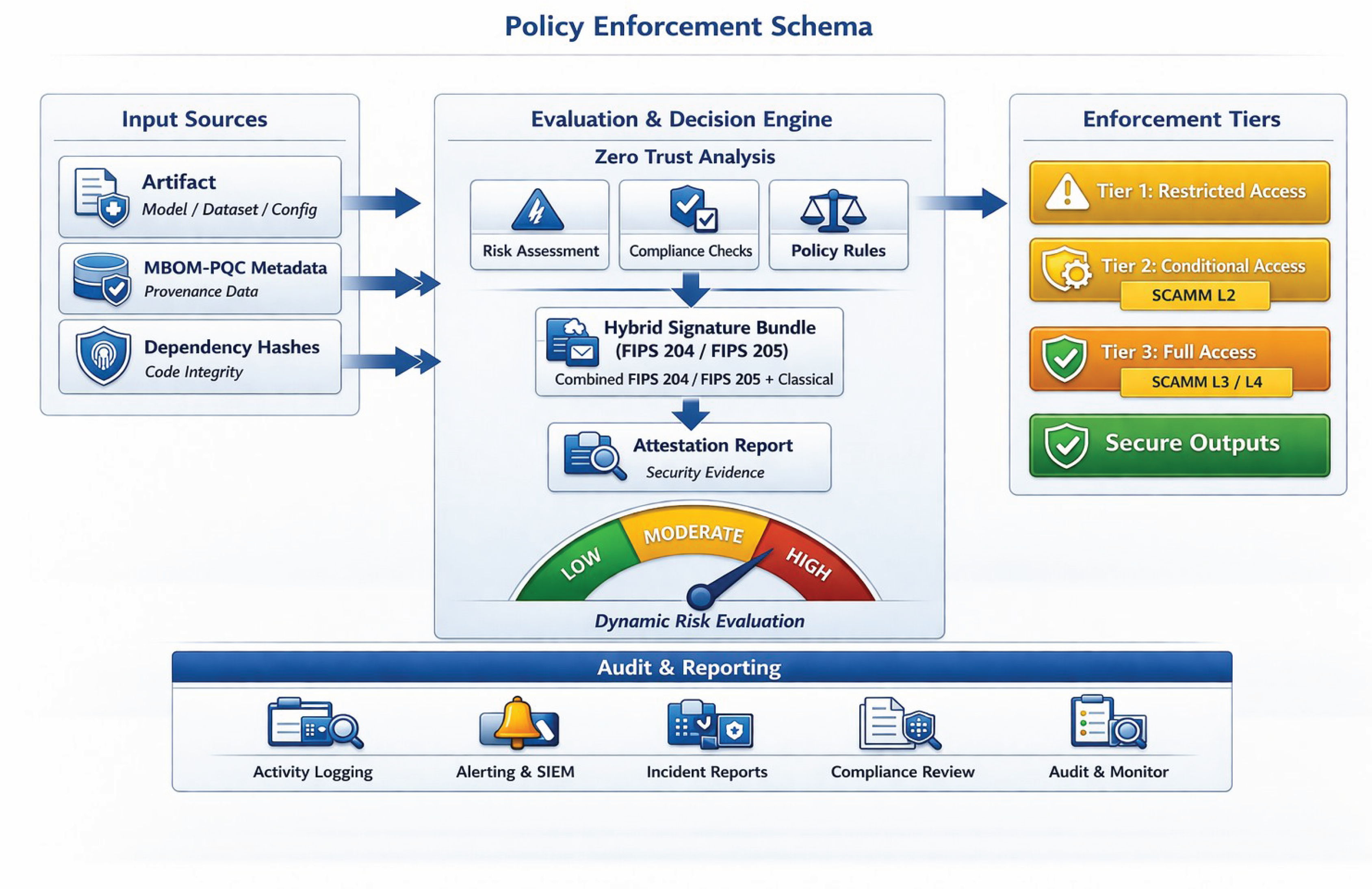

6. Results: PQC-Safe Model Signing and Attestation Pipeline

6.1. Pipeline Overview

6.1.1. Stage 1—Ingestion

6.1.2. Stage 2—Verification

6.1.3. Stage 3—Signing

6.1.4. Stage 4—Attestation

6.1.5. Stage 5—Deployment

6.2. PQC-Safe Signing Flow

6.2.1. Hybrid Mode Signing

6.2.2. FIPS 204 (ML-DSA) Signing for Standard Artifacts

6.2.3. FIPS 205 (SLH-DSA) for Long-Term Artifacts

6.2.4. PQC-Safe Key Management

6.3. Attestation Architecture

6.3.1. Hardware Root of Trust

6.3.2. PQC-Safe Certificate Chains

6.3.3. Remote Attestation

6.4. Integration with Zero Trust Architecture and AI RMF

6.4.1. Zero Trust Architecture Integration

6.4.2. NIST AI RMF Integration

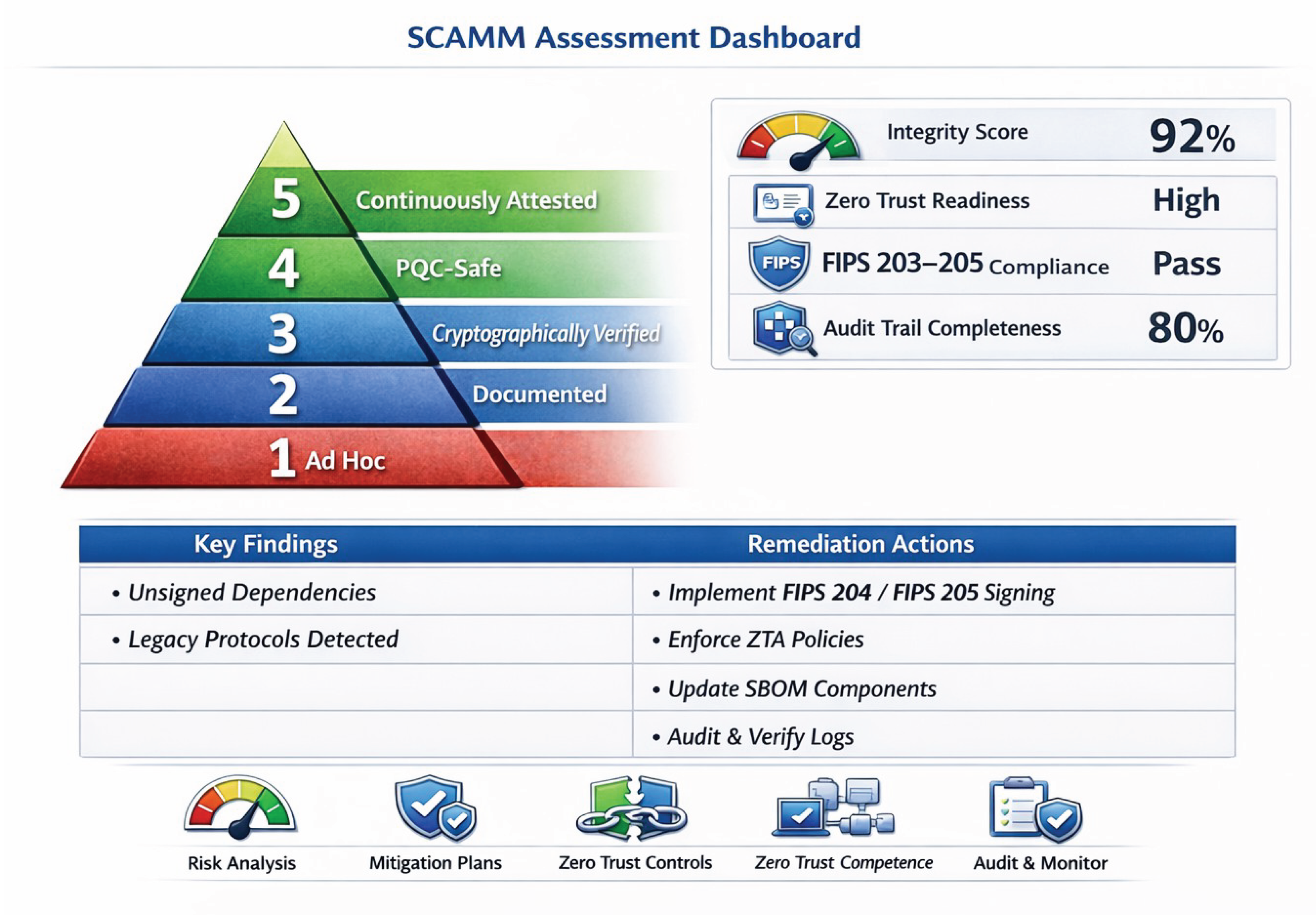

7. Results: Supply Chain Assurance Maturity Model (SCAMM)

7.1. SCAMM Overview

7.2. Maturity Level Definitions

7.3. SCAMM Indicators and Metrics

7.3.1. Provenance Completeness

7.3.2. Cryptographic Integrity

7.3.3. Pipeline Attestation

7.3.4. Lifecycle Governance

7.4. Requirements-to-Maturity Mapping

7.5. Summary

8. Discussion

8.1. Implications for AI Governance and Risk Management

8.2. Integration with Zero Trust Architecture and Enterprise Security

8.3. Implementation Challenges

8.3.1. Performance and Storage Overhead

8.3.2. Legacy System Compatibility

8.3.3. Provenance Completeness

8.3.4. Organizational Maturity and Skill Gaps

8.4. Limitations of the Proposed Framework

8.4.1. Evolving PQC Standards

8.4.2. Lack of Empirical Validation

8.4.3. Dependency on Upstream Transparency

8.4.4. Continuous Learning Complexity

8.5. Opportunities for Future Research

8.6. Summary

9. Conclusions

Supplementary Materials

Funding

Data Availability Statement

Conflicts of Interest

References

- ReversingLabs. Malicious Machine Learning Packages Targeting ML Developers in PyPI; ReversingLabs Threat Research: Boston, MA, USA, 2023.

- PyTorch. TorchServe Security Advisory: Server-Side Request Forgery and Model Loading Vulnerabilities (CVE-2023-43654); PyTorch Foundation: San Francisco, CA, USA, 2023. Available online: https://github.com/pytorch/serve/security/advisories/GHSA-xcvg-c98v-hjqc (accessed on 15 January 2026).

- CISA. Software Supply Chain Attacks: Threat Landscape and Mitigations; Cybersecurity and Infrastructure Security Agency: Washington, DC, USA, 2023.

- MITRE. ATLAS: Adversarial Threat Landscape for Artificial-Intelligence Systems; MITRE Corporation: McLean, VA, USA, 2024. Available online: https://atlas.mitre.org/ (accessed on 15 January 2026).

- NIST. Artificial Intelligence Risk Management Framework (AI RMF 1.0); National Institute of Standards and Technology: Gaithersburg, MD, USA, 2023.

- NIST. Secure Software Development Framework (SSDF), Version 1.1; SP 800-218; National Institute of Standards and Technology: Gaithersburg, MD, USA, 2022.

- NIST. ML-DSA: Module-Lattice-Based Digital Signature Algorithm; FIPS 204; National Institute of Standards and Technology: Gaithersburg, MD, USA, 2024.

- NSA. Commercial National Security Algorithm Suite 2.0 (CNSA 2.0); National Security Agency: Fort Meade, MD, USA, 2022.

- NIST. SLH-DSA: Stateless Hash-Based Digital Signature Algorithm; FIPS 205; National Institute of Standards and Technology: Gaithersburg, MD, USA, 2024.

- Machado, G.R.; Silva, E.; Goldschmidt, R.R. Adversarial Machine Learning in Image Classification: A Survey Toward the Defender’s Perspective. ACM Comput. Surv. 2023, 55, 1–38. [CrossRef]

- Liu, Y.; et al. A Survey on Model Watermarking and Provenance for Deep Learning. IEEE Trans. Neural Netw. Learn. Syst. 2024, 35, 987–1005.

- NIST. ML-KEM: Module-Lattice-Based Key-Encapsulation Mechanism; FIPS 203; National Institute of Standards and Technology: Gaithersburg, MD, USA, 2024.

- NIST. SP 800-204D: Strategies for the Integration of Software Supply Chain Security in DevSecOps CI/CD Pipelines; National Institute of Standards and Technology: Gaithersburg, MD, USA, 2024.

- DoD CDAO. Responsible Artificial Intelligence (RAI) Toolkit; Chief Digital and Artificial Intelligence Office: Arlington, VA, USA, 2024. Available online: https://www.ai.mil/rai.html (accessed on 15 January 2026).

- NIST. SP 800-208: Recommendation for Stateful Hash-Based Signature Schemes (XMSS and LMS); National Institute of Standards and Technology: Gaithersburg, MD, USA, 2020.

- Page, M.J.; McKenzie, J.E.; Bossuyt, P.M.; Boutron, I.; Hoffmann, T.C.; Mulrow, C.D.; Shamseer, L.; Tetzlaff, J.M.; Akl, E.A.; Brennan, S.E.; et al. The PRISMA 2020 Statement: An Updated Guideline for Reporting Systematic Reviews. BMJ 2021, 372, n71. [CrossRef]

- IETF. Hybrid Key Exchange in TLS 1.3; Internet-Draft draft-ietf-tls-hybrid-design-16; Internet Engineering Task Force, 2026. (Work in Progress.) Available online: https://datatracker.ietf.org/doc/draft-ietf-tls-hybrid-design/ (accessed on 15 January 2026).

- IETF. Post-quantum Hybrid ECDHE-MLKEM Key Agreement for TLSv1.3; Internet-Draft draft-ietf-tls-ecdhe-mlkem-04; Internet Engineering Task Force, 2026. (Work in Progress.) Available online: https://datatracker.ietf.org/doc/draft-ietf-tls-ecdhe-mlkem/ (accessed on 15 January 2026).

- Carlini, N.; et al. Poisoning Web-Scale Training Datasets Is Practical. In Proceedings of the 2024 IEEE Symposium on Security and Privacy; IEEE: San Francisco, CA, USA, 2024.

- Goldblum, M.; et al. Dataset Security for Machine Learning: Data Poisoning, Backdoor Attacks, and Defenses. IEEE Trans. Pattern Anal. Mach. Intell. 2023, 45, 1563–1580.

- Wu, B.; Chen, H.; Zhang, M.; Zhu, J.; Wei, S.; Yuan, C.; Shen, C. BackdoorBench: A Comprehensive Benchmark of Backdoor Learning. In Proceedings of the 36th Conference on Neural Information Processing Systems (NeurIPS 2022); Curran Associates: Red Hook, NY, USA, 2022.

- Pearce, A.; et al. Model Inversion, Extraction, and Supply Chain Attacks on Machine Learning Systems. IEEE Trans. Dependable Secur. Comput. 2024, 21, 1123–1138.

- Rieger, P.; Krauß, T.; Miettinen, M.; Dmitrienko, A.; Sadeghi, A.-R. DeepSight: Mitigating Backdoor Attacks in Federated Learning Through Deep Model Inspection. In Proceedings of the 2022 Network and Distributed System Security Symposium (NDSS); Internet Society: San Diego, CA, USA, 2022.

- Red Hat. Securing the Modern Software Supply Chain: AI Models and Container Images; Red Hat Blog: Raleigh, NC, USA, 2025. Available online: https://www.redhat.com/en/blog/securing-modern-software-supply-chain-ai-models-container-images (accessed on 15 January 2026).

- NIST. Zero Trust Architecture; SP 800-207; National Institute of Standards and Technology: Gaithersburg, MD, USA, 2020. [CrossRef]

- NIST. SP 800-193: Platform Firmware Resiliency Guidelines; National Institute of Standards and Technology: Gaithersburg, MD, USA, 2018.

- Google. Secure AI Framework (SAIF); Google: Mountain View, CA, USA, 2023. Available online: https://safety.google/cybersecurity-advancements/saif/ (accessed on 15 January 2026).

- Kumar, R.S.S.; Lopez Munoz, G.; Maitre, M.; Minnich, A.; Chawla, S.; Dheekonda, R.S.R.; Zhang, L.; Siska, C.; Rakshit, S. New Research, Tooling, and Partnerships for More Secure AI and Machine Learning; Microsoft Security Blog: Redmond, WA, USA, 2023. Available online: https://www.microsoft.com/en-us/security/blog/2023/03/02/new-research-tooling-and-partnerships-for-more-secure-ai-and-machine-learning/ (accessed on 15 January 2026).

- NIST. Secure Software Development Practices for Generative AI and Dual-Use Foundation Models: An SSDF Community Profile; SP 800-218A; National Institute of Standards and Technology: Gaithersburg, MD, USA, 2024. Available online: https://csrc.nist.gov/pubs/sp/800/218/a/final (accessed on 15 January 2026).

- NIST. Artificial Intelligence Risk Management Framework: Generative Artificial Intelligence Profile; AI 600-1; National Institute of Standards and Technology: Gaithersburg, MD, USA, 2024.

- NIST. Cybersecurity Supply Chain Risk Management Practices for Systems and Organizations; SP 800-161r1; National Institute of Standards and Technology: Gaithersburg, MD, USA, 2022 (updated 2024). [CrossRef]

- NIST. The NIST Cybersecurity Framework (CSF) 2.0; National Institute of Standards and Technology: Gaithersburg, MD, USA, 2024.

- The White House. Executive Order 14110: Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence; Washington, DC, USA, 30 October 2023.

- NIST. Security and Privacy Controls for Information Systems and Organizations; SP 800-53, Rev. 5; National Institute of Standards and Technology: Gaithersburg, MD, USA, 2020. [CrossRef]

- IETF. Internet X.509 Public Key Infrastructure — Algorithm Identifiers for the Module-Lattice-Based Digital Signature Algorithm (ML-DSA); RFC 9881; Internet Engineering Task Force, 2025. Available online: https://www.rfc-editor.org/rfc/rfc9881 (accessed on 15 January 2026).

- IETF. Composite ML-DSA for Use in X.509 Public Key Infrastructure; Internet-Draft, current IETF LAMPS working-group draft. (Work in Progress.) Available online: https://datatracker.ietf.org/doc/draft-ietf-lamps-pq-composite-sigs/ (accessed on 15 January 2026).

- OWASP. Machine Learning Security Top 10: ML06 — AI Supply Chain Attacks; OWASP Foundation, 2023. Available online: https://owasp.org/www-project-machine-learning-security-top-10/ (accessed on 15 January 2026).

- OWASP. Top 10 for Large Language Model Applications, Version 2025: LLM03 — Supply Chain; OWASP GenAI Security Project, 2025. Available online: https://genai.owasp.org/ (accessed on 15 January 2026).

- ISO/IEC 42001:2023; Information Technology — Artificial Intelligence — Management System; International Organization for Standardization: Geneva, Switzerland, 2023.

- ISO/IEC 23894:2023; Information Technology — Artificial Intelligence — Guidance on Risk Management; International Organization for Standardization: Geneva, Switzerland, 2023.

- CISA. Shifting the Balance of Cybersecurity Risk: Principles and Approaches for Security-by-Design and -Default; Cybersecurity and Infrastructure Security Agency: Washington, DC, USA, April 2023. Available online: https://www.cisa.gov/securebydesign (accessed on 15 January 2026).

- NSA; CISA. Deploying AI Systems Securely: Best Practices for Deploying Secure and Resilient AI Systems; National Security Agency and Cybersecurity and Infrastructure Security Agency, 2024. Available online: https://media.defense.gov/2024/Apr/15/2003439257/-1/-1/0/CSI-DEPLOYING-AI-SYSTEMS-SECURELY.PDF (accessed on 15 January 2026).

- NIST. A Zero Trust Architecture Model for Access Control in Cloud-Native Applications in Multi-Cloud Environments; SP 800-207A; National Institute of Standards and Technology: Gaithersburg, MD, USA, 2023.

- DoD. Data, Analytics, and Artificial Intelligence Adoption Strategy; Department of Defense: Washington, DC, USA, 2023.

- OMB. Memorandum M-23-02: Migrating to Post-Quantum Cryptography; Office of Management and Budget: Washington, DC, USA, 2022. Implements National Security Memorandum 10 (NSM-10).

- OWASP Foundation; Ecma TC54. CycloneDX Bill of Materials Standard (ECMA-424); 2023. Available online: https://cyclonedx.org/specification/overview/ (accessed on 15 January 2026).

- Gu, T.; Dolan-Gavitt, B.; Garg, S. BadNets: Identifying Vulnerabilities in the Machine Learning Model Supply Chain. IEEE Access 2019, 7, 47230–47244. [CrossRef]

- Li, Y.; Lyu, X.; Koren, N.; Lyu, L.; Li, B.; Ma, X. Anti-Backdoor Learning: Training Clean Models on Poisoned Data. In Proceedings of the 35th Conference on Neural Information Processing Systems (NeurIPS 2021); Curran Associates: Red Hook, NY, USA, 2021.

- Ohm, M.; Plate, H.; Sykosch, A.; Meier, M. Backstabber’s Knife Collection: A Review of Open Source Software Supply Chain Attacks. In Proceedings of the Detection of Intrusions and Malware, and Vulnerability Assessment (DIMVA 2020); Springer: Cham, Switzerland, 2020.

- Ladisa, P.; Plate, H.; Martinez, M.; Barber, O. A Taxonomy of Attacks on Open-Source Software Supply Chains. In Proceedings of the 2023 IEEE Symposium on Security and Privacy; IEEE: San Francisco, CA, USA, 2023.

- PyTorch Foundation. Compromised PyTorch-nightly Dependency Chain Between December 25th and December 30th, 2022; PyTorch Blog, December 2022. Available online: https://pytorch.org/blog/compromised-nightly-dependency/ (accessed on 15 January 2026).

- Hugging Face. Hub Security Documentation: Pickle Scanning, Malware Scanning, and Repository Trust Controls; 2024. Available online: https://huggingface.co/docs/hub/en/security (accessed on 15 January 2026).

- Ultralytics. GitHub Issue #18027: Published Wheel 8.3.41 Contained Code Not Present in GitHub and Appeared to Invoke an XMRig Miner; December 2024. Available online: https://github.com/ultralytics/ultralytics/issues/18027 (accessed on 15 January 2026).

- Zhu, J.; et al. Models Are Codes: Towards Measuring Malicious Code Poisoning Attacks on Pre-trained Model Hubs. In Proceedings of the 39th IEEE/ACM International Conference on Automated Software Engineering (ASE 2024); ACM: Sacramento, CA, USA, 2024.

| Requirement | Threat Source | MBOM-PQC Schema Component |
|---|---|---|
| Dataset poisoning detection | Training-time attacks (Section 4.1.1) | C2: Pre-Training Dataset Lineage |
| Model swap prevention | Ingestion-time attacks (Section 4.1.2) | C3: Pre-Trained Model Dependencies |
| Fine-tuning tampering detection | Training-time and ingestion-time threats (Section 4.1.1, Section 4.1.2) | C4: Fine-Tuning Artifacts |
| Pipeline integrity | Pipeline compromise (Section 4.2.3) | C5: Training Environment and Pipeline |
| PQC-safe integrity | Quantum-enabled forgery (Section 4.2.1) | C7: Cryptographic Integrity Fields |
| Lifecycle transparency | Multi-stage supply chain (Section 4.3) | All components (C1–C7) |
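
The traceability table above can be read in both directions: from a requirement to the schema components that address it, and from a component back to the requirements that motivate it. The following is a minimal Python sketch of that lookup, not part of the MBOM-PQC schema itself. The names `TRACEABILITY`, `requirements_for`, and `only_catch_all` are hypothetical and introduced purely for illustration; the row contents are copied from the table.

```python
# Illustrative encoding of the requirements-to-schema traceability table.
# All identifiers here are hypothetical; only the row contents come from the table.

ALL_COMPONENTS = [f"C{i}" for i in range(1, 8)]  # C1..C7, the seven schema components

# Requirement -> schema components that address it (one entry per table row).
TRACEABILITY = {
    "Dataset poisoning detection": ["C2"],
    "Model swap prevention": ["C3"],
    "Fine-tuning tampering detection": ["C4"],
    "Pipeline integrity": ["C5"],
    "PQC-safe integrity": ["C7"],
    "Lifecycle transparency": ALL_COMPONENTS,
}


def requirements_for(component: str) -> list[str]:
    """Return the requirements traced to a component, in table order."""
    return [req for req, comps in TRACEABILITY.items() if component in comps]


def only_catch_all() -> list[str]:
    """Return components traced only through the 'All components' row."""
    return [
        comp
        for comp in ALL_COMPONENTS
        if requirements_for(comp) == ["Lifecycle transparency"]
    ]


if __name__ == "__main__":
    print(requirements_for("C7"))
    print(only_catch_all())
```

Under this encoding, C1 (Model Metadata) and C6 (Deployment Packaging) are reached only through the lifecycle transparency row, which is one way to spot components without a dedicated threat-derived requirement in the table.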

| Requirement | SCAMM Level | Rationale |
|---|---|---|
| Dataset lineage | Levels 2–5 | Required for poisoning detection across all lifecycle stages (Section 4.1.1, Section 4.3.1) |
| PQC-safe signatures | Levels 4–5 | Required for PQC-safe integrity; driven by emerging CNSA 2.0 and federal PQC transition timelines (Section 4.2.1, Section 4.4.2) |
| Pipeline attestation | Levels 3–5 | Required for supply chain transparency and deployment-time tampering detection (Section 4.1.3, Section 4.4.4) |
| Continuous verification | Level 5 | Required for Zero Trust alignment and continuous learning integrity (Section 4.4.4; continuous learning lifecycle context: Section 4.3.4) |
| Hybrid signature modes | Level 4 | Required during PQC transition to maintain backward verifier compatibility (Section 4.2.1, Section 5.3.1, Section 6.2.1) |
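
To make the level ranges in the mapping above concrete, the sketch below lists which requirements apply at a given SCAMM level. It is an illustrative reading of the table rather than a normative definition. The five-level scale (1 to 5) is an assumption inferred from the ranges shown, and the names `REQUIREMENT_LEVELS` and `requirements_at` are hypothetical.

```python
# Illustrative lookup of the requirements-to-maturity mapping.
# Assumption: SCAMM levels run from 1 to 5; the table only names levels 2 through 5.

SCAMM_LEVELS = range(1, 6)

# Requirement -> levels at which the table marks it as required.
REQUIREMENT_LEVELS = {
    "Dataset lineage": range(2, 6),          # Levels 2-5
    "PQC-safe signatures": range(4, 6),      # Levels 4-5
    "Pipeline attestation": range(3, 6),     # Levels 3-5
    "Continuous verification": range(5, 6),  # Level 5
    "Hybrid signature modes": range(4, 5),   # Level 4 only
}


def requirements_at(level: int) -> list[str]:
    """Return the requirements the table associates with a SCAMM level."""
    if level not in SCAMM_LEVELS:
        raise ValueError(f"SCAMM level must be between 1 and 5, got {level}")
    return [
        name for name, levels in REQUIREMENT_LEVELS.items() if level in levels
    ]


if __name__ == "__main__":
    for level in SCAMM_LEVELS:
        print(level, requirements_at(level))
```

Read this way, hybrid signature modes appear at Level 4 but not at Level 5, consistent with the table's rationale that they are required during the PQC transition.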

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).