Submitted:

21 March 2026

Posted:

24 March 2026

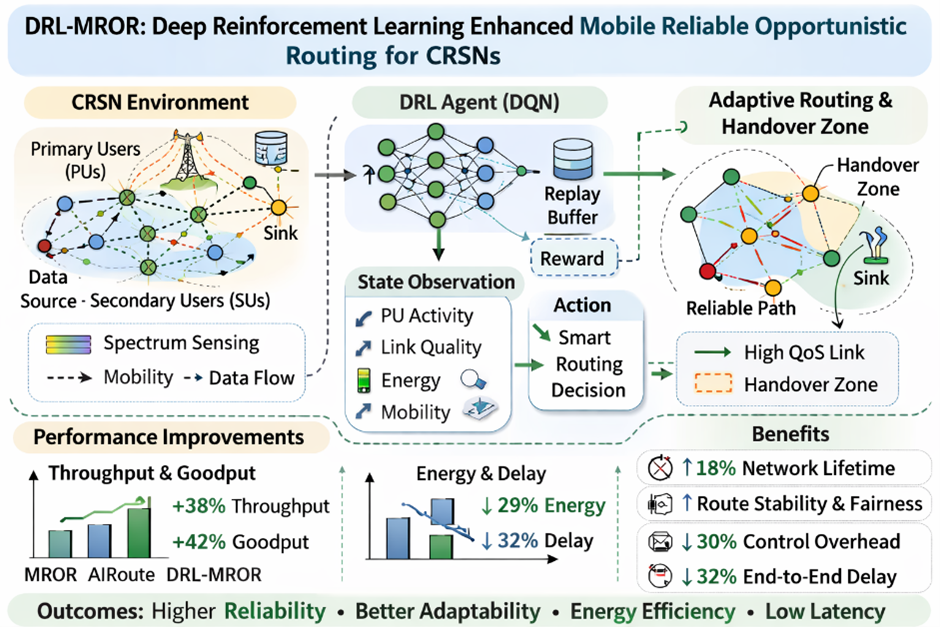


Abstract

Keywords:

1. Introduction

2. Related Work

2.1. AI-Driven Routing in Dynamic Networks

2.2. Predictive Networking with Graph Neural Networks

2.3. Energy-Efficient and Reliable Forwarding

2.4. Positioning of DRL-MROR

3. System and Network Model

3.1. Formal Derivation of Spatio-Temporal Channel Availability

3.2. Analytical Model for Mobility-Induced Link Lifetime

4. DRL-MROR: Framework Design and MDP Formulation

4.1. Markov Decision Process (MDP) Formulation and Convergence Analysis

4.2. Problem Formulation as MDP

- : Do not forward,

- : Forward on channel ,

- : Request re-routing.

- . (tuned weights),

- , , : normalization constants.
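The three action types listed in this subsection (do not forward, forward on a channel, request re-routing) can be sketched as a flat discrete action index, which is the form a DQN output layer usually needs. This is only an illustrative encoding: the channel count of 8 comes from the simulation setup, while the index ordering and the names `Action`, `decode_action`, and `action_space_size` are assumptions, not the authors' implementation.

```python
from dataclasses import dataclass
from typing import Optional

NUM_CHANNELS = 8  # C in the simulation setup


@dataclass(frozen=True)
class Action:
    kind: str                      # "hold", "forward", or "reroute" (hypothetical labels)
    channel: Optional[int] = None  # only set for "forward"


def action_space_size(num_channels: int = NUM_CHANNELS) -> int:
    # One "do not forward" action, one "forward" action per channel,
    # and one "request re-routing" action.
    return num_channels + 2


def decode_action(index: int, num_channels: int = NUM_CHANNELS) -> Action:
    """Map a flat DQN output index to a structured action (assumed ordering)."""
    if index == 0:
        return Action("hold")
    if 1 <= index <= num_channels:
        return Action("forward", channel=index - 1)
    if index == num_channels + 1:
        return Action("reroute")
    raise ValueError(f"action index {index} out of range")
```

With 8 channels this yields 10 discrete actions, so the Q-network would output one value per index.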

4.3. Comprehensive Energy Consumption Model

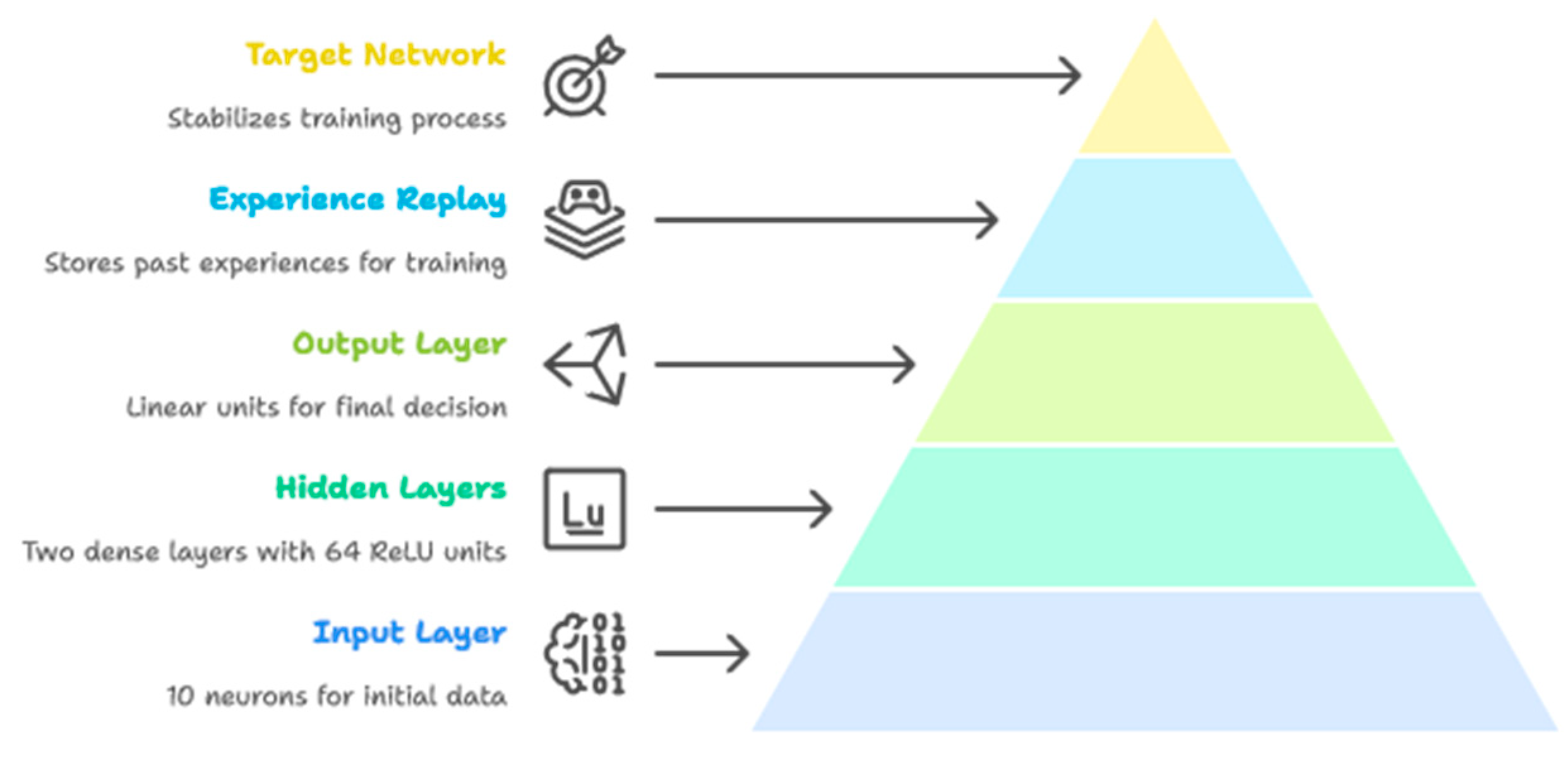

5. Deep Q-Network Implementation

5.1. Neural Network Architecture

5.2. Experience Replay and Training

5.3. Distributed Execution

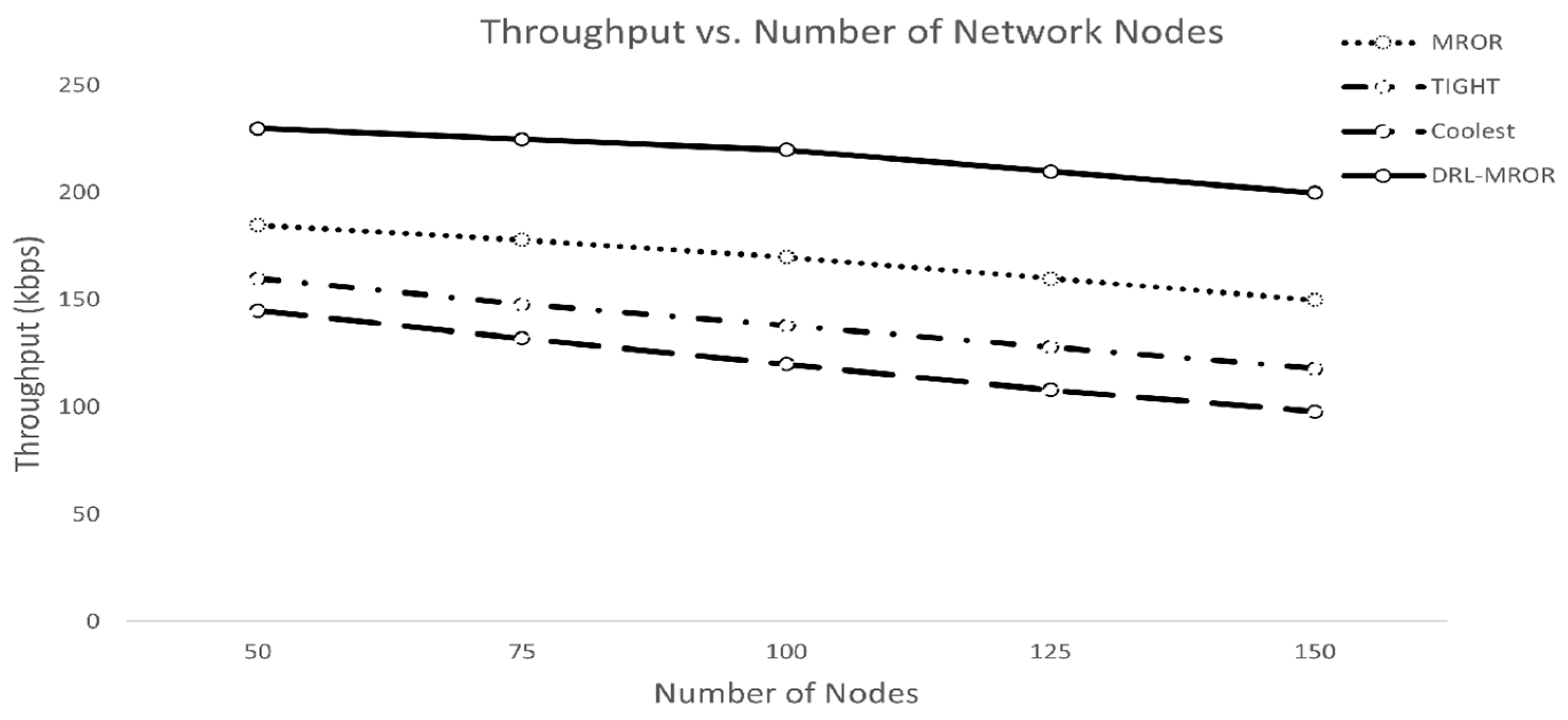

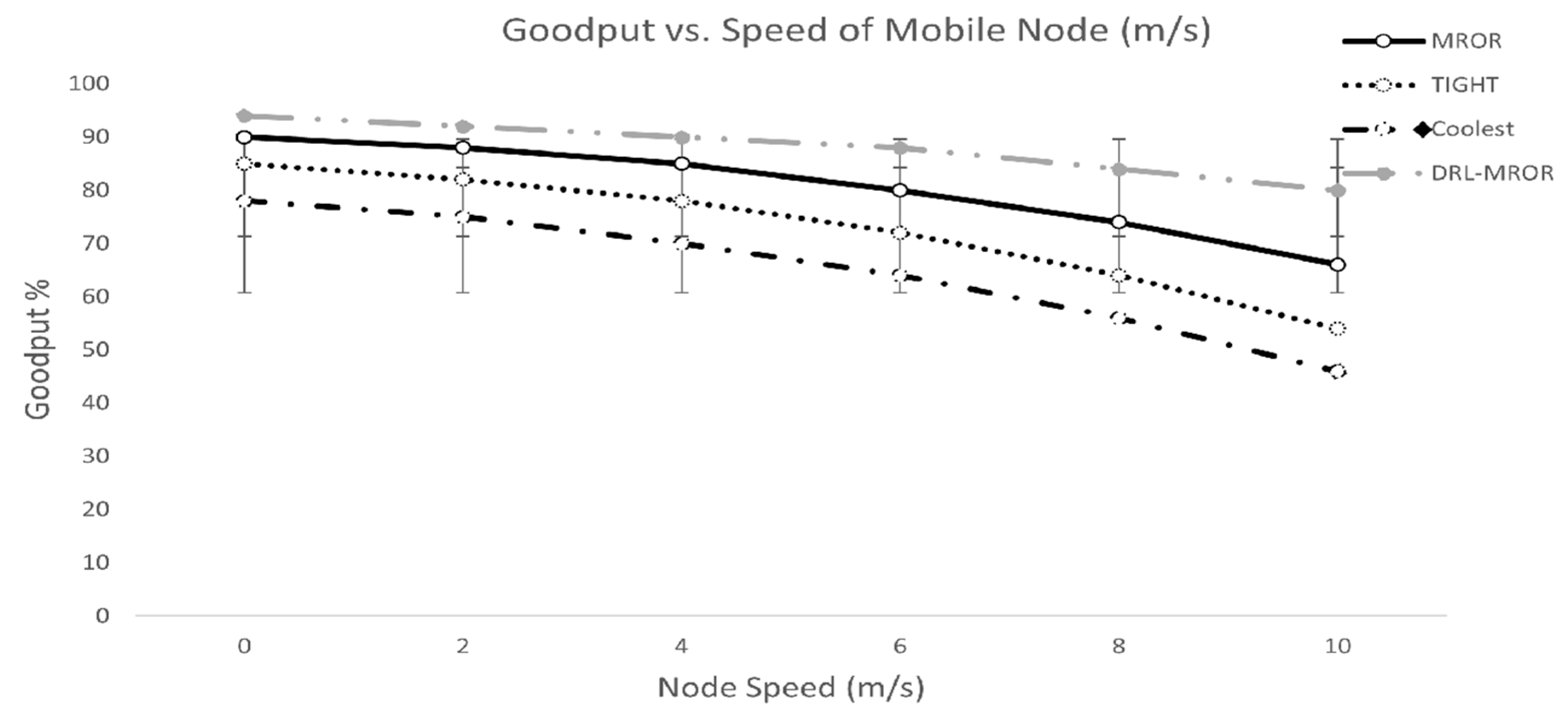

6. Performance Evaluation

6.1. Simulation Setup

| Parameter | Value |
| --- | --- |
| Number of SUs | 50–150 |
| Channels (C) | 8 |
| Bandwidth per channel | 1 MHz |
| PU activity pattern | Semi-Markov (ON/OFF) |
| | 0.3 |
| | 0.2 |
| Transmission range | 50 m |
| Keep-out radius | 70 m |
| Packet size | 128 bytes |
| Traffic model | CBR, 4 pkt/s/node |
| Mobility speed | 0–10 m/s |
| Energy model | First-order radio model [19] |
| Simulated area | 1000 × 1000 m² |
| Simulator | NS-3.30 + Python API (via ns3gym) |
| DRL framework | PyTorch 2.1 |

6.2. Baseline Protocols

6.3. Results and Analysis

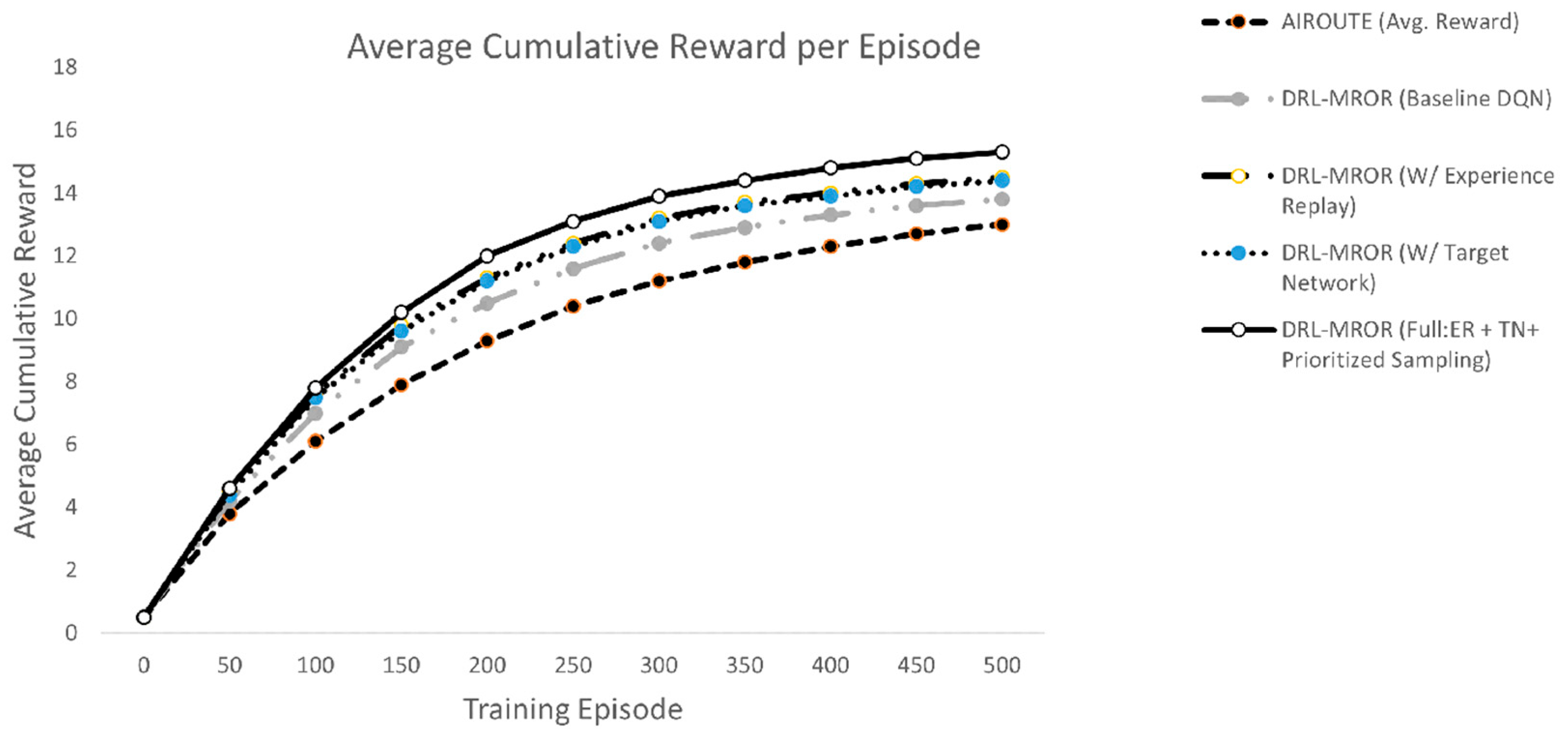

| Configuration | Description |
| --- | --- |
| AIRoute | State-of-the-art DRL-based routing using graph reinforcement learning for decentralized ad hoc networks [14]. Uses basic DQN with fixed exploration decay. |
| DRL-MROR (Baseline DQN) | Our agent without advanced training techniques. Serves as a baseline. |
| + Experience Replay (ER) | Stores past transitions in a replay buffer to break correlation and improve sample efficiency. |
| + Target Network (TN) | Uses a separate target network to stabilize Q-value updates and prevent oscillations. |
| Full: ER + TN + Prioritized Sampling | Combines all three techniques: experience replay, target network, and prioritized experience replay (focusing on high-error transitions). This is the final proposed model. |
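To make the "prioritized sampling" row concrete, the following is a minimal sketch of a proportional prioritized replay buffer using only the Python standard library. It is not the authors' code: the class name, the exponents `alpha` and `beta`, and the small constant `eps` are assumed illustrative values, and a practical implementation would typically use a sum-tree for efficiency.

```python
import random
from collections import namedtuple

Transition = namedtuple("Transition", "state action reward next_state done")


class PrioritizedReplayBuffer:
    """Proportional prioritized replay (list-based, O(n) sampling)."""

    def __init__(self, capacity, alpha=0.6, eps=1e-3):
        self.capacity = capacity
        self.alpha = alpha      # how strongly priorities skew sampling (assumed value)
        self.eps = eps          # keeps every priority strictly positive
        self.data = []
        self.priorities = []
        self.pos = 0            # next slot to overwrite once the buffer is full

    def push(self, transition):
        # New transitions receive the current maximum priority so that
        # they are likely to be replayed before their TD error is known.
        priority = max(self.priorities, default=1.0)
        if len(self.data) < self.capacity:
            self.data.append(transition)
            self.priorities.append(priority)
        else:
            self.data[self.pos] = transition
            self.priorities[self.pos] = priority
        self.pos = (self.pos + 1) % self.capacity

    def sample(self, batch_size, beta=0.4, rng=random):
        scaled = [p ** self.alpha for p in self.priorities]
        total = sum(scaled)
        probs = [s / total for s in scaled]
        idxs = rng.choices(range(len(self.data)), weights=probs, k=batch_size)
        # Importance-sampling weights correct the bias of non-uniform sampling;
        # they are normalized by the largest weight in the batch.
        n = len(self.data)
        weights = [(n * probs[i]) ** (-beta) for i in idxs]
        max_w = max(weights)
        weights = [w / max_w for w in weights]
        return idxs, [self.data[i] for i in idxs], weights

    def update_priorities(self, idxs, td_errors):
        # Transitions with a larger absolute TD error are replayed more often.
        for i, err in zip(idxs, td_errors):
            self.priorities[i] = abs(err) + self.eps
```

In a training loop, the returned weights would scale each sample's loss, and `update_priorities` would be called with the fresh TD errors after every gradient step.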

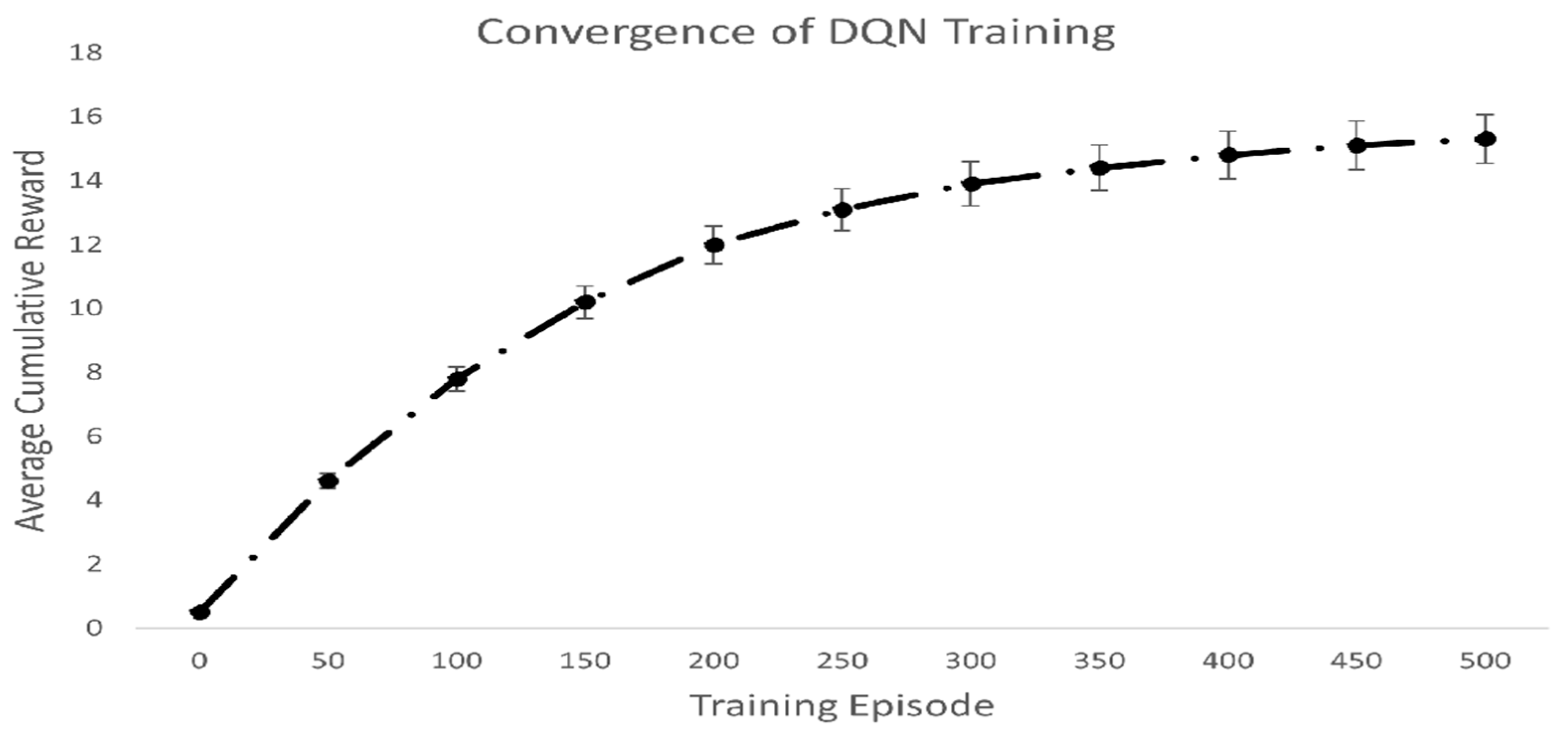

- Accelerated Convergence: The full DRL-MROR configuration converges faster and to a higher reward (~15.3) than AIRoute (~13.0 at episode 500).

- Stability: The combination of experience replay and target networks reduces reward oscillation.

- Superior Final Performance: The full DRL-MROR model achieves ~18% higher cumulative reward than AIRoute by the end of training.

- Impact of Components: Each added component (ER, TN, Prioritization) provides a measurable performance boost, justifying their inclusion.

-

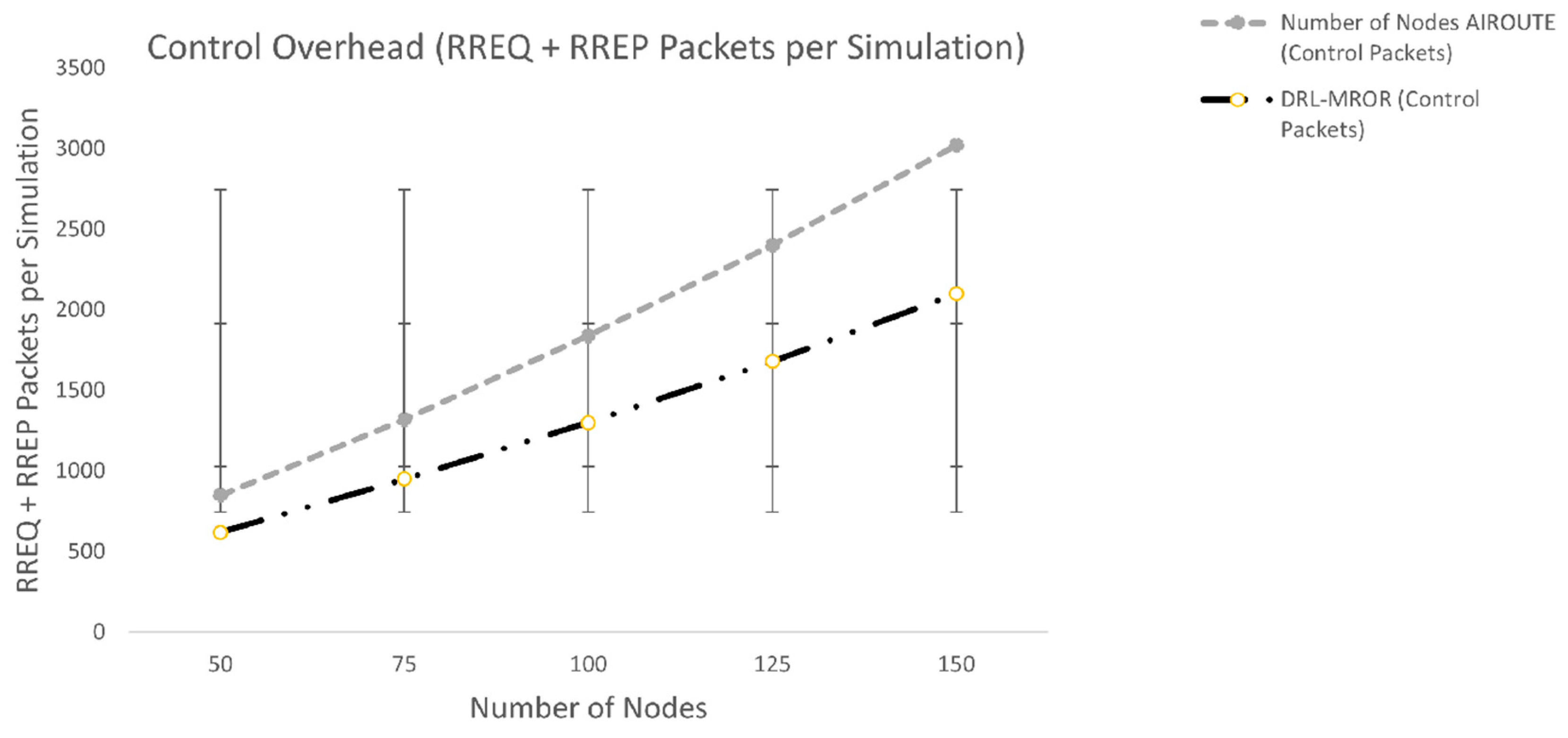

DRL-MROR has a ~30% reduction in control packets compared to AIRoute. This gain comes from:

- ○

- Proactive route maintenance using learned policies.

- ○

- Reduced need for route rediscovery due to better link stability prediction.

- ○

- Efficient VCG formation based on predicted receiver reliability.

- Trend: As the number of nodes increases, network density rises, leading to more route conflicts and discoveries. While both protocols see an increase in control traffic, DRL-MROR scales more efficiently due to its adaptive decision-making.

-

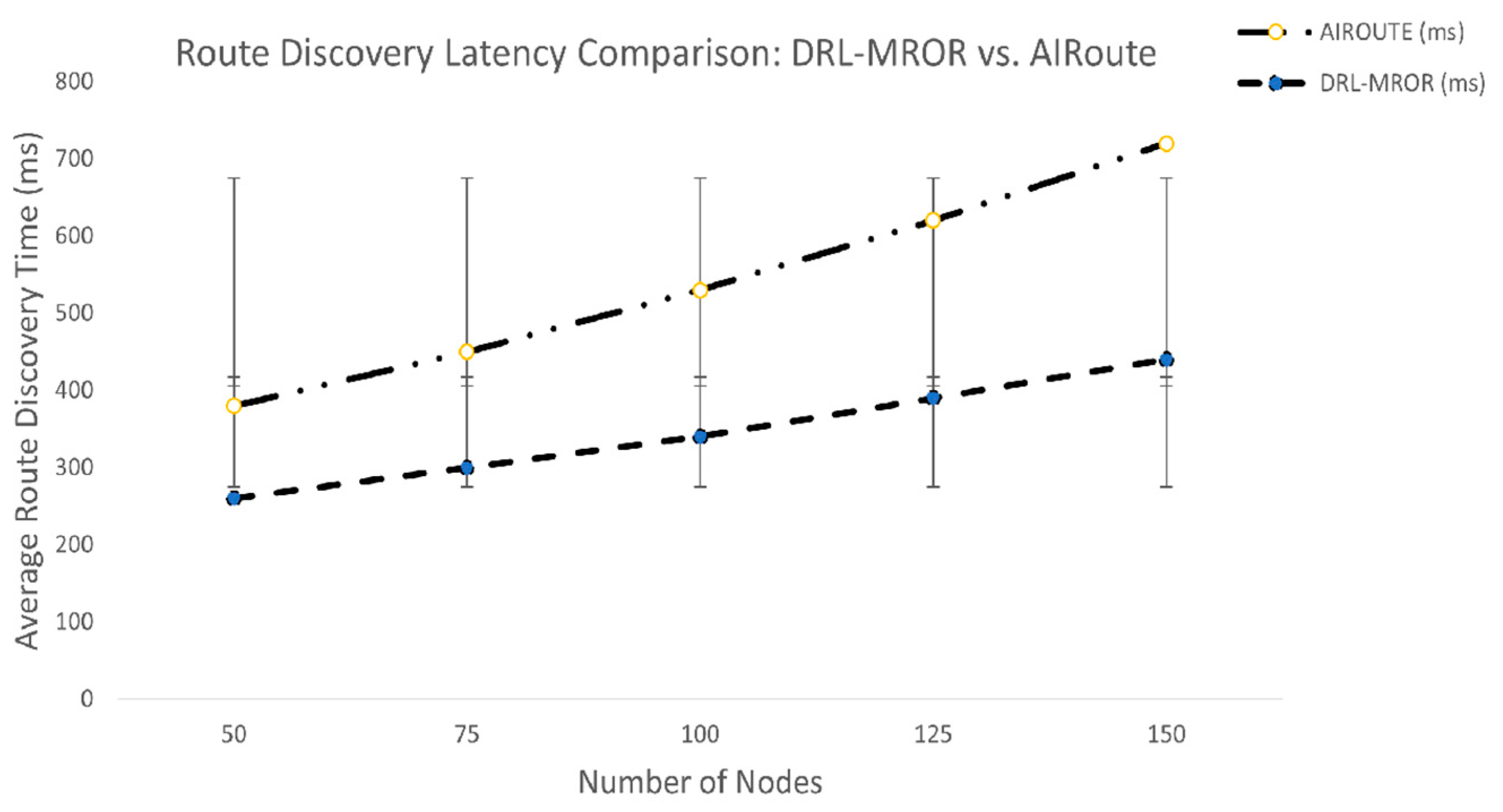

DRL-MROR achieves a ~30-40% reduction in route discovery time compared to AIRoute. This gain comes from:

- ○

- Predictive Forwarding: The DQN agent learns which neighbors are likely to have stable links and better connectivity to the sink, reducing the need for extensive flooding.

- ○

- Reduced Contention: With the receivers being prioritized by estimated stability (in lieu of fixed rules), a smaller contention window is solved more quickly and thus the packets are served quicker.

- ○

- Reduced Rediscoveries: The stability in the routes implies that fewer broken routes are experienced and therefore, the nodes do not spend much time rediscovering new routes.

- Trend: The more nodes in the network, the higher the density, and this might result in longer delays since the network is congested and collides as well. Although in both protocols the time of discovery increases, DRL-MROR is more efficient in its scaling due to the opportunity of the artificial intelligence agent to refine its strategy to evade the crowded locations and to choose better-quality paths.

-

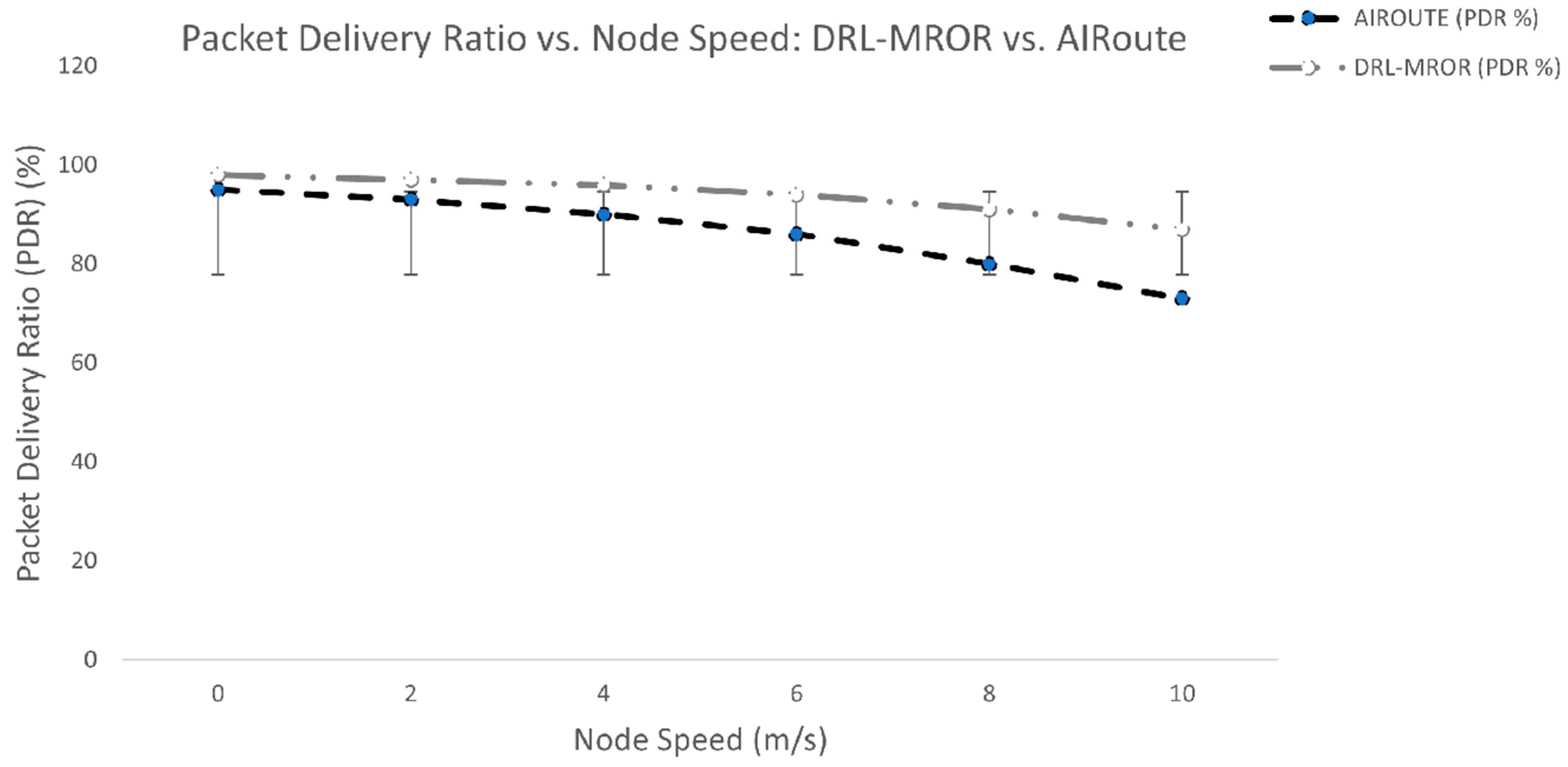

DRL-MROR optimizes a relative improvement of PDR over AIRoute by a factor of 10-15% at maximum all speeds. This gain comes from:

- ○

- Proactive Handover Management: The DQN agent is trained to forecast link partitions and initiate handovers through VMH zones before disconnections happen.

- ○

- Intelligent Channel Switching: The agent does not use channels that are likely to be used by PUs or have any interference and minimize the packet loss during transmission.

- ○

- Stable VCG Formation: The forwarding path is also stable over time as nodes change position as a result of the priorities placed on receivers with greater predicted stability (through learned metrics as opposed to fixed mguard).

- Trend: The higher the node speed, the shorter the durations of links become, which results in an increased frequency of route failures and packet loss. Whereas both protocols experience a reduction in PDR, the DRL-MROR decays with greater grace because it is predictive and adaptive.

-

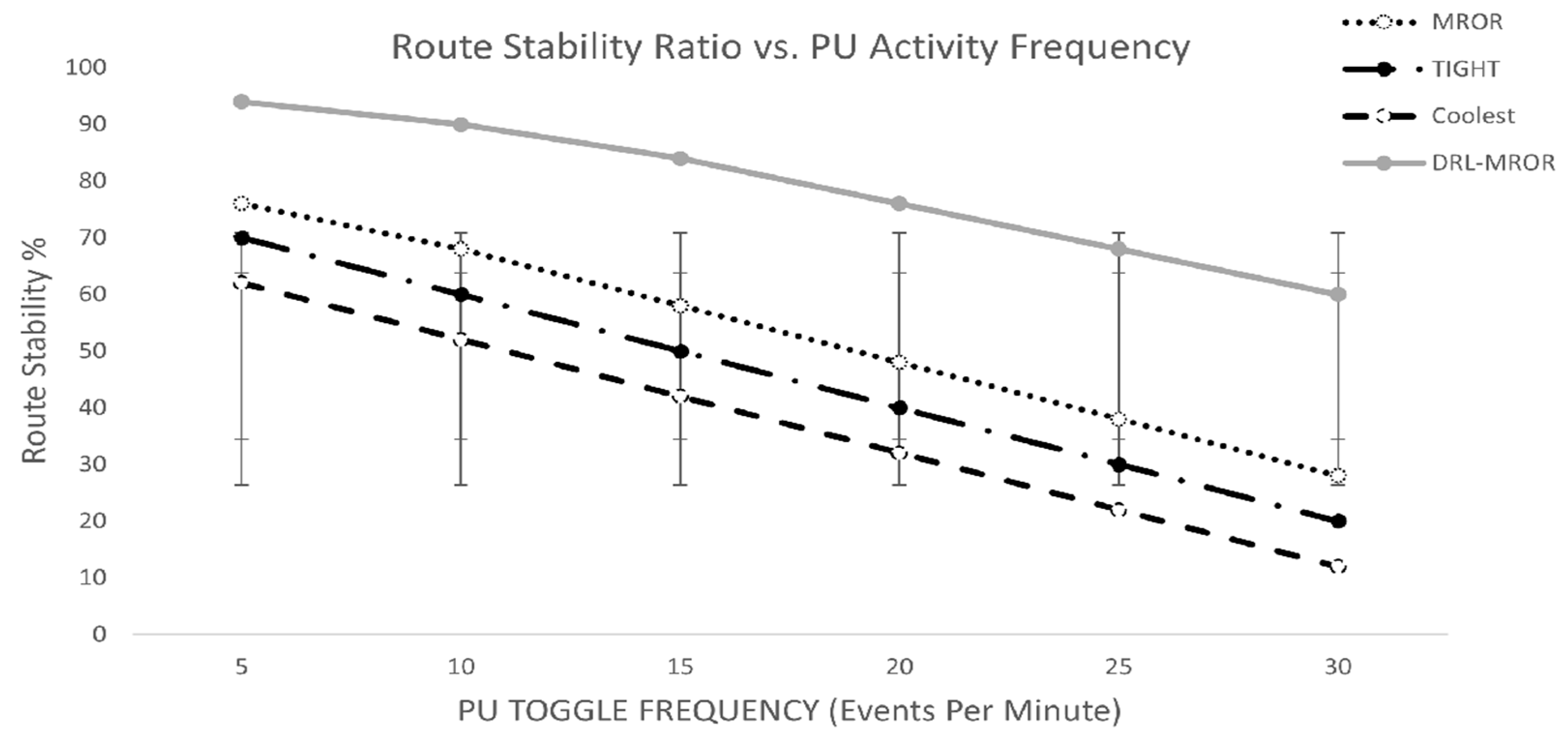

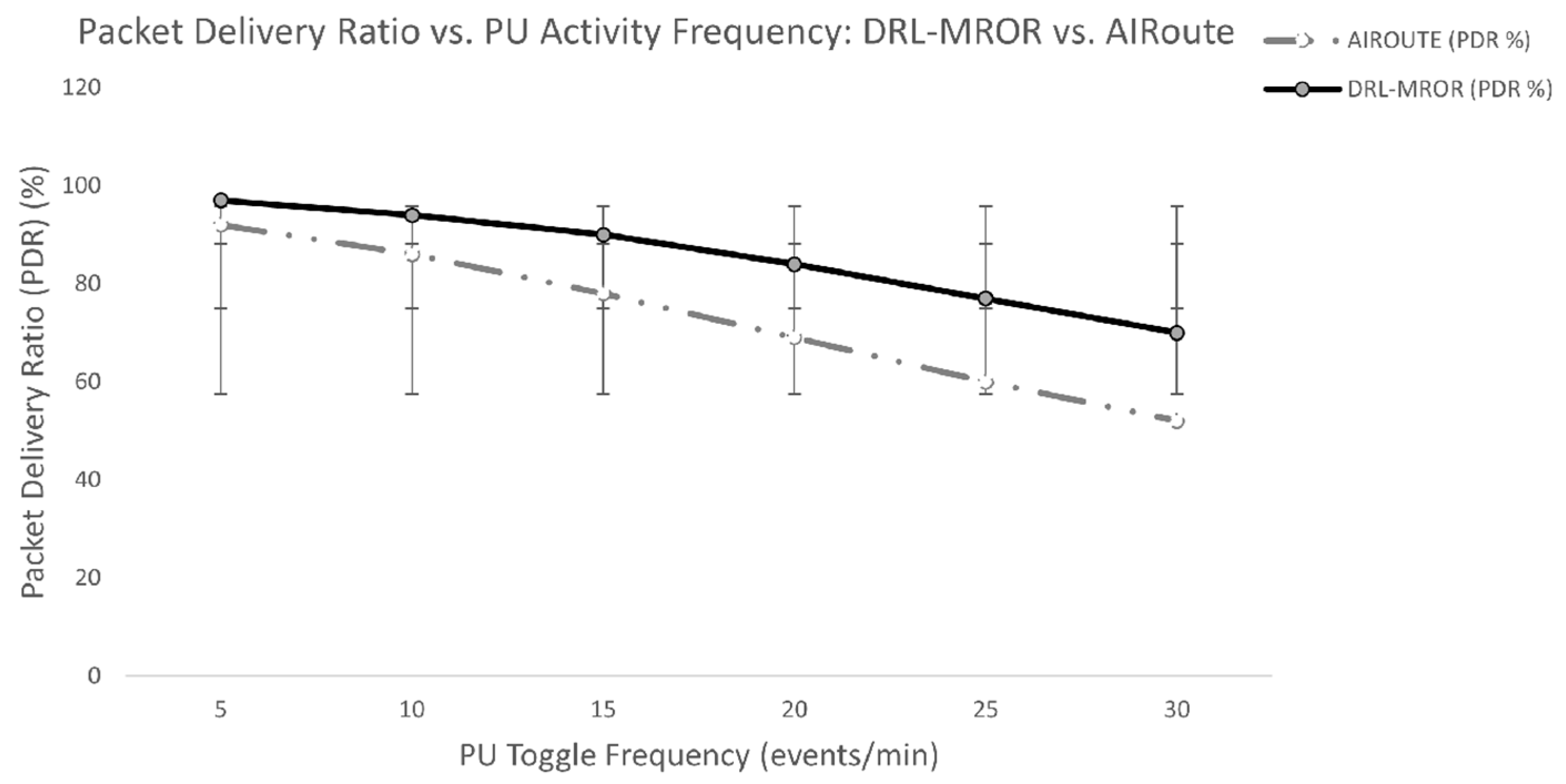

DRL-MROR achieves a relative improvement of PDR over AIRoute by a factor of ~10-15% at maximum frequencies. This gain comes from:

- ○

- Spectrum Availability Prediction: The DQN agent models the temporal dynamics of PU activity based on a history of sensing data and can be used to evade channels that are probably occupied shortly.

- ○

- Proactive Channel Switching: DRL-MROR can proactively switch to a more stable channel in advance of the arrival of a PU, rather than responding to that arrival with a reactive handoff.

- ○

- Robust VCG Formation: A route being initiated can be chosen by the agent to consist of SUs, which may use several alternative channels forming a more robust forwarding group.

- Trend: The higher the PU toggle frequency the less time the SUs have to transmit and the higher the chances of mid-transmission interference. This will make all protocols drop out in PDR. But DRL-MROR gracefully degrades since its predictive powers enable it to make better decisions in when and where to send packets.

-

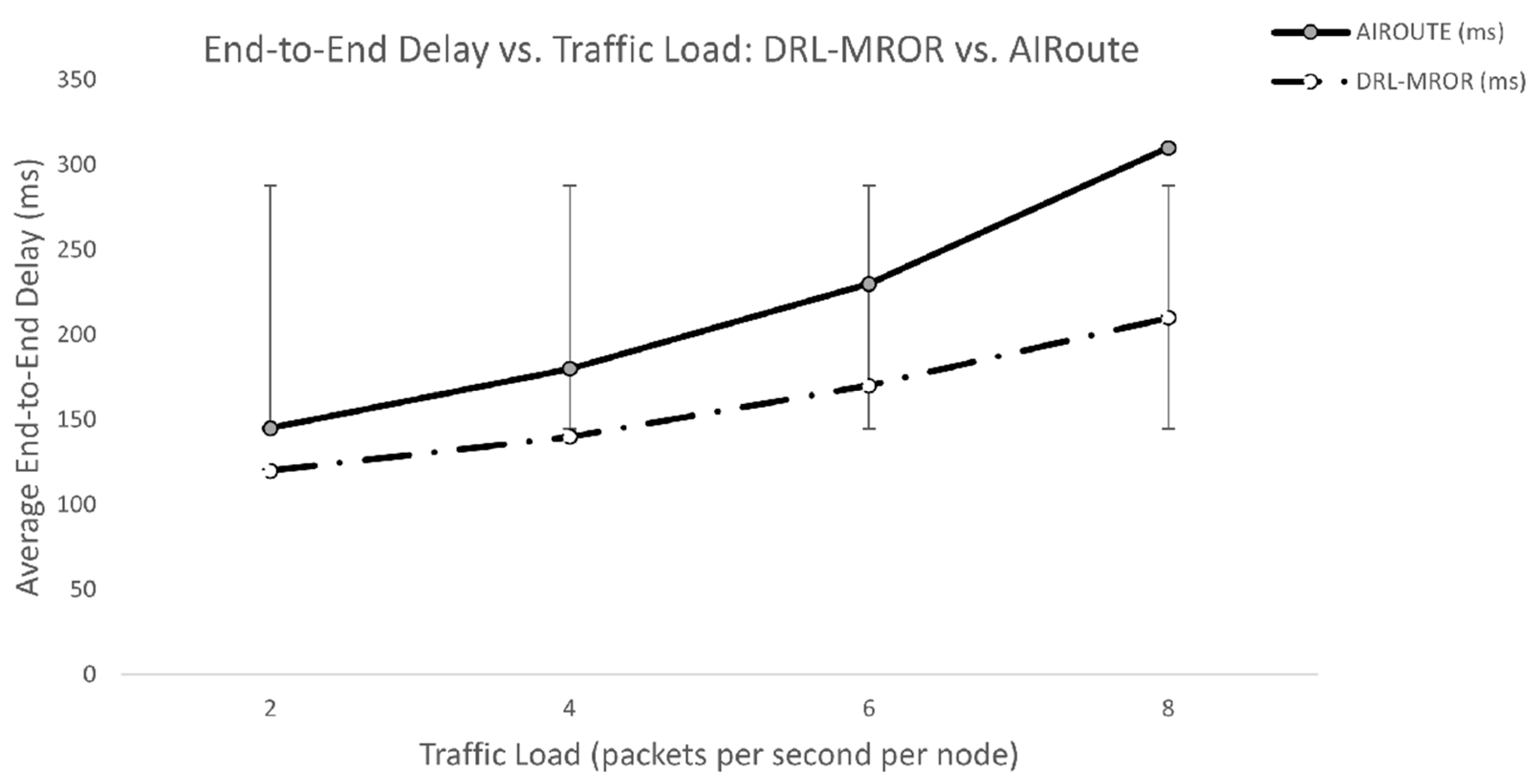

DRL-MROR leads to a reduction in end-to-end delay of up to 30-40% of that of AIRoute under all traffic loads. This gain comes from:

- ○

- Congestion-Aware Routing: DQN agent trains on the state of neighbors, therefore, preventing routes with long queues or high collision rates, and results in a faster packet delivery.

- ○

- Efficient Channel Utilization: The agent can choose the less busy channels and therefore lessen the queuing time is possible.

- ○

- Optimized VCG Contention: Contention is resolved better than when using a traditional backoff scheme because the receiver prioritization algorithm is driven by learned stability scores to resolve contention more rapidly, resulting in fewer forwarding delays.

- Trend: The network gets more and more congested with the traffic load, which results in the increase of the network delay, increased queuing time and increased collisions. Though delay increases are observed in both protocols, DRL-MROR is more efficient, since it is an AI agent that will dynamically adjust its strategy to prevent bottlenecks.

-

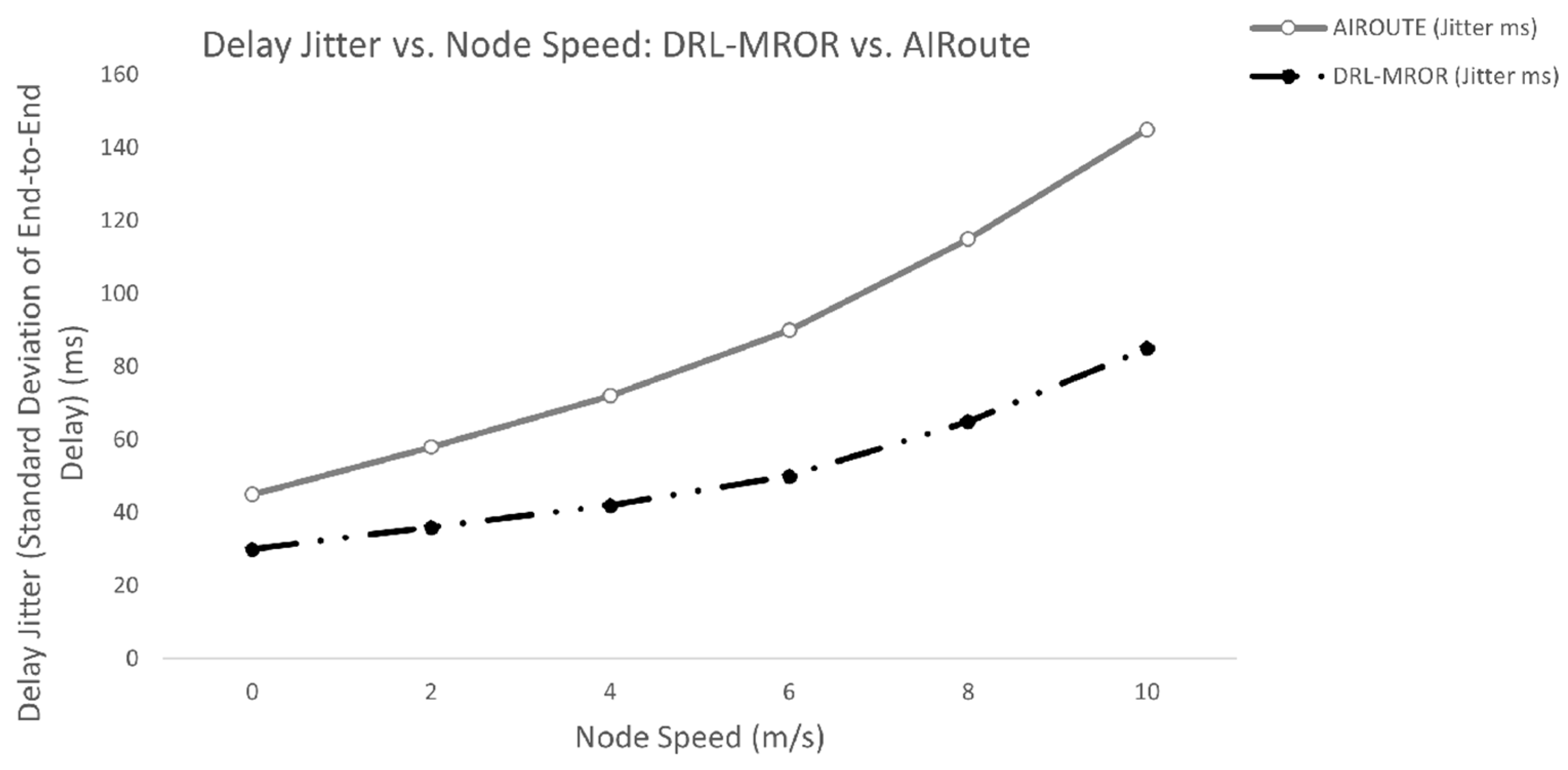

DRL-MROR includes a reduction of delay jitter in all speeds (approximately 40%) as compared to AIRoute. This gain comes from:

- ○

- Predictive Path Selection: The DQN agent also learns to avoid the paths which have high variance in length or congestion of the link and results in more predictable delivery times.

- ○

- Stable VCG Contention: When the contention process is performed based on the learned stability scores to prioritize the receivers, the contention process becomes more predictable and thus less variable in its forwarding delay.

- ○

- Proactive Handover Management: VMH zoning, controlled by the DRL agents can be used to have a smoother handover, avoiding abrupt increases in the delay due to a node going out of range.

- Trend: As node speed increases, link durations become shorter and more unpredictable, leading to higher jitter. While both protocols see an increase in jitter, DRL-MROR maintains significantly lower values because its AI agent can anticipate changes and make more consistent routing decisions.

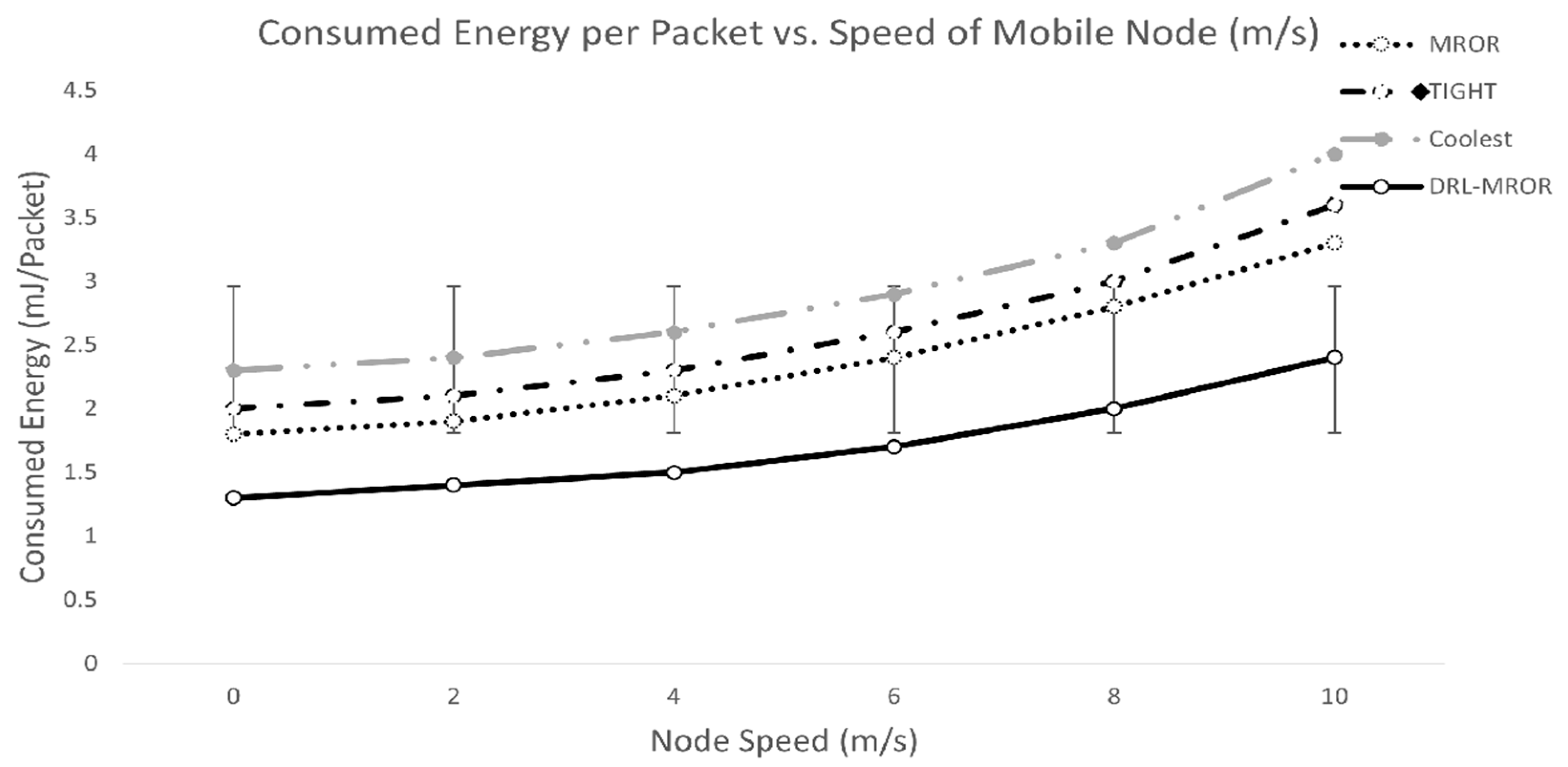

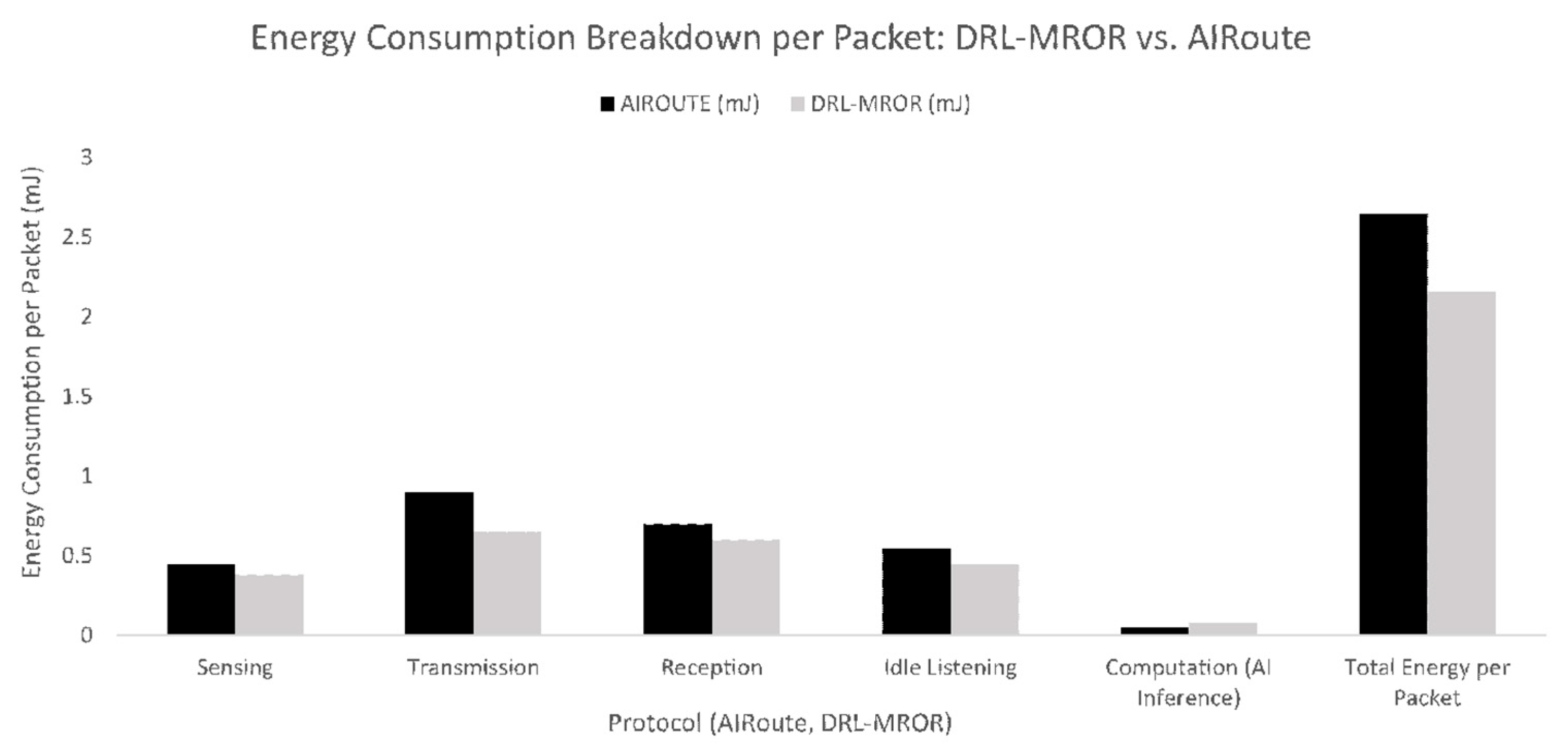

- Sensing: Reduced because the agent learns when sensing is needed and senses adaptively.

- Transmission & Reception: Greatly decreased because the agent finds shorter, more reliable routes, which reduces retransmissions and redundant broadcasts.

- Idle Listening: Minimized because the agent can forecast when it needs to be active, leaving more time to sleep.

- Computation: Slightly higher than AIRoute, since DRL-MROR uses a more complex network (e.g. with experience replay), but the cost is insignificant compared with the savings in radio operations.

- Trend: Despite its higher computational cost, DRL-MROR reduces total energy per packet by ~18%, since the radio (sensing, transmission, reception, idle listening) is the largest power consumer and the agent's decisions significantly reduce its use.
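The simulation setup cites a first-order radio model [19] for energy accounting. As a rough illustration of why radio operations dominate the budget, the sketch below evaluates the commonly used form of that model for one 128-byte packet over the 50 m transmission range. The constants `E_ELEC` and `EPS_AMP` and the d² path-loss exponent are widely used textbook values assumed here; the source does not state which values were used.

```python
E_ELEC = 50e-9     # J/bit, electronics energy (assumed typical value)
EPS_AMP = 100e-12  # J/bit/m^2, amplifier energy (assumed typical value)


def tx_energy(bits: int, distance_m: float) -> float:
    """Energy to transmit `bits` over `distance_m` (free-space d^2 variant)."""
    return E_ELEC * bits + EPS_AMP * bits * distance_m ** 2


def rx_energy(bits: int) -> float:
    """Energy to receive `bits`."""
    return E_ELEC * bits


def hop_energy(bits: int, distance_m: float) -> float:
    """Radio energy for one successful hop: one transmission plus one reception."""
    return tx_energy(bits, distance_m) + rx_energy(bits)


if __name__ == "__main__":
    packet_bits = 128 * 8  # packet size from the simulation setup
    joules = hop_energy(packet_bits, 50.0)
    print(f"radio energy per hop: {joules * 1e6:.1f} microjoules")
```

Under these assumed constants, every avoided retransmission saves one full hop cost, which is consistent with the argument that radio savings outweigh the extra computation.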

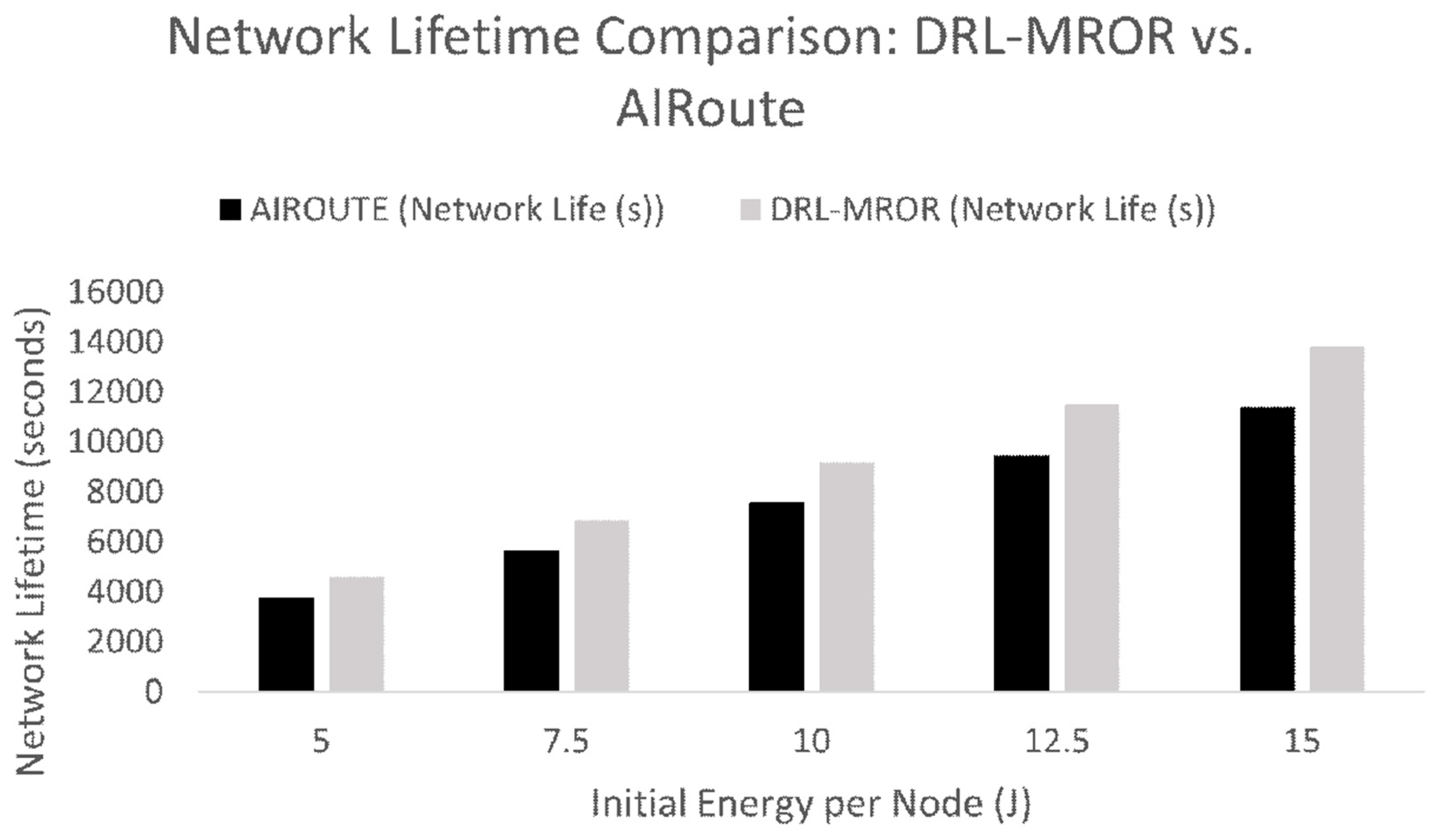

- DRL-MROR improves network lifetime by approximately 20% over AIRoute at all initial energy levels. This gain follows directly from the much lower overall energy used per packet (as shown in the stacked bar chart above). DRL-MROR also lets nodes drain their batteries much more slowly, as the agent lowers the number of retransmissions, sensing overhead, and idle listening.

- Trend: Network lifetime scales linearly with initial energy for both protocols. Nonetheless, DRL-MROR consistently achieves a longer lifetime because it uses energy more efficiently. The absolute difference in lifetime widens as initial energy increases, indicating its scalability.

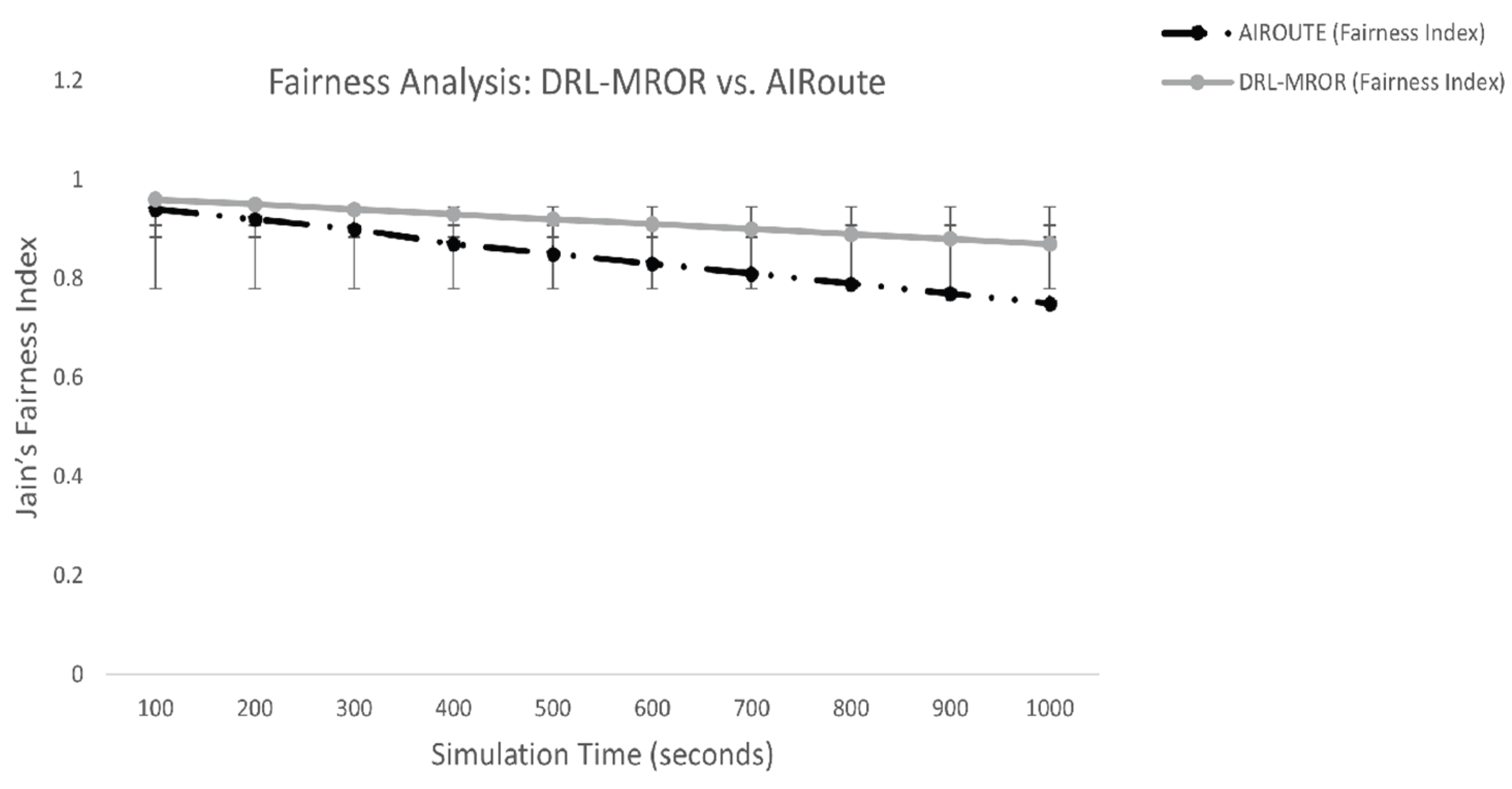

DRL-MROR's fairness index is higher than AIRoute's by 0.08 to 0.10 on average over the simulation time. This gain comes from:

- Balanced VCG Selection: The DQN agent learns not only the stability of prospective receivers but also their load and remaining energy, so it can choose relays more fairly.

- Proactive Load Distribution: By anticipating future network conditions, the agent can spread the forwarding load instead of always choosing the same best node.

- Energy-Aware Decisions: The reward function includes an energy-efficiency term that discourages the agent from exhausting low-energy nodes and turning them into bottlenecks.

- Trend: Network conditions (e.g. mobility, PU activity) vary over the simulation time, so some nodes become preferred forwarders, which lowers fairness. Although fairness decreases in both protocols, it remains considerably higher in DRL-MROR because its AI agent continually adapts its strategy to keep resource use fair.
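The text does not say which fairness index is plotted. Assuming it is Jain's fairness index, a common choice for this kind of comparison, the metric can be computed from per-node forwarding loads as sketched below. The function name and the example loads are illustrative only.

```python
from typing import Sequence


def jain_fairness(loads: Sequence[float]) -> float:
    """Jain's index: (sum x)^2 / (n * sum x^2), ranging from 1/n to 1."""
    n = len(loads)
    if n == 0:
        raise ValueError("need at least one node")
    sum_sq = sum(x * x for x in loads)
    if sum_sq == 0:
        # No node forwarded anything; treated as perfectly fair by convention here.
        return 1.0
    total = sum(loads)
    return (total * total) / (n * sum_sq)


if __name__ == "__main__":
    balanced = [10, 10, 10, 10]  # every relay forwards the same number of packets
    skewed = [40, 0, 0, 0]       # one relay carries all the traffic
    print(jain_fairness(balanced), jain_fairness(skewed))
```

An index near 1 means forwarding load is spread evenly, while a value near 1/n means a single node carries almost all of it, so a gap of 0.08 to 0.10 reflects a visibly more even relay selection.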

| Configuration | Learning Rate | Discount Factor (γ) | Reward Weights (w₁:w₂:w₃:w₄) | Average Goodput |
| --- | --- | --- | --- | --- |
| AIRoute [14] (Baseline) | 1e-3 | 0.95 | 0.5:0.3:0.2:0.0 | 78% |
| DRL-MROR (Default) | 1e-4 | 0.95 | 0.4:0.3:0.2:0.1 | 87% |
| DRL-MROR (High LR) | 1e-3 | 0.95 | 0.4:0.3:0.2:0.1 | 82% |
| DRL-MROR (Low LR) | 1e-5 | 0.95 | 0.4:0.3:0.2:0.1 | 84% |
| DRL-MROR (High γ) | 1e-4 | 0.99 | 0.4:0.3:0.2:0.1 | 86% |
| DRL-MROR (Low γ) | 1e-4 | 0.90 | 0.4:0.3:0.2:0.1 | 83% |
| DRL-MROR (w₁=0.6) | 1e-4 | 0.95 | 0.6:0.2:0.1:0.1 | 85% |
| DRL-MROR (w₂=0.5) | 1e-4 | 0.95 | 0.3:0.5:0.1:0.1 | 81% |
| DRL-MROR (w₃=0.4) | 1e-4 | 0.95 | 0.3:0.2:0.4:0.1 | 79% |

- AIRoute Baseline: Represents the state-of-the-art performance with its own set of hyperparameters.

- Learning Rate (LR):

  - High LR (1e-3): Causes unstable training and overshooting, leading to suboptimal policies and lower goodput (82%).

  - Low LR (1e-5): Results in very slow convergence but can still reach a good policy, though slightly below the default (84%).

  - Conclusion: DRL-MROR performs best at 1e-4, showing sensitivity to this parameter.

- Discount Factor (γ):

  - High γ (0.99): Makes the agent more farsighted, which benefits long-term network utility; goodput (86%) stays close to the default (87%).

  - Low γ (0.90): Makes the agent focus more on immediate rewards, potentially neglecting future stability, resulting in lower goodput (83%).

  - Conclusion: Performance degrades if γ is too low, highlighting the importance of long-term planning.

- Reward Weights:

  - w₁ (Throughput) = 0.6: Over-prioritizing throughput leads to aggressive forwarding but also higher collisions, reducing overall goodput (85%).

  - w₂ (Energy) = 0.5: Over-emphasis on energy conservation causes the agent to drop packets or avoid forwarding, severely hurting delivery rates (81%).

  - w₃ (Delay) = 0.4: Prioritizing low delay forces rapid decisions that may not be reliable, leading to the lowest goodput among variants (79%).

  - Conclusion: The balanced reward function (0.4:0.3:0.2:0.1) is crucial for optimal performance.
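The weight sensitivity discussed above can be illustrated with a small sketch of a weighted multi-objective reward. The form below is an assumption, not the paper's equation: throughput is rewarded, energy and delay are penalized after normalization, and the fourth term, whose meaning is not stated in the text, is treated here as a hypothetical link-stability score in [0, 1]. The names `RewardWeights`, `reward`, `t_max`, `e_max`, and `d_max` are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RewardWeights:
    w1: float = 0.4  # throughput weight (default configuration in the table)
    w2: float = 0.3  # energy weight
    w3: float = 0.2  # delay weight
    w4: float = 0.1  # fourth weight; meaning assumed (link stability)


def reward(throughput: float, energy: float, delay: float, stability: float,
           w: RewardWeights, t_max: float, e_max: float, d_max: float) -> float:
    """Weighted reward with normalized terms (assumed sign convention)."""
    return (w.w1 * throughput / t_max
            - w.w2 * energy / e_max
            - w.w3 * delay / d_max
            + w.w4 * stability)


if __name__ == "__main__":
    default = RewardWeights()
    energy_heavy = RewardWeights(w1=0.3, w2=0.5, w3=0.1, w4=0.1)
    # Same forwarding outcome scored under two weightings.
    outcome = dict(throughput=0.8, energy=0.6, delay=0.3, stability=0.7,
                   t_max=1.0, e_max=1.0, d_max=1.0)
    print(reward(w=default, **outcome), reward(w=energy_heavy, **outcome))
```

Under this assumed form, raising w₂ makes an otherwise identical forwarding action score lower, which matches the observation that an energy-heavy weighting pushes the agent toward not forwarding.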

7. Discussion

7.1. Discussion: A Critical Analysis of Results

7.2. The Source of Performance Gains

7.3. Energy Efficiency: A Validated Trade-off

7.4. Robustness and Practicality

7.5. Limitations and Future Work

8. Conclusions

Author Contributions

Acknowledgments

Conflicts of Interest

References

1. Khalek, N.A.; Tashman, D.H.; Hamouda, W. Advances in Machine Learning-Driven Cognitive Radio for Wireless Networks: A Survey. IEEE Communications Surveys & Tutorials 2024, vol. 26(no. 2), 1201–1237.

2. Zubair, S.; Syed Yusoff, S.K.; Fisal, N. Mobility-Enhanced Reliable Geographical Forwarding in Cognitive Radio Sensor Networks. Sensors 2016, vol. 16(no. 2), art. 172.

3. Feriani, A.; Hossain, E. Single and Multi-Agent Deep Reinforcement Learning for AI-Enabled Wireless Networks: A Tutorial. IEEE Communications Surveys & Tutorials 2021, vol. 23(no. 2), 1226–1252.

4. Shen, Y.; Shi, Y.; Zhang, J.; Letaief, K.B. Graph Neural Networks for Scalable Radio Resource Management: Architecture Design and Theoretical Analysis. IEEE Journal on Selected Areas in Communications 2021, vol. 39(no. 1), 101–115.

5. Dašić, M.; Petrović, N.; Stanković, Z.; Milovanović, B. Distributed Spectrum Management in Cognitive Radio Networks by Consensus-Based Reinforcement Learning. Sensors 2021, vol. 21(no. 9), art. 2970.

6. Zhao, B.; Wu, J.; Ma, Y.; Yang, C. Meta-Learning for Wireless Communications: A Survey and a Comparison to GNNs. IEEE Open Journal of the Communications Society 2024, vol. 5, 1987–2015.

7. Wang, Z.; Hu, J.; Min, G.; Zhao, Z.; Chang, Z.; Wang, Z. Spatial-Temporal Cellular Traffic Prediction for 5G and Beyond: A Graph Neural Networks-Based Approach. IEEE Transactions on Industrial Informatics 2023, vol. 19(no. 4), 5722–5731.

8. Tang, F.; Chen, X.; Rodrigues, T.K.; Zhao, M.; Kato, N. Survey on Digital Twin Edge Networks (DITEN) Toward 6G. IEEE Open Journal of the Communications Society 2022, vol. 3, 1360–1381.

9. Ye, X.; Yu, Y.; Fu, L. Multi-Channel Opportunistic Access for Heterogeneous Networks Based on Deep Reinforcement Learning. IEEE Transactions on Wireless Communications 2022, vol. 21(no. 2), 794–807.

10. Cohen, Y.; Gafni, T.; Greenberg, R.; Cohen, K. SINR-Aware Deep Reinforcement Learning for Distributed Dynamic Channel Allocation in Cognitive Interference Networks. IEEE Transactions on Wireless Communications 2025, vol. 24(no. 1), 228–243.

11. Tran, T.N.; Nguyen, T.-V.; Shim, K.; da Costa, D.B.; An, B. A Deep Reinforcement Learning-Based QoS Routing Protocol Exploiting Cross-Layer Design in Cognitive Radio Mobile Ad Hoc Networks. IEEE Transactions on Vehicular Technology 2022, vol. 71(no. 12), 13165–13181.

12. Bai, Y.; Zhang, X.; Yu, D.; Li, S.; Wang, Y.; Lei, S.; Tian, Z. A Deep Reinforcement Learning-Based Geographic Packet Routing Optimization. IEEE Access 2022, vol. 10, 108785–108796.

13. Wang, X.; Wang, S.; Liang, X.; Zhao, D.; Huang, J.; Xu, X.; Dai, B.; Miao, Q. Deep Reinforcement Learning: A Survey. IEEE Transactions on Neural Networks and Learning Systems 2024, vol. 35(no. 4), 5064–5078.

14. Zhang, X.; Zhao, H.; Xiong, J.; Liu, X.; Yin, H.; Zhou, X.; Wei, J. Decentralized Routing and Radio Resource Allocation in Wireless Ad Hoc Networks via Graph Reinforcement Learning. IEEE Transactions on Cognitive Communications and Networking 2024, vol. 10(no. 3), 1146–1159.

15. Mnih, V.; et al. Human-level control through deep reinforcement learning. Nature 2015, vol. 518(no. 7540), 529–533.

16. Guo, Q.; Tang, F.; Kato, N. Federated Reinforcement Learning-Based Resource Allocation for D2D-Aided Digital Twin Edge Networks in 6G Industrial IoT. IEEE Transactions on Industrial Informatics 2023, vol. 19(no. 5), 7228–7236.

17. Halloum, N. Aris-RPL: A Multi-Objective Reinforcement Learning Framework for Adaptive and Load-Balanced Routing in IoT Networks. Future Internet 2026, vol. 18(no. 2), 72.

18. Yan, W.-Z.; Li, X.-H.; Ding, Y.-M.; He, J.; Cai, B. DQN with Prioritized Experience Replay Algorithm for Reducing Network Blocking Rate in Elastic Optical Networks. Optical Switching and Networking 2024, vol. 52, 100761.

19. Merabtine, N.; Djenouri, D.; Zegour, D.-E. Towards Energy Efficient Clustering in Wireless Sensor Networks: A Comprehensive.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.