Submitted: 20 March 2026

Posted: 23 March 2026

Abstract

Keywords:

1. Introduction

2. Memristor Background

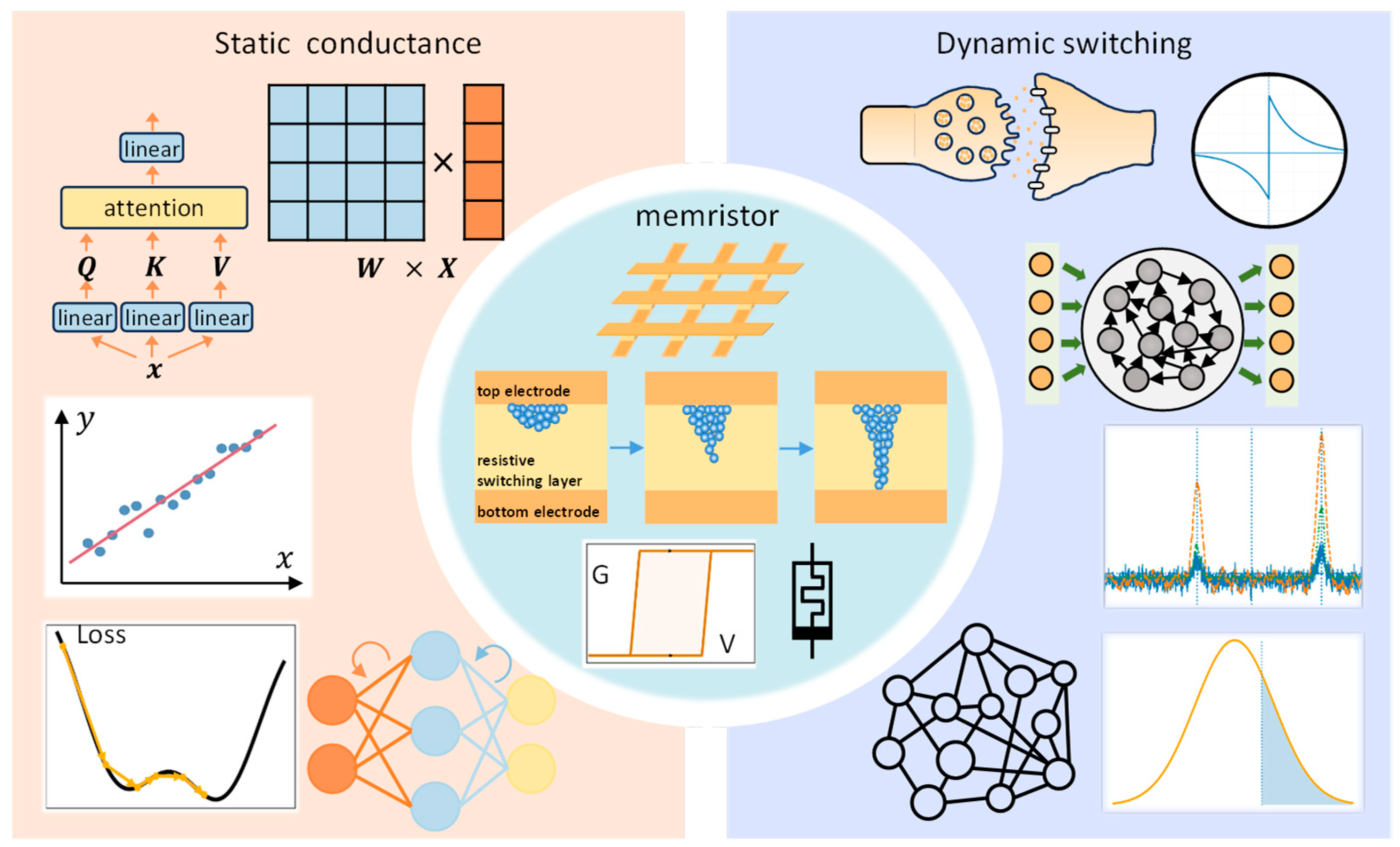

3. Static Conductance of Memristors and Their Applications in AI

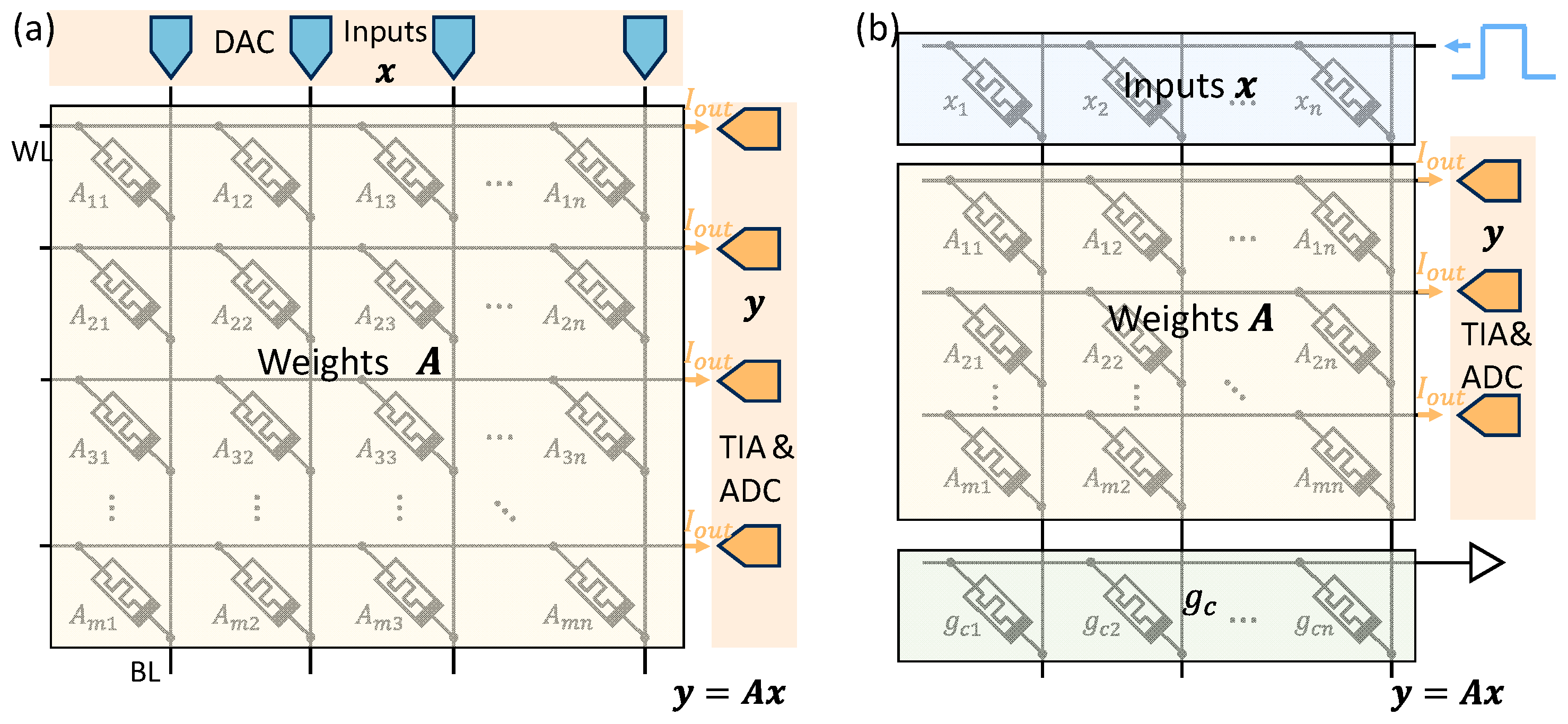

3.1. Matrix-Vector Multiplication Primitive on Crossbar Arrays

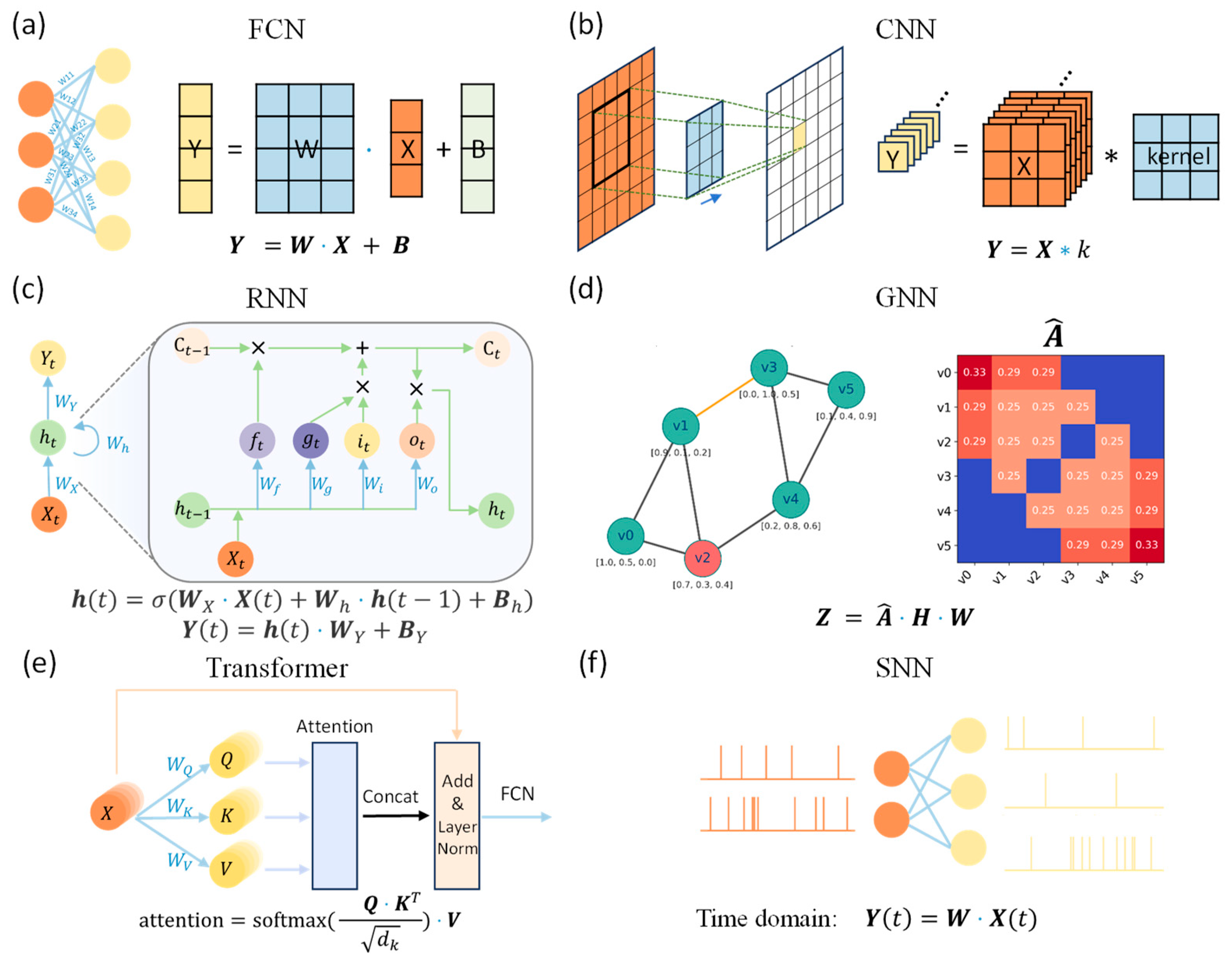

3.2. Applications of MVM in Neural Networks

3.3. Analog Matrix Equation Solving Circuits

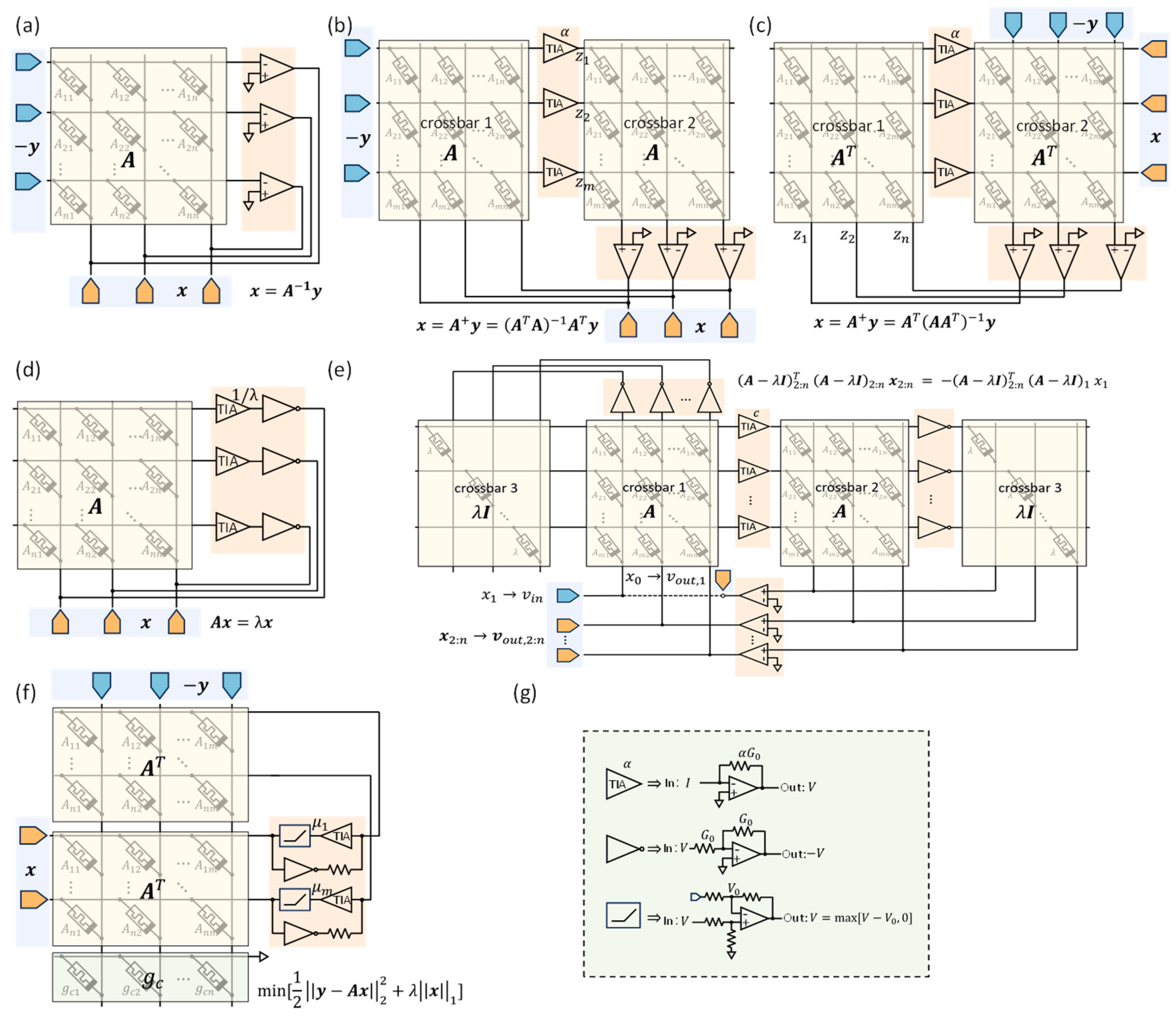

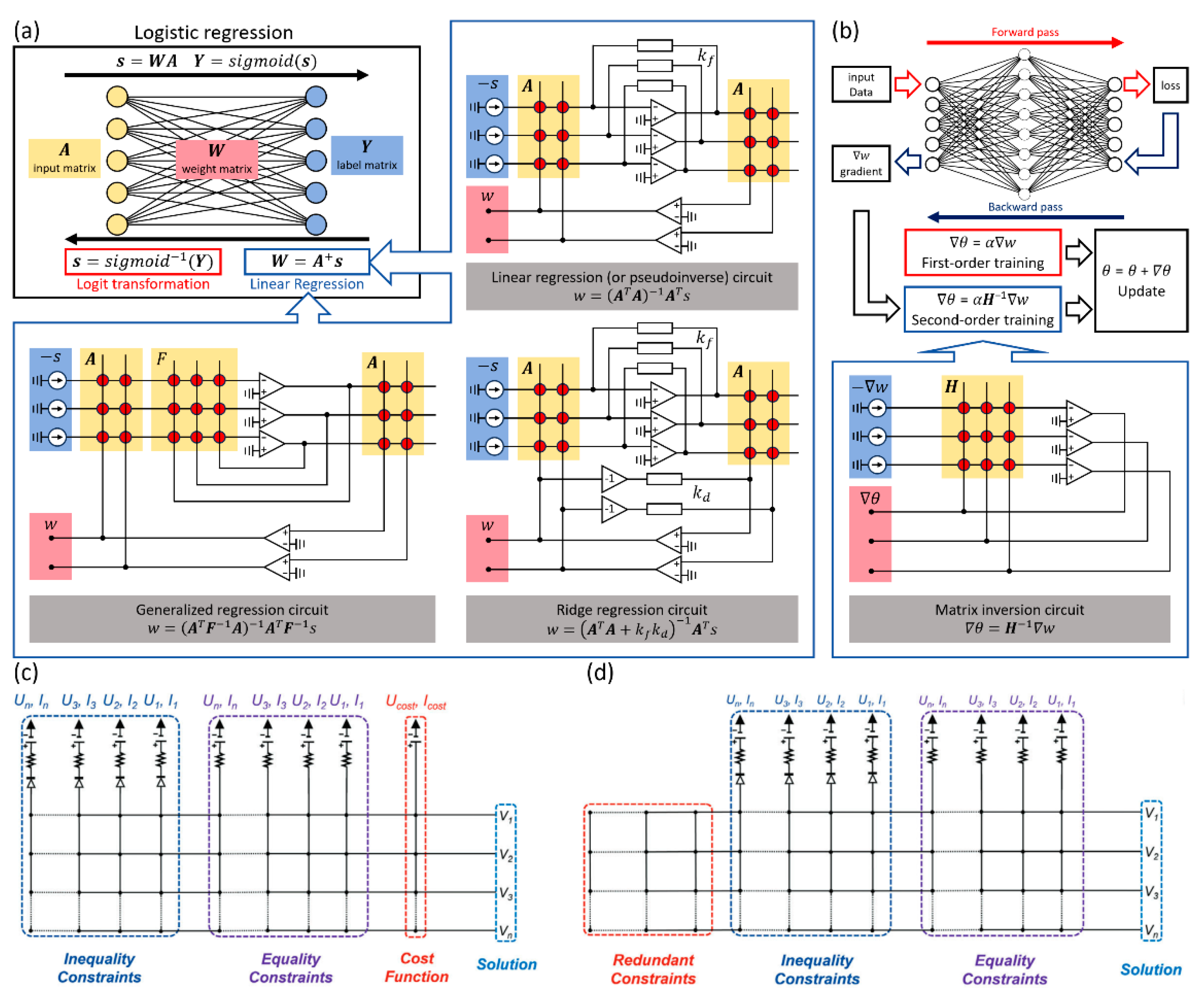

3.4. Applications of Analog Matrix Equation Solving Circuits

3.4.1. Linear/Logistic Regression

3.4.2. Second-Order Neural Network Training

3.4.3. Linear Programming

3.4.4. PCA and Spectral Clustering

4. Dynamic Switching of Memristors and Their Applications in AI

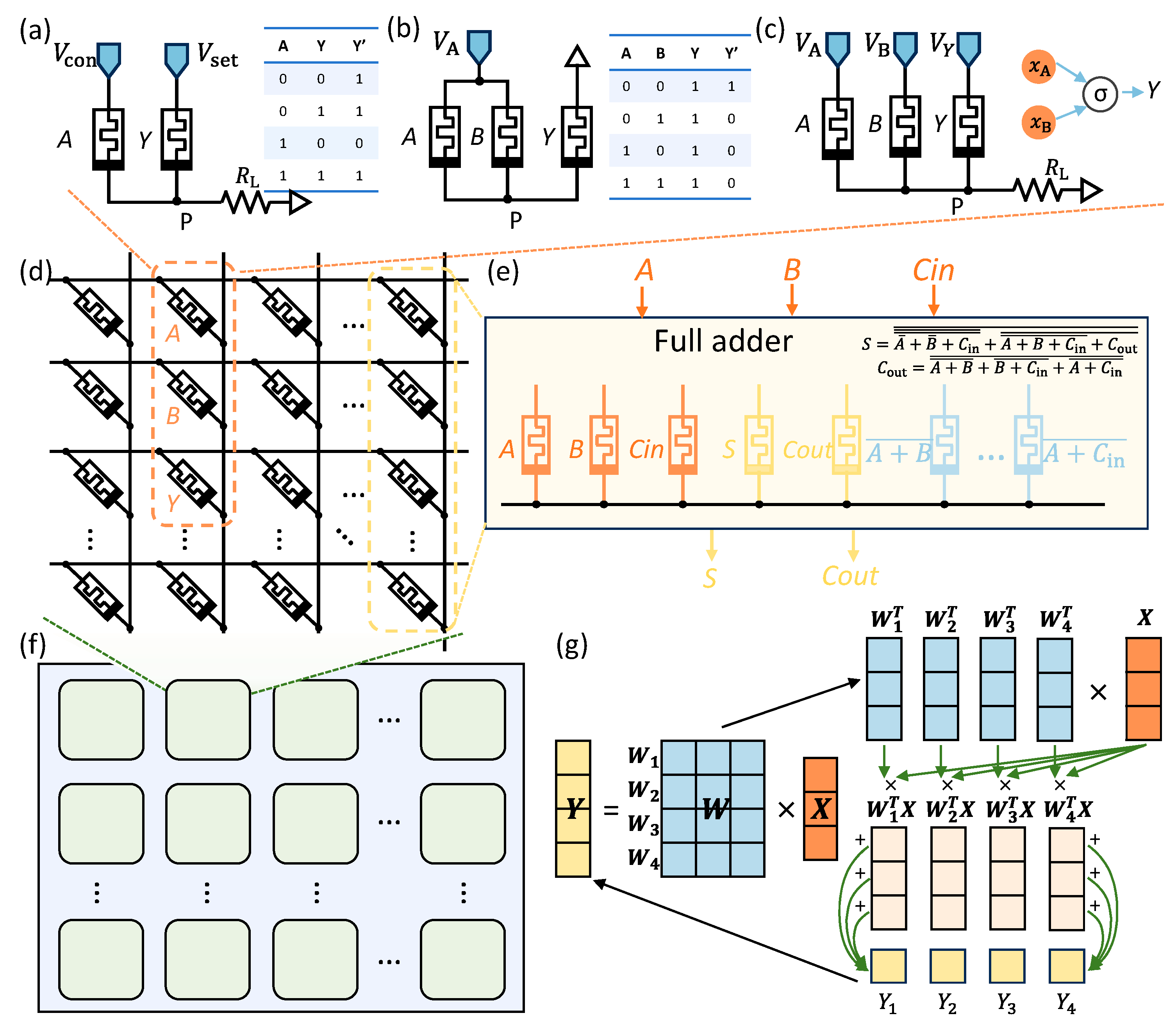

4.1. Stateful Logic and In-Memory Logic Acceleration

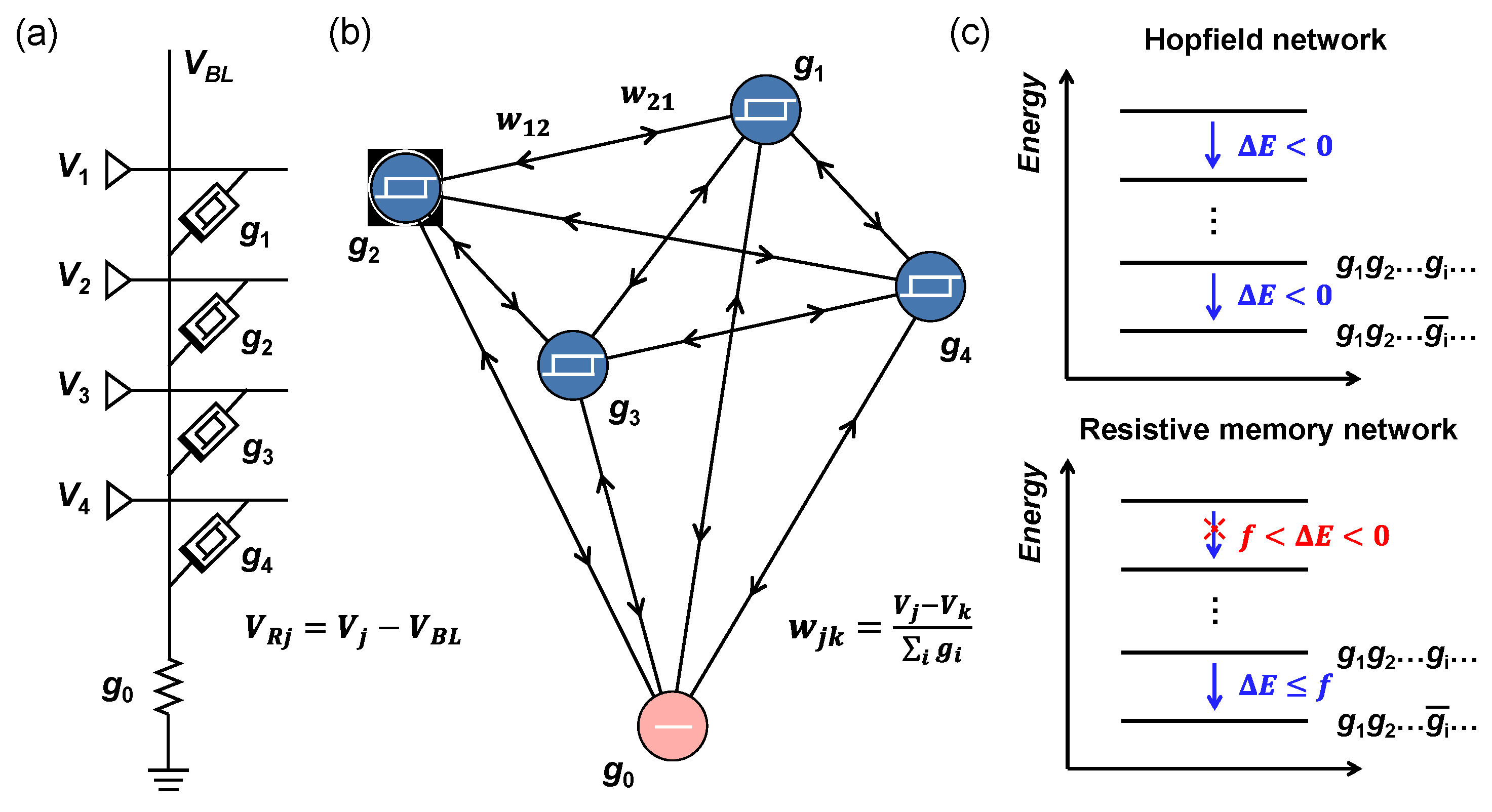

4.2. Attractor Network

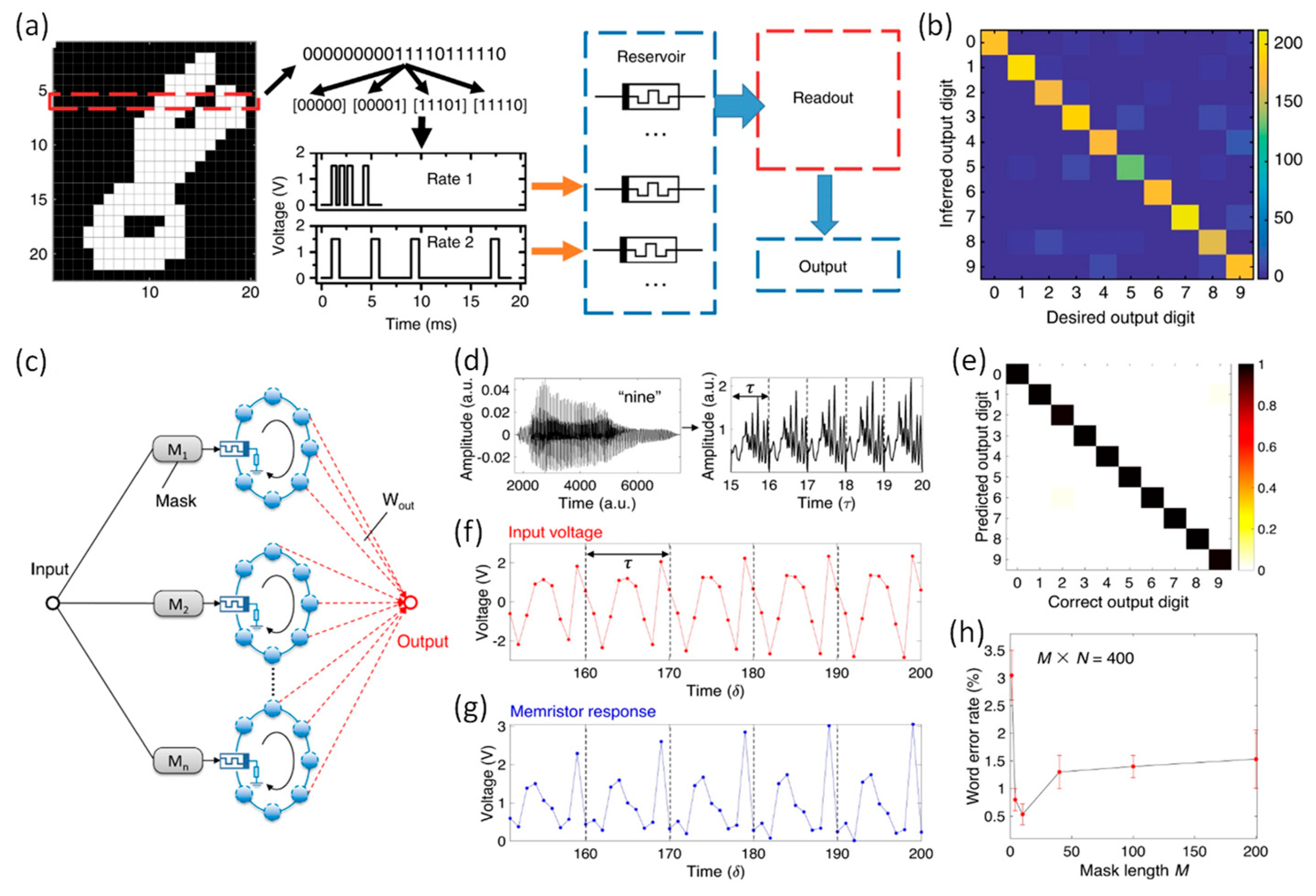

4.3. Reservoir Computing and Spatiotemporal Signal Detection with Dynamic Memristors

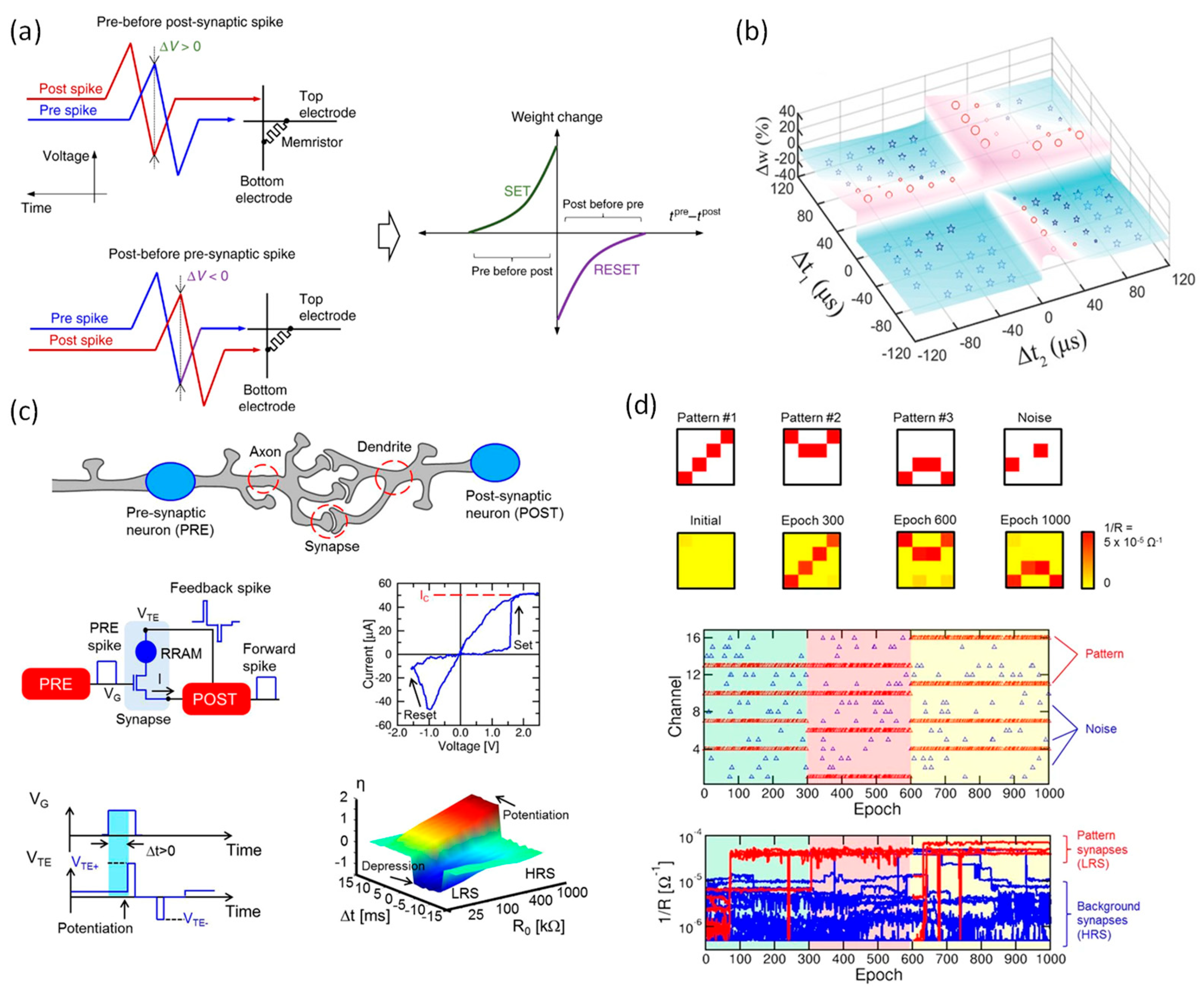

4.4. Spike-Timing-Dependent Plasticity

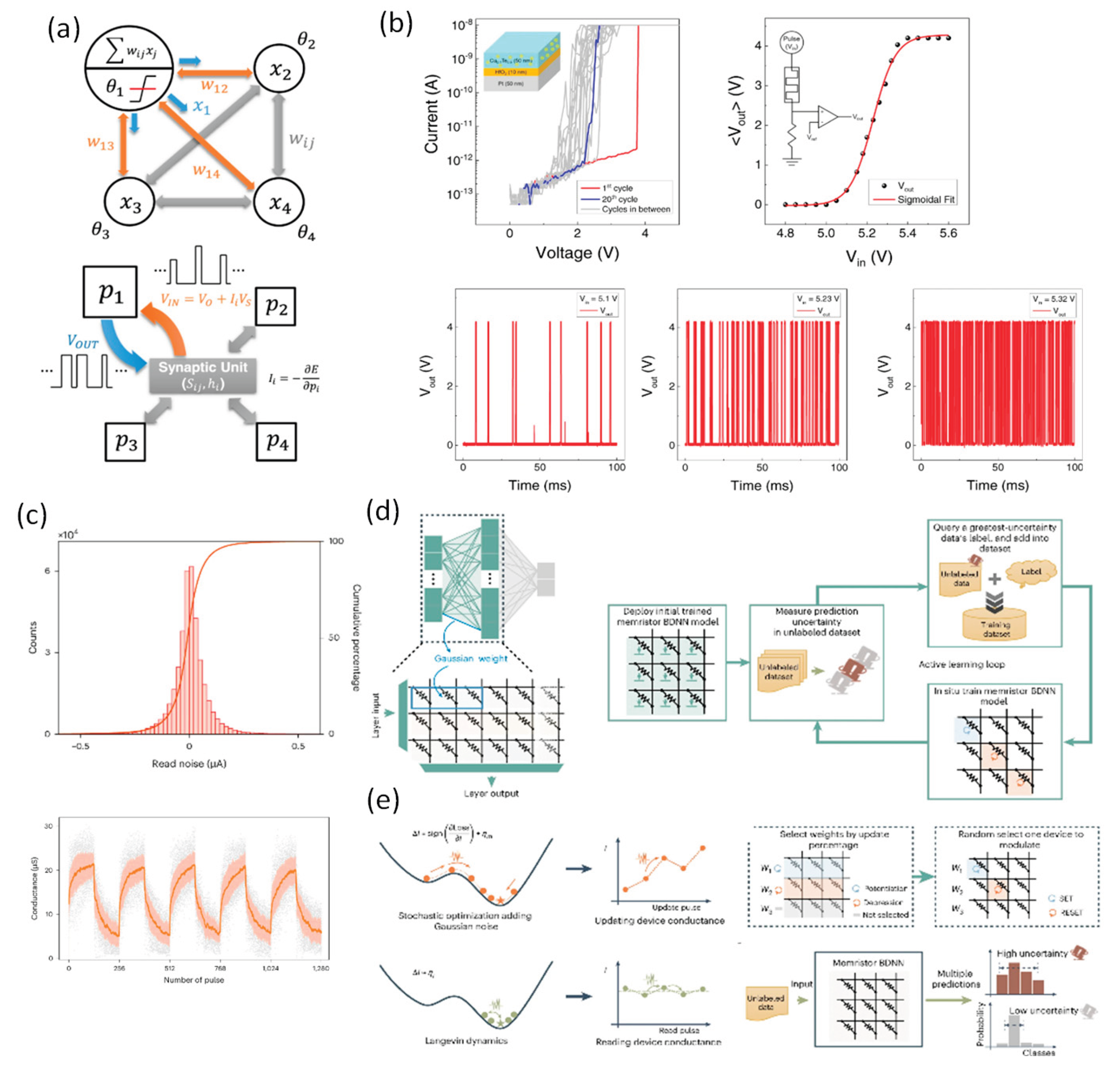

4.5. Memristor-Enabled Stochastic Computation

5. Discussion

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Horowitz, M. Computing's energy problem (and what we can do about it). IEEE Int. Solid-State Circuits Conf. Dig. Tech. Papers (ISSCC) 2014, pp. 10–14.

- Waldrop, M.M. The chips are down for Moore's law. Nature 2016, 530, 144–147. [Google Scholar] [CrossRef] [PubMed]

- Ielmini, D.; Wong, H.-S.P. In-memory computing with resistive switching devices. Nat. Electron. 2018, 1, 333–343. [Google Scholar] [CrossRef]

- Sun, Z.; Kvatinsky, S.; Si, X.; Mehonic, A.; Cai, Y.; Huang, R. A full spectrum of computing-in-memory technologies. Nat. Electron. 2023, 6, 823–835. [Google Scholar] [CrossRef]

- Sebastian, A.; Le Gallo, M.; Khaddam-Aljameh, R.; Eleftheriou, E. Memory devices and applications for in-memory computing. Nat. Nanotechnol. 2020, 15, 529–544. [Google Scholar] [CrossRef]

- Sun, W.; Gao, B.; Chi, M.; Xia, Q.; Yang, J.J.; Qian, H.; Wu, H. Understanding memristive switching via in situ characterization and device modeling. Nat. Commun. 2019, 10, 3453. [Google Scholar] [CrossRef]

- Ielmini, D. Resistive switching memories based on metal oxides: Mechanisms, reliability and scaling. Semicond. Sci. Technol. 2016, 31, 063002. [Google Scholar] [CrossRef]

- Mehonic, A.; Ielmini, D.; Roy, K.; Mutlu, O.; Kvatinsky, S.; Serrano-Gotarredona, T.; Linares-Barranco, B.; Spiga, S.; Savel'ev, S.; Balanov, A.G.; et al. Roadmap to neuromorphic computing with emerging technologies. APL Mater. 2024, 12, 109201. [Google Scholar] [CrossRef]

- Yao, P.; Wu, H.; Gao, B.; Tang, J.; Zhang, Q.; Zhang, W.; Yang, J.J.; Qian, H. Fully hardware-implemented memristor convolutional neural network. Nature 2020, 577, 641–646. [Google Scholar] [CrossRef]

- Sun, Z.; Pedretti, G.; Ambrosi, E.; Bricalli, A.; Wang, W.; Ielmini, D. Solving matrix equations in one step with cross-point resistive arrays. Proc. Natl. Acad. Sci. U.S.A. 2019, 116, 4123–4128. [Google Scholar] [CrossRef]

- Zhang, G.; Qin, J.; Zhang, Y.; Gong, G.; Xiong, Z.Y.; Ma, X.; Lv, Z.; Zhou, Y.; Han, S.T. Functional materials for memristor-based reservoir computing: Dynamics and applications. Adv. Funct. Mater. 2023, 33, 2302929. [Google Scholar] [CrossRef]

- Midya, R.; Wang, Z.; Asapu, S.; Zhang, X.; Rao, M.; Song, W.; Zhuo, Y.; Upadhyay, N.; Xia, Q.; Yang, J.J. Reservoir computing using diffusive memristors. Adv. Intell. Syst. 2019, 1, 1900084. [Google Scholar] [CrossRef]

- Kumar, S.; Wang, X.; Strachan, J.P.; Yang, Y.; Lu, W.D. Dynamical memristors for higher-complexity neuromorphic computing. Nat. Rev. Mater. 2022, 7, 575–591. [Google Scholar] [CrossRef]

- Burr, G.W.; Shelby, R.M.; Sebastian, A.; Kim, S.; Kim, S.; Sidler, S.; Virwani, K.; Ishii, M.; Narayanan, P.; Fumarola, A.; et al. Neuromorphic computing using non-volatile memory. Adv. Phys. X 2017, 2, 89–124. [Google Scholar] [CrossRef]

- Chua, L.O. Memristor—The missing circuit element. IEEE Trans. Circuit Theory 1971, 18, 507–519. [Google Scholar] [CrossRef]

- Strukov, D.B.; Snider, G.S.; Stewart, D.R.; Williams, R.S. The missing memristor found. Nature 2008, 453, 80–83. [Google Scholar] [CrossRef] [PubMed]

- Kim, H.; Yang, S.J.; Shim, Y.S.; Moon, C.W. A comprehensive review of electrochemical metallization and valence change mechanisms in filamentary resistive switching of halide perovskite-based memory devices. ACS Appl. Mater. Interfaces 2025, 17, 50122–50141. [Google Scholar] [CrossRef]

- Kwon, D.H.; Kim, K.M.; Jang, J.H.; Jeon, J.M.; Lee, M.H.; Kim, G.H.; Li, X.S.; Park, G.S.; Lee, B.; Han, S.; et al. Atomic structure of conducting nanofilaments in TiO₂ resistive switching memory. Nat. Nanotechnol. 2010, 5, 148–153. [Google Scholar] [CrossRef]

- Sokolov, A.S.; Jeon, Y.R.; Kim, S.; Ku, B.; Lim, D.; Han, H.; Chae, M.G.; Lee, J.; Ha, B.G.; Choi, C. Influence of oxygen vacancies in ALD HfO₂₋ₓ thin films on non-volatile resistive switching phenomena with a Ti/HfO₂₋ₓ/Pt structure. Appl. Surf. Sci. 2018, 434, 822–830. [Google Scholar] [CrossRef]

- Zhang, Y.; Mao, G.Q.; Zhao, X.; Li, Y.; Zhang, M.; Wu, Z.; Wu, W.; Sun, H.; Guo, Y.; Wang, L.; et al. Evolution of the conductive filament system in HfO₂-based memristors observed by direct atomic-scale imaging. Nat. Commun. 2021, 12, 7232. [Google Scholar] [CrossRef]

- Valov, I.; Waser, R.; Jameson, J.R.; Kozicki, M.N. Electrochemical metallization memories—Fundamentals, applications, prospects. Nanotechnology 2011, 22, 254003. [Google Scholar] [CrossRef]

- Ye, C.; Wu, J.; He, G.; Zhang, J.; Deng, T.; He, P.; Wang, H. Physical mechanism and performance factors of metal oxide based resistive switching memory: A review. J. Mater. Sci. Technol. 2016, 32, 1–11. [Google Scholar] [CrossRef]

- Raoux, S.; Wełnic, W.; Ielmini, D. Phase change materials and their application to nonvolatile memories. Chem. Rev. 2010, 110, 240–267. [Google Scholar] [CrossRef] [PubMed]

- Wuttig, M.; Yamada, N. Phase-change materials for rewriteable data storage. Nat. Mater. 2007, 6, 824–832. [Google Scholar] [CrossRef]

- Zhao, Z.; Clima, S.; Garbin, D.; Degraeve, R.; Pourtois, G.; Song, Z.; Zhu, M. Chalcogenide ovonic threshold switching selector. Nano-Micro Lett. 2024, 16, 81. [Google Scholar] [CrossRef] [PubMed]

- Han, C.Y.; Han, Z.R.; Fang, S.L.; Fan, S.Q.; Yin, J.Q.; Liu, W.H.; Li, X.; Yang, S.Q.; Zhang, G.H.; Wang, X.L.; et al. Characterization and modelling of flexible VO₂ Mott memristor for the artificial spiking warm receptor. Adv. Mater. Interfaces 2022, 9, 2200394. [Google Scholar] [CrossRef]

- Ju, D.; Kim, S. Versatile NbOₓ-based volatile memristor for artificial intelligent applications. Adv. Funct. Mater. 2024, 34, 2409436. [Google Scholar] [CrossRef]

- Prezioso, M.; Merrikh-Bayat, F.; Hoskins, B.D.; Adam, G.C.; Likharev, K.K.; Strukov, D.B. Training and operation of an integrated neuromorphic network based on metal-oxide memristors. Nature 2015, 521, 61–64. [Google Scholar] [CrossRef]

- Le Gallo, M.; Sebastian, A.; Mathis, R.; Giefers, H.; Tuma, T.; Bekas, C.; Curioni, A.; Eleftheriou, E. Mixed-precision in-memory computing. Nat. Electron. 2018, 1, 246–253. [Google Scholar] [CrossRef]

- Song, W.; Rao, M.; Li, Y.; Li, C.; Zhuo, Y.; Cai, F.; Wu, M.; Yin, W.; Li, Z.; Wei, Q.; et al. Programming memristor arrays with arbitrarily high precision for analog computing. Science 2024, 383, 903–910. [Google Scholar] [CrossRef]

- Ni, L.; Wang, Y.; Yu, H.; Yang, W.; Weng, C.; Zhao, J. An energy-efficient matrix multiplication accelerator by distributed in-memory computing on binary RRAM crossbar. 21st Asia South Pacific Design Automation Conf. (ASP-DAC), Macao, 2016; pp. 280–285.

- Sun, Z.; Huang, R. Time complexity of in-memory matrix-vector multiplication. IEEE Trans. Circuits Syst. II: Express Briefs 2021, 68, 2785–2789. [Google Scholar] [CrossRef]

- Wang, S.; Sun, Z. Dual in-memory computing of matrix-vector multiplication for accelerating neural networks. Device 2024, 2, 100546. [Google Scholar] [CrossRef]

- Li, C.; Hu, M.; Li, Y.; Jiang, H.; Ge, N.; Montgomery, J.; Zhang, J.; Song, W.; Dávila, N.; Graves, C.E.; et al. Analogue signal and image processing with large memristor crossbars. Nat. Electron. 2018, 1, 52–59. [Google Scholar] [CrossRef]

- Cai, F.; Correll, J.M.; Lee, S.H.; Lim, Y.; Bothra, S.; Zhang, Z.; Flynn, M.P.; Lu, W.D. A fully integrated reprogrammable memristor–CMOS system for efficient multiply–accumulate operations. Nat. Electron. 2019, 2, 290–299. [Google Scholar] [CrossRef]

- Chen, W.H.; Li, K.X.; Lin, W.Y.; Hsu, K.H.; Li, P.Y.; Yang, C.H.; Xue, C.X.; Yang, E.Y.; Chen, Y.K.; Chang, Y.S.; et al. A 65nm 1Mb nonvolatile computing-in-memory ReRAM macro with sub-16ns multiply-and-accumulate for binary DNN AI edge processors. IEEE Int. Solid-State Circuits Conf. (ISSCC), 2018; pp. 494–496.

- Gokmen, T.; Vlasov, Y. Acceleration of deep neural network training with resistive cross-point devices: Design considerations. Front. Neurosci. 2016, 10, 333. [Google Scholar] [CrossRef] [PubMed]

- Wen, T.H.; Hung, J.M.; Huang, W.H.; Jhang, C.J.; Lo, Y.C.; Hsu, H.H.; Ke, Z.E.; Chen, Y.C.; Chin, Y.H.; Su, C.I.; et al. Fusion of memristor and digital compute-in-memory processing for energy-efficient edge computing. Science 2024, 384, 325–332. [Google Scholar] [CrossRef]

- Jeon, K.; Ryu, J.J.; Im, S.; Seo, H.K.; Eom, T.; Ju, T.; Yang, M.K.; Jeong, D.S.; Kim, G.H. Purely self-rectifying memristor-based passive crossbar array for artificial neural network accelerators. Nat. Commun. 2024, 15, 129. [Google Scholar] [CrossRef] [PubMed]

- Hu, M.; Strachan, J.P.; Li, Z.; Grafals, E.; Davila, N.; Graves, C.; Lam, S.; Ge, N.; Yang, J.J.; Williams, R.S. Dot-product engine for neuromorphic computing: Programming 1T1M crossbar to accelerate matrix-vector multiplication. Proc. 53rd Annu. Design Autom. Conf. (DAC), 2016; pp. 1–6.

- Lecun, Y.; Bengio, Y.; Hinton, G. Deep learning. Nature 2015, 521, 436–444. [Google Scholar] [CrossRef]

- Wan, W.; Kubendran, R.; Schaefer, C.; Eryilmaz, S.B.; Zhang, W.; Wu, D.; Deiss, S.; Raina, P.; Qian, H.; Gao, B.; et al. A compute-in-memory chip based on resistive random-access memory. Nature 2022, 608, 504–512. [Google Scholar] [CrossRef]

- Wen, S.; Wei, H.; Zeng, Z.; Huang, T. Memristive fully convolutional network: An accurate hardware image-segmentor in deep learning. IEEE Trans. Emerg. Top. Comput. Intell. 2018, 2, 324–334. [Google Scholar] [CrossRef]

- Hu, M.; Graves, C.E.; Li, C.; Li, Y.; Ge, N.; Montgomery, J.; Davila, N.; Jiang, H.; Williams, R.S.; Yang, J.J.; et al. Memristor-based analog computation and neural network classification with a dot product engine. Adv. Mater. 2018, 30, 1705914. [Google Scholar] [CrossRef]

- Yakopcic, C.; Alom, M.Z.; Taha, T.M. Memristor crossbar deep network implementation based on a convolutional neural network. Proc. Int. Joint Conf. Neural Networks (IJCNN), 2016; pp. 963–970.

- Migliato Marega, G.; Ji, H.G.; Wang, Z.; Pasquale, G.; Tripathi, M.; Radenovic, A.; Kis, A. A large-scale integrated vector–matrix multiplication processor based on monolayer molybdenum disulfide memories. Nat. Electron. 2023, 6, 991–998. [Google Scholar] [CrossRef]

- Hochreiter, S.; Schmidhuber, J. Long short-term memory. Neural Comput. 1997, 9, 1735–1780. [Google Scholar] [CrossRef] [PubMed]

- Li, C.; Wang, Z.; Rao, M.; Belkin, D.; Song, W.; Jiang, H.; Yan, P.; Li, Y.; Lin, P.; Hu, M.; et al. Long short-term memory networks in memristor crossbar arrays. Nat. Mach. Intell. 2019, 1, 49–57. [Google Scholar] [CrossRef]

- Wang, Z.; Li, C.; Lin, P.; Rao, M.; Nie, Y.; Song, W.; Qiu, Q.; Li, Y.; Yan, P.; Strachan, J.P.; et al. In situ training of feed-forward and recurrent convolutional memristor networks. Nat. Mach. Intell. 2019, 1, 434–442. [Google Scholar] [CrossRef]

- Liu, X.; Zeng, Z.; Wunsch, D.C., II. Memristor-based LSTM network with in situ training and its applications. Neural Netw. 2020, 131, 300–311. [Google Scholar] [PubMed]

- Smagulova, K.; James, A.P. A survey on LSTM memristive neural network architectures and applications. Eur. Phys. J. Spec. Top. 2019, 228, 2313–2324. [Google Scholar] [CrossRef]

- Nikam, H.; Satyam, S.; Sahay, S. Long short-term memory implementation exploiting passive RRAM crossbar array. IEEE Trans. Electron Devices 2021, 69, 1743–1751. [Google Scholar] [CrossRef]

- Koller, D.; Friedman, N. Probabilistic Graphical Models: Principles and Techniques; MIT Press: Cambridge, MA, USA, 2009. [Google Scholar]

- Arka, A.I.; Doppa, J.R.; Pande, P.P.; Joardar, B.K.; Chakrabarty, K. ReGraphX: NoC-enabled 3D heterogeneous ReRAM architecture for training graph neural networks. In Design, Automation & Test in Europe Conf. (DATE); 2021; pp. 1667–1672. [Google Scholar]

- Lyu, B.; Wang, S.; Wen, S.; Shi, K.; Yang, Y.; Zeng, L.; Huang, T. AutoGMap: Learning to map large-scale sparse graphs on memristive crossbars. IEEE Trans. Neural Netw. Learn. Syst. 2023, 35, 12888–12898. [Google Scholar] [CrossRef]

- Morsali, M.; Nazzal, M.; Khreishah, A.; Angizi, S. Ima-GNN: In-memory acceleration of centralized and decentralized graph neural networks at the edge. Proc. Great Lakes Symp. VLSI, 2023; pp. 3–8.

- Liu, C.; Liu, H.; Jin, H.; Liao, X.; Zhang, Y.; Duan, Z.; Xu, J.; Li, H. ReGNN: A ReRAM-based heterogeneous architecture for general graph neural networks. Proc. 59th ACM/IEEE Design Autom. Conf. (DAC), 2022; pp. 469–474.

- He, Y.; Li, B.; Wang, Y.; Liu, C.; Li, H.; Li, X. A task-adaptive in-situ ReRAM computing for graph convolutional networks. IEEE Trans. Computer-Aided Des. Integr. Circuits Syst. 2024, 43, 2635–2646. [Google Scholar]

- Ogbogu, C.O.; Arka, A.I.; Pfromm, L.; Joardar, B.K.; Doppa, J.R.; Chakrabarty, K.; Pande, P.P. Accelerating graph neural network training on ReRAM-based PIM architectures via graph and model pruning. IEEE Trans. Computer-Aided Des. Integr. Circuits Syst. 2022, 42, 2703–2716. [Google Scholar]

- Yu, Y.; Wang, S.; Xu, M.; Wen, S.; Zhang, W.; Wang, B.; Yang, J.; Wang, S.; Zhang, Y.; Wu, X.; et al. Random memristor-based dynamic graph CNN for efficient point cloud learning at the edge. npj Unconventional Comput. 2024, 1, 6. [Google Scholar] [CrossRef]

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, L.; Polosukhin, I. Attention is all you need. Adv. Neural Inf. Process. Syst. 2017, 30. [Google Scholar]

- Yang, X.; Yan, B.; Li, H.; Chen, Y. ReTransformer: ReRAM-based processing-in-memory architecture for transformer acceleration. Proc. 39th Int. Conf. Computer-Aided Design (ICCAD), 2020; pp. 1–9.

- Sridharan, S.; Stevens, J.R.; Roy, K.; Raghunathan, A. X-former: In-memory acceleration of transformers. IEEE Trans. Very Large Scale Integr. (VLSI) Syst. 2023, 31, 1223–1233. [Google Scholar] [CrossRef]

- Leroux, N.; Manea, P.P.; Sudarshan, C.; Finkbeiner, J.; Siegel, S.; Strachan, J.P.; Neftci, E. Analog in-memory computing attention mechanism for fast and energy-efficient large language models. Nat. Comput. Sci. 2025, 5, 813–824. [Google Scholar] [CrossRef]

- Park, J.; Choi, J.; Kyung, K.; Kim, M.J.; Kwon, Y.; Kim, N.S.; Ahn, J.H. Attacc! Unleashing the power of PIM for batched transformer-based generative model inference. Proc. 29th ACM Int. Conf. Architectural Support Program. Lang. Operating Syst. (ASPLOS), 2024; pp. 103–119.

- Zhou, M.; Xu, W.; Kang, J.; Rosing, T. TransPIM: A memory-based acceleration via software-hardware co-design for transformer. Proc. IEEE Int. Symp. High-Performance Computer Architecture (HPCA), 2022; pp. 1071–1085.

- Bettayeb, M.; Halawani, Y.; Khan, M.U.; Saleh, H.; Mohammad, B. Efficient memristor accelerator for transformer self-attention functionality. Sci. Rep. 2024, 14, 24173. [Google Scholar] [CrossRef] [PubMed]

- Yang, C.; Wang, X.; Zeng, Z. Full-circuit implementation of transformer network based on memristor. IEEE Trans. Circuits Syst. I: Regular Papers 2022, 69, 1395–1407. [Google Scholar] [CrossRef]

- Duan, Q.; Jing, Z.; Zou, X.; Wang, Y.; Yang, K.; Zhang, T.; Wu, S.; Huang, R.; Yang, Y. Spiking neurons with spatiotemporal dynamics and gain modulation for monolithically integrated memristive neural networks. Nat. Commun. 2020, 11, 3399. [Google Scholar] [CrossRef]

- Nowshin, F.; Yi, Y. Memristor-based deep spiking neural network with a computing-in-memory architecture. Proc. 23rd Int. Symp. Quality Electron. Design (ISQED), 2022; pp. 1–6.

- Sun, Z.; Ielmini, D. Invited tutorial: Analog matrix computing with crosspoint resistive memory arrays. IEEE Trans. Circuits Syst. II: Express Briefs 2022, 69, 3024–3029. [Google Scholar] [CrossRef]

- Sun, Z.; Pedretti, G.; Mannocci, P.; Ambrosi, E.; Bricalli, A.; Ielmini, D. Time complexity of in-memory solution of linear systems. IEEE Trans. Electron Devices 2020, 67, 2945–2951. [Google Scholar] [CrossRef]

- Sun, Z.; Pedretti, G.; Ambrosi, E.; Bricalli, A.; Ielmini, D. In-Memory Eigenvector Computation in Time O(1). Adv. Intell. Syst. 2020, 2, 2000042. [Google Scholar] [CrossRef]

- Sun, Z.; Ambrosi, E.; Pedretti, G.; Bricalli, A.; Ielmini, D. In-memory PageRank accelerator with a cross-point array of resistive memories. IEEE Trans. Electron Devices 2020, 67, 1466–1470. [Google Scholar] [CrossRef]

- Hong, C.; Luo, Y.; Zuo, P.; Wang, S.; Sun, Z. Solving All Eigenpairs with Resistive Memory-Based Analog Matrix Computing Circuits. IEEE Trans. Circuits Syst. I: Reg. Papers 2025. early access. [Google Scholar]

- Wang, S.; Luo, Y.; Zuo, P.; Pan, L.; Li, Y.; Sun, Z. In-memory analog solution of compressed sensing recovery in one step. Sci. Adv. 2023, 9, eadj2908. [Google Scholar] [CrossRef]

- Kutner, M.H.; Nachtsheim, C.J.; Neter, J.; Li, W. Applied Linear Statistical Models, 5th ed.; McGraw-Hill: New York, NY, USA, 2004. [Google Scholar]

- Sun, Z.; Pedretti, G.; Bricalli, A.; Ielmini, D. One-step regression and classification with cross-point resistive memory arrays. Sci. Adv. 2020, 6, eaay2378. [Google Scholar] [CrossRef]

- Cucchi, M.; Abreu, S.; Ciccone, G.; Brunner, D.; Kleemann, H. Hands-on reservoir computing: A tutorial for practical implementation. Neuromorph. Comput. Eng. 2022, 2, 032002. [Google Scholar] [CrossRef]

- Farronato, M.; Mannocci, P.; Melegari, M.; Ricci, S.; Compagnoni, C.M.; Ielmini, D. Reservoir computing with charge-trap memory based on a MoS₂ channel for neuromorphic engineering. Adv. Mater. 2023, 35, 2205381. [Google Scholar] [CrossRef]

- Mikolajick, T.; Park, M.H.; Begon-Lours, L.; Slesazeck, S. From ferroelectric material optimization to neuromorphic devices. Adv. Mater. 2023, 35, 2206042. [Google Scholar] [CrossRef]

- Li, H.; Wang, S.; Zhang, X.; Wang, W.; Yang, R.; Sun, Z.; Feng, W.; Lin, P.; Wang, Z.; Sun, L.; et al. Memristive crossbar arrays for storage and computing applications. Adv. Intell. Syst. 2021, 3, 2100017. [Google Scholar] [CrossRef]

- Wang, S.; Li, Y.; Wang, D.; Zhang, W.; Chen, X.; Dong, D.; Wang, S.; Zhang, X.; Lin, P.; Gallichio, C. Echo state graph neural networks with analogue random resistive memory arrays. Nat. Mach. Intell. 2023, 5, 104–113. [Google Scholar] [CrossRef]

- Mikhaylov, A.; Pimashkin, A.; Pigareva, Y.; Gerasimova, S.; Gryaznov, E.; Shchanikov, S.; Zuev, A.; Talanov, M.; Lavrov, I.; Demin, V. Neurohybrid memristive CMOS-integrated systems for biosensors and neuroprosthetics. Front. Neurosci. 2020, 14, 358. [Google Scholar] [CrossRef] [PubMed]

- Gómez-Luna, J.; Guo, Y.; Brocard, S.; Legriel, J.; Cimadomo, R.; Oliveira, G.F. Evaluating machine learning workloads on memory-centric computing systems. Proc. IEEE Int. Symp. Perform. Anal. Syst. Softw. (ISPASS), 2023; pp. 35–49.

- Wang, S.; Sun, Z.; Liu, Y.; Bao, S.; Cai, Y.; Ielmini, D.; Huang, R. Optimization Schemes for In-Memory Linear Algebra Using Memristors. IEEE Trans. Circuits Syst. I: Reg. Papers 2021, 68, 4900–4909. [Google Scholar]

- Mannocci, P.; Pedretti, G.; Giannone, E.; Melacarne, E.; Sun, Z.; Ielmini, D. A universal, analog, in-memory computing primitive for linear algebra using memristors. IEEE Trans. Circuits Syst. I: Reg. Papers 2021, 68, 4889–4899. [Google Scholar] [CrossRef]

- Mannocci, P.; Melacarne, E.; Ielmini, D. An Analogue In-Memory Ridge Regression Circuit with Application to Massive MIMO Acceleration. IEEE J. Emerg. Sel. Top. Circuits Syst. 2022, 12, 952–962. [Google Scholar] [CrossRef]

- Mannocci, P.; Ielmini, D. A Generalized Block-Matrix Circuit for Closed-Loop Analog In-Memory Computing. IEEE J. Explor. Solid-State Comput. Devices Circuits 2023, 9, 47–58. [Google Scholar] [CrossRef]

- Yao, Z.; Gholami, A.; Shen, S.; Mustafa, M.; Keutzer, K.; Mahoney, M. ADAHESSIAN: An Adaptive Second Order Optimizer for Machine Learning. Proc. AAAI Conf. Artif. Intell. 2021, 35, 10665–10673. [Google Scholar] [CrossRef]

- Zhao, Y.; Jiang, L.; Gao, M.; Jing, N.; Gu, C.; Tang, Q.; Liu, F.; Yang, T.; Liang, X. RePAST: a ReRAM-based PIM accelerator for second-order training of DNN. arXiv 2022, arXiv:2210.15255. [Google Scholar]

- Melanson, D.; Abu Khater, M.; Aifer, M.; Donatella, K.; Hunter Gordon, M.; Ahle, T.; Crooks, G.; Martinez, A.J.; Sbahi, F.; Coles, P.J. Thermodynamic Computing System for AI Applications. Nat. Commun. 2025, 16, 3757. [Google Scholar] [CrossRef]

- Aifer, M.; Donatella, K.; Gordon, M.H.; Duffield, S.; Ahle, T.; Simpson, D.; Crooks, G.; Coles, P.J. Thermodynamic linear algebra. npj Unconventional Computing 2024, 1, 13. [Google Scholar] [CrossRef]

- Coles, P.J.; Aifer, M.; Donatella, K.; Melanson, D.; Gordon, M.H.; Ahle, T.D.; Simpson, D.; Crooks, G.; Martinez, A.J.; Sbahi, F.M. Thermodynamic AI and thermodynamic linear algebra. Machine Learning with New Compute Paradigms 2023, 21. [Google Scholar]

- Aifer, M.; Duffield, S.; Donatella, K.; Melanson, D.; Klett, P.; Belateche, Z.; Crooks, G.; Martinez, A.J.; Coles, P.J. Thermodynamic bayesian inference. Proc. IEEE Int. Conf. Rebooting Comput. (ICRC) 2024, pp. 1–13.

- Donatella, K.; Duffield, S.; Aifer, M.; Melanson, D.; Crooks, G.; Coles, P.J. Thermodynamic natural gradient descent. npj Unconventional Computing 2026, 3, 5. [Google Scholar] [CrossRef]

- Donatella, K.; Duffield, S.; Melanson, D.; Aifer, M.; Klett, P.; Salegame, R.; Belateche, Z.; Crooks, G.; Martinez, A.J.; Coles, P.J. Scalable Thermodynamic Second-order Optimization. arXiv 2025, arXiv:2502.08603. [Google Scholar] [CrossRef]

- Boyd, S.; Vandenberghe, L. Convex Optimization; Cambridge University Press: Cambridge, U.K., 2004. [Google Scholar]

- Wu, L.; Braatz, R.D. A Quadratic Programming Algorithm with O(n³) Time Complexity. arXiv 2025, arXiv:2507.04515. [Google Scholar] [CrossRef]

- Cohen, M.B.; Lee, Y.T.; Song, Z. Solving Linear Programs in the Current Matrix Multiplication Time. J. ACM 2021, 68, 1–39. [Google Scholar] [CrossRef]

- Liu, S.; Wang, Y.; Fardad, M.; Varshney, P.K. A Memristor-Based Optimization Framework for Artificial Intelligence Applications. IEEE Circuits Syst. Mag. 2018, 18, 29–44. [Google Scholar] [CrossRef]

- Shang, L.; Adil, M.; Madani, R.; Pan, C. Memristor-Based Analog Recursive Computation Circuit for Linear Programming Optimization. IEEE J. Explor. Solid-State Comput. Devices Circuits 2020, 6, 53–61. [Google Scholar] [CrossRef]

- Gao, X.; Liao, L.Z. A New One-Layer Neural Network for Linear and Quadratic Programming. IEEE Trans. Neural Netw. 2010, 21, 918–929. [Google Scholar] [PubMed]

- Di Marco, M.; Forti, M.; Pancioni, L.; Innocenti, G.; Tesi, A. Memristor Neural Networks for Linear and Quadratic Programming Problems. IEEE Trans. Cybern. 2022, 52, 1822–1835. [Google Scholar] [CrossRef]

- Liu, Z.; Cheng, H.C.; Hossain, D.; Meng, R.; Bena, Y.; Shi, B.; Chen, D.W.; Yang, S.; Su, Y.; Wang, P.; et al. Ultrafast Hybrid Computing Systems Enabled by Memristor-Based Quadratic Programming Circuits. Adv. Funct. Mater. 2024, 34, 2401600. [Google Scholar] [CrossRef]

- Vichik, S.; Borrelli, F. Solving linear and quadratic programs with an analog circuit. Comput. Chem. Eng. 2014, 70, 160–171. [Google Scholar] [CrossRef]

- Vichik, S.; Arcak, M.; Borrelli, F. Stability of an analog optimization circuit for quadratic programming. Syst. Control Lett. 2016, 88, 68–74. [Google Scholar] [CrossRef]

- Mannocci, P.; Giannone, E.; Ielmini, D. In-Memory Principal Component Analysis by Analogue Closed-Loop Eigendecomposition. IEEE Trans. Circuits Syst. II: Express Briefs 2024, 71, 1839–1843. [Google Scholar] [CrossRef]

- Von Luxburg, U. A Tutorial on Spectral Clustering. Stat. Comput. 2007, 17, 395–416. [Google Scholar] [CrossRef]

- Chi, Y.; Song, X.; Zhou, D.; Hino, K.; Tseng, B.L. Evolutionary Spectral Clustering by Incorporating Temporal Smoothness. Proc. 13th ACM SIGKDD Int. Conf. Knowl. Disc. Data Mining 2007, pp. 153–162.

- Leskovec, J.; Lang, K.J.; Mahoney, M. Empirical Comparison of Algorithms for Network Community Detection. Proc. 19th Int. World Wide Web Conf. (WWW) 2010, pp. 631–640.

- Siddique, N.; Paheding, S.; Elkin, C.P.; Devabhaktuni, V. U-Net and Its Variants for Medical Image Segmentation: A Review of Theory and Applications. IEEE Access 2021, 9, 82031–82057. [Google Scholar] [CrossRef]

- Borghetti, J.; Snider, G.S.; Kuekes, P.J.; Yang, J.J.; Stewart, D.R.; Williams, R.S. 'Memristive' Switches Enable 'Stateful' Logic Operations via Material Implication. Nature 2010, 464, 873–876. [Google Scholar] [CrossRef] [PubMed]

- Kvatinsky, S.; Belousov, D.; Liman, S.; Satat, G.; Wald, N.; Friedman, E.G.; Kolodny, A.; Weiser, U.C. MAGIC—Memristor-Aided Logic. IEEE Trans. Circuits Syst. II: Express Briefs 2014, 61, 895–899. [Google Scholar] [CrossRef]

- Sun, Z.; Ambrosi, E.; Bricalli, A.; Ielmini, D. Logic Computing with Stateful Neural Networks of Resistive Switches. Adv. Mater. 2018, 30, 1802554. [Google Scholar] [CrossRef]

- Song, Y.; Wang, X.; Wu, Q.; Yang, F.; Wang, C.; Wang, M.; Miao, X. Reconfigurable and Efficient Implementation of 16 Boolean Logics and Full-Adder Functions with Memristor Crossbar for Beyond von Neumann In-Memory Computing. Adv. Sci. 2022, 9, 2200036. [Google Scholar] [CrossRef]

- Miao, Z.; Li, Y.; Wang, S.; Sun, Z. Hybrid Quantum and In-Memory Computing for Accelerating Solving Simon's Problem. APL Mach. Learn. 2025, 3, 026117. [Google Scholar] [CrossRef]

- Kim, Y.R.; Shin, D.H.; Ghenzi, N.; Cheong, S.; Cho, J.M.; Shim, S.K.; Lee, J.K.; Kim, B.S.; Yim, S.; Park, T.; et al. Fully Resistive In-Memory Cryptographic Engine with Dual-Polarity Memristive Crossbar Array. Adv. Funct. Mater. 2025, 35, 2414332. [Google Scholar] [CrossRef]

- Ben-Hur, R.; Ronen, R.; Haj-Ali, A.; Bhattacharjee, D.; Eliahu, A.; Peled, N.; Kvatinsky, S. SIMPLER MAGIC: Synthesis and Mapping of In-Memory Logic Executed in a Single Row to Improve Throughput. IEEE Trans. Computer-Aided Des. Integr. Circuits Syst. 2019, 39, 2434–2447. [Google Scholar] [CrossRef]

- Imani, M.; Gupta, S.; Kim, Y.; Rosing, T. FloatPIM: In-Memory Acceleration of Deep Neural Network Training with High Precision. Proc. Int. Symp. Comput. Arch. (ISCA) 2019, 46, 802–815. [Google Scholar]

- Lu, Z.; Wang, X.; Arafin, M.T.; Yang, H.; Liu, Z.; Zhang, J.; Qu, G. An RRAM-Based Computing-in-Memory Architecture and Its Application in Accelerating Transformer Inference. IEEE Trans. Very Large Scale Integr. (VLSI) Syst. 2023, 32, 485–496. [Google Scholar] [CrossRef]

- Imani, M.; Gupta, S.; Rosing, T. Digital-Based Processing in-Memory: A Highly-Parallel Accelerator for Data Intensive Applications. Proc. Int. Symp. Memory Syst. 2019, pp. 38–40.

- Leitersdorf, O.; Ronen, R.; Kvatinsky, S. Making Memristive Processing-in-Memory Reliable. Proc. IEEE Int. Conf. Electron. Circuits Syst. (ICECS) 2021, pp. 1–6.

- Leitersdorf, O.; Ronen, R.; Kvatinsky, S. ConvPIM: Evaluating Digital Processing-in-Memory through Convolutional Neural Network Acceleration. arXiv 2025, arXiv:2305.04122. [Google Scholar]

- Lu, Z.; Arafin, M.T.; Qu, G. RIME: A Scalable and Energy-Efficient Processing-in-Memory Architecture for Floating-Point Operations. Proc. 26th Asia South Pac. Des. Autom. Conf. (ASP-DAC) 2021, pp. 120–125.

- Jin, H.; Liu, C.; Liu, H.; Luo, R.; Xu, J.; Mao, F.; Liao, X. ReHy: A ReRAM-Based Digital/Analog Hybrid PIM Architecture for Accelerating CNN Training. IEEE Trans. Parallel Distrib. Syst. 2021, 33, 2872–2884. [Google Scholar] [CrossRef]

- Imani, M.; Pampana, S.; Gupta, S.; Zhou, M.; Kim, Y.; Rosing, T. DUAL: Acceleration of Clustering Algorithms Using Digital-Based Processing in-Memory. Proc. 53rd IEEE/ACM Int. Symp. Microarchitecture (MICRO) 2020, pp. 356–371.

- Rashed, M.R.H.; Jha, S.K.; Ewetz, R. Hybrid Analog-Digital In-Memory Computing. Proc. IEEE/ACM Int. Conf. Computer Aided Des. (ICCAD) 2021, pp. 1–9.

- Li, Y.; Wang, S.; Yang, K.; Yang, Y.; Sun, Z. An Emergent Attractor Network in a Passive Resistive Switching Circuit. Nat. Commun. 2024, 15, 7683. [Google Scholar] [CrossRef]

- Hu, S.G.; Liu, Y.; Liu, Z.; Chen, T.P.; Wang, J.J.; Yu, Q.; Deng, L.J.; Yin, Y.; Hosaka, S. Associative Memory Realized by a Reconfigurable Memristive Hopfield Neural Network. Nat. Commun. 2015, 6, 7522. [Google Scholar] [CrossRef]

- Cai, F.; Kumar, S.; Van Vaerenbergh, T.; Sheng, X.; Liu, R.; Li, C.; Liu, Z.; Foltin, M.; Yu, S.; Xia, Q.; et al. Power-Efficient Combinatorial Optimization Using Intrinsic Noise in Memristor Hopfield Neural Networks. Nat. Electron. 2020, 3, 409–418. [Google Scholar] [CrossRef]

- Zhou, Y.; Wu, H.; Gao, B.; Wu, W.; Xi, Y.; Yao, P.; Zhang, S.; Zhang, Q.; Qian, H. Associative Memory for Image Recovery with a High-Performance Memristor Array. Adv. Funct. Mater. 2019, 29, 1900155. [Google Scholar]

- Liang, X.; Tang, J.; Zhong, Y.; Gao, B.; Qian, H.; Wu, H. Physical Reservoir Computing with Emerging Electronics. Nat. Electron. 2024, 7, 193–206. [Google Scholar] [CrossRef]

- Jang, Y.H.; Han, J.K.; Hwang, C.S. A Review of Memristive Reservoir Computing for Temporal Data Processing and Sensing. InfoScience 2024, 1, e12013. [Google Scholar] [CrossRef]

- Moon, J.; Ma, W.; Shin, J.H.; Cai, F.; Du, C.; Lee, S.H.; Lu, W.D. Temporal Data Classification and Forecasting Using a Memristor-Based Reservoir Computing System. Nat. Electron. 2019, 2, 480–487. [Google Scholar] [CrossRef]

- Du, C.; Cai, F.; Zidan, M.A.; Ma, W.; Lee, S.H.; Lu, W.D. Reservoir Computing Using Dynamic Memristors for Temporal Information Processing. Nat. Commun. 2017, 8, 2204. [Google Scholar] [CrossRef]

- Zhong, Y.; Tang, J.; Li, X.; Gao, B.; Qian, H.; Wu, H. Dynamic Memristor-Based Reservoir Computing for High-Efficiency Temporal Signal Processing. Nat. Commun. 2021, 12, 408. [Google Scholar]

- Zhong, Y.; Tang, J.; Li, X.; Liang, X.; Liu, Z.; Li, Y.; Xi, Y.; Yao, P.; Hao, Z.; Gao, B.; et al. A Memristor-Based Analogue Reservoir Computing System for Real-Time and Power-Efficient Signal Processing. Nat. Electron. 2022, 5, 672–681. [Google Scholar] [CrossRef]

- Zhang, T.; Wozniak, S.; Syed, G.S.; Mannocci, P.; Farronato, M.; Ielmini, D.; Sebastian, A.; Yang, Y. Emerging Materials and Computing Paradigms for Temporal Signal Analysis. Adv. Mater. 2025, 37, 2408566. [Google Scholar] [CrossRef] [PubMed]

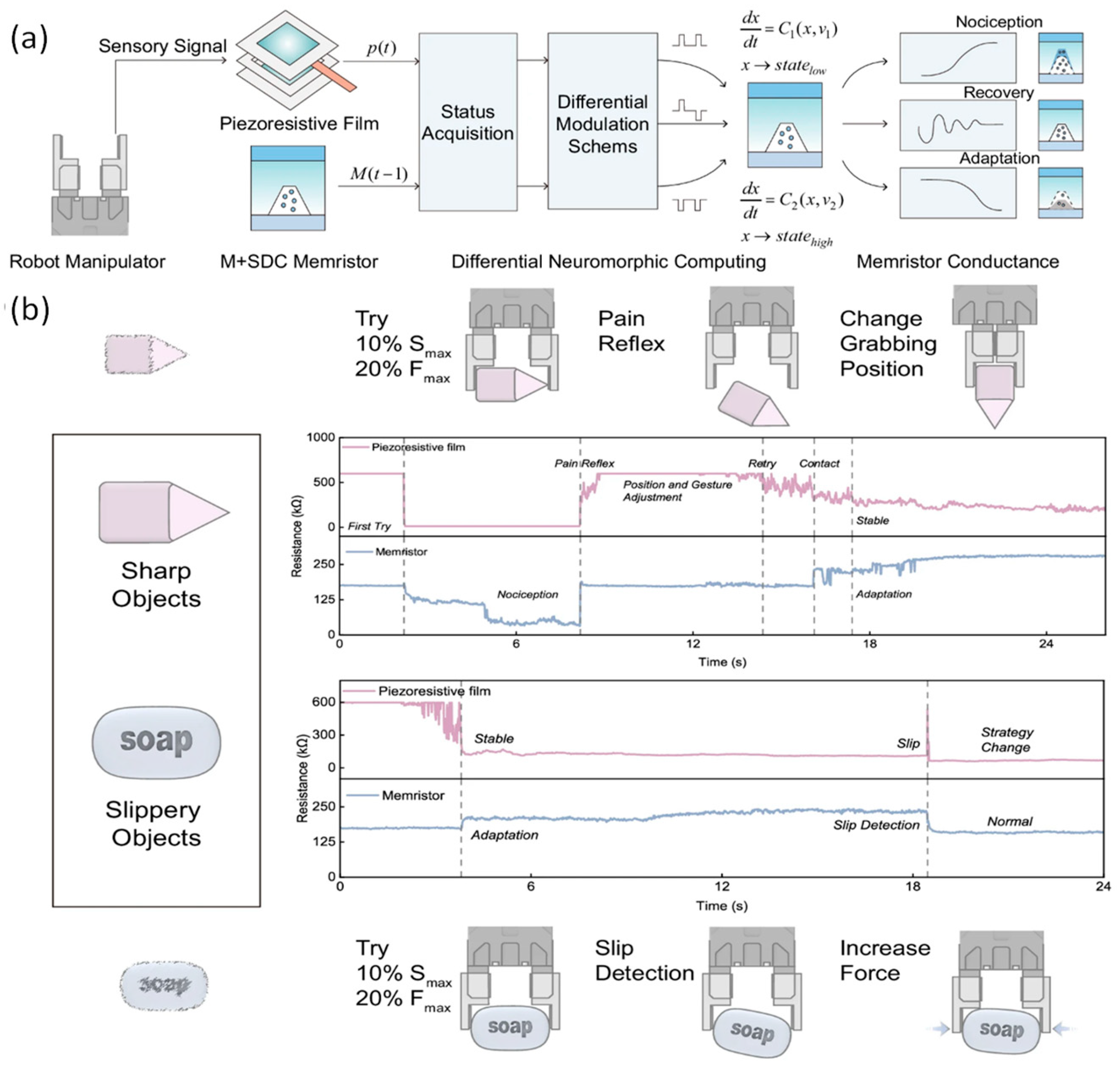


| Application | Typical device | Key feature | Conductance states | Randomness role | Endurance stress | Peripheral criticality |
|---|---|---|---|---|---|---|
| MVM | Nonvolatile memristor, long-term retention | Highly parallel dot-products | Multilevel or analog | Harmful | Low (inference), high (training) | High (DACs/ADCs, drivers) |
| Analog matrix-equation solving | Nonvolatile memristor, long-term retention | Closed-loop for matrix inversion/pseudoinversion | Multilevel or analog | Harmful | High (second-order training), low (other tasks) | High (DACs/ADCs, drivers, op-amp) |
| Stateful logic | Nonvolatile memristor, long-term retention | Deterministic SET/RESET, binary | Binary or discretized multilevel | Harmful | High (frequent switching) | Medium (pulse drivers, selectors) |
| Attractor networks | Nonvolatile memristor, long-term retention | Deterministic SET/RESET, binary | Binary or discretized multilevel | Harmful | High (frequent switching) | Medium (pulse drivers, selectors) |
| Stochastic computing (continuous noisy weights) | Nonvolatile memristor, long-term retention | Analog statistical conductance | Multilevel | Beneficial | High (frequent switching) | Medium (sampling circuitry) |
| Reservoir computing & spatiotemporal detection | Volatile memristors, short-term retention | Fading memory, nonlinear I–V, tunable τ | Binary or discretized multilevel | Beneficial | High (frequent switching) | Medium (analog readout) |
| STDP | Volatile memristors, short-term retention | Pulse-induced incremental updates | Analog weight changes | Neutral / mildly beneficial | High (frequent switching) | High (pulse timing, neuron circuits) |
| Stochastic computing (p-bit) | Volatile memristors, short-term retention | Probabilistic switching | Binary | Beneficial | High (frequent switching) | Low (sampling circuitry) |


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.