Submitted: 18 March 2026

Posted: 19 March 2026

Abstract

Keywords:

1. Introduction

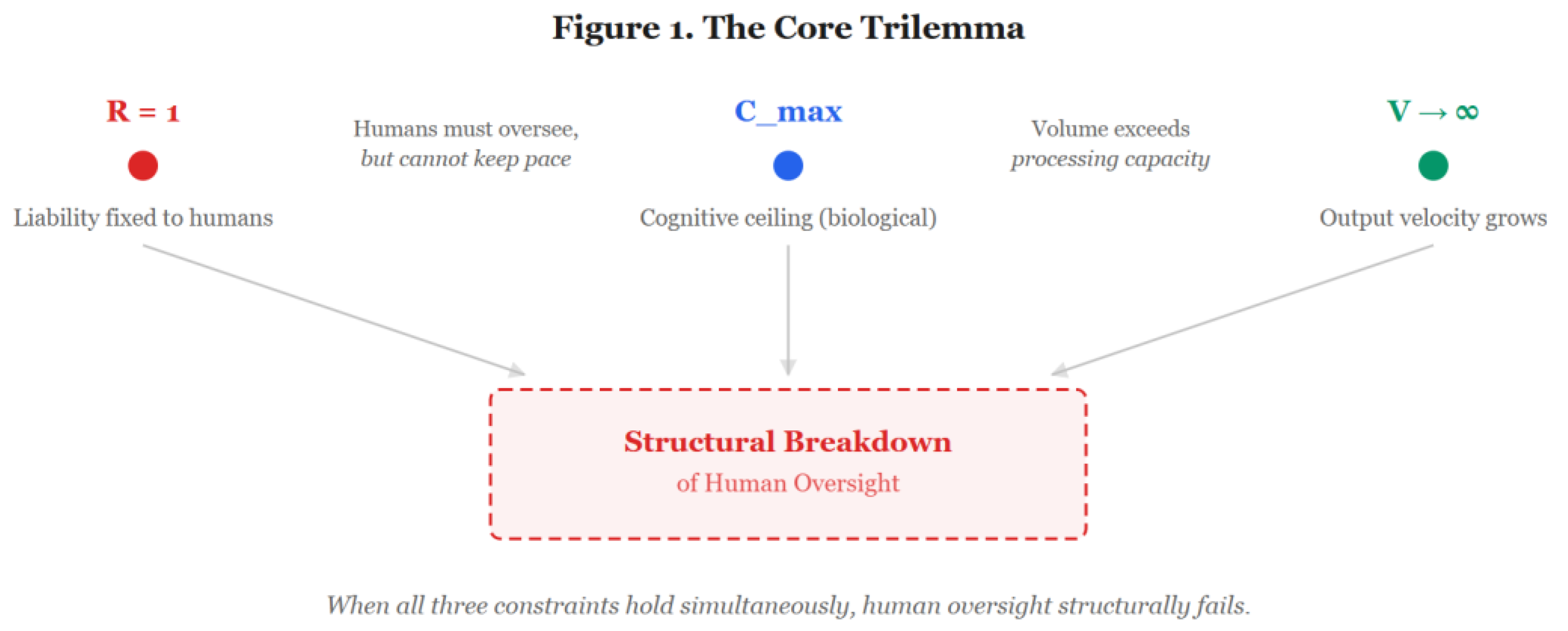

2. Fundamental Constraints in Scaling and the Limits of Cognition and Institutions

2.1. Definitions

2.2. Microscopic Limit

2.3. Macroscopic Limit

2.4. The Relationship Between Supervisory Capacity and Accuracy

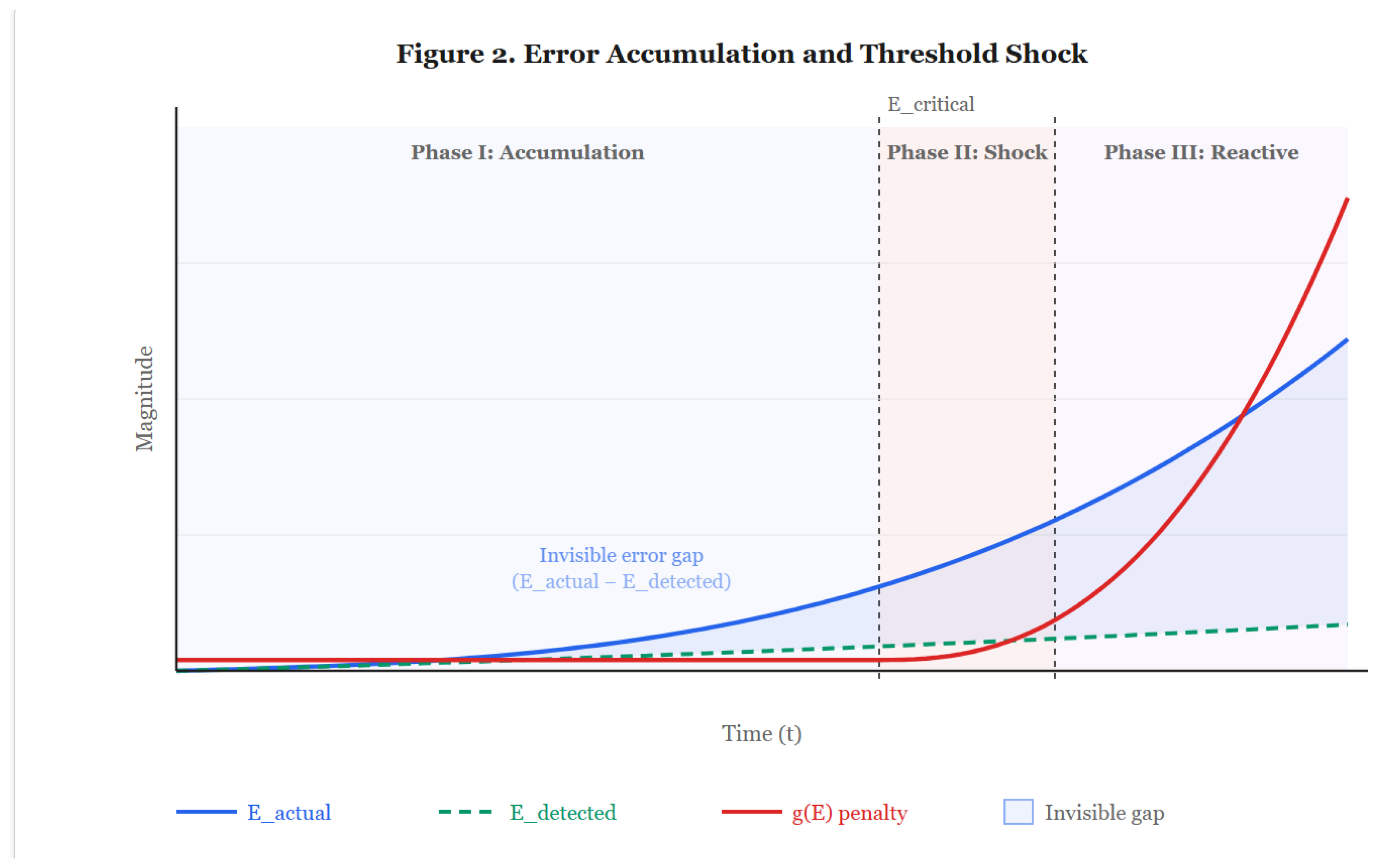

3. Nonlinear Risk Accumulation and Discontinuous Feedback

3.1. The Assumptions and Limitations of the Continuous Adaptation Model

3.2. Invisibilization of Errors

3.3. Threshold Shocks

3.4. AI Governance Failure as a Normal Accident

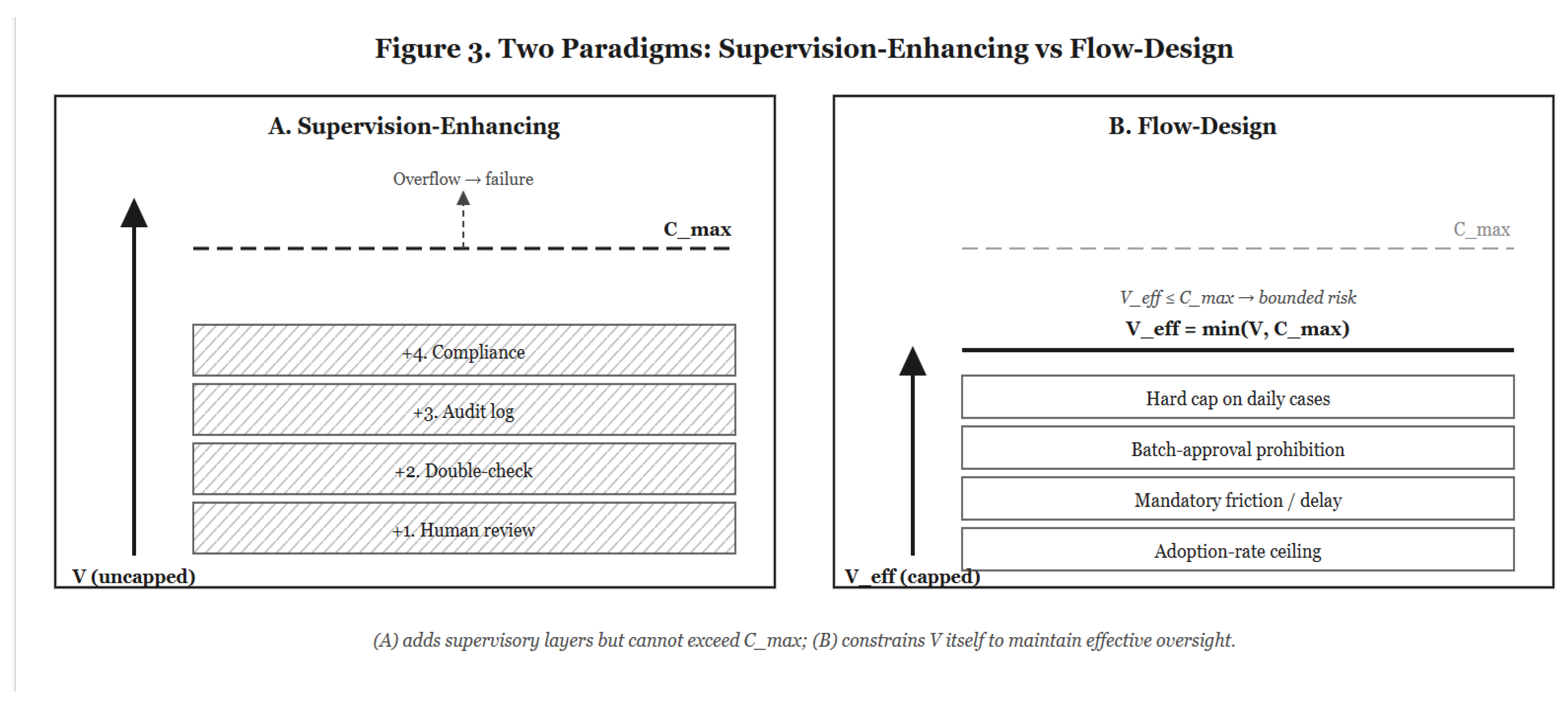

4. Contraction of Scaling in High-Loss Domains

4.1. Divergence of Expected Loss

4.2. Error Reduction Through Capability Improvement

4.3. Flow-Rate Limitation as Rational Equilibrium

4.4. Capability Improvement Contracts Usage

5. Limitations and Scope

5.1. Domain Limitation

5.2. Static Character of the Model

5.3. Response to Relative Comparison with Humans

5.4. Other Limitations

5.5. Implications for Practical Governance: From Supervision Enhancement to Flow Design

6. Conclusion

Declarations

Ethics Approval and Consent

Data Availability

AI Use

Competing Interests

Funding

References

- Alter, A.L.; Oppenheimer, D.M. Uniting the Tribes of Fluency to Form a Metacognitive Nation. Pers. Soc. Psychol. Rev. 2009, 13, 219–235.

- Asgari, E.; Montaña-Brown, N.; Dubois, M.; Khalil, S.; Balloch, J.; Yeung, J.A.; Pimenta, D. A framework to assess clinical safety and hallucination rates of LLMs for medical text summarisation. npj Digit. Med. 2025, 8, 274.

- Bainbridge, L. Ironies of automation. Automatica 1983, 19, 775–779.

- Beck, U. Risikogesellschaft: Auf dem Weg in eine andere Moderne; Suhrkamp, 1986. English edition: Risk Society: Towards a New Modernity; Ritter, M., Translator; Sage, 1992.

- Bignami, E.G.; Russo, M.; Semeraro, F.; Bellini, V. Balancing Innovation and Control: The European Union AI Act in an Era of Global Uncertainty. JMIR AI 2025, 4, e75527.

- Carnat, I. Human, all too human: accounting for automation bias in generative large language models. Int. Data Priv. Law 2024, 14, 299–314.

- Clark, A. Whatever next? Predictive brains, situated agents, and the future of cognitive science. Behav. Brain Sci. 2013, 36, 181–204.

- Cowan, N. The magical number 4 in short-term memory: A reconsideration of mental storage capacity. Behav. Brain Sci. 2001, 24, 87–114.

- Elish, M.C. Moral Crumple Zones: Cautionary Tales in Human-Robot Interaction. Engag. Sci. Technol. Soc. 2019, 5, 40–60.

- Friston, K. The free-energy principle: a unified brain theory? Nat. Rev. Neurosci. 2010, 11, 127–138.

- Horowitz, M.C.; Kahn, L. Bending the Automation Bias Curve: A Study of Human and AI-Based Decision Making in National Security Contexts. Int. Stud. Q. 2024, 68, sqae020.

- Kahneman, D. Attention and Effort; Prentice-Hall, 1973.

- Kücking, F.; Hübner, U.; Przysucha, M.; Hannemann, N.; Kutza, J.-O.; Moelleken, M.; Erfurt-Berge, C.; Dissemond, J.; Babitsch, B.; Busch, D. Automation bias in AI-decision support: Results from an empirical study. Stud. Health Technol. Inform. 2024, 317, 298–304.

- McCormick, S. Interpretive debt: How high coherence AI reshapes human judgement, authority, and accountability. In SSRN Working Paper; 2025.

- Naito, H. AI Selection Pressure: Template Saturation and the Reshaping of Human Discernment; Zenodo, 2025.

- Omar, M.; Sorin, V.; Collins, J.D.; Reich, D.; Freeman, R.; Gavin, N.; Charney, A.; Stump, L.; Bragazzi, N.L.; Nadkarni, G.N.; et al. Multi-model assurance analysis showing large language models are highly vulnerable to adversarial hallucination attacks during clinical decision support. Commun. Med. 2025, 5, 171.

- Padmakumar, V.; He, H. Does writing with language models reduce content diversity? arXiv 2023, arXiv:2309.05196.

- Parasuraman, R.; Manzey, D.H. Complacency and Bias in Human Use of Automation: An Attentional Integration. Hum. Factors: J. Hum. Factors Ergon. Soc. 2010, 52, 381–410.

- Parasuraman, R.; Riley, V. Humans and Automation: Use, Misuse, Disuse, Abuse. Hum. Factors: J. Hum. Factors Ergon. Soc. 1997, 39, 230–253.

- Perrow, C. Normal Accidents: Living with High-Risk Technologies; Basic Books, 1984.

- Romeo, G.; Conti, D. Exploring automation bias in human–AI collaboration: a review and implications for explainable AI. AI Soc. 2025, 41, 259–278.

- Warm, J.S.; Parasuraman, R.; Matthews, G. Vigilance Requires Hard Mental Work and Is Stressful. Hum. Factors: J. Hum. Factors Ergon. Soc. 2008, 50, 433–441.

- Xu, Z.; Jain, S.; Kankanhalli, M. Hallucination is inevitable: An innate limitation of large language models. arXiv 2024, arXiv:2401.11817.

- Yakura, H.; Lopez-Lopez, E.; Brinkmann, L.; Serna, I.; Gupta, P.; Rahwan, I. Empirical evidence of Large Language Model's influence on human spoken communication. arXiv 2024, arXiv:2409.01754.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).