Submitted: 02 March 2026

Posted: 18 March 2026


Abstract

Keywords:

1. Introduction

2. Literature Review

2.1. The Role of Pauses in Interpreting Fluency and the Evolution of Technical Analysis

2.2. Debates on Pause Thresholds and the Refinement of Quantitative Methodologies

2.3. Cognitive Load and Pause Patterns

2.4. Limitations of Fixed Thresholds

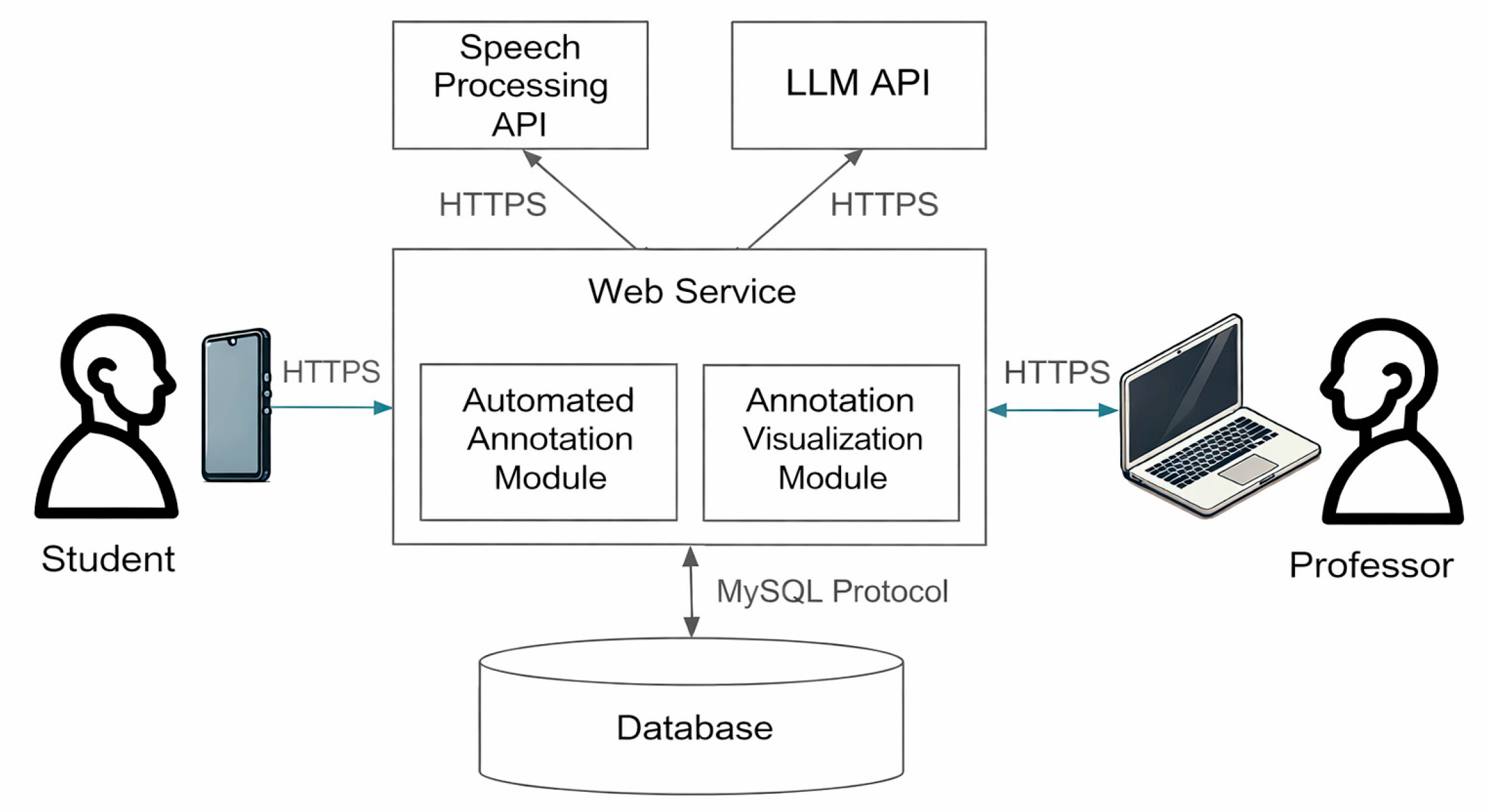

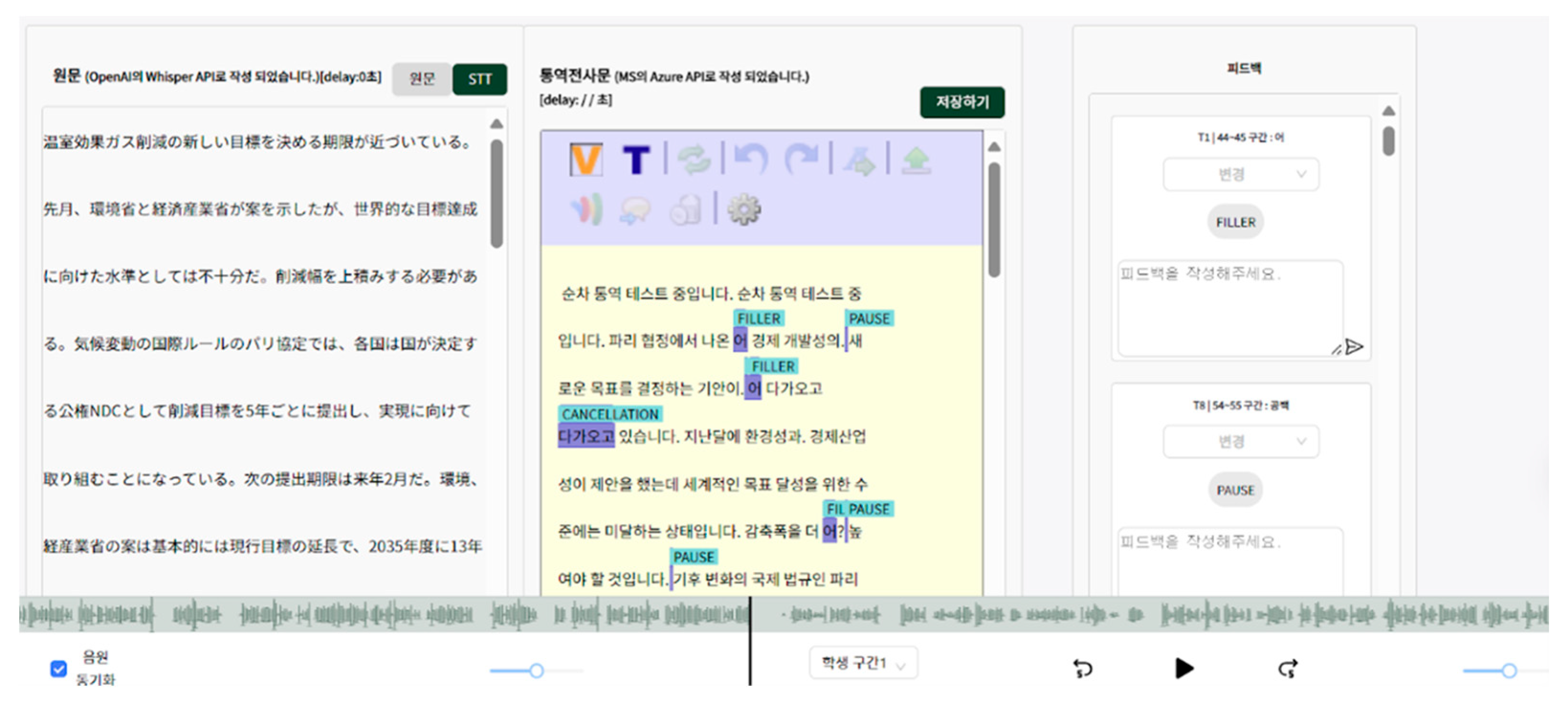

3. TalkTrack System

3.1. TalkTrack Platform Architecture

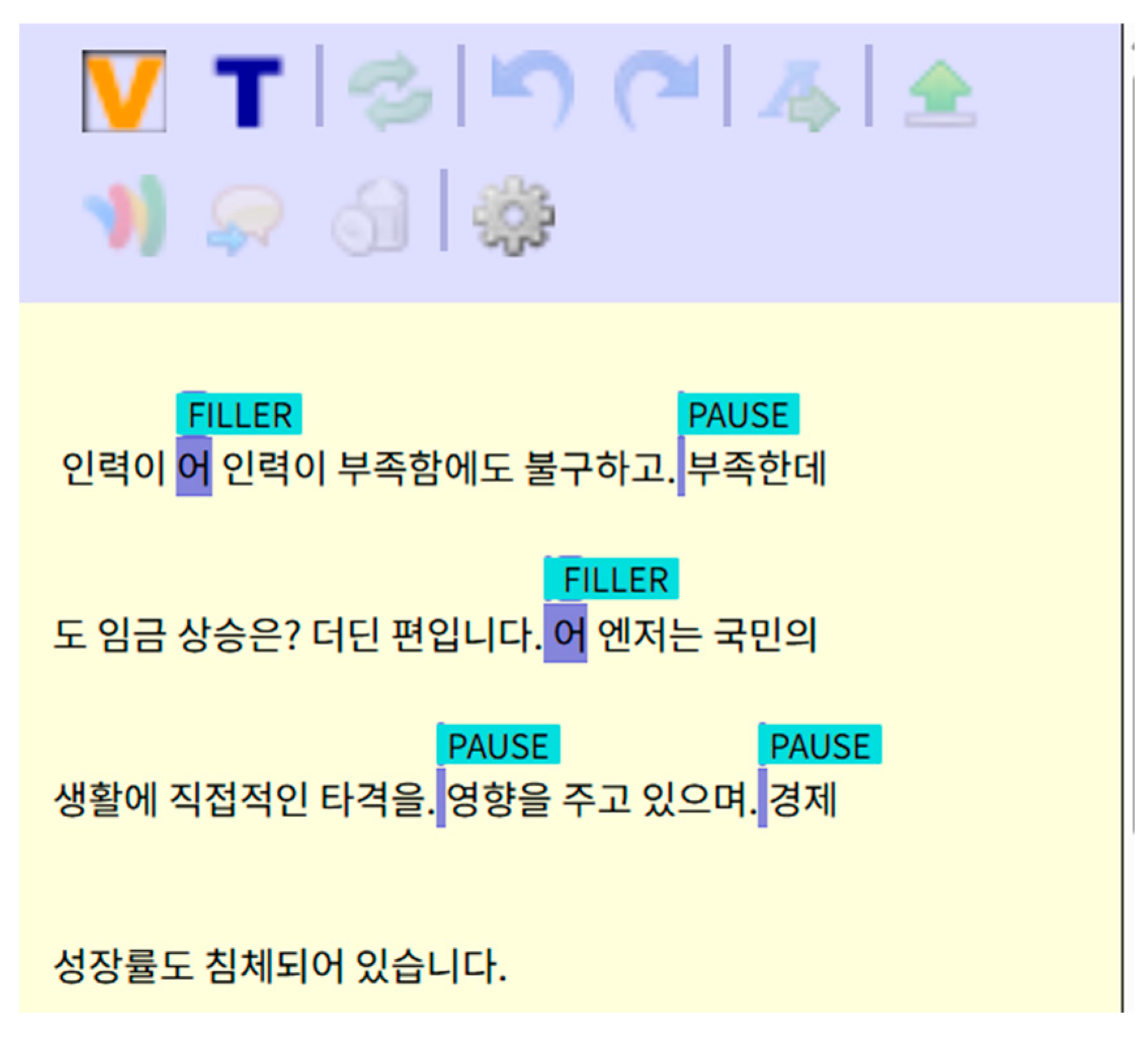

3.2. Automatic Annotation and Fluency Feedback Functionality

4. Methodology

4.1. Data Collection

4.2. Experimental Setting Model

4.3. Model Design

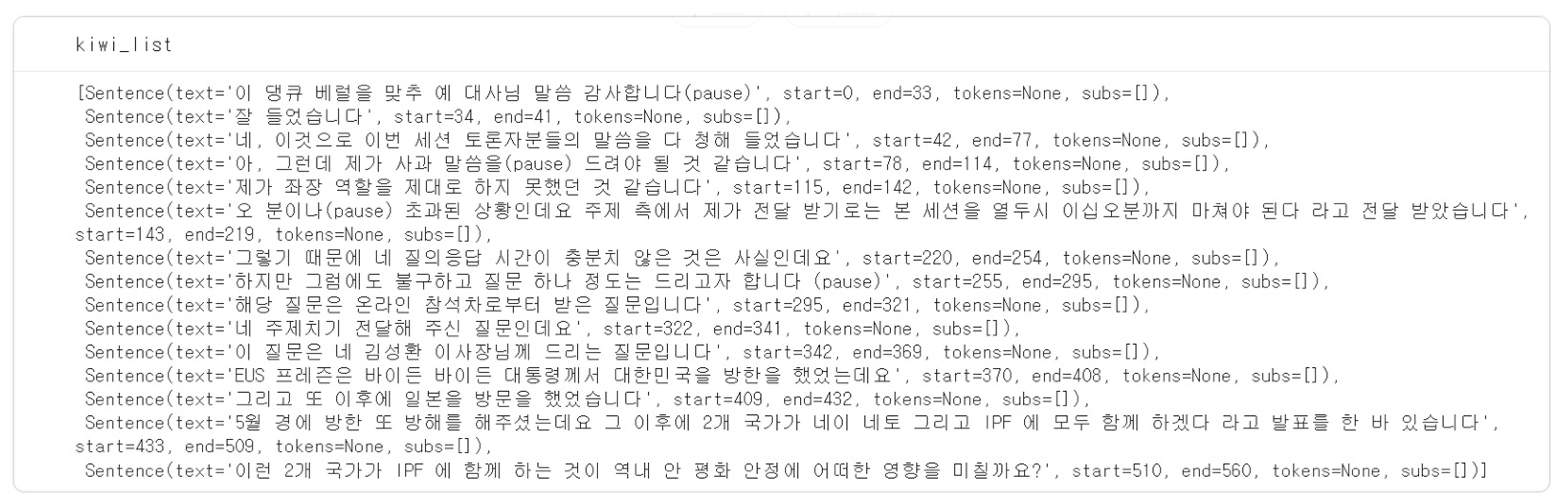

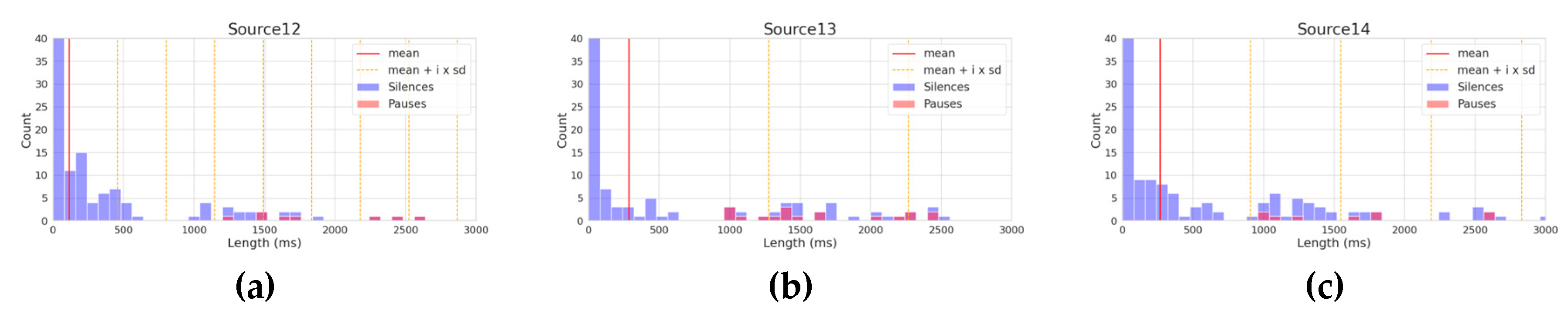

4.3.1. Threshold Setting Based on Statistical Distribution

4.3.2. Anomaly Detection Using Isolation Forest (iForest)

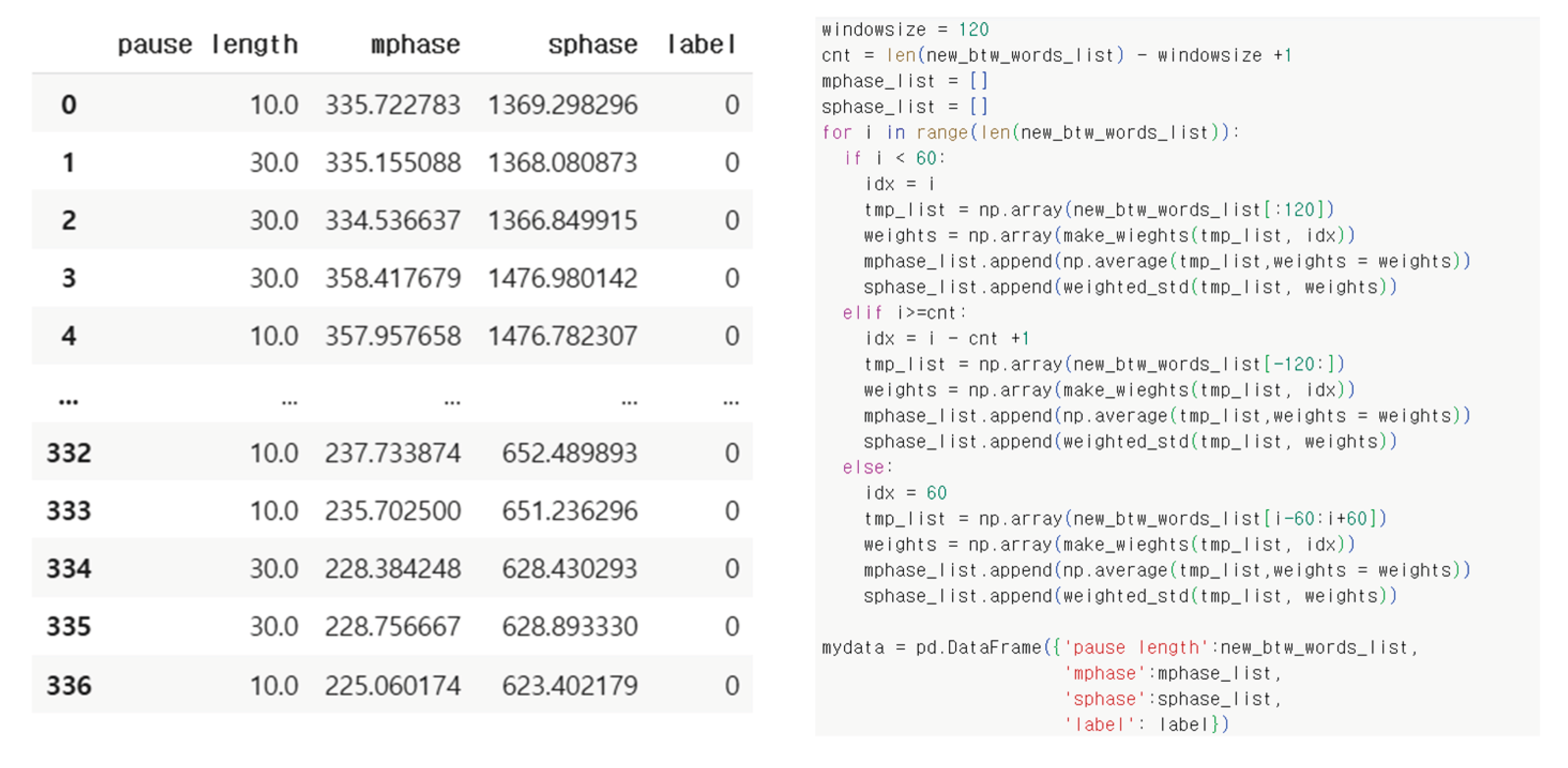

4.3.3. Anomaly Detection Using Sliding Window Technique

5. Results and Discussions

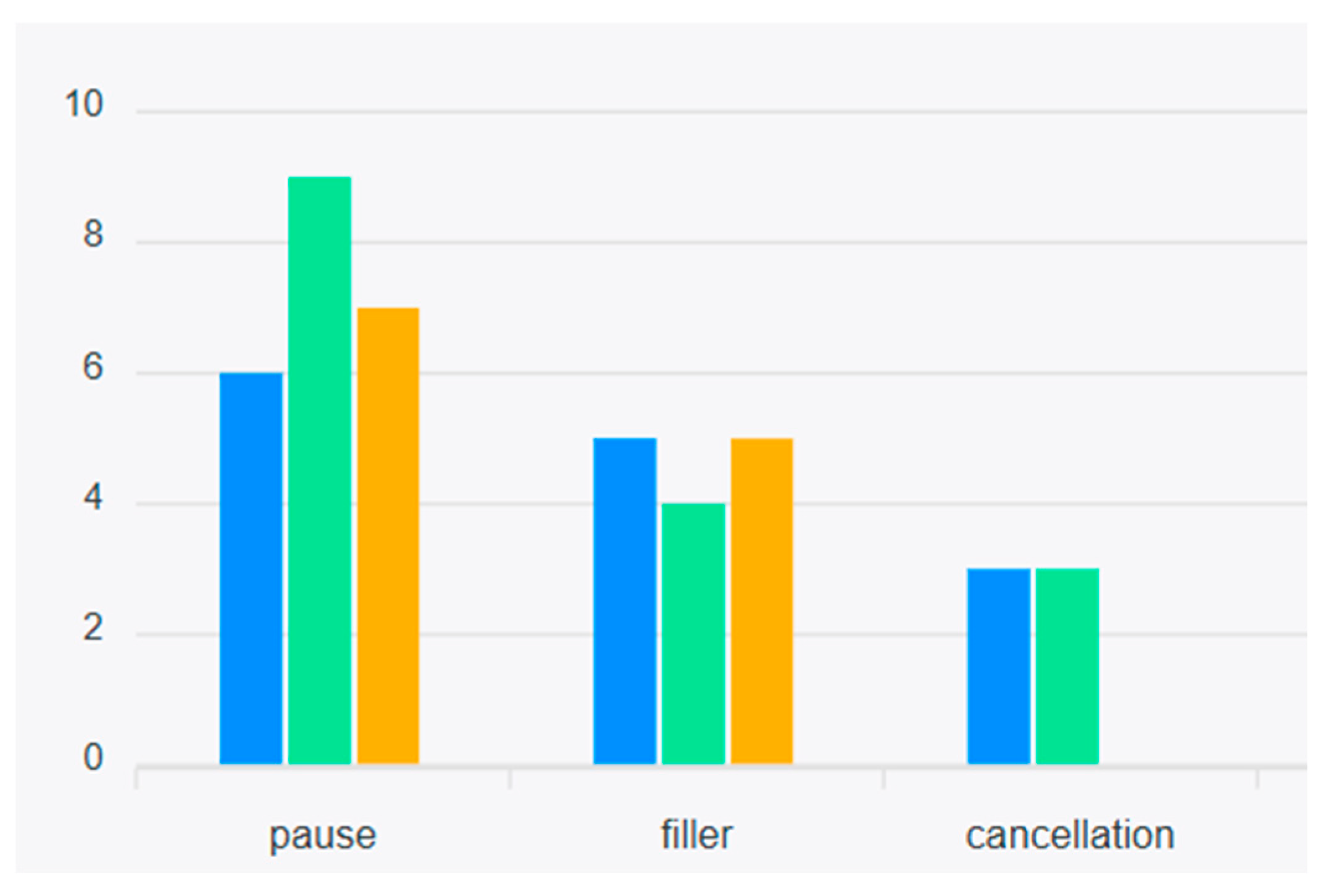

5.1. Comparative Analysis Across All Models

5.2. Per-source Fine-grained Performance Analysis

5.2.1. Fixed-threshold Approach (Baseline)

5.2.2. Adaptive Threshold Based on Statistical Distribution

5.2.3. Isolation Forest

5.2.4. Isolation Forest with Sliding Window

6. Conclusions

7. Future Works

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A. Glossary

References

- Da, L. Establishing interpreting fluency evaluation criteria based on correlational analysis of speech rate, articulation rate, and mean pause length. Adv. Soc. Behav. Res. 2025, 16, 162–166.
- Dayter, D. Variation in non-fluencies in a corpus of simultaneous interpreting vs. non-interpreted English. Perspectives 2021, 29, 489–506.
- Han, C.; Chen, S.; Fu, R.; Fan, Q. Modeling the relationship between utterance fluency and raters’ perceived fluency of consecutive interpreting. Interpreting 2020, 22, 211–237.
- Han, C.; Yang, L. Relating utterance fluency to perceived fluency of interpreting: A partial replication and a mini meta-analysis. Transl. Interpret. Stud. 2023, 18, 421–447.
- Gósy, M. Occurrences and durations of filled pauses in relation to words and silent pauses in spontaneous speech. Languages 2023, 8, 79.
- Kajzer-Wietrzny, M.; Ivaska, I.; Ferraresi, A. Fluency in rendering numbers in simultaneous interpreting. Interpreting 2024, 26, 1–23.
- Han, C.; An, K. Using unfilled pauses to measure (dis)fluency in English-Chinese consecutive interpreting: In search of an optimal pause threshold(s). Perspectives 2021, 29, 917–933.
- Wang, B.; Li, T. An empirical study of pauses in Chinese-English simultaneous interpreting. Perspectives 2015, 23, 124–142.
- Han, C.; Lu, X. Interpreting quality assessment re-imagined: The synergy between human and machine scoring. Interpret. Soc. 2021, 1, 70–90.
- Wang, X.; Wang, B. Identifying fluency parameters for a machine-learning-based automated interpreting assessment system. Perspectives 2024, 32, 278–294.
- Lee, J.R.A.; Kim, J.D.; Park, H.S.; Park, H.K.; Son, J.B.; Oh, U.; Sang, W.Y.; Kim, S.; Lim, J.W.; Cho, H.S.; et al. Development of an LMS-based automatic annotation authoring tool for computer-assisted interpreter training (CAIT). Interpret. Transl. Stud. 2025, 29, 79–113.
- Kim, J.D.; Wang, Y.; Fujiwara, T.; Okuda, S.; Callahan, T.J.; Cohen, K.B. Open Agile text mining for bioinformatics: the PubAnnotation ecosystem. Bioinformatics 2019, 35, 4372–4380.
- Choi, M.S. A comparative analysis of disfluency in consecutive and simultaneous interpreting by trainee interpreters. Interpret. Transl. 2015, 17, 177–207.
- Lee, S. Analysis of Factors of disfluency in Japanese Simultaneous Interpretation: Focused on Pause and Filler. J. Transl. Stud. 2021, 22, 205–230.
- Fang, J.; Zhang, X. Pause in sight translation: A longitudinal study focusing on training effect. In Diverse Voices in Chinese Translation and Interpreting: Theory and Practice; Springer: Singapore, 2021; pp. 157–189.
- Park, S. Measuring Fluency: Temporal Variables and Pausing Patterns in L2 English Speech. Ph.D. Thesis, Purdue University, West Lafayette, IN, USA, 2016.
- Leveni, F. Structure-based Anomaly Detection and Clustering. arXiv 2025, arXiv:2505.12751.
- Rennert, S. The impact of fluency on the subjective assessment of interpreting quality. Interpret. Newsl. 2010, 15, 101–115.
- Zhang, Q.; Jing, Y. The impact of interpreting students’ gestures and speech content on speech fluency of consecutive interpreting. Front. Psychol. 2025, 16, 1568341.
- Cecot, M. Pauses in simultaneous interpretation: A corpus-based study of professional interpreters’ performance. Interpret. Newsl. 2001, 11, 63–85.
- Tissi, B. Silent pauses and disfluencies in simultaneous interpretation: A descriptive analysis. Interpret. Newsl. 2000, 10, 103–127.
- Ahrens, B. Pauses (and other prosodic features) in simultaneous interpreting. FORUM Rev. Int. d’interprétation et de traduction/Int. J. Interpret. Transl. 2007, 5, 1–18.
- Christodoulides, G. Prosodic features of simultaneous interpreting. In Proceedings of the Prosody-Discourse Interface Conference, Leuven, Belgium, 11–13 September 2013; pp. 33–37.
- Mead, P. Methodological issues in the study of interpreters’ fluency. Interpret. Newsl. 2005, 13, 39–63.
- Shreve, G.M.; Lacruz, I.; Angelone, E. Sight translation and speech disfluency. In Methods and Strategies of Process Research; John Benjamins: Amsterdam, The Netherlands, 2011; pp. 93–120.
- Toivola, M.; Lennes, M.; Aho, E. Speech rate and pauses in non-native Finnish. In Proceedings of the INTERSPEECH 2009, Brighton, UK, 6–10 September 2009; pp. 1707–1710.
- Duez, D. Perception of silent pauses in continuous speech. Lang. Speech 1985, 28, 377–389.
- Ruder, K.F.; Rupp, J.A. Pause Detection Thresholds in a Stop-Phoneme Environment. J. Acoust. Soc. Am. 1970, 48, 94–95.
- Christodoulides, G.; Lenglet, C. Prosodic correlates of perceived quality and fluency in simultaneous interpreting. In Proceedings of the Speech Prosody, Dublin, Ireland, 20–23 May 2014; pp. 1002–1006.
- Lee, M. Kiwi: Developing a Korean Morphological Analyzer Based on Statistical Language Models and Skip-Bigram. KJDH 2024, 1, 109–136.
- Liu, F.T.; Ting, K.M.; Zhou, Z.H. Isolation Forest. In Proceedings of the 2008 IEEE International Conference on Data Mining, Pisa, Italy, 15–19 December 2008; pp. 413–422.
- Xiang, H.; Zhang, X.; Dras, M.; Beheshti, A.; Dou, W.; Xu, X. Deep optimal isolation forest with genetic algorithm for anomaly detection. In Proceedings of the 2023 IEEE International Conference on Data Mining (ICDM), Shanghai, China, 1–4 December 2023; pp. 678–687.
- Guo, L.; Wei, Y. A Time Series Segment Finding Motifs Based On Sliding Window Algorithm. In Proceedings of the 2024 IEEE International Conference on Industrial Technology (ICIT), Bristol, UK, 25–27 March 2024; pp. 1–6.
- Norwawi, N.M. Sliding window time series forecasting with multilayer perceptron and multiregression of COVID-19 outbreak in Malaysia. In Data Science for COVID-19; Elsevier: Amsterdam, The Netherlands, 2021; pp. 547–564.
- Haist, F.; Shimamura, A.P.; Squire, L.R. On the relationship between recall and recognition memory. J. Exp. Psychol. Learn. Mem. Cogn. 1992, 18, 691–702.
- Card, S.K.; Moran, T.P.; Newell, A. The Psychology of Human-Computer Interaction; Lawrence Erlbaum Associates: Hillsdale, NJ, USA, 1983.
- Krings, H.P. Repairing Texts: Empirical Investigations of Machine Translation Post-Editing Processes; Kent State University Press: Kent, OH, USA, 2001.
- Koponen, M. Comparing human perceptions of post-editing effort with machine translation quality metrics. In Proceedings of the Seventh Workshop on Statistical Machine Translation, Montreal, QC, Canada, 7–8 June 2012; pp. 134–143.

| Type of Data | Source | Number of Silences | Number of Pauses |
|---|---|---|---|
| Tuning Data | 8-11 | 1449 | 51 |
| Test Data | 12 | 448 | 8 |
| | 13 | 336 | 21 |
| | 14 | 337 | 11 |
| | Total (12-14) | 1133 | 40 |

| | Simultaneous Interpretation | Consecutive Interpretation |
|---|---|---|
| Contamination | 0.035 | 0.05 |
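
The contamination values above read most naturally as the expected share of silences that the Isolation Forest should treat as anomalous, that is, as candidate pauses. As a rough illustration of how such a parameter turns anomaly scores into labels, the sketch below builds a small one-dimensional isolation forest with the Python standard library only. It is not the authors' implementation: the function names, the tree count, the subsample size, the top-fraction cutoff rule, and the synthetic durations (assumed to be in milliseconds) are all assumptions made for this example.

```python
import math
import random

EULER_GAMMA = 0.5772156649


def c_factor(n):
    """Average path length of an unsuccessful binary-search-tree lookup over n points."""
    if n <= 1:
        return 0.0
    if n == 2:
        return 1.0
    return 2.0 * (math.log(n - 1) + EULER_GAMMA) - 2.0 * (n - 1) / n


def build_tree(sample, depth, max_depth, rng):
    """Split a 1-D sample at random cut points until points are isolated or depth runs out."""
    if depth >= max_depth or len(sample) <= 1:
        return {"size": len(sample)}
    lo, hi = min(sample), max(sample)
    if lo == hi:
        return {"size": len(sample)}
    split = rng.uniform(lo, hi)
    return {
        "split": split,
        "left": build_tree([v for v in sample if v < split], depth + 1, max_depth, rng),
        "right": build_tree([v for v in sample if v >= split], depth + 1, max_depth, rng),
    }


def path_length(x, node, depth=0):
    """Number of splits needed to reach x's leaf, plus a correction for unsplit leaves."""
    if "split" not in node:
        return depth + c_factor(node["size"])
    child = node["left"] if x < node["split"] else node["right"]
    return path_length(x, child, depth + 1)


def anomaly_scores(values, n_trees=100, sample_size=256, seed=0):
    """Score each value in (0, 1); short average paths give scores closer to 1."""
    rng = random.Random(seed)
    psi = min(sample_size, len(values))
    max_depth = math.ceil(math.log2(psi))
    trees = [build_tree(rng.sample(values, psi), 0, max_depth, rng) for _ in range(n_trees)]
    norm = c_factor(psi)
    return [2.0 ** (-sum(path_length(x, t) for t in trees) / (n_trees * norm)) for x in values]


def flag_pauses(values, contamination, **kwargs):
    """Flag roughly the top `contamination` fraction of scores (assumed cutoff rule)."""
    scores = anomaly_scores(values, **kwargs)
    k = max(1, round(contamination * len(values)))
    cutoff = sorted(scores, reverse=True)[k - 1]
    return [s >= cutoff for s in scores]


# Synthetic silences: 200 short ones plus three long ones at the end (hypothetical data).
durations_ms = [150 + (i * 37) % 300 for i in range(200)] + [2500, 3200, 4100]
flags = flag_pauses(durations_ms, contamination=0.035)
print(sum(flags), flags[-3:])
```

Because the cutoff is a fixed fraction of the data, a higher contamination value flags more silences regardless of their absolute duration, which would trade precision for recall.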

| Source | Precision | Recall | F1 Score | Kappa |
|---|---|---|---|---|
| 12 | 0.1000 | 1 | 0.1818 | 0.1536 |
| 13 | 0.3333 | 1 | 0.5000 | 0.4484 |
| 14 | 0.1209 | 1 | 0.2157 | 0.1688 |
| Total | 0.1709 | 1 | 0.2920 | 0.2465 |
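
Assuming the Precision, Recall, F1 Score, and Kappa columns follow the standard binary-classification definitions, with Kappa read as Cohen's kappa between predicted and annotated pause labels, the four metrics can be computed from a confusion matrix as sketched below. The function names and the confusion counts are illustrative and are not taken from the source.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def evaluate(tp, fp, fn, tn):
    """Precision, recall, F1, and Cohen's kappa for a binary pause/no-pause labelling."""
    n = tp + fp + fn + tn
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    observed = (tp + tn) / n
    # Chance agreement: both say "pause" plus both say "no pause".
    expected = ((tp + fp) * (tp + fn) + (fn + tn) * (fp + tn)) / (n * n)
    kappa = (observed - expected) / (1 - expected) if expected != 1 else 0.0
    return {
        "precision": precision,
        "recall": recall,
        "f1": f1_score(precision, recall),
        "kappa": kappa,
    }


# Illustrative counts: every true pause is found, but two in three detections are false alarms.
result = evaluate(tp=21, fp=42, fn=0, tn=273)
print({name: round(value, 4) for name, value in result.items()})

# F1 recomputed from the precision and recall of the first row in the table above.
print(round(f1_score(0.1000, 1.0), 4))
```

With perfect recall and low precision, F1 and kappa both stay low, which is the pattern visible in the rows above.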

| Source | Precision | Recall | F1 Score | Kappa |
|---|---|---|---|---|
| 12 | 0.3684 | 0.8750 | 0.5185 | 0.5061 |
| 13 | 0.5714 | 0.3810 | 0.4571 | 0.4287 |
| 14 | 0.4286 | 0.5455 | 0.4800 | 0.4609 |
| Total | 0.4468 | 0.5250 | 0.4828 | 0.4622 |

| Source | Precision | Recall | F1 Score | Kappa |
|---|---|---|---|---|
| 12 | 0.5000 | 0.8750 | 0.6364 | 0.6279 |
| 13 | 0.6667 | 0.3810 | 0.4848 | 0.4604 |
| 14 | 0.3333 | 0.3636 | 0.3478 | 0.3256 |
| Total | 0.5000 | 0.4750 | 0.4872 | 0.4689 |

| Method | Precision | Recall | F1 Score | Kappa | Remark |
|---|---|---|---|---|---|
| Baseline | 0.8750 | 0.3684 | 0.5185 | 0.3910 | 1 sec |
| Adaptive Threshold Based on Statistical Distribution | 0.8000 | 0.3516 | 0.4885 | 0.3725 | i = 1 |
| Basic Isolation Forest | 0.5000 | 0.4750 | 0.4872 | 0.4774 | c = 0.035 |
| Isolation Forest with sliding window | 0.5366 | 0.5500 | 0.5432 | 0.5486 | c = 0.035 |

| Source | Precision | Recall | F1 Score | Kappa |
|---|---|---|---|---|
| 12 | 1 | 0.381 | 0.5517 | 0.5398 |
| 13 | 0.8571 | 0.5143 | 0.6429 | 0.6127 |
| 14 | 0.8182 | 0.2308 | 0.36 | 0.3268 |
| Total | 0.875 | 0.3684 | 0.5185 | 0.4933 |

| Source | mean | SD* | i | Threshold | Precision | Recall | F1 Score | Kappa |
|---|---|---|---|---|---|---|---|---|
| 12 | 162.5 | 431.2 | 0 | 162.5 | 1 | 0.1404 | 0.2462 | 0.2218 |
| | | | 1 | 593.7 | 1 | 0.3636 | 0.5333 | 0.5208 |
| | | | 2 | 1024.9 | 1 | 0.381 | 0.5517 | 0.5398 |
| | | | 3 | 1456.1 | 0.875 | 0.7 | 0.7778 | 0.7733 |
| | | | 4 | 1887.3 | 0.375 | 0.75 | 0.5 | 0.494 |
| 13 | 347.1 | 1037.7 | 0 | 347.1 | 1 | 0.4565 | 0.6269 | 0.592 |
| | | | 1 | 1384.8 | 0.7143 | 0.5 | 0.5882 | 0.5557 |
| | | | 2 | 2422.5 | 0.1429 | 0.5 | 0.2222 | 0.2001 |
| | | | 3 | 3460.2 | 0.1429 | 0.75 | 0.24 | 0.2245 |
| | | | 4 | 4497.9 | 0.0476 | 0.5 | 0.087 | 0.077 |
| 14 | 338.7 | 709.4 | 0 | 338.7 | 1 | 0.1897 | 0.3188 | 0.2806 |
| | | | 1 | 1048.1 | 0.8182 | 0.2308 | 0.36 | 0.3268 |
| | | | 2 | 1757.5 | 0.5455 | 0.4286 | 0.48 | 0.4609 |
| | | | 3 | 2466.9 | 0.3636 | 0.4 | 0.381 | 0.3617 |
| | | | 4 | 3176.3 | 0.1818 | 0.6667 | 0.2857 | 0.2759 |
| Total | - | - | 0 | - | 1 | 0.2484 | 0.398 | 0.3619 |
| | | | 1 | - | 0.8 | 0.3516 | 0.4885 | 0.4622 |
| | | | 2 | - | 0.425 | 0.4146 | 0.4198 | 0.3982 |
| | | | 3 | - | 0.35 | 0.5833 | 0.4375 | 0.4222 |
| | | | 4 | - | 0.15 | 0.6667 | 0.2449 | 0.235 |
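
The Threshold column above is consistent with threshold = mean + i × SD computed per source. A minimal sketch of that rule follows, next to a fixed 1-second baseline. It assumes that durations are in milliseconds and that a silence at or above the threshold counts as a pause; the function names and the sample durations are hypothetical.

```python
FIXED_THRESHOLD_MS = 1000.0  # the 1 sec baseline, assuming millisecond units


def adaptive_threshold(mean_ms, sd_ms, i):
    """Per-source threshold: the mean silence duration plus i standard deviations."""
    return mean_ms + i * sd_ms


def detect_pauses(durations_ms, threshold_ms):
    """Label each silence as a pause when it reaches the threshold (assumed rule)."""
    return [d >= threshold_ms for d in durations_ms]


# Mean and SD per source, copied from the table above.
source_stats = {12: (162.5, 431.2), 13: (347.1, 1037.7), 14: (338.7, 709.4)}

thresholds = {
    source: [round(adaptive_threshold(mean_ms, sd_ms, i), 1) for i in range(5)]
    for source, (mean_ms, sd_ms) in source_stats.items()
}
for source, values in thresholds.items():
    print(source, values)

# Hypothetical silences from one recording, labelled with both rules.
sample_ms = [120.0, 480.0, 650.0, 1100.0, 2300.0]
print(detect_pauses(sample_ms, FIXED_THRESHOLD_MS))
print(detect_pauses(sample_ms, adaptive_threshold(*source_stats[12], i=1)))
```

For source 12 at i = 1 the adaptive threshold (593.7) sits well below the fixed baseline, so the 650 ms silence is labelled a pause by the adaptive rule but not by the fixed one.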

| Source | Precision | Recall | F1 Score | Kappa |
|---|---|---|---|---|
| 12 | 0.5 | 0.875 | 0.6364 | 0.6279 |
| 13 | 0.6667 | 0.381 | 0.4848 | 0.4604 |
| 14 | 0.3333 | 0.3636 | 0.3478 | 0.3256 |
| Total | 0.5 | 0.475 | 0.4872 | 0.4689 |

| Source | Precision | Recall | F1 Score | Kappa |
|---|---|---|---|---|
| 12 | 0.5000 | 1 | 0.6667 | 0.6585 |
| 13 | 0.6667 | 0.3810 | 0.4848 | 0.4604 |
| 14 | 0.4615 | 0.5455 | 0.5000 | 0.4823 |
| Total | 0.5366 | 0.5500 | 0.5432 | 0.5263 |
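
The general idea behind a sliding-window variant is to judge each silence against its neighbours rather than against the whole recording, so that a slow passage does not hide or inflate pauses elsewhere. The sketch below illustrates only that idea and makes no claim about the authors' configuration: the window length, the stride, the cutoff `k`, and the sample durations are hypothetical, and a median/MAD outlier rule stands in for the per-window Isolation Forest to keep the example short.

```python
from statistics import median


def sliding_window_flags(durations_ms, window=20, step=10, k=5.0):
    """Flag silences that are unusually long relative to their local window."""
    flags = [False] * len(durations_ms)
    last_start = max(len(durations_ms) - window, 0)
    starts = list(range(0, last_start + 1, step))
    if starts[-1] != last_start:
        starts.append(last_start)  # make sure the tail of the sequence is covered
    for start in starts:
        chunk = durations_ms[start:start + window]
        centre = median(chunk)
        # Median absolute deviation; fall back to 1.0 when the window has no spread.
        spread = median(abs(d - centre) for d in chunk) or 1.0
        for offset, d in enumerate(chunk):
            if (d - centre) / spread > k:
                flags[start + offset] = True  # flagged in at least one window
    return flags


# A fast passage and a slow passage, each containing one 1200 ms silence (hypothetical data).
fast = [300, 310, 290, 305, 295] * 4
slow = [900, 1000, 1100, 950, 1050] * 4
fast[7] = 1200
slow[12] = 1200
durations_ms = fast + slow

flags = sliding_window_flags(durations_ms)
print([index for index, flagged in enumerate(flags) if flagged])
```

In this example the same 1200 ms silence is flagged inside the fast passage but not inside the slow one, which is the local behaviour a global threshold cannot reproduce.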

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).