Submitted: 15 March 2026

Posted: 17 March 2026

Abstract

Keywords:

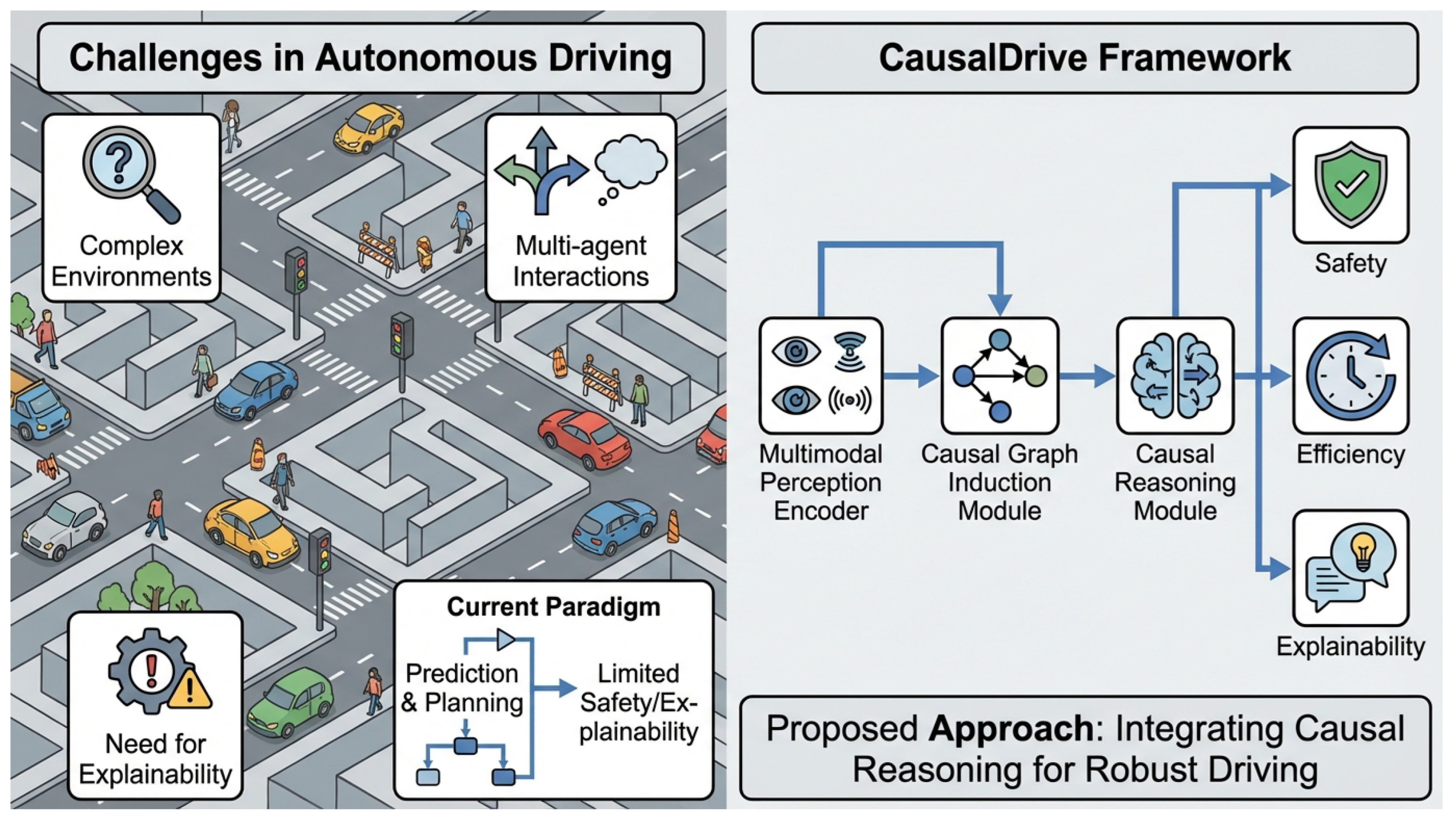

1. Introduction

- Robustly generalize to novel or unseen scenarios: Without understanding underlying causal mechanisms, models may make brittle predictions when faced with variations not present in training data.

- Provide explainable and trustworthy decisions: Autonomous vehicles need to communicate their rationale to human occupants, regulatory bodies, and other road users for trust and accountability [3].

- Effectively handle complex multi-agent interactions: Inferring the intentions and potential reactions of other road users requires more than just trajectory prediction; it demands an understanding of their causal influence on each other.

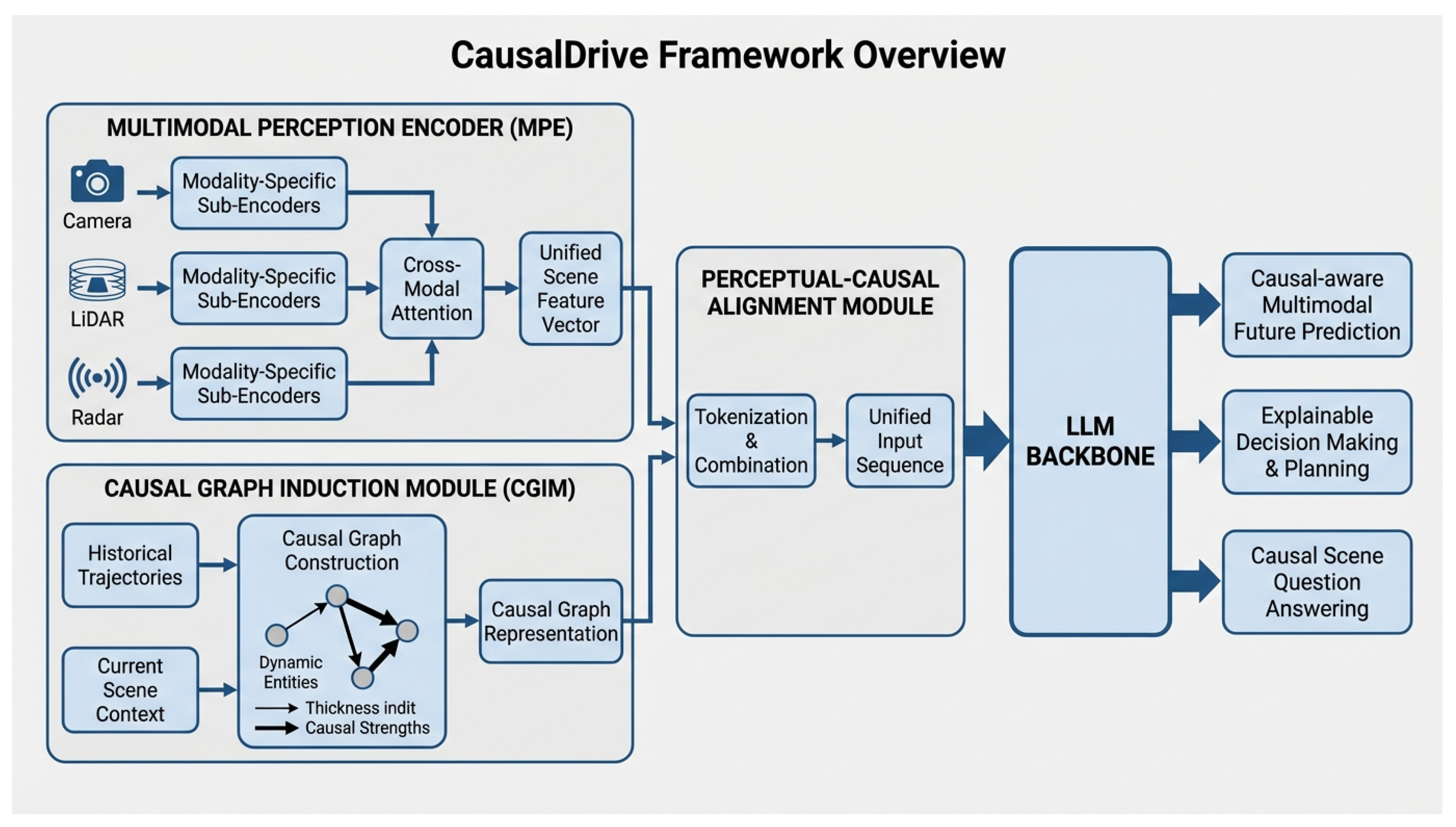

- Multimodal Perception Encoder (MPE): This module processes diverse sensor inputs (camera images, LiDAR point clouds, radar features) to generate comprehensive and compact scene feature vectors using a Transformer-based architecture with cross-modal attention.

- Causal Graph Induction Module (CGIM): A core innovation, the CGIM dynamically infers causal relationships between entities (e.g., other vehicles, pedestrians, and the ego-vehicle) from historical trajectories and scene context. The resulting dynamic causal graph supplies structured causal knowledge to the LLM.

- Perceptual-Causal Alignment Module: This module aligns the MPE-encoded scene features with the CGIM-derived causal graph, transforming them into LLM-friendly token sequences, enriched with timestamps and entity IDs to ensure the LLM understands temporal and individual context.

- Causal-aware Multimodal Future Prediction: This task fuses multi-source heterogeneous data into a comprehensive scene representation and predicts the motion trajectories and states of all key dynamic entities (vehicles, pedestrians, etc.) over the next 3 to 5 seconds. It also provides candidate causal explanations (e.g., “Vehicle A decelerates because Pedestrian B is crossing the road”).

- Explainable Decision Making & Planning: Based on the causal-aware scene prediction results, CausalDrive generates safe, efficient, and traffic-rule-compliant future trajectory plans for the ego-vehicle. It also provides natural language explanations for the decision basis (e.g., “To avoid the truck about to change lanes, we decided to maintain the current lane and slightly reduce speed”).

- Causal Scene Question Answering (C-QA): This task focuses on multimodal question answering about autonomous driving scenarios, with a particular emphasis on understanding causal relationships and complex interactions (e.g., “Why did Vehicle C suddenly accelerate?” or “If the ego-vehicle turns left now, what impact would it have on Pedestrian D?”).

- We propose CausalDrive, a unified framework that integrates multimodal perception, explicit causal reasoning, and large language models for scene understanding, prediction, and explainable planning in autonomous driving.

- We introduce a Causal Graph Induction Module (CGIM) that dynamically learns and infers causal relationships between road agents from historical context, providing crucial structured causal knowledge to the LLM backbone.

- We demonstrate that incorporating explicit causal reasoning significantly enhances prediction accuracy, improves the safety and explainability of planning decisions, and enables sophisticated causal scene question answering, achieving state-of-the-art performance on challenging autonomous driving benchmarks.

2. Related Work

2.1. Large Models and Multimodal Perception for Autonomous Driving

2.2. Causal Reasoning and Explainable AI in Autonomous Driving

2.2.1. Causal Reasoning for Understanding Dynamic Environments

2.2.2. Explainable AI for Transparency and Trust

2.2.3. Addressing Complexities in Autonomous Driving

3. Method

3.1. Overall Architecture

3.2. Multimodal Perception Encoder (MPE)

3.3. Causal Graph Induction Module (CGIM)
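
To make the idea of inducing a directed graph from historical trajectories concrete, the following is a minimal sketch that uses a time-lagged correlation heuristic on per-agent speed histories. It is a stand-in for illustration only, not the learned CGIM: the function names, the `lag` and `threshold` parameters, and the use of speed series are all assumptions, and lagged correlation by itself does not establish causation.

```python
from statistics import mean
from typing import Dict, List, Tuple


def lagged_corr(x: List[float], y: List[float], lag: int) -> float:
    """Pearson correlation between x[t] and y[t + lag]."""
    if len(x) != len(y) or not 0 <= lag < len(x) - 1:
        raise ValueError("series must have equal length and lag must be small")
    a, b = x[: len(x) - lag], y[lag:]
    ma, mb = mean(a), mean(b)
    cov = sum((u - ma) * (v - mb) for u, v in zip(a, b))
    var_a = sum((u - ma) ** 2 for u in a)
    var_b = sum((v - mb) ** 2 for v in b)
    if var_a == 0 or var_b == 0:
        return 0.0  # a constant series carries no usable signal
    return cov / (var_a * var_b) ** 0.5


def induce_graph(
    speeds: Dict[str, List[float]], lag: int = 1, threshold: float = 0.8
) -> List[Tuple[str, str, float]]:
    """Return (source, target, score) edges (hypothetical heuristic).

    An edge source -> target is kept when the source's past speed
    predicts the target's later speed better than the reverse.
    """
    edges = []
    for src in speeds:
        for dst in speeds:
            if src == dst:
                continue
            forward = lagged_corr(speeds[src], speeds[dst], lag)
            backward = lagged_corr(speeds[dst], speeds[src], lag)
            if forward >= threshold and forward > backward:
                edges.append((src, dst, round(forward, 3)))
    return edges


# Toy history: the follower copies the lead vehicle's braking one step later.
history = {
    "lead": [10.0, 10.0, 8.0, 6.0, 6.0, 6.0],
    "follower": [10.0, 10.0, 10.0, 8.0, 6.0, 6.0],
}
graph = induce_graph(history)
print(graph)
```

On this toy input the only edge retained points from `lead` to `follower`, which matches the intuition that the follower reacts to the lead vehicle's braking.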

3.4. Perceptual-Causal Alignment Module
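
As an illustration of how causal-graph edges could be turned into LLM-friendly tokens that carry timestamps and entity IDs, here is a minimal sketch. The `CausalEdge` fields and the `<t=...>`, `<ent:...>`, and `<rel:...>` token formats are hypothetical choices made for this example; the sketch covers only the graph side of the alignment and omits the fusion with MPE scene features.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class CausalEdge:
    """One directed edge of a causal graph (hypothetical schema)."""
    cause_id: str
    effect_id: str
    timestamp_s: float
    relation: str


def edges_to_tokens(edges: List[CausalEdge]) -> List[str]:
    """Serialize edges in time order as timestamp, cause, relation, effect."""
    tokens: List[str] = []
    for edge in sorted(edges, key=lambda e: e.timestamp_s):
        tokens.extend(
            [
                f"<t={edge.timestamp_s:.1f}>",
                f"<ent:{edge.cause_id}>",
                f"<rel:{edge.relation}>",
                f"<ent:{edge.effect_id}>",
            ]
        )
    return tokens


edges = [
    CausalEdge("veh_A", "ego", 2.0, "causes_yield"),
    CausalEdge("ped_B", "veh_A", 1.5, "causes_decel"),
]
tokens = edges_to_tokens(edges)
print(" ".join(tokens))
```

Sorting by timestamp keeps the serialized sequence in temporal order, so the earlier pedestrian-to-vehicle edge precedes the later vehicle-to-ego edge regardless of input order.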

3.5. CausalDrive LLM Backbone and Task Adaptation

3.5.1. Causal-Aware Multimodal Future Prediction

3.5.2. Explainable Decision Making & Planning

3.5.3. Causal Scene Question Answering (C-QA)

4. Experiments

4.1. Experimental Setup

4.1.1. Datasets

4.1.2. Evaluation Metrics
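
For readers unfamiliar with the trajectory metrics reported in the results tables, the sketch below computes ADE and FDE for a single agent, assuming the standard definitions (mean and final-step Euclidean distance between predicted and ground-truth positions). The function names and the 2D point representation are assumptions of this example; the paper's exact evaluation protocol, including C-ADE and JMT, is not reproduced here.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def displacement_errors(pred: Sequence[Point], truth: Sequence[Point]) -> List[float]:
    """Per-timestep Euclidean distance between prediction and ground truth."""
    if len(pred) != len(truth) or not pred:
        raise ValueError("trajectories must be non-empty and of equal length")
    return [math.dist(p, g) for p, g in zip(pred, truth)]


def ade(pred: Sequence[Point], truth: Sequence[Point]) -> float:
    """Average Displacement Error: mean distance over all timesteps."""
    errors = displacement_errors(pred, truth)
    return sum(errors) / len(errors)


def fde(pred: Sequence[Point], truth: Sequence[Point]) -> float:
    """Final Displacement Error: distance at the last timestep."""
    return displacement_errors(pred, truth)[-1]


# Toy trajectory: the prediction drifts sideways by 0.3 m per step.
truth = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
pred = [(0.0, 0.0), (1.0, 0.3), (2.0, 0.6)]
print(ade(pred, truth), fde(pred, truth))
```

For this toy trajectory the per-step errors are 0, 0.3, and 0.6 m, so ADE is about 0.3 m and FDE is about 0.6 m. Lower is better for both.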

4.1.3. Baselines

4.1.4. Implementation Details

4.2. Main Results: Causal-aware Future Prediction and Explainable Planning

4.3. Ablation Study on Causal Graph Induction Module (CGIM)

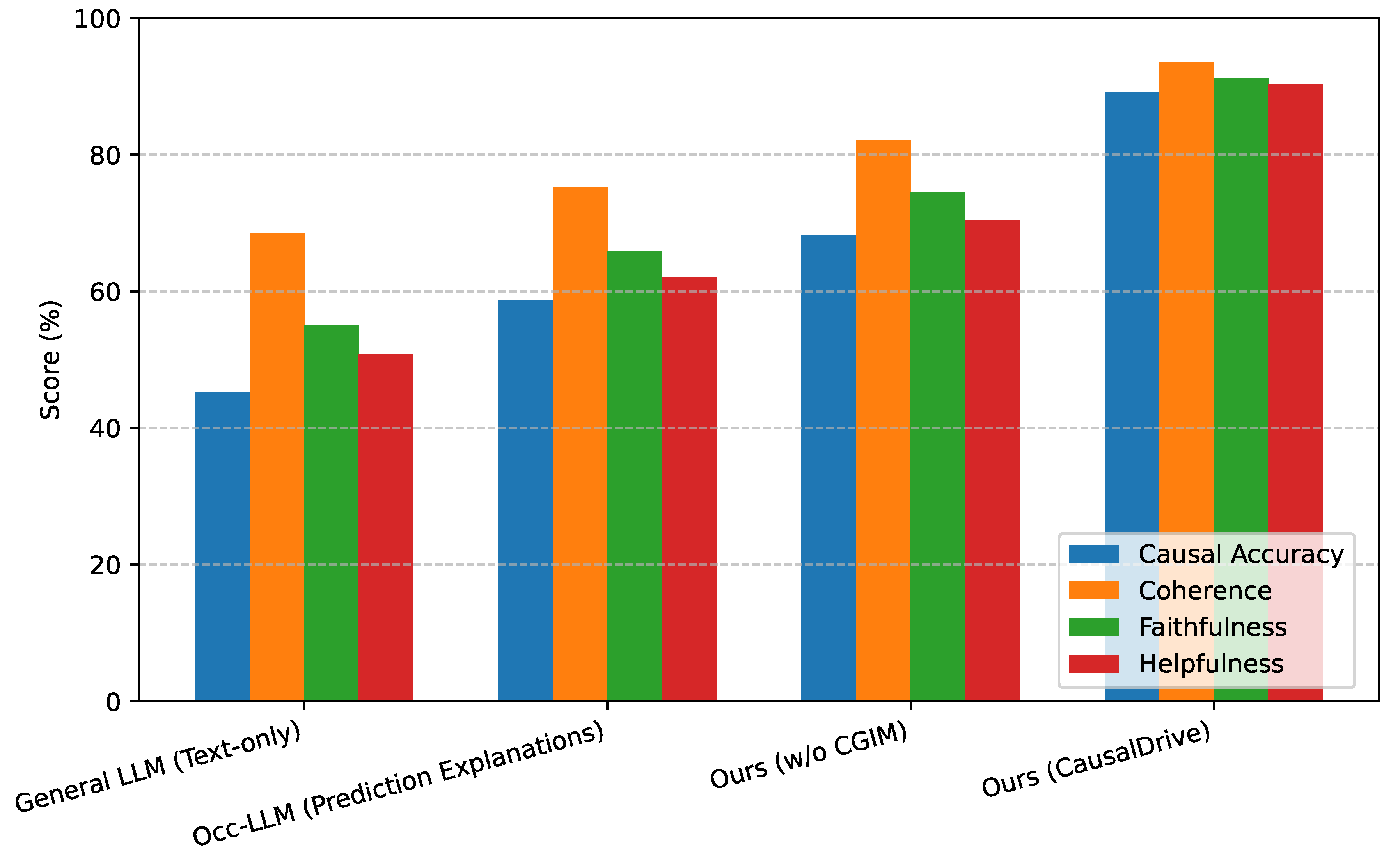

4.4. Human Evaluation for Explainability and Causal Scene Question Answering

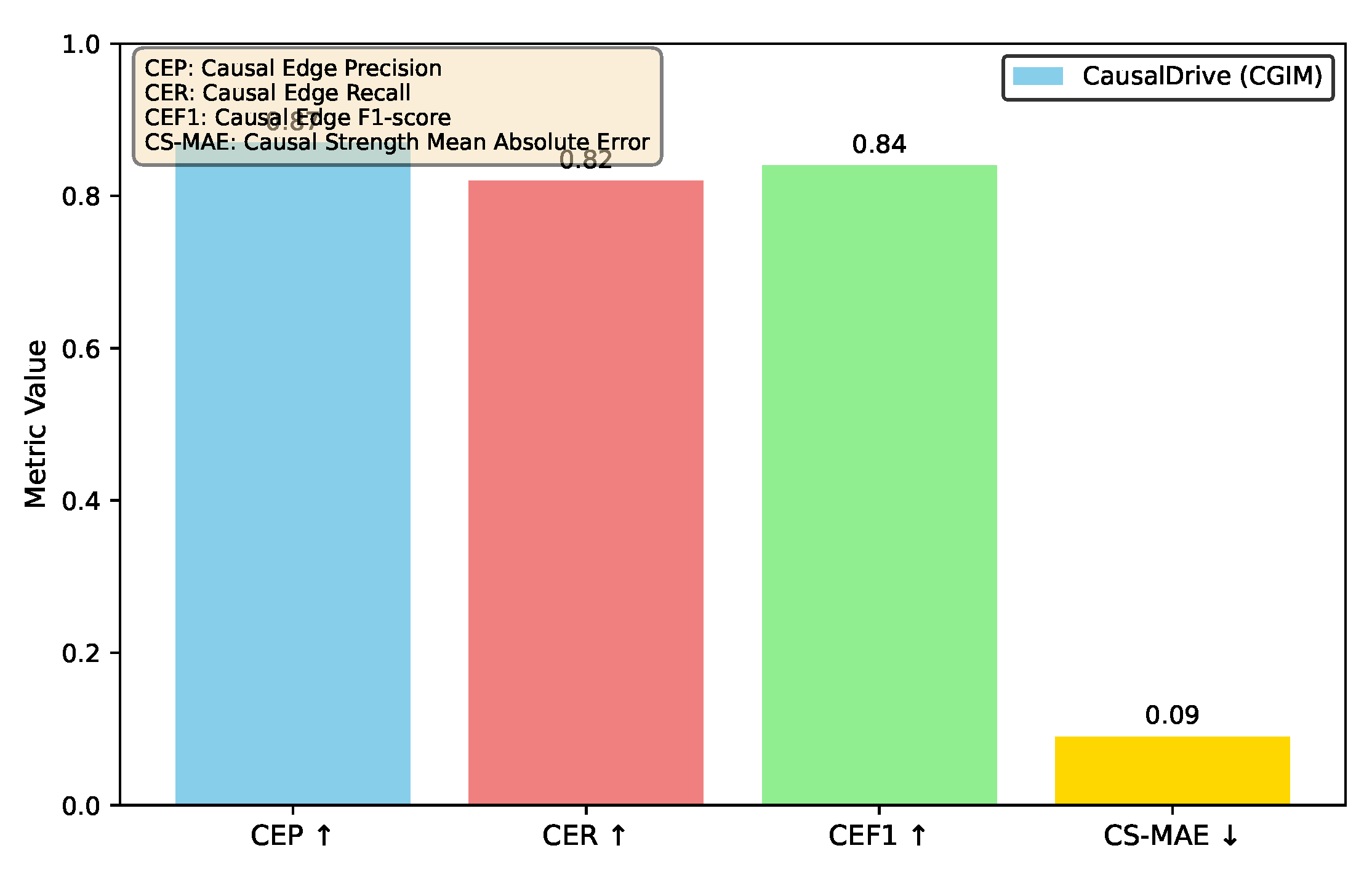

4.5. Detailed Causal Graph Quality Analysis

4.6. Robustness to Sensor Noise and Occlusions

4.7. Inference Latency and Computational Footprint

5. Conclusion

References

- Sheng, Q.; Cao, J.; Zhang, X.; Li, R.; Wang, D.; Zhu, Y. Zoom Out and Observe: News Environment Perception for Fake News Detection. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers). Association for Computational Linguistics, 2022, pp. 4543–4556. [CrossRef]

- Sun, H.; Xu, G.; Deng, J.; Cheng, J.; Zheng, C.; Zhou, H.; Peng, N.; Zhu, X.; Huang, M. On the Safety of Conversational Models: Taxonomy, Dataset, and Benchmark. In Proceedings of the Findings of the Association for Computational Linguistics: ACL 2022. Association for Computational Linguistics, 2022, pp. 3906–3923. [CrossRef]

- Li, L.; Zhang, Y.; Chen, L. Personalized Transformer for Explainable Recommendation. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 1: Long Papers). Association for Computational Linguistics, 2021, pp. 4947–4957. [CrossRef]

- Fong, W.K.; Liong, V.E.; Tan, K.S.; Caesar, H. nuScenes Revisited: Progress and Challenges in Autonomous Driving. CoRR 2025. [CrossRef]

- Lv, Q.; Kong, W.; Li, H.; Zeng, J.; Qiu, Z.; Qu, D.; Song, H.; Chen, Q.; Deng, X.; Pang, J. F1: A vision-language-action model bridging understanding and generation to actions. arXiv preprint arXiv:2509.06951 2025.

- Ding, N.; Xu, G.; Chen, Y.; Wang, X.; Han, X.; Xie, P.; Zheng, H.; Liu, Z. Few-NERD: A Few-shot Named Entity Recognition Dataset. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 1: Long Papers). Association for Computational Linguistics, 2021, pp. 3198–3213. [CrossRef]

- Ye, J.; Gao, J.; Li, Q.; Xu, H.; Feng, J.; Wu, Z.; Yu, T.; Kong, L. ZeroGen: Efficient Zero-shot Learning via Dataset Generation. In Proceedings of the 2022 Conference on Empirical Methods in Natural Language Processing. Association for Computational Linguistics, 2022, pp. 11653–11669. [CrossRef]

- Wu, C.; Wu, F.; Huang, Y. DA-Transformer: Distance-aware Transformer. In Proceedings of the 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies. Association for Computational Linguistics, 2021, pp. 2059–2068. [CrossRef]

- Liu, W.; Wang, W.; Qiao, Y.; Guo, Q.; Zhu, J.; Li, P.; Chen, Z.; Yang, H.; Li, Z.; Wang, L.; et al. MMTL-UniAD: A Unified Framework for Multimodal and Multi-Task Learning in Assistive Driving Perception. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, CVPR 2025, Nashville, TN, USA, June 11-15, 2025. Computer Vision Foundation / IEEE, 2025, pp. 6864–6874. [CrossRef]

- Tan, Z.; Li, D.; Wang, S.; Beigi, A.; Jiang, B.; Bhattacharjee, A.; Karami, M.; Li, J.; Cheng, L.; Liu, H. Large Language Models for Data Annotation and Synthesis: A Survey. In Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing. Association for Computational Linguistics, 2024, pp. 930–957. [CrossRef]

- Ling, Y.; Yu, J.; Xia, R. Vision-Language Pre-Training for Multimodal Aspect-Based Sentiment Analysis. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers). Association for Computational Linguistics, 2022, pp. 2149–2159. [CrossRef]

- Wu, Z.; Kong, L.; Bi, W.; Li, X.; Kao, B. Good for Misconceived Reasons: An Empirical Revisiting on the Need for Visual Context in Multimodal Machine Translation. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 1: Long Papers). Association for Computational Linguistics, 2021, pp. 6153–6166. [CrossRef]

- Yang, X.; Feng, S.; Zhang, Y.; Wang, D. Multimodal Sentiment Detection Based on Multi-channel Graph Neural Networks. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 1: Long Papers). Association for Computational Linguistics, 2021, pp. 328–339. [CrossRef]

- Li, X.; Ma, Y.; Ye, K.; Cao, J.; Zhou, M.; Zhou, Y. Hy-facial: Hybrid feature extraction by dimensionality reduction methods for enhanced facial expression classification. arXiv preprint arXiv:2509.26614 2025.

- Liu, Y.; Liu, P. SimCLS: A Simple Framework for Contrastive Learning of Abstractive Summarization. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 2: Short Papers). Association for Computational Linguistics, 2021, pp. 1065–1072. [CrossRef]

- Pang, R.; Huang, J.; Li, Y.; Shan, Y. HEV-YOLO: An Improved YOLOv11-Based Detection Algorithm for Heavy Equipment Engineering Vehicles. In Proceedings of the 2025 5th International Conference on Electronic Information Engineering and Computer Technology (EIECT). IEEE, 2025, pp. 96–99.

- Hu, G.; Lin, T.E.; Zhao, Y.; Lu, G.; Wu, Y.; Li, Y. UniMSE: Towards Unified Multimodal Sentiment Analysis and Emotion Recognition. In Proceedings of the 2022 Conference on Empirical Methods in Natural Language Processing. Association for Computational Linguistics, 2022, pp. 7837–7851. [CrossRef]

- Hu, J.; Liu, Y.; Zhao, J.; Jin, Q. MMGCN: Multimodal Fusion via Deep Graph Convolution Network for Emotion Recognition in Conversation. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 1: Long Papers). Association for Computational Linguistics, 2021, pp. 5666–5675. [CrossRef]

- Dai, W.; Cahyawijaya, S.; Liu, Z.; Fung, P. Multimodal End-to-End Sparse Model for Emotion Recognition. In Proceedings of the 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies. Association for Computational Linguistics, 2021, pp. 5305–5316. [CrossRef]

- Liu, Z.; Chen, N. Controllable Neural Dialogue Summarization with Personal Named Entity Planning. In Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing. Association for Computational Linguistics, 2021, pp. 92–106. [CrossRef]

- Liu, W. Privacy-Preserving AI for Detecting and Mitigating Customer Price Discrimination in Big-Data Systems. Journal of Computer, Signal, and System Research 2026, 3, 37–46.

- Liu, W. KV Cache and Inference Scheduling: Energy Modeling for High-QPS Services. Journal of Industrial Engineering and Applied Science 2026, 4, 34–41.

- Liu, W. Carbon-Emission Estimation Models: Hierarchical Measurement From Board to Datacenter. Journal of Industrial Engineering and Applied Science 2026, 4, 42–48.

- Liu, Z.; Huang, J.; Wang, X.; Wu, Y.; Gorbatchev, N. Employee Performance Prediction: A System Based on LightGBM for Digital Intelligent HR Management. In Proceedings of the 2025 International Conference on Intelligent Computing and Next Generation Networks (ICNGN). IEEE, 2025, pp. 1–5.

- Huang, J.; Tian, Z.; Qiu, Y. Ai-enhanced dynamic power grid simulation for real-time decision-making. In Proceedings of the 2025 4th International Conference on Smart Grids and Energy Systems (SGES). IEEE, 2025, pp. 15–19.

- Wang, P.; Zhu, Z. Overview of Online Parameter Identification of Permanent Magnet Synchronous Machines under Sensorless Control. IEEE Access 2026.

- Wang, P.; Zhu, Z.; Freire, N.; Azar, Z.; Wu, X.; Liang, D. Online Simultaneous Identification of Multi-Parameters for Interior PMSMs Under Sensorless Control. CES Transactions on Electrical Machines and Systems 2025, 9, 422–433.

- Wang, P.; Zhu, Z.; Liang, D.; Freire, N.M.; Azar, Z. Dual signal injection-based online parameter estimation of surface-mounted PMSMs under sensorless control. IEEE Transactions on Industry Applications 2025.

- Kryscinski, W.; Rajani, N.; Agarwal, D.; Xiong, C.; Radev, D. BOOKSUM: A Collection of Datasets for Long-form Narrative Summarization. In Proceedings of the Findings of the Association for Computational Linguistics: EMNLP 2022. Association for Computational Linguistics, 2022, pp. 6536–6558. [CrossRef]

- Zuo, X.; Cao, P.; Chen, Y.; Liu, K.; Zhao, J.; Peng, W.; Chen, Y. Improving Event Causality Identification via Self-Supervised Representation Learning on External Causal Statement. In Proceedings of the Findings of the Association for Computational Linguistics: ACL-IJCNLP 2021. Association for Computational Linguistics, 2021, pp. 2162–2172. [CrossRef]

- Magister, L.C.; Mallinson, J.; Adamek, J.; Malmi, E.; Severyn, A. Teaching Small Language Models to Reason. In Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 2: Short Papers). Association for Computational Linguistics, 2023, pp. 1773–1781. [CrossRef]

- Lv, Q.; Deng, X.; Chen, G.; Wang, M.Y.; Nie, L. Decision mamba: A multi-grained state space model with self-evolution regularization for offline rl. Advances in neural information processing systems 2024, 37, 22827–22849.

- Jie, Z.; Li, J.; Lu, W. Learning to Reason Deductively: Math Word Problem Solving as Complex Relation Extraction. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers). Association for Computational Linguistics, 2022, pp. 5944–5955. [CrossRef]

- Mishra, S.; Finlayson, M.; Lu, P.; Tang, L.; Welleck, S.; Baral, C.; Rajpurohit, T.; Tafjord, O.; Sabharwal, A.; Clark, P.; et al. LILA: A Unified Benchmark for Mathematical Reasoning. In Proceedings of the 2022 Conference on Empirical Methods in Natural Language Processing. Association for Computational Linguistics, 2022, pp. 5807–5832. [CrossRef]

- Huang, J.; Chang, K.C.C. Towards Reasoning in Large Language Models: A Survey. In Proceedings of the Findings of the Association for Computational Linguistics: ACL 2023. Association for Computational Linguistics, 2023, pp. 1049–1065. [CrossRef]

- Lv, Q.; Li, H.; Deng, X.; Shao, R.; Li, Y.; Hao, J.; Gao, L.; Wang, M.Y.; Nie, L. Spatial-temporal graph diffusion policy with kinematic modeling for bimanual robotic manipulation. In Proceedings of the Computer Vision and Pattern Recognition Conference, 2025, pp. 17394–17404.

- Haviv, A.; Ram, O.; Press, O.; Izsak, P.; Levy, O. Transformer Language Models without Positional Encodings Still Learn Positional Information. In Proceedings of the Findings of the Association for Computational Linguistics: EMNLP 2022. Association for Computational Linguistics, 2022, pp. 1382–1390. [CrossRef]

| Metrics | Waymo Motion Trans. | SceneTransformer | UniAD | Occ-LLM | Ours |
| --- | --- | --- | --- | --- | --- |
| Input | Camera/LiDAR | Camera/LiDAR | Camera | Occ. | Multi-modal |
| ADE 3s | 0.45 | 0.42 | 0.39 | 0.37 | 0.34 |
| ADE 5s | 0.88 | 0.83 | 0.78 | 0.75 | 0.69 |
| ADE Avg | 0.67 | 0.63 | 0.59 | 0.56 | 0.51 |
| FDE 3s | 0.92 | 0.88 | 0.81 | 0.78 | 0.70 |
| FDE 5s | 1.85 | 1.75 | 1.62 | 1.55 | 1.40 |
| FDE Avg | 1.39 | 1.32 | 1.22 | 1.17 | 1.05 |
| C-ADE 3s | - | - | - | - | 0.35 |
| C-ADE 5s | - | - | - | - | 0.70 |
| C-ADE Avg | - | - | - | - | 0.53 |
| L2 Avg | 0.76 | 0.73 | 0.68 | 0.65 | 0.58 |
| JMT Avg | 0.78 | 0.75 | 0.72 | 0.68 | 0.62 |

| Methods | ADE-Avg ↓ | FDE-Avg ↓ | L2-Avg ↓ | JMT-Avg ↓ |
| --- | --- | --- | --- | --- |
| Waymo Motion Trans. | 0.78 | 1.65 | 0.89 | 0.90 |
| SceneTransformer | 0.75 | 1.58 | 0.85 | 0.86 |
| UniAD | 0.70 | 1.48 | 0.79 | 0.82 |
| Occ-LLM | 0.68 | 1.45 | 0.77 | 0.79 |
| Ours (w/o CGIM) | 0.64 | 1.38 | 0.73 | 0.76 |
| Ours (CausalDrive) | 0.58 | 1.25 | 0.67 | 0.70 |
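
The contribution of the CGIM can be read off the last two rows of this table. The short sketch below computes the relative error reduction from "Ours (w/o CGIM)" to "Ours (CausalDrive)" for each metric; the numbers are copied from the table, while the dictionary layout and function name are assumptions of this example.

```python
# (without CGIM, with CGIM) pairs copied from the table above.
ABLATION = {
    "ADE-Avg": (0.64, 0.58),
    "FDE-Avg": (1.38, 1.25),
    "L2-Avg": (0.73, 0.67),
    "JMT-Avg": (0.76, 0.70),
}


def relative_reduction(without: float, with_cgim: float) -> float:
    """Percentage by which the error drops when the CGIM is enabled."""
    return 100.0 * (without - with_cgim) / without


reductions = {
    metric: round(relative_reduction(*pair), 1) for metric, pair in ABLATION.items()
}
print(reductions)
# {'ADE-Avg': 9.4, 'FDE-Avg': 9.4, 'L2-Avg': 8.2, 'JMT-Avg': 7.9}
```

Under this reading, enabling the CGIM lowers each reported error by roughly 8 to 9 percent relative to the same model without it.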

| Methods | Inference Time (ms) ↓ | Parameters (M) ↓ | GPU Memory (GB) ↓ |
| --- | --- | --- | --- |
| Waymo Motion Trans. | 55 | 90 | 3.5 |
| SceneTransformer | 60 | 120 | 4.0 |
| UniAD | 70 | 180 | 5.0 |
| Occ-LLM | 180 | 750 | 15.0 |
| Ours (w/o CGIM) | 200 | 770 | 16.0 |
| Ours (CausalDrive) | 220 | 785 | 17.5 |
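
To put the footprint numbers in perspective, the sketch below derives the overhead attributable to the CGIM and the implied maximum update rate. The figures are copied from the table; the helper names are hypothetical, and the rate calculation assumes strictly sequential, single-sample inference with no batching or pipelining.

```python
from typing import Dict, Tuple

# (inference time in ms, parameters in millions, GPU memory in GB), from the table.
FOOTPRINT: Dict[str, Tuple[float, float, float]] = {
    "Ours (w/o CGIM)": (200, 770, 16.0),
    "Ours (CausalDrive)": (220, 785, 17.5),
}


def cgim_overhead() -> Tuple[float, float, float]:
    """Extra latency, parameters, and memory added by enabling the CGIM."""
    base = FOOTPRINT["Ours (w/o CGIM)"]
    full = FOOTPRINT["Ours (CausalDrive)"]
    return tuple(f - b for f, b in zip(full, base))


def max_rate_hz(latency_ms: float) -> float:
    """Upper bound on updates per second for sequential inference."""
    return 1000.0 / latency_ms


overhead = cgim_overhead()
rate_full = round(max_rate_hz(FOOTPRINT["Ours (CausalDrive)"][0]), 2)
print(overhead, rate_full)
# (20, 15, 1.5) 4.55
```

Read this way, the CGIM adds 20 ms, 15 M parameters, and 1.5 GB on top of the LLM-based variant without it, and the full model's 220 ms latency corresponds to at most about 4.5 updates per second under the stated assumption.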

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).