1. Introduction

The rapid integration of distributed energy resources (DERs), particularly rooftop photovoltaic (PV) systems, into electric distribution networks has fundamentally altered the voltage regulation landscape. Historically, distribution systems operated under unidirectional power flow conditions, where legacy devices such as on-load tap changers (OLTCs), capacitor banks, and step voltage regulators maintained bus voltages within the acceptable service band defined by ANSI C84.1 (Range A: ±5% of nominal voltage) [1]. However, the bidirectional power flows and intermittent generation profiles introduced by high DER penetration cause frequent voltage fluctuations, including both overvoltage and undervoltage conditions, that challenge conventional rule-based and optimization-driven control paradigms [2]. The revised IEEE Standard 1547-2018 now requires DER interconnections to provide active voltage regulation capability through reactive power support functions [3], further underscoring the urgency of developing intelligent, real-time voltage control strategies for modern active distribution networks.
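As a small illustration of the Range A criterion referenced above, the sketch below flags buses whose per-unit voltage magnitude lies outside a ±5% band around nominal (0.95 to 1.05 p.u.). It is a hypothetical helper and not part of the XRL-LLM code. The function name and the example voltages are illustrative assumptions.

```python
# Hypothetical helper: flag bus voltages (per unit) outside the ANSI C84.1
# Range A band of +/-5% around nominal, i.e. 0.95-1.05 p.u.
from typing import Dict, List

RANGE_A_MIN_PU = 0.95
RANGE_A_MAX_PU = 1.05


def classify_bus_voltages(vm_pu: List[float]) -> Dict[str, List[int]]:
    """Return the indices of buses in overvoltage or undervoltage."""
    report: Dict[str, List[int]] = {"overvoltage": [], "undervoltage": []}
    for bus, v in enumerate(vm_pu):
        if v > RANGE_A_MAX_PU:
            report["overvoltage"].append(bus)
        elif v < RANGE_A_MIN_PU:
            report["undervoltage"].append(bus)
    return report


if __name__ == "__main__":
    # Illustrative magnitudes with one overvoltage and one undervoltage bus.
    print(classify_bus_voltages([1.00, 1.02, 1.04, 1.06, 0.94]))
```

The band edges themselves (exactly 0.95 or 1.05 p.u.) are treated as compliant.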

Traditional approaches to voltage regulation have been comprehensively surveyed by Mataifa et al. [4]. These include Volt-VAR optimization (VVO) formulated as mixed-integer programming, interior-point methods, and heuristic techniques such as genetic algorithms and particle swarm optimization. Under known network conditions, these model-based methods can yield optimal or near-optimal solutions. However, they require accurate network parameters, and their computational burden is difficult to reconcile with real-time operation at sub-second timescales. Their performance also degrades rapidly under the stochastic uncertainty introduced by variable renewable generation and dynamic load profiles [2]. The IEEE 33-bus radial distribution test system introduced by Baran and Wu [5], along with the DistFlow branch equations they derived, remains the foundational benchmark for evaluating voltage control strategies in the distribution systems literature.
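For reference, the DistFlow recursion for a radial main feeder is given below in standard form, using notation chosen here for illustration. For branch $i$, which runs from bus $i$ to bus $i+1$:

- $P_i$ and $Q_i$ are the real and reactive power flowing from bus $i$ into the branch.
- $r_i + jx_i$ is the branch impedance.
- $V_i$ is the voltage magnitude at bus $i$.
- $p_{i+1}$ and $q_{i+1}$ are the net real and reactive loads at bus $i+1$.

```latex
\begin{aligned}
P_{i+1} &= P_i - r_i \frac{P_i^2 + Q_i^2}{V_i^2} - p_{i+1}, \\
Q_{i+1} &= Q_i - x_i \frac{P_i^2 + Q_i^2}{V_i^2} - q_{i+1}, \\
V_{i+1}^2 &= V_i^2 - 2\left(r_i P_i + x_i Q_i\right)
            + \left(r_i^2 + x_i^2\right) \frac{P_i^2 + Q_i^2}{V_i^2}.
\end{aligned}
```

Dropping the quadratic loss terms yields the linearized LinDistFlow model, which is widely used in voltage control studies.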

Deep reinforcement learning (DRL) has emerged as a promising model-free alternative for voltage regulation, enabling agents to learn control policies directly from interaction with the grid environment without requiring explicit network models. Duan et al. [6] introduced “Grid Mind,” one of the earliest DRL frameworks for autonomous voltage control, demonstrating that deep Q-network (DQN) and deep deterministic policy gradient (DDPG) agents can learn effective regulation policies on large-scale power grids. Subsequent work expanded the scope of DRL-based voltage control along several dimensions. Cao et al. [7] proposed a multi-agent DRL (MADRL) framework using coordinated PV inverters for voltage regulation on the IEEE 33-bus system. Yang et al. [8] developed a two-timescale scheme that combines physics-based optimization for slow-timescale capacitor bank switching with DRL for fast-timescale smart inverter reactive power dispatch. Wang et al. [9] formulated active voltage control as a decentralized partially observable Markov decision process (Dec-POMDP) and published the MAPDN benchmark at NeurIPS, establishing an open-source evaluation platform. Zhang et al. [10] applied multi-agent DQN specifically for VVO in smart distribution systems. Fan et al. [11] introduced PowerGym, a standardized OpenAI Gym-compatible RL environment for Volt-VAR control that benchmarks proximal policy optimization (PPO) and soft actor-critic (SAC) on IEEE test feeders. Many of these studies use pandapower [12] as the underlying AC power flow simulation engine.

Despite the strong voltage regulation performance of these DRL-based approaches, a critical limitation persists across this entire body of work: none of these methods provides any explanation for the agent’s control decisions. The learned policies therefore remain opaque to grid operators, who must understand *why* an agent selects a particular action before entrusting it with real-time grid operation.

Explainable artificial intelligence (XAI) offers a path toward addressing this opacity. The broader XAI landscape includes two families of methods, a distinction comprehensively surveyed by Barredo Arrieta et al. [13]:

- Ante hoc approaches build interpretability into the model architecture itself.
- Post hoc approaches explain an already trained model.

Among post hoc methods, LIME (Local Interpretable Model-Agnostic Explanations) [14] and SHAP (SHapley Additive exPlanations) [15] have emerged as the two dominant frameworks for feature attribution. SHAP, in particular, provides a principled game-theoretic foundation. It assigns each input feature a Shapley value that quantifies the feature's average marginal contribution to the model’s output, with theoretical guarantees of local accuracy, missingness, and consistency. The DeepSHAP variant [15] combines the SHAP framework with the backpropagation rules of DeepLIFT [16] and enables efficient computation of approximate Shapley values for deep neural network outputs. Lundberg et al. [17] later extended the framework with TreeExplainer and with tools for aggregating local explanations into global model understanding.
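The following sketch makes the Shapley-value definition concrete by computing exact values through enumeration of every feature coalition. This is tractable only for a handful of inputs, which is why KernelSHAP and DeepSHAP approximate the same quantity for larger models. The sketch makes two simplifying choices:

- It assumes a single baseline input whose values stand in for "absent" features. SHAP libraries typically average over a background dataset instead.
- The helper name is hypothetical.

```python
# Exact Shapley values by coalition enumeration (feasible only for a few
# features). Assumption: features outside a coalition take a single
# baseline value, one common choice of value function.
from itertools import combinations
from math import factorial
from typing import Callable, List, Sequence


def shapley_values(
    model: Callable[[Sequence[float]], float],
    x: Sequence[float],
    baseline: Sequence[float],
) -> List[float]:
    n = len(x)

    def value(coalition: frozenset) -> float:
        # Features in the coalition keep their observed value; others use the baseline.
        z = [x[j] if j in coalition else baseline[j] for j in range(n)]
        return model(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight |S|! (n - |S| - 1)! / n! for coalitions S of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = frozenset(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


if __name__ == "__main__":
    f = lambda z: 2 * z[0] + z[1] * z[2]  # toy model with one interaction term
    print(shapley_values(f, [1.0, 2.0, 3.0], [0.0, 0.0, 0.0]))
```

Consider the toy model f(z) = 2*z0 + z1*z2 with x = (1, 2, 3) and an all-zero baseline. The additive term receives 2, and the interaction contribution of 6 is split evenly, giving (2, 3, 3). These values sum to f(x) − f(baseline) = 8, which is the local accuracy property.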

Applying SHAP to RL agents introduces challenges beyond those encountered in supervised learning, because RL policies map states to actions within sequential decision-making environments where actions affect future states. Beechey et al. [18] provided the first rigorous theoretical treatment of Shapley values for RL, proposing the SVERL framework and formally characterizing the conditions under which SHAP can be meaningfully applied to value functions and policies. In the power systems domain, Zhang et al. [19] pioneered the application of DeepSHAP to DRL agents for power system emergency control, demonstrating that Shapley-value-based attributions can reveal which grid state variables most influence an RL agent’s emergency actions. Their approach, however, has three limitations:

- It presents SHAP values only as raw numerical attribution vectors, without translating them into human-understandable language.
- It does not validate the resulting explanations against physical simulation.
- It focuses on transmission-level emergency control rather than distribution-level voltage regulation.

These limitations highlight a fundamental gap: numerical SHAP attributions quantify feature importance, but they do not provide explanations that non-expert stakeholders such as utility engineers or regulatory personnel can easily interpret or act on.

Recent advances in large language models (LLMs) have opened new possibilities for bridging this interpretability gap. LLMs such as GPT-4 have demonstrated a strong capacity for generating coherent natural language descriptions of complex technical phenomena across diverse domains.

Several works pair LLMs with feature attributions:

- Slack et al. [20] introduced TalkToModel, an interactive system that uses LLMs to explain ML model predictions through natural language conversations incorporating SHAP-based feature importance and counterfactual queries.
- Kroeger et al. [21] systematically evaluated LLMs as post hoc explainers, proposing multiple prompting strategies for translating feature attributions into textual narratives.
- Krishna et al. [22] demonstrated that post hoc SHAP attribution scores can be translated into natural language rationales that improve LLM task performance by 10–25% over chain-of-thought prompting [23]. This established a direct link between feature attribution methods and LLM-generated explanations.

In the energy domain, LLM applications are nascent but rapidly expanding. Cheng et al. [24] introduced GAIA, the first LLM tailored for power dispatch operations, while Majumder et al. [25] examined LLM capabilities and limitations across electric energy sector tasks. In a closely related energy systems application, Jadhav et al. [26] proposed FairMarket-RL, a framework combining LLMs with multi-agent RL for fairness-aware peer-to-peer energy trading in microgrids. In this framework the LLM serves as a real-time fairness critic that evaluates each trading episode. Their extended work [27] scaled this approach to handle partial observability and discrete price-quantity actions with additional fairness metrics, further demonstrating the growing synergy between LLMs and RL for power system applications.

However, none of these LLM-based approaches generates post hoc explanations of RL agent behavior. The energy-domain works [24–27] use the language model for dispatch, decision support, or reward shaping. The general-purpose explainers [20–22] target supervised ML models or LLM task performance. None addresses the specific challenge of explaining voltage control decisions.
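One way to structure the attribution-to-text step described above is to rank features by absolute SHAP value and pass only the top-ranked ones to the language model, together with domain context. The sketch below only assembles such a prompt and does not call any LLM API. The function name, feature names, and prompt wording are hypothetical. It also assumes the attributions explain the agent's score for the selected action, so a positive value means the feature increased the agent's preference for that action.

```python
# Hypothetical sketch: assemble an LLM prompt from per-feature SHAP
# attributions for one RL action. No LLM is called; names and wording are
# illustrative assumptions, not the XRL-LLM prompt.
from typing import Dict, List

DOMAIN_CONTEXT = (
    "Context: radial distribution feeder; acceptable service voltage is "
    "ANSI C84.1 Range A (0.95-1.05 p.u.)."
)


def build_explanation_prompt(
    action: str, attributions: Dict[str, float], top_k: int = 3
) -> str:
    # Rank features by the magnitude of their attribution, largest first.
    ranked = sorted(attributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    feature_lines: List[str] = []
    for name, phi in ranked[:top_k]:
        # Assumption: phi explains the agent's score for the selected action.
        if phi > 0:
            effect = "increased"
        elif phi < 0:
            effect = "decreased"
        else:
            effect = "did not change"
        feature_lines.append(
            f"- {name}: SHAP = {phi:+.3f} ({effect} the preference for this action)"
        )
    instruction = (
        "Explain in plain language, for a utility engineer, why these features "
        "led to this action. Do not mention any feature that is not listed."
    )
    header = [DOMAIN_CONTEXT, f"Selected action: {action}", "Most influential state features:"]
    return "\n".join(header + feature_lines + [instruction])


if __name__ == "__main__":
    print(build_explanation_prompt(
        "raise_tap",
        {"v_pu_bus18": 0.21, "p_mw_load_bus18": -0.12,
         "v_pu_bus33": 0.05, "q_mvar_pv_bus25": 0.01},
    ))
```

Restricting the prompt to the top-k features and instructing the model not to mention others is one simple guard against the LLM narrating attributions that were never computed.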

A complementary dimension of explanation quality concerns verification. Counterfactual explanations answer the question “what minimal change to the input would alter the model’s output?” and were formalized for algorithmic decision-making by Wachter et al. [28]. Mothilal et al. [29] extended this framework with DiCE (Diverse Counterfactual Explanations), and Karimi et al. [30] provided a comprehensive survey of algorithmic recourse methods. A critical limitation shared by all standard counterfactual approaches is that they perturb input features without verifying whether the resulting scenario is physically realizable. This limitation is particularly problematic in power systems. Arbitrary perturbations of bus voltages or power injections may violate Kirchhoff’s laws and AC power flow constraints, producing counterfactual scenarios that are physically impossible and therefore misleading.
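The physical-realizability concern can be illustrated on a toy feeder. A physically consistent counterfactual does not edit a bus-voltage entry directly. Instead, it perturbs a load and re-solves the power flow, so that every voltage in the counterfactual state satisfies the network equations.

The sketch below has these assumptions:

- It uses a hand-written backward/forward sweep on a hypothetical three-branch chain feeder instead of pandapower.
- A threshold rule stands in for a trained agent.
- All names, impedances, and loads are illustrative assumptions.

```python
# Toy physics-consistent counterfactual: perturb a load, re-solve the power
# flow with a backward/forward sweep, and check whether the action changes.
# The feeder, loads, and threshold policy are hypothetical stand-ins.
from typing import Callable, List, Sequence, Tuple


def solve_radial_chain(
    z: Sequence[complex],
    s_load: Sequence[complex],
    v_slack: complex = 1.0 + 0j,
    tol: float = 1e-10,
    max_iter: int = 100,
) -> List[float]:
    """Branch k joins bus k to bus k+1 (impedance z[k], p.u.); s_load[k] is the
    constant-power load at bus k+1. Returns |V| (p.u.) for buses 0..n."""
    n = len(s_load)
    v = [complex(v_slack)] * (n + 1)
    for _ in range(max_iter):
        # Backward sweep: load currents I = conj(S / V), accumulated toward the slack bus.
        i_load = [(s_load[k] / v[k + 1]).conjugate() for k in range(n)]
        i_branch = [0j] * n
        downstream = 0j
        for k in reversed(range(n)):
            downstream += i_load[k]
            i_branch[k] = downstream
        # Forward sweep: subtract branch voltage drops from the slack bus outward.
        new_v = [complex(v_slack)]
        for k in range(n):
            new_v.append(new_v[k] - z[k] * i_branch[k])
        converged = max(abs(a - b) for a, b in zip(new_v, v)) < tol
        v = new_v
        if converged:
            return [abs(vk) for vk in v]
    raise RuntimeError("backward/forward sweep did not converge")


def threshold_policy(vm_pu: Sequence[float]) -> str:
    # Hypothetical stand-in for a trained agent: react only to Range A violations.
    if min(vm_pu) < 0.95:
        return "raise_tap"
    if max(vm_pu) > 1.05:
        return "lower_tap"
    return "hold"


def verify_load_counterfactual(
    z: Sequence[complex],
    s_load: Sequence[complex],
    load_index: int,
    scale: float,
    policy: Callable[[Sequence[float]], str] = threshold_policy,
) -> Tuple[bool, str, str]:
    """Scale one load, re-solve the power flow, and report whether the action changes."""
    base_action = policy(solve_radial_chain(z, s_load))
    cf_load = list(s_load)
    cf_load[load_index] = cf_load[load_index] * scale
    cf_action = policy(solve_radial_chain(z, cf_load))
    return base_action != cf_action, base_action, cf_action
```

With `z = [0.01 + 0.02j] * 3` and three loads of `0.3 + 0.1j` p.u., the end-of-feeder voltage stays inside Range A and the rule holds. Tripling the last load and re-solving drops that voltage below 0.95 p.u., so `verify_load_counterfactual(z, loads, 2, 3.0)` reports that the action changes from "hold" to "raise_tap".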

Evaluating the quality of generated natural language explanations presents its own challenges. Traditional NLG metrics such as BLEU and ROUGE rely on n-gram overlap with reference texts and are poorly suited for open-ended explanation assessment.

LLM-based evaluators offer an alternative. Liu et al. [31] introduced G-Eval, a framework that uses GPT-4 with chain-of-thought prompting to evaluate NLG outputs across dimensions such as coherence, consistency, fluency, and relevance. G-Eval demonstrated substantially better alignment with human judgments than prior automated metrics. Zheng et al. [32] proposed the LLM-as-a-judge paradigm and systematically showed that strong LLM judges such as GPT-4 can agree with human preferences about as well as humans agree with each other.

For readability assessment, the Flesch Reading Ease formula [33] and its adaptation into the Flesch-Kincaid Grade Level [34] remain the most widely used metrics in the technical communication literature.
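Both readability formulas are simple functions of word, sentence, and syllable counts. The sketch below implements the standard coefficients and takes the counts as inputs, because syllable-counting heuristics differ between tools. The choice of counter is an assumption left to the caller, and the function names are hypothetical.

```python
# Flesch Reading Ease and Flesch-Kincaid Grade Level from raw counts.
# Counting words/sentences/syllables is left to the caller (assumption).


def _check_counts(words: int, sentences: int) -> None:
    if words <= 0 or sentences <= 0:
        raise ValueError("words and sentences must be positive")


def flesch_reading_ease(words: int, sentences: int, syllables: int) -> float:
    """Higher scores mean easier text (roughly 0-100 for typical prose)."""
    _check_counts(words, sentences)
    return 206.835 - 1.015 * (words / sentences) - 84.6 * (syllables / words)


def flesch_kincaid_grade(words: int, sentences: int, syllables: int) -> float:
    """Approximate U.S. school grade level needed to read the text."""
    _check_counts(words, sentences)
    return 0.39 * (words / sentences) + 11.8 * (syllables / words) - 15.59
```

For a text of 100 words, 5 sentences, and 150 syllables, these formulas give a Reading Ease of about 59.6 and a grade level of about 9.9.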

Overall, the literature reveals three interconnected research gaps:

- **Gap 1.** No existing framework combines SHAP-based RL explainability with natural language generation for power system voltage control. Prior XAI work in power systems [19] stops at numerical attributions, while prior LLM explanation work [20, 21] does not address power systems or RL-based control.
- **Gap 2.** Standard counterfactual explanation methods [28, 29] lack physics-grounded verification mechanisms that ensure generated scenarios respect AC power flow constraints.
- **Gap 3.** Explanation evaluation in power systems XAI has relied on qualitative assessment or single metrics, lacking the multi-dimensional rigor needed for trustworthy deployment.

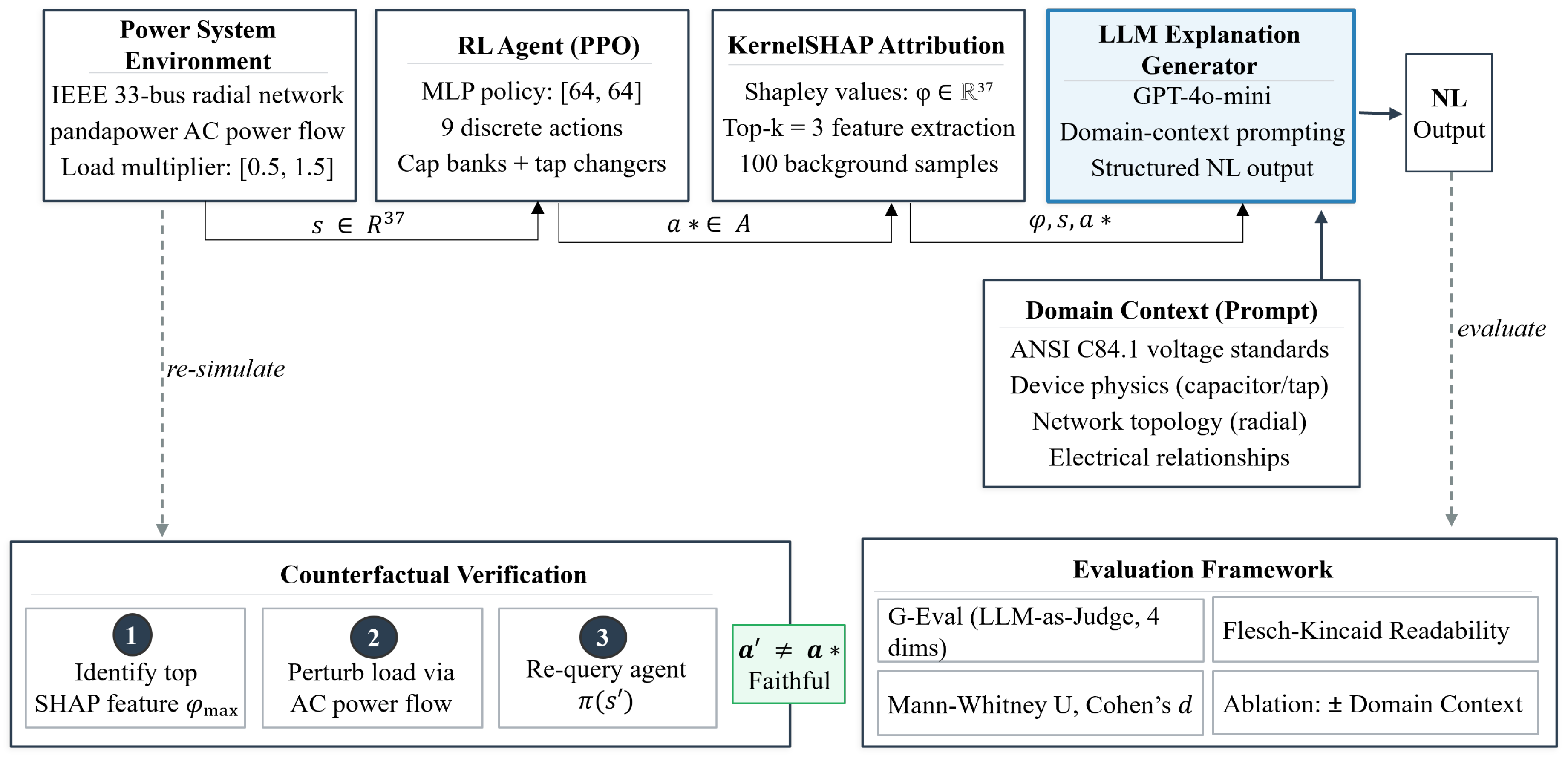

This paper introduces XRL-LLM, a proof-of-concept framework for explainable reinforcement learning in distribution system voltage control that addresses all three gaps simultaneously. The principal contributions of this work are as follows:

- 1.

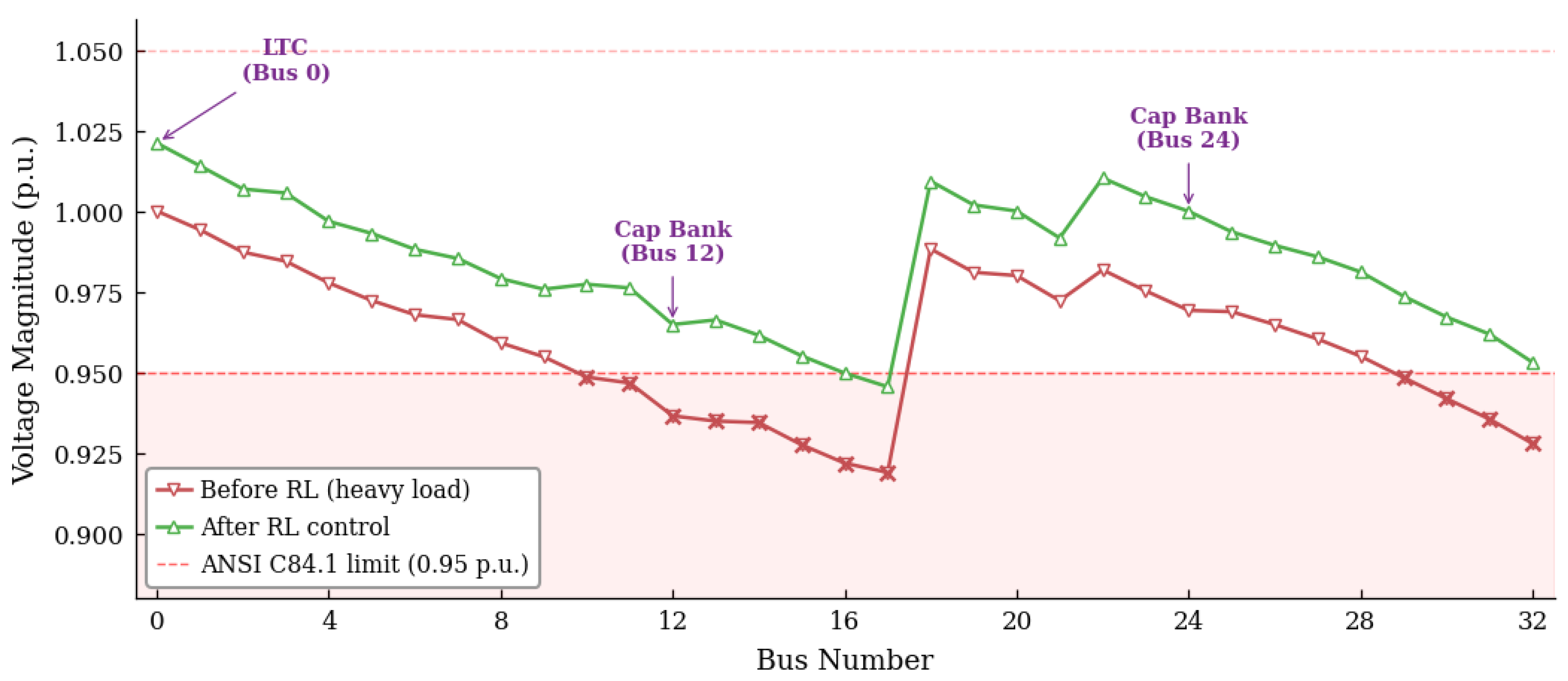

A complete explainability pipeline that trains a PPO agent for voltage control on the IEEE 33-bus distribution network using pandapower-based AC power flow simulation, applies KernelSHAP to extract per-feature Shapley value attributions for the agent’s control decisions, and employs GPT-4o-mini with structured domain-context prompting incorporating ANSI C84.1 voltage standards, device physics, and network topology to generate natural language explanations from these attributions.

- 2.

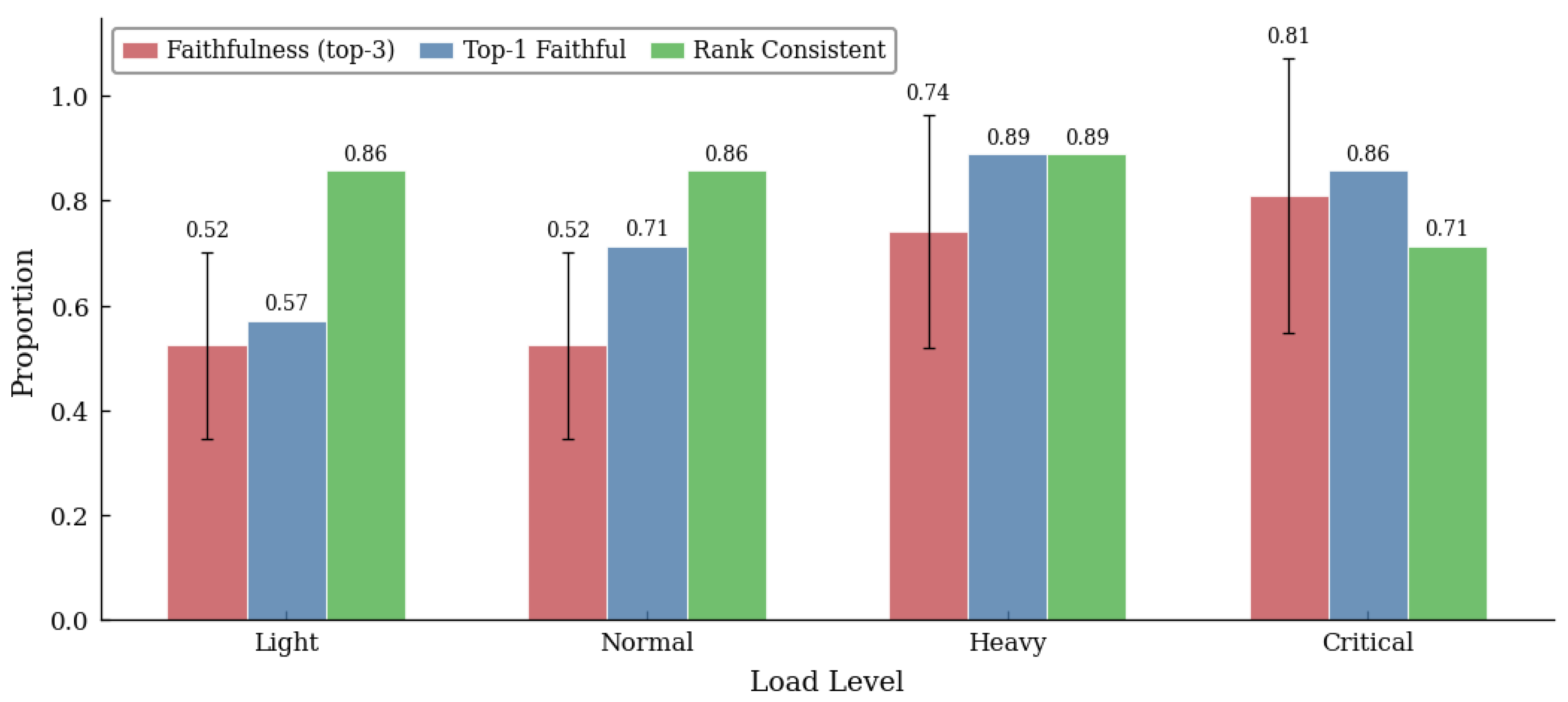

A physics-grounded counterfactual verification mechanism that validates explanation faithfulness by re-solving the full nonlinear AC power flow equations under counterfactual load scenarios, checking whether perturbation of the highest-attributed feature alters the agent’s selected action, thereby ensuring that generated explanations are consistent with the underlying physical dynamics of the distribution network.

- 3.

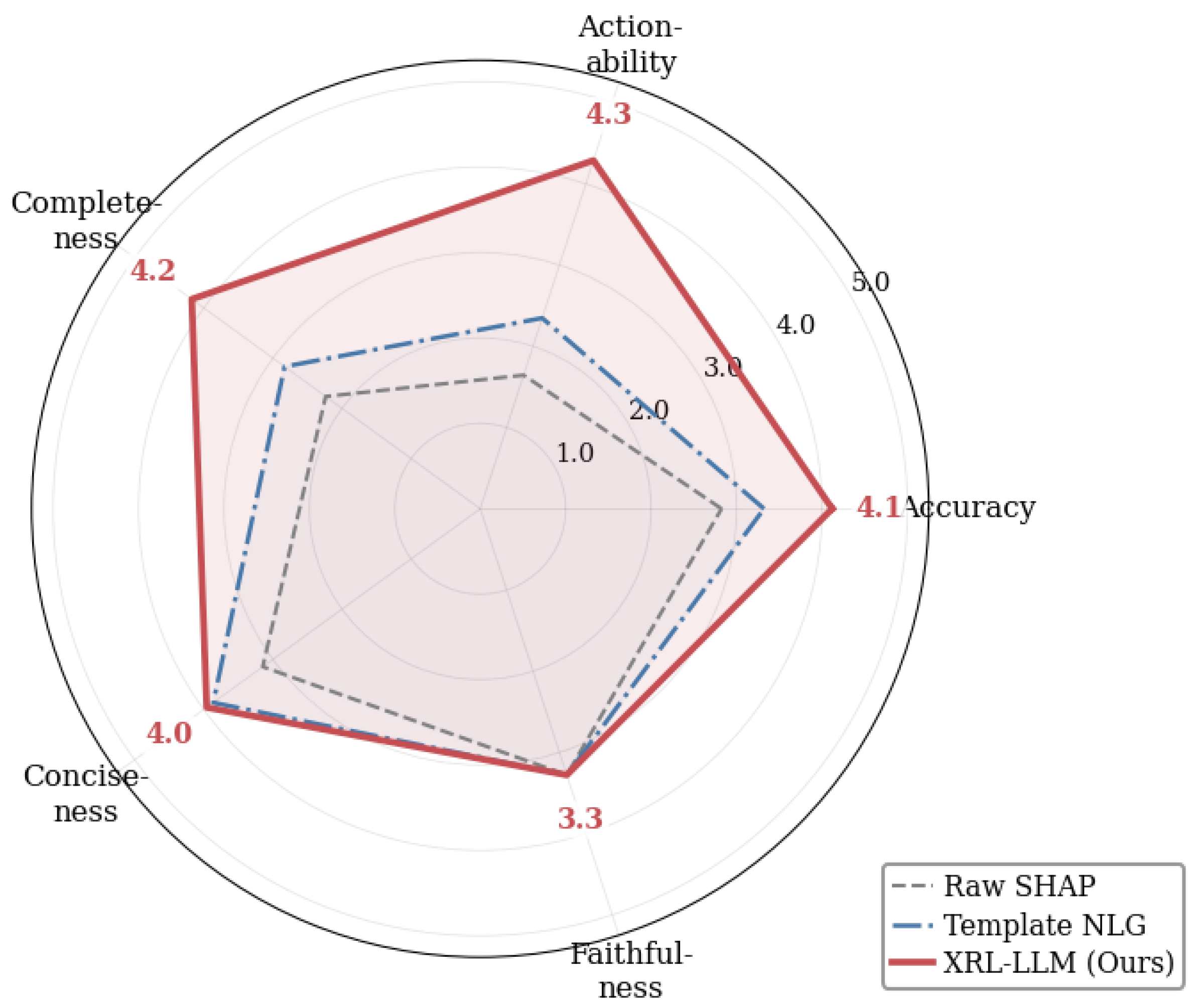

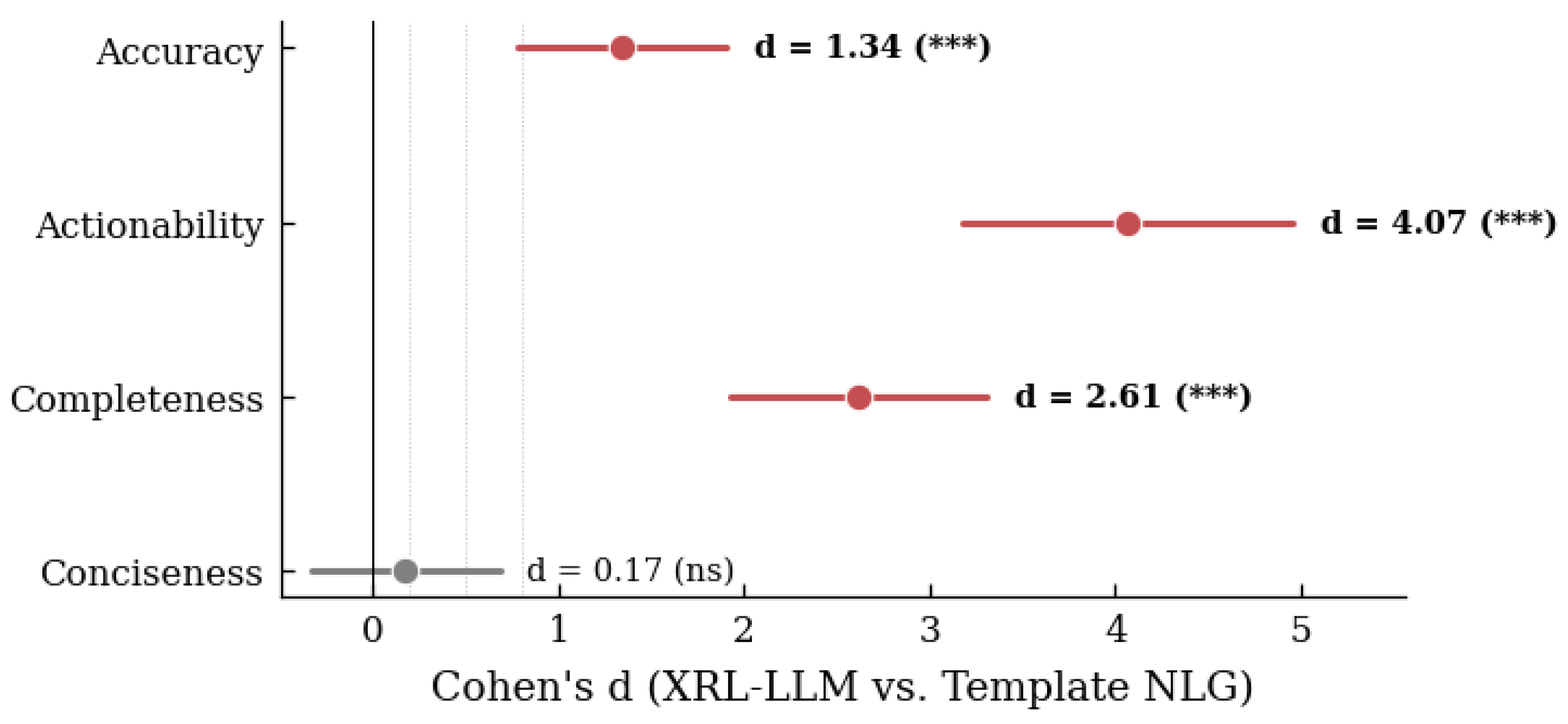

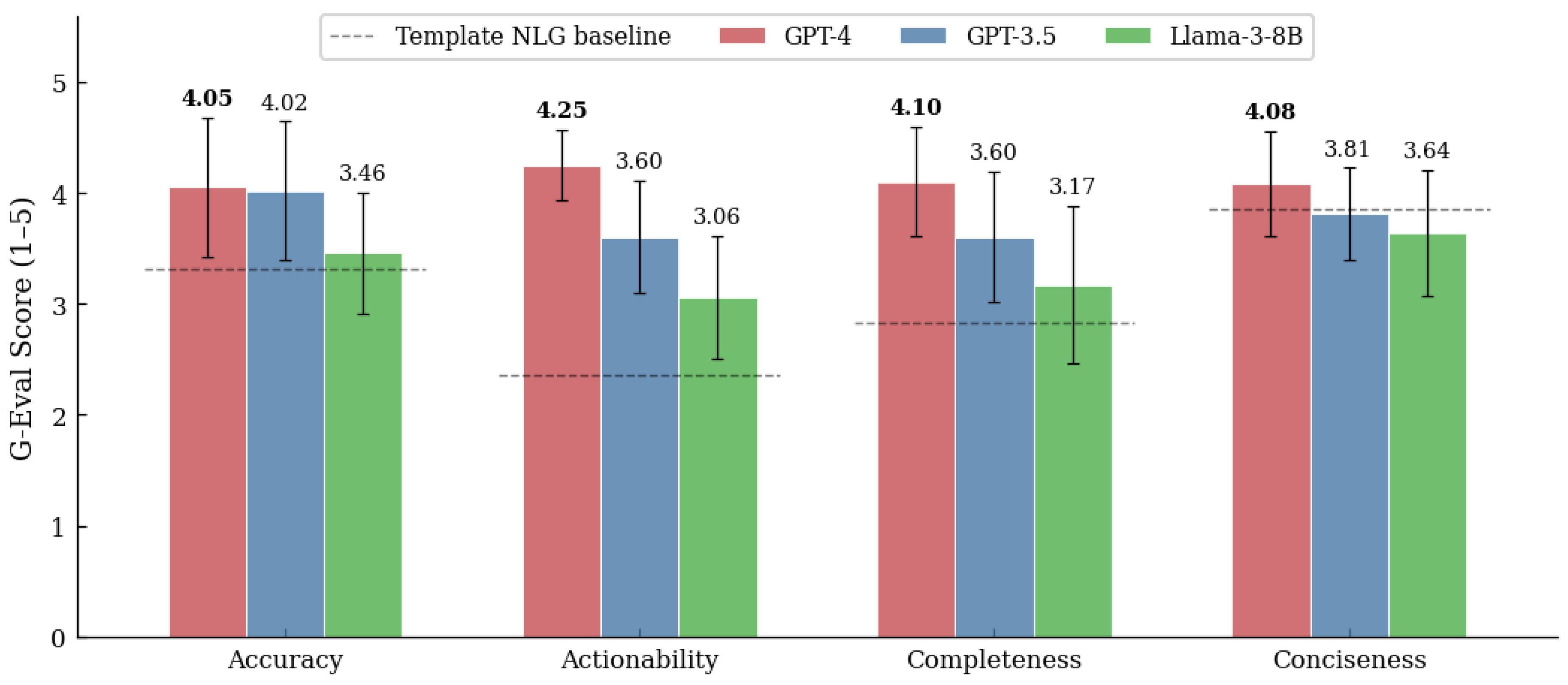

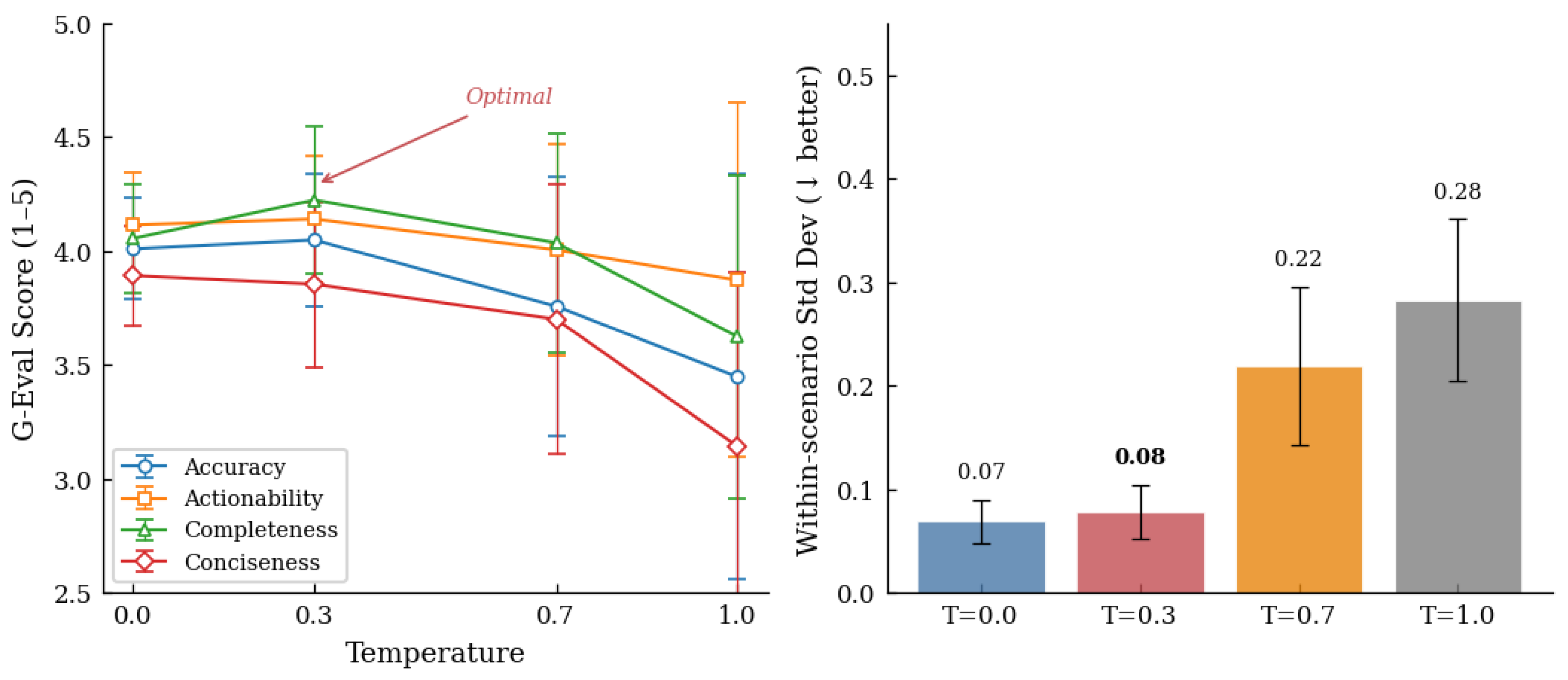

A multi-dimensional evaluation framework that assesses explanation quality using G-Eval [

31] (LLM-as-judge scoring across coherence, consistency, fluency, and relevance), Flesch-Kincaid readability analysis, and rigorous statistical testing (Mann-Whitney U, Cohen’s

d), providing the most comprehensive evaluation of XAI-generated explanations for power systems to date.
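For reference, the two statistics named in the third contribution can be computed as in the following pure-Python sketch. It makes three assumptions:

- A two-sided test using the normal approximation to the U distribution, without tie or continuity correction. Omitting the tie correction makes p slightly conservative when scores are tied.
- A pooled-standard-deviation form of Cohen's d.
- The function names are hypothetical.

In practice, `scipy.stats.mannwhitneyu` provides exact and tie-corrected variants.

```python
# Pure-Python sketch: Mann-Whitney U (normal approximation, no tie or
# continuity correction) and Cohen's d with a pooled standard deviation.
from math import erfc, sqrt
from statistics import mean, variance
from typing import List, Sequence, Tuple


def average_ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks; tied values share the mean of the positions they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared = (start + end) / 2 + 1
        for pos in range(start, end + 1):
            ranks[order[pos]] = shared
        start = end + 1
    return ranks


def mann_whitney_u(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Return U for sample x and a two-sided p-value (normal approximation)."""
    n1, n2 = len(x), len(y)
    ranks = average_ranks(list(x) + list(y))
    u1 = sum(ranks[:n1]) - n1 * (n1 + 1) / 2
    mu = n1 * n2 / 2
    sigma = sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (u1 - mu) / sigma
    p_two_sided = erfc(abs(z) / sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return u1, p_two_sided


def cohens_d(x: Sequence[float], y: Sequence[float]) -> float:
    """Standardized mean difference using the pooled sample standard deviation."""
    n1, n2 = len(x), len(y)
    pooled_var = ((n1 - 1) * variance(x) + (n2 - 1) * variance(y)) / (n1 + n2 - 2)
    return (mean(x) - mean(y)) / sqrt(pooled_var)
```

Consider x = [1, 2, 3] and y = [4, 5, 6]. U = 0 because every x ranks below every y, the approximate two-sided p is about 0.05, and d = -3. The normal approximation is rough for samples this small.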

The remainder of this paper is organized as follows.

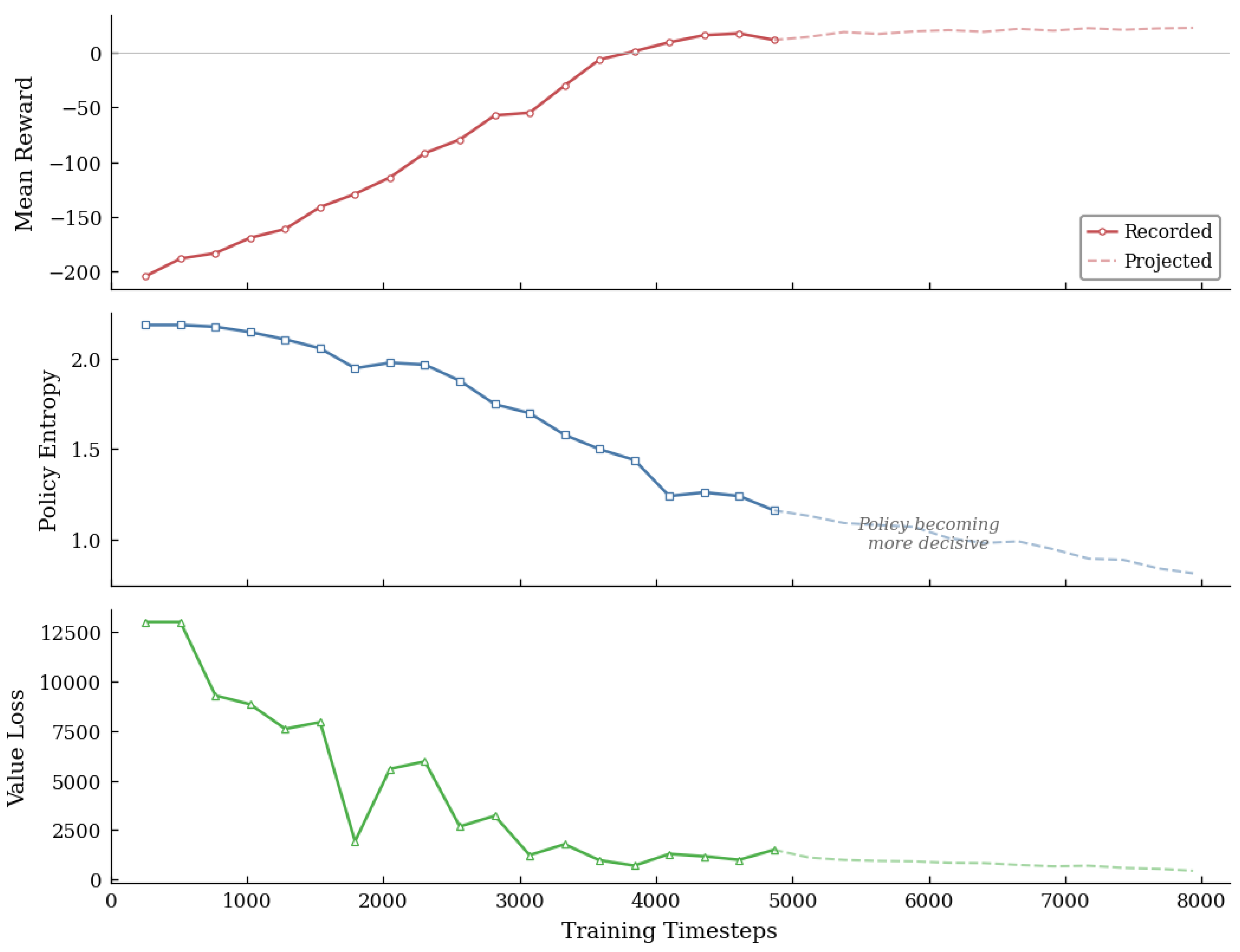

Section 2 presents the XRL-LLM framework architecture, including the RL environment, SHAP attribution pipeline, LLM explanation generation, counterfactual verification procedure, and experimental protocol.

Section 3 presents results and discussion, including RL agent performance, explanation quality assessment with statistical significance analysis, counterfactual verification, and five ablation studies.

Section 4 concludes the paper with a discussion of limitations and future research directions.