Submitted:

11 March 2026

Posted:

17 March 2026

Abstract

Keywords:

1. Introduction

2. Literature Study

Concepts of Product Commonality

Product Families and Modularity

Industrial Practice and Tool Support

Synthesis of Literature Streams and Operationalization Gaps

Literature Discussion and Implications for PCATs

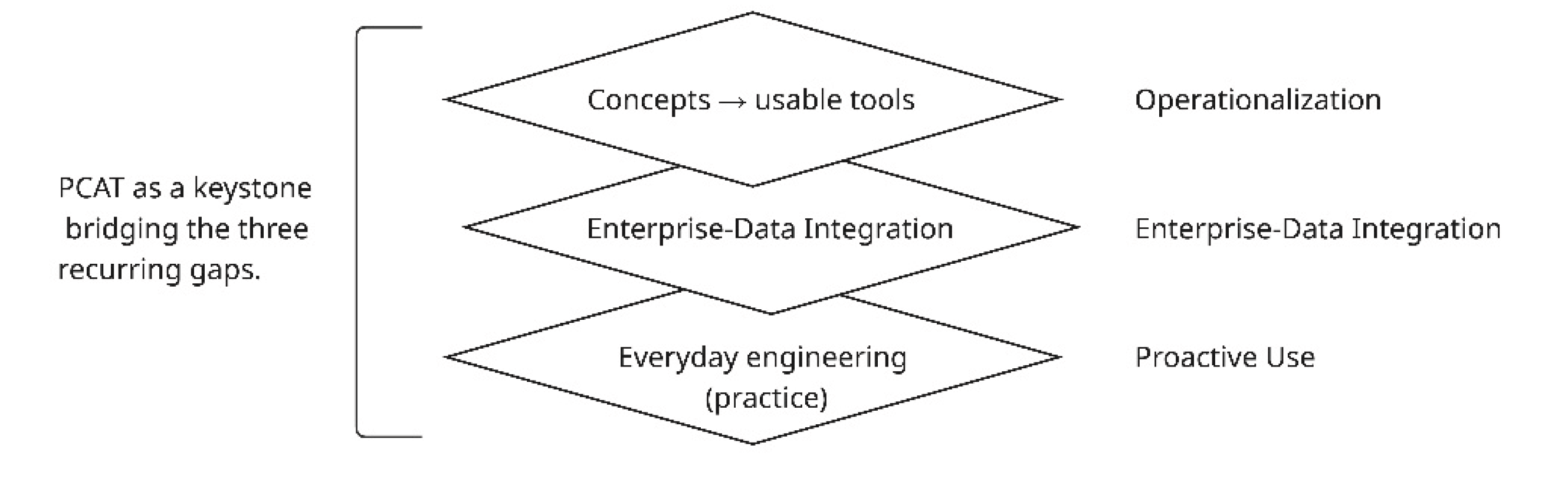

- Limited operationalization. Much of the work remains conceptual (indices and frameworks), and recent studies call for IT-enabled decision support, for example model-based or analytics-enabled tools, that engineers can apply directly in practice (Greve et al., 2022).

- Lack of integration with enterprise data. Although ERP/PLM systems hold BOMs and procurement attributes, studies report siloed data and manual reporting; firms often turn to application-level analytics outside ERP/PLM rather than built-in cross-product functionality (Koskinen et al., 2020; Ji and Abdoli, 2023).

- Reactive rather than proactive usage. In practice, commonality is frequently addressed only after problems arise, not as part of systematic design and procurement processes (Iakymenko et al., 2020; Sierra-Fontalvo et al., 2023).

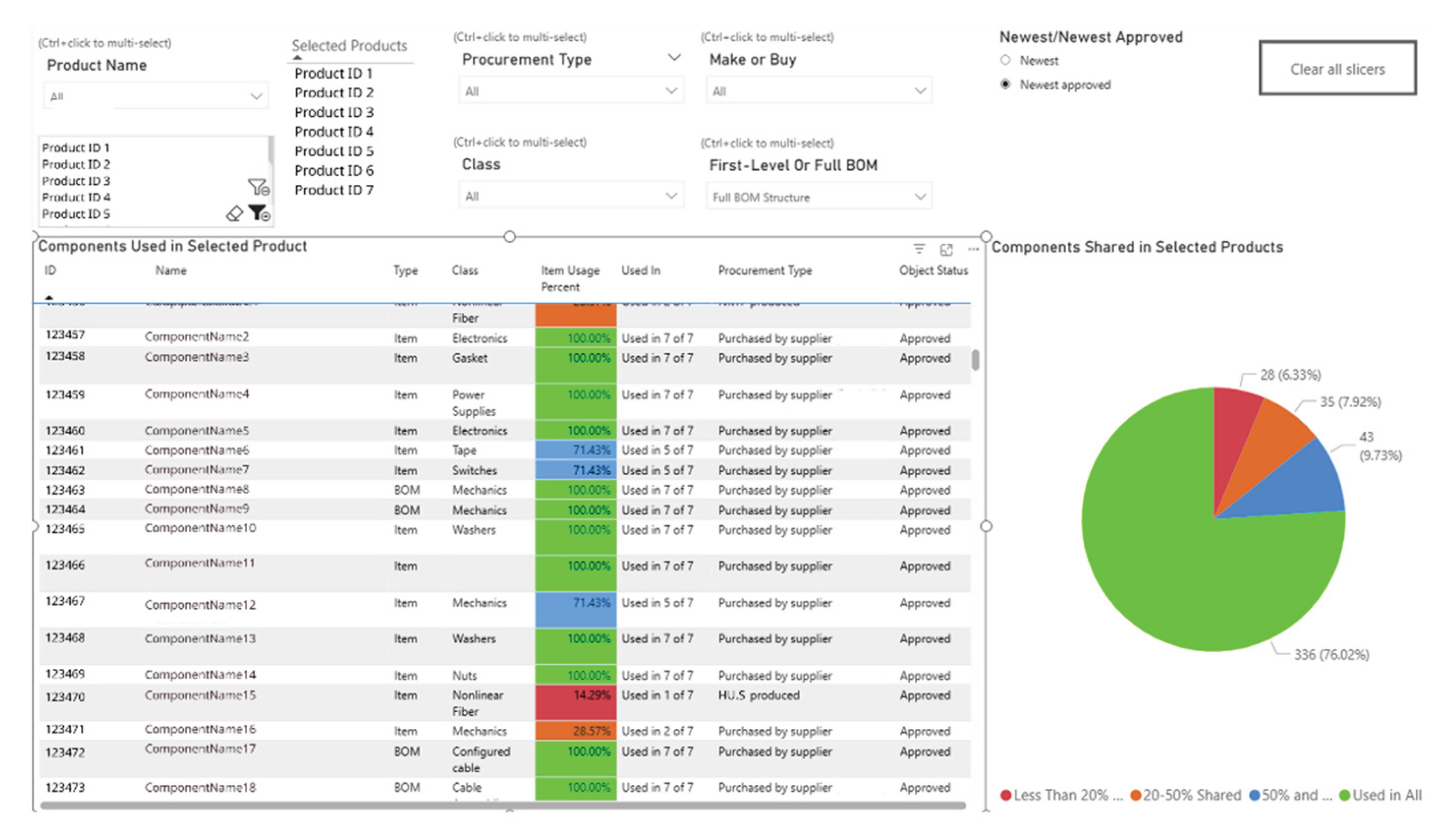

- Portfolio selection and data scoping – selecting a product set, choosing BOM depth, and controlling version sets to define a consistent analytical basis.

- Cross-product reuse quantification – computing and displaying sharedness metrics such as “Used in X of Y products,” usage percentages, and sharedness bands.

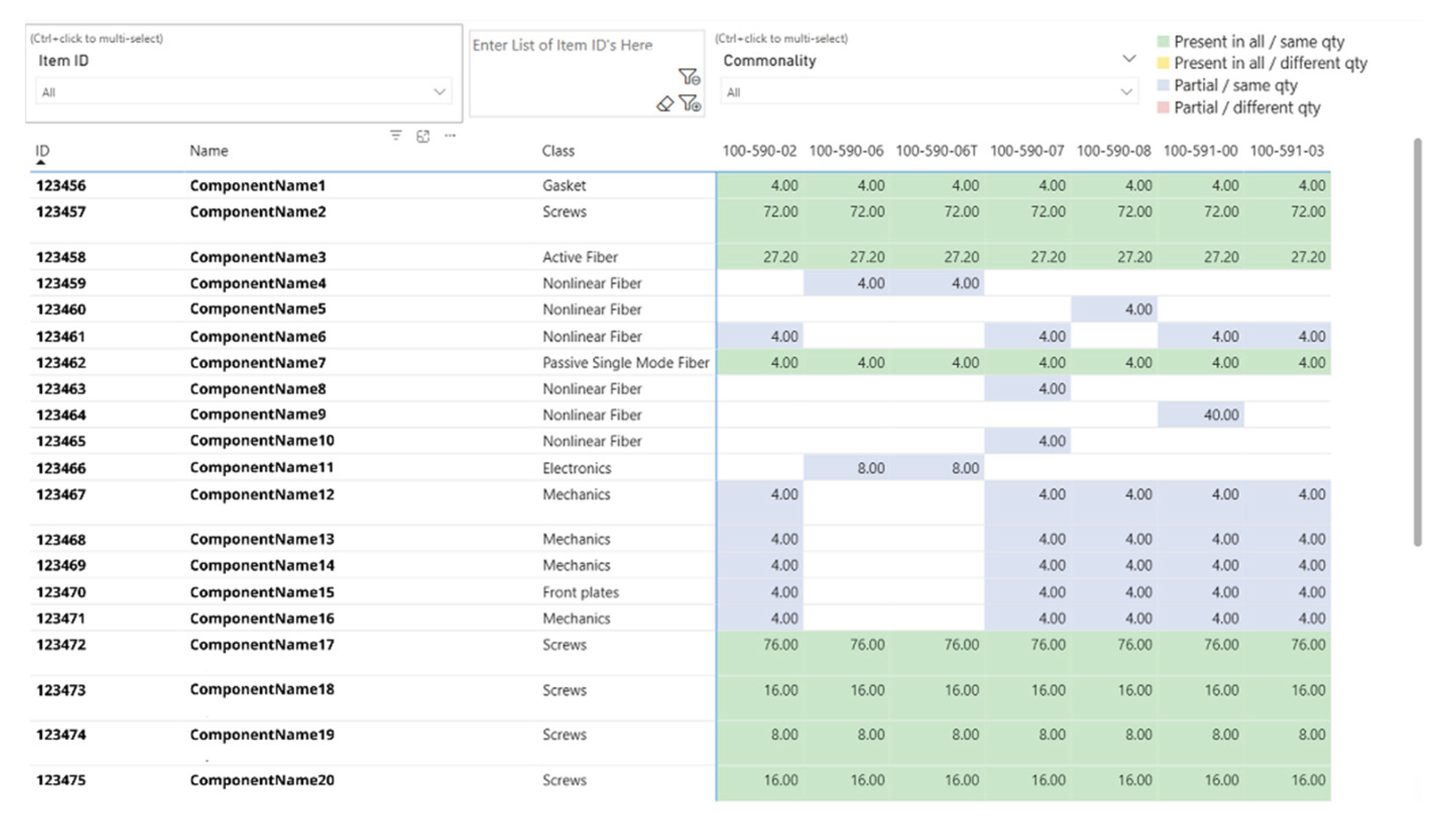

- Commonality Matrix visualization – presenting item presence and quantity consistency across products in a matrix view and highlighting inconsistencies.

- Attribute-based slicing and filtering – filtering/segmenting by procurement attributes (e.g., Make-Buy, procurement type, class, lifecycle flags where available).

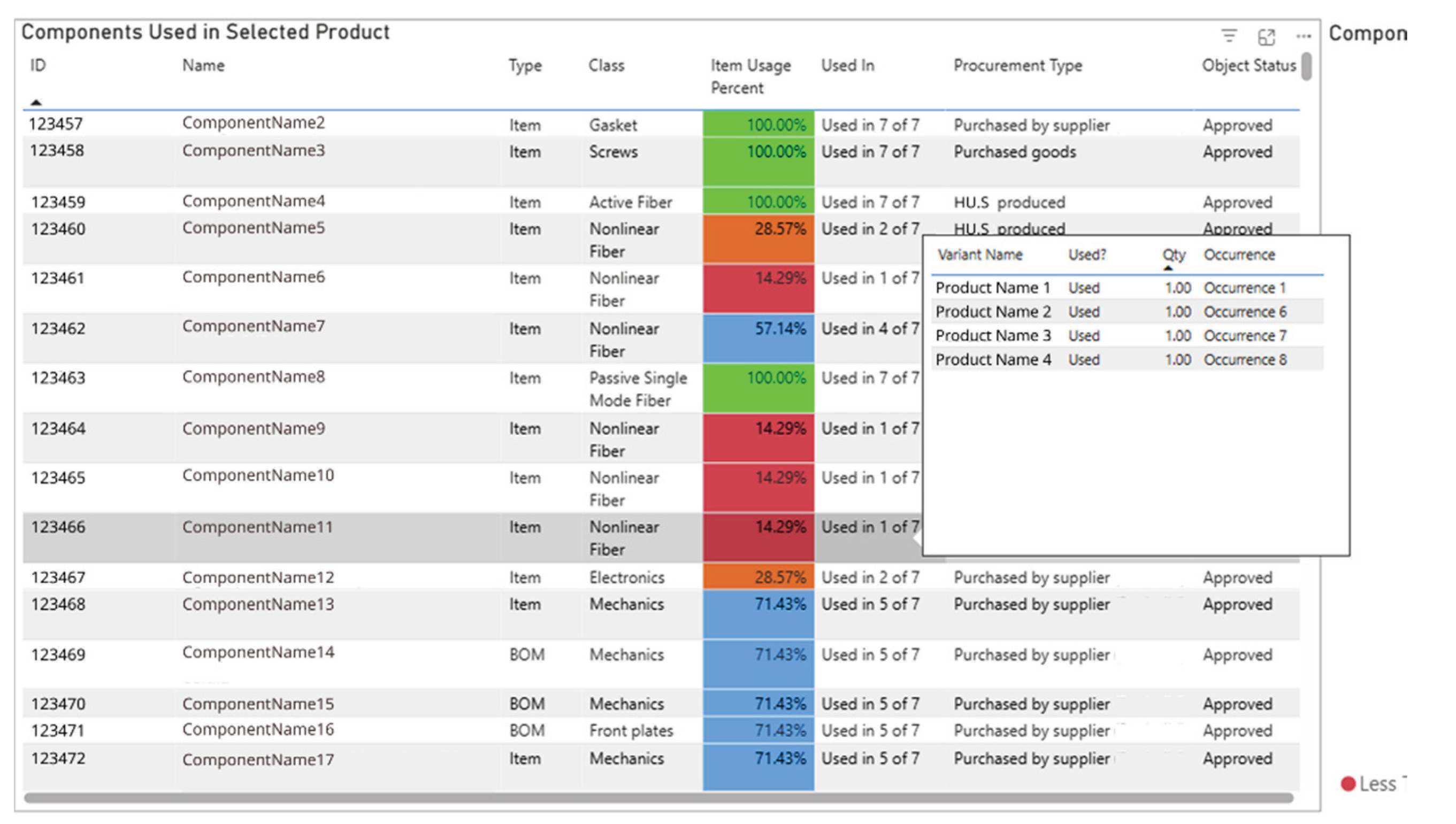

- Drill-through to BOM line detail – linking aggregate views to underlying BOM occurrences to support verification and follow-up actions.

- Export and reporting – exporting tables (CSV/XLSX) and generating printable reports for engineering and procurement reviews.
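
The reuse quantification listed above can be illustrated with a minimal Python sketch. It is not the PCAT implementation (which is built in Power BI); the product and item IDs are invented, and the rule that an item found in exactly one product is labelled "unique" before the percentage bands are applied is an assumption made here for illustration.

```python
from collections import defaultdict

# Hypothetical BOM occurrences as (product_id, item_id); all IDs are invented.
bom_rows = [
    ("P-A", "lens-01"), ("P-A", "screw-M3"), ("P-A", "fiber-07"),
    ("P-B", "lens-01"), ("P-B", "screw-M3"),
    ("P-C", "lens-01"), ("P-C", "mount-22"),
    ("P-D", "lens-01"), ("P-D", "screw-M3"),
    ("P-E", "lens-01"), ("P-E", "mount-22"),
]


def sharedness(rows, selected_products):
    """Return {item: (x, y, usage_pct, band)} for the selected product set."""
    selected = set(selected_products)
    y = len(selected)
    products_per_item = defaultdict(set)
    for product, item in rows:
        if product in selected:
            # A set is used so repeated BOM lines in one product count once.
            products_per_item[item].add(product)

    result = {}
    for item, products in products_per_item.items():
        x = len(products)
        pct = 100.0 * x / y
        if x == y:
            band = "in all"
        elif x == 1:          # assumption: "unique" takes precedence over % bands
            band = "unique"
        elif pct >= 50:
            band = "50-<100%"
        else:
            band = "<50%"
        result[item] = (x, y, pct, band)
    return result


table = sharedness(bom_rows, ["P-A", "P-B", "P-C", "P-D", "P-E"])
# Sort by X descending, mirroring a sortable "Used in" column.
for item, (x, y, pct, band) in sorted(table.items(), key=lambda kv: -kv[1][0]):
    print(f"{item}: Used in {x} of {y} ({pct:.0f}%), band: {band}")
```

Because X and Y are recomputed from the current selection, changing the product set changes every metric, which is why the selection step precedes quantification in the list above.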

3. Methodology

Study Design and Rationale

- AR cycle 1: Development of PCAT – focusing on understanding current challenges, translating them into requirements, and developing a workable prototype.

- AR cycle 2: Implementation and test of PCAT – focusing on deploying the prototype in the company, comparing it to existing practices, and evaluating its usefulness in realistic scenarios.

Empirical Setting

Action-Research Cycles and Stages

Data Collection

- Enterprise data: multi-level BOM exports (first level and full structure), item-master attributes (Class, Make-Buy, Procurement Type), and version flags (Newest / Newest-approved).

- Work-practice materials: representative spreadsheets and ERP/PLM printouts used for cross-product checks.

- Session artefacts: bookmarked PCAT views, CSV/XLSX exports, and PDF printouts produced during evaluation sessions.
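
To make the role of these enterprise data fields concrete, the following Python sketch shows how BOM depth and version flags could define a consistent analytical basis before any comparison is run. The record layout (`BomLine`, `level`, `is_newest`, `is_newest_approved`) and the function `scope_bom` are hypothetical names chosen for illustration; the actual export format used in the study is not specified here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BomLine:
    product: str
    item: str
    level: int                # 1 = directly under the product; >1 = deeper structure
    quantity: float
    is_newest: bool           # hypothetical flag: row belongs to the newest version
    is_newest_approved: bool  # hypothetical flag: row belongs to the newest approved version


def scope_bom(lines, products, first_level_only=False, version_set="Newest-approved"):
    """Keep only rows for the chosen products, BOM depth, and version set."""
    if version_set not in ("Newest", "Newest-approved"):
        raise ValueError(f"unknown version set: {version_set!r}")
    selected = set(products)
    scoped = []
    for line in lines:
        if line.product not in selected:
            continue
        if first_level_only and line.level != 1:
            continue
        in_version = line.is_newest if version_set == "Newest" else line.is_newest_approved
        if in_version:
            scoped.append(line)
    return scoped


export = [
    BomLine("P-A", "lens-01", 1, 2, True, True),
    BomLine("P-A", "screw-M3", 2, 8, True, True),
    BomLine("P-A", "fiber-09", 1, 1, True, False),  # newest, not yet approved
    BomLine("P-A", "fiber-07", 1, 1, False, True),  # newest approved only
    BomLine("P-B", "lens-01", 1, 2, True, True),
    BomLine("P-C", "lens-01", 1, 1, True, True),    # outside the selection below
]

basis = scope_bom(export, ["P-A", "P-B"], first_level_only=True)
print(sorted((line.product, line.item) for line in basis))
```

Applying the same scope to every product before computing any metric is what keeps later comparisons version-consistent.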

Data Analysis

4. AR Cycle 1: Development of PCAT

Diagnosis

Planning

Action

Evaluation and Reflection

5. AR Cycle 2: Implementation and Test of PCAT

Diagnosis

Planning

Action

Evaluation and Reflection

6. Discussion and Conclusions

Implications for Research

- Standardization and part rationalization as a life-cycle cost lever. Prior work argues that increasing commonality and modularity can reduce cost through mechanisms such as reduced process complexity and economies of scale, and can improve manageability of product complexity (Fixson, 2007). PCAT supports this principle by making reuse patterns explicit across multiple products (e.g., reuse ranking and cross-product presence/quantity checks), enabling stakeholders to identify where commonality is strong and where reuse breaks down.

- Platforming and modularization as mechanisms to manage variety. Platforming research emphasizes reuse of components/subsystems and interfaces across a product family as a means to offer variety efficiently, and reviews report benefits such as reduced development time, reduced system complexity, and reduced costs (Zhang, 2015). PCAT contributes a practical support layer for such discussions by providing portfolio-level views that help inspect reuse footprints and deviations at BOM-line detail, which can inform platform and modularization decisions.

- Variety reduction procedures that link internal and external variety. In ETO settings, where high variety is expected, the literature discusses modular configurations and the use of standard items as strategies to manage variety and improve cost and lead-time performance (Gosling and Naim, 2009). Related variety-management work also emphasizes the value of transparently linking internal (component/module) and external (finished-goods) variety using enterprise data such as BOMs and master data, although applicability is noted to be strongest outside pure ETO contexts (Staśkiewicz et al., 2022). PCAT complements these strategies by enabling repeatable cross-product analyses that support structured reviews of reuse, inconsistencies, and comparison outputs without requiring intrusive ERP/PLM customization.

Implications for Practice

Limitations and Future Research

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Appendix A. Usability Testing Materials

A1. Moderator Guide (Walkthrough)

A2. Task Set (Think-Aloud)

References

- Al-Assaf, K., Alzahmi, W., Alshaikh, R., Bahroun, Z. & Ahmed, V. (2024) ‘The Relative Importance of Key Factors for Integrating Enterprise Resource Planning (ERP) Systems and Performance Management Practices in the UAE Healthcare Sector’, Big Data and Cognitive Computing, Multidisciplinary Digital Publishing Institute (MDPI), 8.

- AlGeddawy, T. & ElMaraghy, H. (2013) ‘Reactive design methodology for product family platforms, modularity and parts integration’, CIRP Journal of Manufacturing Science and Technology, 6, 34–43.

- Bortolini, M., Calabrese, F., Galizia, F.G. & Regattieri, A. (2023) ‘A two-step methodology for product platform design and assessment in high-variety manufacturing’, International Journal of Advanced Manufacturing Technology, Springer Science and Business Media Deutschland GmbH, 126, 3923–3948.

- Chen, H., Chiang, R.H.L., Storey, V.C., Lindner, C.H. & Robinson, J.M. (2012) ‘Business Intelligence and Analytics: From Big Data to Big Impact’, MIS Quarterly.

- Chiu, M.C. & Okudan, G. (2014) ‘An investigation on the impact of product modularity level on supply chain performance metrics: An industrial case study’, Journal of Intelligent Manufacturing, 25, 129–145.

- Collier, D.A. (1981) ‘The measurement and operating benefits of component part commonality’, Decision Sciences, 12, 85–96.

- Collier, Z.A. & Lambert, J.H. (2020) ‘Managing obsolescence of embedded hardware and software in secure and trusted systems’, Frontiers of Engineering Management, Higher Education Press Limited Company, 7, 172–181.

- Fixson, S.K. (2005) ‘Product architecture assessment: A tool to link product, process, and supply chain design decisions’, Journal of Operations Management, 23, 345–369.

- Fixson, S.K. (2007) ‘Modularity and Commonality Research: Past Developments and Future Opportunities’, Concurrent Engineering, 15, 85–111.

- Galizia, F.G., ElMaraghy, H., Bortolini, M. & Mora, C. (2020) ‘Product platforms design, selection and customisation in high-variety manufacturing’, International Journal of Production Research, Taylor and Francis Ltd., 58, 893–911.

- Gauss, L., Lacerda, D.P. & Cauchick Miguel, P.A. (2021) ‘Module-based product family design: systematic literature review and meta-synthesis’, Journal of Intelligent Manufacturing, Springer, 265–312.

- Gebhardt, M., Spieske, A., Kopyto, M. & Birkel, H. (2022) ‘Increasing global supply chains’ resilience after the COVID-19 pandemic: Empirical results from a Delphi study’, Journal of Business Research, Elsevier Inc., 150, 59–72.

- Gosling, J. & Naim, M.M. (2009) ‘Engineer-to-order supply chain management: A literature review and research agenda’, International Journal of Production Economics, 122, 741–754.

- Greve, E., Fuchs, C., Hamraz, B., Windheim, M., Rennpferdt, C., Schwede, L.N. & Krause, D. (2022) ‘Knowledge-Based Decision Support for Concept Evaluation Using the Extended Impact Model of Modular Product Families’, Applied Sciences (Switzerland), MDPI, 12.

- Haapasalo, H., Harkonen, J., Tolonen, A. & Hannila, H. (2019) ‘Product and supply chain related data, processes and information systems for product portfolio management’, International Journal of Product Lifecycle Management, 12, 1.

- Hannila, H., Koskinen, J., Harkonen, J. & Haapasalo, H. (2019) ‘Product-level profitability’, Journal of Enterprise Information Management, 33, 214–237.

- Hannila, H., Koskinen, J., Harkonen, J. & Haapasalo, H. (2020) ‘Product-level profitability: Current challenges and preconditions for data-driven, fact-based product portfolio management’, Journal of Enterprise Information Management, Emerald Group Holdings Ltd., 33, 214–237.

- Hapuwatte, B.M., Badurdeen, F., Bagh, A. & Jawahir, I.S. (2022) ‘Optimizing sustainability performance through component commonality for multi-generational products’, Resources, Conservation and Recycling, Elsevier B.V., 180.

- Hillier, M.S. (2002) ‘Using commonality as backup safety stock’, European Journal of Operational Research, 136, 353–365.

- Hossain, M.S., Chakrabortty, R.K., Elsawah, S. & Ryan, M.J. (2023) ‘Modelling and application of hierarchical joint optimisation for modular product family and supply chain architecture’, International Journal of Advanced Manufacturing Technology, Springer Science and Business Media Deutschland GmbH, 126, 947–971.

- Iakymenko, N., Romsdal, A., Alfnes, E., Semini, M. & Strandhagen, J.O. (2020) ‘Status of engineering change management in the engineer-to-order production environment: insights from a multiple case study’, International Journal of Production Research, Taylor and Francis Ltd., 58, 4506–4528.

- Jeet, V. & Kutanoglu, E. (2018) ‘Part commonality effects on integrated network design and inventory models for low-demand service parts logistics systems’, International Journal of Production Economics, Elsevier B.V., 206, 46–58.

- Ji, X. & Abdoli, S. (2023) ‘Challenges and Opportunities in Product Life Cycle Management in the Context of Industry 4.0’, in Procedia CIRP, Elsevier B.V., 29–34.

- Jiao, J., Simpson, T.W. & Siddique, Z. (2007) ‘Product family design and platform-based product development: A state-of-the-art review’, Journal of Intelligent Manufacturing, 18, 5–29.

- Jonnalagedda, S. & Saranga, H. (2017) ‘Commonality decisions when designing for multiple markets’, European Journal of Operational Research, Elsevier B.V., 258, 902–911.

- Kantola, K., Vanhanen, J. & Tolvanen, J. (2022) ‘Mind the product owner: An action research project into agile release planning’, Information and Software Technology, Elsevier B.V., 147.

- Koskinen, J., Mustonen, E., Harkonen, J. & Haapasalo, H. (2020) ‘Product-level profitability analysis and decision-making: opportunities of IT application-based approach’, International Journal of Product Lifecycle Management, 12, 210.

- Kristjansdottir, K., Shafiee, S. & Hvam, L. (2017) ‘How to Identify Possible Applications of Product Configuration Systems in Engineer-to-Order Companies’, International Journal of Industrial Engineering and Management (IJIEM).

- Kurniadi, K.A. & Ryu, K. (2021) ‘Development of multi-disciplinary green-bom to maintain sustainability in reconfigurable manufacturing systems’, Sustainability (Switzerland), MDPI AG, 13.

- Lee, J., Lim, J., Hong, Y.S. & Kang, C. (2025) ‘Variant mode and effects analysis for effective product family expansion under modular architecture’, Journal of Engineering Design, Taylor and Francis Ltd.

- MacCarthy, B.L. & Pasley, R.C. (2021) ‘Group decision support for product lifecycle management’, International Journal of Production Research, Taylor and Francis Ltd., 59, 5050–5067.

- Menezes, M.B.C., Jalali, H. & Lamas, A. (2021) ‘One too many: Product proliferation and the financial performance in manufacturing’, International Journal of Production Economics, Elsevier B.V., 242.

- Mertens, K.G., Rennpferdt, C., Greve, E., Krause, D. & Meyer, M. (2023) ‘Reviewing the intellectual structure of product modularization: Toward a common view and future research agenda’, Journal of Product Innovation Management, John Wiley and Sons Inc, 40, 86–119.

- Myrodia, A., Hvam, L., Sandrin, E., Forza, C. & Haug, A. (2021) ‘Identifying variety-induced complexity cost factors in manufacturing companies and their impact on product profitability’, Journal of Manufacturing Systems, Elsevier B.V., 60, 373–391.

- Ollila, S. & Yström, A. (2020) Action research for innovation management: three benefits, three challenges, and three spaces.

- Romero Rojo, F.J., Roy, R. & Shehab, E. (2010) ‘Obsolescence management for long-life contracts: State of the art and future trends’, International Journal of Advanced Manufacturing Technology, 49, 1235–1250.

- Roy, M.A. & Abdul-Nour, G. (2024) ‘Integrating Modular Design Concepts for Enhanced Efficiency in Digital and Sustainable Manufacturing: A Literature Review’, Applied Sciences (Switzerland), Multidisciplinary Digital Publishing Institute (MDPI), 14.

- Saeed, K.A., Malhotra, M.K. & Abdinnour, S. (2019) ‘How supply chain architecture and product architecture impact firm performance: An empirical examination’, Journal of Purchasing and Supply Management, Elsevier Ltd., 25, 40–52.

- Shih, H.M. (2014) ‘Migrating product structure bill of materials Excel files to STEP PDM implementation’, International Journal of Information Management, Elsevier Ltd., 34, 489–516.

- Sierra-Fontalvo, L., Gonzalez-Quiroga, A. & Mesa, J.A. (2023) ‘A deep dive into addressing obsolescence in product design: A review’, Heliyon, Elsevier Ltd., 9.

- Simpson, T.W., Bobuk, A., Slingerland, L.A., Brennan, S., Logan, D. & Reichard, K. (2012) ‘From user requirements to commonality specifications: An integrated approach to product family design’, Research in Engineering Design, 23, 141–153.

- Staśkiewicz, A.M., Hvam, L. & Haug, A. (2022) ‘A procedure for reducing stock–keeping unit variety by linking internal and external product variety’, CIRP Journal of Manufacturing Science and Technology, Elsevier Ltd., 37, 344–358.

- Terzi, S., Bouras, A., Dutta, D., Garetti, M. & Kiritsis, D. (2010) ‘Product lifecycle management – from its history to its new role’, International Journal of Product Lifecycle Management.

- Thevenot, H.J. & Simpson, T.W. (2007) ‘A comprehensive metric for evaluating component commonality in a product family’, Journal of Engineering Design, 18, 577–598.

- Trattner, A., Hvam, L., Forza, C. & Herbert-Hansen, Z.N.L. (2019) ‘Product complexity and operational performance: A systematic literature review’, CIRP Journal of Manufacturing Science and Technology, Elsevier Ltd., 69–83.

- Wong, H., Lesmono, D., Chhajed, D. & Kim, K. (2019) ‘On the evaluation of commonality strategy in product line design: The effect of valuation change and distribution channel structure’, Omega (United Kingdom), Elsevier Ltd., 83, 14–25.

- Zhang, L.L. (2015) ‘A literature review on multitype platforming and framework for future research’, International Journal of Production Economics, Elsevier B.V., 1–12.

- Zhang, L.L., Jiao, R.J., Huang, G. & MacCarthy, B.L. (2025) ‘Extended guest editorial: Smart product platforming in the industry 4.0 era and beyond’, International Journal of Production Economics, Elsevier B.V., 280.

- Zhang, Y., Yang, Z., Ma, X., Dong, W., Dong, D., Tan, Z. & Zhang, S. (2019) ‘Exploration and implementation of commonality valuation method in commercial aircraft family design’, Chinese Journal of Aeronautics, 32, 1828–1846.

| Literature stream | What the stream focuses on (definition) | Typical outputs in the literature | Operationalization gap (what’s missing in day-to-day work) | What PCAT operationalizes (view/feature) | Representative sources (examples) |
| --- | --- | --- | --- | --- | --- |
| Commonality indices & valuation | Quantifying component reuse/commonality and linking it to performance outcomes (cost, inventory, efficiency). | Indices/metrics, cost or inventory models, portfolio-level assessments | Metrics require clean aggregation + joins across many BOMs; rarely embedded in engineers’ routine workflow/tools. | Compare & Filter: “Used in X of Y”, usage %, sharedness bands; sortable portfolio list. | Collier (1981); Hillier (2002); Jeet & Kutanoglu (2018); Zhang et al. (2019); Hapuwatte et al. (2022). |
| Trade-off studies (variety–standardization–differentiation) | The benefits and limits of standardization/commonality versus product variety and differentiation. | Conceptual trade-offs, managerial implications, sometimes empirical relationships. | Hard to translate trade-offs into concrete “what to check in BOM/procurement data” during decisions. | Attribute slicing in Compare & Filter (Make-Buy, Class, Procurement Type) + BOM-level focus to inspect variety vs reuse. | Jonnalagedda & Saranga (2017) |
| Product architecture, modularity & platforms | How modular product architectures/platforms enable reuse, faster development, and responsiveness. | Frameworks, architectural models, platform design methods; often early-phase guidance. | Frameworks stay conceptual; rarely turned into simple portfolio analytics that highlight “where reuse breaks” across products. | Commonality Matrix: presence/absence + quantity-consistency status across products; highlights gaps undermining modular reuse. | Fixson (2005); Simpson et al. (2012); AlGeddawy & ElMaraghy (2013); Chiu & Okudan (2014); Galizia et al. (2020). |
| Industrial practice & enterprise-system tool support | What ERP/PLM do well (transactional, per-product views) vs what they don’t (repeatable cross-product analytics). | BOM printouts/reports, per-product views; workarounds via Excel/structured query language (SQL); bespoke vendor reports. | Data fragmentation + high manual effort; results are ad hoc, non-repeatable, and dependent on specialist skills. | Read-only tool over exports + two portfolio views; drill-through + export/print for handoff; positioned vs Excel and ERP/PLM standard reports. | Haapasalo et al. (2019); Hannila et al. (2019); Iakymenko et al. (2020); Kurniadi & Ryu (2021); Al-Assaf et al. (2024). |
| Lifecycle risk / obsolescence-triggered rework | Commonality risks that surface late (service/production) when parts become obsolete or inconsistent. | Reactive problem descriptions; risk/obsolescence framing; lifecycle management discussions. | Signals exist in item master/procurement fields but aren’t routinely connected to “how widely reused is this part?” across products. | (If data exists) add slicers/flags (end-of-life (EOL)/lifecycle) + reuse ranking to surface “high-reuse, high-risk” items. | Romero Rojo et al. (2010); Collier & Lambert (2020) |

| Attribute | Neutral fact | Disclosure note |
| --- | --- | --- |
| Company size (headcount) | Medium-sized enterprise (50–249 employees) | EU small and medium-sized enterprise (SME) size class; exact headcount withheld |
| Geography | Europe | Country withheld for confidentiality |
| Industry | Laser manufacturing / photonics | High-mix, complex engineered products |
| Business model | ETO with some Configure-to-Order (CTO) components | Typical for customized instruments |
| Product-line scope | Supercontinuum sources (single product line) | Focused to avoid cross-line identification |
| Portfolio within line | Several released variants | Exact count withheld; analysis at aggregate level |
| Lifecycle traits | Long service life; obsolescence risk relevant | Motivation for portfolio-level reuse visibility |
| Data used | ERP/PLM BOM exports + procurement attributes | Masked IDs, no customer/order data |
| User roles involved | R&D/Engineering, PMO/Business Process Development, procurement | Roles only; no names or teams disclosed |
| Study role of PCAT | Read-only analytics layer (no write-back) | Built from exported, de-identified data |

| AR stage | Key activities with participants | Evidence / artefacts captured | Outputs used later |
| --- | --- | --- | --- |
| Diagnosis | Mapped current cross-product analysis practice and pain points. Collected examples of how engineers/PMO/business process development participants check reuse, duplicates, and procurement consistency (Excel exports, printed BOMs). Confirmed that existing ERP/PLM reports are largely product-specific and do not provide portfolio-level reuse views. | Interview/workshop notes; sample Excel workbooks; ERP/PLM BOM printouts; example ad-hoc query outputs; initial problem statement draft. | Problem formulation; initial task catalogue draft (T1–T9); initial requirements themes. |
| Planning | Co-defined PCAT scope and requirements. Derived preliminary task list (T1–T9) from artefact analysis of existing Excel sheets and ERP/PLM printouts. Agreed tool constraints: read-only, operates on exports, no ERP/PLM write-back. Selected Power BI for rapid iteration. Specified core functions and views: portfolio comparison across released products; Compare & Filter and Commonality Matrix; drill-through to BOM line detail; slicing by procurement attributes (Procurement Type, Make-Buy, Class); export/print for review use. | Consolidated requirements list; refined task list T1–T9; workshop notes; solution outline (views, fields, filters); data input specification (BOM + item-master attributes + version flags). | PCAT requirements specification; development plan; initial view mock-ups/configuration plan. |
| Action | Implemented first PCAT prototype as a lightweight analytics layer. Built an Extract–Transform–Load (ETL) routine from ERP/PLM exports (multi-level BOM + item-master attributes) into a Power BI data model. Implemented Compare & Filter: product selection; BOM depth (first-level vs full structure); version sets (Newest vs Newest-approved); sharedness metrics (“Used in X of Y”, usage %); attribute slicers (Procurement Type, Make-Buy, Class). Implemented Commonality Matrix: items as rows/products as columns; presence/absence + quantity-consistency status; drill-through to occurrences; enabled export (CSV/XLSX) and print (PDF). | Power BI data model and report; ETL/transformation notes; screenshots of views; test exports (CSV/XLSX) and PDF prints; prototype configuration notes. | Working PCAT prototype; short user manual (core functions and usage steps). |
| Evaluation / reflection | Internal walkthrough of tasks T1–T9 in the prototype with practitioners. Collected feedback on terminology, layout, missing functions, and usability issues (e.g., legend clarity for matrix statuses). Agreed minor refinements: sharedness labels (“Used in X of Y”); BOM-level toggles; filter presets for typical review scenarios; legend placement and reset guidance. | Session notes; annotated UI screenshots; consolidated change list; updated view labels and presets. | Updated prototype baseline for Cycle 2; refined task definitions; updated user manual. |

| AR stage | Key activities with participants | Evidence / artefacts captured | Outputs used later |
| --- | --- | --- | --- |
| Diagnosis | Revisited work practices with prototype available to define realistic evaluation scenarios. Conducted meetings to elicit concrete “pain scenarios” (e.g., compare new variant vs existing, check missing items/quantity inconsistencies, review sourcing setups for highly reused components). Collected representative ERP/PLM exports (incl. versions) and the spreadsheets/printouts used for current cross-product checks. Confirmed nine recurring tasks T1–T9; selected T1–T7 and T9 for detailed user testing. | Diagnosis meeting notes; representative ERP/PLM BOM exports + item-master extracts; legacy Excel files and ERP/PLM printouts; finalized task catalogue T1–T9; scenario candidates list. | Baseline description of current practice; evaluation scenario set; data specification for evaluation. |
| Planning | Structured evaluation and evidence collection. Co-designed scenario scripts mapping typical questions to tasks T1–T7 (and export/print task T9). Defined acceptance criteria: ability to complete tasks without manual joins; clarity of outputs for review meetings; effort relative to Excel/ERP/PLM. Agreed data-collection artefacts: bookmarked views; exported CSV/XLSX; PDF printouts; observation notes. Linked evaluation foci EF1–EF4 to data sources and analysis methods. | Scenario scripts; acceptance criteria (EF1–EF4); data-collection protocol; planned outputs list (bookmarks, exports, PDFs); evaluation plan (Table 6 mapping question→data→analysis→evidence→output). | Implementation and evaluation plan; explicit EF1–EF4 traceability to data and analysis. |
| Action | Deployed refreshed prototype for evaluation sessions. Refreshed Power BI model with up-to-date exports. Ran scenario-based think-aloud sessions where participants executed tasks: product set selection/sharedness lists (T1); attribute slicing (T2); BOM depth and version toggles (T3–T4); reuse ranking focus (T5); matrix checks for missing items/quantity inconsistencies (T6–T7); export/print for follow-up (T9). In parallel, replicated the same tasks using existing Excel workbooks and standard ERP/PLM reports to document feasibility and effort for the functional comparison. | Session observation notes; bookmarked PCAT views; CSV/XLSX exports; PDF printouts; screenshots; time/step counts (PCAT vs Excel/ERP/PLM); comparison notes from parallel replications. | Empirical artefact set for analysis; documented basis for functional comparison (comparison matrix in Section 5/Table 9). |
| Evaluation / reflection | Combined functional comparison with qualitative analysis. Coded task support for T1–T9 as Yes/Partly/No for PCAT, Excel, and standard ERP/PLM based on actual attempts and user sessions (comparison table). Analyzed session artefacts for evidence of (i) error detection (missing components/qty inconsistencies), (ii) procurement insight (slicing by attributes revealing heterogeneous sourcing), and (iii) reuse visibility (sharedness rankings shaping review focus). Collected participant reflections on preparation effort and confidence vs Excel-based workarounds. | Completed Yes/Partly/No coding matrix with justification notes; annotated exports/PDFs; session notes with quotations/paraphrases; short vignettes/examples for Section 5. | Findings on usefulness and limitations (Section 5); implications for practice and future work (Section 6); refinement backlog (if any). |

| Participant | Role / Title | Function group | Session format | Approx. duration |
| --- | --- | --- | --- | --- |
| P1 | Senior Optical Engineer | R&D / Engineering | Walkthrough + think-aloud tasks (T1–T7, export/print as needed) | ~30 min |
| P2 | Engineering Manager - Products & ATE | R&D / Engineering | Walkthrough + think-aloud tasks (T1–T7, export/print as needed) | ~30 min |
| P3 | Senior Optical Engineer | R&D / Engineering | Walkthrough + think-aloud tasks (T1–T7, export/print as needed) | ~30 min |
| P4 | Senior R&D Process Engineering Manager | PMO / Business Process Development | Walkthrough + think-aloud tasks (T1–T7, export/print as needed) | ~30 min |
| P5 | Procurement Engineer | Procurement | Walkthrough + think-aloud tasks (T1–T7, export/print as needed) | ~30 min |

| Evaluation focus | Data used | Analysis operations | Evidence captured | Output |
| --- | --- | --- | --- | --- |
| EF1: Does PCAT provide portfolio-level visibility that Excel/ERP can’t readily offer? | PCAT views; Excel workbooks; ERP/PLM reports | Comparative functionality coding of T1–T9 (Yes/Partly/No + note) | Completed comparison matrix; screenshots | Table 9 in Section 5 (comparison); narrative summary |
| EF2: Does PCAT help detect duplicates/missing items/qty inconsistencies? | Session artefacts; PCAT exports | Thematic content analysis of session artefacts for “Error detection,” “Procurement insight,” “Reuse visibility” | Annotated exports; bookmarked filters | Result examples in Section 5; printed PDFs |
| EF3: Can users slice by procurement attributes to support sourcing discussions? | PCAT slicers; session notes | Usage tracing of slicers (Procurement Type/Make-Buy/Class) | Screenshot sequences; timestamps | Short vignettes in Section 5 |
| EF4: Preparation effort vs baseline | Time estimates; steps | Task decomposition (steps, manual joins) | Step counts; example formulas | Effort commentary in Section 5 |

| Task ID & name | PCAT functionality used | Specific user operations (Power BI) | Output / artefact |
| --- | --- | --- | --- |
| T1. Cross-product sharing list | Compare & Filter view | Multi-select products → adjust slicers (Procurement Type / Make-Buy / Class / BOM level) → sort “Used in” | Item table with Used in X of Y and usage % |
| T2. Attribute-sliced comparison | Compare & Filter view | Slice by Procurement Type / Make-Buy / Class → view affected items | Attribute-filtered item list for engineering/procurement |
| T3. BOM-level focus | Compare & Filter view | Toggle First-level vs Full BOM structure | Narrowed list (e.g., top-level only) |
| T4. Version selection | Compare & Filter view | Choose Newest or Newest approved | Version-consistent comparison basis |
| T5. Sharedness summary | Compare & Filter view (pie) | After selecting products/filters, read pie bands | Share-of-BOM summary: in all / 50–<100% / <50% / unique |
| T6. Commonality Matrix | Commonality Matrix view | Select products → (optional) paste list of Item IDs → read cell statuses | Matrix with cell legends: all/same qty, all/different qty, partial/same, partial/different |
| T7. Missing-component detection | Commonality Matrix view | Scan for blanks/partials per row → filter to gaps | Gap list: items missing from specific products |
| T8. Quantity consistency check | Commonality Matrix view | Sort/filter by “different qty” status | Irregular-quantity list per product |
| T9. Export / print | Both views | Export → CSV/XLSX; Print → PDF | Shareable files / meeting pack |
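
As a rough illustration of the outputs of T7 and T8, the sketch below builds an item-by-product quantity matrix from BOM occurrences and derives a gap list and a quantity-mismatch list. It is written in Python rather than Power BI, all IDs and quantities are invented, and summing repeated BOM lines into one quantity per product and item is an assumption made here, not a documented PCAT rule.

```python
from collections import defaultdict

# Hypothetical BOM occurrences: (product_id, item_id, quantity).
occurrences = [
    ("P-A", "lens-01", 2), ("P-A", "screw-M3", 8), ("P-A", "screw-M3", 4),
    ("P-A", "fiber-07", 1),
    ("P-B", "lens-01", 2), ("P-B", "screw-M3", 8),
    ("P-C", "lens-01", 1),
]


def build_matrix(rows):
    """Items as rows, products as columns; repeated lines are summed (assumption)."""
    matrix = defaultdict(dict)
    for product, item, qty in rows:
        matrix[item][product] = matrix[item].get(product, 0) + qty
    return dict(matrix)


def gap_list(matrix, products):
    """T7-style output: items present in some selected products but missing in others."""
    gaps = {}
    for item, qty_by_product in matrix.items():
        missing = [p for p in products if p not in qty_by_product]
        if missing and len(missing) < len(products):
            gaps[item] = missing
    return gaps


def quantity_mismatches(matrix, products):
    """T8-style output: items whose quantity differs between products that contain them."""
    mismatches = {}
    for item, qty_by_product in matrix.items():
        present = {p: qty_by_product[p] for p in products if p in qty_by_product}
        if len(set(present.values())) > 1:
            mismatches[item] = present
    return mismatches


selection = ["P-A", "P-B", "P-C"]
qty_matrix = build_matrix(occurrences)
print("Gaps:", gap_list(qty_matrix, selection))
print("Different qty:", quantity_mismatches(qty_matrix, selection))
```

Each entry in either list still points back to specific products, so a reviewer can follow it to the underlying BOM lines, in the spirit of the drill-through function.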

| Term | Operational definition | Data required | Example output in PCAT |
| --- | --- | --- | --- |
| Exact duplicate | Same part number (optionally including revision) appears in multiple selected products. | Part number (and revision if used), product set, BOM occurrences | Appears multiple times in “Used in X of Y” list / drill-through occurrences |
| Sharedness (“Used in X of Y”) | X = number of selected products containing the item; Y = size of selected product set. | Product selection; BOM occurrences | “Used in 3 of 5” |
| Item usage % | X/Y × 100 for the current selection. | Same as above | “60%” |
| Commonality Matrix status | Present in all/same qty; present in all/different qty; partial/same qty; partial/different qty. | Per-product quantities per item | Matrix cell status/legend class |
| BOM level | First-level includes top-level items; full structure includes all BOM levels. | BOM depth tagging / multilevel BOM | “First-level” vs “Full BOM” toggle |
| Version set | Newest vs Newest-approved controls which revision of each product/BOM is included. | Version flags in export | Version toggle |
| Attribute slicing | Procurement Type, Make-Buy, Class used as filters (additional attributes if present). | Item-master attributes | Slicers + filtered item list |
| Lifecycle/EOL | Not included in current prototype; if an EOL flag exists in exports, it can be added as a slicer (out of scope here). | EOL/lifecycle field (if available) | Lifecycle slicer (future work) |
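
The four Commonality Matrix statuses defined above can be expressed as a small classification rule. The following Python sketch is one possible reading of that definition, not the Power BI logic used in PCAT; the function name `matrix_status`, the extra "absent" label for items found in none of the selected products, and the sample IDs are assumptions introduced for illustration.

```python
def matrix_status(qty_by_product, selected_products):
    """Classify one item from its per-product quantities.

    qty_by_product maps product ID to the item's quantity in that product;
    a product that does not contain the item is simply missing from the dict.
    selected_products is expected to hold unique product IDs.
    """
    present = {p: qty_by_product[p] for p in selected_products if p in qty_by_product}
    if not present:
        return "absent"  # assumption: not one of the four documented statuses
    coverage = "all" if len(present) == len(selected_products) else "partial"
    consistency = "same qty" if len(set(present.values())) == 1 else "different qty"
    return f"{coverage}/{consistency}"


products = ["P-A", "P-B", "P-C"]
examples = {
    "lens-01": {"P-A": 2, "P-B": 2, "P-C": 2},
    "lens-02": {"P-A": 2, "P-B": 2, "P-C": 1},
    "screw-M3": {"P-A": 8, "P-B": 8},
    "screw-M4": {"P-A": 12, "P-B": 8},
}
for item, quantities in examples.items():
    print(item, "->", matrix_status(quantities, products))
```

Note that an item present in only one selected product is trivially "partial/same qty" under this rule, since there is nothing to compare its quantity against.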

| Modeling-related task | PCAT (Power BI prototype)* | Excel / spreadsheets* | ERP/PLM systems standard reports* |
| --- | --- | --- | --- |
| T1. Select multiple products and list components shared across them (with “used in N of M” counts) | Yes - Compare & Filter view lists items and “Used in X of Y” | Partly - pivots/joins; heavy manual setup | No - reports are per-product |
| T2. Filter by Procurement Type / Make-Buy / Class | Yes - slicers on those attributes | Partly - VLOOKUP/PowerQuery; fragile | No - typically split across modules |
| T3. Limit to BOM level (first-level vs full structure) | Yes - BOM-level slicer | Partly - needs level tagging & formulas | No - standard BOM printouts only |
| T4. Choose version set (Newest vs Newest-approved) | Yes - version toggle | Yes (with limitations) - only if version flags present | No - usually one active view |
| T5. Summarize sharedness across selected products (pie: in all / 50–<100% / <50% / unique) | Yes - pie chart on sharedness bands | Yes (with limitations) - build buckets manually | No - not a standard portfolio view |
| T6. Per-item Commonality Matrix (products in columns; presence/qty status by cell) | Yes - matrix shows present in all/same qty, present in all/different qty, partial/same, partial/different | Yes (with limitations) - complex pivot; formatting overhead | No - per-product only |
| T7. Missing components between compared products | Yes - empty/partial cells highlight gaps | Yes (with limitations) - manual comparison | No - not available |
| T8. Quantity consistency check across products (same vs different qty) | Yes - cell status encodes qty consistency | Yes (with limitations) - manual rules | No - not available |
| T9. Export/print selected views for handoff | Yes - Export CSV/XLSX; Print to PDF | Yes - native | Yes (with limitations) - fixed layouts |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).