B. Experimental Results

This paper first conducts a comparative experiment; the results are shown in Table 1.

From Table 1, it can be observed that traditional sequence modeling methods such as LSTM show relatively lower performance across all metrics. The AUC is 0.871 and the ACC is 0.843, indicating limited ability to capture the complex features of financial data. Because financial data exhibit strong nonlinearity and multi-scale dependencies, LSTM faces limitations in long-sequence modeling, which also leads to lower F1-Score and Precision values. These results suggest that relying solely on recurrent structures makes it difficult to achieve both stability and accuracy in privacy-preserving financial modeling tasks.

In contrast, the Transformer model shows advantages in global dependency modeling due to its self-attention mechanism. The AUC increases to 0.902 and the ACC rises to 0.868, with corresponding improvements in F1-Score and Precision. This demonstrates that the Transformer is better at capturing cross-temporal dependencies and feature interactions, and that it can better characterize the dynamic patterns in financial data. However, its performance is still affected by heterogeneous data and privacy constraints, which indicates that relying only on global attention is insufficient in real federated learning environments.

The 1DCNN and ConvNextv2 models represent different convolution-based approaches. 1DCNN performs better than LSTM in local pattern extraction, with an AUC of 0.889. However, because it lacks effective modeling of long-range dependencies, its overall performance is slightly lower than that of the Transformer. ConvNextv2, an improved convolutional network with a modern architecture design, outperforms the previous models. It achieves an AUC of 0.915 and an ACC of 0.876, showing advantages in handling high-dimensional and complex data. Yet in privacy-preserving financial technology tasks, convolutional structures are still constrained by insufficient global modeling and cannot fully address the challenges of data that are not independent and identically distributed (non-IID).

Overall, the proposed method outperforms all baseline models in every metric. The AUC increases to 0.943 and the ACC reaches 0.897, which is 0.028 and 0.021 higher, respectively, than ConvNextv2, the strongest baseline (0.915 and 0.876). The F1-Score and Precision are 0.885 and 0.873, respectively. These results demonstrate that combining the federated learning framework with privacy-preserving mechanisms not only reduces the privacy risks of data sharing but also achieves better predictive performance in heterogeneous data environments. This supports the practical value and potential applicability of the proposed method in financial technology scenarios.
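For readers who want to reproduce the four reported metrics, the following is a minimal sketch of how AUC, ACC, F1-Score, and Precision can be computed for a binary classifier. It assumes binary labels in {0, 1}, real-valued scores, and a decision threshold of 0.5; the paper does not state its threshold or evaluation code, so the function names (`confusion_counts`, `auc_score`, `evaluate`) and the threshold are illustrative assumptions.

```python
from typing import Dict, Sequence, Tuple


def confusion_counts(y_true: Sequence[int], y_pred: Sequence[int]) -> Tuple[int, int, int, int]:
    """Return (tp, fp, tn, fn) for binary labels in {0, 1}."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return tp, fp, tn, fn


def auc_score(y_true: Sequence[int], scores: Sequence[float]) -> float:
    """AUC as the probability that a random positive is scored above a
    random negative; ties count as 0.5. Quadratic in the sample count,
    which is fine for a sketch but slow for large test sets."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative sample")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def evaluate(y_true: Sequence[int], scores: Sequence[float], threshold: float = 0.5) -> Dict[str, float]:
    """Compute the four metrics; the 0.5 threshold is an assumption."""
    y_pred = [1 if s >= threshold else 0 for s in scores]
    tp, fp, tn, fn = confusion_counts(y_true, y_pred)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = 2 * precision * recall / (precision + recall) if (precision + recall) else 0.0
    acc = (tp + tn) / len(y_true)
    return {"AUC": auc_score(y_true, scores), "ACC": acc, "F1": f1, "Precision": precision}
```

Note that AUC is threshold-independent, whereas ACC, F1-Score, and Precision all depend on the chosen threshold, so comparisons across models are only meaningful when the same threshold rule is used.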

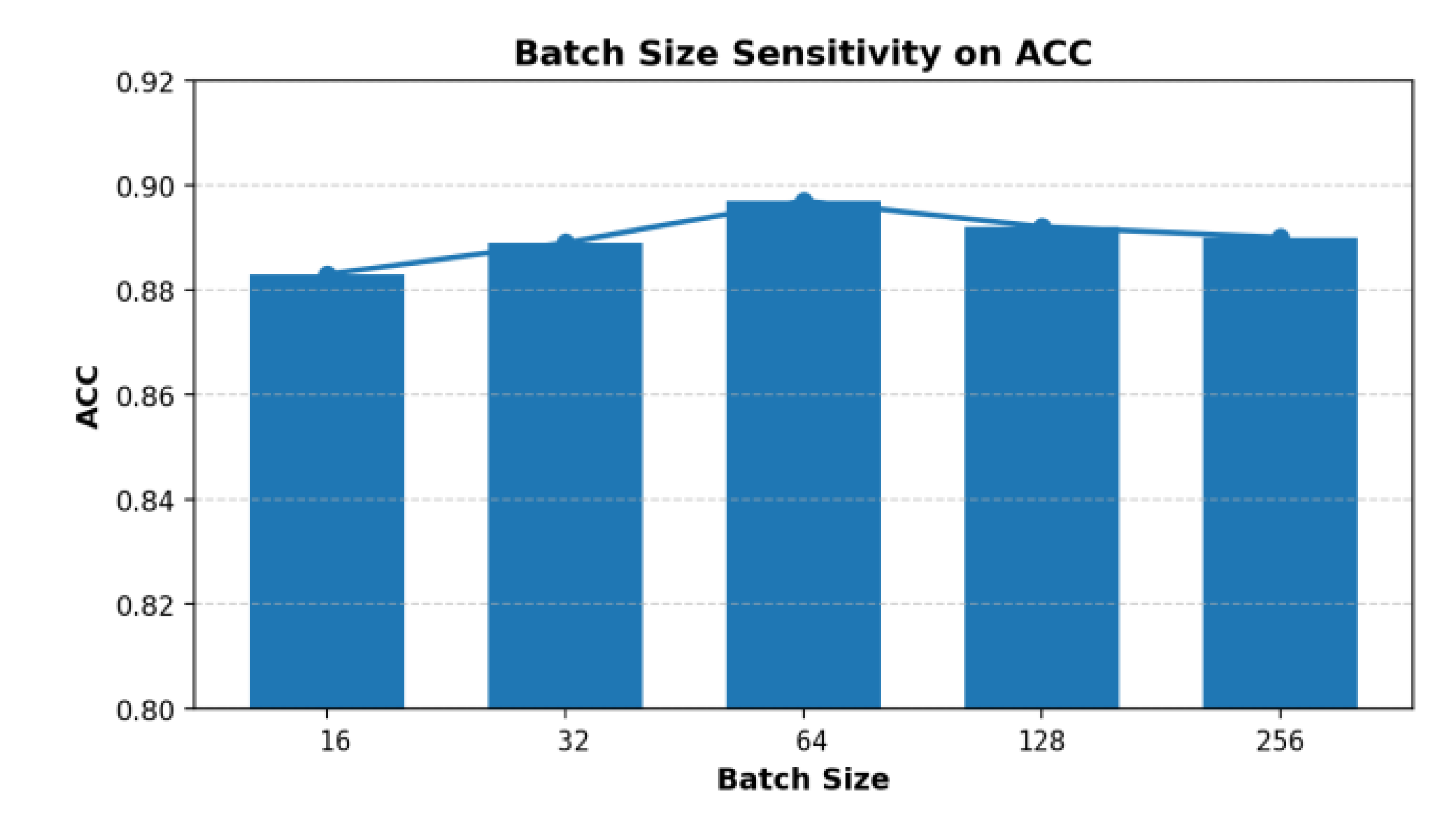

This paper also presents an experiment on the sensitivity of the ACC metric to batch size; the results are shown in Figure 2.

From Figure 2, it can be observed that batch size has a direct impact on the ACC metric. As the batch size increases from 16 to 64, the ACC gradually rises and reaches its highest value of 0.897 at a batch size of 64. This indicates that a moderate batch size helps the model balance gradient estimation and parameter updates, thereby improving prediction performance under privacy-preserving conditions.

When the batch size increases further to 128 and 256, the ACC shows a slight decline. This suggests that an excessively large batch may reduce the model’s ability to capture details of the data distribution during local training, which weakens the generalization ability of the global model. In particular, within the federated learning framework, the data held by participants are often not independent and identically distributed (non-IID), and too large a batch size reduces the sensitivity of local updates to heterogeneous features.

On the other hand, too small a batch size can cause unstable gradient updates and increase variance during aggregation. The experimental results show that the ACC at batch sizes of 16 and 32 is lower than at 64. This indicates that although smaller batches increase the update frequency, they do not guarantee stable convergence of the model. This further confirms that in financial technology scenarios, a proper choice of batch size is a key factor in improving global model performance.

In summary, the experimental results indicate that in a privacy-preserving federated learning framework, ACC does not change monotonically with batch size; instead, there is an optimal range, around 64 in this experiment. Within this range, the model can achieve both stability of local updates and robustness of global aggregation, thereby reaching the best ACC. This finding provides a useful reference for hyperparameter selection and model optimization. It also highlights the importance of sensitivity experiments under privacy-preserving constraints.
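A sensitivity experiment of this kind can be organized as a simple one-dimensional sweep. The sketch below is an illustration only: `sweep_batch_size` and its `train_and_eval` argument are hypothetical names, and `train_and_eval` stands in for whatever routine trains the federated model at a given batch size and returns its ACC, which the paper does not describe.

```python
from typing import Callable, Dict, Iterable, Tuple


def sweep_batch_size(
    candidates: Iterable[int],
    train_and_eval: Callable[[int], float],
) -> Tuple[int, Dict[int, float]]:
    """Evaluate each candidate batch size and return the best one together
    with all scores. `train_and_eval` is a hypothetical callable that runs
    one full training job at the given batch size and returns the ACC."""
    results = {batch_size: train_and_eval(batch_size) for batch_size in candidates}
    if not results:
        raise ValueError("at least one candidate batch size is required")
    # Pick the batch size with the highest returned score.
    best = max(results, key=results.get)
    return best, results
```

With the candidate set used in this section, a call would look like `sweep_batch_size([16, 32, 64, 128, 256], run_experiment)`, where `run_experiment` is again a placeholder. Keeping all other hyperparameters fixed during the sweep is what makes the resulting curve interpretable as a sensitivity to batch size alone.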

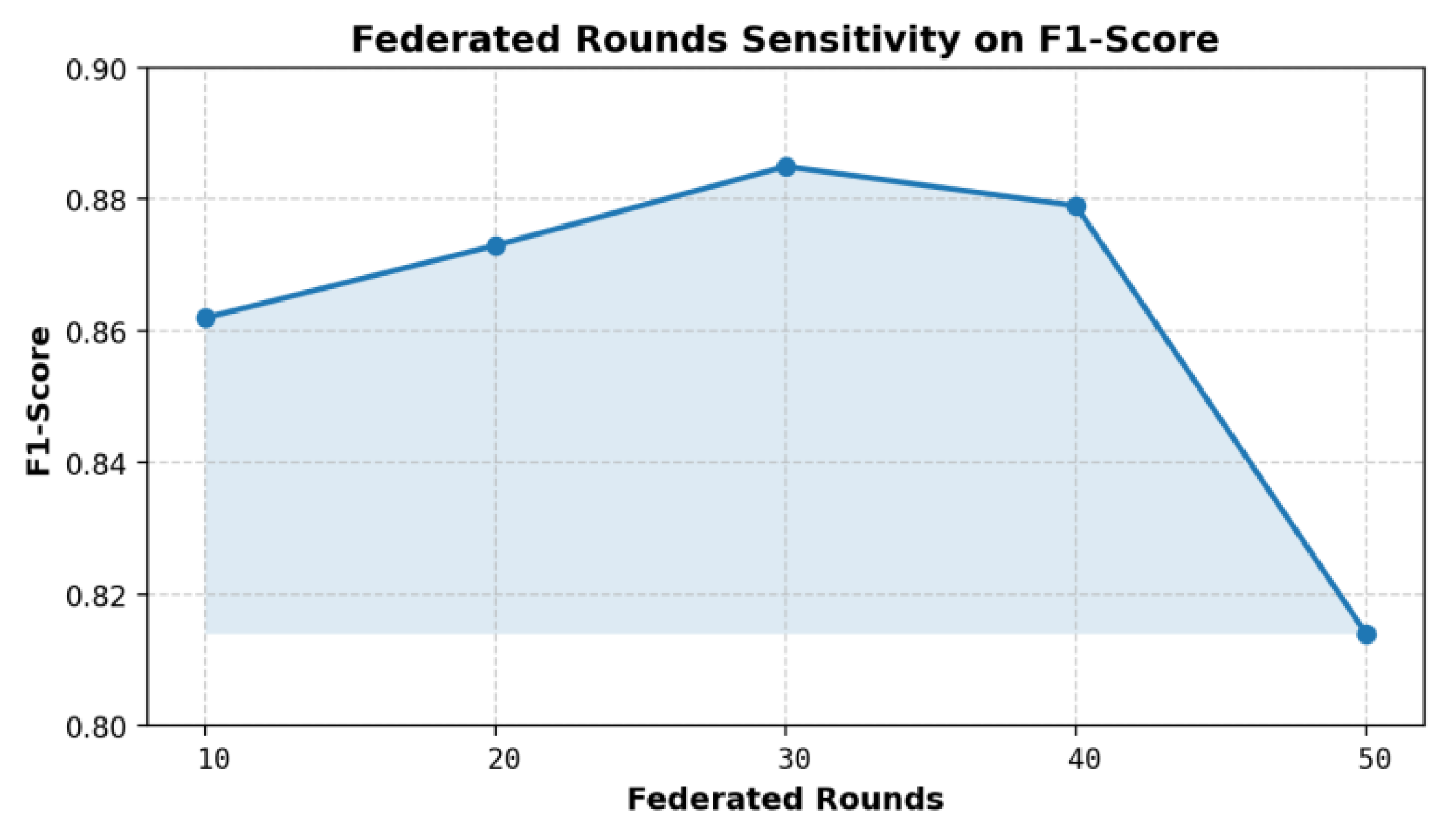

This paper also presents an experiment on the sensitivity of the F1-Score to the number of federated rounds; the results are shown in Figure 3.

From Figure 3, it can be observed that the number of federated rounds has a clear impact on the F1-Score. When the number of rounds is small, such as 10, the F1-Score remains around 0.862. This indicates that the model has not yet fully converged in the early stage of aggregation, and insufficient parameter updates limit the overall performance. As the number of federated rounds increases, collaborative optimization across participants’ data becomes more stable, and the F1-Score shows a steady upward trend.

When the number of rounds reaches 30, the F1-Score peaks at 0.885. This shows that the model achieves an optimal balance between local training and global aggregation at this point. The result demonstrates that in a privacy-preserving federated learning framework, a moderate increase in rounds helps integrate multi-party data features more effectively. It also enhances the ability to capture complex patterns in financial data, which improves prediction accuracy and robustness.

However, when the number of federated rounds further increases to 40 and 50, the F1-Score declines slightly. This may result from overfitting caused by excessive rounds, which reduces the generalization ability of the global model on heterogeneous data from some participants. At the same time, frequent parameter exchanges may amplify communication costs and the effect of noise injection. These factors can weaken the performance of the model under privacy-preserving mechanisms.

Overall, the experimental results indicate that the choice of federated rounds is critical for model performance. Too few rounds prevent full convergence, while too many rounds may cause performance fluctuations and additional costs. A reasonable number of rounds can balance privacy protection, computational efficiency, and predictive accuracy. This provides practical guidance for parameter setting in federated learning systems for financial technology. It also highlights the importance of sensitivity experiments under privacy-preserving conditions.
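To make the notion of a federated round concrete, the following sketch shows one common aggregation scheme, sample-size-weighted averaging of client parameters (FedAvg). The paper does not specify its aggregation rule in this section, so FedAvg is an assumption here, and the names `fedavg`, `run_rounds`, and `local_update` are illustrative.

```python
from typing import Any, Callable, Dict, List, Sequence, Tuple

Params = Dict[str, List[float]]


def fedavg(client_params: Sequence[Params], client_sizes: Sequence[int]) -> Params:
    """Average client parameters, weighting each client by its sample count."""
    if not client_params or len(client_params) != len(client_sizes):
        raise ValueError("need one sample count per client and at least one client")
    total = sum(client_sizes)
    if total <= 0:
        raise ValueError("total sample count must be positive")
    aggregated: Params = {}
    for name, reference in client_params[0].items():
        aggregated[name] = [
            sum(p[name][i] * n for p, n in zip(client_params, client_sizes)) / total
            for i in range(len(reference))
        ]
    return aggregated


def run_rounds(
    global_params: Params,
    clients: Sequence[Tuple[Any, int]],
    local_update: Callable[[Params, Any], Params],
    num_rounds: int,
) -> Params:
    """Run `num_rounds` rounds: every client trains locally from the current
    global parameters, then the server aggregates the results.
    `clients` is a list of (local_data, sample_count) pairs, and
    `local_update` is a hypothetical local training routine."""
    for _ in range(num_rounds):
        updates = [local_update(global_params, data) for data, _ in clients]
        global_params = fedavg(updates, [size for _, size in clients])
    return global_params
```

In this formulation, the round count studied in Figure 3 is simply `num_rounds`. Each additional round costs one more exchange of parameters between every client and the server, which is the communication overhead referred to above.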

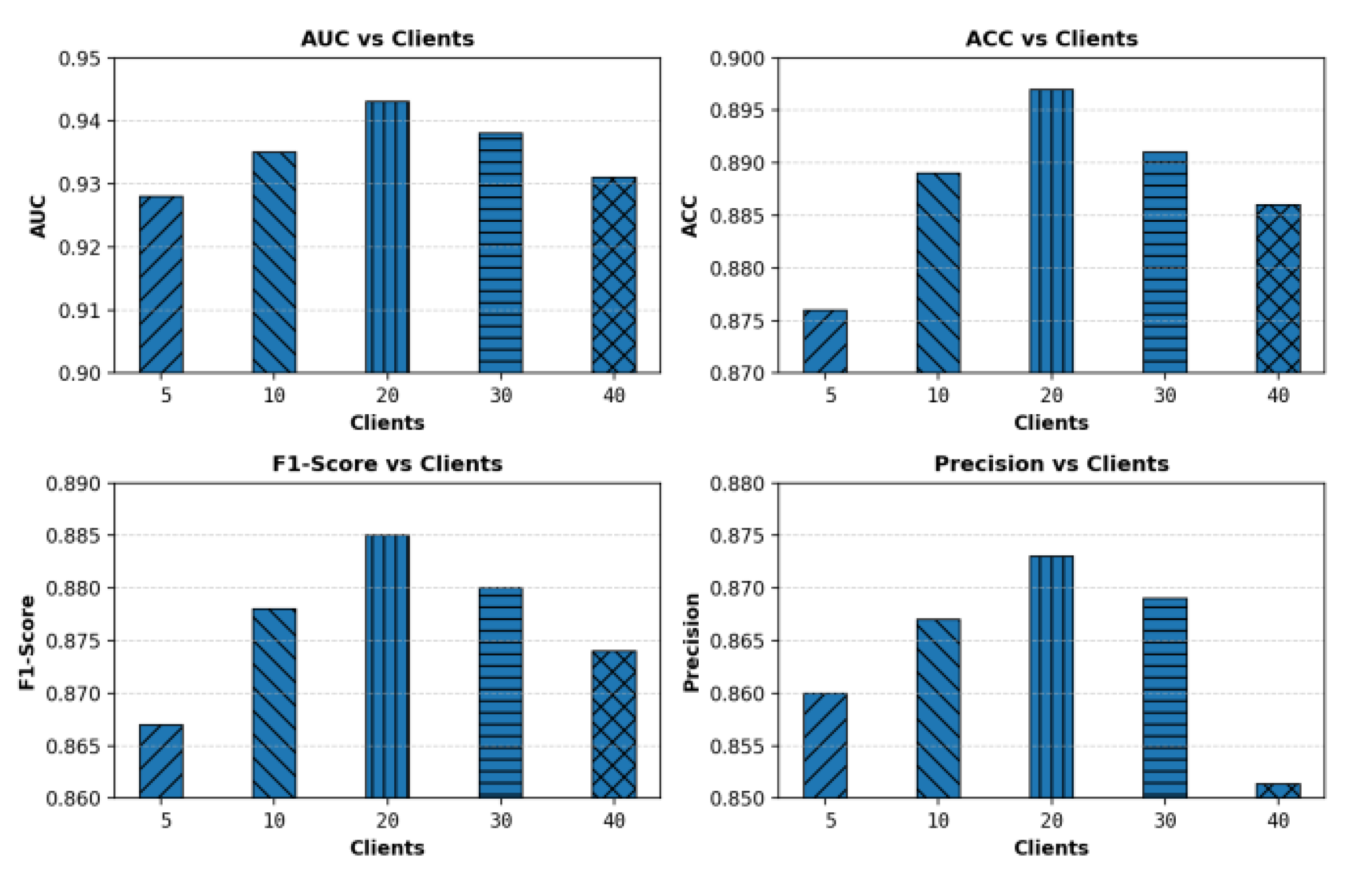

This paper further examines the impact of the number of participating clients; the results are shown in Figure 4.

From Figure 4, it can be seen that the number of participating clients has a clear impact on model performance. When the number of clients is small, AUC, ACC, F1-Score, and Precision are all relatively low. This indicates that when the data come from only a few sources, the global model cannot fully learn feature differences across diverse sources, which restricts overall performance. It shows that too few clients may hinder federated learning models from forming robust feature representations in cross-institutional collaborative modeling.

When the number of clients increases to 20, all four metrics reach their peak. The AUC approaches 0.943, the ACC reaches 0.897, the F1-Score rises to 0.885, and the Precision improves to 0.873. This result shows that with a moderate number of participants, the model can better integrate feature distributions from multiple data sources and achieves its best predictive performance under privacy-preserving conditions. An appropriate number of clients therefore helps balance data diversity and aggregation stability, which is a critical factor affecting global model performance.

However, when the number of clients further increases to 30 and 40, the model's performance shows a slight decline. All four metrics drop to some extent. This may be due to stronger data heterogeneity caused by too many participants. Larger differences in data distributions across clients make it difficult to eliminate bias during global aggregation. At the same time, excessive clients increase communication and synchronization costs, introducing additional randomness and noise during global updates, which affects convergence.

Overall, the experimental results indicate that the choice of client number is crucial in privacy-preserving federated learning. A moderate number of clients can effectively improve model stability and generalization ability, while too few or too many participants may weaken global model performance. This finding reveals the limiting effect of environmental sensitivity on model performance. It also provides valuable guidance for parameter selection when deploying federated learning systems in practice.

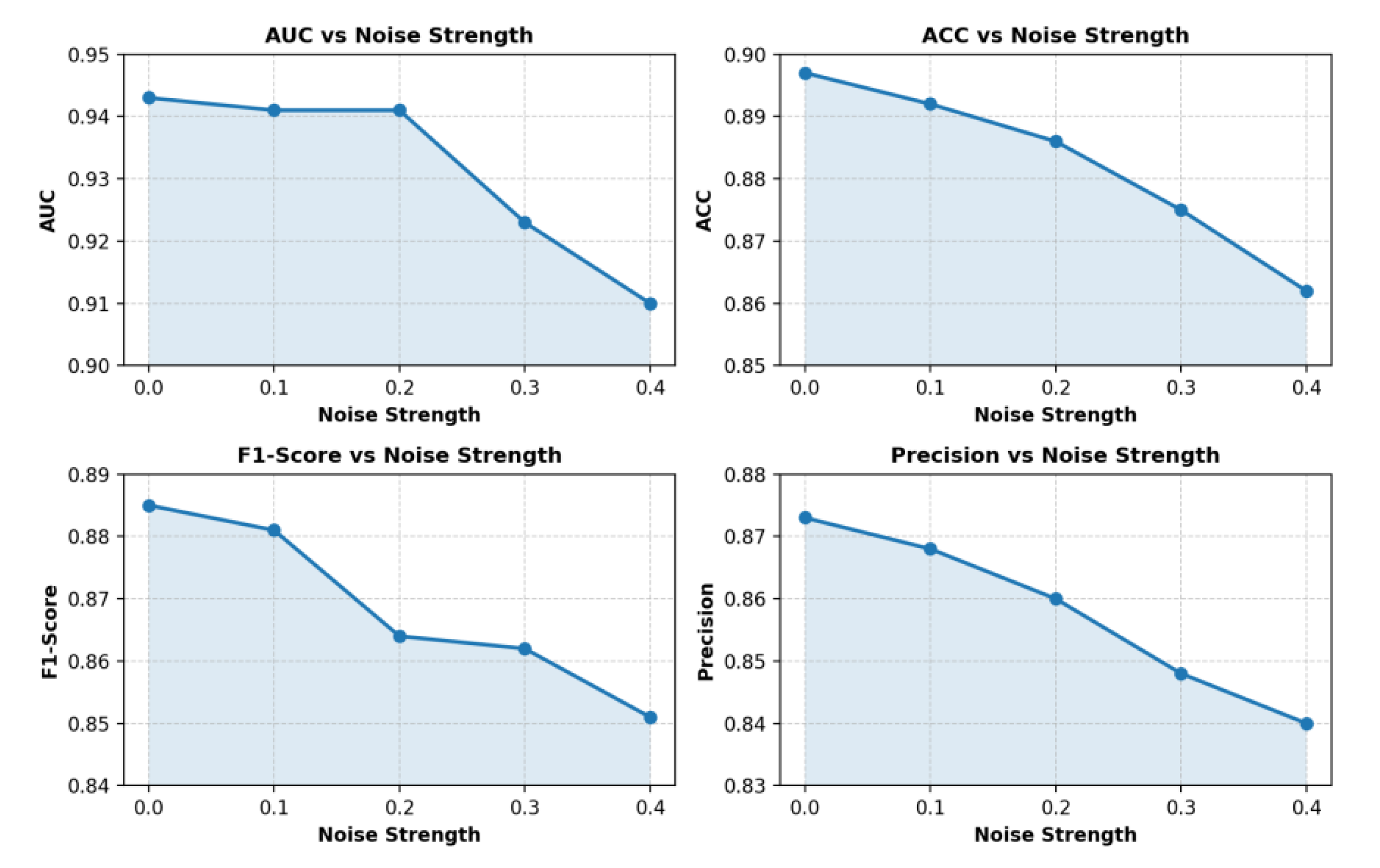

This paper also examines the influence of noise injection intensity; the results are shown in Figure 5.

From Figure 5, it can be observed that increasing the noise injection intensity has a significant impact on overall model performance. As the noise level rises from 0.0 to 0.4, the AUC gradually decreases from 0.943 to about 0.910. This indicates that excessive noise interferes with the model’s ability to distinguish patterns in different feature spaces. Although differential privacy plays an important role in protecting user information, too much noise reduces the capacity to capture abnormal transaction patterns.

The ACC also decreases as noise intensity grows, dropping from 0.897 to 0.862 and following the same downward trend as the AUC. This suggests that stronger privacy protection affects the stability of global prediction results, preventing the model from maintaining its original classification advantage. In financial technology applications, balancing data security and model performance is a critical issue when designing privacy-preserving algorithms.

The variation in F1-Score also highlights this trade-off. As noise increases from 0.0 to 0.4, the F1-Score falls from 0.885 to 0.851, an absolute drop of 0.034 that is comparable to the drops in AUC (about 0.033) and ACC (0.035). This means that noise not only reduces recall but also interferes with precision, lowering the model’s overall effectiveness in detecting risky transactions. In other words, while noise enhances privacy protection, excessive noise can prevent the model from effectively balancing recognition across different classes.

The downward trend in Precision further confirms this observation. As noise intensity rises, Precision decreases from 0.873 to 0.840, which indicates that positive predictions become less reliable. In real privacy-preserving settings, too much noise weakens the trustworthiness of risk prediction. These results therefore emphasize how sensitive the model is to noise injection intensity. Setting noise at a reasonable level can safeguard data privacy while ensuring model usability and stability in financial applications.
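As an illustration of what noise injection on a client update can look like, the sketch below clips an update to a fixed L2 norm and then adds zero-mean Gaussian noise, as in the Gaussian mechanism commonly used for differential privacy. This section does not state the noise distribution, whether clipping is applied, or how the noise level (0.0 to 0.4) is parameterized, so all of these are assumptions; here the noise level is treated as a multiplier on the clipping norm, and the names `clip_by_l2_norm` and `privatize_update` are hypothetical.

```python
import math
import random
from typing import List, Optional


def clip_by_l2_norm(update: List[float], clip_norm: float) -> List[float]:
    """Scale the update down so that its L2 norm is at most `clip_norm`."""
    norm = math.sqrt(sum(x * x for x in update))
    if norm == 0.0 or norm <= clip_norm:
        return list(update)
    scale = clip_norm / norm
    return [x * scale for x in update]


def privatize_update(
    update: List[float],
    clip_norm: float,
    noise_multiplier: float,
    rng: Optional[random.Random] = None,
) -> List[float]:
    """Clip the update, then add Gaussian noise with standard deviation
    noise_multiplier * clip_norm to every coordinate (assumed scheme)."""
    if rng is None:
        rng = random.Random()
    clipped = clip_by_l2_norm(update, clip_norm)
    sigma = noise_multiplier * clip_norm
    if sigma == 0.0:
        # A noise level of 0.0 leaves the clipped update unchanged.
        return clipped
    return [x + rng.gauss(0.0, sigma) for x in clipped]
```

Under this assumed parameterization, a noise level of 0.0 corresponds to the no-noise setting in Figure 5, and larger multipliers add proportionally more variance to each update, which is consistent with the gradual decline in all four metrics described above.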