1. Introduction

Open-pit mines are among the most hazardous industrial environments due to the risks posed by geotechnical events such as slope failures and landslides [1,2,3]. The devastating consequences of these incidents are exemplified by recent disasters such as the 2023 Xinjing coal mine landslide in China, which resulted in 53 fatalities and approximately USD 28 million in economic losses [4], and the 2020 Hpakant jade mine landslide in Myanmar, which claimed nearly 200 lives and severely impacted local communities [5]. These events can be triggered by several factors, including weak geological structures [6], intense or prolonged rainfall [7,8], seismic activity, and vibrations from excavation and blasting [9]. Early warning systems are therefore designed to monitor slope displacement and detect hazards such as surface cracks [10,11,12], enabling safety interventions such as exclusion zones to protect both workers and assets [13]. Despite advances in technologies such as stability radar, visual inspection remains central to hazard identification across many open-pit mines in Australia [14,15,16]. However, this manual practice is both labor-intensive and subjective, exposing workers to hazardous environments and compromising safety [17,18,19].

Consequently, recent studies have focused on developing automated approaches that leverage technologies such as AI to reduce dependence on manual monitoring while enhancing operational safety and efficiency [20]. Specifically, advances in DL [21] have driven substantial progress in CV tasks such as object detection, image classification, and image segmentation [22], thereby enabling machines to derive meaningful information from real-world visual data [23]. To that end, neural network architectures such as convolutional neural networks (CNNs) [24] and vision transformers (ViTs) [25] have been widely utilized across diverse domains [26,27,28,29,30,31,32,33,34,35], underscoring their potential for geotechnical risk management. In particular, recent studies have demonstrated the use of CNN-based CV models such as YOLOv8 [36], YOLOv10 [37], Mask R-CNN [38], U-Net [39,40], and ENet [41] for automated surface crack detection in open-pit mining. While these works demonstrate the feasibility of DL models for geotechnical hazard identification, their effectiveness remains constrained by the limited availability of labelled crack images.

This issue, commonly referred to as data scarcity, is a ubiquitous problem in the field of DL [42], where domain generalization, or the capacity of a CV model to recognize objects in unseen settings or environments [43], is driven by the volume and representativeness of the data used for training [44]. Data scarcity is especially pronounced in industrial domains such as mining [45], where datasets are inherently commercially sensitive and limited by the significant cost and expertise required for data collection and annotation [46,47,48]. To address this challenge, techniques such as transfer learning [49] and data augmentation [50] have been widely adopted in the literature, particularly in healthcare and other data-constrained domains. Transfer learning reduces reliance on large datasets but is vulnerable to source-target domain mismatch [51,52], while data augmentation, though capable of artificially increasing dataset size [53], is prone to amplifying existing distributional biases [54,55]. As both methods remain fundamentally bounded by the quality of the original training data, interest continues to grow in techniques capable of generating entirely new and diverse training data at scale [56].

One established approach is the use of game engines, where platforms such as Unreal Engine (UE) [57] and Unity [58] are adapted to render synthetic images for training CV models. These programs enable controlled variation of scene parameters and environmental conditions, as well as automated dataset generation and ground-truth annotation [59]. However, their diversity is inherently bounded by the manual effort required for scene and asset development, imposing practical limitations on dataset scale and variation. Generative models address this limitation by learning directly from existing real-world distributions to produce high-fidelity outputs at scale. Among these, generative adversarial networks (GANs) [60] such as StyleGAN2-ADA are highly effective at emulating realistic visual patterns [61], providing controllable latent-space manipulation and adaptive augmentation that mitigates discriminator overfitting for small datasets [62]. Although modern diffusion models can also produce visually rich and highly stylized imagery, their outputs are more dependent on prompt tuning and exhibit less fine-grained, deterministic control compared to GANs, making them less suited to domains requiring strict structural realism and reproducibility [63]. Despite these technological advances, the generation of synthetic images remains constrained by a number of limitations. The most impactful of these is the reality gap, the perceptual and distributional disparity between real and synthetic data, which often limits the generalizability of CV models trained on images from game engines [64]. Moreover, generative models typically require substantial training data, impacting their effectiveness in domains where real-world datasets are limited [65]. While techniques such as domain randomization [66,67] have been explored as a means of mitigating the reality gap [68], no prior study has systematically evaluated whether game engines can be leveraged to train generative models for realistic and scalable image synthesis as a mitigant for data scarcity.

To address this gap, we develop and evaluate a hybrid game engine–generative AI framework that produces synthetic images to offset data scarcity and enhance the generalizability of CV models. The main contributions of this work are summarized as follows:

We develop a scalable hybrid framework integrating UE5 and StyleGAN2-ADA to generate realistic synthetic images with automated annotations for training CV models.

We demonstrate that synthetic images of surface cracks generated through our pipeline achieve enhanced fidelity and diversity compared to game engine data alone, validated quantitatively through Fréchet Inception Distance (FID) and Learned Perceptual Image Patch Similarity (LPIPS).

We evaluate the downstream effectiveness of images generated through our pipeline by training the real-time object detection model YOLOv11, achieving substantial performance improvements relative to models trained solely on game engine data.

To the best of our knowledge, this study presents the first systematic evaluation of a hybrid game engine–generative AI framework for data synthesis utilizing UE5 and StyleGAN2-ADA. By combining the geometric and structural realism of game engines with the scalable diversity and textural domain adaptation of generative modelling, our framework demonstrates improved generalization performance in data-scarce CV tasks. Applied to surface crack detection in open-pit mining, the framework enhances the accuracy of object detection models in identifying slope failure precursors, supporting improved safety outcomes while demonstrating a methodology with potential transferability to other data-constrained domains.

5. Conclusions and Future Work

Autonomous surface crack detection in open-pit mining offers numerous benefits such as enhanced worker safety and improved operational efficiency. However, CV models require large amounts of representative training data to generalize effectively to unseen conditions, impacting their applicability in commercial domains constrained by safety, cost, and data confidentiality considerations. To address this challenge, this study presented a hybrid game engine–generative AI framework for dataset synthesis and evaluated its effectiveness for surface crack detection in real-world open-pit mining imagery. The proposed approach combined the realism of the UE5 game engine with the scalability of StyleGAN2-ADA, enabling the synthesis of large-scale, fully labelled surface crack datasets that significantly improve the generalizability of CV models without reliance on extensive field data collection or manual annotation.

Comprehensive evaluation on a held-out real-world test set demonstrated that object detection models trained on images generated by the proposed framework substantially outperformed those trained solely on synthetic data from UE5. In particular, AP@0.5 increased from 0.403 to 0.922 for the best-performing GAN-adapted configuration, while AP@[0.5:0.95] exhibited approximately a threefold improvement across the board, indicating significantly enhanced localization robustness and bounding box accuracy. These performance gains were accompanied by higher recall and reduced missed detections, confirming that the proposed framework effectively narrows the domain gap between synthetic and real-world imagery through the increased diversity and realism of its generated samples. From a practical perspective, this work highlights the viability of synthetic data in autonomous inspection workflows, reducing dependence on manual labeling while mitigating operational, safety, and confidentiality constraints associated with real-world data collection. More broadly, it demonstrates that synthetic data can generalize effectively to real-world conditions when underpinned by an appropriate generation framework, suggesting that the proposed approach may be extended to other data-constrained domains where large-scale labelled datasets are similarly difficult to obtain.

Future work will iterate on this research in several ways. Firstly, diffusion-based generative models will be explored as an alternative to the proposed framework to examine whether their enhanced synthesis fidelity provides meaningful downstream benefits over the controllability of game engine-based rendering. Secondly, the detection pipeline will be extended toward multi-scale learning for improved object detection at varying distances, and instance or semantic segmentation to enable more precise delineation of surface crack boundaries for downstream analysis such as propagation measurement. Additionally, to target the limitation of small object detection identified in the study, future work will investigate architectural enhancements to the object detection and segmentation models to further improve sensitivity to fine-grained cracks. Finally, framework integration with edge-based inference platforms and UAV-based data acquisition will be examined to support real-time autonomous inspection workflows across diverse open-pit mining environments.

Figure 1.

Graphical comparison between (a) GTA V and (b) a nature scene in Unity. GTA V demonstrates greater photorealism than Unity due to advanced rendering effects such as ray-traced reflections, global illumination, and detailed material texturing, illustrating the disparity in visual fidelity that contributes to the reality gap.

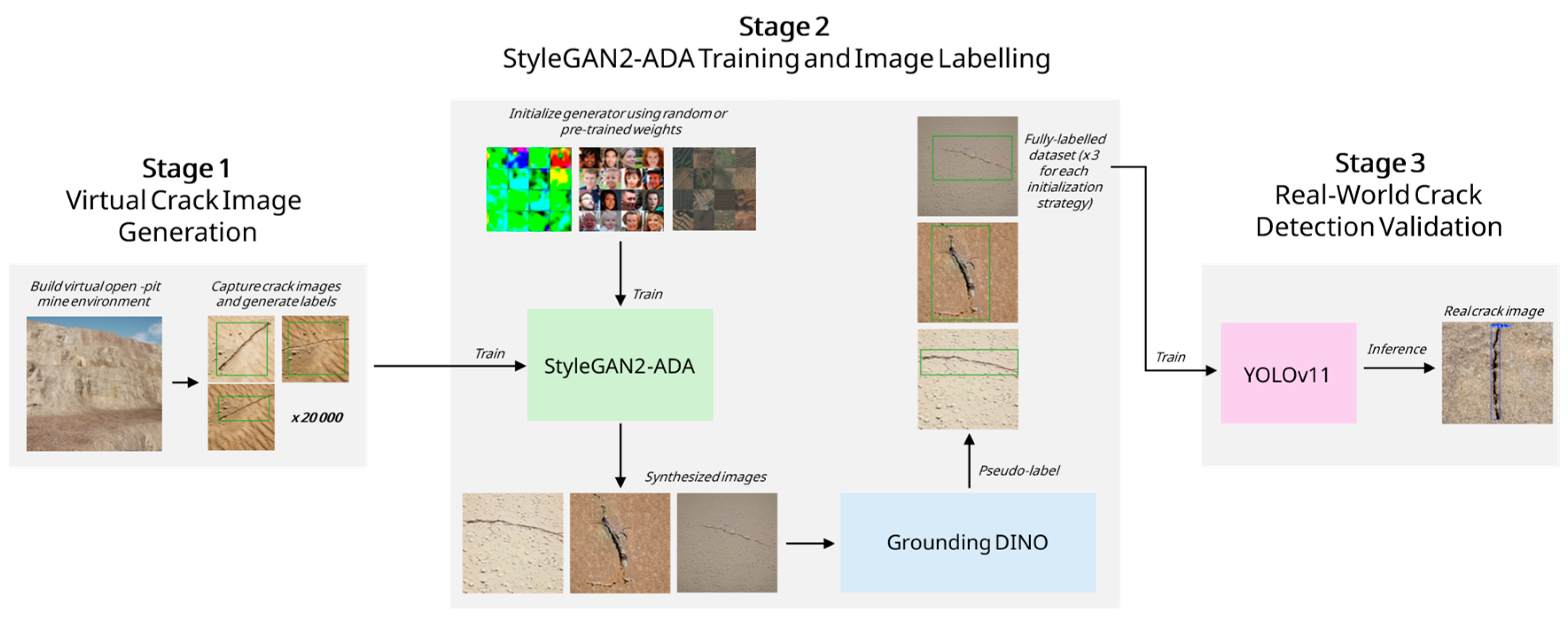

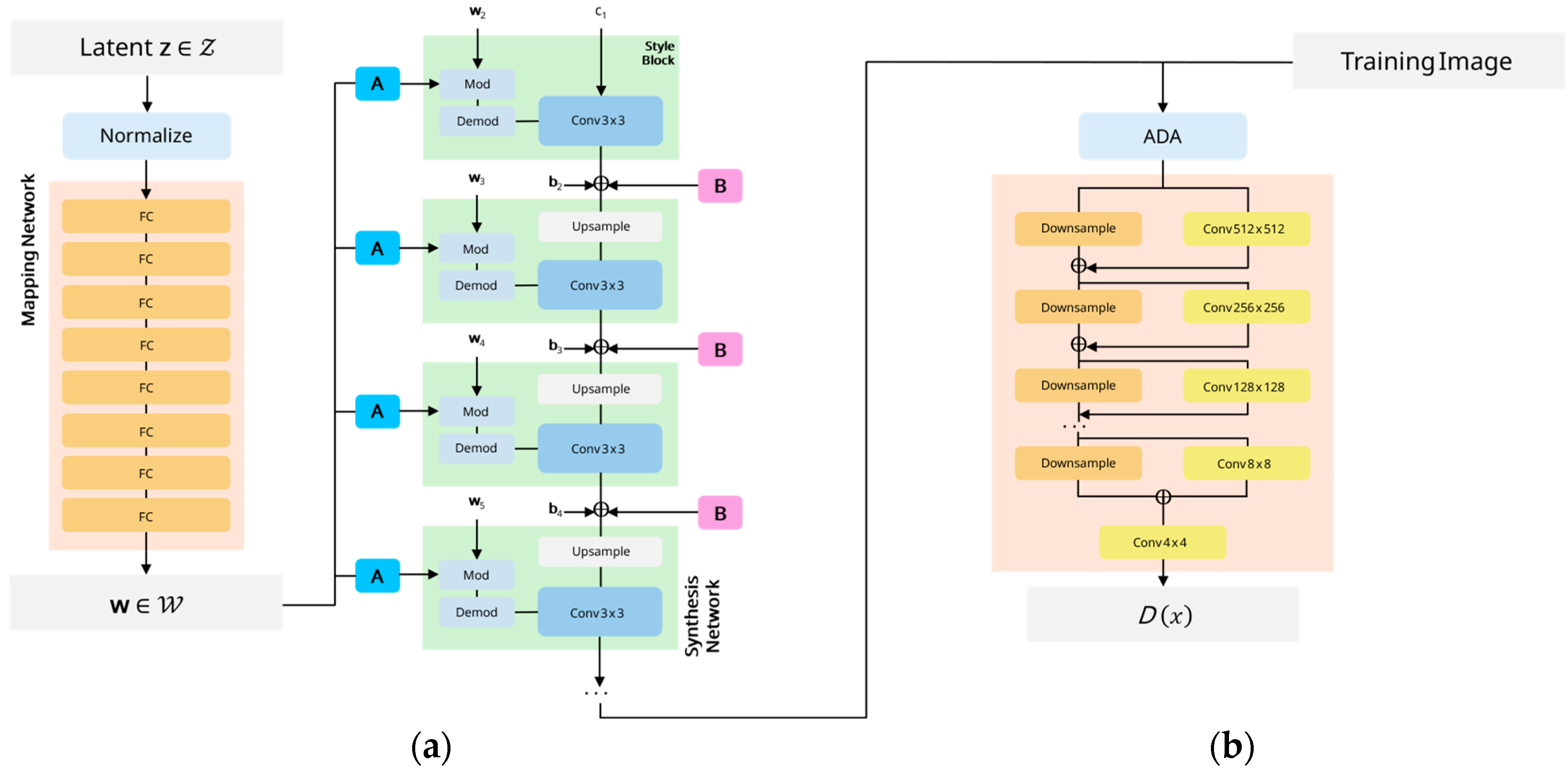

Figure 2.

Overview of the proposed hybrid synthetic dataset generation framework. Stage 1 constructs a virtual open-pit mine environment in UE5 and automatically captures crack images with ground-truth bounding boxes. These labelled UE5 images are then used to train StyleGAN2-ADA in Stage 2, where crack images are generated from sampled latent noise or pre-trained weights and subsequently pseudo-labelled using Grounding DINO to produce bounding boxes for the synthesized samples. In Stage 3, YOLOv11 is trained exclusively on these synthetic datasets and tested on real-world imagery to assess the effectiveness of the proposed pipeline in improving surface crack detection performance for data-scarce open-pit mining.

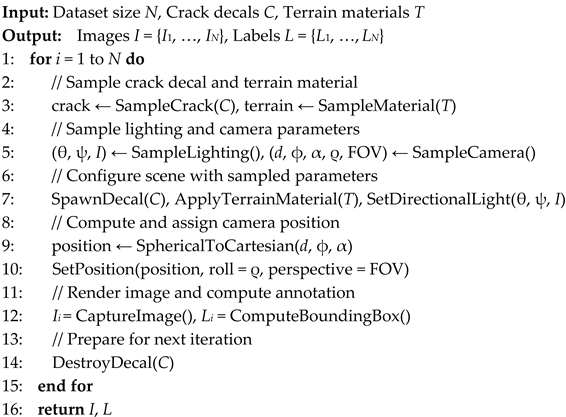

Figure 3.

High-level overview of the UE5 synthetic dataset generation pipeline. A parameterized open-pit mine environment is constructed using virtualized geometry exhibiting realistic benches and haul roads, slope faces, and weathered rock surfaces. Domain randomization is applied across variables such as illumination, camera parameters, surface appearance, and crack decal morphology. For each randomized scene instance, high resolution images are captured and annotated by projecting the 3D decal corner points into image space to compute ground-truth bounding box dimensions. Image and label pairs are then exported for downstream generative modelling using StyleGAN2-ADA.

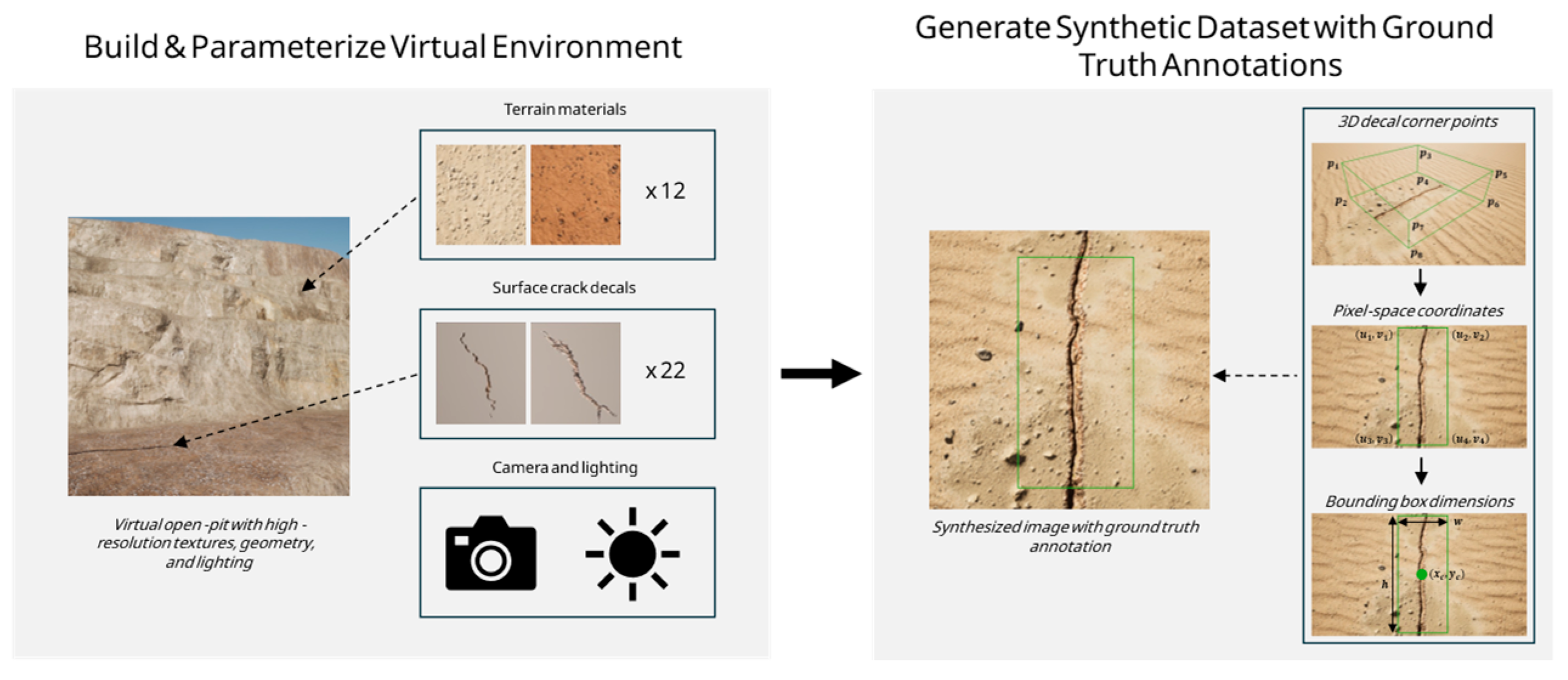
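The annotation step described in the Figure 3 caption, projecting the 3D decal corner points into image space and taking their extent as the bounding box, can be sketched with a simple pinhole camera model. This is a minimal illustration rather than the pipeline used in the study: the function names, the camera-space axis convention (x right, y down, z forward, which is not UE5's native convention), and the 35 mm focal length are assumptions introduced here. Only the 36 mm sensor width and 1920 × 1080 resolution come from Table 3.

```python
import numpy as np


def project_points(points_cam, focal_mm, sensor_w_mm, img_w, img_h):
    """Project Nx3 camera-space points (x right, y down, z forward) to pixels."""
    pts = np.asarray(points_cam, dtype=float)
    if np.any(pts[:, 2] <= 0):
        raise ValueError("all corner points must lie in front of the camera")
    # Convert focal length from millimetres to pixels (square pixels assumed).
    f_px = focal_mm * img_w / sensor_w_mm
    u = f_px * pts[:, 0] / pts[:, 2] + img_w / 2.0
    v = f_px * pts[:, 1] / pts[:, 2] + img_h / 2.0
    return np.stack([u, v], axis=1)


def bbox_from_corners(points_cam, focal_mm=35.0, sensor_w_mm=36.0,
                      img_w=1920, img_h=1080):
    """Return (x_min, y_min, x_max, y_max) in pixels, clipped to the image."""
    uv = project_points(points_cam, focal_mm, sensor_w_mm, img_w, img_h)
    x_min, y_min = uv.min(axis=0)
    x_max, y_max = uv.max(axis=0)
    x_min, x_max = np.clip([x_min, x_max], 0, img_w)
    y_min, y_max = np.clip([y_min, y_max], 0, img_h)
    return float(x_min), float(y_min), float(x_max), float(y_max)
```

Taking the minimum and maximum of the projected corners gives an axis-aligned box even when the decal is viewed obliquely. Clipping keeps partially visible decals inside the image bounds.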

Figure 4.

Architecture of StyleGAN2-ADA, comprising (a) the generator and (b) the discriminator. The mapping network transforms a latent vector z ∈ Z into an intermediate latent representation w ∈ W, which modulates the synthesis network through per-layer affine transforms A. The synthesis network starts from a constant input c1 and progressively refines features using modulated style blocks and stochastic noise B to introduce unstructured detail. The discriminator uses ADA to apply random geometric and color-space perturbations to real and generated images, mitigating overfitting under limited-data conditions. Progressive downsampling with residual connections produces a scalar output D(x) representing the probability that an image is real rather than generated.

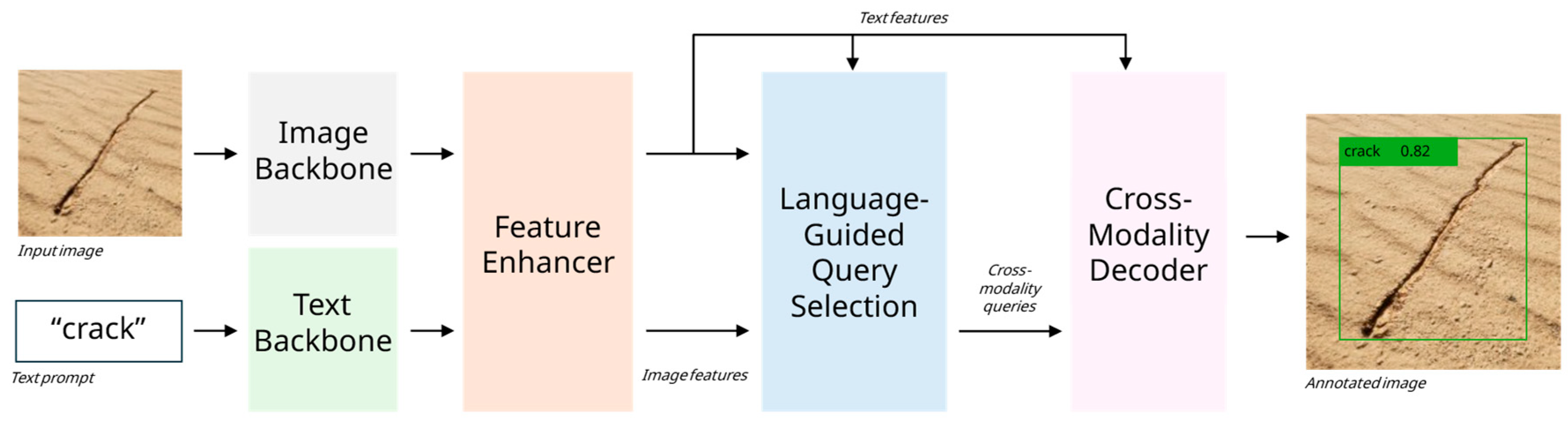

Figure 5.

High-level overview of the Grounding DINO pipeline used for pseudo-labelling the images generated by StyleGAN2-ADA. The input image and text prompt are encoded by their respective backbones and fused in the feature enhancer, which injects semantic text information into the visual features via cross-attention. The resulting language-conditioned object queries, together with multi-scale image features, are processed by the cross-modality decoder. The decoder outputs are then linearly projected into bounding-box coordinates and text-region alignment scores, yielding detections corresponding to the text prompt used, in this case, “crack”.

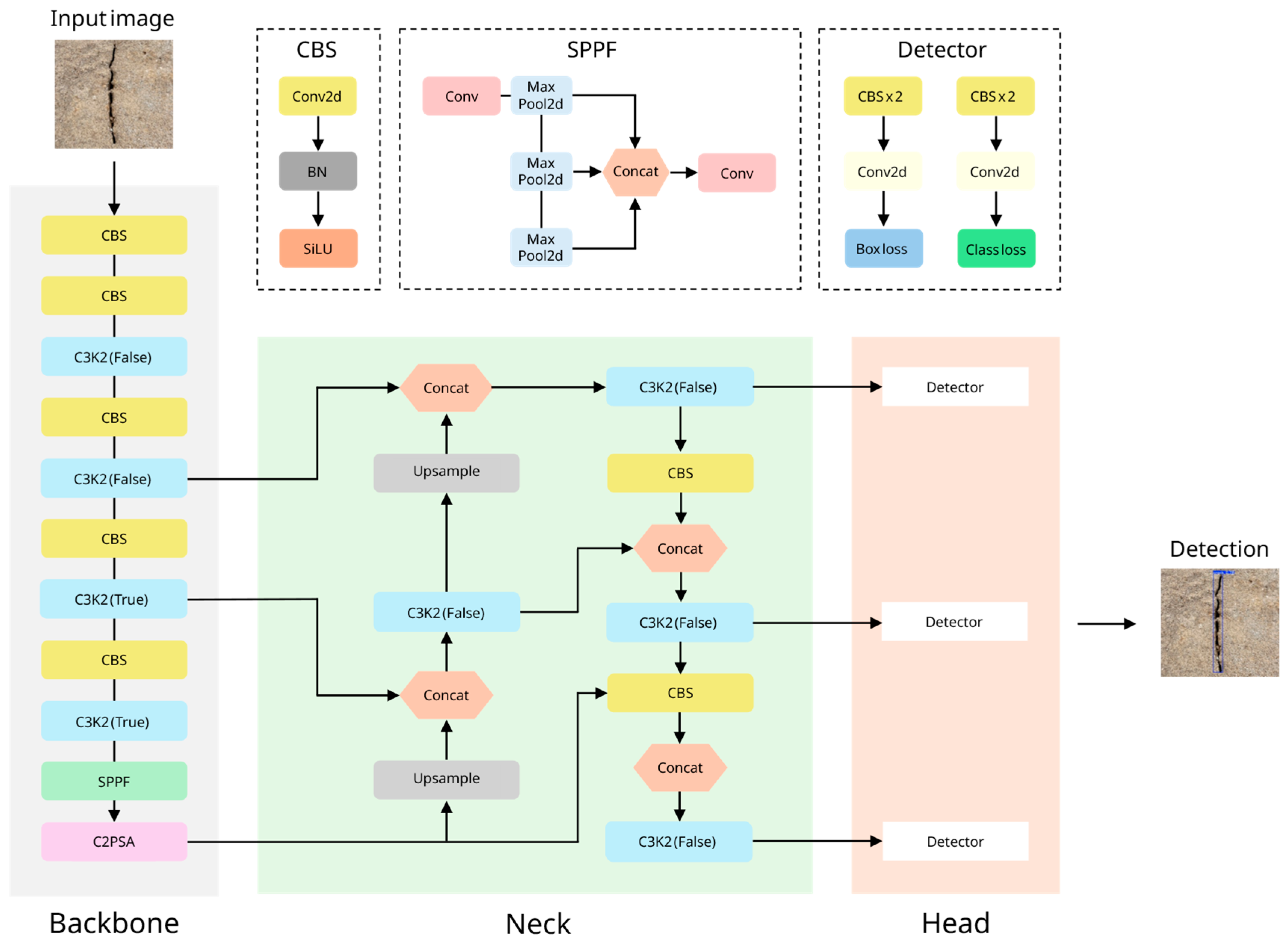
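The pseudo-labelling step outlined in the Figure 5 caption ends with bounding boxes and alignment scores for the prompt "crack". A minimal sketch of how such detections might be turned into YOLO-format label lines is given below. The input format (pixel-space corner coordinates with a score), the function name `to_yolo_labels`, and the 0.35 score threshold are assumptions made for illustration and are not taken from the study.

```python
def to_yolo_labels(detections, img_w, img_h, min_score=0.35, class_id=0):
    """Convert (x_min, y_min, x_max, y_max, score) boxes to YOLO label lines.

    Boxes below the (hypothetical) score threshold or with zero area are
    dropped. Output lines use normalized centre x, centre y, width, height.
    """
    lines = []
    for x0, y0, x1, y1, score in detections:
        if score < min_score or x1 <= x0 or y1 <= y0:
            continue
        cx = (x0 + x1) / 2 / img_w
        cy = (y0 + y1) / 2 / img_h
        w = (x1 - x0) / img_w
        h = (y1 - y0) / img_h
        lines.append(f"{class_id} {cx:.6f} {cy:.6f} {w:.6f} {h:.6f}")
    return lines
```

Filtering on the score before writing labels is one simple way to limit noisy pseudo-labels. The appropriate threshold would need to be tuned for the data at hand.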

Figure 6.

Architectural overview of YOLOv11, comprising a backbone, neck, and detection head for real-time crack detection. The backbone uses stacked CBS and C3K2 blocks to progressively downsample the input while increasing channel depth to extract features relevant to crack morphology. The SPPF block enlarges the receptive field by combining multi-scale context, and C2PSA modules enhance feature representation through spatial and channel attention. The neck performs multi-scale feature fusion via upsampling, downsampling, and concatenation operations that integrate information from different backbone stages. The decoupled detection head then applies concurrent classification and regression branches to generate bounding box coordinates and class scores for detected cracks.

Figure 7.

Examples of real-world open-pit mine surface crack images used for YOLOv11 performance evaluation.

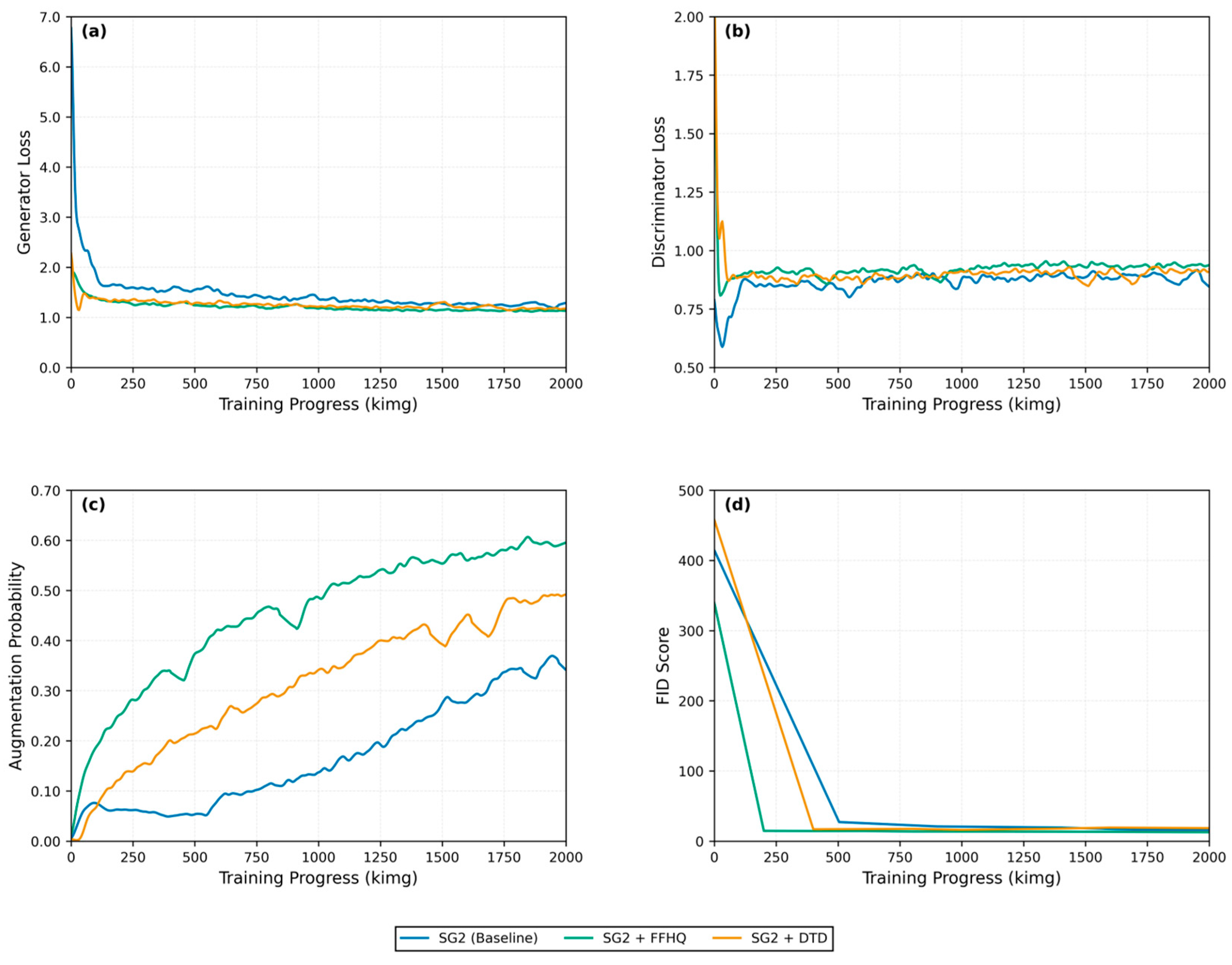

Figure 8.

StyleGAN2-ADA training behavior for three initialization strategies. Subplots show (a) generator loss, (b) discriminator loss, (c) augmentation probability, and (d) FID score progression over a 2 000 kimg training window for SG2 (Baseline), SG2 + FFHQ, and SG2 + DTD configurations.

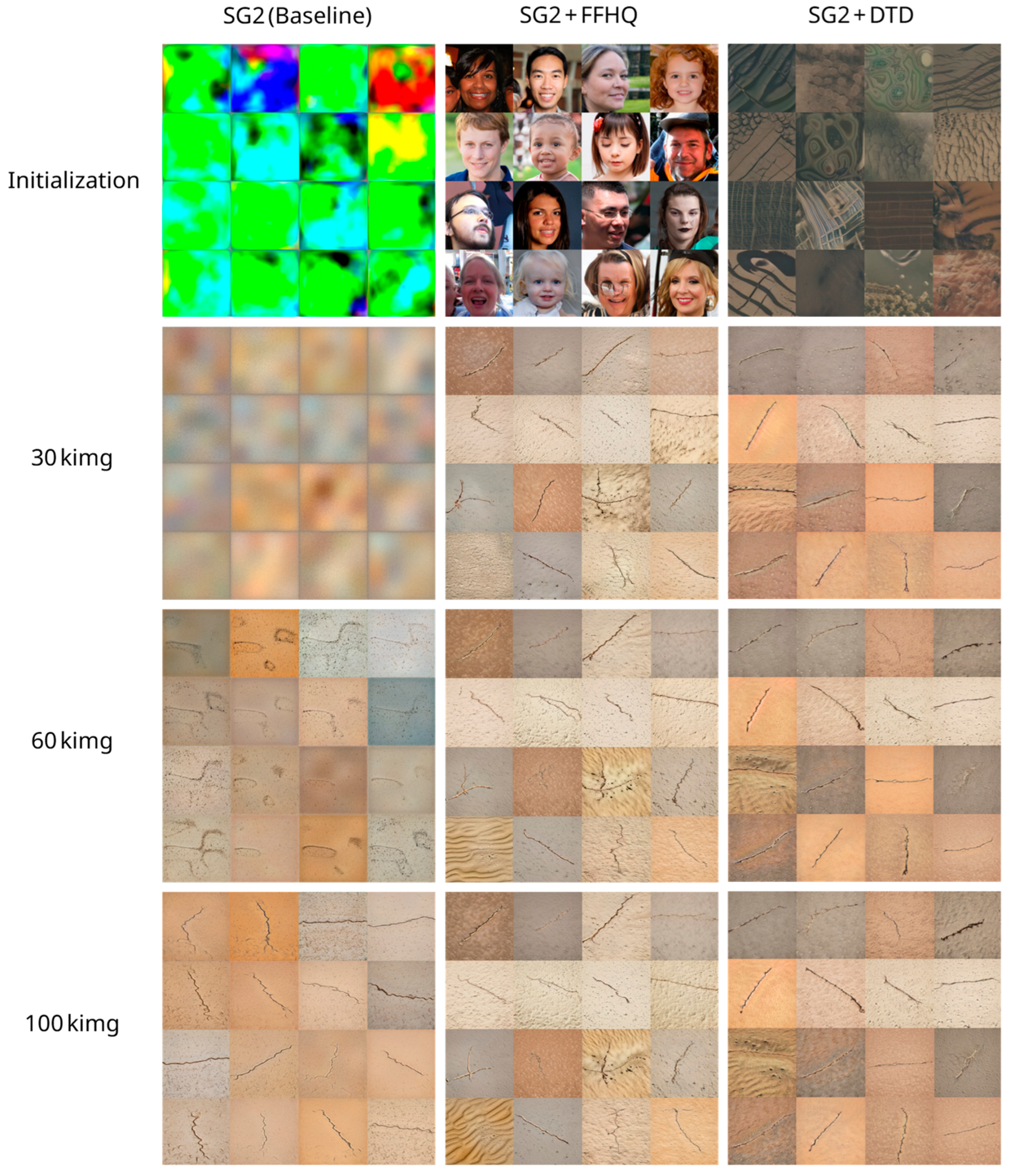

Figure 9.

Early synthesis progression of the three StyleGAN2-ADA training configurations. Samples are shown at initialization, 30 kimg, 60 kimg, and 100 kimg. At initialization, the baseline configuration produces unstructured noise, while the pre-trained configurations generate coherent textures that reflect their source-domain priors. By 30 kimg, SG2 begins to acquire coarse color and texture distributions, whereas the pre-trained configurations already synthesize recognizable crack-like structures. All configurations improve in fidelity and structural realism by 60 kimg, with the pre-trained configurations presenting more developed crack morphology. By 100 kimg, all configurations produce reasonable crack patterns, although the pre-trained configurations contain sharper edges, more consistent textures, and realistic background materials, highlighting the benefits of transfer learning.

Figure 10.

t-SNE visualizations of UE5O and StyleGAN2-ADA feature embeddings. (a) All datasets plotted together, showing UE5O forming a series of compact clusters while StyleGAN2-ADA samples occupy a broader manifold. (b) UE5O vs SG2 illustrates the expansion in diversity introduced by generative modelling. (c) UE5O vs SG2 + FFHQ highlights the large dispersion and heavy distributional overlap achieved through effective pre-training. (d) UE5O vs SG2 + DTD shows a similar but slightly less pronounced expansion in feature space.

Figure 11.

Examples of images generated by each StyleGAN2-ADA training configuration alongside the UE5O baseline, illustrating differences in crack morphology, background texture, and overall synthesis quality achieved by (a) UE5O, (b) SG2 (Baseline), (c) SG2 + FFHQ, and (d) SG2 + DTD. All SG2-adapted variants, shown in subplots (b-d), exhibit varying degrees of improvement in crack morphology variation, while the baseline synthetic dataset shown in subplot (a) maintains greater structural coherence and photorealism.

Figure 12.

Training behavior of the four YOLOv11 dataset configurations evaluated in this study, showing validation box loss, AP@0.5, AP@[0.5:0.95], and precision for (a) UE5O, (b) UE5O + SG2, (c) UE5O + SG2-FFHQ, and (d) UE5O + SG2-DTD.

Figure 13.

UE5O surface crack detection performance on real-world open-pit test images, showing low-confidence and missed detections across subplots (a-d). Fragmented and inconsistent spatial coverage is observed in subplots (a) and (c), while subplot (d) highlights a false positive triggered by background debris and shadowing effects.

Figure 14.

Precision-recall characteristics for the four YOLOv11 dataset configurations evaluated on the real-world open-pit surface crack test set. The curves demonstrate the trade-off between precision and recall across confidence thresholds, for (a) UE5O, (b) UE5O + SG2, (c) UE5O + SG2-FFHQ, and (d) UE5O + SG2-DTD, highlighting the improved robustness and extended high-precision regions of the SG2-adapted variants relative to the UE5O baseline.

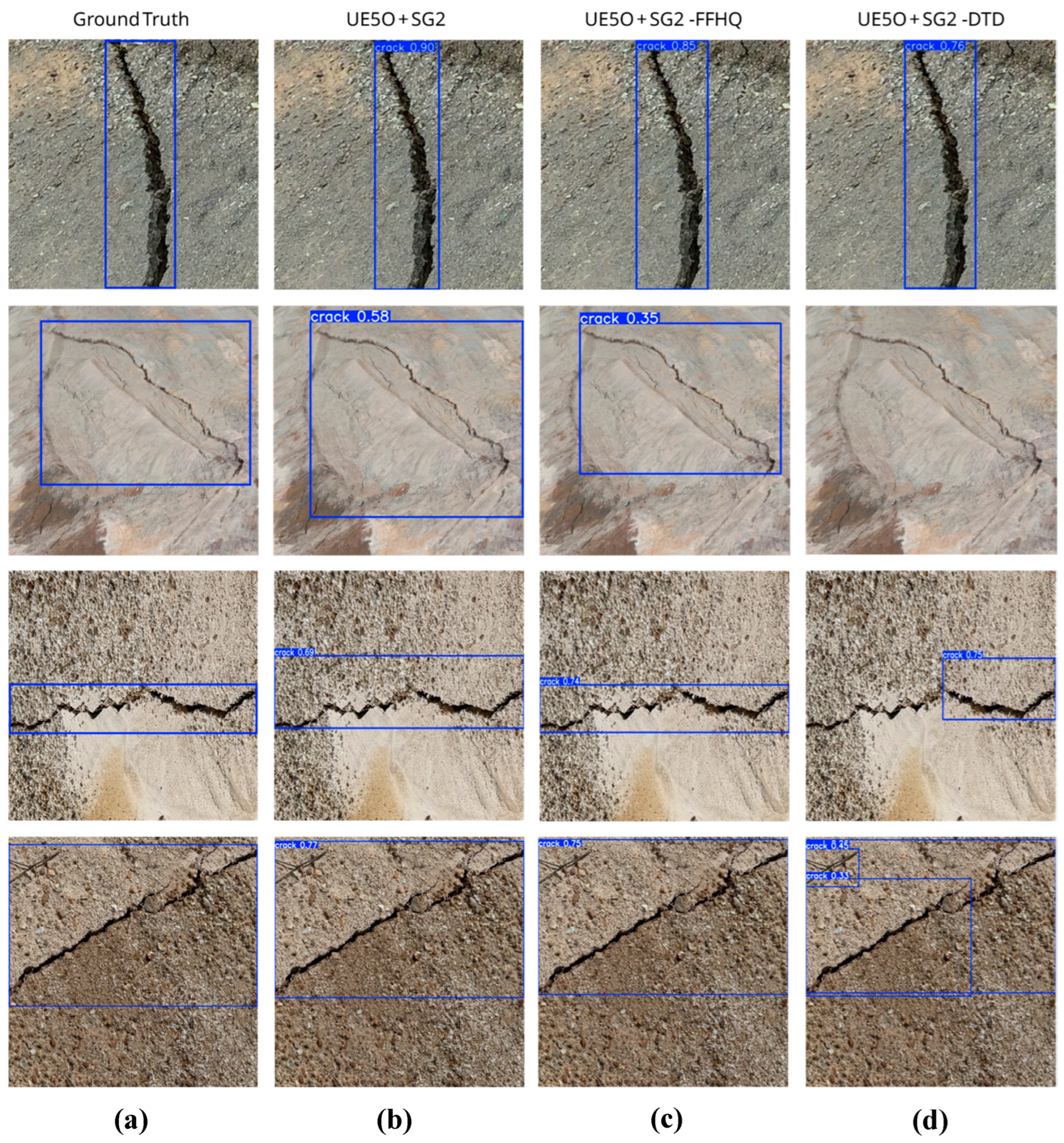

Figure 15.

Qualitative comparison of SG2-adapted surface crack detection performance on real-world open-pit test images. Each row shows a test image with ground truth annotation, followed by predictions from UE5O + SG2, UE5O + SG2-FFHQ, and UE5O + SG2-DTD. Across the representative examples, UE5O + SG2 demonstrates high prediction confidence but produces less tight bounding box localization, while UE5O + SG2-FFHQ frequently provides improved spatial alignment at the cost of slightly reduced confidence (subplots b-c). UE5O + SG2-DTD demonstrates reduced robustness under challenging conditions, including missed detections on low contrast or partially occluded cracks (subplots b-c), as well as false positives on background debris and rubble (subplot d). This behavior explains the observed differences in precision–recall characteristics (Figure 14) and overall model performance (Table 8).

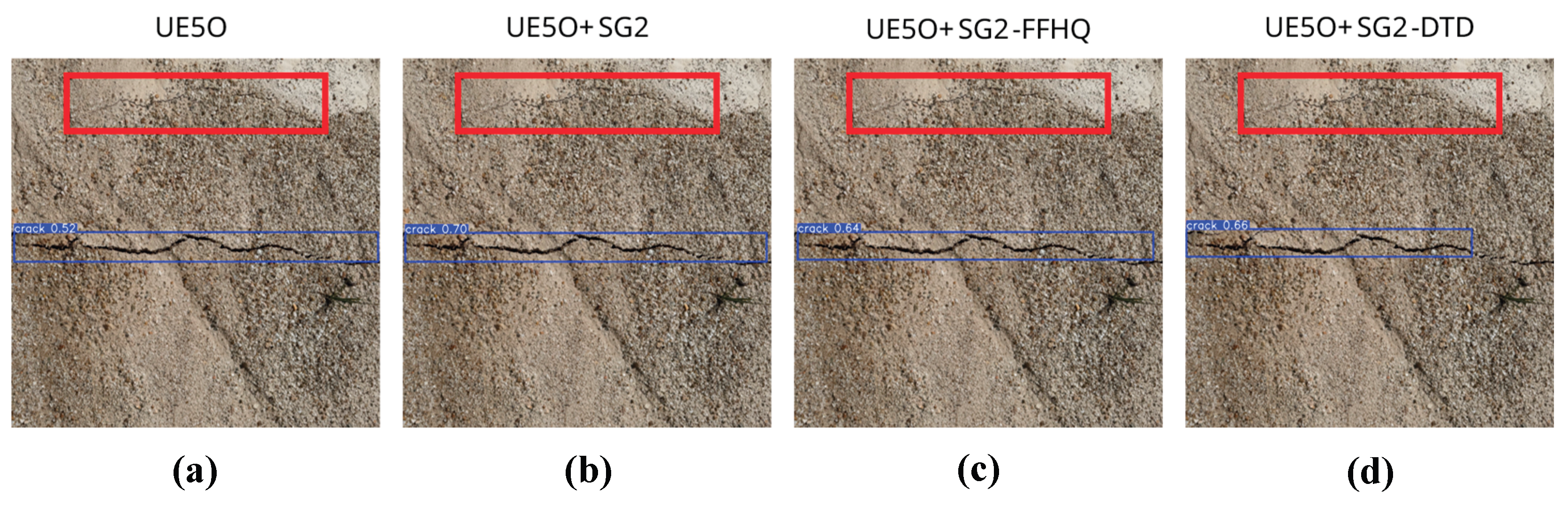

Figure 16.

Representative failure case highlighting the difficulty in detecting fine-grained surface cracks in real-world open-pit test images for (a) UE5O, (b) UE5O + SG2, (c) UE5O + SG2-FFHQ, and (d) UE5O + SG2-DTD, where the red bounding box denotes the ground truth annotation for the missed detection.

Table 1.

Summary of related works on synthetic dataset generation using game engines.

| Application | Downstream Task | Platform | Dataset Size | Performance |
| --- | --- | --- | --- | --- |
| Construction monitoring [74] | Object detection | Unity | 7 000 | mAP@[0.5:0.95]: 0.46 |
| Generic object detection [79] | Object detection | UE4 with NDDS | 1 500 | Not reported |
| Autonomous driving [80] | Object detection | UE5 | 16 700 | mAP@0.5: 0.67 |
| Navigation assistance [73] | Object detection | UE4 with NDDS | 3 000 | Precision: 0.92; Recall: 0.91 |
| Exercise monitoring [82] | Pose estimation | Unity | 5 000 | I3D test accuracy: 0.99 |
| Grocery item detection [81] | Object detection | Unity | 400 000 | mAP@[0.5:0.95]: 0.68 |
| Warehouse object detection [83] | Semantic segmentation | Unity | 7 140 | mAP@0.5: 0.65 |
| Autonomous driving [78] | Semantic segmentation | GTA V | 1 355 568 | CIoU: 0.45 |
| Animal monitoring [84] | Pose estimation | Unity | 32 000 | PCK: 0.13 |
| Construction monitoring [85] | Object detection | Unity | 6 000 | Precision: 0.92 |
| Generic object detection [86] | Classification | UE4 | 31 200 | Top-1 accuracy: 0.72 |

Table 2.

Summary of related works on synthetic dataset generation using StyleGAN2-ADA.

| Application | Downstream Task | Training Dataset | Training Configuration | FID Score |
| --- | --- | --- | --- | --- |
| Petrographic image classification [97] | Classification | 10 070 real petrographic images | 6 520 kimg, NVIDIA Quadro RTX 5000 | 12.49 |
| Brain tumor classification [92] | Classification | 3 064 real brain scans | NVIDIA Tesla P100 | 58.11–67.53 |
| Abdominal scan synthesis [91] | Not reported | 1 300 real abdominal scans | 7 800 kimg, NVIDIA GeForce RTX 2080 | 18.14 |
| Algal bloom detection [102] | Semantic segmentation | 3 114 real algal bloom images | NVIDIA Tesla P100 | 42.56 |
| Dental radiograph classification [95] | Classification | 1 456 real dental radiographs | NVIDIA Tesla A100 | 72.76 |
| Brain scan synthesis [90] | Not reported | 1 412 real brain scans | 1 800 kimg, NVIDIA Tesla A100 | 20.21 |
| Chest X-ray classification [96] | Classification | 3 616 real chest X-rays | NVIDIA Tesla K80 | 20.90 |
| Skin cancer classification [89] | Classification | 33 126 real skin lesion images | NVIDIA GeForce RTX 3090 | 0.79 |
| Landslide detection [99] | Semantic segmentation | 770 real landslide images | Not reported | 67.47 |
| Wildfire detection [103] | Object detection | 1 865 real wildfire images | 25 000 kimg, NVIDIA GeForce RTX 3090 Ti | 24.07 |
| Pavement crack detection [98] | Semantic segmentation | 778 real crack images | 32 000 kimg, NVIDIA Tesla T4 | 6.30 |

Table 3.

Cine Camera Actor settings used for synthetic dataset generation in UE5.

| Setting | Value |
| --- | --- |
| Sensor Format | 36 mm × 20.25 mm |
| Aspect Ratio | 16:9 |
| Resolution | 1920 × 1080 pixels |
| Aperture | ƒ/5.6 |
| ISO | 100 |
| Shutter Speed | 1/500 s |

Table 4.

Default hyperparameter configuration used for training StyleGAN2-ADA.

| Hyperparameter | Value |
| --- | --- |
| Learning Rate | 0.002 |
| Optimizer | Adam (β1 = 0, β2 = 0.99, ε = 1e-8) |
| R1 Regularization Weight | 10.0 |
| Effective R1 Weight | 160 |
| Path Length Regularization Interval | 4 iterations |
| R1 Regularization Interval | 16 iterations |
| ADA Target | 0.6 (60%) |
| Loss Function | Non-saturating logistic loss |
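Two entries in Table 4 are related by simple arithmetic that a short sketch can make explicit. When R1 regularization is applied only every 16 iterations, the per-application weight is scaled by the interval, which gives the effective weight of 160 listed in the table. The ADA target of 0.6 in turn steers the augmentation probability p up or down in small fixed steps. The sketch below is illustrative only: `batch_size`, `ada_interval`, and `ada_kimg` are assumed values chosen for the example and are not reported in the table, and the update rule is a simplified rendering of the adaptive scheme rather than the exact training code.

```python
R1_GAMMA = 10.0      # R1 regularization weight (Table 4)
R1_INTERVAL = 16     # R1 regularization interval in iterations (Table 4)
ADA_TARGET = 0.6     # ADA target for the overfitting heuristic (Table 4)


def effective_r1_weight(gamma=R1_GAMMA, interval=R1_INTERVAL):
    """Weight applied on the iterations where R1 is actually evaluated."""
    return gamma * interval


def update_ada_p(p, r_t, batch_size=32, ada_interval=4, ada_kimg=500,
                 target=ADA_TARGET):
    """Nudge augmentation probability p toward keeping r_t near the target.

    r_t is the overfitting heuristic (mean sign of discriminator outputs on
    real images). batch_size, ada_interval, and ada_kimg are assumptions.
    """
    step = (batch_size * ada_interval) / (ada_kimg * 1000)
    direction = (r_t > target) - (r_t < target)  # +1, 0, or -1
    return max(p + direction * step, 0.0)
```

With these assumed values, p rises when the discriminator looks overconfident on real images (r_t above 0.6) and falls otherwise, never dropping below zero.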

Table 5.

Dataset configurations used for training YOLOv11 for open-pit surface crack detection.

| Dataset | Total Images | Training Images | Validation Images |
| --- | --- | --- | --- |
| UE5O | 20 000 | 17 000 | 3 000 |
| UE5O + SG2 | 40 000 | 34 000 | 6 000 |
| UE5O + SG2-FFHQ | 40 000 | 34 000 | 6 000 |
| UE5O + SG2-DTD | 40 000 | 34 000 | 6 000 |

Table 6.

Hyperparameter configuration used for training YOLOv11.

| Hyperparameter | Value |
| --- | --- |
| Model | YOLOv11m |
| Initialization Weights | COCO |
| Input Resolution | 512 × 512 pixels |
| Batch Size | 64 |
| Epochs | 300 |
| Optimizer | Adam (β1 = 0.9, β2 = 0.99) |
| Initial Learning Rate | 0.001 |
| Learning Rate Schedule | Cosine decay |
| Warmup Epochs | 3 |
| Weight Decay | 0.0005 |
| Data Augmentation | On (scaling, translation, flip, mosaic) |
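For readers who wish to reproduce a comparable setup, the Table 6 configuration could be expressed roughly as follows using the Ultralytics Python package. This is a hedged sketch: the section does not state which training toolkit was used, so the choice of Ultralytics, the `yolo11m.pt` weight file name, and the `data_yaml` dataset path are assumptions. The Adam β2 value and the individual augmentation magnitudes from the table are not set here and are left at the library defaults.

```python
# Training arguments mirroring Table 6 (keys follow Ultralytics naming).
TRAIN_ARGS = {
    "epochs": 300,
    "imgsz": 512,            # 512 x 512 input resolution
    "batch": 64,
    "optimizer": "Adam",
    "lr0": 0.001,            # initial learning rate
    "cos_lr": True,          # cosine learning-rate decay
    "warmup_epochs": 3,
    "weight_decay": 0.0005,
}


def train(data_yaml, weights="yolo11m.pt"):
    """Train a YOLOv11m detector on a dataset described by data_yaml.

    The import is deferred so this module loads without Ultralytics installed.
    data_yaml is a hypothetical path to a YOLO-format dataset definition.
    """
    from ultralytics import YOLO

    model = YOLO(weights)  # COCO-pretrained initialization
    return model.train(data=data_yaml, **TRAIN_ARGS)
```

A call such as `train("cracks.yaml")` would start training, where `cracks.yaml` is a placeholder for a dataset file listing the training and validation image folders.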

Table 7.

Fidelity (FID), perceptual diversity (LPIPS), and relative LPIPS improvement over the UE5O baseline (LPIPS vs UE5O) for the three StyleGAN2-ADA initialization strategies.

| Configuration | FID Score | Mean LPIPS | Median LPIPS | LPIPS Range | LPIPS vs UE5O |
| --- | --- | --- | --- | --- | --- |
| SG2 (Baseline) | 15.99 | 0.452 ± 0.094 | 0.456 | [0.011, 0.725] | +2.80% |
| SG2 + FFHQ | 12.75 | 0.472 ± 0.090 | 0.477 | [0.140, 0.709] | +7.49% |
| SG2 + DTD | 15.88 | 0.457 ± 0.092 | 0.462 | [0.115, 0.735] | +3.96% |
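For reference, the standard definition of FID and one plausible form of the relative LPIPS column are written out below. In the FID expression, μ and Σ denote the mean and covariance of Inception features for the reference (r) and generated (g) image sets. Lower values indicate closer feature distributions. The relative LPIPS expression is an assumption about how the final column was derived, since the UE5O baseline LPIPS value is not listed in the table. The symbols \bar{d}_{\mathrm{cfg}} and \bar{d}_{\mathrm{UE5O}} are introduced here for the mean LPIPS of a given configuration and of the UE5O dataset.

```latex
\mathrm{FID} = \lVert \mu_r - \mu_g \rVert_2^2
  + \mathrm{Tr}\!\left( \Sigma_r + \Sigma_g - 2\,(\Sigma_r \Sigma_g)^{1/2} \right)

\Delta_{\mathrm{LPIPS}} = 100\% \times
  \frac{\bar{d}_{\mathrm{cfg}} - \bar{d}_{\mathrm{UE5O}}}{\bar{d}_{\mathrm{UE5O}}}
```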

Table 8.

YOLOv11 performance evaluation results for each dataset configuration evaluated on the real-world open-pit surface crack test set.

| Dataset | Precision | Recall | F1 | AP@0.5 | AP@[0.5:0.95] |
| --- | --- | --- | --- | --- | --- |
| UE5O | 0.402 | 0.445 | 0.422 | 0.403 | 0.223 |
| UE5O + SG2 | 0.792 | 0.902 | 0.844 | 0.922 | 0.706 |
| UE5O + SG2-FFHQ | 0.808 | 0.850 | 0.829 | 0.911 | 0.722 |
| UE5O + SG2-DTD | 0.730 | 0.828 | 0.776 | 0.858 | 0.638 |
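The F1 column and the relative AP@[0.5:0.95] gains can be recomputed from the tabulated values with a few lines of Python. The sketch below uses only numbers from Table 8. The dictionary layout and function names are introduced here for illustration. Because the inputs are rounded to three decimals, recomputed F1 values may differ from the table in the last digit.

```python
RESULTS = {
    "UE5O": {"precision": 0.402, "recall": 0.445, "ap50_95": 0.223},
    "UE5O + SG2": {"precision": 0.792, "recall": 0.902, "ap50_95": 0.706},
    "UE5O + SG2-FFHQ": {"precision": 0.808, "recall": 0.850, "ap50_95": 0.722},
    "UE5O + SG2-DTD": {"precision": 0.730, "recall": 0.828, "ap50_95": 0.638},
}


def f1_score(precision, recall):
    """Harmonic mean of precision and recall (0 if both are 0)."""
    total = precision + recall
    return 2 * precision * recall / total if total else 0.0


def ap_gain(name, baseline="UE5O"):
    """Ratio of AP@[0.5:0.95] for a configuration relative to the baseline."""
    return RESULTS[name]["ap50_95"] / RESULTS[baseline]["ap50_95"]


if __name__ == "__main__":
    for name, row in RESULTS.items():
        f1 = f1_score(row["precision"], row["recall"])
        print(f"{name}: F1={f1:.3f}, AP gain={ap_gain(name):.2f}x")
```

The ratios for the three SG2-adapted configurations fall between roughly 2.9 and 3.2, in line with an approximately threefold improvement over the UE5O baseline.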

Table 9.

FP and FN count for each YOLOv11 dataset configuration evaluated on the real-world open-pit surface crack test set.

| Configuration | FP Count | FN Count |
| --- | --- | --- |
| UE5O | 52 | 95 |
| UE5O + SG2 | 27 | 20 |
| UE5O + SG2-FFHQ | 42 | 8 |
| UE5O + SG2-DTD | 48 | 31 |