Submitted: 10 March 2026

Posted: 11 March 2026


Abstract

Keywords:

1. Introduction

2. Background

2.1. Traditional Driver Monitoring Systems

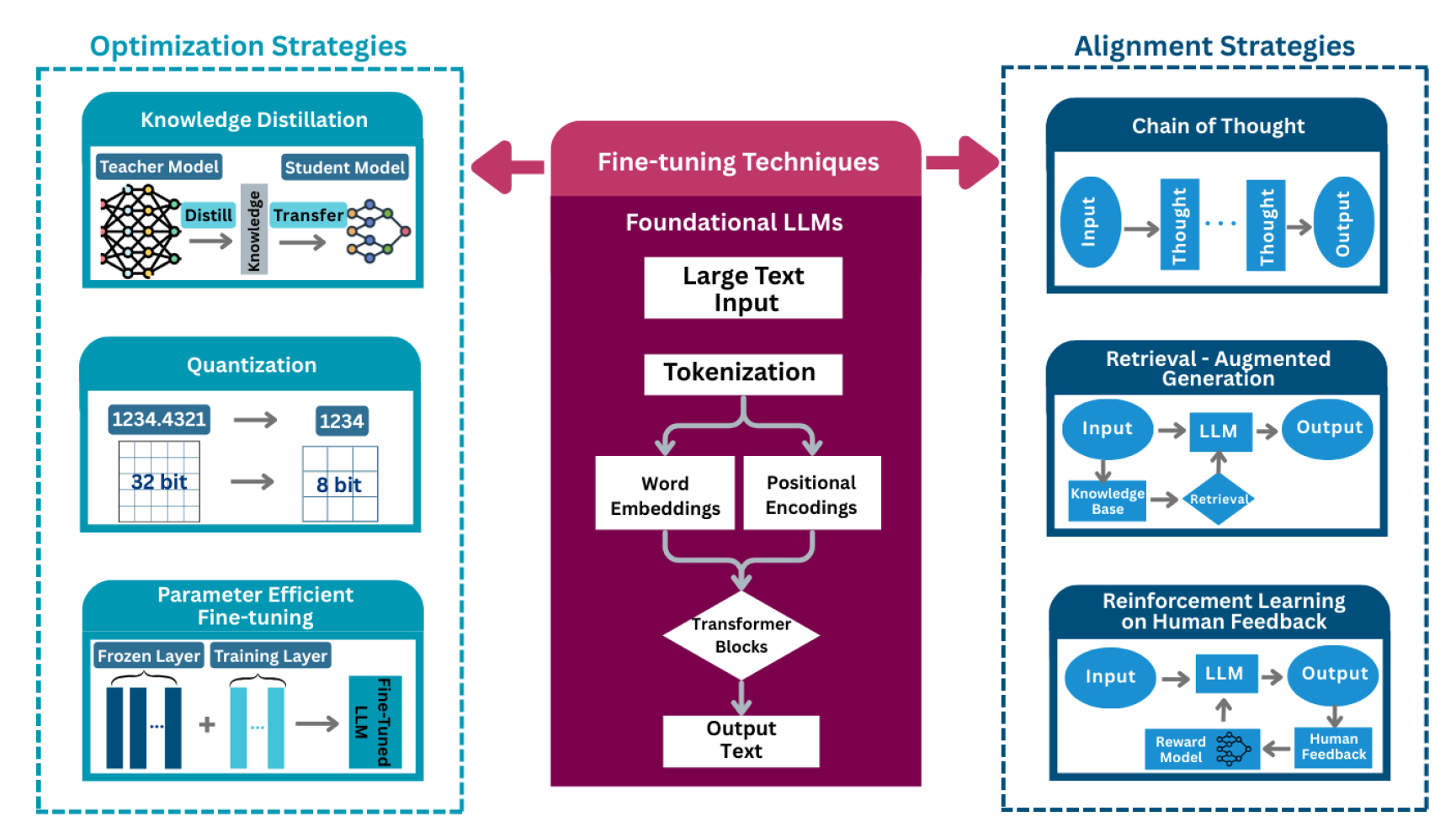

2.2. LLMs and Their Optimization

2.3. Vision-Language Models (VLMs) and Multimodal LLMs (MLLMs)

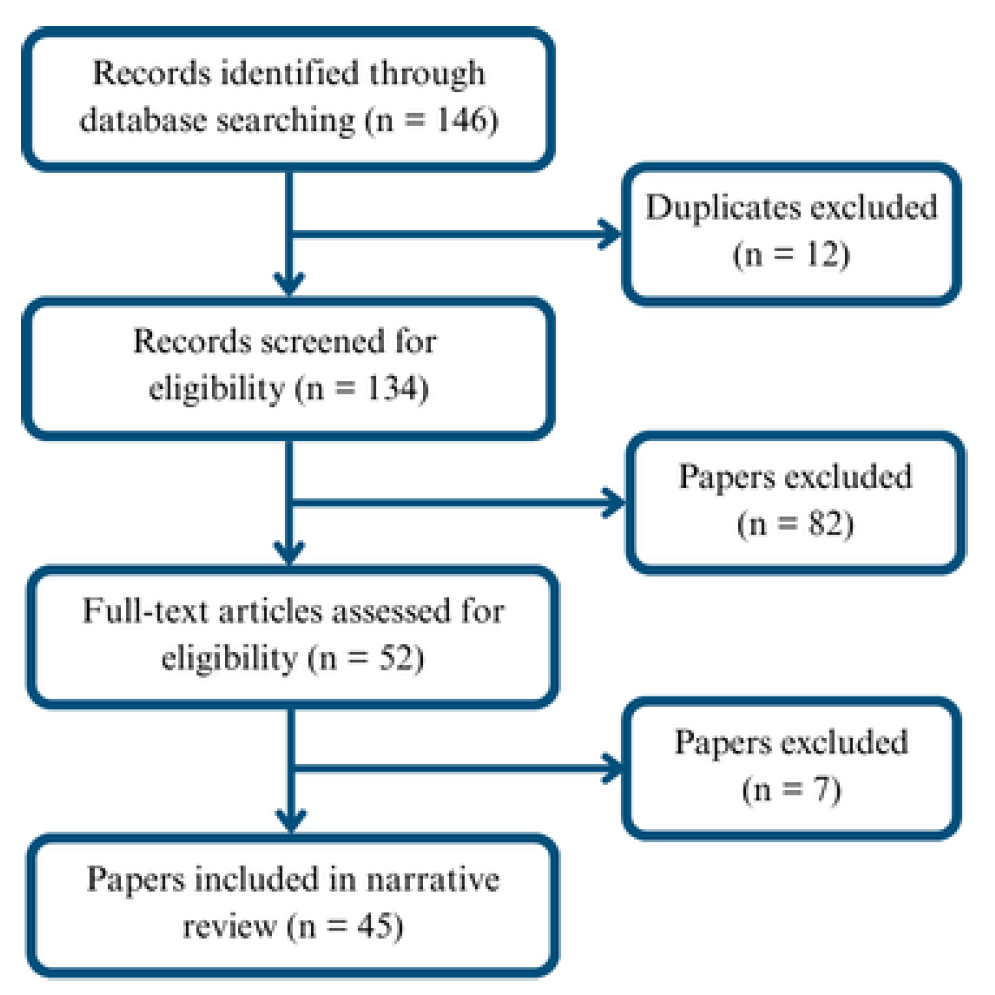

3. Methodology

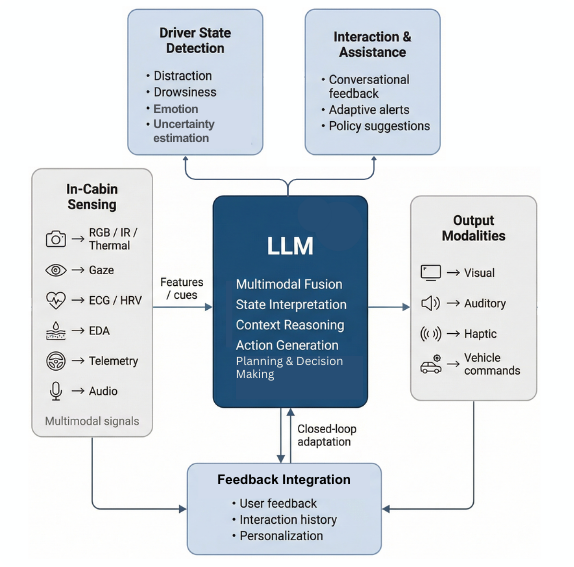

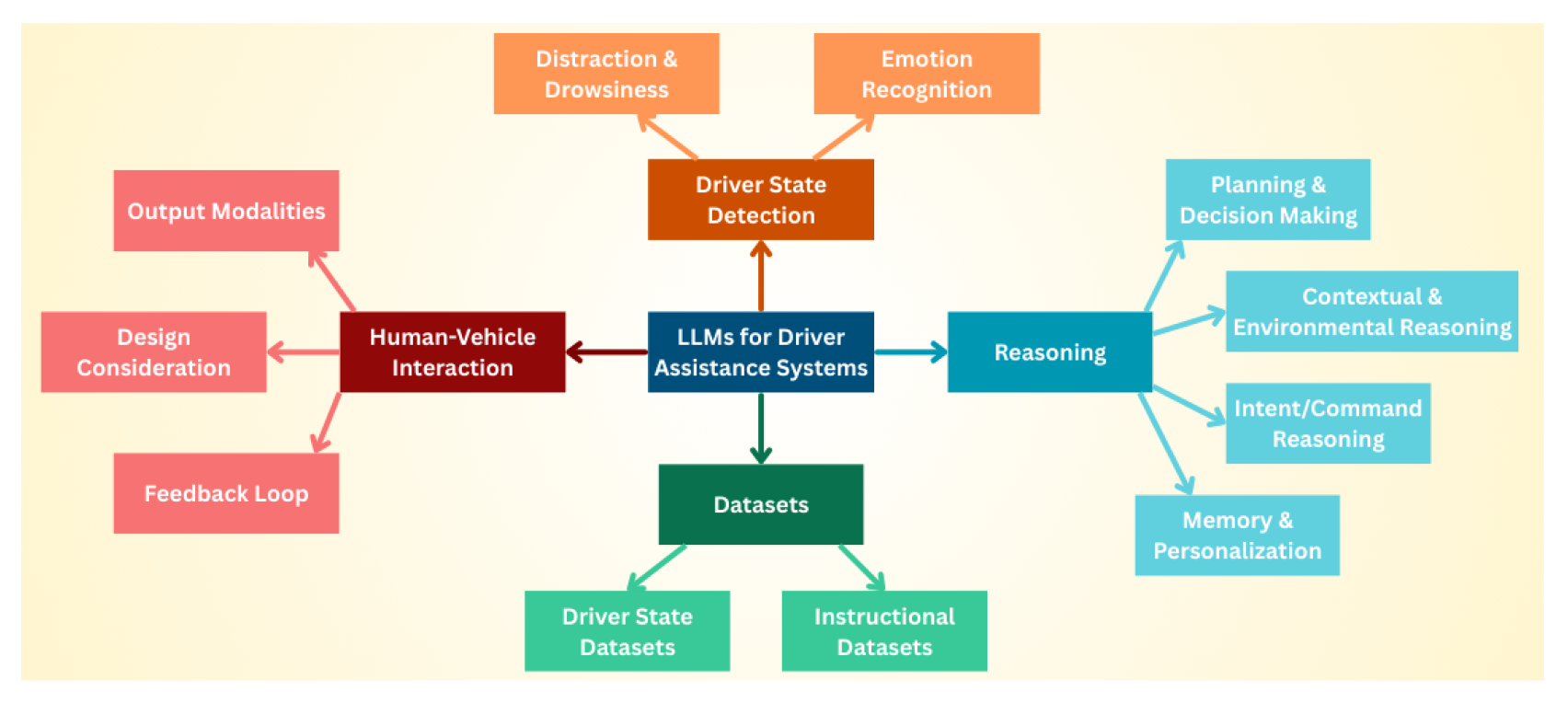

4. Driver State Detection with LLMs

4.1. In-Cabin Sensing Modalities

4.2. Distraction and Drowsiness

4.3. Emotion Recognition

5. LLM Reasoning Over Driver Context

5.1. Planning and Decision Making

5.2. Environmental and Contextual Reasoning

5.3. Command and Intent Reasoning

5.4. Memory and Personalization

6. Interaction and Intervention

6.1. Output Modalities and Design Considerations

6.2. Open- vs. Closed-Loop

7. Datasets and Benchmarks

8. Discussion

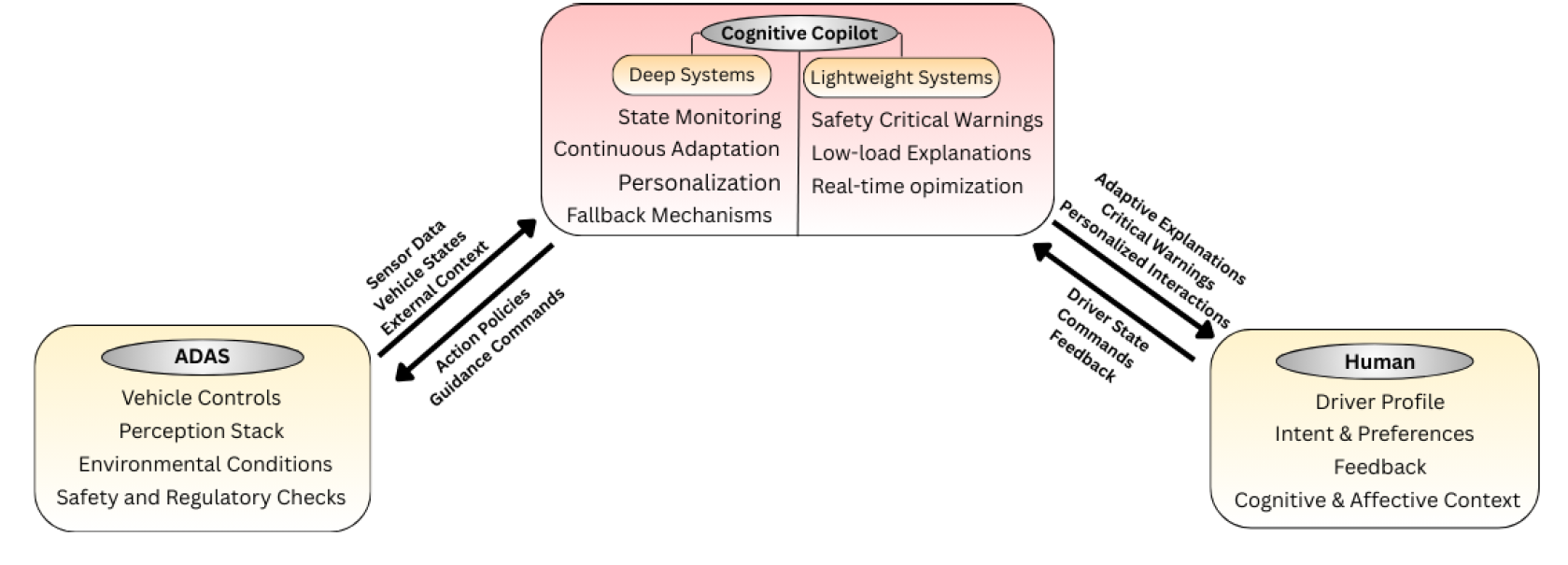

8.1. From ADAS to Cognitive Co-Pilots

8.2. Design Tensions in Human-Centric Driver Assistance

8.2.1. Personalization vs. Generalization

8.2.2. Transparency vs. Cognitive Load

8.2.3. Efficiency vs. Reliability

8.3. Rethinking Trust

8.4. Datasets, Methods, and Ethical Considerations

9. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Li, J.; Li, J.; Yang, G.; Yang, L.; Chi, H.; Yang, L. Applications of large language models and multimodal large models in autonomous driving: A comprehensive review. 2025. [Google Scholar]

- European Commission; Directorate-General for Internal Market, Industry, Entrepreneurship and SMEs; TRL; Seidl, M.; Edwards, M.; Hynd, D.; McCarthy, M.; Livadeas, A.; Carroll, J.; et al. General Safety Regulation – Technical study to assess and develop performance requirements and test protocols for various measures implementing the new General Safety Regulation, for accident avoidance and vehicle occupant, pedestrian and cyclist protection in case of collisions – Final report; Publications Office, 2021. [Google Scholar] [CrossRef]

- Yang, D.; Huang, S.; Xu, Z.; Li, Z.; Wang, S.; Li, M.; Wang, Y.; Liu, Y.; Yang, K.; Chen, Z.; et al. AIDE: A vision-driven multi-view, multi-modal, multi-tasking dataset for assistive driving perception. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023; pp. 20459–20470. [Google Scholar]

- Cañas, P.N.; Nieto, M.; Otaegui, O.; Rodríguez, I. Exploration of VLMs for Driver Monitoring Systems Applications. arXiv 2025, arXiv:2503.12281. [Google Scholar] [CrossRef]

- Rumpf, S. Sleepy driver puts Tesla on autopilot, leading police on high-speed chase in Germany. 2023. [Google Scholar]

- Cui, C.; Ma, Y.; Cao, X.; Ye, W.; Zhou, Y.; Liang, K.; Chen, J.; Lu, J.; Yang, Z.; Liao, K.D.; et al. A survey on multimodal large language models for autonomous driving. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, 2024; pp. 958–979. [Google Scholar]

- Yan, Y.; Liao, Y.; Xu, G.; Yao, R.; Fan, H.; Sun, J.; Wang, X.; Sprinkle, J.; An, Z.; Ma, M.; et al. Large language models for traffic and transportation research: Methodologies, state of the art, and future opportunities. arXiv 2025, arXiv:2503.21330. [Google Scholar] [CrossRef]

- Xu, Z.; Chen, T.; Huang, Z.; Xing, Y.; Chen, S. Personalizing driver agent using large language models for driving safety and smarter human–machine interactions. IEEE Intelligent Transportation Systems Magazine 2025. [Google Scholar] [CrossRef]

- Cui, C.; Ma, Y.; Cao, X.; Ye, W.; Wang, Z. Drive as you speak: Enabling human-like interaction with large language models in autonomous vehicles. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, 2024; pp. 902–909. [Google Scholar]

- Wen, S.; Middleton, M.; Ping, S.; Chawla, N.N.; Wu, G.; Feest, B.S.; Nadri, C.; Liu, Y.; Kaber, D.; Zahabi, M.; et al. AdaptiveCoPilot: Design and Testing of a NeuroAdaptive LLM Cockpit Guidance System in both Novice and Expert Pilots. In Proceedings of the 2025 IEEE Conference Virtual Reality and 3D User Interfaces (VR); IEEE, 2025; pp. 656–666.

- Gao, Y.; Yue, L.; Sun, J.; Shan, X.; Liu, Y.; Wu, X. WorkloadGPT: A Large Language Model Approach to Real-Time Detection of Pilot Workload. Applied Sciences 2024, 14, 8274. [Google Scholar] [CrossRef]

- Wahab, O.; Adda, M. Comprehensive Literature Review on Large Language Models and Smart Monitoring Devices for Stress Management. Procedia Computer Science 2025, 257, 166–173. [Google Scholar] [CrossRef]

- Wang, S.; Zhu, Y.; Li, Z.; Wang, Y.; Li, L.; He, Z. ChatGPT as your vehicle co-pilot: An initial attempt. IEEE Transactions on Intelligent Vehicles 2023, 8, 4706–4721. [Google Scholar] [CrossRef]

- Bond, Y.L.; Choe, M.; Hasan, B.K.; Siddiqui, A.; Jeon, M. ChatGPT on the Road: Leveraging Large Language Model-Powered In-vehicle Conversational Agents for Safer and More Enjoyable Driving Experience. arXiv 2025, arXiv:2508.08101. [Google Scholar]

- Xu, Z.; Chen, T.; Chen, S. A LLM-based Multimodal Warning System for Driver Assistance. In Proceedings of the 2024 IEEE 27th International Conference on Intelligent Transportation Systems (ITSC); IEEE, 2024; pp. 1527–1532. [Google Scholar]

- Markelius, A.; Lou, Y.; Galazka, M.; Lundgren, S.; Zemblys, R.; Lind, H.; Lowe, R. Investigating the Mitigation of Stress in Autonomous and Non-autonomous Vehicles Using LLM Feedback. In Proceedings of the International Conference on Human-Computer Interaction; Springer, 2025; pp. 108–127. [Google Scholar]

- Hernandez-Salinas, B.; Terven, J.; Chavez-Urbiola, E.; Cordova-Esparza, D.M.; Romero-Gonzalez, J.A.; Arguelles, A.; Cervantes, I. IDAS: Intelligent driving assistance system using RAG. IEEE Open Journal of Vehicular Technology 2024. [Google Scholar] [CrossRef]

- Zhang, K.; Wang, S.; Jia, N.; Zhao, L.; Han, C.; Li, L. Integrating visual large language model and reasoning chain for driver behavior analysis and risk assessment. Accident Analysis & Prevention 2024, 198, 107497. [Google Scholar] [CrossRef]

- Wu, Y.; Li, D.; Chen, Y.; Jiang, R.; Zou, H.P.; Fang, L.; Wang, Z.; Yu, P.S. Multi-agent autonomous driving systems with large language models: A survey of recent advances. arXiv 2025, arXiv:2502.16804. [Google Scholar] [CrossRef]

- Knapik, M.; Cyganek, B.; Balon, T. Multimodal driver condition monitoring system operating in the far-infrared spectrum. Electronics 2024, 13, 3502. [Google Scholar] [CrossRef]

- Tavakkoli, V.; Mohsenzadegan, K.; Kyamakya, K. Leveraging Context-Aware Emotion and Fatigue Recognition Through Large Language Models for Enhanced Advanced Driver Assistance Systems (ADAS). In Recent Advances in Machine Learning Techniques and Sensor Applications for Human Emotion, Activity Recognition and Support; Springer, 2024; pp. 49–85. [Google Scholar]

- Hu, C.; Li, X. Human-Centric Context and Self-Uncertainty-Driven Multi-Modal Large Language Model for Training-Free Vision-Based Driver State Recognition. IEEE Transactions on Intelligent Transportation Systems 2025. [Google Scholar] [CrossRef]

- Li, B.; Luo, M.; Zhang, D. Facial Emotion Detection Research Based on an Improved Multi-modal LLM. In Proceedings of the 2024 3rd International Conference on Artificial Intelligence, Human-Computer Interaction and Robotics (AIHCIR); IEEE, 2024; pp. 61–66. [Google Scholar]

- Chen, X.; Wang, X.; Fang, C.; Fang, L.; Gong, W.; Liu, C.; Wang, S.J. Emotion-aware Design in Automobiles: Embracing Technology Advancements to Enhance Human-vehicle Interaction. In Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems, 2025; pp. 1–18. [Google Scholar]

- Taveekitworachai, P.; Suntichaikul, P.; Nukoolkit, C.; Thawonmas, R. Speed up! Cost-effective large language model for ADAS via knowledge distillation. In Proceedings of the 2024 IEEE Intelligent Vehicles Symposium (IV); IEEE, 2024; pp. 1933–1938. [Google Scholar]

- Song, G.; Lim, J.; Jeong, C.; Kang, C.M. Enhancing Inference Performance of a Personalized Driver Assistance System through LLM Fine-Tuning and Quantization. In Proceedings of the 2025 International Conference on Electronics, Information, and Communication (ICEIC); IEEE, 2025; pp. 1–4. [Google Scholar]

- Cheng, Z.; Cheng, Z.Q.; He, J.Y.; Wang, K.; Lin, Y.; Lian, Z.; Peng, X.; Hauptmann, A. Emotion-LLaMA: Multimodal emotion recognition and reasoning with instruction tuning. Advances in Neural Information Processing Systems 2024, 37, 110805–110853. [Google Scholar]

- Sun, Y.; Salami Pargoo, N.; Jin, P.; Ortiz, J. Optimizing autonomous driving for safety: A human-centric approach with LLM-enhanced RLHF. In Proceedings of the Companion of the 2024 on ACM International Joint Conference on Pervasive and Ubiquitous Computing, 2024; pp. 76–80. [Google Scholar]

- Hasan, M.Z.; Chen, J.; Wang, J.; Rahman, M.S.; Joshi, A.; Velipasalar, S.; Hegde, C.; Sharma, A.; Sarkar, S. Vision-language models can identify distracted driver behavior from naturalistic videos. IEEE Transactions on Intelligent Transportation Systems 2024, 25, 11602–11616. [Google Scholar] [CrossRef]

- Girbacia, F.; Voinea, G.D.; Danu, M.D.; Buzdugan, I.D.; Duguleana, M. Detection of Phone Distraction While Driving Using Open Visual-Language Models. In Proceedings of the International Congress of Automotive and Transport Engineering; Springer, 2024; pp. 281–286. [Google Scholar]

- Dona, M.A.M.; Cabrero-Daniel, B.; Yu, Y.; Berger, C. Evaluating and Enhancing Trustworthiness of LLMs in Perception Tasks. In Proceedings of the 2024 IEEE 27th International Conference on Intelligent Transportation Systems (ITSC); IEEE, 2024; pp. 431–438. [Google Scholar]

- Chi, H.; Yang, H.; Yang, L.; Lv, C. VLM-DM: Visual Language Models for Multitask Domain Adaptation in Driver Monitoring. In Proceedings of the 2025 IEEE Intelligent Vehicles Symposium (IV); IEEE, 2025; pp. 1280–1285. [Google Scholar]

- Huang, S.; Zhao, X.; Wei, D.; Song, X.; Sun, Y. Chatbot and fatigued driver: Exploring the use of LLM-based voice assistants for driving fatigue. In Proceedings of the Extended Abstracts of the CHI Conference on Human Factors in Computing Systems, 2024; pp. 1–8. [Google Scholar]

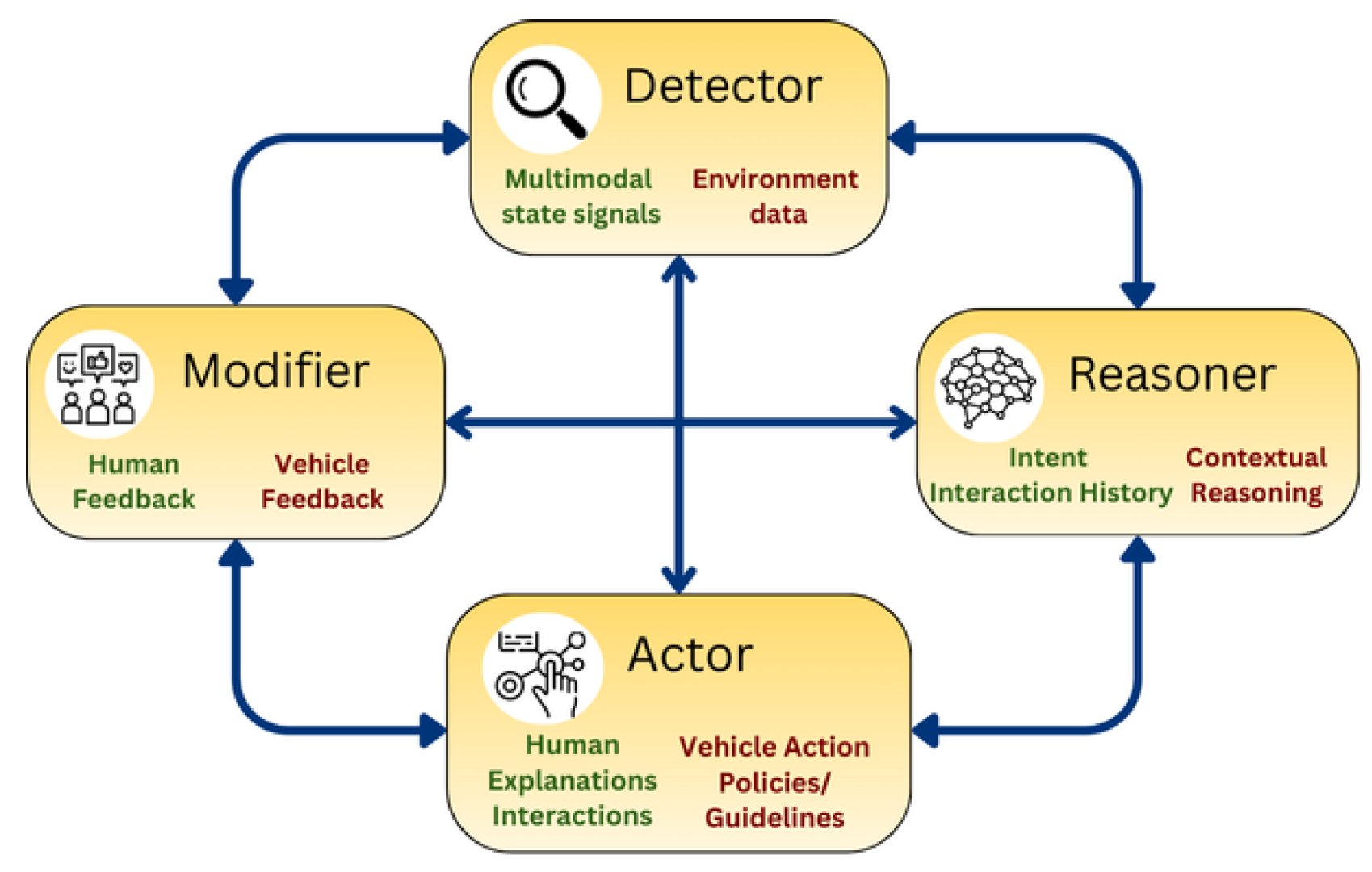

- Fang, S.; Liu, J.; Xu, C.; Lv, C.; Hang, P.; Sun, J. Interact, instruct to improve: A LLM-driven parallel actor-reasoner framework for enhancing autonomous vehicle interactions. arXiv 2025, arXiv:2503.00502. [Google Scholar] [CrossRef]

- Yang, Y.; Zhang, Q.; Li, C.; Marta, D.S.; Batool, N.; Folkesson, J. Human-centric autonomous systems with LLMs for user command reasoning. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, 2024; pp. 988–994. [Google Scholar]

- Lo, I.C.; Rau, P.L.P. D-Twins: Your Digital Twin Designed for Real-Time Boredom Intervention. In Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems, 2025; pp. 1–15. [Google Scholar]

- Huang, B.; Lv, J.; Qiang, L. Influencing driving safety by matching AI assistant’s verbal emotions to driver: A randomized controlled trial on performance, attention, and emotion. Computers in Human Behavior 2025, 169, 108667. [Google Scholar] [CrossRef]

- Hu, C.; Li, X.; Pang, J. Trustworthy Driver State Perception via Contextual Interaction-Driven Evidential Vision-Language Fusion in Vehicular Cyber-Physical Systems. IEEE Transactions on Intelligent Transportation Systems 2025. [Google Scholar] [CrossRef]

- Xiang, W.; Li, M.; Yan, J.; Zheng, M.; Zhu, H.; Jiang, M.; Sun, L. Driver Assistant: Persuading Drivers to Adjust Secondary Tasks Using Large Language Models. arXiv 2025, arXiv:2508.05238. [Google Scholar] [CrossRef]

- Zou, Z.; Khan, A.; Lwin, M.; Alnajjar, F.; Mubin, O. Investigating the impacts of auditory and visual feedback in advanced driver assistance systems: a pilot study on driver behavior and emotional response. Frontiers in Computer Science 2025, 6, 1499165. [Google Scholar] [CrossRef]

- Choe, M.; Bosch, E.; Dong, J.; Alvarez, I.; Oehl, M.; Jallais, C.; Alsaid, A.; Nadri, C.; Jeon, M. Emotion GaRage Vol. IV: Creating empathic in-vehicle interfaces with generative AIs for automated vehicle contexts. In Adjunct Proceedings of the 15th International Conference on Automotive User Interfaces and Interactive Vehicular Applications, 2023; pp. 234–236. [Google Scholar]

- Cui, C.; Yang, Z.; Zhou, Y.; Ma, Y.; Lu, J.; Li, L.; Chen, Y.; Panchal, J.; Wang, Z. Personalized autonomous driving with large language models: Field experiments. In Proceedings of the 2024 IEEE 27th International Conference on Intelligent Transportation Systems (ITSC); IEEE, 2024; pp. 20–27. [Google Scholar]

- Cui, C.; Ma, Y.; Cao, X.; Ye, W.; Wang, Z. Receive, reason, and react: Drive as you say, with large language models in autonomous vehicles. IEEE Intelligent Transportation Systems Magazine 2024, 16, 81–94. [Google Scholar] [CrossRef]

- Ma, Y.; Cui, C.; Cao, X.; Ye, W.; Liu, P.; Lu, J.; Abdelraouf, A.; Gupta, R.; Han, K.; Bera, A.; et al. LaMPilot: An open benchmark dataset for autonomous driving with language model programs. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024; pp. 15141–15151. [Google Scholar]

- Krömker, H. HCI in Mobility, Transport, and Automotive Systems; Springer, 2021. [Google Scholar]

- .

- Rahman, M.S.; Venkatachalapathy, A.; Sharma, A.; Wang, J.; Gursoy, S.V.; Anastasiu, D.; Wang, S. Synthetic distracted driving (SynDD1) dataset for analyzing distracted behaviors and various gaze zones of a driver. Data in Brief 2023, 46, 108793. [CrossRef] [PubMed]

- Ortega, J.D.; Kose, N.; Cañas, P.; Chao, M.A.; Unnervik, A.; Nieto, M.; Otaegui, O.; Salgado, L. DMD: A large-scale multi-modal driver monitoring dataset for attention and alertness analysis. In Proceedings of the European Conference on Computer Vision; Springer, 2020; pp. 387–405. [Google Scholar]

- Liu, W.; Qian, J.; Yao, Z.; Jiao, X.; Pan, J. Convolutional two-stream network using multi-facial feature fusion for driver fatigue detection. Future Internet 2019, 11, 115. [Google Scholar] [CrossRef]

- Jegham, I.; Khalifa, A.B.; Alouani, I.; Mahjoub, M.A. A novel public dataset for multimodal multiview and multispectral driver distraction analysis: 3MDAD. Signal Processing: Image Communication 2020, 88, 115960. [Google Scholar] [CrossRef]

- Kuzdeuov, A.; Aubakirova, D.; Koishigarina, D.; Varol, H.A. TFW: Annotated thermal faces in the wild dataset. IEEE Transactions on Information Forensics and Security 2022, 17, 2084–2094. [Google Scholar] [CrossRef]

- Kuzdeuov, A.; Koishigarina, D.; Aubakirova, D.; Abushakimova, S.; Varol, H.A. SF-TL54: A thermal facial landmark dataset with visual pairs. In Proceedings of the 2022 IEEE/SICE International Symposium on System Integration (SII); IEEE, 2022; pp. 748–753. [Google Scholar]

- Han, Z.; Zhu, B.; Xu, Y.; Song, P.; Yang, X. Benchmarking and Bridging Emotion Conflicts for Multimodal Emotion Reasoning. arXiv 2025, arXiv:2508.01181. [Google Scholar] [CrossRef]

- Qian, T.; Chen, J.; Zhuo, L.; Jiao, Y.; Jiang, Y.G. NuScenes-QA: A multi-modal visual question answering benchmark for autonomous driving scenario. In Proceedings of the AAAI Conference on Artificial Intelligence, 2024; Volume 38, pp. 4542–4550. [Google Scholar]

- Sima, C.; Renz, K.; Chitta, K.; Chen, L.; Zhang, H.; Xie, C.; Beißwenger, J.; Luo, P.; Geiger, A.; Li, H. DriveLM: Driving with graph visual question answering. In Proceedings of the European Conference on Computer Vision; Springer, 2024; pp. 256–274. [Google Scholar]

- Geiger, A.; Lenz, P.; Stiller, C.; Urtasun, R. Vision meets robotics: The KITTI dataset. The International Journal of Robotics Research 2013, 32, 1231–1237. [Google Scholar] [CrossRef]

- Caesar, H.; Bankiti, V.; Lang, A.H.; Vora, S.; Liong, V.E.; Xu, Q.; Krishnan, A.; Pan, Y.; Baldan, G.; Beijbom, O. nuScenes: A multimodal dataset for autonomous driving. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020; pp. 11621–11631. [Google Scholar]

- Yu, F.; Chen, H.; Wang, X.; Xian, W.; Chen, Y.; Liu, F.; Madhavan, V.; Darrell, T. BDD100K: A diverse driving dataset for heterogeneous multitask learning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020; pp. 2636–2645.

| Sensors | Used for | Key limitations / failure modes | Edge feasibility | Privacy risk |
|---|---|---|---|---|
| Vision / Audio | | | | |
| FIR (thermal) [20] | Drowsiness (yawn, head droop, head pose); distraction | Glasses block eyes; temperature variance; low spatial detail | Med–high efficiency | High |
| NIR / IR [23][3] | Facial emotion; drowsiness; distraction; robust low-light monitoring | Occlusion (hair, glasses); pose variation; specular reflections | Efficient perception; temporal complexity | Med–High |
| RGB [3][29][30][18] | Distraction / secondary tasks; facial emotion; drowsiness; body/pose behavior | Low-light sensitivity; occlusion; motion blur; false detections | Low–Med; time-consuming inference | High |
| Depth / RGB-D [32][21] | Posture / geometry cues; object detection; silent interaction | Noisy depth in real time; reflective surfaces; limited range / placement constraints | Low; 3D processing overhead | Med–High |
| Audio [27][14][33][34][35] | Emotion; intent / command; fatigue | Background noise; cognitive interference; latency; speech variability | Med–high with hybrid edge-cloud frameworks | Med–High |
| Physiological | | | | |
| EEG [36][21] | Drowsiness; cognitive state; stress; workload; emotion | Intrusive; noisy; high inter-subject variability | Low; high-dimensional data | High |
| ECG / HRV [16][21][36][24][32][3] | Arousal; drowsiness; workload | Motion artefacts; signal noise; delayed HRV response | Med; temporal complexity | Med–High |
| EDA / GSR [37][28][24][38] | Stress; arousal; frustration | Contact sensitivity; drift; influenced by hydration/skin properties/placement | Wearables feasible; ambiguous LLM interpretation | Med |
| Eye tracking [39][3][4][14][36] | Attention; situational awareness; drowsiness; workload | Lighting variation; occlusion; calibration sensitivity | Med–low efficiency | Med |
| Vehicle telemetry | | | | |
| Steering wheel (angle/torque/variability) [14][23][13][28][21] | Fatigue; drowsiness; distraction | Reactive (not proactive); indirect correlation; limited personalization | High efficiency | Low |
| Pedal interaction (brake/throttle) [23][33][40] | Attention level; situational awareness; arousal proxies; driving performance | Context-dependent; confounded by driving style/traffic; one-size-fits-all assumptions | High efficiency | Low |
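
The sensor table implies that no single modality is reliable in every condition: eye tracking fails under occlusion or poor lighting, while steering and pedal telemetry are robust but only indirectly tied to driver state. As a minimal sketch, assuming each modality already outputs a drowsiness probability in [0, 1] plus a reliability weight, reliability-weighted late fusion lets a monitoring pipeline keep working when one sensor drops out. The `ModalityReading` schema, the weights, and `fuse_drowsiness` are hypothetical and are not taken from any of the reviewed studies.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ModalityReading:
    """One sensor's drowsiness estimate (hypothetical schema, not from the reviewed studies)."""
    name: str
    score: Optional[float]  # drowsiness probability in [0, 1]; None if the sensor produced no output
    reliability: float      # assumed weight in [0, 1], e.g. lowered when glasses occlude the eyes


def fuse_drowsiness(readings: list[ModalityReading]) -> Optional[float]:
    """Reliability-weighted average of the modalities that produced a score.

    Returns None when no modality offers usable evidence, so the caller decides
    how to handle missing data instead of receiving a fabricated score.
    """
    usable = [r for r in readings if r.score is not None and r.reliability > 0]
    total_weight = sum(r.reliability for r in usable)
    if total_weight == 0:
        return None
    return sum(r.score * r.reliability for r in usable) / total_weight


if __name__ == "__main__":
    readings = [
        ModalityReading("FIR thermal", score=0.8, reliability=0.6),
        ModalityReading("eye tracking", score=None, reliability=0.9),  # e.g. occluded by glasses
        ModalityReading("steering telemetry", score=0.5, reliability=0.3),
    ]
    print(f"fused drowsiness: {fuse_drowsiness(readings):.2f}")
```

With the thermal camera at 0.8 (weight 0.6), eye tracking unavailable, and steering telemetry at 0.5 (weight 0.3), the fused score is (0.8 × 0.6 + 0.5 × 0.3) / 0.9 ≈ 0.70. Returning `None` rather than a default value when nothing usable remains keeps the decision to intervene with the downstream logic.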

| | Planning and Decision Making | Environmental or Contextual Reasoning | Command or Intent Reasoning | Memory and Personalization |
|---|---|---|---|---|
| Driver State Detection and Interpretation | [18]: VLM, reasoning chain framework (7 steps); [20]: LLM (thermal fusion) + zero-shot | [22]: MLLMs with Human-Centric Context Generator (HCG) for scene graphs; [38]: VLM+LLM for contextual interactions, with evidential fusion for uncertainty; [4][30]: VLM for Driver Monitoring (Idefics2, PaliGemma) + prompt engineering; [27]: Emotion-LLaMA for aligning emotional tone; [32]: LoRA-optimized multi-state detection | – | – |
| Interaction with Humans | [15][8]: LLM with CoT prompting; [16]: LLM (GPT-4) with prompt engineering | [17]: RAG retrieval from external knowledge; [16]: LLM feedback based on contextual relevance; [33][14]: ChatGPT-4 voice assistant; [27]: MLLM for affect-aware reasoning | [9][43][13][37]: LLM interprets natural language commands; [35][39]: LLM + CoT for command interpretation; [17]: LLM with RAG for queries | [15][8]: memory module (individualization profile) + RAG; [13]: memory module (working/procedural/semantic); [28]: human data converted into preferences; [14]: ChatGPT for adaptive dialogue |
| Interaction with Vehicles | [9][43][44][13]: LLM generates action policies for vehicles; [34]: LLM (Llama 3) plans and refines vehicle maneuvers | [9][43]: LLMs process contextual data with CoT prompting; [34]: LLM estimates driving styles | [44][13]: LLM generates driving policy code via intent reasoning; [34]: human instructions as prompts | [34]: interaction memory database + memory partition module |
| Closed-Loop Systems | [42]: GPT-4 translates natural commands into controls; [36]: EEG + LLM dialogue agent; [21] (conceptual): LLMs for decision support (emotion/fatigue recognition) | [42]: GPT-4 for context and emotional state; [36]: affect-aware dialogue interaction; [21]: LLMs with bio-signal + context fusion | [42]: GPT-4 interprets direct vs. indirect intentions | [42]: memory module for past interactions; [36]: LLMs LoRA fine-tuned on the driver's dialogue data to embody the user's personality and emotional traits; [21]: personalized ADAS through empathetic interactions |

| Type | Focus | Datasets | Modalities | Utilization in Reviewed Studies |
|---|---|---|---|---|
| Driver State | Distraction | StateFarm; SynDD1, DMD; SAM-DD | RGB; RGB; RGB | [30] [29][29][29][32] |
| | Drowsiness | NTHU-DDD | RGB | [32] |
| | Emotion | KMU-FED; FER2013; MERR, CA-MER | RGB; RGB; Multimodal (Audio/Visual/Text) | [32][23][23][24] |
| | Multi-state | AIDE (Emotion, Drowsiness, Distraction); 3MDAD (Distraction, Drowsiness); TFW / SF-TL54 (Distraction, Drowsiness) | RGB (internal & external view); RGB; Far-infrared spectrum | [22][38]; [22][38]; [20] |
| Instruction | Command grounding | UCU; Talk2Car | Text; RGB + LiDAR + RADAR + GPS + Text (commands) | [35]; [9] |
| | Reasoning / QA | DriveLM, nuScenes-QA | RGB + LiDAR + RADAR + GPS + textual Q/A | [17] |
| | Program / Policy synthesis | LaMPilot-Bench | Text (commands) + simulation environment | [44] |
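
Because most driver-state datasets in the table target one or two states and a single modality, checking coverage is a practical first step when scoping a system. The sketch below encodes the driver-state rows of the table as a Python dictionary and lists which datasets cover a given state with a given modality. The entries are transcribed from the table only (modalities as listed there, not as described in the original dataset papers), and `DatasetInfo` and `datasets_for` are hypothetical names.

```python
from typing import NamedTuple


class DatasetInfo(NamedTuple):
    """Driver states and sensing modalities, as listed in the table above."""
    states: frozenset[str]
    modalities: frozenset[str]


MULTIMODAL = frozenset({"audio", "visual", "text"})

DATASETS: dict[str, DatasetInfo] = {
    "StateFarm": DatasetInfo(frozenset({"distraction"}), frozenset({"RGB"})),
    "SynDD1": DatasetInfo(frozenset({"distraction"}), frozenset({"RGB"})),
    "DMD": DatasetInfo(frozenset({"distraction"}), frozenset({"RGB"})),
    "SAM-DD": DatasetInfo(frozenset({"distraction"}), frozenset({"RGB"})),
    "NTHU-DDD": DatasetInfo(frozenset({"drowsiness"}), frozenset({"RGB"})),
    "KMU-FED": DatasetInfo(frozenset({"emotion"}), frozenset({"RGB"})),
    "FER2013": DatasetInfo(frozenset({"emotion"}), frozenset({"RGB"})),
    "MERR": DatasetInfo(frozenset({"emotion"}), MULTIMODAL),
    "CA-MER": DatasetInfo(frozenset({"emotion"}), MULTIMODAL),
    "AIDE": DatasetInfo(frozenset({"emotion", "drowsiness", "distraction"}), frozenset({"RGB"})),
    "3MDAD": DatasetInfo(frozenset({"distraction", "drowsiness"}), frozenset({"RGB"})),
    "TFW": DatasetInfo(frozenset({"distraction", "drowsiness"}), frozenset({"FIR"})),
    "SF-TL54": DatasetInfo(frozenset({"distraction", "drowsiness"}), frozenset({"FIR"})),
}


def datasets_for(state: str, modality: str) -> list[str]:
    """Names of listed datasets that cover `state` with `modality`, sorted alphabetically."""
    return sorted(
        name
        for name, info in DATASETS.items()
        if state in info.states and modality in info.modalities
    )


if __name__ == "__main__":
    for state, modality in [("drowsiness", "RGB"), ("drowsiness", "FIR"), ("emotion", "FIR")]:
        print(state, modality, datasets_for(state, modality) or "no listed dataset")
```

An empty result, such as emotion recognition from far-infrared imagery, flags a combination that none of the listed datasets supports directly.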

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.