Submitted: 09 March 2026

Posted: 10 March 2026


Abstract

Keywords:

1. Introduction

2. Smart Urbanism and the Conceptual Gap

2.1. Smart Urbanism as a Governance Project

2.2. The Prioritization Turn: From “Smart Services” to AI-Enabled Capital Allocation

2.3. Why Existing Smart City Models Under-Theorize Psychosocial Outcomes

2.4. Why Climate-Resilient Planning Frameworks Omit Algorithmic Governance Mechanisms

2.5. The Conceptual Gap

2.6. Comparison Table: What Conventional Smart Urbanism Omits and What Caring Cities Adds

| Governance Dimension | Conventional Smart-Urbanism Tendency | Caring Urban AI Intervention |
|---|---|---|
| Planning objective | Efficiency, cost-effectiveness, performance metrics | Well-being-supportive living conditions formalized as objective terms |
| Equity treatment | Aspirational language, distribution evaluated post hoc | Equity encoded as auditable constraints (floors, caps, equal-opportunity conditions) |
| Climate uncertainty | Single-scenario scoring, point-estimate risk maps | Robustness logic across plausible futures; stress testing and regret minimization |
| Data politics | Data treated as neutral input | Classification and representation treated as distributive choices requiring stewardship |
| Accountability | Vendor opacity, limited explainability | Documentation, auditing, contestability, and redress treated as core requirements |
| Participation | Consultation around outputs | Co-governance of problem framing, indicators, and constraints; ongoing oversight |
| Mental well-being | Diffuse “quality of life” claims | Psychosocial mediators specified and protected through objectives/constraints |

3. Materials & Methods

3.1. Study Design and Framework-Construction Method

3.2. Evidence Domains and Search Strategy

3.3. Inclusion/Exclusion Logic

3.4. Concept Extraction, Integration, and Derivation of the Five-Layer Architecture

3.5. Operationalization Logic for Propositions and Specification Guide

4. Results

4.1. Climate Risk, Resilience, and Deep Uncertainty

4.2. Urban Well-Being and Mental Health as Planning-Relevant Outcomes

4.3. Psychosocial Mediators

- Housing stability and security, including predictability of shelter and tenure-related stress burdens [17].

- Perceived safety and environmental threat, shaped by both physical conditions and institutional trust [16].

- Control and agency, including residents’ perceived ability to manage risks and influence decisions [45].

4.4. AI-Enabled Planning Systems as Socio-Technical Decision Infrastructures

4.5. Objective Functions, Equity Constraints, and Robustness Logic

4.6. Algorithmic Accountability, Documentation, Auditing, and Contestability

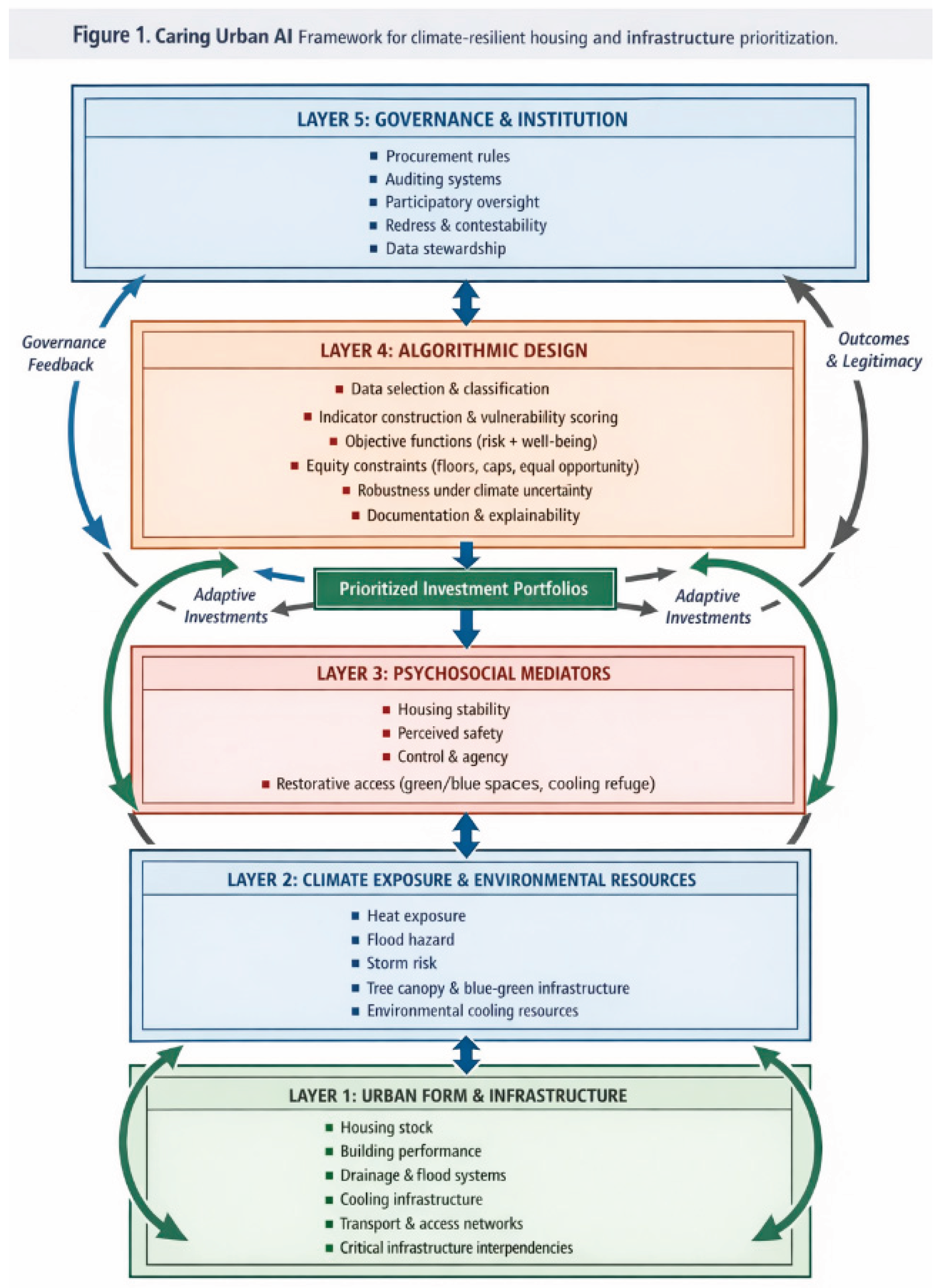

5. The Caring Urban AI Framework

5.1. Core Premise

5.2. Five-Layer Architecture

- Data selection and classification, including the politics of categories and missingness [26].

- Objective function specification, including explicit inclusion of psychosocial mediators and service reliability.

5.3. Feedback Loops and Dynamic Behavior

- Investment-to-mediator loop: housing and infrastructure interventions reshape psychosocial mediators (e.g., housing stability and perceived safety), thereby affecting well-being trajectories [17].

- Algorithm-to-investment loop: prioritization outputs become implementation sequences, reshaping Layer 1 and redistributing protection and services.

5.4. Figure Description (Conceptual)

5.5. Caring Urban AI within Digital Urban Governance Architectures

6. Propositional Development

- Directional expectation. Systems with mediator-inclusive objectives will generate investment portfolios that more consistently improve well-being-supportive living conditions than systems that optimize only hazard reduction and cost.

- Empirical test route. Comparative policy evaluation of portfolio outputs before/after objective revisions, combined with mixed-method assessment of mediator-relevant indicators and resident-reported experiences.

- Directional expectation. Under-represented neighborhoods will be systematically under-prioritized for climate-resilient housing and infrastructure investments even when aggregate model performance appears acceptable.

- Empirical test route. Data and model audit linking missingness and proxy bias to prioritization outcomes, supplemented by participatory validation or targeted ground-truthing of high-risk areas.

- Directional expectation. Unconstrained systems will widen spatial disparities in climate protection and well-being-supportive living conditions compared with constrained systems that enforce service floors or disparity caps.

- Empirical test route. Counterfactual simulation comparing unconstrained vs. equity-constrained portfolios on the same project universe, evaluating disparity metrics and distributional coverage.

- Directional expectation. Portfolios chosen under robustness criteria will show fewer failure modes and lower regret under alternative climate scenarios than portfolios optimized for a single forecast.

- Empirical test route. Scenario stress-testing of portfolios within a digital twin or planning model, comparing regret and service failure outcomes across plausible hazard trajectories.

- Directional expectation. When restorative access objectives are paired with anti-displacement governance and equity constraints, portfolios will deliver larger well-being co-benefits and fewer adverse distributional effects than portfolios that treat green/blue infrastructure solely as amenity or aesthetic improvement.

- Empirical test route. Policy evaluation comparing intervention areas with and without explicit anti-displacement safeguards, assessing changes in access, perceived comfort/safety, and displacement pressure indicators.

- Directional expectation. Systems with institutionalized participatory oversight will exhibit higher legitimacy (trust and acceptance) and indicators that better capture psychosocial mediators, reducing the psychosocial harms associated with technocratic allocation.

- Empirical test route. Comparative process evaluation across governance models, measuring changes in objectives/constraints after participation and assessing trust, contestation uptake, and perceived agency.

- Directional expectation. Prioritization systems with strong documentation and routine audits will show lower persistence of systematic distributive errors than systems governed only by transparency statements or one-time evaluations.

- Empirical test route. Cross-sectional governance audit scoring documentation and monitoring practices, linked to observed correction rates and identified bias incidents over time.

- Directional expectation. Cities with explicit stewardship and contestability arrangements will exhibit higher-quality data inputs for prioritization (reduced missingness in marginalized areas) and higher public cooperation than cities where data practices are opaque or vendor-dominated.

- Empirical test route. Comparative institutional analysis of stewardship models linked to participation rates, data completeness metrics, and survey-based trust indicators in affected communities.
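The counterfactual simulation route for the equity-constraint proposition (unconstrained vs. equity-constrained portfolios on the same project universe) can be illustrated with a small sketch. Everything in it is hypothetical: the `Project` records, costs, benefit scores, budget, floor, and cap are invented for illustration; exhaustive subset search stands in for whatever optimizer a planning system would actually use; and disparity is measured simply as the gap between the best- and worst-served neighborhood.

```python
from dataclasses import dataclass
from itertools import combinations
from typing import Dict, Optional, Sequence, Tuple


@dataclass(frozen=True)
class Project:
    """Hypothetical candidate project; risk_reduction is an invented benefit score."""
    name: str
    neighborhood: str
    cost: float
    risk_reduction: float


def neighborhood_benefit(portfolio: Sequence[Project], neighborhoods: Sequence[str]) -> Dict[str, float]:
    """Sum risk reduction per neighborhood (neighborhoods with no projects get 0)."""
    totals = {n: 0.0 for n in neighborhoods}
    for p in portfolio:
        totals[p.neighborhood] += p.risk_reduction
    return totals


def disparity(portfolio: Sequence[Project], neighborhoods: Sequence[str]) -> float:
    """Gap between the best- and worst-served neighborhood."""
    totals = neighborhood_benefit(portfolio, neighborhoods)
    return max(totals.values()) - min(totals.values())


def best_portfolio(projects, budget, floor=None, cap=None) -> Optional[Tuple[Project, ...]]:
    """Exhaustively search subsets within budget, maximizing total risk reduction.

    floor: service floor; every neighborhood must receive at least this benefit.
    cap:   disparity cap; the best-minus-worst neighborhood gap may not exceed this.
    Both are applied as feasibility conditions, not as weights in the objective.
    Returns None if no subset satisfies the constraints.
    """
    neighborhoods = sorted({p.neighborhood for p in projects})
    best, best_value = None, float("-inf")
    for k in range(len(projects) + 1):
        for combo in combinations(projects, k):
            if sum(p.cost for p in combo) > budget:
                continue
            totals = neighborhood_benefit(combo, neighborhoods)
            if floor is not None and min(totals.values()) < floor:
                continue
            if cap is not None and max(totals.values()) - min(totals.values()) > cap:
                continue
            value = sum(totals.values())
            if value > best_value:
                best, best_value = combo, value
    return best


# Hypothetical project universe shared by both runs.
projects = [
    Project("levee_A", "A", cost=4.0, risk_reduction=10.0),
    Project("drain_A", "A", cost=3.0, risk_reduction=6.0),
    Project("cooling_B", "B", cost=3.0, risk_reduction=4.0),
    Project("retrofit_C", "C", cost=2.0, risk_reduction=2.0),
]
hoods = sorted({p.neighborhood for p in projects})

unconstrained = best_portfolio(projects, budget=9.0)
floored = best_portfolio(projects, budget=9.0, floor=2.0)

for label, portfolio in [("unconstrained", unconstrained), ("service floor", floored)]:
    print(label, [p.name for p in portfolio], "disparity:", disparity(portfolio, hoods))
```

With these invented numbers, the unconstrained optimum places nearly all risk reduction in neighborhood A and nothing in B (total 18, disparity 16), while a floor of 2.0 per neighborhood yields a portfolio with somewhat lower total benefit (16) and half the disparity (8). Exhaustive search is only tractable for a handful of projects; a realistic project universe would call for an integer-programming formulation of the same constraints.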

7. Governance and Planning Implications

7.1. Procurement as a Governance Lever for Objective and Constraint Transparency

- Objective function transparency: the system must publish and maintain a clear statement of objectives, including how psychosocial mediators and service reliability are represented where relevant.

- Equity constraints as enforceable requirements: equity is not a reporting metric but a feasibility condition (floors, caps, equal-opportunity constraints where predictive models are used).

- Robustness-oriented uncertainty handling: the system must demonstrate performance under scenario ranges and document how decisions change across futures.

- Audit interfaces: the system must support independent auditing, including reproducible evaluation pipelines and access to relevant logs under appropriate safeguards [59].

7.2. Algorithmic Impact Assessment as Planning Due Diligence

- Decision context and scope (what decisions the system influences).

- Affected populations and equity-relevant groups.

- Data provenance, representational adequacy, and proxy risks.

- Objective functions and equity constraints, including rationales and trade-offs.

- Uncertainty handling (scenarios, stress tests, robustness criteria).

- Monitoring and re-evaluation cycles (drift detection).
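The due-diligence elements above can also be kept as a structured record, so that an incomplete assessment is visible before deployment. The sketch below is hypothetical: the class name, field names, and the rule that an empty field counts as missing are illustrative assumptions, not a published AIA schema.

```python
from dataclasses import dataclass, field, fields
from typing import List, Optional


@dataclass
class AlgorithmicImpactAssessment:
    """Hypothetical record with one field per due-diligence element listed above."""
    decision_context: str = ""             # what decisions the system influences
    affected_populations: List[str] = field(default_factory=list)
    data_provenance: str = ""              # sources, representational adequacy, proxy risks
    objectives_and_constraints: str = ""   # objective terms, equity constraints, trade-offs
    uncertainty_handling: str = ""         # scenarios, stress tests, robustness criteria
    monitoring_cycle_months: Optional[int] = None  # re-evaluation / drift-check interval

    def missing_sections(self) -> List[str]:
        """Names of elements left empty (an agency might withhold sign-off while any remain)."""
        return [f.name for f in fields(self) if getattr(self, f.name) in ("", None, [])]


aia = AlgorithmicImpactAssessment(
    decision_context="Ranking flood-retrofit projects for the capital plan",
    affected_populations=["renters", "older adults", "informal-settlement residents"],
)
print(aia.missing_sections())
```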

7.3. Documentation, Explainability, and the Limits of Transparency

- Interpretability for planners: the system must allow planners to understand why a portfolio was selected and how trade-offs were handled.

- Auditability for oversight bodies: the system must support reproducible evaluation of bias, performance, and constraint compliance.

- Contestability for affected residents: the system must provide explanations suitable for challenge and remedy where decisions impose harm.

7.4. Auditing and Monitoring as Continuous Governance

- Representation audits (coverage and missingness by neighborhood and group).

- Constraint compliance audits (floors and caps enforced).

- Performance audits (predictive validity where prediction is used, and error distribution).

- Outcome audits (distribution of investments and service improvements).

- Process audits (whether participation, contestation, and corrections occur).
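Two of the audits above, representation (missingness by neighborhood) and performance (error distribution), reduce to simple grouped rates, and the second doubles as a check on the equal-opportunity condition in Box 1 (comparable false negative rates). The sketch below assumes hypothetical record fields (`neighborhood`, `flood_obs`, `actual_high_need`, `flagged_high_need`), invented data, and an arbitrary tolerance; it uses neighborhoods as the comparison unit, whereas an actual audit would also disaggregate by protected group.

```python
from collections import defaultdict
from typing import Dict, List


def missingness_by_group(records: List[dict], group_key: str, field_name: str) -> Dict[str, float]:
    """Representation audit: share of records per group where field_name is missing."""
    counts = defaultdict(lambda: [0, 0])  # group -> [missing, total]
    for r in records:
        counts[r[group_key]][1] += 1
        if r.get(field_name) is None:
            counts[r[group_key]][0] += 1
    return {g: missing / total for g, (missing, total) in counts.items()}


def false_negative_rate_by_group(records: List[dict], group_key: str) -> Dict[str, float]:
    """Performance audit: FNR = FN / (FN + TP) among units that are truly high-need."""
    counts = defaultdict(lambda: [0, 0])  # group -> [false negatives, actual positives]
    for r in records:
        if r["actual_high_need"]:
            counts[r[group_key]][1] += 1
            if not r["flagged_high_need"]:
                counts[r[group_key]][0] += 1
    return {g: fn / positives for g, (fn, positives) in counts.items()}


def rate_gap(rates: Dict[str, float]) -> float:
    """Largest between-group difference in a rate."""
    return max(rates.values()) - min(rates.values())


# Invented audit sample: South has gaps in its flood observations.
records = [
    {"neighborhood": "North", "flood_obs": 0.4, "actual_high_need": True, "flagged_high_need": True},
    {"neighborhood": "North", "flood_obs": 0.1, "actual_high_need": False, "flagged_high_need": False},
    {"neighborhood": "North", "flood_obs": 0.6, "actual_high_need": True, "flagged_high_need": True},
    {"neighborhood": "North", "flood_obs": 0.2, "actual_high_need": True, "flagged_high_need": True},
    {"neighborhood": "South", "flood_obs": None, "actual_high_need": True, "flagged_high_need": False},
    {"neighborhood": "South", "flood_obs": None, "actual_high_need": True, "flagged_high_need": False},
    {"neighborhood": "South", "flood_obs": 0.5, "actual_high_need": True, "flagged_high_need": True},
    {"neighborhood": "South", "flood_obs": 0.3, "actual_high_need": False, "flagged_high_need": False},
]

FNR_TOLERANCE = 0.10  # hypothetical policy threshold, not taken from the source

missing = missingness_by_group(records, "neighborhood", "flood_obs")
fnr = false_negative_rate_by_group(records, "neighborhood")
print("missingness:", missing)
print("FNR:", fnr, "gap exceeds tolerance:", rate_gap(fnr) > FNR_TOLERANCE)
```

In this invented sample, the neighborhood with missing observations (South, 50% missingness) is also the one where two of three truly high-need units go unflagged, the pattern anticipated by the under-representation proposition in Section 6.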

7.5. Participatory Oversight and Democratic Contestability

7.6. Box 1. Operationalizing Caring Urban AI (Conceptual Guide)

- Minimize combined climate harm and psychosocial stress burden subject to feasibility and budget.

- Minimize worst-case unacceptable harm across plausible climate scenarios (robustness).

- Maximize well-being-supportive stability (housing security, thermal comfort, service reliability, restorative access) while meeting minimum risk reduction thresholds.

- Service floors: every neighborhood must meet minimum thresholds of heat refuge access, flood protection coverage, or housing retrofit eligibility.

- Disparity caps: limit the gap in protection or restorative access between high-deprivation and low-deprivation areas.

- Equal-opportunity constraints: when predictive scores are used to identify “high need,” require comparable false negative rates across protected groups to reduce systematic under-prioritization [54].

- Scenario ranges: evaluate portfolios across ensembles of plausible hazard pathways rather than a single projection [4].

- Robustness criteria: select portfolios that avoid catastrophic underperformance and minimize regret [41].

- Stress tests: identify neighborhoods that become high-risk under higher-end scenarios and ensure adaptation pathways remain adjustable.

- Perceived safety and environmental threat (survey-based or participatory assessments).

- Housing stability risk proxies (tenure precarity, displacement pressure indicators).

- Access to restorative environments and heat refuges (walk-time accessibility; canopy/shade access) [47].

- Reliability of essential services during hazard events (interdependency-sensitive metrics) [39].
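The robustness criteria in the box (scenario ranges, regret minimization) can be made concrete with a minimax-regret comparison, which is one common robust decision rule among several. In the sketch, the portfolio names, scenario labels, and residual-harm scores are hypothetical; regret is a portfolio’s harm in a scenario minus the lowest harm any candidate achieves in that same scenario.

```python
from typing import Dict

# Hypothetical residual-harm index (lower is better) for each portfolio under each scenario.
# All portfolios are assumed to be scored on the same set of scenarios.
harm: Dict[str, Dict[str, float]] = {
    "P1_gray_levee":   {"low": 2.0, "mid": 4.0, "high": 14.0},
    "P2_mixed":        {"low": 3.0, "mid": 5.0, "high": 8.0},
    "P3_nature_based": {"low": 4.0, "mid": 6.0, "high": 9.0},
}


def regret_table(harm):
    """Regret = harm minus the best (lowest) harm achievable in the same scenario."""
    scenarios = list(next(iter(harm.values())))
    best = {s: min(h[s] for h in harm.values()) for s in scenarios}
    return {p: {s: h[s] - best[s] for s in scenarios} for p, h in harm.items()}


def minimax_regret_choice(harm):
    """Pick the portfolio whose worst-case regret across scenarios is smallest."""
    regrets = regret_table(harm)
    return min(regrets, key=lambda p: max(regrets[p].values()))


def single_forecast_choice(harm, scenario):
    """Baseline: optimize against one projected scenario only."""
    return min(harm, key=lambda p: harm[p][scenario])


print("single forecast (mid):", single_forecast_choice(harm, "mid"))
print("minimax regret:", minimax_regret_choice(harm))
for p, r in regret_table(harm).items():
    print(p, "worst-case regret:", max(r.values()))
```

Here the mid-scenario optimum (P1) carries a regret of 6.0 if the high-end pathway materializes, whereas the mixed portfolio never trails the best available option by more than 1.0; surfacing that kind of trade-off is what the stress-test step is for.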

7.7. Avoiding “Caring Washing” in Smart City Governance

8. Boundary Conditions and Research Agenda

8.1. Primary Domain of Applicability

- A city uses GIS and data platforms to develop risk and vulnerability layers.

- Prioritization involves ranking projects or selecting portfolios.

- Institutional capacity exists (or can be built) for documentation, auditing, and participatory oversight.

8.2. Where the Framework Should Not Be Applied

- Fully automated prioritization without meaningful human accountability and democratic oversight.

- Adaptation strategies that treat displacement as a default solution, absent strong protections and consent mechanisms [34].

8.3. Global North / Global South, Data-Poor Contexts, and Informal Settlements

- Participatory mapping and community-generated data with safeguards against extraction and harm [75].

- Stronger data stewardship and contestability protections, given heightened risks of eviction or policing.

8.4. Research Agenda for Smart City Governance Scholarship


9. Discussion and Conclusion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- United Nations General Assembly. Transforming Our World: The 2030 Agenda for Sustainable Development; Resolution A/RES/70/1; United Nations: New York, NY, USA, 2015. [Google Scholar]

- United Nations General Assembly. New Urban Agenda; Resolution A/RES/71/256; United Nations: New York, NY, USA, 2016. [Google Scholar]

- United Nations Office for Disaster Risk Reduction (UNDRR). Sendai Framework for Disaster Risk Reduction 2015–2030; UNDRR: Geneva, Switzerland, 2015. [Google Scholar]

- Intergovernmental Panel on Climate Change (IPCC). Climate Change 2022: Impacts, Adaptation and Vulnerability; Working Group II Contribution to the Sixth Assessment Report of the Intergovernmental Panel on Climate Change; Cambridge University Press: Cambridge, UK, 2022. [Google Scholar]

- Couclelis, H. “Where Has the Future Gone?” Rethinking the Role of Integrated Land-Use Models in Spatial Planning. Environ. Plan. A 2005, 37, 1353–1371. [Google Scholar] [CrossRef]

- Kitchin, R.; Lauriault, T.P.; McArdle, G. Knowing and Governing Cities through Urban Indicators, City Benchmarking and Real-Time Dashboards. Reg. Stud. Reg. Sci. 2015, 2, 6–28. [Google Scholar] [CrossRef]

- Kitchin, R.; Maalsen, S.; McArdle, G. The Praxis and Politics of Building Urban Dashboards. Geoforum 2016, 77, 93–101. [Google Scholar] [CrossRef]

- Hollands, R.G. Will the Real Smart City Please Stand Up? Intelligent, Progressive or Entrepreneurial? City 2008, 12, 303–320. [Google Scholar] [CrossRef]

- Kitchin, R. The Ethics of Smart Cities and Urban Science. Phil. Trans. R. Soc. A 2016, 374, 20160115. [Google Scholar] [CrossRef] [PubMed]

- Sadowski, J.; Pasquale, F. The Spectrum of Control: A Social Theory of the Smart City. First Monday 2015, 20. [Google Scholar] [CrossRef]

- Shelton, T.; Zook, M.; Wiig, A. The “Actually Existing Smart City”. Camb. J. Reg. Econ. Soc. 2015, 8, 13–25. [Google Scholar] [CrossRef]

- Söderström, O.; Paasche, T.; Klauser, F. Smart Cities as Corporate Storytelling. City 2014, 18, 307–320. [Google Scholar] [CrossRef]

- Vanolo, A. Smartmentality: The Smart City as Disciplinary Strategy. Urban Stud. 2014, 51, 883–898. [Google Scholar] [CrossRef]

- Tronto, J.C. Moral Boundaries: A Political Argument for an Ethic of Care; Routledge: New York, NY, USA, 1993. [Google Scholar]

- Tronto, J.C. Caring Democracy: Markets, Equality, and Justice; New York University Press: New York, NY, USA, 2013. [Google Scholar]

- Evans, G.W. The Built Environment and Mental Health. J. Urban Health 2003, 80, 536–555. [Google Scholar] [CrossRef]

- Evans, G.W.; Wells, N.M.; Moch, A. Housing and Mental Health: A Review of the Evidence and a Methodological and Conceptual Critique. J. Soc. Issues 2003, 59, 475–500. [Google Scholar] [CrossRef]

- Hartig, T.; Mitchell, R.; de Vries, S.; Frumkin, H. Nature and Health. Annu. Rev. Public Health 2014, 35, 207–228. [Google Scholar] [CrossRef]

- Berry, H.L.; Bowen, K.; Kjellstrom, T. Climate Change and Mental Health: A Causal Pathways Framework. Int. J. Public Health 2010, 55, 123–132. [Google Scholar] [CrossRef]

- Clayton, S.; Manning, C.M.; Krygsman, K.; Speiser, M. Mental Health and Our Changing Climate: Impacts, Implications, and Guidance; American Psychological Association and ecoAmerica: Washington, DC, USA, 2017. [Google Scholar]

- Cunsolo, A.; Ellis, N.R. Ecological Grief as a Mental Health Response to Climate Change-Related Loss. Nat. Clim. Chang. 2018, 8, 275–281. [Google Scholar] [CrossRef]

- Albino, V.; Berardi, U.; Dangelico, R.M. Smart cities: Definitions, dimensions, performance, and initiatives. J. Urban Technol. 2015, 22, 3–21. [Google Scholar] [CrossRef]

- Batty, M.; Axhausen, K.W.; Giannotti, F.; Pozdnoukhov, A.; Bazzani, A.; Wachowicz, M.; Ouzounis, G.; Portugali, Y. Smart cities of the future. Eur. Phys. J. Spec. Top. 2012, 214, 481–518. [Google Scholar] [CrossRef]

- Barns, S. Platform Urbanism: Negotiating Platform Ecosystems in Connected Cities; Palgrave Macmillan: Singapore, 2020. [Google Scholar] [CrossRef]

- Cutter, S.L.; Boruff, B.J.; Shirley, W.L. Social vulnerability to environmental hazards. Soc. Sci. Q. 2003, 84, 242–261. [Google Scholar] [CrossRef]

- Bowker, G.C.; Star, S.L. Sorting Things Out: Classification and Its Consequences; MIT Press: Cambridge, MA, USA, 1999. [Google Scholar]

- Dembski, F.; Wössner, U.; Letzgus, M.; Ruddat, M.; Yamu, C. Urban digital twins for smart cities and citizens: The case study of Herrenberg, Germany. Sustainability 2020, 12, 2307. [Google Scholar] [CrossRef]

- Malczewski, J. GIS and Multicriteria Decision Analysis; John Wiley & Sons: New York, NY, USA, 1999. [Google Scholar]

- Malczewski, J. GIS-based multicriteria decision analysis: A survey of the literature. Int. J. Geogr. Inf. Sci. 2006, 20, 703–726. [Google Scholar] [CrossRef]

- Geertman, S.; Stillwell, J. (Eds.) Planning Support Systems: Best Practice and New Methods; Springer: Dordrecht, The Netherlands, 2009. [Google Scholar] [CrossRef]

- Gascon, M.; Triguero-Mas, M.; Martínez, D.; Dadvand, P.; Forns, J.; Plasència, A.; Nieuwenhuijsen, M.J. Mental health benefits of long-term exposure to residential green and blue spaces: A systematic review. Int. J. Environ. Res. Public Health 2015, 12, 4354–4379. [Google Scholar] [CrossRef]

- Twohig-Bennett, C.; Jones, A. The health benefits of the great outdoors: A systematic review and meta-analysis of greenspace exposure and health outcomes. Environ. Res. 2018, 166, 628–637. [Google Scholar] [CrossRef] [PubMed]

- Anguelovski, I.; Connolly, J.J.T.; Masip, L.; Pearsall, H. Assessing green gentrification in historically disenfranchised neighborhoods: A longitudinal and spatial analysis of Barcelona. Urban Geogr. 2018, 39, 458–491. [Google Scholar] [CrossRef]

- Anguelovski, I.; Connolly, J.J.T.; Pearsall, H.; Shokry, G.; Checker, M.; Maantay, J.; Gould, K.; Lewis, T.; Maroko, A.; Roberts, J.T.; et al. Opinion: Why green “climate gentrification” threatens poor and vulnerable populations. Proc. Natl. Acad. Sci. USA 2019, 116, 26139–26143. [Google Scholar] [CrossRef]

- Barocas, S.; Selbst, A.D. Big data’s disparate impact. Calif. Law Rev. 2016, 104, 671–732. [Google Scholar] [CrossRef]

- Selbst, A.D.; Boyd, D.; Friedler, S.A.; Venkatasubramanian, S.; Vertesi, J. Fairness and abstraction in sociotechnical systems. In Proceedings of the 2019 Conference on Fairness, Accountability, and Transparency (FAT* ’19), Atlanta, GA, USA, 29–31 January 2019; ACM: New York, NY, USA, 2019; pp. 59–68. [Google Scholar] [CrossRef]

- Mehrabi, N.; Morstatter, F.; Saxena, N.; Lerman, K.; Galstyan, A. A survey on bias and fairness in machine learning. ACM Comput. Surv. 2021, 54, 115. [Google Scholar] [CrossRef]

- Meerow, S.; Newell, J.P.; Stults, M. Defining urban resilience: A review. Landsc. Urban Plan. 2016, 147, 38–49. [Google Scholar] [CrossRef]

- Rinaldi, S.M.; Peerenboom, J.P.; Kelly, T.K. Identifying, understanding, and analyzing critical infrastructure interdependencies. IEEE Control Syst. Mag. 2001, 21, 11–25. [Google Scholar] [CrossRef]

- Hallegatte, S. Strategies to adapt to an uncertain climate change. Glob. Environ. Chang. 2009, 19, 240–247. [Google Scholar] [CrossRef]

- Lempert, R.J.; Popper, S.W.; Bankes, S.C. Shaping the Next One Hundred Years: New Methods for Quantitative, Long-Term Policy Analysis; RAND Corporation: Santa Monica, CA, USA, 2003; MR-1626-RPC. [Google Scholar] [CrossRef]

- Bullard, R.D. Dumping in Dixie: Race, Class, and Environmental Quality; Westview Press: Boulder, CO, USA, 1990. [Google Scholar]

- Schlosberg, D. Defining Environmental Justice: Theories, Movements, and Nature; Oxford University Press: Oxford, UK, 2007. [Google Scholar]

- Haasnoot, M.; Kwakkel, J.H.; Walker, W.E.; ter Maat, J. Dynamic adaptive policy pathways: A method for crafting robust decisions for a deeply uncertain world. Glob. Environ. Chang. 2013, 23, 485–498. [Google Scholar] [CrossRef]

- Marmot, M. Fair Society, Healthy Lives: The Marmot Review; The Marmot Review: London, UK, 2010. [Google Scholar]

- Dahlgren, G.; Whitehead, M. Policies and Strategies to Promote Social Equity in Health; Institute for Futures Studies: Stockholm, Sweden, 1991. [Google Scholar]

- World Health Organization Regional Office for Europe. Urban Green Spaces and Health: A Review of Evidence; WHO Regional Office for Europe: Copenhagen, Denmark, 2016. [Google Scholar]

- Galea, S.; Freudenberg, N.; Vlahov, D. Cities and population health. Soc. Sci. Med. 2005, 60, 1017–1033. [Google Scholar] [CrossRef] [PubMed]

- Gruebner, O.; Rapp, M.A.; Adli, M.; Kluge, U.; Galea, S.; Heinz, A. Cities and mental health. Dtsch. Arztebl. Int. 2017, 114, 121–127. [Google Scholar] [CrossRef] [PubMed]

- Klosterman, R.E. The What If? collaborative planning support system. Environ. Plan. B Plan. Des. 1999, 26, 393–408. [Google Scholar] [CrossRef]

- te Brömmelstroet, M. Performance of planning support systems: What is it and how do we report on it? Comput. Environ. Urban Syst. 2013, 41, 299–308. [Google Scholar] [CrossRef]

- Biljecki, F.; Stoter, J.; Ledoux, H.; Zlatanova, S.; Cöltekin, A. Applications of 3D city models: State of the art review. ISPRS Int. J. Geo-Inf. 2015, 4, 2842–2889. [Google Scholar] [CrossRef]

- Fuller, A.; Fan, Z.; Day, C.; Barlow, C. Digital twin: Enabling technologies, challenges and open research. IEEE Access 2020, 8, 108952–108971. [Google Scholar] [CrossRef]

- Hardt, M.; Price, E.; Srebro, N. Equality of opportunity in supervised learning. In Advances in Neural Information Processing Systems 29 (NIPS 2016); Curran Associates, Inc.: Red Hook, NY, USA, 2016; pp. 3315–3323. [Google Scholar]

- Dwork, C.; Hardt, M.; Pitassi, T.; Reingold, O.; Zemel, R. Fairness through awareness. In Proceedings of the 3rd Innovations in Theoretical Computer Science Conference (ITCS ’12), Cambridge, MA, USA, 8–10 January 2012; ACM: New York, NY, USA, 2012; pp. 214–226. [Google Scholar] [CrossRef]

- Walker, G. Environmental Justice: Concepts, Evidence and Politics; Routledge: London, UK, 2012. [Google Scholar]

- Diakopoulos, N. Algorithmic accountability: Journalistic investigation of computational power structures. Digit. J. 2015, 3, 398–415. [Google Scholar] [CrossRef]

- Pasquale, F. The Black Box Society: The Secret Algorithms That Control Money and Information; Harvard University Press: Cambridge, MA, USA, 2015. [Google Scholar]

- Raji, I.D.; Smart, A.; White, R.N.; Mitchell, M.; Gebru, T.; Hutchinson, B.; Smith-Loud, J.; Theron, D.; Barnes, P. Closing the AI accountability gap: Defining an end-to-end framework for internal algorithmic auditing. In Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency (FAT* ’20), Barcelona, Spain, 27–30 January 2020; ACM: New York, NY, USA, 2020; pp. 33–44. [Google Scholar] [CrossRef]

- Mitchell, M.; Wu, S.; Zaldivar, A.; Barnes, P.; Vasserman, L.; Hutchinson, B.; Spitzer, E.; Raji, I.D.; Gebru, T. Model cards for model reporting. In Proceedings of the Conference on Fairness, Accountability, and Transparency (FAT* ’19), Atlanta, GA, USA, 29–31 January 2019; ACM: New York, NY, USA, 2019; pp. 220–229. [Google Scholar] [CrossRef]

- Gebru, T.; Morgenstern, J.; Vecchione, B.; Vaughan, J.W.; Wallach, H.; Daumé, H., III; Crawford, K. Datasheets for datasets. Commun. ACM 2021, 64, 86–92. [Google Scholar] [CrossRef]

- National Institute of Standards and Technology (NIST). Artificial Intelligence Risk Management Framework (AI RMF 1.0); NIST AI 100-1; National Institute of Standards and Technology: Gaithersburg, MD, USA, 2023. [Google Scholar]

- Organisation for Economic Co-operation and Development (OECD). Recommendation of the Council on Artificial Intelligence (OECD/LEGAL/0449); OECD: Paris, France, 2019. [Google Scholar]

- Reisman, D.; Schultz, J.; Crawford, K.; Whittaker, M. Algorithmic Impact Assessments: A Practical Framework for Public Agency Accountability; AI Now Institute: New York, NY, USA, 2018. [Google Scholar]

- Graham, S.; Marvin, S. Splintering Urbanism: Networked Infrastructures, Technological Mobilities and the Urban Condition; Routledge: London, UK, 2001. [Google Scholar]

- Flanagan, B.E.; Gregory, E.W.; Hallisey, E.J.; Heitgerd, J.L.; Lewis, B. A social vulnerability index for disaster management. J. Homel. Secur. Emerg. Manag. 2011, 8, 1–22. [Google Scholar] [CrossRef]

- Ribeiro, M.T.; Singh, S.; Guestrin, C. “Why should I trust you?”: Explaining the predictions of any classifier. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining (KDD ’16), San Francisco, CA, USA, 13–17 August 2016; ACM: New York, NY, USA, 2016; pp. 1135–1144. [Google Scholar] [CrossRef]

- Crawford, K. Atlas of AI: Power, Politics, and the Planetary Costs of Artificial Intelligence; Yale University Press: New Haven, CT, USA, 2021. [Google Scholar]

- Zuboff, S. The Age of Surveillance Capitalism: The Fight for a Human Future at the New Frontier of Power; PublicAffairs: New York, NY, USA, 2019. [Google Scholar]

- Austin, L.M.; Lie, D. Data trusts and the governance of smart environments: Lessons from the failure of Sidewalk Labs’ Urban Data Trust. Surveill. Soc. 2021, 19, 255–261. [Google Scholar] [CrossRef]

- Arnstein, S.R. A ladder of citizen participation. J. Am. Inst. Plann. 1969, 35, 216–224. [Google Scholar] [CrossRef]

- Healey, P. Collaborative Planning: Shaping Places in Fragmented Societies; Macmillan Press: London, UK, 1997. [Google Scholar] [CrossRef]

- Cardullo, P.; Kitchin, R. Being a ‘citizen’ in the smart city: Up and down the scaffold of smart citizen participation in Dublin, Ireland. GeoJournal 2019, 84, 1–13. [Google Scholar] [CrossRef]

- Houser, K.A.; Bagby, J.W. The data trust solution to data sharing problems. Vand. J. Entertain. Technol. Law 2023, 25, 113–180. [Google Scholar] [CrossRef]

- Goodchild, M.F. Citizens as sensors: The world of volunteered geography. GeoJournal 2007, 69, 211–221. [Google Scholar] [CrossRef]

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).