I. Introduction

Long-text and multi-document reasoning are becoming key hurdles for large language models in complex real-world scenarios. As information carriers such as government regulatory materials, medical guidelines, legal provisions, corporate knowledge bases, and research reviews grow in volume, models need to maintain stable referential consistency and factual self-consistency over longer contexts. They also need to align evidence, resolve conflicts, and perform chained induction across multiple source documents. Without effective modeling of structural hierarchy and evidence organization, even models with strong language generation capabilities are prone to information omissions, cross-paragraph memory drift, unstable evidence citations, and unsupported leaps to conclusions, which directly undermine credibility and usability in high-risk tasks [1].

Meanwhile, the difficulty of long-text and multi-document reasoning stems not only from length itself but also from the hierarchical organization of information and the progressive nature of task objectives. Documents often contain chapter structures, semantic paragraphs, and key entity relationships, while document collections also exhibit thematic overlap, differing perspectives, and information redundancy. To present these challenges and their research value clearly, Table 1 summarizes the core issues and highlights the direct requirements each places on methodological design. Breaking the problem down into actionable levels lets us define the boundaries of the algorithm’s learning capabilities more clearly, providing a theoretical basis for the fine-tuning paradigm and data organization that follow [2].

In this context, hierarchical, curriculum-based fine-tuning is a natural fit with significant methodological implications. The hierarchical component organizes learning objectives by structural granularity, so that the model first masters intra-paragraph aggregation and local consistency, then moves to chapter-level induction and global argumentation, and finally achieves cross-document evidence integration and conclusion generation [3]. The curriculum component orders training by difficulty and prerequisite ability, letting the model build transferable reasoning skills on tasks with a lower cognitive burden instead of being exposed early to complex samples with heavy noise, strong conflicts, and high combinatorial complexity, which could destabilize learning. Combining the two yields a systematic fine-tuning path for long-text and multi-document reasoning that improves the controllability, interpretability, and robustness of the reasoning process [4, 5].

The significance of this research lies not only in improving model accuracy on complex questions but also in providing traceable evidence organization and more reliable decision support for demanding applications. In knowledge-intensive scenarios, output quality often hinges on the ability to organize scattered information into structured arguments and, when necessary, to show clearly where the evidence comes from and how the reasoning steps depend on one another [6]. Hierarchical curriculum-based fine-tuning, with structure and difficulty as its two axes, unifies long-text comprehension, multi-document fusion, and reasoning generation within a single training framework. It is expected to move large language models beyond fluent expression toward capabilities centered on structured reasoning and evidence consistency, laying a methodological foundation for usable, controllable, and reliable intelligent systems.

II. Methodology Foundation

The proposed hierarchical, curriculum-based fine-tuning framework is grounded in advances in structured representation learning, uncertainty-aware modeling, causal alignment, dynamic stability control, and adaptive training organization. These methodological strands collectively inform the construction of hierarchical encoding paths, differentiable evidence aggregation, and progressive curriculum scheduling.

Structured multi-source representation learning provides a foundational basis for organizing heterogeneous inputs into coherent latent spaces. Joint cross-modal representation learning demonstrates how heterogeneous signals can be aligned through unified embedding mechanisms while preserving hierarchical granularity [7]. This principle directly motivates the three-level convergence path over tokens, fragments, and documents, in which local semantic units are progressively aggregated into structured global representations rather than treated as flat sequences.

Long-context reasoning also requires robustness under noise and uncertainty. Uncertainty-driven robust time series forecasting illustrates how explicit uncertainty modeling stabilizes prediction under fluctuating signals [8]. This informs the introduction of question-guided evidence scoring, where fragment weights are calibrated rather than implicitly determined by attention alone. Similarly, causal reasoning over structured knowledge systems demonstrates how relational dependencies can be preserved across multi-hop inference chains [9], supporting the structured memory construction that maintains inter-fragment and inter-document consistency.

When multiple documents contain conflicting or redundant evidence, invariant alignment mechanisms become critical. Causal-invariant retrieval modeling under distribution shift shows how invariant representations suppress spurious correlations while preserving essential signals [10]. This insight directly informs the differentiable evidence aggregation mechanism, which emphasizes alignment with question-relevant signals and suppresses redundant interference. Resource-aware inference scheduling strategies further highlight the importance of structured memory allocation and progressive capacity management [11], conceptually aligning with hierarchical aggregation to mitigate memory drift in long contexts.

Dynamic environments introduce semantic drift over extended sequences. Residual-regulated modeling for non-stationary sequences demonstrates how controlled residual adjustment mitigates drift accumulation [12]. Structure-aware graph modeling for anomaly pattern recognition reinforces the importance of preserving structural relationships when detecting deviations across complex dependencies [13]. These methods collectively inform the stability objective of hierarchical representation construction, ensuring that expansion in context length does not degrade internal consistency. Causal representation learning further contributes principles for disentangling stable core features from noise-induced variations [14]. This aligns with the framework’s emphasis on distinguishing salient evidence from peripheral fragments. Conditional generative modeling with structured control mechanisms illustrates how controlled latent transitions maintain coherence across generative steps [15], which parallels the need to maintain reasoning coherence across multi-document integration stages.

Uncertainty-aware summarization approaches introduce calibrated confidence estimation within generation processes [16], guiding the suppression of unreliable evidence contributions during aggregation. Adaptive structural fusion techniques for multi-task adaptation demonstrate how dynamic weighting mechanisms reconcile heterogeneous contextual signals [17], directly supporting the question-guided weight aggregation layer in the proposed reasoning module.

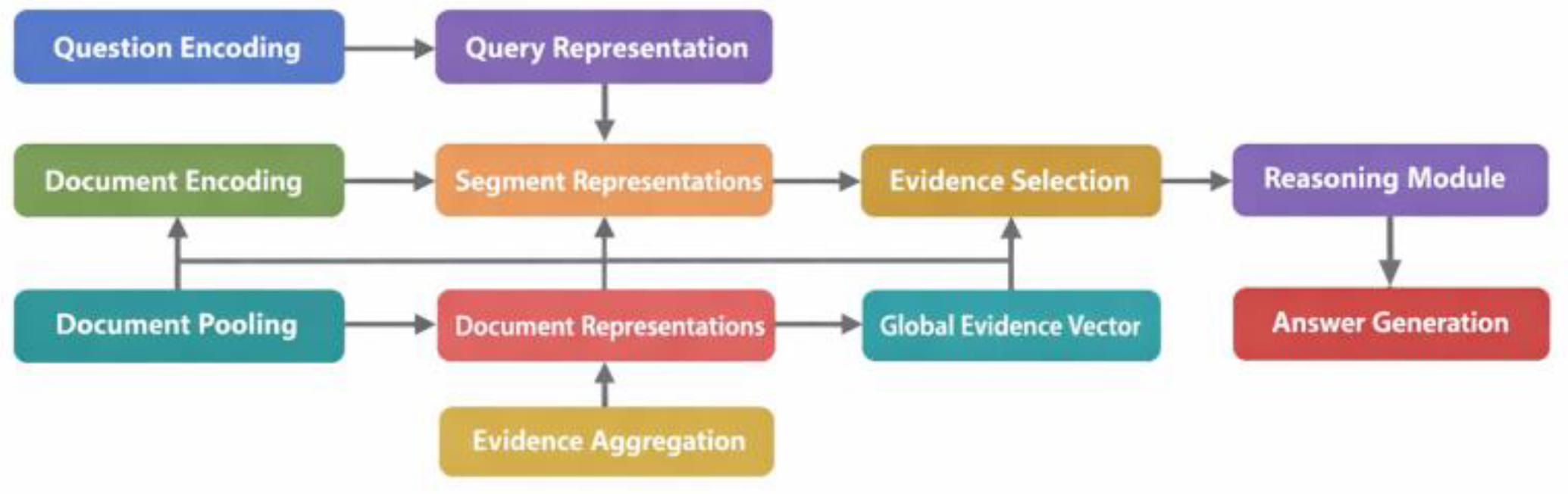

IV. Method

In long-text and multi-document reasoning scenarios, the core challenge lies in the large information span and the dispersed distribution of evidence. The model must maintain stable semantic representations within documents while aligning evidence and aggregating reasoning across documents. To address this, we propose a hierarchical, curriculum-based fine-tuning framework. The framework organizes the input into a hierarchical structure consisting of a question and a multi-document context, and decomposes the training objective into a sequence of progressively deeper capabilities.

To ensure stable semantic representations under long contexts, we build upon the semantic alignment and output-constrained generation framework proposed by Yang et al. [18]. Their method applies alignment objectives to regulate representation consistency and uses output constraints to prevent semantic deviation during generation. We adopt their semantic alignment principle at the representation level to calibrate token- and fragment-level embeddings, and incorporate constrained supervision signals to reduce context-induced semantic drift. By extending alignment control from classification outputs to hierarchical reasoning states, we strengthen intra-document stability during long-span encoding.

To support structured memory construction and progressive capability growth, we further build upon the autonomous learning and knowledge structuring mechanism introduced by Wang et al. [19]. Their approach applies self-driven exploration to organize information into structured knowledge representations, enabling agents to refine their internal abstractions gradually. We adopt this knowledge structuring principle to construct a three-level convergence path of tokens, fragments, and documents, and use progressive abstraction to guide the transition from local aggregation to cross-document integration. By incorporating structured representation accumulation into fine-tuning, we extend autonomous knowledge organization to supervised hierarchical reasoning.

To enhance robustness under multi-source conflicts and noisy evidence, we incorporate the semantic calibration strategy proposed by Shao et al. [20], which applies calibration mechanisms to align model predictions with semantically consistent evidence distributions under adversarial perturbations. We adopt their calibration principle to regulate question-guided evidence scoring and weight aggregation, using semantic consistency signals to suppress redundant or misleading fragments. Building on these adversarial robustness techniques, we extend semantic calibration to multi-document evidence alignment, ensuring stable construction of the global evidence vector across structurally complex inputs.

The model first learns to maintain consistency within local structures, then gradually learns cross-document evidence selection and global reasoning generation. A sample consists of a question $q$ and a document set $\mathcal{D} = \{C_1, C_2, \dots, C_M\}$. Each document $C_m$ comprises several paragraphs or fragments, which can be further expanded into token sequences. The model employs a three-layer representation learning path corresponding to the token, fragment, and document layers. Explicit hierarchical aggregation compresses the long context into a structured memory that supports reasoning, while preserving document boundary information to support evidence tracking. Overall, the framework treats multi-document reasoning as an evidence retrieval and aggregation process over hierarchical memory and then uses the aggregated global semantic state for answer generation, which aligns the fine-tuning process more closely with the structural attributes and difficulty gradients of long-text tasks. The overall model architecture is shown in Figure 1.
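The three-layer path can be illustrated with a minimal pure-Python sketch. It assumes that token states have already been produced by the base encoder (plain lists stand in for tensors) and that fragment boundaries arrive as inclusive token-index pairs; the classes `Document` and `Sample`, their fields, and the difficulty labels are hypothetical and not taken from the paper. Each fragment is mean-pooled over its token interval, and the document vector is the mean of its fragment vectors.

```python
from dataclasses import dataclass
from typing import List, Tuple

Vector = List[float]


def mean_pool(vectors: List[Vector]) -> Vector:
    """Element-wise mean of a non-empty list of equal-length vectors."""
    if not vectors:
        raise ValueError("cannot pool an empty list of vectors")
    dim = len(vectors[0])
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]


@dataclass
class Document:
    # Token-level states from the base encoder: one d-dimensional vector per token.
    token_states: List[Vector]
    # Hypothetical format: inclusive (start, end) token indices for each fragment.
    fragment_spans: List[Tuple[int, int]]

    def fragment_vectors(self) -> List[Vector]:
        # Interval average pooling over each contiguous token span.
        return [mean_pool(self.token_states[a:b + 1]) for a, b in self.fragment_spans]

    def document_vector(self) -> Vector:
        # Document representation: mean of the fragment representations.
        return mean_pool(self.fragment_vectors())


@dataclass
class Sample:
    # Hypothetical container: question representation plus its document set.
    question_state: Vector
    documents: List[Document]
    difficulty: str = "easy"  # hypothetical label: "easy", "medium", or "hard"


doc = Document(
    token_states=[[1.0, 0.0], [3.0, 2.0], [5.0, 4.0]],
    fragment_spans=[(0, 1), (2, 2)],
)
sample = Sample(question_state=[1.0, 0.0], documents=[doc])
print(doc.fragment_vectors())  # [[2.0, 1.0], [5.0, 4.0]]
print(doc.document_vector())   # [3.5, 2.5]
```

Because the document vector averages fragments rather than tokens, each fragment counts equally regardless of its length: here the result is `[3.5, 2.5]`, whereas a plain token mean would give `[3.0, 2.0]`.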

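First, the token sequence of the $m$-th document is encoded to obtain token-level hidden representations. Let $L_m$ be the length of the expanded document $C_m = (x_{m,1}, \dots, x_{m,L_m})$; then

$$H_m = \mathrm{Enc}(x_{m,1}, \dots, x_{m,L_m}) = [\mathbf{h}_{m,1}; \dots; \mathbf{h}_{m,L_m}] \in \mathbb{R}^{L_m \times d}.$$

Here, $\mathrm{Enc}(\cdot)$ denotes the base encoder and $d$ is the hidden dimension. To introduce a hierarchical structure explicitly, the document is divided into a set of fragments $\{F_{m,1}, \dots, F_{m,K_m}\}$, where each fragment $F_{m,k}$ corresponds to a contiguous token interval $[a_{m,k}, b_{m,k}]$. Fragment representations are computed by interval average pooling, which keeps the formulation simple and stable:

$$\mathbf{f}_{m,k} = \frac{1}{b_{m,k} - a_{m,k} + 1} \sum_{i=a_{m,k}}^{b_{m,k}} \mathbf{h}_{m,i}.$$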

A document-level representation is built on top of the fragments for cross-document alignment and global inference. It is likewise obtained by averaging the fragment representations:

$$\mathbf{c}_m = \frac{1}{K_m} \sum_{k=1}^{K_m} \mathbf{f}_{m,k}.$$

In the evidence alignment phase, the model must identify the key evidence relevant to the question across multiple documents and fragments. Given the question representation $\mathbf{h}_q$ and the fragment representations $\mathbf{f}_{m,k}$, a scoring function $e_{m,k} = \phi(\mathbf{h}_q, \mathbf{f}_{m,k}; W)$ is defined, and evidence weights are obtained through a softmax over all fragments of all documents, which makes evidence selection differentiable:

$$\alpha_{m,k} = \frac{\exp(e_{m,k})}{\sum_{m'=1}^{M} \sum_{k'=1}^{K_{m'}} \exp(e_{m',k'})}.$$

Here, $W$ is a learnable parameter, and $\alpha_{m,k}$ represents the relative importance of fragment $F_{m,k}$ as evidence. A weighted sum then yields the global evidence vector

$$\mathbf{g} = \sum_{m=1}^{M} \sum_{k=1}^{K_m} \alpha_{m,k} \, \mathbf{f}_{m,k},$$

which aggregates cross-document information and suppresses interference from redundant fragments.
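A minimal pure-Python sketch of the question-guided evidence aggregation follows. The scoring function is not spelled out in this section, so the sketch assumes a bilinear score between the question vector and each fragment vector through a learnable matrix (`W` below); the function names are hypothetical. The softmax is normalized jointly over every fragment of every document, and the returned weights stay keyed by (document, fragment) index so that each contribution to the global evidence vector can be traced back to its source.

```python
import math
from typing import Dict, List, Tuple

Vector = List[float]
Matrix = List[List[float]]


def bilinear_score(q: Vector, W: Matrix, f: Vector) -> float:
    """Assumed scoring form: q^T W f."""
    Wf = [sum(w_ij * f_j for w_ij, f_j in zip(row, f)) for row in W]
    return sum(q_i * wf_i for q_i, wf_i in zip(q, Wf))


def softmax(scores: List[float]) -> List[float]:
    # Shift by the max score for numerical stability before exponentiating.
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def aggregate_evidence(
    q: Vector, W: Matrix, fragments_per_doc: List[List[Vector]]
) -> Tuple[Dict[Tuple[int, int], float], Vector]:
    """Return evidence weights keyed by (doc, fragment) and the global evidence vector."""
    # Flatten so the softmax normalizes jointly over all fragments of all documents.
    flat = [(m, k, f) for m, frags in enumerate(fragments_per_doc) for k, f in enumerate(frags)]
    if not flat:
        raise ValueError("no fragments to aggregate")
    alphas = softmax([bilinear_score(q, W, f) for _, _, f in flat])
    dim = len(flat[0][2])
    # Weighted sum of fragment vectors gives the global evidence vector.
    g = [sum(a * f[i] for a, (_, _, f) in zip(alphas, flat)) for i in range(dim)]
    weights = {(m, k): a for a, (m, k, _) in zip(alphas, flat)}
    return weights, g


q = [1.0, 0.0]
W = [[1.0, 0.0], [0.0, 1.0]]  # identity, so the score reduces to a dot product
docs = [[[2.0, 0.0]], [[0.0, 2.0]]]  # two documents with one fragment each
weights, g = aggregate_evidence(q, W, docs)
reasoning_state = (q, g)  # question representation paired with the global evidence
print({k: round(v, 3) for k, v in weights.items()})  # {(0, 0): 0.881, (1, 0): 0.119}
```

The fragment aligned with the question receives most of the weight, and the index keys make it possible to report which document each piece of evidence came from.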

The global evidence vector and the question representation together constitute the reasoning state, which can be used directly for answer generation or passed to further lightweight reasoning modules.

Curriculum-based fine-tuning controls how often samples of different difficulty appear during training, so that the model’s capabilities grow progressively with difficulty. Let $\mathcal{S}_1$, $\mathcal{S}_2$, and $\mathcal{S}_3$ denote the difficulty sets corresponding to easy, medium, and hard samples, respectively, and let $t$ be the number of training steps completed so far. A difficulty weight function $w_j(t)$ is defined that evolves with training progress and gradually increases the probability of drawing high-difficulty samples; it is determined by a monotonically increasing progress function $\rho(t)$ and a fixed coefficient $\beta_j$ for each difficulty level $j$. The final fine-tuning objective, expressed in conditional-generation form, is to maximize the log-likelihood of the answer sequence $y = (y_1, \dots, y_{|y|})$ given the question and the hierarchical context:

$$\max_{\theta} \; \sum_{i=1}^{|y|} \log p_\theta\!\left(y_i \mid y_{<i}, q, \mathcal{D}\right).$$

Here, $\theta$ denotes the model parameters, and $p_\theta$ is jointly determined by the reasoning state and the decoder. Hierarchical representation learning provides a stable, structured memory; evidence weights enable traceable multi-document alignment; and curriculum scheduling gradually raises the difficulty of training samples. Together, these components directly target the stable representation, key-evidence selection, and cross-source aggregation capabilities required for long-text and multi-document inference, without relying on additional experimental assumptions.
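The curriculum schedule can be sketched as a sampler over the three difficulty sets. Because the exact form of the difficulty weight function is not reproduced here, the sketch assumes one plausible choice: weights proportional to exp(−β_j(1 − ρ(t))), with a linear progress function ρ(t) = t/T clipped to [0, 1] (T being a hypothetical total step budget) and illustrative coefficients β = 0, 2, 4 for easy, medium, and hard. Under this assumption easy samples dominate early, the hard-sample probability rises monotonically, and the mixture becomes uniform at t = T. All names and values are hypothetical.

```python
import math
import random
from typing import Dict

DIFFICULTIES = ("easy", "medium", "hard")

# Illustrative coefficients (hypothetical): a larger beta is suppressed more early on.
BETAS = {"easy": 0.0, "medium": 2.0, "hard": 4.0}


def progress(t: int, total_steps: int) -> float:
    """Assumed linear progress function rho(t) = t / T, clipped to [0, 1]."""
    return min(max(t / total_steps, 0.0), 1.0)


def difficulty_probs(t: int, total_steps: int, betas: Dict[str, float] = BETAS) -> Dict[str, float]:
    """Assumed weights w_j(t) proportional to exp(-beta_j * (1 - rho(t))), normalized."""
    rho = progress(t, total_steps)
    logits = {d: -betas[d] * (1.0 - rho) for d in DIFFICULTIES}
    top = max(logits.values())  # shift for numerical stability
    exps = {d: math.exp(v - top) for d, v in logits.items()}
    total = sum(exps.values())
    return {d: e / total for d, e in exps.items()}


def sample_difficulty(t: int, total_steps: int, rng: random.Random) -> str:
    """Draw the difficulty set from which the next training example is taken."""
    probs = difficulty_probs(t, total_steps)
    return rng.choices(DIFFICULTIES, weights=[probs[d] for d in DIFFICULTIES], k=1)[0]


rng = random.Random(0)
for step in (0, 500, 1000):
    print(step, {d: round(p, 3) for d, p in difficulty_probs(step, 1000).items()})
print(sample_difficulty(0, 1000, rng))
```

Since ρ(t) is clipped, any steps beyond T keep sampling from the uniform mixture; a schedule that withholds hard samples entirely until a threshold step would be an equally valid reading of the text.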