Submitted:

06 March 2026

Posted:

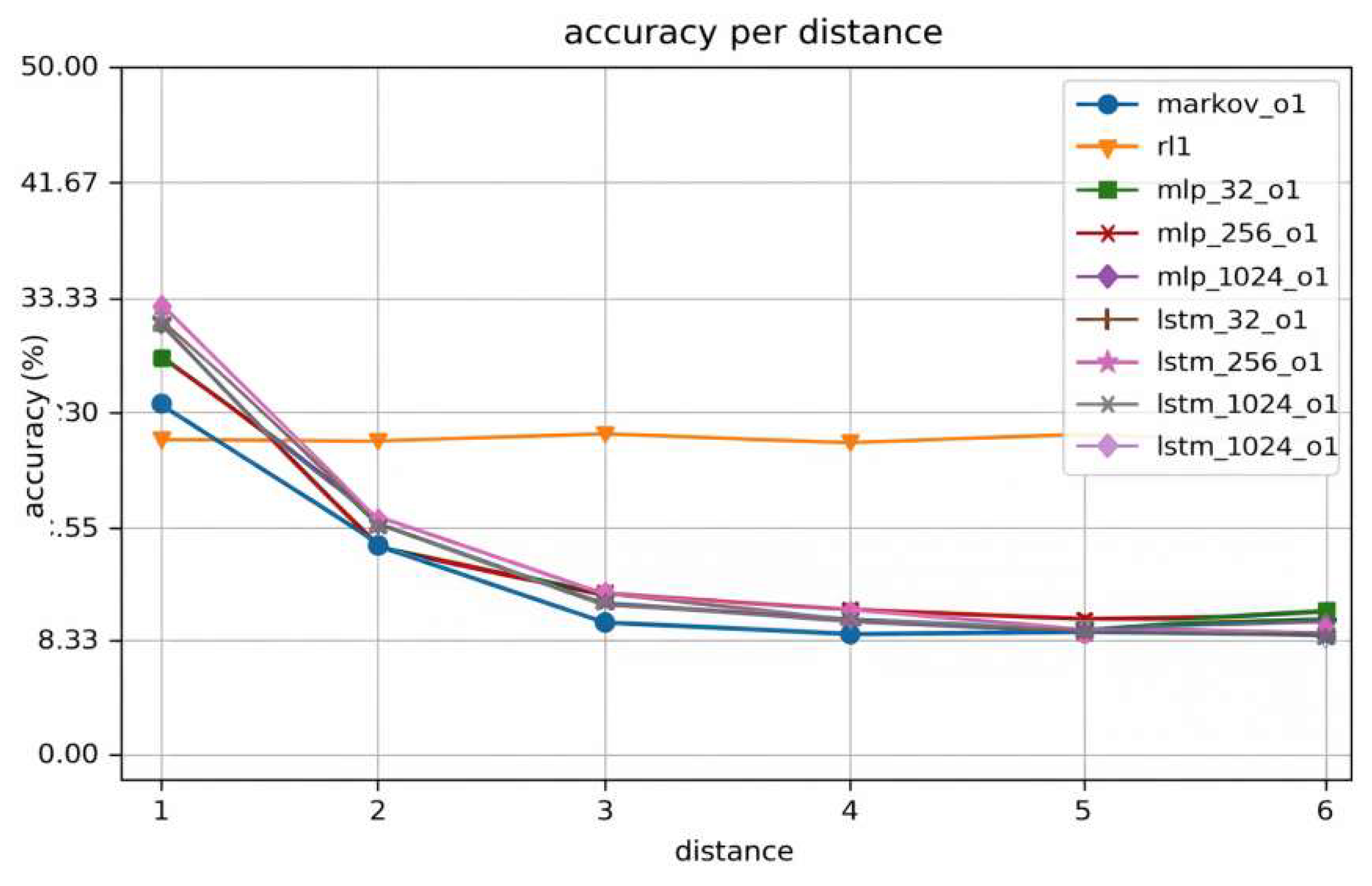

09 March 2026


Abstract

Keywords:

1. Introduction

2. Materials and Methods

2.1. Sample and Study Environment Description

2.2. Experimental Design and Control Settings

2.3. Measurement Methods and Quality Control

2.4. Data Processing and Model Formulation

2.5. Implementation and Reproducibility

3. Results and Discussion

3.1. Task Success and Irreversible-Action Failures

3.2. Efficiency and Budget Adherence

3.3. Effect of Hazard Prediction and Gating

3.4. Comparison with Existing Approaches

4. Conclusion


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).