3. Method

3.1. Overview

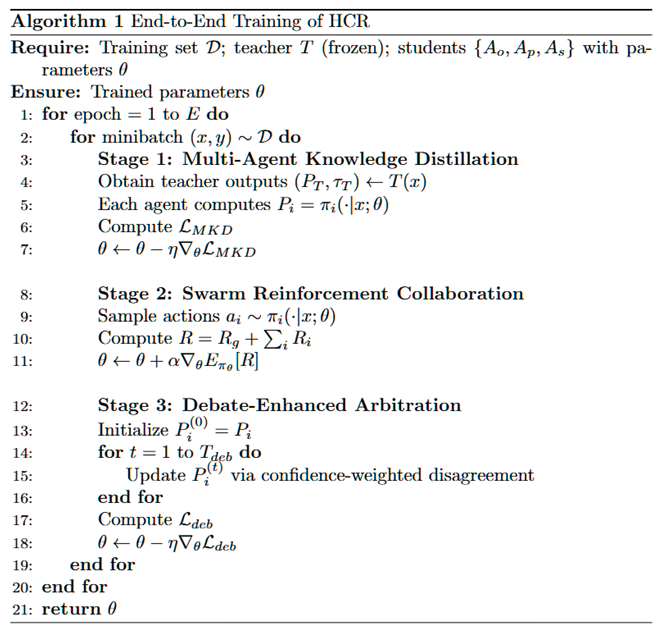

The HCR framework integrates three core components into a unified architecture for Chinese spelling correction: multi-agent knowledge distillation, swarm reinforcement collaboration, and debate-enhanced arbitration. A large teacher model guides three specialized student agents to acquire expertise in orthographic similarity, phonetic consistency, and contextual semantics through interactive knowledge transfer. All three student agents are architecturally identical, sharing the same backbone and prediction head, and their specialization is induced purely by different supervision signals and optimization objectives. The distilled agents exchange reasoning signals and dynamically adapt their contributions via a swarm reinforcement mechanism, enabling robust and flexible decision-making under diverse error patterns. Finally, a debate-enhanced arbitration module refines predictions by integrating outputs and confidence estimates from all agents, providing transparent and interpretable correction results.

Figure 1 shows the overall pipeline.

3.2. Multi-Agent Knowledge Distillation

HCR employs a multi-agent knowledge distillation strategy to enable three specialized student agents to acquire complementary reasoning capabilities from a large teacher model while preserving distinct expertise in orthographic similarity, phonetic consistency, and contextual semantics. The three student agents are architecturally identical and share the same backbone and prediction head; their functional specialization does not come from structural differences, but is induced by different supervision signals, distillation targets, and optimization biases. Unlike conventional approaches that directly mimic the teacher’s outputs, HCR leverages both predictive distributions and intermediate reasoning trajectories to guide agent-specific learning. Given an input sequence $X = (x_1, \dots, x_n)$, the teacher model produces a probability distribution $P_{\text{tea}}(\cdot \mid X)$ over the candidate vocabulary $\mathcal{V}$. Each student agent $A_k$, parameterized by $\theta_k$, generates its own distribution $P_{\theta_k}(\cdot \mid X)$ under the teacher’s supervision.

In addition to the output distribution, the teacher model is further prompted to generate a structured reasoning trajectory $\tau$, defined as an ordered token sequence that explicitly encodes the intermediate decision process from the input to the final correction. Concretely, $\tau = (\tau_1, \dots, \tau_M)$ is constructed under a predefined format that represents a sequence of reasoning states, each corresponding to a well-defined diagnostic or decision step, such that the entire sequence forms a complete and self-consistent decision path. This trajectory is generated by the teacher via autoregressive decoding and stored as an intermediate supervision signal.

To ensure effective learning, we incorporate a supervised negative log-likelihood loss that directly optimizes the likelihood of predicting the correct sequence:

$$\mathcal{L}_{\text{NLL}} = -\sum_{i=1}^{n} \log P(y_i \mid X),$$

where $P(y_i \mid X)$ is the final predicted probability for the ground-truth character $y_i$ at position $i$ of the length-$n$ input $X$.

In parallel, the teacher’s knowledge is transferred to each student via an agent-specific distillation loss defined by the Kullback-Leibler divergence between softened teacher and student distributions:

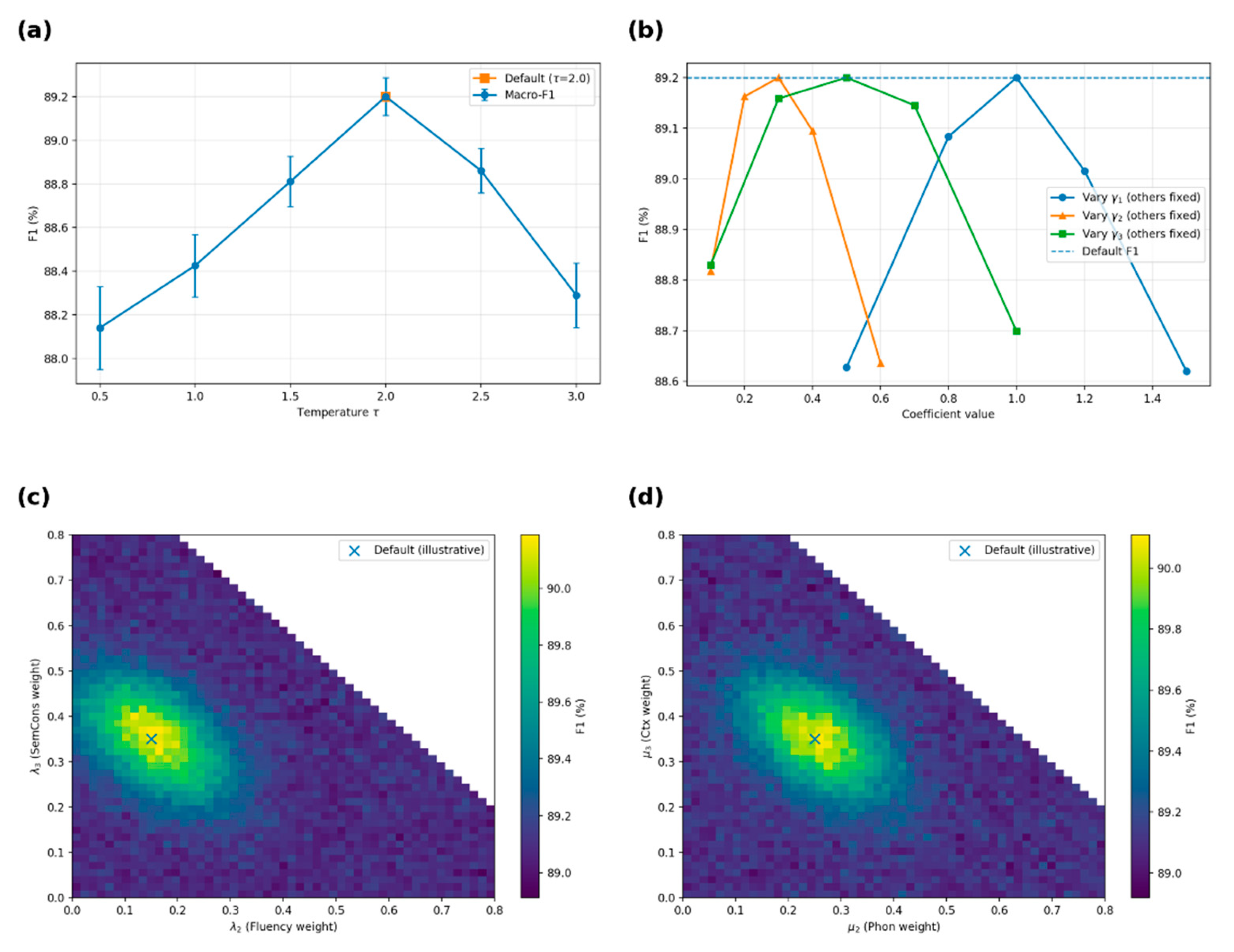

where $\sigma(\cdot)$ denotes the softmax function, $T$ is the temperature parameter controlling distribution smoothness, and $P_{\theta_k}$ represents the predictive distribution generated by student agent $A_k$. This formulation allows the student agents to approximate the teacher’s knowledge while retaining stable gradients for efficient training.
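As a numerical illustration of the temperature-softened distillation loss described above, the following minimal sketch computes the KL divergence between softened teacher and student distributions for a single position. The function names, the example logits, and the $T^2$ rescaling factor (a common convention in knowledge distillation) are assumptions of this sketch and are not taken from the HCR implementation.

```python
import math

def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax over a list of logits."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def kd_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) between temperature-softened distributions.

    The temperature**2 factor is a common gradient-rescaling convention;
    whether HCR applies it is an assumption of this sketch.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0.0)
    return (temperature ** 2) * kl

# Hypothetical logits over three candidate characters at one position.
teacher = [4.0, 1.0, 0.5]
student = [2.5, 1.5, 0.2]
loss = kd_loss(teacher, student, temperature=2.0)
```

A higher temperature flattens both distributions, so the student is also supervised on the teacher’s relative preferences among low-probability candidates.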

Beyond output-level distillation, we further introduce trajectory-level supervision that explicitly transfers the teacher’s reasoning process to each student. Given the teacher-generated trajectory $\tau = (\tau_1, \dots, \tau_M)$, each student agent $A_k$ is trained to model the conditional distribution $P_{\theta_k}(\tau \mid X)$. The corresponding trajectory distillation loss is defined as:

$$\mathcal{L}_{\text{traj}}^{(k)} = -\sum_{m=1}^{M} \log P_{\theta_k}(\tau_m \mid \tau_{<m}, X),$$

which enforces the student to reproduce the same ordered sequence of intermediate decisions as the teacher, thereby aligning not only the final output but also the underlying reasoning process.
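The trajectory-level supervision can be sketched as a teacher-forced negative log-likelihood over the teacher’s reasoning tokens. In the example below, the state names and the per-step probability tables are purely illustrative placeholders; the paper’s actual trajectory format is not reproduced here.

```python
import math

def trajectory_nll(step_probs, trajectory):
    """Negative log-likelihood of a teacher trajectory under a student.

    step_probs[m] is the student's next-token distribution (token -> prob)
    at step m, assumed to be already conditioned on the input and on the
    teacher prefix trajectory[:m] (teacher forcing).
    """
    eps = 1e-12  # guards against log(0) for tokens the student rules out
    return -sum(
        math.log(step_probs[m].get(token, 0.0) + eps)
        for m, token in enumerate(trajectory)
    )

# Hypothetical three-step trajectory; state names are illustrative only.
trajectory = ["DETECT", "PHONETIC_CHECK", "REPLACE"]
step_probs = [
    {"DETECT": 0.9, "KEEP": 0.1},
    {"PHONETIC_CHECK": 0.6, "GLYPH_CHECK": 0.4},
    {"REPLACE": 0.8, "KEEP": 0.2},
]
loss = trajectory_nll(step_probs, trajectory)
```

The loss is zero only when the student assigns probability one to every teacher step, so any deviation in the ordering of intermediate decisions is penalized.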

To further encourage the agents to specialize in distinct reasoning spaces, we introduce a cross-agent consistency regularization that penalizes redundant representation learning. For hidden states $h_i$ and $h_j$ from agents $A_i$ and $A_j$, the regularization $\mathcal{L}_{\text{reg}}$ is defined as:

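which drives the intermediate representations of different agents toward diverse and complementary subspaces. Finally, we combine the teacher alignment losses, cross-agent regularization, and supervised ground-truth learning into the unified objective for multi-agent knowledge distillation:

$$\mathcal{L}_{\text{MAKD}} = \lambda_1 \mathcal{L}_{\text{KD}} + \lambda_2 \mathcal{L}_{\text{traj}} + \lambda_3 \mathcal{L}_{\text{reg}} + \lambda_4 \mathcal{L}_{\text{NLL}},$$

where $\mathcal{L}_{\text{KD}}$ and $\mathcal{L}_{\text{traj}}$ are the output-level and trajectory-level distillation losses of the student agents, $\mathcal{L}_{\text{reg}}$ is the cross-agent regularization, $\mathcal{L}_{\text{NLL}}$ is the supervised loss, and $\lambda_1$, $\lambda_2$, $\lambda_3$, and $\lambda_4$ are hyperparameters balancing the contributions of each component. This formulation enables each agent to achieve specialization in its designated reasoning domain while collectively contributing to the overall correction performance.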
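To make the cross-agent regularization and the weighted combination concrete, the sketch below uses one plausible instantiation: the mean squared cosine similarity between the agents’ hidden states as the redundancy penalty, followed by a weighted sum of four loss values, one per hyperparameter mentioned above. Both the functional form of the penalty and the pairing of weights to terms are assumptions made for illustration, not the paper’s definition.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def diversity_penalty(hidden_states):
    """Mean squared cosine similarity over all agent pairs (assumed form).

    The value is 0 when all agents' hidden states are mutually orthogonal
    and 1 when they are collinear, so minimizing it discourages redundancy.
    """
    k = len(hidden_states)
    pairs = [(i, j) for i in range(k) for j in range(i + 1, k)]
    total = sum(cosine(hidden_states[i], hidden_states[j]) ** 2 for i, j in pairs)
    return total / len(pairs)

def makd_objective(l_kd, l_traj, l_reg, l_nll, lambdas=(1.0, 1.0, 0.1, 1.0)):
    """Weighted sum of the four distillation-stage losses (weights are hypothetical)."""
    return sum(w * l for w, l in zip(lambdas, (l_kd, l_traj, l_reg, l_nll)))

# Hypothetical hidden states of the three student agents.
h = [[1.0, 0.0, 0.0], [0.8, 0.6, 0.0], [0.0, 0.0, 1.0]]
l_reg = diversity_penalty(h)
total = makd_objective(l_kd=0.42, l_traj=0.84, l_reg=l_reg, l_nll=0.35)
```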

3.3. Swarm Reinforcement Collaboration

While interactive multi-agent distillation enables the student agents to acquire complementary reasoning capabilities, effective collaboration among them remains critical for robust and adaptive correction. To address this challenge, we introduce Swarm Reinforcement Collaboration (SRC), which models the three agents as a cooperative multi-agent reinforcement learning system, metaphorically a coordinated swarm. The agents jointly optimize their correction policies through structured policy updates and shared reward signals to maximize overall correction quality. Instead of treating the agents as independent learners, SRC lets them dynamically adapt their contributions based on input characteristics and error patterns, resulting in more flexible and reliable decision-making.

Formally, given an input sequence $X$, the three agents $\{A_1, A_2, A_3\}$ generate candidate corrections and confidence estimates. A global reward $R_{\text{global}}$ evaluates the overall performance of the swarm, encouraging the agents to collaborate toward shared objectives of accuracy, fluency, and semantic consistency:

$$R_{\text{global}} = \alpha_1 R_{\text{acc}} + \alpha_2 R_{\text{flu}} + \alpha_3 R_{\text{sem}},$$

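where $R_{\text{acc}}$ denotes sentence-level correction accuracy, defined as a binary indicator of whether the predicted correction $\hat{Y}$ exactly matches the ground-truth correction $Y$; $R_{\text{flu}}$ measures linguistic well-formedness and is computed as the length-normalized log-likelihood of $\hat{Y}$ under a pretrained language model $P_{\text{LM}}$, so that more fluent corrections receive a higher reward:

$$R_{\text{flu}} = \frac{1}{|\hat{Y}|} \sum_{t=1}^{|\hat{Y}|} \log P_{\text{LM}}(\hat{y}_t \mid \hat{y}_{<t}),$$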

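and $R_{\text{sem}}$ evaluates semantic consistency between the input $X$ and the correction $\hat{Y}$ by the cosine similarity between their sentence embeddings:

$$R_{\text{sem}} = \cos\big(e(X), e(\hat{Y})\big) = \frac{e(X)^\top e(\hat{Y})}{\lVert e(X) \rVert \, \lVert e(\hat{Y}) \rVert},$$

where $e(\cdot)$ denotes the sentence encoder, and $\alpha_1$, $\alpha_2$, $\alpha_3$ are weighting coefficients.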
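The global reward can be sketched as a weighted sum of the three terms described above. In this minimal example the per-token log-probabilities and sentence embeddings are supplied directly as hypothetical inputs rather than computed by a language model or encoder, the weights are placeholders, and fluency is taken as the mean per-token log-probability so that higher values correspond to more fluent text (a sign convention assumed here).

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def global_reward(pred, gold, token_log_probs, emb_input, emb_pred,
                  weights=(1.0, 0.1, 0.5)):
    """Weighted sum of accuracy, fluency, and semantic-consistency rewards."""
    r_acc = 1.0 if pred == gold else 0.0                  # exact sentence match
    r_flu = sum(token_log_probs) / len(token_log_probs)   # mean log-probability
    r_sem = cosine(emb_input, emb_pred)                   # input vs. correction
    a_acc, a_flu, a_sem = weights
    return a_acc * r_acc + a_flu * r_flu + a_sem * r_sem

# Hypothetical values for one corrected sentence.
reward = global_reward(
    pred="他在家", gold="他在家",
    token_log_probs=[-0.2, -0.4, -0.3],
    emb_input=[1.0, 0.0], emb_pred=[1.0, 0.0],
)
```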

To encourage specialization and complementary decision-making, each agent $A_k$ also receives an agent-specific reward $R_k$ measuring its performance on the designated reasoning dimension:

$$R_k = \beta_1 S_{\text{orth}} + \beta_2 S_{\text{phon}} + \beta_3 S_{\text{ctx}},$$

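where $S_{\text{orth}}$, $S_{\text{phon}}$, and $S_{\text{ctx}}$ quantify orthographic similarity, phonetic alignment, and contextual relevance, respectively, computed between the agent’s candidate correction $\hat{Y}_k$ and the ground-truth correction $Y$, and $\beta_1$, $\beta_2$, $\beta_3$ balance their contributions. Specifically, $S_{\text{orth}}$ is defined as the normalized character-level edit similarity:

$$S_{\text{orth}} = 1 - \frac{\mathrm{ED}(\hat{Y}_k, Y)}{\max(|\hat{Y}_k|, |Y|)},$$

$S_{\text{phon}}$ is computed analogously on the corresponding pinyin sequences:

$$S_{\text{phon}} = 1 - \frac{\mathrm{ED}\big(\phi(\hat{Y}_k), \phi(Y)\big)}{\max\big(|\phi(\hat{Y}_k)|, |\phi(Y)|\big)},$$

where $\mathrm{ED}(\cdot, \cdot)$ is the edit distance and $\phi(\cdot)$ maps a character sequence to its phonetic representation; and $S_{\text{ctx}}$ is defined as the sentence-level semantic similarity:

$$S_{\text{ctx}} = \cos\big(e(\hat{Y}_k), e(Y)\big).$$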

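The overall objective of SRC is to maximize the expected cumulative reward of the swarm, combining both global and agent-specific signals:

$$J(\Theta) = \mathbb{E}_{\hat{Y} \sim \pi_\Theta(\cdot \mid X)} \Big[ R_{\text{global}} + \sum_{k=1}^{3} R_k \Big],$$

where $\pi_\Theta$ denotes the joint policy parameterized by $\Theta = \{\theta_1, \theta_2, \theta_3\}$. The optimization is performed via policy gradient methods, updating the parameters according to:

$$\nabla_\Theta J(\Theta) = \mathbb{E}_{\hat{Y} \sim \pi_\Theta(\cdot \mid X)} \Big[ \nabla_\Theta \log \pi_\Theta(\hat{Y} \mid X) \Big( R_{\text{global}} + \sum_{k=1}^{3} R_k \Big) \Big].$$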

By integrating global coordination and agent-level specialization, SRC enables HCR to achieve adaptive multi-agent collaboration, balancing collective objectives with individual expertise and improving correction performance under diverse and noisy scenarios.
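The agent-specific similarity terms of this section can be illustrated with a small sketch. It assumes that edit similarity is one minus the Levenshtein distance divided by the longer sequence length, that phonetic similarity is computed at the syllable level, and that the contextual score is supplied externally. The toy pinyin table and all function names are hypothetical; a real system would rely on a complete pronunciation lexicon.

```python
def edit_distance(a, b):
    """Levenshtein distance between two sequences (strings or lists)."""
    prev = list(range(len(b) + 1))
    for i, x in enumerate(a, start=1):
        curr = [i]
        for j, y in enumerate(b, start=1):
            cost = 0 if x == y else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]

def edit_similarity(a, b):
    """1 - distance / max length; defined as 1.0 for two empty sequences."""
    longest = max(len(a), len(b))
    return 1.0 if longest == 0 else 1.0 - edit_distance(a, b) / longest

# Toy character-to-pinyin table (illustrative only).
TOY_PINYIN = {"他": "ta", "她": "ta", "在": "zai", "再": "zai", "家": "jia"}

def to_pinyin(text):
    """Map each character to a syllable; unknown characters map to themselves."""
    return [TOY_PINYIN.get(ch, ch) for ch in text]

def agent_reward(pred, gold, s_ctx, betas=(1.0, 1.0, 1.0)):
    """Weighted sum of orthographic, phonetic, and contextual scores."""
    s_orth = edit_similarity(pred, gold)
    s_phon = edit_similarity(to_pinyin(pred), to_pinyin(gold))
    b_orth, b_phon, b_ctx = betas
    return b_orth * s_orth + b_phon * s_phon + b_ctx * s_ctx

# "再" and "在" are homophones: the characters differ but the pinyin matches.
reward = agent_reward(pred="他再家", gold="他在家", s_ctx=0.9)
```

In this example the orthographic score drops to 2/3 because one of three characters differs, while the phonetic score stays at 1.0, which shows how the two dimensions can disagree on the same candidate.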

3.4. Debate-Enhanced Arbitration

While multi-agent knowledge distillation enables the student agents to acquire complementary expertise and swarm reinforcement collaboration optimizes their cooperative strategies, conflicts may still arise when the agents produce divergent correction hypotheses due to their specialized reasoning perspectives. To resolve these conflicts and achieve reliable final predictions, we introduce a Debate-Enhanced Arbitration (DEA) mechanism, which refines agent outputs through iterative debates and confidence-adaptive fusion. The debate process is performed at inference time and does not update model parameters; instead, it operates on the prediction distributions to reach a consensus.

Given an input sequence $X$, each agent $A_k$ produces an initial prediction distribution $P_k^{(0)}$ and a self-estimated confidence score $c_k$. During the debate phase, the agents iteratively exchange hypotheses and counterarguments, incorporating peer feedback to refine their predictions. Let $P_k^{(t)}$ denote the prediction of agent $A_k$ at debate round $t$, and $\Delta_k^{(t)}$ represent the adjustment derived from peer feedback. Specifically, $\Delta_k^{(t)}$ is defined as the confidence-weighted disagreement between agent $A_k$ and the other agents:

$$\Delta_k^{(t)} = \sum_{j \neq k} \omega_{kj} \big( P_j^{(t)} - P_k^{(t)} \big),$$

where the peer weight $\omega_{kj}$ is computed from the confidence scores via a softmax normalization, $\omega_{kj} = \exp(c_j) / \sum_{l \neq k} \exp(c_l)$. The refined prediction at round $t+1$ is updated as:

$$P_k^{(t+1)} = P_k^{(t)} + \eta \, \Delta_k^{(t)},$$

followed by a normalization step to ensure $P_k^{(t+1)}$ remains a valid probability distribution. Here $\eta$ is the debate learning rate controlling the extent to which external feedback influences the agent’s predictions. This update is not gradient-based and does not involve backpropagation; it is a deterministic, confidence-weighted refinement at the distribution level.
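A single debate round as described above can be sketched as follows. The sketch assumes that the peer weights are a softmax over the confidences of the other agents only, that disagreement is measured as the peer distribution minus the agent’s own distribution, and that all agents update synchronously; the function names and example values are hypothetical.

```python
import math

def peer_weights(confidences, k):
    """Softmax over the confidences of every agent except agent k."""
    peers = [j for j in range(len(confidences)) if j != k]
    m = max(confidences[j] for j in peers)
    exps = {j: math.exp(confidences[j] - m) for j in peers}
    total = sum(exps.values())
    return {j: e / total for j, e in exps.items()}

def debate_round(preds, confidences, eta=0.5):
    """One synchronous, gradient-free debate round over all agents."""
    updated = []
    for k, p_k in enumerate(preds):
        w = peer_weights(confidences, k)
        # Confidence-weighted disagreement with the peers, per vocabulary entry.
        delta = [sum(w[j] * (preds[j][v] - p_k[v]) for j in w)
                 for v in range(len(p_k))]
        raw = [max(p + eta * d, 0.0) for p, d in zip(p_k, delta)]
        total = sum(raw)
        updated.append([r / total for r in raw])  # renormalize
    return updated

# Hypothetical distributions of three agents over three candidate characters.
preds = [[0.7, 0.2, 0.1], [0.3, 0.6, 0.1], [0.6, 0.3, 0.1]]
confidences = [0.9, 0.4, 0.8]
refined = debate_round(preds, confidences, eta=0.5)
```

With $\eta$ between 0 and 1, each update is a convex combination of the agent’s own distribution and its peers’ distributions, so the renormalization step only guards against rounding error and repeated rounds pull the agents toward a common prediction.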

After $N$ rounds of debate, the arbitration module computes the final prediction probability $P$ defined in Section 3.2 by aggregating the refined outputs $P_k^{(N)}$ from all agents using a confidence-adaptive fusion strategy:

$$P = \sum_{k=1}^{K} w_k \, P_k^{(N)},$$

where $P_k^{(N)}$ is the final prediction from agent $A_k$, and $w_k$ denotes the arbitration weight assigned to agent $A_k$.

To incorporate both the agent’s confidence and its reinforcement performance introduced in Section 3.3, the weight $w_k$ is computed as:

where $\mu_1$ and $\mu_2$ are balancing coefficients for confidence and reinforcement contributions, respectively.
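One way to realize the confidence-adaptive fusion is sketched below. It assumes that the arbitration weights are a softmax over a linear combination of each agent’s confidence and reinforcement reward; this functional form, the coefficient names, and the example values are assumptions for illustration and may differ from the paper’s definition.

```python
import math

def arbitration_weights(confidences, rewards, mu_conf=1.0, mu_rl=1.0):
    """Softmax over mu_conf * confidence + mu_rl * reward (assumed form)."""
    scores = [mu_conf * c + mu_rl * r for c, r in zip(confidences, rewards)]
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def fuse(preds, weights):
    """Weighted mixture of the agents' final prediction distributions."""
    vocab_size = len(preds[0])
    return [sum(w * p[v] for w, p in zip(weights, preds))
            for v in range(vocab_size)]

# Hypothetical post-debate distributions, confidences, and agent rewards.
final_preds = [[0.60, 0.30, 0.10], [0.45, 0.45, 0.10], [0.55, 0.35, 0.10]]
weights = arbitration_weights([0.9, 0.4, 0.8], [0.7, 0.5, 0.9])
fused = fuse(final_preds, weights)
best_index = max(range(len(fused)), key=fused.__getitem__)
```

Because the weights sum to one, the fused output remains a valid probability distribution, and agents with higher combined scores contribute more to the final decision.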

To encourage consensus among agents, we introduce a debate consistency loss $\mathcal{L}_{\text{DC}}$ that penalizes significant divergence between their final predictions:

where $K$ is the number of student agents.
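A debate consistency penalty of this kind could, for example, be instantiated as the average pairwise KL divergence between the agents’ final distributions. The sketch below uses that form as an assumption; the paper’s exact divergence and normalization may differ, and the function names are hypothetical.

```python
import math

def kl_divergence(p, q, eps=1e-12):
    """KL(p || q) with a small epsilon to avoid division by zero."""
    return sum(pi * math.log((pi + eps) / (qi + eps)) for pi, qi in zip(p, q))

def debate_consistency(preds):
    """Mean KL divergence over all ordered pairs of distinct agents."""
    k = len(preds)
    total = 0.0
    for i in range(k):
        for j in range(k):
            if i != j:
                total += kl_divergence(preds[i], preds[j])
    return total / (k * (k - 1))

# Hypothetical final distributions of K = 3 agents over two candidates.
agree = [[0.8, 0.2], [0.8, 0.2], [0.8, 0.2]]
disagree = [[0.9, 0.1], [0.1, 0.9], [0.5, 0.5]]
loss_agree = debate_consistency(agree)
loss_disagree = debate_consistency(disagree)
```

The penalty vanishes when all agents agree and grows as their final predictions diverge, which matches the stated purpose of encouraging consensus.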

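Finally, we integrate the objectives of multi-agent knowledge distillation, swarm reinforcement collaboration, and debate-enhanced arbitration into a unified loss function:

$$\mathcal{L} = \gamma_1 \mathcal{L}_{\text{MAKD}} + \gamma_2 \mathcal{L}_{\text{SRC}} + \gamma_3 \mathcal{L}_{\text{DC}},$$

where $\mathcal{L}_{\text{MAKD}}$ is the multi-agent knowledge distillation objective of Section 3.2, $\mathcal{L}_{\text{SRC}}$ is the swarm reinforcement loss (the negative expected reward of Section 3.3), $\mathcal{L}_{\text{DC}}$ is the debate consistency loss, and $\gamma_1$, $\gamma_2$, $\gamma_3$ are non-negative hyperparameters that balance the relative contributions of knowledge distillation, reinforcement optimization, and debate consistency within the overall objective.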

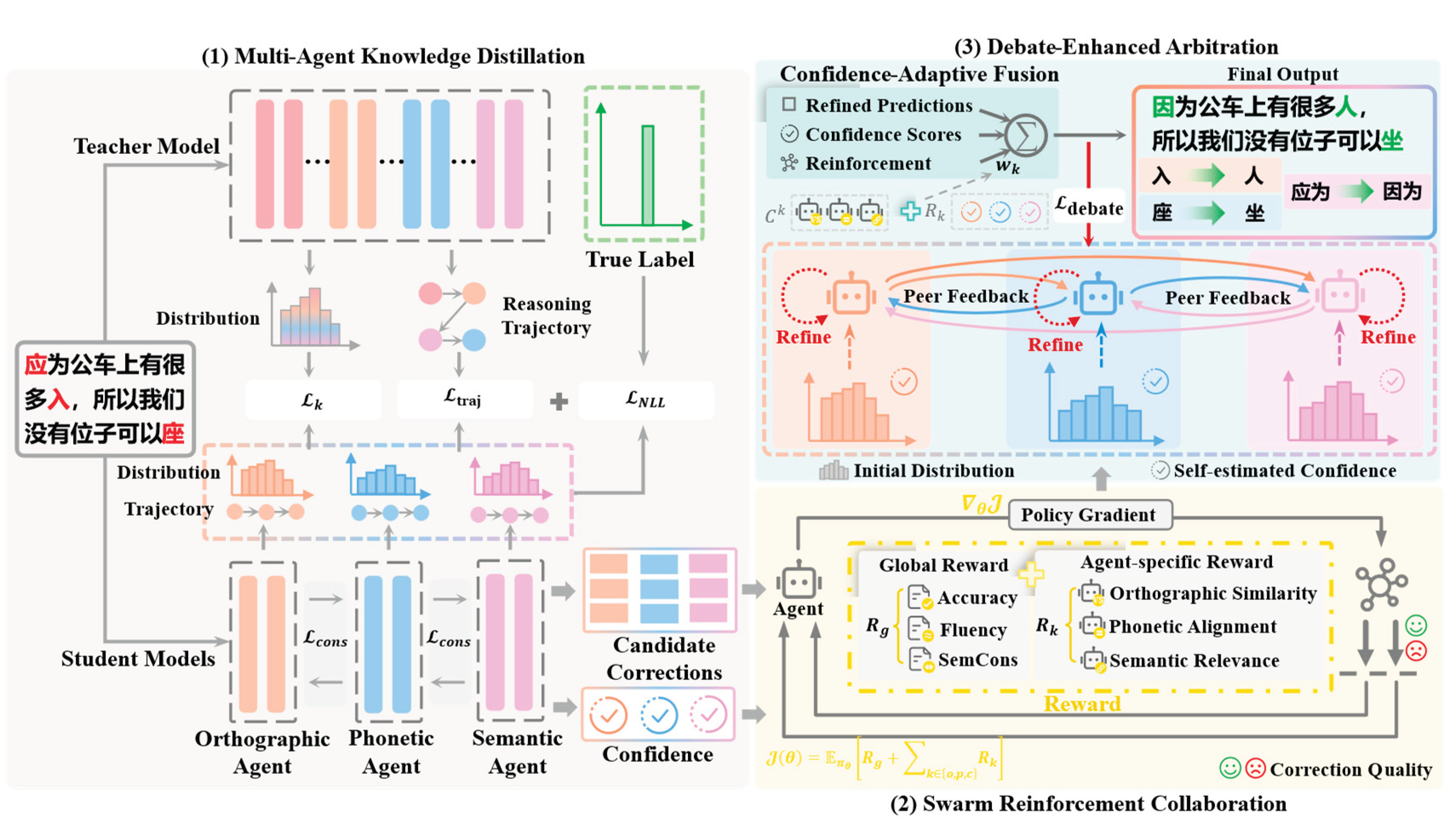

Training alternates between knowledge distillation, swarm reinforcement updates, and multi-round debates until convergence. At inference, the agents generate initial predictions, refine them through debate, and reach consensus via confidence-adaptive arbitration. For clarity, we summarize the overall training procedure and stage-wise parameter updates in the Algorithm.