Submitted: 06 March 2026

Posted: 09 March 2026

Abstract

Keywords:

1. Introduction

2. Data and Methods

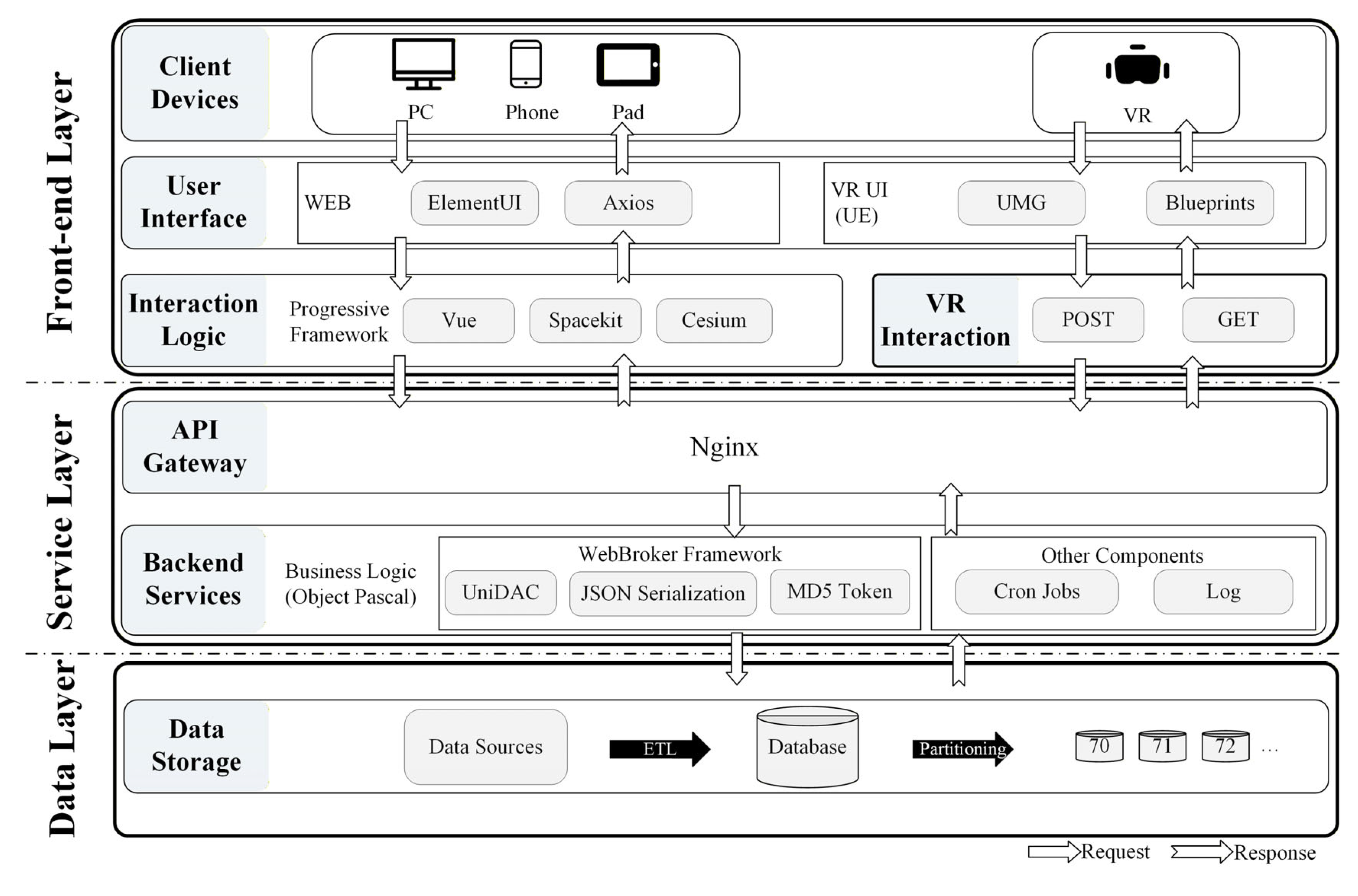

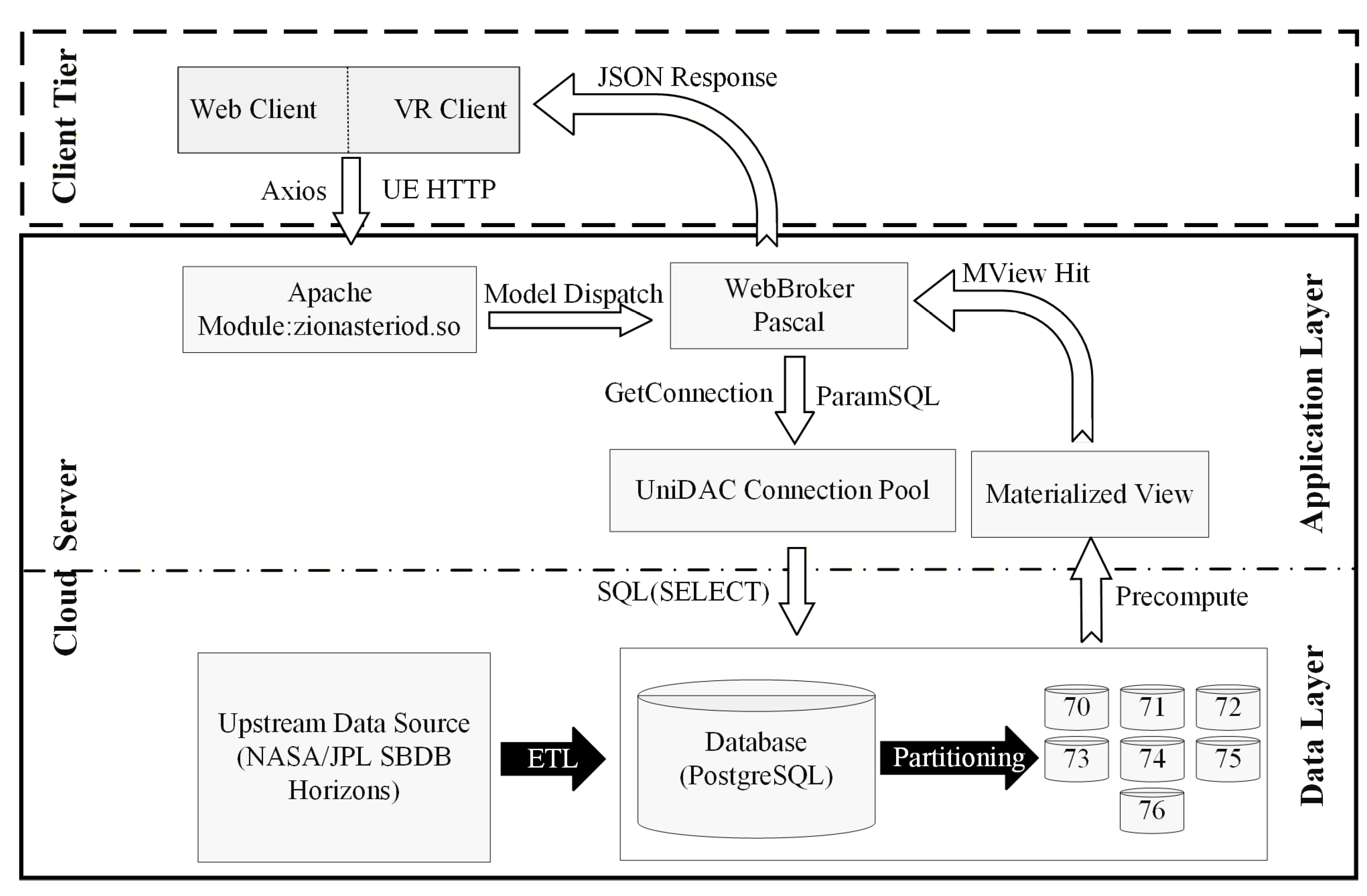

2.1. Overall Architecture

2.2. Data and Models

2.3. Key Technical Approach

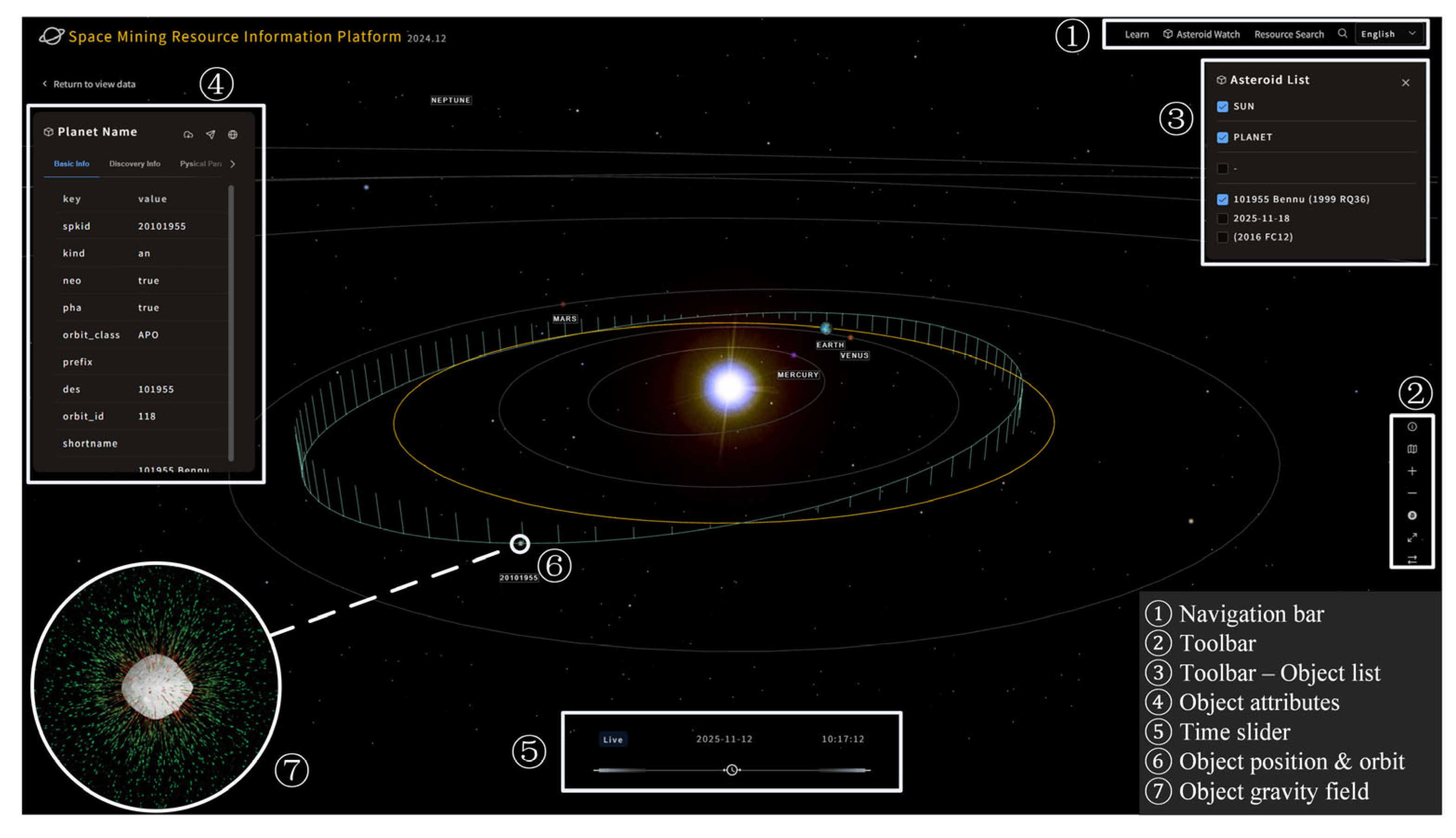

3. Realization of System

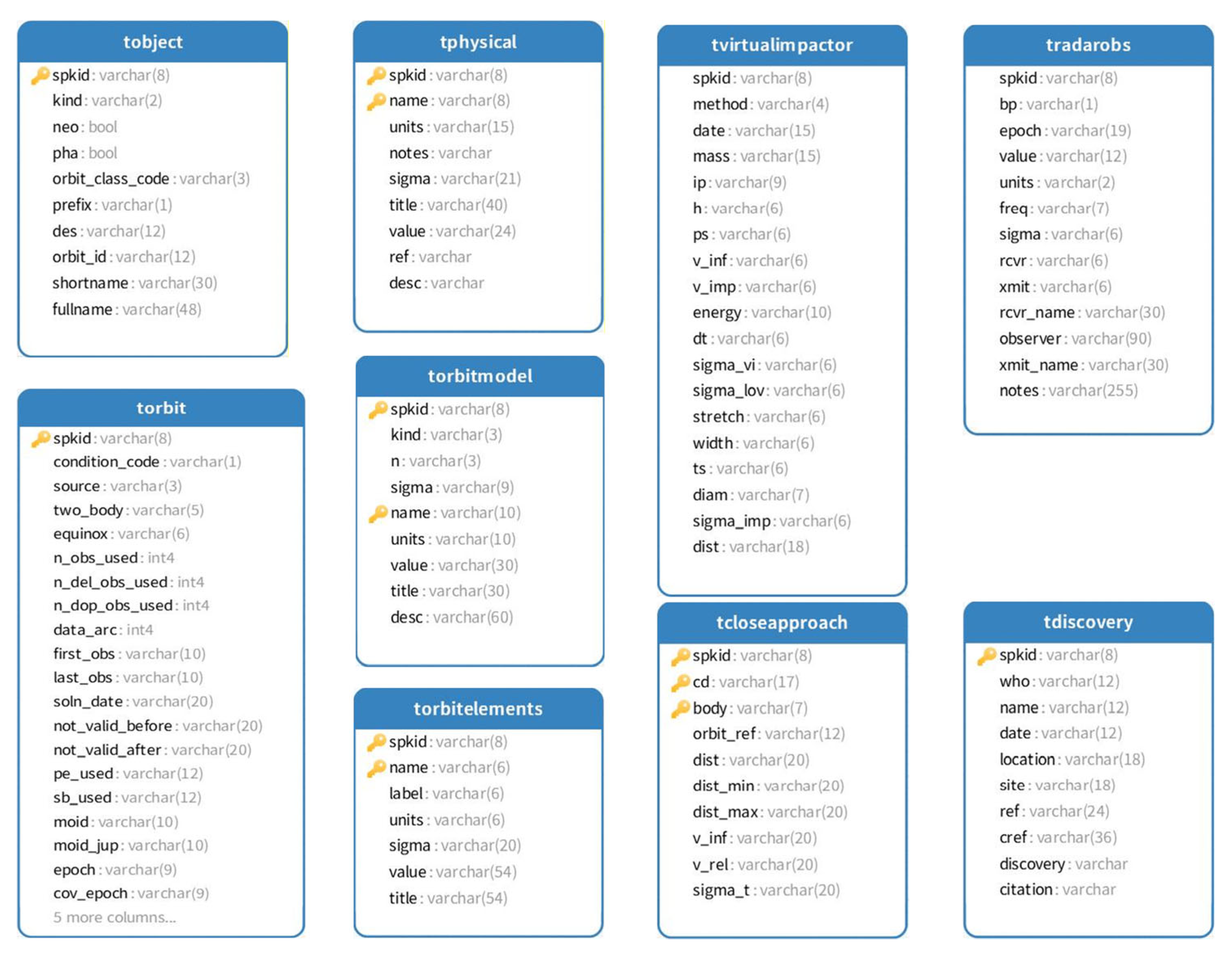

3.1. Realization: Backend Database

3.2. Realization: Frontend Function

3.2.1. Web Client

3.2.2. VR Client

4. System Performance Test

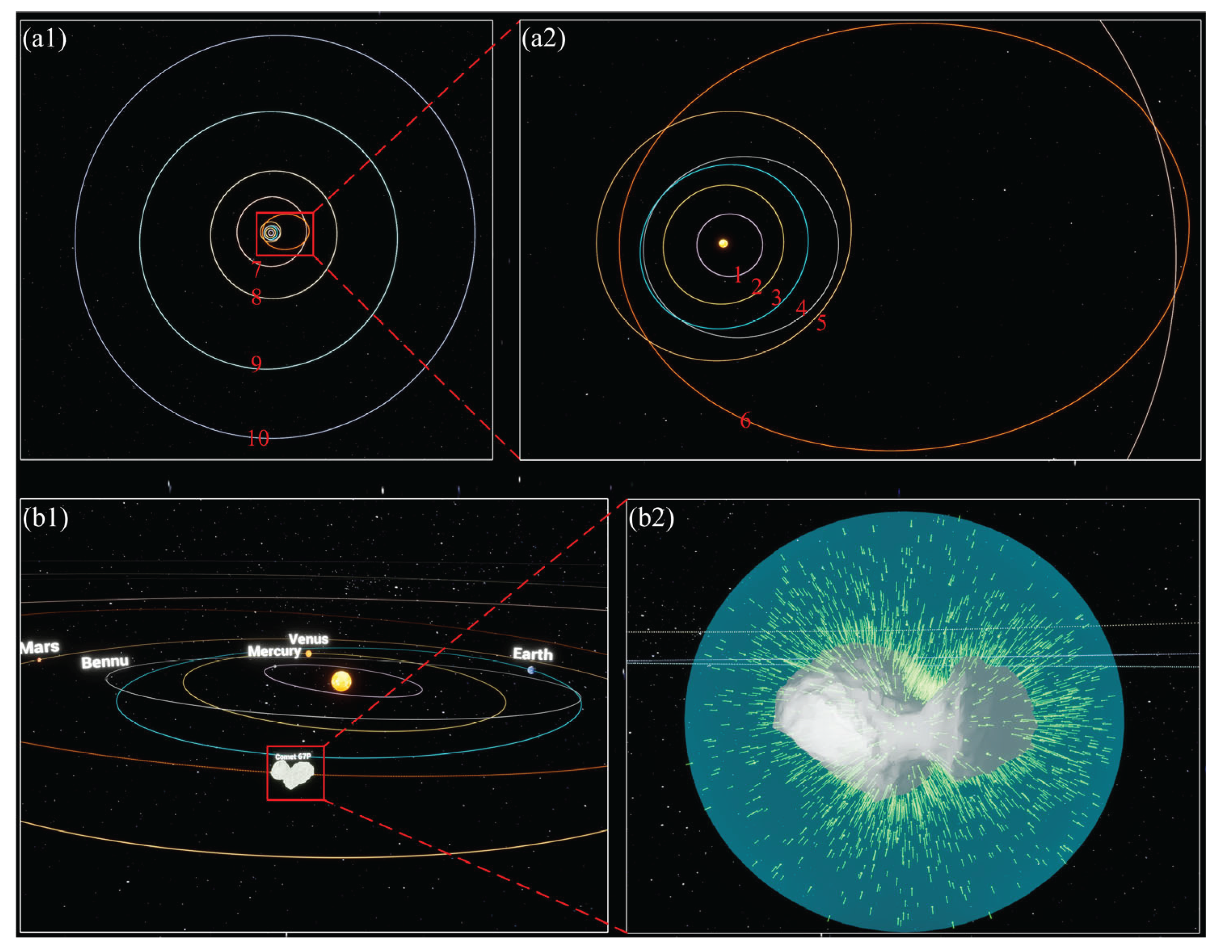

4.1. Validation of the Orbits in VR Client

4.2. Efficiency of the Gravitational Vector Rendering in VR Client

5. Discussion and Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Peeters, W.; Ehrenfreund, P. Charting the Future of Space: A Collaborative Vision for Innovative Commercial Partnerships and Sustainable Space Exploration. New Space 2025, 13, 7–21.
2. Getzandanner, K.M.; Antreasian, P.G.; Moreau, M.C.; Leonard, J.M.; Adam, C.D.; Wibben, D.R.; Berry, K.; Highsmith, D.E.; Lauretta, D.S. Small Body Proximity Operations & TAG: Navigation Experiences & Lessons Learned from the OSIRIS-REx Mission. AIAA SCITECH 2022 Forum, 2022.
3. Glassmeier, K.-H.; Boehnhardt, H.; Koschny, D.; Kührt, E.; Richter, I. The Rosetta mission: flying towards the origin of the solar system. Space Science Reviews 2007, 128, 1–21.
4. Lauretta, D.; Balram-Knutson, S.; Beshore, E.; Boynton, W.; Drouet d’Aubigny, C.; DellaGiustina, D.; Enos, H.; Golish, D.; Hergenrother, C.; Howell, E. OSIRIS-REx: sample return from asteroid (101955) Bennu. Space Science Reviews 2017, 212, 925–984.
5. Fujiwara, A.; Kawaguchi, J.; Yeomans, D.; Abe, M.; Mukai, T.; Okada, T.; Saito, J.; Yano, H.; Yoshikawa, M.; Scheeres, D. The rubble-pile asteroid Itokawa as observed by Hayabusa. Science 2006, 312, 1330–1334.
6. Watanabe, S.; Hirabayashi, M.; Hirata, N.; Hirata, N.; Noguchi, R.; Shimaki, Y.; Ikeda, H.; Tatsumi, E.; Yoshikawa, M.; Kikuchi, S. Hayabusa2 arrives at the carbonaceous asteroid 162173 Ryugu: A spinning top–shaped rubble pile. Science 2019, 364, 268–272.
7. Liang, Z.; Lu, B.; Cui, P.; Zhu, S.; Xu, R.; Ge, D.; Baoyin, H.; Shao, W. Research Progress of Technologies for Intelligent Landing on Small Celestial Bodies. Journal of Deep Space Exploration 2024, 11, 213–224.
8. Yu, D.; Zhang, Z.; Pan, B.; Liu, C.; Ding, L.; Zhu, J.; Gao, H.; Liu, J.; Chen, P. Development and Trend of Artificial Intelligent in Deep Space Exploration. Journal of Deep Space Exploration (in Chinese) 2020, 7, 11–23.
9. Wu, W.; Yu, D. Development of Deep Space Exploration and Its Future Key Technologies. Journal of Deep Space Exploration (in Chinese) 2014, 1, 5–17.
10. Ely, T.P.; Tjoelker, John; Burt, Robert; Dorsey, Eric; Enzer, Angela; Herrera, Daphna; Kuang, Randy; Murphy, Da; Robison, David; Seal, David; et al. Deep Space Atomic Clock Technology Demonstration Mission Results. In Proceedings of the 2022 IEEE Aerospace Conference (AERO 2022), Big Sky, Montana, USA, 5–12 March 2022; pp. 1–20.
11. Antreasian, P.G.; Adam, C.D.; Berry, K.; Geeraert, J.L.; Getzandanner, K.M.; Highsmith, D.E.; Leonard, J.M.; Lessac-Chenen, E.J.; Levine, A.H.; McAdams, J.V.; et al. OSIRIS-REx Proximity Operations and Navigation Performance at Bennu. AIAA SCITECH 2022 Forum, 2022.
12. Park, R.S.; Werner, R.A.; Bhaskaran, S. Estimating small-body gravity field from shape model and navigation data. Journal of Guidance, Control, and Dynamics 2010, 33, 212–221.
13. Yin, Z.; Zhang, K.; Duan, Y.; Liu, J.; Mu, Q. Theoretical research progress of gravitational field modeling in Earth science and deep-space exploration. Reviews of Geophysics and Planetary Physics (in Chinese) 2024, 55, 501–512.
14. Archinal, B.A.; Lee, E.M.; Kirk, R.L.; Duxbury, T.; Sucharski, R.M.; Cook, D.; Barrett, J.M. A new Mars digital image model (MDIM 2.1) control network. International Archives of Photogrammetry and Remote Sensing 2004, 35, B4.
15. Duan, Y.; Zhang, K.; Yin, Z.; Zhang, S.; Li, H.; Wu, S.; Zheng, N.; Bian, C.; Li, L. A novel gravitational inversion method for small celestial bodies based on geodesyNets. Icarus 2025, 433, 116525.
16. Kikuchi, S.; Saiki, T.; Takei, Y.; Terui, F.; Ogawa, N.; Mimasu, Y.; Ono, G.; Yoshikawa, K.; Sawada, H.; Takeuchi, H.; et al. Hayabusa2 pinpoint touchdown near the artificial crater on Ryugu: Trajectory design and guidance performance. Advances in Space Research 2021, 68, 3093–3140.
17. Scheeres, D.; McMahon, J.; French, A. The dynamic geophysical environment of (101955) Bennu based on OSIRIS-REx measurements. Nature Astronomy 2019, 3, 352–361.
18. Keane, J.T.; Sori, M.M.; Ermakov, A.I.; Austin, A.; Bapst, J.; Berne, A.; Bierson, C.J.; Bills, B.G.; Boening, C.; Bramson, A.M.; et al. Next-Generation Planetary Geodesy: Results from the 2021 Keck Institute for Space Studies Workshops. In Proceedings of the 53rd Lunar and Planetary Science Conference, The Woodlands, Texas, 1 March 2022; p. 1622.
19. Yan, Y. 2024 Annual Progress Review in the Field of Deep Space Exploration. High-Technology & Commercialization (in Chinese) 2025, 31, 73–78.
20. Scheeres, D. Orbital mechanics about small bodies. Acta Astronautica 2012, 72, 1–14.
21. Bottke, W.F., Jr.; Vokrouhlický, D.; Rubincam, D.P.; Nesvorný, D. The Yarkovsky and YORP effects: Implications for asteroid dynamics. Annu. Rev. Earth Planet. Sci. 2006, 34, 157–191.
22. NASA Jet Propulsion Laboratory (JPL). Eyes on Asteroids. Available online: https://eyes.nasa.gov/apps/asteroids/#/home (accessed on 2025-06-21).
23. ESA NEO Coordination Centre (NEOCC). Orbit Visualisation Tool. Available online: https://neotools.neo.s2p.esa.int/ovt (accessed on 2025-12-01).
24. Pavelka, K.; Landa, M. Using Virtual and Augmented Reality with GIS Data. ISPRS International Journal of Geo-Information 2024, 13, 241.
25. Levin, E.; Shults, R.; Habibi, R.; An, Z.; Roland, W. Geospatial Virtual Reality for Cyberlearning in the Field of Topographic Surveying: Moving Towards a Cost-Effective Mobile Solution. ISPRS International Journal of Geo-Information 2020, 9, 433.
26. Zhi, Y.; Nico, S. Modeling the gravitational field by using CFD techniques. Journal of Geodesy 2021, 95, 68.
27. Han, B.; Zhang, Y.-X.; Zhong, S.-B.; Zhao, Y.-H. Astronomical data fusion tool based on PostgreSQL. Research in Astronomy and Astrophysics 2016, 16, 178.
28. JPL Solar System Dynamics (SSD). SBDB API. Available online: https://ssd-api.jpl.nasa.gov/doc/sbdb.html (accessed on 2025-12-01).
29. Giorgini, J.D. JPL Solar System Dynamics Group. Horizons System. Available online: https://ssd.jpl.nasa.gov/horizons/app.html#/ (accessed on 2025-12-01).
30. Planetary Data System (PDS) Small Bodies Node; Asteroid/Dust Subnode. PDS SBN Asteroid/Dust Subnode. Available online: https://sbn.psi.edu/pds/shape-models/ (accessed on 2025-12-01).
31. Duan, Y.; Yin, Z.; Zhang, K.; Zhang, S.; Wu, S.; Li, H.; Zheng, N.; Bian, C. Modeling the gravitational field of the ore-bearing asteroid by using the CFD-based method. Acta Astronautica 2024, 215, 664–673.
32. Avula, S.B. PostgreSQL Table Partitioning Strategies: Handling Billions of Rows Efficiently. International Journal of Emerging Trends in Computer Science and Information Technology 2024, 5, 23–37.
33. Xiao, W.; Lv, X.; Xue, C. Dynamic Visualization of VR Map Navigation Systems Supporting Gesture Interaction. ISPRS International Journal of Geo-Information 2023, 12, 133.
34. Bardwell, J. Adding Smooth Locomotion To Unreal Engine 5.1+ For VR (Open XR). Available online: https://www.gdxr.co.uk/learning/adding-smooth-locomotion-to-unreal-engine-5-1 (accessed on 2025-06-21).

| Name | Type | Source |
| --- | --- | --- |
| Small-Body Database (SBDB) | Attribute data of small bodies | NASA JPL [28] |
| Horizons ephemeris service | Small-body ephemerides | NASA JPL Horizons [29] |
| Publicly accessible scientific data repositories | Small-body 3D geometric/shape models | Public 3D model repositories [30] |
| Small-body force vector-field model (CSV format) | Gravitational-field model | Adopted from the authors’ previous study [26,31] |
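As an illustration of how the attribute data listed above might be requested, the following is a minimal sketch that builds a query URL for the SBDB API [28] and reads orbital elements out of a response. The helper names `build_sbdb_url` and `elements_by_name` are hypothetical, and the assumed response shape (an `orbit.elements` list of objects with `name` and `value` fields) should be checked against the API documentation; nothing here is taken from the system's actual backend.

```python
from urllib.parse import urlencode

# Public SBDB API endpoint (see reference [28]).
SBDB_ENDPOINT = "https://ssd-api.jpl.nasa.gov/sbdb.api"


def build_sbdb_url(name: str, full_precision: bool = True) -> str:
    """Build a query URL for one small body (hypothetical helper)."""
    params = {"sstr": name}  # 'sstr' is the object search string
    if full_precision:
        # Assumption: 'full-prec' asks for full-precision element values.
        params["full-prec"] = "true"
    return f"{SBDB_ENDPOINT}?{urlencode(params)}"


def elements_by_name(payload: dict) -> dict:
    """Map element name -> value string.

    Assumes the decoded JSON has the shape
    payload["orbit"]["elements"] = [{"name": ..., "value": ...}, ...].
    """
    return {el["name"]: el["value"] for el in payload["orbit"]["elements"]}


print(build_sbdb_url("Bennu"))
```

Keeping the element values as strings rather than floats avoids losing trailing digits before they are stored or compared.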

| Software/Framework | Purpose |
| --- | --- |
| PostgreSQL | Database design and development |
| Object Pascal + WebBroker | Backend server development |
| PrimeVue | Web front-end development |
| Spacekit | Web-based orbit visualization |
| Cesium | Web-based field-data visualization |
| Unreal Engine 5.5 | VR front-end development (including scene interaction, orbit rendering, model loading, etc.) |

| Body | Orbital period | Time span | Sampling step | Number of sampling points |
| --- | --- | --- | --- | --- |
| Mercury | 88 days | 2024-04-25 to 2024-07-22 | 1 day | 88 |
| Venus | 224 days | 2024-04-25 to 2024-12-06 | 2 days | 113 |
| Earth | 365 days | 2024-04-25 to 2025-04-25 | 1 day | 365 |
| Mars | 687 days | 2024-04-25 to 2026-03-12 | 2 days | 344 |
| Jupiter | 11.86 years | 2024-04-25 to 2036-03-27 | 1 month | 144 |
| Saturn | 29.5 years | 2024-04-25 to 2053-10-25 | 2 months | 177 |
| Uranus | 84 years | 2024-04-25 to 2108-04-25 | 2 months | 504 |
| Neptune | 164.8 years | 2024-04-25 to 2189-02-11 | 2 months | 989 |
| Bennu (Asteroid) | 1.2 years | 2024-04-25 to 2025-07-07 | 1 day | 439 |
| 67P-CG (Comet) | 6.42 years | 2024-04-25 to 2030-09-25 | 1 month | 78 |

| Object | Keplerian element | Web client (Data source: SBDB database) | VR client (Data source: NASA Horizons System) | Difference (×10⁻¹³) | Relative difference (×10⁻¹⁰) |
| --- | --- | --- | --- | --- | --- |
| Bennu | epoch | 2455562.5 | 2455562.5 | / | / |
| | a (AU) | 1.126391025894812 | 1.126391025888730 | 60.82 | 0.053995 |
| | e | 0.2037450762416414 | 0.2037450762425329 | 8.915 | 0.043756 |
| | i (deg) | 6.03494377024794 | 6.034943770255041 | 71.01 | 0.011766 |
| | Ω (deg) | 2.06086619569642 | 2.060866195707317 | 108.97 | 0.052876 |
| | ω (deg) | 66.22306084084298 | 66.22306084001706 | 8259.2 | 0.124718 |
| Comet 67P | epoch | 2457305.5 | 2457305.5 | / | / |
| | a (AU) | 3.462249489765068 | 3.462249489765064 | 0.04 | 0.000011553 |
| | e | 0.6409081306555051 | 0.6409081306555049 | 0.002 | 0.000003121 |
| | i (deg) | 7.040294906760007 | 7.040294906760015 | 0.08 | 0.000011363 |
| | Ω (deg) | 50.13557380441372 | 50.13557380441362 | 1.0 | 0.000019946 |
| | ω (deg) | 12.79824973415729 | 12.79824973415736 | 0.7 | 0.000054695 |
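The difference columns in the Keplerian-element comparison can be reproduced with a short calculation. The sketch below uses Python's `decimal` module because several of the differences are close to the resolution of double-precision floats. The function name `compare_elements` is hypothetical, and normalising by the web-client value is an assumption, since the source does not state which value serves as the denominator.

```python
from decimal import Decimal, getcontext

# 40 significant digits keeps the subtraction of 16-digit values exact.
getcontext().prec = 40


def compare_elements(web: str, vr: str) -> tuple[Decimal, Decimal]:
    """Return (absolute difference, relative difference).

    Both inputs are decimal strings so no digits are lost on parsing.
    Assumption: the relative difference is normalised by the web-client value.
    """
    web_value, vr_value = Decimal(web), Decimal(vr)
    abs_diff = abs(web_value - vr_value)
    rel_diff = abs_diff / abs(web_value)
    return abs_diff, rel_diff


# Semi-major axis of Bennu, values copied from the comparison table.
abs_diff, rel_diff = compare_elements("1.126391025894812", "1.126391025888730")
print(abs_diff / Decimal("1e-13"))  # difference in units of 1e-13
print(rel_diff / Decimal("1e-10"))  # relative difference in units of 1e-10
```

For this row the scaled values agree with the tabulated 60.82 and 0.053995 to the digits shown.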

| ID | Arrows | Frames | AvgFPS | FT_P99 | HitchRate | GT | RT | GPU |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| 1 | 0 | 1800 | 71.864 | 14.643 | 0.00% | 13.904 | 2.412 | 3.616 |
| 2 | 500 | 1800 | 71.861 | 14.728 | 0.00% | 13.908 | 3.211 | 3.883 |
| 3 | 1000 | 1800 | 71.872 | 14.842 | 0.00% | 13.909 | 4.237 | 4.055 |
| 4 | 2000 | 1800 | 71.890 | 15.016 | 0.00% | 13.906 | 5.976 | 4.35 |
| 5 | 2500 | 1800 | 71.914 | 15.19 | 0.00% | 13.905 | 7.038 | 4.606 |
| 6 | 3000 | 1800 | 71.901 | 15.484 | 0.00% | 13.912 | 8.211 | 4.778 |
| 7 | 3200 | 1800 | 71.742 | 15.722 | 0.28% | 13.989 | 8.487 | 5.226 |
| 8 | 3500 | 1800 | 49.723 | 30.903 | 37.94% | 22.451 | 10.406 | 5.82 |
| 9 | 4000 | 1800 | 35.922 | 28.689 | 60.33% | 27.828 | 9.836 | 5.05 |
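The summary metrics in the rendering test could be derived from a per-frame log along the following lines. This is a sketch under stated assumptions, not the authors' measurement procedure: frame times are taken to be in milliseconds, FT_P99 is read as the 99th-percentile frame time (nearest-rank method), and a hitch is counted as any frame slower than a caller-supplied threshold. The source defines none of these, and the function name `summarize_frames` is hypothetical.

```python
def summarize_frames(frame_times_ms: list[float],
                     hitch_threshold_ms: float) -> dict[str, float]:
    """Summarise a list of per-frame durations in milliseconds.

    Assumptions (not stated in the source): FT_P99 is the nearest-rank
    99th percentile, and a hitch is a frame above hitch_threshold_ms.
    """
    n = len(frame_times_ms)
    if n == 0:
        raise ValueError("need at least one frame")
    ordered = sorted(frame_times_ms)
    rank = (99 * n + 99) // 100  # integer form of ceil(0.99 * n)
    hitches = sum(t > hitch_threshold_ms for t in frame_times_ms)
    return {
        "avg_fps": 1000.0 * n / sum(frame_times_ms),
        "ft_p99_ms": ordered[rank - 1],
        "hitch_rate": hitches / n,
    }


# Tiny made-up sample: three fast frames and one slow frame.
print(summarize_frames([10.0, 10.0, 10.0, 30.0], hitch_threshold_ms=20.0))
```

Under these definitions a run such as ID 8 would show the pattern seen in the table: a lower average frame rate together with a much larger 99th-percentile frame time and hitch rate.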

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).