Submitted: 06 March 2026

Posted: 09 March 2026

Abstract

Keywords:

1. Introduction

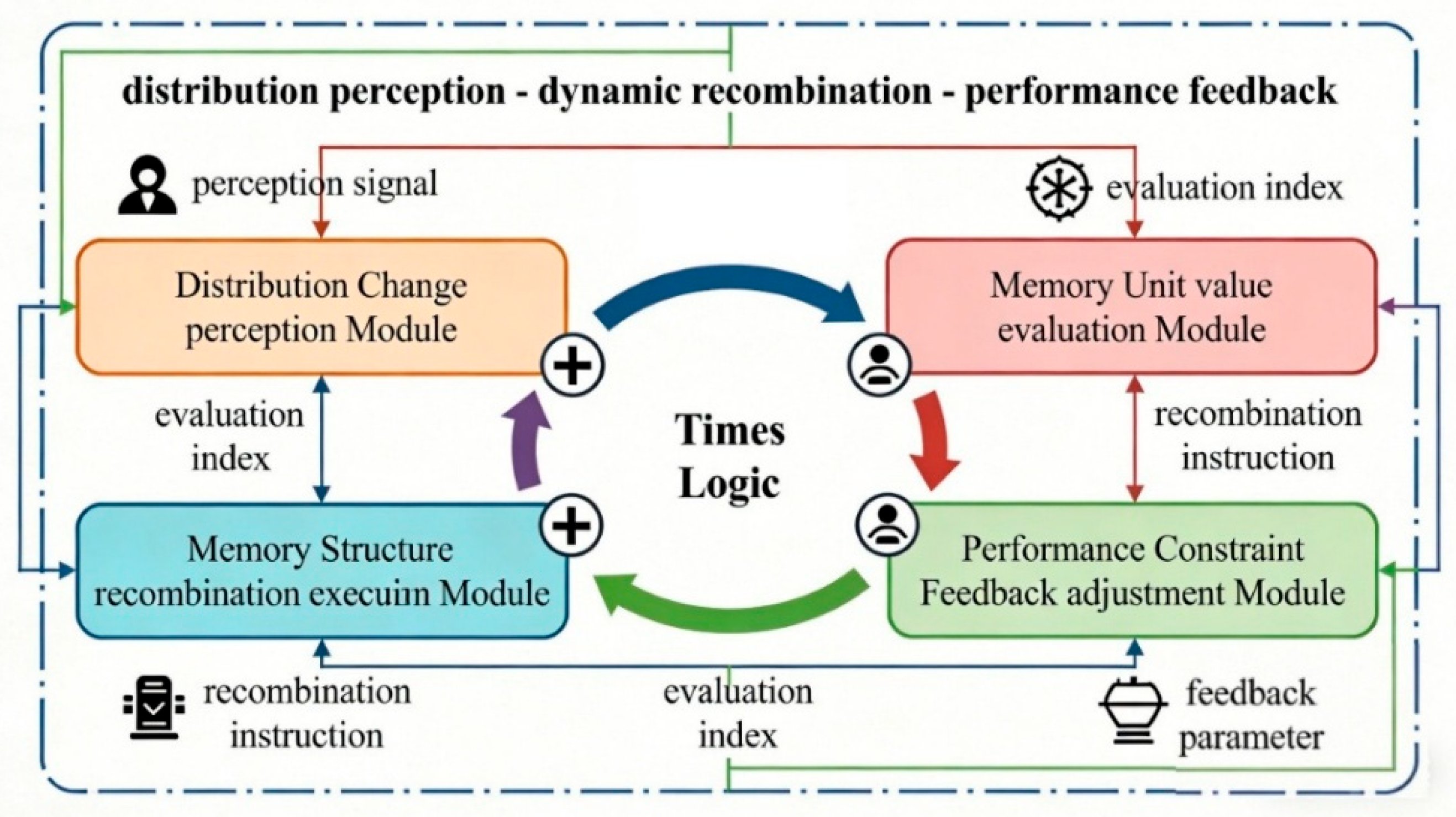

2. Distribution-Aware Continuous Memory Reorganization Algorithm (DAMCR Algorithm)

2.1. Distribution Change Sensing Module

2.2. Memory Unit Value Assessment Module

2.3. Memory Structure Reorganization Execution Module

2.4. Performance Constraint Feedback Adjustment Module

3. Experimental Simulation and Result Analysis

3.1. Experimental Environment Setup

3.2. Baseline Algorithms

3.3. Main Results

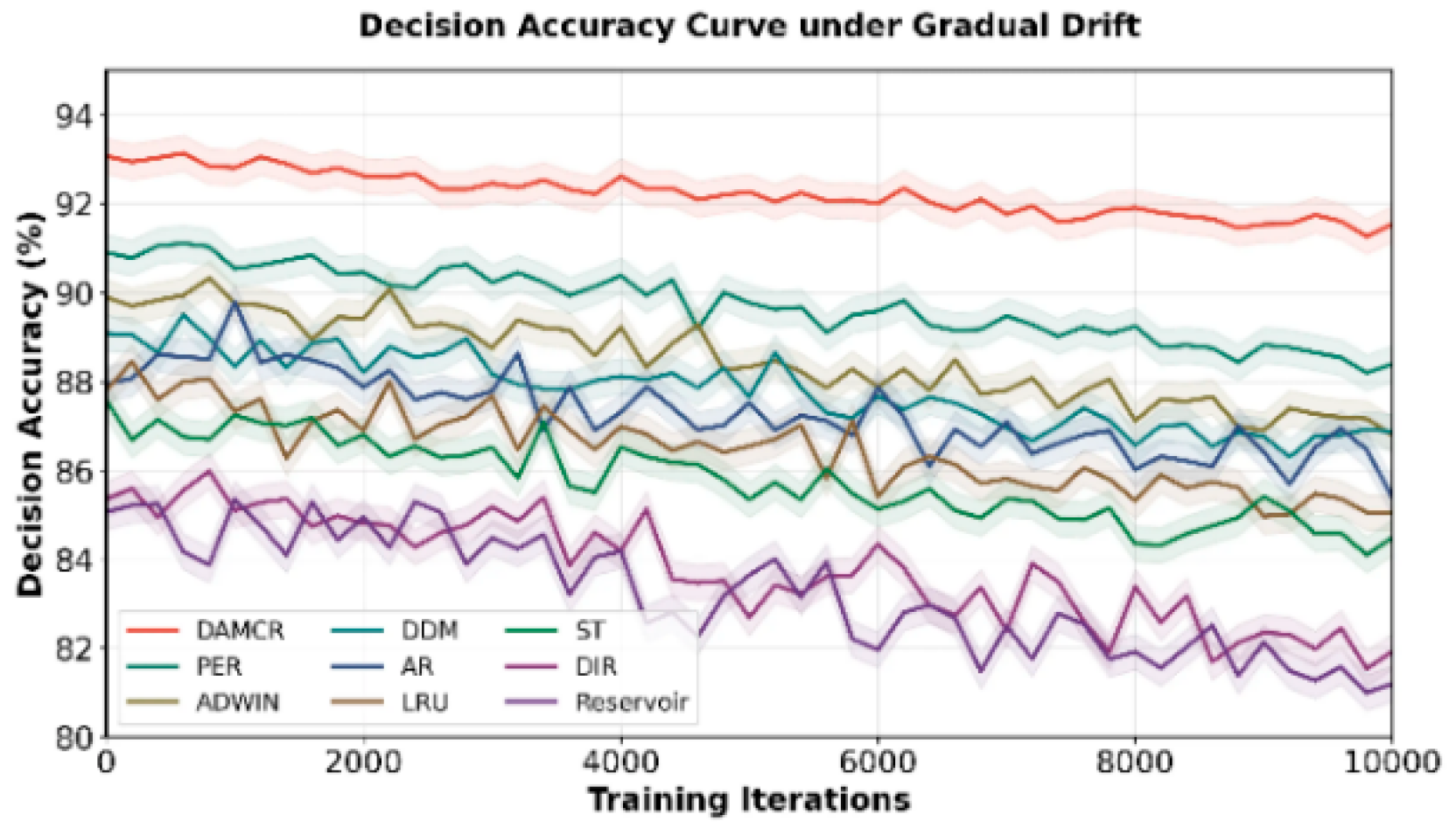

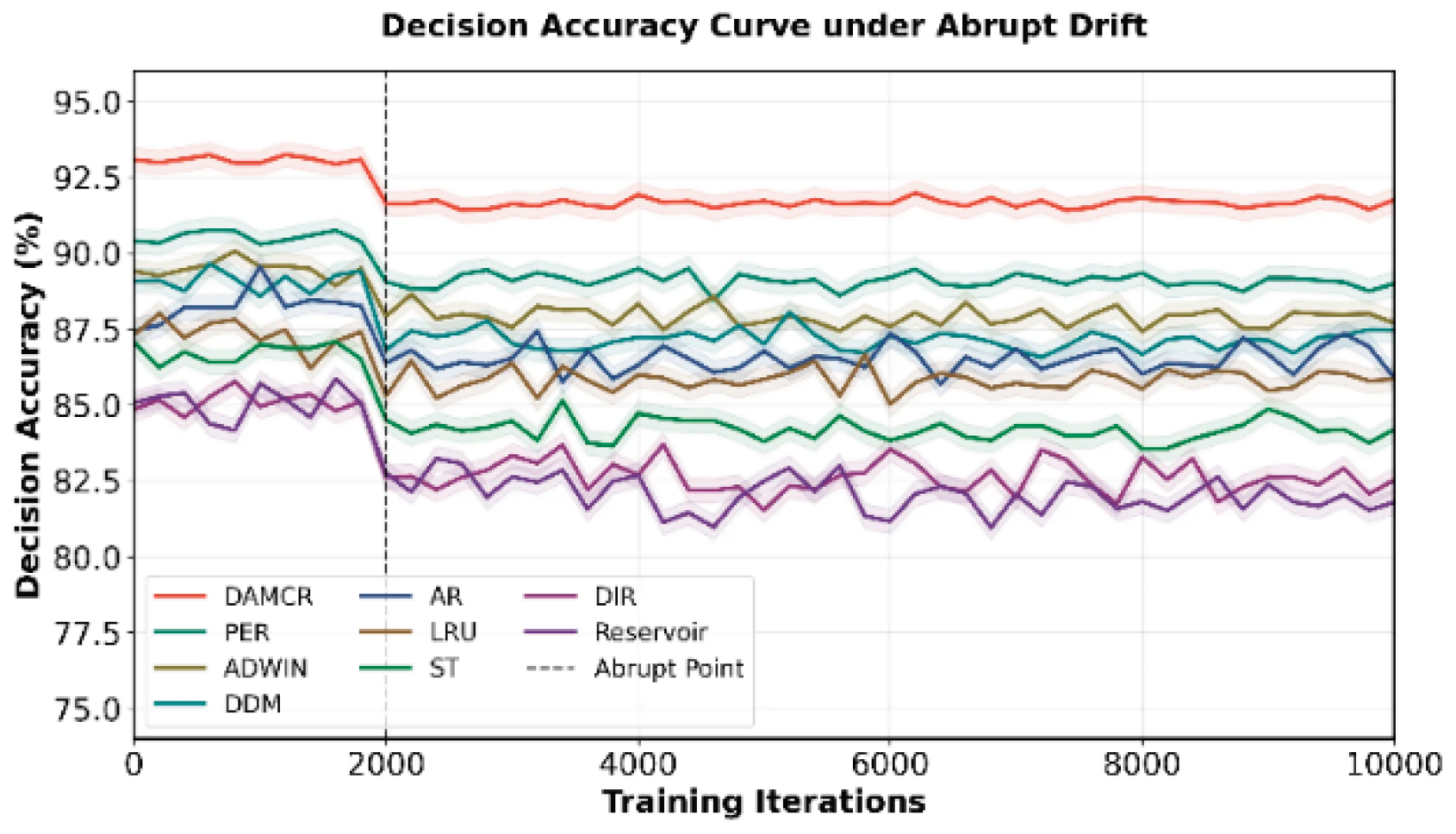

3.3.1. Quantitative Main Results

3.3.2. Qualitative Results

4. Conclusions

References

- Kirkby, R. Computing Quantiles of Functions of the Agent Distribution Using t-Digests. Computational Economics 2024, 64(2), 1199–1218.

- Shi, S.; Jiang, L.; Dai, D.; Schiele, B. MTR++: Multi-agent motion prediction with symmetric scene modeling and guided intention querying. IEEE Transactions on Pattern Analysis and Machine Intelligence 2024, 46(5), 3955–3971.

- Zou, J.; Wang, Y.; Yu, X.; Liu, R.; Fan, W.; Cheng, J.; Cai, W. Skin-inspired zero carbon heat-moisture management based on shape memory smart fabric. Advanced Fiber Materials 2025, 7(2), 481–500.

- Ghafarollahi, A.; Buehler, M. J. ProtAgents: protein discovery via large language model multi-agent collaborations combining physics and machine learning. Digital Discovery 2024, 3(7), 1389–1409.

- Levin, M. Bioelectric networks: the cognitive glue enabling evolutionary scaling from physiology to mind. Animal Cognition 2023, 26(6), 1865–1891.

- Deng, Z.; Guo, Y.; Han, C.; Ma, W.; Xiong, J.; Wen, S.; Xiang, Y. AI agents under threat: A survey of key security challenges and future pathways. ACM Computing Surveys 2025, 57(7), 1–36.

- McKenna, C. A. Agency and the successive structure of time-consciousness. Erkenntnis 2023, 88(5), 2013–2034.

- He, F.; Zhu, T.; Ye, D.; Liu, B.; Zhou, W.; Yu, P. S. The emerged security and privacy of LLM agent: A survey with case studies. ACM Computing Surveys 2025, 58(6), 1–36.

| Algorithm | Decision accuracy (%), gradual/abrupt | Recombination delay (ms), gradual/abrupt | Performance fluctuation range (%), gradual/abrupt | Performance fluctuation range (%), gradual/abrupt |
|---|---|---|---|---|
| DAMCR | 92.3/91.7 | 12.7/13.2 | 1.8/2.1 | 91.5/90.8 |
| ST | 85.7/84.2 | 28.2/29.5 | 7.9/8.5 | 67.3/66.5 |
| AR | 87.2/86.5 | 24.5/25.1 | 6.3/6.8 | 72.1/71.4 |
| DIR | 84.1/82.6 | 30.1/31.2 | 8.7/9.2 | 65.8/64.9 |
| ADWIN | 88.5/87.9 | 20.3/21.5 | 5.2/5.7 | 78.6/77.9 |
| DDM | 87.8/87.1 | 21.7/22.3 | 5.8/6.2 | 76.2/75.5 |
| LRU | 86.4/85.8 | 18.5/19.2 | 7.1/7.6 | 80.3/79.6 |
| Reservoir | 83.5/82.1 | 17.8/18.5 | 9.2/9.8 | 74.5/73.8 |
| PER | 89.6/89.1 | 16.2/16.8 | 4.1/4.5 | 85.7/84.9 |
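
The headline comparison in the table can be checked with simple arithmetic. The sketch below is only an illustration: it copies the decision accuracy and recombination delay values from the table, finds the strongest baseline for each metric, and reports the margin by which DAMCR leads it. The names `RESULTS`, `best_baseline`, and `margin_over_best_baseline` are hypothetical helpers introduced here, not part of the DAMCR algorithm, and scenario index 0/1 is assumed to mean gradual/abrupt distribution change, following the column order.

```python
# Values copied from the results table as (gradual, abrupt) pairs.
# Only the first two metric columns are included in this sketch.
RESULTS = {
    "DAMCR":     {"accuracy": (92.3, 91.7), "delay_ms": (12.7, 13.2)},
    "ST":        {"accuracy": (85.7, 84.2), "delay_ms": (28.2, 29.5)},
    "AR":        {"accuracy": (87.2, 86.5), "delay_ms": (24.5, 25.1)},
    "DIR":       {"accuracy": (84.1, 82.6), "delay_ms": (30.1, 31.2)},
    "ADWIN":     {"accuracy": (88.5, 87.9), "delay_ms": (20.3, 21.5)},
    "DDM":       {"accuracy": (87.8, 87.1), "delay_ms": (21.7, 22.3)},
    "LRU":       {"accuracy": (86.4, 85.8), "delay_ms": (18.5, 19.2)},
    "Reservoir": {"accuracy": (83.5, 82.1), "delay_ms": (17.8, 18.5)},
    "PER":       {"accuracy": (89.6, 89.1), "delay_ms": (16.2, 16.8)},
}

GRADUAL, ABRUPT = 0, 1  # assumed meaning of the two values in each cell


def best_baseline(metric, scenario, higher_is_better):
    """Return (name, value) of the strongest non-DAMCR algorithm."""
    candidates = {
        name: row[metric][scenario]
        for name, row in RESULTS.items()
        if name != "DAMCR"
    }
    pick = max if higher_is_better else min
    name = pick(candidates, key=candidates.get)
    return name, candidates[name]


def margin_over_best_baseline(metric, scenario, higher_is_better):
    """Return (baseline name, DAMCR's lead over it), rounded to 0.1."""
    name, value = best_baseline(metric, scenario, higher_is_better)
    ours = RESULTS["DAMCR"][metric][scenario]
    lead = ours - value if higher_is_better else value - ours
    return name, round(lead, 1)


if __name__ == "__main__":
    for label, scenario in (("gradual", GRADUAL), ("abrupt", ABRUPT)):
        acc = margin_over_best_baseline("accuracy", scenario, True)
        lat = margin_over_best_baseline("delay_ms", scenario, False)
        print(f"{label}: accuracy lead over {acc[0]} = {acc[1]} points, "
              f"delay lead over {lat[0]} = {lat[1]} ms")
```

With the tabulated values, PER is the strongest baseline on both metrics; DAMCR leads it by 2.7 and 2.6 accuracy points and by 3.5 and 3.6 ms of delay under gradual and abrupt change, respectively.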

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).