Submitted: 05 March 2026

Posted: 06 March 2026


Abstract

Keywords:

1. Introduction

2. Autonomous Mobile Robots

2.1. Locomotion Mechanisms

- Differential Drive: Common in structured indoor spaces such as factories and warehouses, offering mechanical simplicity and efficient planar navigation [15].

- Omnidirectional Wheels: Provide enhanced maneuverability in confined spaces, supporting applications in logistics and healthcare settings [16].

- Tracked Bases: Used in rugged or mixed terrains such as search-and-rescue missions, prioritizing stability over speed [17].

- Quadrupeds: Enable dynamic gait control over unstructured ground, with notable deployment in defense and exploration tasks [18].

- General advantages: On highly rugged terrain, legs enable true point-to-point mobility, clearing steps and minimising soil compaction, albeit at the price of higher energy consumption and more complex control [22].

- Octopod-Inspired: Combine rolling and climbing locomotion to traverse debris-laden or obstacle-rich environments [23].

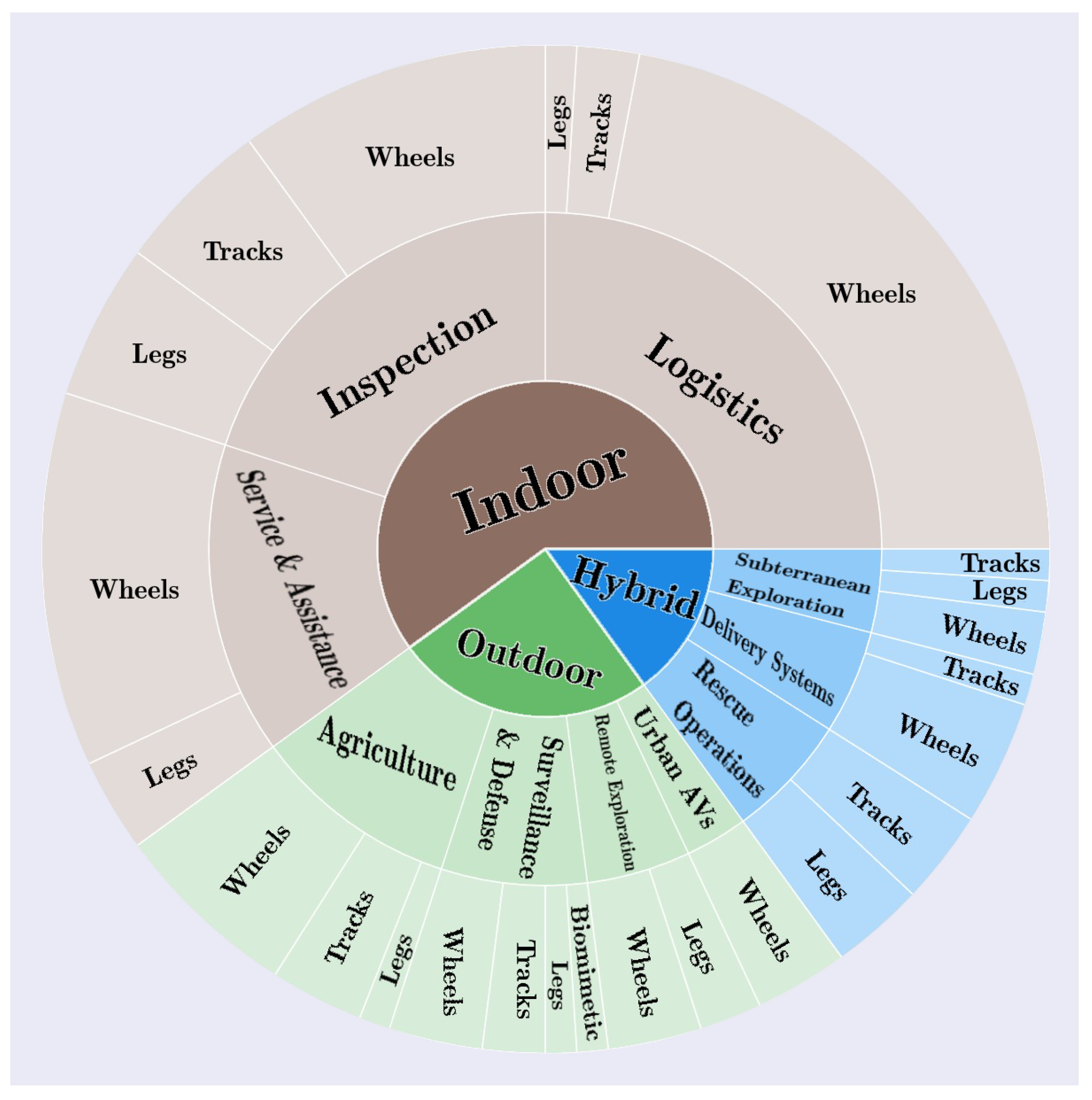

2.2. Application Domains

2.3. Autonomy Metrics

- Human-Robot Collaboration: Beer et al. [37] propose the LORA (Levels of Robot Autonomy) scale, which maps autonomy onto the classic SENSE-PLAN-ACT cycle and distinguishes supervisory from full autonomy. Gervasi et al. [38] extend this approach by integrating adaptivity, training, and decision authority into a multidimensional framework that assesses collaborative fluency.

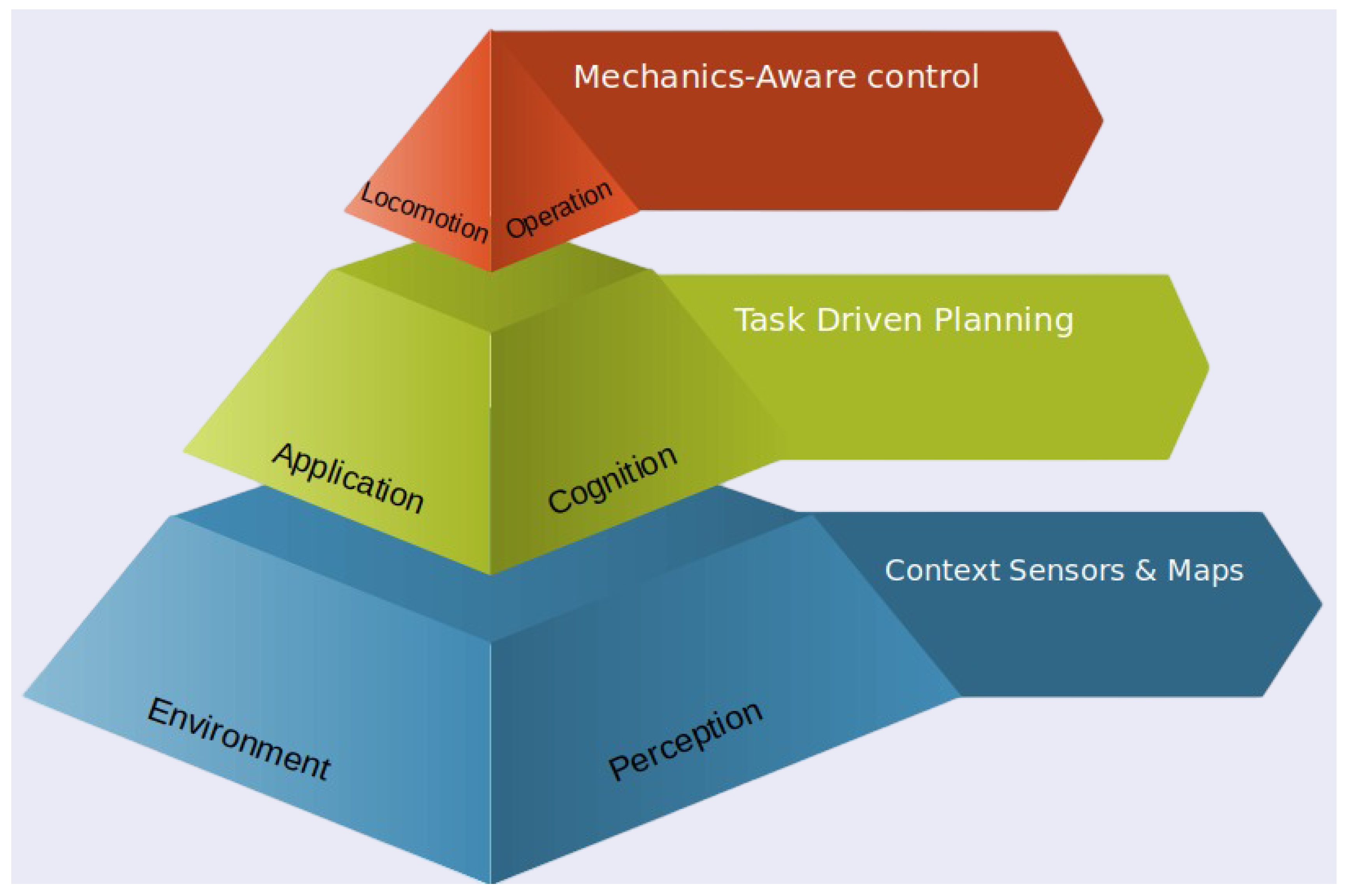

2.4. Synthesis: Taxonomy-Implementation Nexus

- Locomotion choice influences sensor layout and physical capabilities.

- Application context defines mapping, localization, and mission profiles.

- Autonomy level impacts planning architecture and the required adaptability.

3. Methodology for Structured Literature Screening

4. Bibliographic Review and Classification of Mobile Robots

- Indoor: highly structured and controlled;

- Hybrid: partially structured, partially unstructured;

- Outdoor: largely unstructured and open.

4.1. Indoor Environments

4.1.1. Industrial Inspection

4.1.2. Logistics

4.1.3. Service & Assistance

4.2. Outdoor Environments

4.2.1. Agriculture

4.2.2. Surveillance & Defense

4.2.3. Remote Exploration

4.2.4. Urban Autonomous Vehicles

4.3. Hybrid Environments

4.3.1. Rescue Operations

4.3.2. Delivery Systems

4.3.3. Subterranean Exploration

4.4. Locomotion and Upper Layers Overview

4.5. Robotics Frameworks

4.5.1. Domain-Specific Frameworks

4.5.2. Application-Oriented Frameworks (Highly Specific)

4.5.3. Generalist Frameworks

4.5.4. Abstract and Complex Frameworks

4.5.5. Ambiguous or Intricate Representations

4.5.6. Benchmarking-Focused Frameworks

4.5.7. Frameworks Focused on Specific Technical Aspects

Trajectory-Optimisation Toolkits

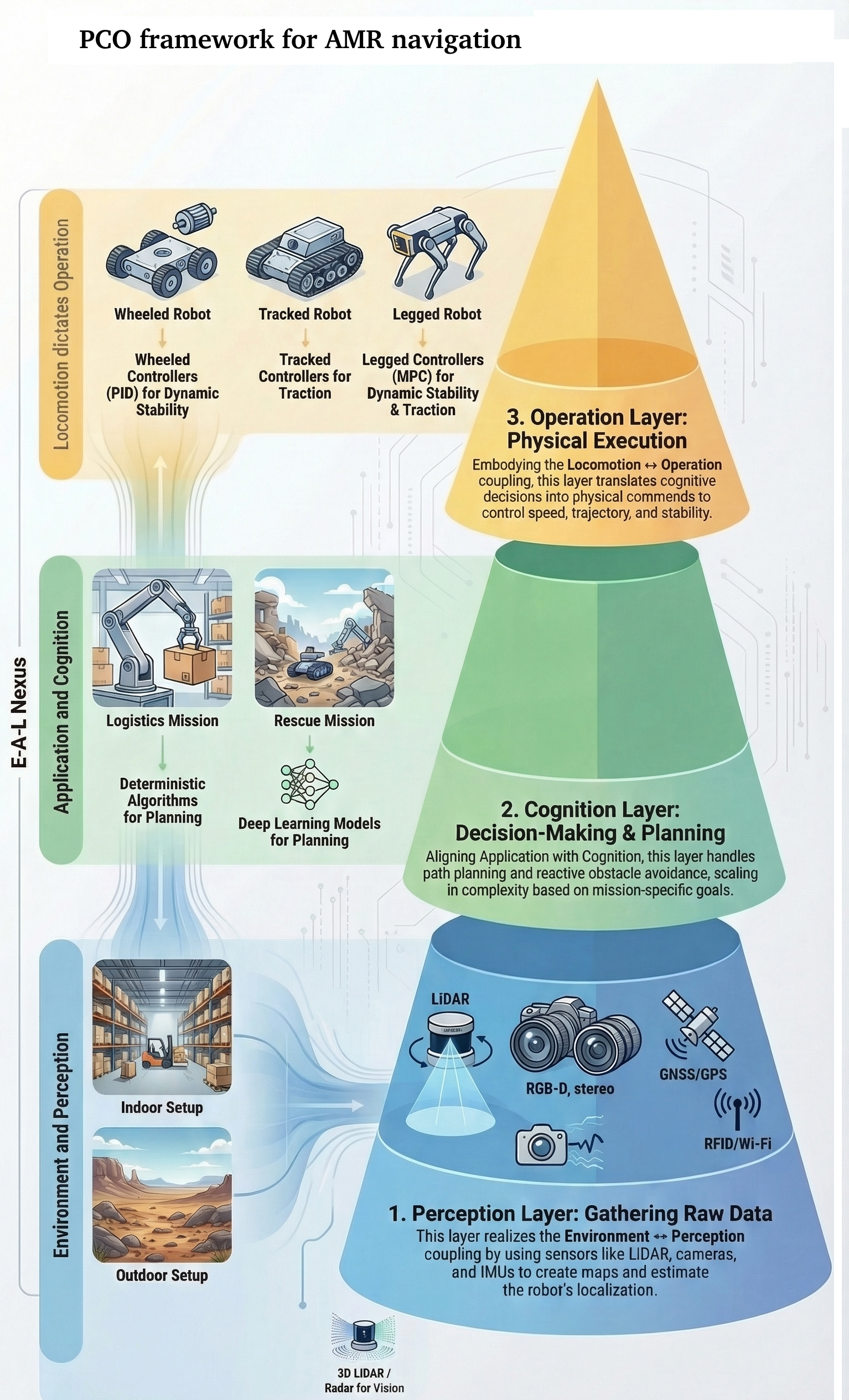

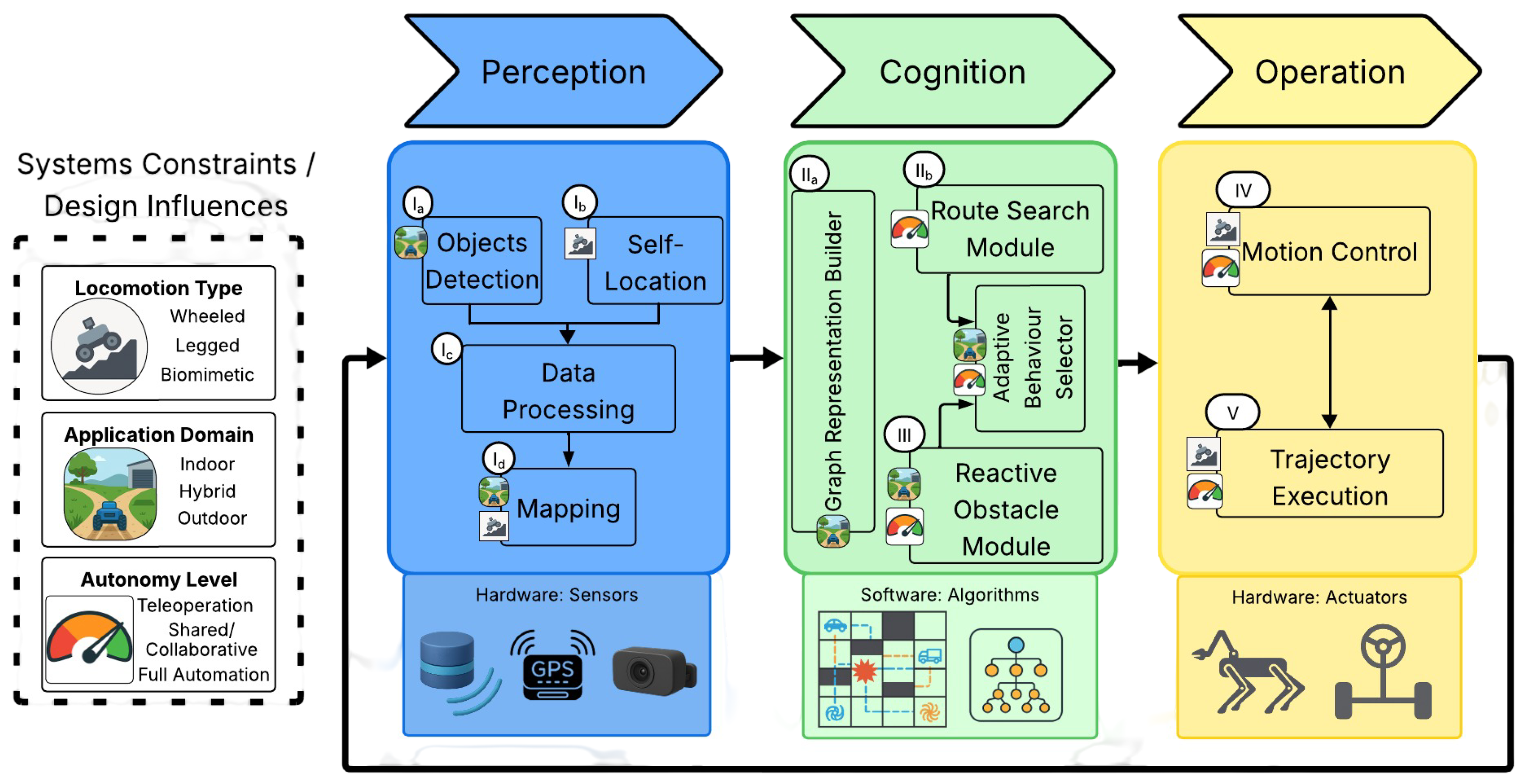

5. Proposed Unified Architecture (Framework)

5.1. Overview of the Three Layers

- Perception Layer (Phase I: Environment Perception, Self-Location, Data Processing)

- Cognition Layer (Phases II–III: Path Planning and Obstacle Avoidance)

- Operation Layer (Phases IV–V: Motion Control and Trajectory Execution).

- Perception ↔ Environment: Sensor selection and perception algorithms are dictated by environmental constraints (indoor/outdoor/hybrid).

- Cognition ↔ Application: Task complexity and autonomy requirements drive planning strategies.

- Operation ↔ Locomotion: Control mechanisms are tailored to the robot’s kinematic design (wheeled/legged/tracked).
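The layer boundaries listed above can be illustrated with a minimal Python sketch. All names here (`WorldModel`, `Command`, `pco_step`, and the three stand-in modules) are hypothetical and chosen only for illustration; they are not part of the proposed framework or of any existing library. The sketch assumes each layer exposes a single method and that data flows strictly from Perception to Cognition to Operation.

```python
import math
from dataclasses import dataclass, field
from typing import List, Protocol, Set, Tuple

Point = Tuple[float, float]


@dataclass
class WorldModel:
    """Perception output (Phase I): estimated pose and occupied grid cells."""
    pose: Point
    obstacles: Set[Tuple[int, int]] = field(default_factory=set)


@dataclass
class Command:
    """Operation output (Phases IV-V): a velocity set-point for the base."""
    linear: float
    angular: float


class Perception(Protocol):
    def sense(self) -> WorldModel: ...


class Cognition(Protocol):
    def plan(self, world: WorldModel, goal: Point) -> List[Point]: ...


class Operation(Protocol):
    def execute(self, world: WorldModel, path: List[Point]) -> Command: ...


class FixedPerception:
    """Stand-in perception module that always reports the same pose."""

    def __init__(self, pose: Point) -> None:
        self.pose = pose

    def sense(self) -> WorldModel:
        return WorldModel(pose=self.pose)


class StraightLinePlanner:
    """Stand-in planner: connects the current pose directly to the goal."""

    def plan(self, world: WorldModel, goal: Point) -> List[Point]:
        return [world.pose, goal]


class ProportionalController:
    """Stand-in controller: forward speed proportional to remaining distance."""

    def __init__(self, gain: float = 0.5) -> None:
        self.gain = gain

    def execute(self, world: WorldModel, path: List[Point]) -> Command:
        gx, gy = path[-1]
        x, y = world.pose
        return Command(linear=self.gain * math.hypot(gx - x, gy - y), angular=0.0)


def pco_step(perception: Perception, cognition: Cognition,
             operation: Operation, goal: Point) -> Command:
    """Run one Perception -> Cognition -> Operation cycle."""
    world = perception.sense()
    path = cognition.plan(world, goal)
    return operation.execute(world, path)


cmd = pco_step(FixedPerception((0.0, 0.0)), StraightLinePlanner(),
               ProportionalController(gain=0.5), goal=(3.0, 4.0))
print(cmd)  # Command(linear=2.5, angular=0.0)
```

Because `pco_step` depends only on the three protocols, any one stand-in could be exchanged (for example, a different planner) without touching the other two layers, which is the kind of decoupling the layer mapping is meant to convey.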

5.2. Perception Layer

- 1a. Environment Sensing: LiDAR, cameras (RGB-D, thermal), IMUs, radar, and other sensors gather raw data.

- 1b. Self-Localization: Pose estimation via GNSS, SLAM, or dead-reckoning odometry.

- 1c. Data Fusion and Filtering: Kalman/Bayesian filters and HDR algorithms reduce noise and extract features.

- 1d. Mapping: 2-D or 3-D grid representations built from fused data.
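As an illustration of the data fusion step, the following is a minimal sketch of a scalar Kalman filter that fuses a dead-reckoning displacement with an absolute position fix (for instance from GNSS). The one-dimensional state, the function name `kalman_step`, and the noise values in the usage loop are assumptions made for this example only; real systems typically estimate a multi-dimensional pose.

```python
def kalman_step(x, p, u, z, q, r):
    """One predict/update cycle of a scalar Kalman filter.

    x, p : prior position estimate and its variance
    u    : displacement reported by odometry since the last step
    z    : absolute position measurement
    q, r : process and measurement noise variances
    """
    # Predict: apply the odometry displacement and inflate the uncertainty.
    x_pred = x + u
    p_pred = p + q

    # Update: blend prediction and measurement using the Kalman gain.
    k = p_pred / (p_pred + r)
    x_new = x_pred + k * (z - x_pred)
    p_new = (1.0 - k) * p_pred
    return x_new, p_new


# Usage with made-up odometry steps and position fixes.
x, p = 0.0, 1.0
for u, z in [(1.0, 1.2), (1.0, 1.9), (1.0, 3.1)]:
    x, p = kalman_step(x, p, u, z, q=0.01, r=0.25)
    print(f"estimate={x:.3f} variance={p:.3f}")
```

The gain `k` moves toward 1 when the measurement is trusted more than the prediction (small `r`) and toward 0 in the opposite case, which is how the filter suppresses noise from either source.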

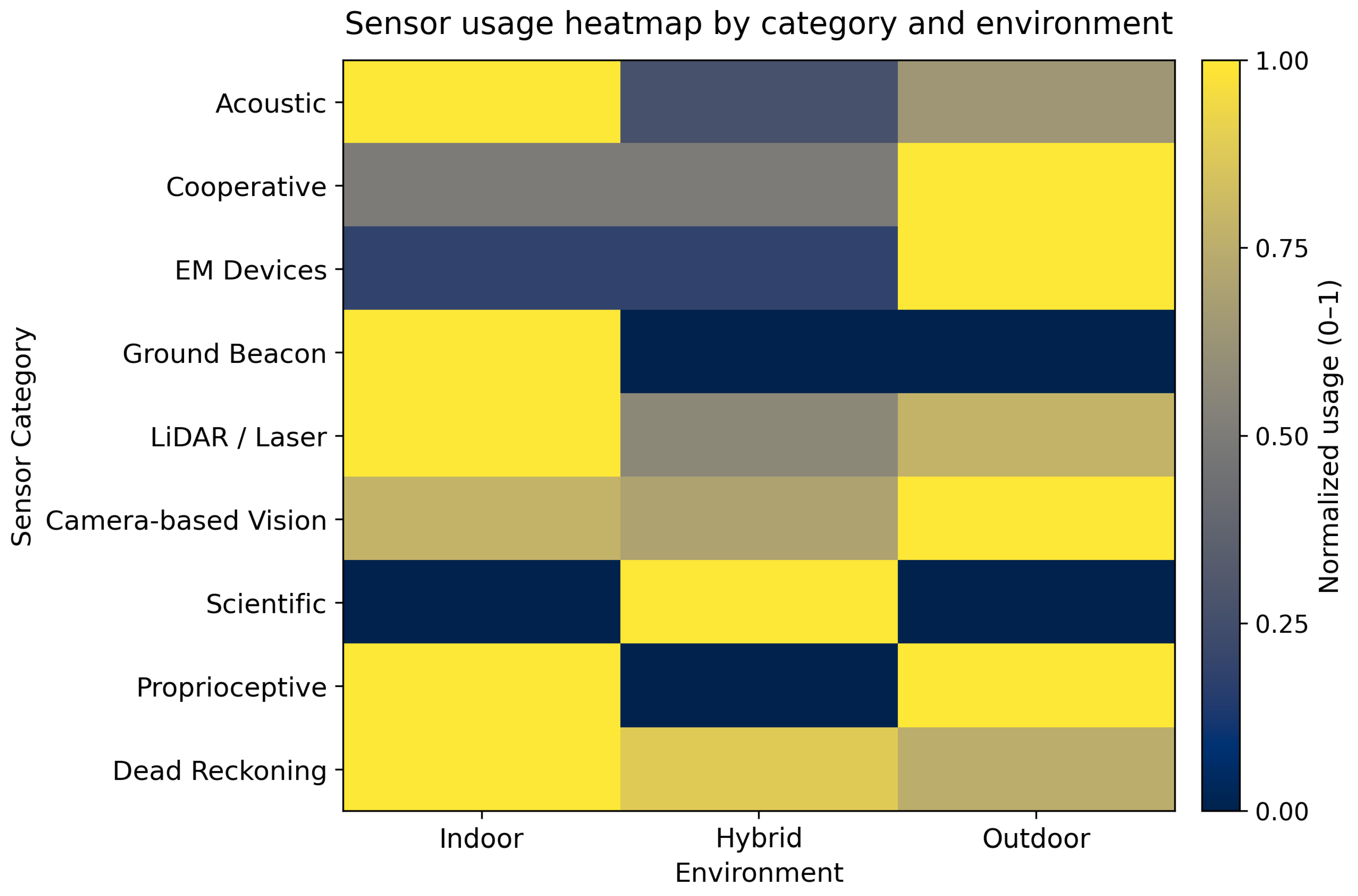

| Sensor (Category) | Indoor | Hybrid | Outdoor |

|---|---|---|---|

| General Sensor Overview (Survey) | [2,112] | [113] | |

| Exteroceptive Sensors | |||

| Acoustic-Based Sensors | |||

| Audio Sensor (Microphone, Speaker) | [57,114] | ||

| Ultrasonic Sensor | [47,49,115,116,117,118,119,120,121,122,123] | [80,124,125] | [42,57,64,126,127] |

| Cooperative Localization Devices | |||

| Automatic Dependent Surveillance-Broadcast (ADS-B), Zigbee, Wireless | [128] | [66] | |

| PetriNet Model | [129] | ||

| Wireless Router (V2V Communication) | [66] | ||

| Electromagnetic Waves Based Devices | |||

| Force Sensing Resistor (FSR) | [122] | ||

| Global Positioning System (GPS) and/or DGPS | [72,80,130] | [11,18,29,58,62,63,64,66,78,114,126,131,132,133,134,135,136,137,138,139,140,141,142,143] | |

| Ground Penetrating Radar (GPR) | [25] | [63,132,142] | |

| Joint Position Sensor | [122] | ||

| Pressure Sensor | [20] | ||

| Radar | [124,130,144] | [64,126,131,133,134,137,138,141] | |

| Ultra Wide Band (UWB) | [45] | [64] | |

| WiFi | [49,123,145] | ||

| Ground Beacon-based Locators | |||

| Radio Frequency Identification (RFID) | [43,49,52,146] | ||

| Optical and Laser-based Sensors | |||

| Distance Measurement Sensor | [25,27] | [61,74] | |

| Ground Sensors (Reflectivity) | [14] | ||

| Infrared Sensor / Thermal Camera | [49,123,147,148] | [71,73,129,149] | [57] |

| Laser Scanner (2D) | [4,49,120,128,150,151,152,153,154,155,156,157,158,159,160] | [71,77,79,125,161] | [18,29,66,126,131,134,136] |

| LiDAR (3D) | [25,27,159,160,162,163] | [72,130,149,164,165] | [6,29,64,114,131,134,141,142,166] |

| Optical Particle Counter (OPC) | [132] | ||

| Optical Velocity Sensor (Corsys) | [135] | ||

| Panospheric Camera (360 view) | [30,60] | ||

| Scientific Sensors | |||

| Toxic Gas Sensor, Aethalometer, Wind | [62,73,132] | ||

| Visual Sensors | |||

| Kinect, 3D Depth Camera | [4,19,50,151,157,163,167] | [73,125,165] | [5,20,168] |

| RGB Camera | [21,48,118,119,120,121,163,169,170,171] | [3,27,71,72,77,80,124,130,165,172,173,174] | [18,29,30,64,74,78,114,126,131,133,134,137,138,139,141,142,166,168,175,176] |

| UV Solar Based | [59] | ||

| Proprioceptive Sensors | |||

| Geo-referencing Systems | |||

| Current Sensor (Motor) | [135] | ||

| Haptic Sensor | [177] | [57] | |

| Force/Torque Sensor | [53] | ||

| Inertial & Attitude Sensors (INS & AHRS: IMU, Gyroscopes, Accelerometers, Compass, Magnetometers, Altimeter) | [2,19,27,53,121,122,123,145,170] | [20,72,130,144,145] | [5,6,11,18,29,42,57,58,62,64,78,127,131,133,134,136,139,140,176] |

| Self Localization Apparatus (for Dead Reckoning Estimation) | |||

| Encoders (Odometer, Encoder, Optical Encoder) | [19,53,118,119,123,151,156,178] | [27,61,72,129,172,173,174] | [29,58,66,127,139,176] |

5.3. Cognition Layer

- 1. Path Planning: Industrial robots often rely on deterministic methods for structured navigation, whereas agricultural and off-road robots more frequently employ sampling-based planners to cope with uneven terrain and partial observability. Thus, task complexity directly affects the balance between global and local planning granularity [179].

- 2.
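To make the deterministic side of this contrast concrete, here is a minimal sketch of A* search on a 4-connected occupancy grid, one common deterministic graph-search planner. The grid encoding (0 for free, 1 for occupied), unit step costs, and the function name `astar` are assumptions of this example rather than details taken from the reviewed works.

```python
import heapq


def astar(grid, start, goal):
    """Return a shortest 4-connected path as a list of (row, col), or None."""
    rows, cols = len(grid), len(grid[0])

    def heuristic(cell):
        # Manhattan distance is admissible for unit-cost 4-connected moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(heuristic(start), 0, start)]  # entries: (f, g, cell)
    g_cost = {start: 0}
    came_from = {}

    while open_heap:
        _, g, current = heapq.heappop(open_heap)
        if current == goal:
            # Walk the parent links back to the start.
            path = [current]
            while current in came_from:
                current = came_from[current]
                path.append(current)
            return path[::-1]
        if g > g_cost[current]:
            continue  # stale heap entry; a cheaper route was found already
        r, c = current
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                new_g = g + 1
                if new_g < g_cost.get(nxt, float("inf")):
                    g_cost[nxt] = new_g
                    came_from[nxt] = current
                    heapq.heappush(open_heap, (new_g + heuristic(nxt), new_g, nxt))
    return None


grid = [
    [0, 0, 0, 0],
    [1, 1, 1, 0],
    [0, 0, 0, 0],
]
print(astar(grid, (0, 0), (2, 0)))
```

On a fixed map the search returns the same path on every run, which suits structured settings; a sampling-based planner trades that repeatability for better scaling in large or poorly known spaces.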

5.4. Operation Layer

- Motion Control: translates planned trajectories into hardware commands. Wheeled and tracked systems employ PID or Model Predictive Control (MPC) for precise speed and steering regulation [65,139], while legged robots utilize whole-body controllers with gait adaptation for dynamic stability on rough terrain [54,56]. Machine learning approaches, particularly deep reinforcement learning, are increasingly deployed to adaptively regulate heading and steering in uncertain environments, enabling wheeled platforms to autonomously optimize traction on slippery surfaces [180] and legged systems to learn complex locomotion policies through high-dimensional continuous control [181].

- Trajectory Execution: is adapted to environmental constraints [10]. In structured environments, offline dead reckoning ensures repeatable paths [49]. In unstructured settings, real-time Model Predictive Path Integral (MPPI) handles slippage at high speeds [182]; for hazardous missions (e.g., subterranean exploration), episodic execution enables teleoperation switches when autonomy limits are exceeded [183].
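The PID option mentioned for wheeled and tracked platforms can be sketched as follows. This is a minimal discrete-time PID speed regulator driving an assumed first-order wheel-speed model; the class name `PID`, the helper `simulate_speed`, the gains, and the time constant `tau` are all illustrative choices, not values from the cited works.

```python
class PID:
    """Discrete PID controller with a fixed sample time dt (seconds)."""

    def __init__(self, kp, ki, kd, dt):
        self.kp, self.ki, self.kd, self.dt = kp, ki, kd, dt
        self.integral = 0.0
        self.prev_error = None

    def update(self, setpoint, measurement):
        error = setpoint - measurement
        self.integral += error * self.dt
        # No derivative term on the first call, when no previous error exists.
        if self.prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self.prev_error) / self.dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def simulate_speed(pid, target, steps, tau=0.5):
    """Drive an assumed first-order plant dv/dt = (u - v) / tau from rest."""
    v = 0.0
    for _ in range(steps):
        u = pid.update(target, v)
        v += pid.dt * (u - v) / tau  # forward-Euler integration of the plant
    return v


pid = PID(kp=2.0, ki=4.0, kd=0.0, dt=0.01)
final_speed = simulate_speed(pid, target=1.0, steps=1000)
print(f"speed after 10 s: {final_speed:.4f} m/s")
```

The integral term is what removes the steady-state error here; an MPC controller would instead optimise the command over a prediction horizon, at a higher computational cost.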

5.5. Advantages of the Unified Framework

- Clear separation of sensor/data processing tasks (Perception) from algorithmic decision-making (Cognition) and real-world execution (Operation).

- Better interoperability: modules in each layer can be replaced or upgraded (e.g. switching from A* to RRT*, or from PID to MPC) without overhauling the entire system.

- Easier mapping to different autonomy levels, since each layer can support more or fewer features as required.

6. Sensors and Algorithms for Terrestrial Robots

6.1. Phase I: Environment Perception, Self-Location, and Data Processing

(a) Object Detection

| Method | Indoor | Hybrid | Outdoor |

|---|---|---|---|

| LiDAR disparity / gap extraction | [161] | ||

| Camera-based Detection | |||

| Boundary Extraction | [158] | ||

| Canny Edge Detection | [174] | ||

| CNN-Based Multi-Object | [162] | [149] | |

| Color Marker-based Recognition | [119] | ||

| Edge Based Terrain Classification | [131] | ||

| Faster R-CNN | [133] | ||

| Haar Cascade Classifier | [174] | ||

| Hough Transform (Lane Detection) | [72] | [29,176] | |

| Image Processing and Enhancement | [112] | ||

| Online Boosting and Haar-like Features | [151] | ||

| Point Cloud–based Detection | [133] | ||

| Single Shot Detectors (YOLO/SSD) | [30,133,166] | ||

| SVM-based Mobility Hazard | [135] | ||

(b) Sensor Fusion & Data Processing

| Filter (Category) | Indoor | Hybrid | Outdoor |

|---|---|---|---|

| General Sensor Fusion Overview | [2] | ||

| Probabilistic Fusion Filters | |||

| Extended Kalman Filter (EKF) | [120,121,150,151,157] | [130] | [64,131] |

| Kalman Filter | [121,145] | [72] | [29,64] |

| Particle Filter (PF) | [115,121] | [1] | [64,131] |

| Unscented Kalman Filter (UKF) | [1] | ||

| Other Fusion Methods | |||

| Information Matrix Fusion | [126] | ||

| Iterative Closest Point (ICP) | [164] | ||

| Multi-Level Sensor Fusion | [166] | ||

| SVD + ICP | [158] | ||

| Track-to-Track Fusion (T2TF) | [126] | ||

| Vector Auto-Regressive (VAR) Prediction | [185] | ||

| Vision-Based Fusion Filters | |||

| Bayesian-based Filters | [121,146,186] | [64] | |

| Complementary Filter State Estimator | [53,171] | ||

| Dempster–Shafer | [121] | [64] | |

| Extended H∞ Filter (EHF) | [187] | ||

| Gaussian-based Filters | [188] | ||

| High Dynamic Range (HDR) | [175] | ||

| Deep Learning–based Fusion Filters | |||

| CNN-based Sensor Fusion | [180] | ||

| Hierarchical NN Fusion | [123] | ||

| LSTM-based Predictive Fusion | [189] | ||

| Optimization-based State Estimation | |||

| Genetic Algorithm (GA) | [170] | ||

(a) Self-Localization

| Method | Indoor | Hybrid | Outdoor |

|---|---|---|---|

| Visual Place Recognition: survey | [191] | ||

| Ant-inspired PI-Full mode | [59] | ||

| Evidence-Grids Continuous | [192] | ||

| Kalman Filter-based Localization | [131] | ||

| Landmark Localization | [112] | ||

| Markov Localization | [120] | ||

| Monte Carlo Localization (MCL) | [120,156] | [131] | |

| NDT-based LiDAR Localization | [187] | ||

| RFID-based Localization | [43] | ||

| SLAM-based Localization | [25,115,157,160] | [140,142] | |

| Vehicle-to-Vehicle (V2V) | [64] | ||

(b) Mapping

| Method | Indoor | Hybrid | Outdoor |

|---|---|---|---|

| C-SLAMMODT: Cooperative Factor-Graph SLAM | [193] | ||

| Centralized Map Builder | [162] | ||

| Color-Depth Map | [151] | ||

| Continuous Metric Mapping | [146] | ||

| Elevation Map | [19] | [20] | [194] |

| Feature Map-Based Framework | [133] | ||

| Local Perceptual Space (LPS) | [118] | ||

| Merging Occupancy Grid | [158] | [66] | |

| NDT-based LiDAR Mapping | [187] | ||

| Occupancy Grid Mapping | [120,150,152,169,195] | [30,112] | |

| Octomap (3D Mapping) | [159] | ||

| Uncertainty Map | [160] | ||

| SLAM-based Mapping (e.g. Gmapping) | [163] | [112] | [18,196] |

| Stereo ORB-SLAM2 | [168] | ||

| Voxel Grid based | [4] | [131,166] | |

6.2. Phase IIa: Path Planning: Graph Construction

| Method | Indoor | Hybrid | Outdoor |

|---|---|---|---|

| General Path Planning Overview (Survey) | [113] | ||

| Classical, Heuristic and Meta-heuristic Planners (Survey) | [102] | ||

| Genetic Algorithms | |||

| Bioinspired based | [59] | ||

| Chaotic + Co-evolutionary GA (Swarm robots) | [197] | [197] | |

| Genetic Algorithm for Map Merging Optimization | [66] | ||

| Particle Swarm Optimization (PSO) | [124] | ||

| Graph Search Maps | |||

| 3D Delaunay Triangulation | [168] | ||

| Boundary Planning | [126] | ||

| Breadth-First Search (BFS) | [10] | [113] | |

| Convex Feasible Region Mapping | [21] | ||

| Depth-First Search (DFS) | [10] | ||

| Exact Cell Decomposition | [124,179] | ||

| Free-space Volume Extraction | [168] | ||

| Grid-based Path Planning | [195] | [20] | [11,58,132] |

| Lattice based Graph | [152] | [42,137,198] | |

| Probabilistic Roadmaps | [179] | ||

| Rapidly Exploring Random Tree (RRT) based | [27] | [136] | |

| Uncertainty Frontier Map (UM) | [160] | ||

| Visibility Graphs | [179] | ||

| Voronoi Diagram | [116] | [124,147,179] | |

| Other Methods of Map Building | |||

| 3D Segmentation | [19,50] | ||

| Digital Map | [126] | ||

| Elastic Bubble Band | [199] | [92] | [200] |

| Elevation Map based | [60,194] | ||

| Fast Marching Tree (FMT*) | [3] | ||

| Gaussian Mixture Model (GMM) | [131] | ||

| Hierarchical Finite State Machine (HFSM) | [138] | ||

| Hybrid Walking Pattern Generator | [53] | ||

| Lane Marking Based Mapping | [176] | ||

| NF1 Algorithm | [154] | ||

| Probabilistic Roadmap (PRM) | [3] | [5] | |

| Support Vector Machine (SVM) | [135] | ||

| SuperVoxel Graph | [5,6] | ||

| Uncertainty Map (UM) | [160] | ||

| Potential Field Maps | |||

| Artificial Potential Field | [147,179] | ||

6.3. Phase IIb: Path Planning: Graph Search Algorithms

| Method | Indoor | Hybrid | Outdoor |

|---|---|---|---|

| General Path Planning Overview (Survey) | [113] | ||

| Derived Algorithms from the previous graph search methods | |||

| Bacterial Potential Field | [44] | ||

| High Autonomous Driving (HAD) Algorithms | [138] | ||

| Multi-criteria Path Fusion Planner | [60] | ||

| Particle Swarm Optimization (PSO) | [141] | ||

| Potential Field based Algorithms | [186] | ||

| Deterministic Graph Search | |||

| A* based Algorithms | [4,19,25,50,116,152,195,201] | [3,20,92,112,173] | [5,42,58,131,137,141,176] |

| D* based Algorithms | [120,150,156,202] | [3] | [5,126] |

| Dijkstra-based Algorithms | [185] | [112,201] | [141] |

| GPS based Coverage Approach | [132,143] | ||

| Greedy and Heuristic Quadratic Programming (GH-QP) | [21] | ||

| Smac Planner | [173] | ||

| State Lattice Search | [92,173] | [198] | |

| Utility-Based Decision Making | [138] | ||

| Genetic/Evolutionary Based Algorithms | |||

| Firefly Algorithm (FA) | [129] | ||

| Genetic Algorithm (GA) | [76] | [141] | |

| Randomized Graph Search | |||

| OMPL and SBO Planners | [109] | [203] | |

| Probabilistic Roadmap | [141] | ||

| Rapidly Exploring Random Tree (RRT) based | [45,160] | [3] | [136,141] |

| Spider Monkey Optimization (ISMO) | [122] | ||

| Wall Follow & Random Walk | [47] | ||

Selecting the “Best” Planner Is a Mission–Specific Trade-Off

6.4. Phase III: Obstacle Avoidance and Trap Landscapes

| STRATEGIES CASs | Indoor | Hybrid | Outdoor |

|---|---|---|---|

| Anti-target Approach Laws | |||

| Cone’s Geometry-based Calculated Rule | [124] | ||

| Piecewise Continuous Bezier Curves | [136] | ||

| Visibility Constraints-based Space Carving | [168] | ||

| Genetic based Algorithms | |||

| Artificial Neural Networks | [112] | ||

| BeeClust Algorithm | [112] | ||

| Biological Approach (incl. Cockroach-Inspired Neural Escape Circuit) | [127] | ||

| Evolutionary Behavior based on Genetic Programming | [147,170] | ||

| Geometrical Methods | |||

| Collision Cone | [144] | ||

| 3-Spline | [151] | ||

| Foot Collision Check | [19,195] | [20] | |

| GPS-Based Path Correction | [132] | ||

| GH-QP (Greedy and Heuristic QP in Convex Regions) | [21] | ||

| Hybrid Regression Analysis-ISMO | [122] | ||

| Markov Random Fields (MRF) | [131] | ||

| Occupancy Likelihood-Based Merging | [66] | ||

| SuperVoxel-based Cost Model | [5] | ||

| Traditional Algorithms | |||

| Boundary Following (i.e. walls) | [112,147] | ||

| Bug Algorithms | [205,206,207] | [147] | [141] |

| Curvature Velocity Method (CVM) | [208] | ||

| DWA + Elastic Band | [154] | [141] | |

| Dynamic Window Approach (DWA) | [4,117] | [124] | [137,209] |

| Elastic Band Concept | [4,25] | ||

| Follow the Gap (FTG) | [161] | ||

| Machine Learning based | [72,79,210] | [29] | |

| Nearness Diagram | [155,156] | [125] | |

| Reactive Methods | [147] | ||

| Vector Field Histogram (VFH) based algorithms | [211] | [124,147,212] | [18] |

| Virtual Force Field (VFF) Methods | |||

| Costmap Segmentation based | [30] | ||

| Dynamic Cost Map Refinement | [131] | ||

| ML based Obstacle Detection via Haar Cascade Classifier | [174] | ||

| Potential Field (Gradient Based) Methods | [1,116,118,170,186,213] | [3] | [11,42,141] |

6.5. Phase IV: Motion Control and Robot Relocation

| Controllers | Indoor | Hybrid | Outdoor |

|---|---|---|---|

| Behavior Based Controllers | |||

| Fuzzy Logic | [21,43,151,167,174] | [112,214] | |

| Motion Generator / Shape Corrector | [125] | ||

| Rotation Shim | [92] | ||

| FTG Heuristic (model–free) | [161] | ||

| Control-Theory Based Controllers | |||

| DCC, ACC, LCGA | [138] | ||

| Robust/Optimal State-Feedback (LQR / / LMI) | [171,215,216] | ||

| Active / Optimised Disturbance-Rejection | [217] | [61] | |

| Solar-Adaptive Speed | [132] | ||

| Hybrid Controllers | |||

| Image-Segmentation Path-Following | [172] | ||

| MPPI | [218] | [92] | [139] |

| Linear Controllers | |||

| Lane Detection + Sliding Mode | [147] | ||

| Lateral & Longitudinal PID | [126] | ||

| Preview LQR | [176] | ||

| Whole-Body QP | [53] | ||

| PID (Pose / Velocity) | [20,27,112,164] | [18,42,58,135] | |

| Machine Learning | |||

| CNN | [133] | ||

| MobileNet | [166] | ||

| Neural-Network (generic) | [151] | [127] | |

| Reinforcement Learning | [20] | ||

| Nonlinear Controllers | |||

| Bio-Inspired | [116] | ||

| Dining Philosopher | [122] | ||

| Exact Feedback Linearisation (FBL / Backstepping) | [219,220] | ||

| Gradient-Based Speed & Steering | [118] | ||

| iLQR | [108,221] | ||

| Loop-Closure Pose Optimisation | [168] | ||

| Lyapunov-Based | [170] | ||

| Sliding-Mode Family (SMC / VG-NTSMC) | [222,223] | ||

| MPC | [185] | [3,72,147,182] | [5,6,11,18,131,137] |

| MSaDE-Static Force Opt. | [52] | ||

| Nonlinear Optimal SDRE | [213] | ||

| Optimized Sail Assistance | [62] | ||

| Passivity-Based Formation / Tracking | [224] | ||

| Pure Pursuit | [218,225] | [92] | [11,18,114,226] |

| Rate / Nonlinear Pos. Mapping | [177] | ||

| SC Impedance (SCIC) | [53] | ||

| State Lattice Policy | [198] | ||

| Time Elastic Band | [4,218] | ||

6.6. Phase V: Trajectory Execution

| Method/Algorithm | Indoor | Hybrid | Outdoor |

|---|---|---|---|

| Episodic Planning (Deferred Execution) | [21,119,154] | [14] | [63] |

| Hybrid Mode Switching (Autonomous-Manual Transitions) | [227] | [172] | [18,78,138] |

| Integrated Planning and Execution (Continuous Replanning) | [4,19,25,116,118,152,169,170,213] | [20,72,182] | [5,11,44,66,132,141,168,176] |

| Offline Trajectory Execution (Predefined Paths or Teleoperation) | [164,177] | [74] | [42,135,200] |

| Real-Time Reactive Trajectory Execution (Local Adjustments) | [53,171,195] | [3,49,122,125,182] | [30,126,127,131,137,166,209] |

Complementary Surveys by PCO Phase

| Layer : Phase (module) | Survey References |

|---|---|

| Perception : Detection & Self-Localization (Ia+Ib) | [2,228] |

| Perception : Mapping & SLAM (Id) | [229] |

| Perception : Sensor Fusion & Data Processing (Ic) | [121,230,231,232] |

| Cognition : Graph Representation Builder (IIa) | [233] |

| Cognition : Route Search Module (IIb) | [3,102,113,124,234,235] |

| Cognition : Obstacle Avoidance – Reactive Module (IIc) | [236] |

| Cognition : Decision-Making (III) (Adaptive Behaviour Selector) | [237,238] |

| Operation : Motion Control (IV) | [239,240] |

| Operation : Trajectory Execution (V) | [241] |

| Perception–Cognition : Prediction (Ic→II) | [242] |

| Perception–Operation : Sensor–Control Integration (I→IV) | [243] |

| Cognition–Operation : Task & Motion Planning (II→IV) | [244] |

| Perception–Cognition–Operation : End-to-end DL navigation | [245] |

6.7. Summary of Phases vs. Layers

7. Discussion and Comparison

- 1.

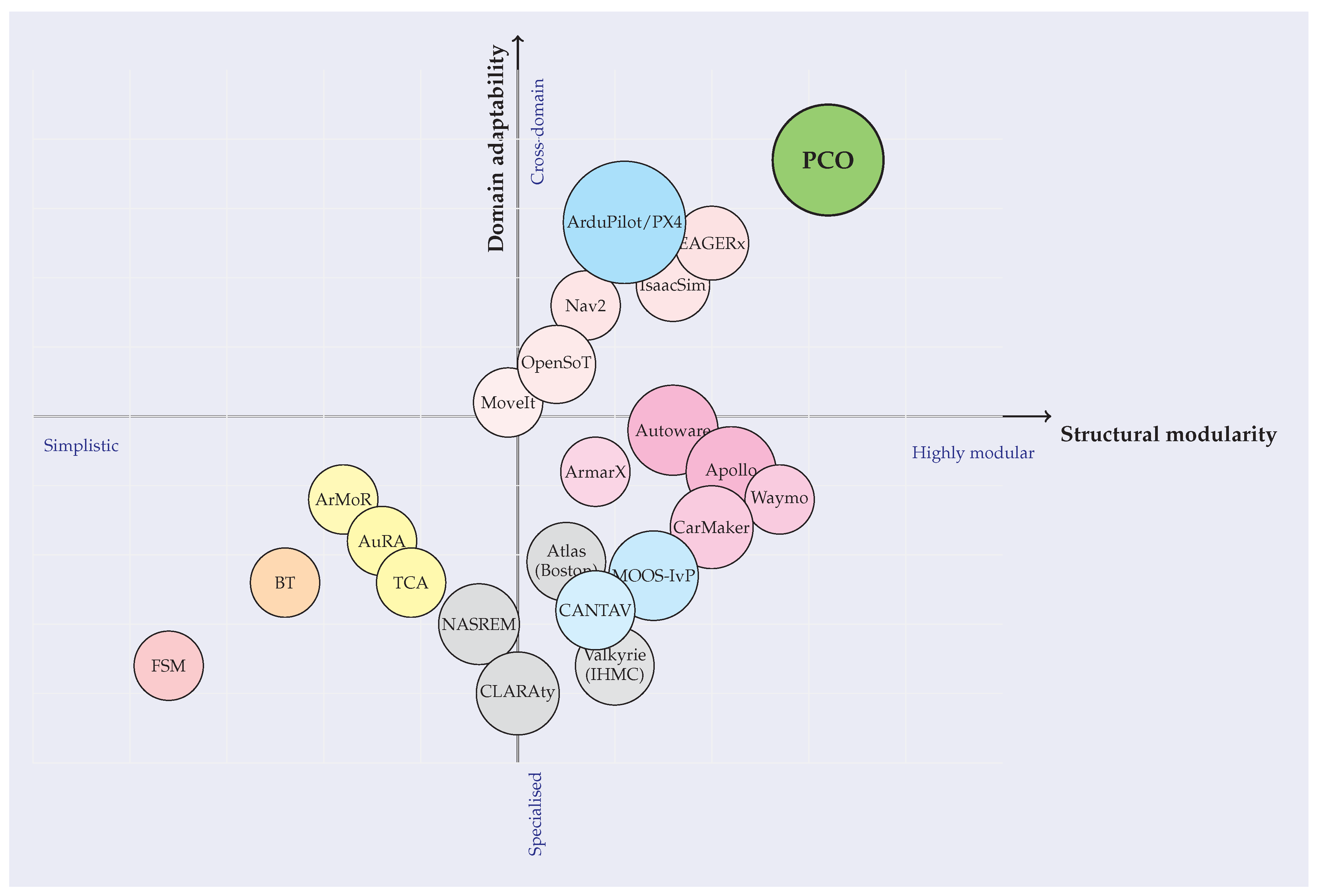

- Benchmarking insight: Table 10 details key architectural properties of representative frameworks - ranging from minimalist finite-state machines to large-scale autonomous-driving stacks - thereby clarifying the design space in which PCO operates.

- 2.

- Conceptual mapping: Figure 5 provides a visual taxonomy based on level of abstraction and domain adaptability. By anchoring each quadrant with well-known examples (e.g., Autoware, Apollo, Nav2), the plot helps researchers infer where unreviewed or future frameworks might fall and, importantly, underscores PCO’s role as a high-level reference design intended to guide forthcoming functional implementations.

| Criteria | Decision patterns (FSM, BT) | Academic / conceptual (ArMoR, AuRA, TCA, NASREM, CLARAty) | Generalist / Control SDKs (MoveIt, Nav2, Isaac, EAGERx, OpenSoT) | Domain stacks (Autoware, Apollo, Waymo, CarMaker, ArmarX) | Cross-domain pilots (ArduPilot/PX4, MOOS-IvP) | Proposed PCO |

|---|---|---|---|---|---|---|

| General architecture | State graph / tree | Layered or hybrid concepts | Plugin-based ROS / GPU SDK | Large multi-module monolith | Real-time autopilot core | Three orthogonal layers |

| Structural modularity | Low | Moderate | High | High | High | High |

| Domain adaptability | Low–Moderate | Low | High | Low | Moderate–High | High |

| Scalability | Poor–Good | Moderate | High | High | High | High |

| Ease of reuse / config. | Limited | Low (concept only) | High (launch + plugins) | Moderate (heavy setup) | Moderate (parameter files) | High (clear APIs) |

| Typical scope | Toy demos, game AI | Research prototypes, rovers | Arms, AMRs, factories | L4/L5 road vehicles | UAVs, AUVs, UGVs | Reference design for multiple domains |

8. Emerging Trends and Future Directions

8.1. Trends in Autonomous Robotics

8.1.1. Electronics-Free Robots

8.1.2. Multi-modal Locomotion and Task Adaptability

8.1.3. Collaborative Mapping and Localization

8.1.4. Scientific Sensor Payloads

8.1.5. Learning-Enabled Navigation

8.2. Energy Optimization and Sustainability

8.3. Challenges in Autonomous Robotics

8.3.1. Decision-Making and Ethical Considerations

8.3.2. Autonomous Recovery from Failures

8.3.3. Adaptive Algorithm Switching for Diverse Operational Conditions

8.4. Discussion of Results

9. Conclusion

9.1. Limitations and Future Work

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Khatib, O. Real-time obstacle avoidance for manipulators and mobile robots. In Autonomous robot vehicles; Springer, 1986; pp. 396–404.

- Panigrahi, P.K.; Bisoy, S.K. Localization strategies for autonomous mobile robots: A review. J. King Saud Univ., Comp. & Info. Sci. 2022, 34, 6019–6039. [CrossRef]

- Sánchez-Ibáñez, J.R.; Pérez-del Pulgar, C.J.; García-Cerezo, A. Path planning for autonomous mobile robots: A review. Sensors 2021, 21, 7898. [CrossRef]

- Macenski, S.; Martin, F.; White, R.; Ginés Clavero, J. The Marathon 2: A Navigation System. In Proceedings of the 2020 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2020.

- Atas, F.; Grimstad, L.; Cielniak, G. Evaluation of sampling-based optimizing planners for outdoor robot navigation. arXiv preprint arXiv:2103.13666 2021.

- Atas, F.; Cielniak, G.; Grimstad, L. Navigating in 3D Uneven Environments through Supervoxels and Nonlinear MPC. In Proceedings of the 2023 ECMR. IEEE, 2023, pp. 1–8.

- Jalal, F.; Nasir, F. Underwater navigation, localization and path planning for autonomous vehicles: A review. In Proceedings of the 2021 International Bhurban Conference on Applied Sciences and Technologies (IBCAST). IEEE, 2021, pp. 817–828.

- Yang, J.; Huo, J.; Xi, M.; He, J.; Li, Z.; Song, H.H. A time-saving path planning scheme for autonomous underwater vehicles with complex underwater conditions. IEEE Internet of Things Journal 2022, 10, 1001–1013. [CrossRef]

- Yim, M.; Shen, W.M.; Salemi, B.; Rus, D.; Moll, M.; Lipson, H.; Klavins, E.; Chirikjian, G.S. Modular self-reconfigurable robot systems [grand challenges of robotics]. IEEE Robotics & Automation Magazine 2007, 14, 43–52. [CrossRef]

- Siegwart, R.; Nourbakhsh, I.R.; Scaramuzza, D.; Arkin, R.C. Introduction to autonomous mobile robots; 2011.

- Hajjaj, S.S.H.; Sahari, K.S.M. Bringing ROS to agriculture automation: hardware abstraction of agriculture machinery. Int. J. Appl. Eng. Res. 2017, 12, 311–316.

- Siciliano, B. Springer Handbook of Robotics. Springer-Verlag 2008, 2, 15–35.

- Jahn, U.; Heß, D.; Stampa, M.; Sutorma, A.; Röhrig, C.; Schulz, P.; Wolff, C. A taxonomy for mobile robots: Types, applications, capabilities, implementations, requirements, and challenges. Robotics 2020, 9, 109. [CrossRef]

- Ben-Ari, M.; Mondada, F. Robots and their applications. Elements of robotics 2018, pp. 1–20.

- Bach, S.H.; Khoi, P.B.; Yi, S.Y. Global UWB system: A high-accuracy mobile robot localization system with tightly coupled integration. IEEE Internet of Things Journal 2024, 11, 16618–16626. [CrossRef]

- Aydınocak, E.U. Robotics systems and healthcare logistics. In Health 4.0 and medical supply chain; Springer, 2023; pp. 79–96.

- Edlinger, R.; Föls, C.; Nüchter, A. An innovative pick-up and transport robot system for casualty evacuation. In Proceedings of the 2022 IEEE International Symposium on Safety, Security, and Rescue Robotics (SSRR). IEEE, 2022, pp. 67–73.

- Sanaullah, M.; Akhtaruzzaman, M.; Hossain, M.A. Land-robot technologies: The integration of cognitive systems in military and defense. NDC E-JOURNAL 2022, 2, 123–156.

- Kim, J.H.; Shin, Y.H.; Jeong, H.; Oh, J.H.; Park, H.W. Real time humanoid footstep planning with foot angle difference consideration for cost-to-go heuristic. In Proceedings of the 2023 20th International Conference on Ubiquitous Robots (UR). IEEE, 2023, pp. 92–99.

- Wang, S.; Piao, S.; Leng, X.; He, Z. Learning 3D bipedal walking with planned footsteps and Fourier series periodic gait planning. Sensors 2023, 23, 1873. [CrossRef]

- Gao, Z.; Chen, X.; Yu, Z.; Li, C.; Han, L.; Zhang, R. Global footstep planning with greedy and heuristic optimization guided by velocity for biped robot. Expert Systems with Applications 2024, 238, 121798. [CrossRef]

- Garcia, E.; Jimenez, M.A.; De Santos, P.G.; Armada, M. The evolution of robotics research. IEEE Robotics & Automation Magazine 2007, 14, 90–103.

- Sun, H.; Wei, C.; Yao, Y.a.; Wu, J. Analysis and Experiment of a Bioinspired Multimode Octopod Robot. Chinese Journal of Mechanical Engineering 2023, 36, 142. [CrossRef]

- Santos, H.F.; Perondi, E.A.; Wentz, A.V.; da Silva Junior, A.L.; Barone, D.A.; Galassi, M.; de Castro, B.B.; dos Reis, N.R.; Basso, E.D.; Pereira Pinto, H.L.; et al. Annelida, a Robot for Removing Hydrate and Paraffin Plugs in Offshore Flexible Lines: Development and Experimental Trials. SPE Production & Operations 2020, 35, 641–653. [CrossRef]

- Eldemiry, A.; Muddassir, M.; Zayed, T. Autonomous Data Acquisition of Ground Penetrating Radar (GPR) Using LiDAR-based Mobile Robot. In Proceedings of the International Symposium on Automation and Robotics in Construction (ISARC). IAARC Publications, 2024, Vol. 41, pp. 206–212.

- Sostero, M. Automation and robots in services: review of data and taxonomy 2020.

- Bui, H.D.; Nguyen, S.; Billah, U.H.; Le, C.; Tavakkoli, A.; La, H.M. Control framework for a hybrid-steel bridge inspection robot. In Proceedings of the 2020 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). IEEE, 2020, pp. 2585–2591.

- Redhead, F.; Snow, S.; Vyas, D.; Bawden, O.; Russell, R.; Perez, T.; Brereton, M. Bringing the farmer perspective to agricultural robots. In Proceedings of the 33rd Annual ACM Conference Extended Abstracts on Human Factors in Computing Systems, 2015, pp. 1067–1072.

- Li, M.; Imou, K.; Wakabayashi, K.; Yokoyama, S. Review of research on agricultural vehicle autonomous guidance. International Journal of Agricultural and biological engineering 2009, 2, 1–16.

- de Avila Lopes, R.; de Carvalho, M.V.L.; Kitani, E.; de Assis Zampirolli, F.; Yoshioka, L.; Junior, L.A.C.; Ibusuki, U. Deep Learning-Based Instance Segmentation for Enhanced Navigation of Agricultural Vehicles. Revista de Informática Teórica e Aplicada 2025, 32, 136–142. [CrossRef]

- Tranzatto, M.; Miki, T.; Dharmadhikari, M.; Bernreiter, L.; Kulkarni, M.; Mascarich, F.; Andersson, O.; Khattak, S.; Hutter, M.; Siegwart, R.; et al. CERBERUS in the DARPA Subterranean Challenge. Science Robotics 2022, 7, eabp9742. [CrossRef]

- Kono, H.; Isayama, S.; Koshiji, F.; Watanabe, K.; Suzuki, H. Automatic Flipper Control for Crawler Type Rescue Robot using Reinforcement Learning. International Journal of Advanced Computer Science & Applications 2024, 15. [CrossRef]

- Huang, H.M.; Pavek, K.; Novak, B.; Albus, J.; Messina, E. A framework for autonomy levels for unmanned systems (ALFUS). Proceedings of the AUVSI’s Unmanned Systems North America 2005, pp. 849–863.

- Meakin, M. Quantifying Turing: a systems approach to quantitatively assessing the degree of autonomy of any system. Journal of Unmanned Vehicle Systems 2021, 9, 219–233. [CrossRef]

- Pittman, J.M. A Measure for Level of Autonomy Based on Observable System Behavior. arXiv preprint arXiv:2407.14975 2024.

- Hwang, G.J.; Katre, A.; Hart, K.M.; Rea, C.A. Analysis techniques of autonomy framework metrics for autonomous developers. In Proceedings of the Autonomous Systems: Sensors, Processing and Security for Ground, Air, Sea and Space Vehicles and Infrastructure 2022. SPIE, 2022, Vol. 12115, pp. 124–136.

- Beer, J.; Fisk, A.; Rogers, W. Toward a Framework for Levels of Robot Autonomy in Human–Robot Interaction. J. of Human–Robot Interaction 2014, 3. [CrossRef]

- Gervasi, R.; Mastrogiacomo, L.; Franceschini, F. A conceptual framework to evaluate human-robot collaboration. The International Journal of Advanced Manufacturing Technology 2020, 108, 841–865. [CrossRef]

- International Federation of Robotics. World Robotics 2021. https://ifr.org/, 2021. Accessed: 2 Mar. 2025.

- Bruzzone, L.; Quaglia, G. Locomotion systems for ground mobile robots in unstructured environments. Mechanical sciences 2012, 3, 49–62. [CrossRef]

- Karelics Oy. Karelics Radar Inspection Robot. https://karelics.fi/radar-inspections/. Accessed: 2 Mar. 2025.

- Dissanayake, M. Development of a Chain Climbing Robot and an Automated Ultrasound Inspection System for Mooring Chain Integrity Assessment. PhD thesis, London South Bank University, 2018.

- Gueaieb, W.; Miah, M.S. An intelligent mobile robot navigation technique using RFID technology. IEEE Transactions on Instrumentation and Measurement 2008, 57, 1908–1917. [CrossRef]

- Montiel, O.; Orozco-Rosas, U.; Sepúlveda, R. Path planning for mobile robots using Bacterial Potential Field for avoiding static and dynamic obstacles. Expert Systems with Applications 2015, 42, 5177–5191. [CrossRef]

- Shen, K.; Li, C.; Xu, D.; Wu, W.; Wan, H. Sensor-network-based navigation of delivery robot for baggage handling in international airport. International Journal of Advanced Robotic Systems 2020, 17, 1729881420944734. [CrossRef]

- Kasurinen, M. Mobile Robots in Indoor Logistics, 2017.

- Ong, R.; Azir, K.K. Low cost autonomous robot cleaner using mapping algorithm based on internet of things (IoT). In Proceedings of the IOP Conference Series: Materials Science and Engineering. IOP Publishing, 2020, Vol. 767, p. 012071.

- Yamaguchi, U.; Saito, F.; Ikeda, K.; Yamamoto, T. HSR, human support robot as research and development platform. In Proceedings of the Abstracts of the International Conference on Advanced Mechatronics: Toward Evolutionary Fusion of IT and Mechatronics: ICAM 2015.6. The Japan Society of Mechanical Engineers, 2015, pp. 39–40. [CrossRef]

- Bloss, R. Mobile hospital robots cure numerous logistic needs. Industrial Robot: An International Journal 2011, 38, 567–571. [CrossRef]

- Karkowski, P.; Oßwald, S.; Bennewitz, M. Real-time footstep planning in 3D environments. In Proceedings of the 2016 IEEE-RAS 16th International Conference on Humanoid Robots (Humanoids). IEEE, 2016, pp. 69–74.

- Karkowski, P.; Bennewitz, M. Prediction maps for real-time 3d footstep planning in dynamic environments. In Proceedings of the 2019 International Conference on Robotics and Automation (ICRA). IEEE, 2019, pp. 2517–2523.

- Pierezan, J.; Freire, R.Z.; Weihmann, L.; Reynoso-Meza, G.; dos Santos Coelho, L. Static force capability optimization of humanoids robots based on modified self-adaptive differential evolution. Computers & Operations Research 2017, 84, 205–215. [CrossRef]

- Ruscelli, F.; Rossini, L.; Hoffman, E.M.; Baccelliere, L.; Laurenzi, A.; Muratore, L.; Antonucci, D.; Cordasco, S.; Tsagarakis, N.G. Design and Control of the Humanoid Robot COMAN+: Hardware Capabilities and Software Implementations. IEEE Robotics & Automation Magazine 2024. [CrossRef]

- Khorshidi, S.; Dawood, M.; Nederkorn, B.; Bennewitz, M.; Khadiv, M. Physically-Consistent Parameter Identification of Robots in Contact. arXiv preprint arXiv:2409.09850 2024.

- Bayer, J. Autonomous Exploration of Unknown Rough Terrain with Hexapod Walking Robot. In Proceedings of the Conference on Intelligent Robots and Systems (IROS), 2016, pp. 2859–2866.

- Azayev, T.; Zimmerman, K. Blind hexapod locomotion in complex terrain with gait adaptation using deep reinforcement learning and classification. Journal of Intelligent & Robotic Systems 2020, 99, 659–671. [CrossRef]

- Ghute, M.S.; Kamble, K.P.; Korde, M. Design of military surveillance robot. In Proceedings of the 2018 First International Conference on Secure Cyber Computing and Communication (ICSCCC). IEEE, 2018, pp. 270–272.

- He, Y.; Chen, C.; Bu, C.; Han, J. A polar rover for large-scale scientific surveys: design, implementation and field test results. International Journal of Advanced Robotic Systems 2015, 12, 145. [CrossRef]

- Dupeyroux, J.; Serres, J.R.; Viollet, S. AntBot: A six-legged walking robot able to home like desert ants in outdoor environments. Science Robotics 2019, 4, eaau0307. [CrossRef]

- Wettergreen, D.; Bapna, D.; Maimone, M.; Thomas, G. Developing Nomad for robotic exploration of the Atacama Desert. Robotics and Autonomous Systems 1999, 26, 127–148. [CrossRef]

- Silva, A.F.B. Modelagem de Sistemas Robóticos Móveis para Controle de Tração em Terrenos Acidentados. PhD thesis, M. Sc. Thesis, Mech. Eng. Dept., Pontifical Catholic University of Rio de …, 2007.

- Luo, Y.; Liu, G.; Guo, L.; Zhu, Y.; Zhao, J. Scalable Wing Sailing and Snowboarding Enhance Efficient and Energy-Saving Mobility of Polar Robot. IEEE/ASME Transactions on Mechatronics 2024. [CrossRef]

- Lever, J.H.; Delaney, A.J.; Ray, L.E.; Trautmann, E.; Barna, L.A.; Burzynski, A.M. Autonomous GPR surveys using the polar rover Yeti. Journal of Field Robotics 2013, 30, 194–215. [CrossRef]

- Kuutti, S.; Fallah, S.; Katsaros, K.; Dianati, M.; Mccullough, F.; Mouzakitis, A. A survey of the state-of-the-art localization techniques and their potentials for autonomous vehicle applications. IEEE Internet of Things Journal 2018, 5, 829–846. [CrossRef]

- Kuwata, Y.; Teo, J.; Fiore, G.; Karaman, S.; Frazzoli, E.; How, J.P. Real-time motion planning with applications to autonomous urban driving. IEEE Transactions on Control Systems Technology 2009, 17, 1105–1118. [CrossRef]

- Li, H.; Tsukada, M.; Nashashibi, F.; Parent, M. Multivehicle cooperative local mapping: A methodology based on occupancy grid map merging. IEEE Transactions on Intelligent Transportation Systems 2014, 15, 2089–2100. [CrossRef]

- Yang, S.; Wang, W.; Liu, C.; Deng, W. Scene Understanding in Deep Learning-Based End-to-End Controllers for Autonomous Vehicles. IEEE Transactions on Systems, Man, and Cybernetics: Systems 2019, 49, 53–63. [CrossRef]

- Liu, G.H.; Lin, H.Y.; Lin, H.Y.; Chen, S.T.; Lin, P.C. A bio-inspired hopping kangaroo robot with an active tail. Journal of Bionic Engineering 2014, 11, 541–555. [CrossRef]

- Yoshimura, S.; Suzuki, T.; Bando, M.; Yuzaki, S.; Kawaharazuka, K.; Okada, K.; Inaba, M. Design method of a Kangaroo robot with high power legs and an articulated soft tail. In Proceedings of the 2023 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). IEEE, 2023, pp. 6631–6638.

- Grzelczyk, D.; Awrejcewicz, J. Dynamics, stability analysis and control of a mammal-like octopod robot driven by different central pattern generators. Journal of Computational Applied Mechanics 2019, 50, 76–89.

- Murphy, R.R. Trial by fire [rescue robots]. IEEE Robotics & Automation Magazine 2004, 11, 50–61.

- Delmerico, J.; Mintchev, S.; Giusti, A.; Gromov, B.; Melo, K.; Horvat, T.; Cadena, C.; Hutter, M.; Ijspeert, A.; Floreano, D.; et al. The current state and future outlook of rescue robotics. Journal of Field Robotics 2019, 36, 1171–1191. [CrossRef]

- Reddy, A.H.; Kalyan, B.; Murthy, C.S. Mine rescue robot system–a review. Procedia Earth and Planetary Science 2015, 11, 457–462. [CrossRef]

- Matsuno, F.; Tadokoro, S. Rescue robots and systems in Japan. In Proceedings of the 2004 IEEE International Conference on Robotics and Biomimetics. IEEE, 2004, pp. 12–20.

- Navarro, A.S.; Monteiro, C.M.; Cardeira, C.B. A mobile robot vending machine for beaches based on consumers’ preferences and multivariate methods. Procedia-Social and Behavioral Sciences 2015, 175, 122–129. [CrossRef]

- Ko, Y.K.; Park, J.H.; Ko, Y.D. A development of optimal algorithm for integrated operation of UGVs and UAVs for goods delivery at tourist destinations. Applied Sciences 2022, 12, 10396.

- Shiomi, M.; Kanda, T.; Glas, D.F.; Satake, S.; Ishiguro, H.; Hagita, N. Field trial of networked social robots in a shopping mall. In Proceedings of the 2009 IEEE/RSJ International Conference on Intelligent Robots and Systems. IEEE, 2009, pp. 2846–2853.

- Hoffmann, T.; Prause, G. On the regulatory framework for last-mile delivery robots. Machines 2018, 6, 33. [CrossRef]

- Wei, C.; Li, Y.; Ouyang, Y.; Ji, Z. Deep reinforcement learning with heuristic corrections for UGV navigation. Journal of Intelligent & Robotic Systems 2023, 109, 18. [CrossRef]

- Srinivas, S.; Ramachandiran, S.; Rajendran, S. Autonomous robot-driven deliveries: A review of recent developments and future directions. Transportation Research Part E: Logistics and Transportation Review 2022, 165, 102834. [CrossRef]

- Chung, T.H.; Orekhov, V.; Maio, A. Into the robotic depths: Analysis and insights from the DARPA Subterranean Challenge. Annual Review of Control, Robotics, and Autonomous Systems 2023, 6, 477–502. [CrossRef]

- Raja, G.; Raja, K.; Kanagarathinam, M.R.; Needhidevan, J.; Vasudevan, P. Advanced Decision Making and Motion Planning Framework for Autonomous Navigation in Unsignalized Intersections. IEEE Access 2024. [CrossRef]

- Fan, H.; Zhu, F.; Liu, C.; Zhang, L.; Zhuang, L.; Li, D.; Zhu, W.; Hu, J.; Li, H.; Kong, Q. Baidu Apollo EM Motion Planner. arXiv e-prints 2018, pp. arXiv–1807.

- Würsching, G.; Mascetta, T.; Lin, Y.; Althoff, M. Simplifying Sim-to-Real Transfer in Autonomous Driving: Coupling Autoware with the CommonRoad Motion Planning Framework. In Proceedings of the 2024 IEEE Intelligent Vehicles Symposium (IV). IEEE, 2024, pp. 1462–1469.

- Kato, S.; Tokunaga, S.; Maruyama, Y.; Maeda, S.; Hirabayashi, M.; Kitsukawa, Y.; Monrroy, A.; Ando, T.; Fujii, Y.; Azumi, T. Autoware on board: Enabling autonomous vehicles with embedded systems. In Proceedings of the 2018 ACM/IEEE 9th International Conference on Cyber-Physical Systems (ICCPS). IEEE, 2018, pp. 287–296.

- Liu, W.; Wan, G.; Liu, J.; Cong, D. Path Planning for Lunar Rovers in Dynamic Environments: An Autonomous Navigation Framework Enhanced by Digital Twin-Based A*-D3QN. Aerospace 2025, 12, 517. [CrossRef]

- Nesnas, I.A.; Simmons, R.; Gaines, D.; Kunz, C.; Diaz-Calderon, A.; Estlin, T.; Madison, R.; Guineau, J.; McHenry, M.; Shu, I.H.; et al. CLARAty: Challenges and steps toward reusable robotic software. International Journal of Advanced Robotic Systems 2006, 3, 5. [CrossRef]

- Benjamin, M.R.; Schmidt, H.; Newman, P.M.; Leonard, J.J. Nested autonomy for unmanned marine vehicles with MOOS-IvP. Journal of Field Robotics 2010, 27, 834–875. [CrossRef]

- Asfour, T.; Waechter, M.; Kaul, L.; Rader, S.; Weiner, P.; Ottenhaus, S.; Grimm, R.; Zhou, Y.; Grotz, M.; Paus, F. Armar-6: A high-performance humanoid for human-robot collaboration in real-world scenarios. IEEE Robotics & Automation Magazine 2019, 26, 108–121. [CrossRef]

- Youakim, D.; Ridao, P.; Palomeras, N.; Spadafora, F.; Ribas, D.; Muzzupappa, M. MoveIt!: autonomous underwater free-floating manipulation. IEEE Robotics & Automation Magazine 2017, 24, 41–51. [CrossRef]

- Gonzalez, A.G.; Alves, M.V.; Viana, G.S.; Carvalho, L.K.; Basilio, J.C. Supervisory control-based navigation architecture: a new framework for autonomous robots in industry 4.0 environments. IEEE Transactions on Industrial Informatics 2017, 14, 1732–1743. [CrossRef]

- Macenski, S.; Moore, T.; Lu, D.V.; Merzlyakov, A.; Ferguson, M. From the desks of ROS maintainers: A survey of modern & capable mobile robotics algorithms in the robot operating system 2. Robotics and Autonomous Systems 2023, 168, 104493. [CrossRef]

- Alam, M.S.; Gullu, A.I.; Gunes, A. Fiducial Markers and Particle Filter Based Localization and Navigation Framework for an Autonomous Mobile Robot. SN Computer Science 2024, 5, 748. [CrossRef]

- Sandeep, P.; Yerragudi, V.; Gangadhar, N. CANTAV: A Cloud Centric Framework for Navigation and Control of Autonomous Road Vehicles. In Proceedings of the 2017 IEEE International Conference on Cloud Computing in Emerging Markets (CCEM). IEEE, 2017, pp. 99–106.

- Muñoz-Bañón, M.Á.; del Pino, I.; Candelas, F.A.; Torres, F. Framework for fast experimental testing of autonomous navigation algorithms. Applied Sciences 2019, 9, 1997. [CrossRef]

- Goodwin, J.R.; Winfield, A. A unified design framework for mobile robot systems. PhD thesis, University of the West of England, Bristol, 2008.

- Kainova, T.D. Overview of the accelerated platform for robotics and artificial intelligence NVIDIA Isaac. In Proceedings of the 2023 Seminar on Information Computing and Processing (ICP). IEEE, 2023, pp. 89–93.

- Saadat, N.; Sharif, M.M.M. Application framework for forest surveillance and data acquisition using unmanned aerial vehicle system. In Proceedings of the 2017 International Conference on Engineering Technology and Technopreneurship (ICE2T). IEEE, 2017, pp. 1–6.

- van der Heijden, B.; Luijkx, J.; Ferranti, L.; Kober, J.; Babuska, R. Engine Agnostic Graph Environments for Robotics (EAGERx): A Graph-Based Framework for Sim2real Robot Learning. IEEE Robotics & Automation Magazine 2024. [CrossRef]

- Orebäck, A. A component framework for autonomous mobile robots. PhD thesis, Numerisk analys och datalogi, 2004.

- Alami, R.; Chatila, R.; Fleury, S.; Ghallab, M.; Ingrand, F. An architecture for autonomy. The International Journal of Robotics Research 1998, 17, 315–337. [CrossRef]

- Ugwoke, K.C.; Nnanna, N.A.; Abdullahi, S.E.Y. Simulation-based review of classical, heuristic, and metaheuristic path planning algorithms. Scientific Reports 2025, 15, 12643.

- Nalic, D.; Pandurevic, A.; Eichberger, A.; Rogic, B. Design and implementation of a co-simulation framework for testing of automated driving systems. Sustainability 2020, 12, 10476. [CrossRef]

- Wang, X.; Qi, X.; Wang, P.; Yang, J. Decision making framework for autonomous vehicles driving behavior in complex scenarios via hierarchical state machine. Autonomous Intelligent Systems 2021, 1, 1–12. [CrossRef]

- Godin, A. A simple architecture for modular robots.

- Axelsson, M.; Oliveira, R.; Racca, M.; Kyrki, V. Social robot co-design canvases: A participatory design framework. ACM Transactions on Human-Robot Interaction (THRI) 2021, 11, 1–39. [CrossRef]

- Hoffman, E.M.; Laurenzi, A.; Tsagarakis, N.G. The open stack of tasks library: Opensot: A software dedicated to hierarchical whole-body control of robots subject to constraints. IEEE Robotics & Automation Magazine 2024. [CrossRef]

- Ruscelli, F.; Laurenzi, A.; Tsagarakis, N.G.; Mingo Hoffman, E. Horizon: A trajectory optimization framework for robotic systems. Frontiers in Robotics and AI 2022, 9, 899025. [CrossRef]

- Sucan, I.A.; Moll, M.; Kavraki, L.E. The open motion planning library. IEEE Robotics & Automation Magazine 2012, 19, 72–82. [CrossRef]

- Kunchev, V.; Jain, L.; Ivancevic, V.; Finn, A. Path planning and obstacle avoidance for autonomous mobile robots: A review. In Proceedings of the International Conference on Knowledge-Based and Intelligent Information and Engineering Systems. Springer, 2006, pp. 537–544.

- Pham, H.; Smolka, S.A.; Stoller, S.D.; Phan, D.; Yang, J. A survey on unmanned aerial vehicle collision avoidance systems. arXiv preprint arXiv:1508.07723 2015.

- Ben-Ari, M.; Mondada, F. Elements of robotics; Springer Nature, 2017.

- Carvalho, M.V.L.d.; et al. A review of ROS based autonomous driving platforms to carry out automated driving functions 2022.

- Giribet, J.; Mas, I.; Roca, A.; Marzik, G.; Torre, G.; Castro, C.R.G. Base de Datos para Conduccion Autonoma. Sensores y Sincronizacion. Apertura (f stop), 1, 1–8.

- Yang, P. Efficient particle filter algorithm for ultrasonic sensor-based 2D range-only simultaneous localisation and mapping application. IET Wireless Sensor Systems 2012, 2, 394–401. [CrossRef]

- Sgorbissa, A.; Zaccaria, R. Planning and obstacle avoidance in mobile robotics. Robotics and Autonomous Systems 2012, 60, 628–638. [CrossRef]

- Fox, D.; Burgard, W.; Thrun, S. The dynamic window approach to collision avoidance. IEEE Robotics & Automation Magazine 1997, 4, 23–33. [CrossRef]

- Konolige, K. A gradient method for realtime robot control. In Proceedings of the 2000 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2000) (Cat. No. 00CH37113). IEEE, 2000, Vol. 1, pp. 639–646.

- Nourbakhsh, I.R.; Bobenage, J.; Grange, S.; Lutz, R.; Meyer, R.; Soto, A. An affective mobile robot educator with a full-time job. Artificial Intelligence 1999, 114, 95–124. [CrossRef]

- Cechinel, A.K.; et al. Desenvolvimento de um sistema de logística para um robô móvel hospitalar utilizando mapas de grade 2018.

- Alatise, M.B.; Hancke, G.P. A review on challenges of autonomous mobile robot and sensor fusion methods. IEEE Access 2020, 8, 39830–39846. [CrossRef]

- Kashyap, A.K.; Parhi, D.R. Multi-objective trajectory planning of humanoid robot using hybrid controller for multi-target problem in complex terrain. Expert Systems with Applications 2021, 179, 115110. [CrossRef]

- Magrin, C.E.; Todt, E. Multi-sensor fusion method based on artificial neural network for mobile robot self-localization. In Proceedings of the 2019 Latin American Robotics Symposium (LARS), 2019 Brazilian Symposium on Robotics (SBR) and 2019 Workshop on Robotics in Education (WRE). IEEE, 2019, pp. 138–143.

- Raja, P.; Pugazhenthi, S. Optimal path planning of mobile robots: A review. International Journal of Physical Sciences 2012, 7, 1314–1320.

- Minguez, J.; Montano, L.; Khatib, O. Reactive collision avoidance for navigation with dynamic constraints. In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems. IEEE, 2002, Vol. 1, pp. 588–594.

- Aeberhard, M.; Rauch, S.; Bahram, M.; Tanzmeister, G.; Thomas, J.; Pilat, Y.; Homm, F.; Huber, W.; Kaempchen, N. Experience, results and lessons learned from automated driving on Germany’s highways. IEEE Intelligent Transportation Systems Magazine 2015, 7, 42–57. [CrossRef]

- Chen, C.T.; Quinn, R.D.; Ritzmann, R.E. A crash avoidance system based upon the cockroach escape response circuit. In Proceedings of the International Conference on Robotics and Automation. IEEE, 1997, Vol. 3, pp. 2007–2012.

- Li, H.; Savkin, A.V. An algorithm for safe navigation of mobile robots by a sensor network in dynamic cluttered industrial environments. Robotics and Computer-Integrated Manufacturing 2018, 54, 65–82. [CrossRef]

- Patle, B.; Pandey, A.; Jagadeesh, A.; Parhi, D. Path planning in uncertain environment by using firefly algorithm. Defence Technology 2018, 14, 691–701. [CrossRef]

- Mar, M.; Chellapandi, V.P.; Yuan, L.; Wang, Z.; Dietz, E. Advanced Sensor Configurations for High-Speed Autonomous Racing Vehicles. IEEE Journal of Selected Areas in Sensors 2025. [CrossRef]

- Buehler, M.; Iagnemma, K.; Singh, S. The 2005 DARPA grand challenge: the great robot race; Vol. 36, Springer Science & Business Media, 2007.

- Ray, L.; Adolph, A.; Morlock, A.; Walker, B.; Albert, M.; Lever, J.H.; Dibb, J. Autonomous rover for polar science support and remote sensing. In Proceedings of the 2014 IEEE Geoscience and Remote Sensing Symposium. IEEE, 2014, pp. 4101–4104.

- Yan, Z.; Li, J.; Wu, Y.; Zhang, G. A Real-Time Path Planning Algorithm for AUV in Unknown Underwater Environment Based on Combining PSO and Waypoint Guidance. Sensors 2019, 19, 20. [CrossRef]

- Noh, S. Decision-Making Framework for Autonomous Driving at Road Intersections: Safeguarding Against Collision, Overly Conservative Behavior, and Violation Vehicles. IEEE Transactions on Industrial Electronics 2019, 66, 3275–3286. [CrossRef]

- Trautmann, E.; Ray, L.; Lever, J. Development of an autonomous robot for ground penetrating radar surveys of polar ice. In Proceedings of the 2009 IEEE/RSJ International Conference on Intelligent Robots and Systems. IEEE, 2009, pp. 1685–1690.

- Yoon, S.; Lee, D.; Jung, J.; Shim, D.H. Spline-based RRT Using Piecewise Continuous Collision-checking Algorithm for Car-like Vehicles. Journal of Intelligent & Robotic Systems 2018, 90, 537–549. [CrossRef]

- Ferguson, D.; Howard, T.M.; Likhachev, M. Motion planning in urban environments: Part ii. In Proceedings of the 2008 IEEE/RSJ International Conference on Intelligent Robots and Systems. IEEE, 2008, pp. 1070–1076.

- Bahram, M.; Ghandeharioun, Z.; Zahn, P.; Baur, M.; Huber, W.; Busch, F. Microscopic traffic simulation based evaluation of highly automated driving on highways. In Proceedings of the 17th International IEEE Conference on Intelligent Transportation Systems (ITSC). IEEE, 2014, pp. 1752–1757.

- Williams, G.; Drews, P.; Goldfain, B.; Rehg, J.M.; Theodorou, E.A. Aggressive driving with model predictive path integral control. In Proceedings of the 2016 IEEE International Conference on Robotics and Automation (ICRA). IEEE, 2016, pp. 1433–1440.

- Bresson, G.; Alsayed, Z.; Yu, L.; Glaser, S. Simultaneous localization and mapping: A survey of current trends in autonomous driving. IEEE Transactions on Intelligent Vehicles 2017, 2, 194–220. [CrossRef]

- Wang, N.; Li, X.; Zhang, K.; Wang, J.; Xie, D. A survey on path planning for autonomous ground vehicles in unstructured environments. Machines 2024, 12, 31. [CrossRef]

- Lee, K.; Lin, W.H.; Javed, T.; Madhusudhan, S.; Sher, B.; Feng, C. Roofus: Learning-based Robotic Moisture Mapping on Flat Rooftops with Ground Penetrating Radar. In Proceedings of the 2024 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). IEEE, 2024, pp. 11773–11780.

- Rossmadl, A.; Gandorfer, M.; Kopfinger, S.; Busboom, A. Autonomous robotics in agriculture–a preliminary techno-economic evaluation of a mechanical weeding system. In Proceedings of the ISR Europe 2023; 56th International Symposium on Robotics. VDE, 2023, pp. 405–411.

- Chakravarthy, A.; Ghose, D. Obstacle avoidance in a dynamic environment: A collision cone approach. IEEE Transactions on Systems, Man, and Cybernetics-Part A: Systems and Humans 1998, 28, 562–574. [CrossRef]

- Venkatnarayan, R.H.; Shahzad, M. Enhancing indoor inertial odometry with wifi. Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies 2019, 3, 1–27. [CrossRef]

- Zhang, J.; Lyu, Y.; Patton, J.; Periaswamy, S.C.; Roppel, T. BFVP: A probabilistic UHF RFID tag localization algorithm using Bayesian filter and a variable power RFID model. IEEE Transactions on Industrial Electronics 2018, 65, 8250–8259. [CrossRef]

- Hoy, M.; Matveev, A.S.; Savkin, A.V. Algorithms for collision-free navigation of mobile robots in complex cluttered environments: a survey. Robotica 2015, 33, 463–497. [CrossRef]

- Almasri, M.M.; Alajlan, A.M.; Elleithy, K.M. Trajectory planning and collision avoidance algorithm for mobile robotics system. IEEE Sensors Journal 2016, 16, 5021–5028. [CrossRef]

- Rodríguez, D.A.; Tafur, C.L.; Daza, P.F.M.; Vidales, J.A.V.; Rincón, J.C.D. Inspection of aircrafts and airports using UAS: A review. Results in Engineering 2024, p. 102330. [CrossRef]

- Iturrate, I.; Antelis, J.M.; Kubler, A.; Minguez, J. A noninvasive brain-actuated wheelchair based on a P300 neurophysiological protocol and automated navigation. IEEE Transactions on Robotics 2009, 25, 614–627. [CrossRef]

- Xiao, H.; Li, Z.; Yang, C.; Yuan, W.; Wang, L. RGB-D sensor-based visual target detection and tracking for an intelligent wheelchair robot in indoors environments. International Journal of Control, Automation and Systems 2015, 13, 521–529. [CrossRef]

- Rufli, M.; Ferguson, D.; Siegwart, R. Smooth path planning in constrained environments. In Proceedings of the 2009 IEEE International Conference on Robotics and Automation. IEEE, 2009, pp. 3780–3785.

- Schlegel, C. Fast local obstacle avoidance under kinematic and dynamic constraints for a mobile robot. In Proceedings of the 1998 IEEE/RSJ International Conference on Intelligent Robots and Systems: Innovations in Theory, Practice and Applications (Cat. No. 98CH36190). IEEE, 1998, Vol. 1, pp. 594–599.

- Philippsen, R.; Siegwart, R. Smooth and efficient obstacle avoidance for a tour guide robot. In Proceedings of the 2003 IEEE International Conference on Robotics and Automation, September 14-19, 2003, The Grand Hotel, Taipei, Taiwan. IEEE Operations Center, 2003, Vol. 1, pp. 446–451.

- Minguez, J.; Montano, L. Nearness diagram (ND) navigation: collision avoidance in troublesome scenarios. IEEE Transactions on Robotics and Automation 2004, 20, 45–59. [CrossRef]

- Cechinel, A.K.; Perez, A.L.F.; Plentz, P.D.; De Pieri, E.R. Autonomous mobile robot using distance and priority as logistics task cost. In Proceedings of the IECON 2020 The 46th Annual Conference of the IEEE Industrial Electronics Society. IEEE, 2020, pp. 569–574.

- Schneider, D.G.; Stemmer, M.R. Sistema de localização de um robô móvel baseado em filtro de Kalman estendido para SLAM com Kinect em ambientes internos. In Proceedings of the Congresso Brasileiro de Automática-CBA, 2019, Vol. 1.

- Nievas, M.; Araguás, G.; Paz, C.J. Fusion de mapas mediante encuentros frecuentes para la exploracion multirobot.

- Rico, F.M.; Hernández, J.M.G.; Pérez-Rodríguez, R.; Peña-Narvaez, J.D.; Gómez-Jacinto, A.G. Open source robot localization for nonplanar environments. Journal of Field Robotics 2024, 41, 1922–1939. [CrossRef]

- Sansoni, S.; Gimenez, J.; Castro, G.; Tosetti, S.; Capraro, F. Optimizing exploration with a new uncertainty framework for active SLAM systems. Robotics and Autonomous Systems 2025, 193, 105059. [CrossRef]

- Preto, F.Z.; Neto, A.C.; Arronte, C.; Freitas, C.V.G.; Marazia, F.R.; Angélico, B.A. Follow-the-Gap Control for Fast and Safe Autonomous Driving on F1Tenth Virtual Circuit. In Proceedings of the XVII Simpósio Brasileiro de Automação Inteligente (SBAI), Sociedade Brasileira de Automática, Brazil, 2025. In press.

- Schneider, D.G.; Stemmer, M.R. CNN-Based Multi-Object Detection and Segmentation in 3D LiDAR Data for Dynamic Industrial Environments. Robotics 2024, 13, 174. [CrossRef]

- Udugama, B. Mini bot 3d: A ros based gazebo simulation. arXiv preprint arXiv:2302.06368 2023.

- Bedín, S.; Civera, J.; Nitsche, M. Teach and Repeat con calibración odométrica para robots omnidireccionales con sensor LiDAR.

- Zhu, K.; Zhang, T. Deep reinforcement learning based mobile robot navigation: A review. Tsinghua Science and Technology 2021, 26, 674–691. [CrossRef]

- Mendez, J.; Molina, M.; Rodriguez, N.; Cuellar, M.P.; Morales, D.P. Camera-LiDAR multi-level sensor fusion for target detection at the network edge. Sensors 2021, 21, 3992. [CrossRef]

- Schneider, D.G.; da Silva, L.L.; Diehl, P.; Leite, A.H.R.; Bastos, G.S. Robot navigation by gesture recognition with ros and kinect. In Proceedings of the 2015 12th Latin American Robotics Symposium and 2015 3rd Brazilian Symposium on Robotics (LARS-SBR). IEEE, 2015, pp. 145–150.

- Ling, Y.; Shen, S. Building maps for autonomous navigation using sparse visual SLAM features. In Proceedings of the 2017 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). IEEE, 2017, pp. 1374–1381.

- Snape, J.; Van Den Berg, J.; Guy, S.J.; Manocha, D. The hybrid reciprocal velocity obstacle. IEEE Transactions on Robotics 2011, 27, 696–706. [CrossRef]

- Clemente, E.; Meza-Sánchez, M.; Bugarin, E.; Aguilar-Bustos, A.Y. Adaptive behaviors in autonomous navigation with collision avoidance and bounded velocity of an omnidirectional mobile robot. Journal of Intelligent & Robotic Systems 2018, 92, 359–380. [CrossRef]

- Liu, D.; Yang, F.; Liao, X.; Lyu, X. DIABLO: A 6-DoF Wheeled Bipedal Robot Composed Entirely of Direct-Drive Joints. In Proceedings of the 2024 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). IEEE, 2024, pp. 3605–3612.

- De Cristóforis, P.; Nitsche, M.; Krajník, T.; Pire, T.; Mejail, M. Hybrid vision-based navigation for mobile robots in mixed indoor/outdoor environments. Pattern Recognition Letters 2015, 53, 118–128. [CrossRef]

- Macenski, S.; Booker, M.; Wallace, J. Open-Source, Cost-Aware Kinematically Feasible Planning for Mobile and Surface Robotics. arXiv preprint arXiv:2401.13078 2024.

- Singh, R.; Bera, T.K.; Chatti, N. A real-time obstacle avoidance and path tracking strategy for a mobile robot using machine-learning and vision-based approach. Simulation 2022, 98, 789–805. [CrossRef]

- Paul, N.; Chung, C. Application of HDR algorithms to solve direct sunlight problems when autonomous vehicles using machine vision systems are driving into sun. Computers in Industry 2018, 98, 192–196. [CrossRef]

- Zhang, X.; Zhu, X. Autonomous path tracking control of intelligent electric vehicles based on lane detection and optimal preview method. Expert Systems with Applications 2019, 121, 38–48. [CrossRef]

- Chicaiza, F.A.; Slawinski, E.; Mut, V. Teleoperacion de un Manipulador Dual Movil con Torso.

- Liu, Y.; Li, J.; Li, Z. An indoor navigation control strategy for a brain-actuated mobile robot. In Proceedings of the 2018 3rd International Conference on Advanced Robotics and Mechatronics (ICARM). IEEE, 2018, pp. 13–18.

- Gasparetto, A.; Boscariol, P.; Lanzutti, A.; Vidoni, R. Path planning and trajectory planning algorithms: A general overview. Motion and Operation Planning of Robotic Systems: Background and Practical Approaches 2015, pp. 3–27.

- Wei, S.; Zefran, M. Smooth path planning and control for mobile robots. In Proceedings of the 2005 IEEE Networking, Sensing and Control. IEEE, 2005, pp. 894–899.

- Duan, Y.; Chen, X.; Houthooft, R.; Schulman, J.; Abbeel, P. Benchmarking deep reinforcement learning for continuous control. In Proceedings of the International Conference on Machine Learning. PMLR, 2016, pp. 1329–1338.

- Herrmann, T.; Wischnewski, A.; Hermansdorfer, L.; Betz, J.; Lienkamp, M. Real-time adaptive velocity optimization for autonomous electric cars at the limits of handling. IEEE Transactions on Intelligent Vehicles 2020, 6, 665–677. [CrossRef]

- Bayer, J.; Čížek, P.; Faigl, J. Autonomous multi-robot exploration with ground vehicles in DARPA Subterranean Challenge finals. Field Robotics 2023, 3, 266–300.

- Chung, H.; Ojeda, L.; Borenstein, J. Accurate mobile robot dead-reckoning with a precision-calibrated fiber-optic gyroscope. IEEE Transactions on Robotics and Automation 2001, 17, 80–84.

- Schöneberg, E.; Schröder, M.; Görges, D.; Schotten, H.D. Trajectory Planning with Model Predictive Control for Obstacle Avoidance Considering Prediction Uncertainty. arXiv preprint arXiv:2504.19193 2025. [CrossRef]

- Borenstein, J.; Koren, Y. Real-time obstacle avoidance for fast mobile robots. IEEE Transactions on Systems, Man, and Cybernetics 1989, 19, 1179–1187. [CrossRef]

- Schratter, M.; Zubaca, J.; Mautner-Lassnig, K.; Renzler, T.; Kirchengast, M.; Loigge, S.; Stolz, M.; Watzenig, D. LiDAR-based mapping and localization for autonomous racing. In Proceedings of the ICRA Workshop on Opportunities and Challenges of Autonomous Racing, 2021, pp. 1–6.

- Kwok, N.M.; Ha, Q.P.; Huang, S.; Dissanayake, G.; Fang, G. Mobile robot localization and mapping using a Gaussian sum filter. International Journal of Control, Automation and Systems 2007.

- Karle, P.; Török, F.; Geisslinger, M.; Lienkamp, M. Mixnet: Physics constrained deep neural motion prediction for autonomous racing. IEEE Access 2023, 11, 85914–85926. [CrossRef]

- Liu, Y.; Wang, S.; Xie, Y.; Xiong, T.; Wu, M. A review of sensing technologies for indoor autonomous mobile robots. Sensors 2024, 24, 1222. [CrossRef]

- Schubert, S.; Neubert, P.; Garg, S.; Milford, M.; Fischer, T. Visual Place Recognition: A Tutorial [Tutorial]. IEEE Robotics & Automation Magazine 2023, 31, 139–153. [CrossRef]

- Schultz, A.C.; Adams, W. Continuous localization using evidence grids. In Proceedings of the 1998 IEEE International Conference on Robotics and Automation (Cat. No. 98CH36146). IEEE, 1998, Vol. 4, pp. 2833–2839.

- Fang, S.; Li, H. Multi-vehicle cooperative simultaneous LiDAR SLAM and object tracking in dynamic environments. IEEE Transactions on Intelligent Transportation Systems 2024, 25, 11411–11421. [CrossRef]

- Atas, F.; Cielniak, G.; Grimstad, L. Elevation state-space: Surfel-based navigation in uneven environments for mobile robots. In Proceedings of the 2022 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). IEEE, 2022, pp. 5715–5721.

- Missura, M.; Bennewitz, M. Fast footstep planning with aborting A*. In Proceedings of the 2021 IEEE International Conference on Robotics and Automation (ICRA). IEEE, 2021, pp. 2964–2970.

- Nobis, F.; Betz, J.; Hermansdorfer, L.; Lienkamp, M. Autonomous racing: A comparison of SLAM algorithms for large scale outdoor environments. In Proceedings of the 2019 3rd International Conference on Virtual and Augmented Reality Simulations, 2019, pp. 82–89.

- Stolfi, D.H.; Brust, M.R.; Danoy, G.; Bouvry, P. UAV-UGV-UMV multi-swarms for cooperative surveillance. Frontiers in Robotics and AI 2021, 8, 616950. [CrossRef]

- Pivtoraiko, M.; Knepper, R.A.; Kelly, A. Differentially constrained mobile robot motion planning in state lattices. Journal of Field Robotics 2009, 26, 308–333. [CrossRef]

- Quinlan, S.; Khatib, O. Elastic bands: Connecting path planning and control. In Proceedings of the 1993 IEEE International Conference on Robotics and Automation. IEEE, 1993, pp. 802–807.

- Khatib, M.; Jaouni, H.; Chatila, R.; Laumond, J.P. Dynamic path modification for car-like nonholonomic mobile robots. In Proceedings of the International Conference on Robotics and Automation. IEEE, 1997, Vol. 4, pp. 2920–2925.

- Karamitsos, G.; Bechtsis, D.; Tsolakis, N.; Vlachos, D. Assessing Path Planning Algorithms of Mobile Robots: A ROS-Based Simulation Framework. In Disruptive Technologies and Optimization Towards Industry 4.0 Logistics; Springer, 2024; pp. 139–160.

- Kunz Cechinel, A.; De Pieri, E.R. Centralized multi-robot logistic system: An approach using the island model genetic algorithm as task scheduler. International Journal of Advanced Robotic Systems 2024, 21, 17298806241279595.

- Atas, F.; Cielniak, G.; Grimstad, L. Benchmark of sampling-based optimizing planners for outdoor robot navigation. In Proceedings of the International Conference on Intelligent Autonomous Systems. Springer, 2022, pp. 231–243.

- De Carvalho, M.V.; Simoni, R.; Yoshioka, L.R.; FJ Filho, J.; Kawakami, B.M. A Performance Evaluation of Open Source Autonomous Driving Frameworks: Case Studies of Apollo and Autoware. IEEE Access 2025.

- Kamon, I.; Rivlin, E.; Rimon, E. A new range-sensor based globally convergent navigation algorithm for mobile robots. In Proceedings of the IEEE International Conference on Robotics and Automation. IEEE, 1996, Vol. 1, pp. 429–435.

- Lumelsky, V.J.; Skewis, T. Incorporating range sensing in the robot navigation function. IEEE Transactions on Systems, Man, and Cybernetics 1990, 20, 1058–1069. [CrossRef]

- Lumelsky, V.J.; Stepanov, A.A. Path-planning strategies for a point mobile automaton moving amidst unknown obstacles of arbitrary shape. Algorithmica 1987, 2, 403–430. [CrossRef]

- Simmons, R. The curvature-velocity method for local obstacle avoidance. In Proceedings of the IEEE International Conference on Robotics and Automation. IEEE, 1996, Vol. 4, pp. 3375–3382.

- Brock, O.; Khatib, O. High-speed navigation using the global dynamic window approach. In Proceedings of the 1999 IEEE International Conference on Robotics and Automation (Cat. No. 99CH36288C). IEEE, 1999, Vol. 1, pp. 341–346.

- Medina-Santiago, A.; Morales-Rosales, L.A.; Hernández-Gracidas, C.A.; Algredo-Badillo, I.; Pano-Azucena, A.D.; Orozco Torres, J.A. Reactive obstacle–avoidance systems for wheeled mobile robots based on artificial intelligence. Applied Sciences 2021, 11, 6468. [CrossRef]

- Ulrich, I.; Borenstein, J. VFH+: Reliable obstacle avoidance for fast mobile robots. In Proceedings of the 1998 IEEE International Conference on Robotics and Automation (Cat. No. 98CH36146). IEEE, 1998, Vol. 2, pp. 1572–1577.

- Ulrich, I.; Borenstein, J. VFH*: Local obstacle avoidance with look-ahead verification. In Proceedings of the 2000 ICRA Millennium Conference, IEEE International Conference on Robotics and Automation, Symposia Proceedings (Cat. No. 00CH37065). IEEE, 2000, Vol. 3, pp. 2505–2511.

- Rostami, S.M.H.; Sangaiah, A.K.; Wang, J.; Kim, H.J. Real-time obstacle avoidance of mobile robots using state-dependent Riccati equation approach. EURASIP Journal on Image and Video Processing 2018, 2018, 79. [CrossRef]

- Borrero, G.H.; Becker, M.; Archila, J.F.; Bonito, R. Fuzzy control strategy for the adjustment of the front steering angle of a 4WSD agricultural mobile robot. In Proceedings of the 2012 7th Colombian Computing Congress (CCC). IEEE, 2012, pp. 1–6.

- Lafmejani, A.S.; Farivarnejad, H.; Berman, S. H∞-optimal tracking controller for three-wheeled omnidirectional mobile robots with uncertain dynamics. In Proceedings of the 2020 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). IEEE, 2020, pp. 7587–7594.

- Gonzalez, R.; Fiacchini, M.; Alamo, T.; Guzman, J.L.; Rodriguez, F. Adaptive control for a mobile robot under slip conditions using an LMI-based approach. European Journal of Control 2010, 16, 144–155. [CrossRef]

- Zhu, Y.; Huang, Y.; Su, J.; Pu, C. Active disturbance rejection control for wheeled mobile robots with parametric uncertainties. IFAC-PapersOnLine 2020, 53, 1355–1360. [CrossRef]

- Schena, F. Development of an automated benchmark for the analysis of Nav2 controllers. PhD thesis, Politecnico di Torino, 2024.

- Kabanov, A. Feedback linearized trajectory-tracking control of a mobile robot. In Proceedings of the MATEC Web of Conferences. EDP Sciences, 2017, Vol. 129, p. 03029. [CrossRef]

- Zhang, K.; Chai, B.; Tan, M. Optimal enhanced backstepping method for trajectory tracking control of the wheeled mobile robot. Optimal Control Applications and Methods 2024, 45, 2762–2790. [CrossRef]

- Tassa, Y.; Erez, T.; Todorov, E. Synthesis and stabilization of complex behaviors through online trajectory optimization. In Proceedings of the 2012 IEEE/RSJ International Conference on Intelligent Robots and Systems. IEEE, 2012, pp. 4906–4913.

- Ibrahim, A.E.S.B. Wheeled Mobile Robot Trajectory Tracking using Sliding Mode Control. Journal of Computer Science 2016, 12, 48–55. [CrossRef]

- Zhang, J.; Li, S.; Meng, H.; Li, Z.; Sun, Z. Variable gain based composite trajectory tracking control for 4-wheel skid-steering mobile robots with unknown disturbances. Control Engineering Practice 2023, 132, 105428. [CrossRef]

- Li, N.; Borja, P.; Scherpen, J.M.; Van Der Schaft, A.; Mahony, R. Passivity-based trajectory tracking and formation control of nonholonomic wheeled robots without velocity measurements. IEEE Transactions on Automatic Control 2023, 68, 7951–7957. [CrossRef]

- Macenski, S.; Singh, S.; Martin, F.; Gines, J. Regulated Pure Pursuit for Robot Path Tracking. Autonomous Robots 2023. [CrossRef]

- Chen, Y.; Zhu, J.J. Pure Pursuit Guidance for Car-Like Ground Vehicle Trajectory Tracking. In Proceedings of the ASME 2017 Dynamic Systems and Control Conference. American Society of Mechanical Engineers, 2017, p. V002T21A015.

- Sidiropoulos, A.; Konstantinidis, D.; Karamanos, X.; Mastos, T.; Apostolou, K.; Chatzis, T.; Papaspyropoulou, M.; Marini, K.; Karamitsos, G.; Theodoridou, C.; et al. A Novel Autonomous Robotic Vehicle-Based System for Real-Time Production and Safety Control in Industrial Environments. Computers 2025, 14, 188. [CrossRef]

- Schubert, S.; Neubert, P.; Garg, S.; Milford, M.; Fischer, T. Visual Place Recognition: A Tutorial. IEEE Robotics & Automation Magazine 2024, 30, 139–154. [CrossRef]

- Irshad, M.Z.; Comi, M.; Lin, Y.C.; Heppert, N.; Valada, A.; Ambrus, R.; Kira, Z.; Tremblay, J. Neural Fields in Robotics: A Survey. arXiv preprint arXiv:2410.20220 2024.

- Luo, R.C.; Chang, C.C. Multisensor fusion and integration: A review on approaches and its applications in mechatronics. IEEE Transactions on Industrial Informatics 2011, 8, 49–60. [CrossRef]

- Ruan, S.; Wang, R.; Shen, X.; Liu, H.; Xiao, B.; Shi, J.; Zhang, K.; Huang, Z.; Liu, Y.; Chen, E.; et al. A Survey of Multi-sensor Fusion Perception for Embodied AI: Background, Methods, Challenges and Prospects. arXiv preprint arXiv:2506.19769 2025.

- Panduru, K.; Walsh, J.; et al. Exploring the Unseen: A Survey of Multi-Sensor Fusion and the Role of Explainable AI (XAI) in Autonomous Vehicles. Sensors (Basel, Switzerland) 2025, 25, 856. [CrossRef]

- Raychaudhuri, S.; Chang, A.X. Semantic Mapping in Indoor Embodied AI–A Comprehensive Survey and Future Directions. arXiv preprint arXiv:2501.05750 2025.

- Karur, K.; Sharma, N.; Dharmatti, C.; Siegel, J.E. A Survey of Path Planning Algorithms for Mobile Robots. Vehicles 2021, 3, 448–468. [CrossRef]

- Qin, H.; Shao, S.; Wang, T.; Yu, X.; Jiang, Y.; Cao, Z. Review of autonomous path planning algorithms for mobile robots. Drones 2023, 7, 211.

- Yang, G.; An, L.; Zhao, C. Collision/Obstacle Avoidance Coordination of Multi-Robot Systems: A Survey. Actuators 2025, 14, 85. [CrossRef]

- Lauri, M.; Hsu, D.; Pajarinen, J. Partially Observable Markov Decision Processes in Robotics: A Survey. IEEE Transactions on Robotics 2022, 39, 21–40. [CrossRef]

- Iovino, M.; Scukins, E.; Styrud, J.; Ögren, P.; Smith, C. A survey of behavior trees in robotics and AI. Robotics and Autonomous Systems 2022, 154, 104096. [CrossRef]

- Xiao, X.; Liu, B.; Warnell, G.; Stone, P. A Survey of Motion Control for Mobile Robot Navigation Using Machine Learning. In Proceedings of the AAAI Spring Symposium Series, 2021.

- Rybczak, M.; Popowniak, N.; Lazarowska, A. A Survey of Machine Learning Approaches for Mobile Robot Control. Robotics 2024, 13, 12. [CrossRef]

- Nascimento, T.P.; Dórea, C.E.; Gonçalves, L.M.G. Nonholonomic mobile robots’ trajectory tracking model predictive control: a survey. Robotica 2018, 36, 676–696. [CrossRef]

- Dal’Col, L.; Oliveira, M.; Santos, V. Joint Perception and Prediction for Autonomous Driving: A Survey. IEEE Transactions on Intelligent Transportation Systems 2024. arXiv:2412.14088. [CrossRef]

- Luo, J.; Zhou, X.; Zeng, C.; Jiang, Y.; Qi, W.; Xiang, K.; Pang, M.; Tang, B. Robotics perception and control: Key technologies and applications. Micromachines 2024, 15, 531. [CrossRef]

- Guo, H.; Wu, F.; Qin, Y.; Li, R.; Li, K.; Li, K. Recent trends in task and motion planning for robotics: A survey. ACM Computing Surveys 2023, 55, 1–36. [CrossRef]

- Golroudbari, A.A.; Sabour, M.H. Recent advancements in deep learning applications and methods for autonomous navigation: A comprehensive review. arXiv preprint arXiv:2302.11089 2023.

- Jeon, H.; Park, K.; Sun, J.Y.; Kim, H.Y. Particle-armored liquid robots. Science Advances 2025, 11, eadt5888. [CrossRef]

- Zhai, Y.; Yan, J.; De Boer, A.; Faber, M.; Gupta, R.; Tolley, M.T. Monolithic Desktop Digital Fabrication of Autonomous Walking Robots. Advanced Intelligent Systems 2025, p. 2400876.

- Tkachenko, E.; Merkulov, D.; Pelevina, D.; Turkov, V.; Vinogradova, A.; Naletova, V. Mathematical model of a mobile robot with a magnetizable material in a uniform alternating magnetic field. Meccanica 2023, 58, 357–369. [CrossRef]

- Ramirez, J.P.; Hamaza, S. Multimodal locomotion: next generation aerial–terrestrial mobile robotics. Advanced Intelligent Systems 2023, p. 2300327. [CrossRef]

- Low, K.; Hu, T.; Mohammed, S.; Tangorra, J.; Kovac, M. Perspectives on biologically inspired hybrid and multi-modal locomotion. Bioinspiration & Biomimetics 2015, 10, 020301. [CrossRef]

- Swaminathan, N.; Reddy, S.R.P.; RajaShekara, K.; Haran, K.S. Flying cars and eVTOLs—Technology advancements, powertrain architectures, and design. IEEE Transactions on Transportation Electrification 2022, 8, 4105–4117. [CrossRef]

- Daler, L.; Mintchev, S.; Stefanini, C.; Floreano, D. A bioinspired multi-modal flying and walking robot. Bioinspiration & Biomimetics 2015, 10, 016005. [CrossRef]

- Zhang, R.; Wu, Y.; Zhang, L.; Xu, C.; Gao, F. Tie: An autonomous and adaptive terrestrial-aerial quadrotor. arXiv preprint arXiv:2109.04706 2021.

- Fagundes-Júnior, L.A.; Barcelos, C.O.; Silvatti, A.P.; Brandão, A.S. UAV–UGV Formation for Delivery Missions: A Practical Case Study. Drones 2025, 9, 48. [CrossRef]

- Alzu’bi, H.; Mansour, I.; Rawashdeh, O. Loon copter: Implementation of a hybrid unmanned aquatic–aerial quadcopter with active buoyancy control. Journal of Field Robotics 2018, 35, 764–778. [CrossRef]

- Chernousko, F. Locomotion principles for mobile robotic systems. Procedia Computer Science 2017, 103, 613–617. [CrossRef]

- Shamsuddoha, M.; Nasir, T.; Fawaaz, M.S. Humanoid Robots like Tesla Optimus and the Future of Supply Chains: Enhancing Efficiency, Sustainability, and Workforce Dynamics. Automation 2025, 6, 9. [CrossRef]

- Kawasaki Heavy Industries. Showcasing CORLEO – A New Type of Futuristic, Off-Road Personal Mobility Vehicle. https://global.kawasaki.com/en/corp/newsroom/news/detail/?f=20250403_9193, 2025. Press release, accessed on 5 July 2025.

- Tang, Y.; Zhao, C.; Wang, J.; Zhang, C.; Sun, Q.; Zheng, W.X.; Du, W.; Qian, F.; Kurths, J. Perception and navigation in autonomous systems in the era of learning: A survey. IEEE Transactions on Neural Networks and Learning Systems 2022, 34, 9604–9624. [CrossRef]

- Musa, M.A.; Mashori, S. Solar Powered Autonomous RC Robot. Progress in Engineering Application and Technology 2023, 4, 133–144.

- Cha, Y.; Hong, S. Energy harvesting from walking motion of a humanoid robot using a piezoelectric composite. Smart Materials and Structures 2016, 25, 10LT01. [CrossRef]

- Seo, J.; Paik, J.; Yim, M. Modular reconfigurable robotics. Annual Review of Control, Robotics, and Autonomous Systems 2019, 2, 63–88.

- Shorinwa, O.; Halsted, T.; Yu, J.; Schwager, M. Distributed optimization methods for multi-robot systems: Part 1—a tutorial [tutorial]. IEEE Robotics & Automation Magazine 2024, 31, 121–138. [CrossRef]