Submitted:

03 March 2026

Posted:

04 March 2026

Abstract

Keywords:

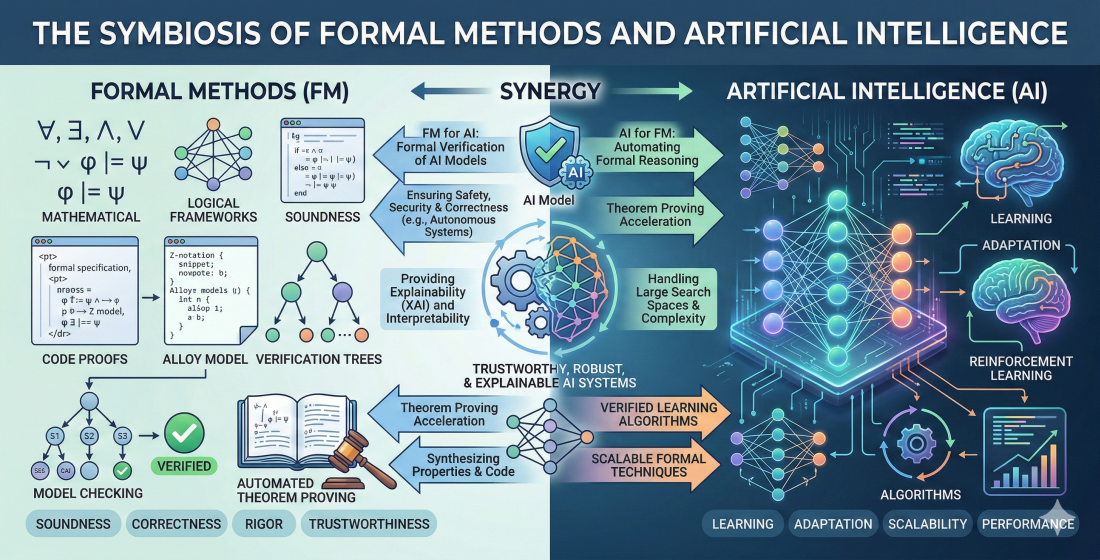

1. Introduction

1.1. Formal Methods Background

- Validation: helping to establish a correct specification; and

- Verification: establishing a correct implementation with respect to a formal specification.

- Abstract Interpretation: approximating program behaviour to prove correctness or detect errors.

- Model-Based Testing: generating test cases from a formal model.

- Model Checking: exhaustively verifying system behaviour against a formal specification.

- Proof Assistants: tools for interactively constructing and verifying mathematical proofs.

- Refinement: systematically refining a high-level specification into a correct implementation.

- Static Analysis: analyzing the meaning of program code, without executing it, to detect errors or enforce constraints.

- Verification: proving the correctness of a program using logical inference rules.
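To make the model-checking entry above concrete, the following is a minimal sketch of explicit-state checking: it explores every reachable state of a toy two-process system and tests a mutual-exclusion invariant in each one. The toy model, the state encoding, and the names `check_invariant`, `successors`, and `mutual_exclusion` are assumptions made for illustration; they do not come from any particular tool.

```python
from collections import deque

def check_invariant(initial, successors, invariant):
    """Breadth-first search over all reachable states.

    Returns None if the invariant holds everywhere, otherwise a shortest
    trace (list of states) from the initial state to a violating state.
    """
    parent = {initial: None}
    queue = deque([initial])
    while queue:
        state = queue.popleft()
        if not invariant(state):
            trace = []
            while state is not None:
                trace.append(state)
                state = parent[state]
            return trace[::-1]
        for nxt in successors(state):
            if nxt not in parent:
                parent[nxt] = state
                queue.append(nxt)
    return None

# Hypothetical toy model: two processes (idle / waiting / critical) sharing one lock.
def successors(state):
    procs, lock = state[:2], state[2]
    for i in (0, 1):
        p = procs[i]
        if p == "idle":
            step, new_lock = "waiting", lock
        elif p == "waiting" and not lock:
            step, new_lock = "critical", True   # acquire the lock atomically
        elif p == "critical":
            step, new_lock = "idle", False      # release the lock
        else:
            continue                            # waiting while the lock is held
        new = list(procs)
        new[i] = step
        yield (new[0], new[1], new_lock)

def mutual_exclusion(state):
    return not (state[0] == "critical" and state[1] == "critical")

result = check_invariant(("idle", "idle", False), successors, mutual_exclusion)
print(result)  # None: the invariant holds in every reachable state
```

If the lock test is removed from the model, the same function returns a concrete trace leading to the violation, which is the kind of counterexample a model checker reports.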

1. Formal Specification: This is applicable to the system requirements. Formal analysis and proofs are optional. The specification can be used to aid testing. It is the most cost-effective approach using formal methods.

2. Formal Verification: The implemented program is produced in a more mathematically formal way. Typically, the approach uses proof or refinement based on a formal specification. This is significantly more costly than just using formal specification.

3. Theorem Proving: This involves the use of a theorem prover tool. The approach allows formal machine-checked proofs. A proof of the entire system is possible, but scaling is difficult. The approach can be very expensive and hard.
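As a small illustration of what a machine-checked proof looks like at the theorem-proving level, the Lean 4 sketch below states and proves that conjunction is commutative. The theorem name `and_swap_example` is chosen here for illustration only; the proof assistant accepts the file only if every step is justified.

```lean
-- A machine-checked proof that p ∧ q implies q ∧ p.
-- `and_swap_example` is a hypothetical name used for this sketch.
theorem and_swap_example (p q : Prop) (h : p ∧ q) : q ∧ p := by
  constructor
  · exact h.right   -- first goal: q
  · exact h.left    -- second goal: p
```

Even this two-line argument must be spelled out goal by goal, which hints at why scaling such proofs to an entire system is expensive.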

1.2. Formal Methods and AI

2. Methodology

3. Results

Question 1: Is Artificial Intelligence useful for formal methods?

Publication 1.1

Explains that Artificial Intelligence turns to formal logic for powerful and well-behaved knowledge representation languages.

• Enriching AI with Logic: Aims to show how Artificial Intelligence can be enriched by incorporating recent advances in formal logic, including extensions of classical logic for reasoning about time, knowledge, or uncertainty.

• Borrowing Formal Techniques: Details that techniques from formal logic have been borrowed by AI researchers, often for applications outside their original context, resulting in useful and constructive work.

Cited by 118

Publication 1.2

Examines state-of-the-art formal methods for the verification and validation of machine learning (a subset of AI) systems.

• Verifying ML Models: Reviews research works dedicated to the verification of specific machine learning models, such as support vector machines and decision tree ensembles.

• Verifying ML Phases: Summarizes formal verification efforts for the data preparation, training phases, and the resulting trained machine learning models, including neural networks.

Cited by 128

Publication 1.3

Argues that autoformalization, in which an AI system converts natural language into machine-verifiable formal statements, is a promising direction for creating automated mathematical reasoning systems.

• Automating Formal Proofs: Discusses the potential of autoformalization to automate the creation of computer verifiable proofs, which traditionally require significant human effort.

• AI Techniques: Explains that advances in machine learning techniques, such as neural networks and reinforcement learning, are paving the way for strong automated reasoning and formalization systems.

Cited by 94

Publication 1.4

Discusses the use of computer programs for theorem proving, which is a key component of formal methods, and its relationship to Artificial Intelligence (AI).

• Early Program Results: Describes early programs that applied formal logic to prove a large number of theorems from Principia Mathematica in the propositional and predicate calculus, demonstrating the utility of AI for formal methods.

• Expert Systems for Proof: Presents a plea for ’expert systems’ in the context of theorem proving, suggesting that partial, efficient strategies should be emphasized over general algorithms for proving theorems on a machine.

Cited by 15

Publication 1.5

Proposes B-repair, an approach for automated repair of faulty models written in the B formal specification language, which uses machine learning techniques to estimate the quality of suggested repairs.

• AI and Model Checking Integration: Combines model checking and machine learning-guided techniques to automatically find and repair faults during the design of software systems.

• AI for Repair Quality Estimation: Uses machine learning techniques to learn the features of state transitions, which helps in ranking suggested repairs and selecting the best one for revising the formal model.

Cited by 22

Publication 1.6

Explores the safety and reliability challenges arising from the increasing use of Artificial Intelligence (AI) agents, such as deep neural network models, in controlling physical and mechanical systems.

• Formal Verification of NNs: Focuses on applying formal verification techniques to neural network models, including those used in autonomous systems, to provide safety guarantees that testing and falsification methods lack.

• NN-Controlled Robot Safety: Considers the formal verification of the safety of an autonomous robot equipped with a Neural Network controller that processes sensor data (LiDAR images) to produce control actions, demonstrating a practical application of formal methods to an AI-controlled system.

Cited by 208

Publication 1.7

Highlights that Large Language Models (LLMs), a form of Artificial Intelligence, can significantly enhance the usability, efficiency, and scalability of existing formal methods (FM) tools.

• Advancing Formal Methods Tasks: Explores how LLMs can drive advancements in formal methods by automating the formalization of specifications, leveraging LLMs to enhance theorem proving, and developing intelligent model checkers.

• AI as Intelligent Assistants: Details that AI, specifically LLMs, can act as intelligent assistants to formal methods by automating tedious tasks and enhancing the capabilities of existing FM algorithms for verification processes.

Cited by 31

Publication 1.8

Introduces MLFMF, a collection of data sets for benchmarking recommendation systems that use machine learning to support the formalization of mathematics with proof assistants.

• Evaluates ML Approaches: Reports baseline results using standard graph and word embeddings, tree ensembles, and instance-based learning algorithms to evaluate machine learning approaches to formalized mathematics.

• Aiding Formalization Efforts: Describes how machine learning methods are used to address premise selection, such as recommending theorems useful for proving a given statement, and how various neural networks and reinforcement learning are applied to automated theorem proving.

Cited by 13

Publication 1.9

Reviews methodologies that employ machine learning concepts, specifically Bayesian methods and Gaussian Processes, for model checking and system design/parameter synthesis against stochastic dynamical models.

• Convergence of Ideas: Focuses on the increasingly fertile convergence of ideas from machine learning and formal modelling, highlighting it as a growing research area.

• Bayesian Methods for Efficiency: Details the use of powerful methodologies from Bayesian machine learning and reinforcement learning to obtain efficient algorithms for statistical model checking and parameter synthesis.

Cited by 13

Publication 1.10

Explains that a model of learning from positive and negative examples, a core aspect of Artificial Intelligence/Machine Learning, can be naturally described using the means of Formal Concept Analysis (FCA), a formal method.

• Describing AI Models: Demonstrates the usefulness of Formal Concept Analysis by applying its language to give natural descriptions of standard Machine Learning models, including version spaces and decision trees.

• Comparing Approaches: Suggests that using the language of FCA allows for the comparison of several machine learning approaches and the employment of standard FCA techniques within the domain of machine learning.

Cited by 206
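For readers unfamiliar with Formal Concept Analysis, the sketch below shows its two basic derivation operators on a tiny, made-up context of objects and attributes. A formal concept is a pair (extent, intent) in which each set is exactly the derivation of the other. The context data and the function names are assumptions for illustration and are not taken from Publication 1.10.

```python
def common_attributes(objects, context):
    """Attributes shared by every object in `objects`."""
    result = set().union(*context.values())
    for obj in objects:
        result &= context[obj]
    return result

def common_objects(attributes, context):
    """Objects that have every attribute in `attributes`."""
    return {obj for obj, attrs in context.items() if attributes <= attrs}

def is_concept(extent, intent, context):
    """A pair is a formal concept when each side derives the other."""
    return (common_attributes(extent, context) == intent
            and common_objects(intent, context) == extent)

# Hypothetical formal context: object -> set of attributes.
CONTEXT = {
    "sparrow": {"flies", "has_feathers"},
    "penguin": {"has_feathers", "swims"},
    "bat": {"flies", "has_fur"},
}

birds = common_objects({"has_feathers"}, CONTEXT)
print(is_concept(birds, {"has_feathers"}, CONTEXT))
```

Learning from positive and negative examples can then be phrased in terms of such concepts, for instance by looking for intents that cover positive examples only.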

Summary:

Question 2: Can Generative AI be used to aid formal methods?

Publication 2.1

Exploits the dialog capability of large language models (LLMs) to iteratively steer them toward responses consistent with a correctness specification using formal methods.

• Self-Monitoring Architecture: Describes a self-monitoring and iterative prompting architecture that uses formal methods to automatically detect errors and inconsistencies in LLM responses, focusing on applications in autonomous systems.

• Formal Methods Integration: Presents a loose coupling between the LLM and a counterexample generator, allowing the use of different formal methods, such as model checking and SMT solving, to achieve a high-assurance solution.

Cited by 106
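The general shape of a counterexample-guided prompting loop, as summarized for Publication 2.1, can be sketched as follows. This is not the authors' architecture: the language model is replaced by a deterministic stub, the "formal" check is a hand-written specification test, and all names (`refine`, `check_plan`, `make_stub_model`) are hypothetical. The point is only the loose coupling between a proposer and a checker that returns a counterexample.

```python
def refine(propose, check, max_rounds=5):
    """Ask `propose` for candidates until `check` finds no counterexample."""
    feedback = None
    history = []
    for _ in range(max_rounds):
        candidate = propose(feedback)
        feedback = check(candidate)          # None means the spec is satisfied
        history.append((candidate, feedback))
        if feedback is None:
            return candidate, history
    return None, history

def check_plan(plan):
    """Toy specification: speeds stay within 0..3 and the plan ends at 0.

    Returns None on success, else (index, message) as a counterexample.
    """
    for i, speed in enumerate(plan):
        if not 0 <= speed <= 3:
            return (i, "speed out of range 0..3")
    if not plan or plan[-1] != 0:
        return (len(plan) - 1, "plan must end at speed 0")
    return None

def make_stub_model():
    """Stand-in for an LLM: repairs its last answer using the counterexample."""
    plan = [1, 5, 2]
    def propose(feedback):
        nonlocal plan
        if feedback is not None:
            index, message = feedback
            plan = list(plan)                # keep earlier candidates unchanged
            if "range" in message:
                plan[index] = 3
            else:
                plan.append(0)
        return plan
    return propose

final, history = refine(make_stub_model(), check_plan)
print(final, len(history))
```

Because the checker only exchanges candidates and counterexamples with the proposer, it could in principle be swapped for a model checker or an SMT solver without changing the loop.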

Publication 2.2

Explores the application of transformer-based language models, a form of Generative AI, to the domain of automated theorem proving, a key aspect of formal methods.

• GPT-f Proof Assistant: Presents an automated prover and proof assistant, GPT-f, which uses generative language modeling for the Metamath formalization language.

• Practical Contribution of AI: Contributed new short proofs that were adopted by the formal mathematics community, demonstrating the practical utility of a deep-learning based system in this field.

Cited by 409

Publication 2.3

Highlights how the advanced learning capabilities and adaptability of Large Language Models (LLMs), a form of Generative AI, can significantly enhance the usability, efficiency, and scalability of existing Formal Methods (FM) tools.

• Driving FM Advancements: Examines how LLMs can drive advancements in existing formal methods and tools, focusing on utilizing LLMs for automated formalization of specifications, enhancing theorem proving, and developing intelligent model checkers.

• Automating Verification Tasks: Proposes using LLMs to make formal methods more efficient and effective by serving as intelligent assistants that automate tedious tasks, such as generating structured code and symbolic representations for verification processes.

Cited by 31

Publication 2.4

Introduces an open-source language model, Goedel-Prover, that achieves state-of-the-art performance in automated formal proof generation for mathematical problems, directly demonstrating the use of generative AI in formal methods.

• Training LLMs for Formalization: Explains the process of training Large Language Models (LLMs) to convert natural language math problems into equivalent formal statements in Lean 4, which is a key step in applying AI to formal methods.

• Developing Formal Proof Datasets: Demonstrates training a series of provers to develop a large dataset of formal proofs, showcasing how generative AI can overcome the scarcity of formalized mathematical data.

Cited by 75

Publication 2.5

Introduces Goedel-Prover-V2, an open-source language model series that achieves a new state-of-the-art in automated theorem proving (a key component of formal methods).

• Generating Formal Proofs: Demonstrates the use of a generative AI model to create machine-verifiable proofs in formal languages like Lean, which is essential for formal methods.

• Compiler-Guided Proof Correction: Presents a method where the model iteratively revises its proofs by utilizing feedback from the Lean compiler (verifier-guided self-correction), integrating verification feedback into the proof generation process.

Cited by 29

Publication 2.6

Introduces DeepSeek-Prover-V1.5, an open-source language model specifically designed for theorem proving in Lean 4, demonstrating a direct application of generative AI in formal methods.

• Influences Mathematical Reasoning: Discusses how recent advancements in large language models have significantly influenced mathematical reasoning and theorem proving in artificial intelligence, confirming the relevance of generative AI in this domain.

• Employs Proof Generation Strategies: Presents two common strategies language models use in formal theorem proving: proof-step generation and whole-proof generation, detailing mechanisms for utilizing generative AI in creating formal proofs.

Cited by 42

Publication 2.7

Proposes a method to address the scaling challenges of formal methods for image-based neural network controllers by training a Generative Adversarial Network (GAN) to map states to plausible input images.

• Reducing Input Dimension: Shows that concatenating the generative network with the control network results in a network with a low-dimensional input space, enabling the use of existing closed-loop verification tools to obtain formal guarantees.

• Applying to Safety Guarantees: Applies the approach to provide safety guarantees for an image-based neural network controller for an autonomous aircraft taxi problem.

Cited by 85

Publication 2.8

Introduces Kimina-Prover Preview, a large language model designed for formal theorem proving, demonstrating its application in Lean 4 proof generation.

• Emulates Human Reasoning: Employs a reasoning-driven exploration paradigm and a structured formal reasoning pattern to emulate human problem-solving strategies in the Lean 4 proof assistant, iteratively generating and refining proof steps.

• State-of-the-Art Performance: Achieves state-of-the-art results on the miniF2F benchmark for automated theorem proving, confirming the effectiveness of generative AI models in formal verification.

Cited by 74

Publication 2.9

Introduces an approach to generate extensive Lean 4 proof data from mathematical competition problems to address the lack of training data for large language models (LLMs) in formal theorem proving.

• Automated Theorem Proving: Details how the fine-tuned DeepSeekMath model, using the synthetic dataset, achieved high whole-proof generation accuracies on the Lean 4 miniF2F test, surpassing baseline models like GPT-4, demonstrating the LLM’s capability in automated theorem proving (a core formal method task).

• Formal Proof Generation: Explains that generative AI models, like LLMs, can be utilized to automatically generate and verify formal proofs in environments such as Lean 4, overcoming the challenge of manually crafting formal proofs.

Cited by 147

Publication 2.10

Explores the use of generative AI, specifically Large Language Models like GPT-4, to assist in obtaining correct executable simulation models, which are a form of formal specification.

• Uses DEVS Formalism: Adopts the Discrete Event System Specification (DEVS), a sound modeling and simulation formalism, as a suitable candidate for exploring the limits of LLM capabilities to generate correct generalized simulation models.

• Prototype Tool Development: Introduces a methodology and tool, DEVS Copilot, which transforms natural language descriptions of systems into a DEVS executable simulation model using AI, succeeding at producing correct DEVS simulations in a case study.

Cited by 5

Summary:

Question 3: What are the latest papers investigating the use of Generative AI to support formal methods?

Publication 3.1

Presents a novel approach integrating Large Language Models (LLMs) with Formal Verification for automatic software vulnerability repair, using Bounded Model Checking (BMC) to identify vulnerabilities and extract counterexamples for the LLM.

• Introduces ESBMC-AI Framework: Introduces the ESBMC-AI framework as a proof of concept, leveraging the Efficient SMT-based Context-Bounded Model Checker (ESBMC) and a pre-trained transformer model to detect and fix errors in C programs.

• Evaluates Automatic Repair Capabilities: Evaluates the approach on 50,000 C programs and demonstrates its capability to automate the detection and repair of issues such as buffer overflow, arithmetic overflow, and pointer dereference failures with high accuracy.

Cited by 123

Publication 3.2

Introduces Kimina-Prover Preview, a large language model utilizing a novel reasoning-driven exploration paradigm for formal theorem proving, trained with a large-scale reinforcement learning (RL) pipeline from Qwen2.5-72B.

• Lean 4 Proof Generation: Demonstrates strong performance in Lean 4 proof generation by employing a structured reasoning pattern, which allows the model to emulate human problem-solving strategies and iteratively refine proof steps.

• Formal Reasoning Pattern: Presents a formal reasoning pattern designed to align informal mathematical reasoning data with its translation to formal proofs, addressing a critical challenge for LLMs in this domain.

Cited by 74

Publication 3.3

Introduces the FormAI dataset, which consists of 112,000 AI-generated C programs classified for vulnerabilities using a formal verification method.

• Formal Verification Method: Employs the Efficient SMT-based Context-Bounded Model Checker (ESBMC) for formal verification, which uses model checking, abstract interpretation, constraint programming, and satisfiability modulo theories to definitively detect vulnerabilities.

• AI Code Generation: Details the process of using Large Language Models (LLMs), specifically GPT-3.5-turbo, and a dynamic zero-shot prompting technique to generate diverse C programs with varying complexity.

Cited by 100

Publication 3.4

Proposes PALM, a novel proof automation approach that combines Large Language Models (LLMs) and symbolic methods in a generate-then-repair pipeline to create formal proofs.

• Evaluates PALM Performance: Evaluates the PALM system on a large dataset of over 10,000 theorems, demonstrating significant improvement over existing state-of-the-art methods.

• Identifies LLM Errors: Identifies common errors made by LLMs, such as GPT-3.5, when generating formal proofs, noting that they often succeed with high-level structure but fail with low-level details.

Cited by 36

Publication 3.5

Explores the use of Large Language Models (LLMs) for autoformalization, the process of translating natural language mathematics into formal specifications and proofs.

• Translating Math Problems: Shows that LLMs can translate a significant portion of mathematical competition problems perfectly to formal specifications in Isabelle/HOL, indicating their capability in generating formal language from natural language.

• Improving Theorem Prover: Demonstrates the usefulness of autoformalization by improving a neural theorem prover, resulting in a new state-of-the-art result on the MiniF2F theorem proving benchmark.

Cited by 311
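To illustrate what autoformalization produces, the sketch below pairs an informal statement with one possible formal counterpart. Publication 3.5 targets Isabelle/HOL; Lean 4 is used here instead, purely as an illustration. The theorem name is hypothetical, and the proof assumes a recent Lean 4 toolchain in which the `omega` tactic is available.

```lean
-- Informal statement: "The sum of two even natural numbers is even."
-- One possible formalization, with evenness expressed as divisibility by 2.
theorem even_add_even_example (a b : Nat)
    (ha : a % 2 = 0) (hb : b % 2 = 0) : (a + b) % 2 = 0 := by
  omega
```

The translation step is where errors typically enter: a statement that type-checks may still fail to capture the informal intent, which is why the formal statement needs review even when the proof is accepted.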

Publication 3.6

Proposes a generative neural network architecture for creating finite models of algebraic structures, drawing inspiration from image generation models like Generative Adversarial Networks (GANs) and autoencoders.

• Semigroup Generation Package: Contributes a Python package for generating small-sized finite semigroups, serving as a reference implementation of the proposed generative method.

• Reinforcement Learning for Provers: Designs a general architecture for guiding saturation provers using reinforcement learning algorithms, which is a key component of automated theorem proving (a formal method).

Cited by 0

Publication 3.7

Investigates how combinations of Large Language Models (LLMs) and symbolic analyses can synthesize specifications for C programs in the ACSL language.

• Augmenting LLMs with Formal Tools: Augments LLM prompts with outputs from formal methods tools, specifically Pathcrawler and EVA from the Frama-C ecosystem, to produce C program annotations.

• Impact of Symbolic Analysis: Demonstrates that integrating symbolic analysis enhances the quality of annotations, making them more context-aware and attuned to runtime errors, and enabling inference of program intent rather than just behavior.

Cited by 11

Publication 3.8

Demonstrates how Generative AI can be used in induction-based formal verification to increase the verification throughput.

• Automating Assertion Generation: Explores the potential of GenAI to automate assertion generation, which helps resolve the complexity and labor-intensive nature of formal verification.

• GenAI-Based Verification Flow: Presents a GenAI-based flow that generates helper assertions and generates lemmas in the event of an inductive step failure during formal verification.

Cited by 7
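The role of a helper assertion in induction-based verification can be shown on a very small example. In the sketch below, the target property is true of every reachable state but is not inductive on its own; conjoining a helper invariant makes the inductive step go through. The transition system and all names are assumptions for illustration, and the helper is written by hand here rather than generated by a GenAI model as in Publication 3.8.

```python
def is_inductive(states, init, step, prop):
    """Check the base case and inductive step of `prop` on a finite state space.

    Returns (True, None) on success, or (False, witness), where the witness is
    an initial state violating `prop` or a (state, successor) pair that breaks
    the inductive step.
    """
    for s in init:
        if not prop(s):
            return False, s
    for s in states:                 # all states, not only the reachable ones
        if prop(s):
            for t in step(s):
                if not prop(t):
                    return False, (s, t)
    return True, None

# Hypothetical system: a 3-bit counter that starts at 0 and adds 2 each step.
STATES = range(8)
INIT = [0]

def step(a):
    return [(a + 2) % 8]

def target(a):        # property to verify: the counter never equals 7
    return a != 7

def helper(a):        # helper assertion: the counter is always even
    return a % 2 == 0

def strengthened(a):
    return target(a) and helper(a)

plain = is_inductive(STATES, INIT, step, target)
with_helper = is_inductive(STATES, INIT, step, strengthened)
print(plain, with_helper)
```

The failing pair returned for the plain property starts in a state that is unreachable from the initial state, which is exactly the situation a well-chosen helper assertion rules out.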

Publication 3.9

Examines how autoformalization, driven by Large Language Models (LLMs), is applied across various mathematical domains and levels of difficulty, relating to automated theorem proving.

• Autoformalization Workflow Analysis: Analyzes the end-to-end workflow of autoformalization, including data preprocessing, model design, and evaluation protocols for converting informal math to formal representations.

• Verifiability of LLM Outputs: Explores the emerging role of autoformalization in enhancing the verifiability and trustworthiness of LLM-generated outputs, bridging the gap between human intuition and machine-verifiable reasoning.

Cited by 11

Publication 3.10

Presents a comprehensive study of state-of-the-art software testing and verification, focusing on classical formal methods, Large Language Model (LLM)-based analysis, and emerging hybrid techniques.

• Enhancing Verification Scalability: Highlights the potential of hybrid systems, which combine formal rigor with LLM-driven insights, to enhance the effectiveness and scalability of software verification.

• LLM-Assisted Formal Verification: Details how integrating LLMs with formal methods and program analysis tools is a promising direction for improving software vulnerability detection by reducing the manual burden through automatic generation of formal assertions/invariants.

Cited by 6

Summary:

4. Discussion

4.1. The GenAI Survey

4.2. Future Directions

- Formal Methods: Rigorous, “correct by construction”, based on precise language and proof assistants.

- Machine Learning/AI: Based on large learned models and statistical probability, often with “unreliable” or “hallucinating” outputs.

- Vibe Coding: A colloquial term for AI-generated code that “feels” right but requires verification.

- Vibe Proving: Perhaps using AI to bridge gaps in formal proofs.

5. Conclusions

Oui, l’œuvre sort plus belle

D’une forme au travail

Rebelle,

Vers, marbre, onyx, émail.

[Yes, the work comes out more beautiful from a material that resists the process, verse, marble, onyx, or enamel.]

References

- Ramsay, A. Formal Methods in Artificial Intelligence; Cambridge University Press, 1988; Vol. 6. [Google Scholar]

- Krichen, M.; Mihoub, A.; Alzahrani, M.Y.; Adoni, W.Y.H.; Nahhal, T. Are formal methods applicable to machine learning and Artificial Intelligence? In Proceedings of the 2022 2nd International Conference of Smart Systems and Emerging Technologies (SMARTTECH), 2022; IEEE; pp. 48–53. [Google Scholar] [CrossRef]

- Szegedy, C. A promising path towards autoformalization and General Artificial Intelligence. In Proceedings of the International Conference on Intelligent Computer Mathematics, Cham, 2020; Vol. 12236, LNCS, pp. 3–20. [Google Scholar] [CrossRef]

- Wang, H. Computer theorem proving and Artificial Intelligence. In Computation, Logic, Philosophy: A Collection of Essays; Mathematics and Its Applications (China Series); Springer: Dordrecht, 1990; Vol. 2, pp. 63–75. [Google Scholar] [CrossRef]

- Cai, C.H.; Sun, J.; Dobbie, G. Automatic B-model repair using model checking and machine learning. Automated Software Engineering 2019, 26, 653–704. [Google Scholar] [CrossRef]

- Sun, X.; Khedr, H.; Shoukry, Y. Formal verification of neural network controlled autonomous systems. In Proceedings of the 22nd ACM International Conference on Hybrid Systems: Computation and Control. ACM, 2019; pp. 147–156. [Google Scholar] [CrossRef]

- Zhang, Y.; Cai, Y.; Zuo, X.; Luan, X.; Wang, K.; Hou, Z.; Zhang, Y.; Wei, Z.; Sun, M.; Sun, J.; et al. The fusion of Large Language Models and formal methods for trustworthy AI agents: A roadmap. arXiv 2024, arXiv:2412.06512. [Google Scholar] [CrossRef]

- Bauer, A.; Petković, M.; Todorovski, L. MLFMF: Data sets for machine learning for mathematical formalization. Advances in Neural Information Processing Systems (NeurIPS 2023) 2023, 36, 50730–50741. [Google Scholar] [CrossRef]

- Bortolussi, L.; Milios, D.; Sanguinetti, G. Machine learning methods in statistical model checking and system design – tutorial. In Proceedings of the Runtime Verification; Bartocci, E., Majumdar, R., Eds.; Cham, 2015; Vol. 9333, LNCS, pp. 323–341. [Google Scholar] [CrossRef]

- Kuznetsov, S.O. Machine learning and formal concept analysis. In Proceedings of the International Conference on Formal Concept Analysis; Eklund, P., Ed.; Cham, 2004; Vol. 2961, LNCS, pp. 287–312. [Google Scholar] [CrossRef]

- Jha, S.; Jha, S.K.; Lincoln, P.; Bastian, N.D.; Velasquez, A.; Neema, S. Dehallucinating Large Language Models using formal methods guided iterative prompting. In Proceedings of the 2023 IEEE International Conference on Assured Autonomy (ICAA), 2023; IEEE; pp. 149–152. [Google Scholar] [CrossRef]

- Polu, S.; Sutskever, I. Generative language modeling for automated theorem proving. arXiv 2020, arXiv:2009.03393. [Google Scholar] [CrossRef]

- Lin, Y.; Tang, S.; Lyu, B.; Wu, J.; Lin, H.; Yang, K.; Li, J.; Xia, M.; Chen, D.; Arora, S.; et al. Goedel-Prover: A frontier model for open-source automated theorem proving. arXiv 2025, arXiv:2502.07640. [Google Scholar] [CrossRef]

- Lin, Y.; Tang, S.; Lyu, B.; Yang, Z.; Chung, J.H.; Zhao, H.; Jiang, L.; Geng, Y.; Ge, J.; Sun, J.; et al. Goedel-Prover-V2: Scaling formal theorem proving with scaffolded data synthesis and self-correction. arXiv 2025, arXiv:2508.03613. [Google Scholar] [CrossRef]

- Xin, H.; Ren, Z.; Song, J.; Shao, Z.; Zhao, W.; Wang, H.; Liu, B.; Zhang, L.; Lu, X.; Du, Q.; et al. DeepSeek-Prover-V1.5: Harnessing proof assistant feedback for reinforcement learning and Monte-Carlo tree search. arXiv 2024, arXiv:2408.08152. [Google Scholar] [CrossRef]

- Xin, H.; Guo, D.; Shao, Z.; Ren, Z.; Zhu, Q.; Liu, B.; Ruan, C.; Li, W.; Liang, X. DeepSeek-Prover: Advancing theorem proving in LLMs through large-scale synthetic data. arXiv 2024, arXiv:2405.14333. [Google Scholar] [CrossRef]

- Katz, S.M.; Corso, A.L.; Strong, C.A.; Kochenderfer, M.J. Verification of image-based neural network controllers using generative models. Journal of Aerospace Information Systems 2022, 19, 574–584. [Google Scholar] [CrossRef]

- Wang, H.; Unsal, M.; Lin, X.; Baksys, M.; Liu, J.; Santos, M.D.; Sung, F.; Vinyes, M.; Ying, Z.; Zhu, Z.; et al. Kimina-Prover preview: Towards large formal reasoning models with reinforcement learning. arXiv 2025, arXiv:2504.11354. [Google Scholar] [CrossRef]

- Carreira-Munich, T.; Paz-Marcolla, V.; Castro, R. DEVS Copilot: Towards Generative AI-Assisted Formal Simulation Modelling based on Large Language Models. In Proceedings of the 2024 Winter Simulation Conference (WSC), 2024; IEEE; pp. 2785–2796. [Google Scholar] [CrossRef]

- Tihanyi, N.; Charalambous, Y.; Jain, R.; Ferrag, M.A.; Cordeiro, L.C. A new era in software security: Towards self-healing software via Large Language Models and formal verification. In Proceedings of the 2025 IEEE/ACM International Conference on Automation of Software Test (AST). IEEE, 2025; pp. 136–147. [Google Scholar] [CrossRef]

- Tihanyi, N.; Bisztray, T.; Jain, R.; Ferrag, M.A.; Cordeiro, L.C.; Mavroeidis, V. The FormAI dataset: Generative AI in software security through the lens of formal verification. In PROMISE 2023: Proceedings of the 19th International Conference on Predictive Models and Data Analytics in Software Engineering. ACM, 2023; pp. 33–43. [Google Scholar] [CrossRef]

- Lu, M.; Delaware, B.; Zhang, T. Proof automation with Large Language Models. In ASE’24: Proceedings of the 39th IEEE/ACM International Conference on Automated Software Engineering. IEEE/ACM, 2024; pp. 1509–1520. [Google Scholar] [CrossRef]

- Wu, Y.; Jiang, A.Q.; Li, W.; Rabe, M.; Staats, C.; Jamnik, M.; Szegedy, C. Autoformalization with Large Language Models. In NIPS’22: Proceedings of the 36th International Conference on Advances in Neural Information Processing Systems; Koyejo, S., Mohamed, S., Agarwal, A., Belgrave, D., Cho, K., Oh, A., Eds.; Curran Associates, 2022; Vol. 35, pp. 32353–32368. [Google Scholar] [CrossRef]

- Shminke, B. Applications of AI to study of finite algebraic structures and automated theorem proving. PhD thesis, Université Côte d’Azur, 2023. [Google Scholar]

- Granberry, G.; Ahrendt, W.; Johansson, M. Specify what? Enhancing neural specification synthesis by symbolic methods. In Proceedings of the Integrated Formal Methods (IFM 2024) Cham; Kosmatov, N., Kovács, L., Eds.; 2024; Vol. 15234, LNCS, pp. 307–325. [Google Scholar] [CrossRef]

- Kumar, A.; Gadde, D.N. Generative AI augmented induction-based formal verification. In Proceedings of the 2024 IEEE 37th International System-on-Chip Conference (SOCC), 2024; IEEE; pp. 1–2. [Google Scholar] [CrossRef]

- Weng, K.; Du, L.; Li, S.; Lu, W.; Sun, H.; Liu, H.; Zhang, T. Autoformalization in the Era of Large Language Models: A Survey. arXiv 2025, arXiv:2505.23486. [Google Scholar] [CrossRef]

- Tihanyi, N.; Bisztray, T.; Ferrag, M.A.; Cherif, B.; Dubniczky, R.A.; Jain, R.; Cordeiro, L.C. Vulnerability detection: From formal verification to Large Language Models and hybrid approaches: A comprehensive overview. arXiv 2025, arXiv:2503.10784. [Google Scholar] [CrossRef]

- Tihanyi, N.; Bisztray, T.; Ferrag, M.A.; Cherif, B.; Dubniczky, R.A.; Jain, R.; Cordeiro, L.C. Vulnerability detection: From formal verification to Large Language Models and hybrid approaches: A comprehensive overview. In Adversarial Example Detection and Mitigation Using Machine Learning; Nowroozi, E., Taheri, R., Cordeiro, L., Eds.; Springer: Cham, 2026; Chapter 3, pp. 33–47. [Google Scholar] [CrossRef]

- Abrial, J.-R. The B-Book: Assigning programs to meanings; Cambridge University Press, 1996. [Google Scholar]

- Avigad, J. Mathematics in the Age of AI. LMS/BCS-FACS Seminar; London Mathematical Society, 6 November 2025. Available online: https://www.lms.ac.uk/events/lms-bcs-facs-seminar-jeremy-avigad.

- Beth, E.W. Formal Methods: An Introduction to Symbolic Logic and to the Study of Effective Operations in Arithmetic and Logic; D. Reidel Publishing Company / Dordrecht-Holland, 1962. [Google Scholar]

- Formal Methods: Industrial Use from Model to the Code. In ISTE; Boulanger, J.-L., Ed.; Wiley, 2012. [Google Scholar]

- Bowen, J.P. The Z Notation: Whence the Cause and Whither the Course? In Engineering Trustworthy Software Systems, SETSS 2014. LNCS; Liu, Z., Zhang, Z., Eds.; Springer: Cham, 2016; vol. 9506, pp. 103–151. [Google Scholar] [CrossRef]

- Bowen, J.P.; Hinchey, M.G. Ten commandments of formal methods. IEEE Computer 1995, 28(4), 56–63. [Google Scholar] [CrossRef]

- Bowen, J.P.; Hinchey, M.G. Seven more myths of formal methods. IEEE Software 1995, 12(4), 34–41. [Google Scholar] [CrossRef]

- Bowen, J.P.; Stavridou, V. Safety-critical systems, formal methods and standards. Software Engineering Journal 1993, 8(4), 189–209. [Google Scholar] [CrossRef]

- Brereton, P.; Kitchenham, B.A.; Budgen, D.; Turner, M.; Khalil, M. Lessons from applying the systematic literature review process within the software engineering domain. The Journal of Systems and Software 2007, 80(4), 571–583. [Google Scholar] [CrossRef]

- Clarke, E.; Biere, A.; Raimi, R.; Zhu, Y. Bounded Model Checking Using Satisfiability Solving. Formal Methods in System Design 2001, 19, 7–34. [Google Scholar] [CrossRef]

- Dieste, O.; Grimán, A.; Juristo, N. Developing search strategies for detecting relevant experiments. Empirical Software Engineering 2009, 14, 513–539. [Google Scholar] [CrossRef]

- Floyd, R.W. Assigning meanings to programs. In Mathematical Aspects of Computer Science; Proceedings of Symposia in Applied Mathematics 19; Schwartz, J.T., Ed.; American Mathematical Society: Providence, 1967 (republished in 1993).

- Frappier, M., Habrias, H., Eds. Software Specification Methods: An Overview Using a Case Study; FACIT; Springer, 2001.

- Gautier, T. L’Art Moderne; M. Lévy Frères: Paris, 1856; Available online: https://archive.org/details/lartmodernetho00gautuoft.

- Gnesi, S.; Margaria, T. Formal Methods for Industrial Critical Systems: A Survey of Applications; IEEE Computer Society Press, Wiley, 2012.

- Google. Google Scholar Labs. Available online: https://scholar.google.com/scholar_labs/search (accessed on 25 February 2026).

- Hall, J.A. Seven myths of formal methods. IEEE Software 1990, 7(5), 11–19.

- Hinchey, M.G., Bowen, J.P., Eds. Applications of Formal Methods; Series in Computer Science; Prentice Hall, 1995.

- Hinchey, M.G., Bowen, J.P., Eds. Industrial-Strength Formal Methods in Practice; FACIT; Springer, 1999.

- Hoare, C.A.R. An axiomatic basis for computer programming. Communications of the ACM 1969, 12(10), 576–580.

- Hoare, C.A.R.; He, J. Unifying Theories of Programming; Series in Computer Science; Prentice Hall, 1998.

- Huang, S.; et al. LeanProgress: Guiding Search for Neural Theorem Proving via Proof Progress Prediction. arXiv 2025.

- Lu, J.; et al. Lean Finder: Semantic Search for Mathlib That Understands User Intents. arXiv 2025.

- Morris, F.L.; Jones, C.B. An Early Program Proof by Alan Turing. IEEE Annals of the History of Computing 1984, 6(2), 139–143.

- Nipkow, T., Wenzel, M., Paulson, L.C., Eds. Isabelle/HOL: A Proof Assistant for Higher-Order Logic; LNCS; Springer: Cham, 2002; vol. 2283.

- Romera-Paredes, B.; et al. Mathematical discoveries from program search and large language models. Nature 2024, 625, 468–475.

- Petersen, K.; Feldt, R.; Mujtaba, S.; Mattsson, M. Systematic mapping studies in software engineering. In EASE’08: Proceedings of the 12th International Conference on Evaluation and Assessment in Software Engineering, 2008; pp. 68–77.

- Petersen, K.; Vakkalanka, S.; Kuzniarz, L. Guidelines for conducting systematic mapping studies in software engineering: An update. Information and Software Technology 2015, 64, 1–18.

- Shao, Z.; et al. DeepSeekMath-V2: Towards Self-Verifiable Mathematical Reasoning. arXiv 2025.

- Strachey, C. The Strachey’s Philosophy. Department of Computer Science, University of Oxford. Available online: https://www.cs.ox.ac.uk/activities/concurrency/courses/stracheys.html (accessed on 2 March 2025).

- Tao, T. The Potential for AI in Science and Mathematics. Oxford Mathematics Public Lectures, Science Museum, London, 17 July 2024; Available online: https://www.maths.ox.ac.uk/node/68243.

- Turing, A.M. Checking a Large Routine. Report of a Conference on High Speed Automatic Calculating Machines, Cambridge University Mathematical Laboratory, 1949; pp. 67–69. Available online: https://turingarchive.kings.cam.ac.uk/checking-large-routine.

- Turing, A.M. Computing Machinery and Intelligence. Mind 1950, LIX(236), 433–460.

- Whitehead, A.N.; Russell, B. Principia Mathematica; Cambridge University Press, 1910–1913.

- Woodcock, J.; Larsen, P.G.; Bicarregui, J.; Fitzgerald, J. Formal methods: Practice and experience. ACM Computing Surveys (CSUR) 2009, 41(4), 1–36.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).