Submitted: 03 March 2026

Posted: 04 March 2026


Abstract

Keywords:

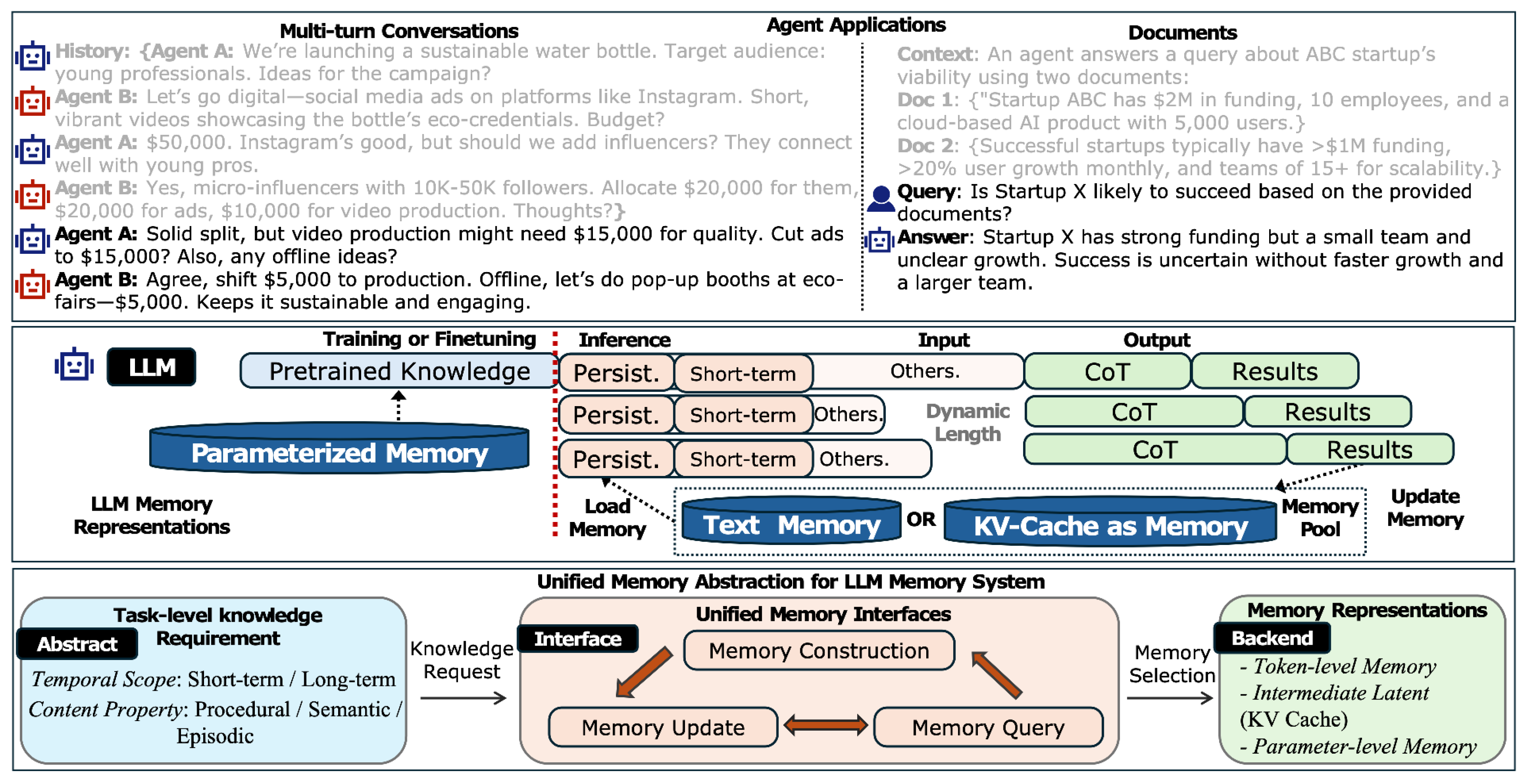

1. Introduction

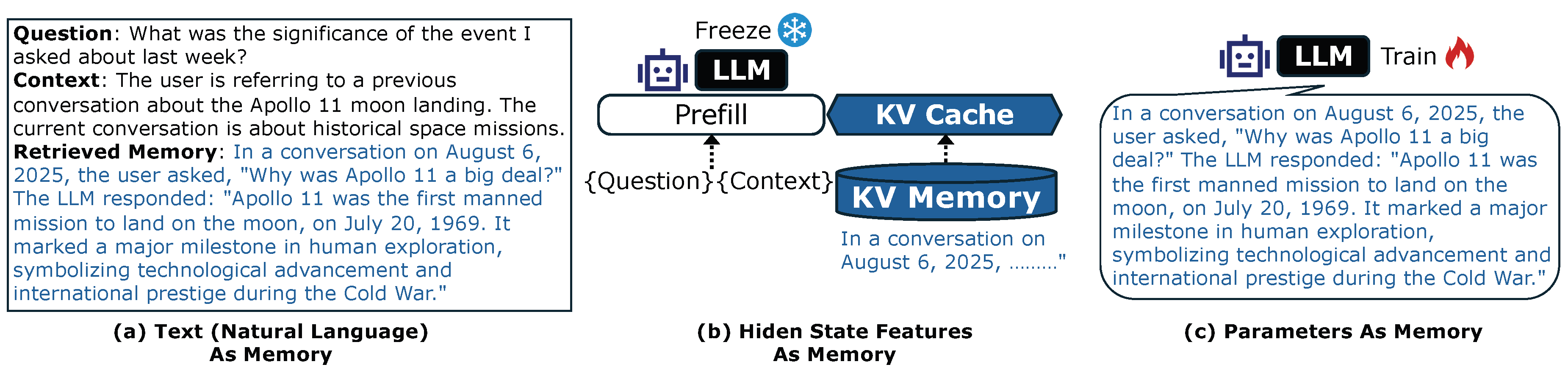

- Memory representation describes where and in what form information resides: token-level memory in the input context, intermediate latent memory as inference-time states (e.g., Key–Value caches), and parameter-level memory in model weights via adaptation or editing.

- Memory management describes how memory is operated over time to satisfy task requirements under practical constraints. Across representations, we observe a shared interface of three core operations: memory construction (what to store and how to structure it), memory update (how to maintain, consolidate, or remove stored content), and memory query (how to select and integrate relevant information during inference).
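The shared interface described above can be sketched in code. The following is a minimal illustration, not an implementation from the survey: the class names `Memory` and `TokenMemory`, the method signatures, and the word-overlap scoring are all assumptions chosen for brevity. It shows only the token-level representation; latent or parameter-level memories would implement the same three operations over different storage.

```python
from __future__ import annotations

from abc import ABC, abstractmethod
from dataclasses import dataclass, field


class Memory(ABC):
    """Hypothetical shared interface: construction, update, query."""

    @abstractmethod
    def construct(self, items: list[str]) -> None:
        """Decide what to store and how to structure it."""

    @abstractmethod
    def update(self, old: str, new: str | None) -> None:
        """Maintain stored content: replace `old`, or remove it if `new` is None."""

    @abstractmethod
    def query(self, request: str, k: int = 1) -> list[str]:
        """Select the k entries most relevant to `request`."""


@dataclass
class TokenMemory(Memory):
    """Token-level memory: plain-text entries held outside the model."""

    entries: list[str] = field(default_factory=list)

    def construct(self, items: list[str]) -> None:
        self.entries = list(items)

    def update(self, old: str, new: str | None) -> None:
        self.entries = [e for e in self.entries if e != old]
        if new is not None:
            self.entries.append(new)

    def query(self, request: str, k: int = 1) -> list[str]:
        # Illustrative relevance score: number of shared lowercase words.
        words = set(request.lower().split())
        ranked = sorted(
            self.entries,
            key=lambda e: len(words & set(e.lower().split())),
            reverse=True,
        )
        return ranked[:k]


memory = TokenMemory()
memory.construct(["user likes art museums", "user plans a trip to Paris"])
top = memory.query("trip to Paris")
```

A real system would replace the word-overlap score with a retriever or attention mechanism; the point of the sketch is only that callers depend on the three operations, not on where the memory lives.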

2. Natural Language Tokens as Memory

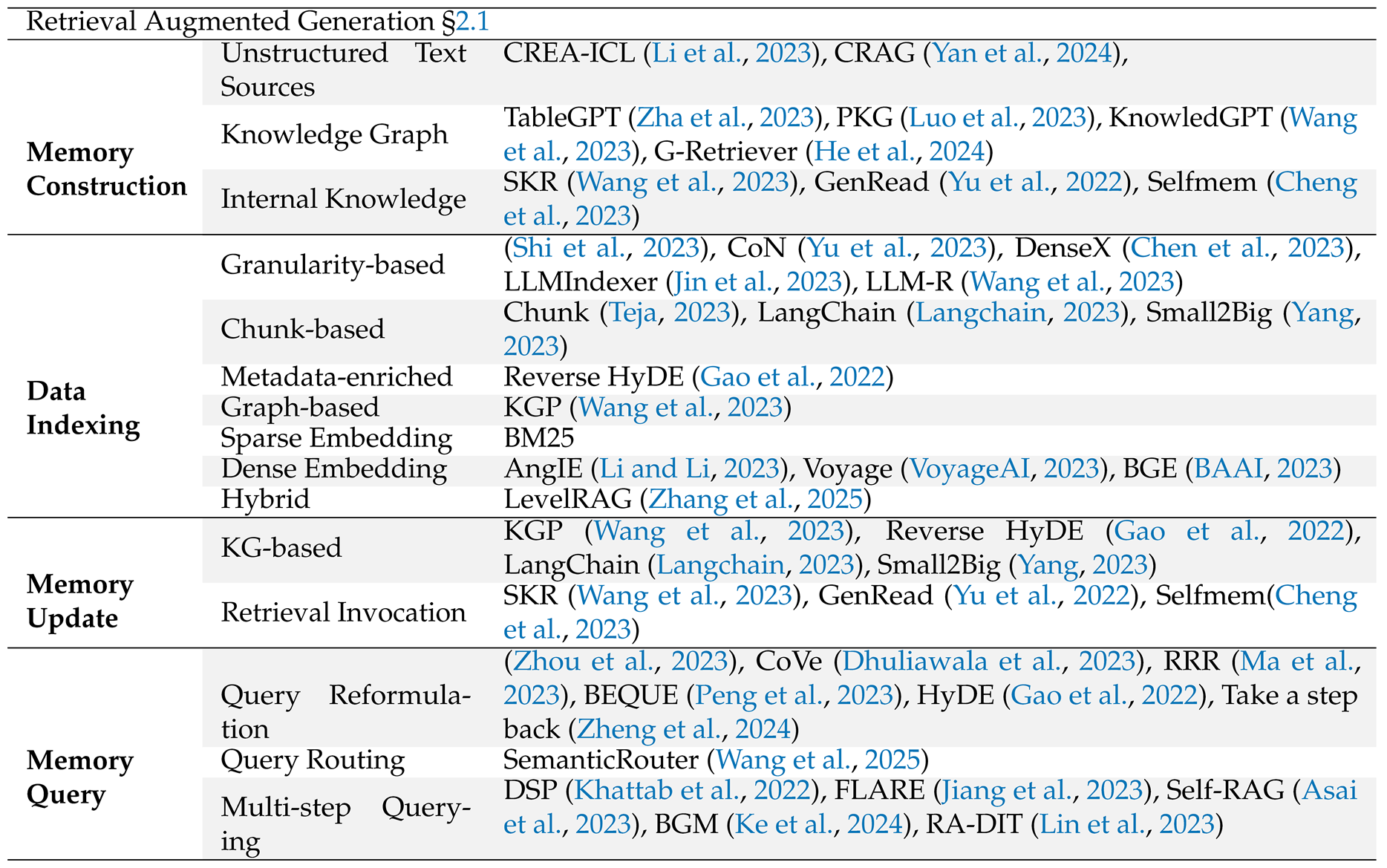

2.1. Retrieval-Augmented Generation (RAG)

Memory Construction.

Memory Update.

Memory Query.

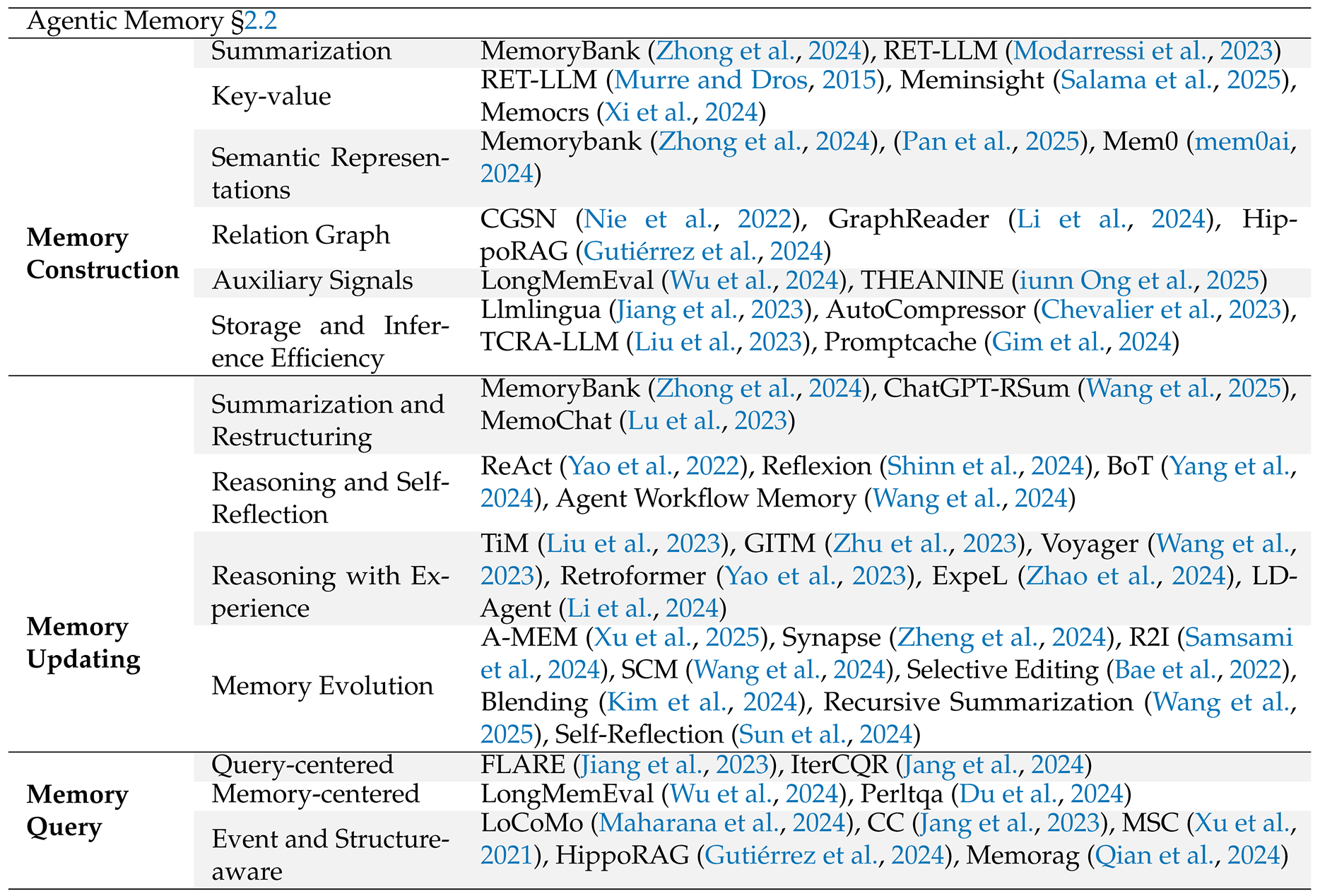

2.2. Agentic Memory

Memory Construction.

Memory Update.

Memory Query.

3. Intermediate Latent as Memory

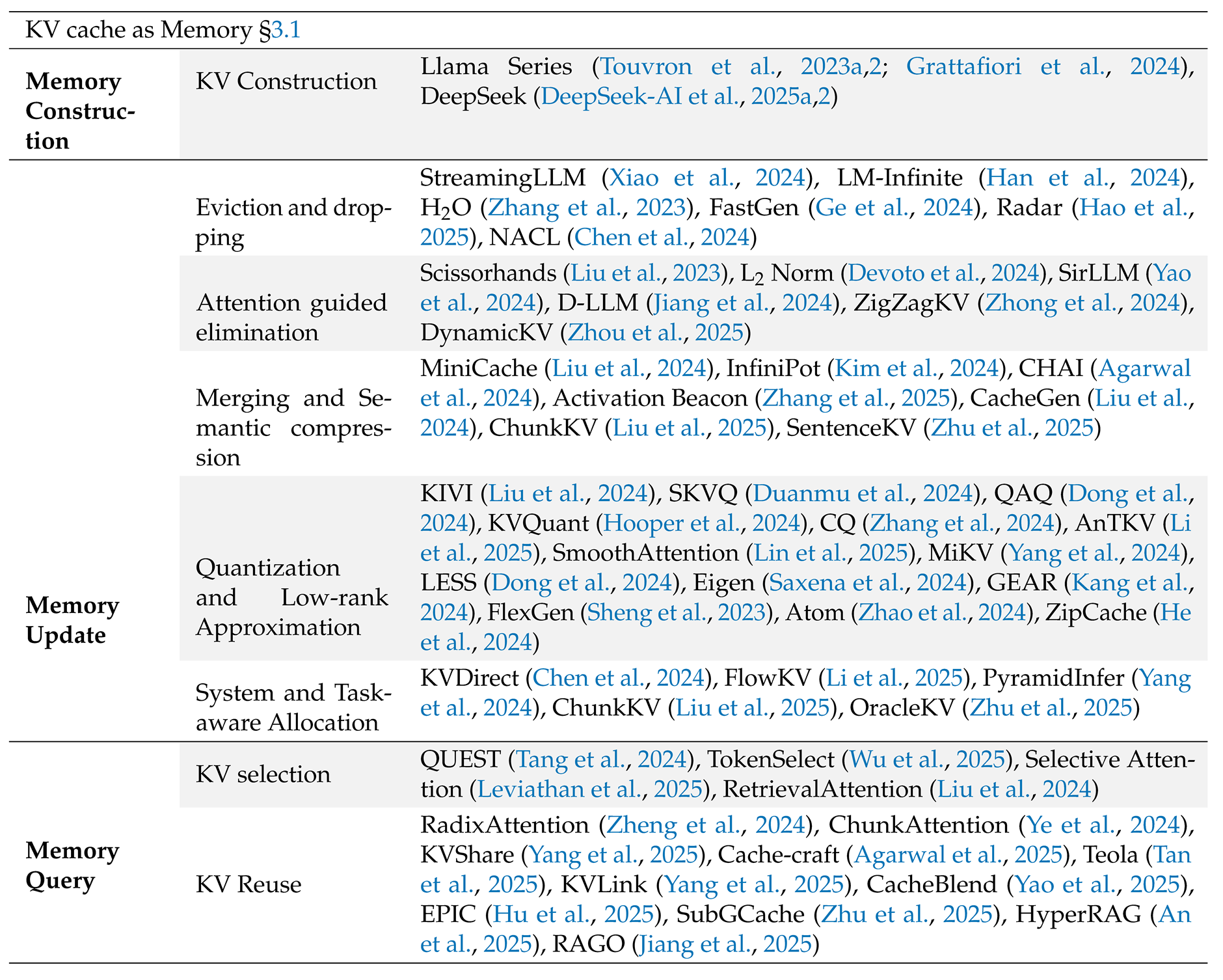

3.1. KV Cache as Memory

Memory Construction.

Memory Update.

Memory Query.

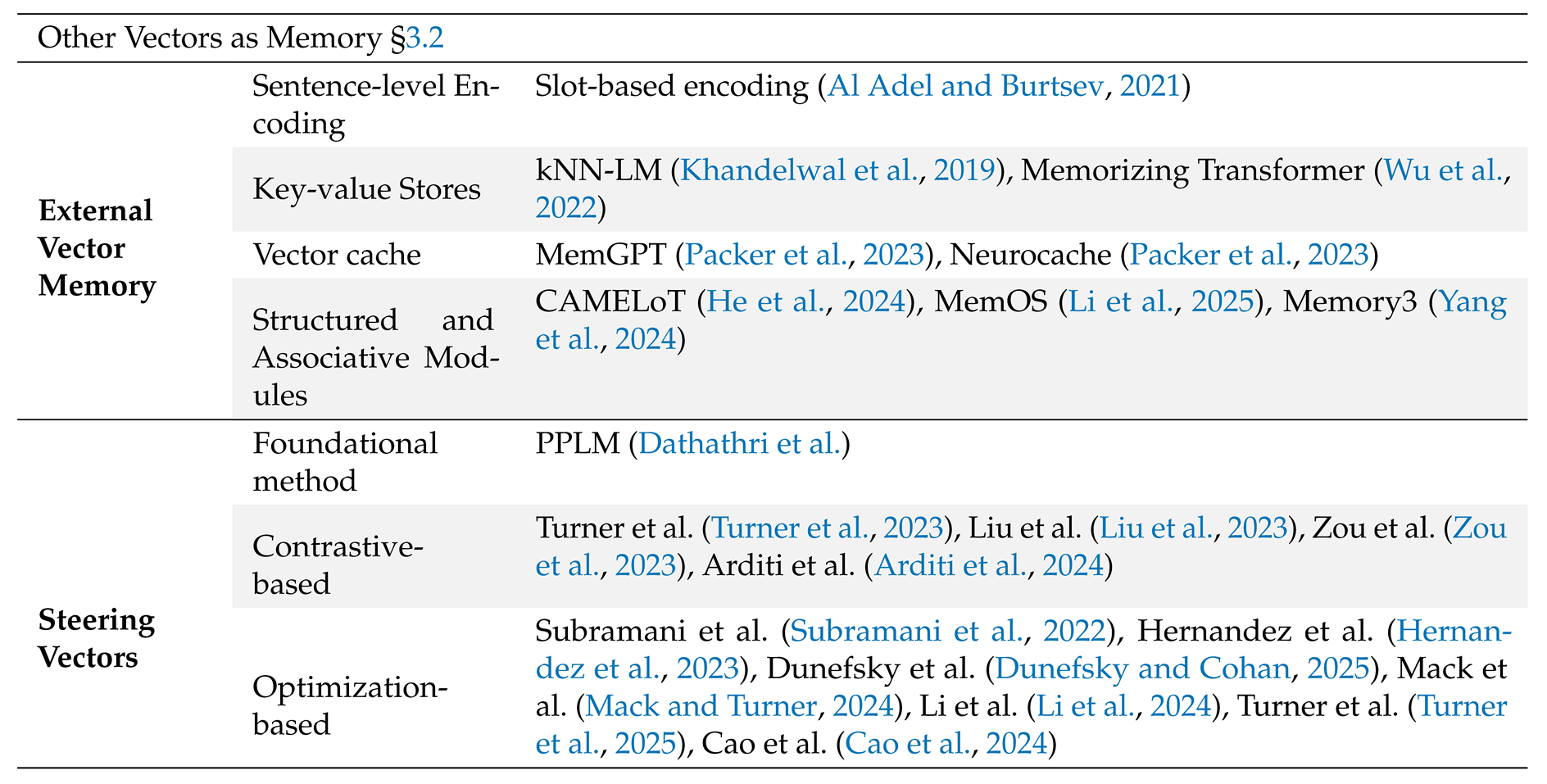

3.2. Other Vectors as Memory

External Vectors.

Steering Vectors.

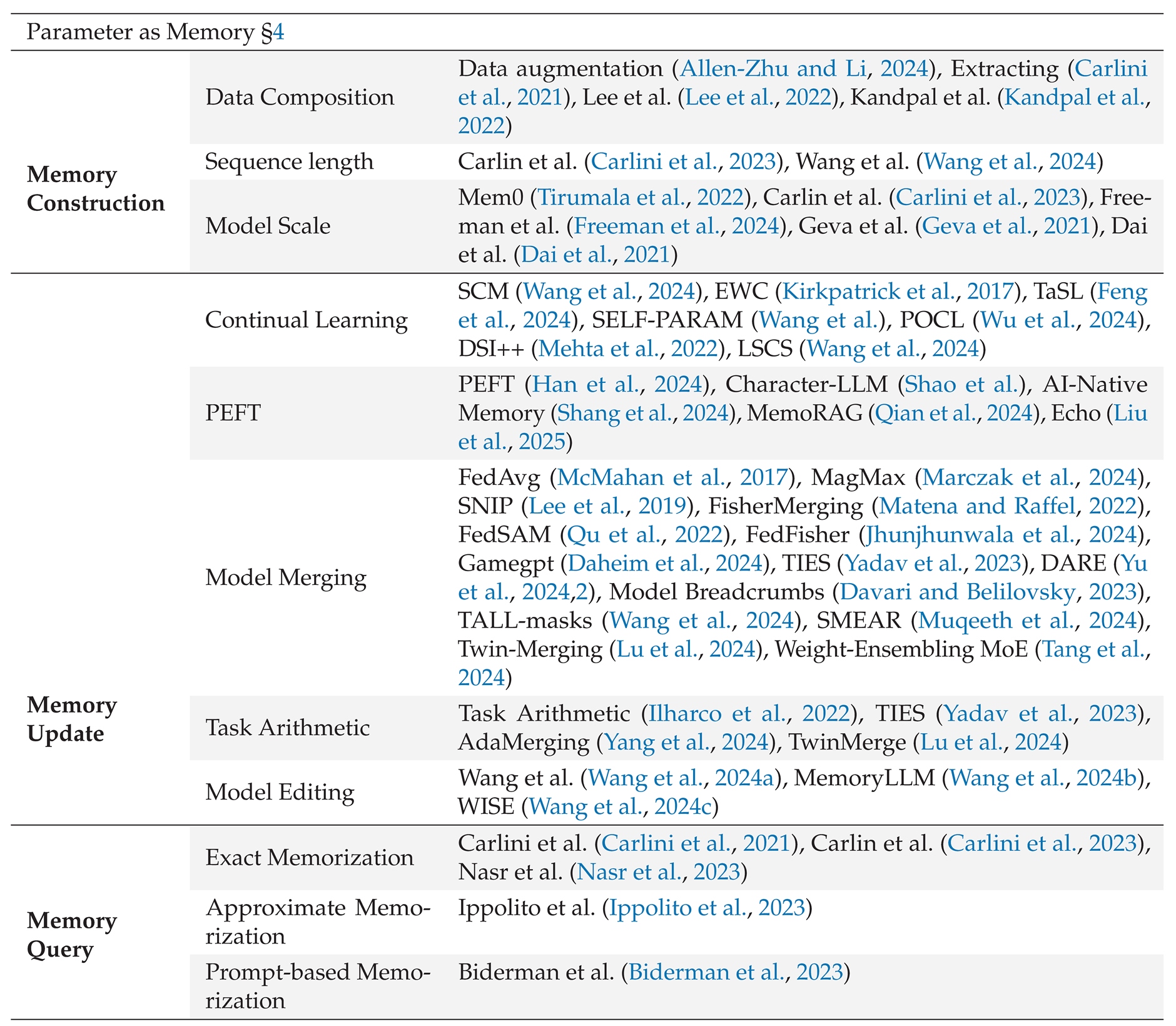

4. Parameter as Memory

Memory Construction.

Memory Update.

Memory Query.

5. Discussion

From Knowledge Requests to Memory Backends.

Unified Interfaces as System Glue.

Future Directions

6. Conclusion

Limitations

Appendix A. Related Surveys

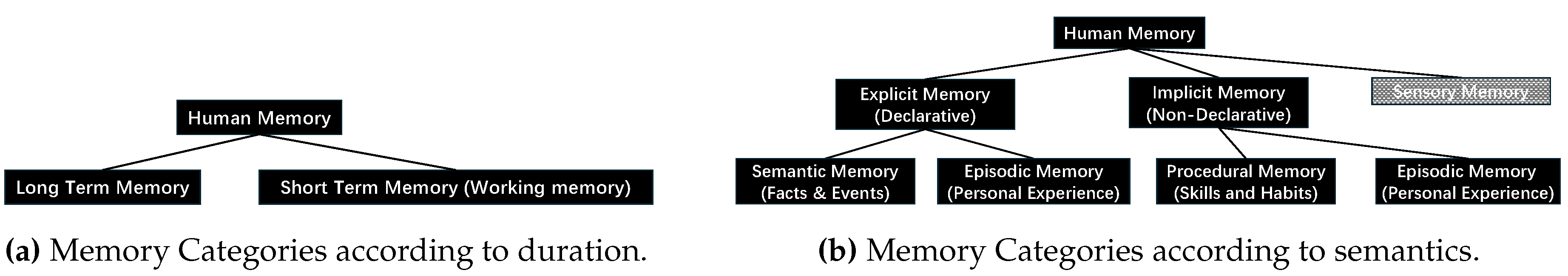

Appendix B. Overview of Human and LLM Memory and Taxonomy

Appendix B.1. Human Memory

| Memory Type | Key Function/Characteristics | Duration/Capacity |
|---|---|---|
| Sensory Memory | Brief buffer for incoming sensory information (visual, auditory, etc.) | Milliseconds to a few seconds |
| Working Memory (WM) | Transient active store for manipulating information; supports complex cognitive operations (reasoning, language) | Tens of seconds to minutes; limited items |
| Short-Term Memory (STM) | Temporary holding of information before transfer to LTM or forgetting | Tens of seconds to minutes; limited items |
| Long-Term Memory (LTM) | Stores information for extended periods; large capacity and durability | Minutes to decades; vast capacity |
| Declarative (Explicit) | Consciously recalled facts and events | Minutes to decades; vast capacity |
| Episodic Memory | Personal experiences, specific events with contextual details | Minutes to decades |
| Semantic Memory | General world knowledge, facts, concepts, language | Minutes to decades |
| Non-Declarative (Implicit) | Unconscious learning: skills, habits, priming, conditioning | Acquired slowly, long-lasting |

Appendix B.2. LLM Memory

| Question: Can you remind me about the trip I mentioned planning to Paris? |
|---|
| Memory (Vector Database): Embedding match - Conversation: "User plans a trip to Paris in September 2025, interested in visiting the Louvre and Eiffel Tower." Vector Database: Enables semantic retrieval by matching the query’s meaning to stored embeddings, useful for broad or vague queries. |
| Memory (Time Index): On July 15, 2025, at 14:30, the user said, "I’m planning a trip to Paris next month and want to see the Louvre." Time Index: Organizes memories chronologically, ideal for queries referencing recent or specific dates. |
| Memory (Username Index): User "JaneDoe123" discussed a Paris trip, mentioning a preference for art museums. Username Index: Ensures personalization by linking memories to a specific user, enhancing relevance. |
| Memory (Event Name Index): Event "Paris Trip 2025": User plans to visit Paris, focusing on cultural landmarks. Event Name Index: Tags memories with specific events, allowing precise retrieval for event-related queries. |
| Memory (Story Index): Story "Jane’s European Adventure": Includes a chapter on planning a Paris trip, with details about booking a hotel near the Seine. Story Index: Structures memories as narratives, preserving context across related interactions. |
| Memory (Place Index): Place "Paris, France": User mentioned visiting the Eiffel Tower and dining at a café in Montmartre. Place Index: Associates memories with locations, enabling spatial queries about specific places. |
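The indexing schemes in the table above can be illustrated with a small in-memory store. This is a sketch under stated assumptions: `MemoryRecord` and `IndexedMemory` are hypothetical names, only the username, event, place, and time indexes are shown, and the vector-database and story indexes are omitted because they need an embedding model or narrative structure that the table does not specify.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class MemoryRecord:
    """One stored memory with the metadata each index keys on."""

    text: str
    user: str
    event: str
    place: str
    timestamp: datetime


class IndexedMemory:
    """Hypothetical store keeping one exact-match index per metadata field."""

    INDEXED_FIELDS = ("user", "event", "place")

    def __init__(self) -> None:
        self.records: list[MemoryRecord] = []
        self.by_key: dict[tuple[str, str], list[MemoryRecord]] = defaultdict(list)

    def add(self, record: MemoryRecord) -> None:
        self.records.append(record)
        for name in self.INDEXED_FIELDS:
            self.by_key[(name, getattr(record, name))].append(record)

    def lookup(self, index: str, value: str) -> list[MemoryRecord]:
        # Exact-match retrieval, e.g. lookup("place", "Paris, France").
        return list(self.by_key.get((index, value), []))

    def between(self, start: datetime, end: datetime) -> list[MemoryRecord]:
        # Time index: records inside [start, end], oldest first.
        hits = (r for r in self.records if start <= r.timestamp <= end)
        return sorted(hits, key=lambda r: r.timestamp)


store = IndexedMemory()
paris = MemoryRecord(
    text="I'm planning a trip to Paris next month and want to see the Louvre.",
    user="JaneDoe123",
    event="Paris Trip 2025",
    place="Paris, France",
    timestamp=datetime(2025, 7, 15, 14, 30),
)
store.add(paris)
july_memories = store.between(datetime(2025, 7, 1), datetime(2025, 7, 31))
```

Keeping several indexes over the same records lets a query such as the Paris question be answered by whichever key it mentions (a date, a user, a place), at the cost of updating every index on each write.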

Appendix B.3. Taxonomy of Memory Implementations

| Implementation Ways | Memory Type | Forgetting Pretrained Knowledge | Memory Scalability | Explainability | Serving Costs |
|---|---|---|---|---|---|
| In-context Learning | Short Term | No | High | High | Low |
| In-context Learning | Long Term | No | Weak | High | Low |
| Parameter by Training | Short Term | Weak | High | Low | Medium |
| Parameter by Training | Long Term | Severe | High | Low | High |

| Implementation Ways | Memory Type | Forgetting Pretrained Knowledge | Explainability | Costs | Knowledge Match Degree |
|---|---|---|---|---|---|
| In-context Learning | Procedural | No | High | Low | Low |
| In-context Learning | Episodic | No | High | Low | High |
| In-context Learning | Semantic | No | High | Low | High |
| Parameter by Training | Procedural | Possible | Low | High | High |
| Parameter by Training | Episodic | Possible | Low | High | Moderate |
| Parameter by Training | Semantic | Possible | Low | High | Moderate |

Appendix B.4. How Human Memory Benefits LLM Agentic Applications

Appendix C. Taxonomy of Different Memory


- Agarwal, S., Acun, B., Hosmer, B., Elhoushi, M., Lee, Y., Venkataraman, S., Papailiopoulos, D., & Wu, C.-J. (2024, 21-27 Jul). CHAI: Clustered Head Attention for Efficient LLM Inference. In R. Salakhutdinov et al. (Eds.), Proceedings of the 41st international conference on machine learning (Vol. 235, pp. 291-312). PMLR. Available online: https://proceedings.mlr.press/v235/agarwal24a.html (accessed on).

- Agarwal, S., Sundaresan, S., Mitra, S., Mahapatra, D., Gupta, A., Sharma, R., Kapu, N. J., Yu, T., & Saini, S. (2025). Cache-craft: Managing chunk-caches for efficient retrieval-augmented generation. Available online: https://arxiv.org/abs/2502.15734 (accessed on).

- Ai, T. (2024). Talkie | ai-native character community. Available online: https://www.talkie-ai.com/ (accessed on).

- Al Adel, A., & Burtsev, M. S. (2021). Memory transformer with hierarchical attention for long document processing. In 2021 international conference engineering and telecommunication (p. 1-7). [CrossRef]

- Allen-Zhu, Z., & Li, Y. (2024). Physics of language models: part 3.1, knowledge storage and extraction. In Proceedings of the 41st international conference on machine learning (pp. 1067-1077).

- An, Y., Cheng, Y., Park, S. J., & Jiang, J. (2025). Hyperrag: Enhancing quality-efficiency tradeoffs in retrieval-augmented generation with reranker kv-cache reuse. Available online: https://arxiv.org/abs/2504.02921 (accessed on).

- Anysphere. (2025). Cursor - the ai code editor. https://www.cursor.com/en.. Available online: https://www.cursor.com (accessed on).

- Arditi, A., Obeso, O., Syed, A., Paleka, D., Panickssery, N., Gurnee, W., & Nanda, N. (2024). Refusal in language models is mediated by a single direction. arXiv.

- Asai, A., Wu, Z., Wang, Y., Sil, A., & Hajishirzi, H. (2023). Self-RAG: Learning to Retrieve, Generate, and Critique through Self-Reflection. arXiv preprint arXiv:2310.11511.

- BAAI. (2023). Flagembedding. https://github.com/FlagOpen/FlagEmbedding.

- Baddeley, A. (2007). Working memory, thought, and action (Vol. 45). OuP Oxford.

- Baddeley, A. D., & Hitch, G. (1974). Working Memory. In G. H. Bower (Ed.), (Vol. 8, p. 47-89). Academic Press. Available online: https://www.sciencedirect.com/science/article/pii/S0079742108604521 (accessed on). https://doi.org/10.1016/S0079-7421(08)60452-1.

- Bae, S., Kwak, D., Kang, S., Lee, M. Y., Kim, S., Jeong, Y., Kim, H., Lee, S.-W., Park, W., & Sung, N. (2022, December). Keep Me Updated! Memory Management in Long-term Conversations. In Y. Goldberg, Z. Kozareva, & Y. Zhang (Eds.), Findings of the association for computational linguistics: Emnlp 2022 (pp. 3769-3787). Abu Dhabi, United Arab Emirates: Association for Computational Linguistics. Available online: https://aclanthology.org/2022.findings-emnlp.276/ (accessed on). https://doi.org/10.18653/v1/2022.findings-emnlp.276.

- Bai, J., Bai, S., Chu, Y., Cui, Z., Dang, K., Deng, X., et al. (2023). Qwen technical report. Available online: https://arxiv.org/abs/2309.16609 (accessed on).

- Begg, I. (1984). Tulving's memory [Review of the book Elements of episodic memory, by E. Tulving . Canadian Journal of Psychology / Revue canadienne de psychologie, 38(1), 144-147.

- Beltagy, I., Peters, M. E., & Cohan, A. (2020). Longformer: The Long-Document Transformer. CoRR, abs/2004.05150. Available online: https://arxiv.org/abs/2004.05150 (accessed on).

- Biderman, S., Prashanth, U. S., Sutawika, L., Schoelkopf, H., Anthony, Q., Purohit, S., & Raff, E. (2023). Emergent and predictable memorization in large language models. Available online: https://arxiv.org/abs/2304.11158 (accessed on).

- Bommasani, R., Hudson, D. A., Adeli, E., Altman, R., Arora, S., von Arx, S., Bernstein, M. S., Bohg, J., Bosselut, A., Brunskill, E., Brynjolfsson, E., Buch, S., Card, D., Castellon, R., Chatterji, N., Chen, A., Creel, K., Davis, J. Q., Demszky, D.,... Liang, P. (2022). On the opportunities and risks of foundation models. Available online: https://arxiv.org/abs/2108.07258 (accessed on).

- Brown, T. B., Mann, B., Ryder, N., Subbiah, M., Kaplan, J., Dhariwal, P., Neelakantan, A., Shyam, P., Sastry, G., Askell, A., Agarwal, S., Herbert-Voss, A., Krueger, G., Henighan, T., Child, R., Ramesh, A., Ziegler, D. M., Wu, J., Winter, C.,... Amodei, D. (2020a). Language models are few-shot learners. In Proceedings of the 34th international conference on neural information processing systems. Red Hook, NY, USA: Curran Associates Inc.

- Brown, T. B., Mann, B., Ryder, N., Subbiah, M., Kaplan, J., Dhariwal, P., Neelakantan, A., Shyam, P., Sastry, G., Askell, A., Agarwal, S., Herbert-Voss, A., Krueger, G., Henighan, T., Child, R., Ramesh, A., Ziegler, D. M., Wu, J., Winter, C.,... Amodei, D. (2020b). Language models are few-shot learners. Available online: https://arxiv.org/abs/2005.14165 (accessed on).

- Budson, A. E., & Kensinger, E. A. (2023). Why we forget and how to remember better: the science behind memory. Oxford University Press.

- Cao, Y., Zhang, T., Cao, B., Yin, Z., Lin, L., Ma, F., & Chen, J. (2024). Personalized steering of large language models: Versatile steering vectors through bi-directional preference optimization. arXiv.

- Carlini, N., Ippolito, D., Jagielski, M., Lee, K., Tramer, F., & Zhang, C. (2023). Quantifying memorization across neural language models. Available online: https://arxiv.org/abs/2202.07646 (accessed on).

- Carlini, N., Tramer, F., Wallace, E., Jagielski, M., Herbert-Voss, A., Lee, K., Roberts, A., Brown, T., Song, D., Erlingsson, U., Oprea, A., & Raffel, C. (2021). Extracting training data from large language models. Available online: https://arxiv.org/abs/2012.07805 (accessed on).

- Character AI. (2023). Character ai. Retrieved September 14, 2023 from https://character.ai/. Available online: https://character.ai/ (accessed on).

- Chen, D., Wang, H., Huo, Y., Li, Y., & Zhang, H. (2023). Gamegpt: Multi-agent collaborative framework for game development. arXiv preprint arXiv:2310.08067.

- Chen, J., Xiao, S., Zhang, P., Luo, K., Lian, D., & Liu, Z. (2024). BGE M3-Embedding: Multi-Lingual, Multi-Functionality, Multi-Granularity Text Embeddings Through Self-Knowledge Distillation. CoRR, abs/2402.03216. Available online: https://doi.org/10.48550/arXiv.2402.03216 (accessed on) https://doi.org/10.48550/ARXIV.2402.03216.

- Chen, K., Li, J., Wang, K., Du, Y., Yu, J., Lu, J., Li, L., Qiu, J., Pan, J., Huang, Y., Fang, Q., Heng, P. A., & Chen, G. (2024). Chemist-x: Large language model-empowered agent for reaction condition recommendation in chemical synthesis.

- Chen, S., Jiang, R., Yu, D., Xu, J., Chao, M., Meng, F., Jiang, C., Xu, W., & Liu, H. (2024). Kvdirect: Distributed disaggregated llm inference. Available online: https://arxiv.org/abs/2501.14743 (accessed on).

- Chen, T., Wang, H., Chen, S., Yu, W., Ma, K., Zhao, X., Yu, D., & Zhang, H. (2023). Dense X Retrieval: What Retrieval Granularity Should We Use? arXiv preprint arXiv:2312.06648.

- Chen, Y., Wang, G., Shang, J., Cui, S., Zhang, Z., Liu, T., Wang, S., Sun, Y., Yu, D., & Wu, H. (2024, August). NACL: A General and Effective KV Cache Eviction Framework for LLM at Inference Time. In L.-W. Ku, A. Martins, & V. Srikumar (Eds.), Proceedings of the 62nd annual meeting of the association for computational linguistics (volume 1: Long papers) (pp. 7913-7926). Bangkok, Thailand: Association for Computational Linguistics. Available online: https://aclanthology.org/2024.acl-long.428/ (accessed on). https://doi.org/10.18653/v1/2024.acl-long.428.

- Chen, Z.-Y., Xie, F.-K., Wan, M., Yuan, Y., Liu, M., Wang, Z.-G., Meng, S., & Wang, Y.-G. (2023). Matchat: A large language model and application service platform for materials science. Chinese Physics B, 32(11), 118104.

- Cheng, X., Luo, D., Chen, X., Liu, L., Zhao, D., & Yan, R. (2023). Lift Yourself Up: Retrieval-augmented Text Generation with Self Memory. arXiv preprint arXiv:2305.02437.

- Cheng, Y., Zhang, C., Zhang, Z., Meng, X., Hong, S., Li, W., Wang, Z., Wang, Z., Yin, F., Zhao, J., & et al. (2024). Exploring large language model based intelligent agents: Definitions, methods, and prospects. arXiv.

- Chevalier, A., Wettig, A., Ajith, A., & Chen, D. (2023, December). Adapting Language Models to Compress Contexts. In H. Bouamor, J. Pino, & K. Bali (Eds.), Proceedings of the 2023 conference on empirical methods in natural language processing (pp. 3829-3846). Singapore: Association for Computational Linguistics. Available online: https://aclanthology.org/2023.emnlp-main.232/ (accessed on). https://doi.org/10.18653/v1/2023.emnlp-main.232.

- Chugtai, B., & Bushnaq, L. (2025). Activation space interpretability may be doomed. Available online: https://www.lesswrong.com/posts/gYfpPbww3wQRaxAFD (accessed on).

- Craik, F. I., & Lockhart, R. S. (1972). Levels of processing: A framework for memory research. Journal of verbal learning and verbal behavior, 11(6), 671-684.

- Cui, C., Ma, Y., Cao, X., Ye, W., Zhou, Y., Liang, K., Chen, J., Lu, J., Yang, Z., Liao, K.-D., & et al. (2024). A survey on multimodal large language models for autonomous driving. In Proceedings of the ieee/cof winter conference on applications of computer vision (pp. 958-979).

- Daheim, N., Möllenhoff, T., Ponti, E., Gurevych, I., & Khan, M. E. (2024). Model Merging by Uncertainty-Based Gradient Matching. In Iclr.

- Dai, D., Dong, L., Hao, Y., Sui, Z., Chang, B., & Wei, F. (2021). Knowledge neurons in pretrained transformers. arXiv.

- Dai, D., Sun, Y., Dong, L., Hao, Y., Ma, S., Sui, Z., & Wei, F. (2023). Why can GPT learn in-context? language models secretly perform gradient descent as meta-optimizers. In Findings of the association for computational linguistics: Acl 2023 (pp. 4005-4019). Toronto, Canada: Association for Computational Linguistics. Available online: https://doi.org/10.18653/v1/2023.findings-acl.247 (accessed on).

- Dao, T., Fu, D. Y., Ermon, S., Rudra, A., & Ré, C. (2022). FLASHATTENTION: fast and memory-efficient exact attention with IO-awareness. In Proceedings of the 36th international conference on neural information processing systems. Red Hook, NY, USA: Curran Associates Inc.

- Dathathri, S., Madotto, A., Lan, J., Hung, J., Frank, E., Molino, P., Yosinski, J., & Liu, R. (n.d.). Plug and Play Language Models: A Simple Approach to Controlled Text Generation. In International conference on learning representations.

- Davari, M., & Belilovsky, E. (2023). Model breadcrumbs: Scaling multi-task model merging with sparse masks. arXiv preprint arXiv:2312.06795.

- DeepSeek-AI, Guo, D., Yang, D., Zhang, H., Song, J., et al. (2025). Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning. Available online: https://arxiv.org/abs/2501.12948 (accessed on).

- DeepSeek-Al, Liu, A., Feng, B., Xue, B., Wang, B., Wu, B., et al. (2025). Deepseek-v3 technical report. Available online: https://arxiv.org/abs/2412.19437 (accessed on).

- Devoto, A., Zhao, Y., Scardapane, S., & Minervini, P. (2024, November). A Simple and Effective L_2 Norm- Based Strategy for KV Cache Compression. In Proceedings of the 2024 conference on empirical methods in natural language processing (pp. 18476-18499). Miami, Florida, USA: Association for Computational Linguistics. Available online: https://aclanthology.org/2024.emnlp-main.1027/ (accessed on). https://doi.org/10.18653/v1/2024.emnlp-main.1027.

- Dhuliawala, S., Komeili, M., Xu, J., Raileanu, R., Li, X., Celikyilmaz, A., & Weston, J. (2023). Chain-of-verification reduces hallucination in large language models. arXiv preprint arXiv:2309.11495.

- Di, S., Yu, Z., Zhang, G., Li, H., TaoZhong, Cheng, H., Li, B., He, W., Shu, F., & Jiang, H. (2025). Streaming Video Question-Answering with In-context Video KV-Cache Retrieval. In The thirteenth international conference on learning representations. Available online: https://openreview.net/forum?id=8g9fs6mdEG (accessed on).

- Dong, H., Yang, X., Zhang, Z., Wang, Z., Chi, Y., & Chen, B. (2024, 21-27 Jul). Get More with LESS: Synthesizing Recurrence with KV Cache Compression for Efficient LLM Inference. In R. Salakhutdinov et al. (Eds.), Proceedings of the 41st international conference on machine learning (Vol. 235, pp. 11437-11452). PMLR. Available online: https://proceedings.mlr.press/v235/dong24f.html (accessed on).

- Dong, S., Cheng, W., Qin, J., & Wang, W. (2024). Qaq: Quality adaptive quantization for Ilm ku cache. Available online: https://arxiv.org/abs/2403.04643 (accessed on).

- Du, Y., Huang, W., Zheng, D., Wang, Z., Montella, S., Lapata, M., Wong, K.-F., & Pan, J. Z. (2025). Rethinking memory in ai: Taxonomy, operations, topics, and future directions. Available online: https://arxiv.org/abs/2505.00675 (accessed on).

- Du, Y., Wang, H., Zhao, Z., Liang, B., Wang, B., Zhong, W., Wang, Z., & Wong, K.-F. (2024). Perltqa: A personal long-term memory dataset for memory classification, retrieval, and synthesis in question answering. Available online: https://arxiv.org/abs/2402.16288 (accessed on).

- Duanmu, H., Yuan, Z., Li, X., Duan, J., Zhang, X., & Lin, D. (2024). SKVQ: Sliding-window key and value cache quantization for large language models. Available online: https://arxiv.org/abs/2405.06219 (accessed on).

- Dunefsky, J., & Cohan, A. (2025). Investigating generalization of one-shot LLM steering vectors. arXiv preprint arXiv:2502.18862.

- Durante, Z., Huang, Q., Wake, N., Gong, R., Park, J. S., Sarkar, B., Taori, R., Noda, Y., Terzopoulos, D., Choi, Y., & et al. (2024). Agent ai: Surveying the horizons of multimodal interaction. arXiv.

- Fan, A., Gokkaya, B., Harman, M., Lyubarskiy, M., Sengupta, S., Yoo, S., & Zhang, J. M. (2023). Large language models for software engineering: Survey and open problems. arXiv.

- Fan, Y., Sun, H., Xue, K., Zhang, X., Zhang, S., & Ruan, T. (2024). MedOdyssey: A Medical Domain Benchmark for Long Context Evaluation Up to 200K Tokens. CoRR, abs/2406.15019. Available online: https://doi.org/10.48550/arXiv.2406.15019 (accessed on) https://doi.org/10.48550/ARXIV.2406.15019.

- Feng, Y., Chu, X., Xu, Y., Shi, G., Liu, B., & Wu, X.-M. (2024, August). TaSL: Continual Dialog State Tracking via Task Skill Localization and Consolidation. In L.-W. Ku, A. Martins, & V. Srikumar (Eds.), Proceedings of the 62nd annual meeting of the association for computational linguistics (volume 1: Long papers) (pp. 1266-1279). Bangkok, Thailand: Association for Computational Linguistics. Available online: https://aclanthology.org/2024.acl-long.69/ (accessed on). https://doi.org/10.18653/v1/2024.acl-long.69.

- Freeman, J., Rippe, C., Debenedetti, E., & Andriushchenko, M. (2024). Exploring memorization and copyright violation in frontier LLMs: A study of the new york times v. openai 2023 lawsuit. Available online: https://arxiv.org/abs/2412.06370 (accessed on).

- Fu, Y., Xue, L., Huang, Y., Brabete, A.-O., Ustiugov, D., Patel, Y., & Mai, L. (2024). ServerlessLLM: low-latency serverless inference for large language models. In Proceedings of the 18th usenix conference on operating systems design and implementation. USA: USENIX Association.

- Gao, C., Lan, X., Lu, Z., Mao, J., Piao, J., Wang, H., Jin, D., & Li, Y. (2023). S3: Social-network simulation system with large language model-empowered agents. arxiv.

- Gao, L., Ma, X., Lin, J., & Callan, J. (2022). Precise zero-shot dense retrieval without relevance labels. arXiv preprint arXiv:2212.10496.

- Gao, M., Lu, T., Yu, K., Byerly, A., & Khashabi, D. (2024, November). Insights into LLM Long-Context Failures: When Transformers Know but Don't Tell. In Y. Al-Onaizan, M. Bansal, & Y.-N. Chen (Eds.), Findings of the association for computational linguistics: Emnlp 2024. Miami, Florida, USA: Association for Computational Linguistics.

- Gao, S., Chen, X., Li, P., Ren, Z., Bing, L., Zhao, D., & Yan, R. (2019). Abstractive Text Summarization by Incorporating Reader Comments. In The thirty-third AAAI conference on artificial intelligence, AAAI 2019, the thirty-first innovative applications of artificial intelligence conference, IAAI 2019, the ninth AAAI symposium on educational advances in artificial intelligence, EAAI 2019, honolulu, hawaii, usa, january 27-february 1, 2019 (pp. 6399-6406). AAAI Press. Available online: https://doi.org/10.1609/aaai.v33101.33016399 (accessed on). https://doi.org/10.1609/AAALV33101.33016399.

- Garg, S., Tsipras, D., Liang, P. S., & Valiant, G. (2022). What can transformers learn in-context? A case study of simple function classes. In Advances in neural information processing systems (Vol. 35, pp. 30583-30598).

- Ge, S., Zhang, Y., Liu, L., Zhang, M., Han, J., & Gao, J. (2024). Model Tells You What to Discard: Adaptive KV Cache Compression for LLMs. In The twelfth international conference on learning representations. Available online: https://openreview.net/forum?id=uNrFpDPMyo (accessed on).

- Ge, Y., Ren, Y., Hua, W., Xu, S., Tan, J., & Zhang, Y. (2023). Llm as os (llmao), agents as apps: Envisioning aios, agents and the aios-agent ecosystem. arXiv.

- Geva, M., Schuster, R., Berant, J., & Levy, O. (2021). Transformer feed-forward layers are key-value memories. arXiv.

- Gim, I., Chen, G., Lee, S.-s., Sarda, N., Khandelwal, A., & Zhong, L. (2024). Prompt cache: Modular attention reuse for low-latency inference. Proceedings of Machine Learning and Systems, 6, 325-338.

- GitHub. (2022). Github copilot. Available online: https://github.com/copilot (accessed on).

- Grattafiori, A., Dubey, A., Jauhri, A., Pandey, A., Kadian, A., et al. (2024). The llama 3 herd of models. Available online: https://arxiv.org/abs/2407.21783 (accessed on).

- Günther, M., Ong, J., Mohr, I., Abdessalem, A., Abel, T., Akram, M. K., Guzman, S., Mastrapas, G., Sturua, S., Wang, B., Werk, M., Wang, N., & Xiao, H. (2023). Jina Embeddings 2: 8192-Token General-Purpose Text Embeddings for Long Documents. CoRR, abs/2310.19923. Available online: https://doi.org/10.48550/arXiv.2310.19923 (accessed on). https://doi.org/10.48550/ARXIV.2310.19923.

- Guo, M., Ainslie, J., Uthus, D. C., Ontañón, S., Ni, J., Sung, Y., & Yang, Y. (2022). LongT5: Efficient Text-To- Text Transformer for Long Sequences. In M. Carpuat, M. de Marneffe, & I. V. M. Ruíz (Eds.), Findings of the association for computational linguistics: NAACL 2022, seattle, wa, united states, july 10-15, 2022 (pp. 724-736). Association for Computational Linguistics. Available online: https://doi.org/10.18653/v1/2022.findings-naacl.55 (accessed on). https://doi.org/10.18653/V1/2022.FINDINGS-NAACL.55.

- Guo, T., Chen, X., Wang, Y., Chang, R., Pei, S., Chawla, N. V., Wiest, O., & Zhang, X. (2024). Large language model based multi-agents: A survey of progress and challenges. arXiv.

- Gutiérrez, B. J., Shu, Y., Gu, Y., Yasunaga, M., & Su, Y. (2024). Hipporag: Neurobiologically inspired long-term memory for large language models. In The thirty-eighth annual conference on neural information processing systems.

- Hahn, M., & Goyal, N. (2023). A theory of emergent in-context learning as implicit structure induction. arxiv, arXiv:2303.07971. Available online: https://arxiv.org/abs/2303.07971 (accessed on).

- Han, C., Wang, Q., Peng, H., Xiong, W., Chen, Y., Ji, H., & Wang, S. (2024, June). LM-Infinite: Zero-Shot Extreme Length Generalization for Large Language Models. In K. Duh, H. Gomez, & S. Bethard (Eds.), Proceedings of the 2024 conference of the north american chapter of the association for computational linguistics: Human language technologies (volume 1: Long papers) (pp. 3991-4008). Mexico City, Mexico: Association for Computational Linguistics. Available online: https://aclanthology.org/2024.naacl-long.222/ (accessed on). https://doi.org/10.18653/v1/2024.naacl-long.222.

- Han, Z., Gao, C., Liu, J., Zhang, J., & Zhang, S. Q. (2024). Parameter-efficient fine-tuning for large models: A comprehensive survey. arXiv preprint arXiv:2403.14608.

- Hao, Y., Zhai, M., Hajimirsadeghi, H., Hosseini, S., & Tung, F. (2025). Radar: Fast Long-Context Decoding for Any Transformer. In The thirteenth international conference on learning representations. Available online: https://openreview.net/forum?id=ZTpWOwMrzQ (accessed on).

- Hatalis, K., Christou, D., Myers, J., Jones, S., Lambert, K., Amos-Binks, A., Dannenhauer, Z., & Dannenhauer, D. (2024). Memory Matters: The Need to Improve Long-Term Memory in LLM-Agents. Proceedings of the AAAI Symposium Series, 2.

- He, K., Mao, R., Lin, Q., Ruan, Y., Lan, X., Feng, M., & Cambria, E. (2023). A survey of large language models for healthcare: from data, technology, and applications to accountability and ethics. arXiv.

- He, T., Fu, G., Yu, Y., Wang, F., Li, J., Zhao, Q., Song, C., Qi, H., Luo, D., Zou, H., & et al. (2023). Towards a psychological generalist ai: A survey of current applications of large language models and future prospects. arXiv.

- He, X., Tian, Y., Sun, Y., Chawla, N. V., Laurent, T., LeCun, Y., Bresson, X., & Hooi, B. (2024). G-Retriever: Retrieval-Augmented Generation for Textual Graph Understanding and Question Answering. arXiv preprint arXiv:2402.07630.

- He, Y., Zhang, L., Wu, W., Liu, J., Zhou, H., & Zhuang, B. (2024). ZipCache: Accurate and Efficient KV Cache Quan- tization with Salient Token Identification. In A. Globerson et al. (Eds.), Advances in neural information processing systems (Vol. 37, pp. 68287-68307). Curran Associates, Inc. Available online: https://proceedings.neurips.cc/paper_files/paper/2024/file/7e57131fdeb815764434b65162c88895-Paper-Conference.pdf (accessed on).

- He, Z., Karlinsky, L., Kim, D., McAuley, J., Krotov, D., & Feris, R. (2024). Camelot: Towards large language models with training-free consolidated associative memory. arXiv preprint arXiv:2402.13449.

- He, Z., Lin, W., Zheng, H., Zhang, F., Jones, M. W., Aitchison, L., Xu, X., Liu, M., Kristensson, P. O., & Shen, J. (2024). Human-inspired Perspectives: A Survey on Al Long-term Memory. arXiv preprint arXiv:2411.00489. Available online: https://arxiv.org/abs/2411.00489 (accessed on).

- Hernandez, E., Li, B. Z., & Andreas, J. (2023). Inspecting and editing knowledge representations in language models. arXiv.

- Herold, C., & Ney, H. (2023). Improving Long Context Document-Level Machine Translation. CoRR, abs/2306.05183. Available online: https://doi.org/10.48550/arXiv.2306.05183 (accessed on) https://doi.org/10.48550/ARXIV.2306.05183.

- Hilgert, L., Liu, D., & Niehues, J. (2024, November). Evaluating and Training Long-Context Large Language Models for Question Answering on Scientific Papers. In S. Kumar et al. (Eds.), Proceedings of the 1st workshop on customizable nlp: Progress and challenges in customizing nlp for a domain, application, group, or individual (customnlp4u) (pp. 220-236). Miami, Florida, USA: Association for Computational Linguistics. Available online: https://aclanthology.org/2024.customnlp4u-1.17/ (accessed on). https://doi.org/10.18653/v1/2024.customnlp4u-1.17.

- Hooper, C., Kim, S., Mohammadzadeh, H., Mahoney, M. W., Shao, S., Keutzer, K., & Gholami, A. (2024). KVQuant: Towards 10 million context length LLM inference with KV cache quantization. Advances in Neural Information Processing Systems, NeurIPS 2024, 37, 1270-1303.

- Hu, E. J., Shen, Y., Wallis, P., Allen-Zhu, Z., Li, Y., Wang, S., Wang, L., & Chen, W. (2021). Lora: Low-rank adaptation of large language models. Available online: https://arxiv.org/abs/2106.09685 (accessed on).

- Hu, J., Huang, W., Wang, W., Wang, H., Hu, T., Zhang, Q., Feng, H., Chen, X., Shan, Y., & Xie, T. (2025). Epic: Efficient position-independent caching for serving large language models. Available online: https://arxiv.org/abs/2410.15332 (accessed on).

- Hua, W., Fan, L., Li, L., Mei, K., Ji, J., Ge, Y., Hemphill, L., & Zhang, Y. (2023). War and peace (waragent): Large language model-based multi-agent simulation of world wars. arXiv preprint arXiv:2311.17227.

- Huang, C., Liu, Q., Lin, B. Y., Pang, T., Du, C., & Lin, M. (2023, 07). LoraHub: Efficient Cross-Task Generalization via Dynamic LoRA Composition. Available online: https://arxiv.org/pdf/2307.13269.pdf (accessed on).

- Hooper, C., Kim, S., Mohammadzadeh, H., Mahoney, M. W., Shao, S., Keutzer, K., & Gholami, A. (2024). KVQuant: Towards 10 million context length LLM inference with KV cache quantization. Advances in Neural Information Processing Systems, NeurIPS 2024, 37, 1270-1303.

- Hu, E. J., Shen, Y., Wallis, P., Allen-Zhu, Z., Li, Y., Wang, S., Wang, L., & Chen, W. (2021). Lora: Low-rank adaptation of large language models. Available online: https://arxiv.org/abs/2106.09685 (accessed on).

- Hu, J., Huang, W., Wang, W., Wang, H., Hu, T., Zhang, Q., Feng, H., Chen, X., Shan, Y., & Xie, T. (2025). Epic: Efficient position-independent caching for serving large language models. Available online: https://arxiv.org/abs/2410.15332 (accessed on).

- Hua, W., Fan, L., Li, L., Mei, K., Ji, J., Ge, Y., Hemphill, L., & Zhang, Y. (2023). War and peace (waragent): Large language model-based multi-agent simulation of world wars. arXiv preprint arXiv:2311.17227.

- Huang, C., Liu, Q., Lin, B. Y., Pang, T., Du, C., & Lin, M. (2023, 07). LoraHub: Efficient Cross-Task Generalization via Dynamic LoRA Composition. Available online: https://arxiv.org/pdf/2307.13269.pdf (accessed on).

- Huang, X., Liu, W., Chen, X., Wang, X., Wang, H., Lian, D., Wang, Y., Tang, R., & Chen, E. (2024). Understanding the planning of llm agents: A survey. arXiv.

- Huang, Y., Xu, J., Lai, J., Jiang, Z., Chen, T., Li, Z., Yao, Y., Ma, X., Yang, L., Chen, H., et al. (2023). Advancing transformer architecture in long-context large language models: A comprehensive survey. arXiv preprint arXiv:2311.12351.

- Hui, B., Yang, J., Cui, Z., Yang, J., Liu, D., Zhang, L., Liu, T., Zhang, J., Yu, B., Dang, K., Yang, A., Men, R., Huang, F., Ren, X., Ren, X., Zhou, J., & Lin, J. (2024). Qwen2.5-Coder Technical Report. CoRR, abs/2409.12186. Available online: https://doi.org/10.48550/arXiv.2409.12186 (accessed on).

- Ilharco, G., Ribeiro, M. T., Wortsman, M., Gururangan, S., Schmidt, L., Hajishirzi, H., & Farhadi, A. (2022, 12). Editing Models with Task Arithmetic. Available online: https://arxiv.org/pdf/2212.04089.pdf (accessed on).

- Inflection. (2023). I’m pi, your personal ai. https://inflection.ai/. Available online: https://inflection.ai/ (accessed on).

- Ippolito, D., Tramer, F., Nasr, M., Zhang, C., Jagielski, M., Lee, K., Choquette Choo, C., & Carlini, N. (2023, September). Preventing Generation of Verbatim Memorization in Language Models Gives a False Sense of Privacy. In C. M. Keet, H.-Y. Lee, & S. Zarrieß (Eds.), Proceedings of the 16th international natural language generation conference (pp. 28–53). Prague, Czechia: Association for Computational Linguistics.

- iunn Ong, K. T., Kim, N., Gwak, M., Chae, H., Kwon, T., Jo, Y., won Hwang, S., Lee, D., & Yeo, J. (2025). Towards Lifelong Dialogue Agents via Timeline-based Memory Management. In Proceedings of the 2025 conference of the north american chapter of the association for computational linguistics: Human language technologies. Mexico City, Mexico: Association for Computational Linguistics.

- Izacard, G., Lewis, P., Lomeli, M., Hosseini, L., Petroni, F., Schick, T., Dwivedi-Yu, J., Joulin, A., Riedel, S., & Grave, E. (2023, January). Atlas: few-shot learning with retrieval augmented language models. J. Mach. Learn. Res., 24(1).

- Jang, J., Boo, M., & Kim, H. (2023). Conversation chronicles: Towards diverse temporal and relational dynamics in multi-session conversations. arXiv preprint arXiv:2310.13420.

- Jang, Y., Lee, K.-i., Bae, H., Lee, H., & Jung, K. (2024, June). IterCQR: Iterative Conversational Query Reformulation with Retrieval Guidance. In K. Duh, H. Gomez, & S. Bethard (Eds.), Proceedings of the 2024 conference of the north american chapter of the association for computational linguistics: Human language technologies (volume 1: Long papers) (pp. 8121–8138).

- Jhunjhunwala, D., Wang, S., & Joshi, G. (2024). FedFisher: Leveraging Fisher Information for One-Shot Federated Learning. In Aistats (pp. 1612–1620).

- Jiang, H., Wu, Q., Lin, C.-Y., Yang, Y., & Qiu, L. (2023, December). LLMLingua: Compressing Prompts for Accelerated Inference of Large Language Models. In H. Bouamor, J. Pino, & K. Bali (Eds.), Proceedings of the 2023 conference on empirical methods in natural language processing (pp. 13358–13376).

- Jiang, W., Subramanian, S., Graves, C., Alonso, G., Yazdanbakhsh, A., & Dadu, V. (2025). Rago: Systematic performance optimization for retrieval-augmented generation serving. Available online: https://arxiv.org/abs/2503.14649 (accessed on).

- Jiang, X., Li, F., Zhao, H., Wang, J., Shao, J., Xu, S., Zhang, S., Chen, W., Tang, X., Chen, Y., et al. (2024). Long term memory: The foundation of ai self-evolution. arXiv.

- Jiang, Y., Wang, H., Xie, L., Zhao, H., Zhang, C., Qian, H., & Lui, J. C. (2024). D-LLM: A Token Adaptive Computing Resource Allocation Strategy for Large Language Models. In A. Globerson et al. (Eds.), Advances in neural information processing systems (Vol. 37, pp. 1725–1749).

- Jiang, Z., Xu, F., Gao, L., Sun, Z., Liu, Q., Dwivedi-Yu, J., Yang, Y., Callan, J., & Neubig, G. (2023b, December). Active Retrieval Augmented Generation. In H. Bouamor, J. Pino, & K. Bali (Eds.), Proceedings of the 2023 conference on empirical methods in natural language processing (pp. 7969–7992).

- Jiang, Z., Xu, F. F., Gao, L., Sun, Z., Liu, Q., Dwivedi-Yu, J., Yang, Y., Callan, J., & Neubig, G. (2023a). Active retrieval augmented generation. arXiv preprint arXiv:2305.06983.

- Jin, B., Yoon, J., Han, J., & Arik, S. Ö. (2024). Long-Context LLMs Meet RAG: Overcoming Challenges for Long Inputs in RAG. CoRR, abs/2410.05983. Available online: https://doi.org/10.48550/arXiv.2410.05983 (accessed on).

- Jin, B., Zeng, H., Wang, G., Chen, X., Wei, T., Li, R., Wang, Z., Li, Z., Li, Y., Lu, H., et al. (2023). Language Models As Semantic Indexers. arXiv preprint arXiv:2310.07815.

- Jin, H., & Wu, Y. (2025). CE-CoLLM: Efficient and Adaptive Large Language Models Through Cloud-Edge Collaboration. In 2025 ieee international conference on web services (icws) (pp. 316–323).

- Jin, H., Zhang, Y., Meng, D., Wang, J., & Tan, J. (2024). A Comprehensive Survey on Process-Oriented Automatic Text Summarization with Exploration of LLM-Based Methods. CoRR, abs/2403.02901. Available online: https://doi.org/10.48550/arXiv.2403.02901 (accessed on).

- Johnson-Laird, P. N. (1983). Mental models: Towards a cognitive science of language, inference, and consciousness (Vol. 6). Harvard University Press.

- Jouppi, N. P., Young, C., Patil, N., Patterson, D., Agrawal, G., Bajwa, R., Bates, S., Bhatia, S., Boden, N., Borchers, A., Boyle, R., Cantin, P.-l., Chao, C., Clark, C., Coriell, J., Daley, M., Dau, M., Dean, J., Gelb, B., . . . Yoon, D. H. (2017, June). In-Datacenter Performance Analysis of a Tensor Processing Unit. SIGARCH Comput. Archit. News, 45(2), 1–12.

- Kadavy, D. (2021). Digital zettelkasten: Principles, methods, and examples. Kadavy, Inc.

- Kaiya, Z., Naim, M., Kondic, J., Cortes, M., Ge, J., Luo, S., Yang, G. R., & Ahn, A. (2023). Lyfe agents: Generative agents for low-cost real-time social interactions. arXiv.

- Kandpal, N., Wallace, E., & Raffel, C. (2022). Deduplicating training data mitigates privacy risks in language models. Available online: https://arxiv.org/abs/2202.06539 (accessed on).

- Kang, H., Zhang, Q., Kundu, S., Jeong, G., Liu, Z., Krishna, T., & Zhao, T. (2024). Gear: An efficient kv cache compression recipe for near-lossless generative inference of llm. Available online: https://arxiv.org/abs/2403.05527 (accessed on).

- Kapoor, S., Henderson, P., & Narayanan, A. (2024). Promises and pitfalls of artificial intelligence for legal applications. CoRR, abs/2402.01656. Available online: https://doi.org/10.48550/arXiv.2402.01656 (accessed on).

- Karpukhin, V., Oguz, B., Min, S., Lewis, P. S., Wu, L., Edunov, S., Chen, D., & Yih, W.-t. (2020). Dense passage retrieval for open-domain question answering. In Emnlp (1) (pp. 6769–6781).

- Ke, Z., Kong, W., Li, C., Zhang, M., Mei, Q., & Bendersky, M. (2024). Bridging the Preference Gap between Retrievers and LLMs. arXiv preprint arXiv:2401.06954.

- Khandelwal, U., Levy, O., Jurafsky, D., Zettlemoyer, L., & Lewis, M. (2019). Generalization through memorization: Nearest neighbor language models. arXiv preprint arXiv:1911.00172.

- Khattab, O., Santhanam, K., Li, X. L., Hall, D., Liang, P., Potts, C., & Zaharia, M. (2022). Demonstrate-Search-Predict: Composing retrieval and language models for knowledge-intensive NLP. arXiv preprint arXiv:2212.14024.

- Khattab, O., & Zaharia, M. (2020). ColBERT: Efficient and Effective Passage Search via Contextualized Late Interaction over BERT. In Proceedings of the 43rd international acm sigir conference on research and development in information retrieval (pp. 39–48).

- Kim, M., Shim, K., Choi, J., & Chang, S. (2024, November). InfiniPot: Infinite Context Processing on Memory-Constrained LLMs. In Y. Al-Onaizan, M. Bansal, & Y.-N. Chen (Eds.), Proceedings of the 2024 conference on empirical methods in natural language processing (pp. 16046–16060).

- Kim, S. H., Ka, K., Jo, Y., Hwang, S.-w., Lee, D., & Yeo, J. (2024). Ever-Evolving Memory by Blending and Refining the Past. arXiv preprint arXiv:2403.04787. Available online: https://arxiv.org/abs/2403.04787 (accessed on).

- Kirkpatrick, J., Pascanu, R., Rabinowitz, N., Veness, J., Desjardins, G., Rusu, A. A., Milan, K., Quan, J., Ramalho, T., Grabska-Barwinska, A., et al. (2017). Overcoming catastrophic forgetting in neural networks. Proceedings of the national academy of sciences, 114(13), 3521–3526.

- Kwon, W., Li, Z., Zhuang, S., Sheng, Y., Zheng, L., Yu, C. H., Gonzalez, J., Zhang, H., & Stoica, I. (2023). Efficient Memory Management for Large Language Model Serving with PagedAttention. In Proceedings of the 29th symposium on operating systems principles (pp. 611–626).

- Lai, K., Tang, Z., Pan, X., Dong, P., Liu, X., Chen, H., Shen, L., Li, B., & Chu, X. (2025). Mediator: Memory-efficient LLM Merging with Less Parameter Conflicts and Uncertainty Based Routing. arXiv preprint arXiv:2502.04411.

- Laird, J. E. (2019). The soar cognitive architecture. MIT press.

- Langchain. (2023). Recursively split by character. https://python.langchain.com/docs/modules/data_connection/document_transformers/recursive_text_splitter.

- Lee, G., Hartmann, V., Park, J., Papailiopoulos, D., & Lee, K. (2023a). Prompted LLMs as Chatbot Modules for Long Open-domain Conversation. In A. Rogers, J. L. Boyd-Graber, & N. Okazaki (Eds.), Findings of the association for computational linguistics: ACL 2023, toronto, canada, july 9-14, 2023 (pp. 4536–4554).

- Lee, G., Hartmann, V., Park, J., Papailiopoulos, D., & Lee, K. (2023b). Prompted llms as chatbot modules for long open-domain conversation. arXiv preprint arXiv:2305.04533.

- Lee, K., Ippolito, D., Nystrom, A., Zhang, C., Eck, D., Callison-Burch, C., & Carlini, N. (2022). Deduplicating Training Data Makes Language Models Better. In Proceedings of the 60th annual meeting of the association for computational linguistics.

- Lee, N., Ajanthan, T., & Torr, P. (2019). SNIP: Single-shot Network Pruning based on Connection Sensitivity. In International conference on learning representations. Available online: https://openreview.net/forum?id=B1VZqjAcYX (accessed on).

- Leviathan, Y., Kalman, M., & Matias, Y. (2025). Selective Attention Improves Transformer. In The thirteenth international conference on learning representations. Available online: https://openreview.net/forum?id=v0FzmPCd1e (accessed on).

- Lewis, P., Perez, E., Piktus, A., Petroni, F., Karpukhin, V., Goyal, N., Küttler, H., Lewis, M., Yih, W.-t., Rocktäschel, T., et al. (2020). Retrieval-augmented generation for knowledge-intensive nlp tasks. Advances in neural information processing systems, 33, 9459–9474.

- Leydesdorff, S. (2017). Memory cultures: Memory, subjectivity and recognition. Routledge.

- Li, H., Li, Y., Tian, A., Tang, T., Xu, Z., Chen, X., Hu, N., Dong, W., Qing, L., & Chen, L. (2025). A Survey on Large Language Model Acceleration based on KV Cache Management. Transactions on Machine Learning Research. Available online: https://openreview.net/forum?id=z3JZzu9EA3 (accessed on).

- Li, H., Yang, C., Zhang, A., Deng, Y., Wang, X., & Chua, T.-S. (2024). Hello again! llm-powered personalized agent for long-term dialogue. arXiv preprint arXiv:2406.05925.

- Li, K., Patel, O., Viégas, F., Pfister, H., & Wattenberg, M. (2024). Inference-time intervention: Eliciting truthful answers from a language model. In Advances in neural information processing systems (Vol. 36).

- Li, L., Zhang, Y., Liu, D., & Chen, L. (2023). Large language models for generative recommendation: A survey and visionary discussions. arXiv.

- Li, N., Gao, C., Li, Y., & Liao, Q. (2023). Large language model-empowered agents for simulating macroeconomic activities. arXiv.

- Li, S., He, Y., Guo, H., Bu, X., Bai, G., Liu, J., Liu, J., Qu, X., Li, Y., Ouyang, W., Su, W., & Zheng, B. (2024, November). GraphReader: Building Graph-based Agent to Enhance Long-Context Abilities of Large Language Models. In Y. Al-Onaizan, M. Bansal, & Y.-N. Chen (Eds.), Findings of the association for computational linguistics: Emnlp 2024 (pp. 12758–12786).

- Li, W., Jiang, G., Ding, X., Tao, Z., Hao, C., Xu, C., Zhang, Y., & Wang, H. (2025). Flowkv: A disaggregated inference framework with low-latency kv cache transfer and load-aware scheduling. Available online: https://arxiv.org/abs/2504.03775 (accessed on).

- Li, X., & Li, J. (2023). AnglE-optimized Text Embeddings. arXiv preprint arXiv:2309.12871.

- Li, X., Nie, E., & Liang, S. (2023). From Classification to Generation: Insights into Crosslingual Retrieval Augmented ICL. arXiv preprint arXiv:2311.06595.

- Li, Y., Huang, Y., Yang, B., Venkitesh, B., Locatelli, A., Ye, H., Cai, T., Lewis, P., & Chen, D. (2024). SnapKV: LLM Knows What You are Looking for Before Generation. arXiv preprint arXiv:2404.14469.

- Li, Y., Wang, S., Ding, H., & Chen, H. (2023). Large language models in finance: A survey. In Proceedings of the fourth acm international conference on ai in finance (pp. 374–382).

- Li, Y., Wen, H., Wang, W., Li, X., Yuan, Y., Liu, G., Liu, J., Xu, W., Wang, X., Sun, Y., et al. (2024). Personal llm agents: Insights and survey about the capability, efficiency and security. arXiv.

- Li, Y., Zhang, Y., & Sun, L. (2023). Metaagents: Simulating interactions of human behaviors for llm-based task-oriented coordination via collaborative generative agents. arXiv preprint arXiv:2310.06500.

- Li, Z., Song, S., Xi, C., Wang, H., Tang, C., Niu, S., Chen, D., Yang, J., Li, C., Yu, Q., et al. (2025). Memos: A memory os for ai system. arXiv preprint arXiv:2507.03724.

- Li, Z., Xiao, C., Wang, Y., Liu, X., Tang, Z., Lu, B., Yang, M., Chen, X., & Chu, X. (2025). Antkv: Anchor token-aware subbit vector quantization for kv cache in large language models. Available online: https://arxiv.org/abs/2506.19505 (accessed on).

- Lin, J., Dai, X., Xi, Y., Liu, W., Chen, B., Li, X., Zhu, C., Guo, H., Yu, Y., Tang, R., et al. (2023). How can recommender systems benefit from large language models: A survey. arXiv.

- Lin, X. V., Chen, X., Chen, M., Shi, W., Lomeli, M., James, R., Rodriguez, P., Kahn, J., Szilvasy, G., Lewis, M., et al. (2023). RA-DIT: Retrieval-Augmented Dual Instruction Tuning. arXiv preprint arXiv:2310.01352.

- Lin, Y., Tang, H., Yang, S., Zhang, Z., Xiao, G., Gan, C., & Han, S. (2025). Qserve: W4a8kv4 quantization and system co-design for efficient llm serving. Available online: https://arxiv.org/abs/2405.04532 (accessed on).

- Liu, A., Liu, J., Pan, Z., He, Y., Haffari, G., & Zhuang, B. (2024). MiniCache: KV Cache Compression in Depth Dimension for Large Language Models. In A. Globerson et al. (Eds.), Advances in neural information processing systems (Vol. 37, pp. 139997–140031).

- Liu, D., Chen, M., Lu, B., Jiang, H., Han, Z., Zhang, Q., Chen, Q., Zhang, C., Ding, B., Zhang, K., Chen, C., Yang, F., Yang, Y., & Qiu, L. (2024). Retrievalattention: Accelerating long-context llm inference via vector retrieval. Available online: https://arxiv.org/abs/2409.10516 (accessed on).

- Liu, J., Li, L., Xiang, T., Wang, B., & Qian, Y. (2023, December). TCRA-LLM: Token Compression Retrieval Augmented Large Language Model for Inference Cost Reduction. In H. Bouamor, J. Pino, & K. Bali (Eds.), Findings of the association for computational linguistics: Emnlp 2023 (pp. 9796–9810).

- Liu, J., Qiu, Z., Li, Z., Dai, Q., Zhu, J., Hu, M., Yang, M., & King, I. (2025). A Survey of Personalized Large Language Models: Progress and Future Directions. arXiv preprint arXiv:2502.11528.

- Liu, L., Yang, X., Shen, Y., Hu, B., Zhang, Z., Gu, J., & Zhang, G. (2023). Think-in-memory: Recalling and post-thinking enable llms with long-term memory. arXiv preprint arXiv:2311.08719.

- Liu, N. F., Lin, K., Hewitt, J., Paranjape, A., Bevilacqua, M., Petroni, F., & Liang, P. (2024). Lost in the middle: How language models use long contexts. Transactions of the Association for Computational Linguistics, 12, 157–173.

- Liu, S., Ye, H., Xing, L., & Zou, J. (2023). In-context vectors: Making in context learning more effective and controllable through latent space steering. arXiv.

- Liu, W., Zhang, R., Zhou, A., Gao, F., & Liu, J. (2025). Echo: A large language model with temporal episodic memory. arXiv preprint arXiv:2502.16090.

- Liu, X., Chen, H., Hu, X., & Chu, X. (2025). FlowKV: Enhancing Multi-Turn Conversational Coherence in LLMs via Isolated Key-Value Cache Management. In First workshop on multi-turn interactions in large language models. Available online: https://openreview.net/forum?id=rZumU1owkr (accessed on).

- Liu, X., Tang, Z., Dong, P., Li, Z., Liu, Y., Li, B., Hu, X., & Chu, X. (2025). Chunkkv: Semantic-preserving kv cache compression for efficient long-context llm inference. Available online: https://arxiv.org/abs/2502.00299 (accessed on).

- Liu, Y., Li, H., Cheng, Y., Ray, S., Huang, Y., Zhang, Q., Du, K., Yao, J., Lu, S., Ananthanarayanan, G., Maire, M., Hoffmann, H., Holtzman, A., & Jiang, J. (2024). Cachegen: Kv cache compression and streaming for fast large language model serving. Available online: https://arxiv.org/abs/2310.07240 (accessed on).

- Liu, Z., Desai, A., Liao, F., Wang, W., Xie, V., Xu, Z., Kyrillidis, A., & Shrivastava, A. (2023). Scissorhands: Exploiting the Persistence of Importance Hypothesis for LLM KV Cache Compression at Test Time. In Thirty-seventh conference on neural information processing systems.

- Liu, Z., Yuan, J., Jin, H., Zhong, S., Xu, Z., Braverman, V., Chen, B., & Hu, X. (2024). KIVI: A Tuning-Free Asymmetric 2bit Quantization for KV Cache. In International conference on machine learning, icml 2024 (pp. 32332–32344).

- Liu, Z., Zhong, A., Li, Y., Yang, L., Ju, C., Wu, Z., Ma, C., Shu, P., Chen, C., Kim, S., et al. (2023). Radiology-gpt: A large language model for radiology. arXiv preprint arXiv:2306.08666.

- Lozhkov, A., Li, R., Allal, L. B., Cassano, F., Lamy-Poirier, J., Tazi, N., Tang, A., Pykhtar, D., Liu, J., Wei, Y., Liu, T., Tian, M., Kocetkov, D., Zucker, A., Belkada, Y., Wang, Z., Liu, Q., Abulkhanov, D., Paul, I., . . . et al. (2024). StarCoder 2 and The Stack v2: The Next Generation. CoRR, abs/2402.19173.

- Lu, J., An, S., Lin, M., Pergola, G., He, Y., Yin, D., Sun, X., & Wu, Y. (2023a). Memochat: Tuning llms to use memos for consistent long-range open-domain conversation. arXiv preprint arXiv:2308.08239.

- Lu, J., An, S., Lin, M., Pergola, G., He, Y., Yin, D., Sun, X., & Wu, Y. (2023b). Memochat: Tuning llms to use memos for consistent long-range open-domain conversation. arXiv preprint arXiv:2308.08239.

- Lu, Z., Fan, C., Wei, W., Qu, X., Chen, D., & Cheng, Y. (2024a). Twin-Merging: Dynamic Integration of Modular Expertise in Model Merging. arXiv preprint arXiv:2406.15479.

- Lu, Z., Fan, C., Wei, W., Qu, X., Chen, D., & Cheng, Y. (2024b, 06). Twin-Merging: Dynamic Integration of Modular Expertise in Model Merging. NIPS. Available online: https://arxiv.org/pdf/2406.15479v2.pdf (accessed on).

- Luo, Z., Xu, C., Zhao, P., Geng, X., Tao, C., Ma, J., Lin, Q., & Jiang, D. (2023). Augmented Large Language Models with Parametric Knowledge Guiding. arXiv preprint arXiv:2305.04757.

- Luohe, S., Zhang, H., Yao, Y., Li, Z., et al. (n.d.). Keep the Cost Down: A Review on Methods to Optimize LLM’s KV-Cache Consumption. In First conference on language modeling.

- Lyu, C., Du, Z., Xu, J., Duan, Y., Wu, M., Lynn, T., Aji, A. F., Wong, D. F., & Wang, L. (2024). A Paradigm Shift: The Future of Machine Translation Lies with Large Language Models. In Proceedings of the 2024 joint international conference on computational linguistics, language resources and evaluation, LREC/COLING 2024 (pp. 1339–1352).

- Ma, X., Gong, Y., He, P., Zhao, H., & Duan, N. (2023). Query Rewriting for Retrieval-Augmented Large Language Models. arXiv preprint arXiv:2305.14283.

- Mack, A., & Turner, A. (2024). Mechanistically eliciting latent behaviors in language models. Available online: https://www.lesswrong.com/posts/ioPnHKFyy4Cw2Gr2x (accessed on).

- Maharana, A., Lee, D.-H., Tulyakov, S., Bansal, M., Barbieri, F., & Fang, Y. (2024). Evaluating very long-term conversational memory of llm agents. arXiv preprint arXiv:2402.17753.

- Marczak, D., Twardowski, B., Trzciński, T., & Cygert, S. (2024). MagMax: Leveraging Model Merging for Seamless Continual Learning. In Eccv.

- Masry, A., & Hajian, A. (2024). LongFin: A Multimodal Document Understanding Model for Long Financial Domain Documents. CoRR, abs/2401.15050. Available online: https://doi.org/10.48550/arXiv.2401.15050 (accessed on).

- Matena, M. S., & Raffel, C. A. (2022). Merging models with fisher-weighted averaging. NeurIPS, 35, 17703–17716.

- McMahan, B., Moore, E., Ramage, D., Hampson, S., & y Arcas, B. A. (2017). Communication-efficient learning of deep networks from decentralized data. In Aistats (pp. 1273–1282).

- Mehta, S. V., Gupta, J., Tay, Y., Dehghani, M., Tran, V. Q., Rao, J., Najork, M., Strubell, E., & Metzler, D. (2022). DSI++: Updating transformer memory with new documents. arXiv preprint arXiv:2212.09744.

- mem0ai. (2024, July). mem0: The memory layer for personalized ai. mem0.ai.

- Meng, F., Tang, P., Tang, X., Yao, Z., Sun, X., & Zhang, M. (2025). Transmla: Multi-head latent attention is all you need. Available online: https://arxiv.org/abs/2502.07864 (accessed on).

- Meng, K., Sharma, A. S., Andonian, A. J., Belinkov, Y., & Bau, D. (2023). Mass-Editing Memory in a Transformer. In The eleventh international conference on learning representations. Available online: https://openreview.net/forum?id=MkbcAHIYgyS (accessed on).

- Min, S., Lyu, X., Holtzman, A., Artetxe, M., Lewis, M., Hajishirzi, H., & Zettlemoyer, L. (2022). Rethinking the role of demonstrations: What makes in-context learning work? In Proceedings of the 2022 conference on empirical methods in natural language processing (pp. 11048–11064).

- Mishra, M., Stallone, M., Zhang, G., Shen, Y., Prasad, A., Soria, A. M., Merler, M., Selvam, P., Surendran, S., Singh, S., Sethi, M., Dang, X., Li, P., Wu, K., Zawad, S., Coleman, A., White, M., Lewis, M., Pavuluri, R., . . . Panda, R. (2024). Granite Code Models: A Family of Open Foundation Models for Code Intelligence. CoRR, abs/2405.04324.

- Modarressi, A., Imani, A., Fayyaz, M., & Schütze, H. (2023). Ret-llm: Towards a general read-write memory for large language models. arXiv.

- Montazeralghaem, A., Zamani, H., & Allan, J. (2020). A reinforcement learning framework for relevance feedback. In Proceedings of the 43rd international acm sigir conference on research and development in information retrieval (pp. 59–68).

- Muqeeth, M., Liu, H., & Raffel, C. (2024). Soft merging of experts with adaptive routing. TMLR.

- Murre, J. M., & Dros, J. (2015). Replication and analysis of ebbinghaus’ forgetting curve. PloS one, 10(7), e0120644.

- Nasr, M., Carlini, N., Hayase, J., Jagielski, M., Cooper, A. F., Ippolito, D., Choquette-Choo, C. A., Wallace, E., Tramèr, F., & Lee, K. (2023). Scalable extraction of training data from (production) language models. Available online: https://arxiv.org/abs/2311.17035 (accessed on).

- Nie, Y., Huang, H., Wei, W., & Mao, X.-L. (2022, December). Capturing Global Structural Information in Long Document Question Answering with Compressive Graph Selector Network. In Proceedings of the 2022 conference on empirical methods in natural language processing (pp. 5036–5047).

- Nie, Y., Kong, Y., Dong, X., Mulvey, J. M., Poor, H. V., Wen, Q., & Zohren, S. (2024). A Survey of Large Language Models for Financial Applications: Progress, Prospects and Challenges. CoRR, abs/2406.11903. Available online: https://doi.org/10.48550/arXiv.2406.11903 (accessed on).

- OpenAI. (2024a). Memory and new controls for chatgpt. Available online: https://openai.com/index/memory-and-new-controls-for-chatgpt (accessed on).

- OpenAI. (2024b). New embedding models and api updates. Available online: https://openai.com/index/new-embedding-models-and-api-updates/ (accessed on).

- Packer, C., Wooders, S., Lin, K., Fang, V., Patil, S. G., Stoica, I., & Gonzalez, J. E. (2023). Memgpt: Towards llms as operating systems. arXiv preprint arXiv:2310.08560.

- Pan, H., Zhai, Z., Yuan, H., Lv, Y., Fu, R., Liu, M., Wang, Z., & Qin, B. (2023). Kwaiagents: Generalized information-seeking agent system with large language models. arXiv.

- Pan, J., Gao, T., Chen, H., & Chen, D. (2023). What in-context learning “learns” in-context: Disentangling task recognition and task learning. In Findings of the association for computational linguistics: Acl 2023 (pp. 8298–8319).

- Pan, J., & Li, G. (2025). A survey of llm inference systems. Available online: https://arxiv.org/abs/2506.21901 (accessed on).

- Pan, Z., Wu, Q., Jiang, H., Luo, X., Cheng, H., Li, D., Yang, Y., Lin, C.-Y., Zhao, H. V., Qiu, L., et al. (2025). On memory construction and retrieval for personalized conversational agents. arXiv preprint arXiv:2502.05589.

- Park, J. S., O’Brien, J., Cai, C. J., Morris, M. R., Liang, P., & Bernstein, M. S. (2023). Generative agents: Interactive simulacra of human behavior. In Proceedings of the 36th annual acm symposium on user interface software and technology (pp. 1–22).

- Peng, W., Li, G., Jiang, Y., Wang, Z., Ou, D., Zeng, X., Chen, E., et al. (2023). Large language model based long-tail query rewriting in taobao search. arXiv preprint arXiv:2311.03758.

- Pope, R., Douglas, S., Chowdhery, A., Devlin, J., Bradbury, J., Levskaya, A., Heek, J., Xiao, K., Agrawal, S., & Dean, J. (2022). Efficiently scaling transformer inference. Available online: https://arxiv.org/abs/2211.05102 (accessed on).

- Qian, C., Cong, X., Yang, C., Chen, W., Su, Y., Xu, J., Liu, Z., & Sun, M. (2023). Communicative agents for software development. arXiv preprint arXiv:2307.07924.

- Qian, H., Zhang, P., Liu, Z., Mao, K., & Dou, Z. (2024). Memorag: Moving towards next-gen rag via memory-inspired knowledge discovery. arXiv preprint arXiv:2409.05591.

- Qiang, Z., Wang, W., & Taylor, K. (2023). Agent-om: Leveraging large language models for ontology matching. arXiv.

- Qu, Z., Li, X., Duan, R., Liu, Y., Tang, B., & Lu, Z. (2022). Generalized federated learning via sharpness aware minimization. In International conference on machine learning (pp. 18250–18280).

- Qwen, Yang, A., Yang, B., Zhang, B., Hui, B., Zheng, B., et al. (2025). Qwen2.5 technical report. Available online: https://arxiv.org/abs/2412.15115 (accessed on).

- Raventos, A., Paul, M., Chen, F., & Ganguli, S. (2023). Pretraining task diversity and the emergence of non-bayesian in-context learning for regression. In Thirty-seventh conference on neural information processing systems.

- Reddy, V., Koncel-Kedziorski, R., Lai, V. D., Krumdick, M., Lovering, C., & Tanner, C. (2024). DocFinQA: A Long-Context Financial Reasoning Dataset. In Proceedings of the 62nd annual meeting of the association for computational linguistics, ACL 2024 - short papers (pp. 445–458).

- Saad-Falcon, J., Fu, D. Y., Arora, S., Guha, N., & Ré, C. (2024). Benchmarking and Building Long-Context Retrieval Models with LoCo and M2-BERT. In Forty-first international conference on machine learning, ICML 2024. OpenReview.net.

- Safaya, A., & Yuret, D. (2024). Neurocache: Efficient vector retrieval for long-range language modeling. arXiv preprint arXiv:2407.02486.

- Salama, R., Cai, J., Yuan, M., Currey, A., Sunkara, M., Zhang, Y., & Benajiba, Y. (2025). Meminsight: Autonomous memory augmentation for llm agents. arXiv preprint arXiv:2503.21760.

- Samsami, M. R., Zholus, A., Rajendran, J., & Chandar, S. (2024). Mastering Memory Tasks with World Models. In The twelfth international conference on learning representations. Available online: https://openreview.net/forum?id=1vDArHJ68h (accessed on).

- Saxena, U., Saha, G., Choudhary, S., & Roy, K. (2024, November). Eigen Attention: Attention in Low-Rank Space for KV Cache Compression. In Y. Al-Onaizan, M. Bansal, & Y.-N. Chen (Eds.), Findings of the association for computational linguistics: Emnlp 2024 (pp. 15332–15344). Miami, Florida, USA: Association for Computational Linguistics. Available online: https://aclanthology.org/2024.findings-emnlp.899/ (accessed on). https://doi.org/10.18653/v1/2024.findings-emnlp.899.

- Shan, L., Luo, S., Zhu, Z., Yuan, Y., & Wu, Y. (2025). Cognitive memory in large language models. arXiv preprint arXiv:2504.02441.

- Shang, J., Zheng, Z., Wei, J., Ying, X., Tao, F., & Team, M. (2024). Ai-native memory: A pathway from llms towards agi. arXiv preprint arXiv:2406.18312.

- Shao, B., & Yan, J. (2024). A long-context language model for deciphering and generating bacteriophage genomes. Nature Communications, 15(1), 9392.

- Shao, Y., Li, L., Dai, J., & Qiu, X. (n.d.). Character-llm: A trainable agent for role-playing.

- Shao, Y., Li, L., Dai, J., & Qiu, X. (2023). Character-llm: A trainable agent for role-playing. arXiv preprint arXiv:2310.10158.

- Shazeer, N., Mirhoseini, A., Maziarz, K., Davis, A., Le, Q., Hinton, G., & Dean, J. (2017, 01). Outrageously Large Neural Networks: The Sparsely-Gated Mixture-of-Experts Layer. Available online: https://arxiv.org/pdf/1701.06538.pdf (accessed on).

- Shen, L., Mishra, A., & Khashabi, D. (2024). Do pretrained transformers learn in-context by gradient descent? arXiv preprint arXiv:2310.08540. Available online: https://arxiv.org/abs/2310.08540 (accessed on).

- Sheng, Y., Zheng, L., Yuan, B., Li, Z., Ryabinin, M., Chen, B., Liang, P., Ré, C., Stoica, I., & Zhang, C. (2023). FlexGen: high-throughput generative inference of large language models with a single GPU. In Proceedings of the 40th international conference on machine learning. JMLR.org.

- Sherwood, L., Kell, R. T., & Ward, C. (2004). Human physiology: from cells to systems. Thomson/Brooks/Cole.

- Shi, F., Chen, X., Misra, K., Scales, N., Dohan, D., Chi, E. H., Schärli, N., & Zhou, D. (2023). Large language models can be easily distracted by irrelevant context. In International conference on machine learning (pp. 31210–31227).

- Shi, K., Sun, X., Li, Q., & Xu, G. (2024). Compressing Long Context for Enhancing RAG with AMR-based Concept Distillation. CoRR, abs/2405.03085. Available online: https://doi.org/10.48550/arXiv.2405.03085 (accessed on).

- Shinn, N., Cassano, F., Gopinath, A., Narasimhan, K., & Yao, S. (2024). Reflexion: Language agents with verbal reinforcement learning. Advances in Neural Information Processing Systems, 36.

- Shinn, N., Cassano, F., Gopinath, A., Narasimhan, K. R., & Yao, S. (2023). Reflexion: Language agents with verbal reinforcement learning. In Thirty-seventh conference on neural information processing systems.

- Shinwari, H. U. K., & Usama, M. (2025). Memory-Augmented Architecture for Long-Term Context Handling in Large Language Models. arXiv preprint arXiv:2506.18271.

- Singh, A. K., Chan, S. C., Moskovitz, T., Grant, E., Saxe, A. M., & Hill, F. (2023). The transient nature of emergent in-context learning in transformers. In Thirty-seventh conference on neural information processing systems.

- Solso, R. L., & Kagan, J. (1979). Cognitive psychology. Houghton Mifflin Harcourt P.

- Squire, L. R. (2009). Memory and brain systems: 1969–2009. J Neurosci, 29(41), 12711–12716. https://doi.org/10.1523/JNEUROSCI.3575-09.2009.

- Sridhar, S., Khamaj, A., & Asthana, M. (2023). Cognitive neuroscience perspective on memory: overview and summary. Frontiers in human neuroscience, 17, 1217093.

- Subramani, N., Suresh, N., & Peters, M. E. (2022). Extracting latent steering vectors from pretrained language models. arXiv.

- Sun, H., Cai, H., Wang, B., Hou, Y., Wei, X., Wang, S., Zhang, Y., & Yin, D. (2024, November). Towards Verifiable Text Generation with Evolving Memory and Self-Reflection. In Y. Al-Onaizan, M. Bansal, & Y.-N. Chen (Eds.), Proceedings of the 2024 conference on empirical methods in natural language processing (pp. 8211–8227). Miami, Florida, USA: Association for Computational Linguistics. Available online: https://aclanthology.org/2024.emnlp-main.469/ (accessed on).

- Sun, R. (2001). Duality of the mind: A bottom-up approach toward cognition. Psychology Press.

- Sun, Z., Wang, X., Tay, Y., Yang, Y., & Zhou, D. (2022). Recitation-augmented language models. arXiv preprint arXiv:2210.01296.

- Sutton, R. S., & Barto, A. G. (2018). Reinforcement learning: An introduction. MIT press.

- Tan, X., Jiang, Y., Yang, Y., & Xu, H. (2025). Teola: Towards end-to-end optimization of llm-based applications. Available online: https://arxiv.org/abs/2407.00326 (accessed on).

- Tang, A., Shen, L., Luo, Y., Yin, N., Zhang, L., & Tao, D. (2024). Merging Multi-Task Models via Weight-Ensembling Mixture of Experts. ICML.

- Tang, J., Zhao, Y., Zhu, K., Xiao, G., Kasikci, B., & Han, S. (2024, 21–27 Jul). QUEST: Query-Aware Sparsity for Efficient Long-Context LLM Inference. In Proceedings of the 41st international conference on machine learning (Vol. 235, pp. 47901–47911). PMLR.

- Teja, R. (2023). Evaluating the ideal chunk size for a rag system using llamaindex. https://www.llamaindex.ai/blog/evaluating-the-ideal-chunk-size-for-a-rag-system-using-llamaindex-6207e5d3fec5.

- Tirumala, K., Markosyan, A. H., Zettlemoyer, L., & Aghajanyan, A. (2022). Memorization without overfitting: Analyzing the training dynamics of large language models. Available online: https://arxiv.org/abs/2205.10770.

- Touvron, H., Lavril, T., Izacard, G., Martinet, X., Lachaux, M.-A., Lacroix, T., Rozière, B., Goyal, N., Hambro, E., Azhar, F., Rodriguez, A., Joulin, A., Grave, E., & Lample, G. (2023). Llama: Open and efficient foundation language models. Available online: https://arxiv.org/abs/2302.13971.

- Touvron, H., Martin, L., Stone, K., et al. (2023). Llama 2: Open foundation and fine-tuned chat models. Available online: https://arxiv.org/abs/2307.09288.

- Tsai, Y., Liu, M., & Ren, H. (2023). Rtlfixer: Automatically fixing rtl syntax errors with large language models. arXiv preprint arXiv:2311.16543.

- Tulving, E., & Donaldson, W. (1972). Episodic and semantic memory. Academic Press.

- Turner, A., Kurzeja, M., Orr, D., & Elson, D. (2025). Steering Gemini using bidpo vectors. Available online: https://turntrout.com/gemini-steering.

- Turner, A. M., Thiergart, L., Leech, G., Udell, D., Vazquez, J. J., Mini, U., & MacDiarmid, M. (2023). Steering language models with activation engineering. arXiv preprint arXiv:2308.10248.

- Tworkowski, S., Staniszewski, K., Pacek, M., Wu, Y., Michalewski, H., & Miłoś, P. (2023). Focused Transformer: Contrastive Training for Context Scaling. In A. Oh, T. Naumann, A. Globerson, K. Saenko, M. Hardt, & S. Levine (Eds.), Advances in neural information processing systems (Vol. 36, pp. 42661–42688). Curran Associates, Inc. Available online: https://proceedings.neurips.cc/paper_files/paper/2023/file/8511d06d5590f4bda24d42087802cc81-Paper-Conference.pdf.

- Vaswani, A., Shazeer, N., Parmar, N., Uszkoreit, J., Jones, L., Gomez, A. N., Kaiser, L., & Polosukhin, I. (2017). Attention is all you need. In Proceedings of the 31st international conference on neural information processing systems (pp. 6000–6010). Red Hook, NY, USA: Curran Associates Inc.

- VoyageAI. (2023). Voyage’s embedding models. https://docs.voyageai.com/embeddings/.

- Wan, L., & Ma, W. (2025). StoryBench: A Dynamic Benchmark for Evaluating Long-Term Memory with Multi Turns. arXiv preprint arXiv:2506.13356.

- Wang, B., Liang, X., Yang, J., Huang, H., Wu, S., Wu, P., Lu, L., Ma, Z., & Li, Z. (2024). Enhancing large language model with self-controlled memory framework. Available online: https://arxiv.org/abs/2304.13343.

- Wang, B., Xie, Q., Pei, J., Chen, Z., Tiwari, P., Li, Z., & Fu, J. (2023). Pre-trained language models in biomedical domain: A systematic survey. ACM Computing Surveys, 56(3), 1–52.

- Wang, C., Liu, X., Liu, Y., Zhu, Y., Mo, X., Jiang, J., & Chen, H. (2025). When to Reason: Semantic Router for vLLM. arXiv preprint arXiv:2510.08731.

- Wang, G., Xie, Y., Jiang, Y., Mandlekar, A., Xiao, C., Zhu, Y., Fan, L., & Anandkumar, A. (2023). Voyager: An open-ended embodied agent with large language models. arXiv preprint arXiv:2305.16291.

- Wang, H., Liu, C., Xi, N., Qiang, Z., Zhao, S., Qin, B., & Liu, T. (2023). Huatuo: Tuning llama model with chinese medical knowledge. arXiv preprint arXiv:2304.06975.

- Wang, H., Zhao, S., Qiang, Z., Li, Z., Xi, N., Du, Y., Cai, M., Guo, H., Chen, Y., Xu, H., et al. (2023). Knowledge-tuning large language models with structured medical knowledge bases for reliable response generation in chinese. arXiv preprint arXiv:2309.04175.

- Wang, J., Huang, Y., Chen, C., Liu, Z., Wang, S., & Wang, Q. (2024). Software testing with large language models: Survey, landscape, and vision. IEEE Transactions on Software Engineering.

- Wang, K., Dimitriadis, N., Ortiz-Jimenez, G., Fleuret, F., & Frossard, P. (2024). Localizing Task Information for Improved Model Merging and Compression. ICML.

- Wang, L., Du, Z., Jiao, W., Lyu, C., Pang, J., Cui, L., Song, K., Wong, D. F., Shi, S., & Tu, Z. (2024). Benchmarking and Improving Long-Text Translation with Large Language Models. In L. Ku, A. Martins, & V. Srikumar (Eds.), Findings of the association for computational linguistics, ACL 2024, bangkok, thailand and virtual meeting, august 11-16, 2024 (pp. 7175–7187). Association for Computational Linguistics. Available online: https://doi.org/10.18653/v1/2024.findings-acl.428.

- Wang, L., Ma, C., Feng, X., Zhang, Z., Yang, H., Zhang, J., Chen, Z., Tang, J., Chen, X., Lin, Y., et al. (2023). A survey on large language model based autonomous agents. arXiv.

- Wang, L., Yang, N., Huang, X., Yang, L., Majumder, R., & Wei, F. (2024). Improving Text Embeddings with Large Language Models. In L. Ku, A. Martins, & V. Srikumar (Eds.), Proceedings of the 62nd annual meeting of the association for computational linguistics (volume 1: Long papers), ACL 2024, bangkok, thailand, august 11-16, 2024 (pp. 11897–11916). Association for Computational Linguistics. Available online: https://doi.org/10.18653/v1/2024.acl-long.642.

- Wang, L., Yang, N., & Wei, F. (2023). Learning to retrieve in-context examples for large language models. arXiv preprint arXiv:2307.07164.

- Wang, L., Zhang, J., Yang, H., Chen, Z., Tang, J., Zhang, Z., Chen, X., Lin, Y., Song, R., Zhao, W. X., Xu, J., Dou, Z., Wang, J., & Wen, J.-R. (2023). When large language model based agent meets user behavior analysis: A novel user simulation paradigm.

- Wang, L., Zhang, X., Su, H., & Zhu, J. (2024). A comprehensive survey of continual learning: Theory, method and application. IEEE Transactions on Pattern Analysis and Machine Intelligence.

- Wang, P., Li, Z., Zhang, N., Xu, Z., Yao, Y., Jiang, Y., Xie, P., Huang, F., & Chen, H. (2024). Wise: Rethinking the knowledge memory for lifelong model editing of large language models. Advances in Neural Information Processing Systems, 37, 53764–53797.

- Wang, Q., Fu, Y., Cao, Y., Wang, S., Tian, Z., & Ding, L. (2025a). Recursively summarizing enables long-term dialogue memory in large language models. Neurocomputing, 639, 130193. Available online: https://www.sciencedirect.com/science/article/pii/S0925231225008653. https://doi.org/10.1016/j.neucom.2025.130193.

- Wang, Q., Fu, Y., Cao, Y., Wang, S., Tian, Z., & Ding, L. (2025b). Recursively Summarizing Enables Long-Term Dialogue Memory in Large Language Models. Neurocomputing, 130193. Available online: https://www.sciencedirect.com/science/article/abs/pii/S0925231225008653. https://doi.org/10.1016/j.neucom.2025.130193.

- Wang, Q., Tang, Z., & He, B. (2025). Can LLM Simulations Truly Reflect Humanity? A Deep Dive. In The fourth blogpost track at ICLR 2025.

- Wang, S., Zhu, Y., Liu, H., Zheng, Z., Chen, C., & Li, J. (2024). Knowledge editing for large language models: A survey. ACM Computing Surveys, 57(3), 1–37.

- Wang, W., Dong, L., Cheng, H., Liu, X., Yan, X., Gao, J., & Wei, F. (2023). Augmenting Language Models with Long-Term Memory. In A. Oh, T. Naumann, A. Globerson, K. Saenko, M. Hardt, & S. Levine (Eds.), Advances in neural information processing systems 36: Annual conference on neural information processing systems 2023, neurips 2023, new orleans, la, usa, december 10 - 16, 2023. Available online: http://papers.nips.cc/paper_files/paper/2023/hash/ebd82705f44793b6f9ade5a669d0f0bf-Abstract-Conference.html.

- Wang, W., Lin, X., Feng, F., He, X., & Chua, T.-S. (2023). Generative recommendation: Towards next-generation recommender paradigm. arXiv.

- Wang, X., Salmani, M., Omidi, P., Ren, X., Rezagholizadeh, M., & Eshaghi, A. (2024). Beyond the Limits: A Survey of Techniques to Extend the Context Length in Large Language Models. In Proceedings of the thirty-third international joint conference on artificial intelligence, IJCAI 2024, jeju, south korea, august 3-9, 2024 (pp. 8299–8307). ijcai.org. Available online: https://www.ijcai.org/proceedings/2024/917.

- Wang, X., Yang, Q., Qiu, Y., Liang, J., He, Q., Gu, Z., Xiao, Y., & Wang, W. (2023). KnowledGPT: Enhancing Large Language Models with Retrieval and Storage Access on Knowledge Bases. arXiv preprint arXiv:2308.11761.

- Wang, Y., Gao, Y., Chen, X., Jiang, H., Li, S., Yang, J., Yin, Q., Li, Z., Li, X., Yin, B., et al. (2024). Memoryllm: Towards self-updatable large language models. arXiv preprint arXiv:2402.04624.

- Wang, Y., Han, C., Wu, T., He, X., Zhou, W., Sadeq, N., Chen, X., He, Z., Wang, W., Haffari, G., et al. (2024). Towards lifespan cognitive systems. arXiv preprint arXiv:2409.13265.

- Wang, Y., Li, P., Sun, M., & Liu, Y. (2023). Self-Knowledge Guided Retrieval Augmentation for Large Language Models. arXiv preprint arXiv:2310.05002.

- Wang, Y., Lipka, N., Rossi, R. A., Siu, A., Zhang, R., & Derr, T. (2023). Knowledge graph prompting for multi-document question answering. arXiv preprint arXiv:2308.11730.

- Wang, Y., Liu, X., Chen, X., O’Brien, S., Wu, J., & McAuley, J. (n.d.). Self-Updatable Large Language Models by Integrating Context into Model Parameters. In The thirteenth international conference on learning representations.

- Wang, Z., Bao, R., Wu, Y., Taylor, J., Xiao, C., Zheng, F., Jiang, W., Gao, S., & Zhang, Y. (2024, November). Unlocking Memorization in Large Language Models with Dynamic Soft Prompting. In Y. Al-Onaizan, M. Bansal, & Y.-N. Chen (Eds.), Proceedings of the 2024 conference on empirical methods in natural language processing (pp. 9782–9796). Miami, Florida, USA: Association for Computational Linguistics. Available online: https://aclanthology.org/2024.emnlp-main.546/. https://doi.org/10.18653/v1/2024.emnlp-main.546.

- Wang, Z., Liu, Z., Zhang, Y., Zhong, A., Fan, L., Wu, L., & Wen, Q. (2023). Rcagent: Cloud root cause analysis by autonomous agents with tool-augmented large language models. arXiv.

- Wang, Z. Z., Mao, J., Fried, D., & Neubig, G. (2024a). Agent workflow memory. Available online: https://arxiv.org/abs/2409.07429.

- Wang, Z. Z., Mao, J., Fried, D., & Neubig, G. (2024b). Agent workflow memory. arXiv preprint arXiv:2409.07429.

- Wei, F., Tang, Z., Zeng, R., Liu, T., Zhang, C., Chu, X., & Han, B. (2025). JailbreakLoRA: Your Downloaded LoRA from Sharing Platforms might be Unsafe. In ICML 2025 workshop on data in generative models - the bad, the ugly, and the greats. Available online: https://openreview.net/forum?id=RjaeiNswGh.

- Weng, L. (2023, Jun). Llm-powered autonomous agents. lilianweng.github.io.

- Wu, D., Wang, H., Yu, W., Zhang, Y., Chang, K.-W., & Yu, D. (2024). Longmemeval: Benchmarking chat assistants on long-term interactive memory. arXiv preprint arXiv:2410.10813.

- Wu, H., & Tu, K. (2024, August). Layer-Condensed KV Cache for Efficient Inference of Large Language Models. In L.-W. Ku, A. Martins, & V. Srikumar (Eds.), Proceedings of the 62nd annual meeting of the association for computational linguistics (volume 1: Long papers) (pp. 11175–11188). Bangkok, Thailand: Association for Computational Linguistics. Available online: https://aclanthology.org/2024.acl-long.602/. https://doi.org/10.18653/v1/2024.acl-long.602.

- Wu, Q., Bansal, G., Zhang, J., Wu, Y., Zhang, S., Zhu, E., Li, B., Jiang, L., Zhang, X., & Wang, C. (2023). Autogen: Enabling next-gen llm applications via multi-agent conversation framework. arXiv preprint arXiv:2308.08155.

- Wu, W., Pan, Z., Wang, C., Chen, L., Bai, Y., Wang, T., Fu, K., Wang, Z., & Xiong, H. (2025). Tokenselect: Efficient long-context inference and length extrapolation for llms via dynamic token-level kv cache selection. Available online: https://arxiv.org/abs/2411.02886.

- Wu, Y., Liang, S., Zhang, C., Wang, Y., Zhang, Y., Guo, H., Tang, R., & Liu, Y. (2025). From human memory to ai memory: A survey on memory mechanisms in the era of llms. Available online: https://arxiv.org/abs/2504.15965.

- Wu, Y., Rabe, M. N., Hutchins, D., & Szegedy, C. (2022). Memorizing transformers. arXiv preprint arXiv:2203.08913.

- Wu, Y., Wang, H., Zhao, P., Zheng, Y., Wei, Y., & Huang, L.-K. (2024). Mitigating catastrophic forgetting in online continual learning by modeling previous task interrelations via pareto optimization. In Forty-first international conference on machine learning.

- Wulf, W. A., & McKee, S. A. (1995, March). Hitting the memory wall: implications of the obvious. SIGARCH Comput. Archit. News, 23(1), 20–24. Available online: https://doi.org/10.1145/216585.216588.

- Xi, Y., Liu, W., Lin, J., Chen, B., Tang, R., Zhang, W., & Yu, Y. (2024). Memocrs: Memory-enhanced sequential conversational recommender systems with large language models. In Proceedings of the 33rd acm international conference on information and knowledge management (pp. 2585–2595).

- Xi, Z., Chen, W., Guo, X., He, W., Ding, Y., Hong, B., Zhang, M., Wang, J., Jin, S., Zhou, E., et al. (2023). The rise and potential of large language model based agents: A survey. arXiv.

- Xiao, G., Tian, Y., Chen, B., Han, S., & Lewis, M. (2023). Efficient Streaming Language Models with Attention Sinks. arXiv.

- Xiao, G., Tian, Y., Chen, B., Han, S., & Lewis, M. (2024a). Efficient Streaming Language Models with Attention Sinks. In The twelfth international conference on learning representations. Available online: https://openreview.net/forum?id=NG7sS51zVF.

- Xiao, G., Tian, Y., Chen, B., Han, S., & Lewis, M. (2024b). Efficient streaming language models with attention sinks. Available online: https://arxiv.org/abs/2309.17453.

- Xiong, H., Wang, S., Zhu, Y., Zhao, Z., Liu, Y., Wang, Q., & Shen, D. (2023). Doctorglm: Fine-tuning your chinese doctor is not a herculean task. arXiv preprint arXiv:2304.01097.