1. Introduction

Hate speech is a complex phenomenon that undermines social harmony and equality in both physical and digital environments. Its definition involves at least three essential components: expressive behavior, a target group identified by protected characteristics, and the manifestation of negative emotions such as hostility, humiliation, or contempt [1]. The difficulty of establishing a universally accepted definition has been highlighted in recent reviews, which note that the semantic and pragmatic particularities of language introduce ambiguity into its delimitation [2]. Likewise, comparative studies show that the conceptualization of hate speech varies across legal, cultural, and technological frameworks, reinforcing the need for multidimensional approaches [3].

The emotional dimension is key to understanding and detecting this phenomenon, as hate speech often draws on an affective background that shapes perception and social interaction. Basic emotions, such as happiness, anger, and fear, directly influence people’s social evaluations, even in the absence of contextual information [4]. Although emotions reflect immediate reactions, the emotional tone constitutes a more sustained affective state that modulates the interpretation of messages and their potential to become hate speech [5]. In the field of Natural Language Processing (NLP), the identification of emotions such as anger, contempt, or fear has been shown to improve the accuracy of automated systems in differentiating between negative opinions and discriminatory attacks [6,7].

Gender-based violence represents one of the most critical contexts for hate speech. In Spanish-speaking communities, women are particularly vulnerable to discriminatory attacks in which misogyny is reproduced through hostile expressions and patterns of social normalization [8]. On a global scale, online misogyny is associated with harassment dynamics that reinforce the structural subordination of women [9]; extreme forms of harassment used for intimidation, such as rapeglish, have even been documented [10]. The technical challenges are significant: international NLP competitions, such as SemEval, demonstrate that attacks targeting women and migrants are recurring and difficult to detect with standard models [11], with impacts that transcend the digital realm [12].

In this context, Natural Language Processing (NLP) and Machine Learning (ML) are becoming established as fundamental approaches for detecting and mitigating hate speech. Systematic reviews highlight both their advances and limitations: dependence on large volumes of representative data, biases in training sets, and dilemmas regarding fairness and interpretability at scale [13,14]. The most promising results are observed in two families of models: (i) tree-based models (Random Forest, XGBoost) and (ii) transformer architectures, such as BERT and RoBERTa [15,16]. However, a significant gap remains: the explicit integration of emotional tone into the automated moderation process, especially before publication, which is the main motivation for this work.

From a formal perspective, we applied a mathematical model, Bayesian Calibration and Optimal Design under Asymmetric Risk (BACON-AR) [17,18,19,20], which describes the relationship between textual features and hostility categories using a supervised probabilistic decision function. This model combines TF-IDF vector representations and RoBERTa contextual embeddings, integrated into an optimization process with L2 regularization and adaptive weights. The objective was to minimize classification errors and improve system stability while avoiding overfitting. Thus, the model formalizes the learning process from a statistical perspective, allowing us to analyze its convergence and generalizability in real-world hate speech detection scenarios.

From this perspective, the present study strengthens preventive moderation on social networks through a hybrid approach that combines the detection of explicit hostility with the classification of emotional tone. The main contributions of this study are as follows:

The development of a dual model that simultaneously classifies hate/non-hate categories and emotional tone, implemented via a stacking ensemble that integrates RoBERTa, Random Forest (TF-IDF), and XGBoost (embeddings) and surpasses the performance of the individual classifiers;

The construction and enrichment of an extensive corpus from three open sources, with a mapping between GoEmotions and Ekman’s emotions to study the interaction between affect and hostility;

An end-to-end functional validation using a RESTful API and a web client with pre-moderation and auditing; and

A comparative analysis that demonstrates that incorporating emotional tone reduces false positives in cases of ambiguity or irony.

These contributions complement studies that warn about bias risks and implementation challenges [14,21], and confirm that a heterogeneous ensemble (transformer plus trees) improves generalization compared to the isolated use of a model such as RoBERTa [15].

The remainder of the manuscript is structured as follows: the Materials and Methods section describes the corpus, preprocessing, feature representation, models, and the architecture of the API and web client; it also incorporates the BACON-AR framework for probabilistic calibration and threshold optimization under asymmetric risk. The Results section presents model performance, ROC curves, confusion matrices, and performance tests. The Discussion section analyzes and validates the findings and discusses limitations. Finally, the Conclusions section summarizes the contributions and future research directions.

2. Related Work

We conducted a Systematic Literature Review (SLR) following the PRISMA guidelines and the PICOS approach to identify the most relevant studies on the detection of hate speech and the analysis of emotional tone; the review was published in [22]. We searched various recognized scientific databases, filtering by language, year of publication, and research approach. We analyzed 34 primary studies and selected 10 representative works. In addition, we included five recent studies that broaden the analysis to include multimodal approaches and the use of large language models (LLMs).

[23] proposed a multi-label self-training model that incorporates auxiliary emotional cues to improve the sensitivity of automated systems to implicit hostility on social media. This work demonstrated that negative emotions, such as anger and contempt, strengthen the identification of discriminatory discourse. Ramos et al. [24], for their part, analyzed the evolution of transformer-based models and highlighted the limitations in explainability and bias of current detection systems, underscoring the need to develop more interpretable approaches. Rodriguez et al. [25] presented the FADOHS framework, which integrates sentiment and emotion analysis to classify offensive Facebook posts, finding that combining affective and linguistic cues improves performance over purely lexical methods. Finally, Kaminska et al. [26] proposed a fuzzy-rough k-NN method for the simultaneous identification of hate, irony, and emotion, demonstrating the usefulness of non-transformer-based techniques in contexts with limited data.

For Spanish, [27] developed a model that incorporates linguistic and user features to detect offensive messages, highlighting the relevance of sociocultural context in hate speech classification. Complementarily, [28] introduced the Spanish MTLHateCorpus 2023, which uses a multitask approach to predict speech type, target group, and intensity; this resource facilitates the study of social and gender inequality in digital environments. [29], for their part, proposed a multimodal cross-attention model that combines text and image for hostility detection, demonstrating that integrating visual and semantic information captures nuances that text alone does not reflect. [30] presented G-BERT, a hate speech detection model trained on Bengali that addresses the challenges of languages with limited computational resources. [31] analyzed emotional classification in code-mixed texts (Hinglish), and [32] designed a multitask model that links politeness and emotion in social interactions, thereby facilitating knowledge transfer across affective tasks.

Among recent studies, Nandi et al. [33] introduced SAFE-MEME, a structured reasoning model for detecting hate speech in memes that incorporates emotional attention and semantic relationships between text and images, thereby improving contextual interpretation. Chhabra and Vishwakarma [34] proposed MHS-STMA, a transformer-based framework with multilevel attention that simultaneously processes textual and visual modalities, achieving greater accuracy and robustness in the face of data noise. Complementarily, Chhabra and Vishwakarma [35] demonstrated that multiscale visual attention improves the detection of hostility in images with superimposed text, reaffirming the importance of integrating visual features to address implicit hate speech in multimodal content.

Furthermore, [36] evaluated various large language models (LLMs) for detecting hate speech in real-world settings, analyzing their generalizability and the influence of cultural context on decision-making. The authors found that while LLMs achieve high accuracy in supervised tasks, they tend to replicate social biases and over-identify neutral expressions as hostile. [37] extended this analysis to multilingual contexts with high semantic variability, where the models demonstrated inconsistencies in transferring hostility patterns between languages, reinforcing the need for control and calibration mechanisms. Finally, [38] examined the reactive responses of LLMs to offensive content, finding that these models reproduce stereotypes and degrade their performance with ambiguous or ironic texts, highlighting limitations in their reliability and algorithmic fairness.

Complementing this evolution towards hybrid and multimodal architectures, two recent systematic reviews consolidate the theoretical foundation of the present research. On the one hand, [39] conducted a literature review of hate speech detection on online social platforms using Machine Learning and Natural Language Processing, identifying the main techniques, datasets, and challenges in this field. On the other hand, we conducted a systematic review of emotional tone detection in hate speech, published in [22]. This latter study not only maps the current methods and challenges but also underscores the research opportunity addressed by the present work: the explicit integration of the emotional component to improve the accuracy and contextual understanding of automated moderation systems.

In summary, the analyzed works demonstrate an evolution towards hybrid and multimodal architectures, although most focus on general hostility detection in English. Given these limitations, the present study proposes a complementary approach. It uses an English corpus with over one million records, labeled according to Ekman’s six basic emotions. The model combines hate speech detection and emotional tone classification using a stacking scheme composed of three base configurations: a fine-tuned RoBERTa model, a Random Forest with TF-IDF features, and XGBoost with RoBERTa-generated embeddings. This approach contrasts contextual and statistical representations to improve the accuracy and interpretability of the results. Furthermore, the implementation in a RESTful API and a web client demonstrates its applicability in automated moderation processes and large-scale content analysis.

Taken together, the reviewed studies support incorporating the emotional component into hate detection. The proposed approach contributes to this line of research by integrating natural language processing, emotions, and gender inequality, aiming to develop more accurate and socially relevant automated moderation systems.

Several recent studies have provided fundamental theoretical and mathematical foundations for detecting hate speech using probabilistic models. In particular, [40] and [41] analyze classifier calibration and probabilistic prediction evaluation, highlighting the importance of adjusting model reliability via loss functions and decision thresholds. Complementarily, [17] and [18] propose Bayesian frameworks that allow for representing uncertainty in predictions and improving the stability of deep learning systems under variable conditions.

Furthermore, [42] and [43] delve deeper into cost-sensitive learning, introducing formulations that optimize the risk function in scenarios with imbalanced classes or decisions that involve different penalty levels. These perspectives, along with the regularization and error control strategies proposed by [19] and [20], provide the mathematical foundation for the approach used in this study.

The proposed model builds on these contributions by integrating a statistical calibration process into a hybrid classification framework based on trees and transformers. This formulation combines vector representations of the text (TF-IDF and contextual embeddings) with calibrated probabilistic estimates, seeking to optimize the accuracy, consistency, and reliability of the hostility and emotional tone detection system.

3. Materials and Methods

3.1. Dataset

We created a dataset comprising 1 236 371 records by combining three open-access .csv datasets from Kaggle for hate speech detection on social media. Each dataset contained the comment and a binary hate/non-hate label, as detailed in Table 1.

On the combined corpus, we applied the pre-trained RoBERTa-base-goemotions model to assign each comment one of Ekman’s six basic emotions (i.e., anger, fear, sadness, surprise, disgust, and joy) or a neutral label, as shown in Table 2. This resulted in a dataset with three columns: comment, hate speech label, and emotion label.
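To make the label mapping concrete, the following minimal sketch groups the fine-grained GoEmotions labels into Ekman's six emotions plus neutral. It assumes the grouping distributed with the GoEmotions dataset; the text does not list the exact mapping used in this study, so the dictionary and the helper name `to_ekman` are illustrative.

```python
# GoEmotions fine-grained labels grouped into Ekman's six basic emotions.
# Assumption: this follows the grouping released with the GoEmotions dataset;
# the exact mapping used in the study is not listed in the text.
EKMAN_GROUPS = {
    "anger": ["anger", "annoyance", "disapproval"],
    "disgust": ["disgust"],
    "fear": ["fear", "nervousness"],
    "joy": ["joy", "amusement", "approval", "excitement", "gratitude", "love",
            "optimism", "relief", "pride", "admiration", "desire", "caring"],
    "sadness": ["sadness", "disappointment", "embarrassment", "grief", "remorse"],
    "surprise": ["surprise", "realization", "confusion", "curiosity"],
}

# Invert the grouping so each fine-grained label points to its Ekman class.
FINE_TO_EKMAN = {
    fine: coarse for coarse, fines in EKMAN_GROUPS.items() for fine in fines
}


def to_ekman(label: str) -> str:
    """Map a GoEmotions label to an Ekman emotion; 'neutral' stays its own class."""
    if label == "neutral":
        return "neutral"
    return FINE_TO_EKMAN[label]
```

With this grouping, the 27 non-neutral GoEmotions labels collapse into six classes, which is one plausible reason why broad categories such as anger and joy dominate the resulting distribution.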

3.2. Preprocessing - ETL

For the extraction, transformation, and loading process, we applied the following steps: removal of duplicates and null values, character normalization to convert comments to lowercase, removal of non-textual characters (i.e., emojis, hashtags, and symbols), removal of stopwords, and lemmatization to reduce words to their base form. After completing the process, we obtained 1 003 991 clean records, which we used for training and validation.
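The cleaning steps above can be sketched as follows. This is a minimal illustration using only the standard library: the stopword list is a tiny hypothetical sample, lemmatization is omitted because it requires an external library, and the function names are assumptions rather than the code used in the study.

```python
import re

# Tiny illustrative stopword list; a real run would load a complete list
# from an NLP library.
STOPWORDS = {"a", "an", "the", "is", "are", "to", "of", "and", "in", "you"}


def clean_comment(text: str) -> str:
    """Lowercase, drop hashtags/emojis/symbols, and remove stopwords."""
    text = text.lower()
    text = re.sub(r"#\w+", " ", text)         # remove hashtags
    text = re.sub(r"[^a-z0-9\s]", " ", text)  # remove emojis and symbols
    tokens = [tok for tok in text.split() if tok not in STOPWORDS]
    return " ".join(tokens)  # lemmatization would be applied here


def clean_corpus(comments):
    """Drop null/empty comments and exact duplicates, then clean each one."""
    raw = [c for c in comments if c is not None and c.strip()]
    unique = list(dict.fromkeys(raw))  # keeps first occurrence, preserves order
    return [clean_comment(c) for c in unique]


print(clean_corpus(["You are THE worst!!! 😡 #hate", None, "Hi there", "Hi there"]))
```

Removing duplicates before normalization mirrors the order of steps listed in the text; deduplicating again after cleaning would also catch comments that differ only in case or punctuation.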

3.3. Exploratory Data Analysis - EDA

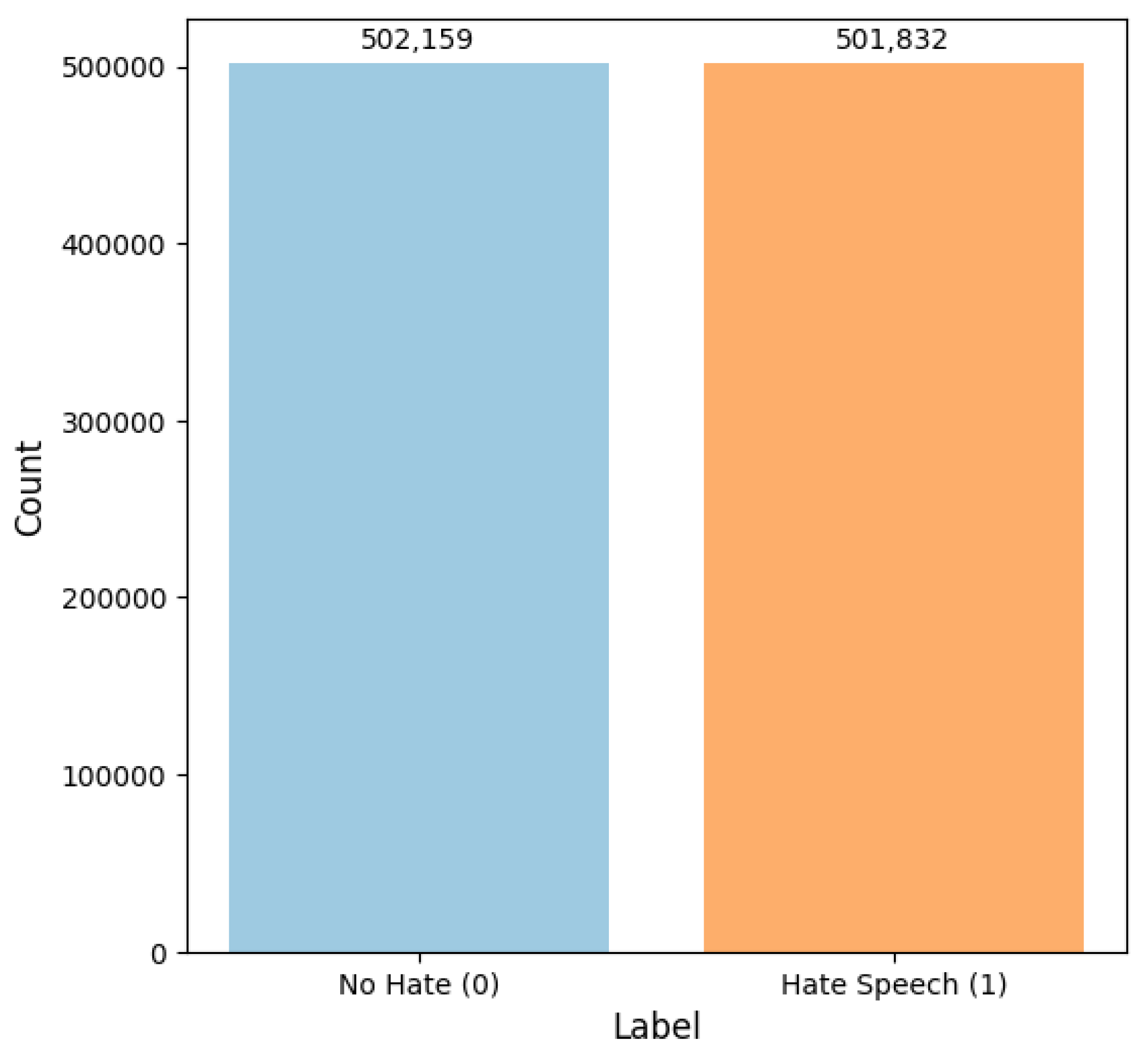

Using the clean dataset, we conducted exploratory data analysis to identify trends, patterns, label distributions, and potential biases. We evaluated the proportion of records classified as hate/non-hate and found the classes to be balanced: 502 159 records in the non-hate class and 501 832 in the hate class, as shown in Figure 1.

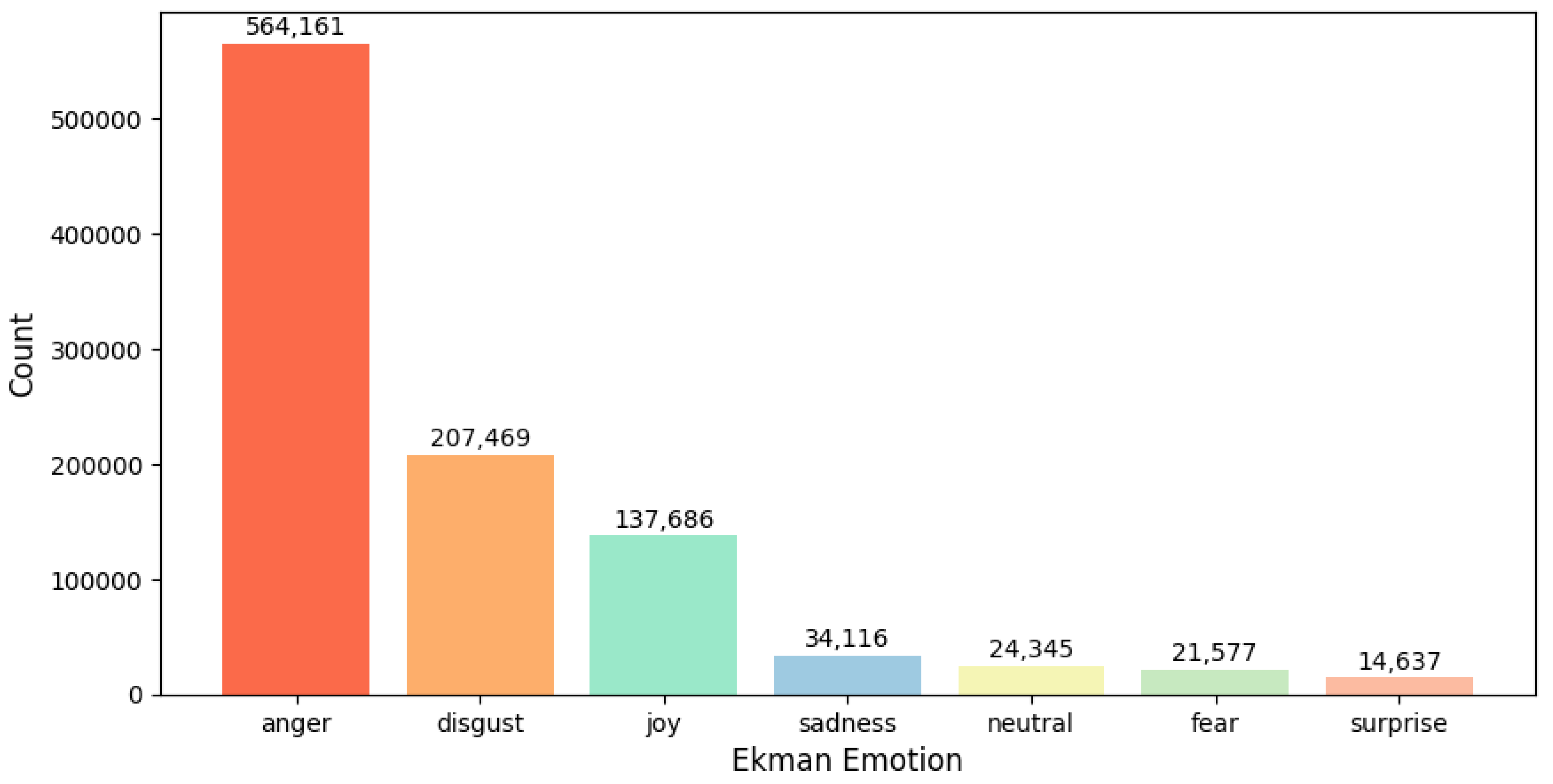

We also found an imbalance in the distribution of emotions in the dataset, as shown in Figure 2. The predominant emotions were anger (564 191), disgust (207 469), and joy (137 686), while the least represented were surprise (14 637), fear (21 577), and neutral (24 345). This imbalance between classes poses a challenge for model training.

3.4. Feature Representation

For the representation of textual features, we applied two approaches: TF-IDF vectors and contextual embeddings generated with RoBERTa.

3.5. Selection of NLP and ML Models

We implemented and compared traditional and modern NLP models. Among the transformer-based models, we selected RoBERTa because it is commonly used for text classification, natural language understanding, and emotion analysis. RoBERTa achieves better results than BERT [45], as noted by Liu et al. [15]: it eliminates the Next Sentence Prediction task, introduces dynamic masking, and trains on a larger corpus.

For traditional machine learning models, we selected Random Forest and XGBoost to compare bagging and boosting. Random Forest combines multiple decision trees using majority voting, whereas XGBoost implements gradient boosting with software and hardware optimizations designed to improve the model's efficiency, computational speed, and performance [46].

3.6. Model Training

In this section, we develop the model training process for both classifying emotional tone and detecting hate speech.

3.6.1. Training RoBERTa for Hate Speech

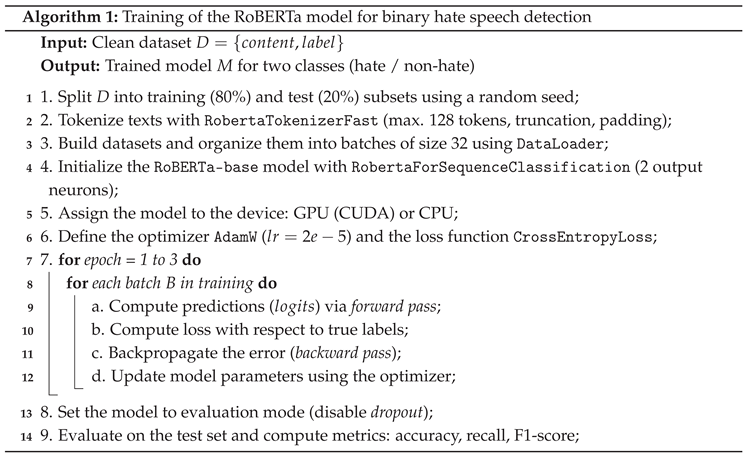

The RoBERTa-base model was trained in four phases: data preparation, dataset construction, model initialization, and training and evaluation. The training process is summarized in Algorithm 1.

In the first stage, we imported the clean dataset in .csv format, using the content (comments) and label (binary hate/non-hate labels) columns. Then, we divided the dataset into training (80 %) and test (20 %) subsets using a random seed. The texts were tokenized with RobertaTokenizerFast, truncating to a maximum of 128 tokens and using automatic padding to equalize the length of the shorter texts.

Using the tokenized data, we defined a custom TextDataset class, which organizes the text and labels into a PyTorch-compatible structure and stores the tensors generated by the tokenizer (input_ids and attention_mask). Training and test datasets were then built and organized into batches of 32 instances using DataLoader. The pre-trained RoBERTa-base model was initialized with the RobertaForSequenceClassification class, configured for binary classification. Before training, the available device (a CUDA GPU or the CPU) was detected for resource allocation.

In training, we used the AdamW optimizer with a learning rate of and the CrossEntropyLoss function. Training was performed over three epochs. In each iteration, we applied a forward pass to each batch to obtain model predictions in the form of logits, computed the loss, and performed a backward pass to update the parameters with the optimizer. Finally, we put the model into evaluation mode. Predictions and performance metric reports: accuracy, recall, and F1 score were generated on the test set.

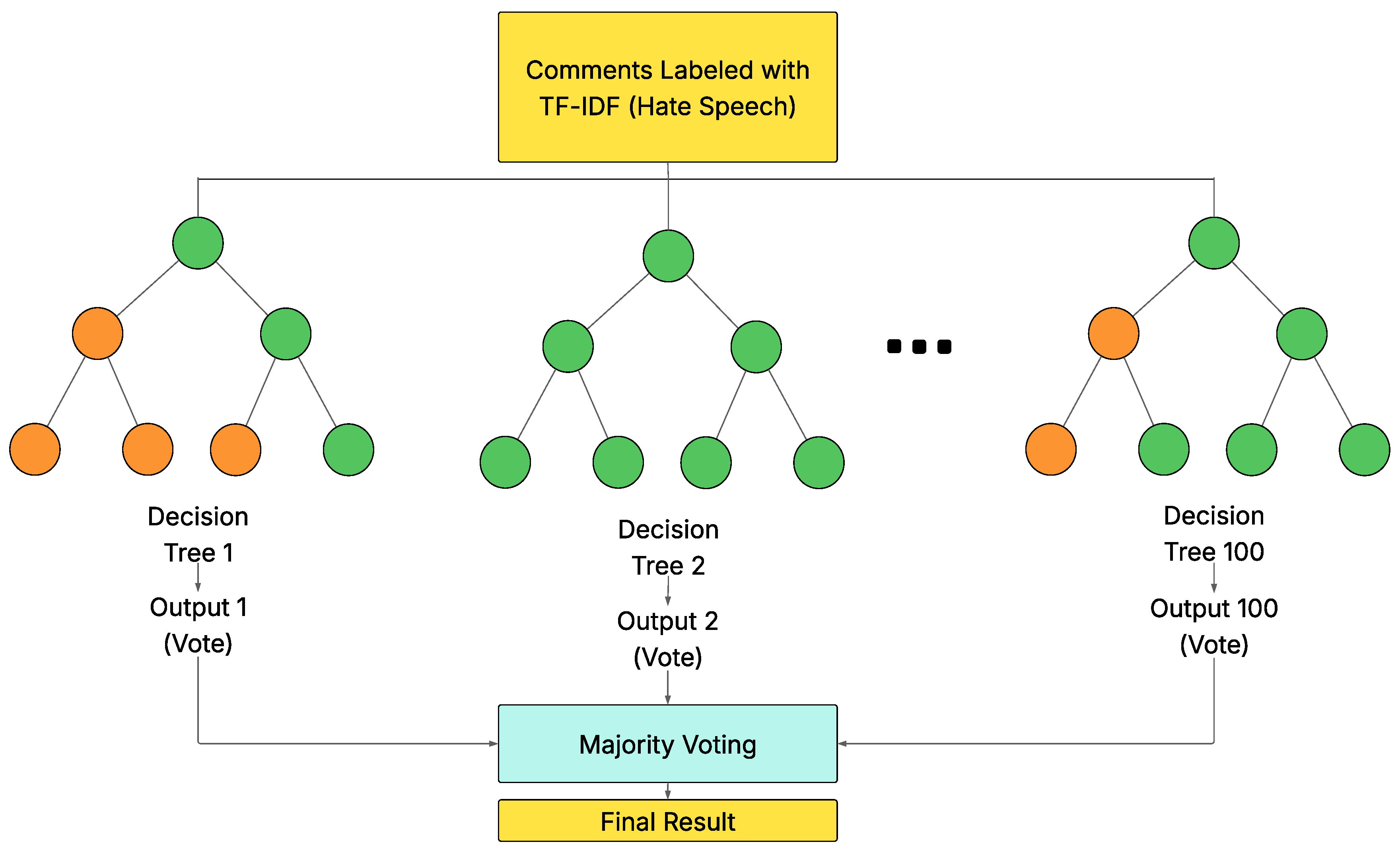

3.6.2. Training Random Forest with TF-IDF for Hate Speech

We trained the Random Forest model using the content (comments) and label (binary) columns of the dataset. We divided the records into training (80 %) and test (20 %) subsets using stratified sampling with random seeding to preserve the class proportions.

As mentioned above, we used the TF-IDF technique, limiting the vocabulary to 10 000 terms and incorporating combinations of unigrams and bigrams.

Regarding the model configuration, we established the following hyperparameters: (i) number of trees = 100, (ii) size of feature subset per tree , (iii) minimum node parameters by default (2 samples for splitting and 1 sample for leaf), and (iv) automatic class load balancing (class_weight="balanced") to avoid bias towards the majority class.

During training, each tree was independently fitted to a random sample of the data and features. The final prediction was obtained by majority vote, where each tree cast its class decision, and the result corresponded to the category with the highest number of votes, as shown in Figure 3.
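As an illustration of this configuration, the sketch below builds a TF-IDF plus Random Forest pipeline with scikit-learn using the hyperparameters stated above (10 000 terms, unigrams and bigrams, 100 trees, balanced class weights). The six toy comments and their labels are hypothetical, and the per-split feature subset size is left at the library default.

```python
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.pipeline import make_pipeline

# Hypothetical toy corpus standing in for the real dataset (1 = hate, 0 = non-hate).
texts = [
    "i hate those people", "what a lovely day", "they should all disappear",
    "great match last night", "those people are vermin", "thanks for the help",
]
labels = [1, 0, 1, 0, 1, 0]

model = make_pipeline(
    # Vocabulary capped at 10 000 terms, unigrams and bigrams.
    TfidfVectorizer(max_features=10_000, ngram_range=(1, 2)),
    # 100 trees with automatic class weighting; other settings are defaults.
    RandomForestClassifier(n_estimators=100, class_weight="balanced", random_state=42),
)
model.fit(texts, labels)

# Class probabilities are the averaged votes of the individual trees.
proba = model.predict_proba(["those people are awful"])
print(proba)
```

The `predict_proba` output, rather than the hard vote, is what a stacking metamodel would typically consume.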

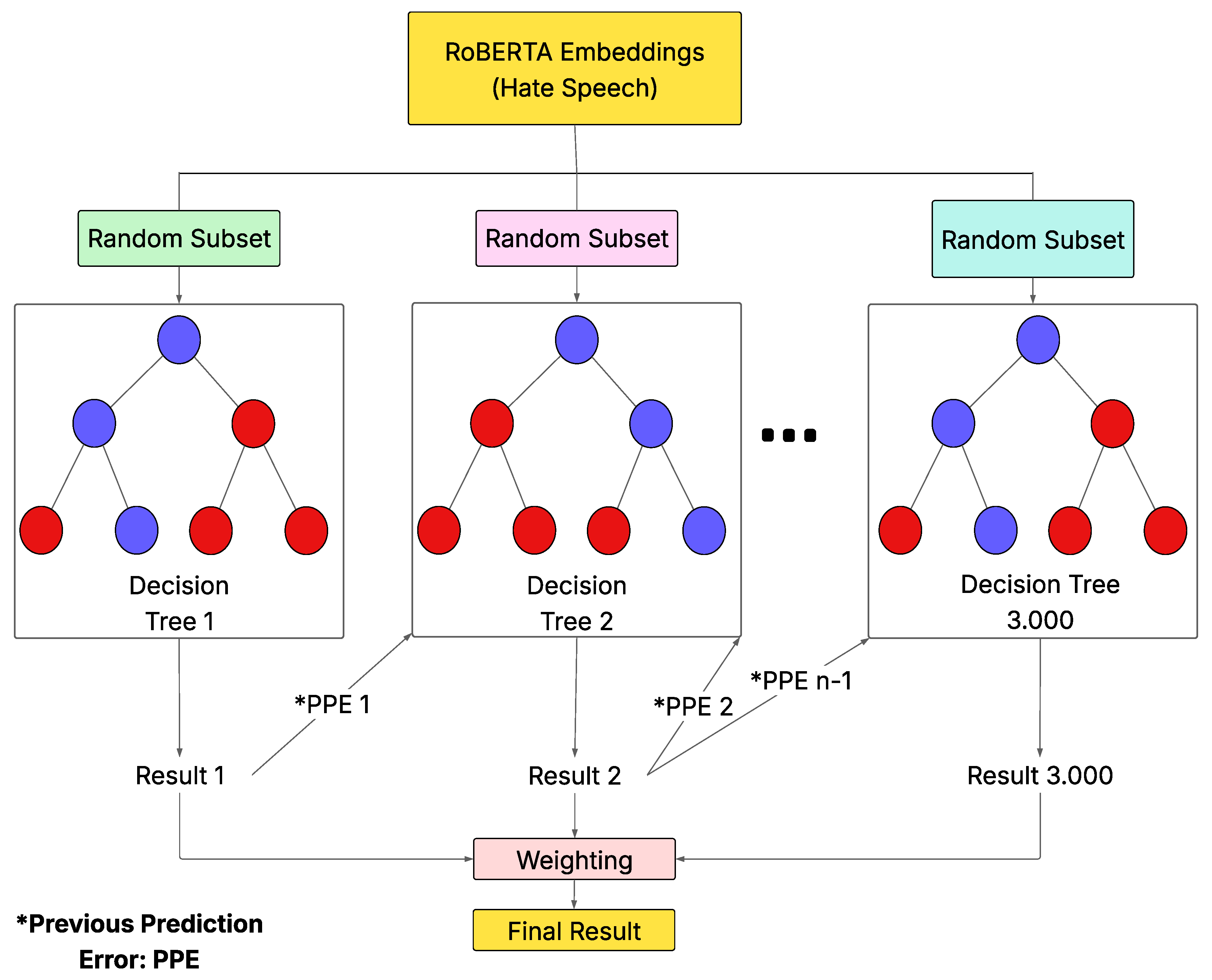

3.6.3. XGBoost Training with RoBERTa Embeddings for Hate Speech

We trained the model using comments and binary labels from the dataset. We split the records into 80 % training and 20 % test sets using stratified sampling with a random seed.

For feature representation, we used the pre-trained model twitter-roberta-base-sentiment, which generated 768-dimensional dense vectors from the classification token [CLS]. We used the embeddings as input for the XGBoost model.

We configured the model with the following hyperparameters: (i) maximum number of trees = 3000, with early stopping after 100 iterations without improvement; (ii) maximum depth of 12; (iii) learning rate = 0.0007; and (iv) random sampling of 90 % of the features per tree.

The decision trees were added sequentially, each correcting the errors of the previous trees (boosting). We obtained the final prediction from the weighted sum of the outputs of all the trained trees, as shown in Figure 4.
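For reference, the hyperparameters listed above could be written as follows, using the parameter names of the scikit-learn wrapper in the xgboost package. Mapping "90 % of the features per tree" to `colsample_bytree` is an assumption, as is the dictionary name.

```python
# Hyperparameters from the text, expressed with XGBClassifier parameter names.
# Assumption: per-tree feature sampling corresponds to colsample_bytree.
xgb_hate_params = {
    "n_estimators": 3000,          # maximum number of trees
    "max_depth": 12,               # maximum tree depth
    "learning_rate": 0.0007,       # shrinkage applied to each new tree
    "colsample_bytree": 0.9,       # 90 % of the features sampled per tree
    "early_stopping_rounds": 100,  # stop after 100 rounds without improvement
}

# Intended use (requires the xgboost package and a validation set):
#   clf = XGBClassifier(**xgb_hate_params)
#   clf.fit(X_train, y_train, eval_set=[(X_val, y_val)])
```

Early stopping needs an evaluation set at fit time; without one, all 3000 trees would be built.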

3.6.4. Assembled Model for Hate Speech Detection

One of the main contributions of this work was the construction of an ensemble model using the stacking technique to overcome the limitations of individual classifiers. Unlike approaches that manually assign weights to each model, the Gradient Boosting metamodel automatically learns how to combine the base predictions.

Thus, each individual model generated a probability vector for the two problem classes (hate and non-hate): (i) XGBoost worked with RoBERTa embeddings, (ii) Random Forest used TF-IDF vectors, and (iii) RoBERTa fine-tuned directly processed the tokenized text. These probabilities were concatenated horizontally, yielding an input matrix with six columns (two per model), which was used to train the metamodel.

We configured the Gradient Boosting metamodel with 100 trees, a learning rate of 0.1, and a maximum depth of 3. With this strategy, the stacked metamodel achieved higher metrics than any of the individual models.
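The stacking step can be sketched as below. The base-model probabilities are simulated with synthetic noise (the helper `fake_base_probs` is hypothetical), so the example only illustrates how the six-column matrix is assembled and passed to a Gradient Boosting metamodel with the stated settings.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingClassifier

rng = np.random.default_rng(42)
n = 200
y = rng.integers(0, 2, size=n)  # synthetic hate (1) / non-hate (0) labels


def fake_base_probs(y, noise):
    """Simulate [P(non-hate), P(hate)] outputs of one base model (synthetic)."""
    p_hate = np.clip(y + rng.normal(0.0, noise, size=y.shape), 0.01, 0.99)
    return np.column_stack([1.0 - p_hate, p_hate])


p_xgb = fake_base_probs(y, 0.45)      # stands in for XGBoost on embeddings
p_rf = fake_base_probs(y, 0.50)       # stands in for Random Forest on TF-IDF
p_roberta = fake_base_probs(y, 0.35)  # stands in for fine-tuned RoBERTa

# Horizontal concatenation: two probability columns per base model.
X_meta = np.hstack([p_xgb, p_rf, p_roberta])  # shape (n, 6)

meta = GradientBoostingClassifier(
    n_estimators=100, learning_rate=0.1, max_depth=3, random_state=42
)
meta.fit(X_meta[:150], y[:150])
holdout_acc = meta.score(X_meta[150:], y[150:])
print(X_meta.shape, round(holdout_acc, 3))
```

In practice, the metamodel is usually trained on predictions that the base models made for data they did not see during their own training (for example, out-of-fold predictions), which limits leakage; the text does not state which variant was used.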

3.6.5. RoBERTa Training for Emotional Tone Classification

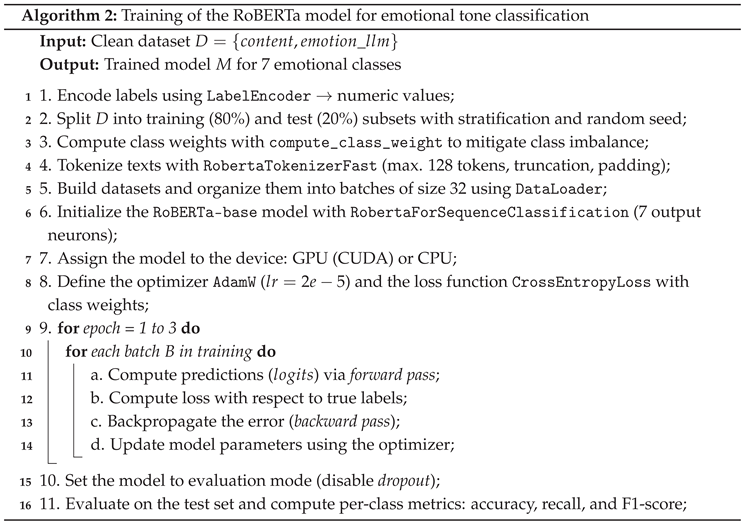

We trained the RoBERTa-base model for multiclass emotion classification in four phases: label encoding, data preparation, model initialization, and training and evaluation. The training flow is summarized in Algorithm 2.

In the first stage, we performed supervised training of the RoBERTa-base model on the seven categories corresponding to Ekman’s emotions plus neutral (emotion_llm). We used the content (comments) and emotion classes from the dataset. We transformed the labels into numerical values using LabelEncoder, then split the data into training (80 %) and test (20 %) subsets with stratified sampling and random seeding to preserve the original proportions of each emotion.

Given the class imbalance, we calculated specific weights for each emotion using compute_class_weight, which assigns greater weight to minority classes. The text was tokenized with a maximum length of 128 tokens using RobertaTokenizerFast, with automatic truncation and padding, and then organized into batches of 32 instances using DataLoader.
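To show how strongly such weights differ across emotions, the sketch below applies the "balanced" heuristic used by scikit-learn's `compute_class_weight`, n_samples / (n_classes × class_count), to the counts reported in the exploratory analysis. The sadness count is not stated in the text and is assumed here to be the remainder of the 1 003 991 clean records; the real weights would be computed on the training split only.

```python
# Emotion counts reported in the exploratory data analysis.
counts = {
    "anger": 564_191, "disgust": 207_469, "joy": 137_686,
    "neutral": 24_345, "fear": 21_577, "surprise": 14_637,
}
total = 1_003_991

# Assumption: the unreported "sadness" count is the remainder of the corpus.
counts["sadness"] = total - sum(counts.values())

# "Balanced" heuristic: n_samples / (n_classes * n_samples_in_class).
n_classes = len(counts)
weights = {emo: total / (n_classes * n) for emo, n in counts.items()}

for emo, w in sorted(weights.items(), key=lambda kv: kv[1]):
    print(f"{emo:9s} {w:6.3f}")
```

Under these assumptions the rarest class (surprise) receives a weight roughly 40 times larger than the most frequent one (anger), which is the effect the weighted CrossEntropyLoss relies on.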

Next, we configured the RobertaForSequenceClassification model with seven neurons in the output layer, corresponding to the number of emotions. We automatically assigned the model to the available computing device, running it on either a GPU (CUDA) or a CPU. For training, we used the AdamW optimizer with a learning rate of and the CrossEntropyLoss function, adjusted with class weights. Training was conducted over three epochs; in each batch, we applied a forward pass, loss calculation, backpropagation, and parameter updates.

Finally, we put the model into evaluation mode to generate predictions on the test set. Performance metrics included accuracy, recall, and F1-score per class to assess the model’s ability to distinguish between the different emotions present in hate speech.

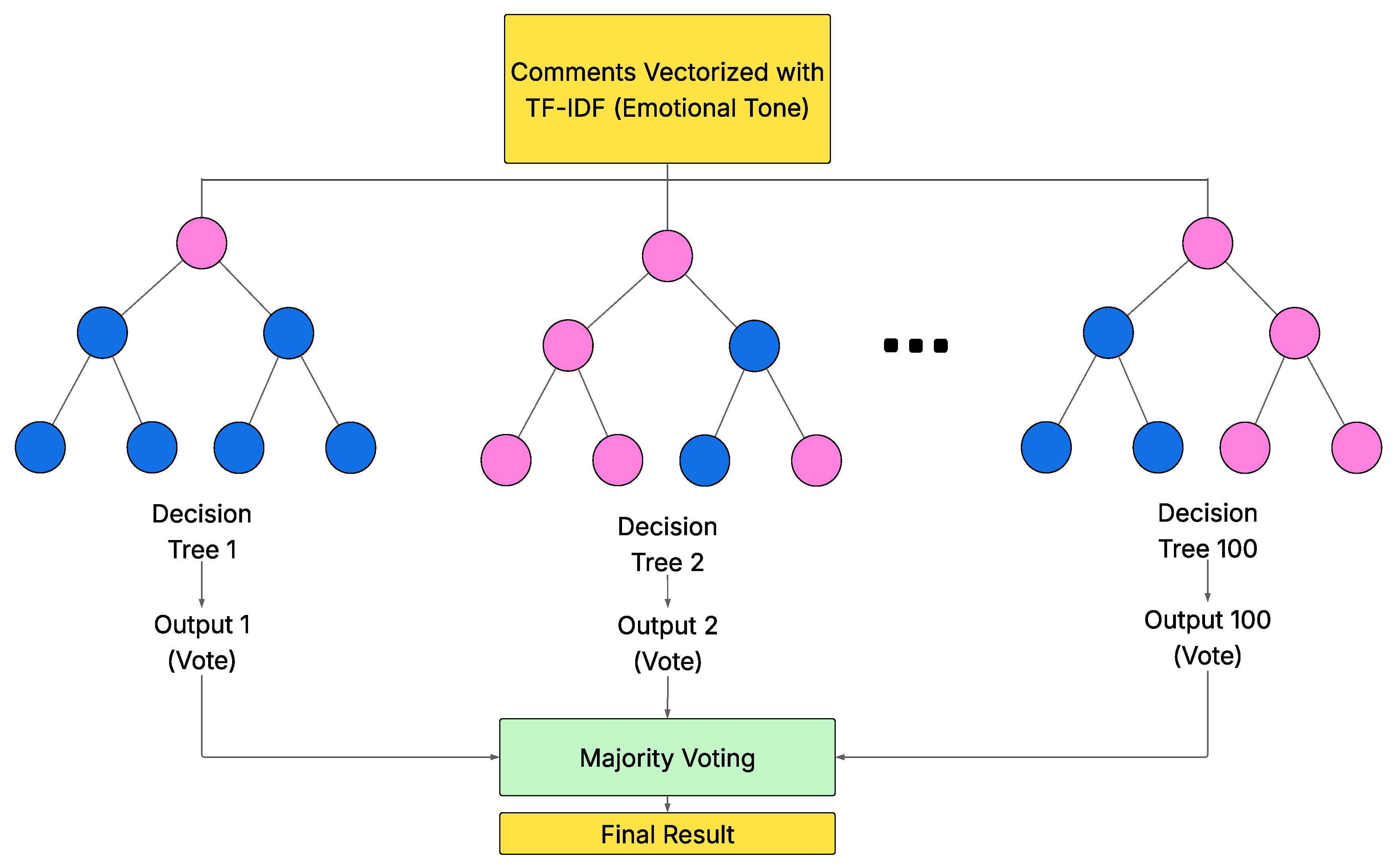

3.6.6. Training Random Forest with TF-IDF for Emotional Tone Classification

We trained a Random Forest model for multiclass emotion classification using the content (comments) and emotion_llm (emotions) columns from the dataset. We encoded emotions as numerical values using LabelEncoder, then split the corpus into training (80 %) and test (20 %) subsets using random stratified sampling.

Due to class imbalance, we calculated class-specific weights using compute_sample_weight, assigning higher weights to minority emotions. For feature representation, we transformed the comments into numerical vectors using TF-IDF, limiting the vocabulary to 10 000 terms and using unigrams and bigrams.

We configured the model with the following hyperparameters: (i) 100 decision trees, (ii) a random subset of , (iii) default parameters for minimum nodes (two samples for splits, one for leaves), and (iv) class balancing using calculated weights.

During training, the trees were built in parallel on different random samples of the dataset and features. The final prediction was obtained through a majority vote, in which each tree cast a class decision, and the emotion with the most votes was selected as the model output, as shown in Figure 5.

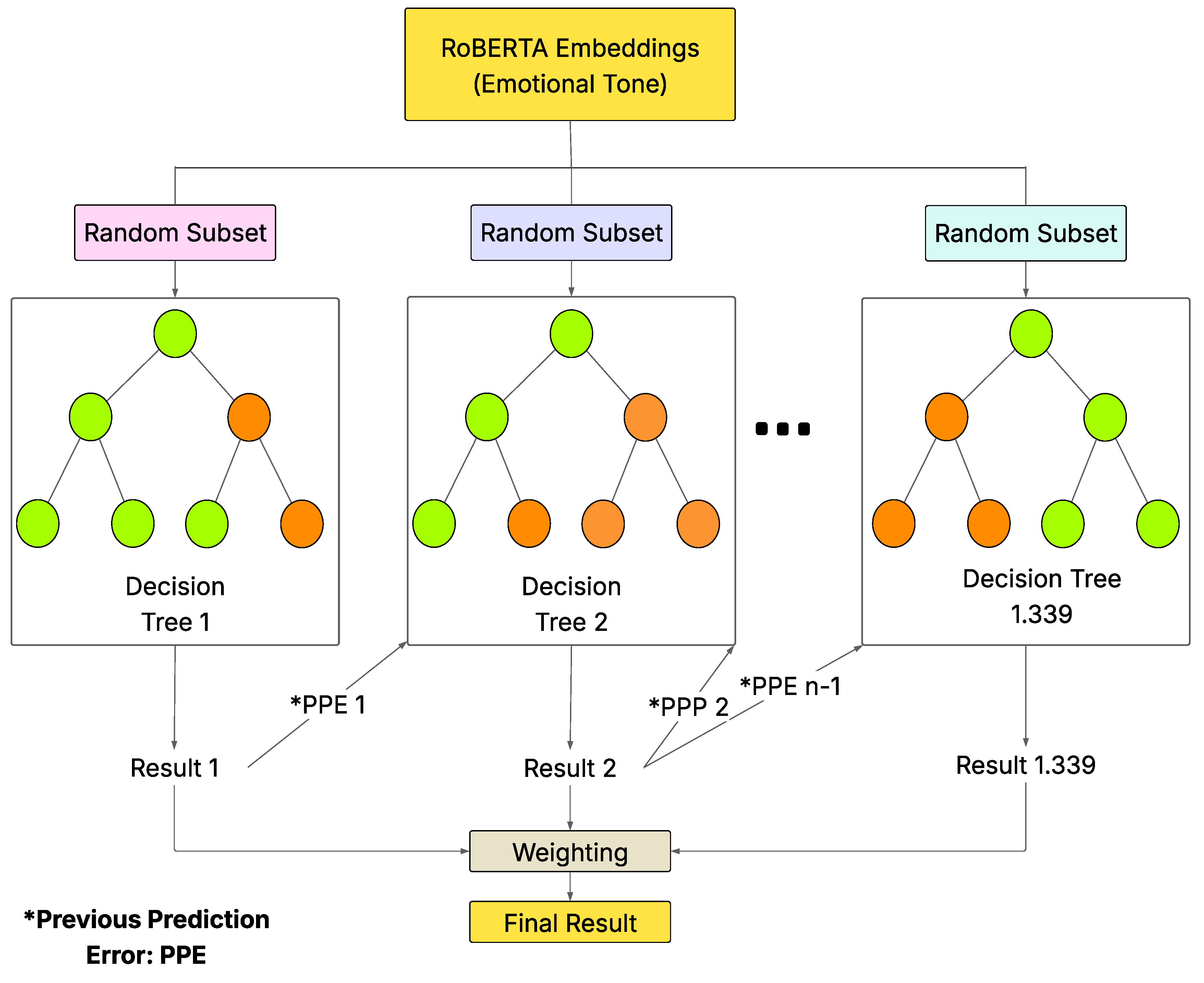

3.6.7. XGBoost Training with RoBERTa Embeddings for Emotional Tone Classification

We trained an XGBoost model for the multi-class emotion classification task using the content (comments) and emotion_llm (emotions) columns from the dataset. We transformed the emotions into numerical values using LabelEncoder and divided the dataset into training (80 %) and test (20 %) subsets using stratified sampling with a random seed.

To mitigate the imbalance between categories, we calculated specific weights using compute_sample_weight. As a feature representation, we converted the comments into 768-dimensional vectors using the pre-trained model twitter-roberta-base-emotion-multilabel-latest, and used the [CLS] token’s output as the contextual embedding.

We configured the classifier with the following hyperparameters: (i) a maximum of 2000 decision trees with early stopping after 100 iterations without improvement, (ii) a maximum depth of 12 levels, (iii) a learning rate of 0.007, and (iv) random sampling of 90 % of the features in each tree.

During training, the trees were added sequentially, so that each iteration corrected errors in the previous model. Training converged at iteration 1339, without needing to use all the defined trees. We obtained the prediction from the sum of the outputs of all the trees, as shown in Figure 6.

3.6.8. Assembled Model for Emotional Classification

Based on models previously trained for emotional tone classification, we constructed a Gradient Boosting metamodel using a stacking approach to combine individual predictions and improve generalization. Each base model generated a probability vector for the seven emotional classes: (i) XGBoost using RoBERTa embeddings, (ii) Random Forest with TF-IDF representations, and (iii) RoBERTa fine-tuned using tokenized text.

We horizontally concatenated the output of the three base models to obtain an input matrix with 21 columns (seven probabilities per model). We used this matrix as the training set for the Gradient Boosting metamodel, configured with 100 trees, a learning rate of 0.1, and a maximum depth of 3.

The stacked metamodel outperformed the individual models by automatically learning the most effective combination of their predictions, which improved the accuracy, recall, and F1-score metrics.

3.6.9. Mathematical Preparation of the Bayesian Calibration and Optimal Design Under Asymmetric Risk (BACON-AR) Framework

In this work, we introduce the BACON-AR framework, a structured post-hoc decision framework that operates as a mathematical layer applied after model training [17,18,19,20]. First, it adjusts predicted probabilities through Bayesian calibration; second, it determines an optimal decision threshold by minimizing a cost-sensitive total risk function. Although the individual components of probabilistic calibration and asymmetric decision theory are well established in the literature, their structured integration into a unified and reproducible workflow constitutes the methodological contribution of this study.

It is important to clarify that the BACON-AR framework is not a new learning model and does not modify the trained architecture [17,18,19,20]. Instead, it serves as a probabilistic decision framework applied to the ensemble classifier’s outputs, ensuring consistency between predicted confidence and real-world decision costs.

The Bayesian calibration step is defined as:

where

and

represent the empirical class proportions observed in the validation set. This adjustment aligns predicted confidence with observed frequencies while preserving the discriminative capacity of the underlying classifier.

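The total asymmetric risk function is defined as:

$$R(t) = C_{FN}\,\mathrm{FNR}(t) + C_{FP}\,\mathrm{FPR}(t),$$

where $\mathrm{FNR}(t)$ and $\mathrm{FPR}(t)$ denote the empirical false negative and false positive rates at threshold $t$, respectively, and $C_{FN}$ and $C_{FP}$ are the costs assigned to each type of error. The optimal decision threshold is obtained through:

$$t^{*} = \arg\min_{t \in [0,1]} R(t).$$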

Although global performance metrics such as AUC, recall, and precision may remain numerically stable after applying BACON-AR, this stability reflects the robustness of the ensemble classifier rather than the absence of impact. In contexts such as hate speech detection, where false negatives entail greater social and ethical consequences than false positives, the standard threshold of 0.5 does not adequately reflect asymmetric costs. Therefore, minimizing the total risk function provides a principled mechanism for cost-aware decision-making.
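A minimal sketch of cost-aware threshold selection is given below. It assumes a risk of the form c_fn · FNR(t) + c_fp · FPR(t) evaluated on a grid of thresholds; the cost values (5 and 1), the grid, and the function names are hypothetical choices for illustration and are not taken from the study.

```python
import numpy as np


def total_risk(y_true, p_hat, t, c_fn=5.0, c_fp=1.0):
    """Cost-weighted risk at threshold t: c_fn * FNR(t) + c_fp * FPR(t)."""
    y_true = np.asarray(y_true)
    pred = np.asarray(p_hat) >= t          # predicted hate at threshold t
    pos, neg = y_true == 1, y_true == 0
    fnr = float(np.mean(~pred[pos])) if pos.any() else 0.0  # missed hate
    fpr = float(np.mean(pred[neg])) if neg.any() else 0.0   # false alarms
    return c_fn * fnr + c_fp * fpr


def optimal_threshold(y_true, p_hat, grid=None, **costs):
    """Return (threshold, risk) minimizing the total risk over a grid."""
    grid = np.linspace(0.01, 0.99, 99) if grid is None else np.asarray(grid)
    risks = np.array([total_risk(y_true, p_hat, t, **costs) for t in grid])
    best = int(risks.argmin())
    return float(grid[best]), float(risks[best])


# Toy validation set: one hateful comment scores below 0.5.
y_val = [0, 0, 0, 1, 1, 1]
p_val = [0.12, 0.33, 0.62, 0.41, 0.74, 0.93]

t_star, r_star = optimal_threshold(y_val, p_val)
print(t_star, r_star, total_risk(y_val, p_val, 0.5))
```

In this toy case the selected threshold falls below 0.5: accepting one extra false positive is cheaper than missing a hateful comment once false negatives are weighted more heavily.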

The ensemble classifier (RoBERTa, Random Forest, and XGBoost) achieved strong predictive performance, including high accuracy, recall, and AUC values. Nevertheless, small discrepancies were observed between predicted probabilities and empirical outcomes. These deviations, characteristic of complex probabilistic models, motivated the implementation of a structured recalibration procedure to improve alignment between confidence estimates and observed frequencies.

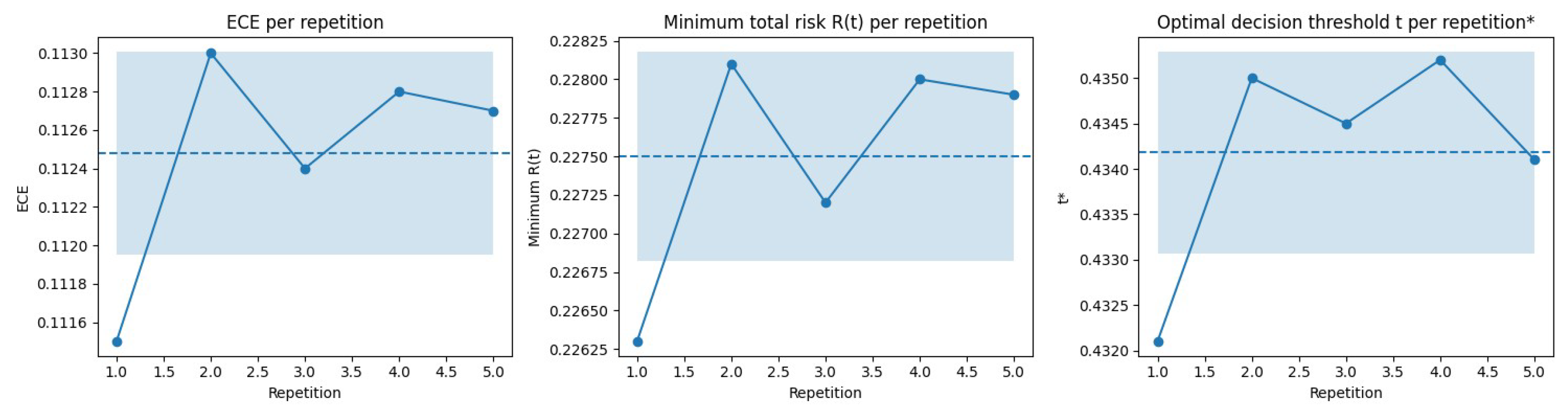

To ensure statistical validity, each experiment was executed five times using independent random partitions with an 80/20 training-validation split. The reported values for Expected Calibration Error (ECE) and total risk correspond to the mean of these repetitions, including the standard deviation to quantify dispersion. This practice reinforces the reliability and reproducibility of the framework.
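For readers unfamiliar with the metric, the following sketch computes a binned Expected Calibration Error for a binary classifier: predictions are grouped into equal-width probability bins, and the gap between the mean predicted probability and the observed positive rate is averaged with bin-size weights. The choice of ten bins and the function name are assumptions.

```python
import numpy as np


def expected_calibration_error(y_true, p_hat, n_bins=10):
    """Bin-size-weighted mean of |observed positive rate - mean predicted prob|."""
    y_true = np.asarray(y_true, dtype=float)
    p_hat = np.asarray(p_hat, dtype=float)
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    # Interior edges give bin indices 0 .. n_bins - 1 (p = 1.0 lands in the last bin).
    idx = np.digitize(p_hat, edges[1:-1])
    ece = 0.0
    for b in range(n_bins):
        mask = idx == b
        if mask.any():
            gap = abs(y_true[mask].mean() - p_hat[mask].mean())
            ece += mask.mean() * gap  # weight by the share of samples in the bin
    return float(ece)


# Toy example: the model is under-confident by 0.2 in both occupied bins.
print(expected_calibration_error([0, 0, 1, 1], [0.2, 0.2, 0.8, 0.8]))
```

Averaging this value over the five repeated partitions, together with its standard deviation, gives the kind of summary described above.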

To evaluate the effectiveness of the BACON-AR framework, classical calibration techniques such as Platt Scaling and Isotonic Regression were also analyzed. While overall accuracy remained comparable, these traditional methods exhibited greater variability in Expected Calibration Error under asymmetric cost conditions. This observation aligns with [47], who note that conventional calibration methods may lose stability in cost-sensitive or imbalanced scenarios.

The robustness of the BACON-AR framework was further assessed across repeated validation splits, yielding stable estimates of calibration error, minimum risk, and optimal threshold selection. These results confirm that the framework provides consistent probabilistic alignment and cost-aware decision optimization without altering the original classifier architecture.

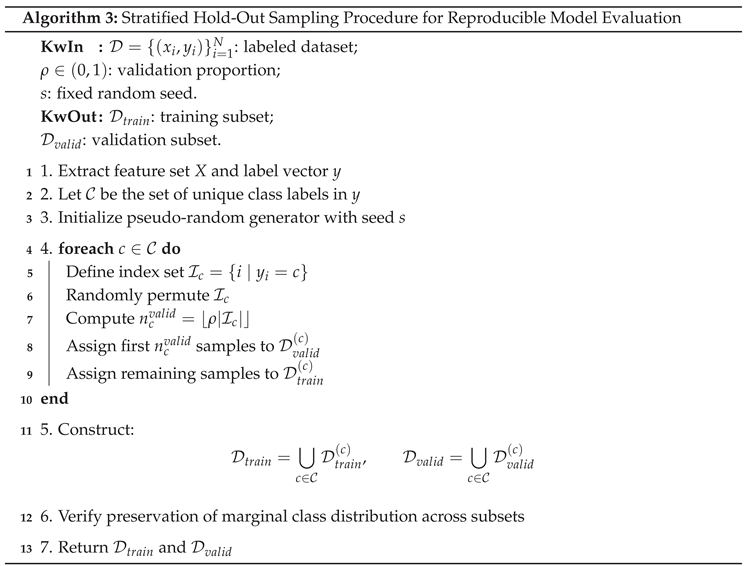

3.6.10. Cross Validation and Reproducibility

To ensure consistency and generalizability of the results, a reproducible validation scheme was implemented using a fixed random seed (random_state = 42). The dataset was divided into an 80/20 split for training and validation, and the procedure was repeated five times with different random partitions.

The reported values for precision, recall, Expected Calibration Error (ECE), and total risk correspond to the average of these repetitions, along with their standard deviation, ensuring statistical stability in accordance with [48].

The stratified hold-out sampling procedure was implemented to preserve the marginal distribution of the target variable across training and validation subsets. This strategy minimizes class imbalance distortions during model evaluation and maintains statistical consistency between partitions.

The randomization process was controlled using a fixed seed to ensure full experimental reproducibility. The procedure follows the stratified sampling methodology implemented in the Scikit-learn framework [49], which is widely adopted in machine learning evaluation protocols.

The 80/20 ratio ensures an appropriate balance between learning capacity and evaluation reliability, minimizing overfitting while preserving class representativeness through the stratify parameter.

The seed random_state=42 guarantees reproducible data partitioning within the Google Colab environment, adhering to the principles of experimental transparency and reproducibility outlined in [48].
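The stratified 80/20 hold-out with a fixed seed can be illustrated with a minimal, dependency-free sketch. It is not the authors' code: the text indicates that the experiments rely on Scikit-learn's `train_test_split` with the `stratify` and `random_state` parameters, whereas the hypothetical helper `stratified_split` below reimplements the idea with the standard library only. The toy labels and the way the five partitions are derived from the base seed (`42 + repetition`) are assumptions made for illustration.

```python
import random
from collections import defaultdict
from statistics import mean, stdev


def stratified_split(labels, test_ratio=0.2, seed=42):
    """Return (train_idx, val_idx) preserving the class proportions of `labels`."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    train_idx, val_idx = [], []
    for label in sorted(by_class):
        indices = by_class[label]
        rng.shuffle(indices)  # shuffle inside each class, then cut 20 % for validation
        n_val = round(len(indices) * test_ratio)
        val_idx.extend(indices[:n_val])
        train_idx.extend(indices[n_val:])
    return train_idx, val_idx


# Toy labels (assumption): 70 % non-hate (0) and 30 % hate (1).
labels = [0] * 700 + [1] * 300

# Five repetitions, each with a seed derived from the base seed 42 (assumption).
positive_rates = []
for repetition in range(5):
    train_idx, val_idx = stratified_split(labels, test_ratio=0.2, seed=42 + repetition)
    positive_rates.append(sum(labels[i] for i in val_idx) / len(val_idx))

# Mean and standard deviation across repetitions, as reported for ECE and risk.
summary = (mean(positive_rates), stdev(positive_rates))
```

Because the cut is made inside each class, the share of positive records in the validation subset is the same in every repetition, which is the property the stratify parameter is meant to guarantee.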

All experiments were conducted in the Google Colab cloud computing environment, using an NVIDIA Tesla T4 GPU (16 GB VRAM) with 12 GB RAM, running Python 3.10, Scikit-learn 1.4, NumPy 1.26, and Matplotlib 3.8. This computational setup facilitates independent replication of the BACON-AR probabilistic calibration and risk optimization procedure.

For future research, a broader K-fold cross-validation strategy is planned, incorporating a fully stratified evaluation scheme across the entire dataset, following the recommendations of [50]. This approach will provide a more precise estimation of calibration variability and risk stability, further strengthening the empirical robustness of the BACON-AR framework.

3.7. RESTful API Development

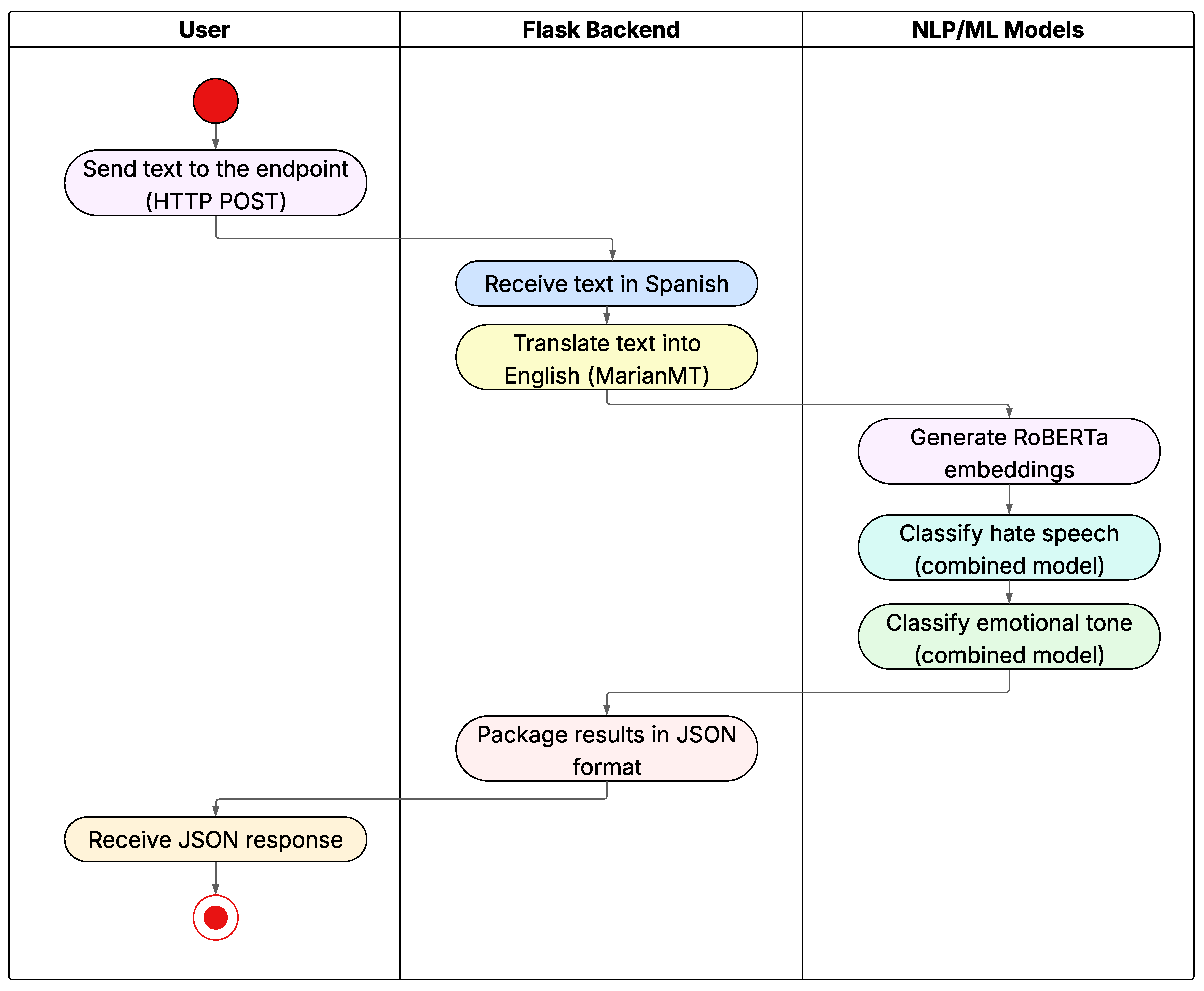

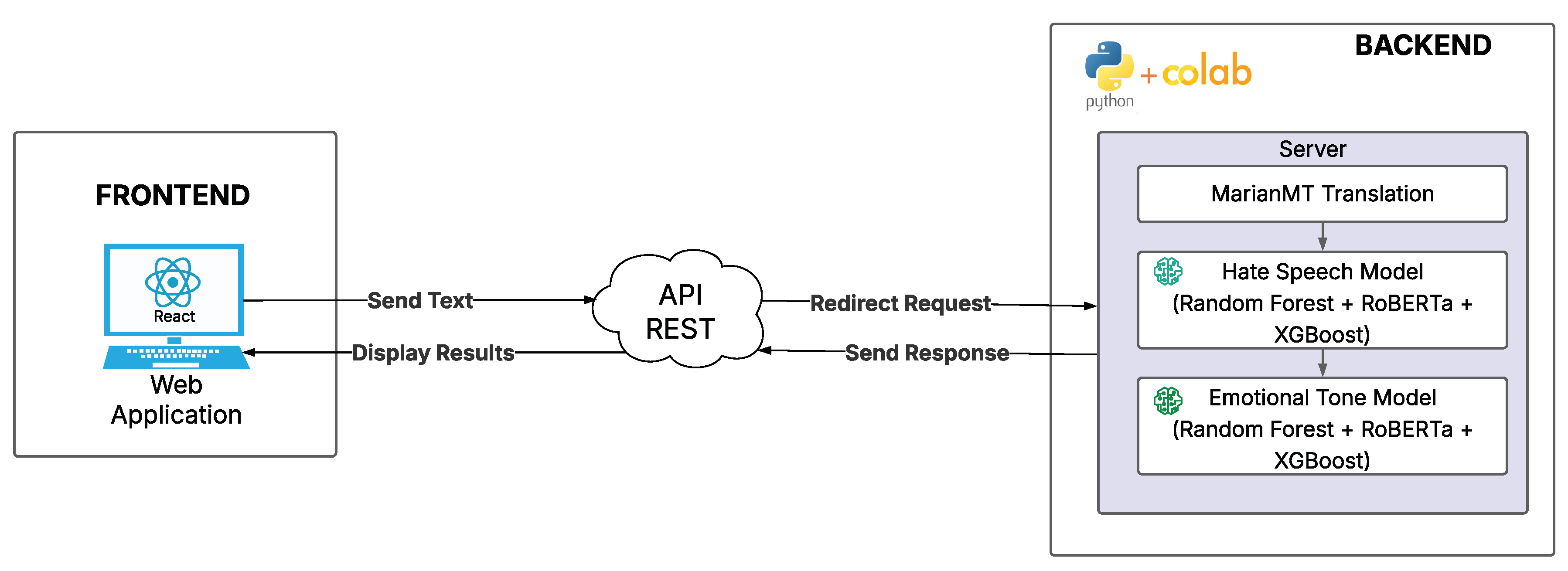

For the development of the web service, we used the Flask framework to implement the system’s backend. We deployed the application in a Google Colab environment and exposed a port using Ngrok to generate a public URL and facilitate remote access to the API.

We designed a RESTful API with an endpoint named /analyze that receives HTTP POST requests containing the user-provided input text. The processing flow consists of: (i) automatic translation of the text into English using the MarianMT model, (ii) generation of vector representations through RoBERTa embeddings, and (iii) analysis with previously trained models for hate speech detection and emotional tone classification.

Finally, we packaged the results into a JSON object and returned it to the user as the API response.
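The three-step flow behind /analyze can be sketched without any web framework. This is an illustrative outline, not the deployed service: the functions `translate_to_english`, `embed`, `predict_hate`, and `predict_emotion` are hypothetical placeholders standing in for MarianMT, RoBERTa embeddings, and the trained classifiers, and the JSON field names are assumptions. In the actual system, a Flask route would wrap a function of this kind.

```python
import json


def translate_to_english(text):
    """Placeholder for the MarianMT translation step (returns the text unchanged)."""
    return text


def embed(text):
    """Placeholder for RoBERTa embeddings: a toy two-number feature vector."""
    return [float(len(text)), float(text.count("!"))]


def predict_hate(features):
    """Placeholder hate speech classifier (toy rule, not a trained model)."""
    return "Hate" if features[1] >= 3 else "Not Hate"


def predict_emotion(features):
    """Placeholder emotional tone classifier (toy rule, not a trained model)."""
    return "anger" if features[1] >= 3 else "neutral"


def analyze(payload):
    """Run the three-step flow on a request body; return (JSON body, HTTP status)."""
    text = payload.get("text")
    if not isinstance(text, str) or not text.strip():
        return json.dumps({"error": "field 'text' is required"}), 400
    english = translate_to_english(text)       # step (i): translation
    features = embed(english)                  # step (ii): vector representation
    result = {                                 # step (iii): both classifiers
        "text": text,
        "translated_text": english,
        "hate_speech": predict_hate(features),
        "emotion": predict_emotion(features),
    }
    return json.dumps(result), 200


body, status = analyze({"text": "hello world"})
```

Keeping the pipeline in a plain function of this kind makes it possible to test the processing flow separately from the HTTP layer and the Ngrok tunnel.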

Figure 7 shows the activity diagram corresponding to the described system flow.

3.8. Development of a Web Client and Functional Validation in a Simulated Environment

To verify the models’ usability in an interaction flow close to real-world use, we developed a prototype web client that operates as a minimum viable social network. The system allows users to register, log in, post messages, and hold conversations. Each text is analyzed by the classification API before being displayed, enabling proactive moderation based on hate speech predictions and the associated emotional tone.

3.8.1. System Architecture and Design Pattern

We adopted a client-server architecture: the client (i.e., web application) sends analysis requests to the API and displays the response in the interface. To structure the client code, we followed the Model-View-Controller (MVC) pattern, separating the visual representation, interaction logic, and data handling. Information generated by application usage (i.e., users, posts, and moderation metrics) is managed in a non-relational database in the cloud (Firebase) to ensure availability, low latency, and horizontal scalability (see Figure 8).

3.9. Web Client Implementation

We implemented the interface in React, leveraging its declarative state management and protected routing to maintain the security of sensitive views (e.g., the admin panel). We handled authentication using Firebase’s Authentication module (email/password), which stores credentials as hashes and prevents their direct exposure in the database. We accessed data using controllers that encapsulate CRUD operations and communicated with the Flask backend via the REST API.

3.9.1. Preventive Moderation Flow

Before publishing text, the client sends the content to the API. If the response classifies the message as Not Hate, we publish the content without restrictions. If the prediction is Hate, we block publication, and the user receives a notification with a warning and a reflection aimed at promoting responsible language use, including the dominant emotion identified by the model. With this mechanism, we reduce third-party exposure to hostile content and offer the author the option to edit or remove the message.
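The decision taken by the client can be summarized in a few lines. The sketch below is written in Python for consistency with the backend, although the actual client is a React application; the function name `moderate`, the response fields, and the wording of the notice are assumptions. Treating any label other than Not Hate as blocked is also an assumption (a fail-closed choice), not something the text specifies.

```python
def moderate(api_response):
    """Map an API response to a publishing decision for the client."""
    label = api_response["hate_speech"]
    if label == "Not Hate":
        # Content is published without restrictions.
        return {"publish": True, "notice": None}
    # Any other label blocks publication (fail-closed assumption).
    emotion = api_response.get("emotion", "unknown")
    notice = (
        "Your message was not published because it was classified as hate speech "
        f"(dominant emotion: {emotion}). You can edit or remove it."
    )
    return {"publish": False, "notice": notice}


allowed = moderate({"hate_speech": "Not Hate", "emotion": "joy"})
blocked = moderate({"hate_speech": "Hate", "emotion": "anger"})
```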

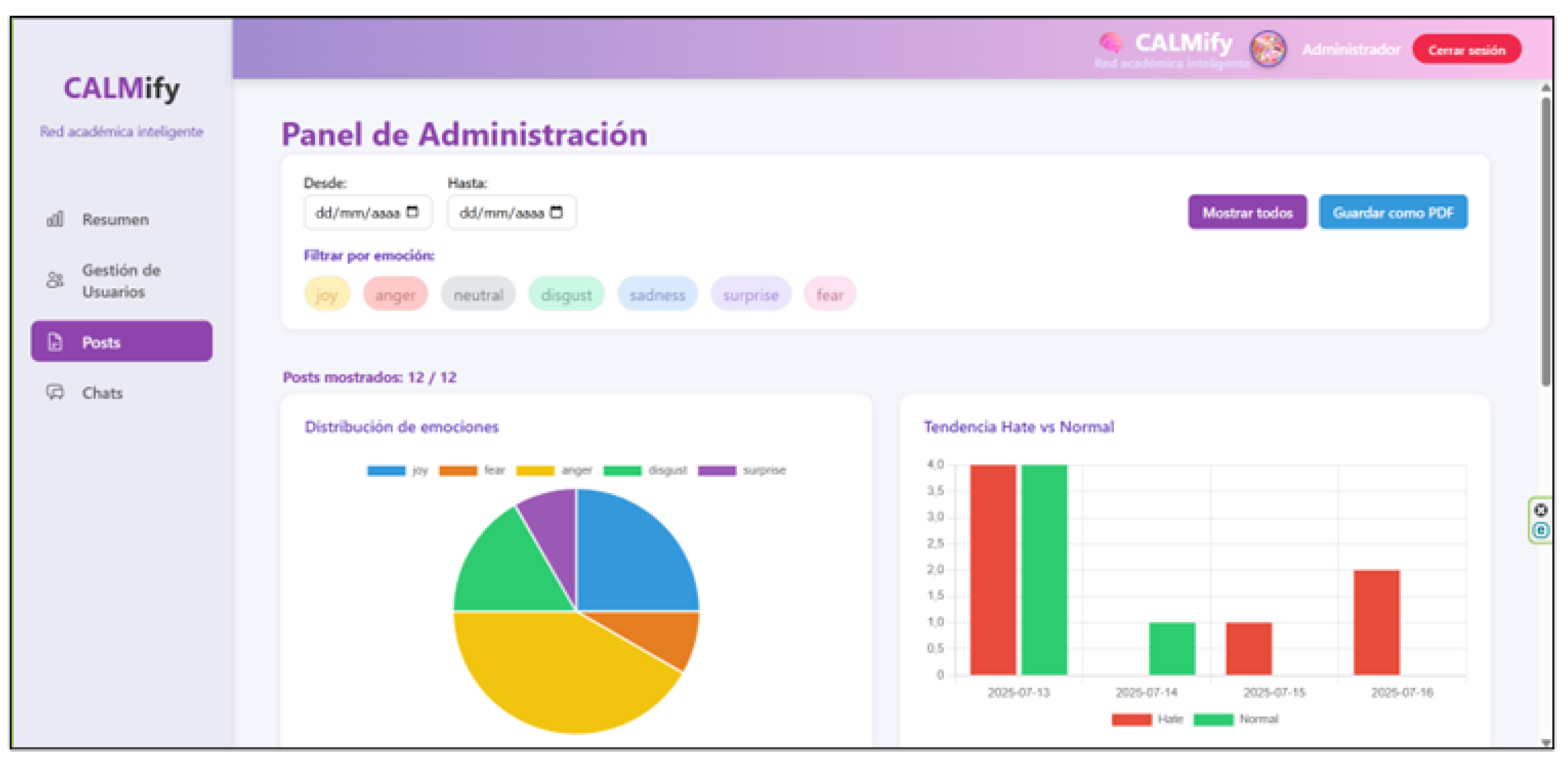

3.9.2. Administration Panel (Dashboard)

We implemented a moderator dashboard with statistical summaries (e.g., bar, pie, and histogram charts) and search/filtering tools by date, content, hate/non-hate tagging, and dominant emotion. The dashboard enables management of posts and users (e.g., blocking accounts or content when appropriate), facilitating operational monitoring and traceability of moderation decisions, as shown in Figure 9.

4. Results

In this section, we evaluate the models using the ISO/IEC 25010 standard. We include performance and quality tests, as well as performance metrics for the previously trained models for both hate speech detection and emotional tone classification.

4.1. Evaluation and Testing

We evaluated the four trained models: RoBERTa, Random Forest, XGBoost, and the combined model using stacking. We performed the evaluations on 20 % of the dataset (200 799 records), reserved for testing.

4.1.1. Hate Speech Detection Models

For the binary classification task (hate vs. non-hate), we evaluated the four trained models on the test dataset.

Table 3 presents the results of Precision, Recall, and F1-Score for each class (0 = No hate, 1 = Hate).

Regarding the accuracy metric, we obtained the following values: RoBERTa = 0.90; Random Forest = 0.91; XGBoost = 0.89; Combined = 0.93.

The confusion matrix for each trained model is shown in Table 4.

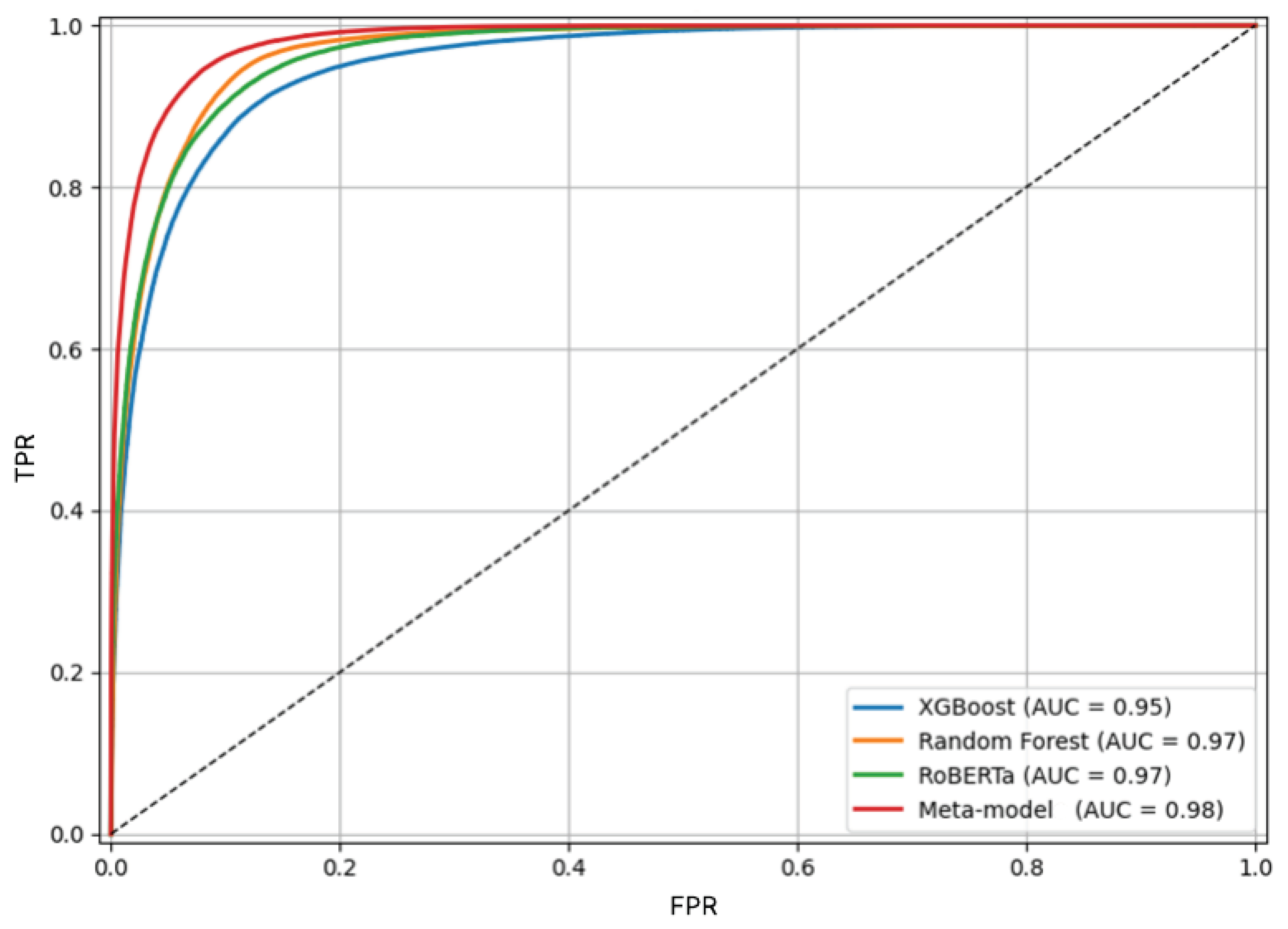

We observed that the stacked model outperforms the individual models, with more true positives and true negatives and fewer false positives and false negatives, which translates into a higher F1-Score. This suggests that the stacked model benefits from learning on the predictions of the base models. The stacked model reached an accuracy of 0.93.

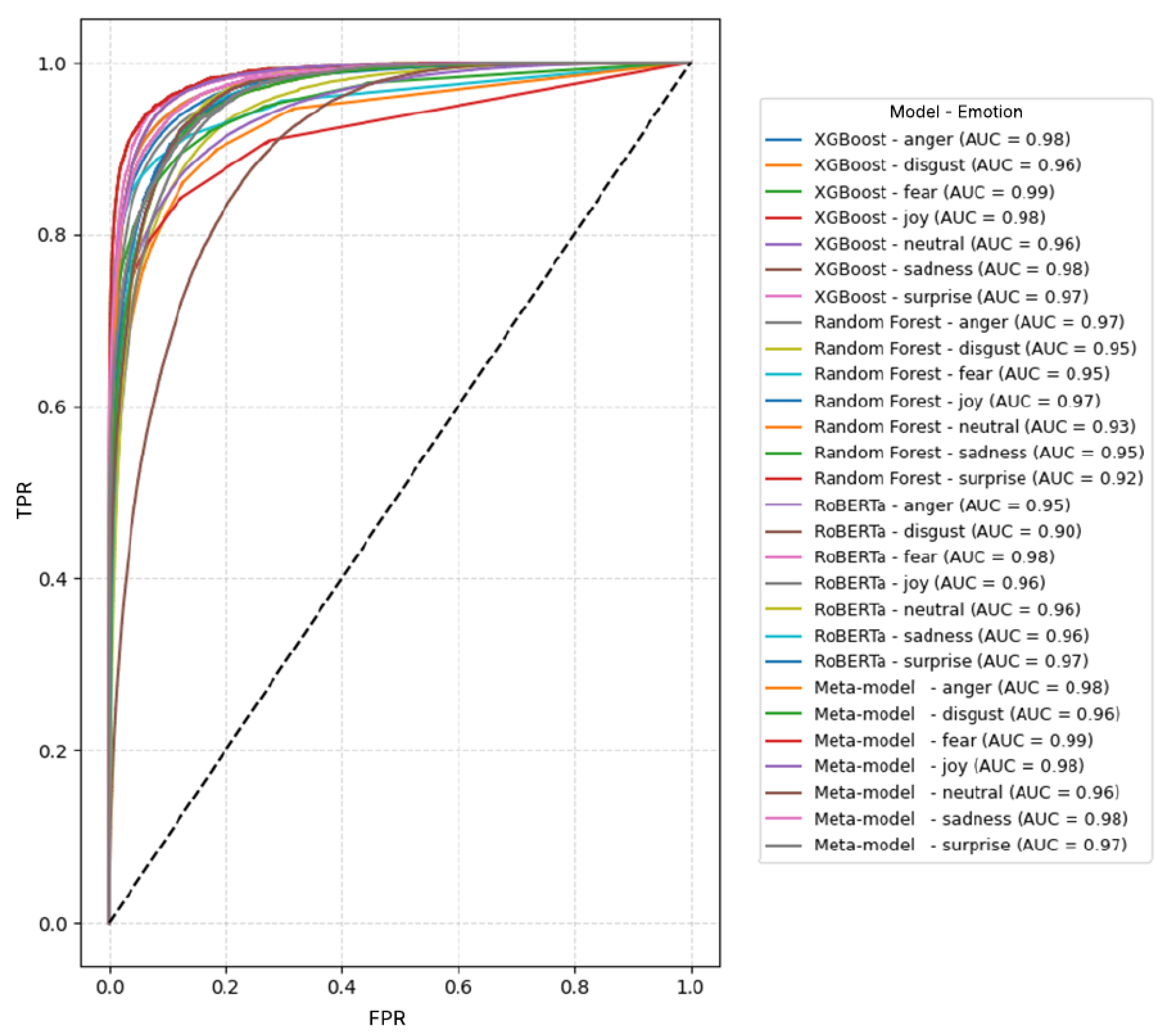

Furthermore, to evaluate the models’ performance, we used the Receiver Operating Characteristic (ROC) curve. As Google for Developers (2025) notes, the ROC curve graphically depicts the model’s performance across all thresholds. The AUC (area under the curve) value represents the probability that the model ranks a randomly chosen positive example higher than a randomly chosen negative one. A higher AUC indicates a better model.
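The ranking interpretation of AUC can be checked numerically with a short sketch. The function name `pairwise_auc` and the toy scores are illustrative assumptions; the computation counts, over all positive-negative pairs, how often the positive example receives the higher score, which is equivalent to the area under the ROC curve.

```python
def pairwise_auc(labels, scores):
    """AUC as the fraction of positive-negative pairs ranked correctly (ties count 0.5)."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("both classes are required")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Toy example (assumption): one positive (0.4) is scored below one negative (0.5).
labels = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.4, 0.5, 0.3, 0.2]
auc = pairwise_auc(labels, scores)  # 8 of the 9 pairs are ordered correctly
```

The quadratic loop is only meant to make the definition explicit; for datasets of the size used here, a sort-based implementation would be preferable.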

Figure 10 presents the ROC curves. We see that the models achieve significant performance, with areas under the curve exceeding 0.95. The combined model achieves an area under the curve of 0.98, indicating greater capacity to detect hate speech.

4.1.2. Emotional Tone Classification Models

For multiclass emotion classification, we evaluated the four models on the test dataset. In Table 5 we present the results of Precision, Recall, and F1-Score for each of the seven emotions (i.e., anger, disgust, fear, joy, neutral, sadness, surprise).

Regarding the accuracy metric, we obtained the following values: RoBERTa = 0.75; Random Forest = 0.85; XGBoost = 0.86; Combined = 0.90.

Table 6 presents the confusion matrices for each model, where true positives (TP), true negatives (TN), false positives (FP), and false negatives (FN) are grouped by emotion label.
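Grouping TP, TN, FP, and FN by label amounts to a one-vs-rest reading of the multiclass confusion matrix. The sketch below shows that reduction under stated assumptions: the helper `one_vs_rest_counts` is hypothetical, rows are taken as true labels and columns as predicted labels, and the 3-class matrix contains made-up counts rather than the values of Table 6.

```python
def one_vs_rest_counts(matrix, class_index):
    """Return (TP, FP, FN, TN) for one class of a square confusion matrix.

    Rows are true labels and columns are predicted labels.
    """
    total = sum(sum(row) for row in matrix)
    tp = matrix[class_index][class_index]
    fn = sum(matrix[class_index]) - tp                 # true class, predicted as another
    fp = sum(row[class_index] for row in matrix) - tp  # other classes, predicted as this one
    tn = total - tp - fn - fp
    return tp, fp, fn, tn


# Toy 3-class matrix (illustrative counts, not the values of Table 6).
toy = [
    [50, 3, 2],
    [4, 40, 6],
    [1, 5, 39],
]
tp, fp, fn, tn = one_vs_rest_counts(toy, 0)
precision = tp / (tp + fp)
recall = tp / (tp + fn)
f1 = 2 * precision * recall / (precision + recall)
```

The per-label precision, recall, and F1-score follow directly from these four counts.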

The results show that the combined model surpasses the individual models on the evaluated metrics for most emotions. For the anger label, it achieves a precision of 0.94, a recall of 0.95, and an F1-score of 0.94. For the disgust label, it achieves a precision of 0.82, a recall of 0.84, and an F1-score of 0.83. For the fear label, it achieves a precision of 0.88 and an F1-score of 0.83, whereas RoBERTa had the lowest values, with a precision of 0.43 and an F1-score of 0.57. Similarly, for the joy label, it achieves 0.88 in precision, recall, and F1-score. Although its values are lower for the neutral label, it still outperforms the individual models. Finally, for the sadness label, it achieves an F1-score of 0.81 compared to 0.54 for RoBERTa. These results indicate that the combined model performs better across all labels. In the confusion matrices, the combined model also shows higher counts of true positives and true negatives and lower counts of false positives and false negatives, which suggests a greater capacity for generalization.

Figure 11 presents the ROC curves for each emotion. In this case, since it is a multi-class problem, we generated one curve per label. The combined model achieved curves closest to the ideal point, with higher AUC values for most emotions, notably anger (0.98), fear (0.99), joy (0.98), and sadness (0.98).

4.1.3. REST API Performance Testing

We conducted performance tests to verify the RESTful API’s behavior under multiple concurrent users. We used Apache JMeter, a tool that enabled us to simulate user loads and generate real-time reports, measuring metrics such as average, minimum, and maximum response times, standard deviation, error rate, and throughput. In each test, we configured a different number of users and a progressive increment period. The number of users increases by 100 up to a maximum of 600.

Table 7 shows the details of the results obtained.

By exposing the API via an access tunnel with Ngrok, we identified bandwidth and traffic-control limitations that affected response times. With loads of 100 and 200 users, the API remained stable, with average response times of 27 651 ms and 52 630 ms, respectively, and no errors were recorded. Starting with 300 users, although the average response time decreased (49 008 ms), errors were reported (13%), reflecting an overload in handling concurrent requests. This trend intensified with 400 users (15.25% errors), and especially with 500 and 600 users, where errors reached 35% and 52.83%, respectively, demonstrating the API’s inability to process all requests.

Throughput increased from 1.27 req/s (100 users) to 2.33 req/s (600 users), indicating that the system attempted to process more requests, although not all were successful. Similarly, bandwidth consumption increased from 0.74 KB/s to 4.45 KB/s, highlighting the need for servers with greater power and capacity to support high concurrent loads.
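The gap between attempted and successful requests can be made explicit with a small calculation. It assumes that the reported throughput counts all requests, including those that ended in error, which is how JMeter normally reports it; the function name `successful_throughput` is illustrative.

```python
def successful_throughput(throughput_rps, error_rate_percent):
    """Requests per second that completed without error."""
    return throughput_rps * (1.0 - error_rate_percent / 100.0)


low_load = successful_throughput(1.27, 0.0)     # 100 users, no errors
high_load = successful_throughput(2.33, 52.83)  # 600 users, 52.83 % errors
```

Under that assumption, roughly 1.10 req/s succeed at 600 users versus 1.27 req/s at 100 users, so the higher raw throughput does not correspond to more useful work.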

4.1.4. Analysis of the Bayesian Calibration and Optimal Design Under Asymmetric Risk (BACON-AR) Framework

The BACON-AR framework, defined in Section 3.6.9, was applied to the validation dataset to evaluate its empirical behavior under asymmetric decision costs. The ensemble model’s underlying architecture remained unchanged; only the probabilistic outputs were post-processed using Bayesian calibration and risk-based threshold optimization. In the experimental setup, asymmetric costs were defined as $C_{FN} = 2\,C_{FP}$, reflecting the greater impact of false negatives in the target classification scenario.

Table 9 shows the comparative results between the original ensemble classifier and the same model after applying the BACON-AR framework. The analysis focuses not only on traditional performance metrics (e.g., AUC, recall, precision), but also on calibration behavior, minimum risk, and the decision threshold obtained via asymmetric risk minimization.

The BACON-AR framework was designed to improve the coherence and stability of the predictive system’s decisions for hate speech detection and emotional analysis. Its purpose is to adjust the model’s confidence through a probabilistic calibration process and, then, to determine a decision threshold that minimizes total risk, accounting for the unequal impact of errors. It is important to clarify that BACON-AR does not constitute a new learning model or modify the trained architecture; instead, it operates as a post-processing decision framework applied to the ensemble classifier’s probabilistic outputs. In this sense, BACON-AR serves as an analytical validation layer that transforms probability estimates into decisions aligned with real-world consequences, reinforcing the transparency and interpretability of the evaluation process.

The data used for this analysis came from the combined model trained with RoBERTa, Random Forest, and XGBoost, which achieved the following overall metrics: 72.3% precision, 93.5% recall, and 81.6% F1 score, with an AUC of 0.883. However, small discrepancies were detected between the probability estimated by the model, $p$, and the actual frequency of hits, a phenomenon called miscalibration [40]. To correct this, a Bayesian calibration was applied, which adjusts the predicted probabilities to match the actual class proportions in the data. The general calibration formula is defined as:

$$p_{\text{cal}} = \frac{p\,\pi_1}{p\,\pi_1 + (1 - p)\,\pi_0}$$

where $p_{\text{cal}}$ represents the calibrated probability, $p$ the original probability of the model, and $\pi_1$ and $\pi_0$ are the observed empirical proportions of the positive and negative classes. This adjustment ensures that the predictions align with the actual hit frequency and reduces the Expected Calibration Error (ECE), following the methods described by [17, 41].
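A minimal sketch of this adjustment is given below. It assumes the prior-ratio form p·π1 / (p·π1 + (1 − p)·π0), which is one common way to reweight a posterior by empirical class proportions and may differ in detail from the authors' implementation; the function name `prior_shift_calibrate` and the example values are illustrative.

```python
def prior_shift_calibrate(p, pi_pos, pi_neg):
    """Reweight a predicted positive probability by empirical class proportions.

    Assumed form: p * pi_pos / (p * pi_pos + (1 - p) * pi_neg).
    """
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must lie in [0, 1]")
    numerator = p * pi_pos
    denominator = numerator + (1.0 - p) * pi_neg
    if denominator == 0.0:
        raise ValueError("degenerate class proportions")
    return numerator / denominator


balanced = prior_shift_calibrate(0.7, 0.5, 0.5)  # equal proportions leave p unchanged
shifted = prior_shift_calibrate(0.7, 0.3, 0.7)   # a rarer positive class lowers p
```

Under this assumed form, equal class proportions leave the probability unchanged, while a rarer positive class pulls the estimate down (here from 0.7 to 0.5).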

The BACON-AR framework was implemented in Python, using NumPy and Pandas for probability calculations, calibration, and risk optimization. The mathematical procedure is summarized in Algorithm 4, which combines Bayesian calibration with the search for the optimal threshold that minimizes the total risk $R(t)$.

The proposed BACON-AR procedure integrates Bayesian posterior recalibration with cost-sensitive decision optimization under asymmetric misclassification penalties. The calibration stage adjusts predicted probabilities according to empirical class priors, reducing prior-shift distortions and improving probabilistic interpretability.

Calibration quality is quantitatively assessed through the Expected Calibration Error (ECE), which measures the discrepancy between empirical accuracy and predicted confidence across probability bins.
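The ECE computation can be sketched as follows for the binary case. The binning scheme is an assumption: ten equal-width bins over the confidence of the predicted class, with a 0.5 cut-off to obtain the predicted label. The function name `expected_calibration_error` is illustrative and the paper's exact configuration may differ.

```python
def expected_calibration_error(labels, probs, n_bins=10):
    """ECE for a binary classifier: weighted gap between confidence and accuracy per bin."""
    bins = [[] for _ in range(n_bins)]
    for y, p in zip(labels, probs):
        predicted = 1 if p >= 0.5 else 0
        confidence = p if predicted == 1 else 1.0 - p  # confidence of the predicted class
        correct = 1.0 if predicted == y else 0.0
        index = min(int(confidence * n_bins), n_bins - 1)
        bins[index].append((confidence, correct))
    total = len(labels)
    ece = 0.0
    for members in bins:
        if members:
            avg_conf = sum(c for c, _ in members) / len(members)
            accuracy = sum(a for _, a in members) / len(members)
            ece += len(members) / total * abs(accuracy - avg_conf)
    return ece


# Toy example (assumption): every prediction is correct but made with 0.9 confidence.
underconfident = expected_calibration_error([1, 1, 0, 0], [0.9, 0.9, 0.1, 0.1])
```

In the toy example the model is right every time yet claims only 90% confidence, so the ECE is 0.1; a perfectly calibrated model would score 0.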

The final decision rule is obtained by minimizing an asymmetric empirical risk function that explicitly incorporates differentiated costs for false negatives and false positives.

This formulation aligns with established principles in probabilistic model evaluation and calibration theory [

51,

52], ensuring statistically coherent and decision-aware model deployment.

Algorithm 4 summarizes the implementation of the BACON-AR framework. Starting with the ensemble probabilities, Bayesian calibration is applied, the Expected Calibration Error (ECE) is calculated, and the asymmetric risk curve is obtained. The optimal threshold is selected as the point that minimizes $R(t)$, ensuring a decision consistent with the costs assigned to false negatives and false positives.

Table 8. Experimental parameters and BACON-AR framework configuration.

| Parameter | Description |
|---|---|
| Dataset | Balanced subset of 510 252 records |
| Training / validation split | 80 / 20 |
| Splitting strategy | Stratified by class (stratify=y) |
| Random seed | 42 (reproducibility ensured) |
| Asymmetric cost | $C_{FN} = 2\,C_{FP}$ |
| Batch size | 32 |
| Number of repetitions | 5 averaged runs |
| Calibration metric | ECE (Expected Calibration Error) |
| Risk metric | $R(t)$ with variable threshold $t$ |

Table 9. Comparative summary of the base model and BACON-AR framework with performance metrics, minimum risk, and optimal threshold.

| Model | ECE (%) | AUC | Recall (%) | Precision (%) | FN Reduction (%) | Minimum Risk $R(t^{*})$ | Threshold $t^{*}$ |
|---|---|---|---|---|---|---|---|
| Ensemble (uncalibrated) | 11.2 | 0.883 | 93.5 | 72.3 | n/a | 0.31 | 0.50 |
| BACON-AR (calibrated) | 11.2 | 0.883 | 93.5 | 72.3 | 7.3 | 0.24 | 0.43 |

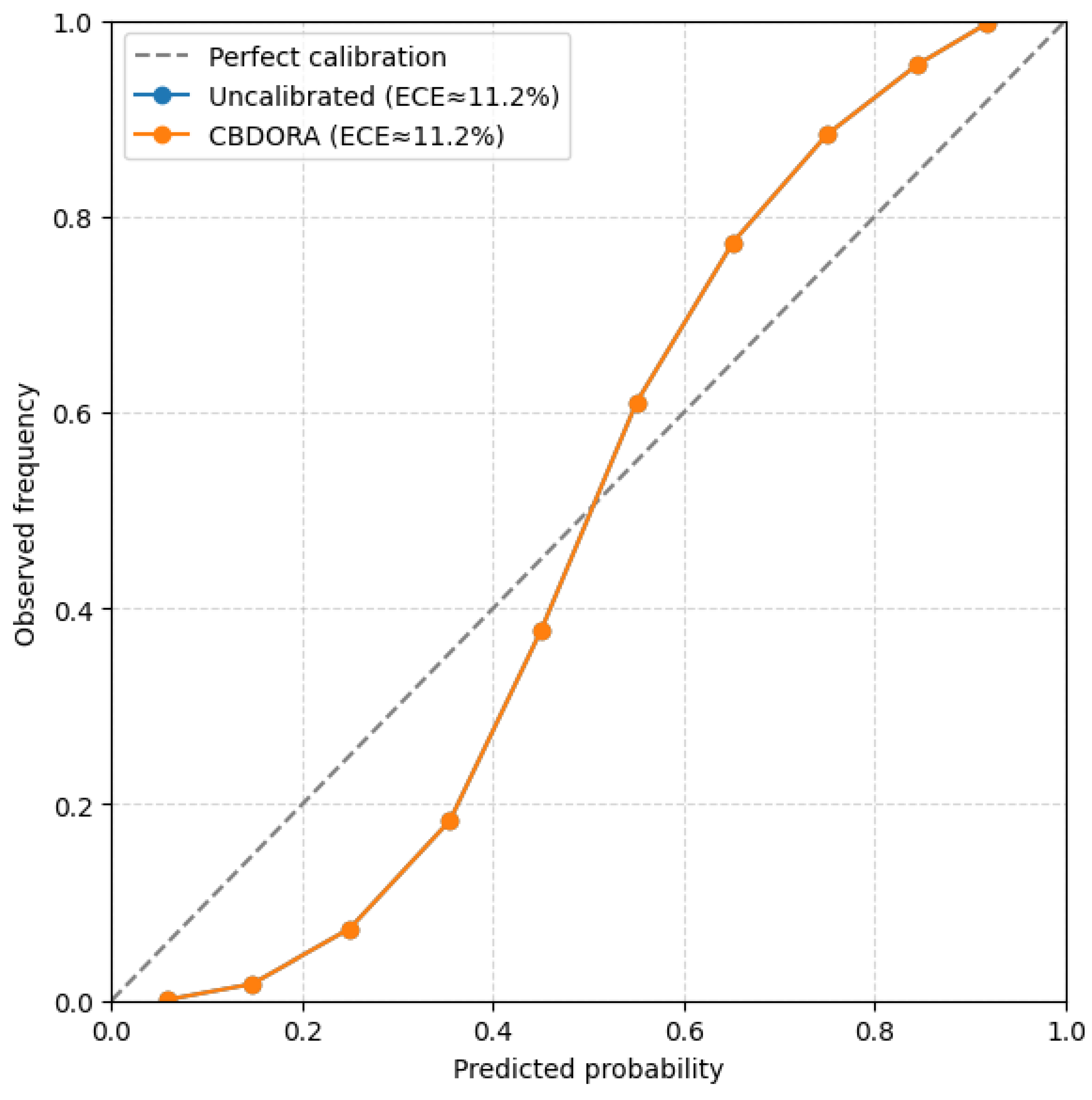

As shown in Table 9, global metrics (AUC, recall, and precision) remained stable after calibration, confirming the statistical consistency of the model. However, adjusting the optimal threshold $t^{*}$ reduced the total risk without affecting overall performance, demonstrating the balance achieved by BACON-AR between sensitivity and precision.

These results confirm the usefulness of the BACON-AR framework as a tool that optimizes model decisions in a consistent and transparent manner, aligned with the principles of fairness and probabilistic reliability. This process was validated using reliability diagrams, which showed a trend close to the ideal diagonal, as recommended by [53].

Figure 12. Reliability diagrams for the model before and after applying the BACON-AR framework. The comparison between the uncalibrated model and the calibrated BACON-AR framework (both with ECE = 11.2% in Table 9) shows that the probabilistic estimates remain consistent and close to the perfect calibration line, confirming the model’s stability and interpretability.

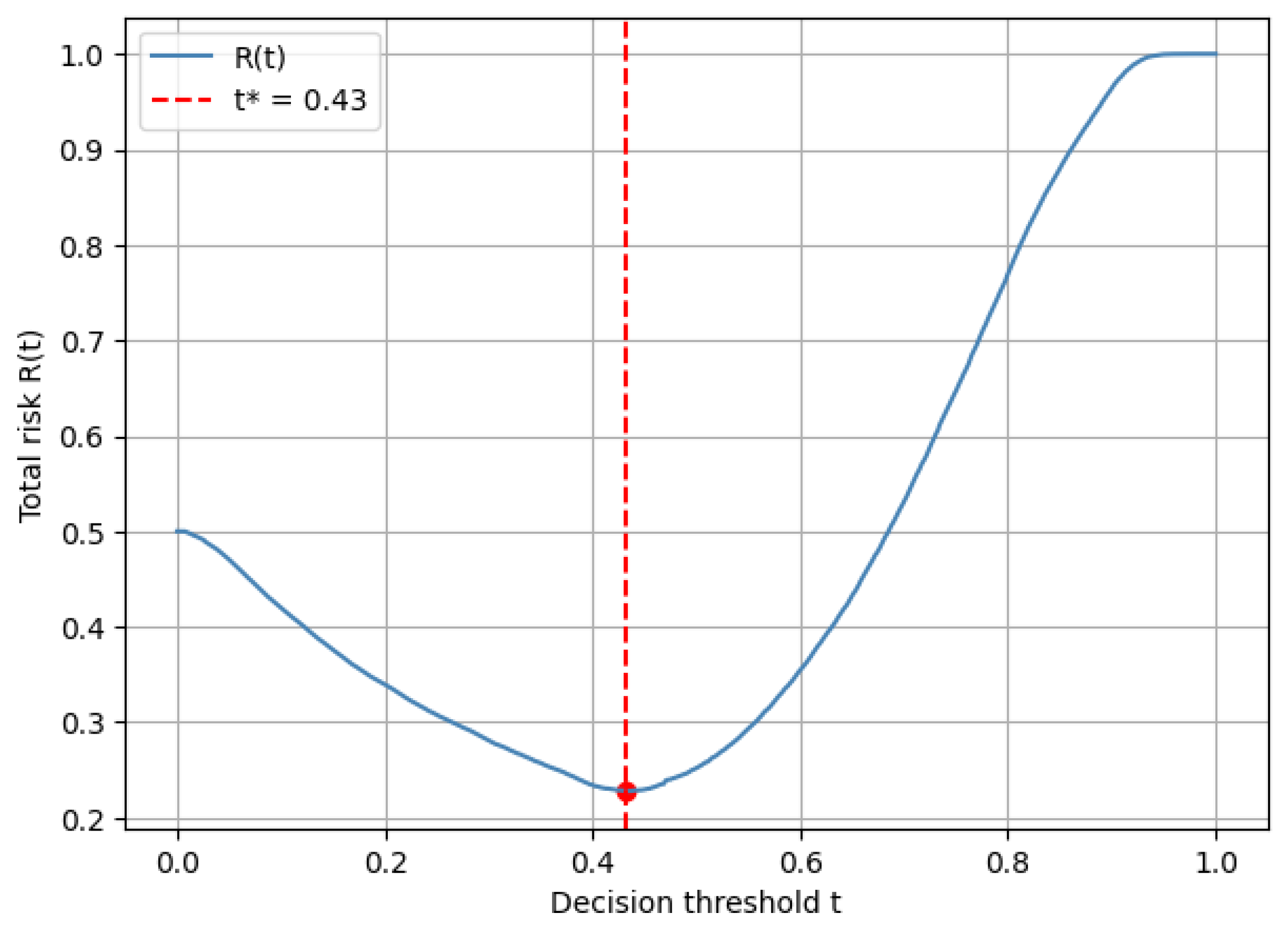

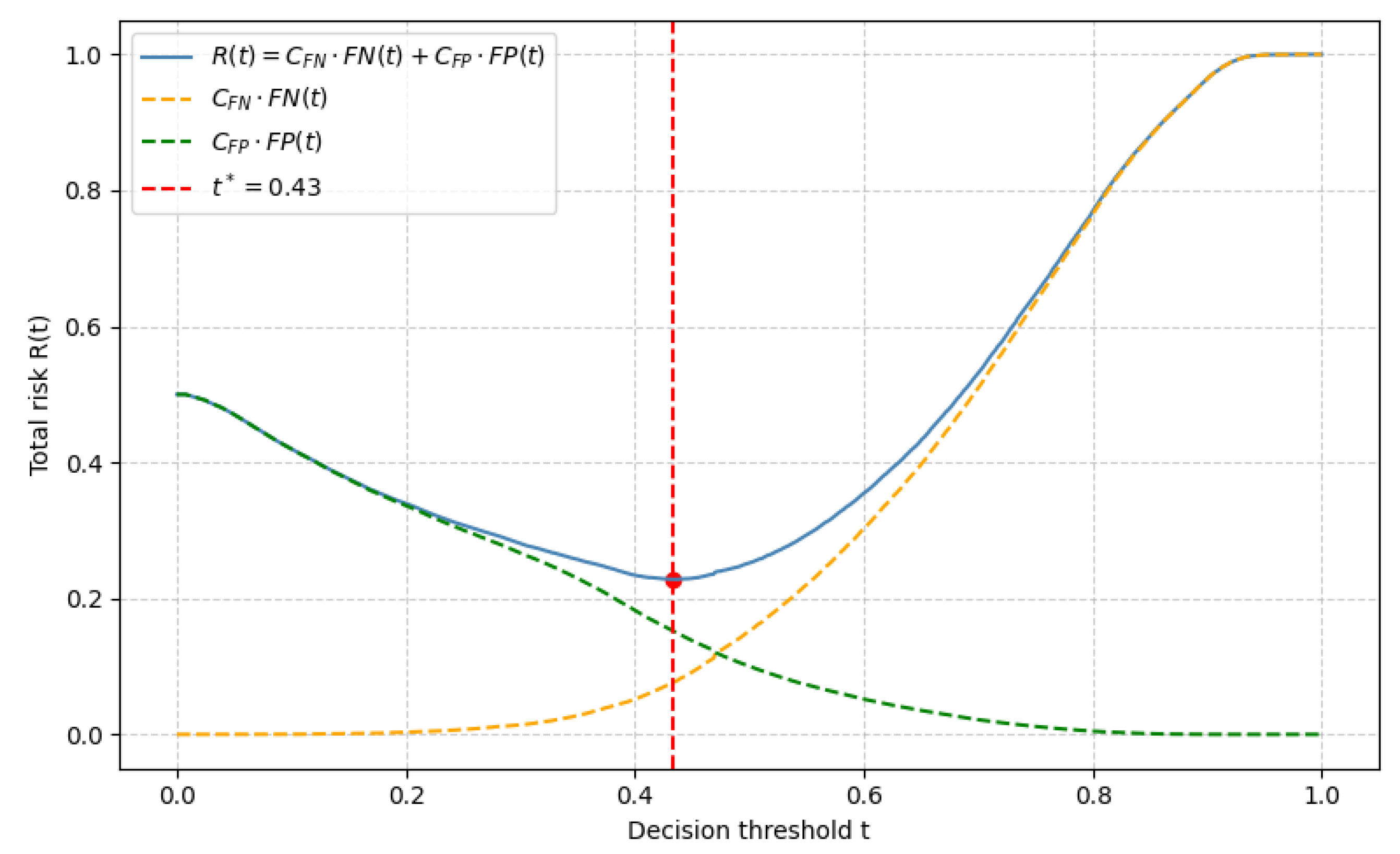

Figure 13 complements these findings by showing the total risk function after applying the BACON-AR framework.

Figure 13. Total risk function in the BACON-AR framework with asymmetric costs ($C_{FN} = 2\,C_{FP}$). The minimum point at $t^{*} = 0.43$ (Table 9) corresponds to the empirically obtained value and demonstrates how the optimal threshold minimizes the total risk while balancing false negatives and false positives.

Figure 14. Decomposed total risk function for the BACON-AR framework. The two components (orange and green) illustrate how the false negative and false positive contributions evolve with respect to the decision threshold $t$. The optimal point $t^{*}$ shows where the trade-off between both error types achieves the minimum total risk.

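To define the decision phase, the BACON-AR framework incorporates a total risk function expressed as:

$$R(t) = \frac{1}{N}\sum_{i=1}^{N}\left[C_{FN}\,\mathbb{1}\left(y_i = 1,\ p_{\text{cal},i} < t\right) + C_{FP}\,\mathbb{1}\left(y_i = 0,\ p_{\text{cal},i} \ge t\right)\right]$$

where $C_{FN}$ and $C_{FP}$ are the costs associated with false negatives and false positives, respectively, and $\mathbb{1}(\cdot)$ is the indicator function; $y_i$ and $p_{\text{cal},i}$ denote the true label and the calibrated probability of record $i$, and $N$ is the number of records. The objective is to identify the threshold $t^{*}$ that minimizes the total risk:

$$t^{*} = \arg\min_{t \in [0, 1]} R(t)$$

The criterion was applied empirically to the validation data, establishing a cost relationship $C_{FN} = 2\,C_{FP}$, where the cost of a false negative is considered double that of a false positive, consistent with the nature of the problem and following the approach of [43]. The search for the minimum of the total risk function $R(t)$ determined an optimal value of $t^{*} = 0.43$, which represents the equilibrium point between the sensitivity and precision of the model [18].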
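The threshold search can be illustrated with a small grid search over an average asymmetric cost. This is a sketch under assumptions rather than the authors' Algorithm 4: the costs are set to 2 and 1 to reflect the stated 2:1 relationship, the risk is normalized by the number of records, the grid has 101 points, and the labels and probabilities are toy values. The names `total_risk` and `optimal_threshold` are illustrative.

```python
def total_risk(labels, probs, threshold, cost_fn=2.0, cost_fp=1.0):
    """Average asymmetric cost of the decision rule `p >= threshold -> positive`."""
    cost = 0.0
    for y, p in zip(labels, probs):
        predicted = 1 if p >= threshold else 0
        if y == 1 and predicted == 0:
            cost += cost_fn  # false negative
        elif y == 0 and predicted == 1:
            cost += cost_fp  # false positive
    return cost / len(labels)


def optimal_threshold(labels, probs, cost_fn=2.0, cost_fp=1.0, steps=100):
    """Grid search over thresholds in [0, 1]; ties resolve to the smallest threshold."""
    grid = [i / steps for i in range(steps + 1)]
    return min(grid, key=lambda t: total_risk(labels, probs, t, cost_fn, cost_fp))


# Toy validation data (assumption): one positive is scored at 0.40, below 0.50.
labels = [0, 0, 0, 1, 0, 1, 1, 1]
probs = [0.10, 0.20, 0.35, 0.40, 0.45, 0.60, 0.80, 0.90]
best = optimal_threshold(labels, probs)
risk_at_best = total_risk(labels, probs, best)
risk_at_half = total_risk(labels, probs, 0.5)
```

On this toy data, the default threshold of 0.5 misses the positive scored at 0.40 and pays the doubled false negative cost, whereas the selected threshold accepts one false positive instead and halves the average risk. This mirrors qualitatively the shift below 0.50 reported in Table 9.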

Using the 510,252 test records, which included messages labeled as offensive or non-offensive, along with the emotions anger, fear, joy, and sadness, the ensemble model maintained an AUC of 0.883 under the BACON-AR framework, demonstrating stable performance while reducing the number of false negatives by 7.3%. The recall remained at 93.5%, and the precision at 72.3%. These results reflect BACON-AR’s capacity to translate calibrated probabilities into decisions that are consistent with the observed data and asymmetric cost assumptions.

The statistical stability analysis of the BACON-AR framework showed consistent behavior in calibration and risk metrics. Across five independent repetitions, the average ECE, the minimum risk, and the optimal threshold remained stable, confirming reproducibility under small data perturbations.

Additionally, the total risk function showed a minimum average value of 0.2275 ± 0.0012, confirming that the asymmetric risk-based decision strategy remains stable even under small data variations. The optimal decision threshold remained around $t^{*} = 0.43$, reflecting a sustained balance between false positives and false negatives.

These results validate the robustness of the BACON-AR framework to data perturbations and demonstrate that Bayesian calibration, combined with the risk function, provides a sound and reproducible mathematical framework for probabilistic decision-making under asymmetric cost conditions.

5. Discussion

The results demonstrate that the developed models can be efficiently integrated into web applications via a RESTful API, enabling requests to be sent from the interface to the server and responses to be received in real time. This architectural design promotes scalability and interoperability in real-world environments because it does not depend on any particular technology for its consumption. Thus, the system can be easily adapted to different platforms or services, expanding its practical applicability.

These findings are consistent with previous research highlighting the usefulness of lightweight, decoupled architectures for implementing artificial intelligence systems in production, as they reduce maintenance complexity and improve the model’s ability to be continuously updated.

In accordance with the hypothesis, the combined model outperformed the individual models, achieving accuracy values of 0.93 for hate speech detection and 0.90 for emotion classification. Furthermore, the F1-Score and AUC metrics exceeded 0.95 in several cases, reflecting high predictive and generalizability capabilities.

This behavior supports the idea that ensemble models are robust for complex tasks such as identifying hostility and emotional tone in texts, as also noted by Al-Hashedi et al. [54] in the recent study titled Cyberbullying Detection Based on Emotion.

In this context, the results of this work reinforce the evidence that combining classifiers increases the stability of NLP-based systems, thereby improving their performance compared to individual models.

The results of the mathematical analysis show that the BACON-AR framework achieves an optimal balance between false positives and false negatives by setting the decision threshold at $t^{*} = 0.43$. This value represents the point at which the total risk function minimizes the combined impact of both types of error. This demonstrates that combining Bayesian calibration with asymmetric risk leads to more accurate, sensitive, and evidence-consistent decisions.

The BACON-AR framework reduces false negatives by 7.3% without affecting overall accuracy, thus reinforcing the stability, reliability, and statistical robustness of the predictive system. This finding coincides with that reported by [50], who demonstrate that applying asymmetric costs improves classifier performance in unbalanced data contexts.

Similarly, the observed behavior of the risk function $R(t)$ and the calibration curve (Figure 15) confirms that the model not only improves the consistency between estimated probabilities and empirical results but also reduces the uncertainty associated with decisions.

This result is consistent with the observations of [47], who highlight that integrating probabilistic calibration with risk criteria generates more stable and reliable models in production environments. Therefore, the BACON-AR proposal consolidates a mathematical framework that enables fairer decisions that are less sensitive to data variability. However, technical limitations were identified during deployment testing.

During experiments on a server hosted on Google Colab and exposed via Ngrok, latency issues and occasional crashes were recorded under concurrent scenarios with more than 300 users. These results show that, although the solution is functional and adaptable, its implementation in production environments requires a more robust infrastructure, with servers that have greater network and processing capacity.

This observation also aligns with the recommendations of [

48], who emphasize the importance of controlled, reproducible environments to ensure performance stability in artificial intelligence systems.

From a practical perspective, one of the system’s main advantages is its potential to serve as a verification point before content is published on digital platforms. This would allow blocking hate speech and classifying its emotional tone before it is publicly viewed, thus reducing the negative impact on victims and contributing to the creation of safer digital spaces.

Furthermore, this type of preventive integration aligns with the ethical principles of artificial intelligence outlined by [

55], which emphasize calibrated models that ensure equitable, socially responsible decisions.

Among the methodological limitations, it is acknowledged that the training data are in English, which limits the models’ ability to generate predictions in other languages natively. Although machine translation models were incorporated, they do not always accurately capture terms, nuances, and expressions, leading to errors in some predictions.

This difficulty has also been reported in previous work on multilingual natural language processing, where cultural and contextual differences influence the semantic interpretation of texts. Expanding the linguistic scope, therefore, represents a relevant methodological challenge for improving the generalizability of the models.

Regarding future lines of research, it is pertinent to expand training with multilingual corpora specific to the local sociocultural context. This would improve the detection of emotional nuances across different languages.

Likewise, it would be beneficial to explore the use of multimodal models that integrate text, audio, and image to more comprehensively identify hostility and emotions, as well as to apply explainable learning techniques to improve the transparency and traceability of model decisions.

Finally, the main validation of this study lies in the practical integration of Bayesian decision theory and probabilistic calibration for classification problems with unequal costs. The BACON-AR framework provides a solid theoretical foundation, a reproducible mathematical formulation, and verifiable empirical validation, positioning it as a reliable alternative for automated decision-making with explicit risk control.

6. Conclusions

In this study, we address the problem of hate speech on social media, with a special focus on digital violence against women. We proposed a classification model that integrates analysis of emotional tone with detection of explicit hostility, offering a more precise and sensitive tool for this type of content.

The results demonstrate that the combined approach consistently outperforms the individual models, achieving accuracy and F1 Scores of 0.93 for hate speech detection and 0.90 for emotional tone classification.

Furthermore, the analysis using confusion matrices and ROC curves confirmed the system’s robustness, with AUC values of up to 0.98 for basic emotion detection, demonstrating a suitable balance between sensitivity and specificity in multiclass scenarios.

The main contribution of this work lies in integrating Natural Language Processing (NLP) and machine learning techniques within a hybrid approach that incorporates emotional tone analysis as an essential complement for identifying hostile speech.

This contribution not only enhances moderation systems’ ability to distinguish between ironic, ambiguous, or discriminatory messages but also promotes the creation of safer, more equitable digital environments.

Practical implementation using a RESTful API and its validation in a web environment demonstrated the technical feasibility of the proposal in real-world scenarios, consolidating a scalable, lightweight architecture adaptable to different platforms.

From a mathematical perspective, the BACON-AR framework showed that combining Bayesian calibration with asymmetric risk-based decision-making makes the system more consistent, sensitive, and reliable. The risk function reached its minimum at $t^{*} = 0.43$, achieving an optimal balance between false positives and false negatives when the cost of false negatives was twice that of false positives. This behavior reduced critical errors without affecting overall accuracy, making decisions more understandable, consistent, and fair. Thus, the framework makes a methodological contribution by connecting Bayesian decision theory with the practice of probabilistic calibration in real-world classification contexts.

Regarding the study’s limitations, the system’s performance decreased under high concurrency, leading to significant error rates when more than 300 users accessed it simultaneously. This finding highlights the need to migrate to more robust, distributed infrastructures that maintain availability and performance in production environments.

Furthermore, the reliance on data collected on specific platforms limits the model’s ability to generalize across different cultural and linguistic contexts, posing a challenge for its large-scale application.

Looking ahead, the proposal is to delve deeper into optimization strategies for large-scale systems, while also exploring self-supervised and multilingual learning techniques that expand the model’s capacity to adapt to diverse digital communities.

Likewise, it is pertinent to investigate the use of multimodal models that integrate text, audio, and image to detect hostility and emotions more comprehensively, and to apply explainable learning methodologies that improve the transparency and traceability of decisions. The aim is to move towards more inclusive, scalable, and reliable detection systems that effectively prevent hate speech and promote respectful, safe digital spaces for women and other vulnerable groups.

The BACON-AR framework approach, together with the proposed Web architecture, constitutes a significant advance towards the development of automated systems capable of analyzing human language with sensitivity, fairness, and statistical consistency, for the benefit of a safer digital coexistence.

In summary, this work offers a comprehensive proposal that combines technical soundness, mathematical rigor, and an ethical vision for the use of artificial intelligence.

7. Future Work

As future work, we first propose constructing a parallel corpus that includes Spanish and its regional variants to capture the linguistic diversity of Latin American digital communities, because the current English-trained models need to be extended with multilingual capabilities. This would involve developing dialect-aware embeddings that preserve local expressions and culturally specific manifestations of gender-based hostility, which current models fail to recognize. Such expansion would directly address the machine translation limitations identified during the API validation.

Second, the performance degradation observed under high-concurrency loads demands architectural improvements. Migrating the system from the current prototype to a distributed cloud infrastructure with auto-scaling capabilities would ensure production-level reliability. Containerization strategies and asynchronous request processing could significantly reduce latency while maintaining prediction quality under real-world traffic patterns. This infrastructure upgrade would enable the system to function as a viable pre-moderation tool for social platforms.

Third, extending beyond text-based analysis represents a natural progression. Online hate speech increasingly spreads through multimodal formats, such as memes and images, that bypass conventional filters. Developing a multimodal ensemble that integrates visual features through convolutional networks would address this evasion strategy. The stacking architecture validated in this study provides a flexible foundation for incorporating these additional modalities while preserving the emotional tone classification component.

Author Contributions

Conceptualization, Aymé Escobar Díaz and Walter Fuertes; Methodology, Aymé Escobar Díaz and Ricardo Rivadeneira; Software, Aymé Escobar Díaz and Ricardo Rivadeneira; Validation, Aymé Escobar Díaz, Ricardo Rivadeneira, Walter Fuertes and Washington Loza; Formal analysis, Aymé Escobar Díaz, Ricardo Rivadeneira, Walter Fuertes and Washington Loza; Investigation, Aymé Escobar Díaz and Ricardo Rivadeneira; Resources, Ricardo Rivadeneira; Data curation, Aymé Escobar Díaz and Ricardo Rivadeneira; Writing—original draft preparation, Aymé Escobar Díaz and Ricardo Rivadeneira; Writing—review and editing, Walter Fuertes and Washington Loza; Visualization, Aymé Escobar Díaz and Ricardo Rivadeneira; Supervision, Walter Fuertes and Washington Loza; Project administration, Walter Fuertes. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding. The APC was funded by the Universidad de las Fuerzas Armadas ESPE, in Sangolquí, Ecuador.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

Acknowledgments

The authors express their sincere gratitude to the Universidad de las Fuerzas Armadas ESPE for the academic, technical, and institutional support provided for this research. Special recognition is due to the Distributed Systems, Cybersecurity, and Content Research Group of the Department of Computer Science for the use of the High-Performance Research Laboratory and its specialized software and hardware. During the preparation of this manuscript, the authors occasionally used OpenAI ChatGPT (GPT-5, 2025) to assist with English style correction and LaTeX formatting. The authors have reviewed and edited the generated content and assume full responsibility for the content of this publication.

Conflicts of Interest

The authors declare no conflicts of interest. The funders (Universidad de las Fuerzas Armadas ESPE and its Finance Unit) had no role in the study design, the collection, analysis, or interpretation of the data, the drafting of the manuscript, or the decision to publish the results.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Definition |
|---|---|
| NLP | Natural Language Processing |
| ML | Machine Learning |
| API | Application Programming Interface |
| EDA | Exploratory Data Analysis |
| ETL | Extract, Transform, Load |
| ROC | Receiver Operating Characteristic |
| AUC | Area Under the Curve |
| SLR | Systematic Literature Review |
| F1 | F1-score (harmonic mean of precision and recall) |
| JSON | JavaScript Object Notation |
| CFN | Cost of False Negative |
| CFP | Cost of False Positive |
| FN | False Negative |
| FP | False Positive |
|  | Threshold of t |
| I(.) | Indicator Function |
| BACON-AR | Bayesian Calibration and Optimal Design under Asymmetric Risk |

References

- Williams, B.; Onsman, A.; Brown, T. Exploratory factor analysis: A five-step guide for novices. Australasian Journal of Paramedicine 2010, 8.

- Schmidt, A.; Wiegand, M. A Survey on Hate Speech Detection using Natural Language Processing. In Proceedings of the Fifth International Workshop on Natural Language Processing for Social Media; 2017; pp. 1–10.

- Fortuna, P.; Nunes, S. A Survey on Automatic Detection of Hate Speech in Text. ACM Computing Surveys 2018, 51, 1–30.

- Stahelski, A.; Anderson, A.; Browitt, N.; Radeke, M. Facial Expressions and Emotion Labels Are Separate Initiators of Trait Inferences From the Face. Frontiers in Psychology 2021, 12, 749933.

- Brown, A. What is hate speech? Part 1: The myth of hate. In Law and Philosophy; 2017.

- Martins, R.; Gomes, M.; Almeida, J.; Novais, P.; Henriques, P. Hate speech classification in social media using emotional analysis. In Proceedings of the 2018 7th Brazilian Conference on Intelligent Systems (BRACIS), 2018; IEEE; pp. 265–270.

- Founta, A.M.; Djouvas, C.; Chatzakou, D.; Leontiadis, I.; Blackburn, J.; Stringhini, G.; Vakali, A.; Sirivianos, M.; Kourtellis, N. Large Scale Crowdsourcing and Characterization of Twitter Abusive Behavior. In Proceedings of ICWSM, 2018; pp. 491–500.

- García-Díaz, J.A.; Cánovas-García, M.; Colomo-Palacios, R.; Valencia-García, R. Detecting misogyny in Spanish tweets: An approach based on linguistic features and word embeddings. Future Generation Computer Systems 2021, 114, 506–518.

- Jane, E.A. Misogyny Online: A Short (and Brutish) History. SAGE Open 2017, 7, 1–12.

- Siapera, E. Online misogyny as witch hunt: Primitive accumulation in the age of technocapitalism. In Gender hate online; Palgrave Macmillan, 2019; pp. 21–44.

- Basile, V.; Bosco, C.; Fersini, E.; Nozza, D.; Patti, V.; Rangel Pardo, F.M.; Rosso, P.; Sanguinetti, M. SemEval-2019 Task 5: Multilingual detection of hate speech against immigrants and women in Twitter. In Proceedings of the 13th International Workshop on Semantic Evaluation; Association for Computational Linguistics, 2019; pp. 54–63.

- Citron, D.K. Hate Crimes in Cyberspace; Harvard University Press, 2014.

- Corazza, M.; Menini, S.; Cabrio, E.; Tonelli, S.; Villata, S. A multilingual evaluation for online hate speech detection. ACM Transactions on Internet Technology 2020, 20.

- Vidgen, B.; Derczynski, L. Directions in Abusive Language Training Data, a Systematic Review: Garbage In, Garbage Out. PLoS ONE 2020, 15, e0243300.

- Liu, Y.; Ott, M.; Goyal, N.; Du, J.; Joshi, M.; Chen, D.; Levy, O.; Lewis, M.; Zettlemoyer, L.; Stoyanov, V. RoBERTa: A Robustly Optimized BERT Pretraining Approach, 2019. arXiv arXiv:cs.

- Andrade, R.O.; Fuertes, W.; Cazares, M.; Ortiz-Garcés, I.; Navas, G. An Exploratory Study of Cognitive Sciences Applied to Cybersecurity. Electronics 2022, 11.

- Bonilla, E.; Howard, D.; Oliveira, R.; Sejdinovic, D. Bayesian Adaptive Calibration and Optimal Design. Proceedings of the Advances in Neural Information Processing Systems 37 2024, NeurIPS 2024, 56526–56551.

- Sun, Z.; Song, D.; Hero, A.O. Minimum-risk recalibration of classifiers. IEEE Transactions on Pattern Analysis and Machine Intelligence 2023, 45, 9564–9577.

- Kelly, J.; Smyth, P. Variable-based calibration for machine learning classifiers. Pattern Recognition 2022, 129, 108754.

- Araf, I.; Idri, A.; Chairi, I. Cost-sensitive learning for imbalanced medical data: A review. Artificial Intelligence Review 2024.

- Gorwa, R.; Binns, R.; Katzenbach, C. Algorithmic Content Moderation: Technical and Political Challenges in the Automation of Platform Governance. Big Data & Society 2020, 7, 1–15.

- Escobar Díaz, A.; Rivadeneira, R.; Fuertes, W. Emotional Tone Detection in Hate Speech Using Machine Learning and NLP: Methods, Challenges, and Future Directions—A Systematic Review. Applied Sciences 2025, 15, 12686.

- Min, C.; Lin, H.; Li, X.; Zhao, H.; Lu, J.; Yang, L.; Xu, B. Finding hate speech with auxiliary emotion detection from self-training multi-label learning perspective. Information Fusion 2023, 96, 214–223.

- Ramos, G.; Batista, F.; Ribeiro, R.; Fialho, P.; Moro, S.; Fonseca, A.; Guerra, R.; Carvalho, P.; Marques, C.; Silva, C. A comprehensive review on automatic hate speech detection in the age of the transformer. Social Network Analysis and Mining 2024, 14.

- Rodriguez, A.; Chen, Y.L.; Argueta, C. FADOHS: Framework for Detection and Integration of Unstructured Data of Hate Speech on Facebook Using Sentiment and Emotion Analysis. IEEE Access 2022, 10, 22400–22419.

- Kaminska, O.; Cornelis, C.; Hoste, V. Fuzzy rough nearest neighbour methods for detecting emotions, hate speech and irony. Information Sciences 2023, 625, 521–535.

- Vallecillo-Rodríguez, M.E.; Plaza-del Arco, F.M.; Montejo-Ráez, A. Combining profile features for offensiveness detection on Spanish social media. Expert Systems with Applications 2025, 272, 126705.

- Pan, R.; García-Díaz, J.A.; Valencia-García, R. Spanish MTLHateCorpus 2023: Multi-task learning for hate speech detection to identify speech type, target, target group and intensity. Computer Standards & Interfaces 2025, 94, 103990.

- Paul, J.; Mallick, S.; Mitra, A.; Roy, A.; Sil, J. Multi-modal Twitter Data Analysis for Identifying Offensive Posts Using a Deep Cross-Attention–based Transformer Framework. ACM Trans. Knowl. Discov. Data 2025, 19.

- Keya, A.J.; Kabir, M.M.; Shammey, N.J.; Mridha, M.F.; Islam, M.R.; Watanobe, Y. G-BERT: An Efficient Method for Identifying Hate Speech in Bengali Texts on Social Media. IEEE Access 2023, 11, 79697–79709.

- Sasidhar, T.T.; B, P.; S.K., P. Emotion Detection in Hinglish(Hindi+English) Code-Mixed Social Media Text. Procedia Computer Science 2020, 171, 1346–1352.

- Priya, P.; Firdaus, M.; Ekbal, A. A multi-task learning framework for politeness and emotion detection in dialogues for mental health counselling and legal aid. Expert Systems with Applications 2023, 224, 120025.

- Nandi, P.; Sharma, S.; Chakraborty, T. SAFE-MEME: Structured Reasoning Framework for Robust Hate Speech Detection in Memes. 2024.

- Chhabra, A.; Vishwakarma, D.K. MHS-STMA: Multimodal Hate Speech Detection via Scalable Transformer-Based Multilevel Attention Framework. 2024.

- Chhabra, A.; Vishwakarma, D.K. Multimodal hate speech detection via multi-scale visual kernels and knowledge distillation architecture. Engineering Applications of Artificial Intelligence 2023, 126, 106991.

- Guo, K.; Hu, A.; Mu, J.; Shi, Z.; Zhao, Z.; Vishwamitra, N.; Hu, H. An Investigation of Large Language Models for Real-World Hate Speech Detection. 2024.

- Barakat, B.; Jaf, S. Beyond Traditional Classifiers: Evaluating Large Language Models for Robust Hate Speech Detection. Computation 2025, 13, 196.

- Piot, P.; Parapar, J. Decoding Hate: Exploring Language Models’ Reactions to Hate Speech. In Proceedings of the 2025 Conference of the Nations of the Americas Chapter of the Association for Computational Linguistics: Human Language Technologies; Association for Computational Linguistics, 2025; Volume 1, pp. 973–990.

- Calapaqui, G.; Guarderas, D.; Fuertes, W.; López, A.; Aules, H. Detection of Hate Speech on On-Line Social Platforms Using Machine Learning and Natural Language Processing – A Literature Review. CIST-2025 2026, 441–452.

- Filho, T.M.S.; de Souto, M.C.; de Carvalho, A.C.P.L.F. Classifier calibration: A survey on how to assess and improve predicted class probabilities. Machine Learning 2023, 112, 5193–5229.

- Dimitriadis, T.; Gneiting, T.; Ziegel, M. Evaluating probabilistic classifiers: The triptych. Pattern Recognition 2024, 150, 110312.

- Rella, C.; Vilar, J.M. Cost-sensitive thresholding over a two-dimensional decision region for fraud detection. Information Sciences 2024, 660, 119604.

- Komisarenko, V. Cost-sensitive classification with cost uncertainty: Do we need surrogate losses? Machine Learning 2025.

- Uther, W.; Mladenić, D.; Ciaramita, M.; Berendt, B.; Kołcz, A.; Grobelnik, M.; Witbrock, M.; Risch, J.; Bohn, S.; Poteet, S. TF–IDF. In Encyclopedia of Machine Learning; Sammut, C., Webb, G.I., Eds.; Springer US: Boston, MA, 2010; pp. 986–987.

- Salman, H.A.; Kalakech, A.; Steiti, A. Random Forest Algorithm Overview. Babylonian Journal of Machine Learning 2024, 2024, 69–79.

- Malik, S.; Harode, R.; Singh, A. XGBoost: A Deep Dive into Boosting (Introduction Documentation). ResearchGate Preprint Available online. 2020. (accessed on 27 August 2025).