Submitted: 02 March 2026

Posted: 02 March 2026


Abstract

Keywords:

1. Introduction

2. Materials and Methods

2.1. Robot System Settings

2.2. Image Acquisition and Dataset Annotation

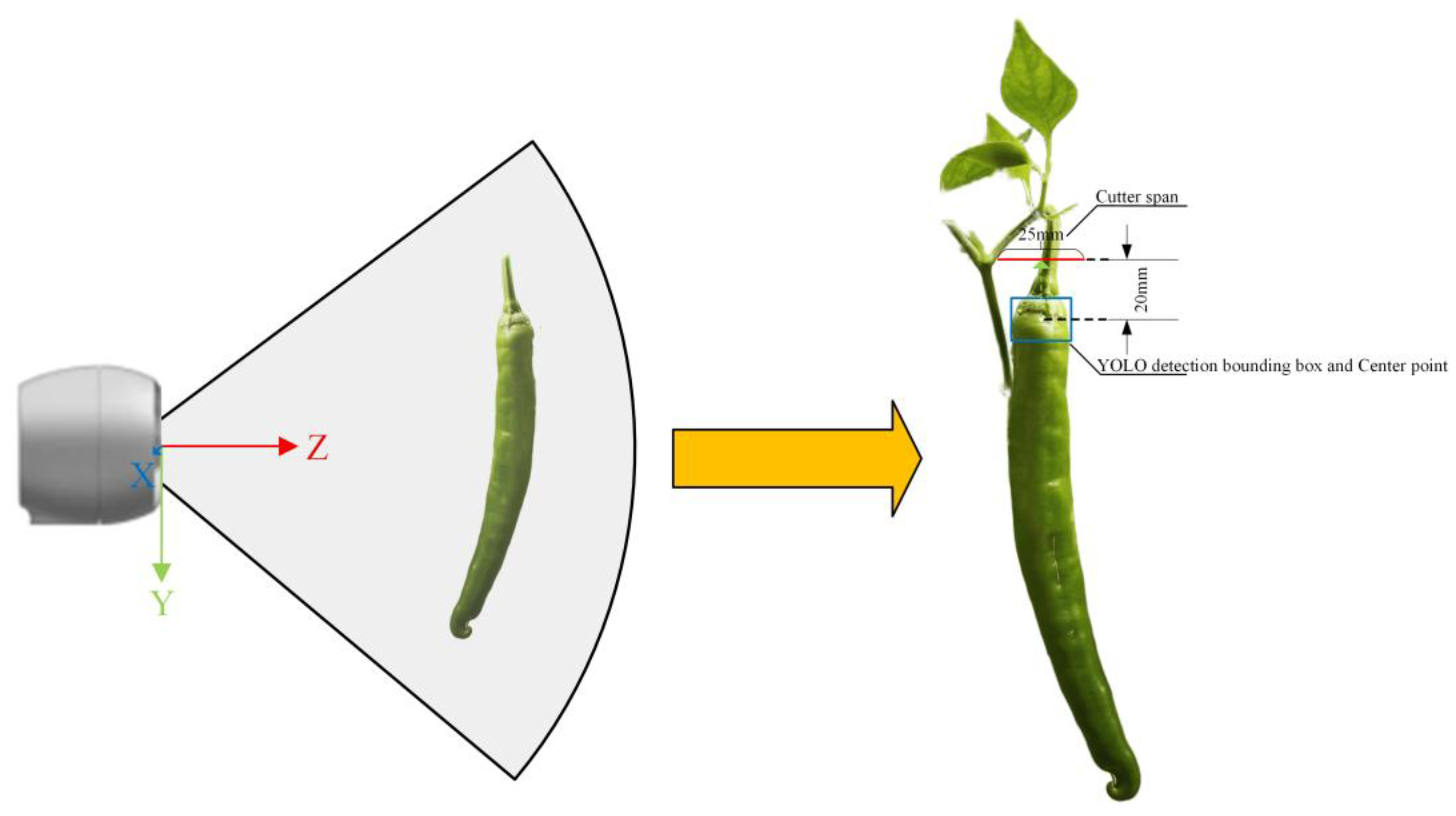

2.3. Feature Detection and 3D Coordinate Extraction

2.3.1. YOLOv8 Target Detection Model Construction

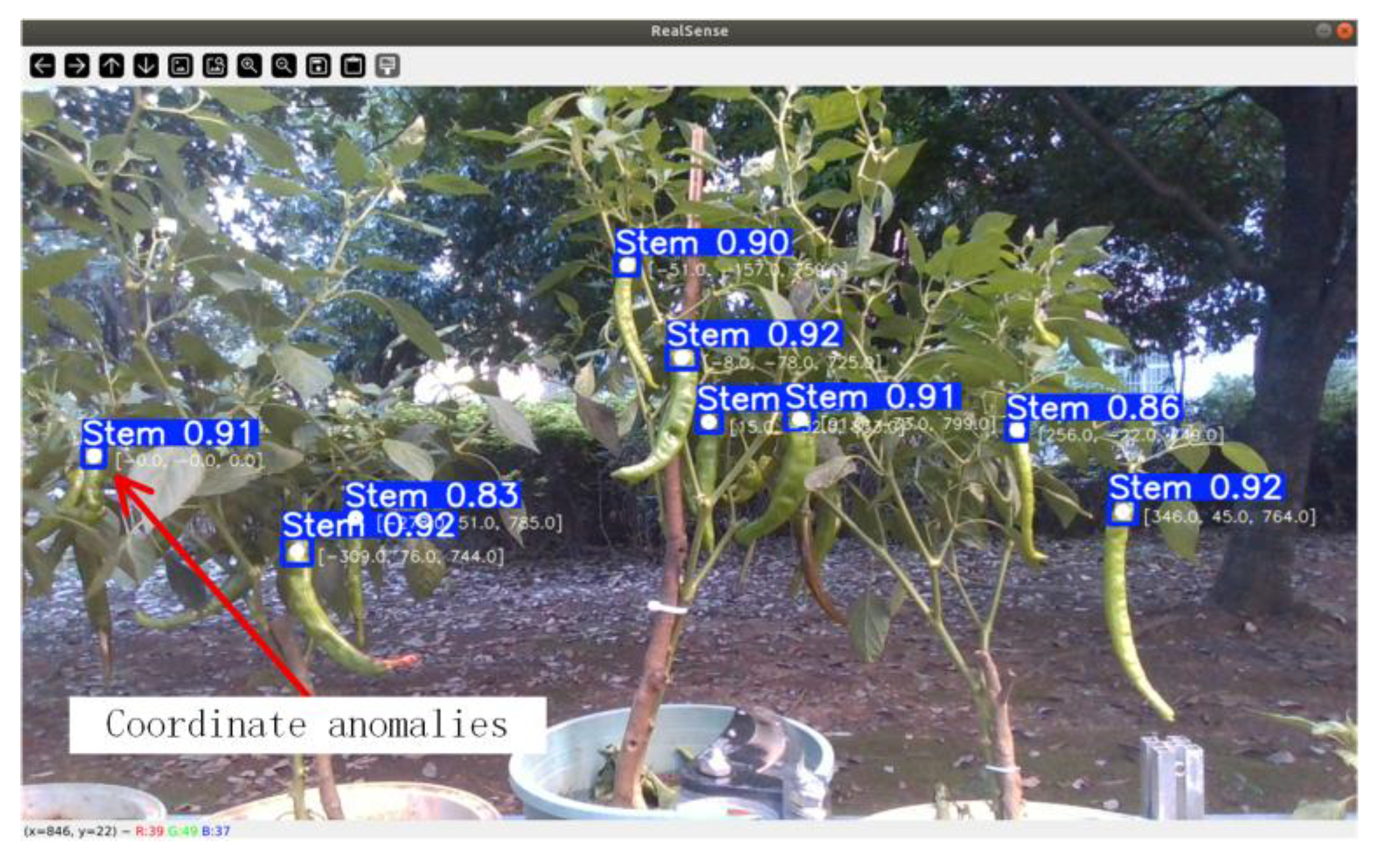

2.3.2. 3D Coordinate Extraction

2.4. Multi-Target State Management and Coordinate Smoothing Filter

2.4.1. Multi-Feature Fusion Data Association and Target Tracking

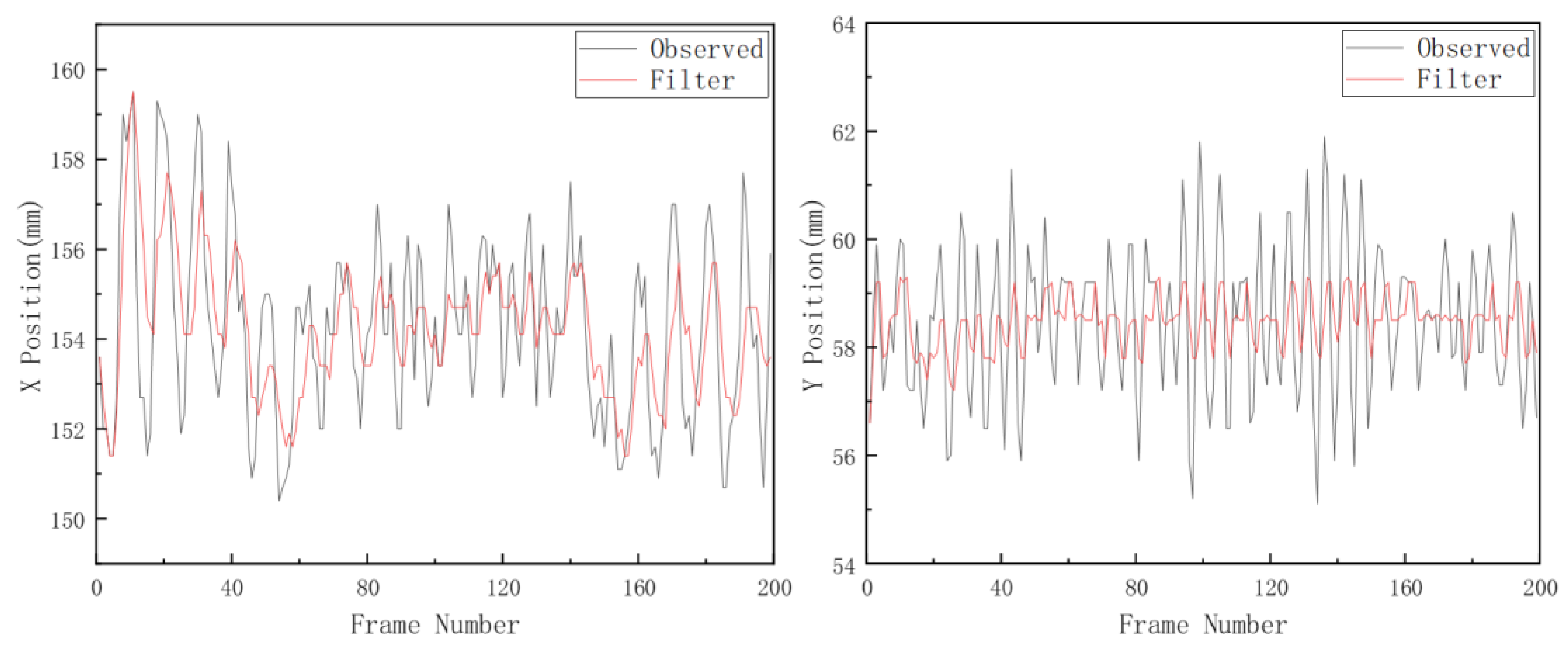
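
Steps 7 to 9 of Algorithm 1 combine an IoU term and a gated Mahalanobis term into one association cost. The following is a minimal sketch of that cost for a single track and detection pair, not the authors' implementation. It assumes that (x, y, w, h) boxes are center-based and that a 2×2 covariance of the predicted center is available from the Kalman filter; the default weights and gate value are placeholders, since the source does not state them here.

```python
import math


def iou_xywh(a, b):
    """IoU of two boxes given as (cx, cy, w, h); the center-based format is an assumption."""
    ax1, ay1, ax2, ay2 = a[0] - a[2] / 2, a[1] - a[3] / 2, a[0] + a[2] / 2, a[1] + a[3] / 2
    bx1, by1, bx2, by2 = b[0] - b[2] / 2, b[1] - b[3] / 2, b[0] + b[2] / 2, b[1] + b[3] / 2
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = a[2] * a[3] + b[2] * b[3] - inter
    return inter / union if union > 0 else 0.0


def mahalanobis_2d(pred, meas, cov):
    """Mahalanobis distance between a predicted and a measured center.

    cov is a 2x2 covariance given as ((sxx, sxy), (syx, syy)).
    """
    dx, dy = meas[0] - pred[0], meas[1] - pred[1]
    (a, b), (c, d) = cov
    det = a * d - b * c
    # Closed-form inverse of a 2x2 matrix.
    ia, ib, ic, id_ = d / det, -b / det, -c / det, a / det
    return math.sqrt(dx * (ia * dx + ib * dy) + dy * (ic * dx + id_ * dy))


def fused_cost(track_box, pred_center, cov, det_box,
               lambda_iou=0.5, lambda_m=0.5, mahalanobis_th=3.0):
    """Cost of step 9: +inf outside the gate, weighted sum inside it.

    The default weights and threshold are placeholders, not values from the paper.
    """
    d_m = mahalanobis_2d(pred_center, det_box[:2], cov)
    if d_m > mahalanobis_th:
        return math.inf
    return lambda_iou * (1.0 - iou_xywh(track_box, det_box)) + lambda_m * d_m
```

Evaluating `fused_cost` for every track and detection pair gives the cost matrix that step 10 passes to the Hungarian algorithm.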

2.4.2. Kalman Filter Modeling and State Update

2.4.2.1. State-Space Model Formulation

2.4.2.2. Recursive Formulas of the Kalman Filter

2.4.2.3. Parameter Initialization and Tuning Strategy

2.4.3. 3σ Outlier Rejection and Single Exponential Smoothing

Algorithm 1: Multi-feature fusion tracking and 3D coordinate smoothing

Input: color_image, depth_frame, depth_intri

Output: Smoothed 3D coordinates of tracked peppers

1: Initialize prev_boxes, current_boxes, kalman_filters, track_missing_count, xyz_histories, smoothed_history

2: Set IOU_TH, MAHALANOBIS_TH, LAMBDA_IOU, LAMBDA_M, max_frames_missing, window_len, α

3: while camera stream is available do

4: Run YOLOv8 on color_image, obtain detections D = { (box_k, conf_k) | conf_k ≥ IOU_TH } in (x, y, w, h) format

5: Prune tracks whose missing count > max_frames_missing; let existing_tracks be keys of prev_boxes

6: if existing_tracks ≠ ∅ and D ≠ ∅ then

7: For each track_i ∈ existing_tracks and det_j ∈ D, compute IoU(track_i, det_j) in image plane

8: Use Kalman prediction to get predicted center of track_i and Mahalanobis distance to det_j center

9: If Mahalanobis distance > MAHALANOBIS_TH, set cost_{i,j} = +∞; else cost_{i,j} = LAMBDA_IOU·(1−IoU) + LAMBDA_M·Mahalanobis

10: Apply Hungarian algorithm to the cost matrix to obtain globally optimal one-to-one matches

11: For each matched pair (track_id, det_box) do Kalman prediction + update with det_box center, obtain (x_kf, y_kf, w, h)

12: Set current_boxes[track_id] ← (x_kf, y_kf, w, h), track_missing_count[track_id] ← 0

13: For unmatched tracks do Kalman prediction only, update current_boxes and increment track_missing_count

14: For unmatched detections do create new track_id, initialize its Kalman filter with det_box center, add to current_boxes

15: else if existing_tracks = ∅ and D ≠ ∅ then

16: Initialize a new track and Kalman filter for each det_box ∈ D and fill current_boxes

17: end if

18: For each (track_id, box) ∈ current_boxes do

19: Obtain (x_kf, y_kf) from box and query median_depth at (x_kf, y_kf) in depth_frame

20: if median_depth is valid then

21: Back-project (x_kf, y_kf, median_depth) via depth_intri to camera_xyz and append to xyz_histories[track_id] (kept at window_len)

22: if |xyz_histories[track_id]| ≥ N then

23: Compute mean μ and std σ of xyz_histories[track_id], discard points violating the 3σ rule to obtain inliers

24: Let filtered_xyz be mean of inliers; apply single exponential smoothing

25: smoothed_xyz = α·filtered_xyz + (1−α)·previous smoothed_xyz (or filtered_xyz if no history)

26: Update smoothed_history[track_id] with smoothed_xyz

27: end if

28: end if

29: end for

30: Set prev_boxes ← current_boxes; clear current_boxes

31: end while
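
Steps 21 to 25 of Algorithm 1 can be illustrated with a short sketch. The code below is an assumption-laden example rather than the authors' code: it uses a plain pinhole model without lens distortion for the back-projection (a RealSense pipeline would more likely call the SDK's deprojection helper with depth_intri), applies the 3σ rule independently on each axis, and uses the hypothetical parameter names `fx`, `fy`, `cx`, `cy` for the camera intrinsics.

```python
import statistics


def back_project(u, v, depth, fx, fy, cx, cy):
    """Pinhole back-projection of pixel (u, v) at the given depth to camera coordinates.

    Lens distortion is ignored; fx, fy, cx, cy stand in for depth_intri.
    """
    return ((u - cx) * depth / fx, (v - cy) * depth / fy, depth)


def three_sigma_mean(points):
    """Mean of the points lying within 3 std of the window mean on every axis."""
    axes = list(zip(*points))
    mu = [statistics.fmean(axis) for axis in axes]
    sigma = [statistics.pstdev(axis) for axis in axes]
    inliers = [
        p for p in points
        if all(abs(c - m) <= 3 * s for c, m, s in zip(p, mu, sigma))
    ]
    # Fall back to the full window if rounding leaves no inliers.
    inliers = inliers or points
    return tuple(statistics.fmean(axis) for axis in zip(*inliers))


def exp_smooth(filtered_xyz, previous_xyz, alpha):
    """Single exponential smoothing (step 25); returns filtered_xyz when there is no history."""
    if previous_xyz is None:
        return filtered_xyz
    return tuple(alpha * f + (1 - alpha) * p for f, p in zip(filtered_xyz, previous_xyz))
```

One consequence of this formulation is worth noting. With the population standard deviation, a sample in a window of n points can deviate from the mean by at most √(n−1)·σ, so a window of 10 or fewer points never rejects anything under the 3σ rule. The window length therefore has to exceed 10 for the rejection in step 23 to have any effect in this sketch.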

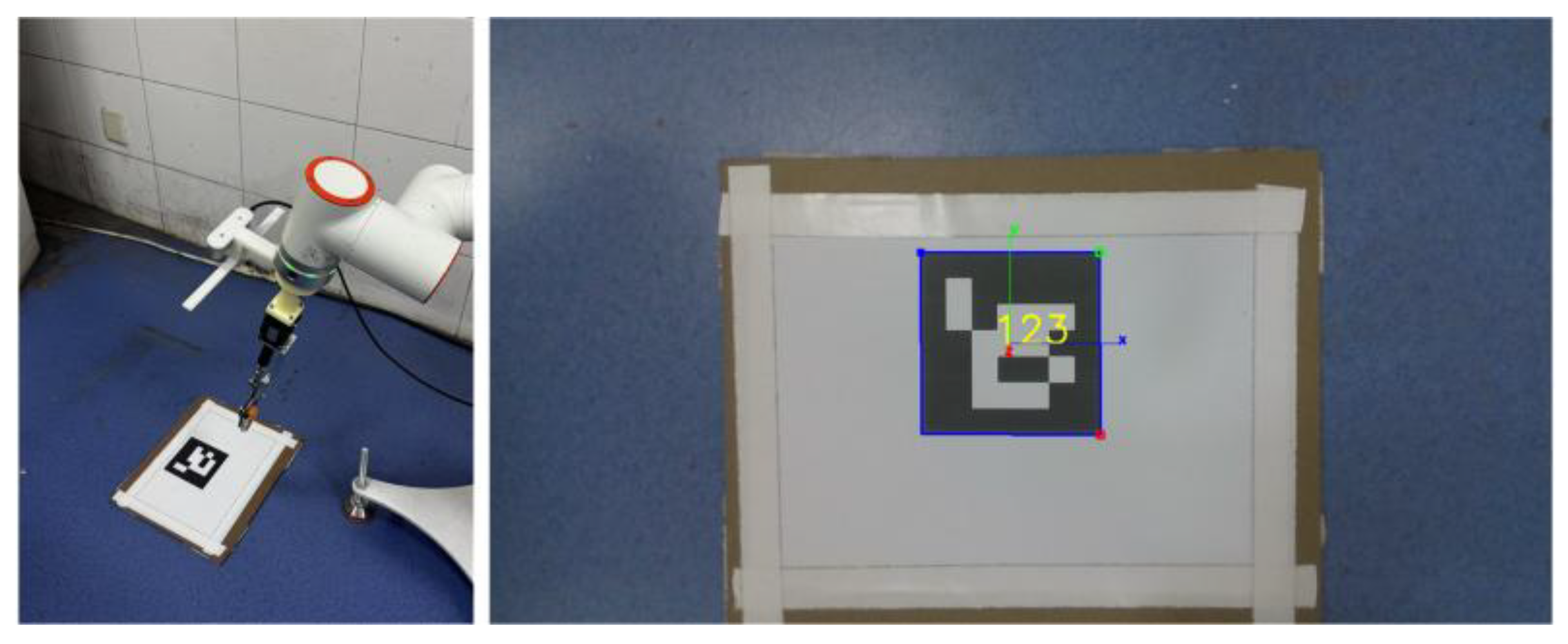

2.5. Hand-Eye Calibration

2.6. Picking Task Planning and System Coordination

3. Results and Discussion

3.1. Experimental Setup

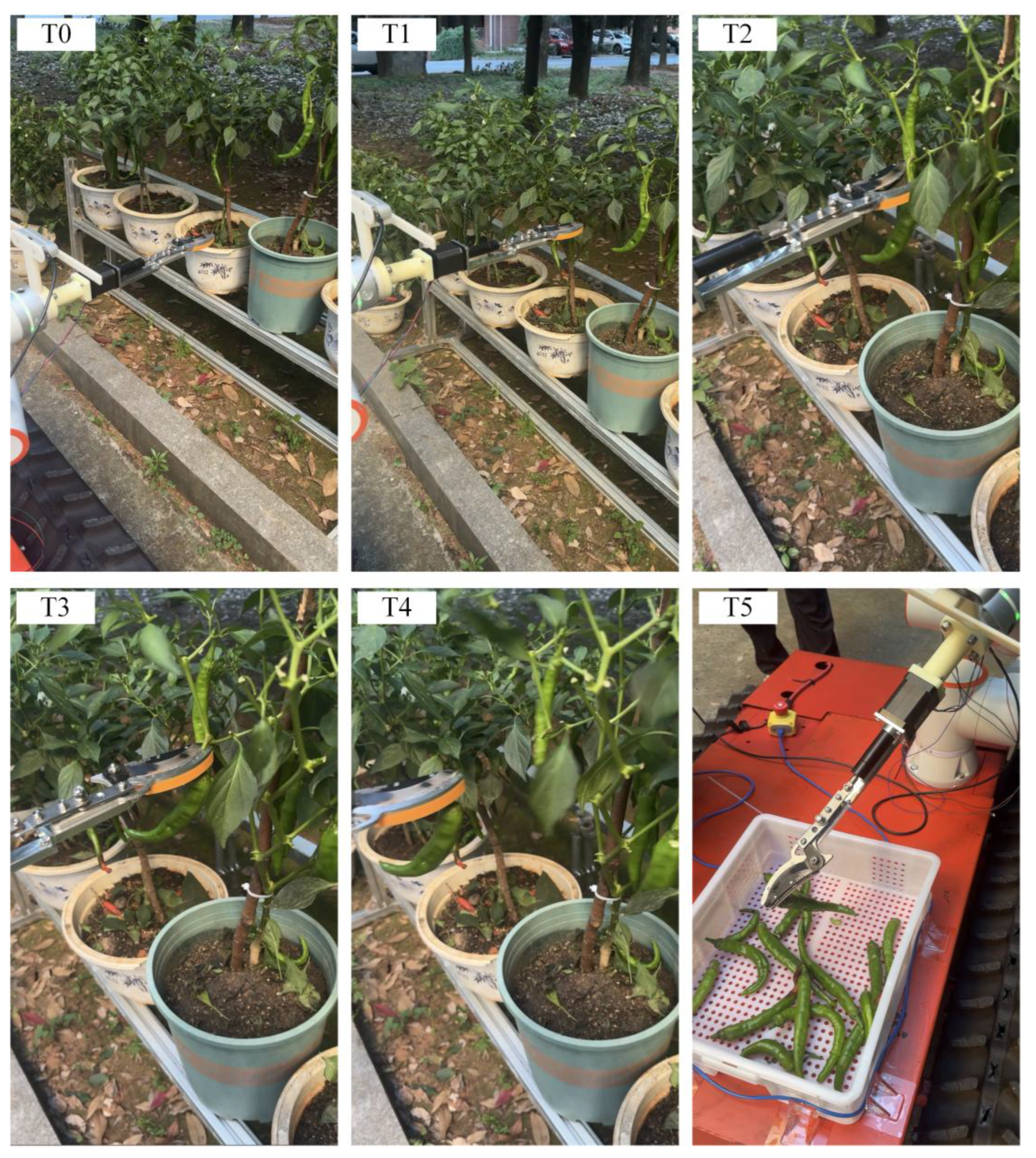

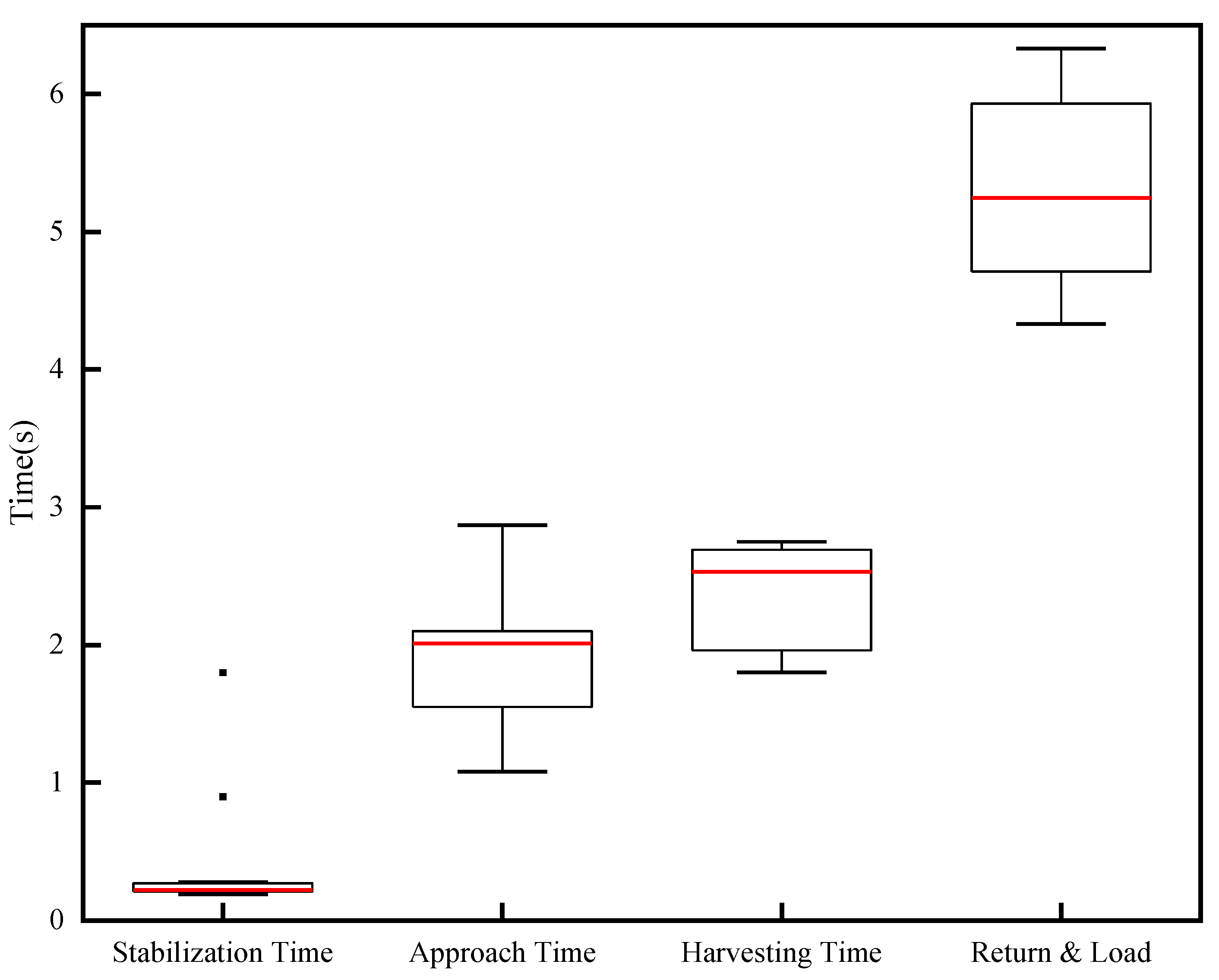

3.2. Experimental Results

3.3. Discussion

3.3.1. Perception Limitations

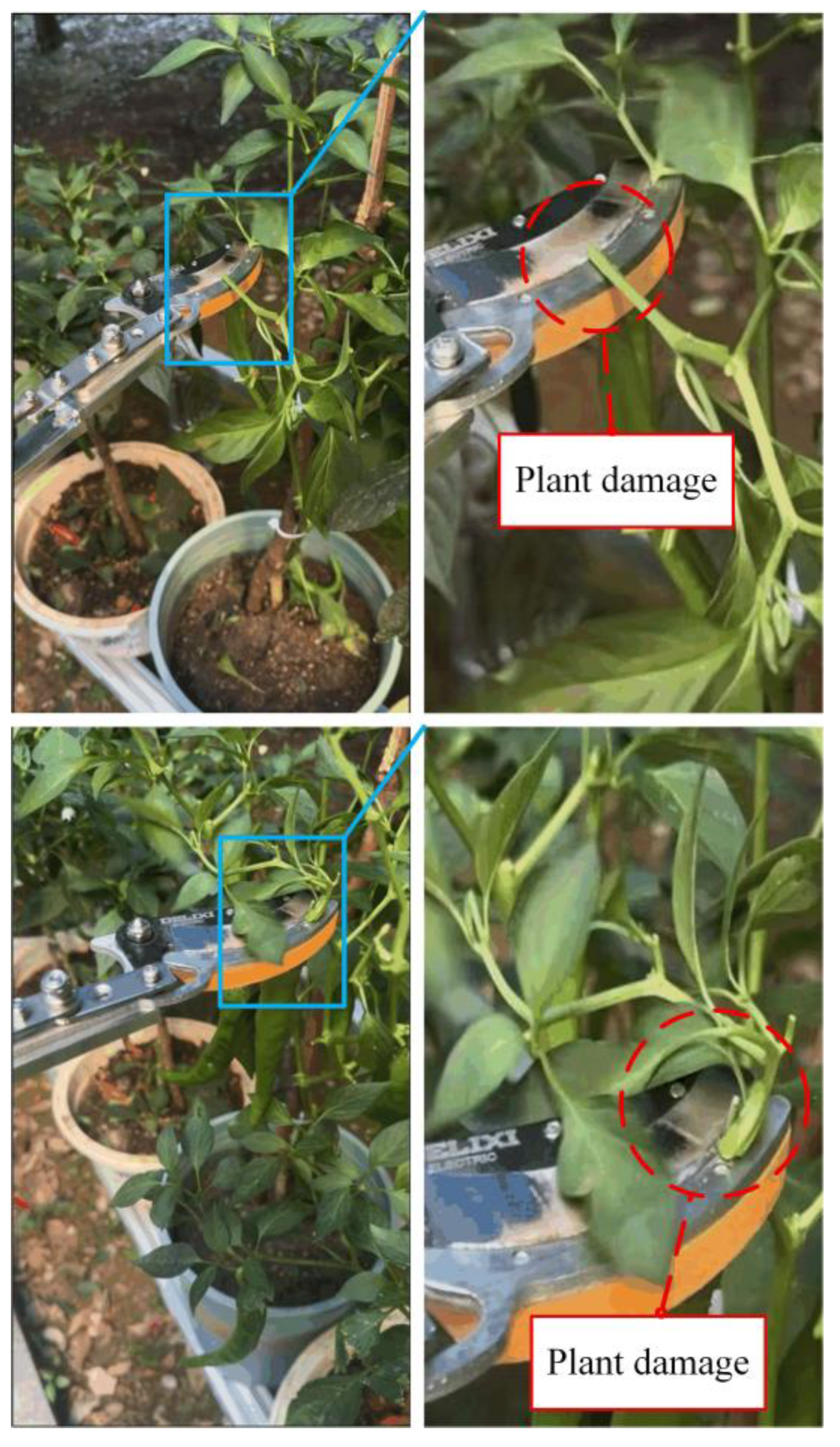

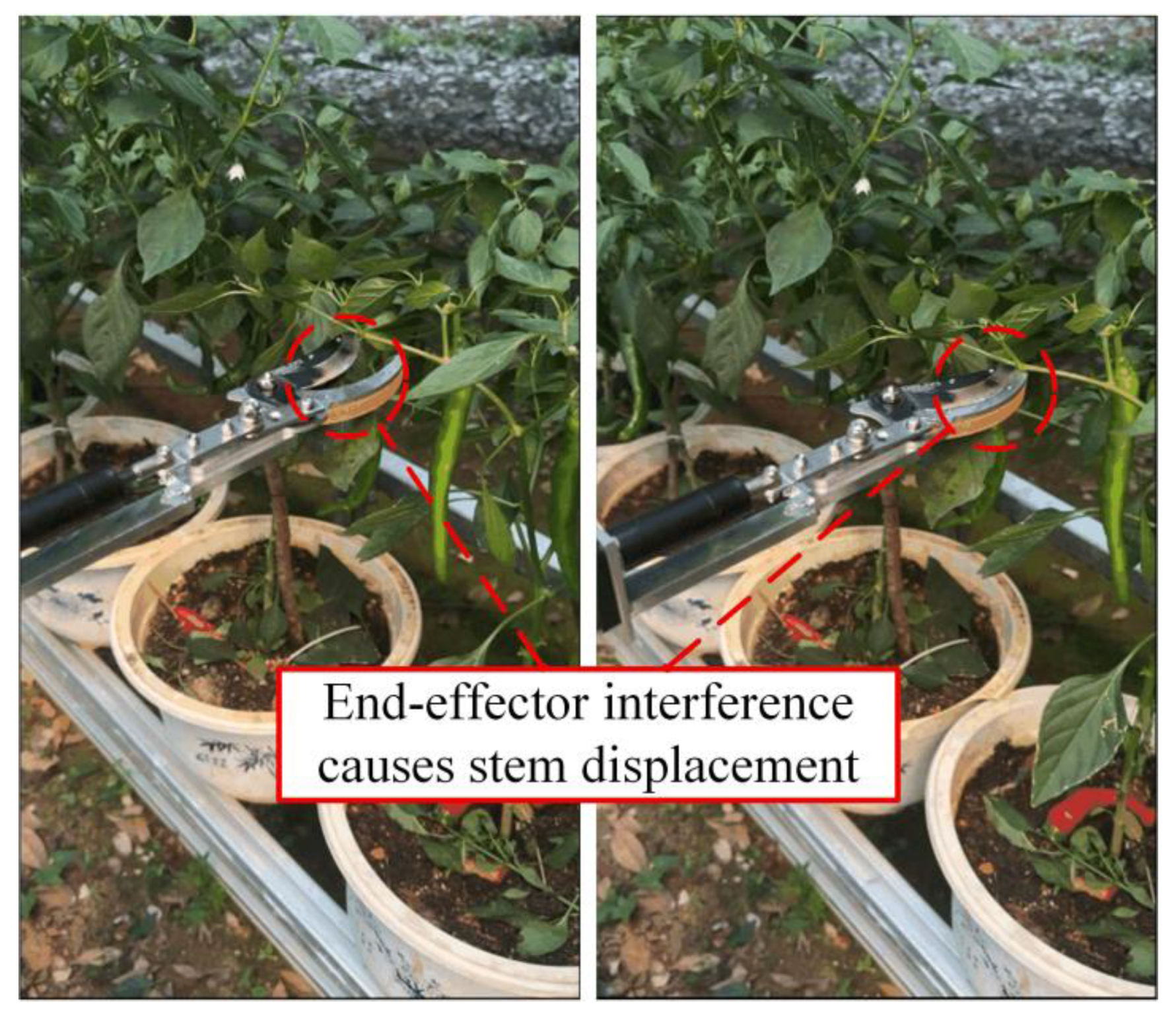

3.3.2. Execution Limitations

4. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Module | Model / control method | Main parameters |
|---|---|---|
| Upper computer | Hasee z7-ct7na | Intel i7-9750H, 16 GB RAM, GTX 1660 Ti, Ubuntu 18.04 |
| RGB-D camera | RealSense D435i | 1280×720 @ 30 FPS, depth accuracy ±2% |
| Robot arm | FR5-SPARK | 6-DOF, payload 5 kg, repeat positioning accuracy ±0.02 mm, fiber-optic Ethernet communication |
| End-effector | Self-designed actuator module, commercial cutting–gripping head | 24 V DC motor drive, actuator stroke 40 mm, maximum clamping width 50 mm, motor torque 2 N·m |

| Training parameter | Value |
|---|---|
| weight | yolov8l.pt |
| batch-size | 8 |
| epochs | 300 |
| imgsz | 640×640 |
| momentum | 0.937 |
| warmup_bias_lr | 0.1 |
| lr0 | 0.01 |
| lrf | 0.01 |
| optimizer | SGD |
| weight_decay | 0.0005 |
| mosaic | 0.5 |
| cos_lr | True |
| seed | 0 |
| dropout | 0.0 |
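
The training parameters above map directly onto keyword arguments of the Ultralytics training interface. The sketch below shows one way the configuration could be expressed; the dataset file name `pepper.yaml` is hypothetical, since the source does not name the dataset configuration, and the `train` wrapper is illustrative only.

```python
# Hyperparameters copied from the training-parameter table.
TRAIN_ARGS = {
    "data": "pepper.yaml",  # hypothetical dataset config, not named in the source
    "epochs": 300,
    "batch": 8,
    "imgsz": 640,
    "momentum": 0.937,
    "warmup_bias_lr": 0.1,
    "lr0": 0.01,
    "lrf": 0.01,
    "optimizer": "SGD",
    "weight_decay": 0.0005,
    "mosaic": 0.5,
    "cos_lr": True,
    "seed": 0,
    "dropout": 0.0,
}


def train(weights="yolov8l.pt"):
    """Start training from the pretrained weights listed in the table."""
    # Imported lazily so the dict can be inspected without the package installed.
    from ultralytics import YOLO

    return YOLO(weights).train(**TRAIN_ARGS)
```

With `lr0 = 0.01` and `lrf = 0.01`, the cosine schedule decays the learning rate toward `lr0 × lrf = 0.0001` by the final epoch.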

| θ | Total matching pairs | ID matches | ID switches | ID matching rate |
|---|---|---|---|---|
| 0.1 | 400 | 400 | 0 | 100% |
| 0.3 | 400 | 400 | 0 | 100% |
| 0.5 | 400 | 376 | 24 | 94% |
| 0.7 | 400 | 260 | 140 | 65% |
| 0.9 | 400 | 41 | 359 | 10.25% |
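
The rate column in the table above is consistent with the ratio of ID matches to total matching pairs, and the ID switches equal the remainder. The short check below reproduces those figures; the formula is inferred from the tabulated numbers rather than stated in the source.

```python
# (total matching pairs, ID matches) per threshold θ, copied from the table.
ROWS = {
    0.1: (400, 400),
    0.3: (400, 400),
    0.5: (400, 376),
    0.7: (400, 260),
    0.9: (400, 41),
}


def matching_rate(total, matches):
    """ID matching rate in percent (inferred definition: matches / total)."""
    return 100.0 * matches / total


def id_switches(total, matches):
    """Pairs that were not matched to the same ID."""
    return total - matches


RATES = {theta: matching_rate(*row) for theta, row in ROWS.items()}
SWITCHES = {theta: id_switches(*row) for theta, row in ROWS.items()}
```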

| Matching Method | Total Pairs | ID Switches | Association Accuracy (%) | Miss Rate (%) |
|---|---|---|---|---|
| IoU-only (θ=0.5) | 300 | 40 | 84.47 | 19.5 |
| Fusion Method | 300 | 18 | 92.48 | 22.67 |

| q Value | Noise Reduction Ratio | Normalized Residual |
|---|---|---|
| 0.001 | 0.2437 | 0.9344 |
| 0.01 | 0.4658 | 0.7680 |
| 0.1 | 0.6198 | 0.7289 |
| 1 | 0.7271 | 0.5417 |
| 10 | 0.7728 | 0.3864 |

| r Value | Noise Reduction Ratio | Normalized Residual |
|---|---|---|
| 0.1 | 0.7769 | 0.5478 |
| 1 | 0.5155 | 0.7338 |
| 5 | 0.8843 | 0.6074 |
| 10 | 0.7101 | 0.6972 |
| 50 | 0.5585 | 0.7842 |
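
To make the roles of the process noise q and the measurement noise r in the two sweeps above more concrete, the following sketch runs a scalar Kalman filter and records its gain. It assumes a random-walk state model for a single coordinate, which may be simpler than the state-space model of Section 2.4.2.1, and it does not attempt to reproduce the noise reduction ratio or normalized residual, whose definitions are not given here.

```python
def kalman_1d(measurements, q, r, x0=None, p0=1.0):
    """Scalar Kalman filter with a random-walk state model (an assumption).

    q is the process noise variance, r the measurement noise variance.
    Returns the filtered estimates and the Kalman gain at each step.
    """
    x = measurements[0] if x0 is None else x0
    p = p0
    estimates, gains = [], []
    for z in measurements:
        # Predict: the state is assumed unchanged, uncertainty grows by q.
        p_pred = p + q
        # Update: blend prediction and measurement according to the gain.
        k = p_pred / (p_pred + r)
        x = x + k * (z - x)
        p = (1.0 - k) * p_pred
        estimates.append(x)
        gains.append(k)
    return estimates, gains
```

In this simplified model a larger q pushes the gain toward 1, so the estimate follows the measurements closely, whereas a larger r pushes the gain toward 0 and smooths more heavily at the cost of lag.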

| Occlusion Level | Count (pcs) | Detection Correct Rate (%) | Harvesting Success Rate (%) | Plant Damage Rate (%) |
|---|---|---|---|---|
| No occlusion | 261 | 91.57 | 82.85 | 11.11 |
| Light occlusion | 279 | 80.29 | 77.68 | 34.48 |
| Moderate occlusion | 244 | 72.13 | 57.39 | 51.49 |
| Severe occlusion | 215 | 46.51 | 40.00 | 75.00 |
| Average | 249.75 | 72.63 | 64.34 | 56.85 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).