Submitted: 01 March 2026

Posted: 02 March 2026

Abstract

Keywords:

1. Introduction

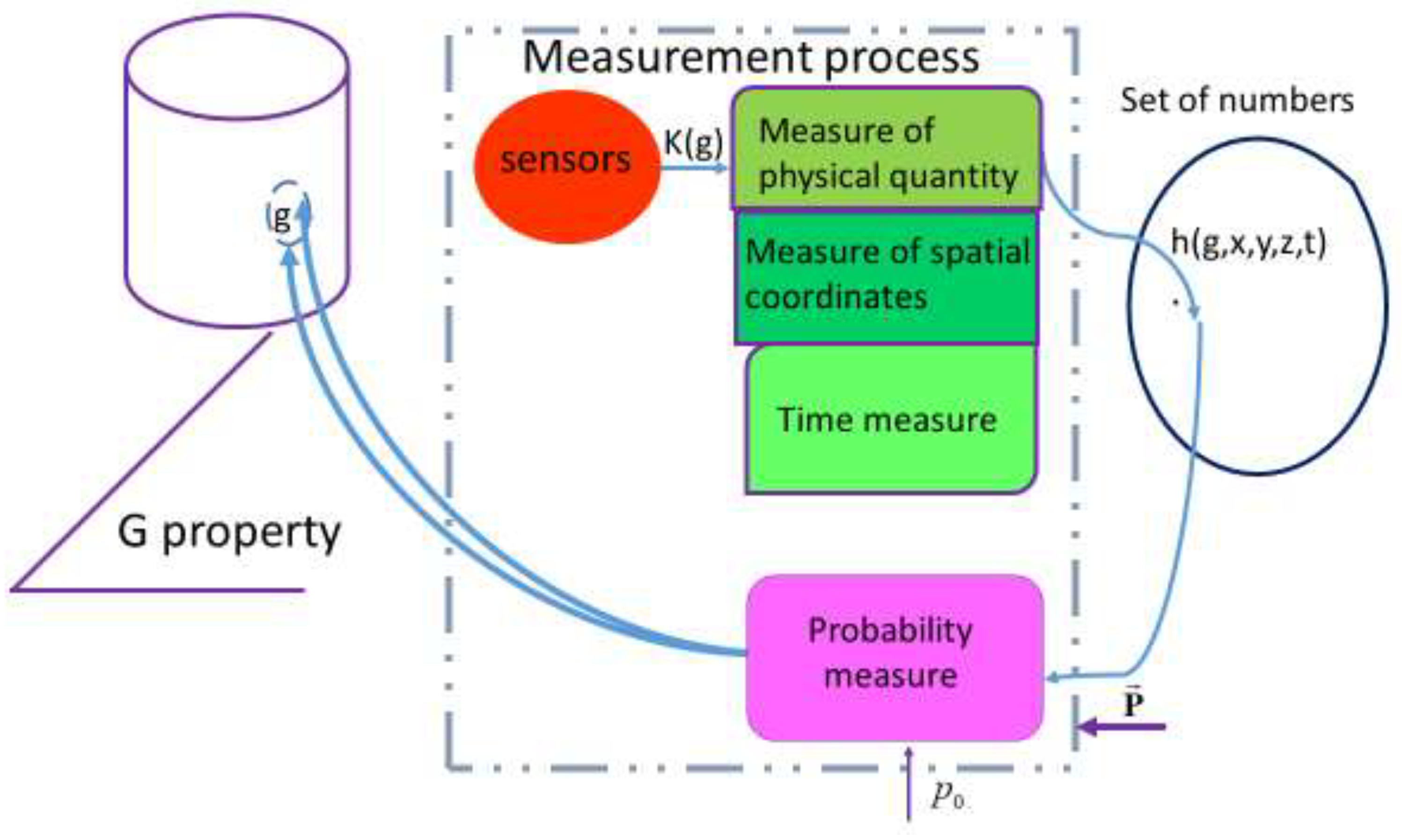

2. Models of Measurement Signals

2.1. Measurement Signal Model

- the mean value of the random field, defined through the mathematical expectation operator;

- the variance of the random field;

- the autocorrelation function of the field;

- the structure function of the field.
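The characteristics listed above can be illustrated with sample estimates on a rectangular grid. The following is a minimal sketch, assuming a homogeneous field stored as a list of rows, non-negative lags, the centred (covariance) form of the autocorrelation function, and the structure function taken as the mean squared increment. The function names are illustrative and are not taken from the paper.

```python
from statistics import fmean


def field_mean(field):
    """Sample mean over all grid nodes."""
    return fmean(v for row in field for v in row)


def field_variance(field):
    """Sample variance (biased, divides by the number of nodes)."""
    m = field_mean(field)
    return fmean((v - m) ** 2 for row in field for v in row)


def _pairs(field, di, dj):
    """Yield (xi[i][j], xi[i + di][j + dj]) for non-negative lags di, dj."""
    rows, cols = len(field), len(field[0])
    for i in range(rows - di):
        for j in range(cols - dj):
            yield field[i][j], field[i + di][j + dj]


def autocorrelation(field, di, dj):
    """Centred autocorrelation (covariance) estimate at lag (di, dj)."""
    m = field_mean(field)
    return fmean((a - m) * (b - m) for a, b in _pairs(field, di, dj))


def structure_function(field, di, dj):
    """Mean squared increment of the field at lag (di, dj)."""
    return fmean((b - a) ** 2 for a, b in _pairs(field, di, dj))


if __name__ == "__main__":
    sample = [[1.0, 2.0], [3.0, 4.0]]
    print(field_mean(sample), field_variance(sample))
    print(autocorrelation(sample, 0, 1), structure_function(sample, 1, 0))
```

At zero lag the autocorrelation estimate coincides with the variance estimate, which gives a quick sanity check on real data.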

2.2. Linear AR Field

3. Measures and Their Application

4. Combination of Physical and Probabilistic Measures in Manufacturing Processes

- if , then

- if , then

5. Example of Using the "Model → Measure → Algorithm → Program" Methodology

- Euclidean distance

- square of Euclidean distance

- Manhattan distance

- Chebyshev distance

- power distance

- Mahalanobis distance
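The distance measures listed above can be sketched in a few lines using their textbook definitions. This is an illustrative sketch, not the authors' implementation: the function names, the exponent parameters `p` and `r` of the power distance, and the choice to pass the inverse covariance matrix to the Mahalanobis distance as a nested list are all assumptions.

```python
import math


def squared_euclidean(x, y):
    """Sum of squared coordinate differences."""
    return sum((a - b) ** 2 for a, b in zip(x, y))


def euclidean(x, y):
    """Square root of the squared Euclidean distance."""
    return math.sqrt(squared_euclidean(x, y))


def manhattan(x, y):
    """Sum of absolute coordinate differences."""
    return sum(abs(a - b) for a, b in zip(x, y))


def chebyshev(x, y):
    """Largest absolute coordinate difference."""
    return max(abs(a - b) for a, b in zip(x, y))


def power_distance(x, y, p=2.0, r=2.0):
    """(sum |a - b|^p)^(1/r); p = r = 2 reduces to the Euclidean distance."""
    return sum(abs(a - b) ** p for a, b in zip(x, y)) ** (1.0 / r)


def mahalanobis(x, y, inv_cov):
    """sqrt(d^T S^-1 d), where inv_cov is the inverse covariance matrix S^-1."""
    d = [a - b for a, b in zip(x, y)]
    n = len(d)
    q = sum(d[i] * inv_cov[i][j] * d[j] for i in range(n) for j in range(n))
    return math.sqrt(q)


if __name__ == "__main__":
    x, y = (0.0, 0.0), (3.0, 4.0)
    identity = [[1.0, 0.0], [0.0, 1.0]]
    print(euclidean(x, y), manhattan(x, y), chebyshev(x, y))
    print(power_distance(x, y), mahalanobis(x, y, identity))
```

With an identity inverse covariance matrix the Mahalanobis distance reduces to the Euclidean distance, so the two can be cross-checked on the same pair of points.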

- classical (according to the CCIR-601 standard):

- by calculating the arithmetic mean of the components of the three channels:
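The two grayscale conversions listed above can be sketched as follows. The weights 0.299, 0.587 and 0.114 are the standard CCIR-601 (ITU-R BT.601) luma coefficients. The function names and the assumption that the channels are plain numeric intensities (for example 0 to 255) are illustrative and not taken from the paper.

```python
def gray_ccir601(r, g, b):
    """Weighted sum of the channels with the CCIR-601 luma coefficients."""
    return 0.299 * r + 0.587 * g + 0.114 * b


def gray_mean(r, g, b):
    """Arithmetic mean of the three channel components."""
    return (r + g + b) / 3.0


if __name__ == "__main__":
    pixel = (0, 255, 0)  # pure green, chosen to show how the two rules differ
    print(gray_ccir601(*pixel), gray_mean(*pixel))
```

The two rules agree on neutral (gray) pixels and differ most on saturated colours, because the weighted form gives the green channel more than half of the total weight.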

6. Entropy Measures for Constructing Decision-Making Rules

- when and , relation (44) describes the Gaussian probability distribution;

- when and , relation (44) defines the Laplace probability distribution;

- when and , relation (44) describes the gamma probability distribution.

- (hypothesis H1 is accepted);

- (hypothesis H2 is accepted).

Use of Mutual Entropy

7. Artificial Intelligence in Production Process Control Tasks

Quality Control and Preventive Maintenance Based on AI

8. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
| --- | --- |
| AI | Artificial Intelligence |
| AR | Autoregressive |
| AR field | Autoregressive random field |
| CBM | Condition-Based Maintenance |
| CE | Cross-Entropy |
| CF | Characteristic Function |
| CGO | Sreznevsky Central Geophysical Observatory |
| CM | Corrective Maintenance |
| CMg | Cloud Manufacturing |
| CPS | Cyber-Physical Systems |
| DL | Deep Learning |
| DT | Digital Twin |
| EM | Expectation–Maximisation |
| FDPM | Fused Data Prediction Model |
| GCE | Generalised Cross-Entropy |
| IoT | Internet of Things |
| ISO | International Organization for Standardization |
| LRP | Linear Random Process |
| MI | Mutual Information |
| PM | Preventive Maintenance |
| PMS | Performance Measurement System |
| RMS | Root Mean Square |
| SC | Sum of control sets (SC = “1” + “2” + “3”) |
| SME | Subject Matter Expert |
| TBM | Time-Based Maintenance |


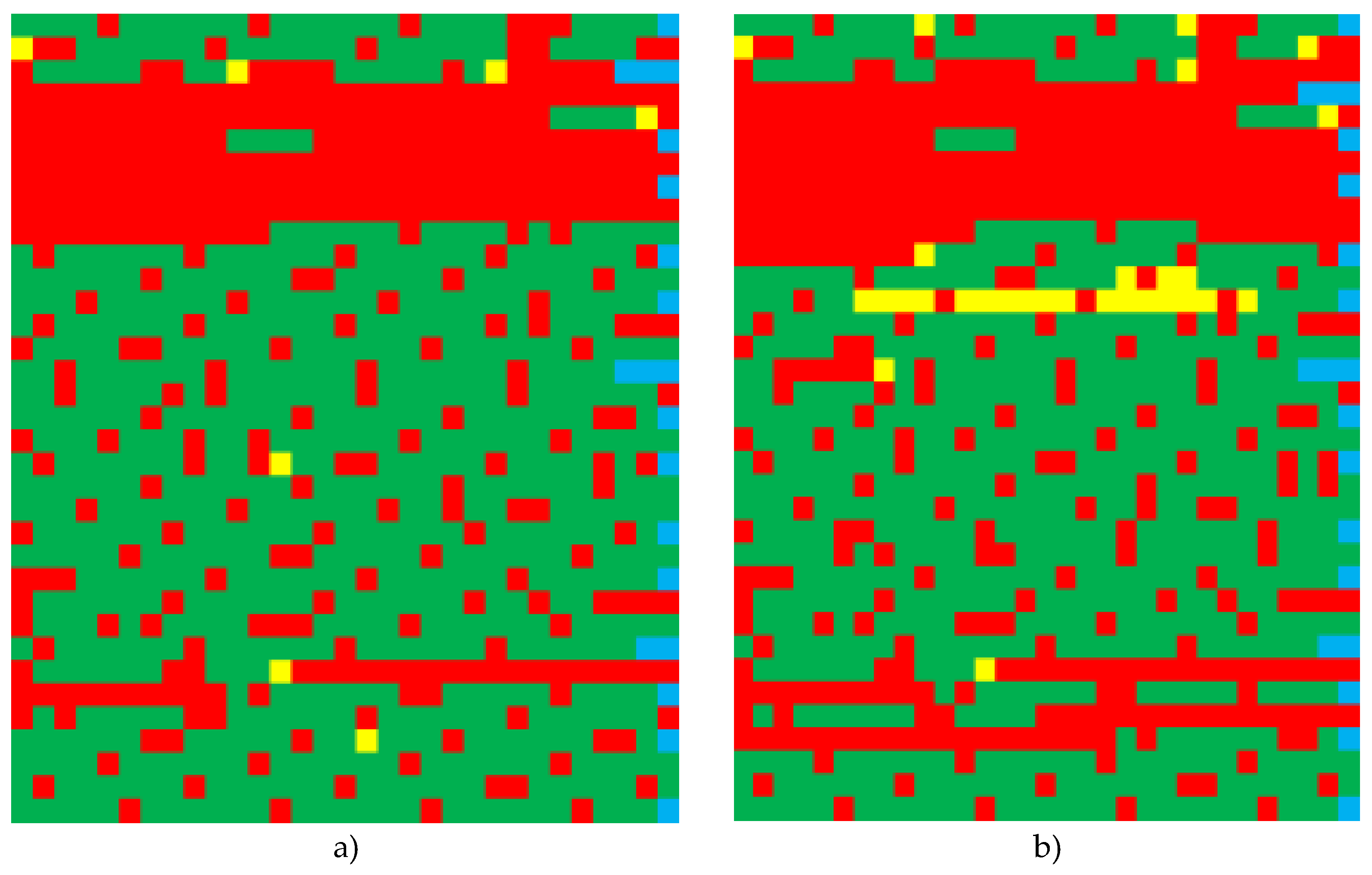

| No. | Parameters* | Activation time |
| --- | --- | --- |
| 3 | PM, SO2, CO, NO2, CH2O | September 2018 |
| 5 | PM, SO2, CO, NO2, CH2O | September 2018 |
| 7 | PM, SO2, CO, NO2, HF, HCl, CH2O | November 2017 |
| 20 | PM, SO2, CO, NO2, NO, HF, NH3, CH2O | November 2017 |

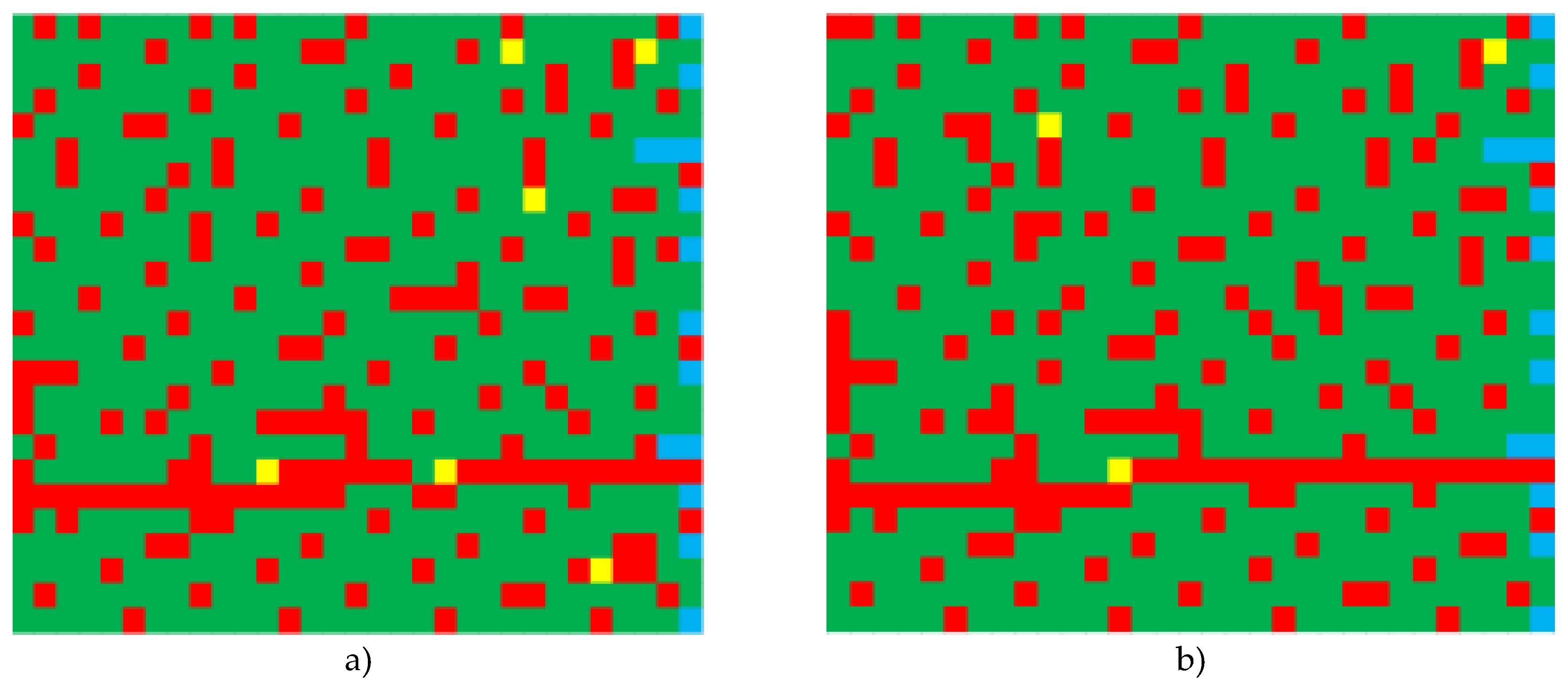

| No | Parameter | Parameter value | Colour |
| --- | --- | --- | --- |
| 1 | 3 | 100% of planned data received | |
| 2 | 2 | Data obtained within the range 0 < x < 100 (%) | |
| 3 | 1 | Data not obtained | |
| 4 | 0 | No control date | |
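The coding scheme in the table above can be sketched as a small classification function. This is a hedged illustration: the function name and its inputs (`planned` and `received` counts of data records) are hypothetical, and reading parameter 0 as "no control was planned" is an assumption about the row labelled "No control date".

```python
def completeness_code(planned, received):
    """Map planned/received record counts to the table's parameter 0..3."""
    if planned == 0:
        return 0  # assumed meaning: no control planned for this date
    share = 100.0 * received / planned  # received share of planned data, %
    if share <= 0.0:
        return 1  # data not obtained
    if share < 100.0:
        return 2  # data obtained within the range 0 < x < 100 (%)
    return 3  # 100% of planned data received


if __name__ == "__main__":
    for planned, received in [(0, 0), (24, 0), (24, 12), (24, 24)]:
        print(planned, received, completeness_code(planned, received))
```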

| No | Group | Number in group | SC | 100% (share of SC, %) |
| --- | --- | --- | --- | --- |
| 3 | 0 | 14 | 761 | 76.3 |
| | 1 | 174 | | |
| | 2 | 6 | | |
| | 3 | 581 | | |
| 5 | 0 | 14 | 761 | 76.3 |
| | 1 | 177 | | |
| | 2 | 3 | | |
| | 3 | 581 | | |
| 7 | 0 | 20 | 1065 | 63.4 |
| | 1 | 383 | | |
| | 2 | 7 | | |
| | 3 | 675 | | |
| 20 | 0 | 20 | 1065 | 56.6 |
| | 1 | 429 | | |
| | 2 | 33 | | |
| | 3 | 603 | | |
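The figures in the table above are consistent with SC being the sum of groups 1, 2 and 3 (as stated in the Abbreviations) and with the final column being the share of group 3 (100% of planned data received) in SC. That reading is inferred from the numbers rather than stated in the text, and the function name below is illustrative. A minimal sketch of the arithmetic:

```python
def summarize(counts):
    """Return (SC, share of group 3 in SC in %, rounded to one decimal).

    counts maps the group code (0..3) to the number of control sets.
    """
    sc = counts[1] + counts[2] + counts[3]  # SC = "1" + "2" + "3"
    share_full = 100.0 * counts[3] / sc
    return sc, round(share_full, 1)


if __name__ == "__main__":
    stations = {
        3: {0: 14, 1: 174, 2: 6, 3: 581},
        5: {0: 14, 1: 177, 2: 3, 3: 581},
        7: {0: 20, 1: 383, 2: 7, 3: 675},
        20: {0: 20, 1: 429, 2: 33, 3: 603},
    }
    for number, counts in stations.items():
        print(number, summarize(counts))
```

Group 0 is excluded from SC, so dates without control do not lower the completeness share.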

| Artificial intelligence model | Key features | Application for electrical equipment diagnostics |
| --- | --- | --- |
| Neural networks (NN) | Capable of modelling complex, non-linear processes | Fault prediction, anomaly detection, recognition of patterns in equipment behaviour |
| Convolutional neural networks (CNN) | Specialised for processing data in the form of a grid (such as images) | Detect defects based on images, such as insulator defects or component overheating using thermal imaging |
| Recurrent neural networks (RNN) | Effective for sequential data such as time series | Predictive maintenance by analysing time series data on equipment performance |
| Support vector machines (SVM) | Good for classification and regression tasks | Classification of equipment status as normal or faulty based on characteristic data |
| Decision trees (DT) | Easy to understand and interpret | Determination of fault conditions by tracing the decision path in the tree structure |
| Random forests (RF) | An ensemble of DT, less prone to overfitting | Diagnosis of faults in complex scenarios with high-dimensional data |
| Gradient boosting machines (GBM) | Building models sequentially, good predictive performance | Increased fault prediction accuracy by combining weak predictive models |
| Autoencoders (AE) | Used for data encoding and dimensionality reduction | Detection of anomalies by training on normal operating patterns and identifying deviations |
| Generative adversarial networks (GANs) | Used to generate new data instances | Data augmentation to improve the reliability of diagnostic models by generating synthetic fault data |
| Reinforcement learning (RL) | Training to make sequential decisions | Adaptive control systems for electrical equipment to optimise performance |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).