Submitted: 27 February 2026

Posted: 03 March 2026


Abstract

Keywords:

1. Introduction

- A dense, safety-guided policy learning method based on the configuration space distance field (CDF) is proposed. To overcome the sparse collision penalties produced by traditional workspace signed distance functions (SDFs), the CDF is integrated into the design of the SAC reward function, and its continuously differentiable gradient is used to provide global, dense guidance for obstacle avoidance;

- A policy-guided MPPI online planning architecture is designed to tightly integrate a global prior with local optimization. The offline-trained Constraint-Discounted SAC (CD-SAC) policy serves as the nominal control sequence generator for MPPI and provides a high-quality warm start that concentrates the sampling distribution near the global optimum. This alleviates conventional MPPI's reliance on sampling around a poorly informed nominal sequence, while MPPI's online receding-horizon optimization in turn corrects the generalization error of the offline policy;

- A multi-level safety system combining "policy soft guidance + optimization hard constraints + filter final guarantee" is constructed. At the offline learning layer, safety preferences are internalized into the policy through the CDF and temporal-difference constraint discounting (TD-CD); at the online planning layer, trajectory feasibility is enhanced through explicit cost penalties in MPPI; at the execution layer, a safety filter based on a first-order control barrier function (CBF) performs real-time projection correction of the control commands.
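The execution-layer filter can be illustrated with a single barrier constraint. The sketch below is not the authors' filter: it assumes that the filtered command acts directly on joint velocities ($\dot q = u$) and that the barrier is $h(q) = d(q) - \delta$, where $d$ stands in for a CDF value and $\delta$ is a safety margin. Under these assumptions the CBF-QP $\min_u \|u - u_{\text{nom}}\|^2$ subject to $\nabla h(q)^\top u \ge -\alpha h(q)$ has a closed-form projection. The names `cbf_filter` and `point_obstacle_barrier`, as well as the toy point-obstacle distance used in place of a learned CDF, are hypothetical.

```python
import numpy as np


def cbf_filter(u_nom, h, grad_h, alpha=1.0):
    """Minimally modify u_nom so that the first-order CBF condition holds.

    Solves, in closed form, the single-constraint QP
        min_u ||u - u_nom||^2   s.t.   grad_h . u + alpha * h >= 0,
    assuming the filtered command u drives the joint velocities (q_dot = u).
    """
    u_nom = np.asarray(u_nom, dtype=float)
    a = np.asarray(grad_h, dtype=float)
    slack = a @ u_nom + alpha * h
    if slack >= 0.0 or not np.any(a):
        # Nominal command already satisfies the condition (or the gradient vanishes).
        return u_nom
    # Project onto the boundary of the half-space {u : a . u >= -alpha * h}.
    return u_nom - slack * a / (a @ a)


def point_obstacle_barrier(q, q_obs, margin):
    """Hypothetical stand-in for a CDF barrier: joint-space distance to a point minus a margin."""
    diff = np.asarray(q, dtype=float) - np.asarray(q_obs, dtype=float)
    dist = np.linalg.norm(diff)
    return dist - margin, diff / dist


# Usage: a nominal command heading straight at the obstacle is slowed down.
q = np.array([0.5, 0.0])
h, grad = point_obstacle_barrier(q, q_obs=[0.0, 0.0], margin=0.4)
u_safe = cbf_filter(u_nom=[-1.0, 0.0], h=h, grad_h=grad, alpha=2.0)
```

With several barriers (for example, one per obstacle plus joint limits) the projection no longer has this closed form, and a small QP solver would be needed instead.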

2. Preliminaries

2.1. Problem Formulation

4. State constraint: upper and lower bounds are imposed on the joint angles and joint velocities, i.e., $q_{\min} \le q \le q_{\max}$ and $\dot{q}_{\min} \le \dot{q} \le \dot{q}_{\max}$, to keep the system out of states that are physically infeasible for the mechanical structure and drive system;

5. Control constraint: an upper bound is imposed on the magnitude of the joint acceleration, i.e., $|\ddot{q}| \le \ddot{q}_{\max}$ (element-wise), to match the actual output capability of the actuators and to prevent mechanical vibration, impact, or hardware damage caused by abrupt changes in the control signal.
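As a sketch of how these two constraint types differ in practice, the code below assumes a kinematic double-integrator joint model ($q_{k+1} = q_k + \dot q_k \Delta t$, $\dot q_{k+1} = \dot q_k + \ddot q_k \Delta t$), which is an assumption rather than the paper's stated model. The control constraint can be enforced directly by clipping the commanded acceleration, whereas the state constraints can only be monitored, so their non-negative violations are returned. The velocity and acceleration bounds use the values from the training parameter table (1 rad/s and 2 rad/s²) as symmetric bounds; the joint-angle limits and the function name `step` are hypothetical.

```python
import numpy as np

# Symmetric bounds. QD_MAX and QDD_MAX follow the parameter table (1 rad/s, 2 rad/s^2);
# the joint-angle limits are hypothetical placeholders.
Q_MIN, Q_MAX = -np.pi, np.pi
QD_MAX = 1.0
QDD_MAX = 2.0


def step(q, qd, qdd_cmd, dt):
    """One explicit-Euler step of an assumed double-integrator joint model.

    The control constraint |qdd| <= QDD_MAX is enforced by clipping the command;
    the state constraints are only checked, and their per-joint violations returned.
    """
    qdd = np.clip(qdd_cmd, -QDD_MAX, QDD_MAX)
    q_next = q + qd * dt
    qd_next = qd + qdd * dt
    violations = {
        "q": np.maximum(0.0, np.maximum(q_next - Q_MAX, Q_MIN - q_next)),
        "qd": np.maximum(0.0, np.abs(qd_next) - QD_MAX),
    }
    return q_next, qd_next, qdd, violations


# Usage: joint 1 is already near its velocity bound and receives an excessive acceleration command.
q1, qd1, qdd1, viol = step(np.zeros(2), np.array([0.95, 0.0]), np.array([5.0, -1.0]), dt=0.05)
```

Here the acceleration command of joint 1 is clipped to the bound, yet its velocity still exceeds 1 rad/s after the step. Non-negative violation magnitudes of this kind are the signal a constraint-discounted learner can consume.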

2.2. Model Predictive Path Integral

2.3. Soft Actor-Critic

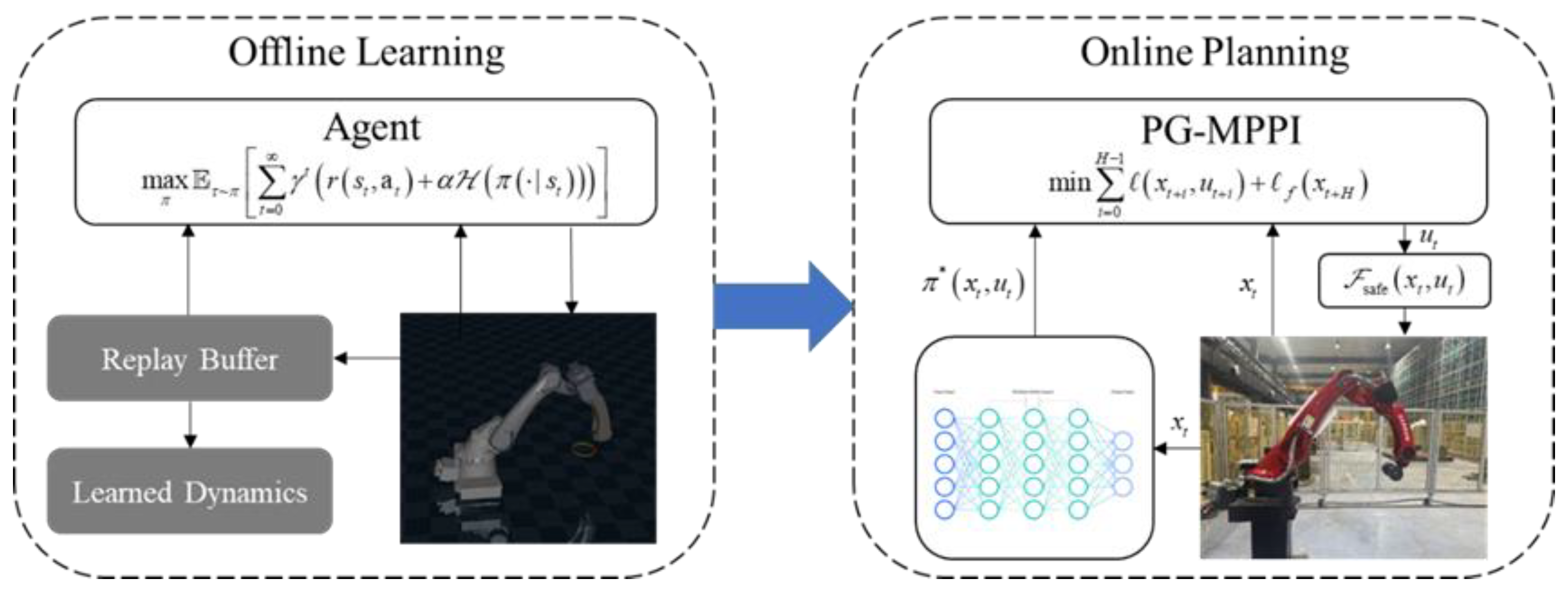

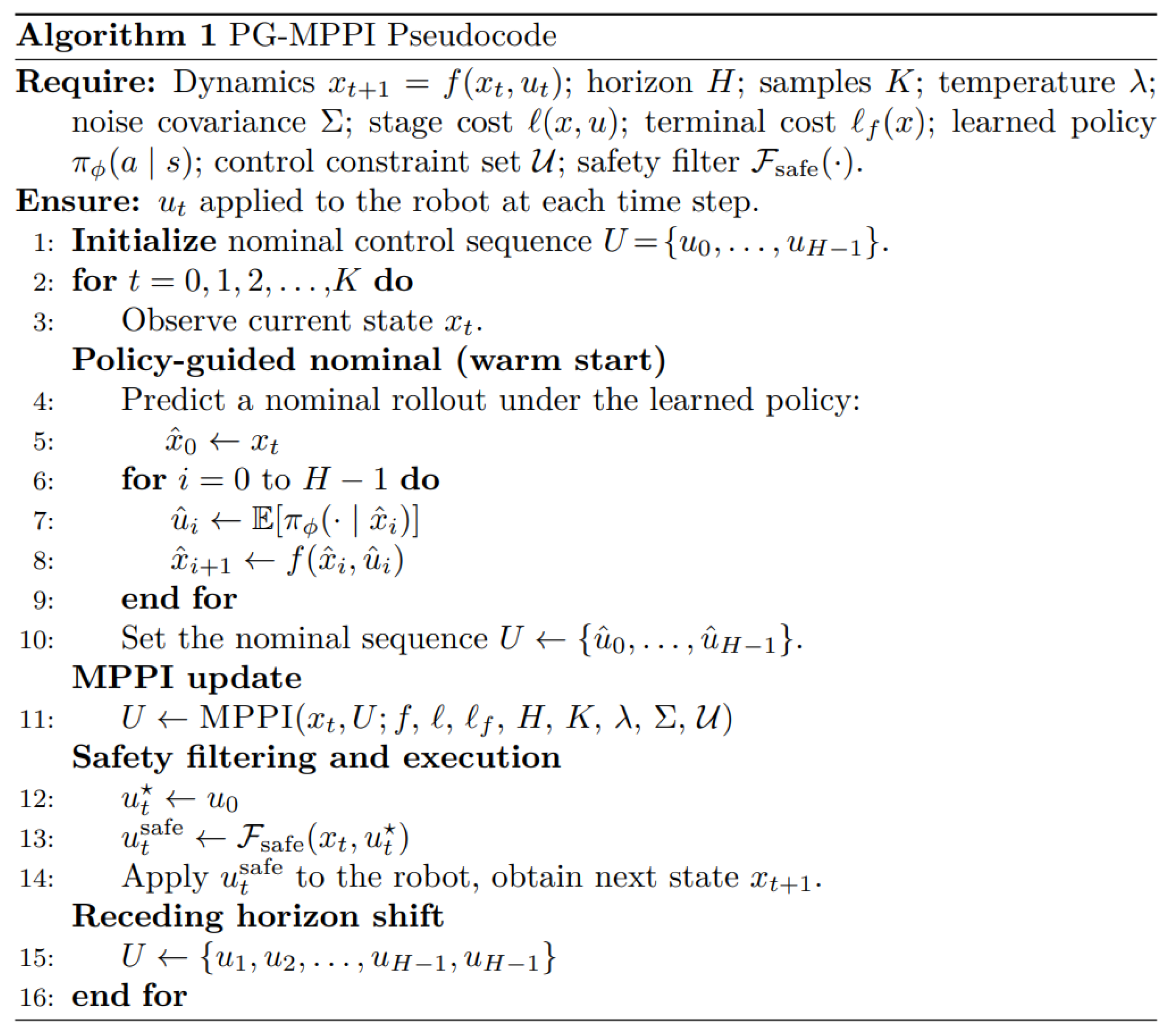

3. Policy-Guided MPPI

3.1. Algorithm Framework

3.2. Offline Policy Learning

3.2.1. Reward Design

3.2.2. Constraints Based on Discount Factor

3.2.3. Constraint-Discounted SAC Learning Strategy

3.3. Online Trajectory Planning

3.3.1. CBF-Based Safety Filter

3.3.2. Algorithm Implementation

4. Experiments

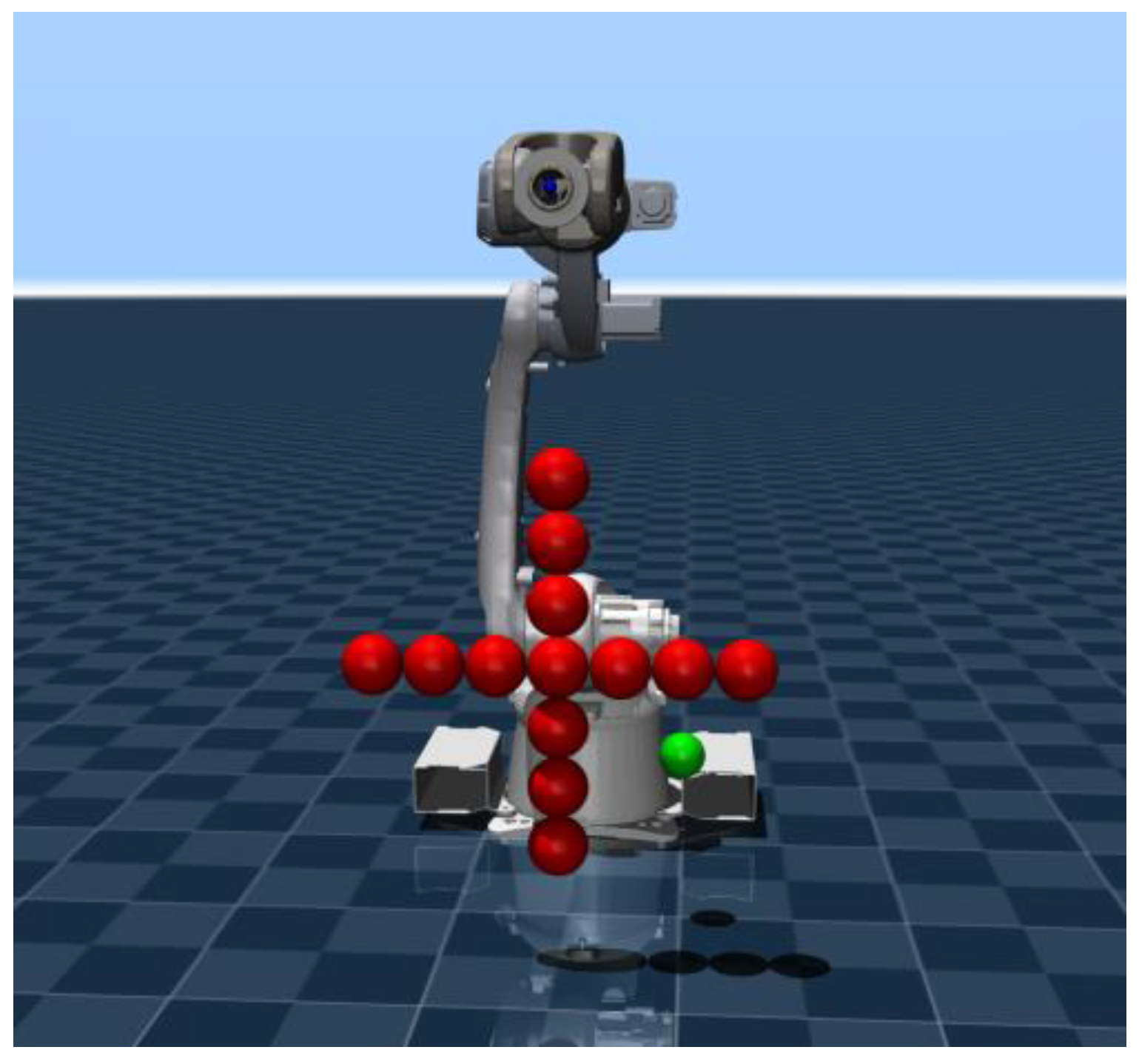

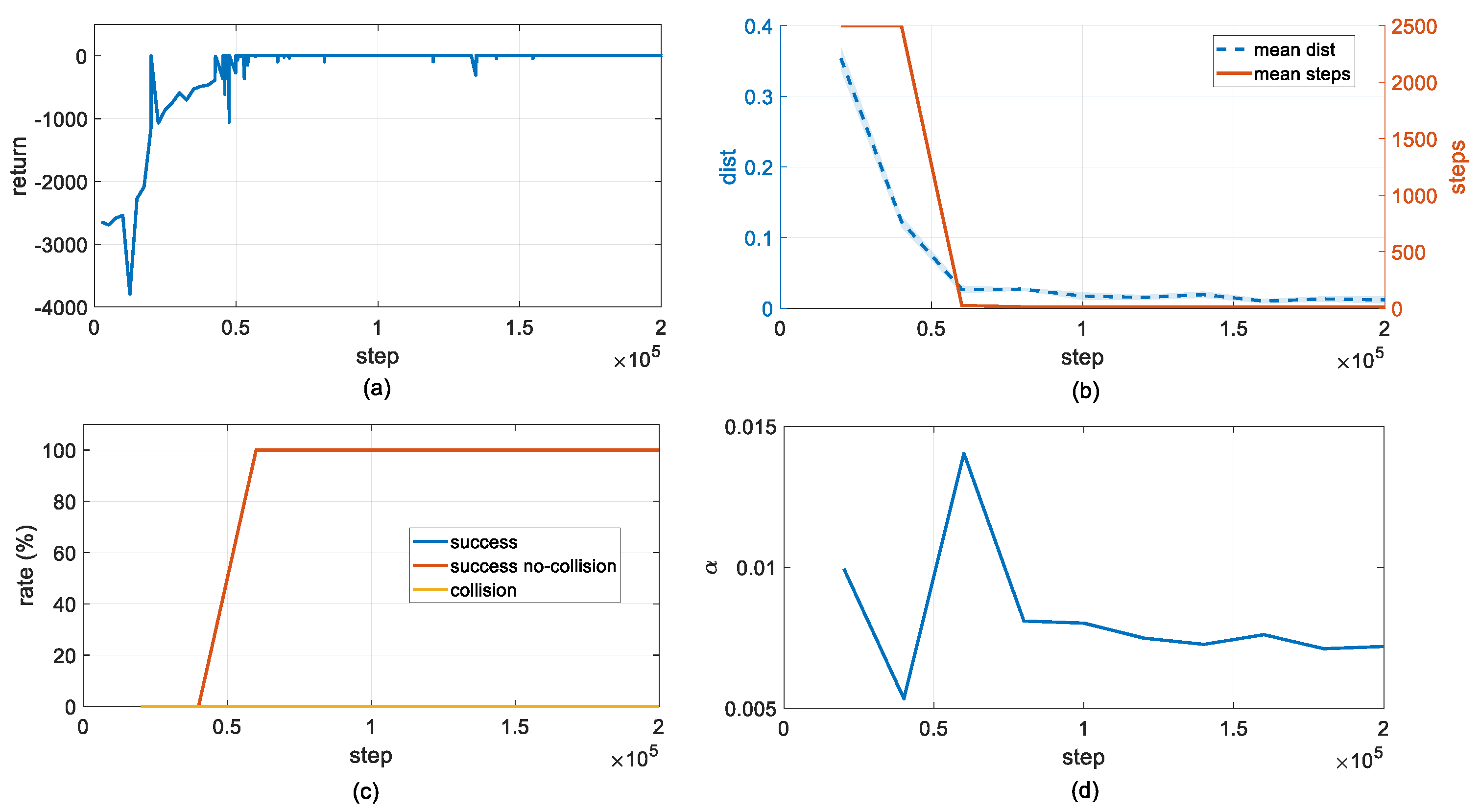

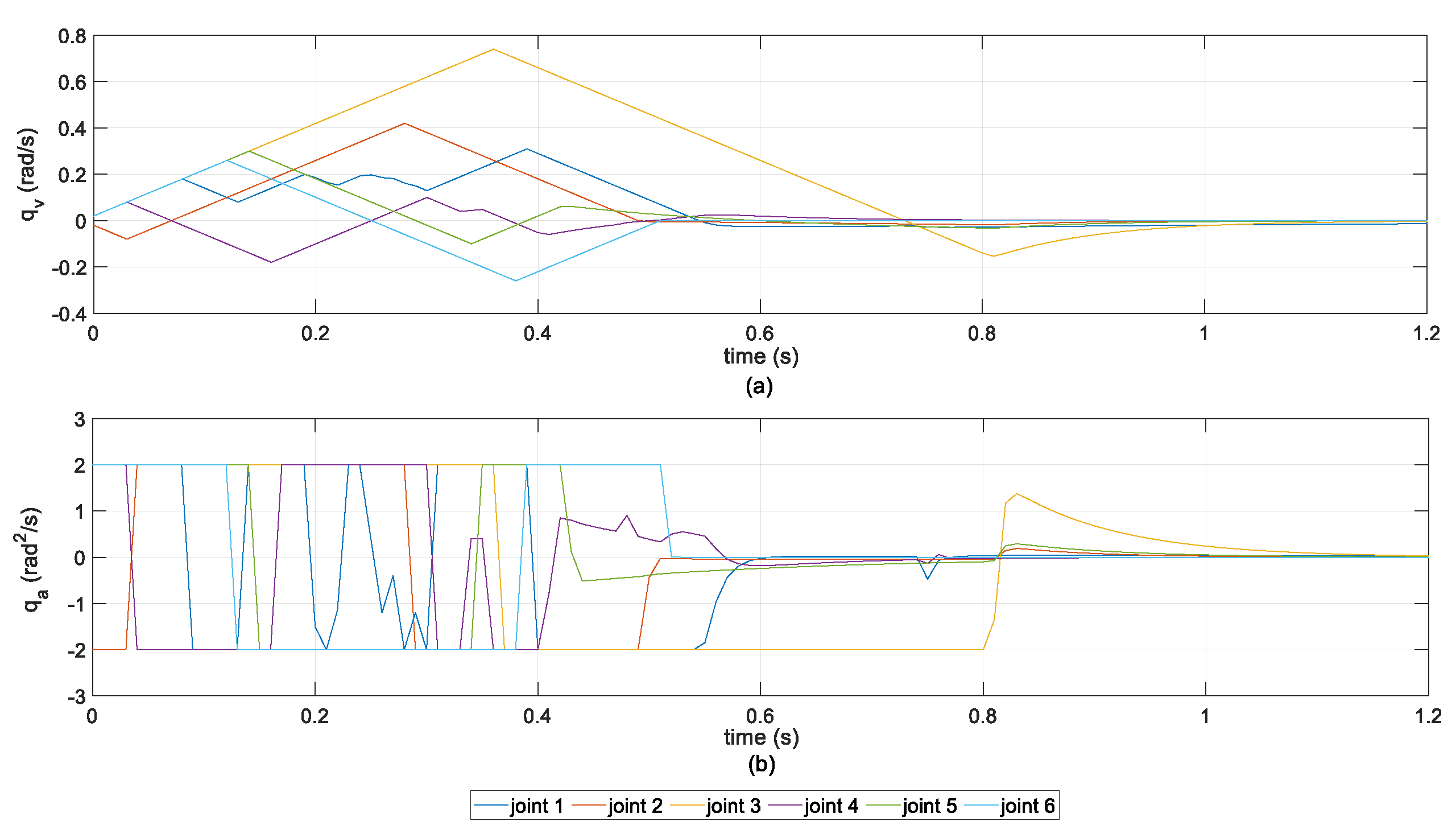

4.1. Offline Policy Learning

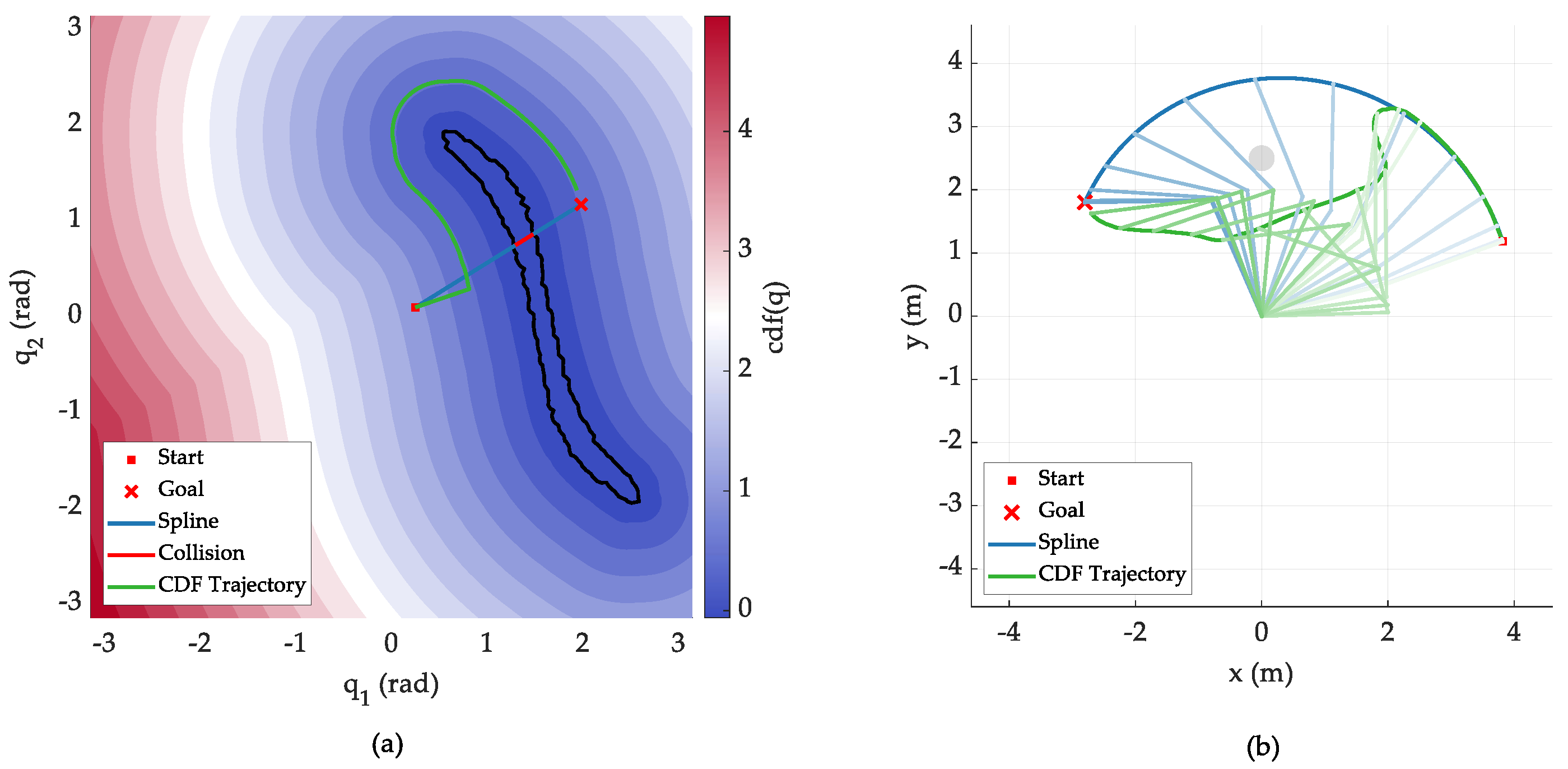

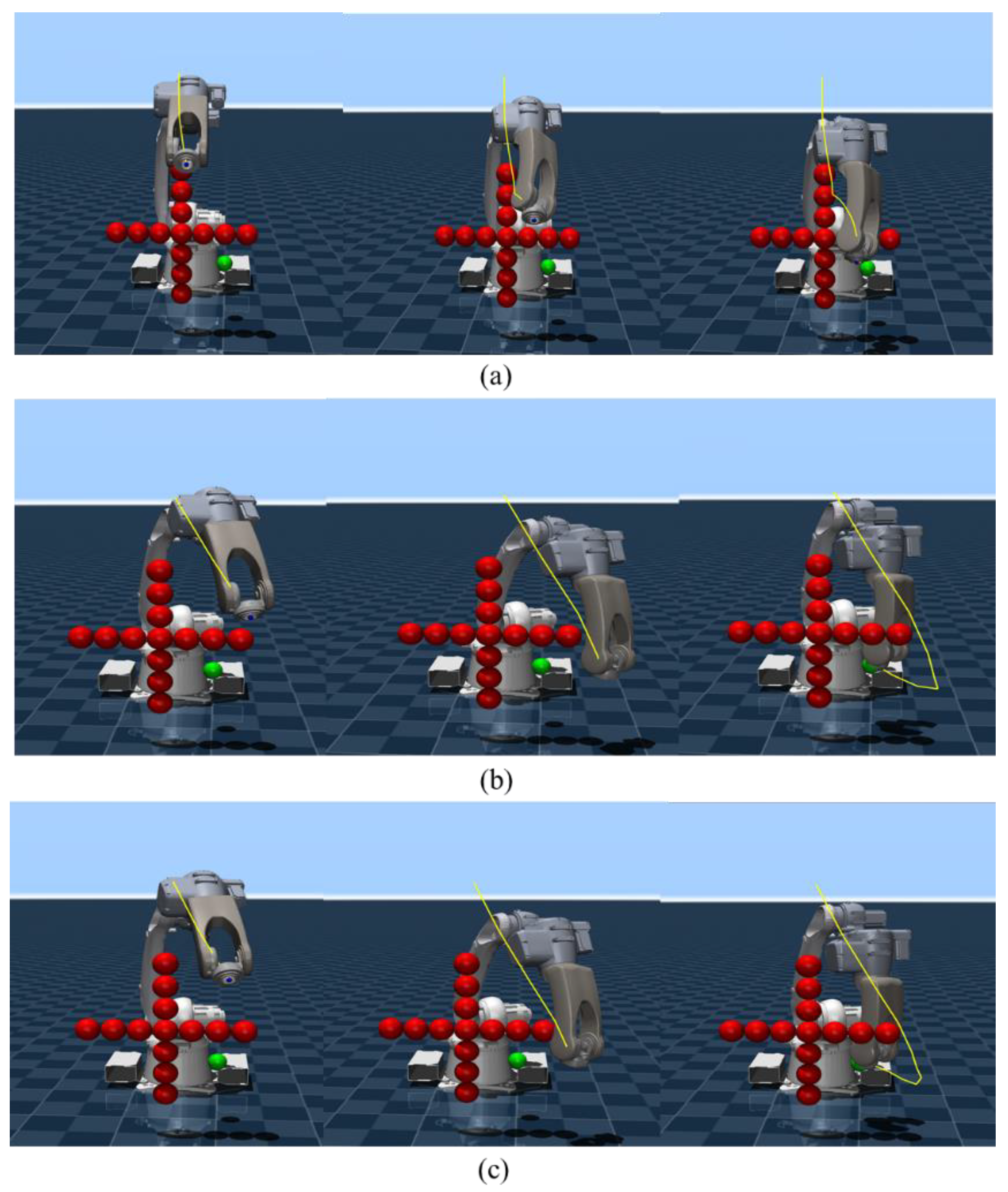

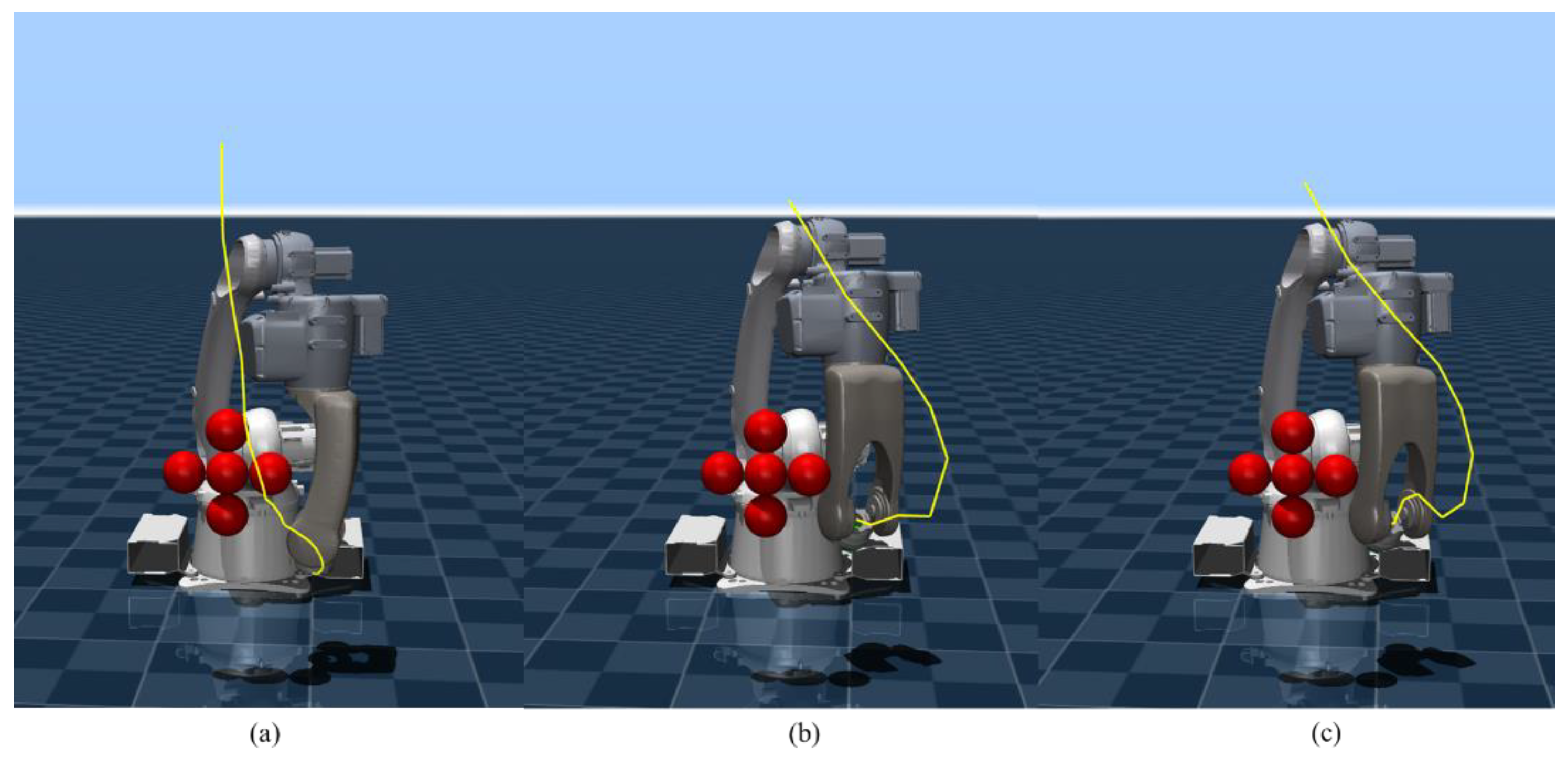

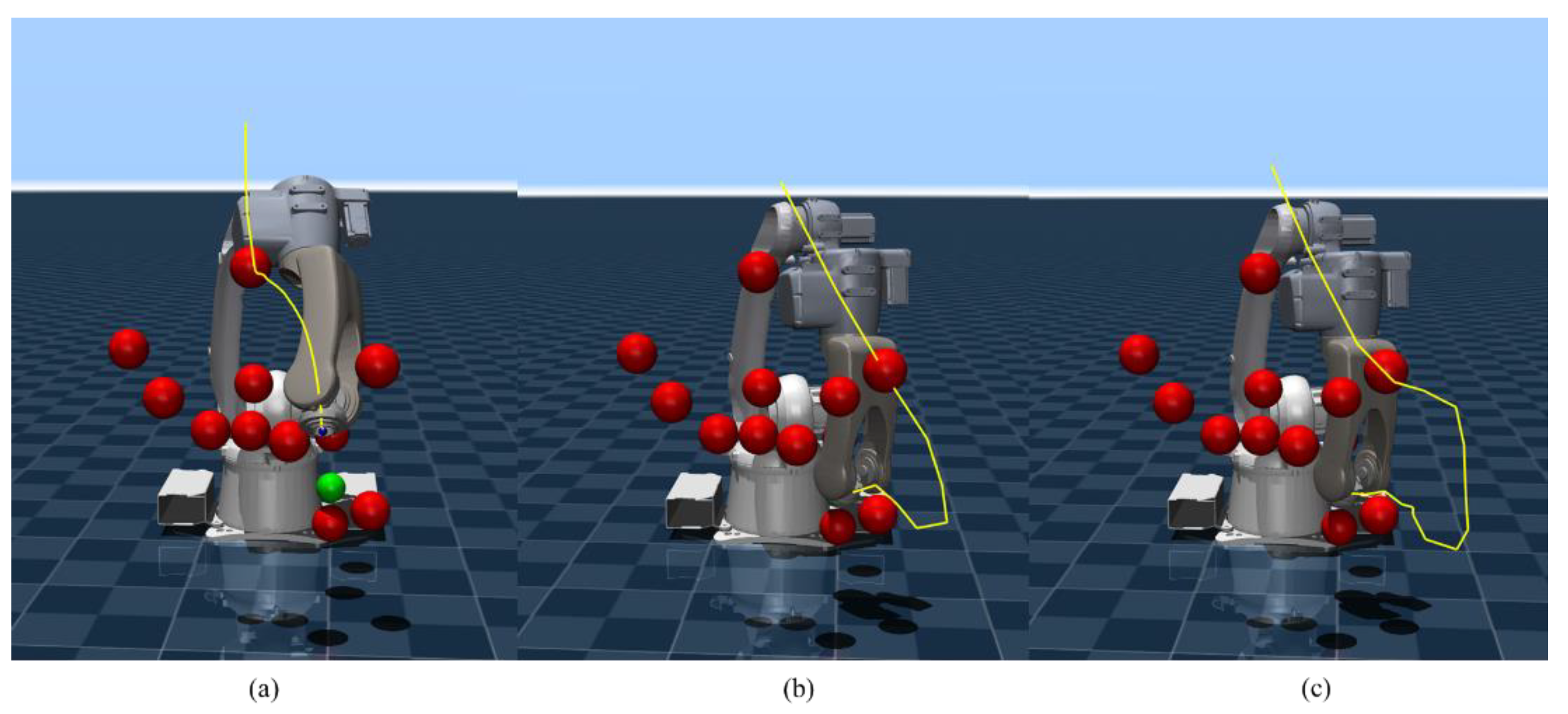

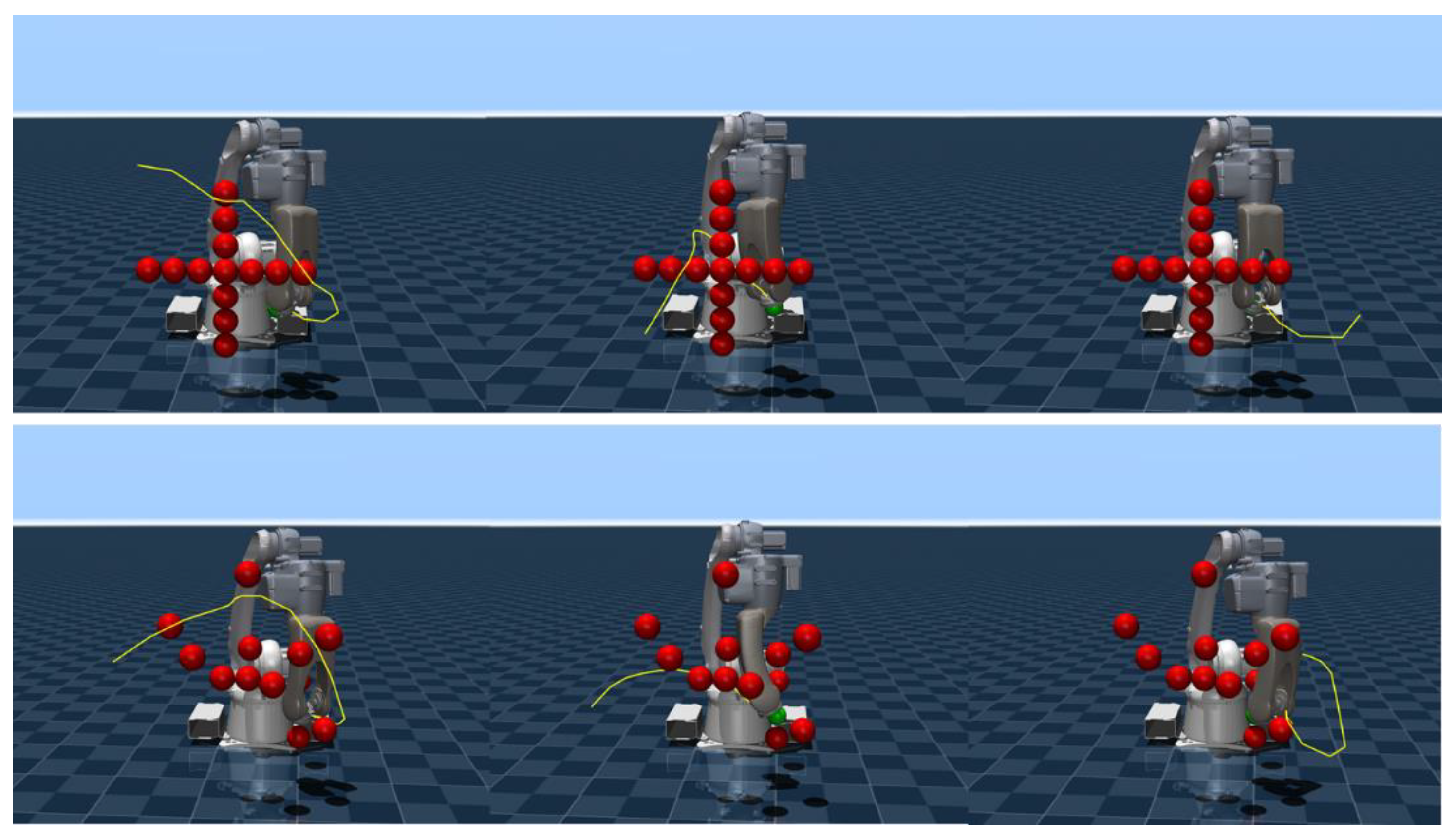

4.2. Obstacle Avoidance Trajectory

5. Conclusions

6. Patents

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Liu, J.; Yap, H.J.; Khairuddin, A.S.M. Review on motion planning of robotic manipulator in dynamic environments. J. Sens. 2024, 1, 5969512.

- Koptev, M.; Figueroa, N.; Billard, A. Reactive collision-free motion generation in joint space via dynamical systems and sampling-based MPC. Int. J. Robot. Res. 2024, 43, 2049–2069.

- Luo, S.; Zhang, M.; Zhuang, Y.; et al. A survey of path planning of industrial robots based on rapidly exploring random trees. Front. Neurorobot. 2023, 17, 1268447.

- Schulman, J.; Ho, J.; Lee, A.X.; et al. Finding locally optimal, collision-free trajectories with sequential convex optimization. In Proceedings of Robotics: Science and Systems 2013, Berlin, Germany, 24 June 2013.

- Mayne, D.Q. Model predictive control: Recent developments and future promise. Automatica 2014, 50, 2967–2986.

- Williams, G.; Drews, P.; Goldfain, B.; et al. Information-theoretic model predictive control: Theory and applications to autonomous driving. IEEE Trans. Robot. 2018, 34, 1603–1622.

- Belvedere, T.; Ziegltrum, M.; Turrisi, G.; et al. Feedback-MPPI: fast sampling-based MPC via rollout differentiation–adios low-level controllers. IEEE Robot. Autom. Lett. 2025, 11, 1–8.

- Qu, Y.; Chu, H.; Gao, S.; et al. RL-driven MPPI: Accelerating online control laws calculation with offline policy. IEEE Trans. Intell. Veh. 2023, 9, 3605–3616.

- Ezeji, O.; Ziegltrum, M.; Turrisi, G.; et al. BC-MPPI: A Probabilistic Constraint Layer for Safe Model-Predictive Path-Integral Control. In Proceedings of Agents and Robots for Reliable Engineered Autonomy 2025, Bologna, Italy, 25 October 2025.

- Tamizi, M.G.; Yaghoubi, M.; Najjaran, H. A review of recent trend in motion planning of industrial robots. Int. J. Intell. Robot. Appl. 2023, 7, 253–274.

- Stan, L.; Nicolescu, A.F.; Pupăză, C. Reinforcement learning for assembly robots: A review. Proc. Manuf. Syst. 2020, 15, 135–146.

- Romero, A.; Aljalbout, E.; Song, Y.; et al. Actor–Critic Model Predictive Control: Differentiable Optimization Meets Reinforcement Learning for Agile Flight. IEEE Trans. Robot. 2025, 42, 673–692.

- Baltussen, T.; Orrico, C.A.; Katriniok, A.; et al. Value Function Approximation for Nonlinear MPC: Learning a Terminal Cost Function with a Descent Property. In Proceedings of the 2025 IEEE 64th Conference on Decision and Control, Rio de Janeiro, Brazil, 10 December 2025.

- Hansen, N.; Wang, X.; Su, H. Temporal difference learning for model predictive control. arXiv 2022, 2203.04955.

- Liu, P.; Zhang, Y.; Wang, H.; et al. Real-time collision detection between general SDFs. Comput. Aided Geom. Des. 2024, 111, 102305.

- Ames, A.D.; Coogan, S.; Egerstedt, M.; et al. Control barrier functions: Theory and applications. In Proceedings of the 2019 18th European Control Conference, Naples, Italy, 15 June 2019.

- Almubarak, H.; Sadegh, N.; Theodorou, E.A. Safety embedded control of nonlinear systems via barrier states. IEEE Control Syst. Lett. 2021, 6, 1328–1333.

- Li, Y.; Miyazaki, T.; Kawashima, K. One-Step Model Predictive Path Integral for Manipulator Motion Planning Using Configuration Space Distance Fields. arXiv 2025, 2509.00836.

- Li, Y.; Chi, X.; Razmjoo, A.; et al. Configuration space distance fields for manipulation planning. arXiv 2024, 2406.01137.

- Crestaz, P.N.; De Matteis, L.; Chane-Sane, E.; et al. TD-CD-MPPI: Temporal-Difference Constraint-Discounted Model Predictive Path Integral Control. IEEE Robot. Autom. Lett. 2025, 11, 498–505.

- Haarnoja, T.; Zhou, A.; Abbeel, P.; et al. Soft actor-critic: Off-policy maximum entropy deep reinforcement learning with a stochastic actor. In Proceedings of the 35th International Conference on Machine Learning, Stockholm, Sweden, 10 July 2018.

- Ames, A.D.; Xu, X.; Grizzle, J.W.; et al. Control barrier function based quadratic programs for safety critical systems. IEEE Trans. Autom. Control 2016, 62, 3861–3876.

- Todorov, E. Convex and analytically-invertible dynamics with contacts and constraints: Theory and implementation in MuJoCo. In Proceedings of the 2014 IEEE International Conference on Robotics and Automation, Hong Kong, China, 31 May 2014.

| Type | Parameters | Value | Function |
|---|---|---|---|
| Constraint discount | velocity bound | 1 rad/s | Joint velocity constraint |
| | acceleration bound | 2 rad/s² | Joint acceleration constraint |
| | | 1 | Termination probability calculation in Eq. (19) |
| | EMA decay | 0.99 | Exponential moving average decay rate in Eq. (20) |
| SAC network | hidden dim | 256 | Number of neurons per layer of the actor/critic MLPs |
| | learning rate | 3e-4 | Learning rate of the Adam optimizer |
| | discount factor | 0.99 | Baseline discount factor of SAC in Eq. (21) |
| | batch size | 256 | Batch size for each network update |
| | action repeat | 5 | Number of physics simulation steps per RL step |
| Environment reward | reach tolerance | 0.03 | Position error threshold for task success |
| | max ep steps | 2500 | Maximum number of steps per training episode |
| | success bonus | 10.0 | Extra reward granted on task success |
| | total steps | 200000 | Total number of environment interaction steps |
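The constraint-discount entries in the table above (a termination-probability parameter of 1, an EMA decay of 0.99 and a baseline SAC discount of 0.99) suggest a critic target whose discount is reduced when constraints are violated. Since Eqs. (19)–(21) are not reproduced here, the sketch below uses an assumed form: the value 1 is read as a maximum termination probability $p_{\max}$, the termination probability is $\delta = p_{\max} \max_i \operatorname{clip}(c_i / c_i^{\max}, 0, 1)$ with $c_i^{\max}$ an exponential moving average of the largest observed violation of constraint $i$, and the soft TD target becomes $y = r + \gamma (1 - \delta) V'(s')$. The class `ConstraintDiscount` and the function `td_target` are hypothetical and should not be read as the paper's exact formulation.

```python
import numpy as np


class ConstraintDiscount:
    """Hypothetical constraint-discounting helper (assumed form, not the paper's exact Eqs. (19)-(21))."""

    def __init__(self, n_constraints, p_max=1.0, ema_decay=0.99, eps=1e-8):
        self.p_max = p_max            # table value 1, read here as a maximum termination probability (assumption)
        self.ema_decay = ema_decay    # EMA decay rate, table value 0.99
        self.eps = eps
        # Running estimate of the largest violation observed for each constraint.
        self.c_max = np.full(n_constraints, eps)

    def update(self, violations):
        """EMA update of the per-constraint maximum from a (batch, n) array of non-negative violations."""
        batch_max = np.max(violations, axis=0)
        self.c_max = self.ema_decay * self.c_max + (1.0 - self.ema_decay) * batch_max

    def termination_prob(self, violations):
        """delta = p_max * max_i clip(c_i / c_max_i, 0, 1) for each sample in the batch."""
        ratio = np.clip(violations / np.maximum(self.c_max, self.eps), 0.0, 1.0)
        return self.p_max * np.max(ratio, axis=-1)


def td_target(reward, next_soft_value, delta, gamma=0.99):
    """Soft TD target whose baseline discount gamma is shrunk by the termination probability delta.

    next_soft_value plays the role of min_j Q'_j(s', a') - alpha * log pi(a' | s') in SAC.
    """
    return reward + gamma * (1.0 - delta) * next_soft_value


# Usage on a batch of two transitions: sample 0 is feasible, sample 1 violates both constraints.
cd = ConstraintDiscount(n_constraints=2)
batch_violations = np.array([[0.0, 0.0], [0.5, 0.1]])
cd.update(batch_violations)
delta = cd.termination_prob(batch_violations)
y = td_target(np.array([1.0, 1.0]), np.array([10.0, 10.0]), delta)
```

Under this assumed form, a feasible transition keeps the full discount, while a transition with a large relative violation has its bootstrapped future value suppressed, which pushes the learned policy away from constraint-violating states without adding a hand-tuned penalty term.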

| Parameters | Value | Function |
|---|---|---|
| policy update | 0.1 s | Update period of the global prior policy |
| horizon | 25 | Length of the receding prediction horizon |
| number of samples | 200 | Number of sampled trajectories in MPPI |
| temperature | 0.6 | Temperature parameter |
| noise std | 0.6 | Standard deviation of the Gaussian sampling noise |
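The MPPI settings in the table above (horizon 25, 200 samples, temperature 0.6, noise standard deviation 0.6) can be wired into a policy-warm-started update roughly as follows. This is a simplified sketch under stated assumptions, not the authors' implementation: it assumes a double-integrator joint model with a hypothetical step `DT`, replaces the CD-SAC policy and the CDF-based cost with hypothetical toy functions (`toy_policy`, `toy_cost`), and omits the control-perturbation term of the standard MPPI cost.

```python
import numpy as np

# MPPI settings from the parameter table; DT and the toy policy/cost are hypothetical.
HORIZON, N_SAMPLES, LAMBDA, SIGMA = 25, 200, 0.6, 0.6
QDD_MAX = 2.0   # joint acceleration bound (rad/s^2)
DT = 0.02       # hypothetical integration step (s)


def double_integrator(x, u):
    """Assumed joint model: x = [q, qd], u = qdd."""
    n = u.shape[0]
    q, qd = x[:n], x[n:]
    return np.concatenate([q + qd * DT, qd + u * DT])


def rollout_policy(policy, x0, dynamics, horizon=HORIZON):
    """Roll out the prior policy to build the nominal control sequence (warm start)."""
    x, us = x0, []
    for _ in range(horizon):
        u = policy(x)
        us.append(u)
        x = dynamics(x, u)
    return np.array(us)


def mppi_step(x0, u_nom, dynamics, cost, rng, lam=LAMBDA, sigma=SIGMA, n_samples=N_SAMPLES):
    """One simplified MPPI update around u_nom (control-perturbation cost term omitted)."""
    horizon, m = u_nom.shape
    noise = rng.normal(0.0, sigma, size=(n_samples, horizon, m))
    u_samples = np.clip(u_nom[None] + noise, -QDD_MAX, QDD_MAX)
    costs = np.zeros(n_samples)
    for k in range(n_samples):
        x = x0
        for t in range(horizon):
            x = dynamics(x, u_samples[k, t])
            costs[k] += cost(x, u_samples[k, t])
    weights = np.exp(-(costs - costs.min()) / lam)   # shift by the minimum for numerical stability
    weights /= weights.sum()
    # Convex combination of clipped samples, so the result also respects the bound.
    return np.einsum("k,khm->hm", weights, u_samples)


goal = np.array([1.0, -0.5])


def toy_policy(x):
    """Hypothetical stand-in for the CD-SAC actor: a saturated PD law toward the goal."""
    return np.clip(4.0 * (goal - x[:2]) - 3.0 * x[2:], -QDD_MAX, QDD_MAX)


def toy_cost(x, u):
    """Hypothetical running cost: squared goal distance plus a small effort term."""
    return np.sum((x[:2] - goal) ** 2) + 1e-3 * np.sum(u ** 2)


rng = np.random.default_rng(0)
x0 = np.zeros(4)
u_nom = rollout_policy(toy_policy, x0, double_integrator)
u_opt = mppi_step(x0, u_nom, double_integrator, toy_cost, rng)
command = u_opt[0]   # applied for one control step before re-planning
```

In a receding-horizon loop, only `command` is executed; the remaining sequence is shifted, and the nominal sequence is refreshed from the prior policy at its update period (0.1 s in the table) before the next MPPI iteration. Seeding the samples from a policy rollout rather than from zeros is what lets a small sample budget such as 200 concentrate near a good solution.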

| Method | SF-MPPI | SF-SAC | PG-MPPI |
|---|---|---|---|
| Normal Obstacle | 20 | 100 | 100 |
| Complex Obstacle | 20 | 60 | 100 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).