Submitted: 27 February 2026

Posted: 02 March 2026


Abstract

Keywords:

1. Introduction

- We study zero-shot cross-dataset open-vocabulary UAV detection and identify stride-2 downsampling as a central bottleneck for zero-shot transfer, one that disproportionately harms tiny-object representations under domain shift.

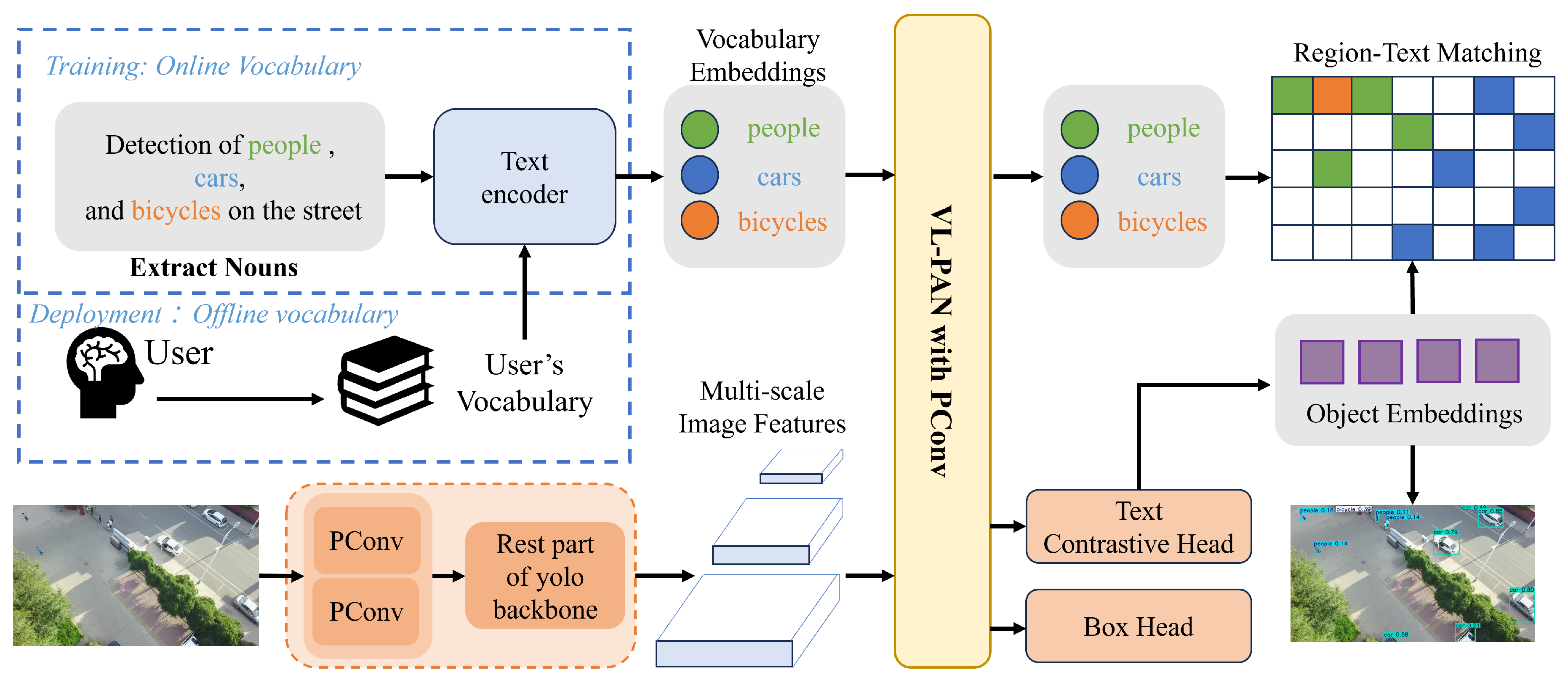

- We propose AeroPinWorld, a pinwheel-augmented open-vocabulary UAV detector for zero-shot cross-dataset transfer. It adopts pinwheel-shaped convolution (PConv) [13] as a phase-aware stride-2 reduction and inserts it selectively at transfer-critical pyramid transitions.

- We establish a strict zero-shot cross-dataset evaluation protocol: the model is trained solely on COCO2017 and evaluated directly on VisDrone2019-DET and UAVDT without any target-domain training. Under this protocol, AeroPinWorld improves consistently over the YOLO-World v2 baseline.
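A toy 1-D example shows the phase sensitivity behind these contributions. It is only an illustration and is not the paper's analysis. Plain stride-2 subsampling keeps only the even-indexed samples. As a result, a one-pixel "tiny object" survives or vanishes depending on a one-pixel shift. Taking the maximum over both sampling phases keeps the object either way. This is simply 2-tap max pooling, used here as a crude stand-in for a phase-aware reduction; it is not the proposed PConv block. All function names are hypothetical.

```python
def stride2_decimate(signal):
    """Plain stride-2 subsampling: keep every second sample (phase 0 only)."""
    return signal[0::2]


def phase_max_reduce(signal):
    """Toy stand-in for a phase-aware reduction (NOT the PConv block):
    take the max over the two stride-2 sampling phases."""
    even, odd = signal[0::2], signal[1::2]
    # Pad the odd phase when the length is odd so both phases align.
    if len(odd) < len(even):
        odd = odd + [0.0]
    return [max(a, b) for a, b in zip(even, odd)]


def tiny_object(length, position):
    """A one-pixel 'object' (value 1.0) on an otherwise empty row."""
    row = [0.0] * length
    row[position] = 1.0
    return row


for pos in (4, 5):  # the same object, shifted by one pixel
    row = tiny_object(8, pos)
    print(pos, max(stride2_decimate(row)), max(phase_max_reduce(row)))
# 4 1.0 1.0
# 5 0.0 1.0
```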

2. Related Works

2.1. Open-Vocabulary Detection and Zero-Shot Transfer

2.2. UAV Small-Object Detection Under Domain Shift

2.3. Downsampling, Aliasing, and Phase-Aware Reduction

3. Method

3.1. Overview: Revisiting Stride-2 Downsampling for Transferable OV-UAV Detection

3.2. Revisiting Stride-2 Reduction as a Transfer-Critical Operator

3.3. PConv-Based Phase-Aware Stride-2 Block

Offset probing with asymmetric padding.
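Offset probing can be checked with simple size arithmetic. This sketch assumes the four-branch layout of the original PConv [13]:

- each branch zero-pads the input asymmetrically;
- each branch then applies a 1×k or k×1 convolution with stride 2 and no further padding;
- a 2×2 stride-1 convolution fuses the concatenated branches.

The padding tuples, written as (left, right, top, bottom), and the helper names are assumptions for illustration. They are not settings reported in this section. The check shows that the four differently offset branches land on the same grid, and the fusion step then reduces that grid to exactly H/2 × W/2 for even inputs.

```python
def pinwheel_paddings(k):
    """Assumed paddings (left, right, top, bottom), modelled on PConv [13].
    Branches 0-1 use a 1 x k kernel, branches 2-3 a k x 1 kernel."""
    return [(k, 0, 1, 0), (0, k, 0, 1), (0, 1, k, 0), (1, 0, 0, k)]


def conv_out(size, kernel, stride):
    """Output length of an unpadded convolution along one axis."""
    return (size - kernel) // stride + 1


def branch_shape(h, w, k, branch, stride=2):
    """Spatial size of one pinwheel branch after padding and strided conv."""
    left, right, top, bottom = pinwheel_paddings(k)[branch]
    kh, kw = (1, k) if branch < 2 else (k, 1)
    return (conv_out(h + top + bottom, kh, stride),
            conv_out(w + left + right, kw, stride))


def pconv_shape(h, w, k=3):
    """Spatial size after the four branches plus the 2 x 2 fusion conv."""
    shapes = {branch_shape(h, w, k, b) for b in range(4)}
    assert len(shapes) == 1, "branches must align before concatenation"
    bh, bw = shapes.pop()
    # 2 x 2 fusion convolution, stride 1, no padding.
    return conv_out(bh, 2, 1), conv_out(bw, 2, 1)


print(pconv_shape(640, 640))  # (320, 320)
```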

Channel fusion into a coarse-grid feature.
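The following is a minimal NumPy sketch of one forward pass under the same assumed four-branch layout:

- each padded branch is convolved with stride 2 and passed through SiLU;
- the four maps are concatenated along channels;
- a 2×2 convolution fuses them onto the coarse grid.

Batch normalization is omitted and the weights are random placeholders. The two horizontal branches share one 1×k kernel and the two vertical branches share one k×1 kernel; this weight sharing is an assumption of the sketch. Function names are hypothetical, and this is not the authors' implementation.

```python
import numpy as np


def conv2d(x, w, stride=1):
    """Naive unpadded convolution. x: (C_in, H, W), w: (C_out, C_in, kh, kw)."""
    c_out, _, kh, kw = w.shape
    h_out = (x.shape[1] - kh) // stride + 1
    w_out = (x.shape[2] - kw) // stride + 1
    out = np.zeros((c_out, h_out, w_out))
    for i in range(h_out):
        for j in range(w_out):
            patch = x[:, i * stride:i * stride + kh, j * stride:j * stride + kw]
            out[:, i, j] = np.tensordot(w, patch, axes=([1, 2, 3], [0, 1, 2]))
    return out


def silu(x):
    return x / (1.0 + np.exp(-x))


def pconv_stride2(x, w_horiz, w_vert, w_fuse, k=3):
    """Hypothetical PConv-style stride-2 block (BN omitted)."""
    # Assumed paddings (left, right, top, bottom) for the four branches.
    paddings = [(k, 0, 1, 0), (0, k, 0, 1), (0, 1, k, 0), (1, 0, 0, k)]
    branches = []
    for idx, (left, right, top, bottom) in enumerate(paddings):
        xp = np.pad(x, ((0, 0), (top, bottom), (left, right)))  # zero padding
        kernel = w_horiz if idx < 2 else w_vert  # 1 x k for 0-1, k x 1 for 2-3
        branches.append(silu(conv2d(xp, kernel, stride=2)))
    fused_in = np.concatenate(branches, axis=0)  # (C_out, H/2 + 1, W/2 + 1)
    return silu(conv2d(fused_in, w_fuse, stride=1))  # (C_out, H/2, W/2)


rng = np.random.default_rng(0)
c_in, c_out, k = 4, 8, 3
x = rng.standard_normal((c_in, 16, 16))
w_horiz = rng.standard_normal((c_out // 4, c_in, 1, k))
w_vert = rng.standard_normal((c_out // 4, c_in, k, 1))
w_fuse = rng.standard_normal((c_out, c_out, 2, 2))
y = pconv_stride2(x, w_horiz, w_vert, w_fuse, k)
print(y.shape)  # (8, 8, 8)
```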

Why this helps transfer.

3.4. Selective Integration into YOLO-World v2

Replacement locations.

- Early backbone reductions (detail formation). We apply the block to the first two backbone reductions, which produce the P1/2 and P2/4 features. Because these layers operate on the highest-resolution internal maps, they largely determine whether weak high-frequency cues from tiny objects survive and enter the feature pyramid.

- Bottom-up head reductions (pyramid construction). We further apply the block to the two bottom-up stride-2 reductions in the head, namely the P3→P4 and P4→P5 transitions. This improves the stability of coarse-level representations under domain shift and mitigates error propagation through multi-scale fusion.
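The replacement plan can be written as a small configuration table. The sketch assumes a YOLOv8-style layout, on which YOLO-World v2 builds, with five stride-2 reductions in the backbone and two bottom-up reductions in the head. The layer labels are hypothetical and are not identifiers from the YOLO-World codebase. The function also reports the output grid size of each reduction for a given input size.

```python
# Hypothetical labels for the stride-2 transitions (assumed YOLOv8-style layout);
# the third field is the output stride of each reduction.
REDUCTIONS = [
    ("backbone", "P1/2", 2),
    ("backbone", "P2/4", 4),
    ("backbone", "P3/8", 8),
    ("backbone", "P4/16", 16),
    ("backbone", "P5/32", 32),
    ("head", "P3->P4", 16),
    ("head", "P4->P5", 32),
]

# Transitions replaced by the phase-aware block, per the list above.
REPLACED = {"P1/2", "P2/4", "P3->P4", "P4->P5"}


def plan(input_size=640):
    """Return (part, transition, output grid size, operator) for each reduction."""
    rows = []
    for part, name, stride in REDUCTIONS:
        op = "phase-aware" if name in REPLACED else "standard conv"
        rows.append((part, name, input_size // stride, op))
    return rows


for row in plan(640):
    print(row)
```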

What we keep unchanged (and why).

3.5. Open-Vocabulary Detection Head

3.6. Optimization Objective

Assignment and normalization.

Classification loss.

IoU loss (CIoU).
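For reference, here is a plain-Python sketch of the CIoU loss as defined by Zheng et al. [28], for boxes in (x1, y1, x2, y2) format. The IoU term is penalized by two quantities:

- the squared center distance, normalized by the squared diagonal of the enclosing box;
- an aspect-ratio consistency term αv.

The epsilon and the function name are our choices. The detector's own implementation may differ in numerical details.

```python
import math


def ciou_loss(pred, target, eps=1e-9):
    """CIoU loss (1 - CIoU) for two boxes given as (x1, y1, x2, y2)."""
    px1, py1, px2, py2 = pred
    tx1, ty1, tx2, ty2 = target
    pw, ph = px2 - px1, py2 - py1
    tw, th = tx2 - tx1, ty2 - ty1

    # Intersection-over-union.
    iw = max(0.0, min(px2, tx2) - max(px1, tx1))
    ih = max(0.0, min(py2, ty2) - max(py1, ty1))
    inter = iw * ih
    union = pw * ph + tw * th - inter
    iou = inter / (union + eps)

    # Squared center distance over squared diagonal of the enclosing box.
    rho2 = ((px1 + px2 - tx1 - tx2) ** 2 + (py1 + py2 - ty1 - ty2) ** 2) / 4.0
    cw = max(px2, tx2) - min(px1, tx1)
    ch = max(py2, ty2) - min(py1, ty1)
    c2 = cw ** 2 + ch ** 2 + eps

    # Aspect-ratio consistency term v and its trade-off weight alpha.
    v = (4.0 / math.pi ** 2) * (math.atan(tw / (th + eps)) - math.atan(pw / (ph + eps))) ** 2
    alpha = v / ((1.0 - iou) + v + eps)
    return 1.0 - iou + rho2 / c2 + alpha * v
```

Identical boxes give a loss of (numerically) zero. Two equal-sized, non-overlapping boxes give 1 plus the normalized center-distance penalty.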

Distribution Focal Loss.
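Distribution Focal Loss, introduced with Generalized Focal Loss [27], treats each box-side distance as a discrete distribution over integer bins. It pushes probability mass onto the two bins that bracket the continuous target, weighting each bin by its proximity to the target. Below is a plain-Python sketch for a single box side. The bin count (the logit length) and the clamping margin are assumptions.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def dfl_loss(logits, target):
    """DFL for one box side: logits over bins 0..n-1, target is a float distance."""
    n = len(logits)
    # Keep the target strictly inside [0, n - 1] so both neighbouring bins exist
    # (the 1e-3 margin is an assumption of this sketch).
    target = min(max(target, 0.0), n - 1 - 1e-3)
    left = int(math.floor(target))
    right = left + 1
    w_left = right - target  # the closer the target is to `left`, the larger this weight
    w_right = target - left
    probs = softmax(logits)
    return -(w_left * math.log(probs[left]) + w_right * math.log(probs[right]))
```

When the predicted distribution puts equal mass on bins 2 and 3 and the target is 2.5, the loss reaches its minimum, the entropy ln 2.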

Total loss.
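In YOLO-style detectors, the total objective is commonly written as a weighted sum of the three terms above. The weights $\lambda$ below are placeholders, since their values are not stated in this section. The box terms are typically averaged over positive (assigned) samples.

$$
\mathcal{L}_{\text{total}} = \lambda_{\text{cls}}\,\mathcal{L}_{\text{cls}} + \lambda_{\text{iou}}\,\mathcal{L}_{\text{CIoU}} + \lambda_{\text{dfl}}\,\mathcal{L}_{\text{DFL}}
$$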

4. Experiments

4.1. Datasets

Source dataset (training).

Target datasets (zero-shot evaluation).

4.2. Zero-Shot Transfer Protocol and Prompt Vocabulary

Prompt vocabulary.
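Under the zero-shot protocol, the target label spaces enter the detector only as text prompts. The sketch below shows one plausible vocabulary, which uses the official category names of the two target datasets verbatim. This is an assumption: the paper's exact prompt strings (synonyms, templates) are not given in this section. The helper names are hypothetical.

```python
# Official category names of the target datasets, used verbatim as prompts
# (an assumption; prompt wording can change zero-shot results).
VISDRONE_CLASSES = [
    "pedestrian", "people", "bicycle", "car", "van",
    "truck", "tricycle", "awning-tricycle", "bus", "motor",
]
UAVDT_CLASSES = ["car", "truck", "bus"]


def build_vocabulary(class_names):
    """Map each prompt index (as scored by the detector) to a dataset label."""
    return {idx: name for idx, name in enumerate(class_names)}


def relabel(detections, vocabulary):
    """Replace prompt indices in (box, score, prompt_idx) tuples by class names."""
    return [(box, score, vocabulary[idx]) for box, score, idx in detections]


vocab = build_vocabulary(UAVDT_CLASSES)
print(relabel([((10, 10, 30, 24), 0.81, 0)], vocab))
```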

4.3. Implementation Details

Training setup.

Inference setup and image sizes.

Environment and hardware.

4.4. Baselines and Compared Methods

- YOLO-World v2 (baseline): the original model with standard stride-2 downsampling.

- AeroPinWorld (ours): selectively replaces four transfer-critical stride-2 transitions with the proposed phase-aware stride-2 block (Section 3.4), while keeping the rest of the architecture unchanged.

4.5. Evaluation Metrics

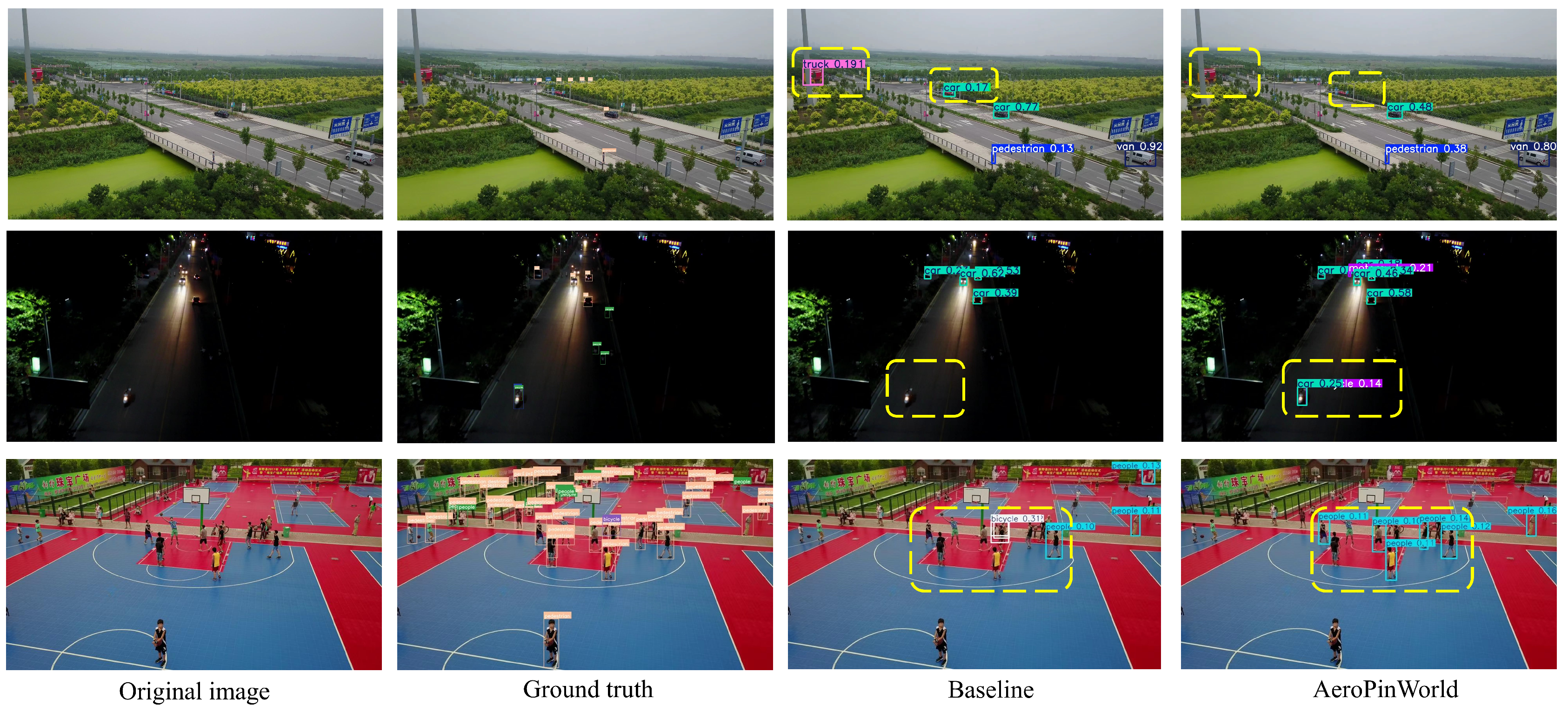
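The column names in the result tables (mAP, AP50, AP75, APS) suggest the standard COCO-style protocol. Assuming that protocol:

- mAP averages AP over ten IoU thresholds from 0.50 to 0.95 in steps of 0.05;
- AP50 and AP75 each use a single threshold;
- APS restricts evaluation to small objects, commonly stated as an area below 32² pixels.

The sketch below encodes only these definitions; it does not re-implement the full evaluator.

```python
# COCO-style IoU thresholds used for mAP (AP@[0.50:0.95]).
IOU_THRESHOLDS = [round(0.50 + 0.05 * i, 2) for i in range(10)]


def coco_area_bucket(area):
    """COCO size bucket for an object area in pixels (small < 32^2 <= medium < 96^2)."""
    if area < 32 ** 2:
        return "small"
    if area < 96 ** 2:
        return "medium"
    return "large"


def mean_ap(ap_per_threshold):
    """mAP as the mean of per-threshold AP values (one per IoU threshold)."""
    assert len(ap_per_threshold) == len(IOU_THRESHOLDS)
    return sum(ap_per_threshold) / len(ap_per_threshold)


print(IOU_THRESHOLDS[0], IOU_THRESHOLDS[-1])  # 0.5 0.95
print(coco_area_bucket(20 * 20))  # small
```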

4.6. Main Results

Zero-shot transfer on VisDrone2019-DET (val, 1280).

Comparison with existing baselines in Table 1.

Zero-shot transfer on UAVDT-DET (640).

4.7. Ablation Studies

4.8. Qualitative Results

4.9. Efficiency Analysis

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

1. Pham, C.; Vu, T.; Nguyen, K. LP-OVOD: Open-vocabulary object detection by linear probing. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, 2024; pp. 779–788.

2. Fang, R.; Pang, G.; Bai, X. Simple image-level classification improves open-vocabulary object detection. In Proceedings of the AAAI Conference on Artificial Intelligence, 2024; Vol. 38, pp. 1716–1725.

3. Zhu, C.; Chen, L. A survey on open-vocabulary detection and segmentation: Past, present, and future. IEEE Transactions on Pattern Analysis and Machine Intelligence 2024, 46, 8954–8975.

4. Radford, A.; Kim, J.W.; Hallacy, C.; Ramesh, A.; Goh, G.; Agarwal, S.; Sastry, G.; Askell, A.; Mishkin, P.; Clark, J.; et al. Learning transferable visual models from natural language supervision. In Proceedings of the International Conference on Machine Learning, PMLR, 2021; pp. 8748–8763.

5. Du, D.; Qi, Y.; Yu, H.; Yang, Y.; Duan, K.; Li, G.; Zhang, W.; Huang, Q.; Tian, Q. The unmanned aerial vehicle benchmark: Object detection and tracking. In Proceedings of the European Conference on Computer Vision (ECCV), 2018; pp. 370–386.

6. Zhu, P.; Wen, L.; Du, D.; Bian, X.; Fan, H.; Hu, Q.; Ling, H. Detection and tracking meet drones challenge. IEEE Transactions on Pattern Analysis and Machine Intelligence 2021, 44, 7380–7399.

7. Tang, G.; Ni, J.; Zhao, Y.; Gu, Y.; Cao, W. A survey of object detection for UAVs based on deep learning. Remote Sensing 2023, 16, 149.

8. Qin, J.; Yu, W.; Feng, X.; Meng, Z.; Tan, C. A UAV Aerial Image Target Detection Algorithm Based on YOLOv7 Improved Model. Electronics 2024, 13, 3277.

9. Wang, K.; Fu, X.; Huang, Y.; Cao, C.; Shi, G.; Zha, Z.J. Generalized UAV object detection via frequency domain disentanglement. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2023; pp. 1064–1073.

10. Cheng, T.; Song, L.; Ge, Y.; Liu, W.; Wang, X.; Shan, Y. YOLO-World: Real-time open-vocabulary object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024; pp. 16901–16911.

11. Zhang, R. Making convolutional networks shift-invariant again. In Proceedings of the International Conference on Machine Learning, PMLR, 2019; pp. 7324–7334.

12. Azulay, A.; Weiss, Y. Why do deep convolutional networks generalize so poorly to small image transformations? Journal of Machine Learning Research 2019, 20, 1–25.

13. Yang, J.; Liu, S.; Wu, J.; Su, X.; Hai, N.; Huang, X. Pinwheel-shaped convolution and scale-based dynamic loss for infrared small target detection. In Proceedings of the AAAI Conference on Artificial Intelligence, 2025; Vol. 39, pp. 9202–9210.

14. Ren, S.; He, K.; Girshick, R.; Sun, J. Faster R-CNN: Towards real-time object detection with region proposal networks. Advances in Neural Information Processing Systems 2015, 28.

15. Gu, X.; Lin, T.Y.; Kuo, W.; Cui, Y. Open-vocabulary object detection via vision and language knowledge distillation. arXiv 2021, arXiv:2104.13921.

16. Zhou, X.; Girdhar, R.; Joulin, A.; Krähenbühl, P.; Misra, I. Detecting twenty-thousand classes using image-level supervision. In Proceedings of the European Conference on Computer Vision, 2022; Springer; pp. 350–368.

17. Li, L.H.; Zhang, P.; Zhang, H.; Yang, J.; Li, C.; Zhong, Y.; Wang, L.; Yuan, L.; Zhang, L.; Hwang, J.N.; et al. Grounded language-image pre-training. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022; pp. 10965–10975.

18. Zhang, H.; Zhang, P.; Hu, X.; Chen, Y.C.; Li, L.; Dai, X.; Wang, L.; Yuan, L.; Hwang, J.N.; Gao, J. GLIPv2: Unifying localization and vision-language understanding. Advances in Neural Information Processing Systems 2022, 35, 36067–36080.

19. Minderer, M.; Gritsenko, A.; Stone, A.; Neumann, M.; Weissenborn, D.; Dosovitskiy, A.; Mahendran, A.; Arnab, A.; Dehghani, M.; Shen, Z.; et al. Simple open-vocabulary object detection. In Proceedings of the European Conference on Computer Vision, 2022; Springer; pp. 728–755.

20. Liu, S.; Zeng, Z.; Ren, T.; Li, F.; Zhang, H.; Yang, J.; Jiang, Q.; Li, C.; Yang, J.; Su, H.; et al. Grounding DINO: Marrying DINO with grounded pre-training for open-set object detection. In Proceedings of the European Conference on Computer Vision, 2024; Springer; pp. 38–55.

21. Du, D.; Zhu, P.; Wen, L.; Bian, X.; Lin, H.; Hu, Q.; Peng, T.; Zheng, J.; Wang, X.; Zhang, Y.; et al. VisDrone-DET2019: The vision meets drone object detection in image challenge results. In Proceedings of the IEEE/CVF International Conference on Computer Vision Workshops, 2019.

22. Kayhan, O.S.; Gemert, J.C.v. On translation invariance in CNNs: Convolutional layers can exploit absolute spatial location. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020; pp. 14274–14285.

23. Li, J. Research on RT-DETR Drone Target Detection Method Based on Bidirectional Feature Attention Fusion. In Proceedings of the 2025 IEEE 7th International Conference on Civil Aviation Safety and Information Technology (ICCASIT), 2025; IEEE; pp. 222–227.

24. Lin, T.Y.; Maire, M.; Belongie, S.; Hays, J.; Perona, P.; Ramanan, D.; Dollár, P.; Zitnick, C.L. Microsoft COCO: Common objects in context. In Proceedings of the European Conference on Computer Vision, 2014; Springer; pp. 740–755.

25. Zhai, X.; Huang, Z.; Li, T.; Liu, H.; Wang, S. YOLO-Drone: An optimized YOLOv8 network for tiny UAV object detection. Electronics 2023, 12, 3664.

26. Gong, J.; Yuan, Z.; Li, W.; Li, W.; Guo, Y.; Guo, B. A Lightweight Upsampling and Cross-Modal Feature Fusion-Based Algorithm for Small-Object Detection in UAV Imagery. Electronics 2026, 15, 298.

27. Li, X.; Wang, W.; Wu, L.; Chen, S.; Hu, X.; Li, J.; Tang, J.; Yang, J. Generalized focal loss: Learning qualified and distributed bounding boxes for dense object detection. Advances in Neural Information Processing Systems 2020, 33, 21002–21012.

28. Zheng, Z.; Wang, P.; Liu, W.; Li, J.; Ye, R.; Ren, D. Distance-IoU loss: Faster and better learning for bounding box regression. In Proceedings of the AAAI Conference on Artificial Intelligence, 2020; Vol. 34, pp. 12993–13000.

29. Weng, Z.; Yu, Z. Cross-Modal Enhancement and Benchmark for UAV-based Open-Vocabulary Object Detection. arXiv 2025.

30. Gao, J.; Jiang, X.; Wang, A.; Gao, Y.; Fang, Z.; Lew, M.S. PMG-SAM: Boosting Auto-Segmentation of SAM with Pre-Mask Guidance. Sensors 2026, 26, 365.

31. Kirillov, A.; Mintun, E.; Ravi, N.; Mao, H.; Rolland, C.; Gustafson, L.; Xiao, T.; Whitehead, S.; Berg, A.C.; Lo, W.Y.; et al. Segment anything. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023; pp. 4015–4026.

32. Ren, S.; He, K.; Girshick, R.; Sun, J. Faster R-CNN: Towards real-time object detection with region proposal networks. IEEE Transactions on Pattern Analysis and Machine Intelligence 2016, 39, 1137–1149.

33. Lin, T.Y.; Goyal, P.; Girshick, R.; He, K.; Dollár, P. Focal loss for dense object detection. In Proceedings of the IEEE International Conference on Computer Vision, 2017; pp. 2980–2988.

34. Tian, Z.; Shen, C.; Chen, H.; He, T. FCOS: Fully convolutional one-stage object detection. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2019; pp. 9627–9636.

35. Zhang, H.; Li, F.; Liu, S.; Zhang, L.; Su, H.; Zhu, J.; Ni, L.M.; Shum, H.Y. DINO: DETR with improved denoising anchor boxes for end-to-end object detection. arXiv 2022, arXiv:2203.03605.

36. Yu, Y.; Sun, X.; Cheng, Q. Expert teacher based on foundation image segmentation model for object detection in aerial images. Scientific Reports 2023, 13, 21964.

37. Sun, X.; Yu, Y.; Cheng, Q. Unified diffusion-based object detection in multi-modal and low-light remote sensing images. Electronics Letters 2024, 60, e70093.

| Method | mAP | AP50 | AP75 | APS | Params (M) | FLOPs (G) |
|---|---|---|---|---|---|---|
| CAGE [29] | 0.105 | 0.156 | 0.106 | 0.048 | 17.4 | 36.3 |
| PMG-SAM [30] | 0.109 | 0.157 | 0.110 | 0.051 | 136.0 | 296.2 |
| SAM [31] | 0.105 | 0.159 | 0.107 | 0.048 | 93.7 | 233.5 |
| Faster R-CNN [32] | 0.111 | 0.168 | 0.111 | 0.051 | 41.1 | 133.6 |
| RetinaNet [33] | 0.123 | 0.179 | 0.112 | 0.056 | 36.5 | 129.0 |
| FCOS [34] | 0.108 | 0.161 | 0.109 | 0.049 | 32.1 | 125.3 |
| DINO [35] | 0.129 | 0.183 | 0.111 | 0.051 | 47.6 | 146.0 |
| ET-FSM [36] | 0.131 | 0.192 | 0.114 | 0.052 | 41.1 | 133.6 |
| YOLO-World [10] | 0.126 | 0.179 | 0.109 | 0.056 | 46.8 | 180.4 |
| YOLO-World+UMM [37] | 0.128 | 0.182 | 0.113 | 0.057 | 79.3 | 276.0 |
| YOLO-World v2-S [10] | 0.112 | 0.159 | 0.098 | 0.054 | 16.4 | 34.2 |
| AeroPinWorld-S (ours) | 0.135 | 0.197 | 0.118 | 0.063 | 16.3 | 35.7 |

| Method | mAP | AP50 | AP75 | APS |
|---|---|---|---|---|
| CAGE [29] | 0.135 | 0.259 | 0.134 | 0.056 |
| PMG-SAM [30] | 0.139 | 0.263 | 0.141 | 0.058 |
| SAM [31] | 0.137 | 0.258 | 0.138 | 0.056 |
| Faster R-CNN [32] | 0.142 | 0.265 | 0.136 | 0.059 |
| RetinaNet [33] | 0.139 | 0.255 | 0.142 | 0.060 |
| FCOS [34] | 0.141 | 0.263 | 0.141 | 0.058 |
| DINO [35] | 0.143 | 0.261 | 0.134 | 0.061 |
| ET-FSM [36] | 0.138 | 0.254 | 0.138 | 0.058 |
| YOLO-World [10] | 0.145 | 0.263 | 0.141 | 0.063 |
| YOLO-World+UMM [37] | 0.144 | 0.265 | 0.143 | 0.059 |
| YOLO-World v2 [10] | 0.144 | 0.265 | 0.140 | 0.060 |
| AeroPinWorld (ours) | 0.146 | 0.270 | 0.148 | 0.068 |

| Setting | mAP | AP50 | AP75 | APS | Params (M) | FLOPs (G) |
|---|---|---|---|---|---|---|
| Baseline (YOLO-World v2) | 0.112 | 0.159 | 0.098 | 0.054 | 16.4027 | 34.2 |
| Only B (P1/2 + P2/4) | 0.124 | 0.171 | 0.109 | 0.057 | 16.4031 | 35.3 |
| Only H (P3→P4 + P4→P5) | 0.129 | 0.182 | 0.111 | 0.059 | 16.3745 | 35.6 |
| B+H (Full AeroPinWorld) | 0.135 | 0.197 | 0.118 | 0.063 | 16.3745 | 35.7 |
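The ablation rows can be read as gains over the baseline. The short computation below uses only the mAP and APS values from the ablation table. It expresses each variant's gain both in absolute points (fraction × 100) and relative to the baseline. For mAP, the two partial gains (1.2 and 1.7 points) sum to more than the full model's 2.3 points, so the two placements are not simply additive. The dictionary keys use "->" in place of arrows.

```python
# (mAP, APS) values copied from the ablation table.
ABLATION = {
    "Baseline (YOLO-World v2)": (0.112, 0.054),
    "Only B (P1/2 + P2/4)": (0.124, 0.057),
    "Only H (P3->P4 + P4->P5)": (0.129, 0.059),
    "B+H (Full AeroPinWorld)": (0.135, 0.063),
}


def gains(table, baseline="Baseline (YOLO-World v2)"):
    """Per row: (mAP gain in points, mAP gain in %, APS gain in points, APS gain in %)."""
    base_map, base_aps = table[baseline]
    out = {}
    for name, (m, s) in table.items():
        if name == baseline:
            continue
        out[name] = (
            round(100 * (m - base_map), 1), round(100 * (m - base_map) / base_map, 1),
            round(100 * (s - base_aps), 1), round(100 * (s - base_aps) / base_aps, 1),
        )
    return out


for name, g in gains(ABLATION).items():
    print(name, g)
```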

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).