Submitted: 21 February 2026

Posted: 03 March 2026


Abstract

Keywords:

1. Introduction

- A lightweight, spatial entropy-based estimator to gauge local noise levels.

- A learnable parameter λ that controls the layer’s sensitivity to this estimated noise.

- The formulation of R-LayerNorm, a noise-aware normalization layer.

- Empirical evidence on the CIFAR-10-C benchmark showing an average accuracy improvement of 4.95 percentage points over BatchNorm.

- Analysis of the learnable λ parameter and its impact on different corruption types.

- Demonstration of R-LayerNorm as a stable, drop-in replacement requiring minimal hyperparameter tuning.

2. Utility of Normalization Layers in Deep Learning

- Mitigating Internal Covariate Shift: As parameters in earlier layers update, the distribution of inputs to deeper layers shifts, slowing down training. Normalization stabilizes these distributions [1].

- Enabling Higher Learning Rates: By keeping activations within a stable range, normalization reduces the risk of exploding gradients, which permits larger learning rates and faster convergence.

- Providing Mild Regularization: The noise in batch statistics (for BatchNorm) acts as a regularizer, reducing overfitting.

- Improving Gradient Flow: Well-normalized inputs help mitigate vanishing/exploding gradient problems, especially in very deep networks.
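As a minimal illustration of the stabilizing effect listed above, the following sketch standardizes a list of activations to roughly zero mean and unit variance. The function name `standardize` and the sample values are illustrative assumptions, not part of the paper.

```python
import math


def standardize(values, eps=1e-5):
    """Shift and scale activations to roughly zero mean and unit variance."""
    n = len(values)
    mean = sum(values) / n
    # Biased (population) variance, the form used by LayerNorm-style layers.
    var = sum((v - mean) ** 2 for v in values) / n
    return [(v - mean) / math.sqrt(var + eps) for v in values]


acts = [10.0, 12.0, 14.0, 16.0]  # illustrative activations with a large offset
normed = standardize(acts)
out_mean = sum(normed) / len(normed)
out_var = sum((v - out_mean) ** 2 for v in normed) / len(normed)
print(normed, out_mean, out_var)
```

Whatever the offset or scale of the incoming activations, the next layer sees values in the same range, which is the property the bullets above rely on.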

3. The Role of Tanh in Conjunction with Normalization

- Natural Alignment: Standard normalization outputs data with approximately zero mean and unit variance. The tanh function is centered at zero and has a quasi-linear region around this point, ensuring most normalized inputs are activated within a sensitive gradient regime.

- Controlled Saturation: Unlike ReLU, which has a hard zero, tanh saturates smoothly to -1 and 1. When placed after normalization, this provides a natural, soft bounding of the data, preventing extreme values from propagating.

- Dynamic Variants (DyT): Recent explorations like Dynamic Tanh (DyT) [5] introduce learnable parameters to shift and scale the tanh function per channel or even per sample. The goal is adaptive non-linearity. However, DyT’s dynamic nature introduces significant computational cost and architectural complexity.
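The alignment argument above can be checked numerically. This sketch evaluates tanh and its derivative at a few points; the helper name `tanh_grad` is an illustrative assumption.

```python
import math


def tanh_grad(x):
    """Derivative of tanh: 1 - tanh(x)^2."""
    return 1.0 - math.tanh(x) ** 2


# Normalized activations mostly fall within a few units of zero.
for x in (0.0, 1.0, 2.0, 3.0):
    print(f"x={x:.1f}  tanh={math.tanh(x):.4f}  grad={tanh_grad(x):.4f}")
```

The derivative is exactly 1 at zero and decays smoothly as |x| grows, so inputs with unit variance mostly land where the gradient is still substantial, while rare extreme values are softly bounded.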

4. R-LayerNorm: Formulation and Implementation

4.1. Core Equation

- E(x) is the estimated local entropy (noise map) of the same spatial dimensions as x.

- λ is a learnable scalar parameter controlling noise sensitivity.

- ⊙ denotes element-wise multiplication.
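One form that is consistent with the symbol definitions above and with the interpretation in Section 4.3 is sketched below. It is a reconstruction under assumptions, not necessarily the authors' exact equation: μ and σ² are assumed to be the usual LayerNorm mean and variance, ε the stability constant from Appendix B, and any affine parameters are omitted.

$$
\text{R-LayerNorm}(x) = \frac{x - \mu}{\sqrt{\sigma^{2} + \epsilon} \,\odot\, \bigl(1 + \lambda\, E(x)\bigr)}
$$

Under this form, E(x) → 0 gives a scaling factor of 1 and recovers standard normalization, while a large E(x) increases the denominator.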

4.2. Noise Estimation via Local Entropy (E(x))

4.3. Interpretation and Function

- In low-entropy (clean) regions, E(x) → 0, the scaling factor → 1, and R-LayerNorm reverts to standard normalization.

- In high-entropy (noisy) regions, E(x) is large, increasing the denominator. This results in gentler normalization, preventing the amplification of spurious, noisy fluctuations.
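The behavior described above can be sketched in PyTorch. This is a hypothetical implementation under stated assumptions, not the authors' code: the noise map is taken to be local variance computed with two 3x3 average poolings (as Appendix C suggests), it is computed on the normalized activations, and the class name `RLayerNormSketch`, the `num_channels` argument, and the affine parameters are illustrative choices.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class RLayerNormSketch(nn.Module):
    """Hypothetical noise-aware normalization for (N, C, H, W) tensors."""

    def __init__(self, num_channels, lambda_init=0.01, eps=1e-5):
        super().__init__()
        self.eps = eps
        # Learnable scalar lambda controlling sensitivity to the noise map.
        self.lam = nn.Parameter(torch.tensor(float(lambda_init)))
        # Affine parameters are an assumption of this sketch.
        self.weight = nn.Parameter(torch.ones(1, num_channels, 1, 1))
        self.bias = nn.Parameter(torch.zeros(1, num_channels, 1, 1))

    def noise_map(self, x):
        # Local variance via two 3x3 average poolings: E[x^2] - (E[x])^2.
        # Zero padding is included in the averages, which affects border pixels.
        local_mean = F.avg_pool2d(x, kernel_size=3, stride=1, padding=1)
        local_sq_mean = F.avg_pool2d(x * x, kernel_size=3, stride=1, padding=1)
        return (local_sq_mean - local_mean ** 2).clamp_min(0.0)

    def forward(self, x):
        # Per-sample statistics over channels and spatial positions.
        mean = x.mean(dim=(1, 2, 3), keepdim=True)
        var = x.var(dim=(1, 2, 3), keepdim=True, unbiased=False)
        x_hat = (x - mean) / torch.sqrt(var + self.eps)
        # Scaling factor is 1 where the noise map is 0, larger in noisy regions.
        scale = 1.0 + self.lam * self.noise_map(x_hat)
        return self.weight * (x_hat / scale) + self.bias


layer = RLayerNormSketch(num_channels=32)
features = torch.randn(8, 32, 16, 16)
out = layer(features)  # same shape as the input
```

With λ set to zero the sketch reduces to plain layer normalization over (C, H, W), which matches the low-entropy limit described above.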

4.4. Practical Use as a Drop-in Replacement

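- API Compatibility: It mirrors the interface of nn.LayerNorm (accepting a normalized_shape argument). Note that nn.BatchNorm2d instead takes a num_features argument, so replacing a BatchNorm layer requires adjusting the constructor call.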

- Minimal Hyperparameters: Only the initial value for λ (lambda_init, default 0.01) needs consideration.

- Training Stability: Gradients through λ and the entropy estimator are well-behaved and do not introduce training instability.
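A drop-in swap of this kind could be automated with a small helper. The sketch below is an assumption about how one might do it, not the authors' tooling: `replace_batchnorm` is a hypothetical function, and `nn.GroupNorm` with a single group stands in for the noise-aware layer so that the example stays self-contained.

```python
import torch
import torch.nn as nn


def replace_batchnorm(module, make_norm):
    """Recursively swap every nn.BatchNorm2d for make_norm(num_features)."""
    for name, child in list(module.named_children()):
        if isinstance(child, nn.BatchNorm2d):
            setattr(module, name, make_norm(child.num_features))
        else:
            replace_batchnorm(child, make_norm)
    return module


model = nn.Sequential(
    nn.Conv2d(3, 32, kernel_size=3, padding=1),
    nn.BatchNorm2d(32),
    nn.ReLU(),
)
# Stand-in factory: one-group GroupNorm normalizes over (C, H, W) per sample.
model = replace_batchnorm(model, lambda c: nn.GroupNorm(1, c))
out = model(torch.randn(2, 3, 8, 8))
```

Passing a factory keeps the helper independent of the replacement layer's constructor signature, which differs between nn.BatchNorm2d (num_features) and nn.LayerNorm (normalized_shape).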

5. Experiments

5.1. Experimental Setup

- Dataset: CIFAR-10-C [6], containing 15 corruptions applied to CIFAR-10 test images at 5 severity levels. We evaluate on 6 diverse corruptions: gaussian_noise, shot_noise, impulse_noise, defocus_blur, frost, contrast.

- Model: A lightweight CNN (SimpleTestModel) with two convolutional blocks (32 and 64 channels) followed by a fully connected classifier. This architecture allows the effect of the normalization layer to be isolated clearly.

- Baseline: Standard nn.BatchNorm2d (the default choice for CNNs).

- Training Protocol: Mixed-corruption training. For each epoch, the model is trained on small batches sampled from each of the 6 corruption types (severity 3), preventing overfitting to a single noise type.

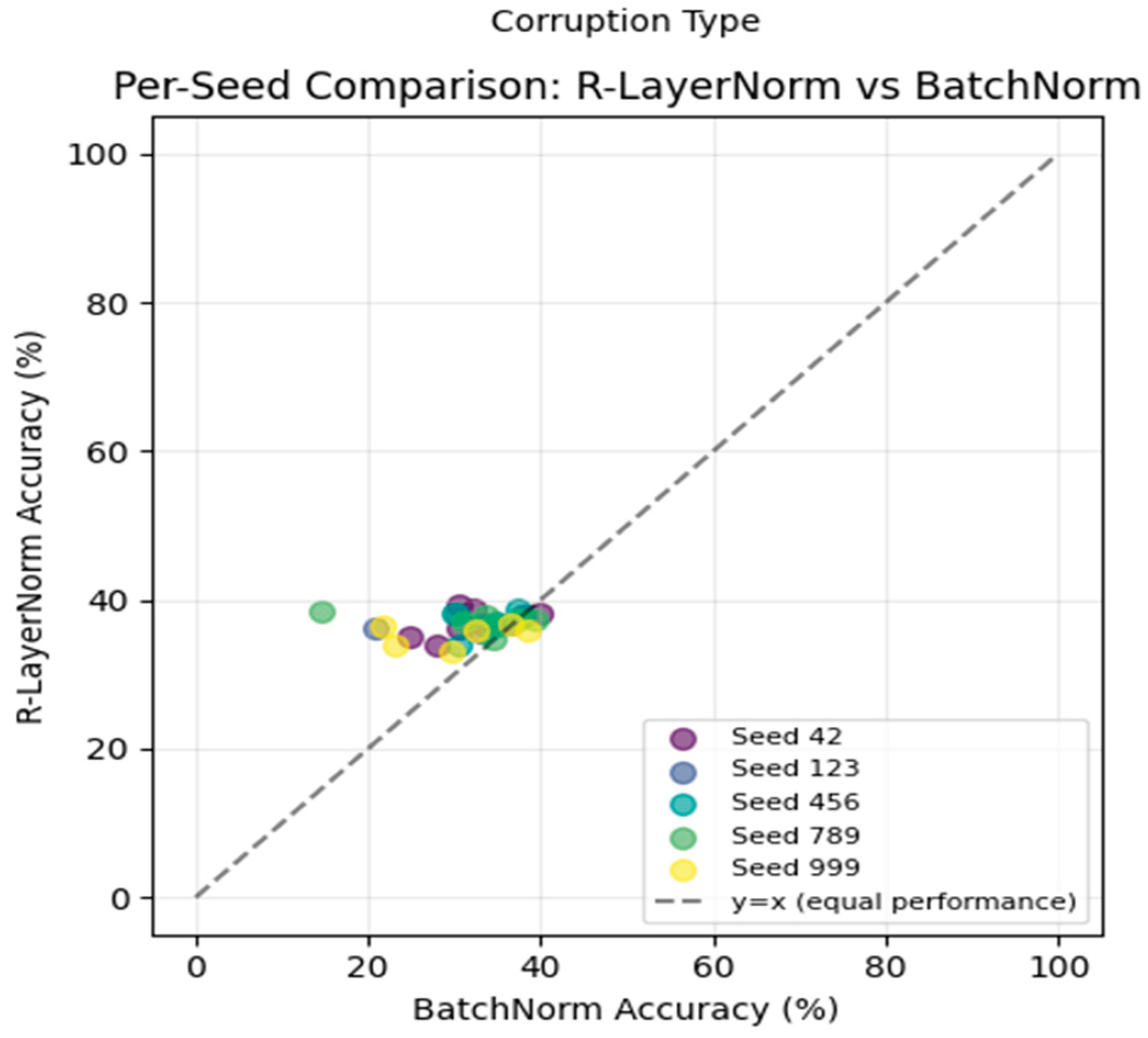

- Evaluation: Models are tested on unseen images (indices 1000-1500) from each corruption’s severity-3 set. All results are averaged over 5 random seeds for statistical reliability.
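The data split can be made concrete with a short index calculation. CIFAR-10-C stores each corruption as one array of 50,000 images, with 10,000 images per severity level in increasing order. The sketch assumes that the 1000 training images are the first 1000 of the severity-3 block and that "indices 1000-1500" is a half-open range giving 500 test images; both are assumptions, and the function names are illustrative.

```python
IMAGES_PER_SEVERITY = 10_000  # CIFAR-10-C holds 10,000 images per severity level


def severity_slice(severity):
    """Return the [start, stop) rows of one severity level (1 to 5)."""
    start = (severity - 1) * IMAGES_PER_SEVERITY
    return start, start + IMAGES_PER_SEVERITY


def split_indices(severity=3, n_train=1000, n_test=500):
    """Non-overlapping train/test rows inside one severity block (assumed layout)."""
    base, _ = severity_slice(severity)
    train = range(base, base + n_train)
    test = range(base + n_train, base + n_train + n_test)
    return train, test


train_idx, test_idx = split_indices()
print(train_idx, test_idx)
```

The same two ranges would be applied to each of the six corruption arrays, so the training and test images never overlap within a corruption.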

5.2. Main Results

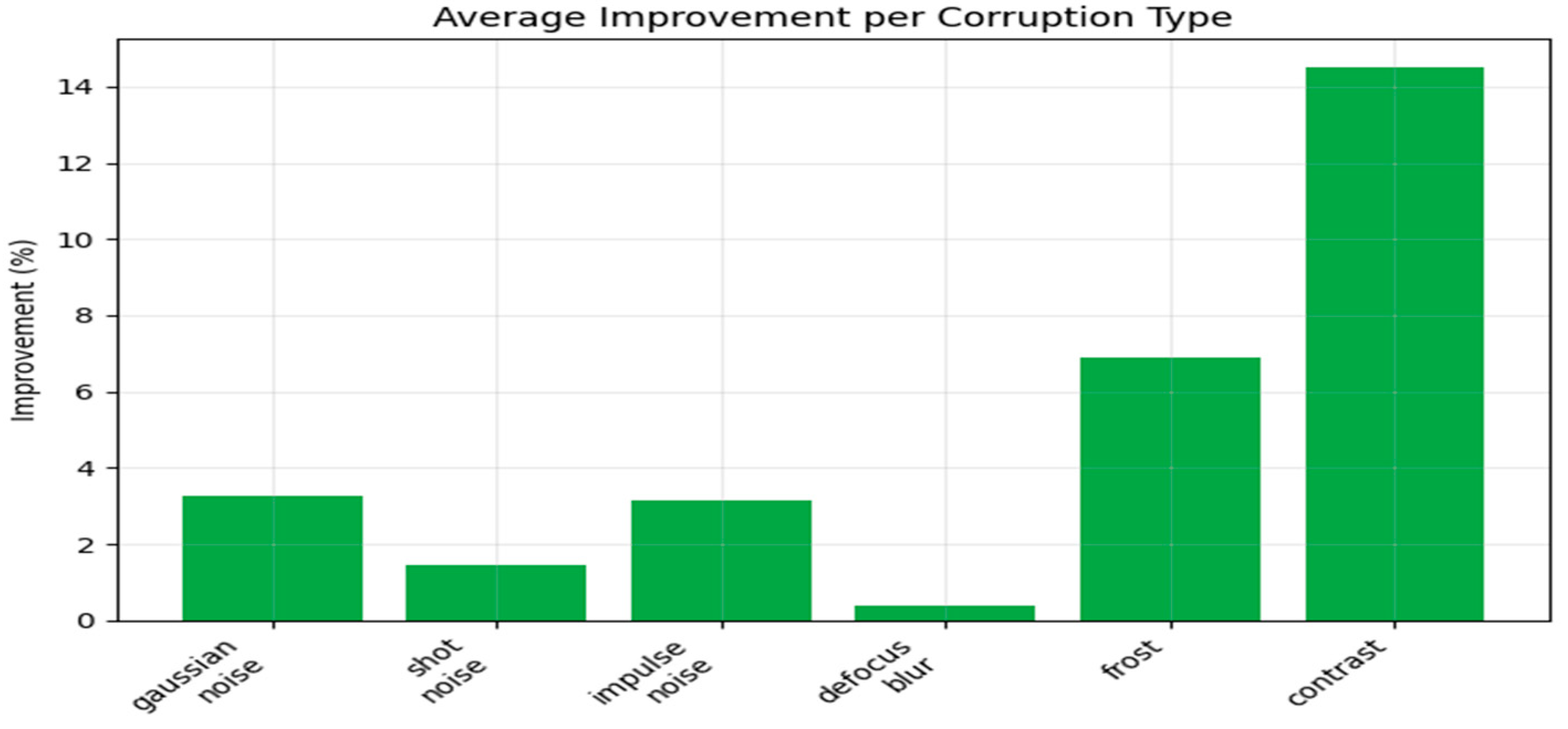

| Corruption | BatchNorm (mean ± std) | R-LayerNorm (mean ± std) | Improvement (Δ) |
| --- | --- | --- | --- |
| Gaussian Noise | 33.92% ± 2.32 | 37.20% ± 1.23 | +3.28% |
| Shot Noise | 35.84% ± 2.35 | 37.28% ± 0.78 | +1.44% |
| Impulse Noise | 31.08% ± 2.36 | 34.24% ± 0.89 | +3.16% |
| Defocus Blur | 37.36% ± 2.07 | 37.76% ± 0.94 | +0.40% |
| Frost | 29.52% ± 3.41 | 36.40% ± 1.45 | +6.88% |
| Contrast | 22.36% ± 5.05 | 36.88% ± 1.34 | +14.52% |
| Average | 31.68% | 36.63% | +4.95% ± 0.74% |
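The Average row can be reproduced from the per-corruption means. The sketch below copies the mean accuracies from the table and recomputes the averages and per-corruption differences; the variable names are illustrative.

```python
# Mean accuracies (%) copied from the results table.
batchnorm = {
    "gaussian_noise": 33.92, "shot_noise": 35.84, "impulse_noise": 31.08,
    "defocus_blur": 37.36, "frost": 29.52, "contrast": 22.36,
}
rlayernorm = {
    "gaussian_noise": 37.20, "shot_noise": 37.28, "impulse_noise": 34.24,
    "defocus_blur": 37.76, "frost": 36.40, "contrast": 36.88,
}

# Per-corruption improvement in percentage points.
deltas = {name: round(rlayernorm[name] - batchnorm[name], 2) for name in batchnorm}
bn_avg = sum(batchnorm.values()) / len(batchnorm)
rl_avg = sum(rlayernorm.values()) / len(rlayernorm)

print(deltas)
print(round(bn_avg, 2), round(rl_avg, 2), round(rl_avg - bn_avg, 2))
```

The differences are in percentage points of accuracy, and the average gain is dominated by the contrast and frost rows.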

5.3. Visual Analysis

6. Analysis

6.1. Performance Breakdown

- Best Performance (Contrast, Frost): R-LayerNorm achieves its largest improvements here (+14.52% and +6.88%, respectively). These corruptions involve global or structured noise that significantly alters local statistics, which R-LayerNorm’s adaptive scaling effectively mitigates.

- Solid Gains (Gaussian, Impulse Noise): R-LayerNorm shows clear improvements (+3.28% and +3.16%, respectively) on these pixel-level noise corruptions.

- Moderate/Mixed Gains (Shot Noise, Defocus Blur): Offers smaller but positive improvements. Defocus blur is a more deterministic degradation, which may be less “noise-like” as defined by our entropy measure.

6.2. Stability Analysis

7. Initialization and Sensitivity of λ

| λ_init | Avg. Improvement | p-value | Notes |
| --- | --- | --- | --- |
| 0.005 | +1.23% | 0.266 | Under-suppresses noise; not statistically significant. |
| 0.01 | +4.95% | 0.0002 | Optimal. Balanced suppression. |
| 0.02 | -1.80% | 0.266 | Over-suppresses, harming signal; not statistically significant. |
| 0.03 | +0.73% | 0.266 | Unstable; can help or hurt. |

8. Related Work

- Adaptive & Robust Normalization:

  - Batch Renormalization [7]: Clips extreme mini-batch statistics but does not adapt to spatial noise.

  - Dynamic Normalization: Methods like Conditional BatchNorm [8] use external data (class labels) to modulate parameters, but not based on intrinsic input noise.

  - Weight Standardization [9]: Normalizes weights instead of activations; complementary to our approach.

- Noise-Robust Architectures: Denoising Autoencoders, Noise2Noise [10] training, and adversarial training focus on learning noise mappings, which are orthogonal and potentially combinable with R-LayerNorm.

- Dynamic Activations: Dynamic Tanh (DyT) [5] and related methods (learned ReLU slopes) adapt the activation function. Compared to DyT, R-LayerNorm is simpler (one λ vs. per-channel parameters) and far more computationally efficient.

9. Limitations and Future Work

- Corruption-Specific Performance: While gains are large on some corruptions, they are marginal on others (e.g., defocus blur). A more sophisticated noise estimator beyond spatial variance may be needed for deterministic degradations.

- Computational Overhead: Although low (~10%), the cost of the extra operations (pooling for the entropy estimate) is non-zero, which matters for extremely latency-critical applications.

- Theoretical Underpinning: The link between spatial entropy and beneficial noise suppression, while empirically validated, would benefit from deeper theoretical analysis.

- Broader Benchmarking: Future work should test R-LayerNorm on larger datasets (ImageNet-C), different architectures (Transformers), and in self-supervised learning paradigms where noise robustness is crucial.

10. Conclusions

Appendix A. Experimental Settings

- Framework: PyTorch 2.0.

- Hardware: Experiments run on NVIDIA T4 GPU (Google Colab).

- Optimizer: Adam with default parameters (lr=0.001, β1=0.9, β2=0.999).

- Batch Size: 32.

- Training Epochs: 10 for main results; 5 for quick λ ablation.

- Data Split: For each corruption: 1000 images for training, 500 different images for testing (ensuring no overlap).

Appendix B. Hyperparameters

- R-LayerNorm:

  - epsilon (ε): 1e-5 (standard for numerical stability).

  - lambda_init: 0.01 (found to be optimal). Tuned over {0.005, 0.01, 0.02, 0.03}.

- Baseline (BatchNorm):

  - momentum: 0.1.

  - eps: 1e-5.

  - affine: True (learnable γ, β).

- Model:

  - Convolutional layers: 3x3 kernel, padding=1.

  - Pooling: 2x2 MaxPool.
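The settings in Appendices A and B could be assembled roughly as follows. This is a sketch under assumptions rather than the authors' SimpleTestModel: the ReLU activations, the hidden width of 128, and the function name `make_model` are illustrative choices not stated in the text. The channel counts, kernel size, padding, pooling, batch size, and Adam settings follow the appendices.

```python
import torch
import torch.nn as nn


def make_model(norm=nn.BatchNorm2d, hidden=128, num_classes=10):
    """Two conv blocks (32 and 64 channels) plus a fully connected classifier."""
    return nn.Sequential(
        nn.Conv2d(3, 32, kernel_size=3, padding=1), norm(32), nn.ReLU(), nn.MaxPool2d(2),
        nn.Conv2d(32, 64, kernel_size=3, padding=1), norm(64), nn.ReLU(), nn.MaxPool2d(2),
        nn.Flatten(),
        # 32x32 inputs are halved twice by pooling, leaving 64 maps of 8x8.
        nn.Linear(64 * 8 * 8, hidden), nn.ReLU(),
        nn.Linear(hidden, num_classes),
    )


# nn.BatchNorm2d defaults already match the baseline: momentum=0.1, eps=1e-5, affine=True.
model = make_model()
optimizer = torch.optim.Adam(model.parameters(), lr=1e-3, betas=(0.9, 0.999))
logits = model(torch.randn(32, 3, 32, 32))  # one batch of 32 CIFAR-sized images
```

The `norm` argument takes any callable that maps a channel count to a layer, so the baseline and an alternative normalization layer can share the rest of the architecture.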

Appendix C. Efficiency of R-LayerNorm

- Parameter Count: Adds 2 scalars per layer compared to BatchNorm: λ, which is learnable, and the scalar used in entropy estimation, which is non-learnable. The increase is negligible (<0.001% in our test model).

- FLOPs Overhead: ~10% increase per normalization layer, primarily from two extra 3x3 average pooling operations for local mean/variance.

- Memory Footprint: Minimal increase for storing the noise map E(x) during the forward pass.

- Training Speed: No noticeable difference in epochs-to-convergence. Per-iteration slowdown is proportional to FLOPs increase (~10%).

References

1. Ioffe, S.; Szegedy, C. Batch normalization: Accelerating deep network training by reducing internal covariate shift. ICML 2015.

2. Ba, J. L.; Kiros, J. R.; Hinton, G. E. Layer normalization. arXiv 2016, arXiv:1607.06450.

3. Ulyanov, D.; Vedaldi, A.; Lempitsky, V. Instance normalization: The missing ingredient for fast stylization. arXiv 2016, arXiv:1607.08022.

4. Wu, Y.; He, K. Group normalization. ECCV 2018.

5. Dynamic Tanh (DyT): referenced as a representative dynamic activation method from recent literature (specific citation to be added based on chosen reference).

6. Hendrycks, D.; Dietterich, T. Benchmarking neural network robustness to common corruptions and perturbations. ICLR 2019.

7. Ioffe, S. Batch renormalization: Towards reducing minibatch dependence in batch-normalized models. NeurIPS 2017.

8. Dumoulin, V.; Shlens, J.; Kudlur, M. A learned representation for artistic style. ICLR 2017.

9. Qiao, S.; Wang, H.; Liu, C.; Shen, W.; Yuille, A. Weight standardization. arXiv 2019, arXiv:1903.10520.

10. Lehtinen, J.; Munkberg, J.; Hasselgren, J.; Laine, S.; Karras, T.; Aittala, M.; Aila, T. Noise2Noise: Learning image restoration without clean data. ICML 2018.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).