1. Introduction

The rapid development of online course platforms has significantly transformed how learning is delivered beyond the boundaries of formal education. Course-oriented platforms allow learners to enroll in standalone courses created by independent authors, providing flexibility, scalability, and broad access to knowledge. These platforms primarily operate within the context of non-formal learning, where participation is voluntary and self-directed, and certification – when available – is issued by the platform itself rather than by an accredited educational institution.

Despite their growing popularity, many online course platforms remain focused mainly on content delivery and transactional processes, such as course publication, purchase, and access management. Support for the learning process is often limited to basic activity indicators and isolated assessment functionalities that operate as standalone automated components. As a result, learners may complete a course without a clear understanding of their level of knowledge acquisition, while platforms have limited capacity to meaningfully support the learning process.

Recent advances in artificial intelligence have accelerated the move toward automation in online learning platforms through automated content generation and personalization mechanisms. However, in many cases, these AI-based functionalities are implemented in a fragmented manner, lacking coordination or explicit pedagogical logic, which leads to an interpretation of learning as a sequence of automated actions.

This paper proposes an alternative perspective that shifts the focus from automation to orchestration of the learning experience within course-based platforms for non-formal online learning. In this context, orchestration refers to the systematic coordination of key elements of the learning experience – engagement with content, assessment activities, progress tracking, feedback provision, and learner guidance – without presupposing the presence of an instructor or formal instructional control.

Within the proposed conceptual framework, SoftLearner is developed as a prototype of a commercially oriented online course platform designed to illustrate a pedagogically grounded model for orchestrating learning in a course-based environment. The platform supports course publication, approval, purchase, and participation, and integrates AI-assisted mechanisms for test generation, along with functionalities for structured tracking of learning activity.

A central contribution of this work is the distinction between content consumption progress, which reflects the extent to which a course has been viewed or read, and knowledge acquisition progress, which is based on assessment performance and task difficulty levels. By coordinating these two dimensions, the platform moves beyond basic automation and offers a learning experience that supports orientation, self-reflection, and informed continuation of learning within individual courses.

By positioning orchestration as a systemic function, the proposed approach is particularly suitable for non-formal online environments in which learners interact directly with educational content. In this way, the platform compensates for the absence of an instructor through the coordinated use of AI-assisted mechanisms in a transparent and evidence-informed manner, without claiming equivalence to formal education.

The remainder of the paper is structured as follows. Section 2 reviews related work on course-based learning platforms, AI-supported educational systems, and non-formal online learning. Section 3 presents the platform architecture. Section 4 describes the developed prototype. Section 5 discusses directions for future development, and Section 6 concludes the paper.

2. Related Works

2.1. Non-Formal Online Learning

Online course platforms constitute a distinct category of educational systems, separate from traditional Learning Management Systems (LMS) used in formal education. These course-based platforms primarily emphasize the publication, distribution, and participation in standalone online courses, often operating in consumer-oriented or commercial contexts, and function mainly within the realm of non-formal learning [1].

Non-formal online learning is defined by voluntary participation, self-directed engagement, and flexible learning pathways, setting these platforms apart from institutionally governed learning management systems [2]. As online courses increasingly expand in areas such as professional development and skills acquisition, the need for structured mechanisms to monitor and assess learner progress becomes more significant.

Research shows that non-formal online learning can include clearly defined learning objectives, assessment procedures, and knowledge validation without becoming part of formal education systems [3,4]. Thus, online courses are increasingly used for professional development, reskilling, and targeted competence acquisition, which requires more structured approaches to progress tracking and assessment [5].

However, while the structural characteristics of non-formal online learning are increasingly acknowledged, less attention has been given to the pedagogical orchestration of learning processes within commercially oriented course-based platforms.

2.2. AI in Non-Formal Online Learning

In recent years, interest has grown in applying artificial intelligence (AI) to online learning environments, including course-based platforms in non-formal contexts. Major areas of focus include automated content generation, data-driven analysis, personalized learner interaction, and support through intelligent assistants [6,7].

However, in many current implementations, AI functionalities are deployed as separate automated components – such as test generators or recommendation systems – rather than being integrated into a coherent pedagogical framework. While this automation can improve the efficiency of specific tasks, it does not necessarily enhance the overall quality or coherence of the learning experience [8]. This fragmentation can lead to learning environments where intelligent features function independently instead of as coordinated elements within a structured educational process.

2.3. Progress Tracking in Online Learning Environments

Progress tracking is a central component in both formal and non-formal learning contexts. On course-based platforms, progress is typically measured by content consumption indicators, such as completed lessons or viewed video materials. Although these metrics provide insight into learner engagement, they do not directly measure knowledge acquisition.

Research in learning analytics highlights the importance of combining activity data with assessment outcomes to gain a more comprehensive understanding of learning progress [9,10]. In non-formal learning environments, this requires balancing automated data collection with the interpretability of results, especially in the absence of an instructor who mediates between the system and the learner [11].

Systematic reviews show that learning analytics in distance and online education enables the use of activity data (e.g., logs, participation records, interaction patterns) to analyze learning pathways, predict outcomes, and identify learners at risk. These approaches extend the understanding of learning progress beyond simple content completion indicators [12,13].

2.4. Assessment and Testing in AI-Supported Learning

AI-based methods for test generation and analysis have been widely explored in intelligent tutoring systems and e-learning environments. Existing approaches support automated generation of assessment items based on instructional content, educational taxonomies, and predefined difficulty levels [14]. These methods are especially relevant for non-formal online courses, where the scale and diversity of content make manual test design resource-intensive.

Several authors emphasize that assessment in non-formal learning contexts should primarily serve a diagnostic and guiding function, rather than act as a high-stakes evaluation mechanism [15]. This view aligns with the broader shift toward using assessment to support learning rather than as an end in itself. In non-formal online platforms, AI-assisted testing mechanisms are most effective when integrated as formative tools that inform learners about their understanding and support self-regulation.

2.5. Adaptive Support and Pedagogically Oriented Recommendation

Recommender systems are widely used across digital platforms, including educational environments. However, directly adopting classical approaches such as collaborative filtering raises concerns about transparency, pedagogical control, and potential bias in educational settings [16,17]. To address these challenges, research has increasingly focused on pedagogically informed guidance models, where recommendations are based on assessment outcomes, difficulty levels, and patterns of learner activity, and are offered as supportive suggestions rather than prescriptive learning pathways [18]. These approaches are especially appropriate for course-based platforms in non-formal contexts, where learners have a high degree of autonomy and need guidance without restrictive system control.

2.6. Orchestration versus Automation in AI-Supported Learning

Recent discussions in the literature increasingly distinguish between the automation of educational functions and more holistic approaches that conceptualize learning as a coordinated system of interrelated activities [19,20]. While automation seeks to optimize isolated processes such as grading, recommendation, or content generation, an orchestration-oriented perspective emphasizes alignment among content engagement, assessment, progress monitoring, feedback, and learner guidance.

This distinction is particularly relevant for non-formal course-based platforms, where the absence of an instructor necessitates system-level coordination of learning processes. In such environments, systematically implemented orchestration may compensate for the lack of real-time instructional mediation by coordinating AI-assisted mechanisms in a transparent and interpretable manner.

3. System Architecture

3.1. Overall Architectural Overview

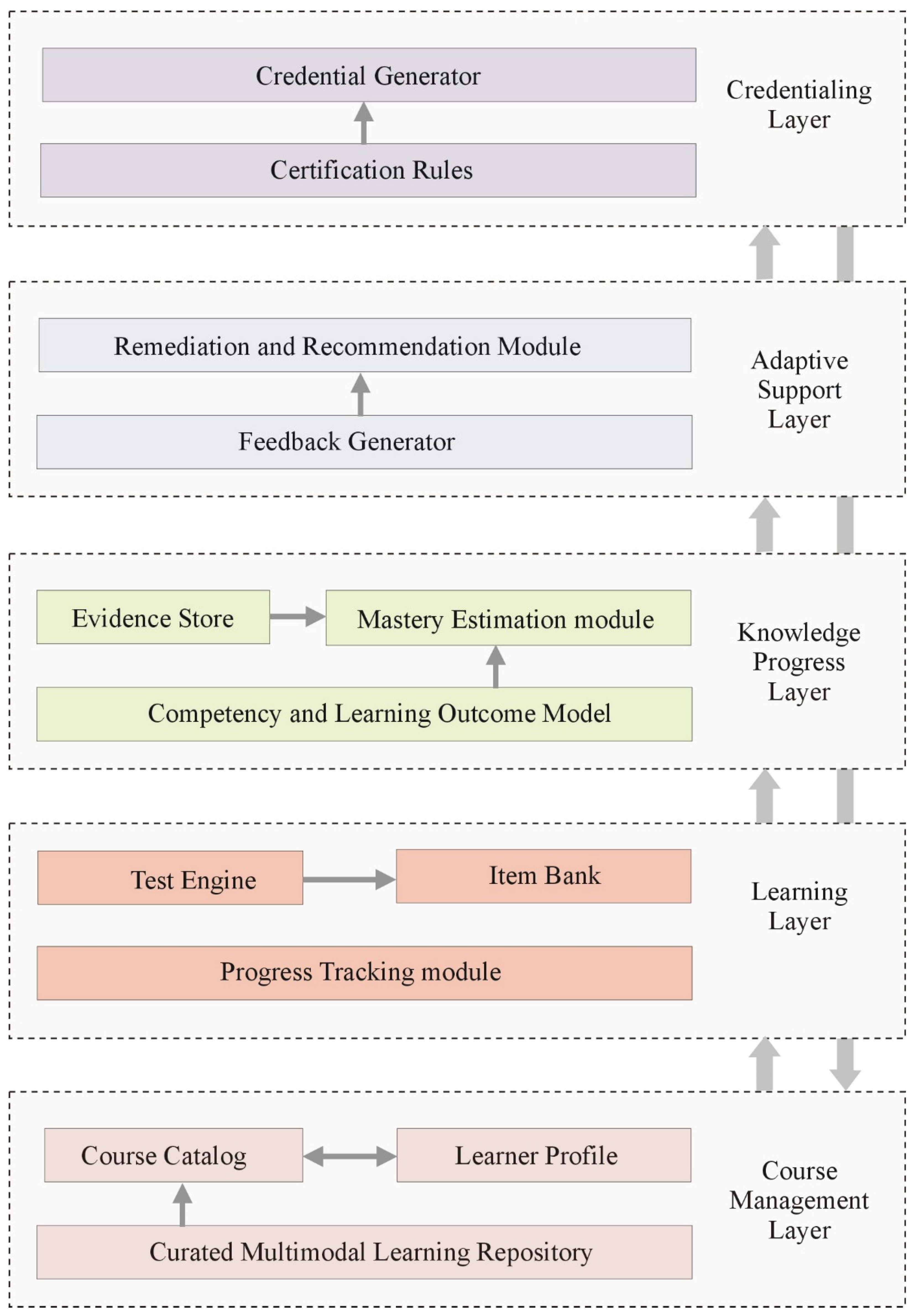

The architectural design of the proposed online course platform is structured to clearly distinguish content management, learning activity tracking, knowledge acquisition assessment, and guidance provision, while intentionally avoiding full automation of the learning process (Figure 1).

The system is organized into five interconnected layers, each with a distinct and well-defined role. The foundational layer handles course management and delivery, including content access, presentation, and basic learner interaction mechanisms. Above this is the learning experience layer, which monitors learner progress at the content level and implements assessment through tests and task-based activities.

A central component of the architecture is the knowledge acquisition tracking layer, which introduces a competency and learning outcomes model to differentiate between “content viewed” and “knowledge mastered.” This layer uses evidence from assessment interactions and learner activity data to estimate mastery of specific competencies.

Building on this foundation, an adaptive support layer generates feedback, recommendations, and guidance for additional activities or content revision. The support provided is advisory rather than prescriptive, preserving learner autonomy and avoiding enforced learning pathways.

The top layer includes mechanisms for achievement validation through certificates and micro-credentials, based on predefined and verifiable criteria. Coordination across all layers is managed by an orchestration mechanism that ensures alignment among content engagement, assessment processes, progress representation, and adaptive support. This mechanism operates through distributed, structured coordination among system functions across the architectural layers; it does not centralize instructional decision-making in a single autonomous component.
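One way to picture distributed coordination of this kind is a simple publish/subscribe arrangement, in which each layer reacts to events from other layers and no single component holds the decision logic. The following is a minimal sketch under that assumption; the event names, the `OrchestrationBus` class, and the 0.6 threshold are hypothetical and are not taken from the SoftLearner implementation.

```typescript
// Hypothetical events exchanged between layers (all names are assumptions).
type LayerEvent =
  | { kind: "lessonCompleted"; learnerId: string; lessonId: string }
  | { kind: "testSubmitted"; learnerId: string; competencyId: string; score: number }
  | { kind: "masteryUpdated"; learnerId: string; competencyId: string; mastery: number };

type Handler = (event: LayerEvent, publish: (e: LayerEvent) => void) => void;

// The bus only routes events; it contains no pedagogical decision logic.
class OrchestrationBus {
  private handlers: Handler[] = [];
  readonly log: string[] = [];

  subscribe(handler: Handler): void {
    this.handlers.push(handler);
  }

  publish(event: LayerEvent): void {
    this.log.push(event.kind);
    for (const handler of this.handlers) {
      handler(event, (e) => this.publish(e));
    }
  }
}

const bus = new OrchestrationBus();
const suggestions: string[] = [];

// Knowledge progress layer: turns assessment evidence into a mastery update.
bus.subscribe((event, publish) => {
  if (event.kind === "testSubmitted") {
    publish({
      kind: "masteryUpdated",
      learnerId: event.learnerId,
      competencyId: event.competencyId,
      mastery: event.score, // placeholder: a real estimator would aggregate evidence
    });
  }
});

// Adaptive support layer: reacts to mastery updates with advisory suggestions.
bus.subscribe((event) => {
  if (event.kind === "masteryUpdated" && event.mastery < 0.6) {
    suggestions.push(`Consider revisiting material for ${event.competencyId}`);
  }
});

bus.publish({ kind: "testSubmitted", learnerId: "u1", competencyId: "c1", score: 0.4 });
```

In this sketch each layer keeps its own rule, and the bus merely forwards events, which mirrors the idea that coordination is structural rather than delegated to one autonomous decision-maker.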

3.2. Course Management Layer

The Course Management Layer provides the infrastructure for creating, organizing, and delivering online courses. This layer ensures the structured management of instructional content and controlled access to it. Its core functions include course cataloging, categorization and search, enrollment and access management, and the delivery of multimedia learning materials such as video lectures, textual resources, and interactive components.

The system supports access control mechanisms and tracks user status within individual courses, enabling a clear distinction between infrastructural and pedagogical components. Basic learner interaction is implemented at this level through feedback mechanisms related to course completion and content evaluation. These features enhance transparency and orientation within the available course offerings without directly affecting knowledge assessment algorithms.

Architecturally, the Course Management Layer serves as a supporting environment for the higher layers responsible for progress tracking, competency assessment, and adaptive support. This separation enables a clear distinction between content management and knowledge modeling, which is essential for implementing the orchestration-oriented approach described in Section 2.6.

3.3. Learning Layer

The Learning Layer implements mechanisms to track learning activity within individual courses. This layer focuses on learner interaction with instructional units and the formalized collection of interaction data.

The first component is the Progress Tracking module, which records completed lessons, viewed resources, and performed learning activities. This tracking reflects engagement with instructional content but does not directly assess knowledge acquisition.
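As an illustration of what such an engagement indicator could look like, the sketch below computes a content completion percentage from per-lesson activity records. The `LessonActivity` shape and the `contentCompletion` function are hypothetical names chosen for this example; the value says nothing about knowledge acquisition.

```typescript
// Hypothetical per-lesson activity record (field names are assumptions).
interface LessonActivity {
  lessonId: string;
  completed: boolean;
}

// Share of completed lessons, as a whole percentage. This is an engagement
// indicator only; it does not measure knowledge acquisition.
function contentCompletion(lessons: LessonActivity[]): number {
  if (lessons.length === 0) return 0;
  const done = lessons.filter((lesson) => lesson.completed).length;
  return Math.round((done / lessons.length) * 100);
}

const completion = contentCompletion([
  { lessonId: "l1", completed: true },
  { lessonId: "l2", completed: true },
  { lessonId: "l3", completed: false },
  { lessonId: "l4", completed: false },
]);
```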

The second component is the Test Engine, which supports both lesson-level assessments and cumulative course-level evaluations. It incorporates an AI-assisted item generation mechanism capable of producing assessment items based on specified topics and varying levels of difficulty. Generated items are stored in a structured Item Bank enriched with pedagogical metadata, such as difficulty level, thematic classification, alignment with learning objectives, and assessment criteria. This structured representation enables subsequent competency-based interpretation in higher architectural layers.
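The pedagogical metadata listed above could be represented roughly as follows. This is a sketch only: the field names, the three-level `Difficulty` scale, and the `selectItems` helper are assumptions made for illustration and do not describe the actual Item Bank schema.

```typescript
// Hypothetical difficulty scale (the paper does not specify the levels).
type Difficulty = "easy" | "medium" | "hard";

// Hypothetical Item Bank entry carrying the metadata named in the text.
interface AssessmentItem {
  id: string;
  question: string;
  difficulty: Difficulty;
  topic: string;               // thematic classification
  learningObjectiveId: string; // alignment with a learning objective
  assessmentCriteria: string;
}

// Select items for a topic at a given difficulty level.
function selectItems(
  bank: AssessmentItem[],
  topic: string,
  difficulty: Difficulty
): AssessmentItem[] {
  return bank.filter((item) => item.topic === topic && item.difficulty === difficulty);
}

const bank: AssessmentItem[] = [
  { id: "i1", question: "Q1", difficulty: "easy", topic: "loops",
    learningObjectiveId: "lo1", assessmentCriteria: "single correct answer" },
  { id: "i2", question: "Q2", difficulty: "hard", topic: "loops",
    learningObjectiveId: "lo1", assessmentCriteria: "single correct answer" },
  { id: "i3", question: "Q3", difficulty: "easy", topic: "functions",
    learningObjectiveId: "lo2", assessmentCriteria: "single correct answer" },
];

const easyLoopItems = selectItems(bank, "loops", "easy");
```

Keeping the objective identifier on every item is what would later allow a result to be read against a competency rather than as an isolated score.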

The Learning Layer collects and organizes learner interaction data but does not estimate competency mastery. That function is delegated to the next layer, where a specialized analytical model interprets assessment evidence in relation to defined competencies.

3.4. Knowledge Progress Layer

The Knowledge Progress Layer models learner advancement based on accumulated evidence and provides a structured representation of the current state of knowledge. At the core of this layer is a Competency and Learning Outcome Model, in which each course is defined by a structured and bounded set of measurable learning outcomes. The model is grounded in the principles of constructive alignment between learning objectives and assessment [21], as well as a competency-based approach that emphasizes clearly defined and measurable learning outcomes [22]. Each competency is linked to specific lessons, thematic units, and item types, enabling systematic alignment between assessment activities and intended learning outcomes rather than isolated test scores.

Learner interaction data – including test version, performance results, response time, and number of attempts – are stored in a logically separated Evidence Store. Based on this evidence, a Mastery Estimation module computes the degree of mastery for each defined competency.
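A possible shape for such evidence is sketched below as an append-only store that can be queried per learner and competency. The `EvidenceRecord` fields follow the data named in the text, but the concrete names, units, and the `EvidenceStore` class are assumptions for illustration.

```typescript
// Hypothetical evidence record; fields mirror the data named in the text.
interface EvidenceRecord {
  learnerId: string;
  competencyId: string;
  testVersion: string;
  score: number;          // fraction of correct answers, 0..1 (assumed scale)
  responseTimeMs: number; // assumed unit
  attempt: number;
}

// Append-only store kept separate from course and content data.
class EvidenceStore {
  private records: EvidenceRecord[] = [];

  append(record: EvidenceRecord): void {
    this.records.push(record);
  }

  forCompetency(learnerId: string, competencyId: string): EvidenceRecord[] {
    return this.records.filter(
      (r) => r.learnerId === learnerId && r.competencyId === competencyId
    );
  }
}

const store = new EvidenceStore();
store.append({ learnerId: "u1", competencyId: "c1", testVersion: "v1",
               score: 0.5, responseTimeMs: 42000, attempt: 1 });
store.append({ learnerId: "u1", competencyId: "c1", testVersion: "v1",
               score: 0.8, responseTimeMs: 30000, attempt: 2 });
store.append({ learnerId: "u1", competencyId: "c2", testVersion: "v1",
               score: 0.9, responseTimeMs: 25000, attempt: 1 });

const evidenceForC1 = store.forCompetency("u1", "c1");
```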

The estimation mechanism may use different strategies depending on the implementation context, ranging from aggregated performance indicators adjusted for difficulty to more formal psychometric models, such as Item Response Theory (IRT), including Rasch models [23]. The architecture is not bound to a specific estimation technique, ensuring extensibility of the assessment mechanism.
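The two ends of this range can be sketched briefly. The first function is one possible difficulty-adjusted aggregate, in which each response is weighted by an assumed numeric difficulty; the second is the standard Rasch item response function, which gives the probability of a correct answer from learner ability and item difficulty on a common logit scale. The names `ScoredResponse`, `weightedMastery`, and `raschProbability` are hypothetical, and the weighting scheme is an assumption rather than the method used in the prototype.

```typescript
// Hypothetical scored response; `difficulty` is an assumed numeric weight
// (for example 1 = easy, 2 = medium, 3 = hard).
interface ScoredResponse {
  correct: boolean;
  difficulty: number;
}

// Difficulty-weighted share of correct responses, in the range 0..1.
function weightedMastery(responses: ScoredResponse[]): number {
  let earned = 0;
  let possible = 0;
  for (const response of responses) {
    possible += response.difficulty;
    if (response.correct) earned += response.difficulty;
  }
  return possible === 0 ? 0 : earned / possible;
}

// Rasch model: P(correct) = 1 / (1 + exp(-(ability - itemDifficulty))).
function raschProbability(ability: number, itemDifficulty: number): number {
  return 1 / (1 + Math.exp(-(ability - itemDifficulty)));
}

const mastery = weightedMastery([
  { correct: true, difficulty: 1 },
  { correct: true, difficulty: 2 },
  { correct: false, difficulty: 3 },
]);
```

In the weighted aggregate, a learner who answers only the easier items correctly receives a lower estimate than the raw share of correct answers would suggest (here 3 of 6 weighted points instead of 2 of 3 items). A Rasch-based estimator would instead fit ability and item difficulty from response data, which requires calibration that this sketch does not attempt.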

3.5. Adaptive Support Layer

The Adaptive Support Layer provides guidance based on the learner’s current competency mastery levels. This layer implements adaptive support mechanisms without prescribing a fixed learning pathway or restricting learner autonomy.

A central component is the Feedback Generator, which interprets mastery estimations and produces structured, evidence-informed feedback on strengths and areas for improvement within individual competencies. The feedback serves a diagnostic function and supports self-regulated learning.

The second component, the Remediation and Recommendation Module, offers guidance based on mastery levels. Depending on the degree of mastery, the system may suggest revisiting specific lessons, engaging in additional practice activities, or attempting a focused reassessment targeting a particular competency. These recommendations are advisory rather than mandatory.
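A rule of this kind could be as simple as a threshold mapping from a mastery estimate to one of the suggestion types named above. In the sketch below, the thresholds, the ordering of suggestions, and the names `Suggestion` and `suggestNextStep` are all assumptions; the result is a suggestion the learner is free to ignore.

```typescript
// Suggestion types follow the text; "continue" is an added assumption
// for learners who already show high mastery.
type Suggestion =
  | "revisit-lessons"
  | "additional-practice"
  | "focused-reassessment"
  | "continue";

// Map a mastery estimate (0..1) to an advisory suggestion.
// All thresholds are illustrative assumptions.
function suggestNextStep(mastery: number): Suggestion {
  if (mastery < 0.4) return "revisit-lessons";
  if (mastery < 0.7) return "additional-practice";
  if (mastery < 0.85) return "focused-reassessment";
  return "continue";
}

const lowMasterySuggestion = suggestNextStep(0.3);
const highMasterySuggestion = suggestNextStep(0.9);
```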

Additionally, the layer may adapt the difficulty of subsequent assessment items according to the current mastery estimation. This enables dynamic adjustment of support while avoiding centralized control over the learning sequence.
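One simple way to realize such an adjustment is a one-step staircase: difficulty moves up after strong performance, down after weak performance, and otherwise stays the same. The three-level scale, the thresholds, and the function name `adaptDifficulty` in the sketch below are assumptions for illustration.

```typescript
// Hypothetical three-level difficulty scale: 1 = easy, 2 = medium, 3 = hard.
type Level = 1 | 2 | 3;

// Move at most one level per adjustment; thresholds are illustrative.
function adaptDifficulty(current: Level, mastery: number): Level {
  if (mastery >= 0.8 && current < 3) return (current + 1) as Level;
  if (mastery < 0.5 && current > 1) return (current - 1) as Level;
  return current;
}

const nextLevel = adaptDifficulty(2, 0.9);
```

Limiting each change to one step keeps the adjustment gradual and easy to explain to the learner, which fits the advisory character of this layer.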

Architecturally, the Adaptive Support Layer functions as a coordination interface between assessment and content engagement, operationalizing the orchestration approach described in Section 2.6. It uses mastery estimations as input but does not replace learner decision-making.

3.6. Credentialing Layer

The Credentialing Layer completes the learning cycle by establishing a structured credentialing ecosystem based on verified evidence of competency mastery. This layer defines explicit Certification Rules, which may include minimum mastery thresholds for key competencies, final assessment requirements, or coverage of a specified set of learning outcomes. These criteria are transparent and verifiable, ensuring accountability in the credentialing process.

When predefined conditions are met, the Credential Generator issues a credential (such as a digital certificate or micro-credential), along with a validation mechanism for subsequent verification. Credentialing is not treated as a one-time administrative action but as part of a broader ecosystem of rules, evidence, and verifiability.
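Certification Rules of the kind described above can be expressed as plain data and checked by a small function, which keeps the criteria transparent and testable. The sketch below assumes per-competency minimum mastery thresholds and an optional final assessment requirement; the types `CertificationRule` and `CertificationPolicy` and the function `isEligible` are hypothetical.

```typescript
// Hypothetical rule: a minimum mastery level for one key competency.
interface CertificationRule {
  competencyId: string;
  minMastery: number; // 0..1
}

// Hypothetical policy combining thresholds with a final assessment requirement.
interface CertificationPolicy {
  rules: CertificationRule[];
  finalAssessmentRequired: boolean;
}

// A learner is eligible only if every rule is met; a competency with no
// mastery estimate counts as not met.
function isEligible(
  policy: CertificationPolicy,
  mastery: Record<string, number>,
  finalAssessmentPassed: boolean
): boolean {
  if (policy.finalAssessmentRequired && !finalAssessmentPassed) return false;
  return policy.rules.every((rule) => {
    const value = mastery[rule.competencyId];
    return typeof value === "number" && value >= rule.minMastery;
  });
}

const policy: CertificationPolicy = {
  rules: [
    { competencyId: "c1", minMastery: 0.7 },
    { competencyId: "c2", minMastery: 0.6 },
  ],
  finalAssessmentRequired: true,
};

const eligible = isEligible(policy, { c1: 0.8, c2: 0.65 }, true);
```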

By integrating competency-based modeling, evidence-based mastery estimation, adaptive support, and structured credentialing, the proposed architecture enables modular and cumulative recognition of learning outcomes. This design aligns with the principles of lifelong learning and supports continuous competency development in both formal and non-formal educational contexts [24].

4. Prototype and Implementation

4.1. Prototype Overview

The proposed architecture has been implemented as a functional web-based prototype called SoftLearner. The prototype was developed in a university research environment and serves as an experimental validation of the pedagogically oriented architecture described in Section 3.

SoftLearner supports the creation, publication, purchase, and participation in online courses within a unified platform. Multiple user roles are implemented (learner, content creator, administrator), enabling structured content management and secure transaction handling. The system operationalizes the separation between infrastructural, learning, and competency-related components defined in the architectural model.

4.2. Implemented Architectural Layers

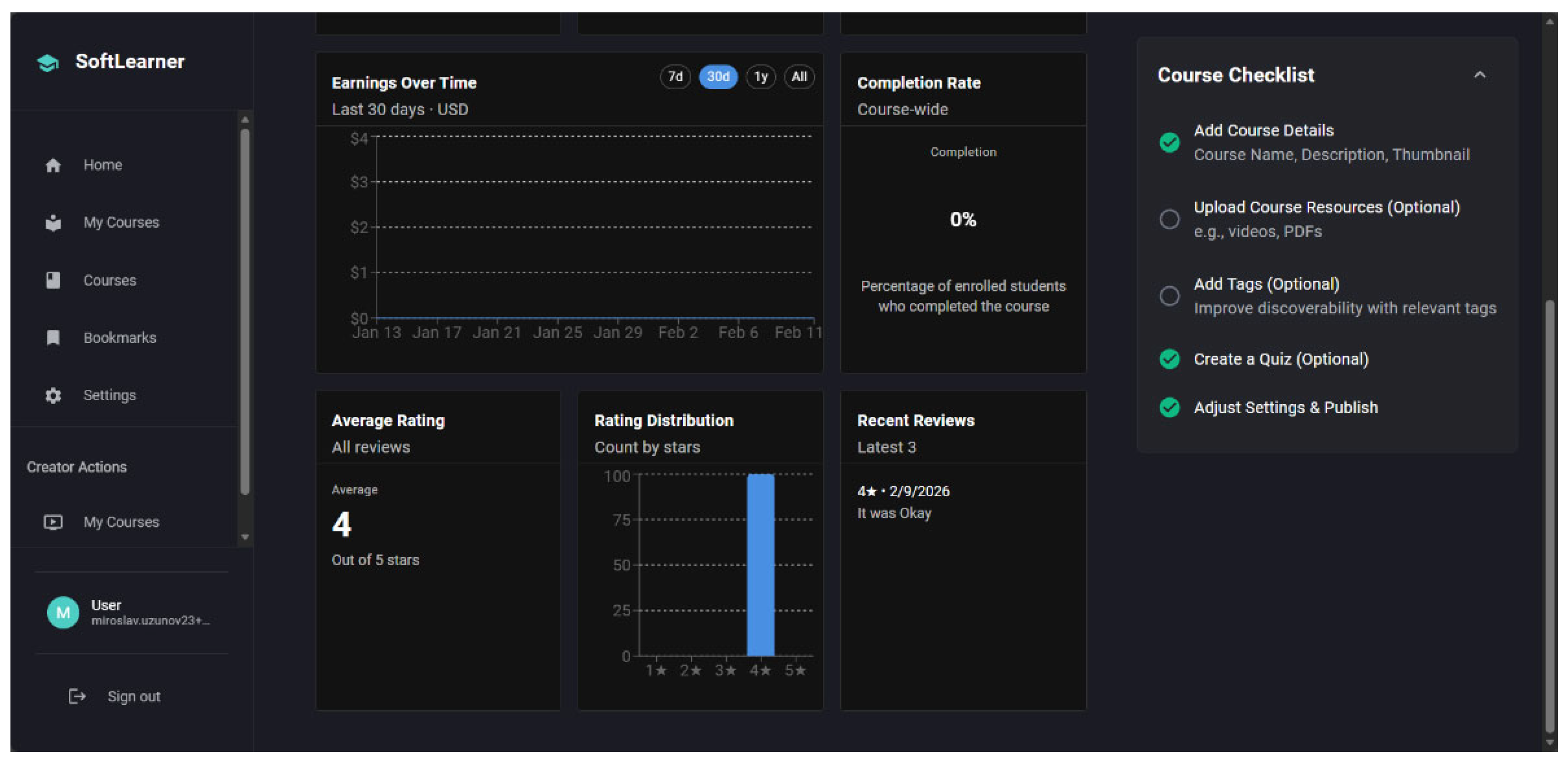

The Course Management Layer has been implemented with functionalities for course creation, editing, and publication. Content creators can define core course metadata (title, description, category, tags, price), upload multimedia resources, and organize them into a structured instructional sequence. Additionally, course-level analytics are provided to support structured monitoring and performance evaluation (Figure 2).

A structured application process for content creators is included and subject to administrative review. Learners can browse the course catalog, filter courses by category, and complete purchases through an integrated online payment system. After successful payment, purchased courses become accessible in a personalized “My Courses” section.

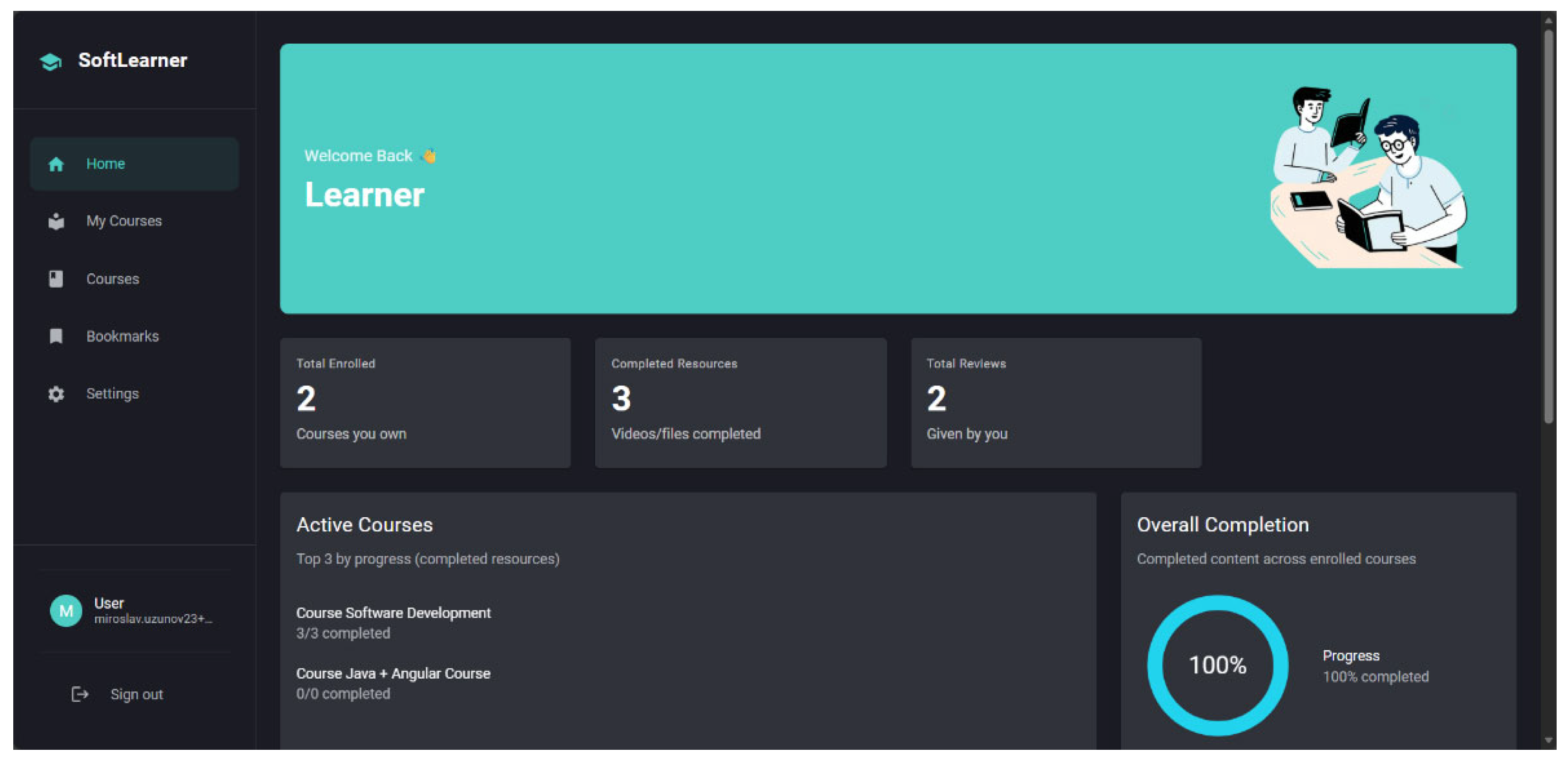

The Learning Layer is implemented with activity tracking and test-based assessment mechanisms. The Progress Tracking module records completed lessons and accessed resources, visualizing overall course completion. This tracking reflects learner engagement but does not currently provide competency-based interpretation (Figure 3).

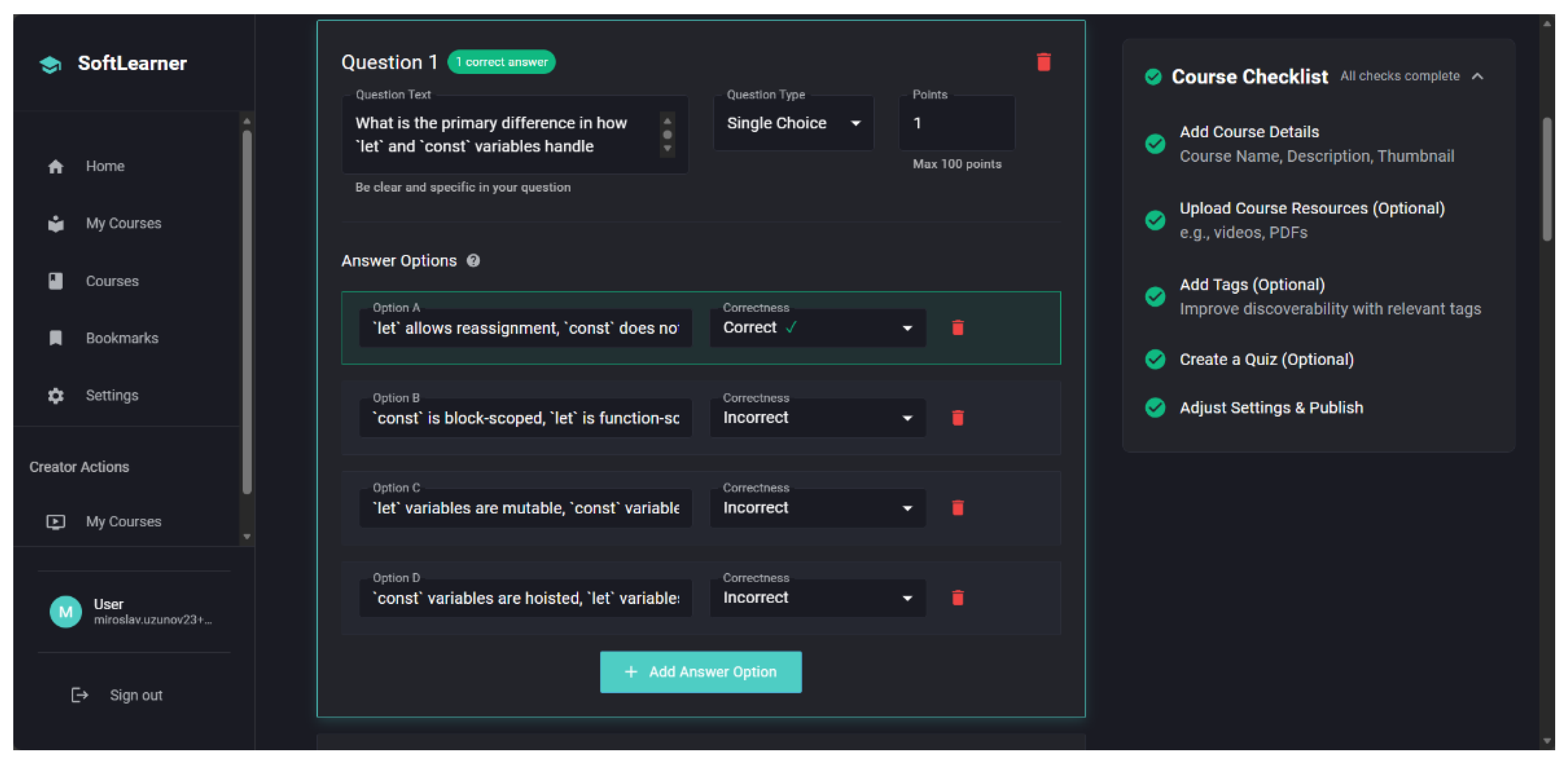

A Test Engine supports both lesson-level assessments and cumulative course-level tests. The system automatically calculates test scores and stores the most recent result. Assessment items are maintained in a structured Item Bank (Figure 4).
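The scoring behavior described here, automatic calculation with only the most recent result retained, can be sketched as follows. The answer-key representation, the percentage scale, and the names `scoreTest` and `LatestResultStore` are assumptions for illustration and are not taken from the prototype's code.

```typescript
// Score a multiple-choice test as a whole percentage of correct answers.
// Answers are represented as option indices (an assumption).
function scoreTest(answerKey: number[], answers: number[]): number {
  if (answerKey.length === 0) return 0;
  let correct = 0;
  for (let i = 0; i < answerKey.length; i++) {
    if (answers[i] === answerKey[i]) correct++;
  }
  return Math.round((correct / answerKey.length) * 100);
}

// Keeps only the most recent score per learner and test.
class LatestResultStore {
  private results: Record<string, number> = {};

  save(learnerId: string, testId: string, score: number): void {
    this.results[`${learnerId}:${testId}`] = score; // overwrites earlier attempts
  }

  get(learnerId: string, testId: string): number | undefined {
    return this.results[`${learnerId}:${testId}`];
  }
}

const answerKey = [0, 2, 1, 3];
const results = new LatestResultStore();

const firstAttempt = scoreTest(answerKey, [0, 2, 2, 3]);  // three of four correct
results.save("u1", "t1", firstAttempt);

const secondAttempt = scoreTest(answerKey, [0, 2, 1, 3]); // all correct
results.save("u1", "t1", secondAttempt);
```

Retaining only the latest score is simple to display, but it discards attempt history; a mastery estimator of the kind outlined in Section 3.4 would need the full sequence of attempts as evidence.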

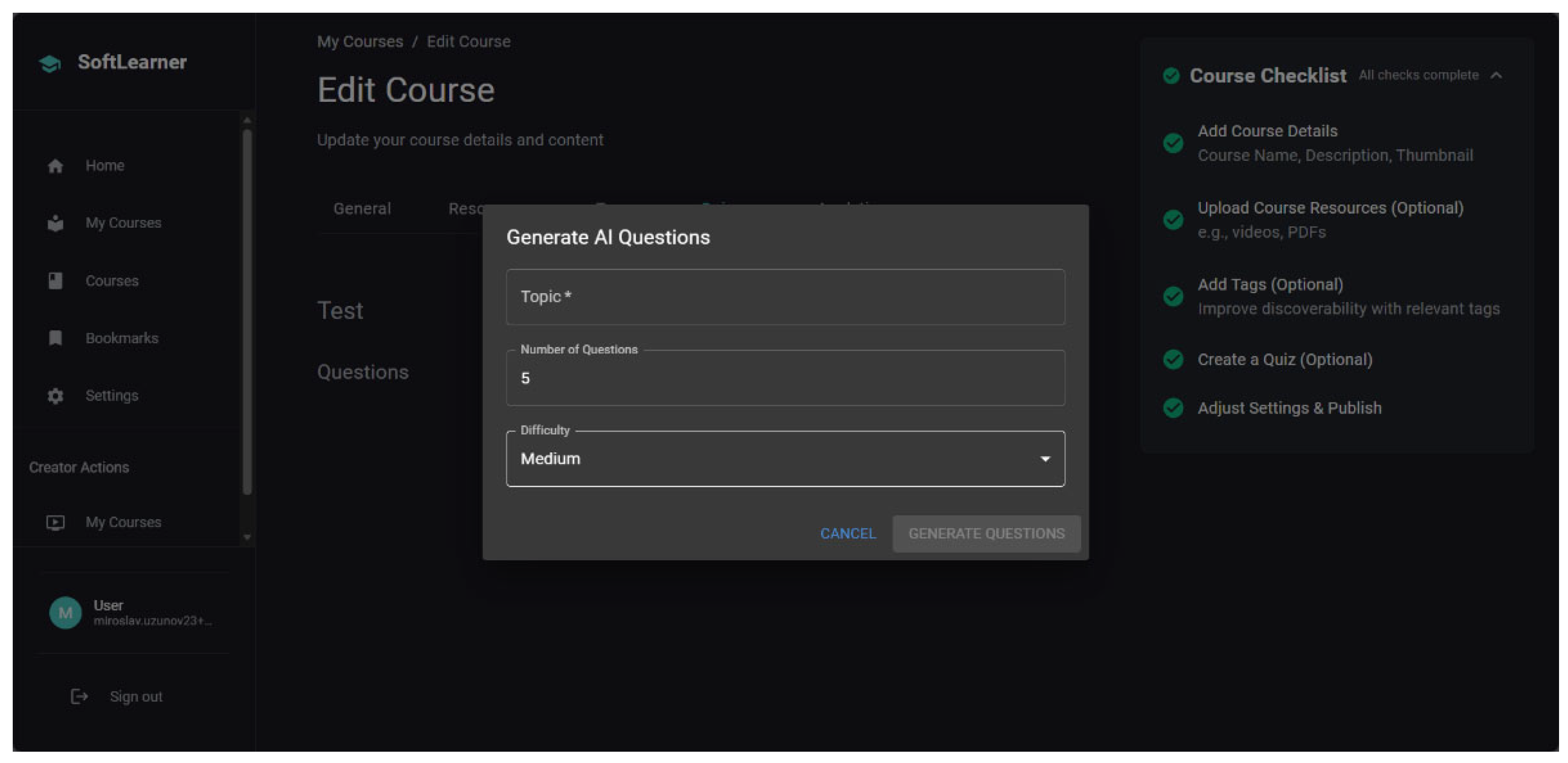

The platform features an AI-assisted question generation tool. Content creators specify a topic, difficulty level, and number of items, after which the system generates draft assessment items for review and editing before publication. In this way, artificial intelligence serves as a supportive tool rather than a replacement for expert judgment (Figure 5).
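The review-before-publication workflow can be sketched as a small state model in which generated items start as drafts and only items approved by a content creator become publishable. The generator is passed in as a function so that no particular AI service is assumed; `stubGenerator` below is a stand-in that returns placeholder text, and all type and function names are hypothetical.

```typescript
type ItemStatus = "draft" | "approved" | "rejected";

interface DraftItem {
  id: number;
  topic: string;
  difficulty: string;
  text: string;
  status: ItemStatus;
}

interface GenerationRequest {
  topic: string;
  difficulty: string;
  count: number;
}

// Placeholder for the AI service: returns raw question texts (an assumption).
type ItemGenerator = (request: GenerationRequest) => string[];

// Every generated item enters the workflow as a draft.
function generateDrafts(request: GenerationRequest, generator: ItemGenerator): DraftItem[] {
  return generator(request)
    .slice(0, request.count)
    .map((text, index): DraftItem => ({
      id: index + 1,
      topic: request.topic,
      difficulty: request.difficulty,
      text,
      status: "draft",
    }));
}

// The content creator may edit the text and must decide on each item.
function review(item: DraftItem, approve: boolean, editedText?: string): DraftItem {
  return {
    ...item,
    text: editedText ?? item.text,
    status: approve ? "approved" : "rejected",
  };
}

// Only approved items may be published.
function publishable(items: DraftItem[]): DraftItem[] {
  return items.filter((item) => item.status === "approved");
}

// Stub generator standing in for a real AI call.
const stubGenerator: ItemGenerator = (request) => {
  const texts: string[] = [];
  for (let i = 1; i <= request.count; i++) {
    texts.push(`[${request.difficulty}] Question ${i} about ${request.topic}`);
  }
  return texts;
};

const drafts = generateDrafts({ topic: "SQL joins", difficulty: "medium", count: 3 }, stubGenerator);
const reviewed = drafts.map((item, index) => (index === 0 ? review(item, true) : item));
```

Because nothing is publishable until a human approves it, the generator's output never reaches learners unreviewed, which is the sense in which the tool supports rather than replaces expert judgment.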

The higher architectural layers – specifically the Knowledge Progress Layer, Adaptive Support Layer, and Credentialing Layer – are so far only partially realized, mainly at a conceptual level, and are intended for future system expansion. Their integration is structurally supported by the current system architecture.

The prototype demonstrates the feasibility of clearly separating content management, activity tracking, and test-based assessment within a coherent, multi-layered structure.

4.3. Technical Implementation

SoftLearner is implemented as a modern full-stack web application. The frontend is developed using Next.js, which enables server-side rendering and efficient client–server interaction. Supabase provides backend infrastructure, including authentication services and relational data storage with PostgreSQL. Type-safe communication between the frontend and backend is achieved through tRPC. Stripe is integrated to provide secure online payment processing.

The technical implementation follows the principle of separation of concerns and aligns with the conceptual multi-layered architecture presented in Section 3.

5. Discussion and Future Work

The proposed architecture introduces a multi-layered model for orchestrating course-based online learning, clearly distinguishing content management, learning activity tracking, competency assessment, adaptive support, and credentialing. This approach enables structured modeling of the learning process without centralizing instructional decision-making in a single autonomous algorithmic component.

A key conceptual contribution of the model is the distinction between “content completion” and “competency mastery.” While many existing platforms primarily rely on activity indicators or isolated test scores, the proposed architecture systematically links assessment processes to formally defined learning outcomes. This alignment provides a foundation for evidence-informed representation of learner progress.

Furthermore, the integration of AI-assisted mechanisms is positioned as supportive rather than substitutive regarding pedagogical control. Artificial intelligence serves as an augmentation tool that enhances feedback generation, item creation, and guidance processes, while preserving transparency, interpretability, and human oversight in the educational environment.

Since the current prototype partially implements the proposed architecture, future system development will include full operationalization of the competency model and mastery estimation mechanisms. In a broader research context, the architecture may be extended with advanced analytical modules to investigate learning behavior patterns and adaptive dynamics.

Future work also includes empirical validation of the orchestration model within non-formal online learning environments, with particular emphasis on evaluating its impact on learner orientation, self-regulation, and perceived clarity of progress representation.

6. Conclusions

This paper presents a conceptual architecture for orchestrating course-based online learning, based on a clear separation of content management, learning activity tracking, competency modeling, adaptive support, and credentialing. The proposed model extends conventional e-learning platforms by introducing competency-oriented logic and an evidence-informed interpretation of learning outcomes.

A central contribution of the study is the conceptual distinction between “content completion” and “competency mastery,” as well as the positioning of artificial intelligence as a supportive rather than substitutive element in the pedagogical process. Through its multi-layered structure, the architecture enables transparent and extensible integration of assessment and adaptive support mechanisms without centralizing instructional control in a single autonomous algorithmic component.

The developed prototype, SoftLearner, demonstrates the technical feasibility of the core layers related to course management and test-based assessment, while outlining a structured pathway toward full implementation of competency-based modeling and cumulative credentialing. In this respect, the proposed framework advances pedagogically oriented AI-supported systems within the broader context of non-formal learning and lifelong education.

Author Contributions

Conceptualization, A.T.; methodology, A.T.; software, M.U.; validation, M.U.; formal analysis, T.G.; writing—original draft preparation, A.T., M.U., T.G.; writing—review and editing, A.T., T.G.; funding acquisition, T.G.

Funding

This study is financed by the European Union-NextGenerationEU, through the National Recovery and Resilience Plan of the Republic of Bulgaria, project № BG-RRP-2.004-0001-C01 and supported by the project FP25-FMI-010 “Innovative interdisciplinary research in informatics, mathematics and educational pedagogy” at the Plovdiv University “Paisii Hilendarski”.

Conflicts of Interest

The authors declare no conflicts of interest.

References

- Reich, J.; Ruipérez-Valiente, J. The MOOC pivot. Science 2019, 363(6423), 130–131.

- Council of Europe. Non-formal learning/education. Youth Partnership. Available online: https://pjp-eu.coe.int/en/web/youth-partnership/non-formal-learning.

- Johnson, M.; Majewska, D. Formal, non-formal, and informal learning: What are they, and how can we research them? Cambridge University Press & Assessment Research Report; 2022. Available online: https://www.cambridgeassessment.org.uk/Images/665425-formal-non-formal-and-informal-learning-what-are-they-and-how-can-we-research-them-.pdf.

- European Commission – Joint Research Centre (JRC). Developing social and emotional skills through non-formal learning. Policy Brief: Non-formal learning. Available online: https://joint-research-centre.ec.europa.eu/system/files/2020-12/policy_brief_non_formal_learning.pdf.

- European Commission. Instrument 12 – Module 9 (Validation of Non-formal and Informal Learning). Erasmus+ Project Result. Available online: https://ec.europa.eu/programmes/erasmus-plus/project-result-content/a08ce95c-801e-4d94-869e-a75948569722/Instrument%2012%20-%20Module%209-EN.pdf.

- Becerra, Á.; Mohseni, Z.; Sanz, J.; Cobos, R. A Generative AI-Based Personalized Guidance Tool for Enhancing the Feedback to MOOC Learners. IEEE Global Engineering Education Conference; 2024; pp. 1–8.

- Sajja, R.; Sermet, Y.; Cikmaz, M.; Cwiertny, D.; Demir, I. Artificial Intelligence-Enabled Intelligent Assistant for Personalized and Adaptive Learning in Higher Education. Information 2024, 15(10), 596.

- Garzón, J.; Patiño, E.; Marulanda, C. Systematic Review of Artificial Intelligence in Education: Trends, Benefits, and Challenges. Multimodal Technol. Interact. 2025, 9(8), 84.

- Sajja, R.; Sermet, Y.; Cwiertny, D.; Demir, I. Integrating AI and Learning Analytics for Data-Driven Pedagogical Decisions and Personalized Interventions in Education. Technology, Knowledge and Learning 2025.

- Pan, Z.; Tillstrom, L.; Lauren, B.; Biegley, T.; Taylor, A.; Zheng, H. A Systematic Review of Learning Analytics-Incorporated Instructional Interventions on Learning Management Systems. Journal of Learning Analytics 2024, 11(2), 1–21.

- Alfredo, R.; Echeverria, V.; Jin, Y.; Yan, L.; Swiecki, Z.; Gasevic, D.; Martinez-Maldonado, R. Human-Centred Learning Analytics and AI in Education: A Systematic Literature Review. Computers and Education: Artificial Intelligence 2024, 6(5), 100215.

- Palanci, A.; Yılmaz, R.; Turan, Z. Learning analytics in distance education: A systematic review. Education and Information Technologies 2024, 29, 22629–22650.

- Johar, N.; Kew, S.; Tasir, Z.; Koh, E. Learning Analytics on Student Engagement to Enhance Students’ Learning Performance: A Systematic Review. Sustainability 2023, 15, 7849.

- Calatayud, V.; Prendes, P.; Rosabel, R.-V. Artificial Intelligence for Student Assessment: A Systematic Review. Applied Sciences 2021, 11(12), 5467.

- Klose, M.; Handschuh, P.; Steger, D.; Artelt, C. Embracing the challenge: Predicting self-testing in non-formal online courses using machine learning. Computers & Education 2026, 242, 105507 (online first).

- Oubalahcen, H.; Tamym, L.; El Ouadghiri, M. The Use of AI in E-Learning Recommender Systems: A Comprehensive Survey. Procedia Computer Science 2023, 224, 437–442.

- Lampropoulos, G. Recommender systems in education: A literature review and bibliometric analysis. Advances in Mobile Learning Educational Research 2023, 3(2), 829–850.

- Brusilovsky, P.; Millán, E. User Models for Adaptive Hypermedia and Adaptive Educational Systems. In The Adaptive Web; Lecture Notes in Computer Science; Springer, 2007; vol. 4321, pp. 3–53.

- U.S. Department of Education. Artificial Intelligence and the Future of Teaching and Learning: Insights and Recommendations; 2023. Available online: https://www.ed.gov/sites/ed/files/documents/ai-report/ai-report.pdf?

- Holstein, K.; McLaren, B.; Aleven, V. Intelligent tutors as teachers’ aides: Exploring teacher needs for real-time analytics in blended classrooms. In Proceedings of the Seventh International Learning Analytics & Knowledge Conference (LAK '17); Association for Computing Machinery, 2017; pp. 257–266.

- Biggs, J. Enhancing teaching through constructive alignment. Higher Education 1996, 32, 347–364.

- Mulder, M. Competence Theory and Research: A Synthesis. In Competence-Based Vocational and Professional Education. Bridging the Worlds of Work and Education; Mulder, M., Ed.; Springer: Cham, Switzerland, 2017; pp. 1071–1106.

- Embretson, S.; Reise, S. Item Response Theory for Psychologists; Lawrence Erlbaum Associates, 2000; p. 384.

- Council of the European Union. Council Recommendation on a European approach to micro-credentials for lifelong learning and employability. Official Journal of the European Union 2022, C243, 10–25. Available online: https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=CELEX:32022H0627(02).

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).