Submitted: 14 February 2026

Posted: 28 February 2026


Abstract

Keywords:

1. Introduction

1.1. Research Questions

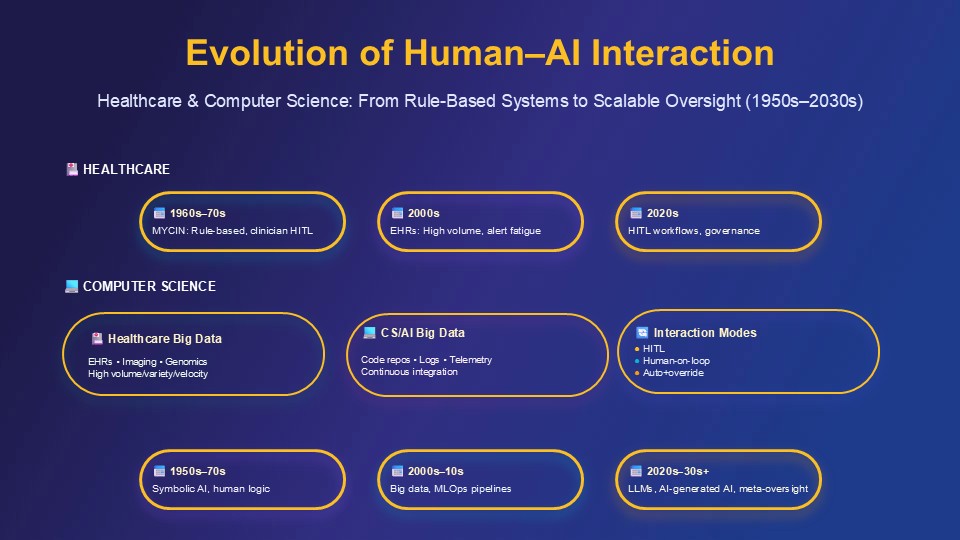

- How has human oversight evolved in healthcare AI and in computer science/AI development through 2026?

- Are these domains converging on structured, operational human-in-the-loop paradigms? If so, what are their core technical and organizational components?

- What gaps remain in translating the human-in-the-loop (HITL) principle into practice in data-intensive, adaptive AI environments in these two domains?

1.2. Scope and Contribution

- Healthcare: Clinical decision support, diagnosis, imaging, risk prediction, treatment planning, documentation and workflow automation in hospital and ambulatory settings.

2. Methods

2.1. Design and Scope

2.2. Primary Sources (Regulatory and Policy Documents)

- UN human-rights guidance on AI and privacy, as it informs transparency and accountability requirements [32].

2.3. Secondary Sources (Conceptual and Empirical Literature)

2.4. Extraction and Synthesis Strategy

- Definitions and framings of human oversight or human-in-the-loop interaction.

- Technical and organizational requirements related to oversight (override capabilities, transparency, training, governance structures, monitoring and incident response).

- Descriptions of data types, architectures, pipelines and monitoring mechanisms relevant to oversight in healthcare and AI development.

- Conceptual developments regarding meaningful human control, scalable oversight and AI safety.

- Implementation challenges in big-data, high-velocity environments (cognitive load, alert fatigue, adaptive models, recursive AI systems).

- The temporal evolution of oversight was organized into three phases (2016–2020: ethics; 2021–2023: regulation; 2023–2025: operationalization) to assess whether the two domains converge on a shared paradigm.

- Quality appraisal: sources were appraised by issuing authority (regulatory > consensus > academic), citation impact and clinical applicability. No formal quality scoring was applied, given the heterogeneity of the policy documents.
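The authority ordering and phase windows above can be made explicit with a small sorting rule. The Python sketch below is illustrative only: the `Source` record, the numeric tier ranks and the use of citation count as a tie-breaker are our assumptions rather than the authors' procedure, and clinical applicability is left out because it was a qualitative judgment. The sketch yields an ordering, not a quality score. Because the stated phases share the year 2023, `phases_for` returns every matching phase instead of forcing a single label.

```python
from dataclasses import dataclass

# Assumed tier ranking mirroring "regulatory > consensus > academic";
# a lower number means the source is considered first.
AUTHORITY_RANK = {"regulatory": 0, "consensus": 1, "academic": 2}

# Phase windows as stated in the Methods (inclusive years). 2023 falls in
# two windows, so a source from that year belongs to both phases.
PHASES = [
    ("ethics", 2016, 2020),
    ("regulation", 2021, 2023),
    ("operationalization", 2023, 2025),
]


@dataclass
class Source:
    """Hypothetical record for one reviewed document."""
    title: str
    year: int
    authority: str  # one of the AUTHORITY_RANK keys
    citations: int  # citation count, used only to break ties within a tier


def phases_for(year: int) -> list[str]:
    """Return every phase whose inclusive year window contains `year`."""
    return [name for name, start, end in PHASES if start <= year <= end]


def appraisal_order(sources: list[Source]) -> list[Source]:
    """Order by authority tier first, then by descending citation count."""
    return sorted(sources, key=lambda s: (AUTHORITY_RANK[s.authority], -s.citations))


if __name__ == "__main__":
    # Placeholder records for illustration; not sources from this review.
    corpus = [
        Source("Hypothetical academic paper", 2019, "academic", 500),
        Source("Hypothetical regulation", 2024, "regulatory", 50),
        Source("Hypothetical consensus guideline", 2023, "consensus", 120),
    ]
    for s in appraisal_order(corpus):
        print(s.year, s.authority, phases_for(s.year))
```

A highly cited academic paper still sorts after a less cited regulation, which reflects the tier-first rule stated above.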

3. Results

3.1. Evolution of Human Oversight: From Ethics to Regulation (2016–2020)

3.1.1. Foundational Principles and Soft Law

3.2. Risk-Based Regulation and Cross-Cutting Guidance (2021–2023)

3.2.1. EU AI Act: Binding Human-Oversight Requirements

3.2.2. OECD AI Principles and Accountability

3.2.3. WHO Health-Sector Guidance

3.2.4. National and Sector-Aligned Guidance for Healthcare and AI Development

3.3. Operational Paradigms in Healthcare and AI Development (2023–2025)

3.3.1. Healthcare: FUTURE-AI, CHAI Blueprint and Clinical AI Pipelines

3.3.2. Computer Science and AI Development: Human-in-the-Loop Governance of AI Lifecycles

4. Discussion

4.1. Convergence on Human-in-the-Loop Paradigms in Healthcare and AI Development

- Distributed across the lifecycle, from data selection and model design through deployment and monitoring.

- Shared across individuals, teams and institutions, rather than resting solely on a single clinician or developer.

- Dependent on sociotechnical infrastructures—interfaces, workflows, metrics and incentives—that give humans the information, time and authority needed to intervene effectively.
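The three properties above can be approximated as lifecycle checkpoints, each owned by roles rather than one person and each able to block progression. The following Python sketch is a hypothetical illustration: the stage names, role labels and the `can_advance` gating rule are assumptions for exposition, not requirements taken from the EU AI Act, FUTURE-AI or any other cited framework.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stage(Enum):
    """Assumed lifecycle stages, in order."""
    DATA_SELECTION = "data selection"
    MODEL_DESIGN = "model design"
    VALIDATION = "validation"
    DEPLOYMENT = "deployment"
    MONITORING = "monitoring"


@dataclass
class Checkpoint:
    stage: Stage
    owners: set[str]        # roles (not individuals) accountable for sign-off
    can_block: bool = True  # False means the review is advisory only
    approvals: set[str] = field(default_factory=set)

    def approve(self, role: str) -> None:
        """Record sign-off; only owning roles may approve."""
        if role not in self.owners:
            raise PermissionError(f"{role!r} is not an owner of {self.stage.value}")
        self.approvals.add(role)

    def satisfied(self) -> bool:
        # Shared responsibility: every owning role must sign off,
        # unless the checkpoint is advisory.
        return not self.can_block or self.owners <= self.approvals


def can_advance(checkpoints: list[Checkpoint], target: Stage) -> bool:
    """A system may enter `target` only if all earlier checkpoints are satisfied."""
    order = list(Stage)
    earlier = order[: order.index(target)]
    return all(cp.satisfied() for cp in checkpoints if cp.stage in earlier)


if __name__ == "__main__":
    pipeline = [
        Checkpoint(Stage.DATA_SELECTION, {"data steward", "clinical lead"}),
        Checkpoint(Stage.VALIDATION, {"clinical lead", "ML engineer"}),
    ]
    pipeline[0].approve("data steward")
    print(can_advance(pipeline, Stage.MODEL_DESIGN))  # False: clinical lead has not signed off
    pipeline[0].approve("clinical lead")
    print(can_advance(pipeline, Stage.MODEL_DESIGN))  # True
```

Encoding authority as an explicit `can_block` flag keeps the difference between advisory review and binding sign-off visible, which is one way to represent the authority to intervene named in the last bullet.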

4.2. Deepening Challenges: Cognitive Load, Big-Data Pipelines and Adaptive Systems

4.2.1. Cognitive Burden in High-Velocity Environments

4.2.2. Oversight of Adaptive and Continuously Learning Systems

4.3. Practical Implications: Designing Human-Centred Oversight Architectures

5. Conclusions

- Investment in governance infrastructure and monitoring capabilities suited to big-data AI systems.

- Workforce development that equips clinicians, engineers and data scientists with the knowledge, skills and authority to exercise oversight.

- Transparent performance and oversight metrics that track not only AI performance but also how and when humans intervene.

- Learning systems that treat AI-related incidents as opportunities for redesign rather than solely as individual error.
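Oversight metrics that track how and when humans intervene could be computed from a log recording, for each AI output, what the human did and how long it took. The sketch below assumes a hypothetical `DecisionEvent` schema with three action labels; the metric names are ours, and the four-event log is toy data, not study results.

```python
from dataclasses import dataclass
from statistics import median

# Assumed set of human responses to an AI recommendation.
ACTIONS = ("accepted", "overridden", "ignored")


@dataclass
class DecisionEvent:
    """One AI recommendation and the human response to it (hypothetical schema)."""
    case_id: str
    human_action: str           # one of ACTIONS
    seconds_to_decision: float  # time from display to human action


def oversight_metrics(events: list[DecisionEvent]) -> dict[str, float]:
    """Summarize how often, and how quickly, humans intervene on AI output."""
    if not events:
        raise ValueError("cannot compute metrics on an empty log")
    unknown = {e.human_action for e in events} - set(ACTIONS)
    if unknown:
        raise ValueError(f"unrecognized actions: {sorted(unknown)}")
    n = len(events)

    def rate(action: str) -> float:
        return sum(e.human_action == action for e in events) / n

    return {
        "acceptance_rate": rate("accepted"),
        "override_rate": rate("overridden"),
        # A rising ignore rate may signal alert fatigue rather than agreement.
        "ignore_rate": rate("ignored"),
        "median_seconds_to_decision": median(e.seconds_to_decision for e in events),
    }


if __name__ == "__main__":
    log = [
        DecisionEvent("case-1", "accepted", 12.0),
        DecisionEvent("case-2", "overridden", 45.0),
        DecisionEvent("case-3", "accepted", 8.0),
        DecisionEvent("case-4", "ignored", 2.0),
    ]
    print(oversight_metrics(log))
    # {'acceptance_rate': 0.5, 'override_rate': 0.25, 'ignore_rate': 0.25, 'median_seconds_to_decision': 10.0}
```

Reporting these rates as trends over time, rather than as single snapshots, is what would let a governance team notice shifts in how clinicians or engineers engage with the system.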

References

- Floridi, L.; Cowls, J.; Beltrametti, M.; Chatila, R.; Chazerand, P.; Dignum, V.; Luetge, C.; Madelin, R.; Pagallo, U.; Rossi, F.; et al. AI4People—An ethical framework for a good AI society: Opportunities, risks, principles, and recommendations. Minds Mach. 2018, 28, 689–707.

- Jobin, A.; Ienca, M.; Vayena, E. The global landscape of AI ethics guidelines. Nat. Mach. Intell. 2019, 1, 389–399.

- Morley, J.; Floridi, L.; Kinsey, L.; Elhalal, A. From what to how: An initial review of publicly available AI ethics tools, methods and research to translate principles into practices. Sci. Eng. Ethics 2020, 26, 2141–2168.

- Mittelstadt, B. Principles alone cannot guarantee ethical AI. Nat. Mach. Intell. 2019, 1, 501–507.

- IEEE. Ethically Aligned Design: A Vision for Prioritizing Human Well-being with Autonomous and Intelligent Systems, Version 2; IEEE: Piscataway, NJ, USA, 2017.

- European Commission High-Level Expert Group on AI. Ethics Guidelines for Trustworthy AI; European Commission: Brussels, Belgium, 2019.

- European Parliament and Council. Regulation (EU) 2024/1689 of the European Parliament and of the Council of 13 June 2024 laying down harmonised rules on artificial intelligence (Artificial Intelligence Act); Official Journal of the European Union: Brussels, Belgium, 2024.

- European Commission. Proposal for a Regulation of the European Parliament and of the Council Laying Down Harmonised Rules on Artificial Intelligence (Artificial Intelligence Act); COM(2021) 206 final; European Commission: Brussels, Belgium, 2021.

- European Commission. Questions and Answers—Artificial Intelligence Act; European Commission: Brussels, Belgium, 2024; Available online: https://ec.europa.eu/commission/presscorner/detail/en/qanda_21_1683 (accessed on 1 February 2026).

- World Health Organization. Ethics and Governance of Artificial Intelligence for Health: WHO Guidance; World Health Organization: Geneva, Switzerland, 2021.

- World Health Organization. Regulatory Considerations on Artificial Intelligence for Health; World Health Organization: Geneva, Switzerland, 2023.

- OECD. OECD AI Principles; OECD Publishing: Paris, France, 2019.

- OECD. Advancing Accountability in AI: Governing and Managing Risks throughout the Lifecycle for Trustworthy AI; OECD Publishing: Paris, France, 2023.

- Indian Council of Medical Research. Ethical Guidelines for Application of Artificial Intelligence in Biomedical Research and Healthcare; ICMR: New Delhi, India, 2023.

- U.S. Food and Drug Administration. Good Machine Learning Practice for Medical Device Development: Guiding Principles; FDA: Silver Spring, MD, USA, 2021.

- Personal Data Protection Commission Singapore. Model Artificial Intelligence Governance Framework, 2nd ed.; PDPC: Singapore, 2020.

- Kocaballi, A.B.; Ijaz, K.; Laranjo, L.; Quiroz, J.C.; Rezazadegan, D.; Berkovsky, S.; Coiera, E.; Tong, H.L. The FUTURE-AI Guideline for Fair, Universal, Transparent, Understandable, Robust and Explainable AI in Healthcare. Nat. Mach. Intell. 2024, 6, 1202–1213.

- Coalition for Health AI. Blueprint for Trustworthy AI Implementation Guidance and Assurance for Healthcare; CHAI: Washington, DC, USA, 2023.

- UNESCO. Recommendation on the Ethics of Artificial Intelligence; UNESCO: Paris, France, 2021.

- Santoni de Sio, F.; Mecacci, G. Four responsibility gaps with artificial intelligence: Why they matter and how to address them. Philos. Technol. 2021, 34, 1057–1084.

- Tigard, D.W. There is no techno-responsibility gap. Philos. Technol. 2021, 34, 589–607.

- Umbrello, S.; van de Poel, I. Mapping value sensitive design onto AI for social good principles. AI Ethics 2021, 1, 283–296.

- European Commission. Regulatory Framework Proposal on Artificial Intelligence; European Commission: Brussels, Belgium, 2021.

- European Parliament. Amendments Adopted by the European Parliament on 14 June 2023 on the Proposal for a Regulation of the European Parliament and of the Council on Laying down Harmonised Rules on Artificial Intelligence (Artificial Intelligence Act); European Parliament: Strasbourg, France, 2023.

- UNESCO. The UNESCO Recommendation on the Ethics of Artificial Intelligence: Key Facts; UNESCO: Paris, France, 2022.

- United Nations Office of the High Commissioner for Human Rights. The Right to Privacy in the Digital Age; UN: New York, NY, USA, 2021.

- Personal Data Protection Commission Singapore. Model Artificial Intelligence Governance Framework, 2nd ed.; PDPC: Singapore, 2020.

- World Health Organization. Ethics and Governance of Artificial Intelligence for Health: Guidance on Large Multi-Modal Models; World Health Organization: Geneva, Switzerland, 2024.

- Shortliffe, E.H. Computer-Based Medical Consultations: MYCIN; Elsevier: New York, NY, USA, 1976.

- Bostrom, N.; Yudkowsky, E. The ethics of artificial intelligence. In The Cambridge Handbook of Artificial Intelligence; Frankish, K., Ramsey, W.M., Eds.; Cambridge University Press: Cambridge, UK, 2014; pp. 316–334.

- Russell, S.; Dewey, D.; Tegmark, M. Research priorities for robust and beneficial artificial intelligence. AI Mag. 2015, 36, 105–114.

- OECD. OECD Framework for the Classification of AI Systems; OECD Publishing: Paris, France, 2022.

- OECD. Emerging Accountability Structures to Oversee Global AI; OECD Publishing: Paris, France, 2024.

- Garg, S.; Pundir, P.; Rathee, G.; Gupta, P.K.; Garg, S.; Ahlawat, S. On continuous integration/continuous delivery for automated deployment of machine learning models using MLOps. In Proceedings of the 2021 IEEE Fourth International Conference on Artificial Intelligence and Knowledge Engineering (AIKE), Laguna Hills, CA, USA, 1–3 December 2021; pp. 25–28.

- Char, D.S.; Abràmoff, M.D.; Feudtner, C. Identifying ethical considerations for machine learning healthcare applications. Am. J. Bioeth. 2020, 20, 7–17.

- U.S. Food and Drug Administration. Artificial Intelligence/Machine Learning (AI/ML)-Based Software as a Medical Device (SaMD) Action Plan; FDA: Silver Spring, MD, USA, 2021.

- U.S. Food and Drug Administration. Marketing Submission Recommendations for a Predetermined Change Control Plan for Artificial Intelligence/Machine Learning (AI/ML)-Enabled Device Software Functions; FDA: Silver Spring, MD, USA, 2023.

- Vasey, B.; Nagendran, M.; Campbell, B.; Clifton, D.A.; Collins, G.S.; Denaxas, S.; Denniston, A.K.; Faes, L.; Geerts, B.; Ibrahim, M.; et al. Reporting guideline for the early-stage clinical evaluation of decision support systems driven by artificial intelligence: DECIDE-AI. Nat. Med. 2022, 28, 924–933.

- Joint Commission. Safe and Trustworthy Artificial Intelligence-Enabled Clinical Decision Support in Healthcare; Joint Commission Resources: Oak Brook, IL, USA, 2024.

- Sendak, M.P.; Gao, M.; Brajer, N.; Balu, S. Presenting machine learning model information to clinical end users with model facts labels. NPJ Digit. Med. 2020, 3, 41.

- Sendak, M.; Elish, M.C.; Gao, M.; Futoma, J.; Ratliff, W.; Nichols, M.; Bedoya, A.; Balu, S.; O’Brien, C. “The human body is a black box”: Supporting clinical decision-making with deep learning. In Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency, Barcelona, Spain, 27–30 January 2020; pp. 99–109.

- Ancker, J.S.; Edwards, A.; Nosal, S.; Hauser, D.; Mauer, E.; Kaushal, R. Effects of workload, work complexity, and repeated alerts on alert fatigue in a clinical decision support system. BMC Med. Inform. Decis. Mak. 2017, 17, 36.

- Benda, N.C.; Veinot, T.C.; Sieck, C.J.; Ancker, J.S. Broadband internet access is a social determinant of health! Am. J. Public Health 2020, 110, 1123–1125.

- Khairat, S.; Marc, D.; Crosby, W.; Al Sanousi, A. Reasons for physicians not adopting clinical decision support systems: Critical analysis. JMIR Med. Inform. 2018, 6, e24.

- European Commission. Proposal for a Regulation Laying Down Harmonised Rules on Artificial Intelligence: Explanatory Memorandum; European Commission: Brussels, Belgium, 2021.

- European Parliament. Legislative Resolution of 13 March 2024 on the Proposal for a Regulation of the European Parliament and of the Council on Laying Down Harmonised Rules on Artificial Intelligence; European Parliament: Strasbourg, France, 2024.

- Vollmer, S.; Mateen, B.A.; Bohner, G.; Király, F.J.; Ghani, R.; Jonsson, P.; Cumbers, S.; Jonas, A.; McAllister, K.S.L.; Myles, P.; et al. Machine learning and artificial intelligence research for patient benefit: 20 critical questions on transparency, replicability, ethics, and effectiveness. BMJ 2020, 368, l6927.

- Topol, E.J. High-performance medicine: The convergence of human and artificial intelligence. Nat. Med. 2019, 25, 44–56.

- Moor, M.; Banerjee, O.; Abad, Z.S.H.; Krumholz, H.M.; Leskovec, J.; Topol, E.J.; Rajpurkar, P. Foundation models for generalist medical artificial intelligence. Nature 2023, 616, 259–265.

- Marcus, G.; Davis, E. Rebooting AI: Building Artificial Intelligence We Can Trust; Pantheon: New York, NY, USA, 2019.

- Mitchell, M. Artificial Intelligence: A Guide for Thinking Humans; Farrar, Straus and Giroux: New York, NY, USA, 2019.

- Pearl, J.; Mackenzie, D. The Book of Why: The New Science of Cause and Effect; Basic Books: New York, NY, USA, 2018.

- Sculley, D.; Holt, G.; Golovin, D.; Davydov, E.; Phillips, T.; Ebner, D.; Chaudhary, V.; Young, M.; Crespo, J.F.; Dennison, D. Hidden technical debt in machine learning systems. In Advances in Neural Information Processing Systems 28; Cortes, C., Lawrence, N., Lee, D., Sugiyama, M., Garnett, R., Eds.; Curran Associates: Red Hook, NY, USA, 2015; pp. 2503–2511.

- Paleyes, A.; Urma, R.G.; Lawrence, N.D. Challenges in deploying machine learning: A survey of case studies. ACM Comput. Surv. 2022, 55, 1–29.

- OpenAI. GPT-4 Technical Report. arXiv 2023, arXiv:2303.08774.

- Touvron, H.; Lavril, T.; Izacard, G.; Martinet, X.; Lachaux, M.A.; Lacroix, T.; Rozière, B.; Goyal, N.; Hambro, E.; Azhar, F.; et al. LLaMA: Open and efficient foundation language models. arXiv 2023, arXiv:2302.13971.

- Breck, E.; Cai, S.; Nielsen, E.; Salib, M.; Sculley, D. The ML test score: A rubric for ML production readiness and technical debt reduction. In Proceedings of the 2017 IEEE International Conference on Big Data (Big Data), Boston, MA, USA, 11–14 December 2017; pp. 1123–1132.

- Ashmore, R.; Calinescu, R.; Paterson, C. Assuring the machine learning lifecycle: Desiderata, methods, and challenges. ACM Comput. Surv. 2021, 54, 1–39.

- Bommasani, R.; Hudson, D.A.; Adeli, E.; Altman, R.; Arora, S.; von Arx, S.; Bernstein, M.S.; Bohg, J.; Bosselut, A.; Brunskill, E.; et al. On the opportunities and risks of foundation models. arXiv 2021, arXiv:2108.07258.

- Lwakatare, L.E.; Raj, A.; Bosch, J.; Olsson, H.H.; Crnkovic, I. A taxonomy of software engineering challenges for machine learning systems: An empirical investigation. In Agile Processes in Software Engineering and Extreme Programming; Springer: Cham, Switzerland, 2019; pp. 227–243.

- Sato, D.; Wider, A.; Windheuser, C. Continuous Delivery for Machine Learning; ThoughtWorks: Chicago, IL, USA, 2019.

- Shankar, S.; Garcia, R.; Hellerstein, J.M.; Parameswaran, A.G. Operationalizing machine learning: An interview study. arXiv 2022, arXiv:2209.09125.

- Karlaš, B.; Dao, D.; Interlandi, M.; Ding, B.; Ré, C.; Klimovic, A.; Schelter, S. Building continuous integration services for machine learning. In Proceedings of the 26th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining, Virtual Event, 6–10 July 2020; pp. 2407–2415.

- Chen, M.; Tworek, J.; Jun, H.; Yuan, Q.; Pinto, H.P.D.O.; Kaplan, J.; Edwards, H.; Burda, Y.; Joseph, N.; Brockman, G.; et al. Evaluating large language models trained on code. arXiv 2021, arXiv:2107.03374.

- Barke, S.; James, M.B.; Polikarpova, N. Grounded Copilot: How programmers interact with code-generating models. Proc. ACM Program. Lang. 2023, 7, 85–111.

- Dakhel, A.M.; Majdinasab, V.; Nikanjam, A.; Khomh, F.; Desmarais, M.C.; Jiang, Z.M. GitHub Copilot AI pair programmer: Asset or liability? J. Syst. Softw. 2023, 203, 111734.

- Vaithilingam, P.; Zhang, T.; Glassman, E.L. Expectation vs. experience: Evaluating the usability of code generation tools powered by large language models. In Proceedings of the CHI Conference on Human Factors in Computing Systems, Hamburg, Germany, 23–28 April 2022; pp. 1–23.

- Peng, S.; Kalliamvakou, E.; Cihon, P.; Demirer, M. The impact of AI on developer productivity: Evidence from GitHub Copilot. arXiv 2023, arXiv:2302.06590.

- Gebru, T.; Morgenstern, J.; Vecchione, B.; Vaughan, J.W.; Wallach, H.; Daumé, H., III; Crawford, K. Datasheets for datasets. Commun. ACM 2021, 64, 86–92.

- Pushkarna, M.; Zaldivar, A.; Kjartansson, O. Data cards: Purposeful and transparent dataset documentation for responsible AI. In Proceedings of the 2022 ACM Conference on Fairness, Accountability, and Transparency, Seoul, Republic of Korea, 21–24 June 2022; pp. 1776–1826.

- Christiano, P.F.; Leike, J.; Brown, T.; Martic, M.; Legg, S.; Amodei, D. Deep reinforcement learning from human preferences. In Advances in Neural Information Processing Systems 30; Guyon, I., Luxburg, U.V., Bengio, S., Wallach, H., Fergus, R., Vishwanathan, S., Garnett, R., Eds.; Curran Associates: Red Hook, NY, USA, 2017; pp. 4299–4307.

- Mitchell, M.; Wu, S.; Zaldivar, A.; Barnes, P.; Vasserman, L.; Hutchinson, B.; Spitzer, E.; Raji, I.D.; Gebru, T. Model cards for model reporting. In Proceedings of the Conference on Fairness, Accountability, and Transparency, Atlanta, GA, USA, 29–31 January 2019; pp. 220–229.

- Weidinger, L.; Mellor, J.; Rauh, M.; Griffin, C.; Uesato, J.; Huang, P.S.; Cheng, M.; Glaese, M.; Balle, B.; Kasirzadeh, A.; et al. Ethical and social risks of harm from language models. arXiv 2021, arXiv:2112.04359.

- Irving, G.; Christiano, P.; Amodei, D. AI safety via debate. arXiv 2018, arXiv:1805.00899.

- Leike, J.; Krueger, D.; Everitt, T.; Martic, M.; Maini, V.; Legg, S. Scalable agent alignment via reward modeling: A research direction. arXiv 2018, arXiv:1811.07871.

- Bai, Y.; Jones, A.; Ndousse, K.; Askell, A.; Chen, A.; DasSarma, N.; Drain, D.; Fort, S.; Ganguli, D.; Henighan, T.; et al. Training a helpful and harmless assistant with reinforcement learning from human feedback. arXiv 2022, arXiv:2204.05862.

- Bai, Y.; Kadavath, S.; Kundu, S.; Askell, A.; Kernion, J.; Jones, A.; Chen, A.; Goldie, A.; Mirhoseini, A.; McKinnon, C.; et al. Constitutional AI: Harmlessness from AI feedback. arXiv 2022, arXiv:2212.08073.

- Amershi, S.; Begel, A.; Bird, C.; DeLine, R.; Gall, H.; Kamar, E.; Nagappan, N.; Nushi, B.; Zimmermann, T. Software engineering for machine learning: A case study. In Proceedings of the 41st International Conference on Software Engineering: Software Engineering in Practice, Montreal, QC, Canada, 25–31 May 2019; pp. 291–300.

- Sambasivan, N.; Kapania, S.; Highfill, H.; Akrong, D.; Paritosh, P.; Aroyo, L.M. “Everyone wants to do the model work, not the data work”: Data cascades in high-stakes AI. In Proceedings of the 2021 CHI Conference on Human Factors in Computing Systems, Yokohama, Japan, 8–13 May 2021; pp. 1–15.

- Arpteg, A.; Brinne, B.; Crnkovic-Friis, L.; Bosch, J. Software engineering challenges of deep learning. In Proceedings of the 2018 44th Euromicro Conference on Software Engineering and Advanced Applications (SEAA), Prague, Czech Republic, 29–31 August 2018; pp. 50–59.

- Polyzotis, N.; Roy, S.; Whang, S.E.; Zinkevich, M. Data lifecycle challenges in production machine learning: A survey. ACM SIGMOD Rec. 2018, 47, 17–28.

- Bernstein, M.S.; Levi, M.; Magnus, D.; Rajala, B.A.; Satz, D.; Waeiss, C. Responsible AI: Bridging from ethics to practice. Commun. ACM 2021, 64, 164–166.

- Microsoft. Responsible AI Standard, v2; Microsoft Corporation: Redmond, WA, USA, 2022.

- Raji, I.D.; Smart, A.; White, R.N.; Mitchell, M.; Gebru, T.; Hutchinson, B.; Smith-Loud, J.; Theron, D.; Barnes, P. Closing the AI accountability gap: Defining an end-to-end framework for internal algorithmic auditing. In Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency, Barcelona, Spain, 27–30 January 2020; pp. 33–44.

- ISO/IEC. ISO/IEC 42001:2023 Information Technology—Artificial Intelligence—Management System; ISO: Geneva, Switzerland, 2023.

- IEEE. IEEE 7000-2021—IEEE Standard Model Process for Addressing Ethical Concerns During System Design; IEEE: Piscataway, NJ, USA, 2021.

- Kenton, Z.; Everitt, T.; Weidinger, L.; Gabriel, I.; Mikulik, V.; Irving, G. Alignment of language agents. arXiv 2021, arXiv:2103.14659.

- Saunders, W.; Yeh, C.; Wu, J.; Bills, S.; Ouyang, L.; Ward, J.; Leike, J. Self-critiquing models for assisting human evaluators. arXiv 2022, arXiv:2206.05802.

- Perez, E.; Huang, S.; Song, F.; Cai, T.; Ring, R.; Aslanides, J.; Glaese, A.; McAleese, N.; Irving, G. Red teaming language models with language models. arXiv 2022, arXiv:2202.03286.

- Perry, N.; Srivastava, M.; Kumar, D.; Boneh, D. Do users write more insecure code with AI assistants? In Proceedings of the 2023 ACM SIGSAC Conference on Computer and Communications Security, Copenhagen, Denmark, 26–30 November 2023; pp. 2785–2799.

- Nguyen, N.; Nadi, S. An empirical evaluation of GitHub Copilot’s code suggestions. In Proceedings of the 19th International Conference on Mining Software Repositories, Pittsburgh, PA, USA, 23–24 May 2022; pp. 1–5.

- Sandoval, G.; Pearce, H.; Nys, T.; Karri, R.; Garg, S.; Dolan-Gavitt, B. Lost at C: A user study on the security implications of large language model code assistants. In Proceedings of the 32nd USENIX Security Symposium, Anaheim, CA, USA, 9–11 August 2023; pp. 2205–2222.

- Seeber, I.; Bittner, E.; Briggs, R.O.; De Vreede, T.; De Vreede, G.J.; Elkins, A.; Maier, R.; Merz, A.B.; Oeste-Reiß, S.; Randrup, N.; et al. Machines as teammates: A research agenda on AI in team collaboration. Inf. Manag. 2020, 57, 103174.

- Jarrahi, M.H.; Askay, D.; Eshraghi, A.; Smith, P. Artificial intelligence and knowledge management: A partnership between human and AI. Bus. Horiz. 2023, 66, 87–99.

- Barke, S.; James, M.B.; Polikarpova, N. Grounded Copilot: How programmers interact with code-generating models. Proc. ACM Program. Lang. 2023, 7, 85–111.

- Bubeck, S.; Chandrasekaran, V.; Eldan, R.; Gehrke, J.; Horvitz, E.; Kamar, E.; Lee, P.; Lee, Y.T.; Li, Y.; Lundberg, S.; et al. Sparks of artificial general intelligence: Early experiments with GPT-4. arXiv 2023, arXiv:2303.12712.

- Wei, J.; Tay, Y.; Bommasani, R.; Raffel, C.; Zoph, B.; Borgeaud, S.; Yogatama, D.; Bosma, M.; Zhou, D.; Metzler, D.; et al. Emergent abilities of large language models. Trans. Mach. Learn. Res. 2022.

- Zheng, L.; Chiang, W.L.; Sheng, Y.; Zhuang, S.; Wu, Z.; Zhuang, Y.; Lin, Z.; Li, Z.; Li, D.; Xing, E.; et al. Judging LLM-as-a-judge with MT-Bench and Chatbot Arena. In Advances in Neural Information Processing Systems 36; Oh, A., Naumann, T., Globerson, A., Saenko, K., Hardt, M., Levine, S., Eds.; Curran Associates: Red Hook, NY, USA, 2023; pp. 46595–46623.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).