Submitted: 21 February 2026

Posted: 28 February 2026


Abstract

Keywords:

1. Introduction

2. Related Work

3. Method

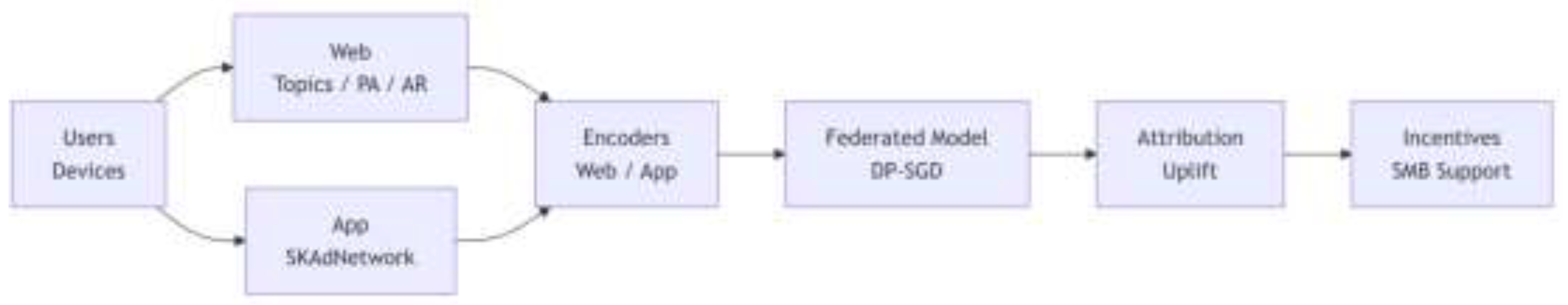

3.1. System Model and Notation

3.2. Differentially Private Measurement and Attribution

3.3. Differential Privacy Preliminaries

3.4. DP-SGD for Federated Learning

3.5. DP Aggregation for Cross-Channel Attribution

3.6. Consistency Constraints Between Event-Level and Summary-Level Views

3.7. Multi-Touch Attribution and Incentive Allocation

3.7.1. Path-Based Attribution Weights.

3.7.2. Uplift-Based Incentive Scores.

4. Evaluation

4.1. Model Performance Across Channels

4.2. Attribution Consistency Under DP Summary Reporting

4.3. Incentive Allocation Outcomes for SMB Advertisers

5. Conclusion

- measurement utility, improving AUC, calibration, and uplift RMSE under realistic DP budgets;

- attribution consistency, reducing discrepancies between model-derived multi-touch paths and DP summary-level conversions;

- economic efficiency, delivering higher incremental lift and lower cost per incremental conversion in SMB-targeted incentive programs while satisfying fairness constraints.

References

- Xiao, Y., Du, J., Zhang, S., Zhang, W., Yang, Q., Zhang, D., & Kifer, D. (2025). Click without compromise: Online advertising measurement via per-user differential privacy. In Proceedings of the IEEE Symposium on Security and Privacy.

- Delaney, J., Ghazi, B., Harrison, C., Ilvento, C., Kumar, R., Manurangsi, P., Pal, M., Prabhakar, K., & Raykova, M. (2024). Differentially private ad conversion measurement. Proceedings on Privacy Enhancing Technologies, 2024(2).

- Du, J. (2024). Designing for user privacy: Integrating differential privacy into ad measurement systems in practice. In Proceedings of the 2024 USENIX Conference on Privacy Engineering Practice and Respect (PEPR ’24).

- Du, J., Ghazi, B., Ilvento, C., Kumar, R., Manurangsi, P., Pal, M., Prabhakar, K., & Raykova, M. (2025). PrivacyGo: Privacy-preserving ad measurement with multidimensional intersection. arXiv preprint.

- Sun, J., Zhao, L., Liu, Z., Li, Q., Deng, X., Wang, Q., & Jiang, Y. (2022). Practical differentially private online advertising. Computers & Security, 112, 102504.

- Lindell, Y., & Omri, E. (2011). A practical application of differential privacy to personalized online advertising. IACR Cryptology ePrint Archive, 2011(152).

- Mouris, D., Masny, D., Trieu, N., Sengupta, S., Buddhavarapu, P., & Case, B. M. (2024). Delegated private matching for compute. Proceedings on Privacy Enhancing Technologies, 2024(2), 49–72.

- Mouris, D., Sarkar, P., & Tsoutsos, N. G. (2024). PLASMA: Private, lightweight aggregated statistics against malicious adversaries. Proceedings on Privacy Enhancing Technologies, 2024(3), 4–24.

- Anonymous. (2020). Secure multiparty computation for private measurement of advertising lift. Technical Disclosure Commons.

- Zhong, K., Ma, F., & Angel, S. (2022). Ibex: Privacy-preserving ad conversion tracking and bidding. In Proceedings of the 2022 ACM SIGSAC Conference on Computer and Communications Security (CCS ’22).

- Aksu, H., Aksu, H. H., Ghazi, B., Harrison, C., Kumar, R., Manurangsi, P., Pal, M., & Raykova, M. (2024). Summary report optimization in the Privacy Sandbox Attribution Reporting API. Proceedings on Privacy Enhancing Technologies, 2024(4).

- Ghazi, B., Harrison, C., Hosabettu, A., Kamath, P., Knop, A., Kumar, R., Leeman, E., Manurangsi, P., & Sahu, V. (2024). On the differential privacy and interactivity of Privacy Sandbox reports. arXiv preprint.

- Su, C., Wei, J., Lei, Y., & Li, J. (2023). A federated learning framework based on transfer learning and knowledge distillation for targeted advertising. PeerJ Computer Science, 9, e1496.

- Seyghaly, R., Garcia, J., & Masip-Bruin, X. (2024). A comprehensive architecture for federated learning-based smart advertising. Sensors, 24(12), 3765.

- Seyghaly, R., Garcia, J., Masip-Bruin, X., & Mahmoodi Varnamkhasti, M. (2024). An optimized data architecture for smart advertising based on federated learning. In 2024 IEEE Symposium on Computers and Communications (ISCC).

- Chivukula, V. V. (2022). Use of federated learning for optimizing ad delivery platforms without exchanging user PII. International Journal of Science and Advanced Technology, 12(3).

- Zhang, K., & Li, P. (2024). Federated learning optimizing multi-scenario ad targeting and investment returns in digital advertising. Journal of Advanced Computing Systems, 4(8), 36–43.

- Chivukula, V. V. (2022). The use of federated learning for digital advertising measurement. ESP Journal of Engineering & Technology Advancements, 2(4), 161–162.

- Kraft, L. (2023). Leveraging differential privacy for targeted advertisements. SSRN Electronic Journal.

- Ullah, I., Boreli, R., & Kanhere, S. S. (2023). Privacy in targeted advertising on mobile devices: A survey. International Journal of Information Security, 22(3), 647–678.

| Channel Type | #Events | AUC (↑) | ECE (↓) | Uplift RMSE (↓) | DP Noise σ | Avg. Client Participation (%) |
|---|---|---|---|---|---|---|
| Web – Topics | 3,245,901 | 0.782 | 0.041 | 0.116 | 1.2 | 27.4% |
| Web – Protected Audience | 1,982,334 | 0.768 | 0.052 | 0.129 | 1.2 | 25.9% |
| App – SKAdNetwork | 4,156,442 | 0.804 | 0.038 | 0.112 | 1.0 | 31.2% |
| Combined (Unified FL) | 9,384,677 | 0.816 | 0.035 | 0.104 | 1.1 | 28.3% |

| Method | ACR (↓) | Avg. Per-Advertiser Error (↓) | #Advertisers | Dimensionality of Reports | Supports Cross-Channel MTA |
|---|---|---|---|---|---|
| Baseline: Last-Touch (Non-DP) | 0.214 | 38.6 | 220 | Low | No |
| Baseline: Summary-Only DP Reports | 0.167 | 29.3 | 220 | Medium | No |
| Proposed: DP-Federated MTA (Ours) | 0.091 | 17.5 | 220 | High | Yes |
| Proposed + Consistency Regularization | 0.072 | 14.2 | 220 | High | Yes |
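The gaps in the attribution-consistency table above are easier to compare as relative reductions. The following is a minimal Python sketch, not part of the original evaluation: the values are copied from the table, and the dictionary keys and the helper `relative_reduction` are hypothetical names introduced only for illustration.

```python
# Values copied from the attribution-consistency table:
# method -> (ACR, average per-advertiser error). Lower is better for both.
results = {
    "last_touch_non_dp": (0.214, 38.6),
    "summary_only_dp": (0.167, 29.3),
    "dp_federated_mta": (0.091, 17.5),
    "dp_federated_mta_consistency": (0.072, 14.2),
}


def relative_reduction(baseline: float, value: float) -> float:
    """Percentage by which `value` falls below `baseline`."""
    return 100.0 * (baseline - value) / baseline


best_acr, best_err = results["dp_federated_mta_consistency"]
for name in ("last_touch_non_dp", "summary_only_dp"):
    base_acr, base_err = results[name]
    print(
        f"vs {name}: ACR -{relative_reduction(base_acr, best_acr):.1f}%, "
        f"per-advertiser error -{relative_reduction(base_err, best_err):.1f}%"
    )
```

Under these figures, the variant with consistency regularization lowers ACR by roughly 57% relative to summary-only DP reports and by roughly 66% relative to non-DP last-touch attribution.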

| Method | Incremental Lift (↑) | CPIC (↓) | SMB Allocation Ratio (↑) | Budget Utilization (%) | #Advertisers Receiving Incentives |
|---|---|---|---|---|---|
| Heuristic Rule-Based Allocation | 9.3% | $41.2 | 48.1% | 92.4% | 62 |
| Baseline DP Aggregated Lift | 12.7% | $34.5 | 53.8% | 96.0% | 85 |
| Proposed DP-FL Uplift Allocation (Ours) | 18.4% | $27.8 | 56.7% | 99.1% | 104 |
| Proposed + Strong SMB Constraint | 17.6% | $28.9 | 61.4% | 98.3% | 112 |
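The trade-off in the incentive-allocation table above can be made explicit with a little arithmetic. This is a rough Python sketch over the reported numbers only: the row keys and the helpers `cpic_reduction_pct` and `delta_pp` are hypothetical names chosen for this illustration, not anything defined by the paper.

```python
# Values copied from the incentive-allocation table.
# lift and smb are percentages; cpic is in dollars.
rows = {
    "heuristic": {"lift": 9.3, "cpic": 41.2, "smb": 48.1},
    "dp_aggregated": {"lift": 12.7, "cpic": 34.5, "smb": 53.8},
    "dp_fl": {"lift": 18.4, "cpic": 27.8, "smb": 56.7},
    "dp_fl_strong_smb": {"lift": 17.6, "cpic": 28.9, "smb": 61.4},
}


def cpic_reduction_pct(baseline: str, method: str) -> float:
    """Relative CPIC reduction of `method` versus `baseline`, in percent."""
    b, m = rows[baseline]["cpic"], rows[method]["cpic"]
    return 100.0 * (b - m) / b


def delta_pp(metric: str, baseline: str, method: str) -> float:
    """Difference in percentage points for a percentage-valued metric."""
    return rows[method][metric] - rows[baseline][metric]


for base in ("heuristic", "dp_aggregated"):
    print(
        f"dp_fl vs {base}: lift {delta_pp('lift', base, 'dp_fl'):+.1f} pp, "
        f"CPIC -{cpic_reduction_pct(base, 'dp_fl'):.1f}%"
    )

# Cost of the stronger SMB constraint relative to the unconstrained proposal.
print(
    f"strong SMB constraint: lift "
    f"{delta_pp('lift', 'dp_fl', 'dp_fl_strong_smb'):+.1f} pp, "
    f"SMB ratio {delta_pp('smb', 'dp_fl', 'dp_fl_strong_smb'):+.1f} pp"
)
```

Read this way, the stronger SMB constraint gives up 0.8 percentage points of incremental lift in exchange for 4.7 percentage points of SMB allocation, while CPIC stays well below both baselines.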

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).