Submitted:

17 February 2026

Posted:

28 February 2026


Abstract

Keywords:

1. Introduction

1.1. Contributions

- A usable definition of shadow AI and a compact taxonomy for risk classification.

- A multi-layer detection view with concrete signal/log categories and known limits.

- An obligations-to-evidence mapping concept to operationalise governance and audit readiness.

- A validation plan (PRISMA-informed review + triangulation) for future empirical assessment.

2. Related Work and Context

2.1. From Shadow IT to Shadow AI

1. AI Supply Chain Complexity: Unlike conventional software with deterministic behaviour, AI systems depend on complex supply chains involving third-party models, training data of uncertain provenance, and external inference services (NIST, 2023). When employees use external generative AI services, organisations lose visibility into model versioning, training data composition, and processing pipelines, creating compliance blind spots that do not arise with traditional shadow IT.

2. Data Leakage Amplification: Shadow AI magnifies data exposure risks because generative AI tools process and often store user inputs, and some services may use those inputs for model training (IBM, 2024). Employees who submit confidential documents, customer data, or proprietary information to external AI services may inadvertently expose sensitive organisational assets to third-party processors without contractual safeguards or data processing agreements.

3. Decision Impact and Accountability: AI-generated outputs increasingly influence organisational decisions, from document drafting to data analysis and strategic recommendations. When these outputs originate from unsanctioned tools, the organisation cannot ensure their quality, accuracy, or alignment with organisational policies (Puthal, 2025). This creates accountability gaps, particularly in regulated functions such as legal, finance, and human resources.

4. Opacity and Explainability Deficits: Shadow AI compounds the inherent interpretability challenges of machine learning systems. Organisations using sanctioned AI can implement monitoring, logging, and explainability mechanisms; shadow AI, by definition, operates outside such controls. This opacity conflicts with regulatory expectations for transparency and traceability under frameworks such as the EU AI Act (EU AI Act, 2024).

5. Automation of Influence: Whereas shadow IT primarily concerns infrastructure and data access, shadow AI extends to automated content generation and decision support. AI-generated text, analysis, or recommendations may propagate through organisational processes without human verification, amplifying the consequences of errors, biases, or inappropriate outputs (CSA, 2024).

2.2. Governance and Regulatory Drivers

1. GDPR: Data Protection and Automated Decision-Making

The GDPR establishes foundational requirements for the lawful processing of personal data, and several of its provisions bear directly on AI governance. Article 5 requires personal data to be processed lawfully, fairly, and transparently, with controllers accountable for demonstrating compliance (GDPR, 2016). When employees route personal data through unsanctioned AI services, organisations lose the capacity to ensure, or to demonstrate, adherence to these principles.

Article 22 imposes specific constraints on automated individual decision-making. Data subjects have the right not to be subject to a decision based solely on automated processing, including profiling, that produces legal effects concerning them or similarly significantly affects them (GDPR, 2016, Art. 22). Where such processing relies on contractual necessity or explicit consent, controllers must implement suitable safeguards, including at least the right to obtain human intervention, to express a point of view, and to contest the decision; where it is authorised by Union or Member State law, that law must itself lay down suitable safeguards. Shadow AI fundamentally compromises these obligations: organisations cannot ensure human oversight of automated decisions they do not know are being made, nor can they offer contestability mechanisms for processes invisible to governance structures.

The transparency obligations under Articles 13 and 14 require controllers to inform data subjects of the existence of automated decision-making and to provide meaningful information about the logic involved, as well as the significance and envisaged consequences of such processing (GDPR, 2016). Fulfilling these disclosure requirements presupposes that organisations maintain inventories of AI systems processing personal data.

Article 35 mandates Data Protection Impact Assessments (DPIAs) for processing likely to result in a high risk to individuals’ rights and freedoms, explicitly including systematic and extensive evaluation of personal aspects based on automated processing (GDPR, 2016). The European Data Protection Board has emphasised that AI systems processing personal data frequently trigger DPIA requirements (EDPB, 2024). Shadow AI circumvents these safeguards entirely, as organisations cannot assess risks associated with systems they do not know exist.

The accountability principle under Article 5(2) requires controllers to be able to demonstrate compliance with the data protection principles (GDPR, 2016). Such demonstrable accountability is incompatible with unmonitored AI usage: organisations cannot produce evidence of lawful processing, appropriate safeguards, or risk mitigation for systems operating outside their governance frameworks.

2. EU AI Act: Risk-Based Governance Obligations

The EU AI Act, which entered into force on 1 August 2024 with phased compliance deadlines extending through 2027, is widely described as the world’s first comprehensive regulatory framework for AI systems. The Act adopts a risk-based approach, calibrating obligations according to the potential harm AI systems may cause to health, safety, or fundamental rights (EU AI Act, 2024).

- 2.1 Transparency as a Foundational Requirement: Transparency is a core pillar of the EU AI Act. Article 13 requires high-risk AI systems to be designed so that their operation is sufficiently transparent for deployers to interpret their output and use it appropriately. Article 50 extends transparency obligations to certain AI systems regardless of risk classification, including disclosure that a person is interacting with an AI system and machine-readable marking of AI-generated content (EU AI Act, 2024, Arts. 13 and 50). These requirements presuppose organisational awareness of AI deployments; shadow AI, by definition, bypasses such transparency mechanisms.

- 2.2 Accountability Through Documentation and Logging: The EU AI Act embeds accountability through mandatory documentation and logging. Article 11 requires technical documentation for high-risk AI systems, Articles 12 and 19 mandate automatic event logging and log retention, and Article 26(6) obliges deployers to retain the logs under their control for at least six months (EU AI Act, 2024). Shadow AI operates outside these accountability structures, preventing organisations from producing the required documentation or auditable compliance evidence.

- 2.3 Deployer Obligations and Human Oversight: Article 26 imposes specific obligations on deployers of high-risk AI systems, including using them in accordance with the instructions for use, assigning human oversight to competent persons, ensuring that input data under their control is relevant and sufficiently representative, and monitoring system operation (EU AI Act, 2024, Art. 26). Article 14 further requires high-risk systems to be designed to enable effective human oversight. Shadow AI fundamentally conflicts with these obligations, as organisations cannot monitor, oversee, or govern systems operating beyond institutional awareness.

- 2.4 Governance Implications of Non-Compliance: The EU AI Act establishes significant penalties for non-compliance, including fines of up to €35 million or 7% of total worldwide annual turnover, whichever is higher, for prohibited practices, and up to €15 million or 3% for most other infringements (EU AI Act, 2024, Art. 99). Beyond financial sanctions, non-compliance carries reputational and market-access risks. Shadow AI creates compliance blind spots that expose organisations to enforcement action for obligations they may not know they are breaching.

3. Complementary Governance Frameworks

Beyond mandatory compliance, voluntary governance frameworks offer practical implementation guidance that reinforces the governance objectives set by regulation. The NIST AI Risk Management Framework (AI RMF 1.0), released in January 2023, provides a structured approach to AI risk identification, assessment, and mitigation, organised around four core functions: Govern, Map, Measure, and Manage (NIST, 2023). The framework emphasises transparency, accountability, and explainability, all of which assume organisational awareness and control of AI systems.

ISO/IEC 42001:2023 establishes requirements for AI Management Systems (AIMS), providing a certifiable standard for organisational AI governance (ISO 42001). The standard addresses AI-specific management challenges, including transparency, explainability, and continuous learning behaviours that require special consideration for responsible use (ISO 42001). Implementing ISO/IEC 42001 in practice presupposes a comprehensive inventory of the AI systems within the management system’s scope. By definition, such an inventory does not capture shadow AI, which therefore remains outside the management system until it is discovered.

3. Shadow AI: Operational Scope and Risk Lens

3.1. Operational Definition and Boundaries

Includes (In-scope)

- Use of public generative AI services (e.g., ChatGPT, Claude, Gemini) via browser or mobile application for work tasks without organisational approval.

- Installation of unapproved browser extensions, plug-ins, or desktop applications that transmit organisational content to third-party AI services for processing.

- Direct API calls to external AI inference endpoints using personal accounts or credentials, where outputs are incorporated into organisational deliverables.

- Use of AI-powered features within personal SaaS subscriptions (e.g., AI writing assistants, code completion tools) for work purposes.

- Local deployment of open-source large language models on personal devices for work-related tasks without IT security review.

- Use of AI features auto-enabled within sanctioned applications where the AI component itself has not undergone separate governance review.

Excludes (Out-of-scope)

- Enterprise AI features embedded within sanctioned SaaS platforms operating under contractual data processing agreements and organisational governance.

- Internal AI systems deployed through official MLOps pipelines with documented risk assessment, security review, and compliance sign-off.

- AI tools procured through formal vendor evaluation processes with established service-level agreements and data protection addenda.

- Personal use of AI tools for non-work purposes on personal time and personal devices, with no organisational data involved.

- Research and development use within designated sandbox environments with appropriate access controls and data segregation.
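The inclusion and exclusion criteria above can be read as a decision rule. The Python sketch below encodes one possible reading; the `AIUsage` record and its field names are hypothetical and not part of the framework, and the rule assumes that exclusions (purely personal use, designated sandboxes) take precedence over inclusions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIUsage:
    """Hypothetical record describing one observed AI usage instance."""
    work_related: bool         # used for work tasks or with organisational data
    governance_approved: bool  # passed formal review (DPA, risk assessment, sign-off)
    in_sandbox: bool           # runs in a designated, access-controlled R&D sandbox
    ai_component_reviewed: bool = True  # False if an auto-enabled AI feature skipped separate review


def is_shadow_ai(usage: AIUsage) -> bool:
    """Return True if the usage falls inside the operational scope of shadow AI."""
    # Exclusion: personal use on personal time with no organisational data.
    if not usage.work_related:
        return False
    # Exclusion: designated sandbox environments with controls and data segregation.
    if usage.in_sandbox:
        return False
    # Inclusion: AI feature auto-enabled inside a sanctioned app without its own review.
    if not usage.ai_component_reviewed:
        return True
    # Otherwise, work use is shadow AI unless it went through formal governance.
    return not usage.governance_approved


print(is_shadow_ai(AIUsage(work_related=True, governance_approved=False, in_sandbox=False)))  # True
print(is_shadow_ai(AIUsage(work_related=True, governance_approved=True, in_sandbox=False)))   # False
```

Ordering the checks this way keeps the exclusions explicit; an organisation that treats sandboxed but unreviewed AI features as in scope would simply move the sandbox check below the inclusion check.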

3.2. Taxonomy for Risk Classification

3.3. Risk Dimensions

D1: Data Sensitivity

- Public: Non-confidential, publicly available information.

- Internal: Organisational information not intended for external distribution.

- Confidential: Proprietary business information, trade secrets, strategic plans.

- Regulated: Personal data subject to GDPR (GDPR, 2016), health information, financial records, or other legally protected categories.

D2: Decision Impact

- Informational: AI output used for background research or ideation with no direct decision influence.

- Advisory: AI output informs human decisions but undergoes substantive review before action.

- Determinative: AI output materially shapes decisions affecting individuals, contracts, or organisational commitments.

- Automated: AI output directly triggers actions with legal or similarly significant effects, potentially engaging Article 22 GDPR obligations (GDPR, 2016, Art. 22).

D3: Processing Transparency

- Documented: Processing logic, data flows, and model provenance are known and recorded.

- Partially Opaque: Service provider discloses general processing approach but not implementation details.

- Fully Opaque: No visibility into model architecture, training data, or processing pipeline; “black box” third-party service.

D4: Organisational Function

- General Operations: Administrative, logistical, or support functions.

- Customer-Facing: Marketing, sales, customer service, where AI outputs may reach external parties.

- High-Stakes Functions: Human resources, legal, finance, compliance – domains with significant individual impact or regulatory exposure.

- Safety-Critical: Functions where errors could cause physical harm, system failures, or significant financial loss.

D5: Third-Party Dependency

- None: Locally deployed models with no external data transmission.

- Inference-Only: Data transmitted to external service for processing; no declared retention or training use.

- Training-Inclusive: Service terms permit use of inputs for model improvement, creating data leakage and intellectual property exposure risks.

- Unknown: Terms of service not reviewed or understood; data handling practices indeterminate.

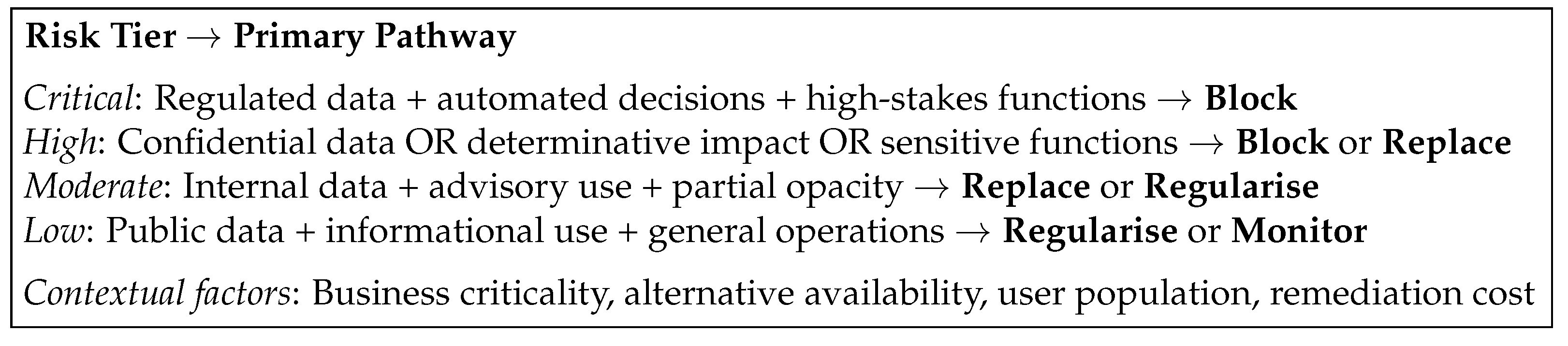
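The five dimensions can be encoded as ordinal scales, so that rules such as "confidential or above" become simple comparisons. The sketch below assumes that the order in which levels are listed reflects increasing risk, which matches D1, D2 and D4 as written; for D3 the scale runs from Documented to Fully Opaque, and ranking Unknown as the highest-risk level of D5 is an assumption. Class and function names are hypothetical.

```python
from dataclasses import dataclass
from enum import IntEnum


class DataSensitivity(IntEnum):         # D1
    PUBLIC = 1
    INTERNAL = 2
    CONFIDENTIAL = 3
    REGULATED = 4


class DecisionImpact(IntEnum):          # D2
    INFORMATIONAL = 1
    ADVISORY = 2
    DETERMINATIVE = 3
    AUTOMATED = 4


class ProcessingTransparency(IntEnum):  # D3: higher value = less transparent
    DOCUMENTED = 1
    PARTIALLY_OPAQUE = 2
    FULLY_OPAQUE = 3


class OrganisationalFunction(IntEnum):  # D4
    GENERAL_OPERATIONS = 1
    CUSTOMER_FACING = 2
    HIGH_STAKES_FUNCTIONS = 3
    SAFETY_CRITICAL = 4


class ThirdPartyDependency(IntEnum):    # D5: UNKNOWN ranked highest (assumption)
    NONE = 1
    INFERENCE_ONLY = 2
    TRAINING_INCLUSIVE = 3
    UNKNOWN = 4


def _level(enum_cls, label: str):
    """Convert a taxonomy label such as 'Partially Opaque' into its enum member."""
    return enum_cls[label.strip().upper().replace("-", "_").replace(" ", "_")]


@dataclass(frozen=True)
class RiskProfile:
    """One shadow AI instance classified on dimensions D1-D5."""
    data: DataSensitivity
    decision: DecisionImpact
    transparency: ProcessingTransparency
    function: OrganisationalFunction
    dependency: ThirdPartyDependency

    @classmethod
    def from_labels(cls, labels: dict) -> "RiskProfile":
        # Raises KeyError for labels outside the taxonomy, so typos surface early.
        return cls(
            data=_level(DataSensitivity, labels["data"]),
            decision=_level(DecisionImpact, labels["decision"]),
            transparency=_level(ProcessingTransparency, labels["transparency"]),
            function=_level(OrganisationalFunction, labels["function"]),
            dependency=_level(ThirdPartyDependency, labels["dependency"]),
        )


profile = RiskProfile.from_labels({
    "data": "Confidential", "decision": "Advisory",
    "transparency": "Fully Opaque", "function": "Customer-Facing",
    "dependency": "Unknown",
})
print(profile.data >= DataSensitivity.CONFIDENTIAL)  # True
```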

3.4. Risk Tier Assignment
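The tier characterisations in the risk tier table can be applied top-down, from Critical to Low. The sketch below is one possible reading under stated assumptions: regulated data counts at least as confidential, automated decisions at least as determinative, and safety-critical functions at least as high-stakes; combinations matching neither the High nor the Low pattern default to Moderate. D3 and D5 do not change the tier here, because the table uses transparency only to characterise the Moderate tier. The function name and labels are illustrative.

```python
# Ordinal ranks for the levels of D1 (data), D2 (decision) and D4 (function).
DATA = {"public": 1, "internal": 2, "confidential": 3, "regulated": 4}
DECISION = {"informational": 1, "advisory": 2, "determinative": 3, "automated": 4}
FUNCTION = {"general operations": 1, "customer-facing": 2,
            "high-stakes functions": 3, "safety-critical": 4}


def assign_tier(data: str, decision: str, function: str) -> str:
    """Map D1, D2 and D4 levels to a risk tier (hypothetical helper)."""
    d = DATA[data.lower()]
    dec = DECISION[decision.lower()]
    f = FUNCTION[function.lower()]

    sensitive_data = d >= DATA["confidential"]          # confidential or regulated
    consequential = dec >= DECISION["determinative"]    # determinative or automated
    high_stakes = f >= FUNCTION["high-stakes functions"]  # assumption: includes safety-critical

    if sensitive_data and consequential and high_stakes:
        return "Critical"
    if sensitive_data or consequential or high_stakes:
        return "High"
    if (d == DATA["public"] and dec == DECISION["informational"]
            and f == FUNCTION["general operations"]):
        return "Low"
    # Assumption: every remaining combination, including internal data with
    # advisory use, is treated as Moderate.
    return "Moderate"


print(assign_tier("Regulated", "Automated", "High-Stakes Functions"))  # Critical
print(assign_tier("Internal", "Advisory", "General Operations"))       # Moderate
```

Evaluating the tiers from most to least severe ensures that an instance matching several rows is always assigned the strictest applicable tier.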

4. Proposed Framework

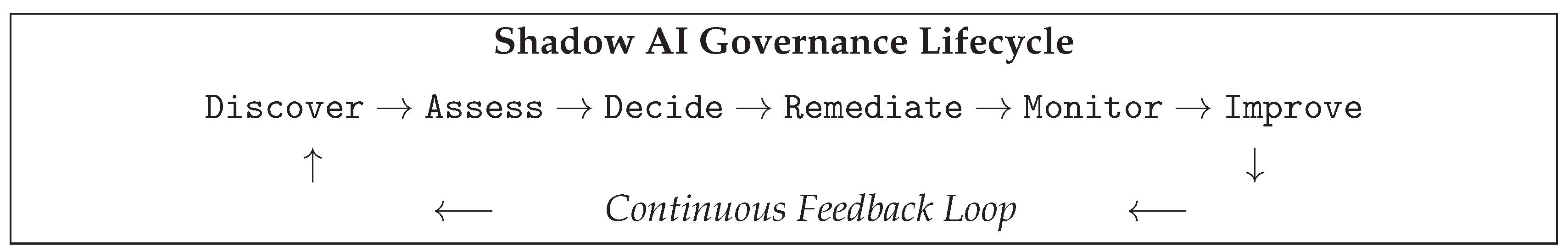

4.1. Lifecycle: From Discovery to Continuous Control

Stage 1: Discover

Stage 2: Assess

Stage 3: Decide

- Block: Prohibit and technically restrict usage. Appropriate for Critical-tier instances involving regulated data, prohibited AI practices, or unacceptable risk exposure.

- Replace: Substitute with an approved alternative that meets security, privacy, and compliance requirements while preserving productivity benefits.

- Regularise: Onboard the tool or use case into governed AI operations through formal risk assessment, contractual arrangements, and evidence artefact generation.

Stage 4: Remediate

Stage 5: Monitor

Stage 6: Improve
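The six stages above form a cycle rather than a one-off sequence. The sketch below models them as an enumeration with a transition function. The two transition rules it encodes, Improve looping back to Discover and a fired trigger during Monitor returning an instance to Assess, are assumptions consistent with the lifecycle's aim of continuous control, not rules stated by the framework.

```python
from enum import Enum


class Stage(Enum):
    DISCOVER = 1
    ASSESS = 2
    DECIDE = 3
    REMEDIATE = 4
    MONITOR = 5
    IMPROVE = 6


_ORDER = list(Stage)  # definition order: Discover -> ... -> Improve


def next_stage(current: Stage, trigger_fired: bool = False) -> Stage:
    """Return the stage that follows `current` in the governance lifecycle.

    Assumptions: Improve wraps around to Discover, and a fired trigger
    during Monitor sends the instance back to Assess.
    """
    if current is Stage.MONITOR and trigger_fired:
        return Stage.ASSESS
    i = _ORDER.index(current)
    return _ORDER[(i + 1) % len(_ORDER)]


stage = Stage.DISCOVER
path = []
for _ in range(7):
    path.append(stage.name)
    stage = next_stage(stage)
print(" -> ".join(path))
# DISCOVER -> ASSESS -> DECIDE -> REMEDIATE -> MONITOR -> IMPROVE -> DISCOVER
```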

4.2. Obligations-to-Evidence Mapping

4.3. Mapping Structure
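The elements of the mapping structure table (Requirement, Obligation, Evidence Artefact, Control, Owner, and Trigger) translate naturally into a record type. The sketch below shows one possible encoding, together with a simple audit-readiness check that lists requirements whose evidence artefact is not yet available. The two example entries, the role names, and the `evidence_available` flag are illustrative assumptions, not prescribed content.

```python
from dataclasses import dataclass, field


@dataclass
class Raci:
    responsible: str
    accountable: str
    consulted: list = field(default_factory=list)
    informed: list = field(default_factory=list)


@dataclass
class MappingEntry:
    requirement: str          # regulatory or policy provision
    obligation: str           # verifiable operational statement
    evidence_artefact: str    # document, log, record, or report
    control: str              # mechanism producing the evidence
    owner: Raci
    trigger: str              # event that initiates review or escalation
    evidence_available: bool = False  # hypothetical flag set by an evidence check


def audit_gaps(entries):
    """Return the requirements whose evidence artefact is not yet available."""
    return [e.requirement for e in entries if not e.evidence_available]


mapping = [
    MappingEntry(
        requirement="GDPR Article 35",
        obligation="A DPIA is completed before high-risk processing via an AI tool begins",
        evidence_artefact="Approved DPIA report",
        control="DPIA step in the AI onboarding workflow",
        owner=Raci(responsible="Privacy team", accountable="Head of compliance",
                   consulted=["DPO"]),
        trigger="Tool proposed for regularisation",
        evidence_available=True,
    ),
    MappingEntry(
        requirement="EU AI Act Article 26(6)",
        obligation="Logs of high-risk AI systems under deployer control are kept for at least six months",
        evidence_artefact="Log retention configuration and retention report",
        control="Centralised log retention policy",
        owner=Raci(responsible="IT operations", accountable="CISO"),
        trigger="High-risk AI system deployed",
    ),
]
print(audit_gaps(mapping))  # ['EU AI Act Article 26(6)']
```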

4.4. Multi-Layer Detection View

| Layer | Observable signals / log sources | Main limitations |
|---|---|---|
| Network | DNS/HTTP(S) metadata, proxy logs, SASE logs, CASB signals. | Encryption, personal networks, approved SaaS overlap. |
| Endpoint | EDR telemetry, process/browser events, clipboard/file activity patterns. | BYOD, privacy constraints, local models. |
| Identity/SaaS | SSO audit logs, OAuth grants, unusual app authorisations. | Shadow accounts, token reuse, incomplete coverage. |
| UBA/Behaviour | Anomalous usage patterns, time/volume irregularities. | False positives, baseline drift. |
| Content/DLP (where applicable) | Policy matches for sensitive data exfiltration. | Minimisation limits, encryption, scope constraints. |
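Of the layers in the table, the network layer is the simplest to illustrate. The sketch below matches hostnames from DNS or proxy metadata against a watchlist of public AI services while excluding sanctioned ones. The log format (`timestamp user host`), the watchlist, and the sanctioned set are assumptions for illustration; real proxy, SASE, or CASB exports have their own schemas, and, as the table notes, this approach cannot see traffic on personal networks or local models.

```python
from collections import Counter

# Illustrative watchlist of public generative AI hostnames (assumption; in practice
# this would be maintained from CASB app catalogues or threat-intelligence feeds).
AI_SERVICE_DOMAINS = {"chatgpt.com", "claude.ai", "gemini.google.com"}

# Hypothetical set of AI services approved through formal procurement.
SANCTIONED_DOMAINS = {"gemini.google.com"}


def matches(host: str, domain: str) -> bool:
    """True if host equals domain or is a subdomain of it."""
    return host == domain or host.endswith("." + domain)


def flag_shadow_ai(log_lines):
    """Count requests per (user, domain) to unsanctioned AI services.

    Each line is assumed to be 'timestamp user host' (hypothetical format).
    """
    watch = AI_SERVICE_DOMAINS - SANCTIONED_DOMAINS
    hits = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 3:
            continue  # skip malformed lines
        _, user, host = parts
        host = host.lower().rstrip(".")
        for domain in watch:
            if matches(host, domain):
                hits[(user, domain)] += 1
                break
    return hits


logs = [
    "2026-02-17T09:01Z alice chatgpt.com",
    "2026-02-17T09:05Z alice chatgpt.com",
    "2026-02-17T09:07Z bob claude.ai",
    "2026-02-17T09:10Z carol gemini.google.com",
    "2026-02-17T09:12Z dave intranet.example.org",
    "malformed line",
]
hits = flag_shadow_ai(logs)
print(dict(hits))  # {('alice', 'chatgpt.com'): 2, ('bob', 'claude.ai'): 1}
```

Counts of this kind are best treated as signals for follow-up rather than conclusions, since the same hostnames may also be reached through approved channels.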

4.5. Governance and Remediation Paths

Decision Logic

Remediation Options

- Block: prohibit and technically restrict high-risk shadow AI usage.

- Replace: provide an approved alternative that meets security/compliance needs.

- Regularise: onboard the use case into governed AI with explicit evidence artefacts.
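The governance responses in the risk tier table can be expressed as a lookup from tier to remediation path. In this sketch, Low-tier instances are monitored rather than remediated, as the table indicates, and an instance involving a prohibited AI practice is blocked regardless of tier, an assumption drawn from the description of the Block option. The `Decision` type and action strings are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    option: str     # Block, Replace, Regularise, or Monitor
    actions: tuple  # governance actions taken from the risk tier table


RESPONSES = {
    "Critical": Decision("Block", ("immediate block", "incident investigation",
                                   "mandatory remediation")),
    "High": Decision("Replace", ("replace with approved alternative",
                                 "escalate to governance review")),
    "Moderate": Decision("Regularise", ("governed onboarding with evidence artefacts",)),
    "Low": Decision("Monitor", ("monitor", "consider for approved tool catalogue",
                                "awareness training")),
}


def decide(tier: str, prohibited_practice: bool = False) -> Decision:
    """Select the remediation path for a classified shadow AI instance.

    Assumption: a prohibited AI practice is always blocked, whatever its tier.
    """
    if prohibited_practice:
        return RESPONSES["Critical"]
    try:
        return RESPONSES[tier]
    except KeyError:
        raise ValueError(f"unknown risk tier: {tier!r}") from None


print(decide("High").option)                           # Replace
print(decide("Low", prohibited_practice=True).option)  # Block
```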

5. Validation Plan

- PRISMA-informed systematic review: A structured literature review following Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) 2020 guidelines (Page, 2021) will identify and synthesise existing evidence on shadow AI risks, detection mechanisms, and governance approaches.

- Expert feedback: Domain experts will assess the framework’s completeness and feasibility and the clarity of its mapping and detection layers. The expert panel will consist of practitioners from information security, data protection, AI governance, and compliance functions across multiple industry sectors.

- Case studies: The framework will be applied to representative organisational scenarios to assess its practical applicability, following established case study research methodology (Yin, 2018).

- Survey: A survey instrument will measure perceived usefulness, adoption barriers, and governance acceptability among IT security, compliance, and business professionals.

6. Discussion

6.1. Privacy-Preserving Monitoring in the Workplace

- Proportionality: Monitoring must be limited to what is necessary for security objectives; targeted, risk-based detection is preferable to broad surveillance and should be supported by a documented proportionality assessment.

- Data Minimisation: Detection should rely on the least intrusive data possible, prioritising metadata over content inspection, with human review restricted to confirmed policy violations.

- Retention Limits: Monitoring data or detection logs should be retained only for predefined, justified periods, enforced through automated deletion and transparently documented.

- Transparency: Employees must be clearly informed about monitoring activities, their purposes, and data rights. Covert monitoring must be avoided except where legally and ethically justified.

- DPIA Requirement: Systematic employee monitoring typically requires a Data Protection Impact Assessment under Article 35 GDPR (GDPR, 2016) to assess privacy risks, proportionality, and mitigations. Employee representatives should be consulted where national law requires it.
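Data minimisation and retention limits can be built directly into the detection pipeline. The sketch below, under stated assumptions, replaces user identifiers with keyed HMAC-SHA256 pseudonyms, drops fields not needed for detection (such as prompt content), and purges records older than a retention period. The key handling, the 90-day period, and the event fields are illustrative assumptions. Pseudonymised data remains personal data under the GDPR, so this reduces rather than removes privacy risk.

```python
import hashlib
import hmac
from datetime import datetime, timedelta, timezone

# Hypothetical key; in practice held by a separate custodian so that analysts
# cannot re-identify users without a documented justification.
PSEUDONYM_KEY = b"replace-with-a-managed-secret"
RETENTION = timedelta(days=90)  # illustrative; set from the documented justification


def pseudonymise(user_id: str) -> str:
    """Keyed hash so raw identities are not stored in detection logs."""
    digest = hmac.new(PSEUDONYM_KEY, user_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]  # truncated to 64 bits for readability


def minimise(event: dict) -> dict:
    """Keep only the metadata needed for detection: time, pseudonym, service."""
    return {"ts": event["ts"], "user": pseudonymise(event["user"]),
            "service": event["service"]}


def purge_expired(events: list, now: datetime) -> list:
    """Automated deletion of records older than the retention period."""
    return [e for e in events if now - e["ts"] <= RETENTION]


now = datetime(2026, 2, 28, tzinfo=timezone.utc)
raw = [
    {"ts": datetime(2026, 2, 20, tzinfo=timezone.utc), "user": "alice",
     "service": "chatgpt.com", "prompt": "draft contract clause"},
    {"ts": datetime(2025, 10, 1, tzinfo=timezone.utc), "user": "bob",
     "service": "claude.ai"},
]
kept = purge_expired([minimise(e) for e in raw], now)
print(len(kept), sorted(kept[0]))  # 1 ['service', 'ts', 'user']
```

Keying the hash, rather than hashing identifiers directly, prevents anyone without the key from re-identifying users by hashing a list of known employee IDs.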

6.2. Limitations and Practical Constraints

Technical Limitations

- Encryption and TLS: Because most network traffic is TLS-encrypted, network monitoring is usually limited to metadata such as destination hostnames and traffic volumes. Inspecting content requires endpoint tooling or TLS interception, which adds complexity and raises privacy concerns.

- BYOD and Personal Devices: Employees accessing AI services via personal devices on personal networks entirely bypass corporate detection infrastructure. Policy-based controls (acceptable use agreements) substitute for technical detection in these scenarios.

- Shadow Channels: Browser-based AI access, mobile applications, and API integrations create multiple access vectors. Therefore, comprehensive detection requires coverage across all channels, which may be technically or economically infeasible.

- Local Models: Locally deployed open-source models (e.g., running LLaMA variants on personal hardware) generate no network signals to external services, rendering network-based detection ineffective.

- Approved SaaS with Embedded AI: Distinguishing shadow AI from AI features embedded in approved SaaS platforms requires maintained inventories and distinct policy definitions.

- Detection Accuracy: False positives increase operational burden and reduce trust, while false negatives allow shadow AI usage to go undetected. Detection accuracy is challenged by evolving AI tools and usage patterns, requiring continuous measurement and acceptance of inherent limitations.
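Continuous measurement of detection accuracy can start from reviewed alerts. In the sketch below, precision reflects the false-positive burden and recall reflects missed usage. The counts are hypothetical, and because false negatives are by nature hard to observe, the sketch assumes they are estimated by other means, for example periodic audits or self-reported usage surveys.

```python
def detection_metrics(tp: int, fp: int, fn: int) -> dict:
    """Precision, recall and F1 for a shadow AI detector.

    tp: alerts confirmed as shadow AI on review
    fp: alerts dismissed on review (false positives)
    fn: shadow AI usage found by other means but never alerted (false negatives)
    """
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


metrics = detection_metrics(tp=40, fp=10, fn=20)
print({k: round(v, 3) for k, v in metrics.items()})
# {'precision': 0.8, 'recall': 0.667, 'f1': 0.727}
```

Tracking both figures over time makes the trade-off explicit: tightening detection rules usually raises precision at the cost of recall, and vice versa.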

Organisational Factors

Methodological Constraints

6.3. Regulatory Change and XAI-as-Evidence

7. Conclusions

1. Conceptual foundations: definition, taxonomy, and lifecycle of shadow AI;

2. Compliance mechanisms: regulatory matrices, liability allocation logic, and contractual/organisational templates;

3. Technical detection and monitoring: a multi-layered detection architecture incorporating privacy-preserving methods;

4. Governance and risk management: a structured, tiered governance model supported by risk assessment instruments, policies, and implementation guidance.

- Theoretical contribution: a structured conceptualisation of shadow AI as distinct from shadow IT, and a unified framing of how legal requirements can inform technical design (and vice versa) within risk-based organisational governance.

- Methodological contribution: the application of PRISMA-driven evidence synthesis to an interdisciplinary AI governance problem, combined with a coherent mixed-methods evaluation approach (expert validation, case studies, and surveys) that explicitly accounts for data protection constraints.

- Practical contribution: delivery of ready-to-adopt organisational tools, including phased implementation roadmaps, regulatory compliance matrices and checklists, privacy-preserving detection guidance, and risk scoring matrices and assessment templates for systematic shadow AI risk evaluation.

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- American Bar Association. Formal Opinion 512: Generative Artificial Intelligence Tools, 2023; ABA Standing Committee on Ethics and Professional Responsibility.

- Cloud Security Alliance. Shadow AI: Risks and Governance Considerations. Online. 2024.

- Data Protection Commission (Ireland). Guidance on Artificial Intelligence and Data Protection. Online. 2024.

- European Data Protection Board. Opinion 28/2024 on Data Protection and Artificial Intelligence Models. Online. 2024.

- Directive (EU) 2019/1937 of the European Parliament and of the Council on the Protection of Persons Who Report Breaches of Union Law (Whistleblower Protection Directive), 2019.

- Regulation (EU) 2016/679 of the European Parliament and of the Council of 27 April 2016 on the Protection of Natural Persons with Regard to the Processing of Personal Data and on the Free Movement of Such Data (GDPR); General Data Protection Regulation, 2016.

- European Commission. The AI Act, 2024. Online.

- European Parliament. Artificial Intelligence Act: MEPs Adopt Landmark Law, 2024. Online.

- Fürstenau, D.; Rothe, H. Shadow IT Systems: Discerning the Good and the Evil. In Proceedings of the 22nd European Conference on Information Systems (ECIS), Tel Aviv, Israel, 2014.

- Gartner. AI Governance Frameworks for Enterprises, 2024; Gartner Research.

- GDPR Local. GDPR and AI Compliance Guide, 2025. Online.

- Haag, S.; Eckhardt, A. Shadow IT. Business & Information Systems Engineering 2017, 59, 469–473.

- Hevner, A.R.; March, S.T.; Park, J.; Ram, S. Design Science in Information Systems Research. MIS Quarterly 2004, 28, 75–105.

- IBM. What Is Shadow AI? Online. 2024.

- IEEE. Ethically Aligned Design: A Vision for Prioritizing Human Well-Being with Autonomous and Intelligent Systems, 2019; IEEE Global Initiative on Ethics of Autonomous and Intelligent Systems.

- Infosecurity Magazine. Survey Reveals 77% of UK Cyber Leaders Believe GenAI Has Contributed to Rise in Security Incidents. Online. 2024.

- ISACA. Shadow AI Governance: Managing the Risks, 2025. Online.

- International Organization for Standardization. ISO/IEC 42001:2023 — Information Technology — Artificial Intelligence — Management System; Standard. 2023.

- King & Spalding. Navigating the Global AI Regulatory Landscape, 2025. Online.

- Kopper, A.; Westner, M. Deriving a Framework for Causes, Consequences and Governance of Shadow IT from Literature. In Proceedings of the 22nd Americas Conference on Information Systems (AMCIS), San Diego, CA, 2016.

- Kuner, C.; Bygrave, L.A.; Docksey, C. The EU General Data Protection Regulation (GDPR): A Commentary; Oxford University Press, 2020.

- Lakera. Shadow AI: The Hidden Risks Organizations Face, 2024. Online.

- Liberati, A.; Altman, D.G.; Tetzlaff, J.; Mulrow, C.; Gøtzsche, P.C.; Ioannidis, J.P.A.; Clarke, M.; Devereaux, P.J.; Kleijnen, J.; Moher, D. The PRISMA Statement for Reporting Systematic Reviews and Meta-Analyses of Studies That Evaluate Health Care Interventions: Explanation and Elaboration. PLoS Medicine 2009, 6, e1000100.

- Lohmann, S.; Krcmar, H. Cloud Access Security Brokers: Current Solutions and Challenges. Business & Information Systems Engineering 2018, 60, 197–210.

- McKinsey & Company. The State of AI Governance in 2024, 2024. Online.

- Microsoft; LinkedIn. 2024 Work Trend Index: Annual Report, 2024. Online.

- Moher, D.; Liberati, A.; Tetzlaff, J.; Altman, D.G.; The PRISMA Group. Preferred Reporting Items for Systematic Reviews and Meta-Analyses: The PRISMA Statement. PLoS Medicine 2009, 6, e1000097.

- National Institute of Standards and Technology. AI Risk Management Framework (AI RMF 1.0), 2023. Online.

- OECD. Recommendation of the Council on Artificial Intelligence, 2019; OECD/LEGAL/0449.

- Page, M.J.; McKenzie, J.E.; Bossuyt, P.M.; Boutron, I.; Hoffmann, T.C.; Mulrow, C.D.; Shamseer, L.; Tetzlaff, J.M.; Akl, E.A.; Brennan, S.E.; et al. The PRISMA 2020 Statement: An Updated Guideline for Reporting Systematic Reviews. BMJ 2021, 372, n71.

- Palo Alto Networks. Shadow AI Security: Detecting and Managing Unauthorized AI, 2025. Online.

- Peffers, K.; Tuunanen, T.; Rothenberger, M.A.; Chatterjee, S. A Design Science Research Methodology for Information Systems Research. Journal of Management Information Systems 2007, 24, 45–77.

- PurpleSec. Shadow IT Statistics and Security Risks, 2025. Online.

- Puthal, D.; Mishra, A.K.; Mohanty, S.P.; et al. Shadow AI: Cyber Security Implications, Opportunities and Challenges in the Unseen Frontier. SN Computer Science 2025, 6, 405.

- PwC. EU AI Act: What Organizations Need to Know. Online. 2024.

- Sartor, G.; Lagioia, F. The Impact of the General Data Protection Regulation (GDPR) on Artificial Intelligence, 2020; European Parliamentary Research Service.

- Securiti. Shadow AI: Understanding the Risks and Governance Challenges. Online. 2024.

- Silic, M.; Back, A. Shadow IT — A View from Behind the Curtain. Computers & Security 2014, 45, 274–283.

- Singh, A.P.; Kumar, R.; Sharma, S.; Kumar, A. Encrypted Malware Detection Methodology Without Decryption Using Deep Learning-Based Approaches. Turkish Journal of Engineering 2024, 8, 498–509.

- Varonis. Shadow AI Security Risks: What You Need to Know, 2025. Online.

- World Health Organization. Ethics and Governance of Artificial Intelligence for Health. Online. 2024.

- Wiz. Shadow AI: The Invisible Security Threat in Your Organization, 2025. Online.

- Zylo. Shadow AI Report: Understanding the Unauthorized AI Landscape, 2025. Online.

| Tier | Characterisation | Governance Response |
|---|---|---|
| Critical | Regulated/confidential data + determinative/automated decisions + high-stakes functions | Immediate block; incident investigation; remediation mandatory |
| High | Confidential data OR determinative decisions OR high-stakes functions | Replace with approved alternative; escalate to governance review |
| Moderate | Internal data + advisory decisions + partially opaque processing | Regularise through governed onboarding with evidence artefacts |
| Low | Public data + informational use + general operations | Monitor; consider for approved tool catalogue; awareness training |

| Element | What it captures |
|---|---|
| Requirement | The regulatory or policy provision that imposes a verifiable duty (e.g., GDPR Article 35, EU AI Act Article 26). |
| Obligation | The operational statement of what must be ensured, expressed in terms that are open to verification. |
| Evidence Artefact | The specific document, log, record, or report that demonstrates obligation fulfilment. |
| Control | The technical and/or organisational mechanism that produces or supports the evidence artefact. |
| Owner (RACI) | Assignment of Responsible, Accountable, Consulted, and Informed roles for the obligation. |
| Trigger | The condition or event that initiates review, escalation, or remediation action. |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).