Submitted: 27 February 2026

Posted: 27 February 2026

Abstract

Keywords:

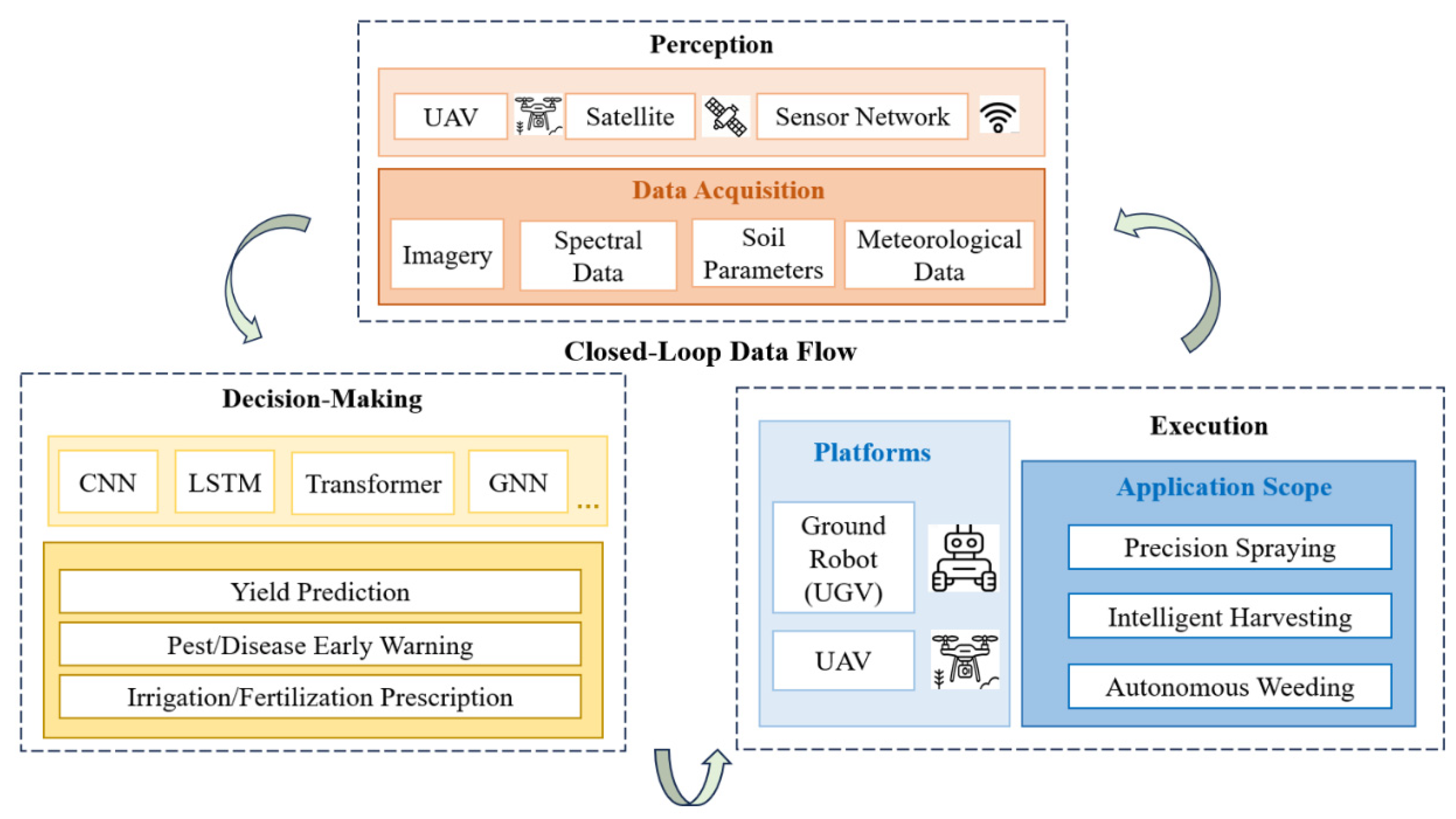

1. Introduction

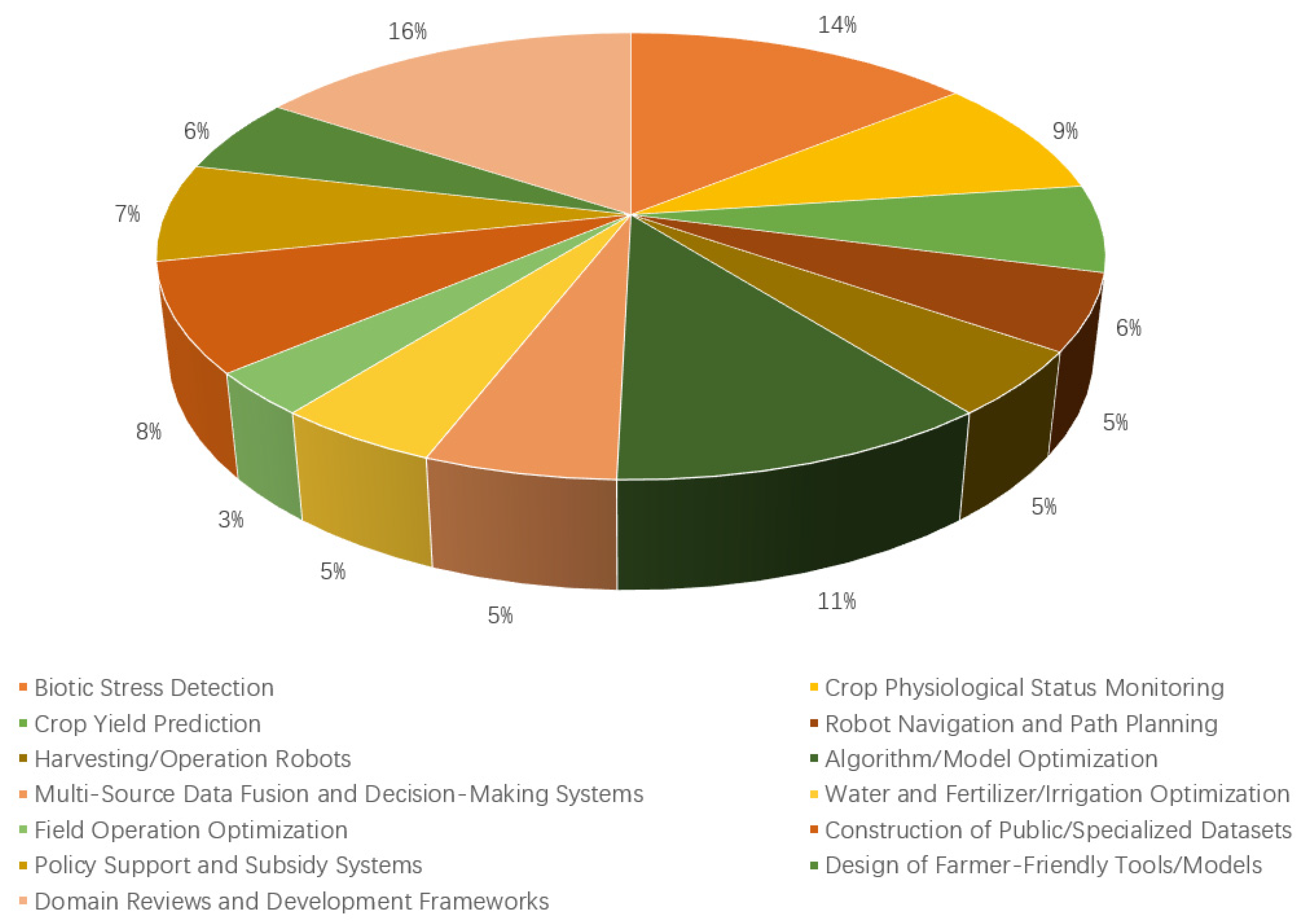

2. Related Publication Retrieval and Screening

3. Crop Perception

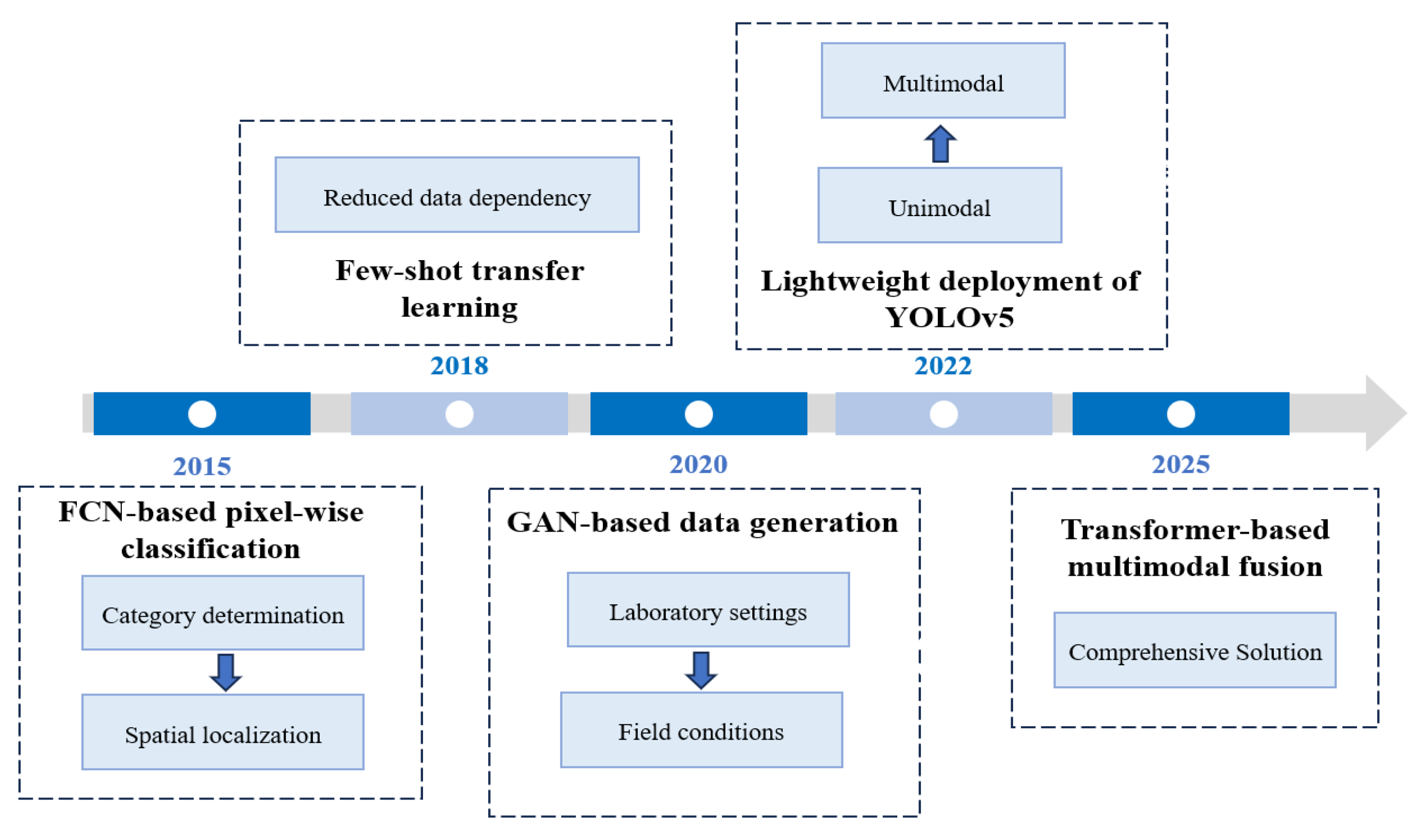

3.1. Evolution of DL Models for Intelligent Crop Disease Perception

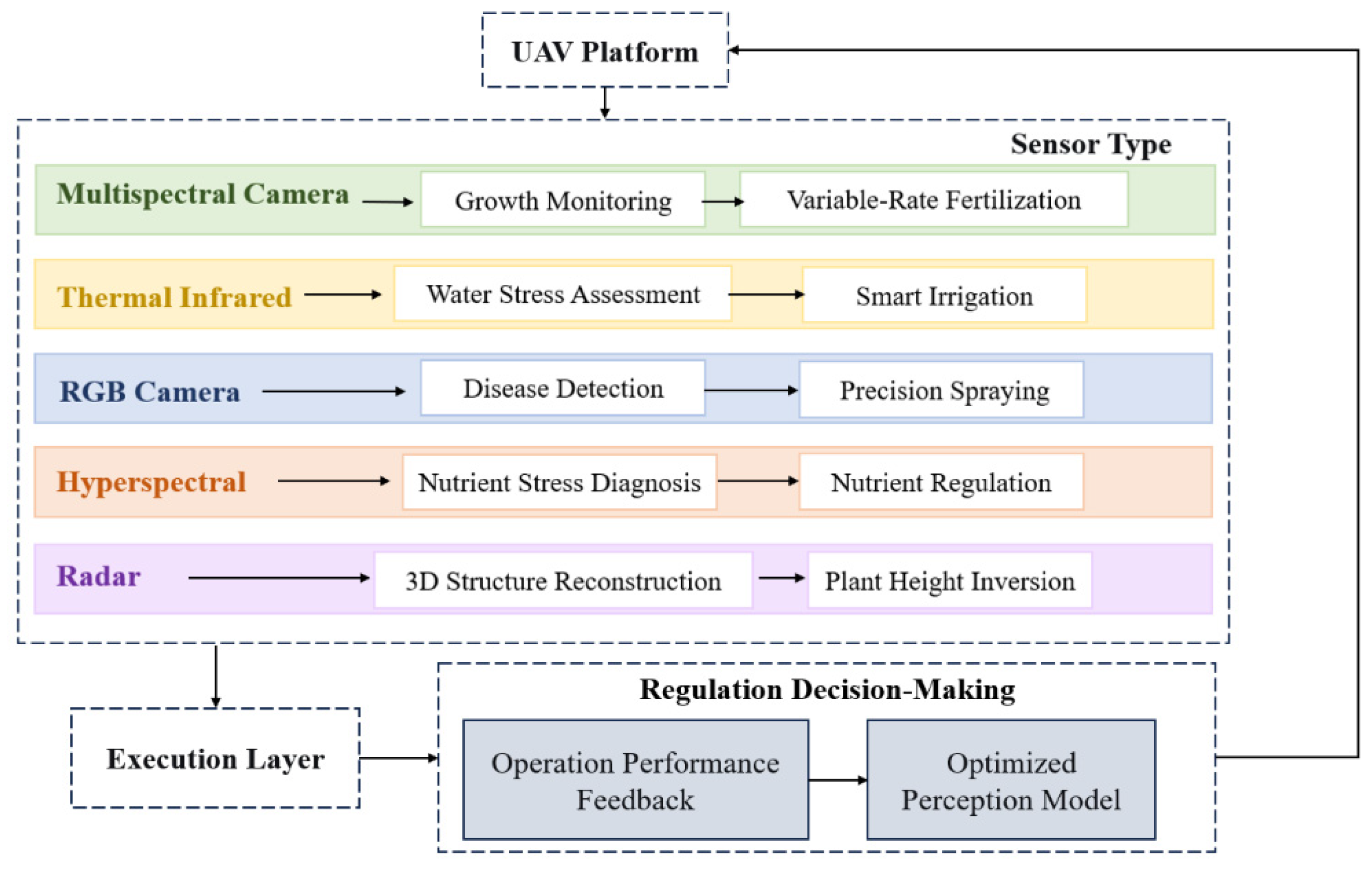

3.2. UAV-Enabled Fine-Grained Agricultural Perception

3.2.1. Precise Crop Pest/Disease Detection: From Macro-Scale Identification to Micro-Scale Lesion Segmentation

3.2.2. Dynamic Crop Growth Monitoring: From Morphological Observation to Physiological Parameter Retrieval

3.2.3. Resource Stress Assessment: Rapid Diagnosis of Water and Nutrient Stress

4. Intelligent Agricultural Decision-Making Based on Data-Driven Prediction, Regulation, and Planning

4.1. Intelligent Agricultural Decision-Making via Multi-Source Data Fusion

4.1.1. Meteorological Data for Dynamic Environmental Recording

4.1.2. Soil Data for Fine-Grained Characterization of Crop Growth Substrate

4.1.3. RS Data for Multi-Scale 3D Perception of Crop Information

4.1.4. Market Data for Dynamic Feedback of Supply-Demand and Policy

4.2. Predictive Decision-Making by ML-Based Dynamic Yield Prediction

4.3. Preventive/Protective Decision-Making: Pest/Disease Risk Prediction and Early Warning

4.4. Regulatory Decision-Making: Prescription Generation for Precision Water and Nutrient Management

4.5. Planning Decision-Making: Synergistic Optimization of Planting Layout and Market Supply-Demand

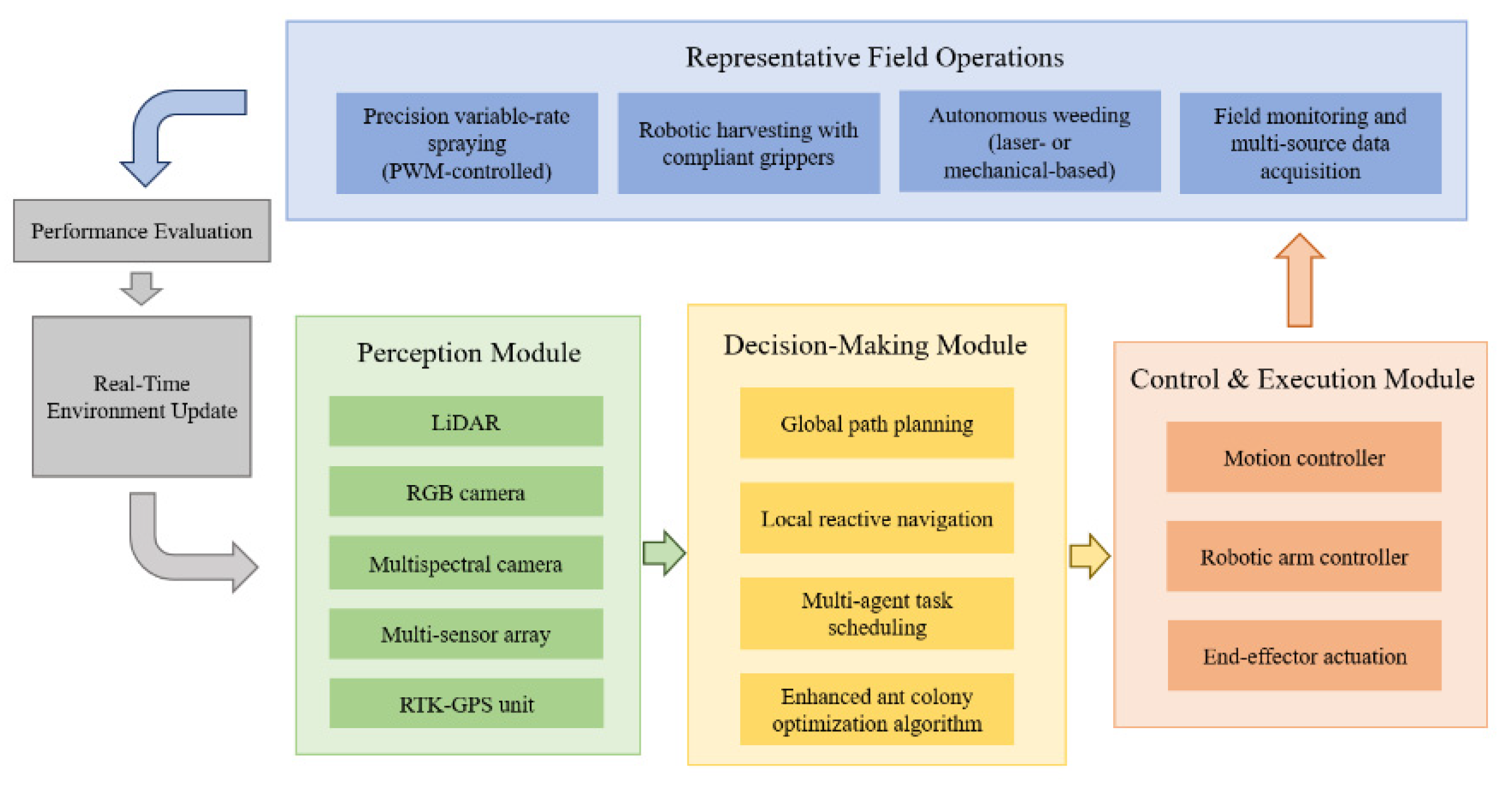

5. Autonomous Operation Execution: System Architecture and Intelligent Planning of Agricultural Robots

5.1. Platform and System Architecture of Agricultural Robots

5.1.1. Agricultural Robot Work Platforms

5.1.2. Modular Analysis of the System Architecture

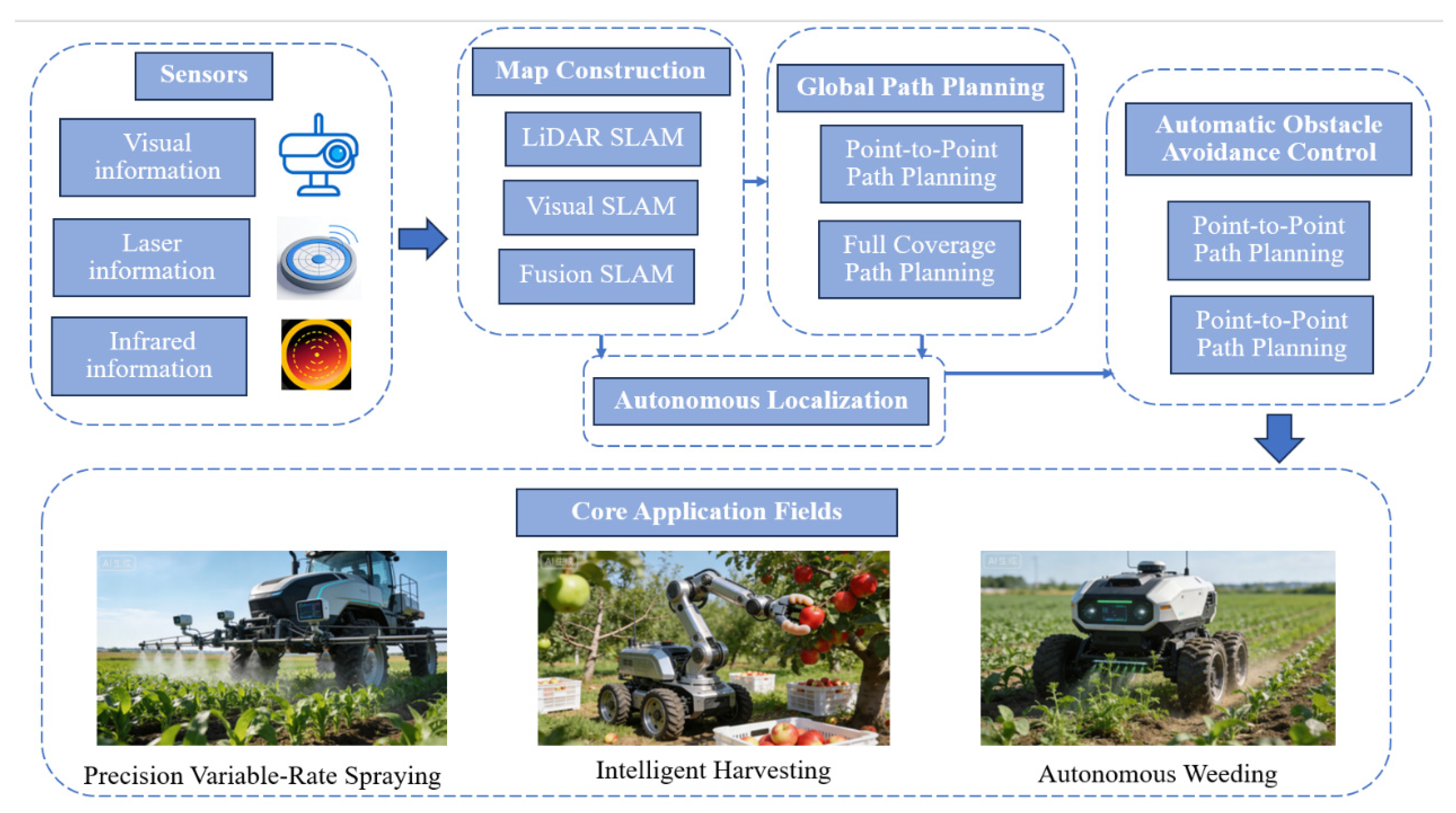

5.2. Navigation and Path Planning Algorithms for Complex Environments

5.2.1. Global Path Planning for Unstructured Terrain

5.2.2. Local Perception and Planning in Perception-Limited Environments

5.2.3. Dynamic Multi-Robot Coordination and Operational Path Optimization

5.2.4. Multi-Sensor Fusion for Enhanced Navigation Reliability

6. Challenges and Future Outlook

6.1. Core Challenges

6.1.1. System Fragmentation: Obstacles to Synergistic Integration of Perception, Decision, and Execution

6.1.2. Data and Algorithmic Bottlenecks: Triple Challenge of Quality, Privacy, and Generalizability

6.1.3. Hardware Reliability Dilemma: High Costs and Low Durability Constraining Large-Scale Deployment

6.2. Future Landscape of Human-Centered and Inclusive Agriculture

6.2.1. Empowering the Agricultural Brain with Frontier Innovations

6.2.2. Advancing Technologies from Precision Toward Sustainability

6.2.3. Promoting Global Adoption Through Human-Centered System Design

7. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Full Term |
|---|---|
| AI | Artificial Intelligence |
| PDE | Perception-Decision-Execution |
| UAV | Unmanned Aerial Vehicle |
| RS | Remote Sensing |
| SLAM | Simultaneous Localization and Mapping |
| RL | Reinforcement Learning |
| DL | Deep Learning |
| ML | Machine Learning |
| CNN | Convolutional Neural Network |
| YOLO | You Only Look Once |
| RF | Random Forest |
| FAO | Food and Agriculture Organization |
| WMO | World Meteorological Organization |
| LSTM | Long Short-Term Memory |
| GNN | Graph Neural Network |
| UGV | Unmanned Ground Vehicle |
| NIR | Near-Infrared |
| LiDAR | Light Detection and Ranging |
| RGB-D | RGB plus Depth |
| SVR | Support Vector Regression |
| PSO | Particle Swarm Optimization |
| GA | Genetic Algorithm |
| AHP | Analytic Hierarchy Process |
| LLM | Large Language Model |
| GRU | Gated Recurrent Unit |
| NDVI | Normalized Difference Vegetation Index |
| NPK | Nitrogen, Phosphorus, and Potassium |
| RTK-GPS | Real-Time Kinematic Global Positioning System |
| IMU | Inertial Measurement Unit |
| SAR | Synthetic Aperture Radar |
| XGBoost | Extreme Gradient Boosting |
| FCN | Fully Convolutional Network |
| GAN | Generative Adversarial Network |
| mAP | mean Average Precision |
| ISO | International Organization for Standardization |

References

- Ahmad, I.; Yang, Y.; Yue, Y.; et al. Deep Learning Based Detector YOLOv5 for Identifying Insect Pests. Applied Sciences 2022, 12(19), 10167. [Google Scholar] [CrossRef]

- Maimaitijiang, M.; Sagan, V.; Sidike, P.; et al. Soybean yield prediction from UAV using multi-modal data fusion and deep learning. Remote Sens. Environ. 2020, 237, 111599. [Google Scholar] [CrossRef]

- Hughes, D.; Salathé, M. An open access repository of images on plant health to enable the development of mobile disease diagnostics. arXiv 2015, arXiv:1511.08060. [Google Scholar]

- Wang, D.; Zhang, D.; Yang, G.; et al. CropDeep: A multi-class dataset for crop pest and disease detection in the wild. Computers and Electronics in Agriculture 2022, 201, 107306. [Google Scholar]

- Chiu, M. T.; Li, X.; Xu, Y.; et al. Agricultural-Vision: A large aerial image database for agricultural pattern analysis. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, 2021; pp. 868–877. [Google Scholar]

- Smith, J.; Johnson, L.; Brown, K. SoybeanNet: A Large-Scale RGB-D Dataset for Crop and Weed Segmentation in Precision Agriculture. IEEE Robotics and Automation Letters 2024, 9(3), 12345–12352. [Google Scholar]

- Li, Y.; Wang, H.; Chen, Z.; et al. FruitVerse: A Large-Scale Dataset for Fine-Grained Fruit Perception in Orchard Environments. International Journal of Computer Vision 2024, 132(5), 789–805. [Google Scholar]

- Jones, M.; Williams, R. WeedMap-3D: A Multi-Modal Dataset for Weed Detection and Localization in Agricultural Fields. Computers and Electronics in Agriculture 2024, 220, 108876. [Google Scholar]

- Momin, S.; Yamamoto, K.; Miyamoto, K.; et al. AgriSegNet: A Deep Learning-based Framework for Semantic Segmentation of Crop and Weed. Computers and Electronics in Agriculture 2023, 204, 107509. [Google Scholar]

- Wu, J.; Birch, C.; Wang, Y.; et al. OpenWeedLocator (OWL): An open-source, low-cost device for fallow weed detection. HardwareX 2024, 17, e00507. [Google Scholar]

- Tseng, G.; Zvonkov, I.; Rolf, E.; et al. CropHarvest: A global dataset for crop type classification and yield forecasting. Nature Scientific Data 2022, 9(1), 357. [Google Scholar]

- Long, J.; Shelhamer, E.; Darrell, T. Fully convolutional networks for semantic segmentation. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 39(4), 640–651. [Google Scholar]

- Too, E. C.; Yujian, L.; Njuki, S.; Yingchun, L. A comparative study of fine-tuning deep learning models for plant disease identification. Comput. Electron. Agric. 2018, 161, 272–279. [Google Scholar] [CrossRef]

- Picon, A.; Seitz, M.; Alvarez-Gila, A.; Mohnke, P.; Echazarra, J. Crop conditional convolutional neural networks for massive multi-crop plant disease classification over cell phone acquired images taken on real field conditions. Comput. Electron. Agric. 2019, 167, 105093. [Google Scholar] [CrossRef]

- Kerkech, M.; Hafiane, A.; Canals, R. Vine disease detection in UAV multi-spectral images using optimized image registration and deep learning segmentation approach. Comput. Electron. Agric. 2020, 174, 105446. [Google Scholar] [CrossRef]

- Nazki, H.; Yoon, S.; Fuentes, A.; Park, D. S. Unsupervised image translation using adversarial networks for improved plant disease recognition. Comput. Electron. Agric. 2020, 168, 105117. [Google Scholar] [CrossRef]

- Shoaib, M.; Sadeghi-Niaraki, A.; Ali, F.; et al. Leveraging deep learning for plant disease and pest detection: a comprehensive review and future directions. Frontiers in Plant Science 2025, 16, 1538163. [Google Scholar] [CrossRef]

- Liu, W.; Cao, X.; Fan, J.; et al. Detecting wheat powdery mildew and predicting grain yield using unmanned aerial photography. Plant Disease 2018, 102(9), 1981–1988. [Google Scholar] [CrossRef] [PubMed]

- Lan, Y.; Huang, Z.; Deng, X.; et al. Comparison of machine learning methods for citrus greening detection on UAV multi-spectral images. Computers and Electronics in Agriculture 2020, 171, 105234. [Google Scholar] [CrossRef]

- Abdulridha, J.; Ampatzidis, Y.; Roberts, P. Detecting powdery mildew disease in squash at different stages using UAV-based hyper-spectral imaging and artificial intelligence. Biosyst. Eng. 2020, 197, 135–148. [Google Scholar] [CrossRef]

- Nahiyoon, S. A.; Cui, L.; Yang, D.; et al. Biocidal radiuses of cycloxaprid, imidacloprid and lambda-cyhalothrin droplets controlling against cotton aphid (Aphis gossypii) using an unmanned aerial vehicle. Pest Management Science 2020, 76(11), 3029–3036. [Google Scholar] [CrossRef]

- Bhujel, A.; Khan, F.; Basak, J. K.; et al. Detection of gray mold disease and its severity on strawberry using deep learning networks. Journal of Plant Diseases and Protection 2022, 129(6), 579–592. [Google Scholar] [CrossRef]

- Huang, Y.; Liu, Z.; Zhao, H.; et al. YOLO-YSTs: An improved YOLOv10n-based method for real-time field pest detection. Agronomy 2025, 15(3), 575. [Google Scholar] [CrossRef]

- Sharma, H.; Sidhu, H.; Bhowmik, A. Remote Sensing Using Unmanned Aerial Vehicles for Water Stress Detection: A Review Focusing on Specialty Crops. Drones 2025, 9(4), 241. [Google Scholar] [CrossRef]

- Yang, G.; Li, C.; Yu, H.; et al. UAV based multi-load remote sensing technologies for wheat breeding information acquirement. Transactions of the Chinese Society of Agricultural Engineering 2015, 31(10), 184–190. [Google Scholar]

- Yinka-Banjo, C.; Ajayi, O. Sky-farmers: Applications of unmanned aerial vehicles (UAV) in agriculture. In Autonomous vehicles; Elsevier, 2019; pp. 107–128. [Google Scholar]

- Marin, D. B.; Santana, L. S.; Barbosa, B. D. S.; et al. Detecting coffee leaf rust with UAV-based vegetation indices and decision tree machine learning models. Computers and Electronics in Agriculture 2021, 190, 106476. [Google Scholar] [CrossRef]

- Lin, Y. C.; Habib, A. Quality control and crop characterization framework for multi-temporal UAV LiDAR data over mechanized agricultural fields. Remote Sensing of Environment 2021, 256, 112299. [Google Scholar] [CrossRef]

- Feng, Z.; Song, L.; Zhang, S.; et al. Wheat Powdery Mildew monitoring based on information fusion of multi-spectral and thermal infrared images acquired with an unmanned aerial vehicle. Scientia Agricultura Sinica 2022, 55(5), 890–906. [Google Scholar]

- Bouguettaya, A.; Zarzour, H.; Kechida, A.; et al. Deep learning techniques to classify agricultural crops through UAV imagery: a review. Neural Computing and Applications 2022, 34(12), 9511–9536. [Google Scholar] [CrossRef]

- Liu, Y.; Feng, H.; Fan, Y.; et al. Utilizing UAV-based hyper-spectral remote sensing combined with various agronomic traits to monitor potato growth and estimate yield. Computers and Electronics in Agriculture 2025. [Google Scholar]

- Wang, Y.; An, J.; Shao, M.; et al. A comprehensive review of proximal spectral sensing devices and diagnostic equipment for field crop growth monitoring. Precision Agriculture 2025. [Google Scholar] [CrossRef]

- Gago, J.; Douthe, C.; Coopman, R. E.; et al. UAVs challenge to assess water stress for sustainable agriculture. Agricultural Water Management 2015, 153, 9–19. [Google Scholar] [CrossRef]

- Golhani, A.; Srivastava, A.; Escobar, D. E. A review of hyper-spectral imaging applications for the detection of biotic and abiotic stresses in plants. Remote Sensing 2018, 10(6), 828. [Google Scholar]

- Feng, S.; Cao, Y.; Xu, T.; et al. Rice leaf blast classification method based on fused features and one-dimensional deep convolutional neural network. Remote Sensing 2021, 13(16), 3207. [Google Scholar] [CrossRef]

- Hu, P.; Zhang, R.; Yang, J.; et al. Development Status and Key Technologies of Plant Protection UAVs in China: A Review. Drones 2022, 6(12), 354. [Google Scholar] [CrossRef]

- Xu, Z.; Zhang, Q.; Xiang, S.; et al. Monitoring the severity of Pantana phyllostachysae Chao infestation in Moso bamboo forests based on UAV multi-spectral remote sensing feature selection. Forests 2022, 13(3), 418. [Google Scholar] [CrossRef]

- Okyere, F. G.; Cudjoe, D. K.; Virlet, N.; et al. Hyper-spectral imaging for phenotyping plant drought stress and nitrogen interactions using multivariate modeling and machine learning techniques in wheat. Remote Sensing 2024, 16(18), 3446. [Google Scholar] [CrossRef]

- Food and Agriculture Organization of the United Nations; World Meteorological Organization. FAO and WMO report highlights extreme heat risks for agriculture. 2025.

- Shaikh, T. A.; Mir, W. A.; Rasool, T.; et al. Machine Learning for Smart Agriculture and Precision Farming: Towards Making the Fields Talk. Archives of Computational Methods in Engineering 2022, 29, 4557–4597. [Google Scholar] [CrossRef]

- Manafifard, M.; Huang, J. A comprehensive review on wheat yield prediction based on remote sensing. Multimedia Tools and Applications 2025, 84(15), 20843–20916. [Google Scholar] [CrossRef]

- Morellos, A.; Pantazi, X.; Moshou, D.; et al. Machine learning-based prediction of soil total nitrogen, organic carbon and moisture content by using VIS-NIR spectroscopy. Biosyst. Eng. 2016, 152, 104–116. [Google Scholar] [CrossRef]

- Padalalu, P.; Mahajan, S.; Dabir, K.; et al. Smart water dripping system for agriculture/farming. 2nd International Conference for Convergence in Technology, 2017; pp. 659–662. [Google Scholar]

- Saki, M.; Keshavarz, R.; Franklin, D.; et al. A Data-Driven Review of Remote Sensing-Based Data Fusion in Precision Agriculture from Foundational to Transformer-Based Techniques. IEEE Transactions on Geoscience and Remote Sensing 2025. [Google Scholar]

- Agricultural Research Service. Integrating reinforcement learning and large language models for crop production process management optimization and control. 2025.

- Haseeb, M.; Tahir, Z.; Mahmood, S. A.; et al. Winter wheat yield prediction using linear and nonlinear machine learning algorithms based on climatological and remote sensing data. Information Processing in Agriculture 2025. [Google Scholar]

- Chu, Z.; Yu, J. An end-to-end model for rice yield prediction using deep learning fusion. Comput. Electron. Agric. 2020, 174, 105471. [Google Scholar] [CrossRef]

- Nevavuori, P.; Narra, N.; Lipping, T. Crop yield prediction with deep convolutional neural networks. Comput. Electron. Agric. 2019, 163, 104859. [Google Scholar] [CrossRef]

- Sagan, V.; Maimaitijiang, M.; Bhadra, S.; et al. Field-scale crop yield prediction using multi-temporal WorldView-3 and PlanetScope satellite data and deep learning. ISPRS J. Photogramm. Remote Sens. 2021, 174, 265–281. [Google Scholar] [CrossRef]

- Adrian, J.; Sagan, V.; Maimaitijiang, M. Sentinel SAR-optical fusion for crop type mapping using deep learning and google earth engine. ISPRS J. Photogramm. Remote Sens. 2021, 175, 215–235. [Google Scholar] [CrossRef]

- Liu, K.; Zhang, C.; Yang, X.; et al. Development of an Occurrence Prediction Model for Cucumber Downy Mildew in Solar Greenhouses Based on Long Short-Term Memory Neural Network. Agronomy 2022, 12, 442. [Google Scholar] [CrossRef]

- Ali, T.; Rehman, S. U.; Ali, S.; et al. Smart agriculture: utilizing machine learning and deep learning for drought stress identification in crops. Scientific Reports 2024, 14(1), 74127. [Google Scholar] [CrossRef]

- Van Asselt, J.; et al. Climate shocks and climate smart agricultural adoption in Sri Lanka. 2025. [Google Scholar]

- Otazua, N. I. AI-Powered DSS for Resource-Efficient Nutrient, Irrigation, and Microclimate Management in Greenhouses. Chem. Proc. 2022, 10, 63. [Google Scholar]

- Mortazavizadeh, F.; Bolonio, D.; Mirzaei, M.; et al. Advances in machine learning for agricultural water management: a review of techniques and applications. Journal of Hydrology 2025. [Google Scholar] [CrossRef]

- Mesías-Ruiz, G. A.; Pérez-Ortiz, M.; Dorado, J.; et al. Boosting precision crop protection towards Agriculture 5.0 via machine learning and emerging technologies: A contextual review. Front. Plant Sci. 2023, 14, 1143326. [Google Scholar] [CrossRef]

- Deo, A.; Sawant, N.; Arora, A.; et al. How has scientific literature addressed crop planning at farm level: A bibliometric-qualitative review. Farming System 2025. [Google Scholar] [CrossRef]

- Ali, A.; Jat Baloch, M. Y.; Naveed, M.; et al. Advanced satellite-based remote sensing and data analytics for precision water resource management and agricultural optimization. Scientific Reports 2025, 15(1), 13167. [Google Scholar] [CrossRef] [PubMed]

- Ji, W.; Huang, X.; Wang, S.; et al. A comprehensive review of the research of the “Eye–Brain–Hand” harvesting system in smart agriculture. Agronomy 2023, 13(9), 2237. [Google Scholar] [CrossRef]

- Wang, L.; Chang, Y.; Chen, W.B. The Artificial Intelligence Driven Autonomous Navigation Operation Path Planning System for Agricultural Machinery. Scalable Computing: Practice and Experience 2025, 26(4), 1879–1885. [Google Scholar] [CrossRef]

- Etezadi, H.; Eshkabilov, S. A Comprehensive Overview of Control Algorithms, Sensors, Actuators, and Communication Tools of Autonomous All-Terrain Vehicles in Agriculture. Agriculture 2024, 14(2), 163. [Google Scholar] [CrossRef]

- Bai, Y.; Zhang, B.; Xu, N.; et al. Vision-based navigation and guidance for agricultural autonomous vehicles and robots: A review. Computers and Electronics in Agriculture 2023, 205, 107584. [Google Scholar] [CrossRef]

- Chakraborty, S.; Elangovan, D.; Govindarajan, P.L.; et al. A Comprehensive Review of Path Planning for Agricultural Ground Robots. Sustainability 2022, 14(15), 9156. [Google Scholar] [CrossRef]

- Juman, M.A.; Wong, Y.W.; Rajkumar, R.K.; et al. An Integrated Path Planning System for a Robot Designed for Oil Palm Plantations. In Proceedings of the TENCON 2017–2017 IEEE Region 10 Conference, Penang, Malaysia, 5–8 November 2017; pp. 1–6. [Google Scholar]

- Yan, X.-T.; Bianco, A.; Niu, C.; et al. The AgriRover: A Reinvented Mechatronic Platform from Space Robotics for Precision Farming. In Reinventing Mechatronics; Springer: Berlin/Heidelberg, 2020; pp. 55–73. [Google Scholar]

- Santos, L.; Santos, F.N.; Mendes, J.; et al. Path Planning Aware of Robot’s Center of Mass for Steep Slope Vineyards. Robotica 2019, 38(4), 684–689. [Google Scholar] [CrossRef]

- Rovira-Más, F.; Zhang, Q.; Reid, J.F. Stereo vision three-dimensional terrain maps for precision agriculture. Computers and Electronics in Agriculture 2008, 60(2), 133–143. [Google Scholar] [CrossRef]

- Talami, M.A.; Istiak, S.M.; Safayet, R.; et al. Path Planning and Controller Development for UGVs. 2025; ASABE Paper No. 2500735. [Google Scholar] [CrossRef]

- Padhiary, M.; Kumar, R.; Sethi, L.N. Navigating the Future of Agriculture: A Comprehensive Review of Automatic All-Terrain Vehicles in Precision Farming. J. Inst. Eng. India Ser. A 2024, 105(3), 767–782. [Google Scholar] [CrossRef]

- FAO. Community-Based AI Platforms for Smallholder Resilience; Food and Agriculture Organization of the United Nations, 2024. [Google Scholar]

- Koella, M.; Hughes, D. PlantVillage: A dataset for plant disease image recognition. arXiv 2015, arXiv:1511.08060. [Google Scholar]

- Kaur, P.; Singh, R.; Kumar, S. Adoption of Explainable AI for Fertilizer Recommendation in Punjab Wheat Fields. Computers and Electronics in Agriculture 2024, 218, 108742. [Google Scholar]

- Mwangi, J.; Otieno, D.; Adera, E. Co-Designing Mobile Apps with Farmers for Pest Detection in Kenya. ICT for Development 2024, 30(1), 45–63. [Google Scholar]

- Rahman, M.; Islam, S.; Hossain, T. Icon-Based UI for Low-Literacy Farmers in Bangladesh. In Proceedings of the IEEE Global Humanitarian Technology Conference, 2024; pp. 156–162. [Google Scholar]

- Silva, R.; Oliveira, L.; Costa, F. Voice-Assisted AI Systems for Elderly Farmers in Brazil. Journal of Agricultural Informatics 2023, 14(3), 112–125. [Google Scholar]

- Nguyen, T.; Le, H.; Pham, V. Drone Sharing Model for Smallholder Rice Farmers in the Mekong Delta. Sustainability 2025, 17(3), 895. [Google Scholar]

- Chen, P.; Ma, H.; Cui, Z.; et al. Field Study of UAV Variable-Rate Spraying Method for Orchards Based on Canopy Volume. Agriculture 2025, 15(13), 1374. [Google Scholar] [CrossRef]

- Cho, S. B.; Soleh, H. M.; Choi, J. W.; et al. Recent methods for evaluating crop water stress using AI techniques: A review. Sensors 2024, 24(19), 6313. [Google Scholar] [CrossRef] [PubMed]

- EU Agri-Digital. Subsidy Schemes for Smart Farming Equipment in Family Farms; European Commission Directorate-General for Agriculture and Rural Development, 2023. [Google Scholar]

- Government of India. Digital Krishi: Annual Report on Farmer Training and AI Adoption. In Ministry of Agriculture and Farmers Welfare; 2024. [Google Scholar]

- Jeon, D.; Jung, H.-J.; Lee, K.-D.; et al. A Study of Spray Volume Prediction Techniques for Variable Rate Pesticide Application using Unmanned Aerial Vehicles. Journal of Biosystems Engineering 2025, 50(1), 21–32. [Google Scholar] [CrossRef]

- Li, Z.; Wang, J.; Chen, Y. Trust and Transparency in AI-Driven Agriculture: A Case Study of Smallholder Farms in China. Agriculture and Human Values 2023, 40(2), 567–582. [Google Scholar]

- Maraveas, C. Incorporating Artificial Intelligence Technology in Smart Greenhouses: Current State of the Art. Applied Sciences 2023, 13(1), 14. [Google Scholar] [CrossRef]

- Navas, J.; Vidwath, S.; Kootstra, G. Soft Robotic Grippers for Fruit Harvesting: Design and Performance Evaluation. Robotics 2023, 12(4), 89. [Google Scholar]

- Qazi, S.; Khawaja, B. A.; Farooq, Q. U. IoT-Equipped and AI-Enabled Next Generation Smart Agriculture: A Critical Review, Current Challenges and Future Trends. IEEE Access 2022, 10, 21219–21235. [Google Scholar] [CrossRef]

| Dataset | Task | Modality | Description | Main Features | Year |
|---|---|---|---|---|---|
| PlantVillage (Hughes & Salathé, 2015) [3] | disease classification | RGB | 54,309 images, 14 crop species, and 26 diseases. | laboratory setting; clean backgrounds. | 2015 |
| CropDeep (Wang et al., 2022) [4] | pest/disease detection and classification | RGB | 11,768 images containing 31 pest/disease categories. | real scenes; complex backgrounds; varying lighting; multi-scale targets. | 2022 |
| Agricultural-Vision (Chiu et al., 2021) [5] | semantic segmentation | RGB + NIR | 94,986 image patches, 9 types of field anomaly patterns. | large-scale multi-spectral RS imagery; field-level anomaly region identification. | 2021 |
| SoybeanNet (Smith et al., 2024) [6] | crop and weed segmentation | RGB + Depth | 10,000 synchronized RGB-D image pairs. | rich geometric information; crops, weeds, and soil in complex backgrounds; robotic precision operations. | 2024 |
| FruitVerse (Li et al., 2024) [7] | orchard fruit detection, segmentation and counting | multi-view RGB | over 500k annotated fruit instances; 12 fruit crop species, covering multiple growth stages. | large-scale, multi-species, multi-growth-stage database. | 2024 |
| WeedMap-3D (Jones & Williams, 2024) [8] | weed localization | RGB + 3D LiDAR point cloud | 2,500 synchronized data groups covering various weed and crop species. | 2D visual appearance with 3D spatial structure information; precise weed localization and classification; advanced perception for autonomous weeding robots. | 2024 |
| AgriSeg-V2 (Momin et al., 2023) [9] | semantic segmentation | hyper-spectral imaging | 5,000 hyper-spectral image cubes; 5 major crop and weed species. | continuous spectral information capturing physiological changes invisible to the human eye; early stress diagnosis and fine species discrimination. | 2023 |
| OpenWeedLocator (Wu et al., 2024) [10] | weed detection | RGB | 5,778 images and video frames. | an open-source precision weeding project; data from diverse geographical environments and growth stages. | 2024 |
| CropHarvest (Tseng et al., 2022) [11] | crop type classification; yield estimation | multi-temporal satellite imagery (Sentinel-2) | satellite time-series data; over 90,000 plots globally. | temporal analysis with labels from multiple global sources; macro-agricultural monitoring and yield prediction. | 2022 |

| Data Type | Decision Types | Models | Applications & Effects |
|---|---|---|---|
| Meteorological data | 1. Predictive decision (dynamic yield prediction) 2. Preventive decision (pest and disease early warning) 3. Regulatory decision (precise water and fertilizer prescription) 4. Planning decision (planting layout optimization) | LSTM, Transformer, GRU, RF, Prophet | 1. LSTM/Transformer: Correct meteorological forecast errors, capture temporal features, and improve yield prediction accuracy 2. GRU/LSTM: Predict the occurrence probability of pests and diseases from temperature and humidity data 3. Assist an SVR model in calculating crop water demand from meteorological evapotranspiration 4. RF: Identify suitable crop planting areas from historical meteorological data |
| Soil data | 1. Predictive decision (dynamic yield prediction) 2. Regulatory decision (precise water and fertilizer prescription) 3. Planning decision (planting layout optimization) | RF, SVR, PSO, GA, AHP | 1. Provide basic soil fertility data to help XGBoost improve yield prediction accuracy 2. RF regression/SVR: Establish the relationship between soil nutrients and crop yield, and predict fertilizer/water demand; PSO/GA: Optimize the water and fertilizer ratio 3. AHP + ML: Quantify soil suitability to support planting planning decisions |
| RS data | 1. Predictive decision (dynamic yield prediction) 2. Preventive decision (pest and disease early warning) 3. Regulatory decision (precise water and fertilizer prescription) 4. Planning decision (planting layout optimization) | CNN, 3D-U-Net, ResNet, XGBoost, GNN, RF | 1. CNN/3D-U-Net/ResNet: Extract spatial features from RS images and fuse SAR and optical data to improve yield prediction robustness; XGBoost: Predict yield from RS indices 2. GNN: Characterize the spatial diffusion of pests and diseases; UAV hyper-spectral imaging assists early disease identification 3. Extract crop canopy temperature, NDVI, and other growth data to optimize water and fertilizer application rates 4. RF: Identify suitable planting areas from satellite RS data; CA-Markov model: Predict the change trend of agricultural land |
| Market data | Planning decision (collaborative optimization of planting layout and market supply-demand) | LSTM, Prophet, GNN, RL, LLM, multi-objective optimization | 1. LSTM/Prophet: Predict agricultural product price trends from historical price and transaction volume data 2. GNN: Construct a “producer-intermediary-consumer” network to achieve efficient supply-demand matching 3. RL + LLM: Analyze e-commerce demand and policy text data to optimize sales connection processes; multi-objective optimization model: Balance policy compliance, revenue, and supply-demand matching |
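
The RS row above lists NDVI as one of the growth indicators extracted to guide water and fertilizer application. As a minimal sketch, NDVI can be computed per pixel from near-infrared and red reflectance as (NIR − Red) / (NIR + Red). The function names `ndvi` and `flag_low_vigor` and the 0.4 vigor threshold are illustrative assumptions and are not taken from the reviewed studies.

```python
from typing import List, Sequence


def ndvi(nir: Sequence[float], red: Sequence[float], eps: float = 1e-9) -> List[float]:
    """Return per-pixel NDVI = (NIR - Red) / (NIR + Red).

    Pixels whose band sum is (near) zero are assigned 0.0 to avoid
    division by zero; this fallback is an assumption of the sketch.
    """
    if len(nir) != len(red):
        raise ValueError("NIR and red bands must have the same length")
    values = []
    for n, r in zip(nir, red):
        denom = n + r
        values.append(0.0 if abs(denom) < eps else (n - r) / denom)
    return values


def flag_low_vigor(values: Sequence[float], threshold: float = 0.4) -> List[int]:
    """Return indices of pixels whose NDVI falls below a hypothetical threshold."""
    return [i for i, v in enumerate(values) if v < threshold]


if __name__ == "__main__":
    # Two example pixels: dense canopy and bare soil (made-up reflectances).
    index_values = ndvi([0.5, 0.3], [0.1, 0.3])
    print(index_values)
    print(flag_low_vigor(index_values))
```

In practice the threshold would need to be calibrated per crop, growth stage, and sensor, so the flagged pixels should be read as candidates for closer inspection rather than as a prescription.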

| Platform Type | Advantages | Limitations | Typical Scenarios | Industrialization Level |
|---|---|---|---|---|
| Wheeled Platform | Simple structure, high speed, high energy efficiency, high control precision | Limited obstacle-crossing ability, poor soft soil adaptability, prone to slipping | Field seeding, plant protection, weeding, plain orchard management | Industrialized |
| Tracked Platform | Low ground pressure, excellent traction, strong obstacle-crossing ability, good terrain adaptability | Complex structure, higher cost, damages surfaces, high steering energy consumption | Mountainous orchards, greenhouses, wet/muddy environments | Near Industrialization |
| Rail-guided Platform | High positioning accuracy, stable operation, low energy consumption, enables continuous operation | Limited mobility range, high installation cost, low flexibility | Greenhouses, fixed work areas, potted crops | Specific Scenario Application |
| Multi-rotor UAV | Vertical take-off and landing, hovering capability, high maneuverability, simple structure | Short endurance, limited payload, poor wind resistance | Precision spraying, crop monitoring, small-area surveying | Specific Scenario Application |
| Fixed-wing UAV | Long endurance, high flight speed, strong wind resistance, larger payload | Requires runway/take-off area, cannot hover, complex operation | Large-area RS, farmland surveying, regional census | Specific Scenario Application |

| Sensor Category | Sensor Type | Technical Parameters | Typical Application Scenarios |
|---|---|---|---|
| Positioning & Attitude | RTK-GPS | Horizontal error <5 cm, update rate 1 Hz | Global positioning and path planning in open, plain fields |
| | LiDAR | Working range <200 m, accuracy 0.5–10 mm | Orchard row identification, dynamic obstacle (stones/animals) detection |
| | IMU | Roll/pitch angle error <0.1°, update rate >100 Hz | Real-time vehicle attitude monitoring, short-term positioning during GPS signal loss |
| Environment & Operational State | RGB Camera | Resolution 1920×1080, crop row segmentation error 3–5 cm | Crop row identification, visual pest/disease detection |
| | Multi-spectral Camera | NDVI measurement error <5% | Crop growth vigor assessment, water stress identification |
| | Soil Sensor (Moisture, pH, NPK) | Moisture measurement accuracy ±1%, pH error <0.1 pH | Soil fertility monitoring, variable-rate fertilization or irrigation decision |
| | Ultrasonic Sensor | Detection accuracy 92.20%–92.88%, working range <20 m | Proximity obstacle (ridges/farm machinery) warning |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).