Submitted: 25 February 2026

Posted: 27 February 2026

Abstract

Keywords:

I. Introduction

II. Proposed PV Power Forecasting Model

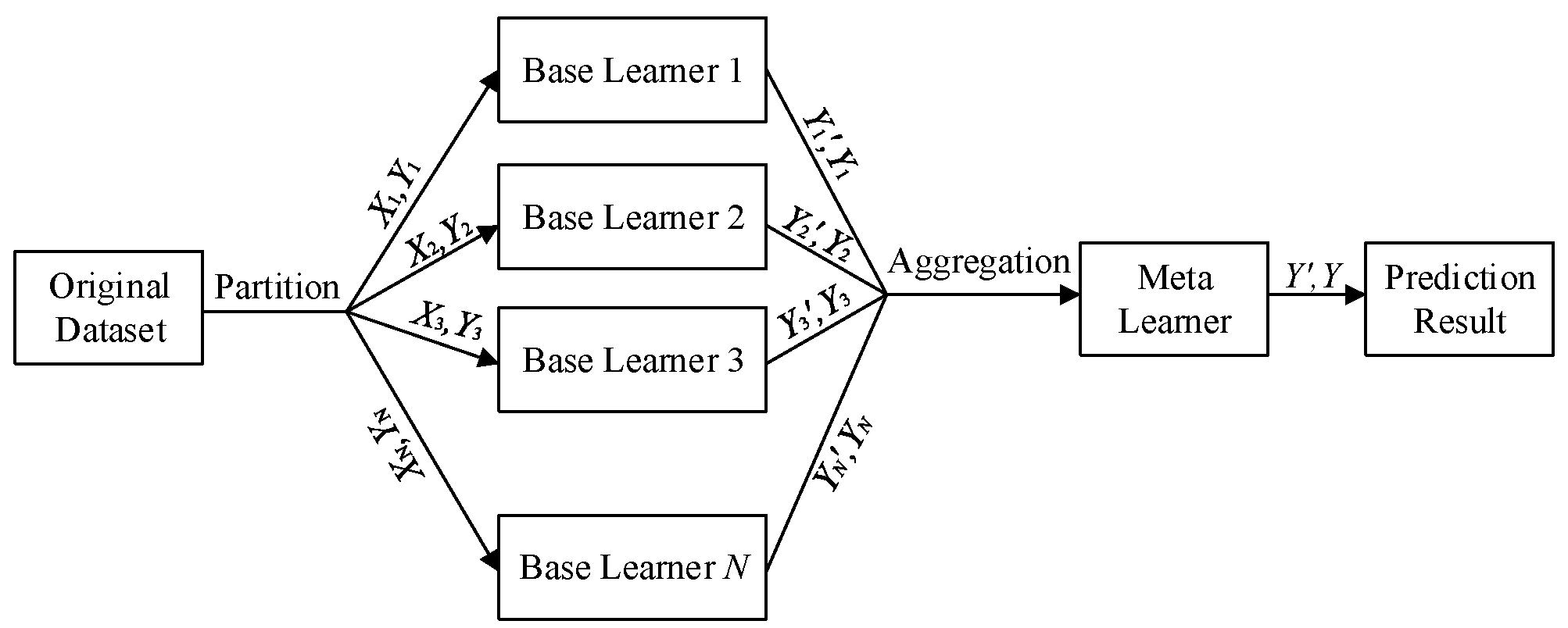

A. Principle of Stacking Ensemble Learning

B. Selection Basis of Primary Learners

III. Weight Optimization Algorithm for Ensemble Models Based on Double Q-Learning

A. Objective Function and Constraints

B. Double Q-Learning Mechanism
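As a point of reference for this heading, the following is a minimal sketch of the standard tabular Double Q-learning update. It is not the authors' formulation: how states, actions, and rewards are defined for the ensemble-weight problem is not recoverable from this section. The function name `double_q_update`, the dictionary-based tables, and the default `alpha` and `gamma` values are all illustrative assumptions.

```python
import random


def double_q_update(q_a, q_b, state, action, reward, next_state, actions,
                    alpha=0.1, gamma=0.9, rng=random):
    """Apply one tabular Double Q-learning update in place.

    q_a and q_b are dicts mapping (state, action) to a value estimate;
    missing entries are treated as 0.0. All names here are hypothetical.
    """
    # Pick at random which of the two tables is updated on this step.
    if rng.random() < 0.5:
        update, evaluate = q_a, q_b
    else:
        update, evaluate = q_b, q_a

    # Select the greedy next action with the table being updated ...
    best_next = max(actions, key=lambda a: update.get((next_state, a), 0.0))
    # ... but evaluate that action with the other table. Decoupling
    # selection from evaluation is what reduces overestimation bias.
    target = reward + gamma * evaluate.get((next_state, best_next), 0.0)

    old = update.get((state, action), 0.0)
    update[(state, action)] = old + alpha * (target - old)
    return update[(state, action)]
```

In a weight-optimization setting, an action could plausibly correspond to a small adjustment of one learner's weight and the reward to a reduction in validation error, but that mapping is an assumption and should be checked against the full paper.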

IV. Experimental Analysis

A. Data Source and Preprocessing

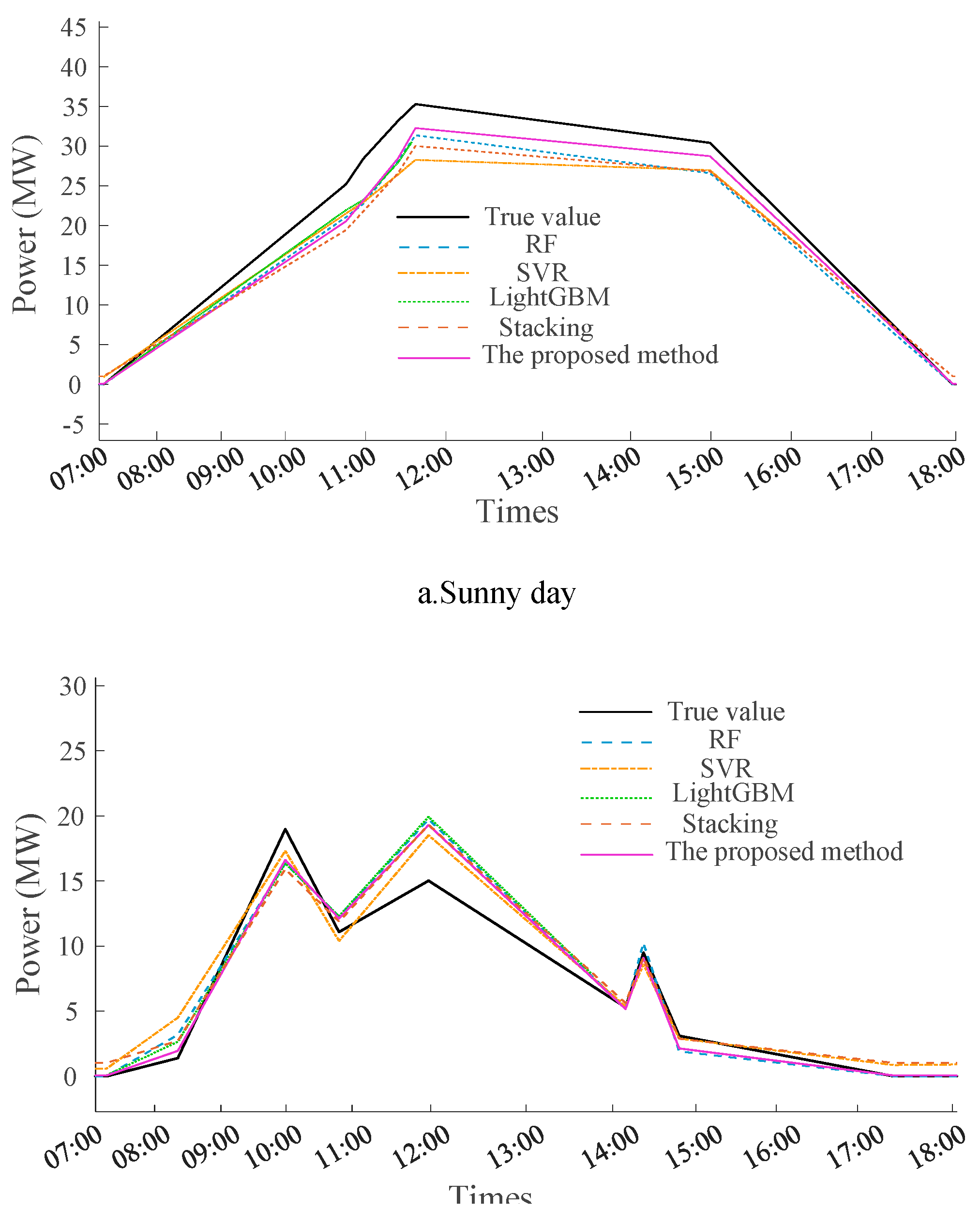

B. Comparison Models and Results Analysis

V. Conclusions and Prospects

Funding

| Algorithm | Applicable Data | Training Speed | Key Features | Limitations |
| --- | --- | --- | --- | --- |
| RF | Large-scale data | Relatively fast | Random sampling ensemble | Weak temporal modeling |
| SVR | Low-to-medium dimensions | Slow | Non-linear fitting via kernel trick | Computationally expensive |
| LightGBM | Large-scale data | Very fast | Efficient parallel training | Leaf-wise overfitting risk |

| Model | MAE | MSE | RMSE | R² |
| --- | --- | --- | --- | --- |
| RF | 1.5779 | 13.3648 | 3.6558 | 0.9288 |
| SVR | 1.9272 | 20.4287 | 4.5198 | 0.8912 |
| LightGBM | 1.5941 | 12.8810 | 3.5890 | 0.9314 |
| Stacking | 2.2478 | 14.3770 | 3.7917 | 0.9234 |
| The proposed method | 1.5281 | 12.5000 | 3.5355 | 0.9334 |
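The four columns in the results table are standard regression metrics. The sketch below shows how they are conventionally computed, under the assumption that the paper uses the usual definitions (this section does not state them). The function name `regression_metrics` and the sample values are hypothetical and are not taken from the paper's dataset.

```python
import math


def regression_metrics(y_true, y_pred):
    """Return MAE, MSE, RMSE and R² for two equal-length sequences."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    n = len(y_true)
    errors = [t - p for t, p in zip(y_true, y_pred)]

    mae = sum(abs(e) for e in errors) / n   # mean absolute error
    mse = sum(e * e for e in errors) / n    # mean squared error
    rmse = math.sqrt(mse)                   # same units as the target

    # R² compares the residual sum of squares with the variance of the
    # observations; it is undefined when the observations are constant.
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    ss_res = sum(e * e for e in errors)
    r2 = 1.0 - ss_res / ss_tot if ss_tot > 0 else float("nan")

    return {"MAE": mae, "MSE": mse, "RMSE": rmse, "R2": r2}


# Hypothetical observed and predicted power values (illustrative only).
metrics = regression_metrics([0.0, 2.0, 4.0, 6.0], [0.5, 1.5, 4.5, 5.0])
```

Because RMSE is the square root of MSE, the two columns can be cross-checked row by row: for the proposed method, `math.sqrt(12.5000)` rounds to 3.5355, which matches the reported RMSE.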

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).