Introduction

The global economy is undergoing a structural transformation driven by the convergence of technological innovation, demographic change, environmental imperatives, and geopolitical fragmentation (Brynjolfsson & McAfee, 2014; Schwab, 2017). As organizations adopt artificial intelligence, automate routine tasks, and adapt to new business models, the skills required for productive participation in the labour market are shifting rapidly. While much attention has been devoted to digital and technical competencies, there is growing recognition that distinctively human capabilities—often termed "soft," "non-cognitive," or "21st-century" skills—are the critical differentiators for individuals and organizations navigating an uncertain future (Deming, 2017; World Economic Forum [WEF], 2020).

Human-centric skills, as defined in the World Economic Forum's 2025 white paper New Economy Skills: Unlocking the Human Advantage, encompass creativity, analytical and systems thinking, resilience, emotional intelligence, leadership, empathy, collaboration, and the capacity for lifelong learning (WEF, 2025). Despite their acknowledged importance, these skills remain difficult to define, measure, develop, and credential—challenges that undermine their visibility in labour markets and limit their integration into education and training systems (Autor, 2015; Heckman & Kautz, 2012).

This paper offers a comprehensive and critical examination of human-centric skills in the context of workforce transformation. The analysis is guided by four central questions:

What is the current state of demand for and supply of human-centric skills in global labour markets, and what are the limitations of the evidence base?

What are the principal barriers to effective assessment, development, and credentialling of these skills, including issues of validity, reliability, and fairness?

What theoretical and practical frameworks can guide educators, employers, and policy-makers in cultivating and recognizing human-centric capabilities, and what are their boundary conditions?

What critical perspectives challenge the dominant skills discourse, and how might these inform more balanced approaches?

The paper proceeds as follows. First, it reviews the conceptual landscape of human-centric skills, distinguishing them from technical and foundational competencies and situating them within broader debates about the future of work. Second, it develops a theoretical framework integrating human capital theory, signaling theory, and situated learning perspectives. Third, it examines empirical evidence on the supply and demand of these skills, drawing on multiple sources and critically evaluating methodological limitations. Fourth, it analyzes the persistent challenges of assessment, development, and credentialling, with attention to validity, reliability, and fairness. Fifth, it engages with critical perspectives on the skills agenda. Sixth, it proposes a set of guiding principles informed by educational theory and organizational practice. Seventh, it presents illustrative case studies, including independent evaluations. Finally, it discusses implications for research, policy, and practice, and articulates a detailed research agenda with testable propositions.

Conceptual Foundations: Defining Human-Centric Skills

Terminology and Taxonomies

The construct of human-centric skills occupies contested conceptual terrain. Over the past two decades, a proliferation of overlapping terms—soft skills, non-cognitive skills, social and emotional learning (SEL), transferable skills, 21st-century competencies—has generated both intellectual richness and practical confusion (Duckworth & Yeager, 2015; Heckman & Kautz, 2012). Each framing reflects disciplinary traditions, policy contexts, and normative assumptions about what counts as valuable human capability.

The World Economic Forum's Global Skills Taxonomy organizes human-centric skills into four clusters: (a) creativity and problem solving (including analytical thinking, creative thinking, systems thinking, and mathematical reasoning); (b) emotional intelligence (motivation and self-awareness, resilience, flexibility and agility); (c) learning and growth (curiosity and lifelong learning, teaching, mentoring, dependability); and (d) collaboration and communication (empathy, active listening, leadership, speaking, writing, and languages) (WEF, 2025). This taxonomy provides a useful heuristic for research and practice, though the boundaries between categories are porous and the skills often interact dynamically.

A critical distinction is made between human-centric skills and foundational competencies such as literacy, numeracy, and digital fluency. While foundational skills enable learning and participation, human-centric skills are higher-order capabilities that support adaptability, innovation, and meaningful collaboration in complex, uncertain environments (Care et al., 2018; Organisation for Economic Co-operation and Development [OECD], 2018).

It remains an open question, however, whether "human-centric skills" constitutes a coherent construct or an umbrella term for disparate competencies with distinct antecedents, correlates, and consequences. Competency modeling research suggests that effective frameworks require clear behavioral indicators, empirical validation, and attention to context-specificity (Campion et al., 2011; Shippmann et al., 2000). The WEF taxonomy, while practically useful, has not been subjected to the rigorous psychometric validation expected of scientific constructs. Claims about "human-centric skills" as a unified category should therefore be interpreted with caution.

Construct Validity Concerns

Construct validity—the extent to which a measure captures the theoretical construct it purports to assess—is a fundamental concern for human-centric skills (Messick, 1995). Several issues warrant attention:

Heterogeneity: Creativity, resilience, empathy, and leadership may have different developmental trajectories, neural substrates, and labour market returns. Treating them as a single category risks obscuring important distinctions (Duckworth & Yeager, 2015).

Context-dependence: Skills that manifest in one context may not transfer to another. A person who demonstrates creativity in artistic domains may not exhibit creativity in scientific problem-solving (Barnett & Ceci, 2002). This raises questions about the generality of skill constructs.

Cultural variation: Constructs such as emotional intelligence and resilience have been developed primarily in Western contexts. Cross-cultural research reveals significant variation in how these constructs are defined, expressed, and valued (Matsumoto et al., 2008; Ungar, 2008). Measurement instruments validated in one culture may not be valid elsewhere.

These concerns do not invalidate the study of human-centric skills, but they counsel epistemic humility and attention to boundary conditions.

Potential Downsides and Trade-offs

The dominant discourse frames human-centric skills as unambiguously positive—more is better. However, research suggests potential downsides and contingencies:

Emotional intelligence: While generally associated with positive outcomes, emotional intelligence can be used manipulatively. Kilduff et al. (2010) describe the "strategic use" of emotional intelligence for self-interested purposes, raising ethical concerns.

Resilience: Excessive emphasis on individual resilience may obscure structural barriers and place undue burden on individuals to adapt to adverse conditions (Tennen & Affleck, 1998). Critics argue that resilience discourse can depoliticize systemic issues.

Creativity: High creativity is associated with nonconformity and risk-taking, which may be disruptive in certain organizational contexts (Mueller et al., 2012).

A balanced approach recognizes that skill development involves trade-offs and that the value of skills is contingent on context.

Theoretical Framework

This paper integrates three theoretical perspectives to analyze human-centric skills: human capital theory, signaling theory, and situated learning theory. Each offers distinct insights into skill development, recognition, and labour market dynamics.

Human Capital Theory

Human capital theory, as developed by Becker (1964), emphasizes the productive value of individual knowledge and skills for labour market outcomes. Investment in education and training enhances productivity, leading to higher wages and better employment prospects. While originally focused on cognitive abilities and formal schooling, the framework has been extended to encompass non-cognitive or "soft" skills (Heckman & Kautz, 2012; Heckman et al., 2006).

From a human capital perspective, human-centric skills are investments that enhance worker productivity. The returns to these investments depend on skill relevance, transferability, and employer recognition. The theory predicts that employers will invest in general skills (transferable across firms) less than in firm-specific skills, because workers can take general skills to competitors. This has implications for who bears the cost of skill development and how portable credentials might alter incentive structures.

Recent research has demonstrated that non-cognitive skills predict employment, earnings, educational attainment, and a range of social outcomes, often as strongly as cognitive abilities (Heckman & Kautz, 2012). Deming (2017) found that jobs requiring high levels of social skills grew substantially between 1980 and 2012, and that workers with strong social skills earned wage premiums.

Signaling Theory

Signaling theory, originating with Spence (1973), offers a complementary perspective. In this view, credentials serve not primarily to certify skills but to signal underlying attributes—ability, perseverance, conscientiousness—that are difficult for employers to observe directly. Education and credentials reduce information asymmetry in labour markets by providing a credible signal of worker quality.

This distinction has important implications for credentialling human-centric skills. If credentials function primarily as signals, then their value depends on their credibility, scarcity, and correlation with unobserved attributes. A proliferation of micro-credentials without robust validation may undermine signaling value. Conversely, if credentials genuinely certify skills, then their value depends on accurate assessment and employer recognition.
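The dependence of signaling value on credibility can be illustrated with a simple application of Bayes' rule. The sketch below is not drawn from the sources cited here; the function name and all probabilities are hypothetical, chosen only to show how a credential that low-skill candidates can also obtain easily conveys little information to an employer.

```python
def posterior_high_skill(prior: float,
                         p_cred_given_high: float,
                         p_cred_given_low: float) -> float:
    """Return P(high skill | holds credential) using Bayes' rule.

    prior              -- employer's prior belief that a candidate is high-skill
    p_cred_given_high  -- share of high-skill candidates who hold the credential
    p_cred_given_low   -- share of low-skill candidates who hold the credential
    """
    evidence = prior * p_cred_given_high + (1 - prior) * p_cred_given_low
    if evidence == 0:
        raise ValueError("No candidate holds the credential under these inputs.")
    return prior * p_cred_given_high / evidence


# Hypothetical scenario 1: a rigorously validated credential that few
# low-skill candidates manage to obtain.
rigorous = posterior_high_skill(prior=0.3, p_cred_given_high=0.8, p_cred_given_low=0.1)

# Hypothetical scenario 2: an easily obtained micro-credential that most
# candidates hold regardless of skill.
diluted = posterior_high_skill(prior=0.3, p_cred_given_high=0.9, p_cred_given_low=0.7)

print(f"Rigorous credential: P(high skill | credential) = {rigorous:.2f}")
print(f"Diluted credential:  P(high skill | credential) = {diluted:.2f}")
```

Under these assumed numbers, the rigorous credential moves the employer's belief well above the 0.3 prior, whereas the diluted credential leaves it close to the prior. The point is qualitative: the informativeness of a credential depends on how much more likely skilled candidates are to hold it, not on how many credentials exist.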

The tension between human capital and signaling perspectives is empirically difficult to resolve, and both mechanisms likely operate (Autor, 2015). For human-centric skills, the challenge is particularly acute: these skills are difficult to observe and verify, making signaling mechanisms especially important.

Situated Learning and Transfer

Situated learning theory, articulated by Lave and Wenger (1991), challenges the assumption that skills are abstract, decontextualized competencies that transfer readily across settings. Instead, learning is viewed as fundamentally situated in social practice. Knowledge and skills are not simply acquired but are co-constructed through participation in communities of practice.

This perspective raises critical questions for human-centric skill development and credentialling. If creativity or collaboration are context-dependent, what is the meaning of a generic "creativity" credential? Barnett and Ceci (2002) reviewed the transfer literature and found that transfer is often limited, especially for "far" transfer across dissimilar contexts.

The implication is that skill development interventions must attend to context, and credentials should specify the conditions under which skills were demonstrated. Portable credentials may overstate generalizability if they do not account for situational factors.

Integrating Perspectives

These three perspectives are not mutually exclusive but offer complementary lenses:

Human capital theory emphasizes the productive value of skills and the returns to investment.

Signaling theory highlights the role of credentials in reducing information asymmetry and the importance of credibility.

Situated learning theory foregrounds context-dependence and the challenges of transfer.

An integrated framework recognizes that human-centric skills have productive value (human capital), that credentials must be credible to function as effective signals (signaling), and that skill expression is context-dependent (situated learning). Effective interventions and credentialling systems must attend to all three dimensions.

Supply and Demand of Human-Centric Skills: Empirical Evidence

Employer Demand: Survey and Labour Market Data

Employer surveys consistently identify human-centric skills as among the most valued but hardest to find in the workforce. The World Economic Forum's Future of Jobs Report 2025 finds that nearly 40% of core skills required for jobs will be disrupted in the next five years, with employers emphasizing analytical thinking, creativity, resilience, motivation, and curiosity as priorities for 2030 (WEF, 2025). Similarly, survey data indicate that while collaboration and teamwork are viewed as relative strengths, curiosity, resilience, and lifelong learning are perceived as weak points across all regions (WEF, 2025).

These findings align with peer-reviewed research. Deming (2017) analyzed US labour market data and found that employment in occupations requiring high social skills grew by 24% between 1980 and 2012, compared with 11% for occupations low in social skills. Workers in high social-skill occupations earned significant wage premiums, even after controlling for cognitive ability and education. Similarly, Deming and Kahn (2018) analyzed job postings and found that employers increasingly list social skills among job requirements, with such postings associated with higher wages.

However, methodological limitations should be acknowledged. Employer surveys, including those conducted by the WEF, rely on self-report data from executives and HR professionals, which may reflect espoused values rather than actual hiring behavior. Response bias, social desirability, and sampling limitations affect generalizability. Labour market analysis of job postings captures stated requirements but may not reflect actual selection criteria or job performance.

The Gap Between Rhetoric and Practice

A critical finding is the gap between employers' stated priorities and their hiring practices. An Indeed analysis of US job postings from May 2024 to April 2025, as reported in the WEF white paper, reveals that only 72% of postings explicitly mention at least one human-centric skill, with communication, leadership, and dependability most frequently cited. Creativity and curiosity, the skills employers project as most important for the future, are among the least mentioned (WEF, 2025).

This pattern is consistent with broader research on skills-based hiring. Fuller et al. (2022) documented widespread "degree inflation" in job postings, where employers require bachelor's degrees for positions that do not require degree-level skills, effectively screening out qualified candidates. Blair et al. (2020) found that "STARs" (workers Skilled Through Alternative Routes) are often overlooked despite possessing relevant competencies.

The gap between rhetoric and practice may reflect several factors: lack of valid assessment tools, inertia in hiring practices, risk aversion, and the use of credentials as screening devices regardless of skill content.

Supply: Education and Training Systems

Education systems have begun to prioritize human-centric skills, but progress is uneven. A cross-national study of 152 countries found that communication, creativity, critical thinking, and problem solving are frequently cited in policy documents, yet clear pedagogical and assessment guidance is often lacking (Care et al., 2018). The OECD's Programme for International Student Assessment (PISA) 2022 creative thinking assessment found that only half of students in OECD countries could generate original ideas in familiar contexts, with significant socioeconomic and gender gaps (OECD, 2024a).

Teacher preparedness is a persistent bottleneck. According to the OECD's Survey on Social and Emotional Skills (SSES) 2023, 30% of teachers of 15-year-olds had received no training in incorporating social and emotional skills into classroom practice, and 40% lacked training to monitor these skills regularly (OECD, 2024b). Many teachers feel less confident fostering social and emotional skills than delivering academic content, particularly at the secondary level.

Despite these challenges, there is evidence of growing learner investment in human-centric skills. Coursera data, as reported in the WEF white paper, show a steady increase in learning hours devoted to analytical thinking, creative thinking, resilience, empathy, and lifelong learning between 2020 and 2025 (WEF, 2025). However, the relationship between course completion and actual skill acquisition remains unclear, as most online platforms do not employ rigorous competency assessments.

Regional and Industry Variation

Demand for and supply of human-centric skills vary by region and industry. The WEF's Executive Opinion Survey 2025 reveals that Sub-Saharan Africa rates above average in creativity, resilience, curiosity, and collaboration, while Northern America and Oceania emphasize creativity and problem solving but lag in teamwork. Eastern Asia and Latin America and the Caribbean express the greatest optimism about overall human-skills readiness (WEF, 2025).

Industry patterns are equally differentiated. Sectors such as insurance, telecommunications, and education place high value on resilience, analytical thinking, and leadership, while real estate, supply chain, and retail emphasize dependability and attention to detail. Creative thinking is most visible in media and entertainment, and analytical thinking is concentrated in financial services and technology (WEF, 2025).

These patterns align with occupational analysis frameworks such as O*NET, which categorizes occupations by skill requirements and work contexts. O*NET data confirm that social and emotional skills vary substantially across occupations and are particularly salient in service, managerial, and creative roles (National Center for O*NET Development, 2023).

The Fragility and Durability of Human-Centric Skills

Sensitivity to External Shocks

A common assumption is that human-centric skills, once acquired, are "durable" or transferable across contexts. However, emerging evidence suggests these skills are surprisingly fragile and sensitive to external shocks. During the COVID-19 pandemic, skills requiring frequent interpersonal engagement—such as teaching and resilience—declined sharply, while empathy and active listening proved more robust, possibly reflecting heightened social connection during crisis (WEF, 2025).

This pattern is consistent with research on skill depreciation. Deming and Noray (2020) found that technical skills depreciate rapidly, especially in STEM fields. Human-centric skills may depreciate differently—not through obsolescence but through disuse. Without sustained practice in social environments, interpersonal skills may atrophy.

Time to Skill Acquisition

Developing human-centric skills requires sustained effort. Data reported in the WEF white paper indicate that while approximately 25% of learners show progress within weeks, most need several months of deliberate practice to become proficient (WEF, 2025). This aligns with research on deliberate practice, which emphasizes that expertise develops through extended, focused practice with feedback (Ericsson et al., 1993).

The wide gap between early and late learners suggests that some benefit from prior experience, while others depend on structured opportunities and organizational support. The implication is that human-centric skills are not simply innate traits but are shaped by context, culture, and investment.

Resilience to Automation

Despite their fragility in the face of social disruption, human-centric skills are highly resistant to automation. Analysis reported in the WEF white paper estimates that tasks tied to empathy, creativity, leadership, and curiosity have only about 13% potential for AI transformation, as they depend on human judgment, context, and lived experience that machines cannot replicate (WEF, 2025).

This finding aligns with broader research on automation and the future of work. Frey and Osborne (2017) estimated that 47% of US employment was at high risk of automation, but tasks requiring social intelligence, creativity, and perception were least susceptible. Autor (2015) argued that automation complements rather than substitutes for non-routine cognitive and interpersonal tasks, potentially increasing demand for human-centric skills.

Barriers to Assessment, Development, and Credentialling

Invisibility in Hiring and Recognition

A fundamental barrier is the invisibility of human-centric skills in hiring and recognition systems. Despite their acknowledged importance, these skills are often treated as "givens" rather than explicit criteria. Data reported in the WEF white paper show that leadership, motivation, and dependability are the most frequently recognized in workplaces, while creative thinking and curiosity—though highly valued—are least acknowledged (WEF, 2025).

This invisibility may reflect deeper structural issues. Traditional hiring processes rely heavily on credentials, work experience, and structured interviews—methods poorly suited to assessing complex, context-dependent skills. Without valid assessment tools, employers default to proxies such as educational pedigree or prior job titles.

Challenges of Assessment: Validity, Reliability, and Fairness

Human-centric skills are difficult to assess for several reasons. First, they are context-dependent, meaning effective behavior in one setting may not transfer to another. Second, they are multidimensional, encompassing cognitive, emotional, and behavioral components. Third, they are often expressed in subtle or ambiguous ways that resist standardization (Duckworth & Yeager, 2015; Heckman & Kautz, 2012).

Validity

Validity concerns whether an assessment measures what it claims to measure and supports the intended inferences (Messick, 1995). Kane (2013) articulated an argument-based approach to validity, emphasizing that validation requires evidence for each inference in the interpretive chain—from observed performance to claims about underlying constructs and predictions of future behavior.

For human-centric skills, validity evidence is often lacking. Self-report measures may not predict actual behavior. Performance assessments may capture task-specific competence without establishing generalizability. The relationship between assessment scores and meaningful outcomes (e.g., job performance, career success) is often assumed rather than demonstrated.

Reliability

Reliability concerns the consistency of assessment results across occasions, raters, and contexts. Performance-based assessments of human-centric skills often suffer from low inter-rater reliability, as evaluators may interpret behaviors differently (Lane & Stone, 2006). Context effects further complicate reliability: a person may demonstrate high creativity in one task but not another.

Strategies to enhance reliability include structured rubrics, multiple raters, and aggregation across occasions. However, these approaches increase cost and complexity, limiting scalability.
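Two standard psychometric quantities make the reliability trade-off concrete: Cohen's kappa, which measures agreement between two raters beyond what chance would produce, and the Spearman-Brown formula, which projects the reliability of a score averaged over k raters. The following is a minimal sketch rather than a method taken from the cited sources; the rubric scores and the single-rater reliability of 0.5 are hypothetical, and the Spearman-Brown projection assumes that raters are interchangeable (parallel) measures.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa: chance-corrected agreement between two raters."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must score the same non-empty set of cases.")
    n = len(rater_a)
    # Proportion of cases on which the two raters gave the same score.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's marginal distribution.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        raise ValueError("Kappa is undefined when both raters use a single category.")
    return (observed - expected) / (1 - expected)


def spearman_brown(single_rater_reliability, k):
    """Projected reliability of the mean of k parallel raters."""
    r = single_rater_reliability
    return k * r / (1 + (k - 1) * r)


# Hypothetical rubric scores (1 = emerging, 2 = developing, 3 = proficient)
# given by two raters to the same eight collaboration tasks.
rater_a = [3, 2, 3, 1, 2, 3, 1, 2]
rater_b = [3, 2, 2, 1, 2, 3, 2, 2]
kappa = cohens_kappa(rater_a, rater_b)
print(f"Cohen's kappa: {kappa:.2f}")

# Projected reliability when averaging over more raters, assuming a
# hypothetical single-rater reliability of 0.5.
for k in (1, 2, 3, 5):
    print(f"{k} rater(s): projected reliability = {spearman_brown(0.5, k):.2f}")
```

The projection shows diminishing returns: each additional rater raises reliability by less than the previous one, which is one way of expressing the cost and scalability constraint noted above.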

Fairness

Fairness concerns whether assessments function equitably across demographic groups. Self-report measures of social and emotional skills are susceptible to reference group effects, where respondents from different cultural or socioeconomic backgrounds interpret scale anchors differently (Duckworth & Yeager, 2015). This can produce spurious group differences unrelated to actual skill levels.

AI-powered assessments introduce additional fairness concerns. Algorithmic bias can arise when training data reflect historical inequities or when proxy variables correlate with protected characteristics (O'Neil, 2016; Hutchinson & Mitchell, 2019). Without careful validation, technology-enabled assessments may perpetuate or amplify existing disparities.
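One simple screening check for disparate outcomes is the adverse impact ratio: each group's pass rate divided by the highest group's pass rate. The sketch below is illustrative and is not drawn from the sources cited here. The 0.8 threshold follows the "four-fifths" rule of thumb from the US Uniform Guidelines on Employee Selection Procedures; the group labels and counts are hypothetical. A ratio below the threshold is a prompt for further investigation, not evidence of bias in itself, and passing the check does not establish fairness.

```python
def selection_rates(outcomes):
    """Map each group to its pass rate.

    outcomes -- dict of group label -> (number passing, number assessed)
    """
    rates = {}
    for group, (passed, assessed) in outcomes.items():
        if assessed <= 0:
            raise ValueError(f"Group {group!r} has no assessed candidates.")
        rates[group] = passed / assessed
    return rates


def adverse_impact_ratios(outcomes):
    """Each group's pass rate relative to the highest group's pass rate."""
    rates = selection_rates(outcomes)
    best = max(rates.values())
    if best == 0:
        raise ValueError("No group has any passing candidates.")
    return {group: rate / best for group, rate in rates.items()}


# Hypothetical results from an automated assessment of a human-centric skill.
hypothetical_outcomes = {
    "group_a": (60, 100),  # 60 of 100 candidates passed
    "group_b": (42, 100),  # 42 of 100 candidates passed
}

FOUR_FIFTHS_THRESHOLD = 0.8  # rule-of-thumb screening threshold
ratios = adverse_impact_ratios(hypothetical_outcomes)
flagged = [group for group, ratio in ratios.items() if ratio < FOUR_FIFTHS_THRESHOLD]

for group, ratio in ratios.items():
    print(f"{group}: impact ratio = {ratio:.2f}")
print("Groups flagged for review:", flagged)
```

A check of this kind compares outcomes only. It does not address whether the assessment measures the intended construct equally well across groups, which requires the validity evidence discussed earlier in this section.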

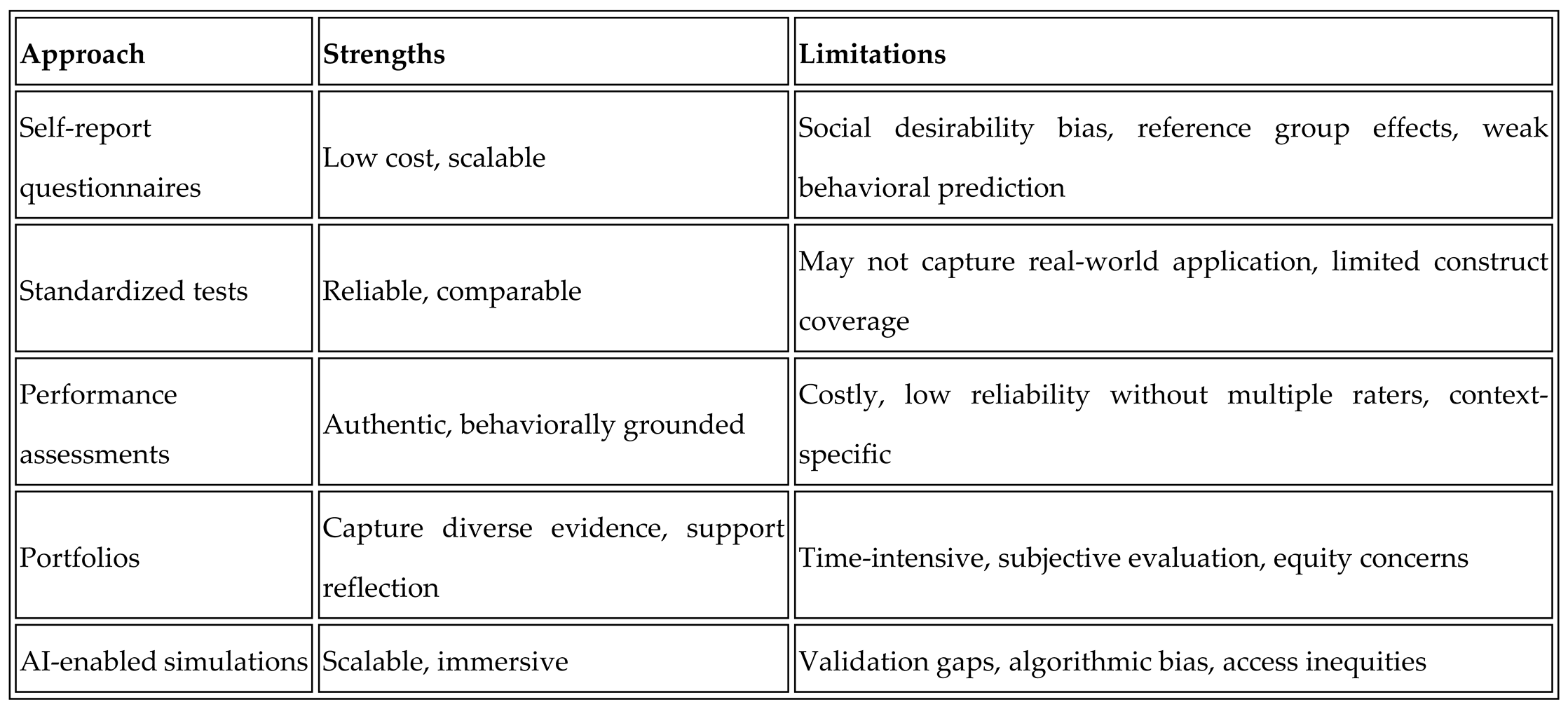

Trade-offs Among Assessment Approaches

Different assessment approaches involve trade-offs:

Effective assessment systems may need to combine approaches, using standardized benchmarks for comparability, performance tasks for authenticity, and reflective tools for growth—while attending to validity, reliability, and fairness throughout.

Development: Pedagogy and Organizational Practice

Developing human-centric skills requires more than passive exposure. Evidence supports the effectiveness of structured, experiential, and reflective approaches.

Social and Emotional Learning

Meta-analytic evidence demonstrates that well-implemented SEL programs improve social-emotional skills, attitudes, and academic outcomes. Durlak et al. (2011) found that students in SEL programs showed significant gains in social-emotional competencies and academic achievement, with effects persisting over time. Taylor et al. (2017) confirmed long-term benefits in follow-up assessments.

However, program quality matters. Effective programs are systematic, use evidence-based curricula, and provide ongoing teacher support. Programs that are poorly implemented or lack fidelity to design principles may show minimal effects.

Experiential and Problem-Based Learning

Experiential learning, as articulated by Kolb (1984), emphasizes learning through direct experience, reflection, and application. Problem-based learning (PBL) engages students in solving complex, authentic problems, often in collaborative teams (Hmelo-Silver, 2004). Both approaches have been shown to develop critical thinking, collaboration, and problem-solving skills.

However, critiques of these approaches should be acknowledged. Kirschner et al. (2006) argued that minimally guided instructional approaches can be less effective than direct instruction for novice learners, particularly for acquiring foundational knowledge. Effective skill development may require balancing experiential approaches with structured guidance.

Deliberate Practice and Feedback

Research on expertise development emphasizes the role of deliberate practice—focused, effortful practice with clear goals and immediate feedback (Ericsson et al., 1993). Human-centric skills may develop through similar mechanisms: repeated engagement in challenging interpersonal situations, with opportunities for feedback and reflection.

Schön (1983) described the development of professional expertise through "reflection-in-action"—the capacity to think critically during practice and adjust behavior accordingly. Creating environments that support reflection—through mentoring, coaching, and structured debriefs—is essential for skill development.

Organizational Conditions

Workplace culture is a critical enabler. Psychological safety—the belief that one can take interpersonal risks without fear of negative consequences—supports learning, voice, and innovation (Edmondson, 1999). Organizations that cultivate psychological safety are more likely to see employees experiment, seek feedback, and develop human-centric skills.

However, creating psychological safety is challenging, particularly in hierarchical or high-pressure environments. Leadership behavior, team norms, and organizational policies all influence safety perceptions.

Credentialling: Signaling, Portability, and Trust

Credentialling human-centric skills remains a critical gap. Traditional qualifications such as degrees and diplomas signal competence but often capture what people know rather than how they adapt, collaborate, and lead.

The Micro-Credential Landscape

Micro-credentials—short, focused credentials certifying specific skills—have emerged as a response to the limitations of traditional qualifications. Digital badges, endorsed by organizations such as Mozilla and IMS Global, provide visual representations of achievements with embedded metadata describing learning outcomes and assessment methods.

However, the micro-credential landscape is fragmented. Kato et al. (2020) reviewed the emergence of alternative credentials and found significant variation in quality, rigor, and employer recognition. Oliver (2019) noted that without shared standards, credentials risk becoming "credential clutter" that confuses rather than informs.

Signaling and Credibility

From a signaling perspective, credentials are valuable only if they credibly distinguish holders from non-holders. Credential proliferation may undermine signaling value if credentials are easily obtained or lack rigorous validation. The challenge is to establish credentials that are meaningful (reflecting genuine competence), portable (recognized across contexts), and trusted (validated by credible third parties).

National and International Frameworks

Qualifications frameworks provide systematic approaches to classifying and comparing credentials. The European Qualifications Framework (EQF), for example, defines eight levels of learning outcomes spanning knowledge, skills, and competence. National vocational qualifications systems in countries such as Australia, the United Kingdom, and Germany provide further examples.

However, integrating human-centric skills into these frameworks is challenging. Most frameworks emphasize knowledge and technical skills, with less attention to social-emotional competencies. Developing valid, reliable, and fair assessments of human-centric skills at scale remains an unsolved problem.

Risks of Credential Inflation

Collins (1979) documented the historical expansion of credentialling requirements—"credential inflation"—whereby jobs that previously required lower qualifications come to demand higher ones, often without corresponding changes in job content. This dynamic can exclude qualified workers, increase education costs, and reduce social mobility.

The proliferation of micro-credentials for human-centric skills carries similar risks. If credentials become requirements for entry-level positions, workers without access to credentialling opportunities may be disadvantaged. Equity considerations must inform credential design and recognition.

Critical Perspectives on the Skills Agenda

While this paper has focused on the challenges of assessing, developing, and credentialling human-centric skills, it is important to engage with broader critiques of the "skills agenda" in education and employment.

Reductionism and Instrumentalism

Critics argue that the skills discourse reduces complex human capacities to checklists and behavioral indicators, ignoring the holistic, contextual, and relational nature of competence (Biesta, 2015; Wheelahan, 2010). By focusing on discrete, measurable skills, the agenda may overlook deeper forms of knowledge, understanding, and judgment that are essential for professional practice.

Biesta (2009) articulated a concern that the emphasis on skills and measurable outcomes reflects an "instrumentalist" view of education, prioritizing economic utility over broader purposes such as personal development, democratic participation, and social justice. Education, in this view, is not merely preparation for work but cultivation of the whole person.

Whose Skills? Whose Interests?

This paper has relied substantially on employer surveys and industry reports to define skill demands. But whose interests are served by these definitions? Workers, communities, and educators may foreground different values, such as civic engagement, critical consciousness, and solidarity, that are less visible in employer-driven frameworks.

Ball (2003) described the "terrors of performativity" in education, whereby the emphasis on measurable outcomes distorts educational practice and narrows the curriculum. If skills are defined by employer surveys and assessed through standardized instruments, there is a risk that teaching will focus on measurable behaviors at the expense of deeper learning.

The Limits of Individual Responsibility

The skills discourse often places responsibility for development on individuals: workers must continuously upskill and reskill to remain employable. This framing can obscure structural barriers—discrimination, lack of access to education, precarious employment—that limit opportunities regardless of individual skill levels.

Resilience discourse, in particular, has been criticized for depoliticizing systemic issues. Emphasizing individual capacity to "bounce back" may divert attention from the conditions that create adversity in the first place (Tennen & Affleck, 1998; Ungar, 2008).

Balancing Perspectives

These critiques do not invalidate the study of human-centric skills but counsel a more balanced approach. Skill development initiatives should:

Attend to the holistic development of persons, not merely the acquisition of discrete competencies.

Consider multiple stakeholder perspectives, including those of workers, communities, and educators.

Recognize and address structural barriers to opportunity.

Avoid narrow instrumentalism by situating skills within broader educational and social purposes.

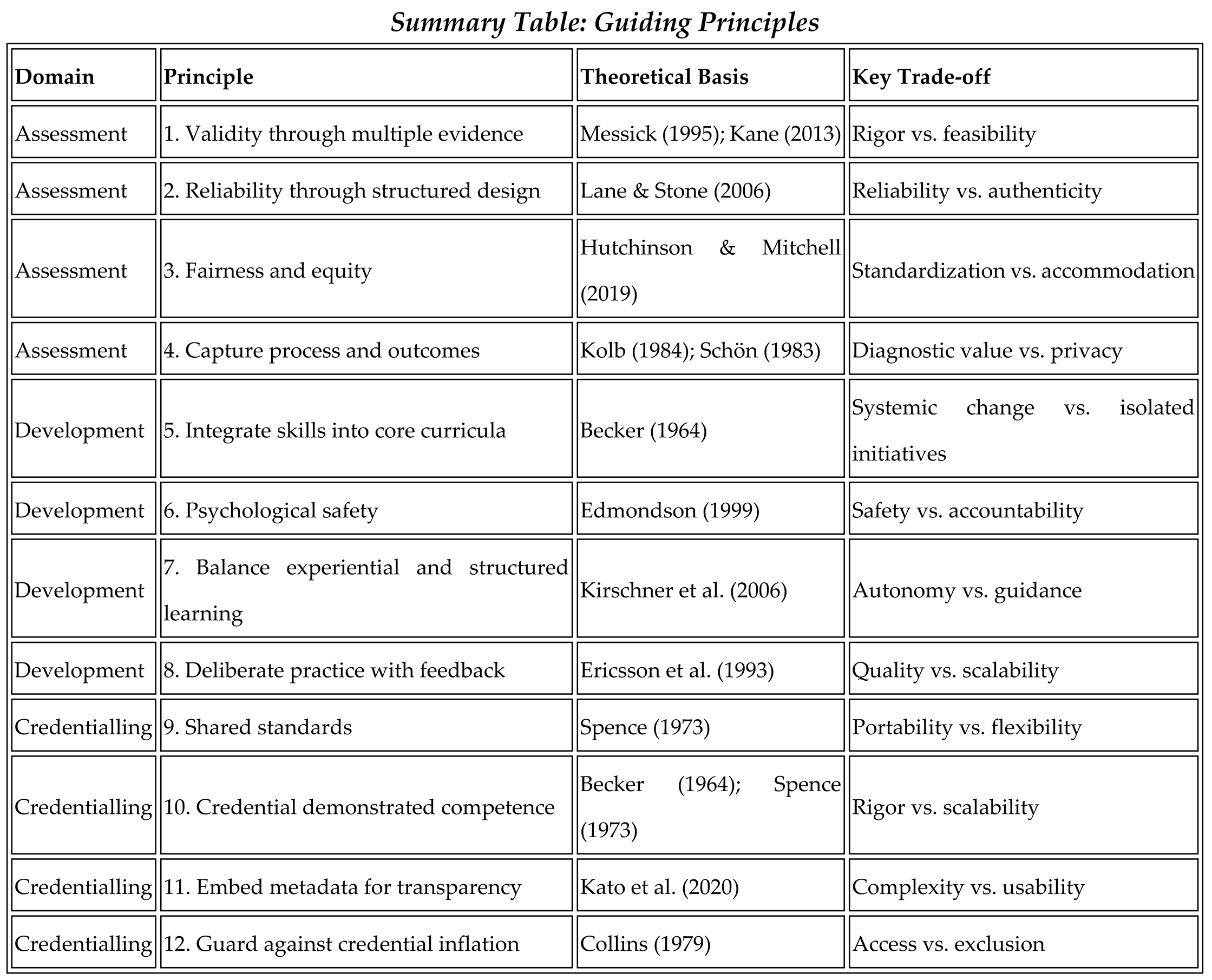

Guiding Principles for Cultivating Human-Centric Skills

Drawing on evidence from peer-reviewed research, the WEF's 2025 white paper, and the theoretical framework developed above, this section proposes a set of guiding principles for assessing, developing, and credentialling human-centric skills. Each principle is explicitly connected to underlying theory and accompanied by consideration of trade-offs and boundary conditions.

Principles for Assessment

Principle 1: Establish Validity Through Multiple Forms of Evidence

Theoretical grounding: Validity is not a property of a test but of the inferences drawn from test scores (Kane, 2013; Messick, 1995). Establishing validity requires evidence across the interpretive chain: that assessments capture the intended construct, that scores generalize across contexts, and that scores predict meaningful outcomes.

Recommendation: Combine multiple forms of evidence—content analysis, response process data, internal structure, relationships with other variables, and consequences of use—to support validity claims. No single assessment method is sufficient; triangulation across approaches enhances confidence.

Trade-offs: Comprehensive validation is resource-intensive and may not be feasible for all assessment contexts. Pragmatic approaches should prioritize the most consequential inferences.

Principle 2: Attend to Reliability Through Structured Assessment Design

Theoretical grounding: Reliability is essential for validity; unreliable assessments cannot support valid inferences. Performance assessments of human-centric skills often suffer from low reliability due to rater variability and context effects (Lane & Stone, 2006).

Recommendation: Use structured rubrics with clear behavioral anchors, train raters to calibrate judgments, and aggregate scores across multiple occasions or raters where possible. Weigh the reliability gained from added structure against the authenticity it may cost.

Trade-offs: Highly structured assessments may sacrifice authenticity; highly authentic assessments may sacrifice reliability. Balancing these tensions requires thoughtful design.
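The recommendation to aggregate across raters can be illustrated with the Spearman-Brown prophecy formula, a standard psychometric result that is not drawn from the sources cited in this paper. The following is a minimal sketch, assuming raters are interchangeable (parallel) and using a purely illustrative single-rater reliability of 0.50; the function name is hypothetical.

```python
def spearman_brown(single_rater_reliability: float, n_raters: int) -> float:
    """Projected reliability of the mean score across n parallel raters."""
    r = single_rater_reliability
    return n_raters * r / (1 + (n_raters - 1) * r)


# Illustrative value only: one rater whose scores have reliability 0.50.
projected = {k: round(spearman_brown(0.50, k), 2) for k in (1, 2, 4)}
print(projected)  # {1: 0.5, 2: 0.67, 4: 0.8}
```

Under these assumptions, moving from one to four raters raises projected reliability from 0.50 to 0.80, which is why aggregation is often the cheapest way to recover reliability without making the task less authentic.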

Principle 3: Ensure Fairness and Equity

Theoretical grounding: Fairness requires that assessments function equitably across groups and that scores have the same meaning for all examinees (Hutchinson & Mitchell, 2019). Bias in assessments can perpetuate inequities.

Recommendation: Conduct differential item functioning analyses to detect bias. Validate AI-enabled assessments for algorithmic fairness. Provide accommodations and ensure equitable access to assessment opportunities.

Boundary conditions: Perfect fairness may be unattainable; the goal is to minimize harm and continuously monitor for bias.
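The paper does not specify a method for differential item functioning (DIF) analysis. One widely used screening approach is the Mantel-Haenszel procedure, sketched below under that assumption. Examinees are first matched into strata by total score; within each stratum the odds of answering the item correctly are compared between a reference group and a focal group. All counts and names here are made up for illustration.

```python
import math
from typing import Iterable, Tuple

# One stratum of examinees matched on total score:
# (reference correct, reference incorrect, focal correct, focal incorrect)
Stratum = Tuple[int, int, int, int]


def mantel_haenszel_odds_ratio(strata: Iterable[Stratum]) -> float:
    """Common odds ratio across matched strata (1.0 means no DIF signal)."""
    numerator = 0.0
    denominator = 0.0
    for a, b, c, d in strata:
        n = a + b + c + d
        if n == 0:
            continue  # skip empty strata
        numerator += a * d / n
        denominator += b * c / n
    # Raises ZeroDivisionError if no stratum has both a reference-incorrect
    # and a focal-correct response; real analyses need a guard for that case.
    return numerator / denominator


def mh_delta(odds_ratio: float) -> float:
    """ETS delta scale; negative values favour the reference group."""
    return -2.35 * math.log(odds_ratio)


# Hypothetical counts for a single item, two score strata.
strata = [(30, 20, 20, 30), (40, 10, 30, 20)]
odds_ratio = mantel_haenszel_odds_ratio(strata)
print(round(odds_ratio, 2), round(mh_delta(odds_ratio), 2))
```

An odds ratio well above 1 (and a correspondingly negative delta) flags the item for review; it does not by itself establish bias, since a flagged item may still measure a construct-relevant difference.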

Principle 4: Capture Process as Well as Outcomes

Theoretical grounding: Situated learning and reflective practice emphasize the importance of process—how individuals approach problems, adapt strategies, and learn from experience (Kolb, 1984; Schön, 1983).

Recommendation: Track thinking processes through learning analytics, digital portfolios, and reflective prompts, not just final products. Provide formative feedback that supports growth.

Trade-offs: Process assessment is more intrusive and may raise privacy concerns. Balance diagnostic value with ethical considerations.

Principles for Development

Principle 5: Integrate Human-Centric Skills into Core Curricula

Theoretical grounding: Human capital theory predicts that explicit investment in skills enhances productivity and outcomes (Becker, 1964). Skills that are peripheral or optional receive less investment.

Recommendation: Treat human-centric skills as core learning outcomes, not add-ons. Embed structured opportunities for skill development through experiential, collaborative, and reflective activities.

Boundary conditions: Integration requires capacity building for teachers and trainers, curriculum reform, and assessment alignment. Without systemic change, isolated initiatives may have limited impact.

Principle 6: Create Psychologically Safe Learning Environments

Theoretical grounding: Psychological safety supports experimentation, voice, and learning (Edmondson, 1999). Without safety, learners avoid risk and conceal errors.

Recommendation: Foster environments where learners can take interpersonal risks without fear of negative consequences. Model vulnerability, respond constructively to mistakes, and establish norms of respect.

Boundary conditions: Psychological safety is necessary but not sufficient; it must be combined with high standards and accountability for learning. In some contexts—such as safety-critical environments—the balance between psychological safety and error prevention requires careful attention.

Principle 7: Balance Experiential Learning with Structured Guidance

Theoretical grounding: Experiential learning is powerful but not universally superior. Novice learners may benefit from direct instruction before engaging in complex, ill-structured problems (Kirschner et al., 2006).

Recommendation: Sequence learning experiences to build foundational knowledge before engaging in complex problem-solving. Provide scaffolds and gradually release responsibility as learners develop competence.

Trade-offs: Over-scaffolding may limit autonomy and intrinsic motivation; under-scaffolding may overwhelm novices. Adaptive design is essential.

Principle 8: Provide Deliberate Practice with Feedback

Theoretical grounding: Expertise develops through deliberate practice—focused, effortful engagement with clear goals and immediate feedback (Ericsson et al., 1993).

Recommendation: Create opportunities for repeated practice in challenging interpersonal situations, with structured feedback from coaches, peers, or technology. Encourage reflection on practice.

Boundary conditions: Feedback must be timely, specific, and actionable. Poor-quality feedback may be counterproductive.

Principles for Credentialling

Principle 9: Establish Shared Standards for Portability and Trust

Theoretical grounding: Signaling theory predicts that credentials are valuable only if they credibly distinguish holders (Spence, 1973). Fragmented, unvalidated credentials undermine signaling value.

Recommendation: Develop clear, consistent frameworks for recognizing human-centric skills at national and international levels. Align with existing qualifications frameworks where possible. Engage employers, educators, and workers in standard-setting.

Trade-offs: Standardization may reduce flexibility and responsiveness to local contexts. Balance portability with adaptability.

Principle 10: Credential Demonstrated Competence, Not Just Completion

Theoretical grounding: Human capital theory emphasizes skill as a productive asset; signaling theory emphasizes credibility (Becker, 1964; Spence, 1973). Credentials that reflect completion without competence assessment have limited value.

Recommendation: Use portfolios, performance assessments, and real-world evidence to demonstrate skill application. Require evidence of competence, not merely course completion or seat time.

Boundary conditions: Rigorous competency assessment is resource-intensive. Pragmatic approaches must balance rigor with scalability.

Principle 11: Embed Metadata for Transparency

Theoretical grounding: Credential value depends on transparency about what was learned, how it was assessed, and who endorsed it (Kato et al., 2020).

Recommendation: Issue credentials with embedded metadata describing learning outcomes, assessment methods, and endorsing organizations. Enable verification through secure digital platforms.

Trade-offs: Metadata complexity may reduce user-friendliness. Design for clarity and accessibility.
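To make the recommendation concrete, the sketch below shows what a credential record with embedded metadata might look like. The field names, the example values, and the `CredentialMetadata` class are hypothetical; they are not taken from any particular badge standard or from the programs discussed in this paper.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class CredentialMetadata:
    """Hypothetical record covering what was learned, how it was assessed, and who endorsed it."""
    title: str
    issuer: str
    learning_outcomes: List[str]
    assessment_methods: List[str]
    endorsers: List[str] = field(default_factory=list)

    def to_json(self) -> str:
        # Serialize to JSON so the record can travel with the credential.
        return json.dumps(asdict(self), indent=2)


# Illustrative values only.
badge = CredentialMetadata(
    title="Collaborative Problem Solving",
    issuer="Example Institute",
    learning_outcomes=["Negotiates shared goals in a multidisciplinary team"],
    assessment_methods=["portfolio review", "structured peer feedback"],
    endorsers=["Example Employer Council"],
)
record = json.loads(badge.to_json())
print(record["assessment_methods"])
```

Keeping the record to a handful of plainly named fields is one way to respond to the usability concern noted above; secure verification would require an additional signing or registry step that this sketch does not cover.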

Principle 12: Guard Against Credential Inflation and Inequity

Theoretical grounding: Credential inflation can exclude qualified workers and perpetuate inequality (Collins, 1979).

Recommendation: Monitor for credential creep—the expansion of credential requirements beyond job demands. Ensure equitable access to credentialling opportunities. Use credentials to expand opportunity, not restrict it.

Boundary conditions: This requires ongoing vigilance and systemic attention, not merely individual action.

Case Studies: From Principles to Practice

This section presents illustrative case studies of innovative approaches to assessing, developing, and credentialling human-centric skills. Where possible, independent evaluations or peer-reviewed evidence are included to supplement practitioner accounts.

Case Study 1: Social and Emotional Learning in K–12 Education

The Collaborative for Academic, Social, and Emotional Learning (CASEL) has promoted evidence-based SEL programs in schools worldwide. Meta-analytic evidence demonstrates that high-quality SEL programs improve social-emotional competencies, reduce behavioral problems, and enhance academic achievement (Durlak et al., 2011; Taylor et al., 2017).

A notable example is the PATHS (Promoting Alternative Thinking Strategies) curriculum, which has been evaluated through randomized controlled trials. Studies have found that PATHS improves students' emotional understanding, self-control, and problem-solving skills, with effects sustained over time (Greenberg et al., 1995). The program illustrates principles of integration, structured guidance, and evidence-based practice.

Connection to principles: PATHS exemplifies Principle 5 (integration into core curricula), Principle 7 (structured guidance), and Principle 8 (deliberate practice with feedback).

Limitations: Implementation fidelity varies, and effects depend on teacher training and organizational support. Scaling SEL programs requires systemic investment.

Case Study 2: Challenge-Based Learning at Tecnológico de Monterrey

Tecnológico de Monterrey's Tec21 model redesigns undergraduate education around challenge-based learning (CBL), with more than 50% of the curriculum centered on real-world problems co-created with industry and community partners. Students work in multidisciplinary teams, applying transversal skills—self-awareness, social intelligence, resilience, ethical engagement—alongside disciplinary knowledge (WEF, 2025).

Competency-based assessment rubrics track sub-competencies at multiple levels, while digital portfolios and learning analytics compile evidence of both outcomes and decision-making processes. Verifiable digital badges are integrated with academic records, providing employers with transparent evidence of applied competence.

Research on problem-based learning, a closely related approach, suggests that such designs can enhance student motivation, problem-solving skills, and collaboration, though effects vary by implementation quality (Hmelo-Silver, 2004). The Tec21 model illustrates how experiential learning can be combined with rigorous assessment and credentialling.

Connection to principles: This case exemplifies Principle 5 (integration), Principle 4 (capturing process), Principle 7 (experiential learning), Principle 10 (credentialling competence), and Principle 11 (metadata transparency).

Case Study 3: Psychological Safety and Learning at Google

Google's Project Aristotle investigated the characteristics of effective teams and found that psychological safety was the single most important factor predicting team success (Duhigg, 2016). Teams with high psychological safety were more likely to take risks, share ideas, and learn from failures.

In response, Google implemented interventions to enhance psychological safety, including leader training, team norms, and structured feedback processes. Because the underlying evaluations are proprietary, external verification is limited; nonetheless, the findings align with Edmondson's (1999) research and have widely influenced organizational practice.

Connection to principles: This case exemplifies Principle 6 (psychological safety) and illustrates the role of organizational culture in skill development.

Limitations: The generalizability of findings from a single organization (particularly a high-resource technology firm) to other contexts is uncertain.

Case Study 4: Micro-Credentials in Professional Development—IBM Digital Badges

IBM has issued millions of digital badges to employees and external learners, certifying skills ranging from technical competencies to leadership and collaboration. Badges include metadata describing learning outcomes, assessment methods, and endorsing authorities, and are sharable on professional networks (IBM, 2023).

Research on open badges more generally suggests positive effects on learner motivation and engagement, though evidence on labour market outcomes is limited (Jovanovic & Devedzic, 2015). The program illustrates both the promise and the challenges of micro-credentialling: badges can enhance visibility and motivation, but their value depends on employer recognition and rigorous assessment.

Connection to principles: This case exemplifies Principle 9 (shared standards), Principle 10 (credentialling competence), and Principle 11 (metadata transparency).

Limitations: The relationship between badge attainment and actual skill acquisition is not well established. Credential proliferation risks undermining signaling value.

Case Study 5: AI-Enabled Assessment—Development and Cautions

Emerging platforms use AI to assess human-centric skills through simulations, video interviews, and text analysis. For example, some companies analyze facial expressions, voice tone, and word choice to infer personality traits and social skills.

While these tools offer scalability, they raise significant validity and fairness concerns. O'Neil (2016) documented cases where algorithmic hiring tools perpetuated bias against women and minorities. Hutchinson and Mitchell (2019) reviewed 50 years of test fairness research and identified persistent challenges in ensuring equitable treatment across groups.

Responsible use of AI in assessment requires rigorous validation, bias testing, transparency about methods, and human oversight. Without these safeguards, technology-enabled assessment may harm rather than help.

Connection to principles: This case illustrates the importance of Principle 1 (validity), Principle 3 (fairness), and the need for caution in adopting new technologies.

Discussion: Implications for Research, Policy, and Practice

The evidence reviewed in this paper points to a central paradox: human-centric skills are widely recognized as essential for individual, organizational, and societal success, yet they remain invisible, undertaught, and inadequately validated in most education and employment systems. Addressing this paradox requires coordinated action across multiple domains, informed by critical analysis and theoretical rigor.

Implications for Education

Educators at all levels—from primary school to higher education to corporate training—must prioritize human-centric skills as core learning outcomes, not peripheral add-ons. This requires embedding structured opportunities for experiential learning, reflection, and feedback into curricula, and equipping teachers and trainers with the competencies and resources to foster these skills (Durlak et al., 2011; OECD, 2024b).

Assessment practices must evolve to capture the multidimensional, context-dependent nature of human-centric skills. Performance-based, portfolio, and process-oriented approaches offer promise, but require investment in design, validation, and scaling. The trade-offs among approaches—cost, reliability, authenticity—must be navigated thoughtfully.

However, educators should resist narrow instrumentalism. Skill development should be situated within broader educational purposes, including personal development, civic engagement, and social justice. The goal is to cultivate whole persons, not merely to produce workers with specified competencies.

Implications for Employers

Organizations must move beyond espoused values to embed human-centric skills in hiring, performance management, and recognition systems. Making these skills explicit in job descriptions, assessing for them in selection, and rewarding them in practice sends clear signals about their value and encourages investment by both employers and workers (Deming, 2017).

Workplace culture is a critical enabler. Psychological safety, access to mentoring, and opportunities for feedback and growth support skill development and retention. Organizations that cultivate safe spaces for experimentation and learning are likely to see gains in adaptability, innovation, and engagement (Edmondson, 1999).

Employers should also critically examine credentialling practices. Using credentials as proxies without regard to skill content may exclude qualified candidates. Skills-based hiring—evaluating candidates based on demonstrated competencies rather than credentials—can expand talent pools and improve outcomes (Fuller et al., 2022).

Implications for Policy

Policy-makers have a vital role in establishing standards, providing resources, and ensuring equity. National and international frameworks for defining, assessing, and credentialling human-centric skills can reduce fragmentation and enhance portability. Funding for teacher training, curriculum development, and scalable assessment tools is essential to close gaps in supply.

Equity must be a central concern. Socioeconomic, gender, and regional disparities in access to skill development opportunities risk widening inequalities in the future of work. Policy interventions should target underserved populations and ensure that new credentialling systems do not replicate existing barriers.

Policy-makers should also attend to the risks of credential inflation and instrumentalism. Credentialling requirements should be proportionate to job demands, and educational policy should preserve space for broader purposes beyond employability.

Research Agenda: Testable Propositions and Methodological Priorities

Advancing understanding of human-centric skills requires rigorous, interdisciplinary research. The following agenda identifies testable propositions and methodological priorities.

Testable Propositions

Skill structure: Human-centric skills can be empirically distinguished into distinct factors (e.g., creativity, resilience, empathy) with differential predictive validity for work and life outcomes.

Assessment validity: Performance-based assessments of human-centric skills will show stronger correlations with job performance than self-report measures, controlling for cognitive ability and personality.

Development interventions: Structured SEL or experiential learning interventions will produce greater gains in human-centric skills than unstructured exposure, with effects moderated by implementation fidelity and learner readiness.

Psychological safety: Teams with higher psychological safety will show greater skill development over time, controlling for initial skill levels and task characteristics.

Credential signaling: Micro-credentials with rigorous assessment and transparent metadata will have stronger effects on hiring outcomes than credentials without these features, particularly for workers without traditional degrees.

Transfer and context: Skills developed in one context will show limited transfer to dissimilar contexts, with transfer moderated by the degree of structural similarity and explicit bridging instruction.

Automation complementarity: Jobs that combine high technical and high human-centric skills will show stronger wage growth and employment stability than jobs high in one skill domain alone.

Methodological Priorities

Longitudinal designs: Track skill development over time, linking interventions to outcomes and examining trajectories.

Randomized experiments: Test causal effects of development interventions under controlled conditions.

Cross-cultural studies: Examine cultural variation in skill definitions, expression, and measurement validity.

Multi-method assessment: Combine self-report, performance, and observational measures to triangulate evidence.

Labour market linkage: Connect skill assessments to administrative data on employment, earnings, and career trajectories.

Equity analysis: Disaggregate findings by demographic group to identify disparities and evaluate intervention effects on equity.

Interdisciplinary Collaboration

The study of human-centric skills crosses disciplinary boundaries. Organizational behavior, educational psychology, economics, and computer science offer complementary perspectives. Collaborative research teams that integrate these perspectives are likely to generate more robust and actionable insights.

Conclusions

In an era defined by rapid technological change, demographic shifts, and global uncertainty, human-centric skills have emerged as the critical differentiators for individuals, organizations, and societies. Creativity, resilience, emotional intelligence, collaboration, and the capacity for lifelong learning are not soft luxuries but hard necessities for navigating complexity and driving innovation.

Yet the evidence reviewed in this paper reveals a persistent gap between rhetoric and reality. Despite their acknowledged importance, human-centric skills remain invisible in hiring, undertaught in education, and inadequately validated through credentialling. Addressing this gap requires a fundamental shift in mindset—treating human-centric skills as core competencies to be systematically cultivated, measured, and recognized.

This paper has offered a theoretically grounded, critically engaged analysis of the challenges and opportunities. By integrating human capital, signaling, and situated learning perspectives, it has illuminated the complexity of skill development and recognition. By engaging with critical perspectives, it has cautioned against narrow instrumentalism and emphasized the need for holistic, equitable approaches.

The guiding principles and case studies presented here offer a roadmap for action. By embedding valid and fair assessment, experiential learning, and portable credentialling into education and employment systems, stakeholders can unlock the full potential of human capability. In the age of artificial intelligence, the true competitive edge remains profoundly human—but realizing that advantage requires intentional, evidence-based, and equitable investment.

References

- Autor, D. H. (2015). Why are there still so many jobs? The history and future of workplace automation. Journal of Economic Perspectives, 29(3), 3–30.

- Ball, S. J. (2003). The teacher's soul and the terrors of performativity. Journal of Education Policy, 18(2), 215–228.

- Barnett, S. M., & Ceci, S. J. (2002). When and where do we apply what we learn? A taxonomy for far transfer. Psychological Bulletin, 128(4), 612–637.

- Becker, G. S. (1964). Human capital: A theoretical and empirical analysis, with special reference to education. University of Chicago Press.

- Biesta, G. (2009). Good education in an age of measurement: On the need to reconnect with the question of purpose in education. Educational Assessment, Evaluation and Accountability, 21(1), 33–46.

- Biesta, G. (2015). What is education for? On good education, teacher judgement, and educational professionalism. European Journal of Education, 50(1), 75–87.

- Blair, P. Q., Castagnino, T. G., Groshen, E. L., Debroy, P., Auguste, B., Ahmed, S., Diaz, F., & Bonavida, C. (2020). Searching for STARs: Work experience as a job market signal for workers without bachelor's degrees (NBER Working Paper No. 26844). National Bureau of Economic Research.

- Brynjolfsson, E., & McAfee, A. (2014). The second machine age: Work, progress, and prosperity in a time of brilliant technologies. W. W. Norton.

- Campion, M. A., Fink, A. A., Ruggeberg, B. J., Carr, L., Phillips, G. M., & Odman, R. B. (2011). Doing competencies well: Best practices in competency modeling. Personnel Psychology, 64(1), 225–262.

- Care, E., Kim, H., Vista, A., & Anderson, K. (2018). Education system alignment for 21st century skills: Focus on assessment. Brookings Institution.

- Collins, R. (1979). The credential society: An historical sociology of education and stratification. Academic Press.

- Deming, D. J. (2017). The growing importance of social skills in the labor market. Quarterly Journal of Economics, 132(4), 1593–1640.

- Deming, D. J., & Kahn, L. B. (2018). Skill requirements across firms and labor markets: Evidence from job postings for professionals. Journal of Labor Economics, 36(S1), S337–S369.

- Deming, D. J., & Noray, K. (2020). Earnings dynamics, changing job skills, and STEM careers. Quarterly Journal of Economics, 135(4), 1965–2005.

- Duckworth, A. L., & Yeager, D. S. (2015). Measurement matters: Assessing personal qualities other than cognitive ability for educational purposes. Educational Researcher, 44(4), 237–251.

- Duhigg, C. (2016, February 25). What Google learned from its quest to build the perfect team. The New York Times Magazine.

- Durlak, J. A., Weissberg, R. P., Dymnicki, A. B., Taylor, R. D., & Schellinger, K. B. (2011). The impact of enhancing students' social and emotional learning: A meta-analysis of school-based universal interventions. Child Development, 82(1), 405–432.

- Edmondson, A. (1999). Psychological safety and learning behavior in work teams. Administrative Science Quarterly, 44(2), 350–383.

- Ericsson, K. A., Krampe, R. T., & Tesch-Römer, C. (1993). The role of deliberate practice in the acquisition of expert performance. Psychological Review, 100(3), 363–406.

- Frey, C. B., & Osborne, M. A. (2017). The future of employment: How susceptible are jobs to computerisation? Technological Forecasting and Social Change, 114, 254–280.

- Fuller, J. B., Raman, M., Sage-Gavin, E., & Hines, K. (2022). Hidden workers: Untapped talent. Harvard Business School Project on Managing the Future of Work.

- Greenberg, M. T., Kusche, C. A., Cook, E. T., & Quamma, J. P. (1995). Promoting emotional competence in school-aged children: The effects of the PATHS curriculum. Development and Psychopathology, 7(1), 117–136.

- Heckman, J. J., & Kautz, T. (2012). Hard evidence on soft skills. Labour Economics, 19(4), 451–464.

- Heckman, J. J., Stixrud, J., & Urzua, S. (2006). The effects of cognitive and noncognitive abilities on labor market outcomes and social behavior. Journal of Labor Economics, 24(3), 411–482.

- Hmelo-Silver, C. E. (2004). Problem-based learning: What and how do students learn? Educational Psychology Review, 16(3), 235–266.

- Hutchinson, B., & Mitchell, M. (2019). 50 years of test (un)fairness: Lessons for machine learning. Proceedings of the Conference on Fairness, Accountability, and Transparency, 49–58.

- IBM. (2023). IBM digital badges program. IBM Corporation.

- Jovanovic, J., & Devedzic, V. (2015). Open badges: Novel means to motivate, scaffold and recognize learning. Technology, Knowledge and Learning, 20(1), 115–122.

- Kane, M. T. (2013). Validating the interpretations and uses of test scores. Journal of Educational Measurement, 50(1), 1–73.

- Kato, S., Galán-Muros, V., & Weko, T. (2020). The emergence of alternative credentials (OECD Education Working Papers, No. 216). OECD Publishing.

- Kilduff, M., Chiaburu, D. S., & Menges, J. I. (2010). Strategic use of emotional intelligence in organizational settings: Exploring the dark side. Research in Organizational Behavior, 30, 129–152.

- Kirschner, P. A., Sweller, J., & Clark, R. E. (2006). Why minimal guidance during instruction does not work: An analysis of the failure of constructivist, discovery, problem-based, experiential, and inquiry-based teaching. Educational Psychologist, 41(2), 75–86.

- Kolb, D. A. (1984). Experiential learning: Experience as the source of learning and development. Prentice Hall.

- Lane, S., & Stone, C. A. (2006). Performance assessment. In R. L. Brennan (Ed.), Educational measurement (4th ed., pp. 387–431). American Council on Education/Praeger.

- Lave, J., & Wenger, E. (1991). Situated learning: Legitimate peripheral participation. Cambridge University Press.

- Matsumoto, D., Yoo, S. H., & Nakagawa, S. (2008). Culture, emotion regulation, and adjustment. Journal of Personality and Social Psychology, 94(6), 925–937.

- Messick, S. (1995). Validity of psychological assessment: Validation of inferences from persons' responses and performances as scientific inquiry into score meaning. American Psychologist, 50(9), 741–749.

- Mueller, J. S., Melwani, S., & Goncalo, J. A. (2012). The bias against creativity: Why people desire but reject creative ideas. Psychological Science, 23(1), 13–17.

- National Center for O*NET Development. (2023). O*NET OnLine. U.S. Department of Labor.

- O'Neil, C. (2016). Weapons of math destruction: How big data increases inequality and threatens democracy. Crown.

- Oliver, B. (2019). Making micro-credentials work for learners, employers, and providers. Deakin University.

- Organisation for Economic Co-operation and Development. (2018). The future of education and skills: Education 2030. OECD Publishing.

- Organisation for Economic Co-operation and Development. (2024a). PISA 2022 results (Volume III): Creative minds, creative schools. OECD Publishing.

- Organisation for Economic Co-operation and Development. (2024b). Nurturing social and emotional learning across the globe: Findings from the OECD Survey on Social and Emotional Skills 2023. OECD Publishing.

- Schön, D. A. (1983). The reflective practitioner: How professionals think in action. Basic Books.

- Schwab, K. (2017). The fourth industrial revolution. Crown Business.

- Shippmann, J. S., Ash, R. A., Battista, M., Carr, L., Eyde, L. D., Hesketh, B., Kehoe, J., Pearlman, K., Prien, E. P., & Sanchez, J. I. (2000). The practice of competency modeling. Personnel Psychology, 53(3), 703–740.

- Spence, M. (1973). Job market signaling. Quarterly Journal of Economics, 87(3), 355–374.

- Taylor, R. D., Oberle, E., Durlak, J. A., & Weissberg, R. P. (2017). Promoting positive youth development through school-based social and emotional learning interventions: A meta-analysis of follow-up effects. Child Development, 88(4), 1156–1171.

- Tennen, H., & Affleck, G. (1998). Personality and transformation in the face of adversity. In R. G. Tedeschi, C. L. Park, & L. G. Calhoun (Eds.), Posttraumatic growth: Positive changes in the aftermath of crisis (pp. 65–98). Erlbaum.

- Ungar, M. (2008). Resilience across cultures. British Journal of Social Work, 38(2), 218–235.

- Wheelahan, L. (2010). Why knowledge matters in curriculum: A social realist argument. Routledge.

- World Economic Forum. (2020). The future of jobs report 2020. World Economic Forum.

- World Economic Forum. (2025). New economy skills: Unlocking the human advantage. World Economic Forum.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).