Submitted: 13 March 2026

Posted: 13 March 2026

Abstract

Keywords:

Introduction

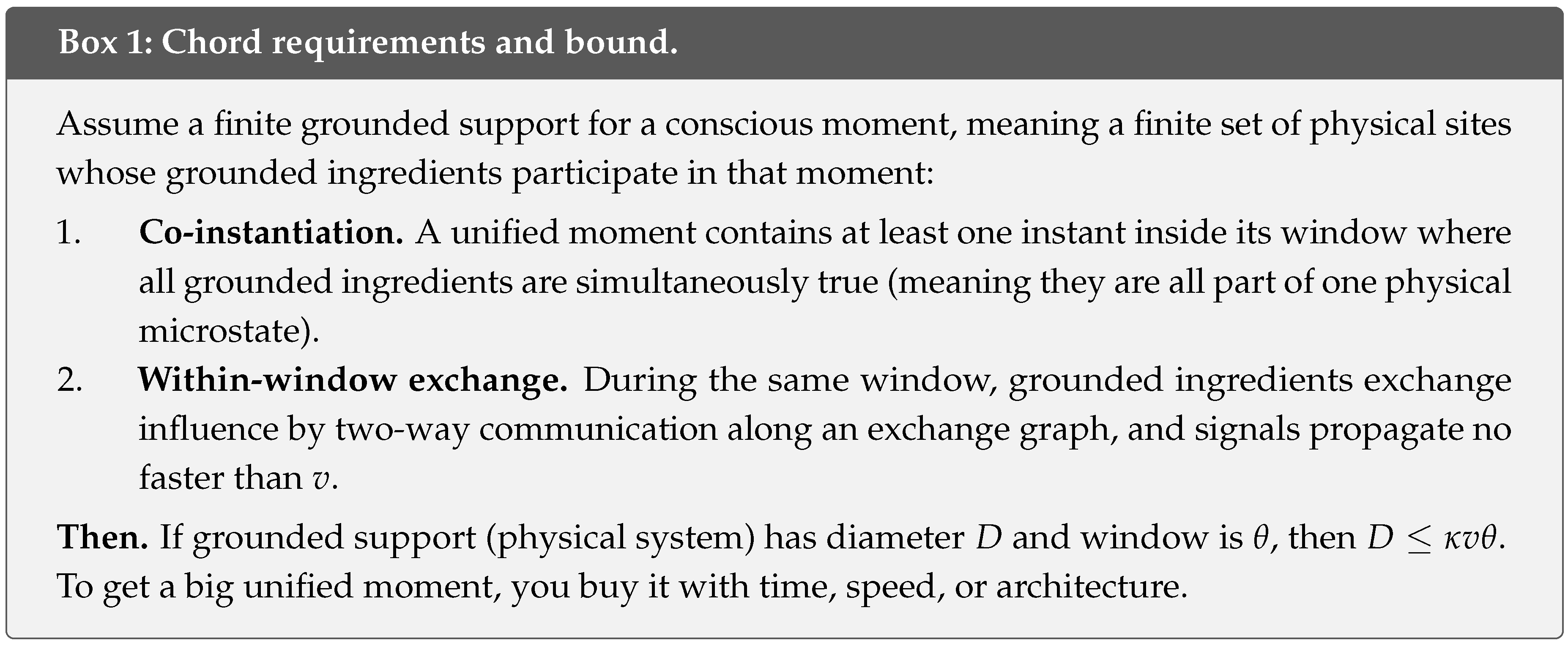

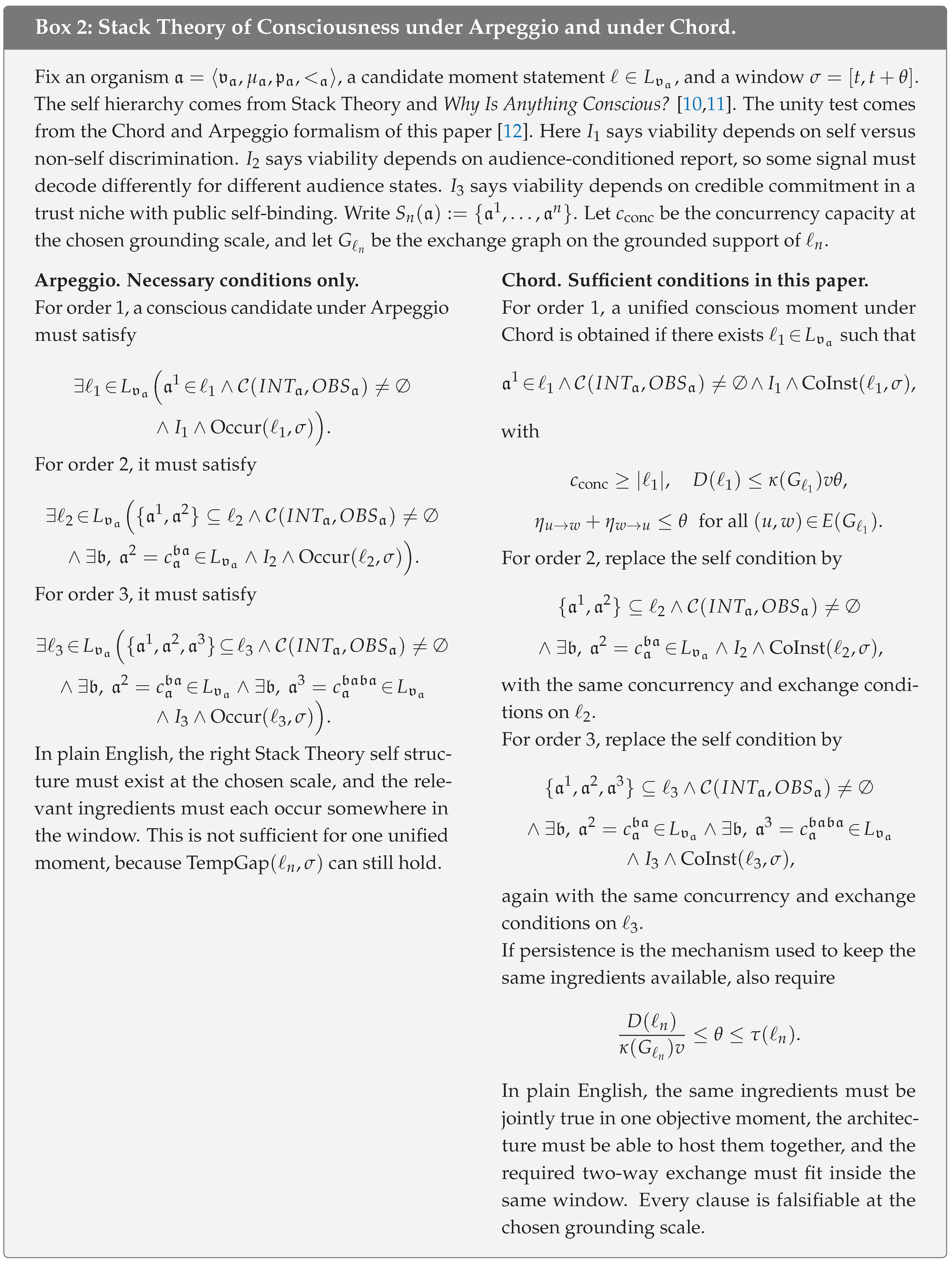

- I extend a workshop paper [12] that introduced the Stack Theory time semantics of Arpeggio and Chord. Here I add a within-window causal exchange postulate to Chord and derive a spacetime diameter bound for one unified moment.

- I integrate the algebraic results from that workshop paper (the non-commutation of existential temporal lift with conjunction, the concurrency capacity threshold, and the persistence collapse condition) and give arguments in favour of Chord over Arpeggio on formal, neuroscientific, and architectural grounds.

- I build a falsification pipeline, validate it with stress tests, and anchor the lower side of the window using a reanalysis of published primate corpus callosum microstructure.

- I address the “make the window larger” objection. The window is bracketed from below by the exchange budget implied by the diameter D, the architecture factor, and the propagation speed v, and from above by ingredient persistence when persistence is the mechanism invoked to close the Temporal Gap. This yields a feasibility interval rather than an arbitrary stipulation.

- I present case studies for liquid brains, brain-computer interface hybrids, cloud-hosted AI, human populations, and Arpeggio taken to its logical extreme.

Results

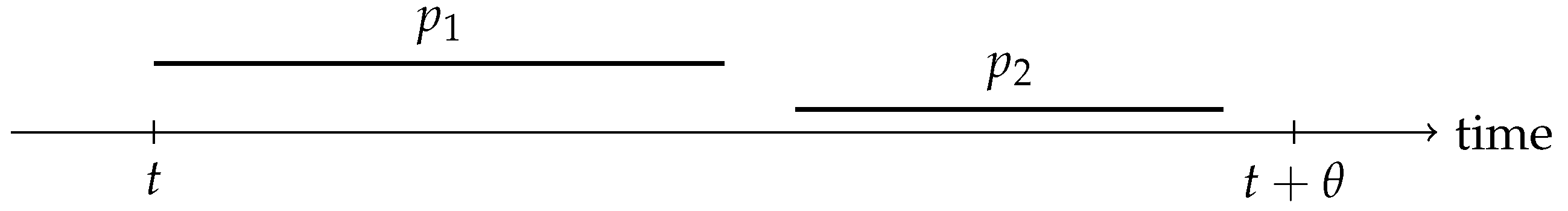

Windowing Is Weaker Than Simultaneity

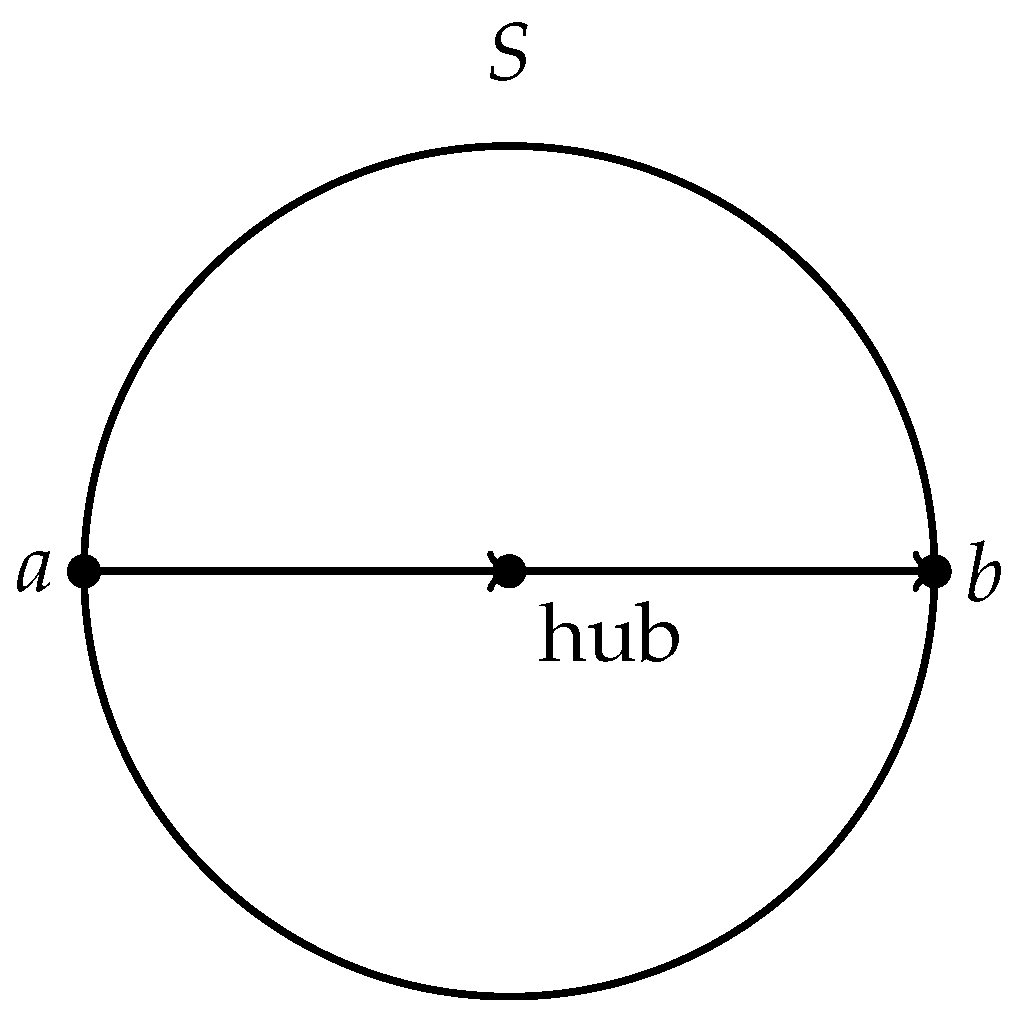

Diameter Bound

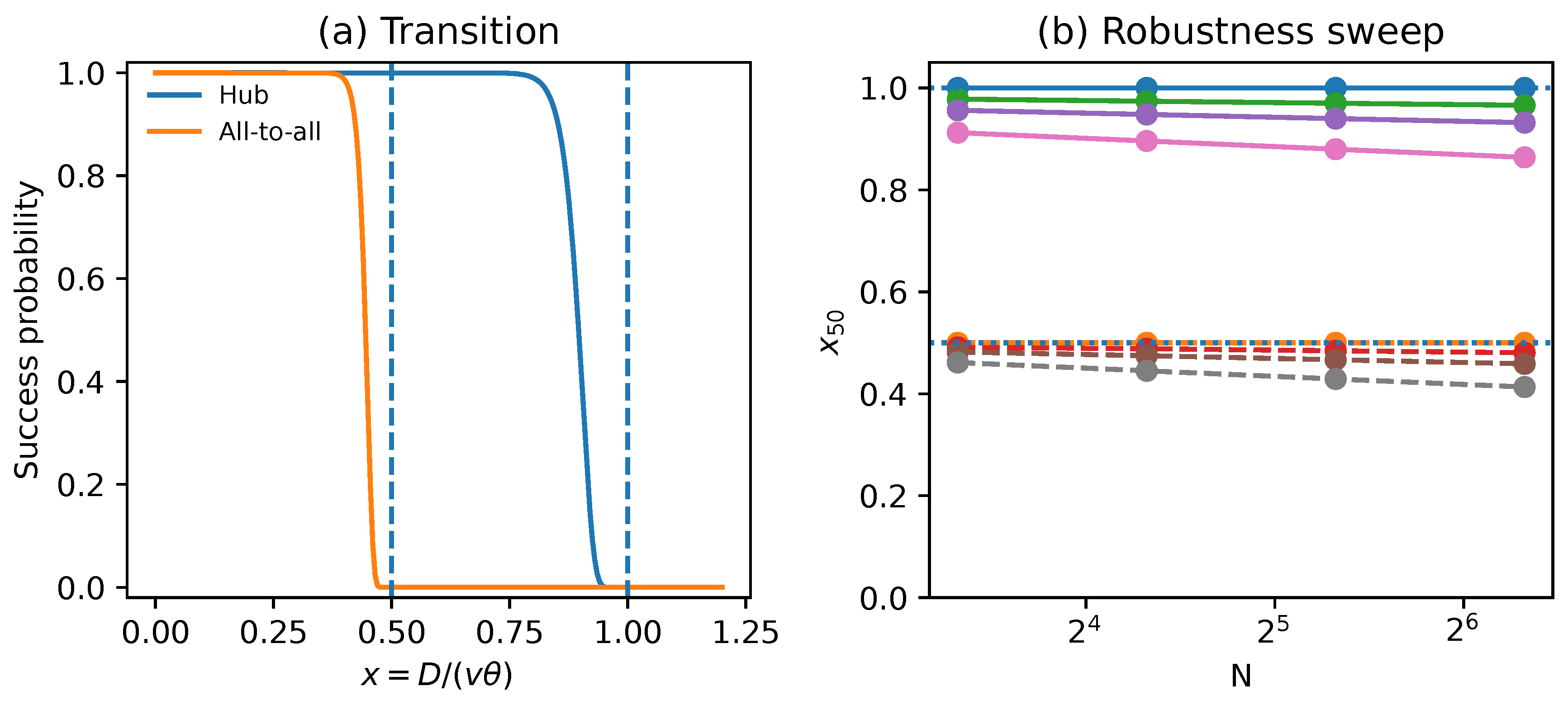

Fragmentation Transition

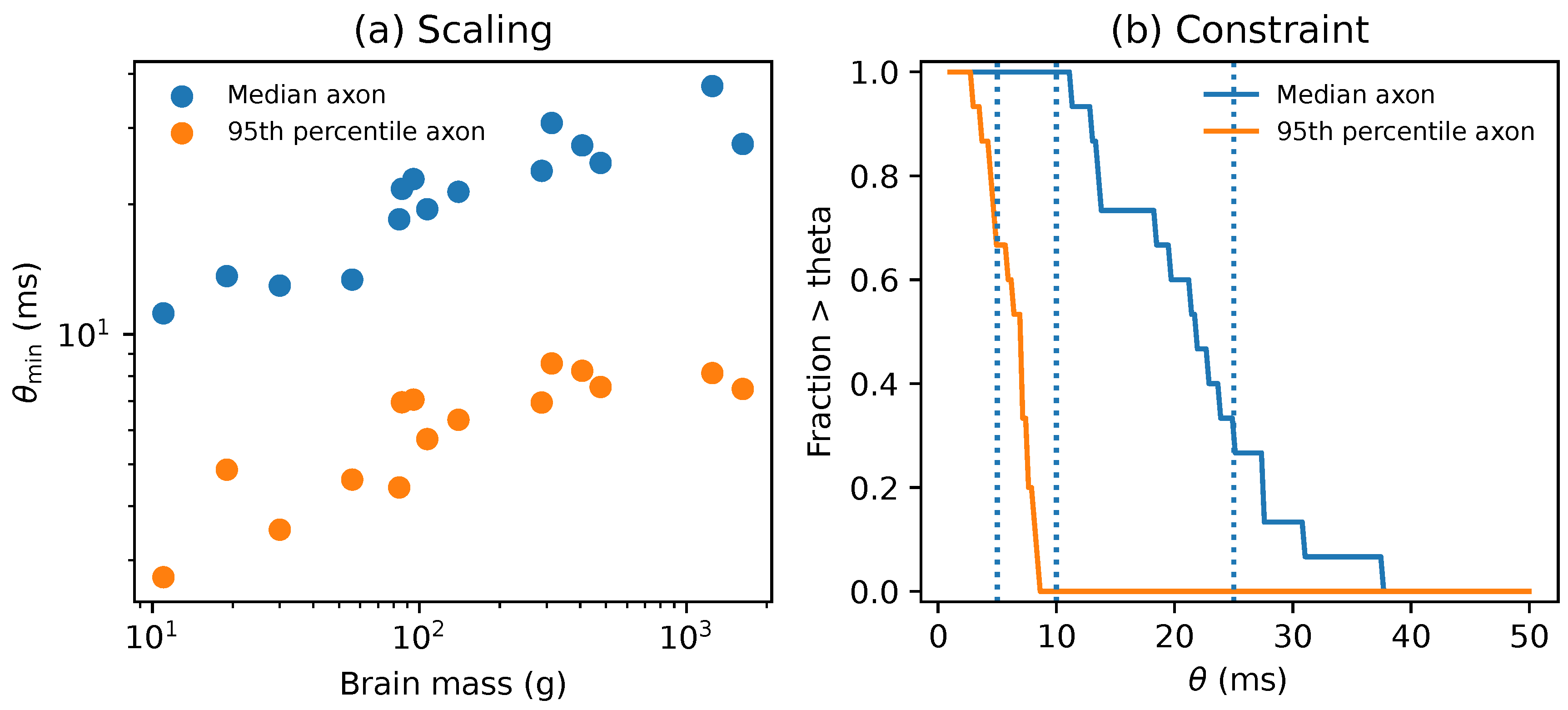

Primate Scaling Anchor

Falsification and Stress Tests

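- Pick a candidate unity marker and a window duration.

- For each window, estimate the support diameter and collect fast-propagation samples to estimate the propagation speed ceiling.

- Compute the margin by which the estimated diameter exceeds the budget implied by the speed estimate and the window duration.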

- If windows overlap heavily, thin them by keeping every kth window.

- Test whether the mean margin is greater than zero using a one-sided t-test.

- The false refutation rate is controlled at or below the nominal level when v is conservatively estimated. Under the default q95 rule used here I observe no false refutations at ratio 1.00 in 2500 replications.

- Power rises rapidly once the true ratio exceeds one. In these Monte Carlo tests I set as a units choice, so the x axis should be read as in general. With the conservative default quantile estimator used here and windows, the rise begins once the true ratio exceeds one by only a few percent.

- Naive estimators that underestimate the relevant speed bound, especially low-end choices such as taking the minimum within-window sample, can manufacture violations and dramatically inflate the false refutation rate.
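The protocol steps and the estimator warning above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the pipeline used for the reported results: the budget form `kappa * v_hat * duration`, the name `kappa` for the architecture factor, the helper names, and the synthetic data generator are all hypothetical. Only the overall shape (quantile-based speed estimate, per-window margin, optional thinning, one-sided t-test on the mean margin) follows the text. The t-distribution tail is integrated numerically so the sketch needs only the standard library.

```python
import math
import random
import statistics


def quantile(samples, q):
    """Linear-interpolation quantile of a non-empty sample, 0 <= q <= 1."""
    xs = sorted(samples)
    pos = q * (len(xs) - 1)
    lo, hi = math.floor(pos), math.ceil(pos)
    return xs[lo] + (xs[hi] - xs[lo]) * (pos - lo)


def t_sf(t, df, steps=2000):
    """Upper-tail probability P(T > t) for Student's t, via Simpson's rule."""
    log_c = (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
             - 0.5 * math.log(df * math.pi))

    def pdf(x):
        return math.exp(log_c - (df + 1) / 2 * math.log1p(x * x / df))

    a = abs(t)
    h = a / steps
    area = pdf(0.0) + pdf(a)
    for i in range(1, steps):
        area += (4 if i % 2 else 2) * pdf(i * h)
    area *= h / 3                      # integral of the pdf from 0 to |t|
    upper = max(0.0, 0.5 - area)
    return upper if t >= 0 else 1.0 - upper


def margins(windows, kappa=1.0, q=0.95, keep_every=1):
    """Per-window margin: diameter minus the ASSUMED budget kappa * v_hat * duration."""
    out = []
    for w in windows[::keep_every]:            # thin heavily overlapping windows
        v_hat = quantile(w["speeds"], q)       # high quantile = conservative speed
        out.append(w["diameter"] - kappa * v_hat * w["duration"])
    return out


def refuted(ms, alpha=0.05):
    """One-sided t-test of H0: mean margin <= 0 against H1: mean margin > 0."""
    n = len(ms)
    sd = statistics.stdev(ms)
    if sd == 0:
        return statistics.mean(ms) > 0
    t = statistics.mean(ms) / (sd / math.sqrt(n))
    return t_sf(t, n - 1) < alpha


def simulate(ratio, n_windows=40, seed=0):
    """Synthetic windows (hypothetical): speeds scatter around 1, diameter around ratio."""
    rng = random.Random(seed)
    return [{"diameter": ratio * rng.gauss(1.0, 0.02),
             "duration": 1.0,
             "speeds": [rng.gauss(1.0, 0.05) for _ in range(50)]}
            for _ in range(n_windows)]


print("ratio 1.0, q95 estimator:", refuted(margins(simulate(1.0))))
print("ratio 1.3, q95 estimator:", refuted(margins(simulate(1.3))))
print("ratio 1.0, min estimator:", refuted(margins(simulate(1.0), q=0.0)))
```

Setting `q=0.0` makes `quantile` return the minimum sample, which reproduces the naive low-end estimator described in the last bullet. The high quantile is the conservative choice because a larger speed estimate enlarges the budget and shrinks the margin.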

Discussion

Why Chord and not Arpeggio?

Novelty in Comparison to a Similar Bound

What Constrains the Window?

Implications for Populations and Human-AI Hybrids

Persistence, Robustness, and Substrate Tradeoffs

- Human brain (fine-grained neural ingredients). Compatible with Chord under both hub exchange and all-to-all exchange. Consistent with consciousness at the whole-brain scale.

- Ant colony (coarse-grained colony-scale ingredients). The relevant timescale is hours, for colony-scale statistical properties. A small colony can satisfy the diameter budget under hub exchange. Whether Chord is satisfied still depends on co-instantiation and concurrency at the relevant grounding resolution, not just on diameter.

Predictions and Falsifiers

- Temporal Gap falsifier. Take any high-time-resolution recording where you can define a set of grounded ingredients ℓ and a candidate unity marker over windows of a given duration. A unity marker is any measurable variable that is supposed to indicate that one unified moment occurred, such as a phase synchrony measure or a behavioural report. For each window, evaluate both the windowed condition and the simultaneity condition for the same ℓ. Then focus on windows where the windowed condition holds but the simultaneity condition fails. If the unity marker still behaves as if a single moment occurred in those windows, then the Chord requirement is wrong for that marker.

- Latency budget violation. Pick any substrate where you can estimate a support diameter and a propagation ceiling for the same candidate unity marker. Use a conservative lower bound on diameter and conservative upper bounds on speed and window duration, and compute the conservative margin from these bounds. If the margin is significantly positive across windows under the protocol in Supplementary Note 5, then either the within-window exchange postulate is false or the marker is not a unified moment.

- Architecture factor shift. Hold the same nodes, the same geometry, and the same window definition. Change only the exchange graph. For engineered systems this means swapping between hub exchange, all-to-all exchange, and a chosen sparse graph. For the same measured v and window duration, the largest diameter that still supports integration should scale in direct proportion to the architecture factor. If the transition does not move when the architecture factor moves, then the architecture factor model is wrong.
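The window selection step of the Temporal Gap falsifier can be sketched as follows. The sketch assumes a particular reading of the two conditions, which the formal definitions in Methods would need to confirm: the windowed condition holds when each ingredient is active at some sample in the window, and the simultaneity condition holds when all ingredients are active together at one sample. The function names and the toy recording are hypothetical. The gap between the two conditions is the familiar fact that an existential quantifier over time does not commute with conjunction.

```python
def windowed_holds(window, ingredients):
    """Each ingredient is active at some sample in the window (possibly different ones)."""
    return all(any(ing in active for active in window) for ing in ingredients)


def simultaneous_holds(window, ingredients):
    """All ingredients are active together at a single sample in the window."""
    return any(all(ing in active for ing in ingredients) for active in window)


def gap_windows(recording, ingredients, width):
    """Start indices of windows where the windowed condition holds but simultaneity fails."""
    hits = []
    for start in range(len(recording) - width + 1):
        window = recording[start:start + width]
        if windowed_holds(window, ingredients) and not simultaneous_holds(window, ingredients):
            hits.append(start)
    return hits


# Toy recording (hypothetical): one set of active ingredients per time sample.
recording = [{"a"}, {"b"}, {"a", "b"}, set(), {"b"}, {"a"}]
print(gap_windows(recording, {"a", "b"}, width=2))  # prints [0, 4]
```

The windows returned by `gap_windows` are the ones on which the unity marker would then be inspected.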

Methods

Stack Theory Objects and Temporal Lift

Architecture Factor and Proof Idea

Mechanistic Integration Model

Primate Literature Extraction

Monte Carlo Stress Tests

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Code Availability

References

1. Ned Block. On a confusion about a function of consciousness. Behavioral and Brain Sciences 1995, 18(2), 227–247.

2. Bernard J. Baars. A Cognitive Theory of Consciousness; Cambridge University Press: Cambridge, 1988.

3. Stanislas Dehaene and Lionel Naccache. Towards a cognitive neuroscience of consciousness: basic evidence and a workspace framework. Cognition 2001, 79(1-2), 1–37.

4. Victor Lamme. Towards a true neural stance on consciousness. Trends in Cognitive Sciences 2006, 10(11), 494–501.

5. Francisco J. Varela, Jean-Philippe Lachaux, Eugenio Rodriguez, and Jacques Martinerie. The brainweb: phase synchronization and large-scale integration. Nature Reviews Neuroscience 2001, 2(4), 229–239.

6. Wolf Singer and Charles M. Gray. Visual feature integration and the temporal correlation hypothesis. Annual Review of Neuroscience 1995, 18, 555–586.

7. Pascal Fries. A mechanism for cognitive dynamics: neuronal communication through neuronal coherence. Trends in Cognitive Sciences 2005, 9(10), 474–480.

8. Giulio Tononi. An information integration theory of consciousness. BMC Neuroscience 2004, 5(1), 42.

9. Michael Timothy Bennett. Emergent causality and the foundation of consciousness. In 16th International Conference on Artificial General Intelligence, Lecture Notes in Computer Science; Springer, 2023; pp. 52–61. Available online: https://ouci.dntb.gov.ua/en/works/7BoXJMW4/.

10. Michael Timothy Bennett, Sean Welsh, and Anna Ciaunica. Why Is Anything Conscious? Preprint. arXiv 2024, arXiv:2409.14545.

11. Michael Timothy Bennett. How To Build Conscious Machines. PhD thesis, The Australian National University, 2025. Available online: https://hdl.handle.net/1885/733782452.

12. Michael Timothy Bennett. A mind cannot be smeared across time. Forthcoming in Proceedings of the AAAI 2026 Spring Symposium on Machine Consciousness: Integrating Theory, Technology, and Philosophy, Burlingame, California, USA, April 2026; AAAI Press.

13. Daniel C. Dennett and Marcel Kinsbourne. Time and the observer: the where and when of consciousness in the brain. Behavioral and Brain Sciences 1992, 15(2), 183–201.

14. Anna Ciaunica, Andreas Roepstorff, Aikaterini Katerina Fotopoulou, and Bruna Petreca. Whatever next and close to my self - the transparent senses and the “second skin”: Implications for the case of depersonalization. Frontiers in Psychology 2021, 12.

15. David M. Eagleman and Terrence J. Sejnowski. Motion integration and postdiction in visual awareness. Science 2000, 287(5460), 2036–2038.

16. Ernst Pöppel. A hierarchical model of temporal perception. Trends in Cognitive Sciences 1997, 1(2), 56–61.

17. Jean Vroomen and Mirjam Keetels. Perception of intersensory synchrony: a tutorial review. Attention, Perception, & Psychophysics 2010, 72(4), 871–884.

18. Mark T. Wallace and Ryan A. Stevenson. The construct of the multisensory temporal binding window and its dysregulation in developmental disabilities. Neuropsychologia 2014, 64, 105–123.

19. Ricard Solé, Melanie Moses, and Stephanie Forrest. Liquid brains, solid brains. Philosophical Transactions of the Royal Society B: Biological Sciences 2019, 374(1774), 20190040.

20. Ricard Solé, Luis F. Seoane, Jordi Pla-Mauri, Michael Timothy Bennett, Michael E. Hochberg, and Michael Levin. Cognition spaces: natural, artificial, and hybrid, 2026. arXiv. Available online: https://arxiv.org/abs/2601.12837.

21. Michael Timothy Bennett. Are biological systems more intelligent than artificial intelligence? Special issue on Hybrid agencies: crossing borders between biological and artificial worlds; Philosophical Transactions of the Royal Society B: Biological Sciences, 2026. Available online: https://arxiv.org/abs/2405.02325 (arXiv preprint, 2024).

22. Jacob D. Bekenstein. Universal upper bound on the entropy-to-energy ratio for bounded systems. Phys. Rev. D 1981, 23, 287–298.

23. Michael Timothy Bennett. Is complexity an illusion? In 17th International Conference on Artificial General Intelligence, Lecture Notes in Computer Science; Springer, 2024.

24. Michael Timothy Bennett. Technical appendices, 2025. Archived release on Zenodo. Available online: https://github.com/ViscousLemming/Technical-Appendices.

25. Kimberley A. Phillips, Cheryl D. Stimpson, Jeroen B. Smaers, Mary Ann Raghanti, Bob Jacobs, Aleksandar Popratiloff, Patrick R. Hof, and Chet C. Sherwood. The corpus callosum in primates: processing speed of axons and the evolution of hemispheric asymmetry. Proceedings of the Royal Society B: Biological Sciences 2015, 282(1818), 20151535.

26. Kimberley A. Phillips, Cheryl D. Stimpson, Jeroen B. Smaers, Mary Ann Raghanti, Bob Jacobs, Aleksandar Popratiloff, Patrick R. Hof, and Chet C. Sherwood. Correction to The corpus callosum in primates: processing speed of axons and the evolution of hemispheric asymmetry. Proceedings of the Royal Society B: Biological Sciences 2015, 282(1819), 20152620.

27. John L. Ringo, Richard W. Doty, Steven Demeter, and Pierre Y. Simard. Time is of the essence: a conjecture that hemispheric specialization arises from interhemispheric conduction delay. Cerebral Cortex 1994, 4(4), 331–343.

28. Roberto Caminiti, Houda Ghaziri, Ralf A. W. Galuske, Patrick R. Hof, and Giorgio M. Innocenti. Evolution amplified processing with temporally dispersed slow neuronal connectivity in primates. Proceedings of the National Academy of Sciences of the United States of America 2009, 106(46), 19551–19556.

29. Giorgio M. Innocenti, Ingo Vahlsing, and Roberto Caminiti. The functional characterization of callosal connections. Progress in Neurobiology 2022, 208, 102186.

30. Lucia Melloni, Carlos Molina, Miguel Pena, David Torres, Wolf Singer, and Eugenio Rodriguez. Synchronization of neural activity across cortical areas correlates with conscious perception. Journal of Neuroscience 2007, 27(11), 2858–2865.

31. Marcello Massimini, Fabio Ferrarelli, Reto Huber, Steven K. Esser, Harminder Singh, and Giulio Tononi. Breakdown of cortical effective connectivity during sleep. Science 2005, 309(5744), 2228–2232.

32. Adenauer G. Casali, Olivia Gosseries, Mario Rosanova, Mélanie Boly, Simone Sarasso, Karina R. Casali, Silvia Casarotto, Marie-Aurélie Bruno, Steven Laureys, Giulio Tononi, and Marcello Massimini. A theoretically based index of consciousness independent of sensory processing and behavior. Science Translational Medicine 2013, 5(198), 198ra105.

33. Jonathon Sendall. Event horizons, spacetime geometry, and the limits of integrated consciousness, 2026. arXiv. Available online: https://arxiv.org/abs/2512.23105.

34. Stuart Hameroff and Roger Penrose. Consciousness in the universe: A review of the ‘Orch OR’ theory. Physics of Life Reviews 2014, 11(1), 39–78. Available online: https://www.sciencedirect.com/science/article/pii/S1571064513001188.

35. Galen Strawson. Realistic monism - why physicalism entails panpsychism. Journal of Consciousness Studies 2006, 13(10-11), 3–31.

36. Anna Ciaunica and Laura Crucianelli. Minimal self-awareness: from within a developmental perspective. Journal of Consciousness Studies 2019, 26(3-4), 207–226. Available online: https://www.ingentaconnect.com/content/imp/jcs/2019/00000026/f0020003/art00010.

37. Michael Timothy Bennett. The optimal choice of hypothesis is the weakest, not the shortest. In 16th International Conference on Artificial General Intelligence, Lecture Notes in Computer Science; Springer, 2023; pp. 42–51.

38. Michael Timothy Bennett. Optimal policy is weakest policy. In Artificial General Intelligence, volume 16057 of Lecture Notes in Computer Science; Springer, 2025; pp. 43–56.

39. Anna Ciaunica, Adam Safron, and Jonathan Delafield-Butt. Back to square one: the bodily roots of conscious experiences in early life. Neuroscience of Consciousness 2021, 2.

40. Manuel Blum and Lenore Blum. A theoretical computer science perspective on consciousness. J. Artif. Intell. Conscious. 2020, 8, 1–42.

41. Lenore Blum and Manuel Blum. A theory of consciousness from a theoretical computer science perspective: Insights from the Conscious Turing Machine. Proceedings of the National Academy of Sciences 2022, 119(21), e2115934119.

42. Michael Timothy Bennett. Why does life exist? Preprint, 2026. Available online: https://www.preprints.org/manuscript/202603.0203.

43. Ricard Solé, Christopher P Kempes, Bernat Corominas-Murtra, Manlio De Domenico, Artemy Kolchinsky, Michael Lachmann, Eric Libby, Serguei Saavedra, Eric Smith, and David Wolpert. Fundamental constraints to the logic of living systems. Interface Focus 2024, 14(5), 20240010.

44. Ricard Solé and Luís F Seoane. Evolution of brains and computers: The roads not taken. Entropy 2022, 24(5), 665.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).