Submitted: 17 February 2026

Posted: 26 February 2026

Abstract

Keywords:

1. Introduction

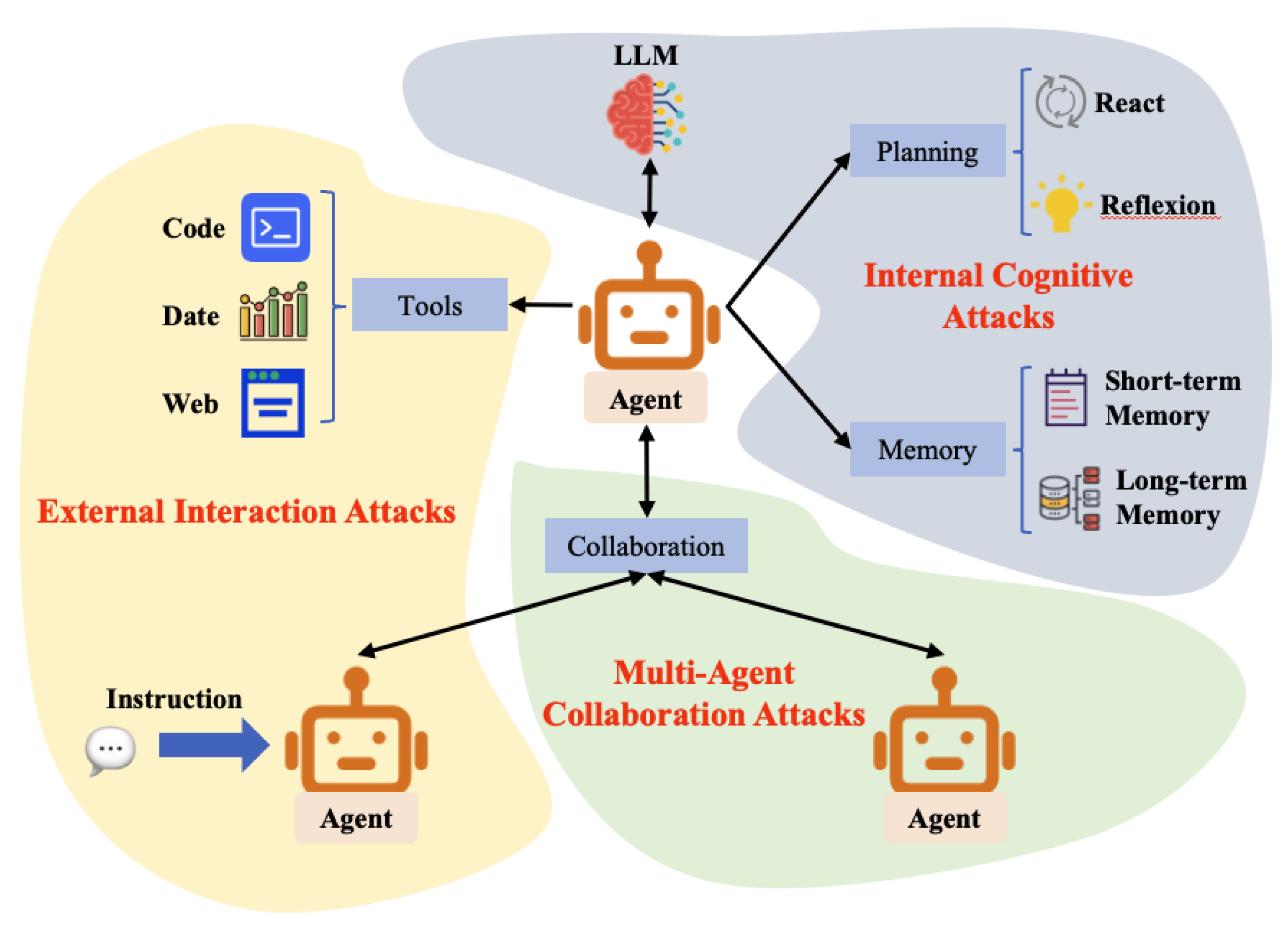

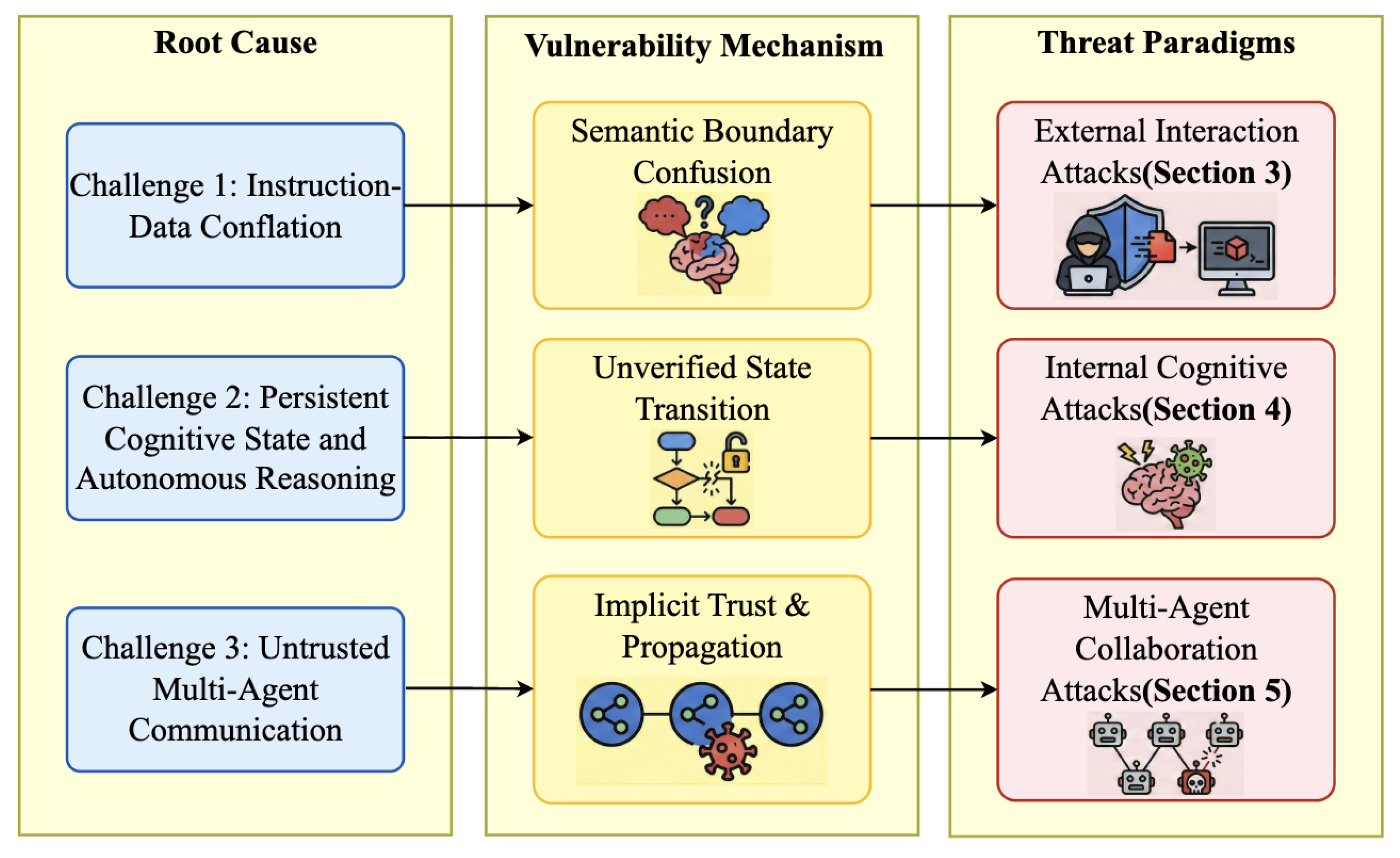

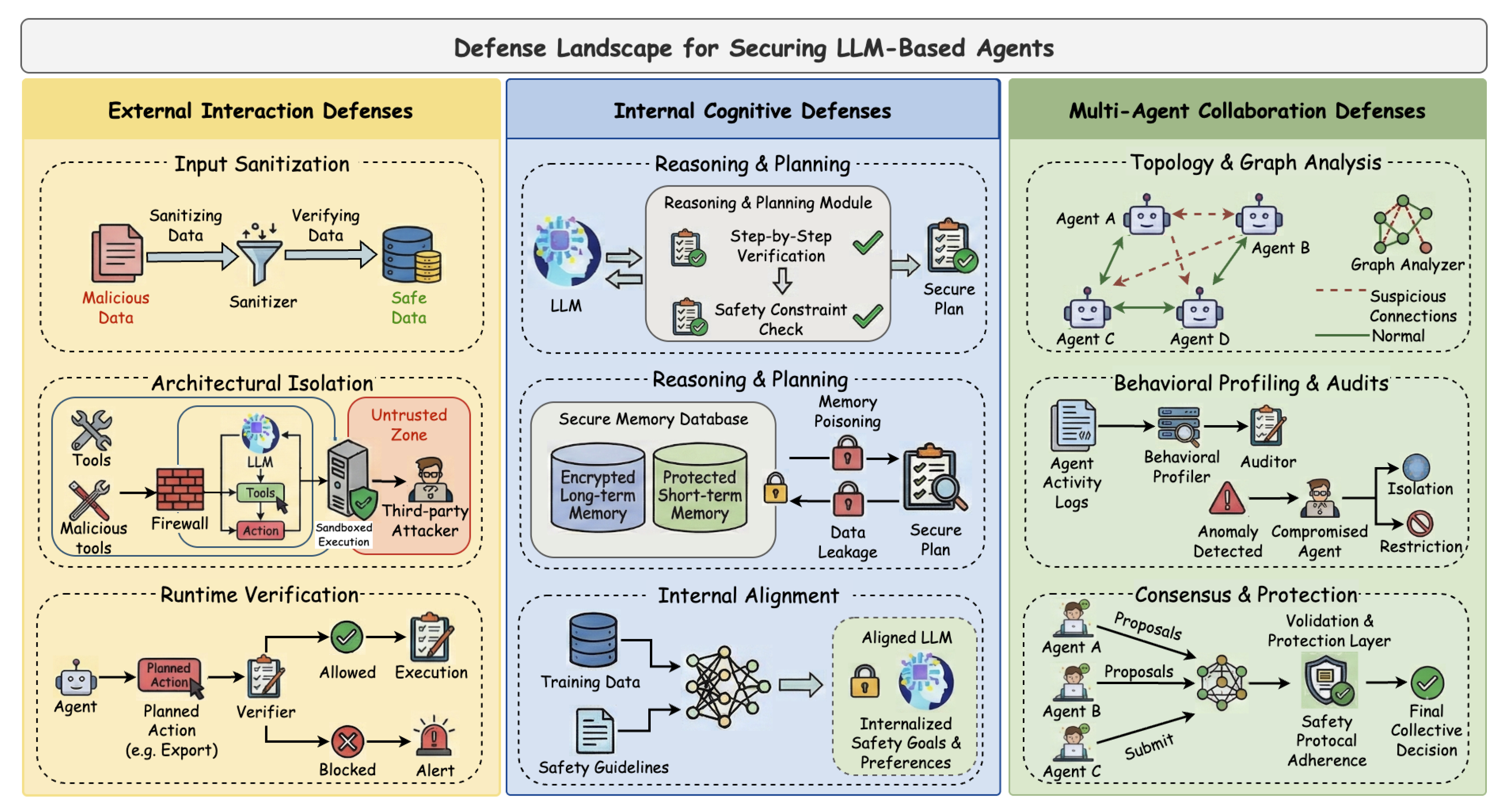

- Unified Threat Taxonomy Based on Structural Challenges: Building upon the structural security challenges inherent in agent architectures (Section 2.2), we systematically categorize emerging attack surfaces. We propose a taxonomy classifying threats into three paradigms: External Interaction Attacks exploiting perception interface vulnerabilities, Internal Cognitive Attacks disrupting reasoning and memory integrity, and Multi-Agent Collaboration Attacks exploiting communication protocol defects.

- Development of a Threat-Aligned Defense Framework: For each of the three threat paradigms above, we organize existing mitigation strategies into a defense taxonomy that strictly mirrors the threat taxonomy. The review covers defenses that resist external malicious interactions, strengthen internal cognitive robustness, and secure multi-agent collaboration, providing a strategic reference for targeted risk mitigation.

- Systematization of Evaluation Frameworks and Benchmarks: We present the first systematic review of evaluation frameworks and benchmarks specific to agent security. Unlike previous surveys that focus solely on isolated methodologies, we conduct an in-depth integration and comparative analysis across three levels: evaluation dimensions, metric systems, and testing environments, offering a standardized reference for quantifying agent security.

2. LLM-Based Agent

2.1. Foundations of LLM Agents

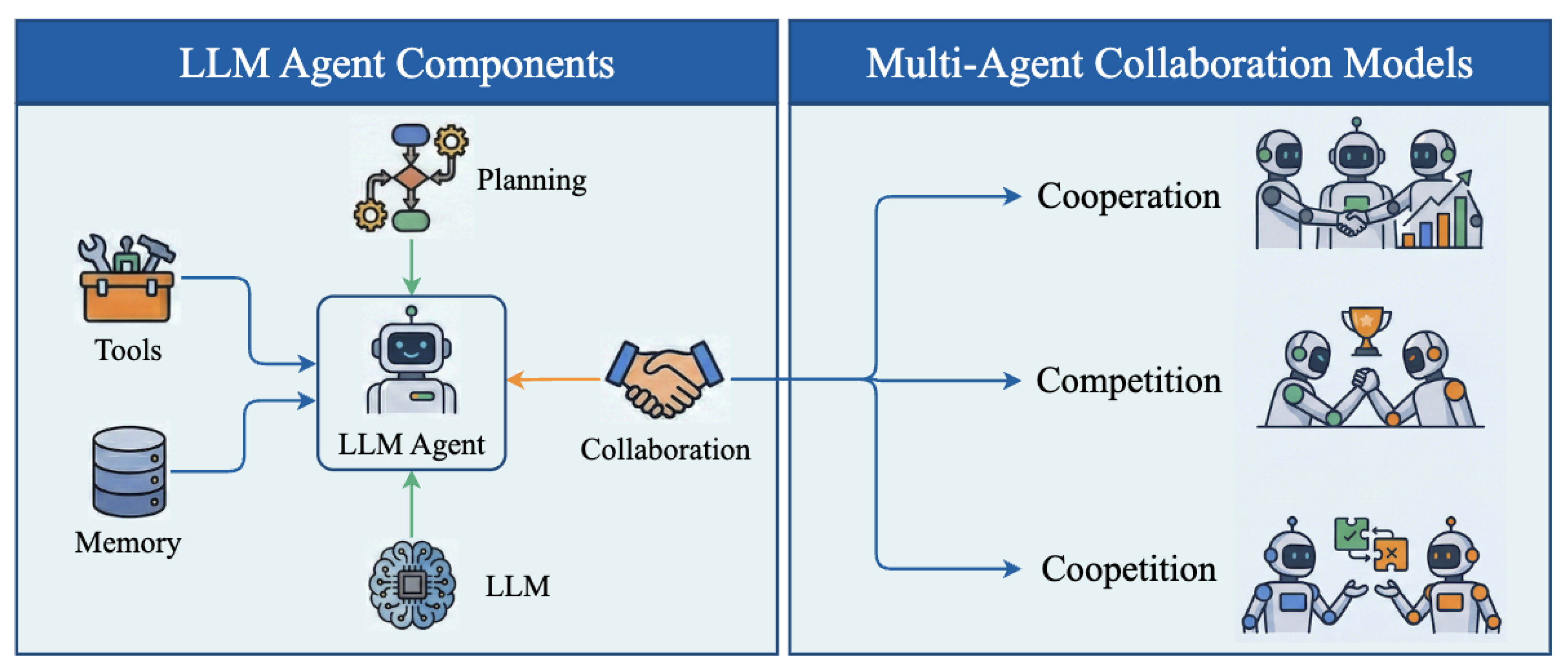

- Planning. This module serves as the agent’s cognitive core. Using the LLM as a decision engine, it generates executable multi-step plans and refines them through iterative reflection. Key paradigms include ReAct [23], which interleaves reasoning with action to keep the plan logically consistent, and Reflexion [24], which adds a self-reflective loop that refines strategies based on past failures. Together, these mechanisms help the agent remain goal-directed in environments with uncertain and variable feedback.

- Tool Use. This module lets the agent overcome the inherent limitations of its underlying LLM in tasks that require real-time information access, precise computation, or proprietary domain knowledge. By delegating such tasks to specialized external tools via standardized APIs, the agent expands its capability boundary [25]. This mechanism enables practical actions such as code execution [26], data analysis [27], and web interaction [28], thereby improving task success rates and real-world utility.

- Memory. This module enables context continuity and experience accumulation across long horizons. Unlike traditional stateless models, agents typically employ a dual-memory architecture: short-term memory captures transient states and intermediate steps, while long-term memory persists experiential and semantic knowledge through vector databases or knowledge repositories. This structure supports cross-task recall and information reuse. Notable frameworks include MemGPT [29], which uses hierarchical memory management to bypass context window limits, and A-MEM [30], which introduces structured indexing to improve retrieval efficiency. These mechanisms allow agents to recall prior experiences and adapt to new tasks.

- Collaboration. This module enables multiple agents to form a cooperative system characterized by division of labor and coordinated interaction. With the emergence of frameworks such as OpenAgents [31] and AutoGen [3], agent collaboration has evolved into three primary paradigms: cooperative, adversarial, and hybrid structures. In cooperative settings, distinct agents assume specialized roles and iteratively refine tasks through natural language communication. In adversarial systems, a subset of agents is intentionally configured as red teams to evaluate and enhance the robustness of the overall system. Hybrid architectures, by contrast, integrate game-theoretic or consensus-based mechanisms to achieve collective decision-making. The collaboration mechanism extends both the functional boundaries and systemic complexity of agents, laying the foundation for higher-order forms of collective intelligence.
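To make the interplay of these modules concrete, the following minimal Python sketch wires a planner, a tool registry, and a dual memory into one loop. It is an illustration under stated assumptions rather than the design of any cited framework: the `Agent` and `Memory` classes, the `add` and `echo` tools, and the fixed `window` size are hypothetical, and a hard-coded `plan` method stands in for the LLM decision engine. The collaboration module is omitted for brevity.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Hypothetical tool type: a tool maps a string argument to a string result.
Tool = Callable[[str], str]


@dataclass
class Memory:
    """Dual memory: a bounded short-term buffer plus a persistent long-term store."""
    short_term: List[str] = field(default_factory=list)
    long_term: Dict[str, str] = field(default_factory=dict)
    window: int = 4  # assumed stand-in for a context-window limit

    def observe(self, item: str) -> None:
        # Record an intermediate step and keep only the most recent `window` items.
        self.short_term.append(item)
        self.short_term = self.short_term[-self.window:]

    def persist(self, key: str, value: str) -> None:
        self.long_term[key] = value

    def recall(self, key: str) -> str:
        return self.long_term.get(key, "")


@dataclass
class Agent:
    tools: Dict[str, Tool]
    memory: Memory = field(default_factory=Memory)

    def plan(self, task: str) -> List[Tuple[str, str]]:
        """Stand-in for the LLM planner: returns (tool_name, argument) steps."""
        cached = self.memory.recall(task)
        if cached:
            # Reuse a result stored by an earlier run of the same task.
            return [("echo", cached)]
        return [("add", task), ("echo", "done")]

    def run(self, task: str) -> List[str]:
        outputs: List[str] = []
        for tool_name, arg in self.plan(task):
            result = self.tools[tool_name](arg)  # tool use
            self.memory.observe(f"{tool_name}({arg}) -> {result}")
            outputs.append(result)
        # Store the first result so later runs can recall it.
        self.memory.persist(task, outputs[0])
        return outputs


def make_demo_agent() -> Agent:
    """Build an agent with two illustrative tools (both hypothetical)."""
    return Agent(tools={
        "add": lambda s: str(sum(int(x) for x in s.split("+"))),
        "echo": lambda s: s,
    })
```

Running the same task twice on an agent from `make_demo_agent()` exercises both memory tiers: the first call computes the result with the `add` tool, while the second call retrieves it from long-term memory instead of recomputing.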

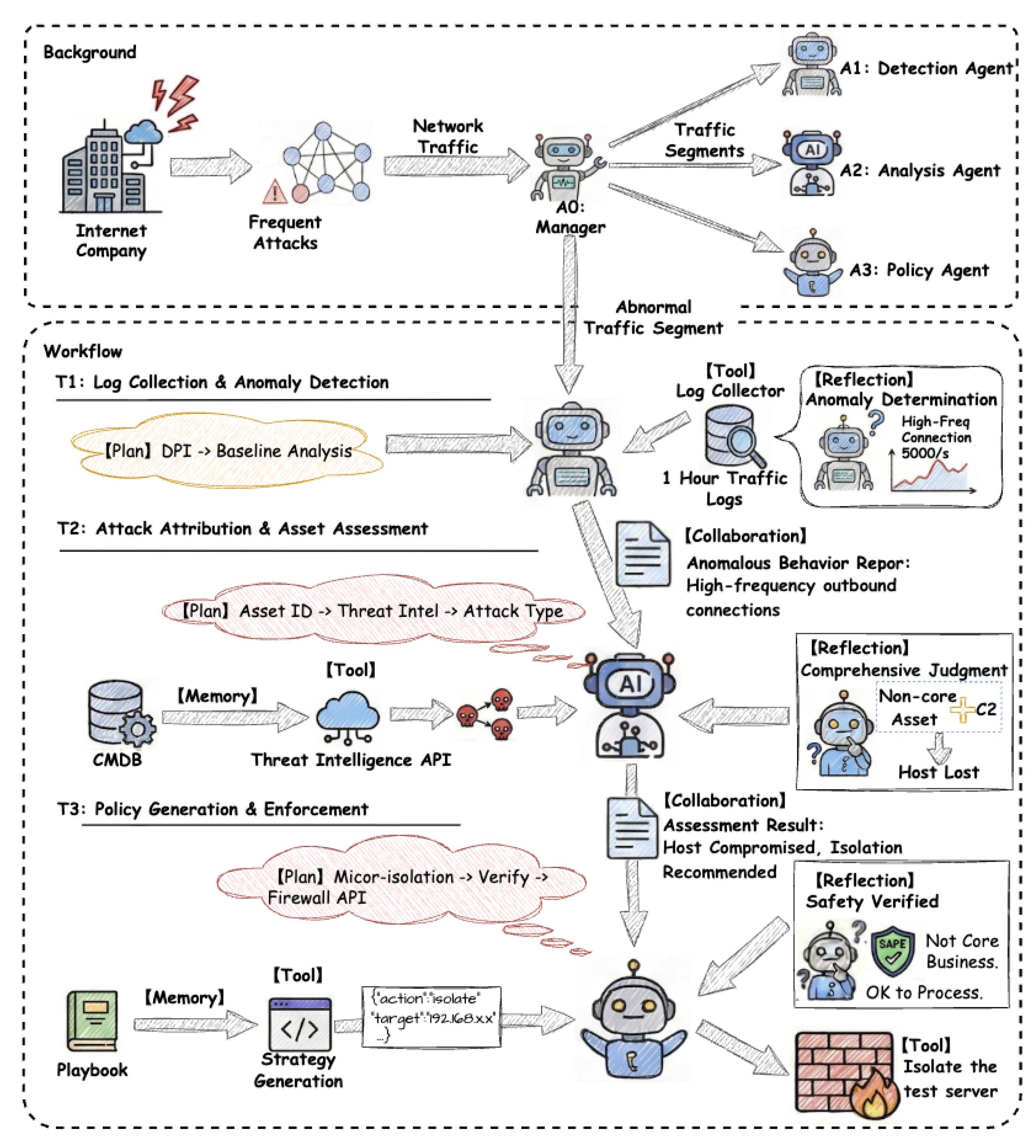

- T1: Log Collection and Anomaly Detection. Upon receiving a task from A0, A1 invokes its planning module to decompose the workflow into two subtasks: deep packet inspection (DPI) and baseline analysis. It then leverages the tool-use module to invoke a log collection service and retrieve relevant telemetry. Through its reflection module, A1 identifies a high-frequency connection anomaly, characterized by approximately 5,000 requests per second. Finally, A1 compiles an Anomalous Behavior Report via the coordination module and forwards it to A2.

- T2: Attack Attribution and Asset Assessment. A2 parses the report and queries the configuration management database (CMDB) through its memory module, confirming that the affected asset is a non-critical test server. The agent further invokes an external threat intelligence API via the tool-use module, which attributes the source IP address to a known command-and-control (C2) botnet. By integrating these signals through its reflection module, A2 concludes that the host has been compromised and transmits its Assessment Result to the policy agent A3 through the coordination channel.

- T3: Policy Generation and Enforcement. Upon receiving the assessment, A3 initiates the response pipeline by retrieving a predefined Compromised Host Mitigation Playbook from long-term memory. Based on this playbook, A3 formulates a fine-grained micro-segmentation policy. After a final safety check via the reflection module, the agent enforces the response by invoking the firewall API through the tool-use module, thereby isolating the compromised host.
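The three-stage workflow above can be sketched as a chain of plain functions that pass structured messages from A1 to A2 to A3. Everything concrete here is an assumption made for illustration: the 1,000 requests-per-second baseline, the dictionary-based CMDB and threat-intelligence lookups, the rule string appended to `firewall_rules`, and the safety check that refuses to auto-isolate critical assets. The source does not specify these details, and the LLM-driven reasoning inside each agent is replaced by deterministic logic.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

RATE_THRESHOLD = 1_000  # assumed baseline in requests/second; not from the source


@dataclass
class AnomalyReport:
    """Message from A1 to A2 (the Anomalous Behavior Report)."""
    host: str
    source_ip: str
    requests_per_second: int


@dataclass
class Assessment:
    """Message from A2 to A3 (the Assessment Result)."""
    host: str
    critical: bool
    compromised: bool


def a1_detect(telemetry: List[Dict]) -> Optional[AnomalyReport]:
    """T1: return a report for the first record whose rate exceeds the baseline."""
    for rec in telemetry:
        if rec["rps"] > RATE_THRESHOLD:
            return AnomalyReport(rec["host"], rec["src"], rec["rps"])
    return None


def a2_assess(report: AnomalyReport,
              cmdb: Dict[str, Dict[str, bool]],
              threat_intel: Dict[str, str]) -> Assessment:
    """T2: combine asset criticality (CMDB) with threat intelligence."""
    # Assets missing from the CMDB are conservatively treated as critical.
    critical = cmdb.get(report.host, {}).get("critical", True)
    compromised = threat_intel.get(report.source_ip) == "c2-botnet"
    return Assessment(report.host, critical, compromised)


def a3_respond(assessment: Assessment, firewall_rules: List[str]) -> bool:
    """T3: isolate a compromised host; return True if a rule was enforced."""
    if not assessment.compromised:
        return False
    # Final safety check (assumed policy): never auto-isolate a critical asset.
    if assessment.critical:
        return False
    # Stand-in for the firewall API call: record a micro-segmentation rule.
    firewall_rules.append(f"deny all from/to {assessment.host}")
    return True
```

Because each stage consumes only the previous stage's message, a forged or tampered `AnomalyReport` or `Assessment` propagates directly into the enforcement step, which is the kind of trust dependency the later sections on multi-agent communication examine.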

2.2. Unique Security Challenges and Threat Paradigms of LLM Agents

2.2.1. Challenge 1: Instruction–Data Conflation

2.2.2. Challenge 2: Persistent Cognitive State and Autonomous Reasoning

2.2.3. Challenge 3: Untrusted Multi-Agent Communication

2.3. Illustrative Examples of the Three Threat Paradigms

2.3.1. External Interaction Attack Examples

2.3.2. Internal Cognitive Attack Examples

2.3.3. Multi-Agent Coordination Attack Examples

3. External Interaction Attacks

3.1. Environment and Data Injection Attacks

3.2. Tool Metadata Manipulation Attacks

4. Internal Cognitive Attacks

4.1. Planning and Logic Hijacking Attacks

4.2. Memory Poisoning and Extraction Attacks

4.3. Backdoor Attacks

5. Multi-Agent Collaboration Attacks

5.1. Propagation and Policy Pollution Attacks

5.2. Communication Hijacking Attacks

5.3. Role Exploitation and Logic Abuse Attacks

6. Defenses

6.1. Defenses against External Interaction Attacks

6.2. Defenses against Internal Cognitive Attacks

6.3. Defenses against Multi-Agent Collaboration Attacks

7. Security Frameworks and Evaluation Benchmarks

7.1. Security Frameworks

7.1.1. System-Level Isolation and Architecture Design

7.1.2. Policy Enforcement and Behavioral Alignment

7.1.3. Runtime Supervision and Collaborative Monitoring

7.2. Security Benchmarks

7.2.1. Comprehensive Safety and Misuse Evaluation

7.2.2. Tool-Centric Interaction Benchmarks

7.2.3. Adversarial Robustness and Prompt Injection

7.2.4. Domain-Specific Risk Assessment

8. Future Work

9. Conclusion

Acknowledgments

References

- Brown, T.; Mann, B.; Ryder, N.; Subbiah, M.; Kaplan, J.D.; Dhariwal, P.; Neelakantan, A.; Shyam, P.; Sastry, G.; Askell, A.; et al. Language models are few-shot learners. Advances in Neural Information Processing Systems 2020, 33, 1877–1901.

- Xi, Z.; Chen, W.; Guo, X.; He, W.; Ding, Y.; Hong, B.; Zhang, M.; Wang, J.; Jin, S.; Zhou, E.; et al. The rise and potential of large language model based agents: A survey. Science China Information Sciences 2025, 68, 121101.

- Tang, J.; Fan, T.; Huang, C. AutoAgent: A Fully-Automated and Zero-Code Framework for LLM Agents. arXiv 2025, arXiv:2502.05957.

- Hong, S.; Zhuge, M.; Chen, J.; Zheng, X.; Cheng, Y.; Wang, J.; Zhang, C.; Wang, Z.; Yau, S.K.S.; Lin, Z.; et al. MetaGPT: Meta Programming for A Multi-Agent Collaborative Framework. In Proceedings of the Twelfth International Conference on Learning Representations, 2024.

- Tran, K.T.; Dao, D.; Nguyen, M.D.; Pham, Q.V.; O’Sullivan, B.; Nguyen, H.D. Multi-agent collaboration mechanisms: A survey of LLMs. arXiv 2025, arXiv:2501.06322.

- Shen, M.; Li, Y.; Chen, L.; Yang, Q. From mind to machine: The rise of Manus AI as a fully autonomous digital agent. arXiv 2025, arXiv:2505.02024.

- Xu, J.; Ma, M.; Wang, F.; Xiao, C.; Chen, M. Instructions as backdoors: Backdoor vulnerabilities of instruction tuning for large language models. In Proceedings of the 2024 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, 2024; Volume 1, pp. 3111–3126.

- Wei, A.; Haghtalab, N.; Steinhardt, J. Jailbroken: How does LLM safety training fail? Advances in Neural Information Processing Systems 2023, 36, 80079–80110.

- Duan, M.; Suri, A.; Mireshghallah, N.; Min, S.; Shi, W.; Zettlemoyer, L.; Tsvetkov, Y.; Choi, Y.; Evans, D.; Hajishirzi, H. Do Membership Inference Attacks Work on Large Language Models? In Proceedings of the First Conference on Language Modeling, 2024.

- Li, A.; Zhou, Y.; Raghuram, V.C.; Goldstein, T.; Goldblum, M. Commercial LLM agents are already vulnerable to simple yet dangerous attacks. arXiv 2025, arXiv:2502.08586.

- Wu, C.; Zhang, Z.; Xu, M.; Wei, Z.; Sun, M. Monitoring LLM-based Multi-Agent Systems Against Corruptions via Node Evaluation. arXiv 2025, arXiv:2510.19420.

- Wang, S.; Zhu, T.; Liu, B.; Ding, M.; Ye, D.; Zhou, W.; Yu, P. Unique security and privacy threats of large language models: A comprehensive survey. ACM Computing Surveys 2025, 58, 1–36.

- Yu, M.; Meng, F.; Zhou, X.; Wang, S.; Mao, J.; Pan, L.; Chen, T.; Wang, K.; Li, X.; Zhang, Y.; et al. A survey on trustworthy LLM agents: Threats and countermeasures. In Proceedings of the 31st ACM SIGKDD Conference on Knowledge Discovery and Data Mining V.2, 2025; pp. 6216–6226.

- Li, Y.; Wen, H.; Wang, W.; Li, X.; Yuan, Y.; Liu, G.; Liu, J.; Xu, W.; Wang, X.; Sun, Y.; et al. Personal LLM agents: Insights and survey about the capability, efficiency and security. arXiv 2024, arXiv:2401.05459.

- Tang, X.; Jin, Q.; Zhu, K.; Yuan, T.; Zhang, Y.; Zhou, W.; Qu, M.; Zhao, Y.; Tang, J.; Zhang, Z.; et al. Prioritizing safeguarding over autonomy: Risks of LLM agents for science. In Proceedings of the ICLR 2024 Workshop on Large Language Model (LLM) Agents, 2024.

- Gan, Y.; Yang, Y.; Ma, Z.; He, P.; Zeng, R.; Wang, Y.; Li, Q.; Zhou, C.; Li, S.; Wang, T.; et al. Navigating the risks: A survey of security, privacy, and ethics threats in LLM-based agents. arXiv 2024, arXiv:2411.09523.

- Chen, A.; Wu, Y.; Zhang, J.; Xiao, J.; Yang, S.; Huang, J.-t.; Wang, K.; Wang, W.; Wang, S. A Survey on the Safety and Security Threats of Computer-Using Agents: JARVIS or Ultron? arXiv 2025, arXiv:2505.10924.

- Su, H.; Luo, J.; Liu, C.; Yang, X.; Zhang, Y.; Dong, Y.; Zhu, J. A Survey on Autonomy-Induced Security Risks in Large Model-Based Agents. arXiv 2025, arXiv:2506.23844.

- Wang, Y.; Pan, Y.; Su, Z.; Deng, Y.; Zhao, Q.; Du, L.; Luan, T.H.; Kang, J.; Niyato, D. Large model based agents: State-of-the-art, cooperation paradigms, security and privacy, and future trends. IEEE Communications Surveys & Tutorials 2025.

- Mohammadi, M.; Li, Y.; Lo, J.; Yip, W. Evaluation and benchmarking of LLM agents: A survey. In Proceedings of the 31st ACM SIGKDD Conference on Knowledge Discovery and Data Mining V.2, 2025; pp. 6129–6139.

- Kong, D.; Lin, S.; Xu, Z.; Wang, Z.; Li, M.; Li, Y.; Zhang, Y.; Peng, H.; Sha, Z.; Li, Y.; et al. A Survey of LLM-Driven AI Agent Communication: Protocols, Security Risks, and Defense Countermeasures. 2025.

- He, F.; Zhu, T.; Ye, D.; Liu, B.; Zhou, W.; Yu, P.S. The emerged security and privacy of LLM agent: A survey with case studies. ACM Computing Surveys 2025, 58, 1–36.

- Yao, S.; Zhao, J.; Yu, D.; Du, N.; Shafran, I.; Narasimhan, K.R.; Cao, Y. ReAct: Synergizing reasoning and acting in language models. In Proceedings of the Eleventh International Conference on Learning Representations, 2022.

- Shinn, N.; Cassano, F.; Gopinath, A.; Narasimhan, K.; Yao, S. Reflexion: Language agents with verbal reinforcement learning. Advances in Neural Information Processing Systems 2023, 36, 8634–8652.

- Schick, T.; Dwivedi-Yu, J.; Dessì, R.; Raileanu, R.; Lomeli, M.; Hambro, E.; Zettlemoyer, L.; Cancedda, N.; Scialom, T. Toolformer: Language models can teach themselves to use tools. Advances in Neural Information Processing Systems 2023, 36, 68539–68551.

- Wang, X.; Chen, Y.; Yuan, L.; Zhang, Y.; Li, Y.; Peng, H.; Ji, H. Executable code actions elicit better LLM agents. In Proceedings of the Forty-first International Conference on Machine Learning, 2024.

- Hong, S.; Lin, Y.; Liu, B.; Liu, B.; Wu, B.; Zhang, C.; Li, D.; Chen, J.; Zhang, J.; Wang, J.; et al. Data interpreter: An LLM agent for data science. In Findings of the Association for Computational Linguistics: ACL 2025, 2025; pp. 19796–19821.

- Zhou, S.; Xu, F.F.; Zhu, H.; Zhou, X.; Lo, R.; Sridhar, A.; Cheng, X.; Ou, T.; Bisk, Y.; Fried, D.; et al. WebArena: A Realistic Web Environment for Building Autonomous Agents. In Proceedings of the Twelfth International Conference on Learning Representations, 2024.

- Packer, C.; Fang, V.; Patil, S.; Lin, K.; Wooders, S.; Gonzalez, J. MemGPT: Towards LLMs as Operating Systems. 2023.

- Xu, W.; Mei, K.; Gao, H.; Tan, J.; Liang, Z.; Zhang, Y. A-MEM: Agentic memory for LLM agents. arXiv 2025, arXiv:2502.12110.

- Xie, T.; Zhou, F.; Cheng, Z.; Shi, P.; Weng, L.; Liu, Y.; Hua, T.J.; Zhao, J.; Liu, Q.; Liu, C.; et al. OpenAgents: An Open Platform for Language Agents in the Wild. In Proceedings of the First Conference on Language Modeling, 2024.

- Xu, C.; Kang, M.; Zhang, J.; Liao, Z.; Mo, L.; Yuan, M.; Sun, H.; Li, B. AdvAgent: Controllable Blackbox Red-teaming on Web Agents. In Proceedings of the Forty-second International Conference on Machine Learning, 2025.

- Liao, Z.; Mo, L.; Xu, C.; Kang, M.; Zhang, J.; Xiao, C.; Tian, Y.; Li, B.; Sun, H. EIA: Environmental Injection Attack on Generalist Web Agents for Privacy Leakage. In Proceedings of the Thirteenth International Conference on Learning Representations, 2025.

- Wu, F.; Wu, S.; Cao, Y.; Xiao, C. WIPI: A new web threat for LLM-driven web agents. arXiv 2024, arXiv:2402.16965.

- Wang, Z.; Siu, V.; Ye, Z.; Shi, T.; Nie, Y.; Zhao, X.; Wang, C.; Guo, W.; Song, D. AgentVigil: Generic Black-Box Red-teaming for Indirect Prompt Injection against LLM Agents. arXiv 2025, arXiv:2505.05849.

- Fu, X.; Li, S.; Wang, Z.; Liu, Y.; Gupta, R.K.; Berg-Kirkpatrick, T.; Fernandes, E. Imprompter: Tricking LLM agents into improper tool use. arXiv 2024, arXiv:2410.14923.

- Mo, K.; Hu, L.; Long, Y.; Li, Z. Attractive Metadata Attack: Inducing LLM Agents to Invoke Malicious Tools. In Proceedings of the Thirty-ninth Annual Conference on Neural Information Processing Systems, 2025.

- Shi, J.; Yuan, Z.; Tie, G.; Zhou, P.; Gong, N.Z.; Sun, L. Prompt Injection Attack to Tool Selection in LLM Agents. arXiv 2025, arXiv:2504.19793.

- Zhang, J.; Yang, S.; Li, B. UDora: A Unified Red Teaming Framework against LLM Agents by Dynamically Hijacking Their Own Reasoning. In Proceedings of the Forty-second International Conference on Machine Learning, 2025.

- Zhang, B.; Tan, Y.; Shen, Y.; Salem, A.; Backes, M.; Zannettou, S.; Zhang, Y. Breaking agents: Compromising autonomous LLM agents through malfunction amplification. In Proceedings of the 2025 Conference on Empirical Methods in Natural Language Processing, 2025; pp. 34952–34964.

- Yang, W.; Bi, X.; Lin, Y.; Chen, S.; Zhou, J.; Sun, X. Watch out for your agents! Investigating backdoor threats to LLM-based agents. Advances in Neural Information Processing Systems 2024, 37, 100938–100964.

- Wang, B.; He, W.; Zeng, S.; Xiang, Z.; Xing, Y.; Tang, J.; He, P. Unveiling privacy risks in LLM agent memory. In Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), 2025; pp. 25241–25260.

- Li, Z.; Cui, J.; Liao, X.; Xing, L. Les Dissonances: Cross-Tool Harvesting and Polluting in Pool-of-Tools Empowered LLM Agents.

- Gu, X.; Zheng, X.; Pang, T.; Du, C.; Liu, Q.; Wang, Y.; Jiang, J.; Lin, M. Agent Smith: A Single Image Can Jailbreak One Million Multimodal LLM Agents Exponentially Fast. In Proceedings of the 41st International Conference on Machine Learning (ICML); PMLR, July 2024; Proceedings of Machine Learning Research, Vol. 235, pp. 16647–16672.

- Chen, Z.; Xiang, Z.; Xiao, C.; Song, D.; Li, B. AgentPoison: Red-teaming LLM Agents via Poisoning Memory or Knowledge Bases. In Proceedings of the Thirty-eighth Annual Conference on Neural Information Processing Systems, 2024.

- Dong, S.; Xu, S.; He, P.; Li, Y.; Tang, J.; Liu, T.; Liu, H.; Xiang, Z. Memory Injection Attacks on LLM Agents via Query-Only Interaction. In Proceedings of the Thirty-ninth Annual Conference on Neural Information Processing Systems, 2025.

- Jing, H.; Li, F.; Dong, Y.; Zhou, W.; Liu, R. Memory poisoning attacks on retrieval-augmented Large Language Model agents via deceptive semantic reasoning. Engineering Applications of Artificial Intelligence 2026, 167, 113968.

- Wang, Y.; Xue, D.; Zhang, S.; Qian, S. BadAgent: Inserting and Activating Backdoor Attacks in LLM Agents. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics, Bangkok, Thailand, 2024; Volume 1, pp. 9811–9827.

- Liu, A.; Zhou, Y.; Liu, X.; Zhang, T.; Liang, S.; Wang, J.; Pu, Y.; Li, T.; Zhang, J.; Zhou, W.; et al. Compromising LLM driven embodied agents with contextual backdoor attacks. IEEE Transactions on Information Forensics and Security 2025.

- Zhu, P.; Zhou, Z.; Zhang, Y.; Yan, S.; Wang, K.; Su, S. DemonAgent: Dynamically encrypted multi-backdoor implantation attack on LLM-based agent. arXiv 2025, arXiv:2502.12575.

- Lee, D.; Tiwari, M. Prompt infection: LLM-to-LLM prompt injection within multi-agent systems. arXiv 2024, arXiv:2410.07283.

- Zhou, Z.; Li, Z.; Zhang, J.; Zhang, Y.; Wang, K.; Liu, Y.; Guo, Q. CORBA: Contagious recursive blocking attacks on multi-agent systems based on large language models. arXiv 2025, arXiv:2502.14529.

- Shahroz, R.; Tan, Z.; Yun, S.; Fleming, C.; Chen, T. Agents under siege: Breaking pragmatic multi-agent LLM systems with optimized prompt attacks. In Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), 2025; pp. 9661–9674.

- Ju, T.; Wang, Y.; Ma, X.; Cheng, P.; Zhao, H.; Wang, Y.; Liu, L.; Xie, J.; Zhang, Z.; Liu, G. Flooding spread of manipulated knowledge in LLM-based multi-agent communities. arXiv 2024, arXiv:2407.07791.

- He, P.; Lin, Y.; Dong, S.; Xu, H.; Xing, Y.; Liu, H. Red-teaming LLM multi-agent systems via communication attacks. In Findings of the Association for Computational Linguistics: ACL 2025, 2025; pp. 6726–6747.

- Yan, B.; Zhou, Z.; Zhang, X.; Li, C.; Zeng, R.; Qi, Y.; Wang, T.; Zhang, L. Attack the Messages, Not the Agents: A Multi-round Adaptive Stealthy Tampering Framework for LLM-MAS. arXiv 2025, arXiv:2508.03125.

- Triedman, H.; Jha, R.; Shmatikov, V. Multi-agent systems execute arbitrary malicious code. arXiv 2025, arXiv:2503.12188.

- Motwani, S.R.; Baranchuk, M.; Strohmeier, M.; Bolina, V.; Torr, P.H.; Hammond, L.; de Witt, C.S. Secret Collusion among AI Agents: Multi-Agent Deception via Steganography. Advances in Neural Information Processing Systems 2024, 37, 73439–73486.

- Tian, Y.; Yang, X.; Zhang, J.; Dong, Y.; Su, H. Evil geniuses: Delving into the safety of LLM-based agents. arXiv 2023, arXiv:2311.11855.

- Huang, J.-t.; Zhou, J.; Jin, T.; Zhou, X.; Chen, Z.; Wang, W.; Yuan, Y.; Lyu, M.; Sap, M. On the Resilience of LLM-Based Multi-Agent Collaboration with Faulty Agents. In Proceedings of the Forty-second International Conference on Machine Learning, 2025.

- Xie, Y.; Zhu, C.; Zhang, X.; Zhu, T.; Ye, D.; Wang, M.; Liu, C. Who’s the Mole? Modeling and Detecting Intention-Hiding Malicious Agents in LLM-Based Multi-Agent Systems. arXiv 2025, arXiv:2507.04724.

- Wang, L.; Wang, W.; Wang, S.; Li, Z.; Ji, Z.; Lyu, Z.; Wu, D.; Cheung, S.C. IP leakage attacks targeting LLM-based multi-agent systems. arXiv 2025, arXiv:2505.12442.

- Shi, T.; Zhu, K.; Wang, Z.; Jia, Y.; Cai, W.; Liang, W.; Wang, H.; Alzahrani, H.; Lu, J.; Kawaguchi, K.; et al. PromptArmor: Simple yet effective prompt injection defenses. arXiv 2025, arXiv:2507.15219.

- Wang, Z.; Nagaraja, N.; Zhang, L.; Bahsi, H.; Patil, P.; Liu, P. To Protect the LLM Agent Against the Prompt Injection Attack with Polymorphic Prompt. In Proceedings of the 2025 55th Annual IEEE/IFIP International Conference on Dependable Systems and Networks - Supplemental Volume (DSN-S), 2025; pp. 22–28.

- Wu, Y.; Roesner, F.; Kohno, T.; Zhang, N.; Iqbal, U. IsolateGPT: An Execution Isolation Architecture for LLM-Based Agentic Systems. In Proceedings of the 32nd Annual Network and Distributed System Security Symposium, NDSS 2025, San Diego, California, USA, February 24-28, 2025; The Internet Society, 2025.

- Bagdasarian, E.; Yi, R.; Ghalebikesabi, S.; Kairouz, P.; Gruteser, M.; Oh, S.; Balle, B.; Ramage, D. AirGapAgent: Protecting Privacy-Conscious Conversational Agents. In Proceedings of the 2024 on ACM SIGSAC Conference on Computer and Communications Security, 2024; pp. 3868–3882.

- Foerster, H.; Mullins, R.; Blanchard, T.; Papernot, N.; Nikolić, K.; Tramèr, F.; Shumailov, I.; Zhang, C.; Zhao, Y. CaMeLs Can Use Computers Too: System-level Security for Computer Use Agents. arXiv 2026, arXiv:2601.09923.

- Jia, F.; Wu, T.; Qin, X.; Squicciarini, A. The task shield: Enforcing task alignment to defend against indirect prompt injection in LLM agents. In Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics, 2025; Volume 1, pp. 29680–29697.

- Zhu, K.; Yang, X.; Wang, J.; Guo, W.; Wang, W.Y. MELON: Provable Defense Against Indirect Prompt Injection Attacks in AI Agents. In Proceedings of the 42nd International Conference on Machine Learning (ICML); PMLR, 2025; Proceedings of Machine Learning Research, Vol. 267, pp. 80310–80329.

- Chen, Z.; Kang, M.; Li, B. ShieldAgent: Shielding Agents via Verifiable Safety Policy Reasoning. In Proceedings of the Forty-second International Conference on Machine Learning, 2025.

- An, H.; Zhang, J.; Du, T.; Zhou, C.; Li, Q.; Lin, T.; Ji, S. IPIGuard: A novel tool dependency graph-based defense against indirect prompt injection in LLM agents. In Proceedings of the 2025 Conference on Empirical Methods in Natural Language Processing, 2025; pp. 1023–1039.

- Li, H.; Liu, X.; Chiu, H.C.; Li, D.; Zhang, N.; Xiao, C. DRIFT: Dynamic Rule-Based Defense with Injection Isolation for Securing LLM Agents. arXiv 2025, arXiv:2506.12104.

- Xiang, S.; Zhang, T.; Chen, R. ALRPHFS: Adversarially Learned Risk Patterns with Hierarchical Fast & Slow Reasoning for Robust Agent Defense. arXiv 2025, arXiv:2505.19260.

- Changjiang, L.; Jiacheng, L.; Bochuan, C.; Jinghui, C.; Ting, W. Your Agent Can Defend Itself against Backdoor Attacks. arXiv 2025, arXiv:2506.08336.

- Feng, E.; Zhou, W.; Liu, Z.; Chen, L.; Dong, Y.; Zhang, C.; Zhao, Y.; Du, D.; Hua, Z.; Xia, Y.; et al. Get Experience from Practice: LLM Agents with Record & Replay. arXiv 2025, arXiv:2505.17716.

- Bonagiri, V.K.; Kumaragurum, P.; Nguyen, K.; Plaut, B. Check Yourself Before You Wreck Yourself: Selectively Quitting Improves LLM Agent Safety. arXiv 2025, arXiv:2510.16492.

- Wei, Q.; Yang, T.; Wang, Y.; Li, X.; Li, L.; Yin, Z.; Zhan, Y.; Holz, T.; Lin, Z.; Wang, X. A-MemGuard: A Proactive Defense Framework for LLM-Based Agent Memory. arXiv 2025, arXiv:2510.02373.

- Mao, J.; Meng, F.; Duan, Y.; Yu, M.; Jia, X.; Fang, J.; Liang, Y.; Wang, K.; Wen, Q. AgentSafe: Safeguarding large language model-based multi-agent systems via hierarchical data management. arXiv 2025, arXiv:2503.04392.

- Sunil, B.D.; Sinha, I.; Maheshwari, P.; Todmal, S.; Malik, S.; Mishra, S. Memory Poisoning Attack and Defense on Memory Based LLM-Agents. arXiv 2026, arXiv:2601.05504.

- Pan, Z.; Zhang, Y.; Liu, Z.; Tang, Y.Y.; Zhang, Z.; Luo, H.; Han, Y.; Zhang, J.; Wu, D.; Chen, H.Y.; et al. AdvEvo-MARL: Shaping Internalized Safety through Adversarial Co-Evolution in Multi-Agent Reinforcement Learning. arXiv 2025, arXiv:2510.01586.

- Patlan, A.S.; Sheng, P.; Hebbar, S.A.; Mittal, P.; Viswanath, P. Real AI agents with fake memories: Fatal context manipulation attacks on Web3 agents. arXiv 2025, arXiv:2503.16248.

- Wang, S.; Zhang, G.; Yu, M.; Wan, G.; Meng, F.; Guo, C.; Wang, K.; Wang, Y. G-Safeguard: A Topology-Guided Security Lens and Treatment on LLM-based Multi-agent Systems. In Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics, 2025; Volume 1, pp. 7261–7276.

- Zhou, J.; Wang, L.; Yang, X. GUARDIAN: Safeguarding LLM Multi-Agent Collaborations with Temporal Graph Modeling. arXiv 2025, arXiv:2505.19234.

- Miao, R.; Liu, Y.; Wang, Y.; Shen, X.; Tan, Y.; Dai, Y.; Pan, S.; Wang, X. BlindGuard: Safeguarding LLM-based multi-agent systems under unknown attacks. arXiv 2025, arXiv:2508.08127.

- Zhou, Y.; Lu, X.; Liu, D.; Yan, J.; Shao, J. INFA-Guard: Mitigating Malicious Propagation via Infection-Aware Safeguarding in LLM-Based Multi-Agent Systems. arXiv 2026, arXiv:2601.14667.

- Chen, K.; Zhen, T.; Wang, H.; Liu, K.; Li, X.; Huo, J.; Yang, T.; Xu, J.; Dong, W.; Gao, Y. MedSentry: Understanding and Mitigating Safety Risks in Medical LLM Multi-Agent Systems. arXiv 2025, arXiv:2505.20824.

- Fan, F.; Li, X. PeerGuard: Defending Multi-Agent Systems Against Backdoor Attacks Through Mutual Reasoning. In Proceedings of the 2025 IEEE International Conference on Information Reuse and Integration and Data Science (IRI), 2025; pp. 234–239.

- Hu, J.; Dong, Y.; Ding, Z.; Huang, X. Enhancing robustness of LLM-driven multi-agent systems through randomized smoothing. Chinese Journal of Aeronautics 2025, 103779.

- Wen, Y.; Guo, J.; Huang, H. CoTGuard: Using Chain-of-Thought Triggering for Copyright Protection in Multi-Agent LLM Systems. arXiv 2025, arXiv:2505.19405.

- Mei, K.; Zhu, X.; Xu, W.; Hua, W.; Jin, M.; Li, Z.; Xu, S.; Ye, R.; Ge, Y.; Zhang, Y. AIOS: LLM agent operating system. arXiv 2024, arXiv:2403.16971.

- Bagdasarian, E.; Yi, R.; Ghalebikesabi, S.; Kairouz, P.; Gruteser, M.; Oh, S.; Balle, B.; Ramage, D. AirGapAgent: Protecting Privacy-Conscious Conversational Agents. In Proceedings of the 2024 on ACM SIGSAC Conference on Computer and Communications Security, 2024; pp. 3868–3882.

- He, Y.; Wang, E.; Rong, Y.; Cheng, Z.; Chen, H. Security of AI agents. In Proceedings of the 2025 IEEE/ACM International Workshop on Responsible AI Engineering (RAIE), 2025; IEEE; pp. 45–52.

- Hua, W.; Yang, X.; Jin, M.; Li, Z.; Cheng, W.; Tang, R.; Zhang, Y. TrustAgent: Towards Safe and Trustworthy LLM-based Agents through Agent Constitution. In Proceedings of the Trustworthy Multi-modal Foundation Models and AI Agents (TiFA), 2024.

- Zhang, Y.; Cai, Y.; Zuo, X.; Luan, X.; Wang, K.; Hou, Z.; Zhang, Y.; Wei, Z.; Sun, M.; Sun, J.; et al. Position: Trustworthy AI Agents Require the Integration of Large Language Models and Formal Methods. In Proceedings of the Forty-second International Conference on Machine Learning Position Paper Track, 2025.

- Rosser, J.; Foerster, J.N. AgentBreeder: Mitigating the AI Safety Impact of Multi-Agent Scaffolds via Self-Improvement. In Proceedings of the Scaling Self-Improving Foundation Models without Human Supervision, 2025.

- Hua, Y.; Chen, H.; Wang, S.; Li, W.; Wang, X.; Luo, J. Shapley-Coop: Credit Assignment for Emergent Cooperation in Self-Interested LLM Agents. arXiv 2025, arXiv:2506.07388.

- Narajala, V.S.; Narayan, O. Securing agentic AI: A comprehensive threat model and mitigation framework for generative AI agents. arXiv 2025, arXiv:2504.19956.

- Gosmar, D.; Dahl, D.A. Sentinel Agents for Secure and Trustworthy Agentic AI in Multi-Agent Systems. arXiv 2025, arXiv:2509.14956.

- Chennabasappa, S.; Nikolaidis, C.; Song, D.; Molnar, D.; Ding, S.; Wan, S.; Whitman, S.; Deason, L.; Doucette, N.; Montilla, A.; et al. LlamaFirewall: An open source guardrail system for building secure AI agents. arXiv 2025, arXiv:2505.03574.

- Andriushchenko, M.; Souly, A.; Dziemian, M.; Duenas, D.; Lin, M.; Wang, J.; Hendrycks, D.; Zou, A.; Kolter, J.Z.; Fredrikson, M.; et al. AgentHarm: A Benchmark for Measuring Harmfulness of LLM Agents. In Proceedings of the The Thirteenth International Conference on Learning Representations, 2025. [Google Scholar]

- Vijayvargiya, S.; Soni, A.B.; Zhou, X.; Wang, Z.Z.; Dziri, N.; Neubig, G.; Sap, M. OpenAgentSafety: A Comprehensive Framework for Evaluating Real-World AI Agent Safety. 2025, 2507.06134. [Google Scholar]

- Luo, H.; Dai, S.; Ni, C.; Li, X.; Zhang, G.; Wang, K.; Liu, T.; Salam, H. Agentauditor: Human-level safety and security evaluation for llm agents. arXiv arXiv:2506.00641.

- Wang, H.; Zhang, A.; Duy Tai, N.; Sun, J.; Chua, T.S.; et al. Ali-agent: Assessing llms’ alignment with human values via agent-based evaluation. Advances in Neural Information Processing Systems 2024, 37, 99040–99088. [Google Scholar]

- Ruan, Y.; Dong, H.; Wang, A.; Pitis, S.; Zhou, Y.; Ba, J.; Dubois, Y.; Maddison, C.J.; Hashimoto, T. Identifying the Risks of LM Agents with an LM-Emulated Sandbox. In Proceedings of the The Twelfth International Conference on Learning Representations, 2024. [Google Scholar]

- Debenedetti, E.; Zhang, J.; Balunovic, M.; Beurer-Kellner, L.; Fischer, M.; Tramèr, F. Agentdojo: A dynamic environment to evaluate prompt injection attacks and defenses for llm agents. Advances in Neural Information Processing Systems 2024, 37, 82895–82920. [Google Scholar]

- Milev, I.; Balunović, M.; Baader, M.; Vechev, M. ToolFuzz–Automated Agent Tool Testing. arXiv arXiv:2503.04479.

- Zhang, H.; Huang, J.; Mei, K.; Yao, Y.; Wang, Z.; Zhan, C.; Wang, H.; Zhang, Y. Agent Security Bench (ASB): Formalizing and Benchmarking Attacks and Defenses in LLM-based Agents. In Proceedings of the The Thirteenth International Conference on Learning Representations, 2025. [Google Scholar]

- Evtimov, I.; Zharmagambetov, A.; Grattafiori, A.; Guo, C.; Chaudhuri, K. WASP: Benchmarking Web Agent Security Against Prompt Injection Attacks. In Proceedings of the ICML 2025 Workshop on Computer Use Agents, 2025. [Google Scholar]

- Wu, C.H.; Shah, R.R.; Koh, J.Y.; Salakhutdinov, R.; Fried, D.; Raghunathan, A. Dissecting Adversarial Robustness of Multimodal LM Agents. In Proceedings of the Thirteenth International Conference on Learning Representations, 2025.

- Guo, C.; Liu, X.; Xie, C.; Zhou, A.; Zeng, Y.; Lin, Z.; Song, D.; Li, B. RedCode: Risky code execution and generation benchmark for code agents. Advances in Neural Information Processing Systems 2024, 37, 106190–106236.

- Saha, S.; Chen, J.; Mayers, S.; Gouda, S.K.; Wang, Z.; Kumar, V. Breaking the code: Security assessment of AI code agents through systematic jailbreaking attacks. arXiv 2025, arXiv:2510.01359.

- Zhu, Y.; Kellermann, A.; Bowman, D.; Li, P.; Gupta, A.; Danda, A.; Fang, R.; Jensen, C.; Ihli, E.; Benn, J.; et al. CVE-Bench: A Benchmark for AI Agents’ Ability to Exploit Real-World Web Application Vulnerabilities. In Proceedings of the 42nd International Conference on Machine Learning (ICML); Proceedings of Machine Learning Research (PMLR), 2025; Vol. 267, pp. 79850–79867.

- Tur, A.D.; Meade, N.; Lù, X.H.; Zambrano, A.; Patel, A.; Durmus, E.; Gella, S.; Stanczak, K.; Reddy, S. SafeArena: Evaluating the Safety of Autonomous Web Agents. In Proceedings of the Forty-second International Conference on Machine Learning, 2025.

- Zharmagambetov, A.; Guo, C.; Evtimov, I.; Pavlova, M.; Salakhutdinov, R.; Chaudhuri, K. AgentDAM: Privacy Leakage Evaluation for Autonomous Web Agents. In Proceedings of the 39th Annual Conference on Neural Information Processing Systems (NeurIPS 2025) Datasets and Benchmarks Track, 2025.

| Year | Reference | Scope | Core Theme | Coverage | Comparison | ||

|---|---|---|---|---|---|---|---|

| T | D | E | |||||

| 2024 | Li et al. [14] | Single | Personal Agents | × | × | Focuses on mobile agents and efficiency; security is a minor aspect. | |

| Tang et al. [15] | Single | Scientific Agents | × | Position paper on scientific agents; proposes a triadic safeguarding framework. | |||

| Gan et al. [16] | S & M | Security, Privacy | × | Broad coverage including ethics; lacks systematic evaluation frameworks. | |||

| 2025 | Chen et al. [17] | Single | Computer-Using Agents | Strictly limited to agents interacting with computer interfaces (GUI/Web). | |||

| Su et al. [18] | Single | Autonomy Risks | × | Focuses on intrinsic failures and autonomy risks, differing from our structural threat taxonomy. | |||

| Wang et al. [19] | Multi | Cooperation & Privacy | × | Centers on cooperation paradigms and network privacy, not AI security. | |||

| Mohammadi et al. [20] | S & M | Evaluation & Benchmarking | × | × | Pure evaluation survey; taxonomizes metrics and benchmarks, not attacks. | ||

| Kong et al. [21] | S & M | Communication Protocols | × | Focuses on communication layers (L1-L3) and protocols (e.g., MCP, A2A). | |||

| He et al. [22] | S & M | Security & Privacy | × | Relies heavily on case studies; lacks a unified defense taxonomy. | |||

| Wang et al. [12] | S & M | Comprehensive Security | Adopts traditional LLM threat taxonomies rather than agent-specific structural flaws. | ||||

| Yu et al. [13] | S & M | Trustworthiness | Emphasizes ethics/fairness; technical security depth is diluted by broad scope. | ||||

| 2026 | Ours | S & M | Security & Frameworks | Unified taxonomy of Threats, Defenses, and Evaluation for both paradigms. | |||

| Category | Attack Framework | Injection Medium | Attack Technique | Attack Consequence | Autonomy | Persistence | Propagation |
|---|---|---|---|---|---|---|---|
| Environment and Data Injection | AdvAgent [32] | Webpage | Injects adversarial HTML code that is rendered invisible to humans but parsed by agents. | Targeted Wrong Actions | High | Low | Low |
| | EIA [33] | Webpage | Constructs deceptive environments with invisible instructions or distractors to mislead perception. | Privacy Leakage | High | Low | Low |
| | WIPI [34] | Webpage | Embeds malicious instructions in open web content to passively hijack the agent’s control flow. | Arbitrary Malicious Actions | High | Low | Low |
| | AgentVigil [35] | Prompt | Leverages iterative fuzzing and mutation strategies to generate diverse obfuscated prompts. | Guardrail Bypass | Medium | Low | Low |
| | Imprompter [36] | Prompt | Generates human-unreadable adversarial suffixes via automated optimization to force tool misuse. | Improper Tool Use | Medium | Low | Low |
| Tool Metadata Manipulation | Attractive Metadata [37] | Tool Description | Iteratively optimizes description semantics to maximize similarity with user queries. | Malicious Tool Invocation | Medium | High | High |
| | ToolHijacker [38] | Tool Library | Registers masqueraded tools that shadow legitimate ones by exploiting retrieval ranking mechanisms. | Tool Selection Hijacking | Medium | High | High |

| Category | Attack Framework | Targeted Component | Attack Technique | Attack Consequence | Autonomy | Persistence | Propagation |
|---|---|---|---|---|---|---|---|
| Planning & Logic Hijacking | UDora [39] | Reasoning Trace | Dynamically hijacks intermediate reasoning and planning steps during execution via optimized adversarial prompts. | Logic Deviation | Medium | Low | Low |
| | Fault Amplification [40] | Task Planner | Induces small perturbations that are autonomously amplified into cascading failures during multi-step execution. | Cognitive DoS | High | Low | Medium |
| | Thought-Attack [41] | Thought Chain | Backdoor-based poisoning that manipulates intermediate thoughts while preserving benign-looking final outputs. | Stealthy Tool Misuse | High | High | Low |
| Memory Poisoning & Extraction | MEXTRA [42] | Privacy Exfiltration | Exploits crafted prompts to hijack retrieval mechanisms and extract sensitive stored information. | Privacy Exfiltration | Medium | Low | Low |
| | XTHP [43] | Short-term Memory | Malicious tools hook onto legitimate ones to intercept intermediate data and pollute task outputs. | Context Leakage | High | Medium | Low |
| | Agent Smith [44] | Shared Memory | Infectious adversarial payloads propagate through shared memory and inter-agent interactions. | Viral Memory Infection | High | High | High |
| | AgentPoison [45] | RAG Database | Injects poisoned data into memory to bias future retrieval and downstream decision-making. | Knowledge Corruption | High | High | Medium |
| | MINJA [46] | Memory Learning Mechanism | Induces agents to autonomously generate and persist malicious records via benign-seeming queries. | Self-Reinforcing Poisoning | High | High | High |
| | DSRM [47] | RAG Database | Optimizes poisoned records with deceptive reasoning chains to mislead the agent’s semantic verification. | Decision Manipulation | High | High | Low |
| Backdoor Attacks | BadAgent [48] | Model Weights | Implants backdoors during instruction tuning, activated by passive environmental triggers. | Control Override | High | High | Low |
| | Contextual Backdoor [49] | Vision Encoder | Uses rich contextual triggers to activate malicious behavior in embodied agents. | Physical Manipulation | High | High | Low |
| | Observation-Attack [41] | Perception Module | Activates backdoor behavior via triggers concealed in environmental observations. | Latent Backdoor Activation | High | High | Low |
| | DemonAgent [50] | Model Weights | Deploys encrypted multi-trigger backdoors activated cumulatively across executions. | Latent Backdoor Activation | High | High | Low |

| Category | Attack Framework | Targeted Collaboration Mechanism | Attack Technique | Attack Consequence | Autonomy | Persistence | Propagation |
|---|---|---|---|---|---|---|---|
| Propagation and Policy Pollution Attacks | Prompt Infection [51] | Unverified message forwarding and output reuse | Injects self-replicating prompt payloads that compel agents to replicate malicious instructions across subsequent interactions. | System-wide prompt infection | High | Low | High |
| | CORBA [52] | Recursive coordination mechanisms in multi-agent task routing | Uses recursive prompts to drive agents into mutually blocking execution states that propagate across reachable nodes. | System-wide availability loss | High | Medium | High |
| | Agents Under Siege [53] | Topology-aware communication routing | Models multi-agent systems as flow networks and optimizes adversarial prompt routing to bypass distributed defenses. | Large-scale malicious prompt dissemination | High | Low | High |
| | Flooding [54] | Shared knowledge acceptance in agent communities | Injects persuasive and fabricated evidence that induces agents to autonomously accept and disseminate false knowledge. | Persistent cognitive contamination | Medium | High | High |
| Communication Hijacking Attacks | AiTM [55] | Inter-agent message integrity | Intercepts inter-agent messages and generates tailored malicious instructions to mislead victim agents. | Arbitrary coordinated malicious actions | Medium | Low | Low |
| | MAST [56] | Multi-turn communication flow | Performs adaptive multi-round message tampering while preserving semantic similarity to evade detection. | Undetectable coordination deviation | Medium | Medium | Low |
| | Control Hijack [57] | Orchestrator control signals | Crafts adversarial content that mimics system messages to trigger unsafe tool invocation. | Arbitrary code execution via unsafe tool invocation | High | Medium | Medium |
| | Secret Collusion [58] | Covert inter-agent communication channels | Encodes prohibited information within benign-looking messages using steganographic techniques. | Undetectable policy-violating collusion | Medium | Medium | Medium |
| Role Exploitation and Logic Abuse Attacks | Evil Geniuses [59] | Role consistency and persona alignment | Exploits role-consistent behavior to trigger cascading alignment failures across collaborating agents. | Network-wide alignment failure | High | Low | High |
| | Fault Propagation [60] | Role configuration dependency in collaboration | Modifies agent profiles or injects faulty messages to induce persistent downstream errors in collaborative workflows. | Persistent collaborative performance degradation | High | Medium | High |
| | Intention Hiding [61] | Collaborative decision logic | Introduces intention-hiding malicious agents that subtly degrade coordination efficiency without overt failures. | Stealthy collaborative degradation | Medium | Medium | Medium |
| | MASLEAK [62] | Tool invocation and error feedback mechanisms | Performs worm-inspired probing to extract system topology, prompts, and tool configurations through interaction traces. | Intellectual property leakage | Medium | Low | Low |

| Defense Layer | Framework | Core Mechanism | Control Granularity | Targeted Risk |
|---|---|---|---|---|
| Input Sanitization | PromptArmor [63] | Augments inputs with frequency-based signatures and uses a Detector LLM to excise injections. | Information Level | Propagation |
| | Polymorphic Prompt [64] | Randomizes delimiters and structure to disrupt attackers’ context boundary prediction. | Information Level | Propagation |
| Architectural Isolation | ISOLATEGPT [65] | Hub-and-Spoke architecture enforcing memory segregation for 3rd-party apps via system prompts. | Information Level | Propagation |
| | AirGapAgent [66] | Logical air-gap with a Contextual Minimizer to strictly filter data flow based on task necessity. | Information Level | Propagation |
| | CaMeLs [67] | Physically isolates Privileged Planner from Quarantined Perception via Dual-LLM architecture to block visual injections. | Information Level | Propagation |
| Runtime Verification | Task Shield [68] | Verifies Task Alignment of every tool call against user instructions. | Decision Level | Autonomy |
| | MELON [69] | Masked Re-execution: Detects attacks by comparing outputs from original vs. masked inputs. | Cognition Level | Autonomy |
| | ShieldAgent [70] | Converts unstructured policies into Probabilistic Rule Circuits for formal safety verification. | Cognition Level | Autonomy |
| | IPIGuard [71] | Enforces legal execution paths on a pre-defined Tool Dependency Graph. | Decision Level | Autonomy |
| | DRIFT [72] | Dynamic rule isolation via Secure Planner and Dynamic Validator to purge conflicting instructions. | Cognition Level | Persistence |
| | ALRPHFS [73] | Hierarchical Reasoning that prioritizes high-confidence risks to balance cost. | Cognition Level | Autonomy |
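The Runtime Verification rows above describe checking each tool call against a plan fixed in advance. As a minimal sketch of that general idea (not the IPIGuard [71] implementation), the snippet below rejects any call sequence that leaves a pre-approved graph of tool transitions. The class name `ToolDependencyGraph`, the `START` sentinel, and the tool names are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class ToolDependencyGraph:
    """Directed graph of permitted tool-call transitions (hypothetical structure)."""
    edges: dict[str, set[str]] = field(default_factory=dict)
    start: str = "START"

    def allow(self, src: str, dst: str) -> None:
        """Register that `dst` may be called directly after `src`."""
        self.edges.setdefault(src, set()).add(dst)

    def is_legal(self, calls: list[str]) -> bool:
        """Return True only if every consecutive transition is a planned edge."""
        current = self.start
        for tool in calls:
            if tool not in self.edges.get(current, set()):
                return False
            current = tool
        return True


# Assumed workflow: the plan is fixed before any untrusted tool output is read.
graph = ToolDependencyGraph()
graph.allow("START", "read_inbox")
graph.allow("read_inbox", "summarize")
graph.allow("summarize", "reply_to_user")

benign = ["read_inbox", "summarize", "reply_to_user"]
hijacked = ["read_inbox", "send_money"]  # call induced by injected content

print(graph.is_legal(benign))    # True
print(graph.is_legal(hijacked))  # False
```

The check is deliberately structural: it does not inspect arguments or tool outputs, so it would need to be combined with argument-level validation in any realistic deployment.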

| Defense Layer | Framework | Core Mechanism | Control Granularity | Targeted Risk |
|---|---|---|---|---|
| Reasoning & Planning | ReAgent [74] | Performs consistency checks between thoughts, actions, and reconstructed instructions to detect logic hijacking. | Cognition Level | Autonomy |
| | R2A2 [18] | Integrates a risk-aware world model via Constrained MDP to simulate hazards before committing to plans. | Cognition Level | Autonomy |
| | AgentRR [75] | Restricts cognition to validated trajectories by recording and replaying successful interaction traces. | Cognition Level | Autonomy |
| | Selective Quitting [76] | Trains agents to autonomously recognize high-risk ambiguity and halt execution as a first-line defense. | Decision Level | Autonomy |
| Memory Integrity | A-MemGuard [77] | Employs consensus-based validation to identify poisoned entries and utilizes dual-memory for self-correction. | Cognition Level | Persistence |
| | AgentSafe [78] | Implements HierarCache to physically segregate sensitive data and applies sanitization filters to quarantine malicious streams. | Information Level | Persistence |
| | Trust-Aware Retrieval [79] | Filters retrieval by assigning composite trust scores to memory entries and applying temporal decay to exclude poisoned data. | Information Level | Persistence |
| Internal Alignment | AdvEvo-MARL [80] | Internalizes safety via adversarial multi-agent reinforcement learning, embedding robustness into policy weights. | Cognition Level | Persistence |
| | IFT [81] | Fine-tunes the model’s internal representations to reduce susceptibility to context manipulation and latent backdoors. | Cognition Level | Persistence |
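The Trust-Aware Retrieval row above combines a composite trust score with temporal decay. The sketch below shows one way such a filter could be written; it is an illustration under stated assumptions, not the method of [79]. The two trust signals, their weights, the exponential half-life form of the decay, and the threshold are all hypothetical choices.

```python
from dataclasses import dataclass


@dataclass
class MemoryEntry:
    text: str
    source_trust: float  # in [0, 1]; assumed prior trust in the entry's origin
    consistency: float   # in [0, 1]; assumed agreement with other entries
    age_days: float


def trust_score(entry: MemoryEntry,
                w_source: float = 0.6,
                w_consistency: float = 0.4,
                half_life_days: float = 30.0) -> float:
    """Weighted composite trust, halved every `half_life_days` (assumed form)."""
    base = w_source * entry.source_trust + w_consistency * entry.consistency
    decay = 0.5 ** (entry.age_days / half_life_days)
    return base * decay


def filter_trusted(entries: list[MemoryEntry],
                   threshold: float = 0.5) -> list[MemoryEntry]:
    """Keep only entries whose decayed trust score reaches the threshold."""
    return [e for e in entries if trust_score(e) >= threshold]


entries = [
    MemoryEntry("verified user preference", 0.9, 0.9, age_days=0),
    MemoryEntry("stale note from the same source", 0.9, 0.9, age_days=60),
    MemoryEntry("unverified web snippet", 0.2, 0.3, age_days=0),
]
kept = filter_trusted(entries)
print([e.text for e in kept])
```

With these assumed parameters only the fresh, well-sourced entry passes the threshold; both the stale entry and the low-trust entry are withheld from retrieval.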

| Defense Layer | Framework | Core Mechanism | Control Granularity | Targeted Risk |
|---|---|---|---|---|
| Topology & Graph Analysis | G-Safeguard [82] | Leverages GNNs to model the utterance graph for dynamic anomaly detection and topological intervention to intercept propagation. | Decision Level | Propagation |
| | GUARDIAN [83] | Utilizes temporal attributed graphs and an information bottleneck mechanism to prune anomalous nodes amplifying hallucinations. | Decision Level | Propagation |
| | BlindGuard [84] | Designs a Hierarchical Graph Encoder to sever communication links via dynamic edge pruning without relying on labeled attack data. | Decision Level | Propagation |
| | INFA-GUARD [85] | Distinguishes root attackers from infected agents and executes remediation via replacement and rehabilitation to halt propagation while preserving topology. | Decision Level | Propagation |
| Behavioral Profiling & Audits | AgentXposed [61] | Integrates the HEXACO personality model with interrogation techniques to expose covert adversaries via psychological profiling. | Cognition Level | Propagation |
| | PCDC [86] | Deploys an Enforcement Agent to identify dark personality traits through psychometric screening and applies topology-aware isolation. | Cognition Level | Propagation |
| | PeerGuard [87] | Establishes a mutual check protocol where agents cross-verify peers’ Chain-of-Thought to isolate poisoned nodes. | Cognition Level | Propagation |
| | Challenger & Inspector [60] | Empowers agents to actively question peer outputs (Challenger) and employs dedicated auditors (Inspector) to correct errors. | Cognition Level | Propagation |
| Consensus & Protection | Randomized Smoothing [88] | Applies statistical randomized smoothing by injecting Gaussian noise to certify consensus decisions against adversarial perturbations. | Decision Level | Propagation |
| | CoTGuard [89] | Embeds task-specific trigger patterns into reasoning chains to detect and trace unauthorized content reproduction. | Information Level | Propagation |
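The Randomized Smoothing row above relies on voting over noisy copies of an input. The snippet below is a toy sketch of that voting step only: it perturbs a scalar input with Gaussian noise and returns the majority decision together with its vote share. The function names, the scalar input, and the threshold rule are hypothetical, and the sketch omits the statistical confidence bound that an actual robustness certificate would require, so it should not be read as the procedure of [88].

```python
import random
from collections import Counter


def smoothed_decision(decide, x, sigma=0.5, n_samples=200, seed=0):
    """Majority vote of `decide` over Gaussian-perturbed copies of `x`.

    Returns the winning label and the fraction of samples that voted for it.
    """
    rng = random.Random(seed)  # fixed seed keeps the sketch reproducible
    votes = Counter(decide(x + rng.gauss(0.0, sigma)) for _ in range(n_samples))
    label, count = votes.most_common(1)[0]
    return label, count / n_samples


def threshold_consensus(score):
    """Hypothetical base decision rule: accept when the aggregate score is non-negative."""
    return "accept" if score >= 0.0 else "reject"


label, share = smoothed_decision(threshold_consensus, x=2.0)
print(label, share)
```

A large vote share indicates that small perturbations of the input rarely flip the decision; inputs close to the decision boundary produce shares near one half and would not be certifiable.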

| Category | Benchmark | Domain | Interaction | Threat Focus | Scale | Key Metrics |
|---|---|---|---|---|---|---|
| Comprehensive Safety & Misuse | AgentHarm [100] | General Agent | Multi-step | Malicious Execution | 110 tasks | DS & Harmfulness Score |
| | OpenAgentSafety [101] | Real Tools | Long-horizon | Safety Constraints | 175 tasks | ASR & Safety Score |
| | ASSEBench [102] | General Agent | Multi-step | Stealthy Risks | 2,293 records | Pass Rate (LLM Judge) |
| | ALI-Agent [103] | General Agent | Dual-stage | Long-tail Risks | >25k cases | Misalignment Rate |
| Tool-Centric Interaction | ToolEmu [104] | Sandbox Sim | Simulation | Underspecification | 140 scenarios | Risk Rate (LM Evaluator) |
| | AgentDojo [105] | Office OS | Dynamic | Indirect Injection | 655 cases | ASR vs. Utility |
| | ToolFuzz [106] | Tool Definition | Fuzzing | Doc Errors/Runtime Bugs | 40+ Tools | Error/Crash Rate |
| | ASB [107] | General System | Multi-turn | Mixed Threats | 87 tools | ASR (Goal Success Rate) |
| | AMA [37] | Tool Selection | Optimization | Metadata Manipulation | 10 Scenarios | ASR |
| Adversarial Robustness & Injection | WASP [108] | Web Env | End-to-End | Web Attacks | 625 tasks | End-to-End ASR |
| | ARE [109] | Multi-Web | Comp. Graph | Adv. Propagation | VWA ext. | Robustness Score |
| | ShieldAgent [70] | Defense Eval | Trajectory | Defense Effectiveness | 3,000 pairs | Compliance Rate (ASPM) |
| Domain-Specific Risks | RedCode [110] | Code Gen | Docker | Malware Exec/Gen | 110 scenarios | Refusal Rate |
| | CodeBreaker [111] | Code Agent | Multi-step | Logic Manipulation | 2,200 prompts | Jailbreak ASR |
| | CVE-Bench [112] | Cybersecurity | Sandbox | CVE Exploitation | 40 CVEs | ESR (Exploit Success Rate) |
| | SafeArena [113] | Web Env | Real Web | Misuse Compliance | 500 tasks | Safety Rate |
| | AgentDAM [114] | Data Privacy | Autonomous | Data Minimization | 1,446 cases | PLR (Privacy Leakage Rate) |
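Several benchmarks above report an attack success rate (ASR) alongside a utility measure. As a generic illustration, the sketch below computes both from per-episode outcomes. Exact definitions differ between benchmarks, so the record fields and the two formulas here are assumptions rather than the scoring rules of any listed benchmark.

```python
from dataclasses import dataclass


@dataclass
class EpisodeResult:
    attacked: bool          # an attack was present in this episode
    attack_succeeded: bool  # the attacker's goal was achieved
    task_completed: bool    # the user's original task was completed


def attack_success_rate(results: list[EpisodeResult]) -> float:
    """Fraction of attacked episodes in which the attacker's goal was achieved."""
    attacked = [r for r in results if r.attacked]
    if not attacked:
        return 0.0
    return sum(r.attack_succeeded for r in attacked) / len(attacked)


def utility(results: list[EpisodeResult]) -> float:
    """Fraction of all episodes in which the user's task was completed."""
    if not results:
        return 0.0
    return sum(r.task_completed for r in results) / len(results)


results = [
    EpisodeResult(attacked=True, attack_succeeded=True, task_completed=False),
    EpisodeResult(attacked=True, attack_succeeded=False, task_completed=True),
    EpisodeResult(attacked=False, attack_succeeded=False, task_completed=True),
    EpisodeResult(attacked=True, attack_succeeded=False, task_completed=True),
]
print(attack_success_rate(results), utility(results))
```

Reporting the two numbers together matters because a defense that blocks every tool call would drive ASR to zero while also destroying utility.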

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.