2. Materials and Methods

2.1. Overview of the Proposed Pipeline

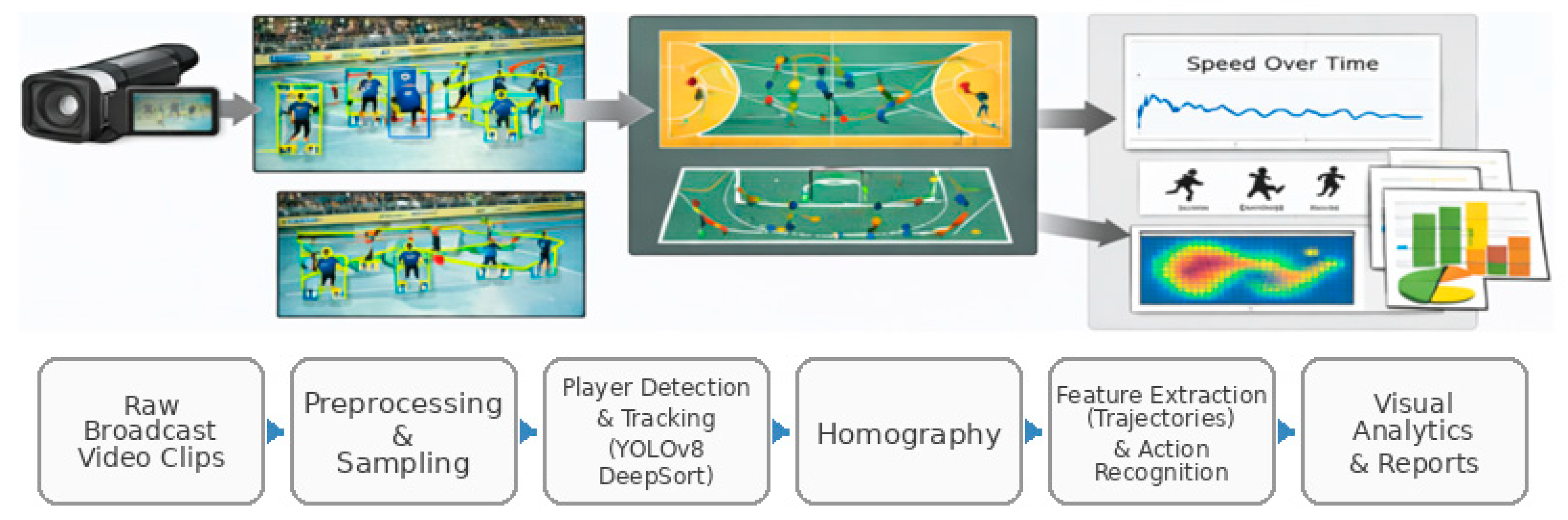

The proposed workflow processes each video clip through a modular four-stage pipeline that produces explicit intermediate outputs at every step. Given a monocular broadcast clip, the goal is to transform pixel-level observations into court-referenced trajectories in metric units and to derive quantitative and visual analytics, along with clip-level action recognition.

In Stage 1, a deep detector is applied independently to each frame to localize players and output bounding boxes with confidence scores. In Stage 2, a tracking-by-detection method associates detections over time to form trajectories with persistent identities, enabling temporal continuity under short occlusions. In Stage 3, player image positions are mapped to a standardized court coordinate system using homography-based camera calibration under the planar court assumption, producing trajectories in meters.

In Stage 4, the court-referenced trajectories are used to compute motion indicators such as distance covered and speed, generate coaching-oriented visual summaries such as trajectory overlays and heatmaps, and extract interpretable clip-level features for action classification, which are evaluated with multiple lightweight classifiers under a common protocol.

A key design choice is modularity: each stage can be replaced independently while maintaining the same intermediate representations (detections, tracks, court-projected trajectories, and feature vectors). This structure supports reproducibility and systematic troubleshooting, since errors can be localized by inspecting the outputs of each stage before they propagate to subsequent components.

Figure 2.

Overview of the proposed monocular handball analytics pipeline.

2.2. Player Detection and Tracking

Players are detected independently in each frame using a fine-tuned YOLOv8 model [3]. Given a frame $I_t$, the detector returns a set of $N_t$ detections $\mathcal{D}_t = \{(b_{t,i}, s_{t,i})\}_{i=1}^{N_t}$, where each detection includes a bounding box $b_{t,i}$ in pixel coordinates and a confidence score $s_{t,i} \in [0, 1]$. The model is trained as a single-class detector (player) to maximize detection stability for subsequent tracking. At the level of internal feature extraction, the detector is treated as a black box, but its interface is explicit: for each frame it outputs a finite set of bounding boxes and confidence scores, which forms the observable input to the tracking stage.

To provide comparable inputs for the tracking stage, a fixed confidence threshold is selected on the validation set and then kept constant across all experiments. After thresholding, non-maximum suppression (NMS) is applied to remove duplicate detections. The overlap between two boxes A and B is quantified by the Intersection over Union, $\mathrm{IoU}(A, B) = |A \cap B| / |A \cup B|$. When $\mathrm{IoU}(A, B)$ exceeds a predefined NMS threshold, the lower-confidence detection is suppressed. Finally, detections are filtered with basic sanity checks to discard degenerate boxes, such as boxes with invalid dimensions or boxes outside the image boundaries. The final output of Stage 1 is the per-frame detection set $\mathcal{D}_t$, which is passed unchanged to the tracker.
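
The Stage 1 post-processing described above can be illustrated with a minimal sketch. It is not the authors' implementation: the function names, the corner-format boxes `(x1, y1, x2, y2)`, the default thresholds of 0.5, and the interpretation of "outside image boundaries" as "entirely outside the frame" are all assumptions made for illustration.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels (assumed format)
Detection = Tuple[Box, float]            # (box, confidence score)


def iou(a: Box, b: Box) -> float:
    """Intersection over Union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = max(0.0, a[2] - a[0]) * max(0.0, a[3] - a[1])
    area_b = max(0.0, b[2] - b[0]) * max(0.0, b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def filter_detections(dets: List[Detection], conf_thr: float = 0.5,
                      nms_thr: float = 0.5, img_w: int = 960,
                      img_h: int = 540) -> List[Detection]:
    """Threshold, greedy NMS, then sanity checks (thresholds are hypothetical)."""
    # 1) Fixed confidence threshold, highest score first.
    cands = sorted((d for d in dets if d[1] >= conf_thr),
                   key=lambda d: d[1], reverse=True)
    # 2) Greedy NMS: keep a box only if it does not overlap a kept box too much.
    kept: List[Detection] = []
    for box, score in cands:
        if all(iou(box, k[0]) <= nms_thr for k in kept):
            kept.append((box, score))

    # 3) Sanity checks: positive width/height and at least partly inside the image.
    def is_valid(box: Box) -> bool:
        x1, y1, x2, y2 = box
        return x2 > x1 and y2 > y1 and x2 > 0 and y2 > 0 and x1 < img_w and y1 < img_h

    return [d for d in kept if is_valid(d[0])]
```

In this sketch the kept detections are returned in descending order of confidence, which is a convenience rather than a requirement of the tracker.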

Figure 3.

Example of player detections in a broadcast frame (YOLOv8 outputs).

Frame-level detections are linked into trajectories using DeepSORT [2] under a tracking-by-detection paradigm. DeepSORT was selected because broadcast handball includes frequent short occlusions and close player interactions. In such conditions, appearance-based association reduces identity switches compared to purely motion-based trackers. This choice matters because identity instability and track fragmentation propagate to the downstream trajectory features and can degrade the reliability of clip-level action recognition.

The input to the tracker at time t is the per-frame detection set $\mathcal{D}_t$. The output is a set of tracks with persistent identities. For each track, the tracker provides a state estimate per frame, which includes the predicted location and bounding box in image coordinates. For each frame, the tracker maintains a set of active track identities $\mathcal{K}_t$. Each track state is propagated with a Kalman filter motion model, which predicts the next state and defines a motion-based gating region for plausible matches.

Data association combines spatial consistency with appearance similarity to reduce identity switches in crowded scenes and under short occlusions. Matching between predicted tracks and current detections is obtained by solving an assignment problem, typically with the Hungarian algorithm. This produces matched track–detection pairs, along with unmatched detections and unmatched tracks. Unmatched detections may initialize new tracks. Unmatched tracks may remain active for a limited number of frames, controlled by a maximum age parameter.
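
The association step can be sketched as follows, assuming a precomputed cost matrix that already combines motion and appearance terms. To stay dependency-free, the sketch finds the optimal assignment by exhaustive search, which is only practical for a handful of tracks; a Hungarian solver such as SciPy's `linear_sum_assignment` reaches the same minimum total cost far more efficiently. The function name `associate`, the gating threshold `max_cost`, and the constant `BIG` are hypothetical.

```python
from itertools import permutations
from typing import List, Sequence, Tuple

BIG = 1e6  # cost given to gated-out pairs and to padded (dummy) entries


def associate(cost: Sequence[Sequence[float]], n_det: int,
              max_cost: float) -> Tuple[List[Tuple[int, int]], List[int], List[int]]:
    """cost[i][j] is the cost of matching track i to detection j.

    Returns (matches, unmatched_tracks, unmatched_detections).
    """
    n_trk = len(cost)
    n = max(n_trk, n_det)
    # Pad to a square matrix; pairs outside the gate keep the BIG cost.
    sq = [[BIG] * n for _ in range(n)]
    for i in range(n_trk):
        for j in range(n_det):
            if cost[i][j] <= max_cost:
                sq[i][j] = cost[i][j]
    # Exhaustive search over one-to-one assignments (stand-in for the Hungarian algorithm).
    best = min(permutations(range(n)),
               key=lambda p: sum(sq[i][p[i]] for i in range(n)))
    # Discard assignments that involve a dummy entry or a gated-out pair.
    matches = [(i, best[i]) for i in range(n_trk)
               if best[i] < n_det and sq[i][best[i]] < BIG]
    matched_trk = {i for i, _ in matches}
    matched_det = {j for _, j in matches}
    unmatched_trk = [i for i in range(n_trk) if i not in matched_trk]
    unmatched_det = [j for j in range(n_det) if j not in matched_det]
    return matches, unmatched_trk, unmatched_det
```

Unmatched detections returned by such a routine would be candidates for new tracks, while unmatched tracks would age until the maximum age is reached.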

Tracking is performed independently for each clip because persistent identities across clips are not required for the downstream analytics. Conservative association settings are used to reduce false track initiations during dense interactions, prioritizing identity stability when players are in close proximity. The explicit track representation supports systematic troubleshooting because errors can be traced to missed detections, track fragmentation, or identity switches before court projection and motion analytics are applied.

2.3. Court Projection via Homography

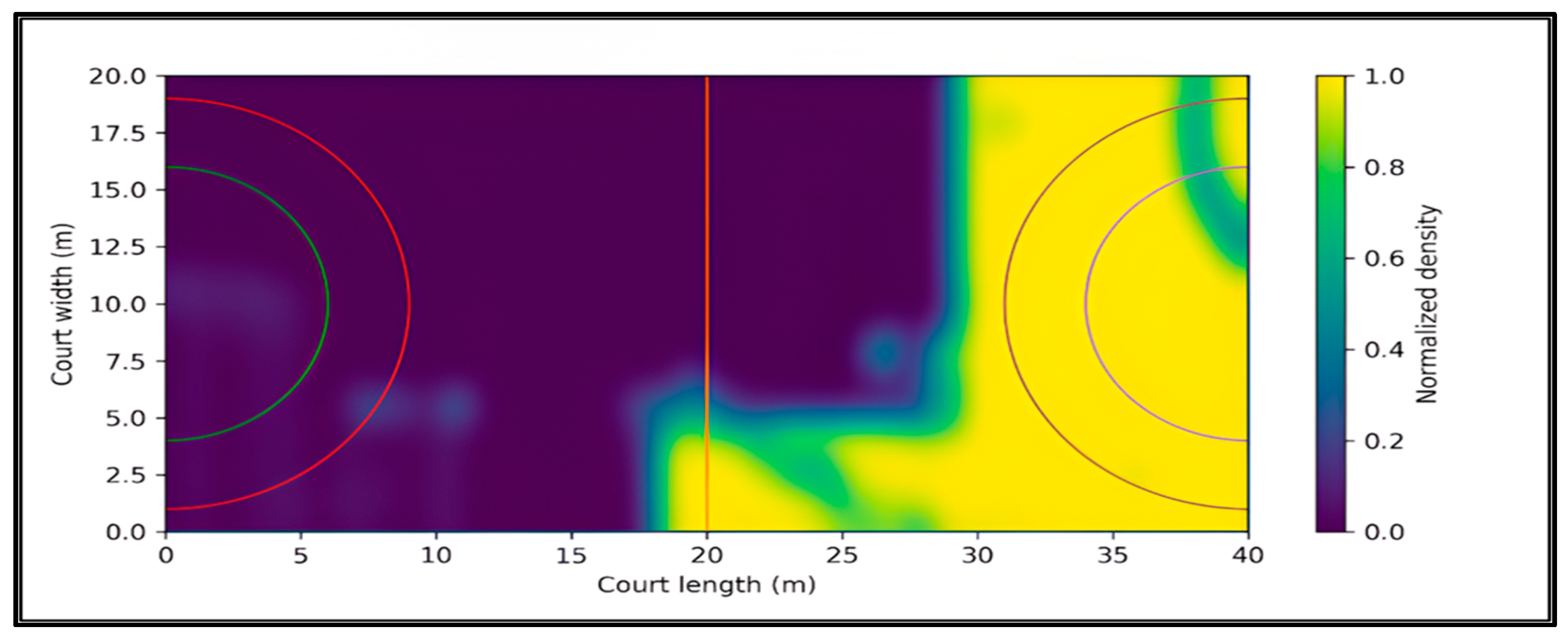

To express movement in metric units, image coordinates are mapped onto a standardized 40×20 m handball court using a planar homography, under the assumption that the playing surface is approximately planar. A top-down court reference is used to reduce perspective effects and to make distances and speeds comparable across clips, regardless of camera zoom and viewpoint. This representation also supports coaching-oriented visualizations, such as trajectory overlays and heatmaps on the court template.

Let $\mathbf{p} = (u, v, 1)^\top$ denote a point in image homogeneous coordinates (pixels) and let $\mathbf{P} = (X, Y, 1)^\top$ denote the corresponding point on the court template. The homography matrix $H$ satisfies the projective mapping $\lambda \mathbf{P} = H \mathbf{p}$, where $\lambda$ is a non-zero scale factor. The input to the homography estimation consists of image-to-court point correspondences derived from visible court landmarks, such as line intersections and characteristic marking points. The output is the 3×3 matrix $H$, which maps pixel coordinates to metric court coordinates and enables computation of kinematic quantities in meters and seconds.

For each tracked player, a single representative point is extracted from the bounding box and projected to the court plane. The bottom-center point is used as an approximation of the ground contact point. This choice reduces systematic perspective error compared to using the box center, which lies above the court plane. Court-referenced coordinates are obtained by applying the homography and converting from homogeneous to Euclidean coordinates, yielding positions in meters for each tracked identity and frame.
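
The projection of a bounding box to the court plane can be sketched as below. The homography is assumed to be already estimated (for example with a routine such as OpenCV's `cv2.findHomography`) and is passed as a 3×3 nested list; the function name and the corner-format box are assumptions for illustration.

```python
from typing import Sequence, Tuple


def project_bottom_center(H: Sequence[Sequence[float]],
                          box: Tuple[float, float, float, float]) -> Tuple[float, float]:
    """Map the bottom-center of a box (x1, y1, x2, y2) to court coordinates in meters."""
    x1, y1, x2, y2 = box
    # Bottom-center pixel; image y grows downward, so y2 is the bottom edge.
    u, v = 0.5 * (x1 + x2), y2
    # Apply the homography in homogeneous coordinates.
    xh = H[0][0] * u + H[0][1] * v + H[0][2]
    yh = H[1][0] * u + H[1][1] * v + H[1][2]
    w = H[2][0] * u + H[2][1] * v + H[2][2]
    if abs(w) < 1e-12:
        raise ValueError("point maps to infinity under this homography")
    # Convert from homogeneous to Euclidean coordinates.
    return xh / w, yh / w
```

Dividing by the third homogeneous component is what removes the scale factor of the projective mapping.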

Calibration quality is verified through complementary checks. First, a visual overlay is performed by projecting the court template lines back onto the image and inspecting their alignment with visible markings. Second, plausibility checks are applied to the resulting trajectories and motion signals, including consistency with court bounds and speed values that remain within realistic ranges. When camera zoom or viewpoint changes significantly, a new homography must be estimated because a single planar homography cannot represent multiple camera geometries.

Figure 4.

Example of homography-based court projection to standardized coordinates.

2.4. Trajectory Processing and Motion Indicators

Court-referenced trajectories are post-processed to reduce high-frequency jitter introduced by detection and tracking noise, and to stabilize derivative quantities such as speed and acceleration. Let $\mathbf{P}_k(t) = (X_k(t), Y_k(t))$ denote the projected court position in meters of track k at frame t. A short temporal smoothing filter is applied to the sequence $\{\mathbf{P}_k(t)\}$ to suppress frame-to-frame fluctuations while preserving the overall motion pattern. This step is important because raw coordinate jitter can produce unrealistically large spikes in numerical derivatives. In practice, smoothing can be implemented using a short moving average or a low-pass filter applied independently to X and Y.
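
One way to realize the moving-average option is sketched below. The window length of 5 frames and the shrinking window at the sequence ends are assumptions; the text does not specify the filter parameters.

```python
from typing import List, Sequence, Tuple


def moving_average(values: Sequence[float], window: int = 5) -> List[float]:
    """Centered moving average; the window shrinks near the ends of the sequence."""
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd integer")
    half = window // 2
    out = []
    for i in range(len(values)):
        lo, hi = max(0, i - half), min(len(values), i + half + 1)
        out.append(sum(values[lo:hi]) / (hi - lo))
    return out


def smooth_track(track: Sequence[Tuple[float, float]],
                 window: int = 5) -> List[Tuple[float, float]]:
    """Smooth X and Y independently, as described for court-referenced trajectories."""
    xs = moving_average([p[0] for p in track], window)
    ys = moving_average([p[1] for p in track], window)
    return list(zip(xs, ys))
```

A centered window avoids the lag of a causal filter, which is acceptable here because clips are processed offline.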

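Motion indicators are then computed from the optionally smoothed trajectories. The distance covered by track k over the interval $[t_0, t_1]$ is defined as the cumulative Euclidean displacement between consecutive court positions $\mathbf{P}_k(t)$, that is, $D_k = \sum_{t=t_0+1}^{t_1} \lVert \mathbf{P}_k(t) - \mathbf{P}_k(t-1) \rVert_2$. Instantaneous speed is computed as displacement over time, $v_k(t) = \lVert \mathbf{P}_k(t) - \mathbf{P}_k(t-1) \rVert_2 / \Delta t$, where $\Delta t$ is the time between consecutive processed frames ($\Delta t = 1/\mathrm{fps}$ when every frame is used). Speed is expressed in meters per second. Clip-level speed statistics, such as mean, median, maximum, and percentiles, are computed per track and can also be aggregated across tracks to summarize the movement intensity within a clip.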

To ensure physically plausible outputs, basic sanity checks are applied. Trajectory points are constrained to remain within court bounds, and unrealistic speed spikes are flagged as outliers and excluded from summary statistics when they exceed a plausible threshold. This filtering reduces the impact of occasional tracking fragmentation or identity switches on downstream motion analytics.
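
The distance and speed computation, together with the outlier check on speed, can be sketched as follows. The plausibility threshold of 12 m/s is a hypothetical value chosen for illustration, and the sketch keeps flagged steps in the distance total while excluding them from the speed statistics, which is one possible reading of the text.

```python
from math import hypot
from statistics import mean
from typing import Dict, Sequence, Tuple


def motion_indicators(track: Sequence[Tuple[float, float]], fps: float = 25.0,
                      step: int = 1, max_speed: float = 12.0) -> Dict[str, float]:
    """track: court positions (X, Y) in meters at consecutive processed frames."""
    dt = step / fps  # seconds between processed frames
    distance = 0.0
    speeds = []
    n_outliers = 0
    for (x0, y0), (x1, y1) in zip(track, track[1:]):
        d = hypot(x1 - x0, y1 - y0)  # Euclidean displacement in meters
        distance += d
        v = d / dt                   # instantaneous speed in m/s
        if v > max_speed:
            n_outliers += 1          # flagged, excluded from speed statistics
        else:
            speeds.append(v)
    return {
        "distance_m": distance,
        "mean_speed": mean(speeds) if speeds else 0.0,
        "max_speed": max(speeds) if speeds else 0.0,
        "n_outliers": n_outliers,
    }
```

For example, a 10 m jump between two frames at 25 fps corresponds to 250 m/s and would be flagged, which is the kind of spike an identity switch typically produces.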

2.5. Visual Analytics Outputs

To support coaching-oriented interpretation, the pipeline produces court-referenced trajectory plots and heatmaps from projected trajectories in meters. Trajectory plots visualize the motion paths of tracked identities on a standardized 40×20 m court template. Each track is rendered as a polyline by connecting consecutive positions over time. When smoothing is enabled, the plotted paths reflect the stabilized trajectories and allow qualitative inspection of movement patterns such as runs, cuts, and positional changes.

Heatmaps provide a compact summary of spatial occupancy by aggregating projected positions over time and across tracked players. The court is discretized into a two-dimensional grid of cells, denoted as cell (m, n). For each cell, an unnormalized occupancy count is computed as $H(m, n) = \sum_{k} \sum_{t} \mathbf{1}\{\mathbf{P}_k(t) \in \mathrm{cell}(m, n)\}$, where $\mathbf{P}_k(t)$ is the projected position of track k at frame t and $\mathbf{1}\{\cdot\}$ is the indicator function (here $H$ denotes the occupancy grid, not the homography matrix of Section 2.3). To reduce discretization artifacts and improve interpretability, $H$ can be spatially smoothed and then normalized. A simple normalization divides by $\sum_{m,n} H(m, n)$, producing a relative occupancy distribution. The resulting heatmaps highlight frequently visited court regions and support qualitative comparisons between clips and action categories. Since heatmaps are derived from tracked and projected positions, their quality depends on tracking stability and calibration accuracy, especially under heavy occlusions and rapid camera motion.
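
The occupancy count and its normalization can be sketched as below. The 1 m cell size, the choice of m along the 40 m side and n along the 20 m side, and the decision to ignore points outside the court are assumptions; spatial smoothing is omitted for brevity.

```python
from typing import Iterable, List, Tuple


def occupancy_heatmap(points: Iterable[Tuple[float, float]], court_len: float = 40.0,
                      court_wid: float = 20.0,
                      cell: float = 1.0) -> Tuple[List[List[int]], List[List[float]]]:
    """Return (counts, relative) grids indexed as grid[m][n]."""
    n_m = int(round(court_len / cell))  # cells along the court length
    n_n = int(round(court_wid / cell))  # cells along the court width
    counts = [[0] * n_n for _ in range(n_m)]
    for x, y in points:
        if 0.0 <= x <= court_len and 0.0 <= y <= court_wid:
            # Points exactly on the far boundary fall into the last cell.
            m = min(int(x / cell), n_m - 1)
            n = min(int(y / cell), n_n - 1)
            counts[m][n] += 1
    total = sum(sum(row) for row in counts)
    # Relative occupancy: each cell divided by the total count (zeros if empty).
    relative = [[(c / total if total else 0.0) for c in row] for row in counts]
    return counts, relative
```

The `points` argument is expected to pool the projected positions of all tracks and frames in the clip.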

2.6. Action Recognition Using Trajectory-Derived Features

Each clip is represented by a fixed-dimensional vector x that summarizes player motion on the court and the reliability of the underlying detections. The representation is computed from court-referenced trajectories and frame-level detection statistics. The resulting features remain physically interpretable and support diagnostic analysis when recognition errors occur. The feature set targets three aspects: motion intensity, motion dynamics, and scene reliability. The first two describe the kinematics of play, while scene reliability describes how stable and consistent the detections are. This combination is useful in broadcast clips where viewpoint changes, occlusions, and variable scene density can mask action-specific motion patterns.

The projected court position of track k at frame t is denoted by $\mathbf{P}_k(t) = (X_k(t), Y_k(t))$, in meters. The temporal sampling step is $\Delta t$. For detections, $N_t$ denotes the number of detections in frame t, and the total number of detections across the clip is $N = \sum_t N_t$. Each bounding box provides width $w_i$, height $h_i$, and confidence $s_i$. From these, the box area $a_i = w_i h_i$ and the aspect ratio $r_i = w_i / h_i$ are derived to capture apparent scale and shape consistency.

Trajectory-derived features are computed from consecutive projected positions and encode motion intensity and motion dynamics. The frame-to-frame displacement vector is $\Delta \mathbf{P}_k(t) = \mathbf{P}_k(t) - \mathbf{P}_k(t-1) = (\Delta X_k(t), \Delta Y_k(t))$, which describes how the player position changes between adjacent frames in the court plane. The displacement magnitude is $d_k(t) = \lVert \Delta \mathbf{P}_k(t) \rVert_2$, which gives the traveled distance per frame in meters. Instantaneous speed is then $v_k(t) = d_k(t) / \Delta t$, transforming a geometric displacement into a physically meaningful rate of motion (m/s). To characterize direction, the motion angle is computed as $\theta_k(t) = \operatorname{atan2}(\Delta Y_k(t), \Delta X_k(t))$, which yields a stable representation of direction on the court. Turning behavior is captured by the direction change $\Delta \theta_k(t) = \theta_k(t) - \theta_k(t-1)$. Large absolute values of $\Delta \theta_k(t)$ correspond to sharper turns, while values near zero indicate approximately straight movement.
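
The per-frame kinematic quantities can be sketched as follows. Wrapping the direction change to the interval from -π to π is an assumption added here so that a heading change from 170° to -170° counts as a 20° turn rather than a 340° one; the text does not state how angle wrap-around is handled.

```python
from math import atan2, cos, hypot, sin
from typing import List, Sequence, Tuple


def wrap_angle(a: float) -> float:
    """Wrap an angle in radians to the principal range returned by atan2."""
    return atan2(sin(a), cos(a))


def frame_kinematics(track: Sequence[Tuple[float, float]],
                     dt: float) -> Tuple[List[float], List[float], List[float], List[float]]:
    """Return per-step displacement (m), speed (m/s), heading (rad), and turning (rad)."""
    d, v, theta = [], [], []
    for (x0, y0), (x1, y1) in zip(track, track[1:]):
        dx, dy = x1 - x0, y1 - y0
        step = hypot(dx, dy)
        d.append(step)
        v.append(step / dt)
        # atan2(0, 0) returns 0.0, so stationary steps get a heading of zero.
        theta.append(atan2(dy, dx))
    # Direction change between consecutive headings, wrapped (assumption).
    dtheta = [wrap_angle(b - a) for a, b in zip(theta, theta[1:])]
    return d, v, theta, dtheta
```

A track of T positions yields T-1 displacements and speeds and T-2 direction changes, which matters when pooling statistics over short tracks.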

Because action labels are clip-level, per-frame quantities are aggregated to obtain a fixed-dimensional descriptor. Aggregation is performed in two stages to handle variability in the number of visible players. First, track-level summaries are computed over the temporal extent of each track. The distance covered by track k is $D_k = \sum_t d_k(t)$, the sum of its per-frame displacement magnitudes $d_k(t)$, which measures how much the tracked identity moved during its presence in the clip.

Speed behavior is summarized per track using statistics of the instantaneous speed $v_k(t)$, including mean speed, speed variability, maximum speed, and a high percentile such as the 90th percentile. These descriptors emphasize high-activity segments while remaining robust to occasional spikes. Directional dynamics are summarized similarly by computing statistics of the absolute direction change $|\Delta \theta_k(t)|$: the mean turning intensity quantifies how frequently and strongly a player changes direction, while its standard deviation captures variability in turning patterns.

Second, track-level values are pooled across tracks to form clip-level features. The mean and maximum distance across tracks, $\bar{D}$ and $D_{\max}$, summarize typical and extreme movement within the clip. In parallel, global kinematic statistics are computed directly over all valid (t, k) pairs to capture clip-wide activity independent of track segmentation. These include the global mean speed $\mu_v$, the global variability $\sigma_v$, and the global maximum $v_{\max}$. High-intensity bursts are summarized by a high global speed percentile (such as the 90th, $v_{p90}$). Turning behavior at clip level is characterized by the mean and variability of the absolute direction change, $\mu_{|\Delta\theta|}$ and $\sigma_{|\Delta\theta|}$. This two-level aggregation strategy produces a consistent feature vector even when some players are missing, tracks are fragmented, or the number of visible identities differs across clips.
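
The two-level aggregation can be illustrated for distance and speed as below; turning statistics would be pooled in the same way. The feature names, the use of the population standard deviation for "variability", and the linear-interpolation percentile are assumptions, not details given in the text.

```python
from statistics import mean, pstdev
from typing import Dict, List, Sequence


def percentile(values: Sequence[float], q: float) -> float:
    """Percentile with linear interpolation between order statistics (q in 0..100)."""
    s = sorted(values)
    if not s:
        raise ValueError("percentile of an empty sequence")
    pos = (len(s) - 1) * q / 100.0
    lo = int(pos)
    hi = min(lo + 1, len(s) - 1)
    return s[lo] + (s[hi] - s[lo]) * (pos - lo)


def clip_motion_features(track_speeds: Dict[int, List[float]],
                         track_distances: Dict[int, float]) -> Dict[str, float]:
    """Pool track-level distances and global (t, k) speeds into clip-level features."""
    dists = list(track_distances.values())
    # Global pooling over all valid (t, k) pairs, independent of track boundaries.
    all_v = [v for speeds in track_speeds.values() for v in speeds]
    return {
        "dist_mean": mean(dists),
        "dist_max": max(dists),
        "v_mean": mean(all_v),
        "v_std": pstdev(all_v),
        "v_max": max(all_v),
        "v_p90": percentile(all_v, 90),
    }
```

Because the output keys are fixed, the descriptor has the same dimensionality whether a clip contains two tracks or twenty.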

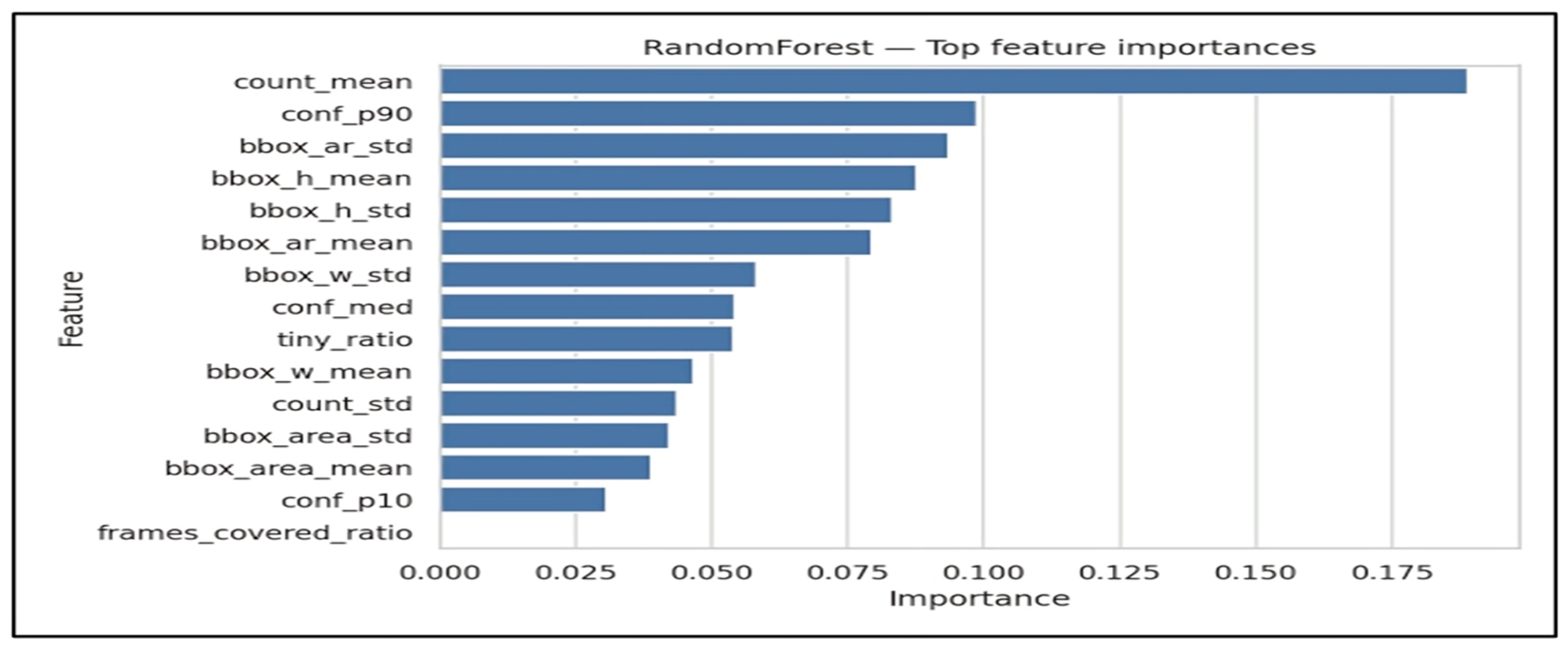

Detection-derived features complement the motion descriptors by quantifying scene conditions and detection stability. Detection density is captured by the mean number of detections per frame, $\mu_N$, and by its variability, $\sigma_N$, which often increase under occlusions and crowded scenes. Geometric statistics of bounding boxes serve as proxies for camera zoom and scale changes. Mean and variability of box height and width, $(\mu_h, \sigma_h)$ and $(\mu_w, \sigma_w)$, describe how large players appear in the image. Mean and variability of area, $(\mu_a, \sigma_a)$, provide an additional scale descriptor that is sensitive to both width and height. Shape consistency is captured by aspect-ratio statistics, $(\mu_r, \sigma_r)$, which can reflect pose changes and partial occlusions. Finally, detection reliability is summarized by robust confidence descriptors: the median confidence reflects typical detector certainty, while an upper confidence percentile captures the upper tail of confident detections. Together, these features help disentangle genuine motion patterns from errors caused by unstable detections and provide additional context for interpreting classifier behavior.
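
A compact sketch of the detection-derived descriptors is given below, assuming each frame is represented as a list of `(width, height, confidence)` tuples. The dictionary keys, the width-over-height aspect ratio, and the population standard deviation are illustrative choices; the upper confidence percentile is left out because the text does not state which percentile is used.

```python
from statistics import mean, median, pstdev
from typing import Dict, List, Sequence, Tuple


def detection_features(frames: Sequence[List[Tuple[float, float, float]]]) -> Dict[str, float]:
    """frames[t] lists (w, h, score) for every detection in frame t."""
    counts = [len(f) for f in frames]            # detections per frame
    boxes = [d for f in frames for d in f]       # all detections in the clip
    if not boxes:
        raise ValueError("clip contains no detections")
    widths = [w for w, _, _ in boxes]
    heights = [h for _, h, _ in boxes]
    areas = [w * h for w, h, _ in boxes]
    ratios = [w / h for w, h, _ in boxes]        # assumed definition: width / height
    scores = [s for _, _, s in boxes]
    return {
        "n_mean": mean(counts), "n_std": pstdev(counts),
        "w_mean": mean(widths), "w_std": pstdev(widths),
        "h_mean": mean(heights), "h_std": pstdev(heights),
        "area_mean": mean(areas), "area_std": pstdev(areas),
        "ratio_mean": mean(ratios), "ratio_std": pstdev(ratios),
        "conf_median": median(scores),
    }
```

Frames without detections still contribute a zero to the per-frame count, so missed detections lower the density statistics instead of being silently skipped.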

To facilitate reproducibility, Table 1 summarizes the detection-derived features used in our clip-level representation. The features quantify detection density, bounding-box geometry, and confidence statistics aggregated over time. Since they are computed independently of the classifier, the same feature set is used across all evaluated models. Feature-importance analysis is presented in the Results section for the model that achieves the best overall performance.

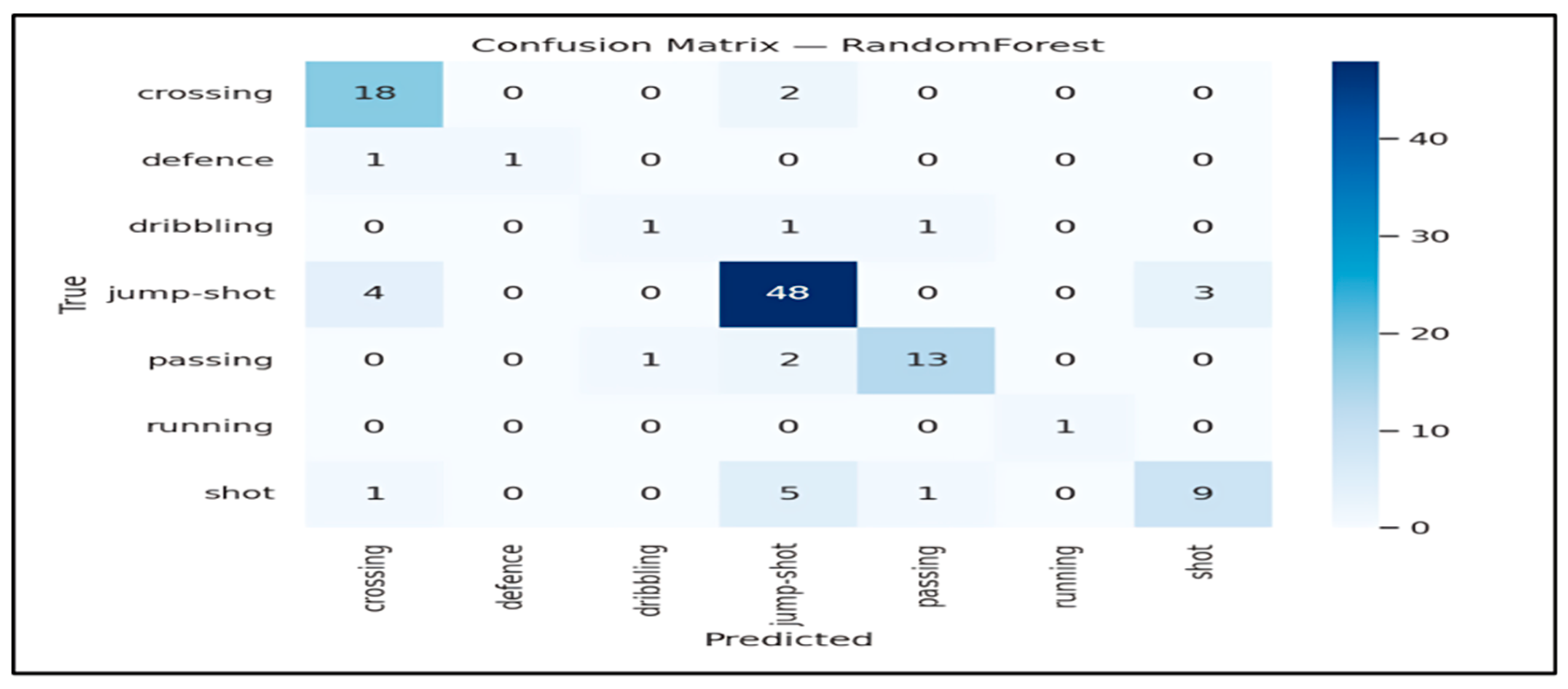

The final feature vector x is the input to every classifier for clip-level action classification, and the output is a predicted action label among the predefined classes. When available, models also output class scores or posterior probabilities $p(y \mid x)$. The evaluated classifiers include Random Forest, Extra Trees, Logistic Regression, XGBoost, Gradient Boosting, and Gaussian Naive Bayes. Each model is trained with hyperparameter tuning on the training data using cross-validation. Class imbalance is handled using class weighting or balanced sample weights where supported. This feature-based formulation maintains interpretability throughout the pipeline and supports traceable error analysis by linking misclassifications to measurable properties of trajectories and detections.

All classifiers are trained and tuned under a single shared protocol. The training split is used for model fitting and cross-validation-based hyperparameter selection. The validation split is used only when an explicit validation-based selection step is required, such as selecting the number of boosting rounds for boosted trees. Model performance is reported and compared in the Results section using accuracy and macro-averaged metrics to account for class imbalance.

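Model evaluation uses accuracy and macro-averaged precision, recall, and F1 score to account for class imbalance. The chosen metrics are defined as follows. For each class c, precision is defined as $P_c = \mathrm{TP}_c / (\mathrm{TP}_c + \mathrm{FP}_c)$, and recall is $R_c = \mathrm{TP}_c / (\mathrm{TP}_c + \mathrm{FN}_c)$. The class-wise F1 score is $\mathrm{F1}_c = 2 P_c R_c / (P_c + R_c)$, and macro-averaged F1 is computed as $\mathrm{F1}_{\mathrm{macro}} = \frac{1}{C} \sum_{c=1}^{C} \mathrm{F1}_c$, ensuring equal weighting across the C action categories regardless of frequency.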

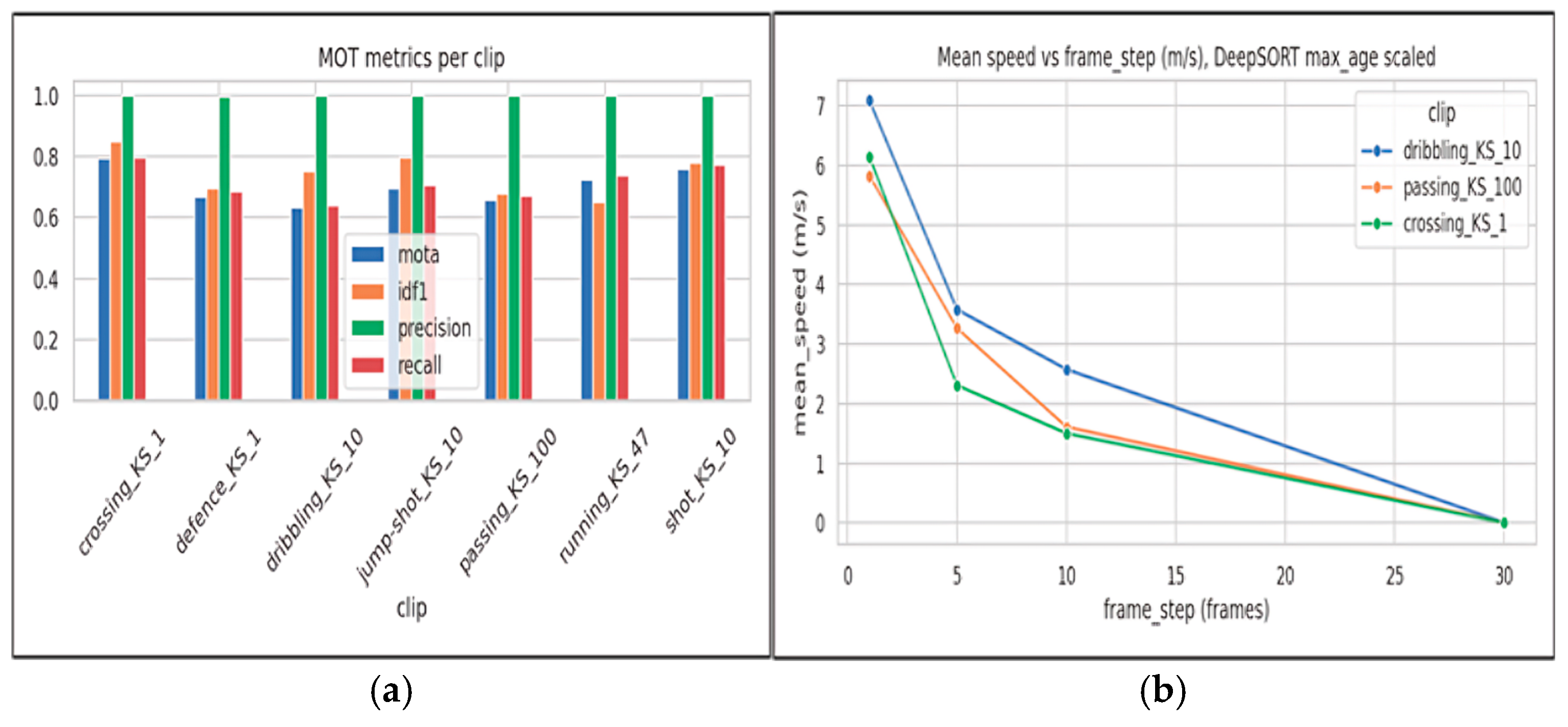

2.7. Evaluation Metrics

Performance is evaluated at three levels: detection, tracking, and action recognition.

For detection, we report precision, recall, and mean Average Precision (mAP). Precision and recall are defined using true positives (TP), false positives (FP), and false negatives (FN) as $\mathrm{Precision} = \mathrm{TP} / (\mathrm{TP} + \mathrm{FP})$ and $\mathrm{Recall} = \mathrm{TP} / (\mathrm{TP} + \mathrm{FN})$. A predicted detection is considered correct if its Intersection over Union (IoU) with the corresponding ground-truth bounding box exceeds a predefined threshold. For a predicted box A and a ground-truth box B, IoU is defined as $\mathrm{IoU}(A, B) = |A \cap B| / |A \cup B|$. Average Precision (AP) is computed as the area under the precision–recall curve, and mean Average Precision is obtained by averaging AP across classes; with the single player class used here, mAP coincides with AP. These metrics capture detection accuracy and sensitivity to missed players, particularly under occlusions and motion blur.

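Tracking performance is evaluated using the CLEAR MOT metric MOTA together with the identity-based IDF1 score, which reflect overall tracking quality and identity stability, respectively. Multi-Object Tracking Accuracy is defined as $\mathrm{MOTA} = 1 - \frac{\sum_t (\mathrm{FN}_t + \mathrm{FP}_t + \mathrm{IDSW}_t)}{\sum_t \mathrm{GT}_t}$, where $\mathrm{FN}_t$, $\mathrm{FP}_t$, and $\mathrm{IDSW}_t$ denote the numbers of false negatives, false positives, and identity switches at frame t, respectively, and $\mathrm{GT}_t$ is the number of ground-truth objects at frame t. Identity F1 measures identity consistency and is defined as $\mathrm{IDF1} = \frac{2\,\mathrm{IDTP}}{2\,\mathrm{IDTP} + \mathrm{IDFP} + \mathrm{IDFN}}$, where IDTP, IDFP, and IDFN denote identity-based true positives, false positives, and false negatives.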
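
Given per-frame error counts, the two tracking scores reduce to simple arithmetic, as in the sketch below. The matching of predicted tracks to ground truth that produces these counts is assumed to have been done beforehand and is not shown; the function names are illustrative.

```python
from typing import Sequence


def mota(fn: Sequence[int], fp: Sequence[int], idsw: Sequence[int],
         gt: Sequence[int]) -> float:
    """MOTA from per-frame false negatives, false positives, ID switches, and GT counts."""
    total_gt = sum(gt)
    if total_gt == 0:
        raise ValueError("no ground-truth objects in the sequence")
    return 1.0 - (sum(fn) + sum(fp) + sum(idsw)) / total_gt


def idf1(idtp: int, idfp: int, idfn: int) -> float:
    """IDF1 from identity-based true positives, false positives, and false negatives."""
    denom = 2 * idtp + idfp + idfn
    return 2 * idtp / denom if denom else 0.0
```

Note that MOTA can become negative when the total number of errors exceeds the number of ground-truth objects, whereas IDF1 always lies between 0 and 1.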

Action recognition is evaluated using accuracy and macro-averaged precision, recall, and F1 score to account for class imbalance. For each class c, class-wise precision and recall are defined as $P_c = \mathrm{TP}_c / (\mathrm{TP}_c + \mathrm{FP}_c)$ and $R_c = \mathrm{TP}_c / (\mathrm{TP}_c + \mathrm{FN}_c)$. The class-wise F1 score is then computed as $\mathrm{F1}_c = 2 P_c R_c / (P_c + R_c)$. Macro-averaged scores are obtained by averaging class-wise values over all C classes. For example, macro-averaged F1 is defined as $\mathrm{F1}_{\mathrm{macro}} = \frac{1}{C} \sum_{c=1}^{C} \mathrm{F1}_c$. In addition, confusion matrices are reported to visualize error patterns and highlight which action classes are most frequently confused. This evaluation setup supports systematic error analysis and helps link downstream recognition failures to upstream issues such as detection reliability, tracking stability, and court projection accuracy.
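
The clip-level metrics can be computed directly from a confusion matrix, as in the following sketch. The class names in the usage example are hypothetical, and setting a score to zero when its denominator is zero is a convention assumed here rather than one stated in the text.

```python
from typing import Dict, List, Sequence


def macro_report(y_true: Sequence[str], y_pred: Sequence[str],
                 classes: Sequence[str]) -> Dict[str, object]:
    """Accuracy, macro precision/recall/F1, and confusion matrix (rows: true class)."""
    idx = {c: i for i, c in enumerate(classes)}
    n_cls = len(classes)
    cm: List[List[int]] = [[0] * n_cls for _ in range(n_cls)]
    for t, p in zip(y_true, y_pred):
        cm[idx[t]][idx[p]] += 1
    precisions, recalls, f1s = [], [], []
    for c in range(n_cls):
        tp = cm[c][c]
        fp = sum(cm[r][c] for r in range(n_cls)) - tp  # predicted c, true class differs
        fn = sum(cm[c]) - tp                           # true c, predicted otherwise
        p = tp / (tp + fp) if tp + fp else 0.0
        r = tp / (tp + fn) if tp + fn else 0.0
        precisions.append(p)
        recalls.append(r)
        f1s.append(2 * p * r / (p + r) if p + r else 0.0)
    return {
        "accuracy": sum(cm[c][c] for c in range(n_cls)) / len(y_true),
        "macro_precision": sum(precisions) / n_cls,
        "macro_recall": sum(recalls) / n_cls,
        "macro_f1": sum(f1s) / n_cls,
        "confusion": cm,
    }


if __name__ == "__main__":
    truth = ["pass", "pass", "shot", "shot", "shot", "dribble"]
    preds = ["pass", "shot", "shot", "shot", "pass", "dribble"]
    print(macro_report(truth, preds, ["pass", "shot", "dribble"]))
```

Because every class contributes equally to the macro average, a rare action that is always misclassified lowers the macro F1 far more than it lowers accuracy.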

2.8. Implementation Details and Reproducibility

All clips are processed at a fixed spatial and temporal resolution of 960×540 pixels and 25 fps. Interlaced footage is deinterlaced prior to analysis to reduce motion artifacts that may affect detection and tracking. Each clip is processed independently using the same pipeline stages, namely detection, multi-object tracking, court-plane projection via homography, and action recognition. The same data splits and evaluation protocol are used across all experiments to ensure direct comparability.

To ensure reproducibility, operating parameters are selected on the validation set and then kept constant during testing. This includes the detector confidence threshold used to filter detections before tracking, as well as tracking parameters that control track initiation and termination under occlusions. When frame subsampling with step s is applied, tolerance parameters are adjusted consistently to preserve comparable temporal behavior. The implementation is modular and produces explicit intermediate outputs, including per-frame detections, per-clip trajectories with persistent identities, and court-projected trajectories in metric units. Configuration files store the experimental settings and enable exact replication of results, as also described in the Data Availability Statement.

In terms of runtime, the dominant cost is the detector. Using YOLOv8n on CPU with an inference input size of 512, after resizing the 960×540 frames for model inference, the validation run reports average per-frame times of 0.4 ms for preprocessing, 19.7 ms for inference, and 0.5 ms for postprocessing, which corresponds to approximately 20.6 ms per frame for detection, or roughly 48.5 frames per second. This establishes an upper bound on the achievable throughput of the full pipeline, since subsequent stages add further per-frame cost, although they operate on detector outputs and are computationally lighter. Detection cost scales primarily with input resolution, while the cost of tracking-by-detection increases with the number of active tracks and detections due to the association step. Homography projection is linear in the number of transformed points and is typically negligible after calibration. Action recognition cost depends mainly on the temporal window length and the feature dimensionality, with classifier inference remaining comparatively lightweight for shallow models.