Submitted:

23 February 2026

Posted:

25 February 2026


Abstract

Keywords:

1. Introduction

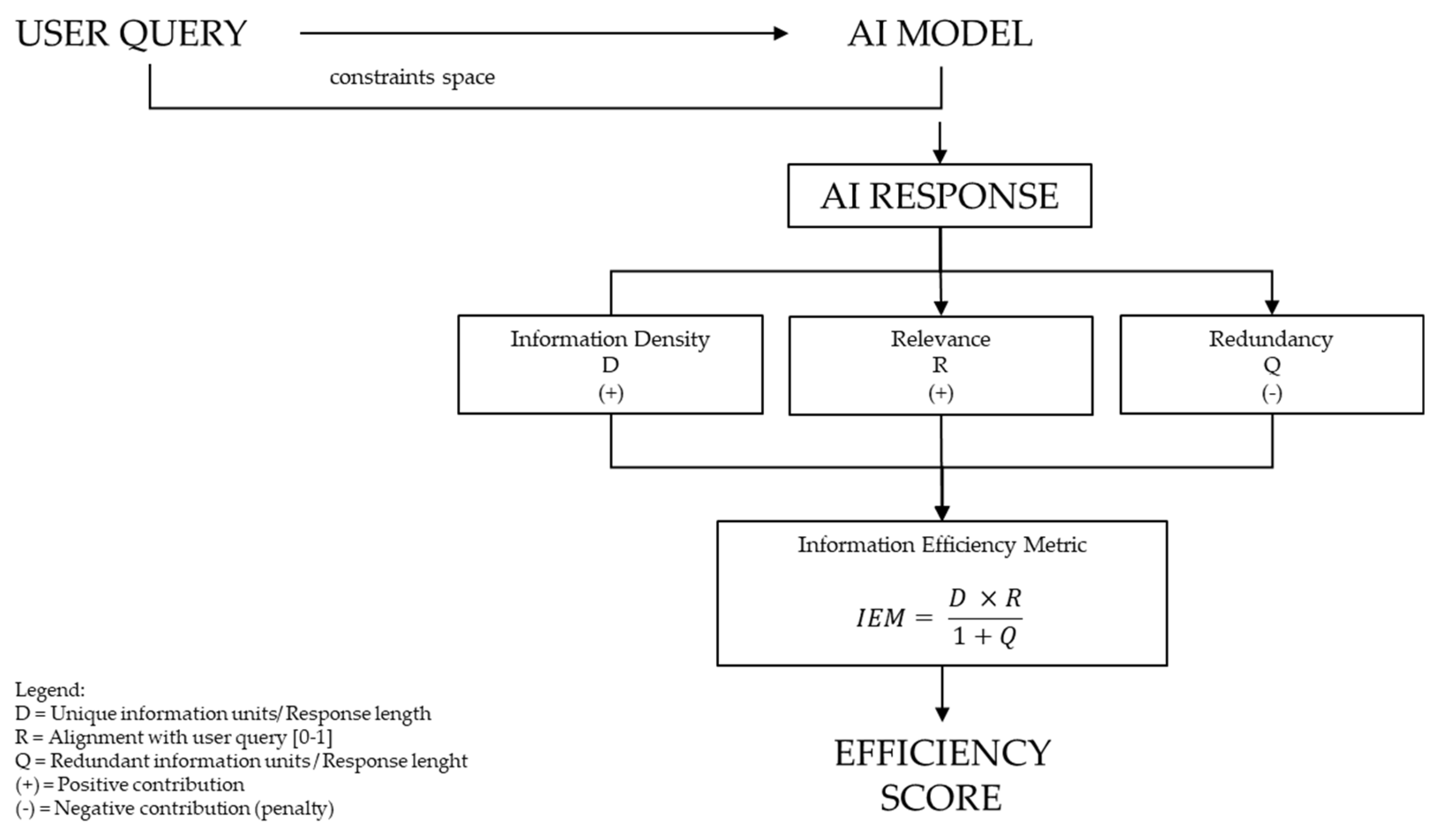

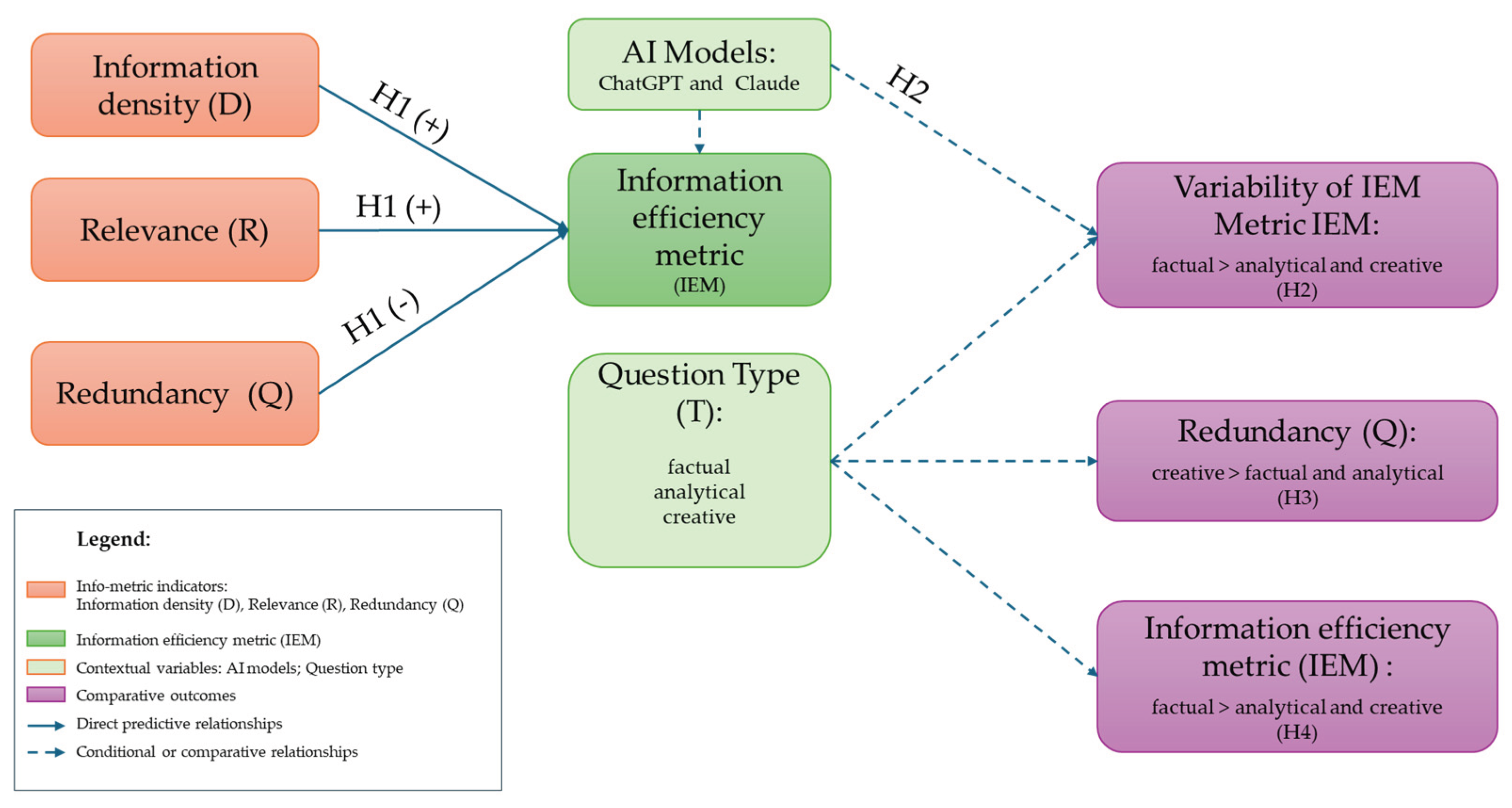

- H1: Information density (D) and relevance (R) have a positive effect on information efficiency (IEM), while redundancy (Q) has a negative effect on information efficiency (IEM).

- H2: The variability in information efficiency (IEM) between AI models is greater for factual questions than for analytical and creative questions.

- H3: Responses to creative questions will have a statistically significantly higher level of redundancy (Q) compared to responses to factual and analytical questions.

- H4: Responses to factual questions achieve higher information efficiency (IEM) compared to responses to analytical and creative questions.

2. Literature Review

- Information-theoretical approaches to human–AI communication

- Info-metric approaches in the AI context

- Digital intelligence and measurement of efficiency

- Communication efficiency of conversational systems

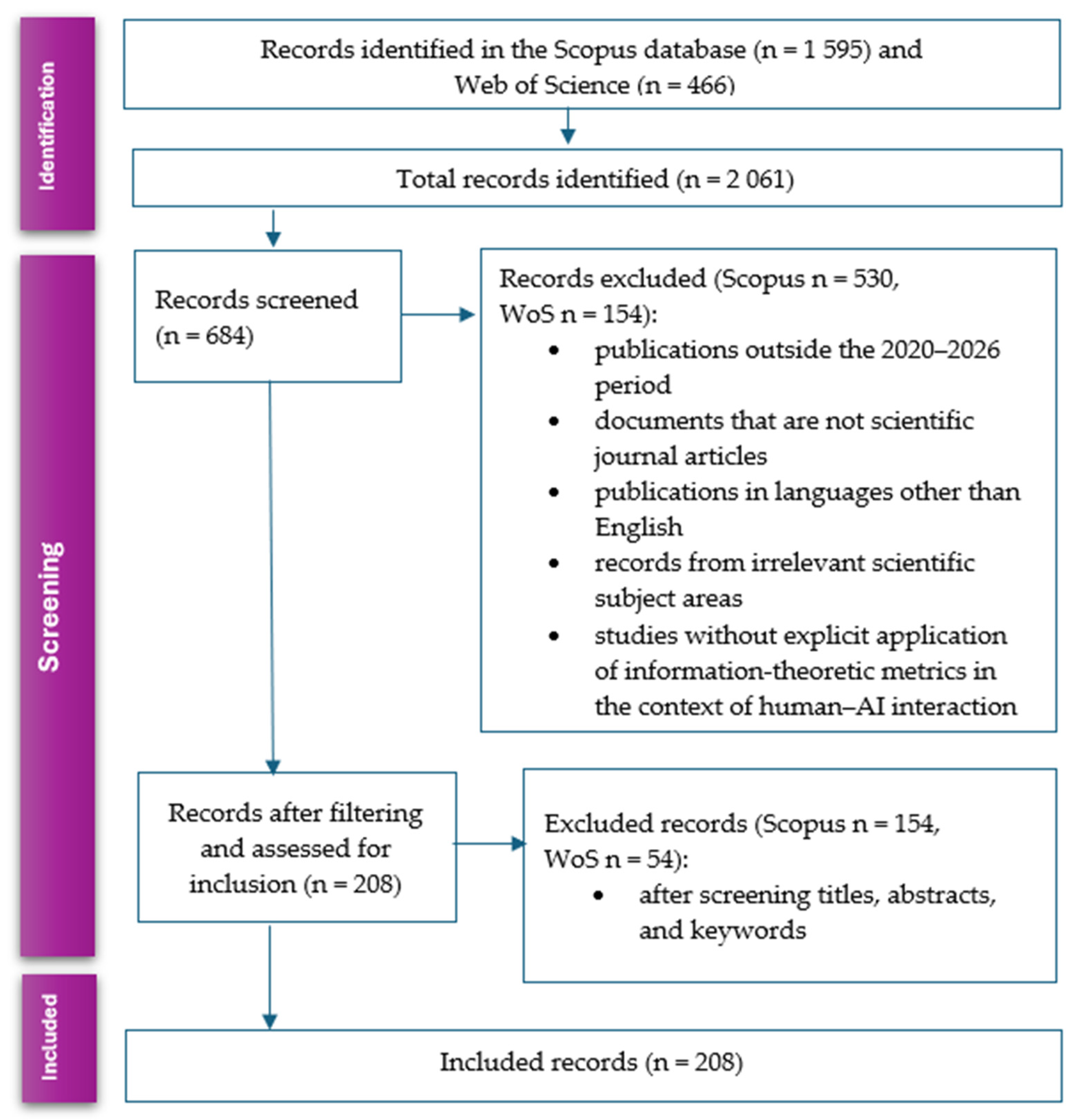

- publication year: 2020-2026

- type of publication: scientific articles and book chapters

- language: English

- research areas: computer science, social sciences, information science, communication, engineering, business

- articles in the fields of medicine, chemistry, neuroscience, and physics (unless directly relevant to AI communication)

- publications without a peer-review process

- articles that do not address human–AI communication or evaluation of AI systems

- conference abstracts, editorials, and reviews without original research

- the presence of an explicit info-metric or information-theoretic framework

- focus on quantification or measurement of information efficiency

- relevance to human–AI communication or evaluation of AI systems

- empirical validation of proposed measures (where applicable)

- Evaluation of AI systems based on accuracy and performance (~40% of papers): Papers that focus on technical metrics such as precision, recall, F1-score, and accuracy, but do not address the information structure of the response or the efficiency of information transfer.

- Subjective user experience measures (~25% of papers): Research that uses questionnaires and scales to assess user satisfaction, perceived usefulness, and trust in AI systems, but without a quantitative information framework.

- Semantic and linguistic metrics (~20% of papers): Approaches based on measuring similarity to reference texts, semantic vectors, or response coherence, without explicitly modeling information density, relevance, and redundancy.

- Theoretical papers on digital intelligence (~10% of papers): Conceptual discussions on digital literacy, AI competencies, and human–AI collaboration, without operationalization through quantitative info-metric measures.

- Other approaches (~5% of papers): Research focused on trust in AI, ethical aspects, explainability, cognitive load, and biases of AI systems.

3. Conceptual Model Design

3.1. Conceptual Framework

3.2. Conceptual Model Design

3.2.1. Information Density

3.2.2. Relevance

3.2.3. Redundancy

3.2.4. Definition of Information Efficiency Metric (IEM)

- Information efficiency assumes economy and utility. A larger amount of text does not mean greater informativeness.

- Information brings value only if it is directed towards the goal, that is, if it is relevant to the user’s query.

- Excess content that does not contribute to the goal acts as information noise, increasing the communication cost and cognitive load of the user.

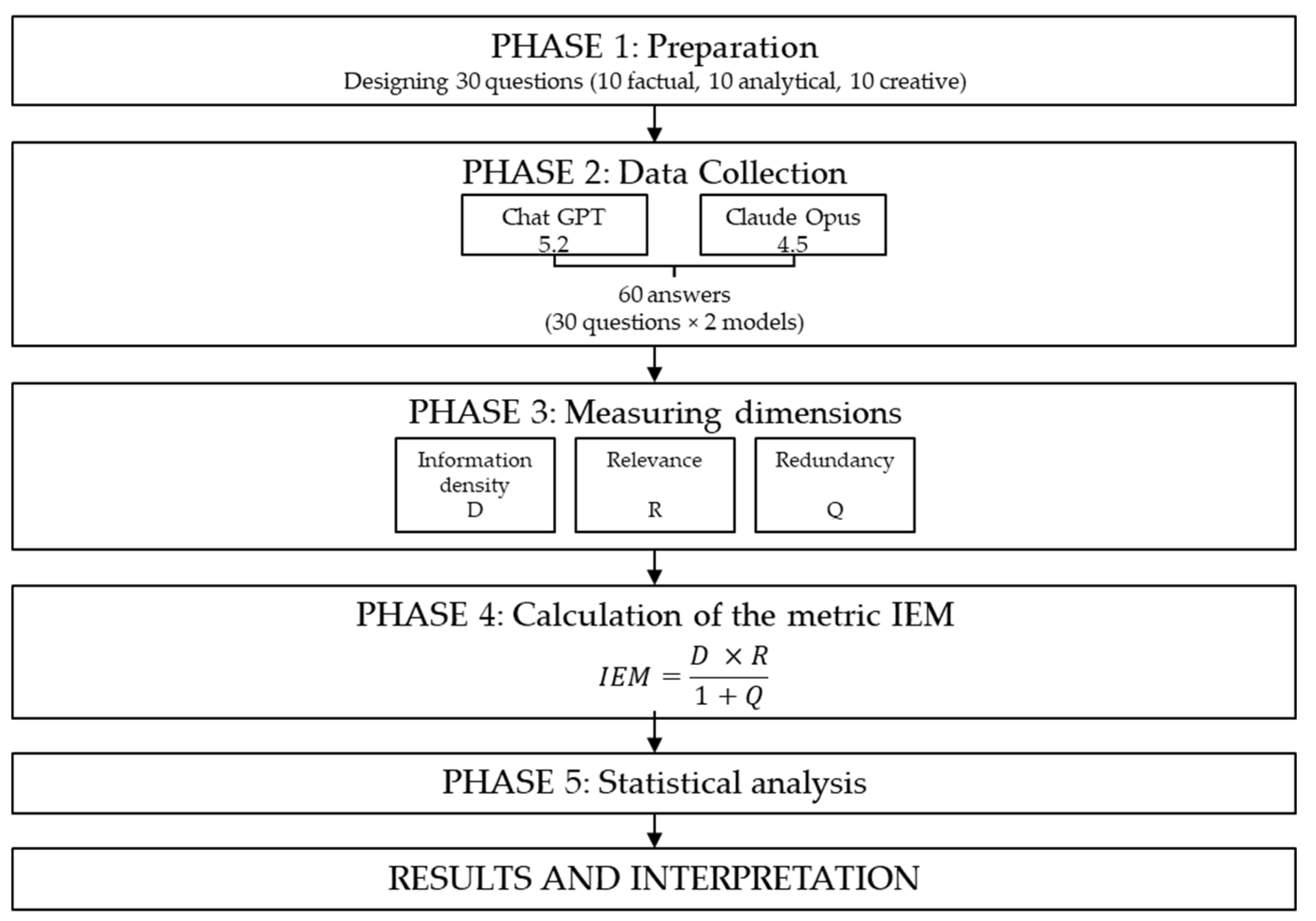
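The three principles above can be illustrated with a minimal sketch. The composite form IEM = (D × R)/(1 + Q) is the one this article reports alongside the Levene test. The three ratio definitions used below (D = Iu/L, R = Irel/Iu, Q = Ired/L) are assumptions inferred from the ranges in the descriptive statistics, not definitions confirmed by the text, and the class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ResponseCounts:
    """Hand-coded counts for one AI response (field names are illustrative)."""
    length: int           # L: response length, assumed here to be a word count
    unique_units: int     # Iu: unique information units
    relevant_units: int   # Irel: relevant information units
    redundant_units: int  # Ired: redundant information units


def information_efficiency(c: ResponseCounts) -> dict[str, float]:
    """Return D, R, Q and IEM for one response under the assumed ratios."""
    if c.length <= 0 or c.unique_units <= 0:
        raise ValueError("length and unique_units must be positive")
    density = c.unique_units / c.length            # assumption: D = Iu / L
    relevance = c.relevant_units / c.unique_units  # assumption: R = Irel / Iu
    redundancy = c.redundant_units / c.length      # assumption: Q = Ired / L
    # Composite form as reported in the article: IEM = (D x R) / (1 + Q)
    iem = (density * relevance) / (1.0 + redundancy)
    return {"D": density, "R": relevance, "Q": redundancy, "IEM": iem}


# Hypothetical response: 20 words, 4 unique units, 3 relevant, 1 redundant.
example = information_efficiency(ResponseCounts(20, 4, 3, 1))
print({name: round(value, 3) for name, value in example.items()})
# {'D': 0.2, 'R': 0.75, 'Q': 0.05, 'IEM': 0.143}
```

Under these assumed ratios, a one-word answer carrying one relevant unit and no redundancy yields D = R = 1, Q = 0 and IEM = 1, which matches the maximum values in the descriptive statistics.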

4. Materials and Methods

- What does it mean that an answer is “clear”?

- What is “redundancy” in a text?

- What does “relevant” mean in an answer?

- What is a “summary”?

- In simple terms, what is “information density”?

- How many minutes are in one hour?

- What is 12 × 8?

- What does “PDF” mean?

- What is a password?

- What is a reminder?

- Why can a very long answer be a problem?

- How can you tell an answer is “wandering off topic”?

- Why does repetition reduce usefulness?

- How would you shorten an answer but keep it accurate?

- Why is relevance sometimes more important than “explaining everything”?

- How do you write a good AI prompt in one sentence?

- How do you choose daily priorities?

- Why is it good to double-check an AI answer?

- How can you tell an AI answer is too general?

- How do you shorten a text that is too long?

- Create a metaphor for an answer “full of noise.”

- Write an 8 – 12 word slogan about “less, but better.”

- Write a two-sentence mini-story about an overly long answer.

- Write a short rhyme about relevance.

- Give a one-sentence rule for a good answer.

- Write a short text to a friend saying you’ll be 10 minutes late.

- Create a title for a note about a healthier lunch.

- Write one motivational sentence for studying.

- Suggest a cheap weekend idea.

- Write a one-sentence “about you” line for a profile.

- Segmentation: Responses were segmented into sentences and items (clauses). Each item that conveyed a different claim was considered a candidate for a new information unit.

- Uniqueness criterion: A unit was coded as unique (Iu) if it introduced information that was not previously provided in the response. Restatements of the same content in different words or styles were coded as one unit. Example: “AI is a computer program” and “Artificial intelligence is a software system” are considered the same information unit because they convey identical information.

- Relevance criterion: An information unit is coded as relevant (Irel) if it directly answers the user’s query, provides the necessary context for understanding the response, and contributes to the fulfillment of the communication goal. Units that wander into related topics or provide information that does not contribute to the goal are coded as irrelevant.

- Redundancy criterion: A unit is coded as redundant (Ired) if it repeats information previously provided in the response, rephrases the query without adding new information, contains overgeneralized statements that do not add specific content (e.g., “This is an important topic”), or includes unnecessary details that do not improve understanding. Note that an information unit can be both unique (Iu) and relevant (Irel), but it cannot be both relevant and redundant.
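The coding criteria above can be sketched as a small data structure that enforces the one stated constraint (a unit cannot be both relevant and redundant) and tallies the three counts. The names `CodedUnit` and `tally` are hypothetical, and counting Irel only among units that are also unique is an assumption made for this illustration, not a rule stated by the coding scheme.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CodedUnit:
    """One segmented information unit with its manual codes."""
    text: str
    is_unique: bool     # introduces content not stated earlier in the response
    is_relevant: bool   # directly serves the user's query
    is_redundant: bool  # repeats content, rephrases the query, or adds filler

    def __post_init__(self) -> None:
        # Constraint taken from the redundancy criterion.
        if self.is_relevant and self.is_redundant:
            raise ValueError("a unit cannot be both relevant and redundant")


def tally(units: list[CodedUnit]) -> dict[str, int]:
    """Count Iu, Irel and Ired for one coded response."""
    return {
        "Iu": sum(u.is_unique for u in units),
        # Assumption: relevant units are counted among the unique ones.
        "Irel": sum(u.is_unique and u.is_relevant for u in units),
        "Ired": sum(u.is_redundant for u in units),
    }


# Hypothetical coded response to the query "What is AI?".
coded = [
    CodedUnit("AI is a computer program", True, True, False),
    # Same content in other words: not unique, coded as redundant.
    CodedUnit("Artificial intelligence is a software system", False, False, True),
    # New but off-topic content: unique, neither relevant nor redundant.
    CodedUnit("Paris is the capital of France", True, False, False),
]
print(tally(coded))  # {'Iu': 2, 'Irel': 1, 'Ired': 1}
```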

- H1: Information density (D) and relevance (R) have a positive effect on information efficiency (IEM), while redundancy (Q) has a negative effect on information efficiency (IEM).

- H2: The variability of information efficiency (IEM) between AI models is greater for factual questions than for analytical and creative questions.

- H3: Responses to creative questions will have a statistically significantly higher level of redundancy (Q) compared to responses to factual and analytical questions.

- H4: Responses to factual questions achieve higher information efficiency (IEM) compared to responses to analytical and creative questions.

5. Results

6. Discussion

6.1. Interpretation of Results

- RQ1: How does the information efficiency of AI responses differ with respect to the type of user question (factual, analytical, creative)?

- RQ2: Are there significant differences in information efficiency between different large language models?

- RQ3: To what extent do information density, relevance, and redundancy contribute to explaining the variability of the efficiency index?

- RQ4: How does the information efficiency (IEM) of AI responses change with respect to the level of complexity of the user question?

6.2. Discussion of Results from the Perspective of Previous Studies

6.3. Methodological Limitations and Future Research

6.4. Practical Implications

7. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| IEM | Information Efficiency Metric |

| LLM | Large Language Model |


| Search strategy | Hits | Timeframe | Index |
|---|---|---|---|
| TITLE-ABS-KEY(((“information theory” OR “entropy” OR “information efficiency” OR “information density” OR “redundancy”) AND (“human-AI interaction” OR “human-computer interaction” OR “digital intelligence” OR “AI communication”) AND (“evaluation” OR “metric” OR “quantitative analysis” OR “performance measurement”)) OR ((“info-metrics” OR “maximum entropy econometrics” OR “entropy-based inference”) AND (“artificial intelligence” OR “decision-making” OR “data modeling”)) OR ((“digital intelligence” OR “AI quotient” OR “AI literacy” OR “human-AI collaboration”) AND (“measurement” OR “quantification” OR “index” OR “metric” OR “efficiency”)) OR ((“communication efficiency” OR “information transfer” OR “semantic efficiency” OR “information redundancy”) AND (“AI” OR “language model” OR “conversational agent” OR “chatbot”))) AND PUBYEAR > 2014 | 1595 | All years | Scopus |
| Refined by: ( TITLE-ABS-KEY ( ( ( “information theory” OR “entropy” OR “information efficiency” OR “information density” OR “redundancy” ) AND ( “human-AI interaction” OR “human-computer interaction” OR “digital intelligence” OR “AI communication” ) AND ( “evaluation” OR “metric” OR “quantitative analysis” OR “performance measurement” ) ) OR ( ( “info-metrics” OR “maximum entropy econometrics” OR “entropy-based inference” ) AND ( “artificial intelligence” OR “decision-making” OR “data modeling” ) ) OR ( ( “digital intelligence” OR “AI quotient” OR “AI literacy” OR “human-AI collaboration” ) AND ( “measurement” OR “quantification” OR “index” OR “metric” OR “efficiency” ) ) OR ( ( “communication efficiency” OR “information transfer” OR “semantic efficiency” OR “information redundancy” ) AND ( “AI” OR “language model” OR “conversational agent” OR “chatbot” ) ) ) AND PUBYEAR > 2014 ) AND PUBYEAR > 2021 AND PUBYEAR < 2027 AND ( LIMIT-TO ( DOCTYPE , “ar” ) OR LIMIT-TO ( DOCTYPE , “bk” ) ) AND ( LIMIT-TO ( LANGUAGE , “English” ) ) AND ( LIMIT-TO ( SUBJAREA , “COMP” ) OR LIMIT-TO ( SUBJAREA , “SOCI” ) OR LIMIT-TO ( SUBJAREA , “DECI” ) OR LIMIT-TO ( SUBJAREA , “MULT” ) OR LIMIT-TO ( SUBJAREA , “ENGI” ) OR LIMIT-TO ( SUBJAREA , “BUSI” ) ) | 530 | 2020-2026 | Scopus |

| Search strategy | Hits | Timeframe | Index |

|---|---|---|---|

| ( ( “information theory” OR “entropy” OR “information efficiency” OR “information density” OR “redundancy” ) AND ( “human-AI interaction” OR “human-computer interaction” OR “digital intelligence” OR “AI communication” ) AND ( “evaluation” OR “metric” OR “quantitative analysis” OR “performance measurement” ) ) OR ( ( “info-metrics” OR “maximum entropy econometrics” OR “entropy-based inference” ) AND ( “artificial intelligence” OR “decision-making” OR “data modeling” ) ) OR ( ( “digital intelligence” OR “AI quotient” OR “AI literacy” OR “human-AI collaboration” ) AND ( “measurement” OR “quantification” OR “index” OR “metric” OR “efficiency” ) ) OR ( ( “communication efficiency” OR “information transfer” OR “semantic efficiency” OR “information redundancy” ) AND TITLE-ABS-KEY ( “AI” OR “language model” OR “conversational agent” OR “chatbot” ) ) | 466 | All years | CPCI-S, SCI-EXPANDED, ESCI, CPCI-SSH, SSCI, BKCI-S |

| Refined search: DOCUMENT TYPES: (ARTICLE OR PROCEEDINGS PAPER) AND LANGUAGES: (ENGLISH) AND PUBLICATION YEARS: (2026 OR 2025 OR 2024 OR 2023 OR 2022 OR 2021 OR 2020) AND WEB OF SCIENCE CATEGORIES: (COMPUTER SCIENCE ARTIFICIAL INTELLIGENCE OR COMPUTER SCIENCE INFORMATION SYSTEMS OR COMPUTER SCIENCE INTERDISCIPLINARY APPLICATIONS OR COMMUNICATION OR COMPUTER SCIENCE THEORY METHODS OR INFORMATION SCIENCE LIBRARY SCIENCE OR MULTIDISCIPLINARY SCIENCES) AND RESEARCH AREAS: (COMPUTER SCIENCE OR COMMUNICATION OR BUSINESS ECONOMICS OR INFORMATION SCIENCE LIBRARY SCIENCE OR SCIENCE TECHNOLOGY OTHER TOPICS OR SOCIAL SCIENCES OTHER TOPICS) | 154 | 2020-2026 | CPCI-S, SCI-EXPANDED, ESCI, CPCI-SSH, SSCI, BKCI-S |

| Variable | N | Min | Max | Mean | Median | SD |
|---|---|---|---|---|---|---|
| Response length (L) | 60 | 1 | 276 | 81.4 | 24.5 | 91.366 |
| Information units (Iu) | 60 | 1 | 16 | 5.350 | 3.5 | 4.364 |
| Relevant information units (Irel) | 60 | 1 | 12 | 4.800 | 3.5 | 3.511 |
| Redundant information units (Ired) | 60 | 0 | 7 | 0.783 | 0 | 1.519 |
| Information density (D) | 60 | 0.030 | 1 | 0.137 | 0.1 | 0.142 |
| Relevance (R) | 60 | 0.333 | 1 | 0.940 | 1 | 0.142 |
| Redundancy (Q) | 60 | 0 | 0.13 | 0.008 | 0 | 0.020 |
| Information efficiency (IEM) | 60 | 0.029 | 1 | 0.131 | 0.1 | 0.143 |

| AI model | N | Response length (L) (Mean ± SDev) | Information density (D) (Mean ± SDev) | Relevance (R) (Mean ± SDev) | Redundancy (Q) (Mean ± SDev) | Information efficiency (IEM) (Mean ± SDev) |
|---|---|---|---|---|---|---|
| ChatGPT | 30 | 64.033 ± 60.800 | 0.105 ± 0.051 | 0.944 ± 0.158 | 0.007 ± 0.023 | 0.097 ± 0.051 |
| Claude | 30 | 98.767 ± 112.540 | 0.171 ± 0.190 | 0.936 ± 0.125 | 0.008 ± 0.016 | 0.165 ± 0.192 |

| Question type | N | Response length (L) (Mean ± SDev) | Information density (D) (Mean ± SDev) | Relevance (R) (Mean ± SDev) | Redundancy (Q) (Mean ± SDev) | Information efficiency (IEM) (Mean ± SDev) |
|---|---|---|---|---|---|---|
| factual | 20 | 102.95 ± 99.566 | 0.171 ± 0.224 | 0.875 ± 0.166 | 0.010 ± 0.014 | 0.162 ± 0.229 |
| analytical | 20 | 118.200 ± 98.613 | 0.101 ± 0.067 | 0.995 ± 0.020 | 0.004 ± 0.006 | 0.101 ± 0.068 |
| creative | 20 | 23.050 ± 28.037 | 0.141 ± 0.072 | 0.950 ± 0.163 | 0.010 ± 0.031 | 0.131 ± 0.072 |

| AI model | Question type | N | Information density (D) (Mean ± SDev) | Relevance (R) (Mean ± SDev) | Redundancy (Q) (Mean ± SDev) | Information efficiency (IEM) (Mean ± SDev) |
|---|---|---|---|---|---|---|
| ChatGPT | factual | 10 | 0.101 ± 0.047 | 0.900 ± 0.174 | 0.038 ± 0.008 | 0.092 ± 0.053 |
| ChatGPT | analytical | 10 | 0.094 ± 0.054 | 1.000 ± 0.000 | 0.006 ± 0.007 | 0.094 ± 0.054 |
| ChatGPT | creative | 10 | 0.119 ± 0.053 | 0.933 ± 0.211 | 0.013 ± 0.040 | 0.106 ± 0.051 |
| Claude | factual | 10 | 0.242 ± 0.304 | 0.851 ± 0.161 | 0.016 ± 0.017 | 0.213 ± 0.311 |
| Claude | analytical | 10 | 0.108 ± 0.081 | 0.991 ± 0.029 | 0.003 ± 0.003 | 0.108 ± 0.081 |
| Claude | creative | 10 | 0.163 ± 0.084 | 0.967 ± 0.105 | 0.007 ± 0.021 | 0.155 ± 0.083 |

Coefficients (Model 1)

| Predictor | B (unstandardized) | Std. Error | Beta (standardized) | t | Sig. | Tolerance | VIF |
|---|---|---|---|---|---|---|---|
| (Constant) | -0.073 | 0.008 | | -9.101 | 0.000 | | |
| Information density (D) | 0.990 | 0.006 | 0.979 | 176.217 | 0.000 | 0.989 | 1.011 |
| Relevance (R) | 0.076 | 0.008 | 0.075 | 9.344 | 0.000 | 0.473 | 2.113 |
| Redundancy (Q) | -0.542 | 0.058 | -0.075 | -9.379 | 0.000 | 0.477 | 2.097 |
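The collinearity columns in the coefficients table above can be cross-checked with the standard identity VIF = 1/Tolerance. The following is a minimal sketch of that check. The function name is hypothetical, and the inputs are the rounded tolerances from the table, so agreement is expected only up to rounding in the third decimal place.

```python
def vif_from_tolerance(tolerance: float) -> float:
    """Variance inflation factor implied by a tolerance value (VIF = 1 / tolerance)."""
    if not 0.0 < tolerance <= 1.0:
        raise ValueError("tolerance must lie in (0, 1]")
    return 1.0 / tolerance


# (tolerance, reported VIF) pairs as printed in the coefficients table.
reported = {
    "Information density (D)": (0.989, 1.011),
    "Relevance (R)": (0.473, 2.113),
    "Redundancy (Q)": (0.477, 2.097),
}

for predictor, (tolerance, reported_vif) in reported.items():
    implied = vif_from_tolerance(tolerance)
    # Differences of about 0.001 come from the tolerances being rounded.
    print(f"{predictor}: implied VIF {implied:.3f}, reported {reported_vif:.3f}")
```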

Tests of Homogeneity of Variances

| Variable | Basis | Levene Statistic | df1 | df2 | Sig. |
|---|---|---|---|---|---|
| Information efficiency (IEM), IEM = (D × R)/(1 + Q) | Based on mean | 3.846 | 2 | 57 | 0.027 |
| | Based on median | 1.254 | 2 | 57 | 0.293 |
| | Based on median and with adjusted df | 1.254 | 2 | 22.799 | 0.304 |
| | Based on trimmed mean | 2.089 | 2 | 57 | 0.133 |
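The significance values in the homogeneity-of-variances table above follow from the F distribution with df1 = 2. For that special case the upper-tail probability has the closed form (1 + 2F/df2)^(-df2/2), so the reported values can be checked without a statistics library. The sketch below assumes this closed form applies only because df1 = 2; the function name is hypothetical.

```python
def f_upper_tail_df1_two(f_stat: float, df2: float) -> float:
    """P(F > f_stat) for an F distribution with df1 = 2 and df2 > 0.

    Valid only for df1 = 2, where the tail has this closed form.
    """
    if f_stat < 0.0 or df2 <= 0.0:
        raise ValueError("f_stat must be non-negative and df2 positive")
    return (1.0 + 2.0 * f_stat / df2) ** (-df2 / 2.0)


# (Levene statistic, df2) pairs taken from three rows of the table.
rows = {
    "Based on median": (1.254, 57.0),
    "Based on median, adjusted df": (1.254, 22.799),
    "Based on trimmed mean": (2.089, 57.0),
}

for label, (statistic, df2) in rows.items():
    print(f"{label}: p = {f_upper_tail_df1_two(statistic, df2):.3f}")
# Based on median: p = 0.293
# Based on median, adjusted df: p = 0.304
# Based on trimmed mean: p = 0.133
```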

Test Statistics (a, b)

| Variable | Kruskal-Wallis H | df | Asymp. Sig. |
|---|---|---|---|
| Redundancy (Q) | 5.217 | 2 | 0.074 |

a. Kruskal-Wallis test

b. Grouping variable: Q_type_num
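The asymptotic significance in the test statistics table above refers H to a chi-square distribution with k - 1 degrees of freedom, here 2 for the three question types. With 2 degrees of freedom the upper tail reduces to exp(-H/2), which the short sketch below uses to reproduce the reported value. The function name is hypothetical, and the shortcut holds only for df = 2.

```python
import math


def chi2_upper_tail_df_two(statistic: float) -> float:
    """P(X > statistic) for a chi-square variable with exactly 2 degrees of freedom."""
    if statistic < 0.0:
        raise ValueError("statistic must be non-negative")
    return math.exp(-statistic / 2.0)


h_reported = 5.217  # Kruskal-Wallis H for redundancy (Q), from the table
p_value = chi2_upper_tail_df_two(h_reported)
print(f"H = {h_reported}, df = 2, p = {p_value:.3f}")  # H = 5.217, df = 2, p = 0.074
```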

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).