1. Introduction

Iron foundry is one of the most mature and widely adopted manufacturing technologies, enabling the large scale production of metallic components with complex geometries and demanding mechanical requirements. Global cast iron production accounts for hundreds of millions of tonnes per year, supplying key industrial sectors such as automotive, energy, railway transportation, and heavy machinery [1]. Within this domain, ductile (also known as nodular) iron has become a preferred material for critical components due to its improved strength, ductility, fatigue resistance, and damage tolerance when compared to grey cast iron [2].

From a process perspective point of view, iron casting manufacturing procedure involves a sequence of tightly coupled stages, including (i) metal melting, (ii) pouring into moulds, (iii) solidification under controlled thermal conditions, (iv) extraction of the solidified part, and (v) subsequent forming or finishing operations. Among these stages, the pouring phase plays a decisive role in determining the final quality of the casting. During pouring, molten metal is transferred into the mould cavity, where its flow behaviour, temperature evolution, and interaction with the mould directly affect filling completeness, microstructural development, and defect formation. Continuous and semi-continuous pouring processes offer significant advantages, such as high production efficiency, improved dimensional repeatability, and reduced energy consumption due to controlled solidification and elimination of auxiliary cooling steps [3,4]. Nevertheless, these benefits come at the cost of increased process sensitivity. Hence, minor disturbances during pouring can propagate downstream, resulting on defects that are difficult to detect and costly to correct.

Despite the extensive industrial experience achieved by foundries, the pouring phase remains particularly prone to quality deviations. Common defects include incomplete filling, cold shuts, poor filling quality, and surface or internal imperfections such as inclusions, porosity, segregation, and thermal cracking [5]. Many of these defects are directly linked to diverse phenomena occurring during pouring, such as stream instability, turbulence, interruptions, or overflow episodes. Importantly, these events often develop over very short time windows and may not be captured by conventional monitoring systems. Notwithstanding, finding these defective cases on industrial scenarios poses a great challenge. This is mainly due to the scarcity of such examples on normal production conditions, which hinders data collection and annotation, requiring hundreds of expert hours. While anomaly detection algorithms are often used, the techniques carried out for detection generally involve low dimensional statistical outlier detection, or depend on, at least, a partially annotated dataset. Furthermore, relying solely on a single data modality (such as sensor signals or visual information) is often insufficient for comprehensive analysis [6]. Many casting-related events and anomalies are better characterized by the fusion of multiple data sources. For instance, sensor readings may indicate changes in casting level or flow rates, while visual data may reveal surface defects or slag layer behavior that are not captured by numerical sensors alone. Therefore, the integration of both sensor and image data, what is known as a multimodal approach, is essential to capture the full complexity of the process and enhance detection performance [7,8].

In this sense, an effective control of pouring parameters (e.g., metal temperature, pouring duration, inter-pouring time, mould alignment, and flow stability) is critical to achieve consistent production quality. In industrial foundries, this control task presents a serious challenge due to the large number of sensors deployed along the production line, the high acquisition frequency of process signals, and the resulting data volume, which complicates real-time interpretation and decision making [9]. Traditional manual inspection or rule-based supervision strategies struggle to cope with this complexity and often fail to provide early warnings of emerging defects [10].

To address these limitations, automated monitoring and intelligent analysis systems have gained increasing attention. Specifically, artificial intelligence (AI) methods, particularly those based on machine learning (ML) and deep learning (DL), offer powerful tools for processing high-dimensional and heterogeneous data streams, enabling improved event detection, anomaly identification, and predictive capabilities [11]. In the context of casting processes, recent works have explored computer vision (CV) techniques for detecting surface defects and pouring anomalies [12,13], as well as sensor-based approaches for real-time supervision of thermal and flow-related variables [14,15].

Nevertheless, many existing solutions remain limited by their unimodal nature. These solutions, which rely exclusively on either sensor data or visual information, often provide an incomplete view of the process [6]. On the one hand, while sensors can capture global trends such as temperature or flow rate variations, they may overlook localised visual phenomena at the mould cup or pouring stream. On the other hand, vision based systems may struggle under occlusions, glare, or variable lighting conditions without complementary process context. For this reason, multimodal approaches that combine image and sensor data have been increasingly advocated as a means to capture the full complexity of industrial processes and enhance robustness [7,8].

Beyond foundry characterization, industrial digitization efforts increasingly emphasise the need for higher-level integration and interpretability. In this sense, digital twins, the virtual representations of physical systems that remain synchronised with their real counterparts, have emerged as a key enabler for monitoring, optimisation, and decision support in manufacturing. Specifically, in casting processes, digital twin principles have been applied to optimise cooling strategies, control solidification behaviour, and reduce defect rates [16,17]. Notwithstanding, effective digital twin deployment requires structured, time aligned, and semantically meaningful data describing not only sensor readings but also events, conditions, and operational context. This highlights the importance of frameworks capable of transforming raw observations into machine readable representations suitable for integration with this type of systems.

Against this background, this work proposes a multimodal framework for the detection and assessment of pouring related anomalies in industrial iron foundry processes. Rather than focusing solely on visual defect detection or isolated sensor thresholds, the proposed approach aims to unify heterogeneous evidences into a coherent and interpretable description of each pouring cycle. The system is designed to operate under real foundry conditions, accounting for occlusions, non-regular operational contexts, and transient disturbances that frequently occur in production environments.

The proposed framework combines visual analysis of pouring scenes with domain specific process signals under a MoE architecture. In particular, a visual detection backbone based on YOLO identifies relevant Areas of Interest (AoI), such as pouring streams, mould cups, and protective elements, while temporal validation through object tracking ensures spatial consistency across frames. Regarding detection of anomalous pouring events, we further encode visual dynamics through self-supervised VideoMAE features [18], enabling compact representation of pouring behaviour over time, which can be used for anomalous event detection through outlier-aware DBSCAN clustering algorithm [19]. Crucially, the limited inductive biases present on transformer architectures allows for seamless integration of multimodal data. Hence, selected sensor signals are integrated to contextualise visual observations and reinforce interpretation through expert informed reasoning. The current approach achieves crucial advancements on several common issues related to anomaly detection on industrial settings. On the one hand, it allows to pin-point potential anomalies, greatly easing the burden on expert annotators to find and tag such events. On the other hand, it presents a valid approach to categorize various normal and anomalous industrial events, successfully integrating and leveraging multimodal information.

The outputs of the different expert modules are aggregated into a structured, digital twin ready JavaScript Object Notation (JSON) representation that captures the state, quality indicators, and contextual conditions of each pouring event. While a full digital twin implementation is beyond the scope of this work, the proposed representation is explicitly designed to support seamless communication with those higher-level systems, enabling future developments in traceability, root-cause analysis, and predictive maintenance.

In summary, the main operational objectives of this work are:

- 1.

To reliably identify and segment individual pouring events under real industrial conditions.

- 2.

To detect and track key visual elements required for interpreting pouring dynamics and safety conditions.

- 3.

To extract interpretable indicators describing stream stability, filling quality, and process compliance.

- 4.

To find anomalous pouring events through unsupervised multimodal feature learning and clustering.

- 5.

To unify heterogeneous evidences through an explainable Mixture-of-Experts (MoE) decision framework.

- 6.

To generate structured outputs suitable for integration with digital twin and supervisory platforms.

The remainder of this paper is organised as follows.

Section 2 describes the industrial setup, data acquisition strategy, and methodological components of the proposed framework. Then,

Section 3 presents the experimental evaluation of event detection, AoI analysis, unsupervised anomaly detection, and MoE-based decision unification. Finally,

Section 4 discusses industrial deployment aspects and limitations, summarises the main contributions, and outlines future research directions.

2. Materials and Methods

To tackle large number of challenges faced by researchers (see

Section 1), we adopt a well-known methodology

"divide-and-conquer". The main objective, close to the MoE approach, is to simplify the resolution of the original problem by decomposing it into smaller and more manageable subproblems. This strategy allows for a reduction of computational complexity and promotes a structured and efficient problem solving approach. Historically, this methodology has been widely applied to manage diverse challenges, for instance, in legal reasoning [20], mathematical computations [21], and computational problems related to parallel processing [22]. Formally, the general problem is defined as

P, which can be expressed as a set of subproblems

. Once each subproblem is individually handled and its specific solution is obtained, denoted as

, the partial results are systematically combined to construct the complete solution to the original problem:

where C represents the function that combines partial solutions into the final result.

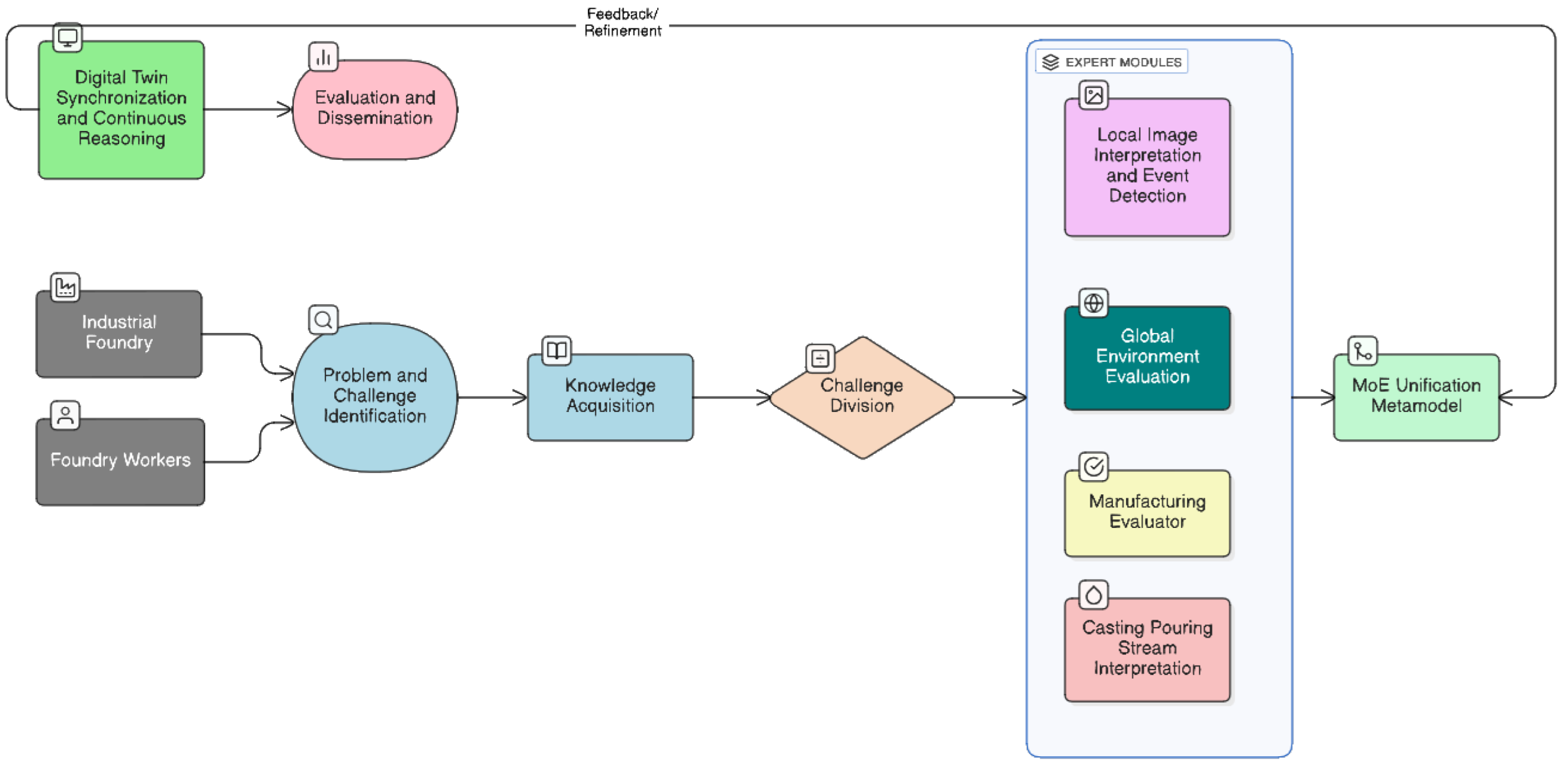

In this work, we decompose the industrial monitoring problem into specialized modules within a multimodal MoE. Each expert represents an independent subproblem, and, their results are unified into a global solution that drives a real-time digital twin of the industrial process. All stages, challenges, and integration phases defined in this work are summarized next and visually represented in

Figure 1.

- 1.

Problem and Challenge Identification. The aim of this initial step is to establish the background and context of the industrial problem to be solved. Analyzing both the multimodal nature of the data (i.e., images, video and sensor streams), as well as other aspects inherent to the problem at hand, such as pouring streams, leaks, overpouring situations or irregular filling patters. In a nutshell, understanding what, how and why must be monitored, providing a clear foundation for each expert specialization.

- 2.

Knowledge Acquisition. This second phase focuses on obtaining a domain specific and technological knowledge to guide the further research and development of each expert subsystem. Here, high level knowledge is firstly acquired to strongly define the overall workflow of the research. This stages also involves defining data sources, formats and the synchronization requirements necessary for a multimodal integration.

- 3.

-

Challenge Division. Following the divide-and-conquer paradigm, the global problem P is divided into smaller subproblems , each corresponding to a functional expert module that will be included in the MoE framework. More accurately, the definition of each expert will include the following sub-phases: (i) acquisition of specific knowledge, (ii) definition of techniques and experiments, and (iii) results evaluation and analysis.

Local Image Interpretation and Event Detection. The challenge behind this subproblem focuses on identifying the most probable sources of anomalies. This challenge requires the acquisition of specific knowledge related to visual feature extraction, keypoint detection, and motion-based event discovery. The achieved solution will contribute to enhance the reliability of visual monitoring in complex production settings through the detection of critical areas where irregularities could appear and finally cause a faulty casting.

Global Environment Evaluation. The second challenge tries to characterize the broader industrial environment and provide and overall process overview. This involves an analysis of plant topology, global process dependencies and large scale events such as line breaks, maintenance operations or overpouring occurrences, among others. This task is an evaluation in a macroscopic level of events occurring during the manufacturing process, assuring that the contextual events are identified and interpreted.

Manufacturing Evaluator. This challenge tackles quantitative process evaluation and compliance verification. It is is grounded on the acquisition of knowledge related to industrial process parameters, tolerance limits, and production quality regulations. That information should be dependent on the current manufactured reference and must be updated and extracted in real time to be able to characterize the full process accurately.

Casting Pouring Stream Interpretation. This last challenge involves understanding the dynamic behaviour of molten metal flow during casting operations for unsupervised identification of anomalous events. This work requires the acquisition of expert knowledge, time synchronized video-sensor fusion, self supervised flow dynamics characterization, and categorization of defect topology associated with pouring streams.

- 4.

Functional Integration through the MoE Unification Metamodel. Once all

partial solutions have been computed by each expert, they will be aggregated through the MoE Unification Metamodel. In fact, this metamodel acts as the combination function

C (shown in Equation (

1)), which merges all outputs into a unified and interpretable representation of the industrial environment.

- 5.

Digital Twin Synchronization and Continuous Reasoning. The unified output of S as a structured JSON stream, together with the processed visual data, are transmitted to a digital twin, ensuring real-time synchronization between the physical plant and its virtual representation. This phase guarantees that the digital twin accurately reflects the state of the production line at any given moment. Thanks to this data distribution, the twin supports other tasks like predictive maintenance, root-cause analysis, and operational optimization.

- 6.

Evaluation of Global Results and Dissemination. After a full system integration, a final evaluation phase is carried out to encourage the global performance of the Multimodal Industrial Monitoring System with MoE. This final phase includes (i) a quantitative evaluation, (ii) qualitative evaluation, and (iii) results dissemination.

2.1. Data Acquisition and Capture Environment

The data used in this work was acquired on a commercial sand-casting production line. To preserve industrial confidentiality, site specific identifiers (for instance, plant name, exact location and proprietary process parameters) have been omitted and, in contrast, they were replaced by aggregated descriptors and representative statistics. Despite this fact, authors provide enough methodological details to enable the replication of the proposed approach.

Casting is one of the oldest and most fundamental manufacturing processes, in which molten metal is poured into a mold cavity that reproduces the desired shape of the final component [23]. Among different casting techniques,

green sand casting remains as one of the most widely used due to its cost effectiveness, reusability of materials, and suitability for mass production [24,25]. In this process, the mold is made of a mixture of sand, clay, and water (also known as

green sand). This mixture provides enough strength and plasticity to retain the shape during metal pouring and cooling. A modern variant of this method is the

vertical molding process, where the sand molds are created and aligned vertically in a continuous production line. This configuration, usually associated with DISA moulding machines, enables a high speed production (close to an average of 550 moulds per hour) with excellent dimensional accuracy and automation capabilities [26]. The vertical arrangement allows molds to cool down as they move continuously over a conveyor.

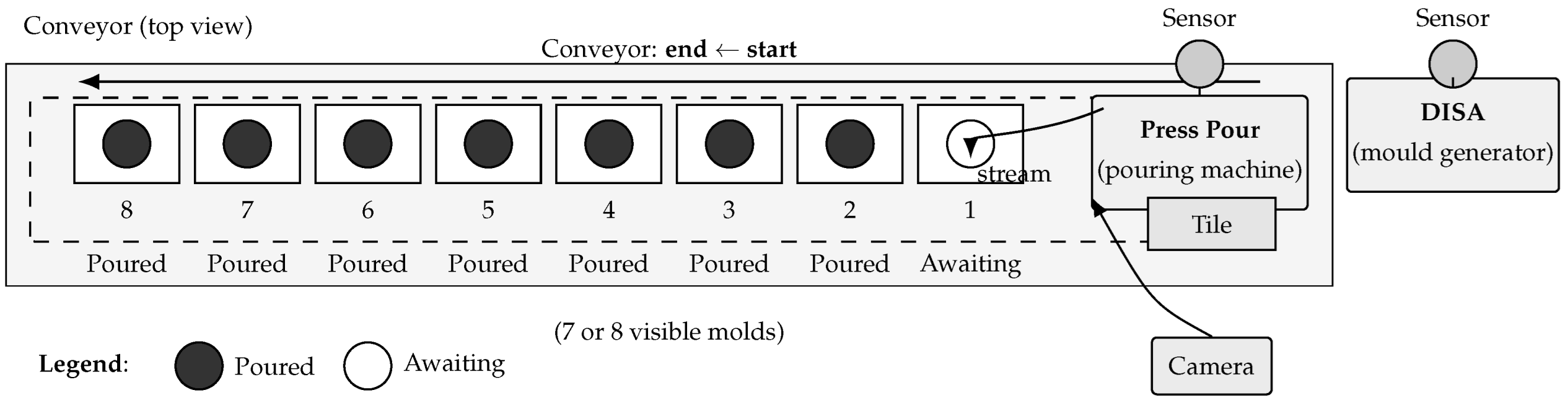

Figure 2 illustrates the schematic of the vertical sand-casting line used for this study.

There are several types of metals used to create castings. The material used in our case is nodular cast iron, also known as ductile iron. This metal is a ferrous alloy characterized by the spheroidal morphology of its graphite inclusions. This microstructural feature provides a superior combination of strength, toughness, and ductility compared to gray cast iron, making it ideal for automotive, hydraulic, and structural applications [27,28].

The molding line operation starts with the moulding machine. It compacts green sand around a pattern, and eject the created mould to the conveyor. The formed moulds advance along the mould train until they reach the pouring station, where a press-pour mechanism deposits the molten metal into the pouring cup. Then, gravity pulls down the metal which ends up filling the cavity inside the mould. During pouring, a protective cover know as tile, is placed over the next pouring cup to prevent unintended spillage of metal into downstream moulds. This reduces the risk of cross contamination between them. The quality of the pouring operation is critical as bad pouring procedures frequently cause defects. These may have different consequences for component performance [5,23,25,29], and can be categorized as follows:

Severe (structural) defects: phenomena that may cause catastrophic failure or render a part as unusable. For instance, this group includes carbide formation or hard brittle phases, compromising toughness and increasing the risk of premature fractures under load; cold shots, causing weak discontinuities due to incomplete coalescence of metal streams; shrinkage porosity, voids produced by volume contraction during the solidification stage, which cause leakage; and gross lack of material due to underfilling.

Functional defects: defects that affect the final functionality of a part or the subsequent finalization operations, such as machining. Some examples are (i) hard inclusions (for instance, slag, sand inclusions or oxides) and (ii) local carbide pockets that damage cutting tools or reduce the service life of a part. All those defects are usually formed by a turbulent pouring, inadequate filtration, or a poor gating/riser design.

Aesthetic defects: surface flaws or minor inclusions, such as light sand inclusions or small blow-holes, among others. These do not compromise mechanical performance but may require rework to solve them or cause the rejection of a component.

An automated monitoring system that combines video evidences of the pouring process with synchronized sensor signals provides a practical route for early detection of these faults, supporting root-cause analysis and corrective actions on the line [13,15]. In order to handle this kind of monitoring, the video camera is positioned to capture the pouring event, the filling level of each mould, and any special events like visible overflow or spillage. Simultaneously, process sensors (e.g., temperature, flow/weight, among others) and the material flow behaviour are synchronized with the video to try to identify any problem along the pouring area.

Figure 3 illustrates how this pouring monitoring system is deployed in a real-life green sand foundry plant. The resulting multimodal dataset is used throughout this paper, and is the basis for model training, anomaly detection and the real-time updates sent to the digital twin.

The data used was captured at the selected foundry by a centralized industrial DataLogger-based acquisition system that was configured to communicate with the plant Programmable Logic Controllers (PLCs), map their memory zones and register the relevant signal tags (i.e., digital I/O, analog channels, counters and event markers). Moreover, network cameras were integrated into the same acquisition topology via Real Time Stream Protocol (RSTP) interfaces, and both camera streams and sensor traces were timestamped and recorded into a centralized storage with metadata descriptors. Unfortunately, recorded data does not index pouring events nor mould identification, hence, video frame ranges, synchronized sensor windows and derived descriptors (e.g., filling time, peak flow, temperature profile) cannot be directly matched with a real casting event evidence registered in the Manufacturing Execution System (MES) database.

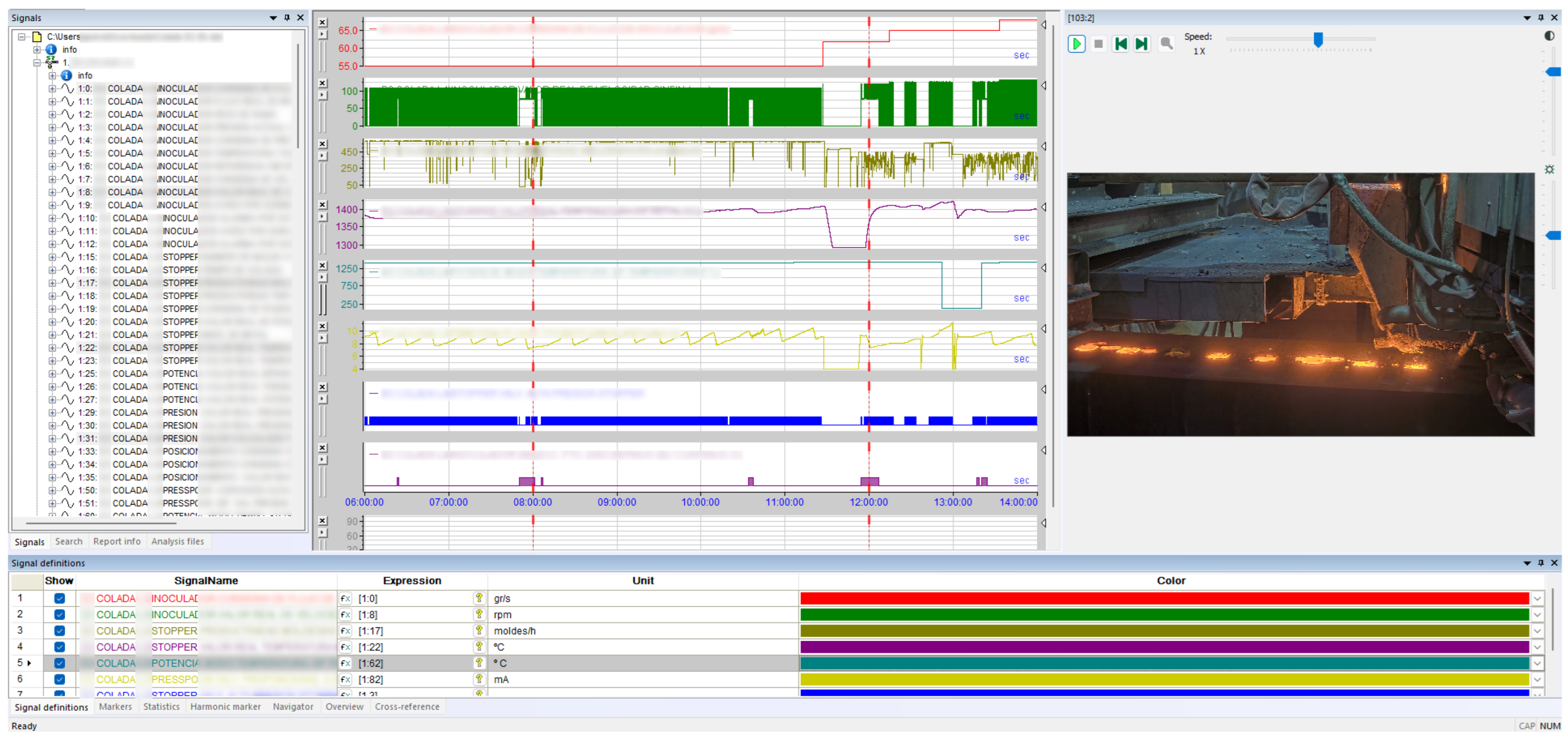

Figure 4.

Screenshot of the centralized DataLogger capture software used in this study. The panel shows a synchronized video frame of the pouring area alongside three representative process signals that correspond to typical pouring telemetry (e.g., melt temperature, stopper mechanism, and hydraulic/pressure channel). All streams are time-aligned and indexed to the active mould identifier. Note that all signal labels are intentionally obfuscated for confidentiality.

Figure 4.

Screenshot of the centralized DataLogger capture software used in this study. The panel shows a synchronized video frame of the pouring area alongside three representative process signals that correspond to typical pouring telemetry (e.g., melt temperature, stopper mechanism, and hydraulic/pressure channel). All streams are time-aligned and indexed to the active mould identifier. Note that all signal labels are intentionally obfuscated for confidentiality.

Next, we provide a detailed explanation of the contents of the dataset used in this study, including data types, acquisition settings, and synchronization strategy to ensure full methodological reproducibility. Nevertheless, to preserve industrial confidentiality, exact counts and specific parameters are omitted and replaced by aggregated descriptors and representative statistics.

Video Recordings

The video dataset comprises approximately 12 hours recorded across 2 sessions under normal operating conditions (with 8 and 4 hours respectively). All sequences were acquired at a resolution of

pixels and 20 fps, encoded with the H.264/AVC standard at bitrates ranging between 3.0 and 3.2 Mbps. No audio tracks were included in the recordings. Both videos show a stable keyframe period of 1.5 s, ensuring temporal alignment with sensor data. In both recordings, the camera placement corresponds to the field of view illustrated in

Figure 3, capturing the pouring stream, the tile/cup area, and the first produced moulds in the beginning area of the conveyor (all detailed in

Figure 2).

The captured footage represents the continuous operation of the vertical green-sand moulding line producing nodular iron castings of different references and cup geometries. Recordings display typical working conditions of the industrial casting line, including changes in lighting due to natural illumination and the radiant emission of the molten metal, turbulent jets in a pouring stream, sporadic metal splashing, overflows, and short production stops during reference changes or mould rejection. The lighting control of the camera is automatic and maintains overall visual consistency despite diurnal variations. Besides pouring events occurring approximately every 5 seconds, these videos also recorded short pauses such as maintenance interventions or reference changes, among others. This special events are ∼2% of the total time. In addition, the dataset also includes both regular and irregular pouring sequences, covering normal variability of the foundry day-by-day work. Although no event tags are included with the data, we were able to identify the special using CV and the synchronized records from the process sensors. Furthermore, reference changes are visually recognizable due to a series of empty moulds and a manual mark on the conveyor. With this, we have identified 14 such events, confirming the visual stability of the setup and the absence of camera shake or abrupt illumination changes. All videos are stored in raw format without stabilization or image corrections to preserve authentic process dynamics and facilitate a further reproducible multimodal analysis.

Crucially, the precise synchronization of video and sensor data enables robust multimodal analysis. By correlating visual features, like pouring stream shape, with process variables, such as flow rate, this alignment provides the foundation for effective digital twins and data-driven anomaly detection, supporting predictive quality control in real production environments.

Sensor Recordings

Parallel to video acquisition, a complete set of process and control signals was recorded from the plant. These signals were sampled at an approximate rate of 2.5 Hz and they are synchronized with the video frames using shared timestamps. The gathered dataset contains 155 distinct process variables, reflecting both continuous and discrete values across the foundry line. Although the precise sensor identifiers are hidden for confidentiality reasons, the signals can be grouped into several functional subsystems that are representative of a vertical green-sand casting process.

Table 1 describes the approximate distribution of signals among these subsystems and provides representative examples of each group. These can be summarized into four groups:

Moulding area, which includes critical variables for maintaining a consistent mould density and ensuring dimensional integrity before pouring.

Pouring process, including variables related to the press-pour unit and supervising molten metal conditions. These parameters directly determine casting quality and correlate strongly with the aforementioned casting defects.

Conveyor and cooling line, which monitor mould evolution after pouring.

Auxiliary and safety systems signals, which provide contextual information about line stops and maintenance events.

Quality and traceability signals identifies reference changes, rejected moulds, or operator interventions, facilitating synchronization between the physical process and the third-party systems.

2.2. Global Context Evaluation

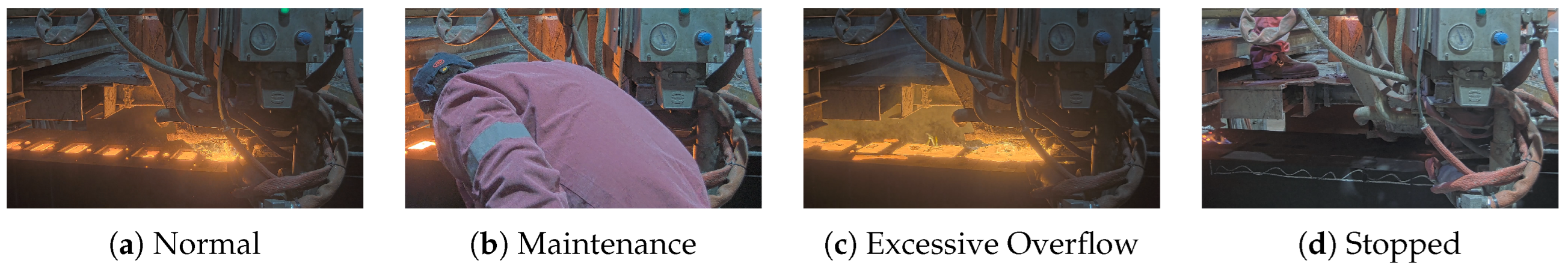

Then, we focus on understanding the global production context in which these operations occur. This stage handles the classification of the overall operational state of the foundry line from visual information. Its specific goal is to distinguish whether the process is running under normal conditions or affected by contextual disturbances such as maintenance tasks (which might create operator occlusions), over-pouring, or technical stops. This enables both real-time alerts and the exclusion of anomalous sequences from subsequent analytical modules. In fact, this classification enables filtering non-productive intervals and contextual anomalies that could otherwise distort quality assessment or multimodal fusion with process signals. We have defined 4 contextual states, which represent the production workflow itself (see

Figure 5):

- 1.

Normal operation, corresponding to regular casting cycles where pouring, mould transport and cooling proceed without interference.

- 2.

Maintenance, when operators enter the scene or perform manual adjustments, often producing occlusions that compromise visual analysis.

- 3.

Overflow, describing situations where molten metal splashes outside the mould cup, typically caused by misalignment or excessive pouring rate.

- 4.

Stop line, representing planned or unplanned halts such as pattern changes and safety stops, among others. Usually, moulds are manually marked as rejected with chalk.

To automate this contextual classification, several image classification architectures were considered. Traditional convolutional networks such as ResNet-50 [30] and EfficientNet [31] have been the foundation of manufacturing vision systems due to their accuracy and fine tuning capabilities. Nevertheless, more recent transformer based architectures such as ViT [32] and ConvNeXt [33] introduce improved global attention mechanisms, achieving outstanding accuracy in large scale datasets but with significant computational demands. Hence, they are less suitable for real-time inspection problems. On the contrary, the YOLOv11-class [34] model offers an optimal balance between precision and latency, optimized for real-time industrial deployment. It also ensures architectural compatibility with the area detector and classifier used in this work (see Sections 2.3.2 and 2.4.1), simplifying deployment and reducing hardware requirements.

The dataset employed for training comprised approximately 262,600 images extracted from the 4-hour video recording. Each frame was manually labelled by a foundry expert into one of four categories: normal operation (212,000 samples), overflow (45,000), maintenance (2,500), and stop line (2,100). The data were split into training, validation, and test sets with a 60/20/20 ratio. We fine tuned the pretrained YOLOv11 backbone, employing the standard Ultralytics augmentation strategies (for instance, including random scaling and cropping, horizontal flipping, HSV adjustments, gaussian blur and mosaic), which have shown to significantly improve robustness under varying illumination (e.g., molten metal glare) and occlusions commonly found in casting environments [34].

In summary, this module completes the visual perception pipeline by attaching a contextual state to every detected pouring event. As a result, only segments corresponding to normal operation are forwarded to quality evaluation and MoE fusion, while frames affected by maintenance tasks, line stoppages, overflows, or camera occlusions are automatically flagged and excluded.

2.3. Local Image Interpretation and Event Detection

This section addresses the local analysis of the video stream, where visual information is structured into meaningful process entities. It comprises 2 complementary stages: first, the Pouring Event Identification, which temporally segments the continuous sequence into discrete casting cycles; and second, the Detection of AoIs, which spatially isolates the relevant regions within each event for subsequent interpretation. Together, these stages transform raw video data into semantically organized information that serves as the axes for a high level modelling and process understanding.

2.3.1. Pouring Event Identification

The final objective of this stage is to enable the automatic interpretation of manufacturing visual content through event delimitation within the pouring process. This stage aims to temporally segment the continuous video sequence into well-defined pouring cycles that correspond to real process operations. We denote these segments as

pouring events. From this stage, every frame is processed not as an isolated observation but as part of a dynamic process where recurrent operations occur under industrial constraints, since each production cycle can be represented as a repetitive task of mould generation, pouring, transport, and cooling. Formally, we define the set of pouring events as

, where each event

represents a complete pouring operation within the production line. Each event

is delimited in time by the lowering and subsequent rise of the pouring unit, specifically,

, with

the instant when the pouring unit begins its descent and

when the filled mould leaves the pouring zone or the next empty mould arrives. During this interval, we observe a multimodal sequence of variables:

where

denotes the video stream,

the stream shape descriptors,

the environment characterization indicators,

the process variables such as pressure, temperature, among others, and

the quality measurements related to the current production reference.

In theory, the acquired signals can provide direct temporal markers. Nevertheless, in practice, industrial data acquisition often suffers from synchronization drift, missing packets, or timing mismatches between video and process signals [39]. Therefore, a double check provided by a artificial vision-based approach was adopted to autonomously identify event boundaries by analysing image motion and frame similarity.

For this purpose, several algorithms were considered to determine an optimal trade-off between accuracy, robustness, and computational efficiency. One common approach is dense Optical Flow (OF), such as Recurrent All-Pairs Field Transforms (RAFT) [40], which computes pixel-wise motion vectors across consecutive frames. OF has become a benchmark for high precision motion estimation in industrial and robotic inspection tasks, as it offers a fine grained motion tracking. However, this approach exhibits high computational cost and large memory footprint, limiting real time deployments on full HD sequences. Although this fact is discussed in [41] and authors provide new optimization possibilities, we decided to discard it.

Alternatively, simple frame or histogram difference methods were also tested as baseline strategies for motion quantification, given their minimal computational load. Notwithstanding, as noted by Karbalaie et al. [42], such techniques are highly sensitive to the molten metal, due to the produced glow and automatic exposure corrections by cameras, which may trigger false positives.

Finally, we consider the Structural Similarity Index Measure (SSIM), which has been validated as a perceptual metric for detecting state transitions in industrial video monitoring [43,44]. SSIM quantifies luminance, contrast, and structural coherence between successive frames, enabling detection molds and metal movements. Recent studies have validated its robustness against illumination fluctuations and flicker noise in real production environments [45]. Its low computational complexity makes it particularly well suited for embedded industrial monitoring systems.

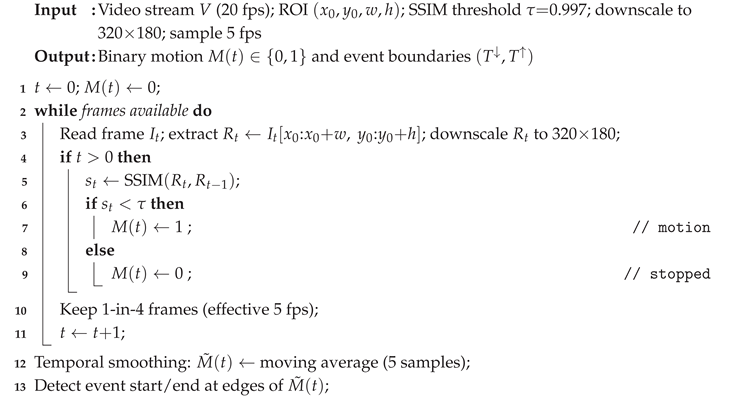

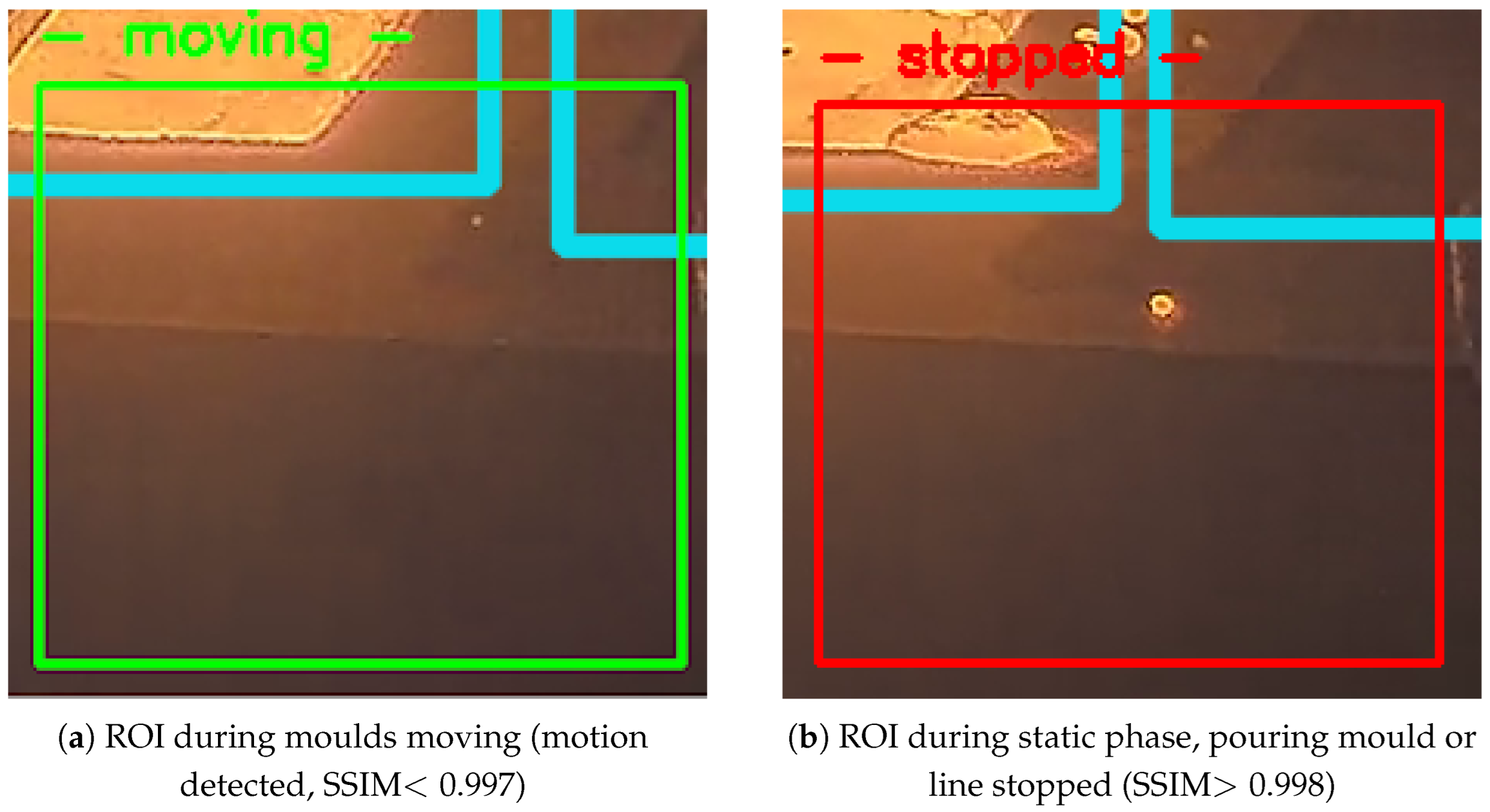

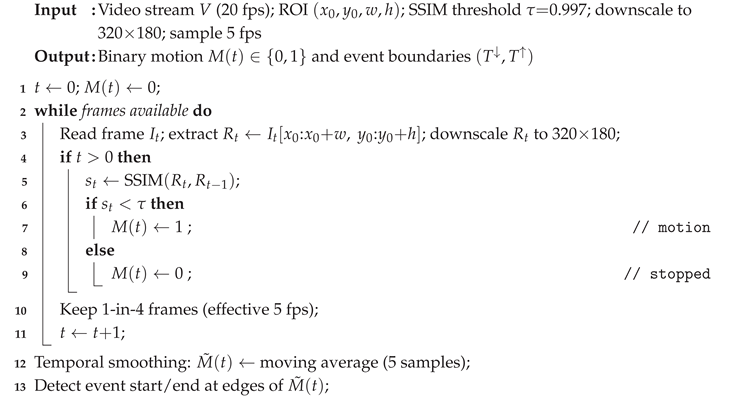

To further optimize performance, the analysis was restricted to a dynamically defined Region of Interest (ROI) enclosing the pouring stream and adjacent mould cup, as illustrated in

Figure 6. In addition, the pouring stream region is detected dynamically through an adaptive ROI strategy. This allows the system to automatically adjust to camera viewpoint changes, ensuring that the pouring zone and the first poured mould exiting the scene remain consistently localised even under shifts in perspective or framing. Hence, the performace is improved when reducing redundant pixel processing and only maintaining focus on semantically relevant areas [39,45]. In our implementation, the ROI position is automatically updated based on the previously detected stream centroid, creating our own self adaptive mechanism that maintains consistent spatial tracking.

Algorithm 1 summarizes the final adopted implementation using SSIM motion detector. The algorithm processes consecutive frames within a small ROI enclosing the pouring zone and computes their structural similarity to quantify inter frame changes. Motion is declared whenever the similarity drops below a threshold

, which effectively distinguishes between static and dynamic phases. Temporal smoothing, carried out as a 5 frame moving average, reduces spurious transitions caused by exposure fluctuations or molten metal glow. Finally, to ensure lightweight processing, we downscale the ROI region down to

px. This configuration provides a robust and computationally efficient solution that runs in real time while preserving accurate event delimitation. The resulting binary signal

marks the start and end of each pouring cycle and serves as the temporal reference for subsequent analysis and synchronization with sensor data.

|

Algorithm 1: SSIM-based motion detection within ROI (casting event delimitation) |

|

2.3.2. Detection of Areas of Interest

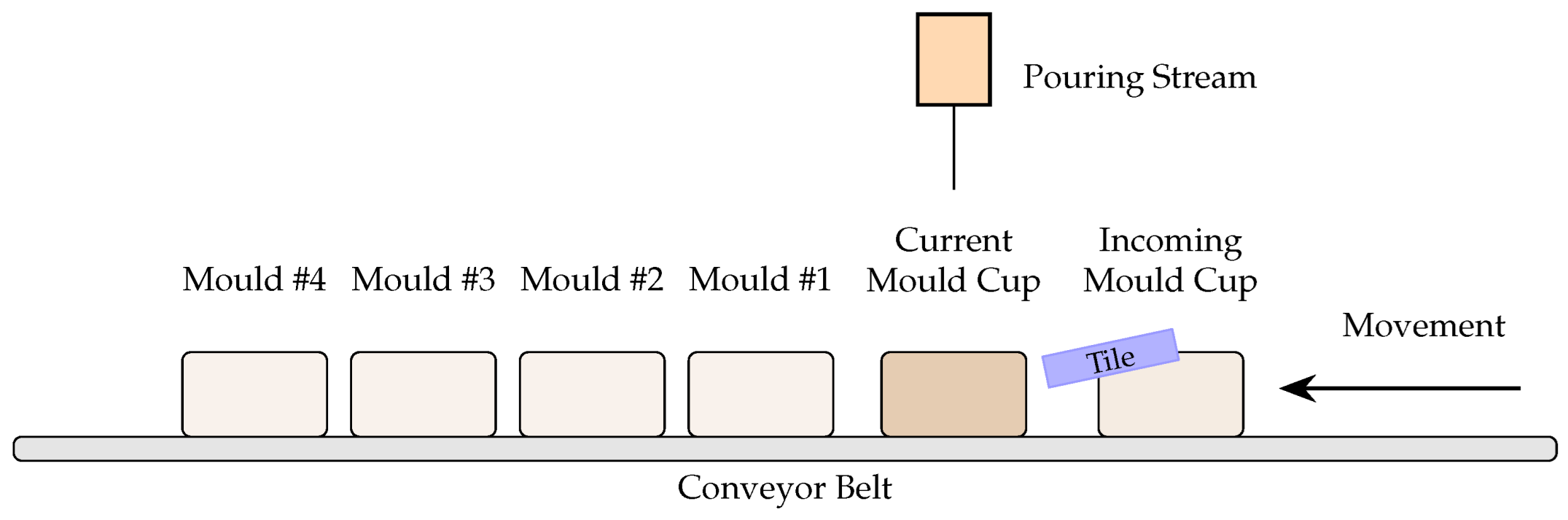

Once the pouring events are detected, the next step is concerned with the interpretation of the visual content within each event. Despite the high spatial richness of each frame, large regions of the image correspond to static background or machine structures with little relevance to measure the quality of the pouring process. Therefore, this analysis must be focused on specific

Areas of Interest, which correspond to the relevant regions where molten metal interacts with the mould and auxiliary devices during the pouring stage (see

Figure 7), such as:

Mould cup(s): visible holes that are positioned in front of the camera on arrival to the pouring area. They may appear empty, partially, or fully filled. Monitoring their fill level provides contextual information on pouring accuracy and metal delivery consistency.

Pouring stream: the continuous jet of molten metal descending from the pouring unit. Its shape, thickness, and turbulence reflect process stability and are strongly correlated with casting defects.

Tile (splash guard): a protective device that prevents splashes or secondary jets from contaminating the next mould.

Incoming mould cup: the next cup that becomes visible when the conveyor advances. Occasionally, early contact with residual metal or splashes may occur, and its early detection allows triggering predictive alarms.

To automatically identify these areas, several object detection architectures were considered, spanning both two-stage and one-stage paradigms. Two-stage models, such as Faster R-CNN [46], first generate region proposals and then classify them, achieving excellent accuracy but at high computational cost. In contrast, one-stage models like SSD [47] or the YOLO family [48] predict bounding boxes directly over feature maps, delivering competitive precision at significantly lower latency, making them ideal for industrial applications with real time constraints. In particular, the YOLO family consistently provides the best balance between inference speed and detection accuracy [47,48], outperforming SSD and achieving significantly lower latency than Faster R-CNN. Given the real time operational requirements of our foundry line, YOLOv11 was selected as the detection backbone. For the initial proof-of-concept, we adopted the lightweight YOLOv11-nano variant to evaluate feasibility and runtime, leaving open the possibility of upscaling to larger versions for accuracy improvements.

The model was trained using a dataset of approximately

258,000 frames extracted from a 4-hour production video. Due to manual annotation of such volume is prohibitive, we employed a two-stage semi-supervised strategy similar to the human-in-the-loop approaches described by Lee et al. [51] and Liu et al. [52]. The first phase involved

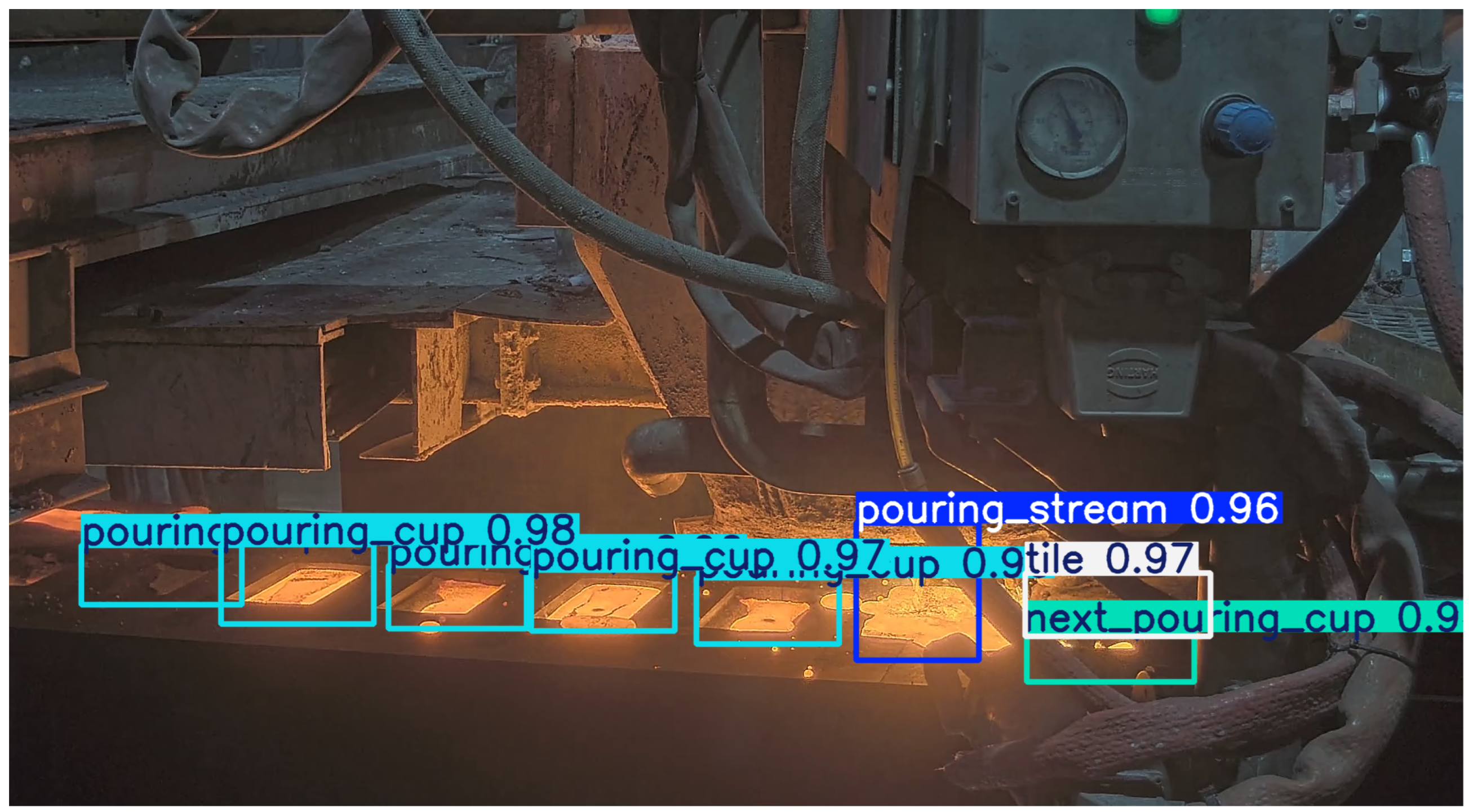

17,000 manually labelled frames covering diverse conditions (illumination, references, turbulence levels). The second phase used the preliminary detector trained on those to automatically label the remaining frames, which were then manually reviewed and corrected to create the final training corpus. This iterative refinement increased annotation efficiency and ensured accurate bounding boxes. In summary, the combination of the pouring event and area-of-interest detection yields a highly structured video representation aligned with the actual foundry workflow. This pre-processing stage eliminates irrelevant visual noise, focuses computation on semantically meaningful areas, and supplies clean and time-aligned visual features to be fed the subsequent models responsible for pouring quality assessment and the further integration with the MoE framework. An example of detected AoIs can be seen in

Figure 8.

2.4. Areas of Interest Visual Classification

Next we aim to perform local classification within each of the detected AoIs to characterize their visual state in the manufacturing process. This step focuses on the static regions extracted from the YOLOv11 detections (see

Section 2.3.2), specifically, the mould cups and the next incoming cup. It was excluded the pouring stream, which requires a temporal analysis and is described separately in

Section 3.4.

2.4.1. Mould Cup Filling State Classification

Each detected mould cup is analysed to determine its filling level condition. The classes considered are

empty,

medium,

full, and

overpoured, illustrated in

Figure 9. These states represent, respectively: (i) a mould cup that has not yet received metal, (ii) one that has been partially filled, (iii) a correctly filled cup, and (iv) an excessive pouring resulting in overflow or splashing.

This classification step is essential because the mould path is exposed to multiple sources of variability that may compromise casting quality. For instance, mechanical vibrations of the conveyor, mould misalignment, sand defects, or microleakages along the pouring channel. This issues can cause deviations from the expected filling level. Therefore, tracking the state of every mould cup across the detected pouring event allows early detection of anomalous behaviour thanks to the continuous identification of potential metal losses along the line, and the verification that the pouring stream reaches the mould under the required process conditions.

To this end, we employ the same backbone and hyperparameter configuration described in

Section 2.2, again, YOLOv11 classification branch was employed for this task. The data consisted of approximately 184,041 annotated mould cup instances, divided according to the following distribution: 59,414 empty, 50,522 medium, 49,877 full, and 24,228 overpoured. The data were randomly partitioned into training (60%), validation (20%), and testing (20%) subsets. In the same way as in previous classification problems, augmentation strategies such as brightness jittering, small angle rotation, and contrast scaling were applied to improve generalisation under variable illumination and exposure conditions typical of the foundry environment.

Overall, the mould cup classifier extends the AoI level perception layer by offering a fine grained interpretation of each mould’s filling state. Together with the incoming cup detector and the pouring stream analyser, this component contributes to a unified and temporally aligned understanding of the pouring operation, later integrated by the MoE framework.

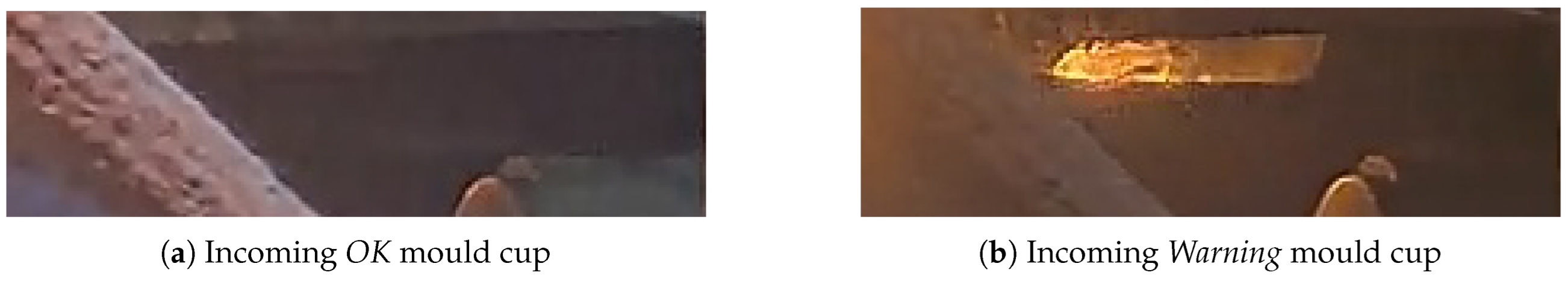

2.4.2. Incoming Mould Cup Classification

In addition to the active pouring zone, the next incoming mould cup is also analysed to anticipate potential quality issues before pouring begins. Detecting metal residues inside an incoming mould cup is critical because even small droplets of prematurely solidified metal can generate severe casting defects. Residual metal may obstruct proper flow during the next pouring, promote cold shut defects when fresh metal meets already solid fragments, or induce local porosity by disrupting thermal gradients inside the mould. Moreover, splashes accumulated during previous cycles may indicate instabilities in the pouring stream or mould alignment issues. For these reasons, the incoming mould cup must be monitored to ensure that its condition does not compromise the quality of the next cast piece in the production sequence.

Two states are considered, as illustrated in

Figure 10: (i)

OK, when the mould cup is clean and ready for filling, and (ii)

Warning, when metal residues or splashes are visually detected inside it. Early identification of such conditions enables predictive alarms and improves downstream traceability of potentially defective moulds. This classifier allows to reliably distinguish clean cups from those containing metal residues even under strong brightness variations or partial occlusions.

The dataset used for this task included 280,363 cropped AoI samples, where 93% represent

OK samples, and 7% are

Warning ones. Each class was divided into training, validation, and test subsets with a 60/20/20 ratio. Training was performed by fine tuning ImageNet-pretrained YOLOv11 weights, applying similar augmentation strategies as in

Section 2.4.1 to ensure robustness as we applied for the pouring cups.

Altogether, the incoming cup classifier acts as an anticipatory safeguard within the pouring pipeline. By detecting hazardous pre-filling conditions several seconds before the molten metal reaches the mould, it enables the system to flag potential risks earlier than any downstream inspection stage could. This early warning capability not only reduces scrap generation but also provides the MoE with critical contextual evidence that enriches the final event evaluation and strengthens decision reliability across the entire production chain.

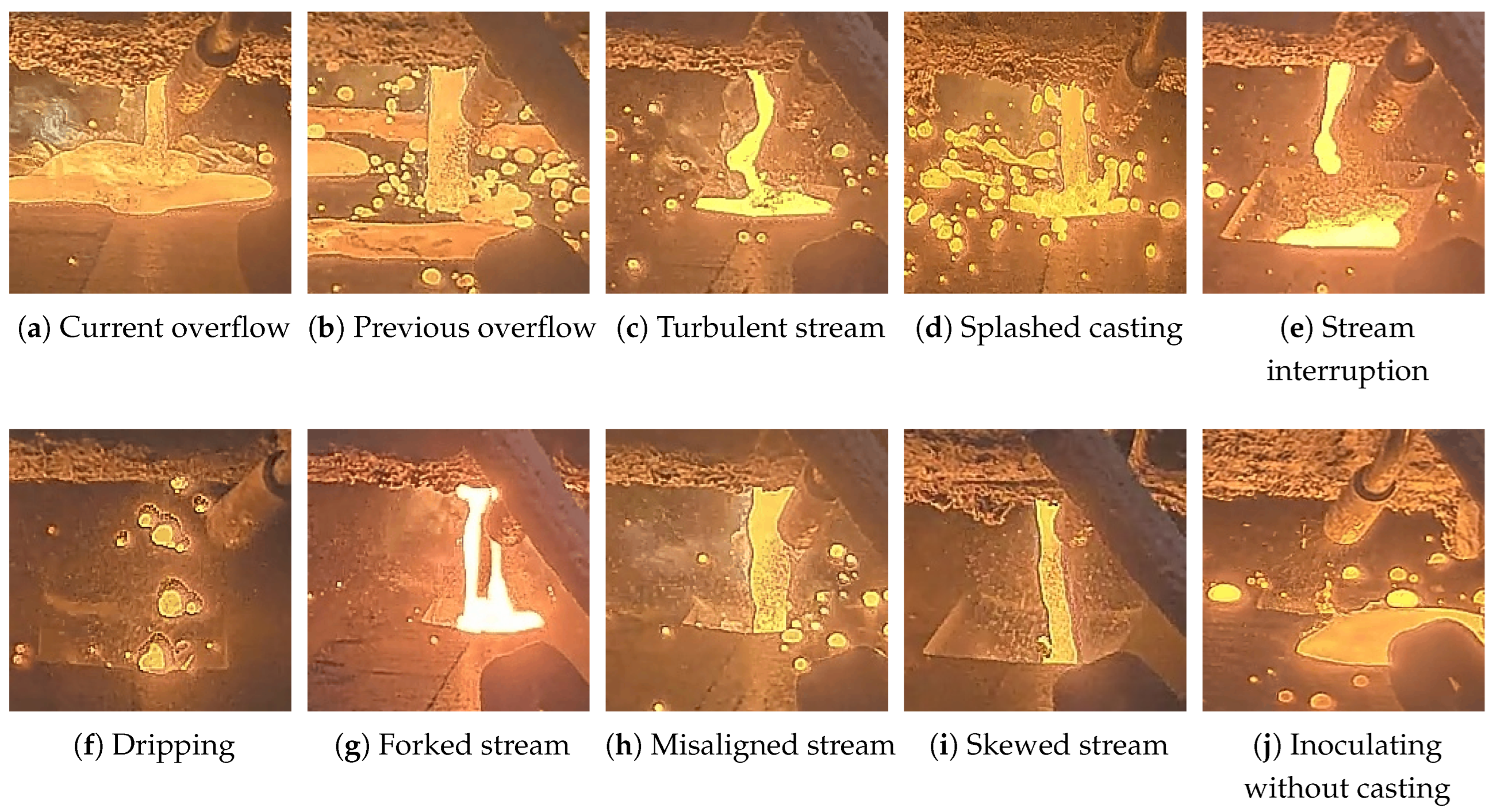

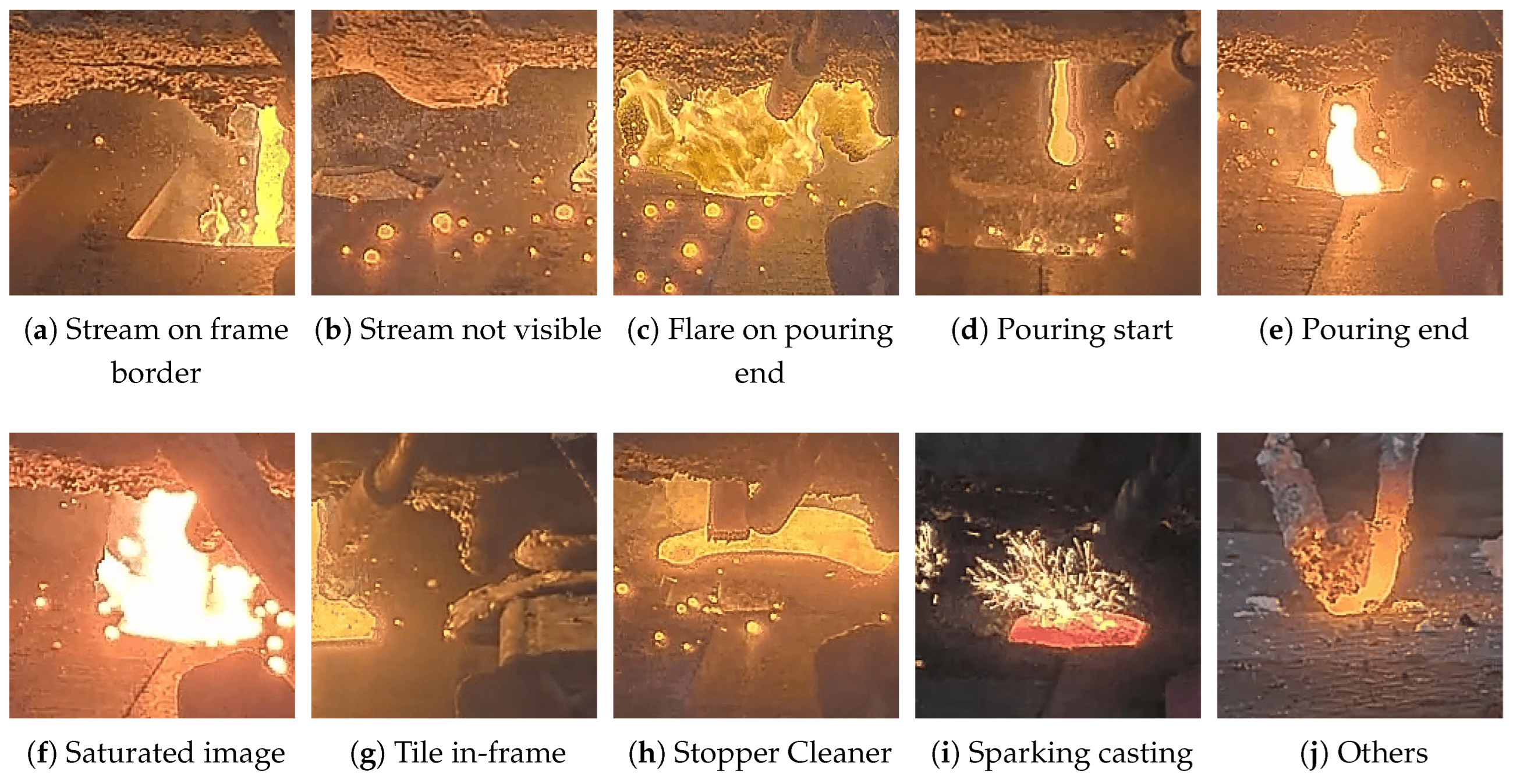

2.5. Pouring Stream Interpretation

In this section we focus on characterizing the pouring stream. Beyond common issues like overflow, maintenance, or sudden drops, defective streams in industrial settings can take on many subtle forms, for example forked, unaligned, crooked, turbulent, or interrupted flows. These variations are visually distinct but often hard to categorize using traditional methods. Complicating matters further, industrial environments typically produce very few defective samples, making it difficult to even confirm their presence in the data. This in turn, requires an extensive annotation process going through hundreds or thousands of normal samples until the anomalous ones are found. As a result, we approach this problem from a different perspective, more akin to anomaly or outlier detection, hypothesizing that this perspective may help uncover not only known defects but also unexpected or rare anomalies.

Our approach can be summarized into two steps. First, we employ an autoencoder-like architecture for the characterization of jet stream dynamics. In particular, by solving a simple self-supervised reconstruction task, the network learns to model the inherent behaviour and dynamics of molten metal, producing multimodal representations of the video and sensory information. Then, we drop the decoder and extract features for each sequence at the output of the encoder. These are fed into a clustering algorithm to properly categorize them into different semantically coherent classes, which we can use to analyze the different types of normal and anomalous samples.

2.5.1. Background

Most anomaly detection techniques rely on having a clean set of normal samples [55,56]. For example, reconstruction-based methods [57,58] use autoencoders trained on normal data to flag anomalies via reconstruction error, while one-class classification [59,60] and normalizing flow [61,62] approaches also depend on clear separation between normal and anomalous data to learn discernable distributions. However, when the dataset is noisy or contaminated, these methods often struggle [63,64]. Some recent works address this by explicitly filtering out anomalies (like in [65,66,67]) or mitigating their impact during training (as it was describen in [65,68,69]). However, these methods either disregard information provided by the anomalous samples, or require a priori knowledge of the contamination degree. Furthermore, many of these works have only been tested on MNIST [70], CIFAR10 [71], or COCO [72] where one class is used as normal samples, and other classes are used as anomalies. While some have been evaluated on MVTec [73], the application of these techniques may not directly translate into our industrial video scenario.

Traditional outlier detection [74] often relies on unsupervised techniques like feature extraction followed by clustering, which are especially useful when labeled data is scarce. This technique has also been used for anomaly detection [75,76,77], as both terms are often used interchangeably. In visual data, feature extraction can be done using supervised models pre-trained on large datasets, but these can be biased towards supervised tasks, hence they may miss the fine-grained, low-level details needed for industrial contexts. Unsupervised methods trained on natural generic images also tend to struggle when applied to specialized domains [78]. We believe that a more promising approach is to learn features directly from the target data, most commonly done by leveraging convolutional autoencoders (e.g., [79,80]), though in our case, we explore transformer-based architectures, as they have been shown to better handle non-local interactions in the data [81].

Regarding feature extraction, supervised approaches are often impractical in our setting. Annotating casting videos requires expert knowledge and is prohibitively expensive, while models trained on labeled data tend to learn task-specific features that do not generalize as well [82]. Similarly, relying on pre-trained models, even those trained with self-supervised objectives, faces the same limitation: most existing models are trained on natural images or videos [83], which differ significantly from our industrial setting. This domain gap makes direct transfer ineffective for capturing the fine-grained patterns relevant to molten metal jet streams. Alternative self-supervised strategies, such as contrastive or joint-embedding methods, excel in categorical tasks, specially in object-centric datasets [84] but struggle in other situations [85,86]. In contrast, generative approaches have shown superior performance in tasks requiring detailed spatial understanding, such as object detection or segmentation [87]. Given that our goal involves characterizing fluid dynamics and texture-level variations, we require a method that preserves high-frequency details rather than focusing solely on global semantics [88].

To address these challenges, we adopt VideoMAE (Video Masked Autoencoder) [18], a self-supervised framework designed for video representation learning. VideoMAE reconstructs masked spatio-temporal patches, encouraging the model to capture fine-grained texture and motion cues essential for understanding jet stream behavior. Its transformer-based architecture effectively models long-range temporal dependencies [88] and supports seamless multi-modal integration, which is advantageous for incorporating additional sensory data [89,90]. Furthermore, its self-supervised nature allows us to exploit large volumes of unlabeled casting videos, learning domain-specific representations without costly annotation. These properties make VideoMAE particularly well-suited for our application, where meaningful spatio-temporal features are critical for downstream clustering and anomaly detection.

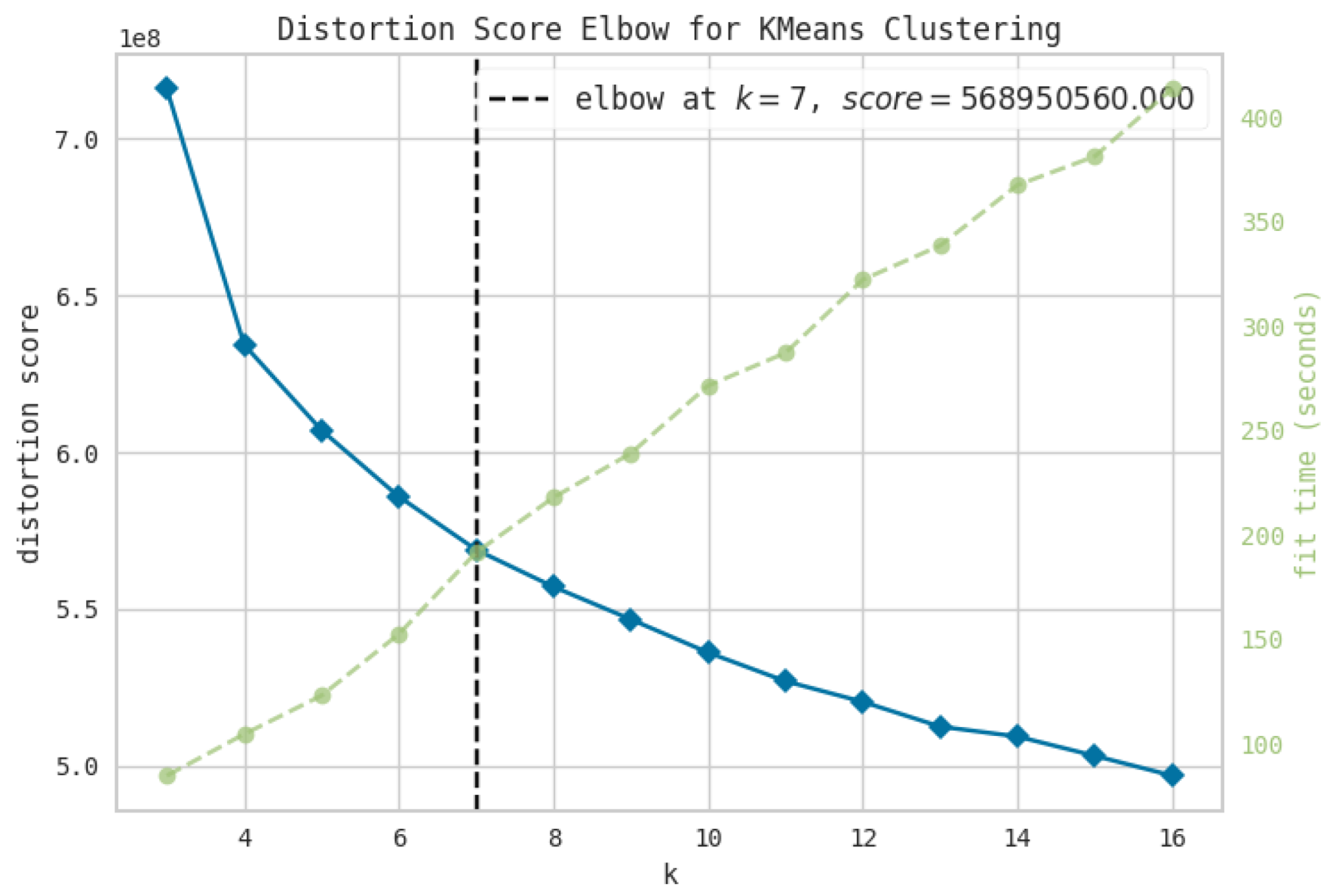

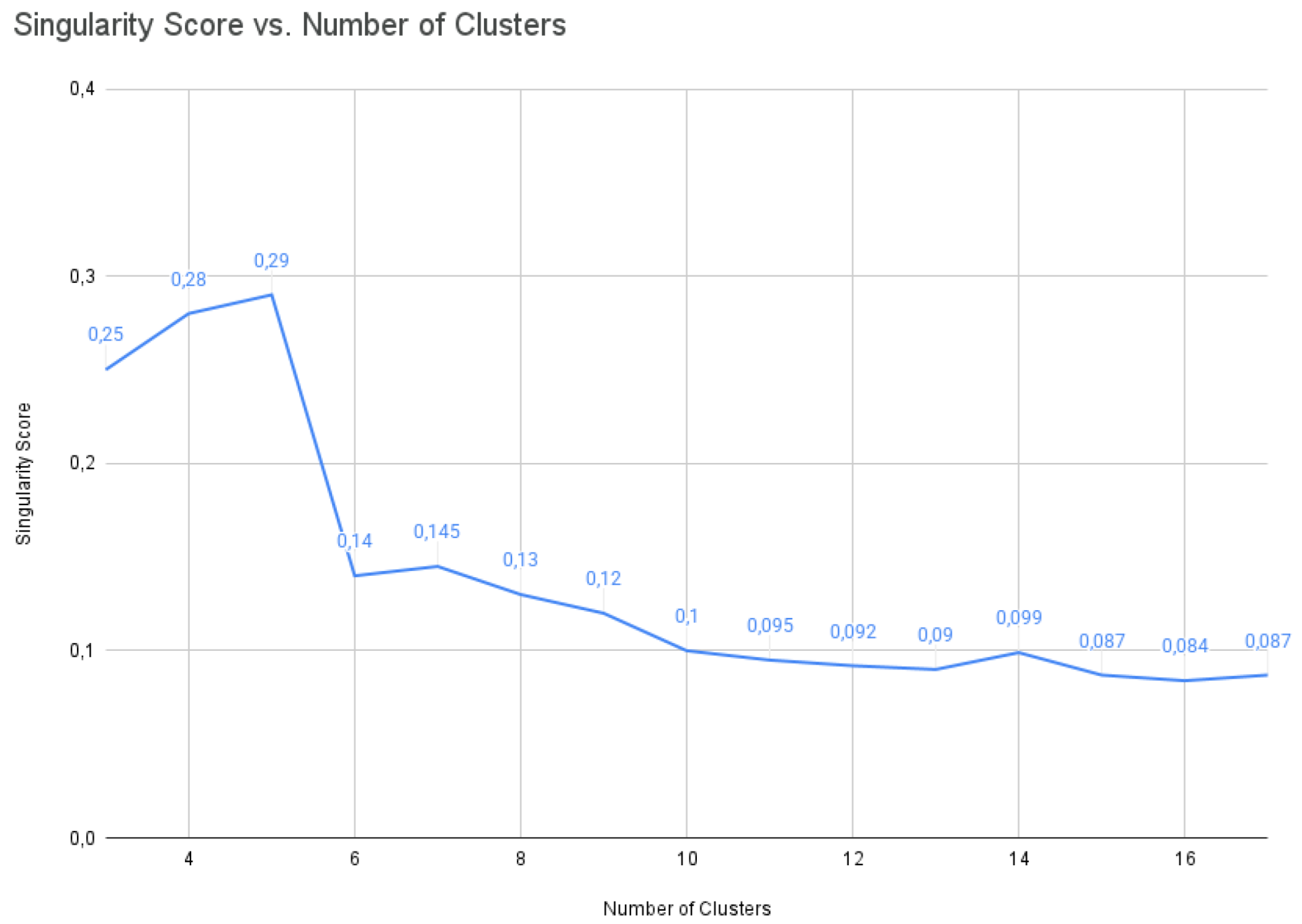

Once features are extracted,

clustering is typically used to separate normal from anomalous samples. K-means is widely used but presents several limitations. It forces all data into clusters, assumes clusters of similar size, and requires the number of clusters to be specified in advance (see also

Section 3.4). Alternatives like CBLOF [91] and LDCOF [92] offer more flexibility but are harder to tune due to excessive hyperparameters. Density-based methods such as DBSCAN [19], Density Peak Clustering [93], HDBSCAN [94], and OPTICS [95] are better suited for identifying outliers directly. Among these, DBSCAN stands out for its robustness and simplicity, and although it has been used extensively in prior work (e.g., [96,97,98]), we have not seen it applied to video data. We believe one reason for this gap in the literature may be related to the

curse of dimensionality (see [99] for an intuitive definition) affecting common distance metrics used in clustering algorithms [100]. Although this has been challenged in other works [101], and we hypothesize that employing a neural network to embed the visual data may help alleviate this problem [102,103].

To the best of our knowledge, there are very few previous works with similarities to our proposal. Current approaches to clustering-based video anomaly detection are generally limited to dual-stream autoencoders paired with parametric clustering methods, like K-means or Spectral, that require a fixed number of clusters [104,105]. While isolated attempts have been made to incorporate spatiotemporal features via 3D convolutions [106] or distance-based outlier removal [105], these methods lack density-based adaptability and do not fully exploit modern transformer architectures. We propose a novel framework that leverages multi-modal video transformers and utilizes DBSCAN to enable density-based outlier detection.

2.5.2. Data Preparation

The position of the pouring stream varies along the moulding sessions. For this reason, for each pouring event defined in

Section 2.3.1, we leveraged the YOLOv11 detector trained in

Section 2.3.2 to extract RoIs of the pouring stream from each frame. To ensure temporal consistency and avoid false detections, we employ a simple algorithm to combine the AoI detected on each individual frame from the pouring event. For each new detection, a decision is made to keep it only if: a) the detection confidence is above a threshold (in practice 0.5), and b) the IoU with the accumulated box (the union of all previously detected boxes within the current pouring event) is above a given threshold (in practice 0.6). When the detector fails to detect a pouring stream for more than a margin of 10 frames, we consider the pouring event is over. As a final cleanup step, we compute the average detected box and remove any outlier box (those for which IoU with the average is below a threshold). The final AoI is computed as the union of the remaining boxes.

2.5.3. Methodology

VideoMAE

In our implementation, VideoMAE [18] processes sequences of 16 frames extracted from the YOLO-detected stream regions, producing rich spatiotemporal embeddings that encode both the spatial characteristics of the stream shape and its temporal evolution. The encoder architecture transforms these video clips into high-dimensional feature vectors that capture subtle variations in stream behavior, such as flow stability, turbulence patterns, and directional changes. These embeddings serve as the foundation for our clustering analysis, where similar behaviors are grouped together, enabling the identification of distinct operational modes and the detection of anomalous patterns that deviate from normal casting conditions.

The temporal modeling capability of VideoMAE is particularly valuable for stream analysis, as casting anomalies often manifest as temporal irregularities rather than instantaneous defects. By encoding sequences rather than individual frames, the model can distinguish between normal flow variations and genuine anomalies, such as bifurcations or unstable flow patterns that develop over time. This temporal awareness, combined with the model’s ability to learn from unlabeled data, makes VideoMAE an ideal choice for developing robust, interpretable characterizations of industrial streams that can support both real-time monitoring and predictive maintenance applications.

In a nutshell, VideoMAE masks part of the input video and reconstructs it using an encoder–decoder architecture. The encoder learns a compact representation of the input, while the decoder reconstructs the masked tokens from that representation. The video input

is initially partitioned into non-overlapping spatio-temporal patches. Each patch is passed through a lightweight convolutional network (CNN) to produce an embedding, yielding

, where

denotes the number of resulting patches (hereafter denoted as

tokens) and

their feature dimension. A portion of these tokens is then randomly masked, and only the unmasked tokens are provided to the transformer encoder

. Importantly, the masking scheme suppresses an entire spatial location across all time steps to prevent the model from exploiting information carried over from nearby frames (i.e., shortcut learning [107]). The decoder

subsequently consumes both the visible tokens from the encoder and a set of learnable mask tokens to reconstruct the original video. The mean squared error (MSE) loss is used between the masked and reconstructed tokens:

where

p is the token index,

is the set of masked tokens,

I is the input image, and

is the reconstructed one. The weights are initialized with the ViT-B pre-trained model on Kinetics-400 [108] (1600 epochs) provided by the authors on the model zoo [109]. We then remove the decoder and keep the final features at the output of the encoder.

Sensory data

We employ the implementation provided by the authors in [109]. To include sensory data, we sample readings from the same time-range as the frames: where is the number of sensors used and is the number of readings sampled during a given video sequence. We use a fully connected network to map the readings into the same dimensionality as the visual tokens, resulting in . These are then simply enhanced with sinusoidal positional encodings [110] and concatenated together with the visual tokens. As the number of sensory tokens is notably smaller than that of visual tokens, we also add a weight to the loss, weighting the contribution of each element to the loss. In this manner, the final loss results in where and are the weights for the visual and sensory loss respectively, set in practice to 1 and 5.

For the sensory data we combine two masking strategies. First, masking tokens as done for the visual ones, resulting in a reduced set of sensory tokens. However, the model could simply learn to interpolate between readings of the same sensor. For this reason, we also mask entire sensors across all temporal tokens. In order to preserve the temporal dimension across tokens, we choose to mask by setting the values of those sensors to 0. We hypothesize that, with this combined approach we force the model to better understand visio-sensory relationships to properly infer the values of the missing sensors and visual information as a whole. We use a masking ratio of 82% for visual tokens and 45% for sensory data. The remaining parameters were left as in the original paper.

Clustering

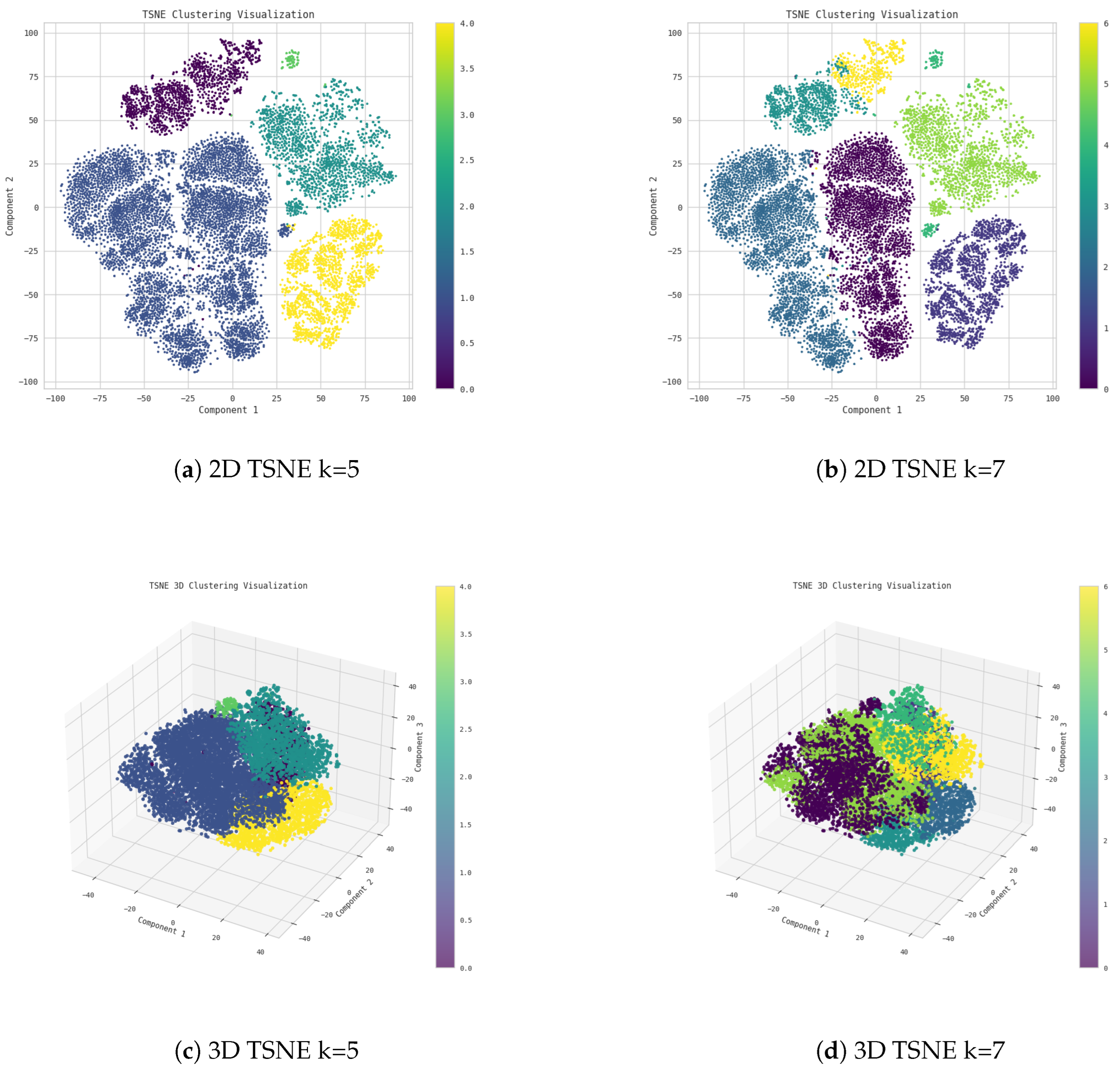

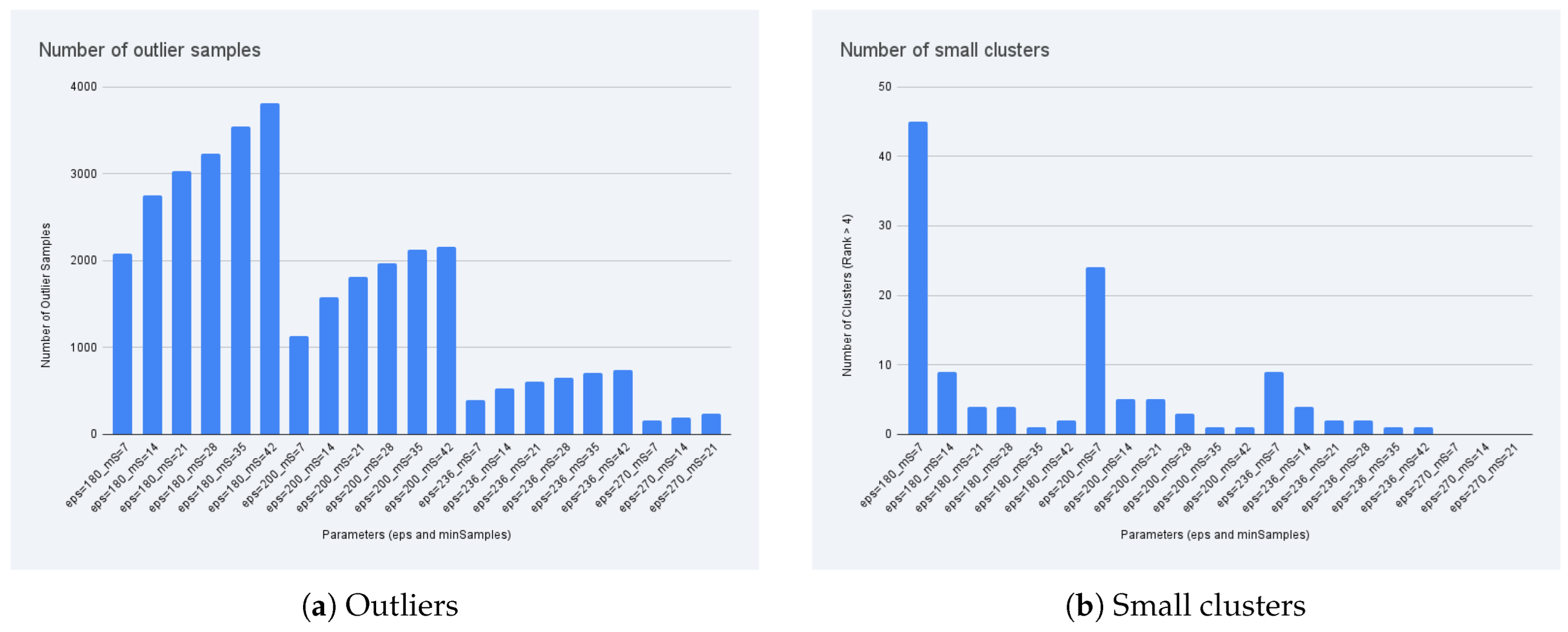

Once training converges, the decoder is discarded and the encoder processes full, unmasked sequences. Spatially pooled visual tokens and sensory features are extracted, normalized, and further reduced, while temporal features are preserved. The resulting representation is fed into a clustering stage (see

Section 3.4). DBSCAN is adopted as the primary method with empirically tuned parameters, and k-means is evaluated as a baseline.

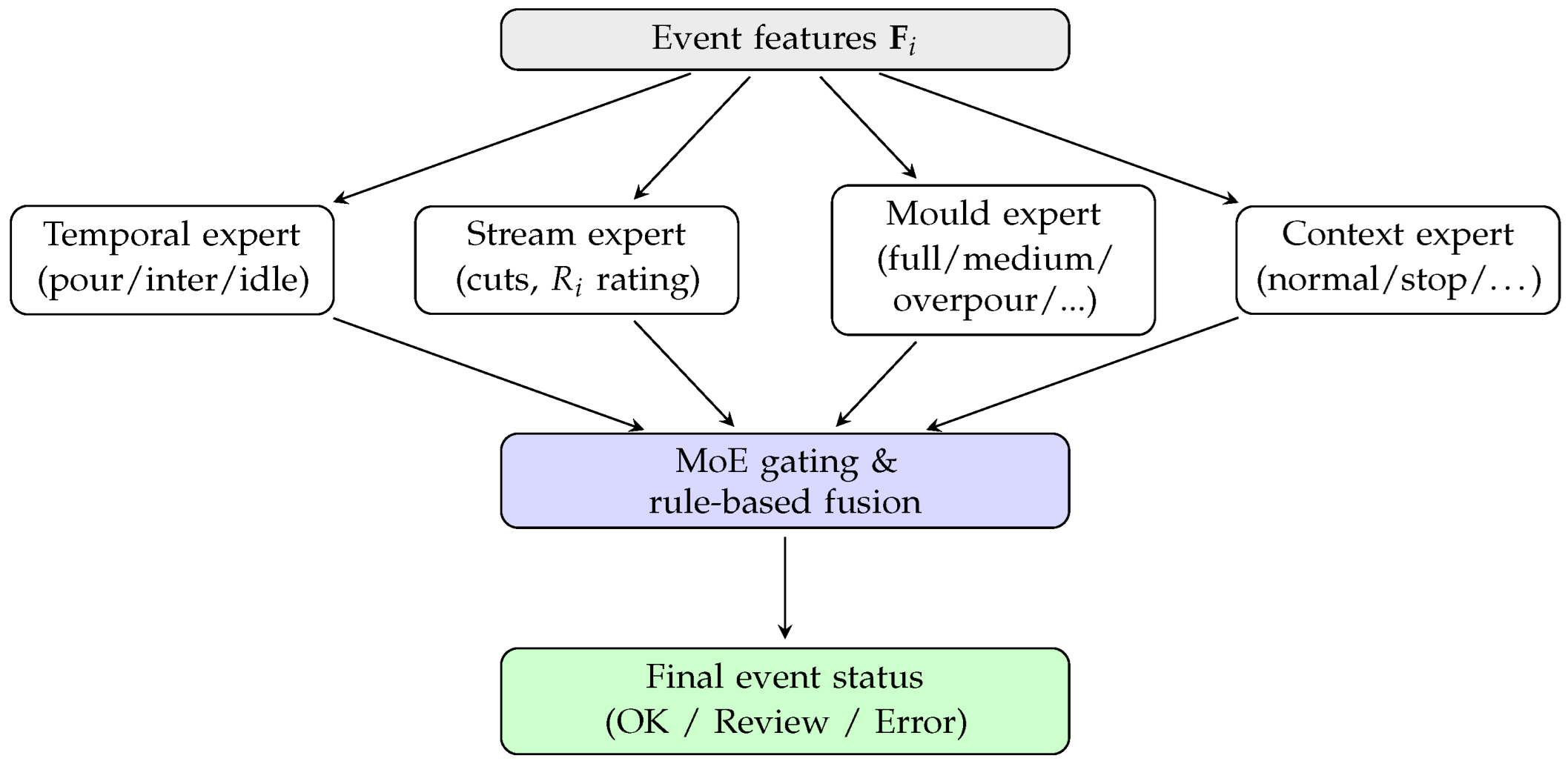

2.6. Mixture of Experts

Once the pouring events have been identified thanks to the movement detection (see

Section 2.3.1) and the relevant AoIs detected and classified (

Section 2.3.2), the next step focuses on evaluating the manufacturing outcome of each cycle. Specifically, this stage analyses both (i) the visual state of each detected element involved in the pouring operation and (ii) the process performance according to the foundry control plan. The resulting measurements is a structured set of variables that, lately, will be employed by the MoE system and its integrated rule-based expert system for data aggregation, correlation and knowledge generation. The overall interaction between the different experts and the supervisory MoE layer is summarised in

Figure 11, which illustrates how heterogeneous assessments are combined into a single event level decision.

Evaluation of AoIs and pouring stream conditions.

For every detected pouring event

, the visual elements are interpreted to produce a set of semantic labels. In this case, the pouring stream is characterised by the anomaly score computed in

Section 3.4:

.

Moreover, the number of interruptions in the stream is also quantified as

, while the total pouring duration is defined as:

Furthermore, each mould cup in the conveyor is tracked before, during, and after reaching the pouring zone, giving the identification of its filling state like

The following cup (i.e., the incoming mould cup) is simultaneously monitored to detect undesired metal droplets or premature splashes, producing a binary safety flag , where denotes a warning condition. Similarly, the presence or absence of the tile (also known as the splash guard) is inferred to contextualise if the protection mechanisms are present.

These indicators allow tracking each mould individually along the the visible span of the conveyor belt captured by the camera, detecting undesired metal losses or incomplete filling throughout its trajectory over the visible part of the conveyor. This information is preserved and later aligned with the production references for final traceability.

Evaluation of process performance and control limits.

Beyond the visual part, each pouring event is checked against the process constraints defined in the foundry control plan. For every event

, the following temporal variables are computed:

These variables are compared against the acceptable ranges established by the specific control specifications of the produced reference:

When the process exceeds these tolerances, process deviations are identified. Those deviations can be translated into special and also risky situations. Additionally, stream interruptions are also recorded through and their temporal position related to the event. These cuts are often correlated with turbulence, clogged nozzles or thermomechanical instabilities. In addition, a prolonged idle time may indicate inoculation delays, metal cooling trends, or transient line imbalance that propagates downstream..

Finally, each event is annotated with its corresponding operational context, obtained from the global context classifier (explained in

Section 2.2). Rather than a single binary label, all context changes occurred during the event are logged as

, where

denotes the

k-th context state (e.g.

normal,

maintenance,

overflow,

stoppage), together with its start and end timestamps. This work allows to measure the total duration of each context situation (

) and identify whether any portion of the event was affected by maintenance operations, operator occlusions or technical stops. In that way, segments labelled as non-regular production are still stored for traceability but are excluded from strict quality assessment and statistical evaluation.

In summary, the manufacturing evaluation stage transforms raw visual observations and temporal measurements into a structured and fully interpretable description of each casting cycle. This representation captures not only the physical behaviour of the pouring stream and the mould cups but also the operational context and the compliance of the process with its reference control plan. Nevertheless, these heterogeneous outputs still constitute independent evidences that must be jointly analysed to infer whether the event is globally acceptable, risky or defective. For this reason, the next stage introduces a MoE architecture that unifies all previously extracted indicators, applies a rule-based expert system grounded in foundry knowledge, and produces a final, coherent decision to be consumed by higher level systems such as digital twin platforms or supervisory decision tools.

2.6.1. Unification and Digital Twin

Once all visual and temporal layers have been independently processed, which means that temporally delimited pouring events are extracted (explained in

Section 2.3.1), the detection and segmentation of areas of interest are carried out (described in

Section 2.3.2), the manufacturing context classification is performed (summarized in

Section 2.2), the interpretation of the pouring stream itself (determining its continuity, turbulence or flow interruptions in the way that is explained in

Section 3.4), and the extraction of process metrics is achieved; it becomes necessary to unify these heterogeneous outputs into a consolidated and decision-ready representation of the pouring process.

To achieve this integration in a robust and interpretable manner, we adopt a Mixture-of-Experts paradigm [111]. MoE architectures have proven highly effective in coordinating specialised expert modules by relying on a sparse expert routing mechanism, enabling scalable decision making while preserving interpretability.

Their suitability for heterogeneous industrial data is further reinforced by recent advances in multi-source fusion and diagnostic modelling. Previous work has consistently shown that modular expert-based architectures are especially advantageous in scenarios such as foundry pouring control, where heterogeneous sensing modalities, strong temporal dependencies, and strict operational reliability constraints must be jointly handled within a single decision framework. For example, Ma et al. [112] demonstrate that complex manufacturing processes benefit from architectures capable of unifying symbolic, temporal and visual information within a common representation space, showing that heterogeneous data fusion significantly improves fault discrimination and process interpretability. Similarly, the survey by Han et al. [113] highlights that modern fault diagnosis pipelines increasingly rely on expert decomposed reasoning, where different submodels specialise in operating conditions, regimes or sensor subsets. Finally, recent multimodal approaches such as the dual-attentive fusion model of Chu et al. [114] demonstrate that selectively combining specialised feature extractors leads to superior robustness against noise, variable regimes and transient disturbances.

In our system, the MoE performs the following key functions: (i) aggregating all expert outputs into a single structured representation, (ii) evaluating consistency with process specifications via a rule-based industrial expert system, and (iii) generating a final judgement for each casting event. Moreover, for each detected event , the MoE maintains a temporal memory so that late visual updates (for example, an overflow detected after the mould cup has left the pouring zone) can be retroactively assigned to the correct event.

Accordingly, the raw feature set ingested by the MoE can be reformulated as:

where:

, and denote the measured pouring duration, inter pouring interval and idle time between moulds for event .

, and are the control plan thresholds that define acceptable operating limits. These values are not binary decisions, specifically, they are numerical references used by the MoE to assess compliance with production standards.

represents the regressed anomalous status of the pouring stream.

denotes the ordered set of mould cups observed during , each tagged with its filling state.

is a binary or categorical indicator describing the state of the protective tile during event .

encodes detected overflow or metal splashes affecting mould position j.

stores the time resolved evolution of the manufacturing context throughout event .

Rule-based expert system inside the MoE.

The rule-based layer operates as a deterministic supervisor that interprets according to domain knowledge. A simplified subset of the logic is as follows:

Pouring time rule: if then (underpouring); if then (overpouring).

Inter pouring rule: if then .

Idle rule: if then .

-

Stream integrity rule: the MoE evaluates the stability of the pouring stream by jointly analysing: (i) the number of flow interruptions , (ii) their durations , (iii) their quartile locations within the event , and (iv) the deviation of the actual pouring time from the control plan target . The resulting stream integrity rating is determined as follows:

- −

High risk: long interruptions ( above the control plan tolerance) or cuts occurring in the initial or early quartiles. These situations often produce partial filling, cold shuts or early loss of stream coherence, making them critical for defect formation.

- −

Medium risk: interruptions of short duration located in middle or late quartiles, or a moderate number of cuts () that do not exceed duration thresholds. Deviations of from the reference target also increase the risk to medium level.

- −

Low risk: short and isolated cuts occurring in the last quartile, where their impact on filling quality is typically minimal, and the pouring duration remains close to the control plan target.

To formalise this rule into a quantitative and interpretable metric, the MoE computes a

stream integrity rating for each event

, defined as:

where:

- −

is the total number of detected interruptions;

- −

is the duration of the k-th interruption;

- −

is the quartile index of the interruption;

- −

is a monotonically decreasing quartile weight (), reflecting higher sensitivity to early cuts;

- −

is the nominal pouring duration from the control plan;

- −

are expert–defined coefficients calibrated to the sensitivity of the process.

This scalar is then used by the MoE as an aggregated quality indicator whose value increases with the number of cuts , their severity, their temporal position within the event, and the deviation of from the expected reference value.

Cup fill consistency rule: if any mould cup transitions from full to medium after leaving the pouring zone, the system flags metal loss after pouring.

Context rule: if any context segment within corresponds to maintenance or occlusion, then the event is marked as non-valid for quality.

Digital Twin integration via structured event JSON.

Once the MoE finalises the event assessment, the system serialises all results into a structured JSON document. This format enables real-time communication through a WebSocket based publisher/subscriber interface and ensures interoperability with any external system, including digital twins, MES, or predictive maintenance modules.

This structured output (see

Appendix A for an example) facilitates downstream data ingestion, enables full traceability of each mould and its related pouring event, and ensures that the entire pipeline remains compatible with real-time digital representations of the foundry process. Moreover,

This structured output facilitates downstream data ingestion, enables full traceability of each mould and its associated pouring event, and ensures that the entire pipeline remains compatible with real-time digital representations of the foundry process. Moreover, the JSON package includes not only the MoE derived decisions but also the raw measurements, allowing third-party digital twins and supervisory systems to reproduce the full operational state, both descriptive and interpretative, with complete consistency and synchronisation.

3. Results

In this section we report the experimental performance of each functional block introduced in

Section 2. In particular, we first evaluate the local image interpretation and event detection, then the manufacturing context classifier, the AoI evaluation modules, anomalous pouring stream results, and finally the global MoE aggregation.

3.1. Global Context Evaluation

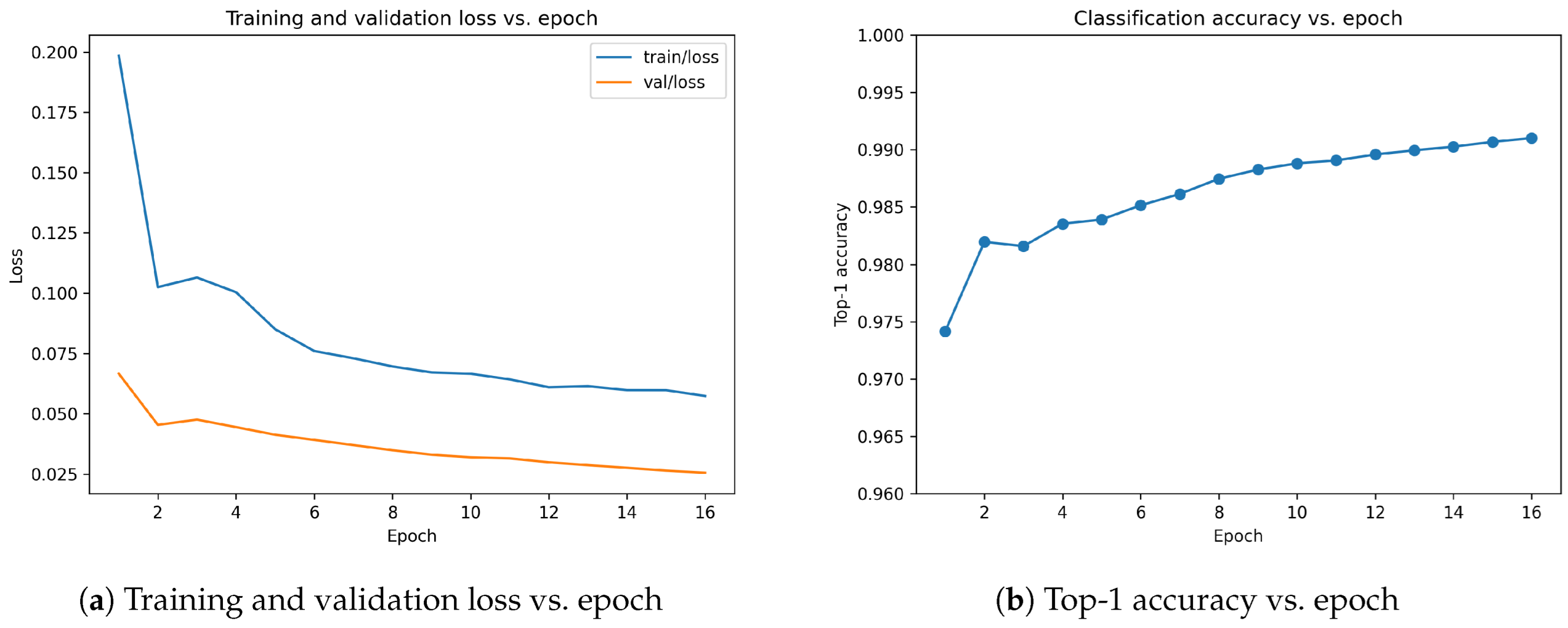

The environmental context classifier exhibited a fast, stable and monotonic convergence throughout the training process.

Figure 12 summarises the evolution of the main learning metrics across the training epochs. As shown, the

train/loss decreases steadily from 0.198 to 0.057, while the validation loss mirrors this behaviour, reaching 0.025 at the final epoch. This sustained and simultaneous reduction of both curves indicates an absence of overfitting and a highly consistent optimisation process.

In parallel, the classification accuracy increases rapidly. The

top-1 accuracy improves from 97.4% in the very first epoch to 99.1% by epoch 16, reflecting the strong visual separability of the context categories. The validation loss decreases smoothly without oscillations, suggesting that the model generalises well even in the presence of the significant class imbalance described in

Section 2.2. Interestingly, only a small number of epochs are required to reach high performance, which is consistent with the characteristic behaviour of YOLOv11 classification models when trained on visually distinct categories. According to industrial visual inspection literature, top-1 accuracy values above 0.95 and small train–validation loss gaps are considered indicators of reliable deployment in production environments.

The results confirm that the model reliably extracts and discriminates the contextual states associated with the 4 production conditions (i.e., normal, maintenance, stopline and overpouring). This robustness is essential for downstream integration into the MoE, where the environmental context acts as a gating signal for validating the usability of each pouring event.

3.2. Local Image Intepretation and Event Detection

We first quantify the ability of the system to detect pouring events over long sequences, and then, we evaluate the performance of the AoI detector.

Pouring event detection

To evaluate the robustness of the event detector under realistic operating conditions, we use one hour of continuous production video with its corresponding sensor readings. These signals record, among other variables, the number of produced moulds. Then, we compared the automatically detected mould counts with the ground-truth number of pours.

Table 2 summarises the statistics of this experiment.

Our approach underestimates the number of moulds by only . The mean production rate measured by the detector ( moulds/min) is therefore very close to the actual rate ( moulds/min), and the ratio between detected and real counts remains within . The missed events are mostly associated with non-standard situations, such as contrast variations caused by molten metal that reduce or maintenance periods during which personnel partially occlude the camera view. Nonetheless, thanks to additional detectors downstream, we are able to identify these atypical situations and label them accordingly.

From a qualitative perspective, the annotated video confirms that the detector behaves consistently under regular operating conditions. It successfully tracks consecutive pours, short interruptions, and occasional variations in the number of active streams or the flow geometry. These patterns are clearly reflected in the temporal series of detected events, which will later be exploited for the characterization of different pouring conditions.

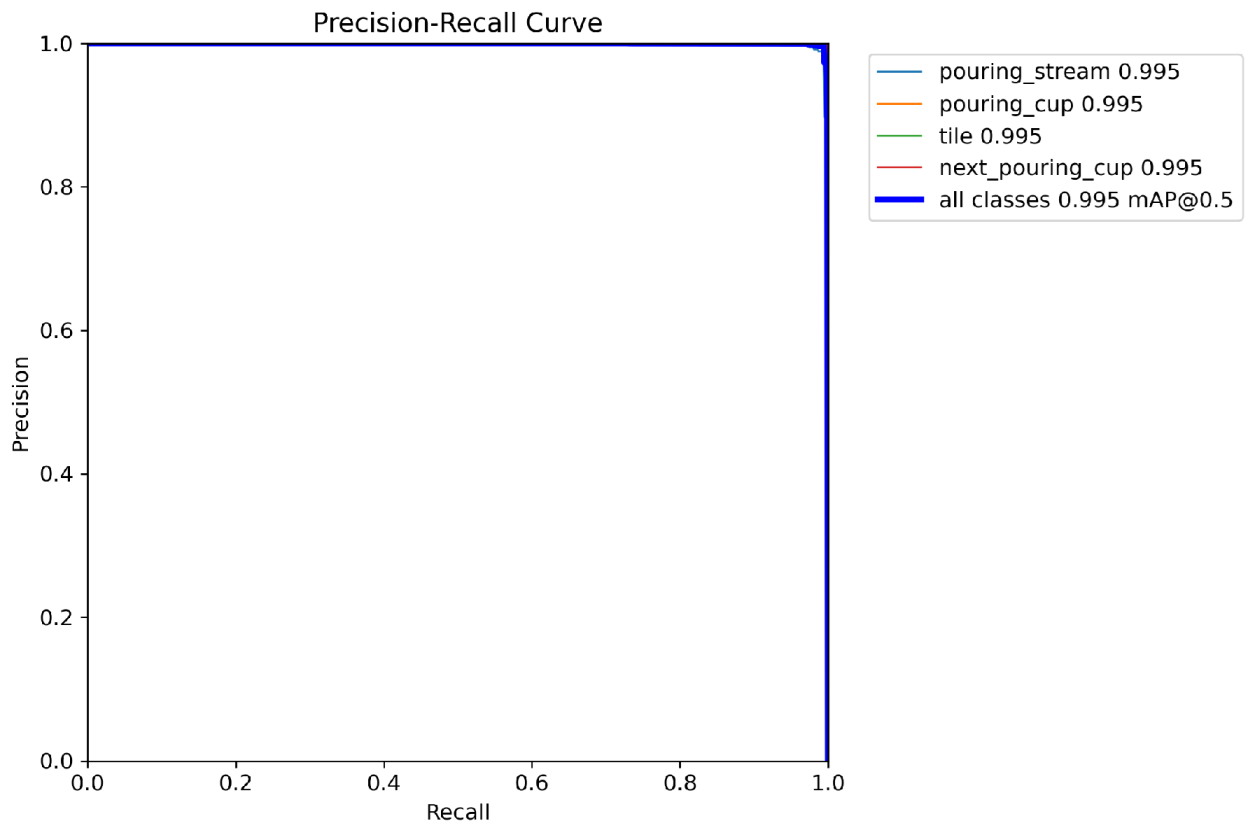

AoI detection

The second component of the foundry image interpreter focuses on frame-wise detection of the AoIs involved in the pouring process. As discussed in

Section 2.3.2, the detector was trained to recognise 4 semantic regions: (i)

pouring_stream, (ii)

pouring_cup, (iii)

tile, and (iv)

next_pouring_cup.

The resulting model achieves an average precision of

To further illustrate the stability of detection across confidence thresholds, the precision–recall curves in

Figure 13 summarise behaviour for each class as well as the aggregated performance. Indeed. all curves remain close to the upper right corner, showing that the detector maintains precision above 0.98 for almost the complete recall range. Furthermore, the aggregated curve exhibits a typical “flat–steep” profile associated with models that rarely trade recall for false positives, which is particularly beneficial for downstream temporal reasoning.

The normalised confusion matrix in

Table 3 provides an additional perspective on the residual misclassifications. Its strongly diagonal structure reflects accuracies that are nearly perfect. In that way, minor confusions arise in two scenarios: (i)

pouring stream vs. background, which occurs when very faint streams appear at the beginning or end of a pour; and (ii) adjacent cup confusion between

pouring cup and

next pouring cup, typically in frames where both cups are closely aligned along the vertical axis. Nevertheless, these cases represent borderline visual situations rather than systematic failure modes.

Taken together, the detection results show that the AoI model provides a highly trustworthy spatial description of each frame. This is particularly relevant because the output of the AoI detector serves as the spatial foundation for temporal reasoning, environmental assessment, and expert fusion.

3.3. Areas of Interest Visual Classification

The specific AoI classifiers operate on the regions detected by the perception module, assigning semantic labels to two key visual elements of the pouring line, firstly, the

mould cups that are already been poured and, secondly, the

incoming mould cup that will be poured in the conveyor sequence. Both classifiers employ the same training protocol described in

Section 2.2, including identical data management strategy, augmentation policies, loss functions and evaluation metrics. This ensures methodological consistency across all recognition and classification tasks.

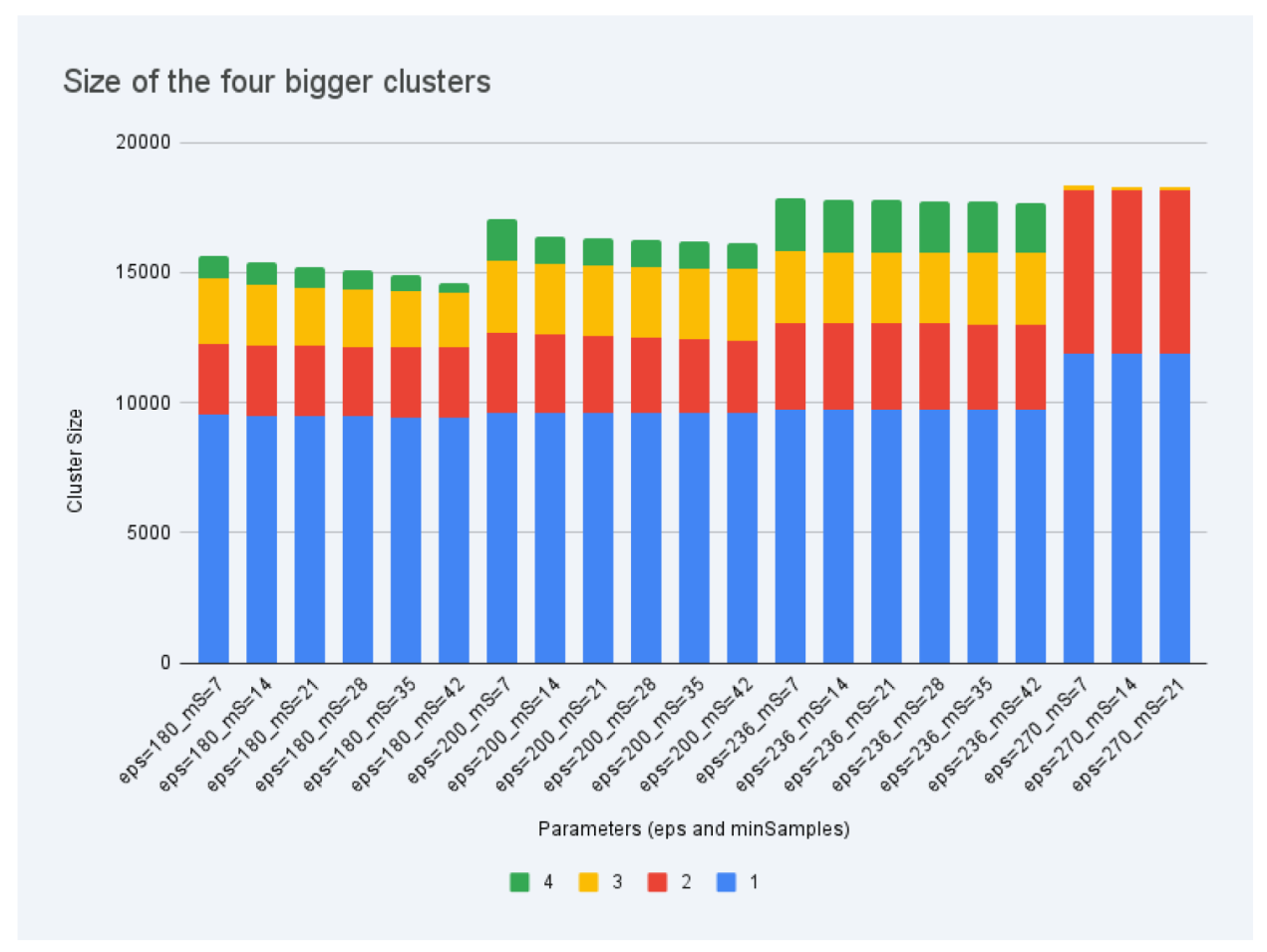

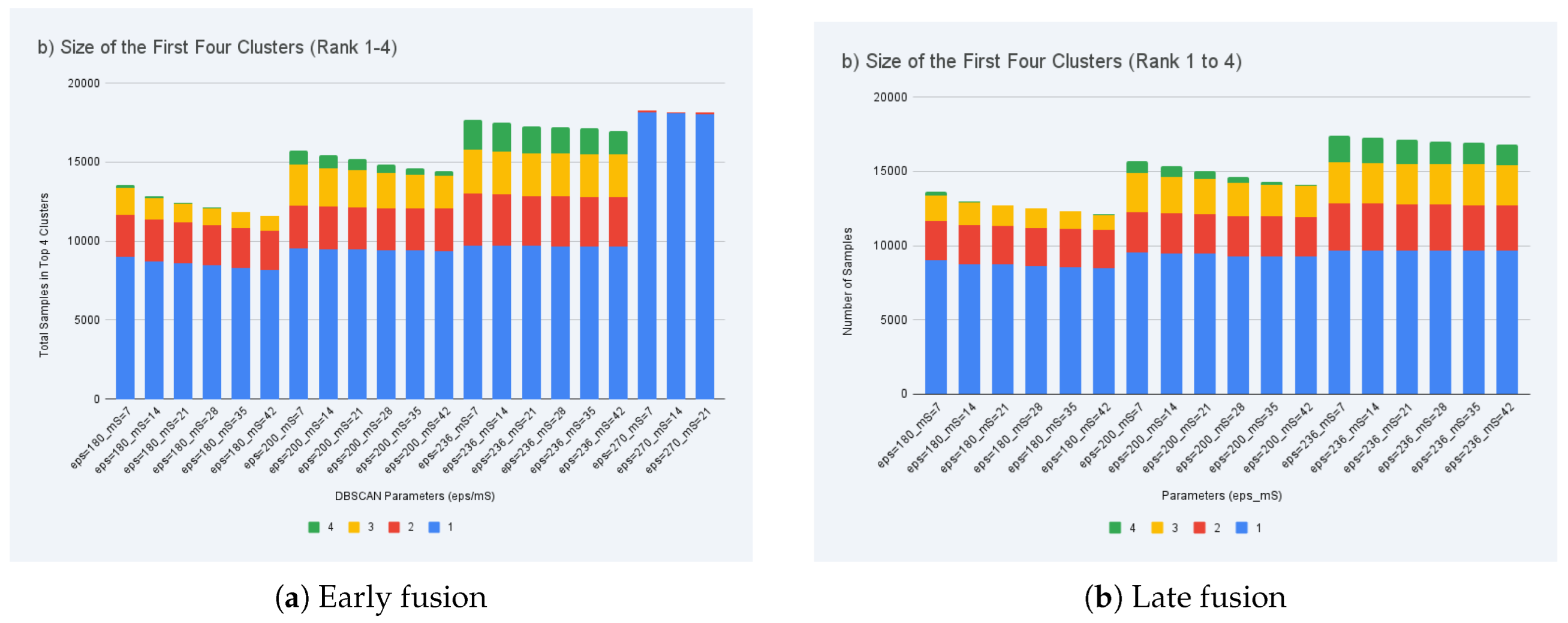

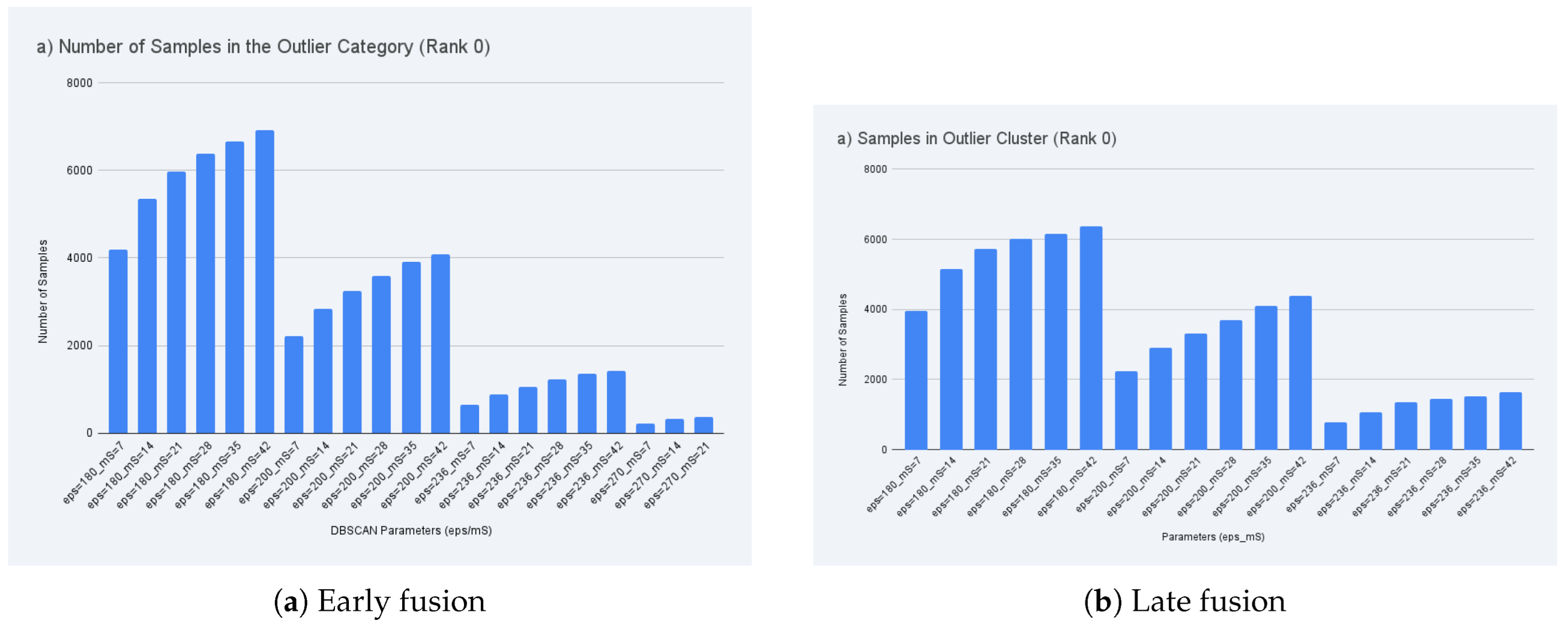

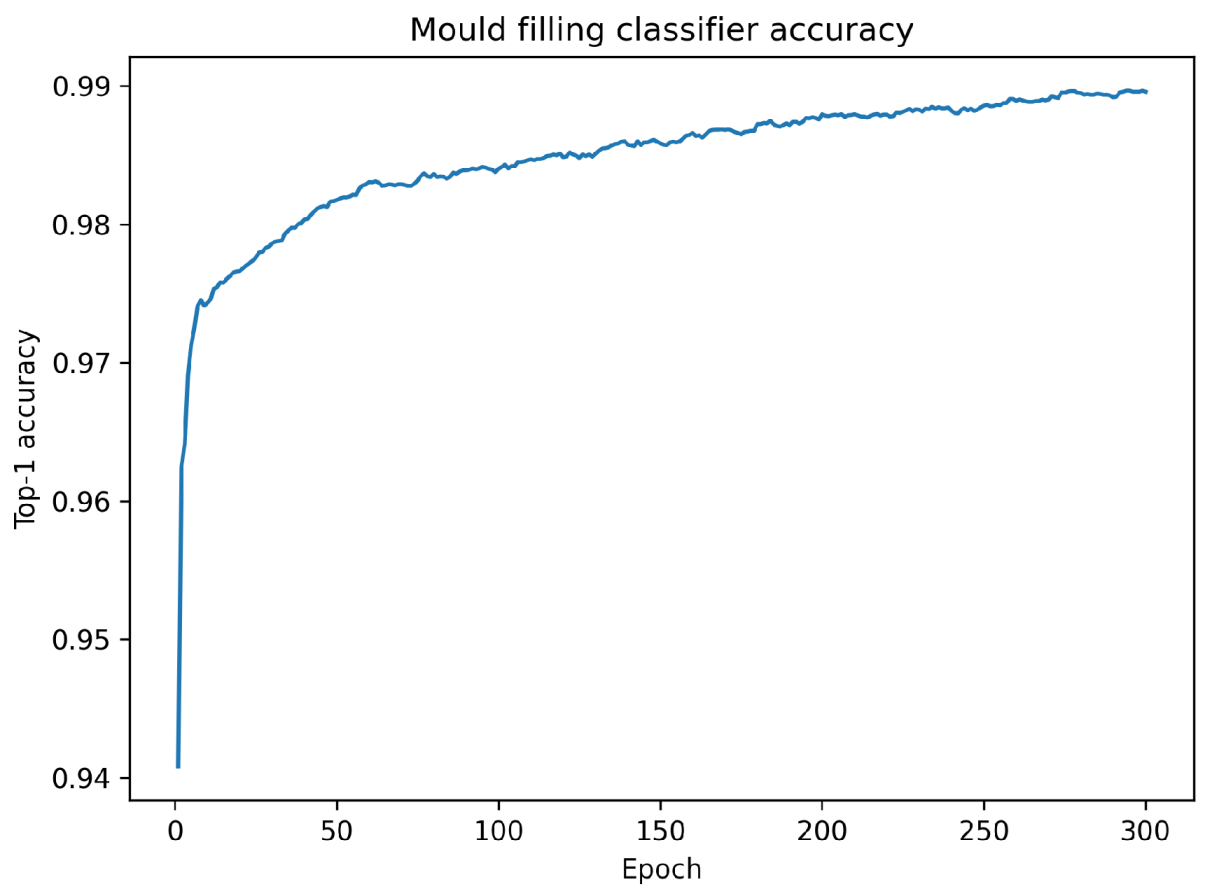

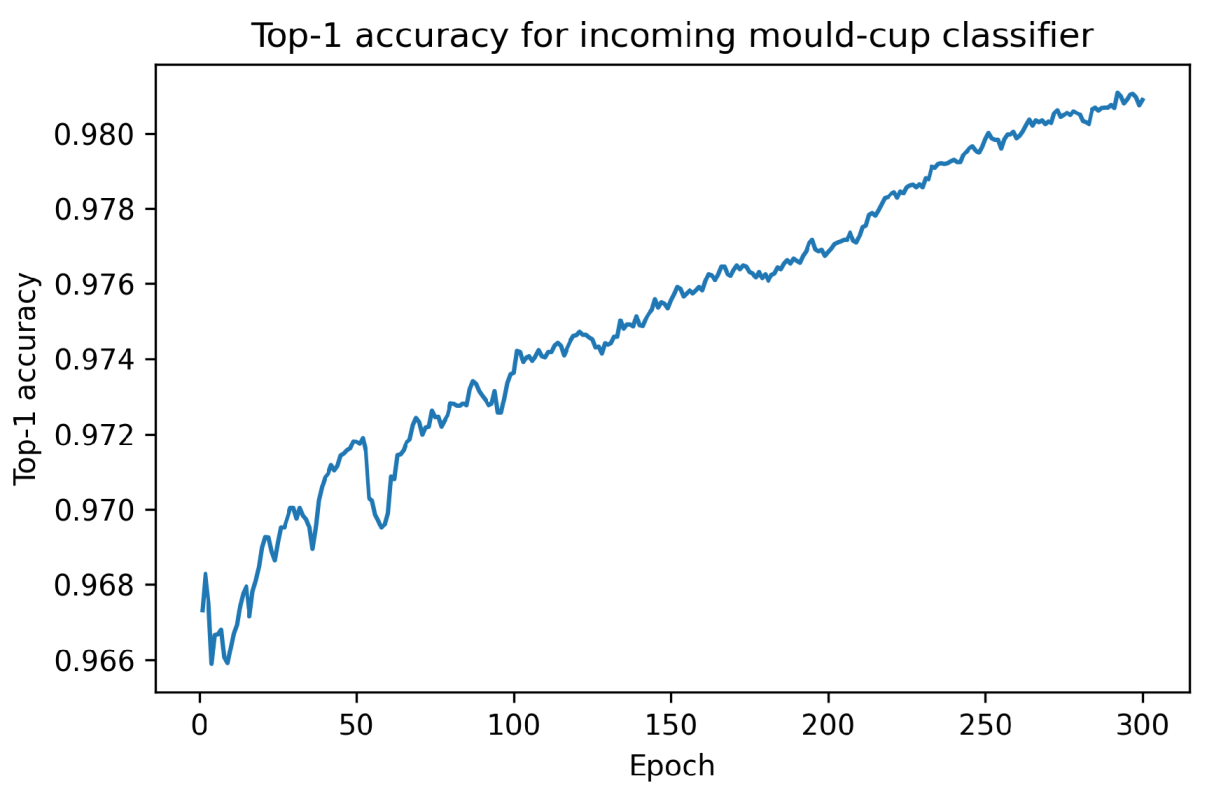

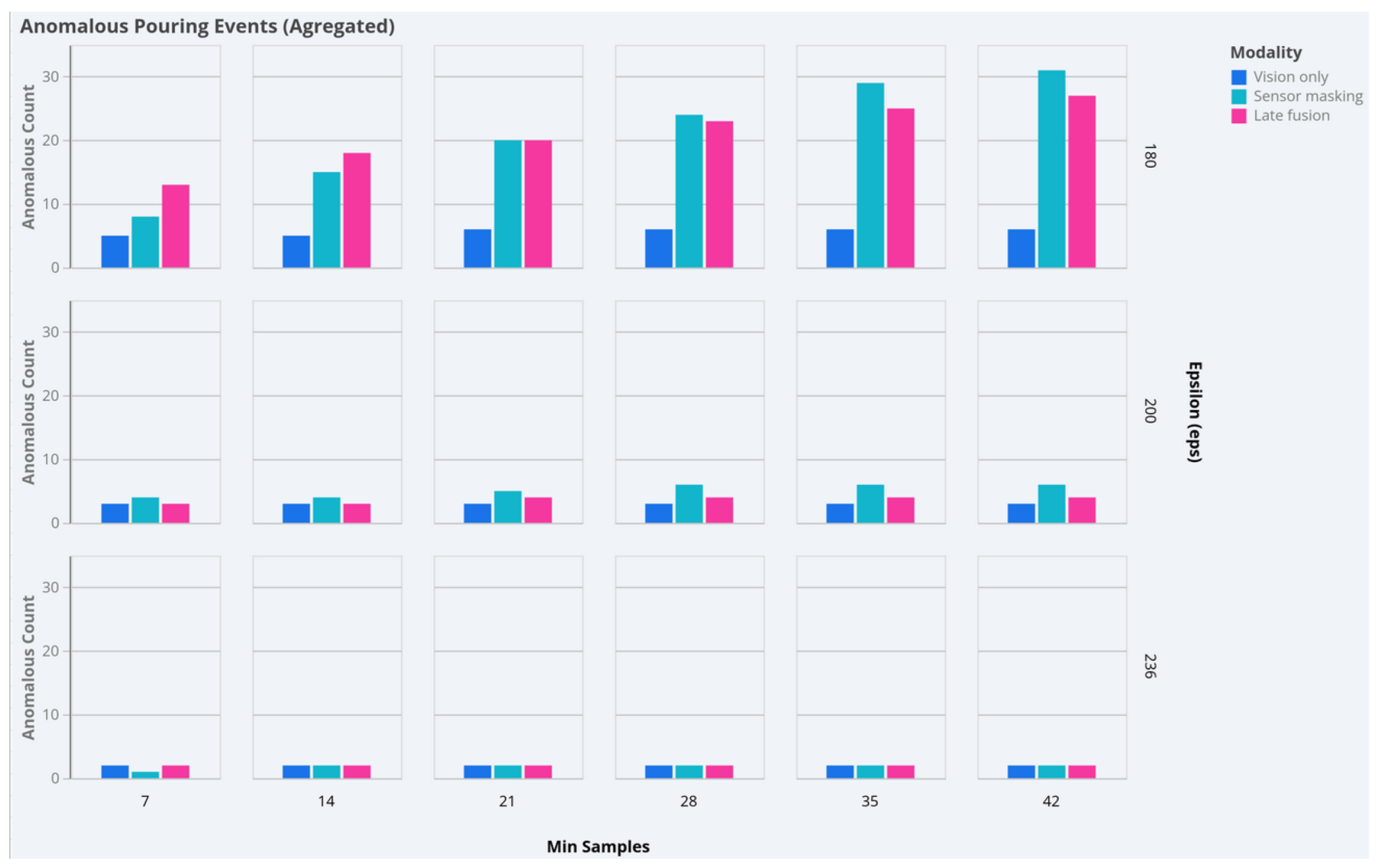

Mould cup filling state classification