Submitted: 13 February 2026

Posted: 27 February 2026


Abstract

Keywords:

1. Introduction

1. To improve the control system of OrBot using Robot Operating System 2 (ROS2),
2. To improve the fruit vision system by adding another camera with a wider field of view,
3. To evaluate the performance of OrBot in fruit harvesting.

2. Materials and Methods

2.1. Fruit Harvesting Robot—Orchard Robot (OrBot)

2.1.1. Manipulator

2.1.2. Eye-in-Hand Vision System

2.1.3. Eye-to-Hand Vision System

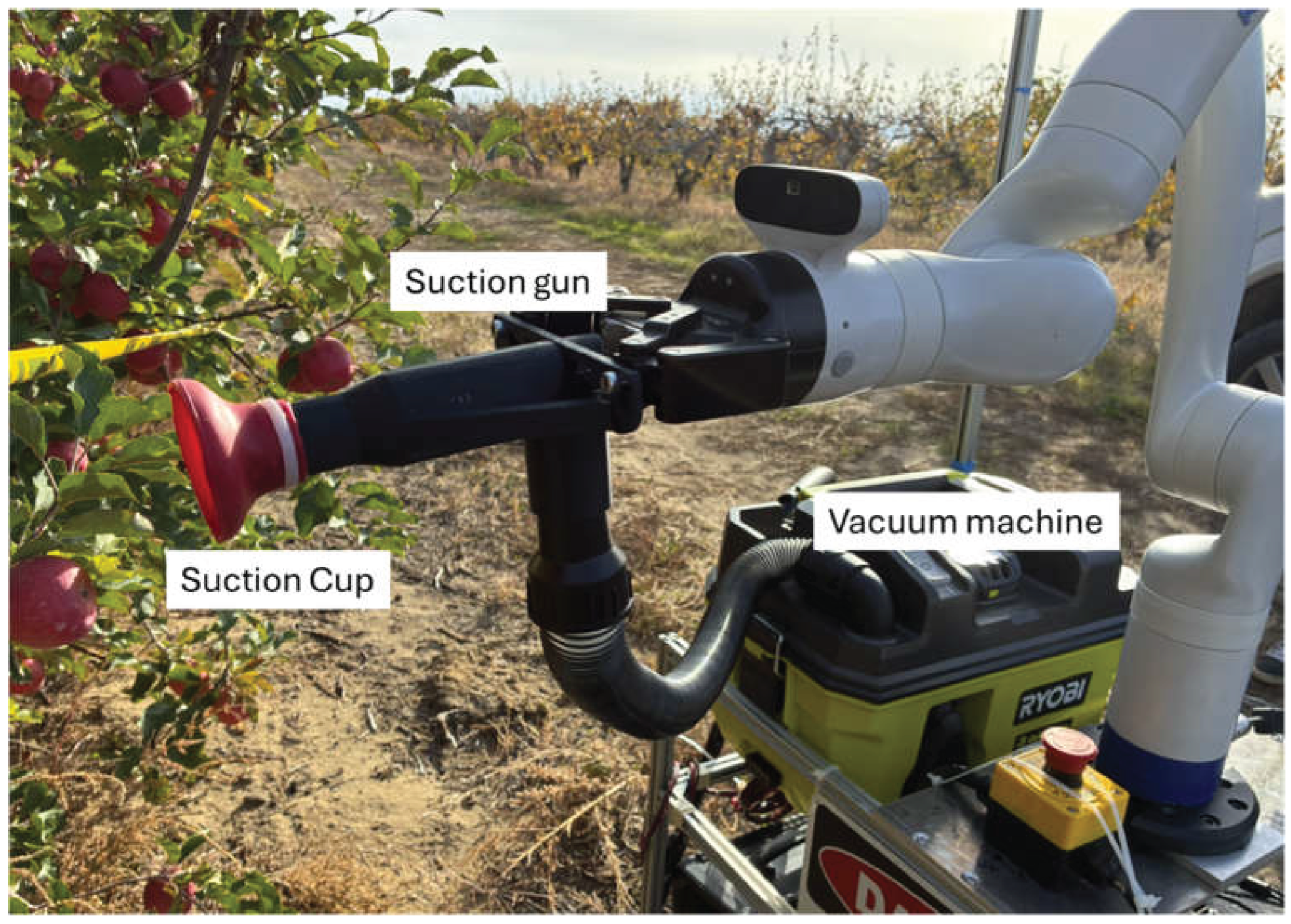

2.1.4. End Effector

2.1.5. Control System

2.1.6. Tank-Tread Navigation Platform

2.2. Object Detection Algorithm

2.3. Control System for Fruit Harvesting

2.3.1. Robot Operating System

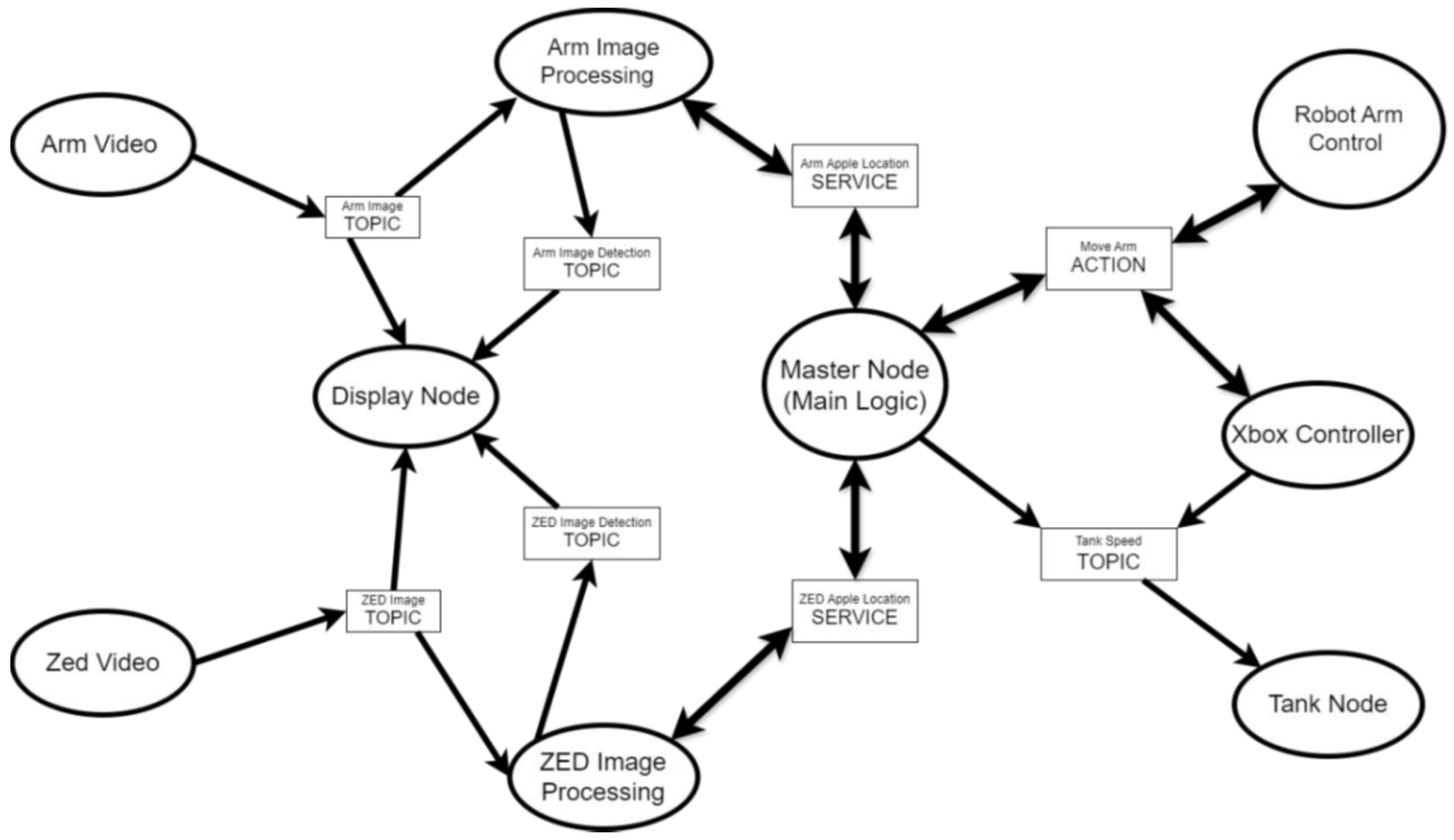

2.3.2. Designing a ROS2 Application for OrBot

1. Cameras
2. Image processing algorithms
3. The robotic arm
4. The tank treads
5. Apple picking algorithm
6. Display
7. Gamepad connection
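The seven components listed above map naturally onto separate ROS2 nodes that exchange data over topics. The sketch below models such a node graph as plain Python data so that it runs without a ROS2 installation. Every node name and topic name in it is hypothetical and is not taken from OrBot's actual implementation. In an rclpy application, each `publishes` entry would correspond to a `Node.create_publisher` call and each `subscribes` entry to a `Node.create_subscription` call.

```python
from dataclasses import dataclass, field


@dataclass
class NodeSpec:
    """One (hypothetical) ROS2 node and the topics it publishes and subscribes to."""
    name: str
    publishes: set[str] = field(default_factory=set)
    subscribes: set[str] = field(default_factory=set)


# One node per component in the list above; all names are illustrative only.
NODES = [
    NodeSpec("camera_node", publishes={"/eye_in_hand/image", "/eye_to_hand/image"}),
    NodeSpec("detector_node", publishes={"/detections"},
             subscribes={"/eye_in_hand/image", "/eye_to_hand/image"}),
    NodeSpec("arm_node", publishes={"/arm/state"}, subscribes={"/arm/command"}),
    NodeSpec("tread_node", subscribes={"/tread/cmd_vel"}),
    NodeSpec("picking_node", publishes={"/arm/command", "/picking/status"},
             subscribes={"/detections", "/arm/state", "/picking/trigger"}),
    NodeSpec("display_node", subscribes={"/eye_in_hand/image", "/eye_to_hand/image",
                                         "/detections", "/picking/status"}),
    NodeSpec("gamepad_node", publishes={"/tread/cmd_vel", "/picking/trigger"}),
]


def unmatched_subscriptions(nodes: list[NodeSpec]) -> set[tuple[str, str]]:
    """Return (node, topic) pairs where a node subscribes to a topic nobody publishes."""
    published = set().union(*(n.publishes for n in nodes))
    return {(n.name, t) for n in nodes for t in n.subscribes if t not in published}


def edges(nodes: list[NodeSpec]) -> list[tuple[str, str, str]]:
    """List (publisher, topic, subscriber) links implied by matching topic names."""
    return sorted(
        (pub.name, topic, sub.name)
        for pub in nodes
        for sub in nodes
        for topic in pub.publishes & sub.subscribes
    )


for publisher, topic, subscriber in edges(NODES):
    print(f"{publisher} --{topic}--> {subscriber}")
print("unmatched:", unmatched_subscriptions(NODES))
```

Checking that every subscribed topic has at least one publisher is a cheap way to catch wiring mistakes, such as a misspelled topic name, before the nodes are launched.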

2.4. Two-Camera System

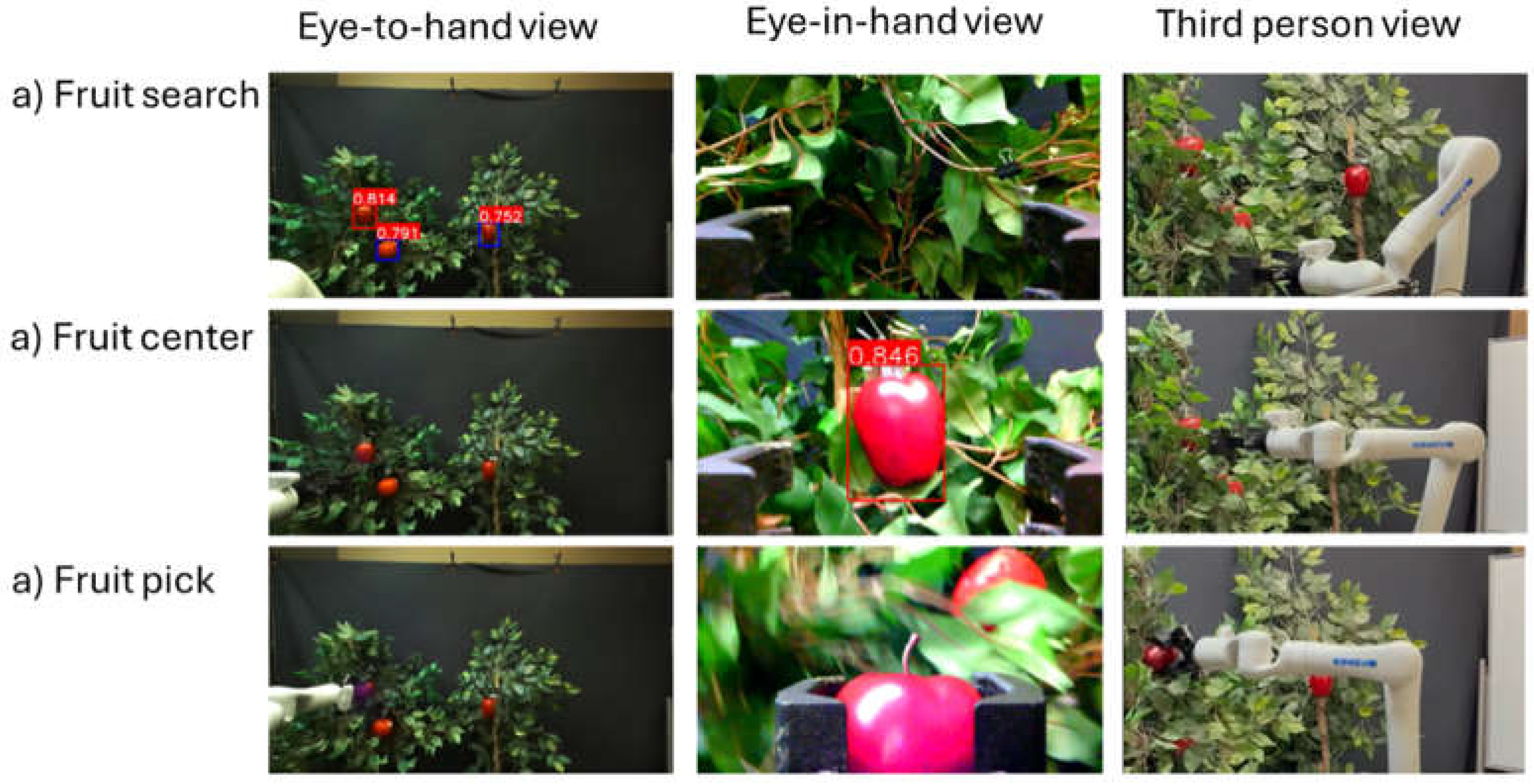

2.4.1. Eye-in-Hand and Eye-to-Hand Systems

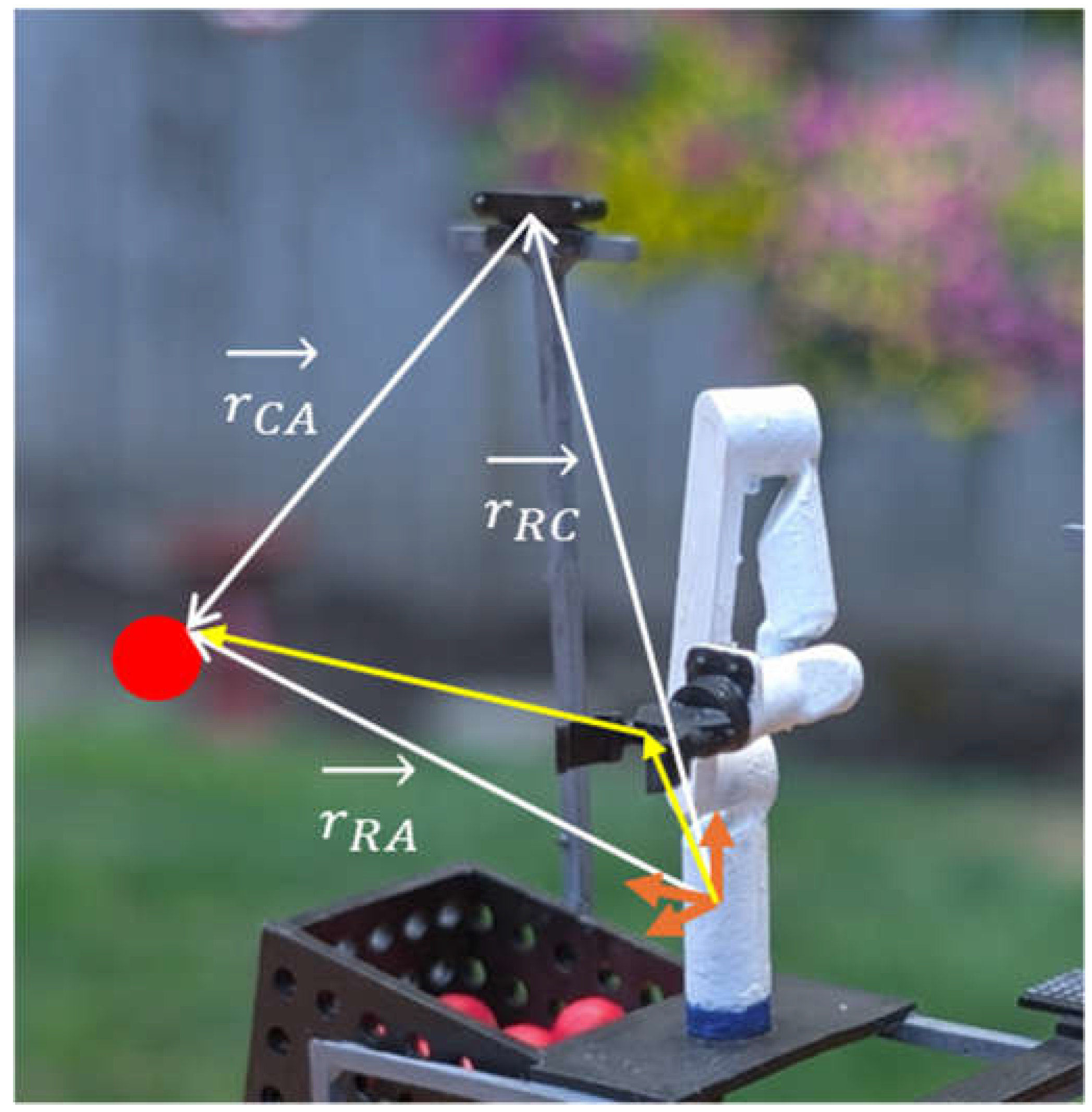

2.4.2. Eye-to-Hand Coordination
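In an eye-to-hand arrangement the camera is mounted in a fixed position relative to the arm's base rather than on the end effector, so a fruit position measured in the camera frame has to be expressed in the base frame before the arm can move toward it. A minimal sketch of that rigid-body transform, p_base = R p_cam + t, is shown below. It assumes the rotation R and translation t are already known (for example, from a hand-eye calibration); the function name and all numeric values are hypothetical and are not OrBot's calibration.

```python
import math

Vector3 = tuple[float, float, float]
Matrix3 = tuple[Vector3, Vector3, Vector3]


def camera_to_base(p_cam: Vector3, rotation: Matrix3, translation: Vector3) -> Vector3:
    """Express a camera-frame point in the robot base frame: p_base = R @ p_cam + t."""
    return tuple(
        sum(rotation[i][j] * p_cam[j] for j in range(3)) + translation[i]
        for i in range(3)
    )


# Hypothetical calibration: camera rotated 90 degrees about the base z-axis,
# offset 0.3 m along x and 0.5 m along z from the base origin.
theta = math.radians(90)
R_BASE_CAM = (
    (math.cos(theta), -math.sin(theta), 0.0),
    (math.sin(theta), math.cos(theta), 0.0),
    (0.0, 0.0, 1.0),
)
T_BASE_CAM = (0.3, 0.0, 0.5)

# Hypothetical fruit position reported in the camera frame (metres).
fruit_cam = (0.2, 0.1, 1.0)
fruit_base = camera_to_base(fruit_cam, R_BASE_CAM, T_BASE_CAM)
print(fruit_base)  # approximately (0.2, 0.2, 1.5)
```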

2.5. Evaluation of Fruit Harvesting

3. Results

3.1. Indoor Test

| Operation | Time (s) |
|---|---|
| Moving to target fruit | 3.9 |
| Centering target fruit | 2.7 |
| Picking target fruit | 7.5 |
| Total | 14.1 |
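The phase times in the table add up to the reported total (3.9 + 2.7 + 7.5 = 14.1 s). Expressing each phase as a share of the cycle shows where the time goes: picking alone accounts for just over half of each indoor harvesting cycle. The short script below reproduces this arithmetic using only the values in the table; the helper name `cycle_breakdown` is illustrative.

```python
# Phase durations (s) from the indoor test table.
PHASES = {
    "Moving to target fruit": 3.9,
    "Centering target fruit": 2.7,
    "Picking target fruit": 7.5,
}


def cycle_breakdown(phases: dict[str, float]) -> tuple[float, dict[str, float]]:
    """Return the total cycle time and each phase's share of it in percent."""
    total = sum(phases.values())
    shares = {name: 100.0 * t / total for name, t in phases.items()}
    return total, shares


total, shares = cycle_breakdown(PHASES)
print(f"Total: {total:.1f} s")
for name, pct in shares.items():
    print(f"{name}: {pct:.1f}%")
```

Rounded to one decimal place, this gives 27.7% for moving, 19.1% for centering, and 53.2% for picking.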

3.2. Outdoor Test

4. Discussion

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).