Submitted: 12 February 2026

Posted: 15 February 2026

Abstract

Keywords:

1. Introduction

2. Methods

2.1. Participants

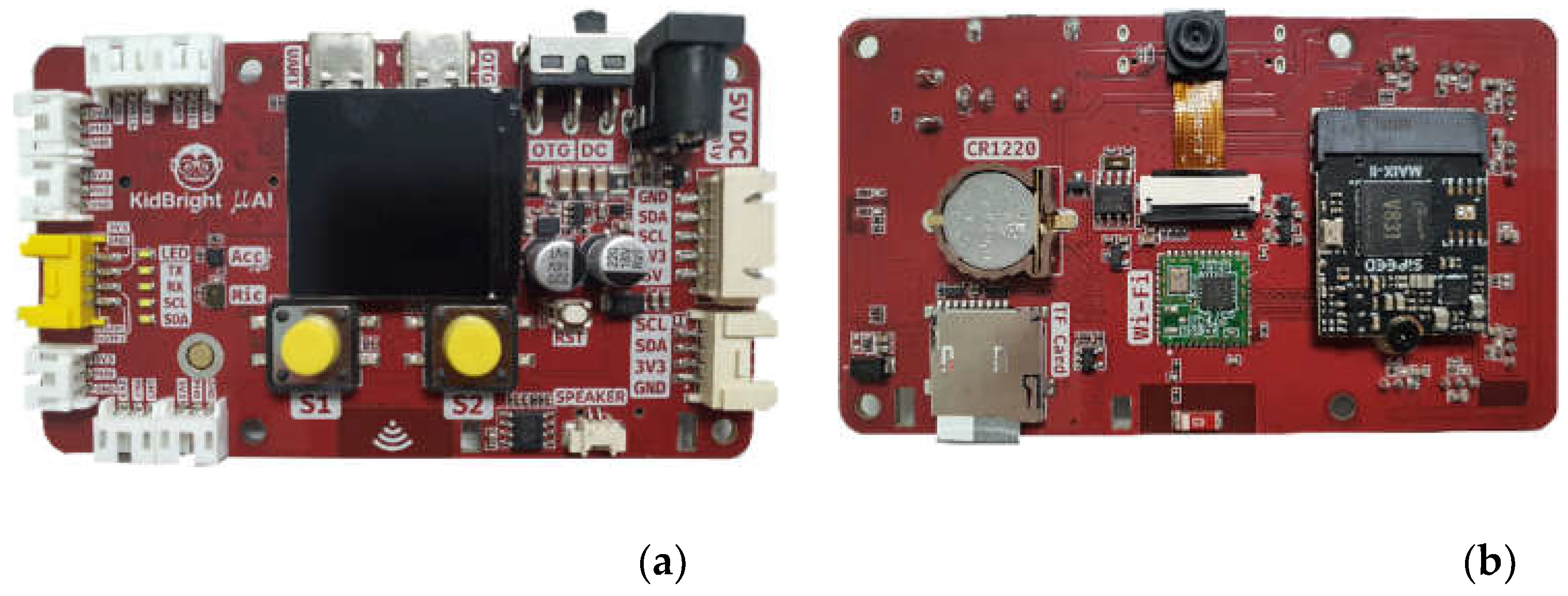

2.2. Educational Tool

2.3. Measurement Framework

2.4. AI Learning Implementation

3. Results

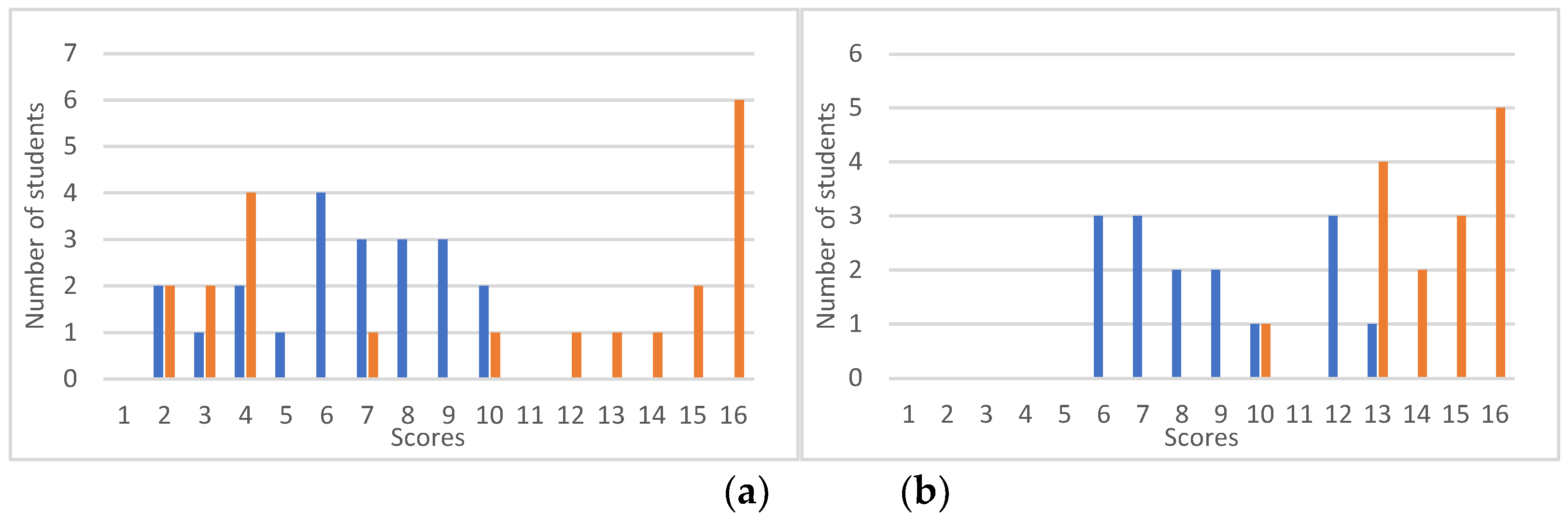

3.1. Measurement of CT concepts

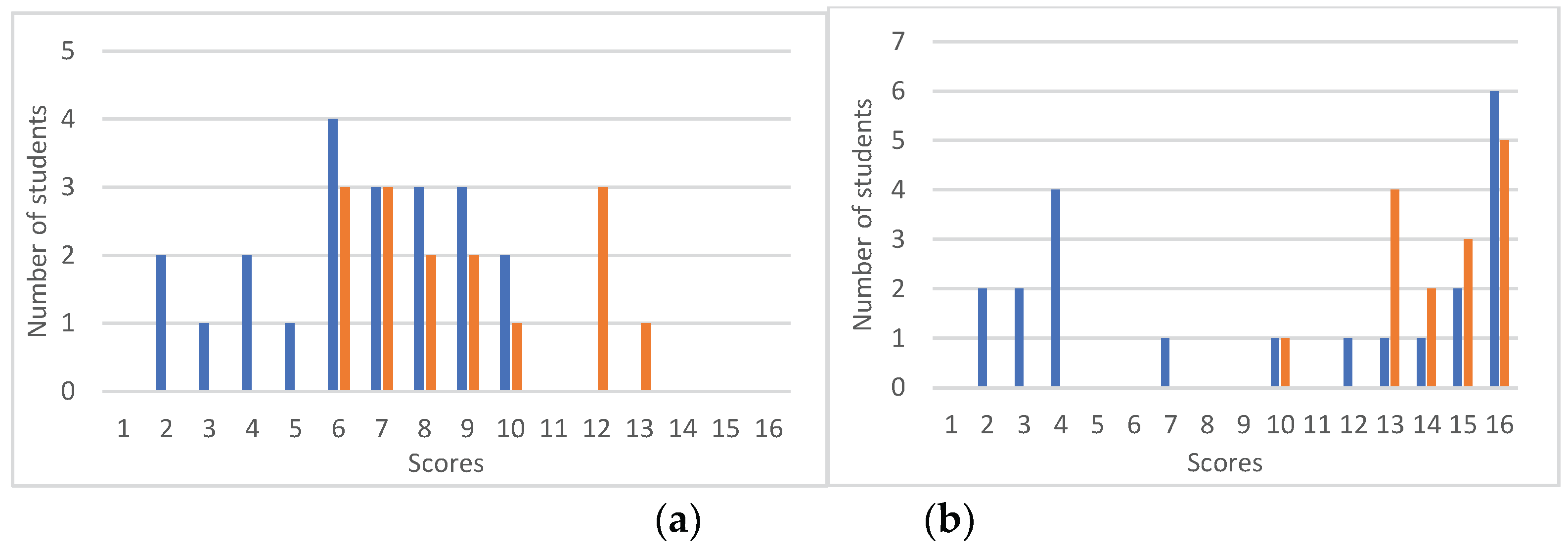

3.2. Measurement of CT practices

3.3. Measurement of CT perspectives

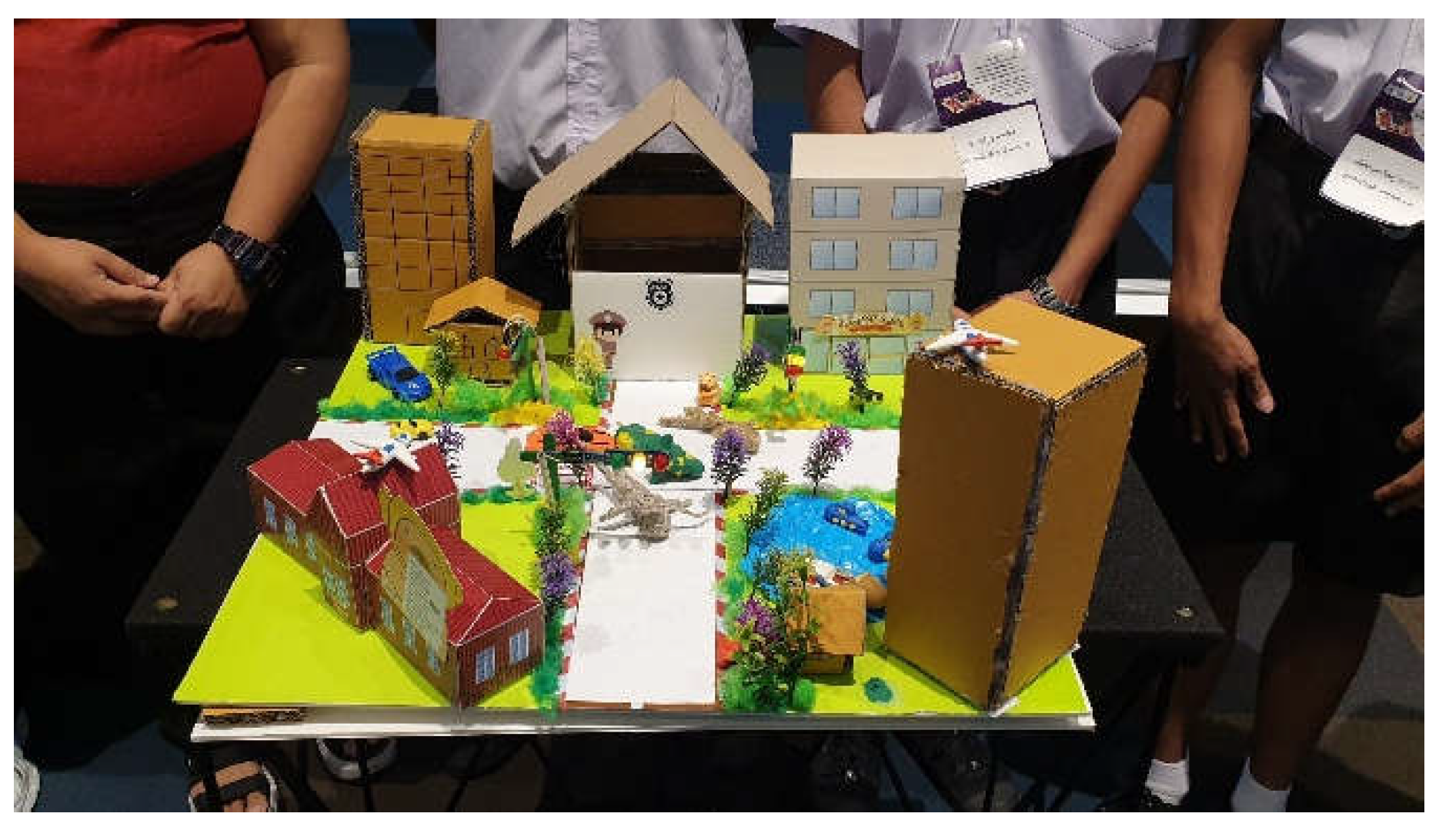

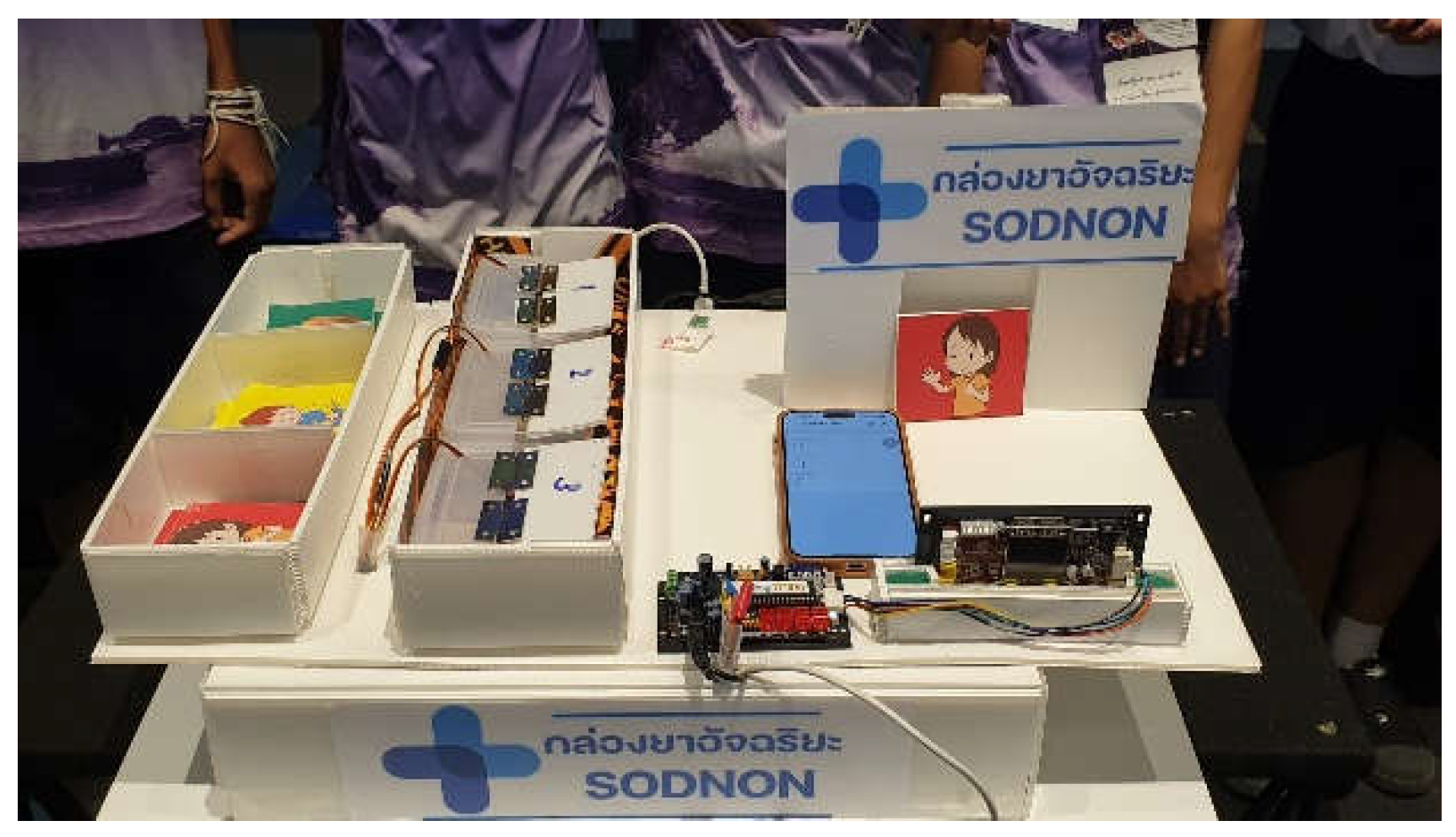

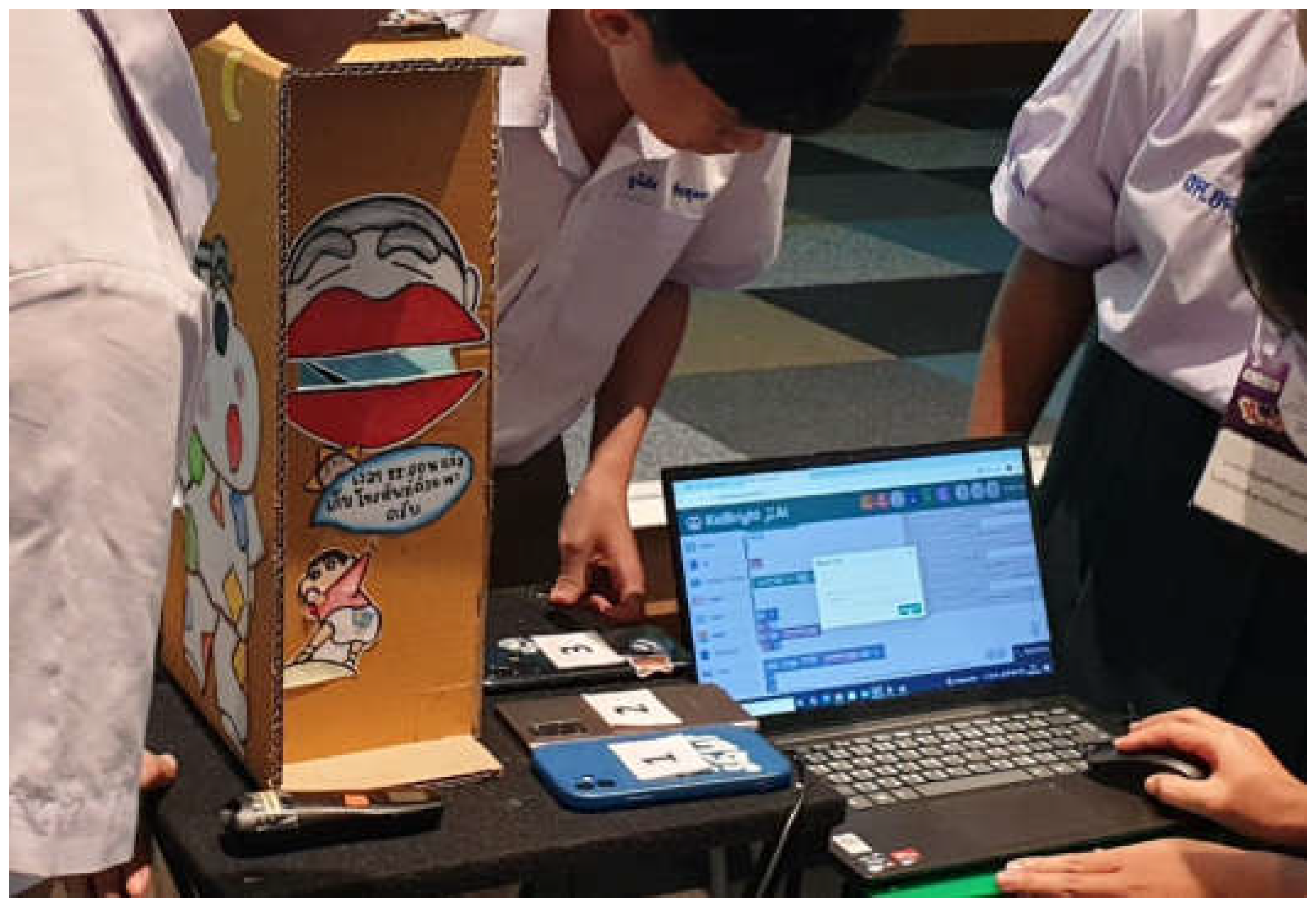

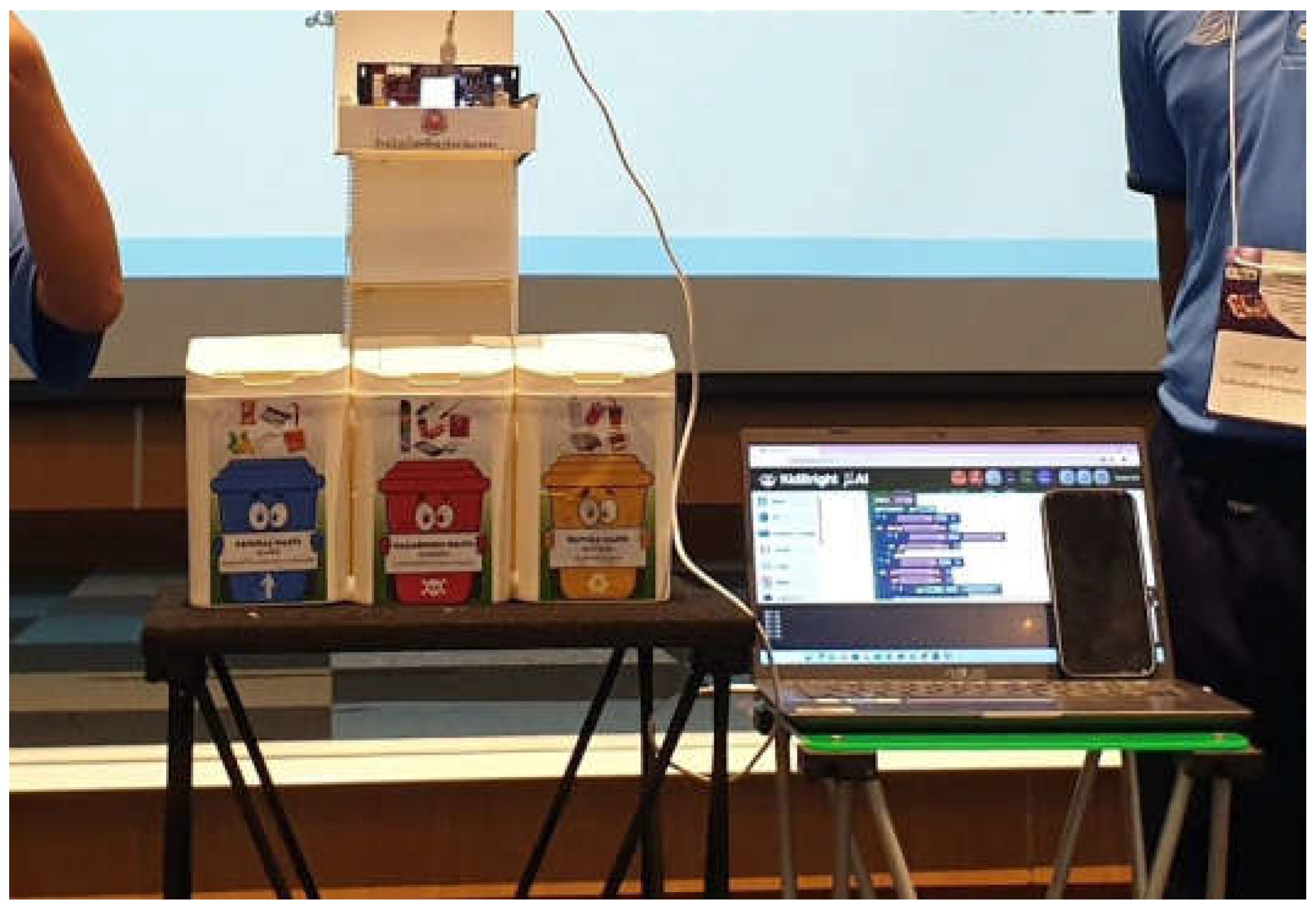

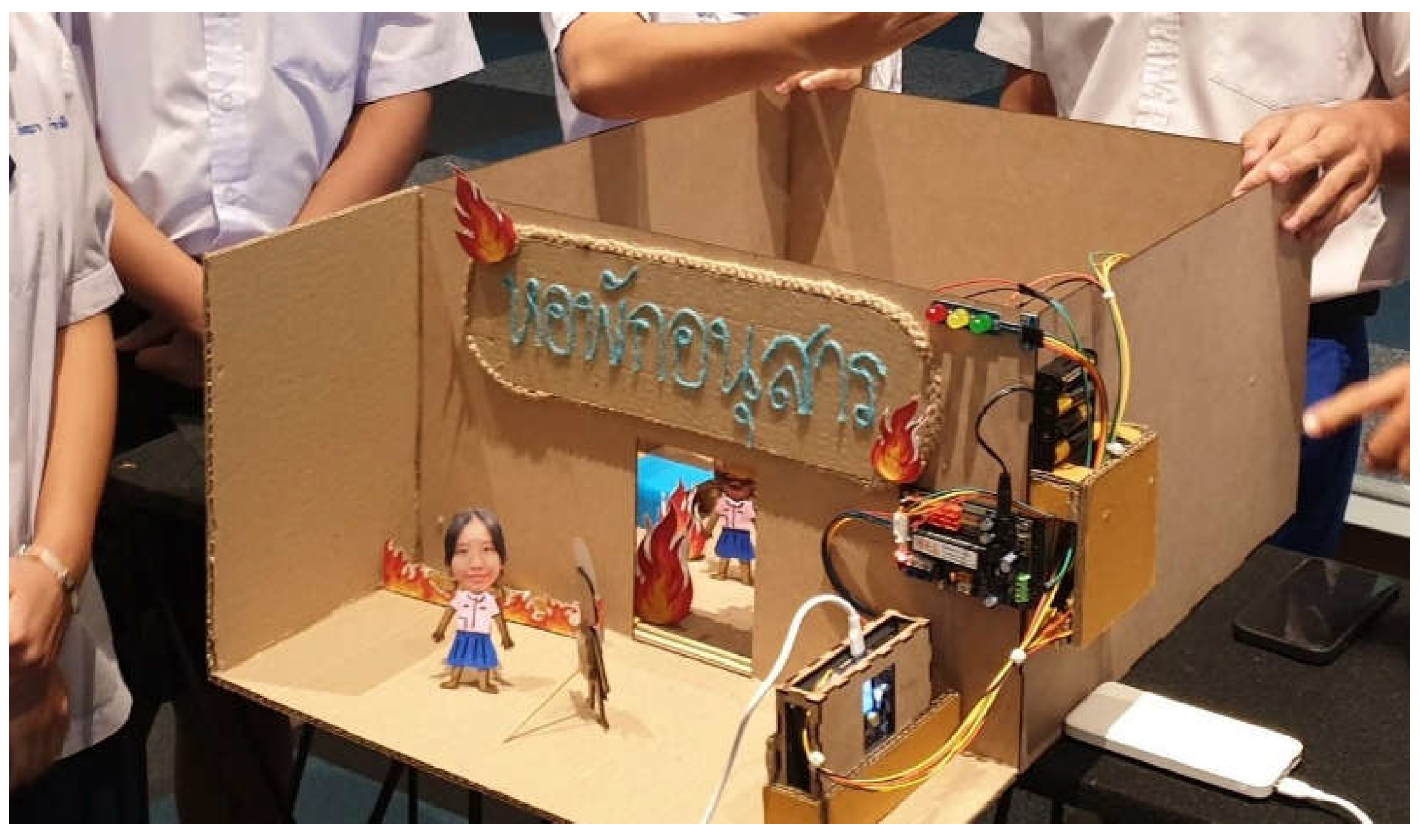

3.4. Students’ AI-based automated system

4. Discussion

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
| --- | --- |
| AI | Artificial Intelligence |
| CFS | Competencies Framework for Students |
| CT | Computational Thinking |
| STEAM | Science, Technology, Engineering, Art, and Mathematics |

| Participants | Males | Females | Total |
| --- | --- | --- | --- |
| Number of participants | 21 | 15 | 36 |
| Percentage of participants | 58.33% | 41.67% | 100% |

| Question | Choice a | Choice b | Choice c | Choice d |
| --- | --- | --- | --- | --- |
| 1. Why should we learn about Artificial Intelligence (AI)? | To develop essential learning and life skills such as analytical and creative thinking. | To prepare ourselves for living in an AI-driven world. | To understand and use technologies effectively. | All of the above. |
| 2. AI is becoming a bigger part of our daily routines. Which of the following is not a typical example of AI in everyday life? | Automatic sliding doors. | Chatbots. | Facial recognition systems. | Route recommendation systems on Google Maps. |
| 3. What is KidBright µAI? | A controllable robot that responds to block commands. | A learning tool designed to support AI education in a STEM/STEAM approach. | A robot used in industrial factories. | Coding software. |
| 4. What are the working processes of KidBright µAI? | Annotating data -> Collecting data -> Training AI model -> Implementing the trained model. | Collecting data -> Training AI model -> Annotating data -> Implementing the trained model. | Implementing the trained model -> Training AI model -> Annotating data -> Collecting data. | Collecting data -> Annotating data -> Training AI model -> Implementing the trained model. |
| 5. In the context of machine learning, a camera functions as eyes, a microphone as ears, and wheels as legs. With this analogy, which human organ is most like the KidBright µAI board? | Mouth. | Heart. | Nose. | Brain. |
| 6. Which category of AI system does KidBright µAI belong to? | Semi-supervised learning – Classification. | Semi-supervised learning – Generative model. | Supervised learning – Classification. | Supervised learning – Generative model. |
| 7. Which practice is correct when gathering images for classification or object detection training? | Capture all images of objects from afar for higher accuracy. | Capture all images of objects from one fixed angle for higher accuracy. | Capture many clear images from various angles and distances to increase data diversity. | Position objects close to the lens to emphasize surface detail. |
| 8. If identical labels are assigned to different objects, how would this affect training in the KidBright µAI system? | No effect, since KidBright µAI trains its model using images only. | A major effect, as KidBright µAI trains its model from both object images and their labels. | A minor effect, as KidBright µAI may be confused but could learn to distinguish differences. | None of the above. |
| 9. Which of the following scenarios demonstrates the use of AI? | Choojai uploads travel photos to social media platforms (Facebook, Instagram). | Piti uses Siri on an iPhone to search for information. | Mana attends online classes during the COVID-19 outbreak. | Mani orders food via an online delivery service to her home. |
| 10. Which button in the KidBright µAI IDE is used for KidBright µAI board connection? | Button 1. | Button 2. | Button 3. | Button 4. |
| 11. What is the purpose of the “Upload” button in the KidBright µAI IDE? | To convert block-based commands into machine code and send the converted code to the KidBright µAI board for execution. | To send the AI model to the KidBright µAI board for execution. | To convert block-based commands into machine code and send both the converted code and the AI model to the KidBright µAI board for execution. | To send the image dataset used for training to the KidBright µAI board. |
| 12. Which types of AI models can be created using the KidBright µAI IDE? | Image classification. | Object detection. | Voice classification. | All of the above. |
| 13. Which plugin block is used to send data from the KidBright µAI board to the cloud? | iKB1 plugin. | MQTT plugin. | I2C plugin. | DHT plugin. |
| 14. Which of the following statements are true? | Image classification and object detection are the same. | Image classification analyzes an entire image to identify what it is. | Object detection analyzes an image to locate and identify specific objects in an image. | Both b and c are correct. |

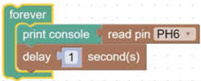

| Question | Choice a | Choice b | Choice c | Choice d |
| --- | --- | --- | --- | --- |
| 15. What is the purpose of the command blocks below? | Communicating between the KidBright µAI board and external sensors. | Performing conditional logic operations. | Displaying image and text on screen. | Performing mathematical operations. |
| 16. How do the command blocks below function? | Send data from the KidBright µAI board to a digital sensor at the PH6 port. | Send data from the KidBright µAI board to an analog sensor at the PH6 port. | Read data from a digital sensor at the PH6 port. | Read data from an analog sensor at the PH6 port. |

| Criteria | Rating (5 to 1) |
| --- | --- |
| 1. Creativity in design. | 5 = new ideas; 1 = no new ideas. |
| 2. Innovation functionality. | 5 = complete; 1 = incomplete. |
| 3. Innovation complexity. | 5 = complex; 1 = not complex. |
| 4. Relevance to real-life problems. | 5 = relevant; 1 = irrelevant. |
| 5. Concepts of the innovations. | 5 = correct; 1 = incorrect. |

| Number | Question |
| --- | --- |
| 1. | What motivated you to develop this innovation? |
| 2. | Which challenges did you face during the development process? |
| 3. | How did you address or overcome these challenges? |
| 4. | What are potential improvements that can be made to your innovation? |
| 5. | Can the concept behind your innovation be applied to solve other problems? If so, how? |

| Test | Males | Females | Mean of Total Students |
| --- | --- | --- | --- |
| Pre-test Mean | 40.47% | 55% | 47.74% |
| Post-test Mean | 61.90% | 89.58% | 75.74% |
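
As a check on the arithmetic, the totals in the table above agree, to within rounding, with the simple (unweighted) average of the male and female means. The Python sketch below reproduces that calculation and, for comparison, the mean weighted by group size, which comes out somewhat lower because the smaller female group scored higher. The variable and function names are illustrative only, and the group sizes (21 males, 15 females) are taken from the participants table on the assumption that all 36 participants sat both tests.

```python
# Group means (percent) as reported in the pre-/post-test table.
means = {
    "pre": {"male": 40.47, "female": 55.00},
    "post": {"male": 61.90, "female": 89.58},
}

# Group sizes from the participants table
# (assumption: the same 36 students took both tests).
sizes = {"male": 21, "female": 15}


def unweighted_mean(group_means):
    """Simple average of the subgroup means."""
    return sum(group_means.values()) / len(group_means)


def weighted_mean(group_means, group_sizes):
    """Average of the subgroup means, weighted by subgroup size."""
    total = sum(group_sizes[g] for g in group_means)
    return sum(group_means[g] * group_sizes[g] for g in group_means) / total


for test, group_means in means.items():
    print(
        test,
        f"unweighted={unweighted_mean(group_means):.2f}%",
        f"weighted={weighted_mean(group_means, sizes):.2f}%",
    )
```

Which of the two figures is more appropriate depends on whether the total is meant to describe the average student or the average of the two groups.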

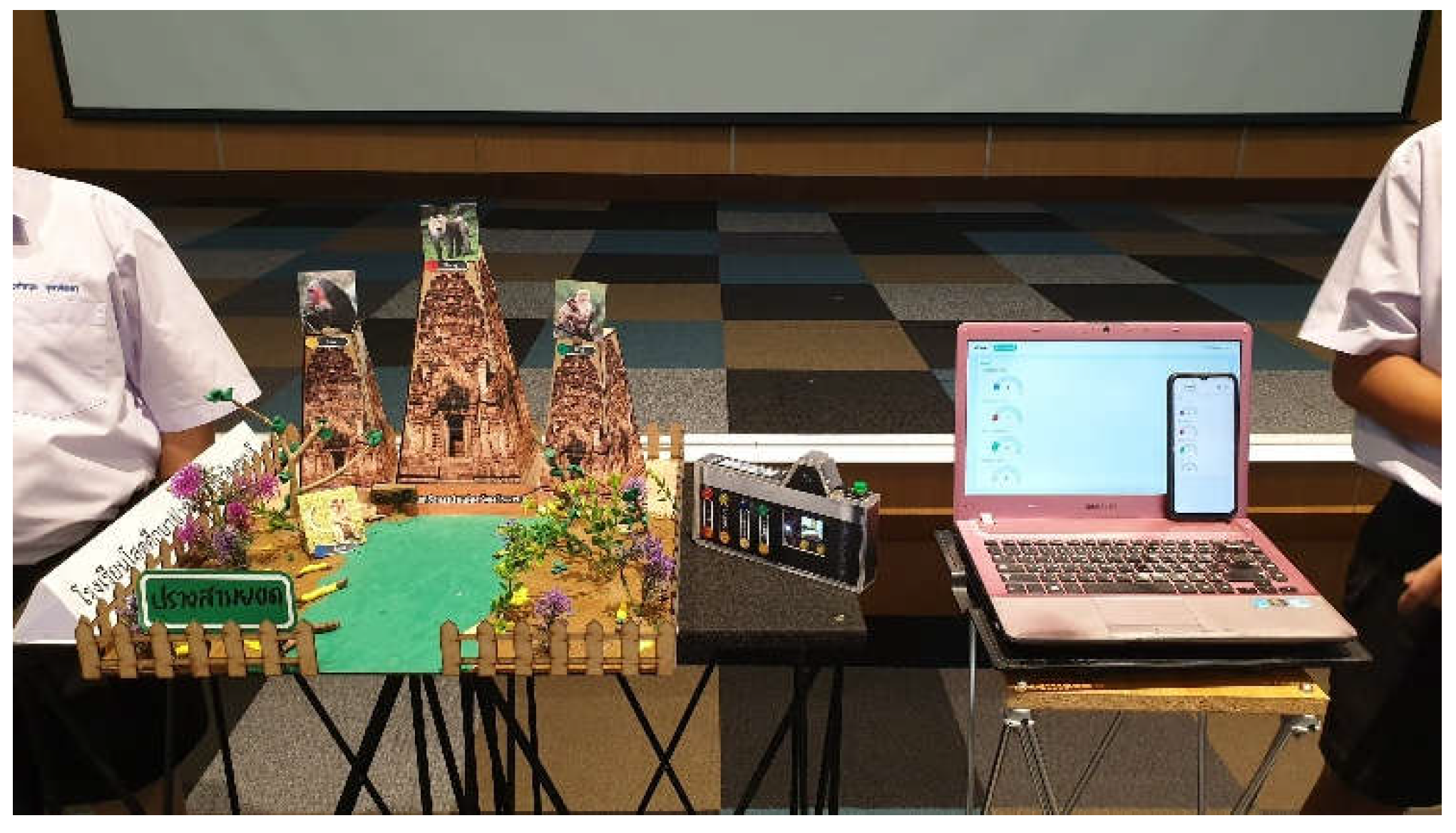

| Criteria | Group 1 | Group 2 | Group 3 | Group 4 | Group 5 | Group 6 | Group 7 | Average |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| 1. Creativity in design. | 75% | 85% | 75% | 85% | 95% | 80% | 100% | 85% |
| 2. Innovation functionality. | 60% | 60% | 60% | 75% | 85% | 55% | 90% | 69.29% |
| 3. Innovation complexity. | 60% | 65% | 55% | 80% | 75% | 60% | 85% | 68.57% |
| 4. Relevance to real-life problems. | 70% | 75% | 70% | 85% | 80% | 70% | 90% | 77.14% |
| 5. Concepts of the innovations. | 60% | 65% | 65% | 75% | 85% | 65% | 85% | 71.43% |
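
The Average column in the table above is consistent with the arithmetic mean of the seven group scores for each criterion. A short Python sketch of that calculation, using the values from the table (the dictionary layout and function name are illustrative, not part of the study's tooling):

```python
# Rubric scores (percent) for Groups 1-7, copied from the table above.
scores = {
    "Creativity in design": [75, 85, 75, 85, 95, 80, 100],
    "Innovation functionality": [60, 60, 60, 75, 85, 55, 90],
    "Innovation complexity": [60, 65, 55, 80, 75, 60, 85],
    "Relevance to real-life problems": [70, 75, 70, 85, 80, 70, 90],
    "Concepts of the innovations": [60, 65, 65, 75, 85, 65, 85],
}


def criterion_average(values):
    """Arithmetic mean of the group scores for one criterion."""
    return sum(values) / len(values)


for criterion, values in scores.items():
    print(f"{criterion}: {criterion_average(values):.2f}%")
```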

| Question | Group 1 | Group 2 | Group 3 | Group 4 | Group 5 | Group 6 | Group 7 | Average |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| 1. What motivated you to develop this innovation? | 75% | 75% | 75% | 75% | 85% | 70% | 80% | 76.43% |
| 2. Which challenges did you face during the development process? | 75% | 65% | 55% | 80% | 80% | 70% | 80% | 72.14% |
| 3. How did you address or overcome these challenges? | 80% | 70% | 60% | 75% | 80% | 65% | 80% | 72.86% |
| 4. What are potential improvements that can be made to your innovation? | 70% | 70% | 65% | 75% | 85% | 65% | 75% | 72.14% |
| 5. Can the concept behind your innovation be applied to solve other problems? If so, how? | 60% | 60% | 60% | 75% | 85% | 55% | 90% | 69.29% |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).