Submitted: 10 February 2026

Posted: 15 February 2026

Abstract

Keywords:

1. Introduction

1.1. The cognitive background: from gossips to heuristics

1.2. Cognitive Heuristics

1.3. Modeling humans and recommender systems interplay

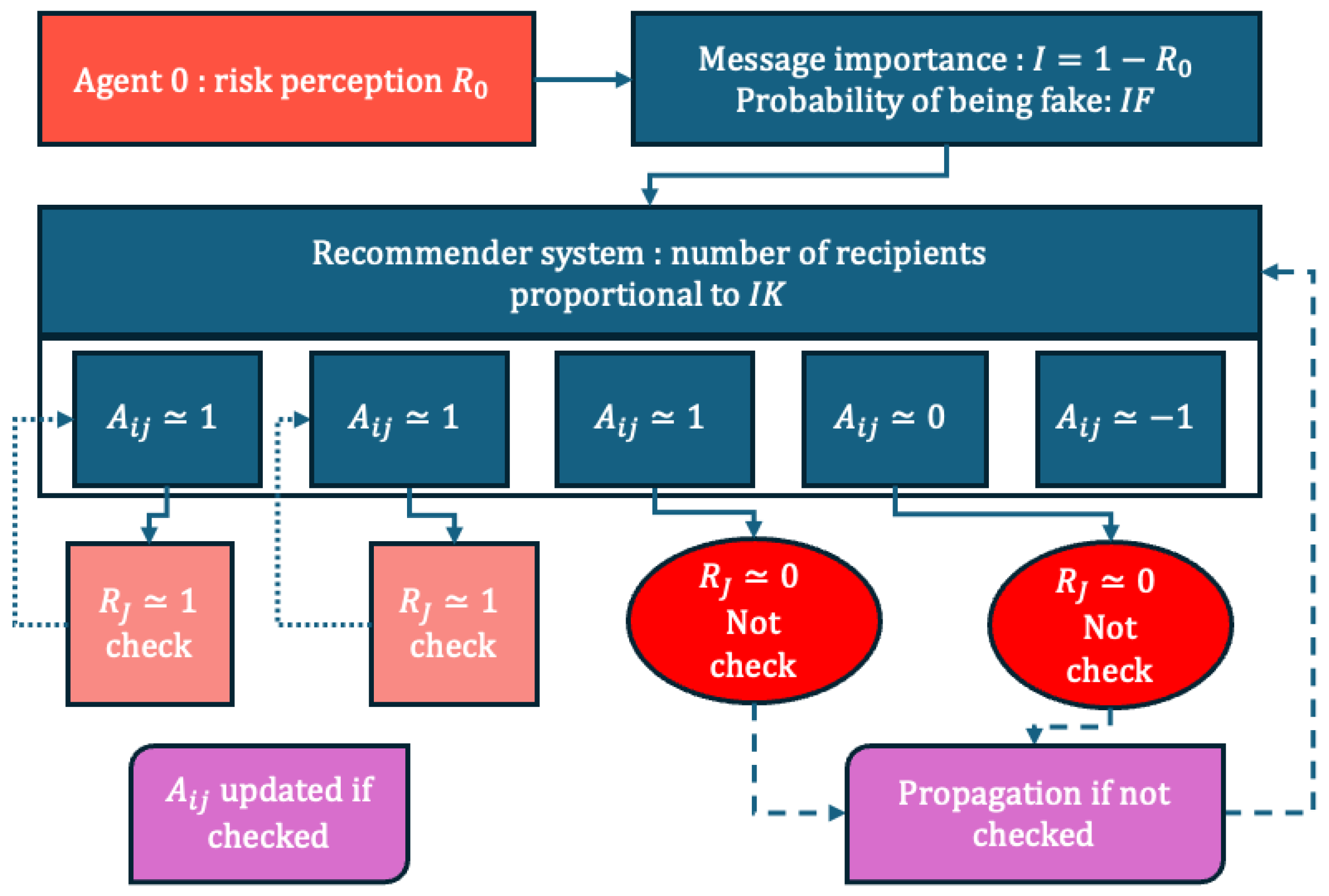

2. The Computer Model

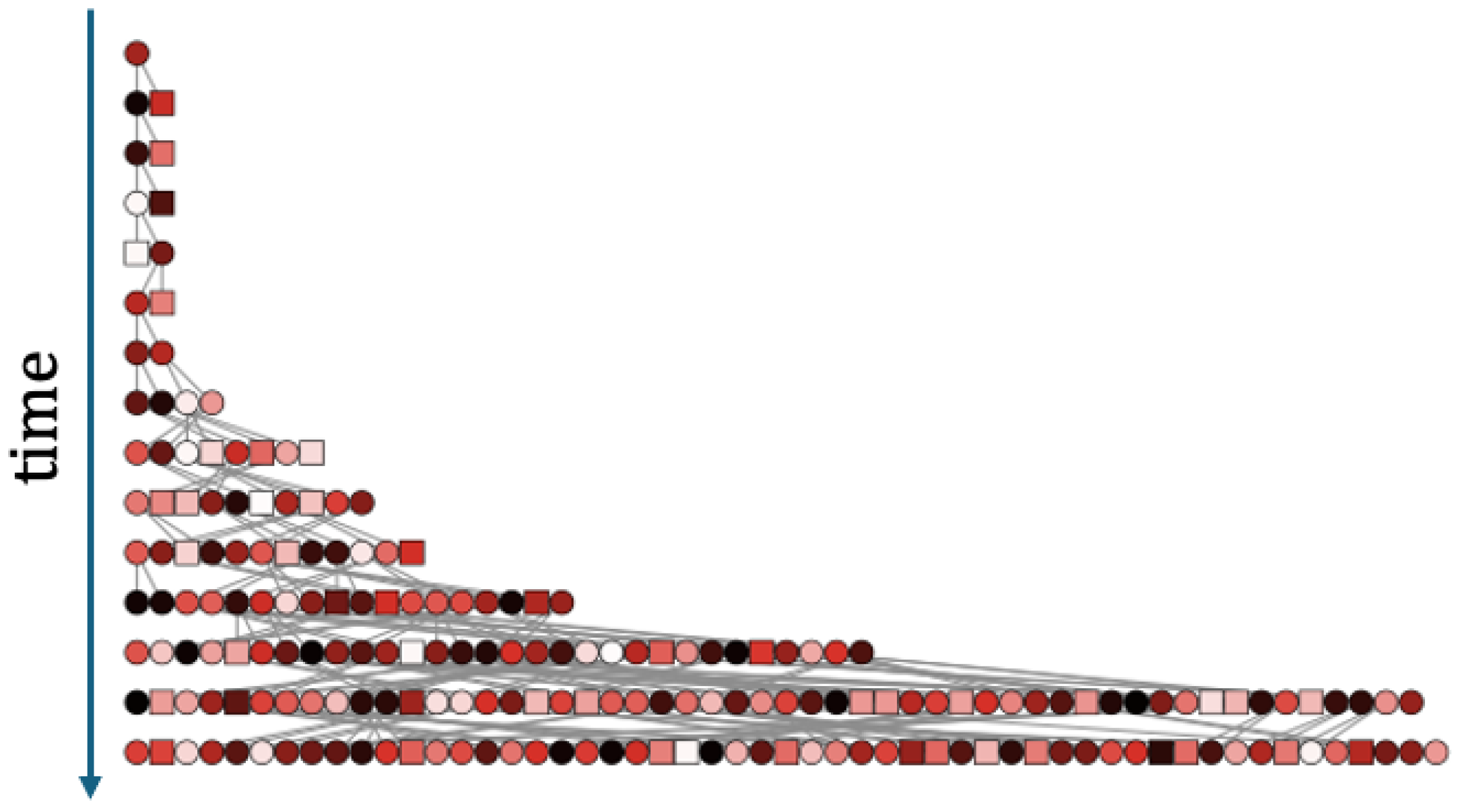

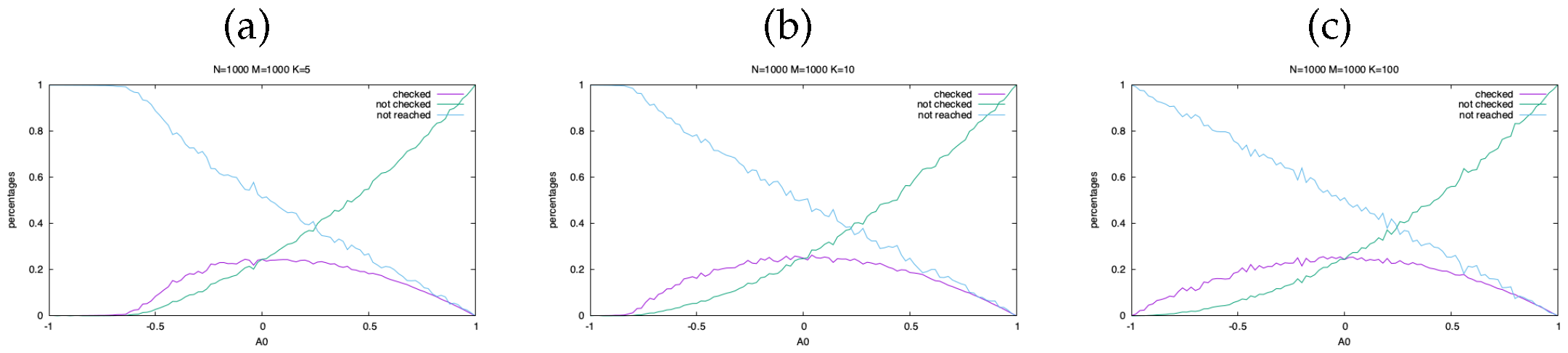

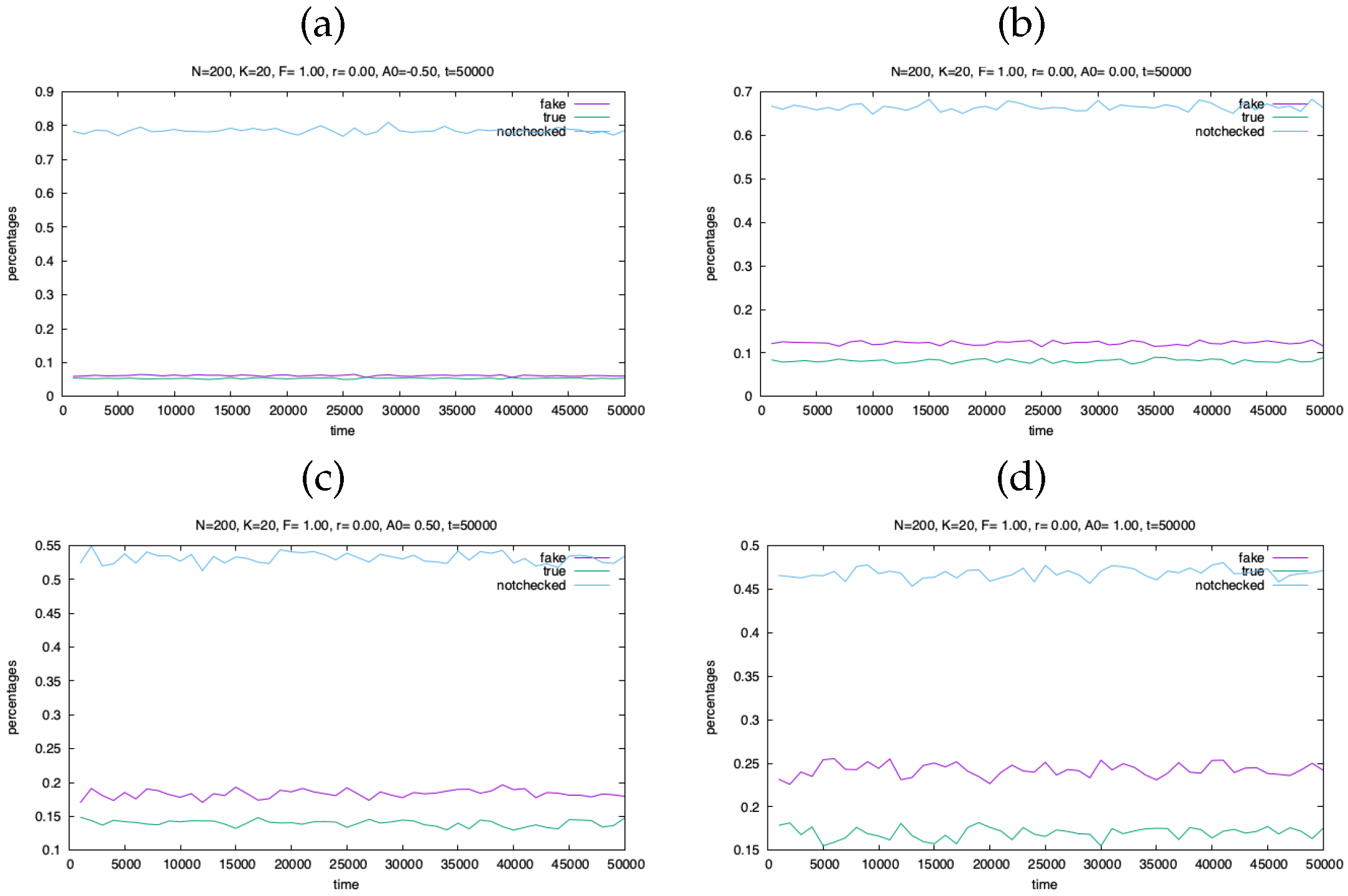

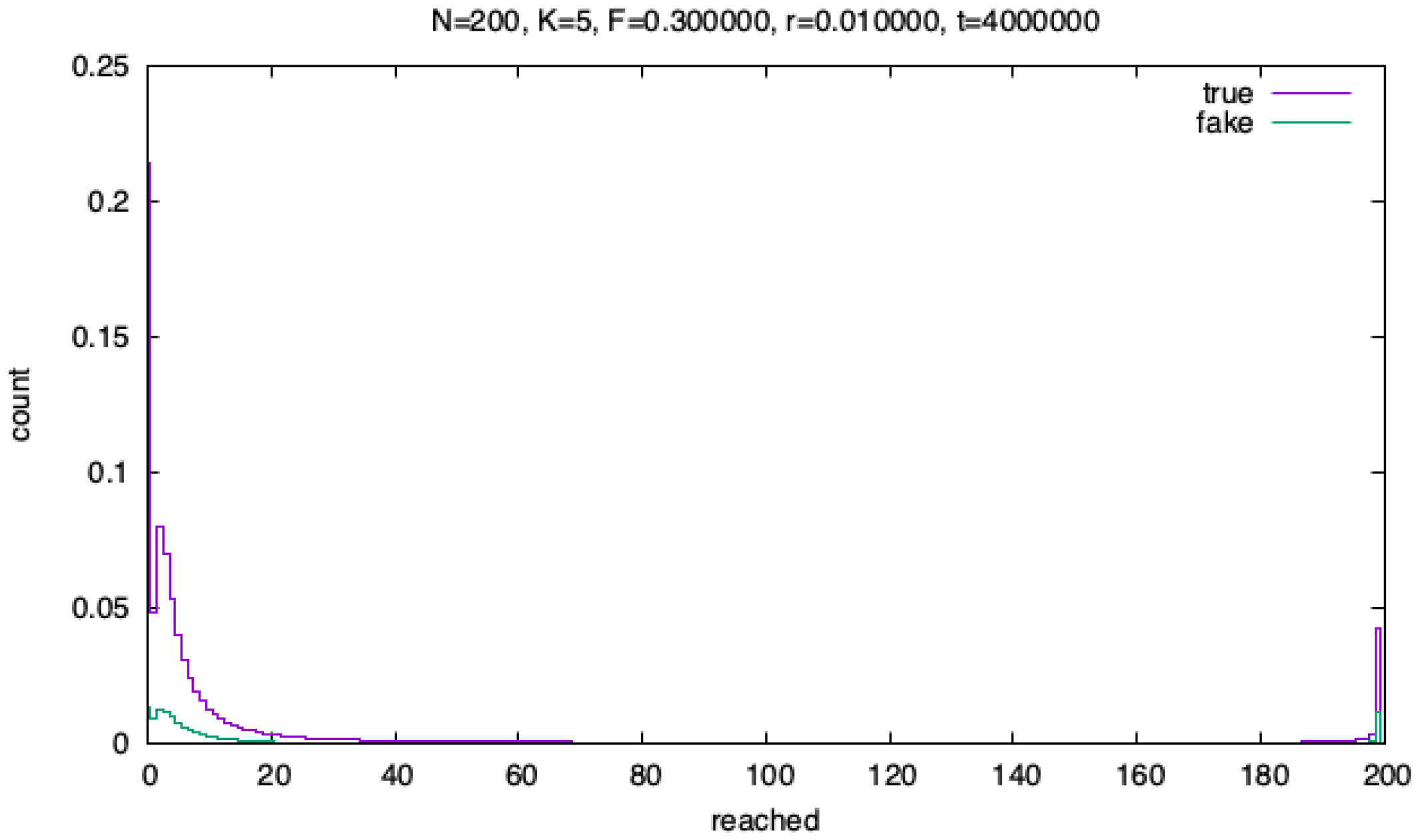

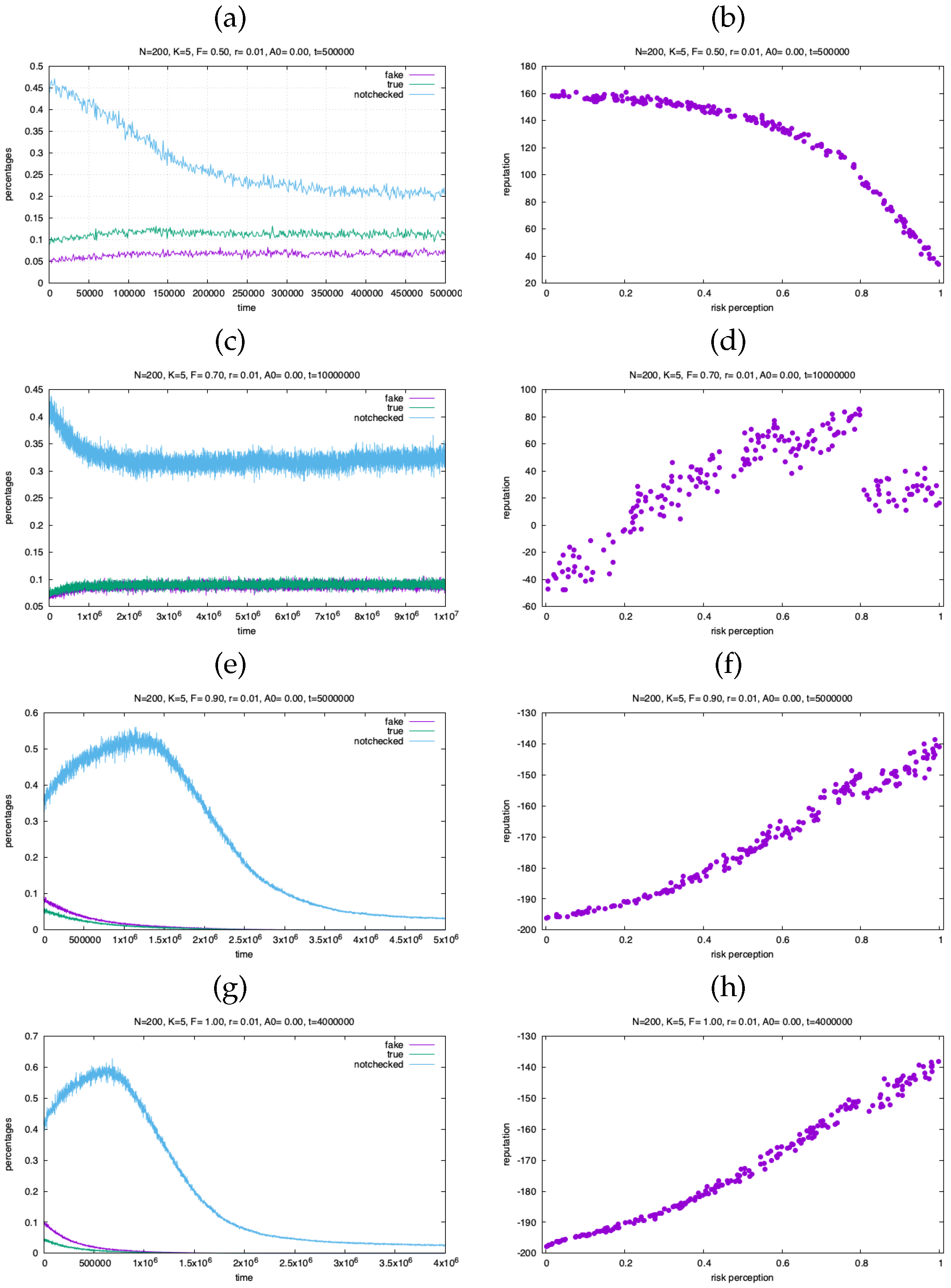

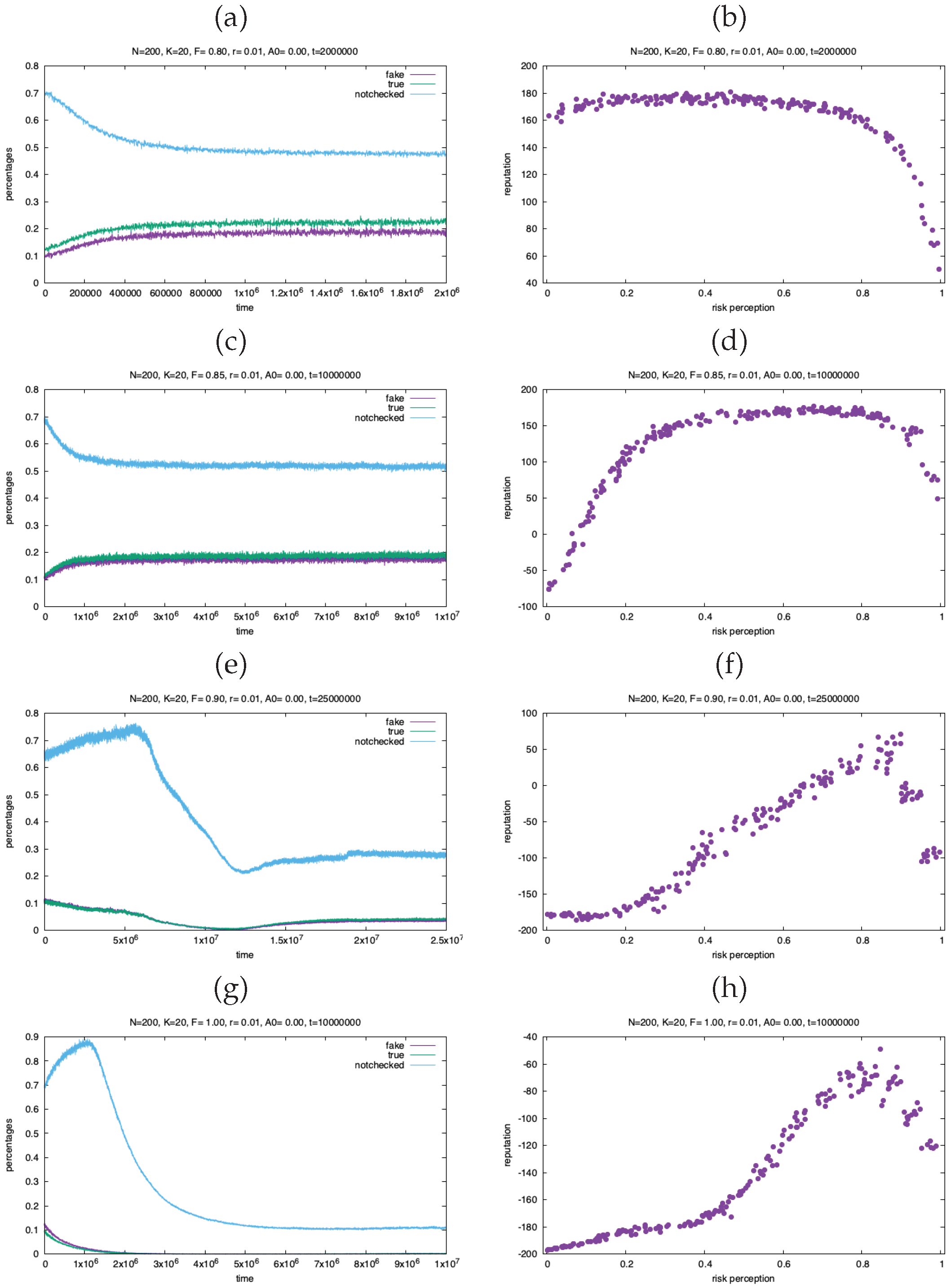

3. Simulation Results

4. Discussion: Bridging Modern Recommender Systems and Cognitive Modeling

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).