Submitted: 11 February 2026

Posted: 12 February 2026

Abstract

Keywords:

1. Introduction

- 1.

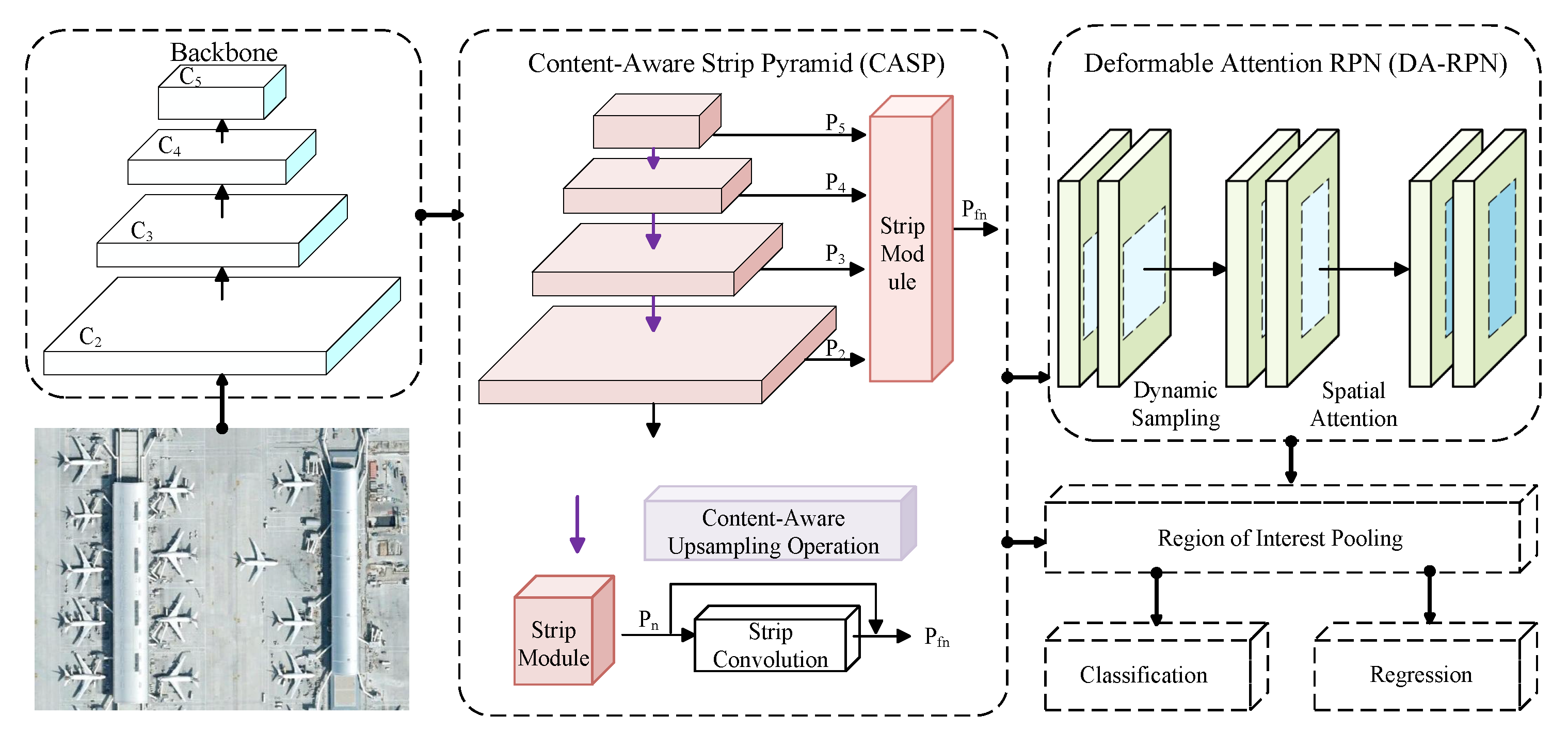

- A dual-attention–guided detection model, DAFSDet, is proposed under the transfer learning paradigm. By combining two specialized modules for multi-scale target perception and background suppression, this approach improves feature learning from limited samples in remote sensing imagery.

- 2.

- The Content-Aware Strip Pyramid (CASP) module improves multi-scale feature representation. It combines content-aware upsampling with bidirectional strip convolutions to focus attention on relevant spatial regions and capture long-range contextual information, forming a spatial-semantic attention mechanism. As a result, CASP produces multi-scale features that blend semantic richness with spatial details, creating a solid basis for few-shot object detection.

- 3.

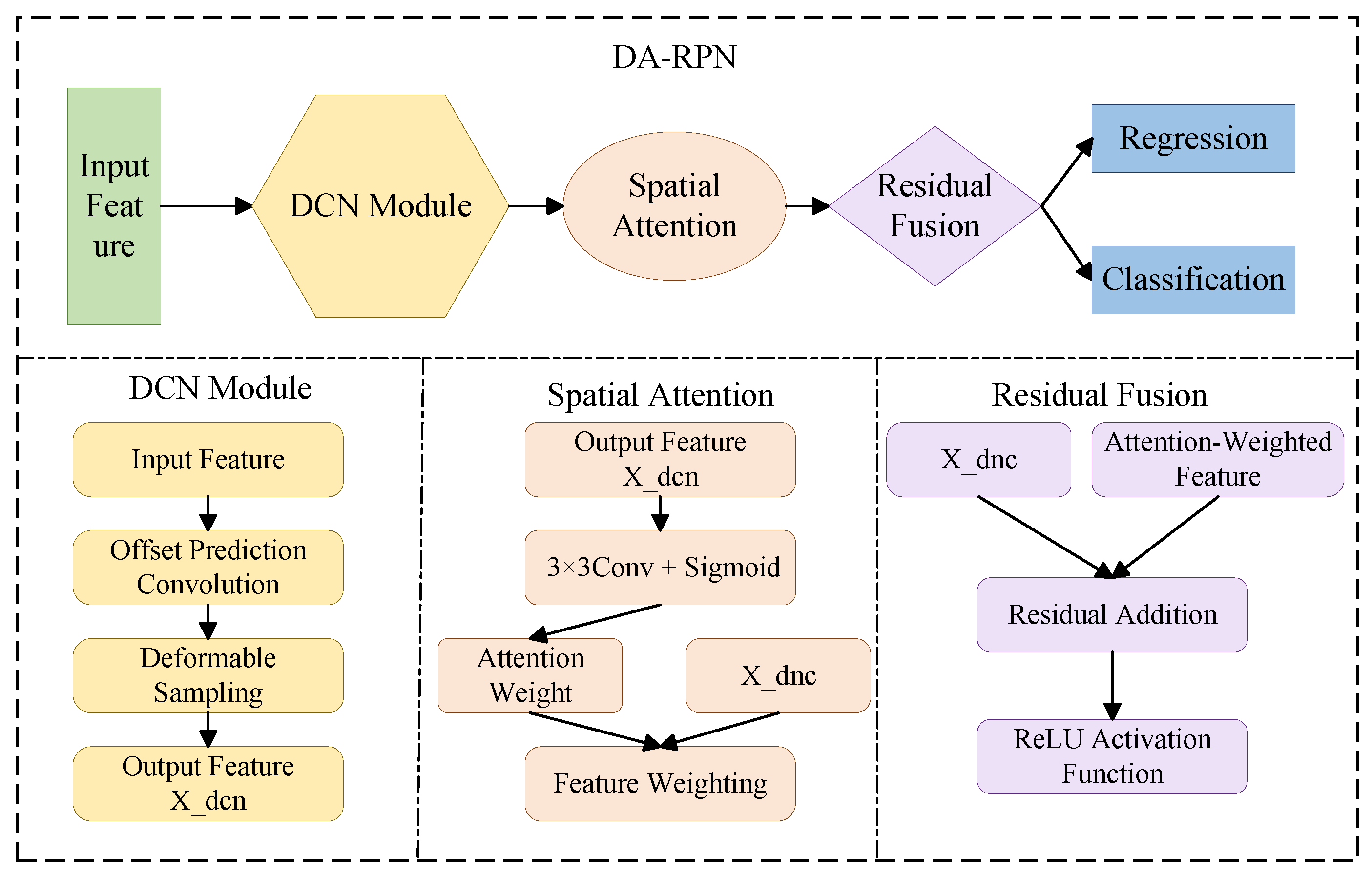

- The Deformable Attention Region Proposal Network (DA-RPN) enhances localization accuracy for targets in complex backgrounds. By combining deformable convolutions with a spatial attention mechanism, this network allows the receptive field to conform to target geometries and automatically attend to important foreground regions, effectively reducing background interference and improving the quality of candidate proposals.

2. Related Work

2.1. Few-Shot Object Detection

2.2. Few-Shot Object Detection in Remote Sensing Images

3. Methods

3.1. Preliminaries

3.2. Overall Network Architecture

- Neck: The CASP module combines content-aware upsampling with bidirectional strip convolution to form a collaborative attention mechanism across spatial and semantic dimensions. It improves multi-scale feature representations and strengthens the model’s ability to capture long-range context. As a result, the network produces multi-scale features that are semantically rich and spatially detailed, providing a solid foundation for few-shot detection.

- Detection Head: The DA-RPN uses deformable convolutions to adapt to the geometric shapes of targets and applies spatial attention to dynamically emphasize key feature regions. This design suppresses interference from complex backgrounds and strengthens the foreground response, yielding higher-quality candidate regions with more accurate localization.

3.3. Content-Aware Strip Pyramid (CASP)
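
To make the strip-convolution idea concrete, the following is a minimal, dependency-free sketch and not the CASP implementation. It applies a 1-D kernel along rows and along columns of a single-channel map and sums the two responses. The function names, the additive fusion of the two directions, and the uniform kernel weights are illustrative assumptions; the content-aware upsampling step is not modelled here.

```python
def strip_conv(feature, weights, axis):
    """Slide a 1-D strip kernel along columns (axis=0) or rows (axis=1), zero-padded."""
    h, w = len(feature), len(feature[0])
    r = len(weights) // 2
    out = [[0.0] * w for _ in range(h)]
    for i in range(h):
        for j in range(w):
            acc = 0.0
            for t, wt in enumerate(weights):
                # Shift only along the chosen axis; the other coordinate is fixed.
                ii = i + (t - r if axis == 0 else 0)
                jj = j + (t - r if axis == 1 else 0)
                if 0 <= ii < h and 0 <= jj < w:
                    acc += wt * feature[ii][jj]
            out[i][j] = acc
    return out


def bidirectional_strip(feature, weights):
    """Sum of horizontal and vertical strip responses (fusion rule is an assumption)."""
    horiz = strip_conv(feature, weights, axis=1)
    vert = strip_conv(feature, weights, axis=0)
    return [[a + b for a, b in zip(rh, rv)] for rh, rv in zip(horiz, vert)]


# A single activation in the centre of a 5x5 map.
impulse = [[0.0] * 5 for _ in range(5)]
impulse[2][2] = 1.0
context = bidirectional_strip(impulse, [1.0] * 5)
# The activation now reaches every cell in row 2 and column 2,
# while cells off both strips (for example the corners) stay at zero.
```

A strip of length k uses k weights per direction, compared with k² for a square kernel of the same reach, which is one reason strip kernels are attractive for elongated structures and long-range context.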

3.4. Deformable Attention Region Proposal Network (DA-RPN)
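
The two ingredients named for DA-RPN, deformable sampling and spatial attention, can be illustrated with a small sketch on a single-channel map. This is a simplified illustration under stated assumptions, not the network itself: in a trained model the per-tap offsets and the attention logits would be predicted by learned layers, whereas here they are passed in by hand, and all function names are hypothetical.

```python
import math


def bilinear_sample(feature, y, x):
    """Read a 2-D map at a fractional position; locations outside the map count as 0."""
    h, w = len(feature), len(feature[0])
    y0, x0 = math.floor(y), math.floor(x)
    value = 0.0
    for yy, wy in ((y0, 1 - (y - y0)), (y0 + 1, y - y0)):
        for xx, wx in ((x0, 1 - (x - x0)), (x0 + 1, x - x0)):
            if 0 <= yy < h and 0 <= xx < w:
                value += wy * wx * feature[yy][xx]
    return value


def deformable_response(feature, i, j, weights, offsets):
    """3x3 kernel at (i, j) whose nine taps are displaced by per-tap (dy, dx) offsets."""
    taps = [(di, dj) for di in (-1, 0, 1) for dj in (-1, 0, 1)]
    return sum(
        w * bilinear_sample(feature, i + di + oy, j + dj + ox)
        for w, (di, dj), (oy, ox) in zip(weights, taps, offsets)
    )


def spatial_attention(feature, logits):
    """Scale every location by sigmoid(logit), damping low-scoring (background) cells."""
    return [
        [f / (1.0 + math.exp(-l)) for f, l in zip(row_f, row_l)]
        for row_f, row_l in zip(feature, logits)
    ]


# Toy map with values 4 * row + col, and a uniform 3x3 averaging kernel.
ramp = [[4.0 * r + c for c in range(4)] for r in range(4)]
uniform = [1.0 / 9.0] * 9
rigid = deformable_response(ramp, 1, 1, uniform, [(0.0, 0.0)] * 9)    # regular grid
shifted = deformable_response(ramp, 1, 1, uniform, [(0.5, 0.5)] * 9)  # displaced grid
gated = spatial_attention([[2.0, 2.0]], [[0.0, 10.0]])
```

With zero offsets the kernel reduces to an ordinary 3×3 convolution; non-zero offsets move the sampling grid, which is how the receptive field can follow an object's shape. The attention gate leaves high-logit locations almost unchanged and halves a location whose logit is zero.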

4. Experimental Results and Analysis

4.1. Datasets and Evaluation Metrics

4.2. Experimental Setup

4.3. Experimental Results and Comparisons

4.3.1. Experimental Results on the DIOR Dataset

4.3.2. Experimental Results on the NWPU VHR-10 Dataset

4.4. Ablation Study

4.4.1. Effect of CASP

4.4.2. Effect of DA-RPN

4.4.3. Combined Effect

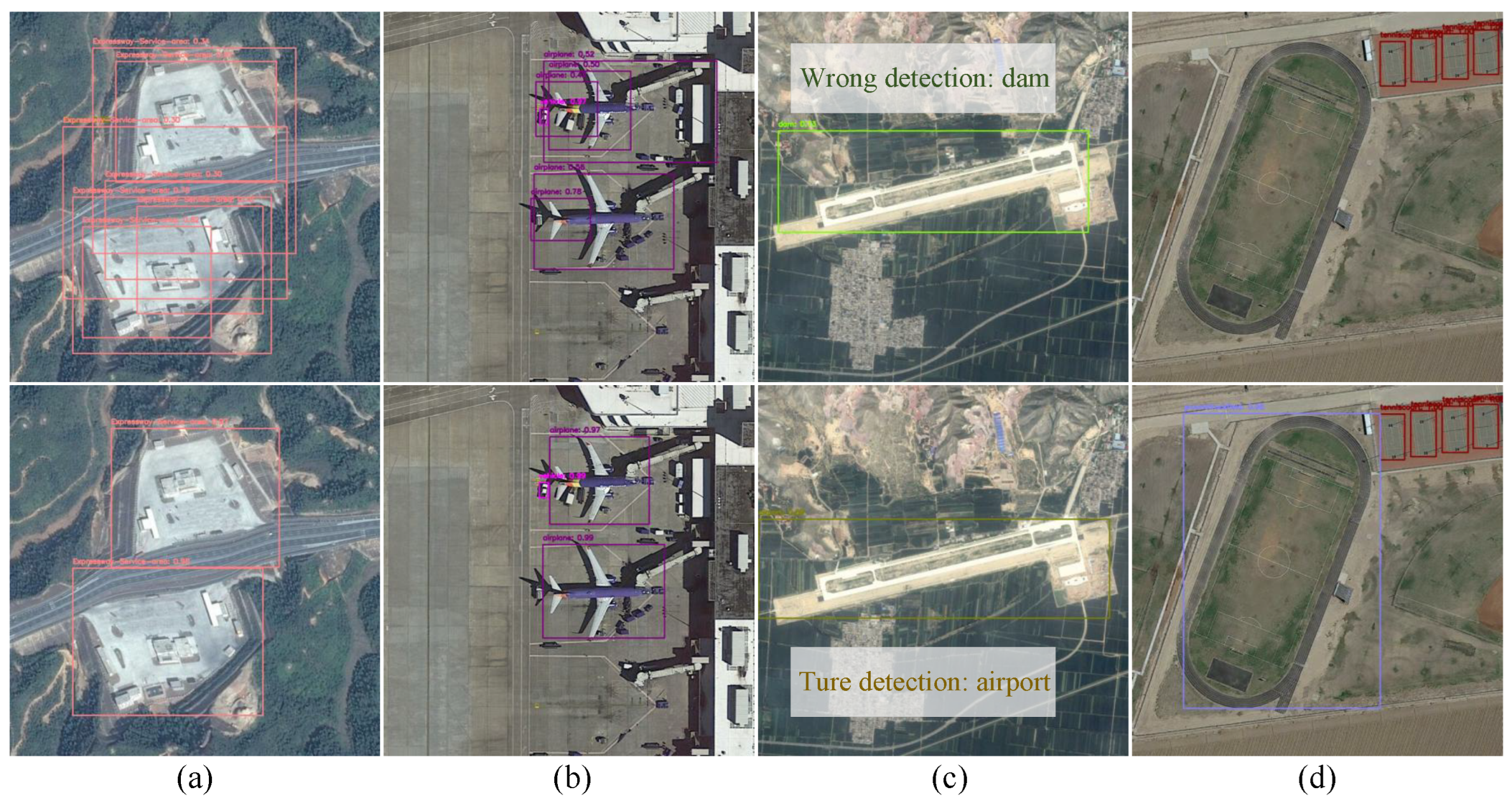

4.5. Failure Case Analysis

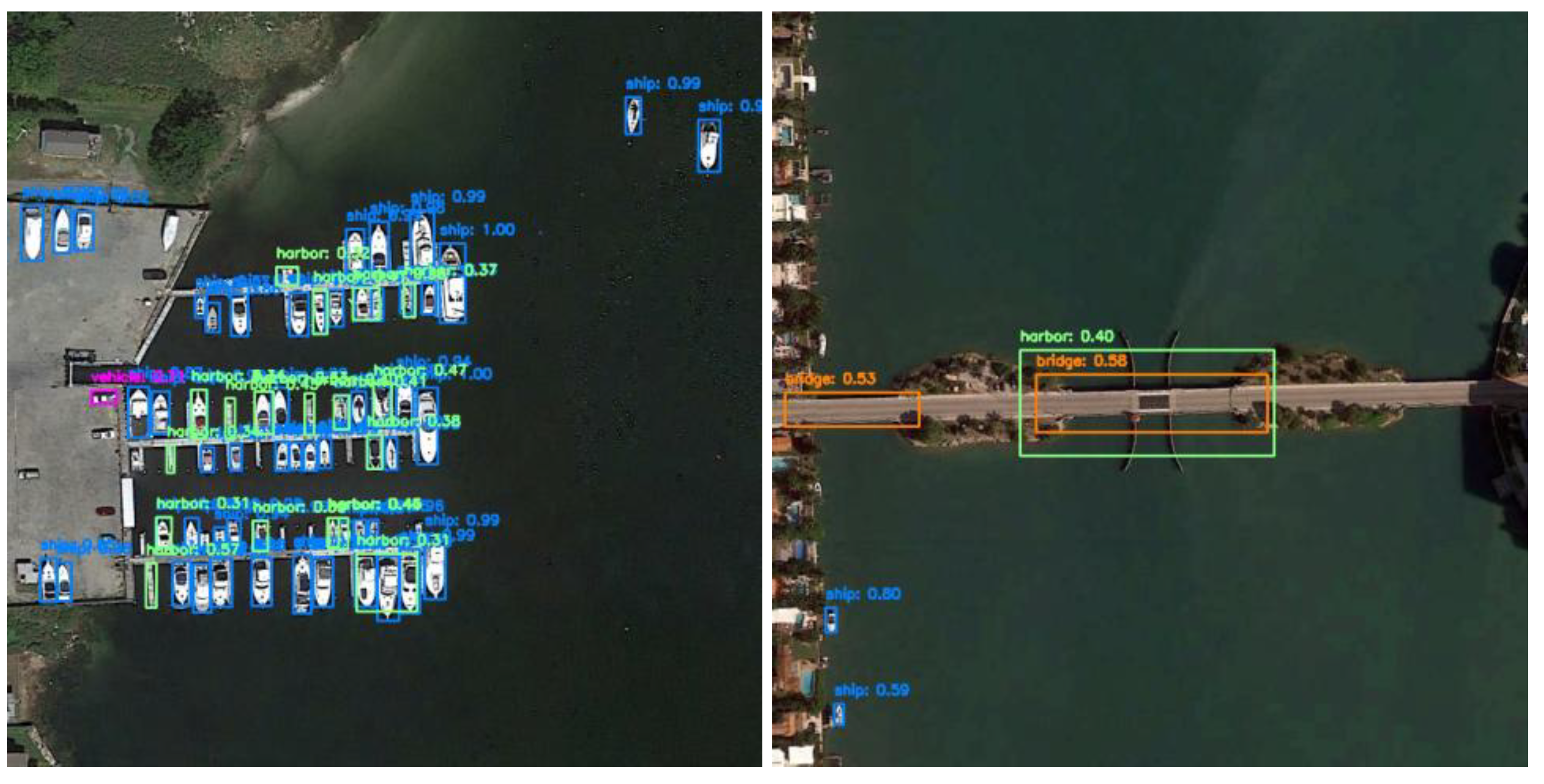

- Performance degradation in densely distributed small-object scenes. In typical remote sensing scenarios, targets such as ships often exhibit high density, small scale, and compact spatial arrangements. Due to extremely close distances between objects and frequent boundary overlaps, the proposed method may still suffer from missed detections or redundant predictions during region proposal generation and subsequent classification. In particularly crowded areas, the discriminative features of small targets are easily overwhelmed by neighboring objects or background clutter, leading to lower detection confidence or missed detections.

- Confusion among visually similar categories. Objects such as bridges, harbors, and overpasses share similar geometric structures and texture patterns when observed from an aerial perspective. In few-shot settings, the scarcity of category-specific samples hinders the model from acquiring adequately discriminative features, resulting in confusion between classes. As shown in Figure 6, some bridge regions are misclassified as harbors or overpasses, indicating that fine-grained category discrimination remains challenging when visual differences between classes are subtle.
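
The first failure mode can be illustrated with a short sketch of box overlap and greedy non-maximum suppression (NMS). The text above does not describe the post-processing used, so a standard IoU-based NMS step is assumed here; the boxes, scores, and thresholds are invented toy values chosen only to show the trade-off.

```python
def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    x1, y1 = max(a[0], b[0]), max(a[1], b[1])
    x2, y2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, x2 - x1) * max(0.0, y2 - y1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, threshold):
    """Greedy NMS: keep a box only if it overlaps no kept box by more than threshold."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        if all(iou(boxes[i], boxes[k]) <= threshold for k in keep):
            keep.append(i)
    return keep


# Toy scene (assumed values): two tightly packed objects plus a duplicate of the first.
boxes = [
    (0.0, 0.0, 10.0, 4.0),  # object A
    (0.0, 2.0, 10.0, 6.0),  # object B, overlapping A
    (0.0, 0.5, 10.0, 4.5),  # duplicate prediction of A
]
scores = [0.9, 0.8, 0.7]

strict = nms(boxes, scores, 0.3)    # B is suppressed: a missed detection
balanced = nms(boxes, scores, 0.5)  # A and B survive, the duplicate is removed
loose = nms(boxes, scores, 0.8)     # the duplicate survives: a redundant prediction
```

When neighbouring objects overlap almost as much as duplicate predictions of one object do, no single threshold separates the two cases cleanly, which is consistent with the missed detections and redundant predictions described for crowded scenes.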

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| RPN | Region Proposal Network |
| FPN | Feature Pyramid Network |
| FSOD | Few-Shot Object Detection |
| mAP | Mean Average Precision |
| SGD | Stochastic Gradient Descent |
| GPU | Graphics Processing Unit |

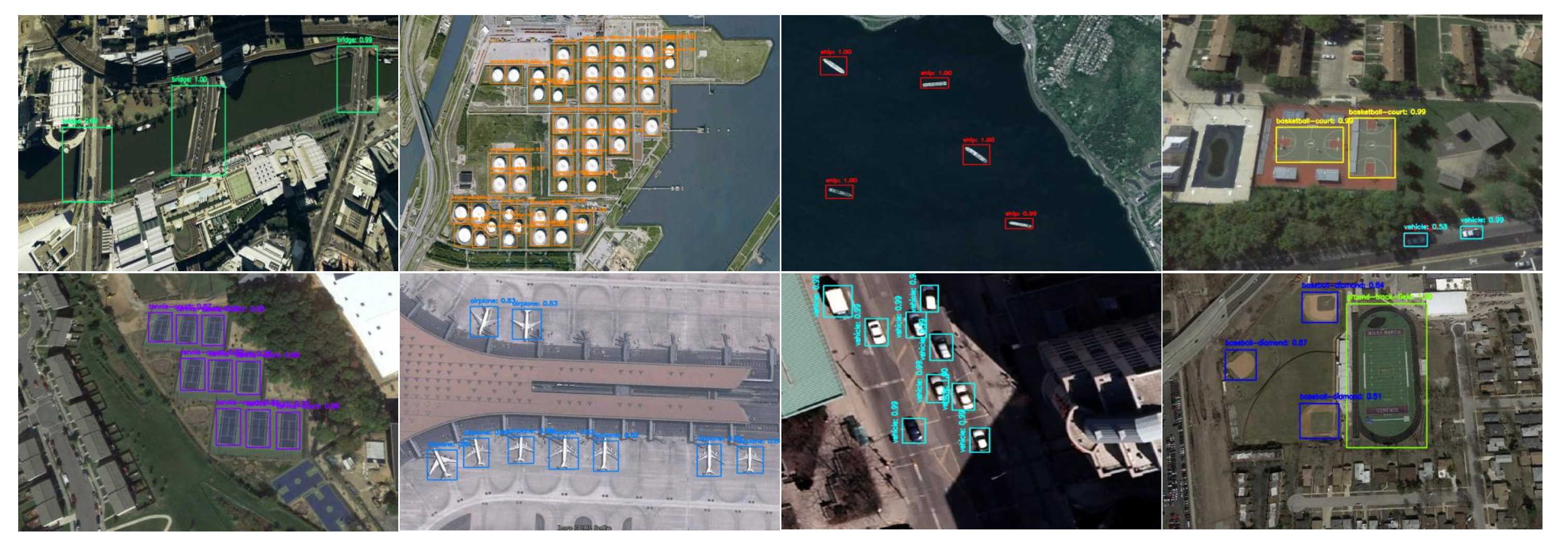

Appendix A. Task Setting and Dataset Characteristics of DIOR

Appendix A.1. Detection Task

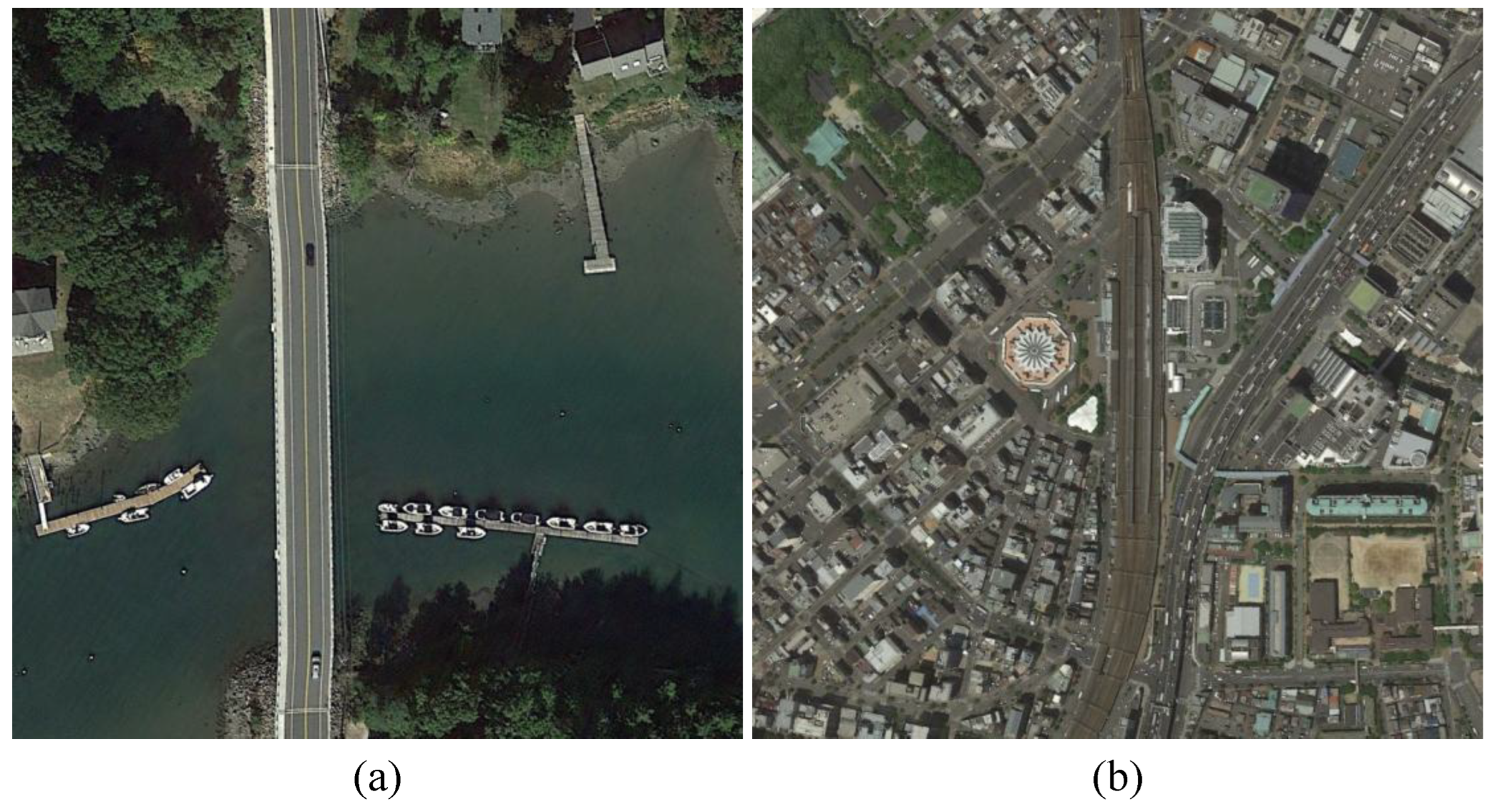

Appendix A.2. Image Properties and Scene Diversity

Appendix A.3. Category Structure

- Aerial transport: covering airplanes and airport facilities as seen from above.

- Maritime transport: including vessels and port facilities located along coastal and inland waterways.

- Road transport and associated infrastructure: including vehicles and highway-linked structures, for example, service zones, toll plazas, bridges, and flyovers.

- Rail transport: including railway stations and rail hubs with extended track arrangements.

Appendix A.4. Summary

Appendix B. Task Setting and Dataset Characteristics of NWPU VHR-10

Appendix B.1. Detection Task

Appendix B.2. Image Properties and Scene Diversity

Appendix B.3. Category Structure

- Transportation-related objects include airplanes, ships, vehicles, bridges, and harbors, which appear in scenes structured by runways, road systems, or water routes.

- Infrastructure and functional facilities are represented by storage tanks and ground track fields, characterized by regular geometry and extensive spatial coverage.

- Sports and recreational facilities include baseball fields, basketball courts, and tennis courts, which are distinguished by clear markings and highly symmetric layouts.

Appendix B.4. Summary

References

1. Li, B.; Zhou, Z.; Wu, T.; Luo, J. Fine-Grained Land Use Remote Sensing Mapping in Karst Mountain Areas Using Deep Learning with Geographical Zoning and Stratified Object Extraction. Remote Sens. 2025, 17, 2368.
2. Wang, Z.; Liang, Y.; He, Y.; Cui, Y.; Zhang, X. Refined Land Use Classification for Urban Core Area from Remote Sensing Imagery by the EfficientNetV2 Model. Appl. Sci. 2024, 14, 7235.
3. Zhang, R.; Tang, X.; You, S.; Duan, K.; Xiang, H.; Luo, H. A Novel Feature-Level Fusion Framework Using Optical and SAR Remote Sensing Images for Land Use/Land Cover (LULC) Classification in Cloudy Mountainous Area. Appl. Sci. 2020, 10, 2928.
4. Ragab, M.; Abdushkour, H. A.; Khadidos, A. O.; Alshareef, A. M.; Alyoubi, K. H.; Khadidos, A. O. Improved Deep Learning-Based Vehicle Detection for Urban Applications Using Remote Sensing Imagery. Remote Sens. 2023, 15, 4747.
5. Ye, F.; Ai, T.; Wang, J.; Yao, Y.; Zhou, Z. A Method for Classifying Complex Features in Urban Areas Using Video Satellite Remote Sensing Data. Remote Sens. 2022, 14, 2324.
6. Wang, M.; Ding, W.; Wang, F.; Song, Y.; Chen, X.; Liu, Z. A Novel Bayes Approach to Impervious Surface Extraction from High-Resolution Remote Sensing Images. Sensors 2022, 22, 3924.
7. Du, X.; Song, L.; Lv, Y.; Qiu, S. A Lightweight Military Target Detection Algorithm Based on Improved YOLOv5. Electronics 2022, 11, 3263.
8. Zeng, B.; Gao, S.; Xu, Y.; Zhang, Z.; Li, F.; Wang, C. Detection of Military Targets on Ground and Sea by UAVs with Low-Altitude Oblique Perspective. Remote Sens. 2024, 16, 1288.
9. Sun, L.; Chen, J.; Feng, D.; Xing, M. Parallel Ensemble Deep Learning for Real-Time Remote Sensing Video Multi-Target Detection. Remote Sens. 2021, 13, 4377.
10. Liu, J.; Luo, Y.; Chen, S.; Wu, J.; Wang, Y. BDHE-Net: A Novel Building Damage Heterogeneity Enhancement Network for Accurate and Efficient Post-Earthquake Assessment Using Aerial and Remote Sensing Data. Appl. Sci. 2024, 14, 3964.
11. Xie, Z.; Zhou, Z.; He, X.; Fu, Y.; Gu, J.; Zhang, J. Methodology for Object-Level Change Detection in Post-Earthquake Building Damage Assessment Based on Remote Sensing Images: OCD-BDA. Remote Sens. 2024, 16, 4263.
12. Da, Y.; Ji, Z.; Zhou, Y. Building Damage Assessment Based on Siamese Hierarchical Transformer Framework. Mathematics 2022, 10, 1898.
13. Wang, D.; Yan, Z.; Liu, P. Fine-Grained Interpretation of Remote Sensing Image: A Review. Remote Sens. 2025, 17, 3887.
14. Liu, S.; You, Y.; Su, H.; Meng, G.; Yang, W.; Liu, F. Few-Shot Object Detection in Remote Sensing Image Interpretation: Opportunities and Challenges. Remote Sens. 2022, 14, 4435.
15. Nguyen, K.; Huynh, N. T.; Le, D. T.; et al. A Comprehensive Review of Few-Shot Object Detection on Aerial Imagery. Comput. Sci. Rev. 2025, 57, 100760.
16. Zhang, J.; Hong, Z.; Chen, X.; Li, Y. Few-Shot Object Detection for Remote Sensing Imagery Using Segmentation Assistance and Triplet Head. Remote Sens. 2024, 16, 3630.
17. Liu, Y.; Pan, Z.; Yang, J.; Zhou, P.; Zhang, B. Multi-Modal Prototypes for Few-Shot Object Detection in Remote Sensing Images. Remote Sens. 2024, 16, 4693.
18. Zhang, Y.; Lyu, X.; Li, X.; Zhou, S.; Fang, Y.; Ding, C.; Gao, S.; Chen, J. Complementary Local–Global Optimization for Few-Shot Object Detection in Remote Sensing. Remote Sens. 2025, 17, 2136.
19. Wu, X.; Sahoo, D.; Hoi, S. Meta-RCNN: Meta Learning for Few-Shot Object Detection. In Proceedings of the 28th ACM International Conference on Multimedia (ACM MM), Seattle, WA, USA, October 2020; ACM; pp. 1679–1687.
20. Han, G.; Huang, S.; Ma, J.; He, Y.; Chang, S.-F. Meta Faster R-CNN: Towards Accurate Few-Shot Object Detection with Attentive Feature Alignment. Proceedings of the AAAI Conference on Artificial Intelligence (AAAI) 2022, Vol. 36(No. 1), 780–789.
21. Wang, X.; Huang, T. E.; Darrell, T.; Gonzalez, J. E.; Yu, F. Frustratingly Simple Few-Shot Object Detection. arXiv 2020, arXiv:2003.06957.
22. Qiao, L.; Zhao, Y.; Li, Z.; Qiu, X.; Wu, J.; Zhang, C. DeFRCN: Decoupled Faster R-CNN for Few-Shot Object Detection. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, QC, Canada, October 2021; IEEE; pp. 8681–8690.
23. Lin, H.; Li, N.; Yao, P.; Dong, K.; Guo, Y.; Hong, D. Generalization-Enhanced Few-Shot Object Detection in Remote Sensing. IEEE Trans. Circuits Syst. Video Technol. 2025.
24. Gao, H.; Wu, S.; Wang, Y.; Kim, J. Y.; Xu, Y. FSOD4RSI: Few-Shot Object Detection for Remote Sensing Images via Features Aggregation and Scale Attention. IEEE J. Sel. Top. Appl. Earth Observ. Remote Sens. 2024, 17, 4784–4796.
25. Su, H.; You, Y.; Meng, G. Multi-Scale Context-Aware R-CNN for Few-Shot Object Detection in Remote Sensing Images. In Proceedings of the IGARSS 2022 – 2022 IEEE International Geoscience and Remote Sensing Symposium (IGARSS), July 2022; IEEE: Kuala Lumpur, Malaysia; pp. 1908–1911.
26. Li, X.; Hu, X.; Yang, J. Spatial Group-Wise Enhance: Improving Semantic Feature Learning in Convolutional Networks. arXiv 2019, arXiv:1905.09646.
27. Qin, A.; Chen, F.; Li, Q.; Song, T.; Zhao, Y.; Gao, C. Few-Shot Remote Sensing Scene Classification via Subspace Based on Multiscale Feature Learning. IEEE J. Sel. Top. Appl. Earth Observ. Remote Sens. 2024.
28. Wang, J.; Chen, K.; Xu, R.; Liu, Z.; Loy, C.C.; Lin, D. CARAFE: Content-Aware ReAssembly of Features. In Proceedings of the 2019 IEEE/CVF International Conference on Computer Vision (ICCV), 27 October–2 November 2019; IEEE: Seoul, Republic of Korea; pp. 3007–3016.
29. Fan, Q.; Zhuo, W.; Tang, C.; Tai, Y. Few-Shot Object Detection with Attention-RPN and Multi-Relation Detector. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, June 2020; IEEE; pp. 4013–4022.
30. Xiao, Y.; Lepetit, V.; Marlet, R. Few-Shot Object Detection and Viewpoint Estimation for Objects in the Wild. IEEE Trans. Pattern Anal. Mach. Intell. 2022, 45, 3090–3106.
31. Lu, X.; Diao, W.; Mao, Y.; Li, J.; Wang, P.; Sun, X.; Fu, K. Breaking Immutable: Information-Coupled Prototype Elaboration for Few-Shot Object Detection. Proceedings of the AAAI Conference on Artificial Intelligence (AAAI) 2023, Vol. 37(No. 2), 1844–1852.
32. Han, J.; Ren, Y.; Ding, J.; Yan, K.; Xia, G. Few-Shot Object Detection via Variational Feature Aggregation. Proceedings of the AAAI Conference on Artificial Intelligence (AAAI) 2023, Vol. 37(No. 1), 755–763.
33. Sun, B.; Li, B.; Cai, S.; Yuan, Y.; Zhang, C. FSCE: Few-Shot Object Detection via Contrastive Proposal Encoding. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Virtual Event, June 2021; IEEE; pp. 7352–7362.
34. Kim, S.; Nam, W.; Lee, S. Few-Shot Object Detection with Proposal Balance Refinement. In Proceedings of the 2022 26th International Conference on Pattern Recognition (ICPR), Montreal, QC, Canada, August 2022; IEEE; pp. 4700–4707.
35. Li, X.; Deng, J.; Fang, Y. Few-Shot Object Detection on Remote Sensing Images. IEEE Trans. Geosci. Remote Sens. 2021, 60, 1–14.
36. Huang, X.; He, B.; Tong, M.; Wang, D.; He, C. Few-Shot Object Detection on Remote Sensing Images via Shared Attention Module and Balanced Fine-Tuning Strategy. Remote Sens. 2021, 13, 3816.
37. Zhou, Z.; Li, S.; Guo, W.; Gu, Y. Few-Shot Aircraft Detection in Satellite Videos Based on Feature Scale Selection Pyramid and Proposal Contrastive Learning. Remote Sens. 2022, 14, 4581.
38. Lin, T.; Dollar, P.; Girshick, R.; He, K.; Hariharan, B.; Belongie, S. Feature Pyramid Networks for Object Detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, July 2017; IEEE; pp. 2117–2125.
39. Redmon, J.; Farhadi, A. YOLOv3: An Incremental Improvement. arXiv 2018, arXiv:1804.02767.
40. Chen, J.; Qin, D.; Hou, D.; Zhang, J.; Deng, M.; Sun, G. Multiscale Object Contrastive Learning-Derived Few-Shot Object Detection in VHR Imagery. IEEE Trans. Geosci. Remote Sens. 2022, 60, 1–15.
41. Cheng, G.; Yan, B.; Shi, P.; Li, K.; Yao, X.; Guo, L. Prototype-CNN for Few-Shot Object Detection in Remote Sensing Images. IEEE Trans. Geosci. Remote Sens. 2021, 60, 1–10.
42. Lu, X.; Sun, X.; Diao, W.; Mao, Y.; Li, J.; Zhang, Y. Few-Shot Object Detection in Aerial Imagery Guided by Text-Modal Knowledge. IEEE Trans. Geosci. Remote Sens. 2023, 61, 1–19.
43. Zheng, Z.; Zhong, Y.; Wang, J.; Ma, A. Foreground-Aware Relation Network for Geospatial Object Segmentation in High Spatial Resolution Remote Sensing Imagery. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Virtual Event, June 2020; IEEE; pp. 4096–4105.
44. Li, K.; Wan, G.; Cheng, G.; Meng, L.; Han, J. Object Detection in Optical Remote Sensing Images: A Survey and a New Benchmark. ISPRS J. Photogramm. Remote Sens. 2020, 159, 296–307.
45. Cheng, G.; Han, J.; Zhou, P.; Guo, L. Multi-Class Geospatial Object Detection and Geographic Image Classification Based on Collection of Part Detectors. ISPRS J. Photogramm. Remote Sens. 2014, 98, 119–132.
46. Liu, Y.; Pan, Z.; Yang, J.; Zhang, B.; Zhou, G.; Hu, Y. Few-Shot Object Detection in Remote-Sensing Images via Label-Consistent Classifier and Gradual Regression. IEEE Trans. Geosci. Remote Sens. 2024, 62, 1–14.
47. He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, June 2016; IEEE; pp. 770–778.

| Split | Novel Classes | Base |
|---|---|---|
| 1 | BF, BC, BR, CH, SH | Rest |
| 2 | AP, AT, ETS, HB, GTF | Rest |
| 3 | DM, GC, STK, TC, VE | Rest |
| 4 | ESA, OP, ST, TS, WM | Rest |
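
The "Rest" entries in the split table can be made explicit with a short sketch. It assumes that the four novel sets together cover all 20 DIOR categories, so that the base classes of one split are the novel classes of the other three; the variable and function names are illustrative, and the class abbreviations are copied from the table without expansion.

```python
# Novel classes per split, copied from the split table.
NOVEL = {
    1: ["BF", "BC", "BR", "CH", "SH"],
    2: ["AP", "AT", "ETS", "HB", "GTF"],
    3: ["DM", "GC", "STK", "TC", "VE"],
    4: ["ESA", "OP", "ST", "TS", "WM"],
}

# Assumption: the union of the four novel sets is the full category list.
ALL_CLASSES = sorted(name for names in NOVEL.values() for name in names)


def base_classes(split):
    """Return the 'Rest' column for a split: every class that is not novel in it."""
    novel = set(NOVEL[split])
    return [name for name in ALL_CLASSES if name not in novel]
```

Under this assumption every category is novel in exactly one split and base in the other three, so each split trains on 15 base classes and evaluates few-shot transfer on 5.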

| Split | Method | 3-shot | 5-shot | 10-shot | 20-shot |
|---|---|---|---|---|---|
| 1 | Meta RCNN [19] | 12.02 | 13.09 | 14.07 | 14.45 |
| | FsDetView [30] | 13.19 | 14.29 | 18.02 | 18.01 |
| | TFA w/cos [21] | 16.07 | 15.36 | 16.45 | 18.93 |
| | P-CNN [41] | 18.00 | 22.80 | 27.60 | 29.60 |
| | FSOD [29] | 15.94 | 20.27 | 24.22 | 28.16 |
| | FSCE [33] | 27.91 | 28.60 | 33.05 | 37.55 |
| | MSOCL [40] | 24.97 | 27.27 | 33.37 | 39.22 |
| | ICPE [31] | 11.68 | 12.34 | 12.95 | 14.33 |
| | VFA [32] | 21.94 | 21.27 | 23.32 | 24.28 |
| | SAE-FSDT [46] | 28.80 | 32.40 | 37.09 | 42.46 |
| | SAE-FSDT* [46] | 25.08 | 28.91 | 35.57 | 41.77 |
| | DA-FSDeT (Ours) | 27.22 | 29.54 | 33.86 | 39.15 |
| 2 | Meta RCNN [19] | 8.84 | 10.88 | 14.90 | 16.71 |
| | FsDetView [30] | 10.83 | 9.63 | 13.57 | 14.76 |
| | TFA w/cos [21] | 6.81 | 7.53 | 8.93 | 11.05 |
| | P-CNN [41] | 14.50 | 14.90 | 18.90 | 22.80 |
| | FSOD [29] | 9.35 | 9.73 | 14.84 | 16.20 |
| | FSCE [33] | 13.17 | 14.07 | 15.79 | 20.93 |
| | MSOCL [40] | 13.31 | 13.40 | 15.00 | 18.15 |
| | ICPE [31] | 10.92 | 10.56 | 12.39 | 13.18 |
| | VFA [32] | 12.10 | 12.70 | 14.72 | 15.47 |
| | SAE-FSDT [46] | 13.99 | 15.65 | 17.41 | 21.34 |
| | SAE-FSDT* [46] | 12.85 | 14.04 | 14.53 | 20.78 |
| | DA-FSDeT (Ours) | 15.83 | 18.57 | 22.07 | 25.52 |
| 3 | Meta RCNN [19] | 9.10 | 12.29 | 11.96 | 16.14 |
| | FsDetView [30] | 7.49 | 12.61 | 11.49 | 17.02 |
| | TFA w/cos [21] | 8.73 | 9.31 | 12.19 | 16.97 |
| | P-CNN [41] | 16.50 | 18.80 | 23.30 | 28.80 |
| | FSOD [29] | 10.40 | 10.74 | 12.26 | 11.52 |
| | FSCE [33] | 15.59 | 16.24 | 23.75 | 28.89 |
| | MSOCL [40] | 13.11 | 15.07 | 23.39 | 27.44 |
| | ICPE [31] | 10.56 | 11.21 | 12.38 | 13.08 |
| | VFA [32] | 11.97 | 13.19 | 15.45 | 17.61 |
| | SAE-FSDT [46] | 16.74 | 19.07 | 28.44 | 29.88 |
| | SAE-FSDT* [46] | 14.04 | 16.48 | 26.65 | 28.42 |
| | DA-FSDeT (Ours) | 18.21 | 20.78 | 29.76 | 31.44 |
| 4 | Meta RCNN [19] | 13.94 | 15.84 | 15.07 | 18.17 |
| | FsDetView [30] | 14.28 | 15.95 | 15.37 | 16.96 |
| | TFA w/cos [21] | 9.54 | 13.82 | 13.82 | 16.61 |
| | P-CNN [41] | 15.20 | 17.50 | 18.90 | 25.70 |
| | FSOD [29] | 11.84 | 12.98 | 17.17 | 18.46 |
| | FSCE [33] | 17.45 | 20.42 | 22.22 | 24.96 |
| | MSOCL [40] | 10.40 | 12.29 | 16.64 | 22.67 |
| | ICPE [31] | 14.45 | 14.52 | 15.95 | 15.61 |
| | VFA [32] | 15.52 | 17.76 | 18.62 | 20.05 |
| | SAE-FSDT [46] | 17.27 | 20.48 | 22.69 | 26.75 |
| | SAE-FSDT* [46] | 14.87 | 16.92 | 20.21 | 24.96 |
| | DA-FSDeT (Ours) | 17.82 | 22.63 | 28.15 | 30.51 |

\* All results are obtained from our own reimplementation under identical experimental configurations.
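
As a reading aid for the four-split comparison, the sketch below averages the reported scores over the splits for the proposed method and the reimplemented SAE-FSDT* baseline. The numbers are copied from the table; the dictionary layout and function name are illustrative, and the unweighted mean over splits is an assumption about how one might summarize the table, not a figure reported by the authors.

```python
from statistics import mean

SHOTS = (3, 5, 10, 20)

# Scores per split in shot order (3, 5, 10, 20), copied from the table above.
DIOR = {
    "SAE-FSDT*": {
        1: (25.08, 28.91, 35.57, 41.77),
        2: (12.85, 14.04, 14.53, 20.78),
        3: (14.04, 16.48, 26.65, 28.42),
        4: (14.87, 16.92, 20.21, 24.96),
    },
    "DA-FSDeT": {
        1: (27.22, 29.54, 33.86, 39.15),
        2: (15.83, 18.57, 22.07, 25.52),
        3: (18.21, 20.78, 29.76, 31.44),
        4: (17.82, 22.63, 28.15, 30.51),
    },
}


def split_average(method):
    """Unweighted mean over the four splits, keyed by shot count."""
    per_split = list(DIOR[method].values())
    return {shot: mean(scores[i] for scores in per_split) for i, shot in enumerate(SHOTS)}


ours = split_average("DA-FSDeT")
baseline = split_average("SAE-FSDT*")
margins = {shot: ours[shot] - baseline[shot] for shot in SHOTS}
```

Averaging hides per-split differences: on split 1 the baseline is ahead at 10-shot and 20-shot, so the per-split rows remain the primary evidence.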

| Method | 3-shot | 5-shot | 10-shot | 20-shot |
|---|---|---|---|---|
| Meta R-CNN [19] | 20.51 | 21.77 | 26.98 | 28.24 |
| FsDetView [30] | 24.56 | 29.55 | 31.77 | 32.73 |
| TFA w/cos [21] | 16.17 | 20.49 | 21.22 | 21.57 |
| P-CNN [41] | 41.80 | 49.17 | 63.29 | 66.83 |
| FSOD [29] | 41.80 | 49.17 | 63.29 | 66.83 |
| FSCE [33] | 10.95 | 15.13 | 16.23 | 17.11 |
| MSOCL [40] | 41.63 | 48.80 | 59.97 | 79.60 |
| ICPE [31] | 6.10 | 9.10 | 12.00 | 12.20 |
| VFA [32] | 13.14 | 15.08 | 13.89 | 20.18 |
| SAE-FSDT [46] | 57.96 | 59.40 | 71.02 | 85.08 |
| DA-FSDeT (Ours) | 60.05 | 60.80 | 72.50 | 85.56 |

| CASP | DA-RPN | 3-shot | 5-shot | 10-shot | 20-shot | Params | FLOPs |
|---|---|---|---|---|---|---|---|
| - | - | 12.85 | 14.04 | 14.53 | 20.78 | 60.89 | 181.13 |
| √ | - | 15.76 | 16.51 | 20.17 | 24.81 | 68.65 | 202.02 |
| - | √ | 13.62 | 15.36 | 18.03 | 23.08 | 60.75 | 175.79 |
| √ | √ | 15.83 | 18.57 | 22.07 | 25.52 | 68.65 | 202.15 |
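
The contribution of each module in the ablation table can be read off as a difference from the baseline row. The sketch below does that arithmetic on the values exactly as printed; the data layout and function name are illustrative, and because the table does not state units for Params and FLOPs, those columns are treated as plain numbers.

```python
COLUMNS = ("3-shot", "5-shot", "10-shot", "20-shot", "Params", "FLOPs")

# (uses CASP, uses DA-RPN) -> row values, copied from the ablation table.
ABLATION = {
    (False, False): (12.85, 14.04, 14.53, 20.78, 60.89, 181.13),
    (True, False): (15.76, 16.51, 20.17, 24.81, 68.65, 202.02),
    (False, True): (13.62, 15.36, 18.03, 23.08, 60.75, 175.79),
    (True, True): (15.83, 18.57, 22.07, 25.52, 68.65, 202.15),
}


def delta(config):
    """Change of every column relative to the baseline without either module."""
    base = ABLATION[(False, False)]
    row = ABLATION[config]
    return {name: round(v - b, 2) for name, v, b in zip(COLUMNS, row, base)}


casp_only = delta((True, False))
da_rpn_only = delta((False, True))
full_model = delta((True, True))
```

Read this way, CASP alone accounts for most of the accuracy gain and all of the added parameters, DA-RPN alone gives a smaller gain at slightly lower cost than the baseline, and the combination is best at every shot setting.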

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).