Submitted: 21 February 2026

Posted: 27 February 2026


Abstract

Keywords:

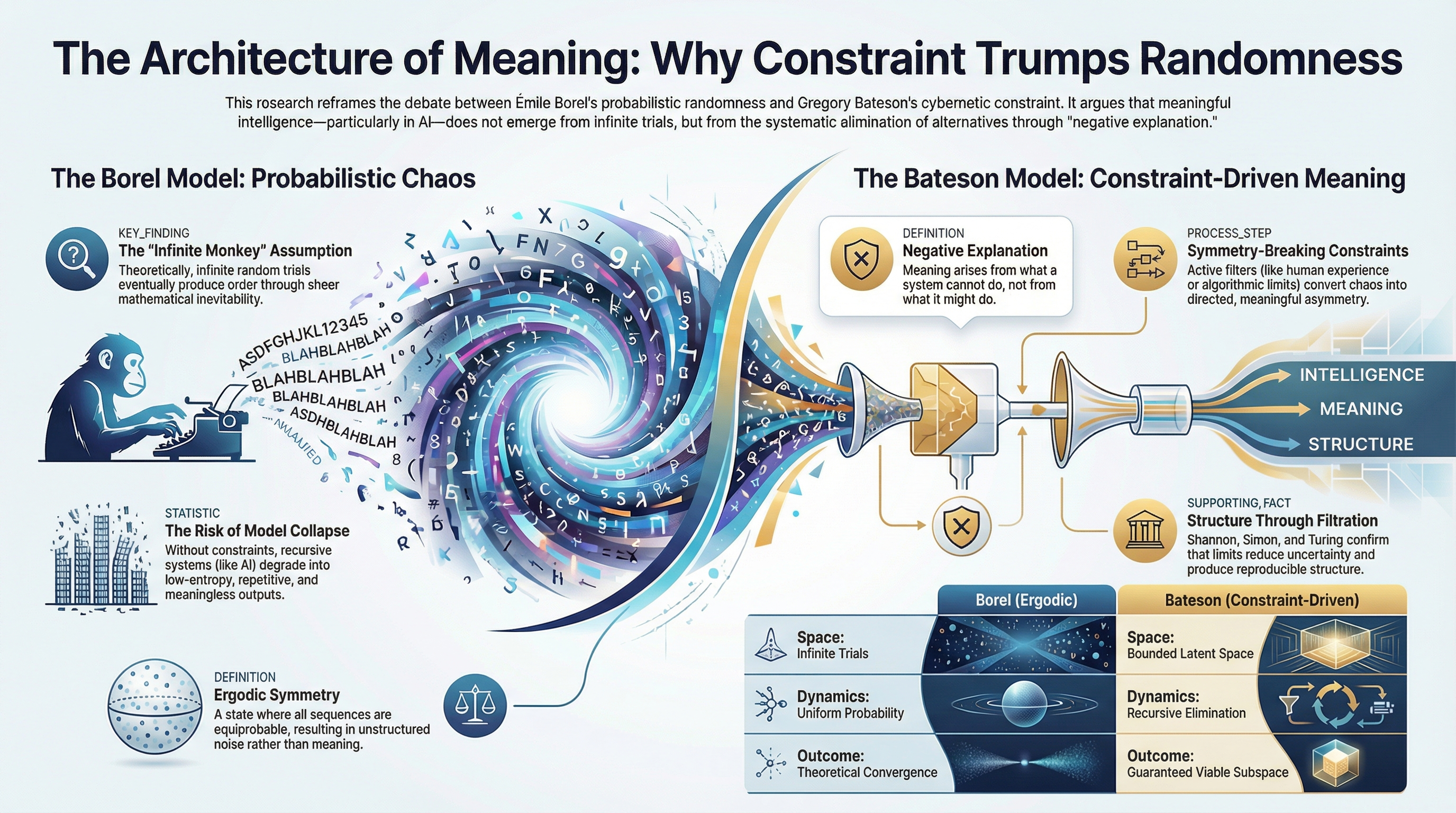

- Deconstructing the “Borelean” limits of current ergodic assumptions in AI architectures.

- Mapping the transition from symmetry (uniform probability) to asymmetry (directed information) via Bateson’s negative explanation.

- Correlating these concepts with real-world mechanisms such as regularization, pruning, attention masks, and other constraint-inducing techniques.
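As a minimal sketch of how one such mechanism breaks symmetry, the following example applies an attention-style mask to a uniform score vector. The function name `masked_softmax` and the toy inputs are illustrative assumptions, not code from any particular architecture discussed in this study.

```python
import math

def masked_softmax(scores, mask):
    """Softmax over `scores`; positions where `mask` is False are eliminated."""
    if not any(mask):
        raise ValueError("mask eliminates every position")
    # Eliminated positions are set to -inf, so exp() gives them zero weight.
    masked = [s if keep else float("-inf") for s, keep in zip(scores, mask)]
    peak = max(masked)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in masked]
    total = sum(exps)
    return [e / total for e in exps]

# Symmetric case: equal scores, nothing excluded, uniform probability.
uniform = masked_softmax([0.0, 0.0, 0.0, 0.0], [True, True, True, True])

# Asymmetric case: the same scores, but the mask removes two alternatives.
constrained = masked_softmax([0.0, 0.0, 0.0, 0.0], [True, True, False, False])

print(uniform)      # [0.25, 0.25, 0.25, 0.25]
print(constrained)  # [0.5, 0.5, 0.0, 0.0]
```

The scores themselves never change; the distribution becomes directed only because alternatives are excluded, which is the sense of negative explanation used here.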

The Long-Standing Debate

Philosophical Foundations: Chance, Necessity, and the Limits of Randomness

Probability Theory: Formalizing Randomness and the Infinite Possibility Horizon

Systems Theory and Cybernetics: From Holism to Negative Explanation

Complexity Science: Intermediate Regimes and Emergent Order

AI: Scaling, Emergence, and the Limits of Ergodicity

Theoretical Framework

Key Conceptual Definitions

Unified Borel–Bateson Framework

Methods

Stage 1: Conceptual Explication

- Bateson (1967, 2000a, pp. 407–418, Cybernetic Explanation) introduces negative explanation as the principle that structure arises through the recursive elimination of non-viable alternatives within bounded systems. This establishes the abstract, conceptual foundation of constraint-driven emergence.

- Bateson (2000b, pp. 315–344, The Cybernetics of ‘Self’) provides a concrete application: the behavior of the “alcoholic self” illustrates how systemic constraints and feedback loops produce viable outcomes without reliance on direct linear causation. This demonstrates negative explanation in a bounded, real-world context.

- Bateson (2000c, pp. 455–471, Form, Substance, and Difference) grounds the epistemology of structured emergence: meaningful outcomes arise from distinctions (“differences that make a difference”) rather than from stochastic or material forces, highlighting the ontological dimension of constraint.

- Borel (1909, 1913, 1914), by contrast, treats structure as an artifact of ergodic probability: under a uniform distribution over infinite sequences, any finite ordered sequence appears almost surely, so the emergence of order is theoretically guaranteed, independent of systemic boundaries or feedback.
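The Borelian side of this contrast can be made concrete with a short calculation. The sketch below assumes the simplified textbook setting of independent, uniformly random attempts of fixed length (non-overlapping blocks); the function name `hit_probability` and the 26-letter alphabet are illustrative assumptions.

```python
import math

def hit_probability(alphabet_size, target_length, attempts):
    """P(at least one of `attempts` independent uniform strings equals the target)."""
    if alphabet_size < 2:
        raise ValueError("alphabet_size must be at least 2")
    p_single = alphabet_size ** (-target_length)  # chance that one attempt matches
    # 1 - (1 - p)^n, computed with log1p/expm1 so a tiny p is not rounded away
    return -math.expm1(attempts * math.log1p(-p_single))

# A six-letter target over a 26-letter alphabet: the probability approaches 1
# only as the number of attempts grows far beyond 26**6.
for attempts in (10**6, 10**9, 10**12):
    print(attempts, hit_probability(26, 6, attempts))
```

The probability tends to 1 only in the limit of unboundedly many attempts, and the required number of attempts grows exponentially with the target length.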

Stage 2: Comparative Synthesis

- Information theory: Shannon’s entropy reduction via constraint aligns with Bateson’s elimination of alternatives.

- Decision theory: Simon’s satisficing operationalizes cybernetic selection.

- Computation theory: Turing’s algorithmic limits formalize constraint-driven convergence.
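The information-theoretic correspondence can be illustrated with a small calculation. This is a sketch under stated assumptions: the eight-symbol alphabet, the constraint predicate, and the helper names `entropy_bits` and `apply_constraint` are hypothetical and chosen only for illustration.

```python
import math

def entropy_bits(dist):
    """Shannon entropy, in bits, of a distribution given as {outcome: probability}."""
    return -sum(p * math.log2(p) for p in dist.values() if p > 0)

def apply_constraint(dist, allowed):
    """Eliminate outcomes rejected by `allowed`, then renormalize the remainder."""
    kept = {x: p for x, p in dist.items() if allowed(x)}
    total = sum(kept.values())
    if total == 0:
        raise ValueError("constraint eliminated every alternative")
    return {x: p / total for x, p in kept.items()}

# Eight equiprobable symbols: maximal uncertainty for this alphabet.
uniform = {s: 1 / 8 for s in "abcdefgh"}

# A constraint that leaves only two viable symbols.
constrained = apply_constraint(uniform, lambda s: s in "ae")

print(entropy_bits(uniform))      # 3.0
print(entropy_bits(constrained))  # 1.0
```

Entropy falls from 3 bits to 1 bit without any outcome being made more likely in advance; the reduction comes entirely from what the constraint removes.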

Stage 3: Formal Set-Theoretic Derivation

Epistemological Positioning

Analytic Outputs

- Comparative Operational Table (Table 1). Summarizes core differences between Borel’s ergodic model and Bateson’s constraint-driven logic, highlighting how bounded latent spaces and recursive elimination shape viable system outcomes.

- Comparative Epistemological Table (Table 2). Synthesizes cybernetic constraints versus probabilistic randomness in a matrix-like format, linking these distinctions to Information Theory (Shannon), Bounded Rationality (Simon), and Computability Theory (Turing). This table demonstrates how constraints generate meaningful structure and reveal the limits of infinite-trial assumptions.

- Formal Set-Theoretic Derivation. Operationalizes the recursive application of constraint functions in latent spaces, illustrating how ergodic symmetry is broken and structured emergence is preserved.
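One way to write down the recursive application of constraint functions is sketched below. The notation is an assumption introduced for illustration and is not taken from the derivation itself: $L$ denotes a bounded latent space, $c_k$ the $k$-th constraint function, and $S_k$ the set of alternatives still viable after $k$ eliminations.

```latex
% Assumed notation: L is a bounded latent space and
% c_k : L -> {0,1} is the k-th constraint function (1 = viable).
\begin{align}
  S_0 &= L, \\
  S_{k+1} &= \{\, x \in S_k : c_{k+1}(x) = 1 \,\}, \qquad k = 0, 1, \dots, n-1, \\
  S_0 &\supseteq S_1 \supseteq \dots \supseteq S_n, \qquad
  S_n = \{\, x \in L : c_1(x) = \dots = c_n(x) = 1 \,\}.
\end{align}
% For finite L with the uniform distribution P_k on a non-empty S_k:
\begin{equation}
  H(P_k) = \log_2 |S_k|, \qquad H(P_{k+1}) \le H(P_k).
\end{equation}
```

In this sketch the nesting of the sets is automatic, but the non-emptiness of $S_n$ is not: a viable subspace survives only if the constraints are jointly satisfiable, which has to be assumed or shown separately.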

Analysis

Stage 1: Conceptual Explication

Stage 2: Comparative Synthesis

Stage 3: Formal Set-Theoretic Derivation

Analytic Outputs

Stage 1: Conceptual Explication

Stage 2: Comparative Synthesis

Stage 3: Formal Set-Theoretic Derivation

Discussion

Interpretation of Results

Clarifying Non-Equivalence with Probabilistic Convergence

Observer Inclusion and Second-Order Cybernetics

Symmetry-Breaking and Experientia Humana

Applied Examples and the Constraint Operator

Contributions to Contemporary Debates

Further Implications

- Reframes the Infinite Monkey Theorem. This study challenges the classical interpretation of Borel’s probabilistic ergodicity, arguing that meaningful structure does not emerge from unbounded random sampling, but from the recursive elimination of improbable alternatives. While Feller (1957) emphasizes the mathematical inevitability of structure through indefinite trials, this perspective overlooks how meaningful outcomes are actively constrained in real-world systems. Bateson’s negative explanation redirects attention from the probability of random success to the systemic boundaries that prevent failure. Popper’s (1959) emphasis on falsifiability and Spencer-Brown’s (1969) logic of distinction reinforce the view that meaningful emergence arises from what is excluded, not from what is permitted.

- Advances Cybernetic Epistemology. Building on Bateson’s (1967) critique of causality, this study advances cybernetic epistemology by formalizing negative explanation as a foundation for understanding how structure and meaning arise. Rather than framing causality as a chain of productive events, it centers on recursive feedback and systemic restraint. This argument aligns with von Foerster’s (1979) second-order cybernetics, which emphasizes the observer’s embeddedness within self-organizing systems, and Varela’s (1980) theory of autopoiesis, which shows that systems maintain coherence through self-regulation. This reconceptualization of causality shifts the discourse from generative randomness to epistemic boundaries.

- Demonstrates the Limits of Probabilistic Models. Traditional probabilistic models rely on asymptotic reasoning: relative frequencies converge to probabilities only as the number of trials grows without bound. Cantelli (1917) formalized this convergence, and de Finetti (1974), although grounding probability in subjective degrees of belief, likewise recovers limiting frequencies through exchangeable sequences. However, these views often abstract away from how systems actually generate meaningful outcomes in bounded conditions. This study argues that such models obscure the role of feedback, thresholds, and selection constraints in shaping complex behavior. Morin’s (1992) critique of linear models in complexity science further supports the claim that probabilistic infinity is insufficient for explaining structure. Instead, cybernetic frameworks foreground constraint as the organizing principle of emergence.

- Bridges Philosophy, Cybernetics, and Probability. This study offers an integrative framework that connects philosophical epistemology, cybernetic systems theory, and classical probability. Bateson’s cybernetic logic is shown to intersect with Shannon’s information theory and Simon’s bounded rationality, while also resonating with Wiener’s (1948) theory of feedback and control. Foucault’s (1970) analysis of discourse as constrained by regimes of knowledge complements this cybernetic view, highlighting that epistemic systems are shaped by what they exclude rather than what they contain. Together, these thinkers converge on the idea that structure and intelligibility emerge from the filtration of possibilities—not their proliferation.

- Contributes to Contemporary Debates. By revisiting a canonical paradox in probability theory, this study contributes fresh insight into active debates within epistemology, complexity science, AI, and systems design. Rescher (1977) emphasizes the role of methodological pragmatism, advocating for knowledge systems that reflect real-world constraints. Kauffman’s (1993) notion of the adjacent possible illustrates how novelty arises from bounded combinations, not infinite random recombination. This study’s cybernetic synthesis supports those models that prioritize feedback, boundary conditions, and recursive structuring as mechanisms for emergence, thereby challenging the continued reliance on stochastic excess in contemporary theories of cognition and computation.

- Introduces a Constraint-Driven Model of Emergence. The cumulative insights from this study establish a formal model of constraint-driven emergence. This model reorients traditional assumptions about structure and meaning, placing the emphasis on what systems exclude through negative selection rather than on the statistically inevitable emergence of order. It highlights the explanatory power of cybernetic feedback, algorithmic restriction, and epistemic thresholds in producing intelligible outcomes within complex systems.

- Establishes a Foundation for Interdisciplinary Research. Finally, by integrating foundational insights from cybernetics, epistemology, and probability theory, this study lays the groundwork for interdisciplinary applications. It offers a theoretical architecture that can inform models in AI, cognitive science, ecology, organizational systems, and beyond. This foundation encourages a shift toward constraint-centric frameworks, aligning theoretical insight with practical design principles across disciplines.
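The contrast between unbounded sampling and recursive elimination drawn in the points above can be sketched computationally. The example rests on a strong assumption that should be read as such: a viability check is available at every step (here the hypothetical `is_viable` callback, implemented as a prefix test against an illustrative target). Borel's setting provides no such feedback, so this is an illustration of the argument, not a refutation of the theorem.

```python
import string

ALPHABET = string.ascii_lowercase  # assumed 26-letter keyboard

def eliminate_to_target(is_viable, length, alphabet=ALPHABET):
    """Build a string by discarding, position by position, every symbol
    that the viability check rejects. Returns (string, number_of_checks)."""
    prefix, checks = "", 0
    for _ in range(length):
        for symbol in alphabet:
            checks += 1
            if is_viable(prefix + symbol):
                prefix += symbol  # first surviving alternative is kept
                break
        else:
            raise ValueError("constraint eliminated every alternative")
    return prefix, checks

target = "hamlet"  # illustrative target string
result, checks = eliminate_to_target(target.startswith, len(target))

# Expected number of independent uniform attempts needed to hit the target.
blind_expected = len(ALPHABET) ** len(target)

print(result, checks)   # hamlet 59
print(blind_expected)   # 308915776
```

With feedback, the number of checks is bounded by alphabet size times target length; without it, the expected number of attempts grows exponentially in the target length.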

Limitations & Future Research

Conclusion

Epistemological Addendum

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Artificial Intelligence Use

References

- Achille, A.; Soatto, S. Emergence of invariance and disentanglement in deep representations. J. Mach. Learn. Res. 2018, 19, 1–34. Available online: https://jmlr.org/papers/v19/17-646.html.

- Alemohammad, S.; Casco-Rodriguez, J.; Luzi, L.; Humayun, A.I.; Babaei, H.; LeJeune, D.; Siahkoohi, A.; Baraniuk, R.G. Self-consuming generative models go MAD. Presented at the International Conference on Learning Representations (ICLR 2024), Vienna, Austria, 16–20 January 2024. Available online: https://openreview.net/forum?id=ShjMHfmPs0.

- Aristotle. Physics; Hardie, R.P.; Gaye, R.K., Translators. In The Works of Aristotle; Vol. 2; Oxford University Press: Oxford, UK, 1929. (Original work published ca. 350 B.C.E.).

- Ascham, R. The Scholemaster; Arber, E., Ed.; Constable & Company: London, UK, 1923. (Original work published posthumously 1570).

- Bateson, G. Cybernetic explanation. Am. Behav. Sci. 1967, 10, 29–32.

- Bateson, G. Steps to an Ecology of Mind: Collected Essays in Anthropology, Psychiatry, Evolution, and Epistemology; Chandler Publishing Company: San Francisco, CA, USA, 1972.

- Bateson, G. Cybernetic explanation. In Steps to an Ecology of Mind: Collected Essays in Anthropology, Psychiatry, Evolution, and Epistemology; University of Chicago Press: Chicago, IL, USA, 2000; pp. 407–418. (Original work published 1972).

- Bateson, G. The cybernetics of “self”: A theory of alcoholism. In Steps to an Ecology of Mind: Collected Essays in Anthropology, Psychiatry, Evolution, and Epistemology; University of Chicago Press: Chicago, IL, USA, 2000; pp. 315–344. (Original work published 1972).

- Bateson, G. Form, substance, and difference. In Steps to an Ecology of Mind: Collected Essays in Anthropology, Psychiatry, Evolution, and Epistemology; University of Chicago Press: Chicago, IL, USA, 2000; pp. 455–471. (Original work published 1972).

- Bateson, G. Redundancy and coding. In Steps to an Ecology of Mind: Collected Essays in Anthropology, Psychiatry, Evolution, and Epistemology; University of Chicago Press: Chicago, IL, USA, 2000; pp. 419–440. (Original work published 1972).

- Bateson, G. Pathologies of epistemology. In Steps to an Ecology of Mind: Collected Essays in Anthropology, Psychiatry, Evolution, and Epistemology; University of Chicago Press: Chicago, IL, USA, 2000; pp. 484–493. (Original work published 1972).

- Boolos, G.; Jeffrey, R. Computability and Logic, 4th ed.; Cambridge University Press: Cambridge, UK, 2002.

- Borel, É. Les probabilités dénombrables et leurs applications arithmétiques. Rend. Circ. Mat. Palermo 1909, 27, 247–271.

- Borel, É. Mécanique statistique et irréversibilité. J. Phys. Théor. Appl. 1913, 5, 189–196.

- Borel, É. Le Hasard; Librairie Félix Alcan: Paris, France, 1914.

- Brunswic, L.M.; Clémente, M.; Yang, R.H.; Sigal, A.; Rasouli, A.; Li, Y. Ergodic generative flows. In Proceedings of the 42nd International Conference on Machine Learning; Proceedings of Machine Learning Research, 2025; pp. 5649–5668.

- Cantelli, F.P. Sulla probabilità come limite della frequenza. Atti Accad. Naz. Lincei 1917, 26, 39–45.

- Cervantes, M. de. Don Quixote; Vol. 1; Jarvis, J., Translator; Doré, G., Illustrator; Clark, D.W., Ed.; Project Gutenberg, n.d.

- Cilliers, P. Complexity and Postmodernism: Understanding Complex Systems; Routledge: London, UK, 1998.

- de Finetti, B. Theory of Probability; Wiley: New York, NY, USA, 1974; Vol. 1.

- Eagle, A. Chance versus randomness. In The Stanford Encyclopedia of Philosophy, Spring 2021 ed.; Zalta, E.N., Ed.; Metaphysics Research Lab, Stanford University: Stanford, CA, USA, 2021.

- Feller, W. An Introduction to Probability Theory and Its Applications; Wiley: New York, NY, USA, 1957; Vol. 1.

- Ferbach, D.; Bertrand, Q.; Bose, A.J.; Gidel, G. Self-consuming generative models with curated data provably optimize human preferences. arXiv 2024, arXiv:2407.09499. Available online: https://arxiv.org/abs/2407.09499.

- Foucault, M. The Order of Things: An Archaeology of the Human Sciences; Pantheon: New York, NY, USA, 1970.

- Gödel, K. On Formally Undecidable Propositions of Principia Mathematica and Related Systems; Meltzer, B.; Braithwaite, R.B., Translators; Dover: New York, NY, USA, 1992. (Original work published 1931).

- Guba, E.G.; Lincoln, Y.S. Epistemological and methodological bases of naturalistic inquiry. Educ. Technol. Res. Dev. 1982, 30, 233–252.

- Horman, W. Vulgaria; Wynkyn de Worde: London, UK, 1519.

- Jowett, B. The Republic of Plato; Clarendon Press: Oxford, UK, 1888.

- Kaplan, J.; McCandlish, S.; Henighan, T.; Brown, T.B.; Chess, B.; Child, R.; Gray, S.; Radford, A.; Wu, J.; Amodei, D. Scaling laws for neural language models. arXiv 2020, arXiv:2001.08361. Available online: https://arxiv.org/abs/2001.08361.

- Kauffman, S. The Origins of Order: Self-Organization and Selection in Evolution; Oxford University Press: New York, NY, USA, 1993.

- Knorr, K. Epistemic Cultures: How the Sciences Make Knowledge; Harvard University Press: Cambridge, MA, USA, 1999.

- Ladyman, J.; Wiesner, K. What Is a Complex System? Yale University Press: New Haven, CT, USA, 2020.

- Lin, H.W.; Rolnick, D.; Tegmark, M. Why does deep and cheap learning work so well? J. Stat. Phys. 2017, 168, 1223–1247.

- Lockhart, E.N.S. Creativity in the age of AI: The human condition and the limits of machine generation. J. Cult. Cogn. Sci. 2024, 9, 83–88.

- Lockhart, E.N.S. Bounded knowledge bases and the limits of epistemic production. ResearchGate 2025.

- Lockhart, E.N.S. Experientia Humana contra Simulacra et Technē Artificialis: Re-envisioning humanoid robots as trans-synthetic species beyond corporate technosimulacra. Presented at Roboi 2025: The 1st International Symposium on Embodied Intelligence and Humanoid Robots, Osaka, Japan, 20–22 November 2025.

- Machamer, P.; Darden, L.; Craver, C.F. Thinking about mechanisms. Philos. Sci. 2000, 67, 1–25.

- Majumdar, A. The relativity of AGI: Distributional axioms, fragility, and undecidability. arXiv 2026, arXiv:2601.17335. Available online: https://arxiv.org/abs/2601.17335.

- Morgan, M.S. Models, stories, and the economic world. J. Econ. Methodol. 2001, 14, 361–385.

- Morin, E. From the concept of system to the paradigm of complexity. J. Soc. Evol. Syst. 1992, 15, 371–385.

- Nagel, E.; Newman, J.R. Gödel’s Proof, rev. ed.; New York University Press: New York, NY, USA, 2001. (Original work published 1958).

- Peters, O. The ergodicity problem in economics. Nat. Phys. 2019, 15, 1216–1221.

- Popper, K. The Logic of Scientific Discovery; Routledge: London, UK, 1959.

- Rescher, N. Methodological Pragmatism: A Systems-Theoretic Approach to the Theory of Knowledge; New York University Press: New York, NY, USA, 1977.

- Shannon, C.E. A mathematical theory of communication. Bell Syst. Tech. J. 1948, 27, 379–423.

- Shumailov, I.; Shumaylov, Z.; Zhao, Y.; Papernot, N.; Anderson, R.; Gal, Y. AI models collapse when trained on recursively generated data. Nature 2024, 631, 755–759.

- Simon, H.A. A behavioral model of rational choice. Q. J. Econ. 1955, 69, 99–118.

- Smith, K.; Kirby, S.; Guo, S.; Griffiths, T.L. AI model collapse might be prevented by studying human language transmission. Nature 2024, 633, 525.

- Spencer-Brown, G. Laws of Form; Allen & Unwin: London, UK, 1969.

- Swedberg, R. Before theory comes theorizing or how to make social science more interesting. Br. J. Sociol. 2016, 67, 5–22.

- Tomczak, J.M. Deep generative modeling: From probabilistic framework to generative AI. Entropy 2025, 27, 238.

- Turing, A.M. Computing machinery and intelligence. Mind 1950, 59, 433–460.

- Varela, F.J. Autopoiesis and society. In Autopoiesis, Dissipative Structures, and Spontaneous Social Orders; Zeleny, M., Ed.; Pergamon: Oxford, UK, 1980; pp. 85–100.

- Vassilev, A. Robust AI security and alignment: A Sisyphean endeavor? arXiv 2025, arXiv:2512.10100. Available online: https://arxiv.org/abs/2512.10100.

- von Bertalanffy, L. General System Theory, rev. ed.; George Braziller: New York, NY, USA, 1973.

- von Foerster, H. Cybernetics of cybernetics. In Communication and Control in Society; Krippendorff, K., Ed.; Gordon and Breach: New York, NY, USA, 1979; pp. 5–8.

- von Mises, R. Probability, Statistics and Truth; Neyman, J., Translator; George Allen & Unwin: London, UK, 1957. (Original work published 1936).

- Walker, L.O.; Avant, K.C. Strategies for Theory Construction in Nursing, 6th ed.; Pearson: Upper Saddle River, NJ, USA, 2019.

- Wei, J.; Tay, Y.; Bommasani, R.; Raffel, C.; Zoph, B.; Borgeaud, S.; ... Fedus, W. Emergent abilities of large language models. arXiv 2022.

- Wiener, N. Cybernetics: Or Control and Communication in the Animal and the Machine; MIT Press: Cambridge, MA, USA, 1948.

- Wolpert, D.H. The stochastic thermodynamics of computation. J. Phys. A: Math. Theor. 2019, 52, 193001.

- Zhang, J.-J.; Cheng, N.; Li, F.-P.; Wang, X.-C.; Chen, J.-N.; Pang, L.-G.; Meng, D. Symmetry breaking in neural network optimization: Insights from input dimension expansion. npj Artif. Intell. 2025, 1, 12.

Table 1. Comparative operational table: Borel’s ergodic model versus Bateson’s constraint-driven logic.

| Dimension | Borel (Ergodic) | Bateson (Constraint-Driven) |
|---|---|---|
| Space | Infinite trials | Bounded latent space |
| Dynamics | Uniform probability | Recursive elimination |
| Outcome | Theoretical convergence | Guaranteed viable subspace |
| Stability | Recursive collapse | Diversity preserved |

Table 2. Comparative epistemological table. IT = information theory (Shannon); BR = bounded rationality (Simon); CT = computability theory (Turing).

| Concept | Borel: Infinite Monkey Theorem | Bateson: Cybernetic Explanation | Supporting Theories |
|---|---|---|---|
| Core Principle | Random keystrokes + uniform probability → infinite trials → structured sequences | Constraints → recursive elimination → viable sequences → emergent meaning | IT: uncertainty → selective filtering → structured information; BR: limited search + satisficing → tractable, structured decisions; CT: algorithmic limits → constrained exploration → reproducible structure |
| Causal Explanation | Probabilistic causality: any sequence is possible + independent selection mechanisms → theoretical convergence | Negative explanation: constraints → eliminate improbable sequences → reinforce viable pathways → observed outcomes | IT: constraints → signal amplification → meaningful patterns; BR: limited evaluation + satisficing → directed selection; CT: algorithmic rules + halting limits → feasible outputs only |
| Role of Constraints | Operates independently of eliminative constraints; stochastic processes + infinite trials → passive structure | Constraints → remove implausible alternatives + iterative refinement → structured emergence | IT: signal filtering + probability shaping → information clarity; BR: search-space limitation + satisficing → structured decisions; CT: algorithmic bounds + stepwise rules → constrained system behavior |
| Logical Structure | Reductio ad absurdum → infinite trials resolve disorder → theoretical structure | Recursive refinement → feedback constraints → structured meaning, non-random outcomes | IT: filtering → measurable reduction of uncertainty → preserved structure; BR: bounded exploration + satisficing → consistent outcome logic; CT: computation constraints → enforce reproducible structure |
| Implications | Infinite stochastic possibility + no systemic restraints → passive emergence | Bounded selection + recursive constraint enforcement → viable sequences, preserved diversity | IT: constraint application → high signal-to-noise ratio → informative sequences; BR: bounded rationality + iterative selection → structured emergence within cognitive limits; CT: algorithmic control → predictable, non-random outputs |

| Category | Example | Context | Application |
|---|---|---|---|
| Artificial Intelligence | Machine Learning Algorithms | In computational models, algorithms are used to predict outcomes based on data input. | Regularization and loss functions penalize poorly fitting or overly complex models, steering training away from them and toward better solutions. |
| Art and Music | Composition of a Fugue | In music composition, the creation of a fugue follows strict counterpoint and harmony rules. | Excludes dissonant or structurally flawed note combinations, producing a harmonious and coherent musical piece. |
| Biology | Cellular Apoptosis (Programmed Cell Death) | In living organisms, cells undergo apoptosis to maintain health and function. | Cells that are damaged or malfunctioning are eliminated, preventing the spread of harm. |
| Communication Systems | Error-Correcting Communication Systems | In digital communication, systems ensure reliable message transmission despite noise or interference. | Error-correcting codes detect and repair invalid codewords, so that only valid sequences are accepted at the receiver. |
| Cybersecurity | Intrusion Detection Systems | In information security, systems monitor network traffic to detect unauthorized access. | Identifies and blocks anomalous behavior, preventing security breaches and maintaining system integrity. |
| Economics | Central Banks (Monetary Policy) | Central banks regulate the economy through monetary policy to maintain economic stability. | Constraints such as interest rates dampen extreme market behaviors, limiting runaway inflation or deflation. |
| Education | Adaptive Learning Platforms | In educational technology, platforms adjust to students’ learning needs. | Excludes mastered topics and focuses on areas needing improvement to optimize the learning experience. |
| Environmental Systems | Ecosystem Management (Predator-Prey Balance) | In ecological systems, maintaining balance among species ensures ecosystem health. | Prevents ecological collapse by managing populations and excluding unsustainable population levels. |
| Healthcare | Insulin Pumps (Diabetes Management) | In medical technology, insulin pumps regulate blood sugar levels for diabetic patients. | Excludes non-optimal insulin dosing patterns to maintain homeostasis and prevent glucose instability. |
| Law | Legal Systems (Judicial Decision-Making) | In legal frameworks, courts make decisions based on established statutes and precedents. | Eliminates unlawful or inconsistent outcomes by ensuring decisions align with legal standards. |
| Linguistics | Grammar Checkers (Word Processors) | In word processing, software tools check for grammatical accuracy. | Flags ungrammatical sentences using syntactic rules, helping users produce correct language structures. |
| Manufacturing | Assembly Line Quality Control | In industrial settings, production lines ensure that products meet certain standards. | Detects and removes defective products from the production line, ensuring high-quality output. |
| Psychology | Cognitive Behavioral Therapy (CBT) | In mental health, CBT helps individuals reframe their thought patterns. | Identifies and excludes irrational thoughts, guiding individuals toward healthier cognitive patterns. |
| Robotics | Adaptive Robots | In robotics, autonomous systems adjust based on sensor feedback to optimize actions. | Excludes unsafe or ineffective movements, ensuring that robots perform tasks safely and efficiently. |
| Social Systems | Organizational Management (Performance Reviews) | In business environments, performance reviews are used to evaluate employee effectiveness. | Excludes unproductive behaviors through feedback and guides the organization toward its goals. |
| Transportation | Air Traffic Control Systems | In aviation, air traffic control ensures safe management of aircraft movements in busy airspace. | Excludes unsafe flight paths, preventing collisions and keeping air traffic flowing safely. |
| Urban Planning | Zoning Laws and Building Codes | In urban planning, laws regulate how buildings and structures are designed and placed in cities. | Excludes non-compliant or unsafe building designs, ensuring safe and functional city planning. |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.